Abstract

Background:

We sought to quantify the efficiency and acceptability of Internet-based recruitment for engaging an especially hard-to-reach cohort (college students with type 1 diabetes [T1D]) and to describe our approach to implementing a health-related trial entirely online using off-the-shelf tools, including how we addressed participant safety and data validity concerns.

Method:

We recruited youth (ages 17-25 years) with T1D via a variety of social media platforms and other outreach channels. We quantified response rates and participant characteristics across channels using engagement metrics tracked via Google Analytics and participant survey data. We developed decision rules to identify invalid (duplicate/false) records (N = 89) and compared them with valid cases (N = 138).

Results:

Facebook was the highest-yield recruitment source; demographics differed by platform. Invalid records were prevalent, were more likely to have been recruited from Twitter or Instagram, and differed from valid cases across most demographics. Valid cases closely resembled characteristics obtained from Google Analytics and from prior data on the platforms' user base. Retention was high, with complete follow-up for 88.4% of valid cases. There were no safety concerns, and participants reported high acceptability for future recruitment via social media.

Conclusions:

We demonstrate that recruitment of college students with T1D into a longitudinal intervention trial via social media is feasible, efficient, and acceptable, and yields a sample representative of the user base from which it was drawn. Given observed differences in participant characteristics across recruitment channels, recruiting across multiple platforms is recommended to optimize sample diversity. Trial implementation, engagement tracking, and retention are feasible with off-the-shelf tools using preexisting platforms.

The transition into emerging adulthood is a critical period when health outcomes may be imperiled for adolescents and young adults (AYA) with type 1 diabetes (T1D),1-4 making it especially important to develop strategies to engage and support them. Effective disease self-management and self-regulation of risk behaviors are vital for avoiding diabetes complications5,6 and minimizing costs.7 Moving away from established care relationships and supports, which is common for college-attending AYA, can undermine self-management and expose youth to potentially devastating health consequences.4,8,9 College students with T1D may be especially vulnerable to these risks; many reside in settings that are ill-equipped to identify and support their health needs.10 The older adolescent and young adult years are a high-risk period for many risk behaviors, including substance use.11 Youth who are under the influence of any substance may be at higher risk for poor self-care and nontreatment. In particular, the profoundly “alcogenic” features of college environments12,13 may promote alcohol consumption, including at heavy and binge levels,8,14 increasing risks for acute hypoglycemia15 and treatment nonadherence.16 Despite the potential health problems facing youth with T1D in college,17 we know little about how best to engage them around diabetes self-management and avoidance of risk behaviors.

Given the developmental stage of this population, successful interventions are likely to require a patient-centered focus, an emphasis on shared decision making and autonomy, and peer and/or provider directives reinforcing the value of maintaining health and avoiding risks.18-21 The intervention model may also need to be flexible and delivered on a web-enabled platform, given the mobile, digitally oriented nature of this group. Still, recruiting AYA participants into studies targeting health behaviors remains challenging.22,23 Internet-based research, particularly social media-based research, has gained traction for reaching AYA;24-27 98% of AYA use the Internet28 and 88% use social media.29 Web-based technologies hold promise for expanding the reach of health research,30-35 providing an opportunity to cast a broader net than traditional recruitment and to extend recruitment to previously hard-to-reach populations, including AYA.36 However, web-enabled studies may experience unique problems with recruitment, retention, safety, and the validity and representativeness of samples.37,38

An emerging evidence base has begun to describe the opportunities and barriers associated with engaging hard-to-reach populations in Internet-enabled research.39-45 We sought to contribute to this literature by ascertaining the utility of various Internet-based methods for engaging college students with T1D in a comparative effectiveness trial testing competing versions of an educational intervention designed to influence diabetes self-management and alcohol consumption behaviors. In addition to measuring study interest and participation across recruitment strategies, we describe the implementation of our study, including how we balanced the issues involved in conducting an entirely online health-related study within the auspices of academic research and human subjects oversight; the technical implementation of the content delivery and data collection platform; and our approach to monitoring safety, ensuring the validity of study data, and protecting against invalid (duplicate/false) records.

Methods

Participatory Research / Partnership Building

To promote recruitment, we established partnerships with two diabetes advocacy groups with large AYA followings: TuDiabetes (a program of Beyond Type 1) and College Diabetes Network (CDN). Study investigators contacted these groups, presented shared goals, and proposed using their networks for targeted recruitment. We worked closely with liaisons from both groups to select the platforms, iterate on recruitment materials, and distribute recruitment messages. CDN received a monetary donation to its nonprofit organization in recognition of its collaboration efforts.

Study Design and Procedures

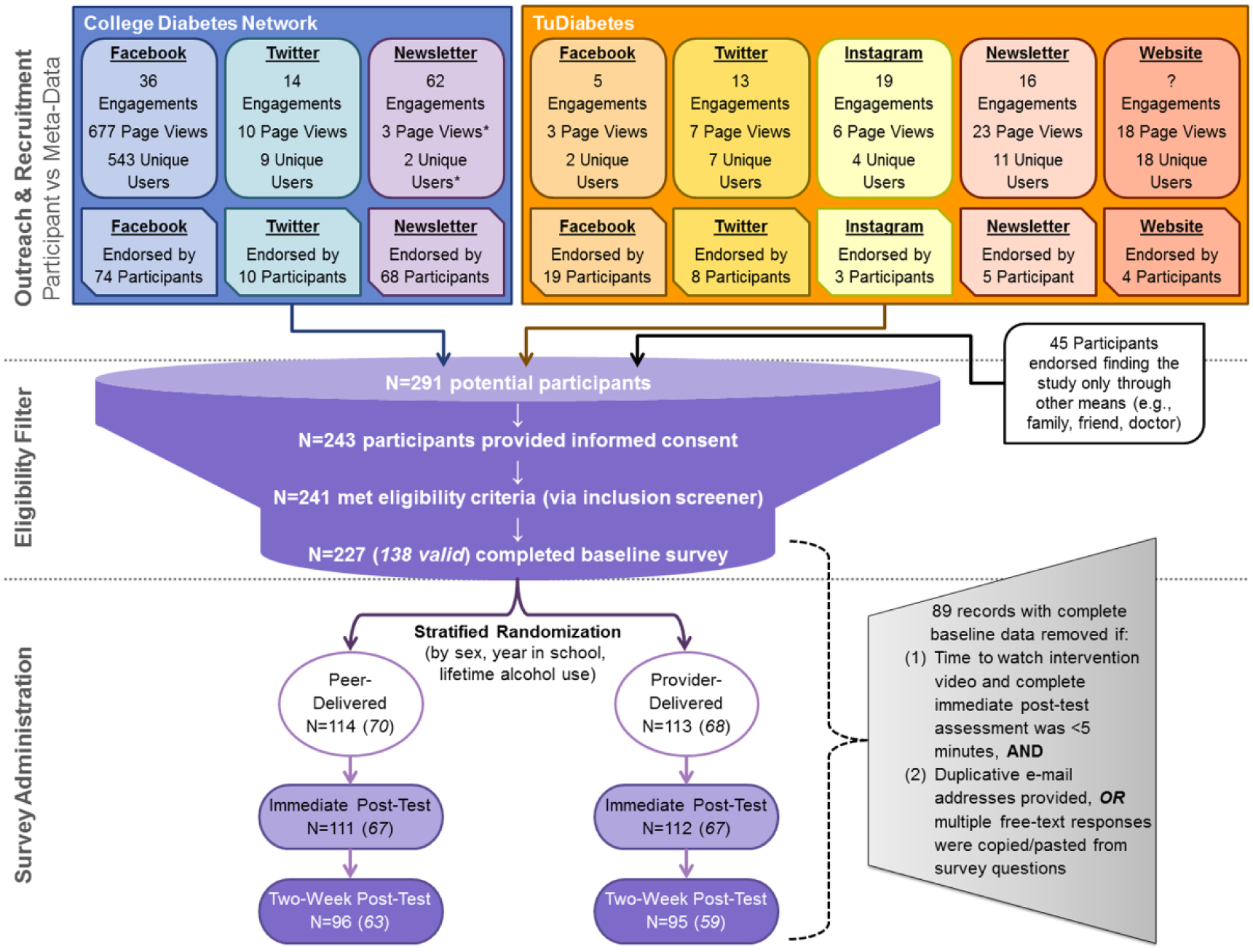

Recruitment messages were posted on the social media accounts (ie, Facebook, Twitter, Instagram) of the two advocacy groups, sent in newsletters to the email distribution lists of both networks, and displayed as a banner on one network’s website. The two collaborating networks provided the research team with engagement metrics for each recruitment message posted (Figure 1). Each recruitment message promoted the study (brief description, inclusion criteria) and provided a unique link directing participants to one of eight identical versions of the study website, with visit and user metrics tracked separately for each version using Google Analytics (GA). The eight identical landing pages included general study information, research team contact information, and a GA disclaimer.

Trial recruitment flow diagram.

Each recruitment platform was intended to be uniquely paired with its own landing page for tracking (eg, CDN’s Facebook post directed users to a different landing page than CDN’s Twitter post). In two instances, however, a newsletter included an additional link alongside the correct one: the CDN email newsletter also linked to the page designated for tracking CDN Facebook engagement, and the TuDiabetes newsletter also linked to the page designated for tracking TuDiabetes Instagram engagement. As a result, some landing page visits may have been misattributed. We tracked traffic to each page during the recruitment period (Appendix 1) and determined the device on which the page was accessed (mobile, tablet, desktop), the referral source, and basic demographic characteristics (Appendix 2).

Landing pages directed participants to click an outbound link that transferred them to a Research Electronic Data Capture (REDCap) survey,46 hosted on secure servers at Boston Children’s Hospital. The number of clicks on the outbound link was tracked and quantified with GA; however, survey entries made directly through the web address (ie, when the link was copied and distributed) would not be captured by GA tracking. After entering the survey, participants viewed an online consent form containing the language included in a typical written consent. Of the 291 survey entries, 243 participants consented by selecting “Yes, I consent to participate in this study.” at the end of the online form (rather than the corresponding statement declining participation). No respondents declined consent (which would have exited them from the survey after again displaying the study safety information), but 48 participants exited the survey before affirming or declining consent.

Following consent, three screening questions ascertained whether respondents were between the ages of 17 and 25 years, had received a diagnosis of T1D, and were currently attending or enrolled in a college/university; respondents who did not meet inclusion criteria (N = 2) were exited from the survey and directed to the study safety resources and contact information. The 241 participants who met these criteria were directed to complete the remainder of the baseline survey; 14 participants did not complete the baseline survey and were therefore not randomized to either version of the intervention video.
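The participant flow described in this and the preceding paragraph can be checked arithmetically. The minimal Python sketch below recomputes each stage's drop-off and its retention relative to the 291 survey entries, using only counts stated in the text; the function name `funnel` and the stage labels are our own and hypothetical. Note that the 227 records at the final stage still include records later classified as invalid.

```python
from typing import List, Tuple

# Stage counts as reported in the Methods; stage labels are hypothetical.
STAGES: List[Tuple[str, int]] = [
    ("survey entries", 291),
    ("consented", 243),
    ("met eligibility criteria", 241),
    ("completed baseline and randomized", 227),
]


def funnel(stages: List[Tuple[str, int]]) -> List[Tuple[str, int, int, float]]:
    """Return (stage, n, dropped since previous stage, % of first stage retained)."""
    first = stages[0][1]
    prev = first
    rows = []
    for name, n in stages:
        rows.append((name, n, prev - n, round(100 * n / first, 1)))
        prev = n
    return rows


if __name__ == "__main__":
    for name, n, dropped, pct in funnel(STAGES):
        print(f"{name:<34} n={n:<4} dropped={dropped:<3} retained={pct}%")
```

The same table, extended with GA page views and outbound-link clicks ahead of the first row, would show drop-off from initial engagement through randomization.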

Intervention

Upon baseline survey completion, participants were automatically randomized to receive one of two brief educational videos using a randomization module programmed in REDCap that executed a stratified randomization scheme based on sex, year in college, and lifetime alcohol use. REDCap automatically showed participants the appropriate intervention video, either narrated by an endocrinologist (provider) or a college student with T1D (peer), which was housed on a private YouTube channel and embedded in REDCap. Aside from the spokesperson delivering the message, all other video content was identical.
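REDCap's randomization module is typically driven by an allocation table prepared in advance, and the text does not say how the study's table was generated. As an illustration only, the Python sketch below builds a permuted-block allocation list within each stratum defined by sex, year in college, and lifetime alcohol use, and then hands out the next unused slot in a participant's stratum. The category levels, block size of 4, seed, and all names are hypothetical assumptions, not the study's settings.

```python
import itertools
import random
from typing import Dict, List, Tuple

ARMS = ("provider", "peer")

# Hypothetical stratum levels; the study's category coding is not reported.
SEX = ("female", "male")
YEAR = ("first", "second", "third", "fourth_plus")
LIFETIME_ALCOHOL = ("never", "ever")

Stratum = Tuple[str, str, str]


def allocation_table(n_per_stratum: int, block_size: int = 4,
                     seed: int = 2016) -> Dict[Stratum, List[str]]:
    """Permuted-block allocation list for every sex x year x alcohol stratum."""
    if block_size % len(ARMS):
        raise ValueError("block size must be a multiple of the number of arms")
    rng = random.Random(seed)
    table: Dict[Stratum, List[str]] = {}
    for stratum in itertools.product(SEX, YEAR, LIFETIME_ALCOHOL):
        seq: List[str] = []
        while len(seq) < n_per_stratum:
            block = list(ARMS) * (block_size // len(ARMS))
            rng.shuffle(block)  # each block contains equal numbers of both arms
            seq.extend(block)
        table[stratum] = seq[:n_per_stratum]
    return table


class Randomizer:
    """Assigns each participant the next unused slot in their stratum."""

    def __init__(self, table: Dict[Stratum, List[str]]):
        self.table = table
        self.next_slot = {s: 0 for s in table}

    def assign(self, sex: str, year: str, alcohol: str) -> str:
        stratum = (sex, year, alcohol)
        i = self.next_slot[stratum]
        self.next_slot[stratum] = i + 1
        return self.table[stratum][i]  # IndexError if the stratum list runs out
```

Permuted blocks keep the two video arms balanced within each stratum as enrollment proceeds; a larger or randomly varying block size makes upcoming assignments harder to predict.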

Following receipt of the intervention, participants were directed to complete a brief (“immediate”) follow-up survey. Two weeks after completion of the initial session (baseline survey, intervention video, and immediate follow-up survey), participants were sent a follow-up survey via automated email invitation through REDCap. If the participant did not complete the two-week follow-up survey, they received up to two additional automated email reminders. Participants received a $20 gift card for an online retailer for completion of each of the two survey time points (“initial” session and the two-week follow-up survey).

Safety

Each recruitment landing page provided a link to a separate safety resources page, introduced with: “Please click here if you are interested in learning more about Protecting Your Health, if you are in crisis, or if you think you may need help regarding a substance abuse problem” (substance use was featured because the intervention addressed alcohol use risk). The safety resources page included the website and phone number for the National Suicide Prevention Lifeline and contact information and websites for national substance use and mental health services. A safety protocol was developed to convey these resources directly to participants if they displayed signs of distress or crisis when communicating with the research team via the study email. The study email account sent an automatic reply containing these resources in response to every incoming message; the reply also notified participants that messages were not guaranteed to be viewed immediately and that the account was not routinely monitored on evenings or weekends. These safety procedures were critical for study approval by the Institutional Review Board.

Survey Measures

Survey data were also used to ascertain platform yield; participants were asked how they had heard about the study and invited to select all that applied from a preset list, including “other” (free text).

Participants provided demographic data (eg, age, sex, race/ethnicity, parental education, school year, enrollment, and college/university attended) and health/diabetes management information (eg, age diagnosed, last hemoglobin A1c [HbA1c], insulin pump use, continuous glucose monitoring [CGM] use, average blood glucose tests per day, self-rated health). Participants also provided data on several measures designed to assess the effectiveness of the intervention (ie, alcohol/substance use behaviors and attitudes). Participants were asked about their likelihood of study participation under each of seven hypothetical recruitment methods and rated each option from “very unlikely” to “very likely.” Survey date and time stamps were used to track completion across time points and to determine the time taken to complete each study component.
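In the Results, these acceptability ratings are summarized as the share of participants answering “likely” or “very likely” (a top-two-box summary). A minimal Python sketch of that calculation follows. It assumes a five-point scale with hypothetical intermediate labels (only the endpoints are reported) and assumes that missing answers are excluded from the denominator, which the text does not specify; the function names are also hypothetical.

```python
from typing import Dict, List, Optional

# Assumed 5-point labels; only "very unlikely" and "very likely" are reported.
SCALE = ("very unlikely", "unlikely", "neither", "likely", "very likely")
TOP_TWO = {"likely", "very likely"}


def top_two_box(responses: List[Optional[str]]) -> float:
    """Percent of non-missing responses that are 'likely' or 'very likely'."""
    answered = [r for r in responses if r is not None]  # assumed: drop missing
    if not answered:
        raise ValueError("no non-missing responses")
    unknown = set(answered) - set(SCALE)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    return round(100 * sum(r in TOP_TWO for r in answered) / len(answered), 1)


def acceptability(by_method: Dict[str, List[Optional[str]]]) -> Dict[str, float]:
    """Top-two-box percentage for each hypothetical recruitment method."""
    return {method: top_two_box(rs) for method, rs in by_method.items()}
```

Validating labels against the assumed scale catches coding mismatches in a data export before they silently shrink a percentage.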

Assessing Data Validity

Because the off-the-shelf survey software we relied on did not have a setting to prevent duplicate or fraudulent entries, we excluded invalid records (ie, the same individual completing the survey multiple times) post hoc by applying a series of decision rules. Records were classified as invalid if (1) the time taken to watch the video and complete the immediate follow-up survey was <5 minutes AND (2) either the same email address appeared across multiple records (where repetition was defined as an identical replication of a previous email, a replication differing by only one number or letter, or a pattern of three or more appearances of the same word combination; N = 54 records from seven email clusters, each cluster likely from a distinct individual) OR multiple qualitative/free-text responses were verbatim “copy and paste” of survey text (N = 35). Applying these rules identified 89 invalid records out of 227 in total; these records were excluded from our analytic sample.
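The decision rules above can be expressed as a screening function. The Python sketch below is one possible reading, not the authors' code. It treats the rules as (1) AND (repeated email OR copied free text). It interprets “differing by only one number or letter” as a Levenshtein distance of at most 1 between whole addresses, and it interprets the “same word combination” pattern as three or more addresses sharing the same letters-only local part. It also counts a copy-paste hit only when at least two free-text answers exactly match survey wording. All of these operationalizations, the `Record` fields, and the function names are hypothetical.

```python
import re
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Sequence


@dataclass
class Record:
    # Hypothetical fields; the study's REDCap export layout is not described.
    email: str
    minutes_video_and_followup: float
    free_text: List[str] = field(default_factory=list)


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: fewest single-character inserts, deletes, substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                # delete from a
                           cur[j - 1] + 1,             # insert into a
                           prev[j - 1] + (ca != cb)))  # substitute or match
        prev = cur
    return prev[-1]


def letters_only_local_part(email: str) -> str:
    """Local part with digits and punctuation removed (assumed 'word combination')."""
    return re.sub(r"[^a-z]", "", email.lower().split("@")[0])


def flag_invalid(records: Sequence[Record], survey_text: Sequence[str],
                 min_plausible_minutes: float = 5.0) -> List[bool]:
    emails = [r.email.strip().lower() for r in records]
    stems = [letters_only_local_part(e) for e in emails]
    stem_counts = Counter(stems)
    prompts = {t.strip().lower() for t in survey_text}
    flags = []
    for i, r in enumerate(records):
        too_fast = r.minutes_video_and_followup < min_plausible_minutes  # rule (1)
        near_duplicate = any(edit_distance(emails[i], emails[k]) <= 1
                             for k in range(len(emails)) if k != i)
        word_pattern = bool(stems[i]) and stem_counts[stems[i]] >= 3
        copied = sum(t.strip().lower() in prompts for t in r.free_text) >= 2
        flags.append(too_fast and (near_duplicate or word_pattern or copied))
    return flags
```

Because every record in an email cluster is compared against all others, the first entry in a cluster is flagged too if it was also completed too quickly. Flags from a function like this would still warrant manual review before records are excluded.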

Statistical Analysis

All analyses were performed using SAS 9.4 (SAS Institute, Cary, NC). To ascertain the impact of excluding invalid records, we calculated descriptive statistics for the full sample (N = 227), valid cases (N = 138), and invalid records (N = 89); differences in characteristics between valid and invalid records were assessed using appropriate bivariate tests. To compare the features of valid participants (N = 138) across recruitment platforms, we calculated descriptive statistics for each recruitment platform and sampling frame endorsed by participants. Because participants could endorse multiple referral sources, differences in characteristics were assessed separately for each source (eg, saw recruitment materials on Facebook vs did not see any recruitment materials on Facebook) using appropriate bivariate tests. Acceptability of outreach platforms among valid cases was summarized descriptively. Finally, sample representativeness was assessed by comparing descriptive characteristics of valid cases to user features derived from GA and to previously described characteristics of the CDN source population,47 with differences assessed using appropriate bivariate tests.
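Because participants could endorse several referral sources, each per-source comparison contrasts those who endorsed that source with everyone who did not. The analyses were run in SAS, and the text does not name the specific bivariate tests. The Python sketch below shows one common choice for a binary characteristic: a Pearson chi-square test without continuity correction on the resulting 2×2 table, with the 1-df p-value computed as erfc(√(χ²/2)). The participant dictionary layout and function names are hypothetical. With small expected cell counts, Fisher's exact test would usually be preferred.

```python
import math
from typing import Dict, List, Tuple

Table2x2 = Tuple[Tuple[int, int], Tuple[int, int]]


def endorsement_table(participants: List[Dict], source: str, trait: str) -> Table2x2:
    """2x2 counts: rows = endorsed `source` (yes, no); columns = `trait` (yes, no).

    Each participant dict holds a set of endorsed sources under "sources"
    (multi-select), so one person can appear in several source comparisons.
    """
    a = b = c = d = 0
    for p in participants:
        endorsed = source in p["sources"]
        has_trait = bool(p[trait])
        if endorsed and has_trait:
            a += 1
        elif endorsed:
            b += 1
        elif has_trait:
            c += 1
        else:
            d += 1
    return (a, b), (c, d)


def chi_square_2x2(table: Table2x2) -> Tuple[float, float]:
    """Pearson chi-square (no continuity correction) and its 1-df p-value."""
    (a, b), (c, d) = table
    n = a + b + c + d
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    if denom == 0:
        raise ValueError("a row or column total is zero")
    stat = n * (a * d - b * c) ** 2 / denom
    p = math.erfc(math.sqrt(stat / 2))  # upper tail of chi-square with 1 df
    return stat, p
```

Building the table from a per-participant set of sources keeps the “endorsed vs not endorsed” definition explicit, which matters because the groups overlap across sources.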

Results

No participant communications to the study email indicated safety concerns or sought additional safety information; however, the safety resource information page linked from the study website was visited twice.

Over half of all study webpage users (337 of 596; Appendix 1) accessed the study website within the first day after recruitment posts went live, and 105 participants progressed to study consent within that first day. Facebook was the highest-yield recruitment source (endorsed by 41% of respondents; Figure 1).

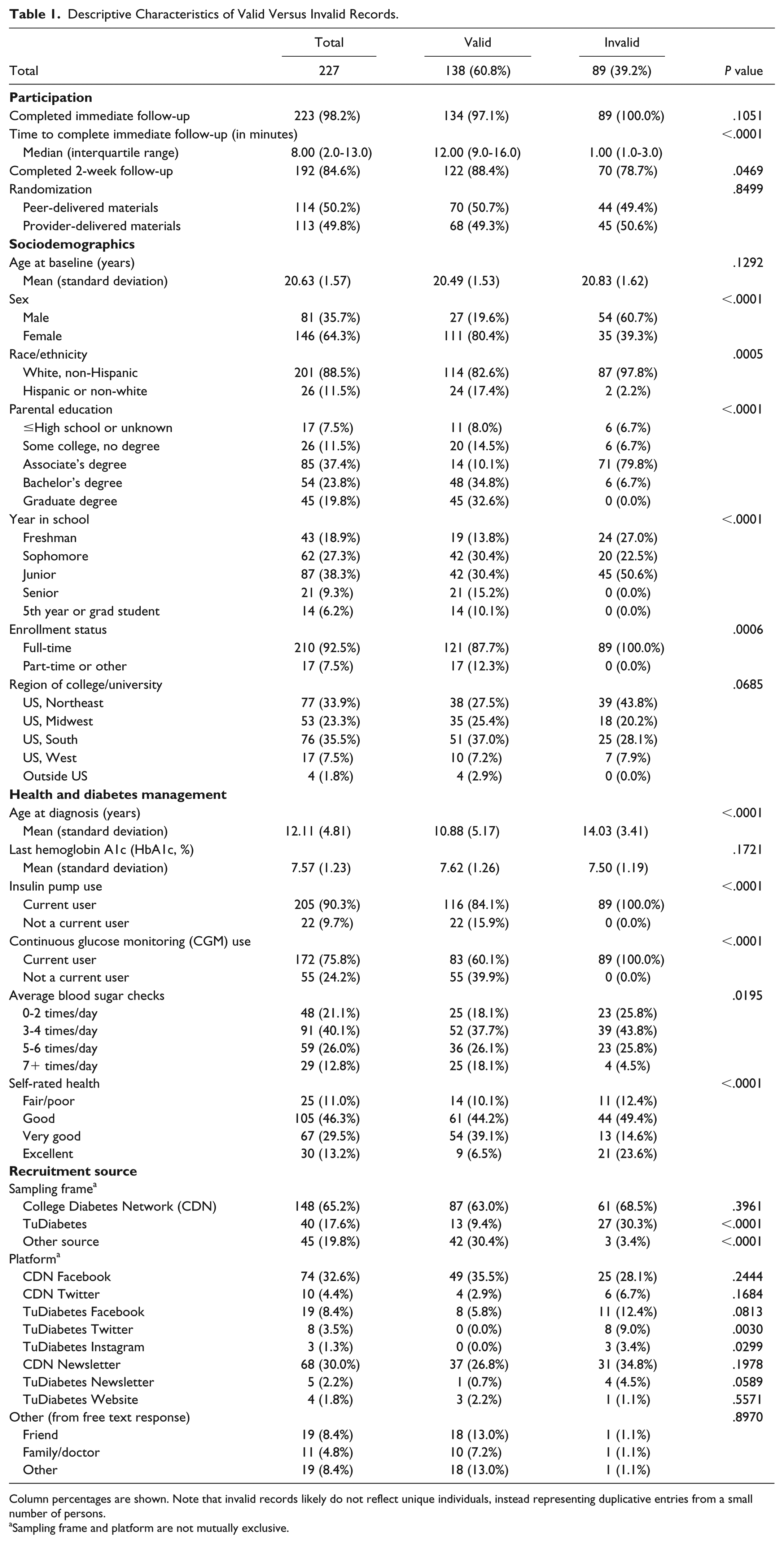

Among the 227 data records, 60.8% were determined to be valid cases (Table 1). Compared to valid cases, invalid records were significantly more likely to have been recruited from TuDiabetes Twitter or Instagram posts and differed substantially across most sociodemographic and health characteristics.

Descriptive Characteristics of Valid Versus Invalid Records.

Column percentages are shown. Note that invalid records likely do not reflect unique individuals, instead representing duplicative entries from a small number of persons.

Sampling frame and platform are not mutually exclusive.

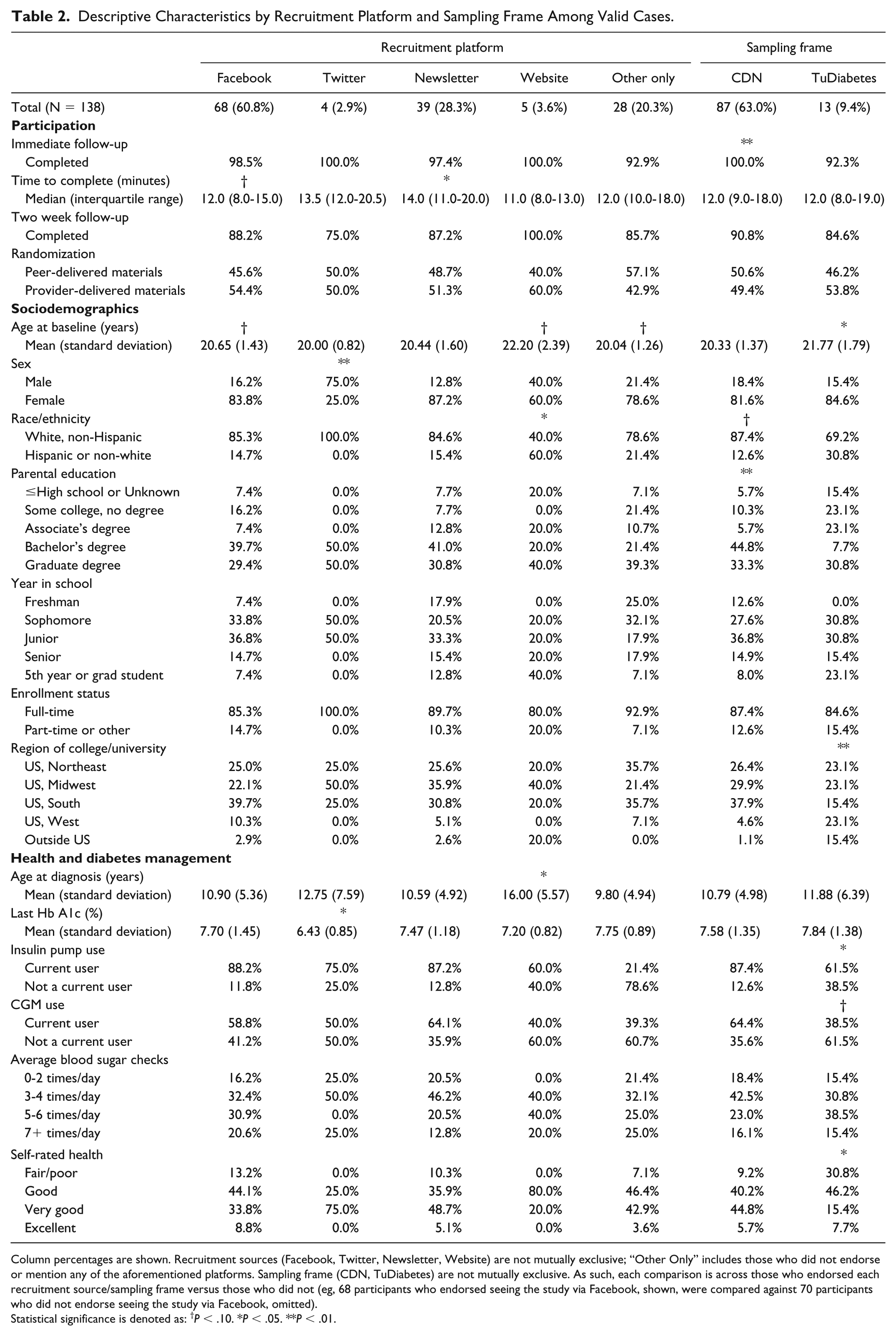

Characteristics of valid cases also differed by recruitment platform (Table 2). Cases recruited from Twitter were more likely to be male and reported a lower HbA1c on average. Cases recruited from a website banner were more likely to be non-white or Hispanic and to have been diagnosed at an older age. Other qualitative (nonsignificant) differences in year in school, region, and diabetes management were observed. Differences were also noted between individuals recruited from CDN (who had more educated parents) and those recruited from TuDiabetes (who were older, more often lived outside the United States, and reported worse health).

Descriptive Characteristics by Recruitment Platform and Sampling Frame Among Valid Cases.

Column percentages are shown. Recruitment sources (Facebook, Twitter, Newsletter, Website) are not mutually exclusive; “Other Only” includes those who did not endorse or mention any of the aforementioned platforms. Sampling frames (CDN, TuDiabetes) are not mutually exclusive. As such, each comparison is between those who endorsed each recruitment source/sampling frame and those who did not (eg, 68 participants who endorsed seeing the study via Facebook, shown, were compared against 70 participants who did not endorse seeing the study via Facebook, omitted).

Statistical significance is denoted as: †P < .10. *P < .05. **P < .01.

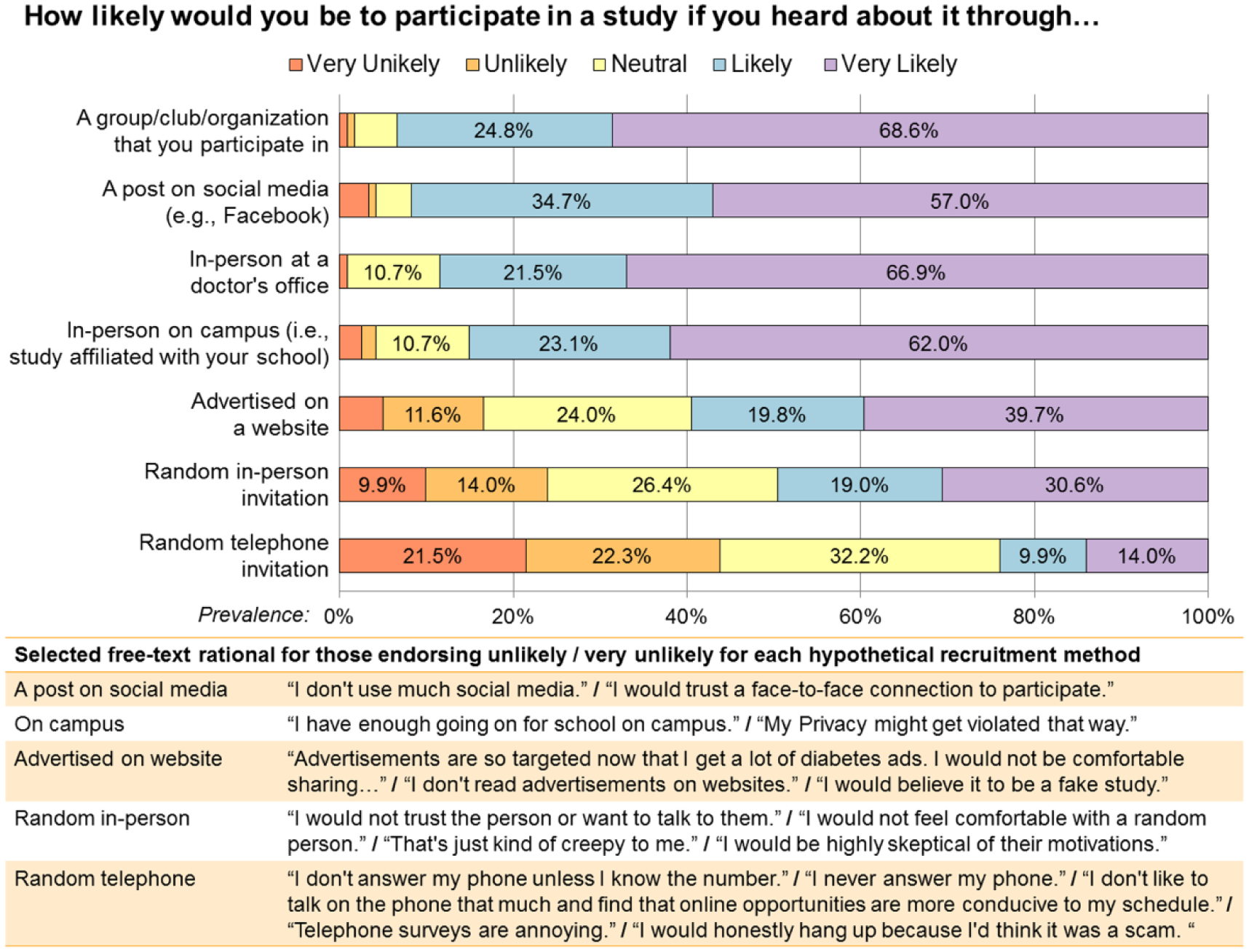

Participants reported high acceptability for future recruitment through a post on social media, with 91.7% reporting that they would be likely or very likely to participate (Figure 2). Acceptability was lowest for web-based advertisements (59.5%), random in-person invitations (49.6%), and random telephone invitations (24.0%). For the least accepted forms of recruitment, participants reported concerns about credibility/trustworthiness and overwhelmingly noted that they do not answer phone calls from unknown numbers.

Acceptability of recruitment methods.

Finally, the analytic sample of valid cases closely resembled the GA-derived user distributions of sex and country and was similar to the reported characteristics of the CDN population (Appendix 2).

Discussion

We demonstrate that recruitment of a traditionally hard-to-reach population (college students with T1D) into a longitudinal comparative effectiveness intervention trial via outreach through social media is feasible, efficient, acceptable to participants, and yields a sample that is largely representative of the user-base from which the sample was drawn. As there are subtle differences in participant characteristics across different recruitment platforms, researchers may wish to consider diversifying recruitment efforts across platforms to maximize sample diversity in future research. Tracking of initial engagement with recruitment posts and subsequent drop-off at each stage of the study is facilitated by off-the-shelf software and preexisting platforms.

A major criticism of Internet-based recruitment is that the underlying population (“denominator”) is ill-defined,37 which can make it hard to identify problems with sample representativeness. Although these issues are not always clearly addressed even when more traditional recruitment methods are used, we demonstrate that relying on well-defined social media spaces and carefully tracking engagement from initial post through study completion can shed light on the potential for selection bias and threats to external validity/generalizability. User demographics derived from recruitment landing pages were taken to reflect the population of invited participants and, though limited, aligned closely with the demographics of valid cases. Moreover, a more extensive comparison of demographics revealed that valid cases were similar to the predominant sampling frame (CDN), with small differences in year in school and age. Although this sample is likely not representative of all college students with T1D (eg, given our higher proportion of female respondents), these analyses suggest that participant loss during sampling and refusal were nondifferential and yielded a sample that was fairly representative of the sampling frame. Importantly, sample representativeness was likely affected by our reliance on different modalities (ie, social media versus other Internet-based channels) and platforms (eg, Facebook, Twitter), as user features are known to differ across social media platforms and between users and nonusers,29 and these underlying differences were reflected in our data as well.

Overall, social media appears to be a highly acceptable way to recruit young people to participate in clinical research, with acceptability nearly equivalent to that of recruitment in a doctor’s office but perhaps with higher potential for retention, as we achieved complete follow-up for 88.4% of valid cases. Youth are online to a greater degree than older populations,29 so it is unsurprising that hosting all trial components virtually enabled their participation. Interestingly, when asked why they would be unlikely to participate in studies following a phone invitation, participants overwhelmingly noted that they generally do not answer their phones (especially for unknown callers) and that their schedules are often not conducive to phone calls; as such, telephone calls may have limited utility for initial recruitment, or even study follow-up, of AYA. Providing youth with opportunities to participate in research virtually, including through approaches that support online consent and follow-up, may optimize representativeness and retention.

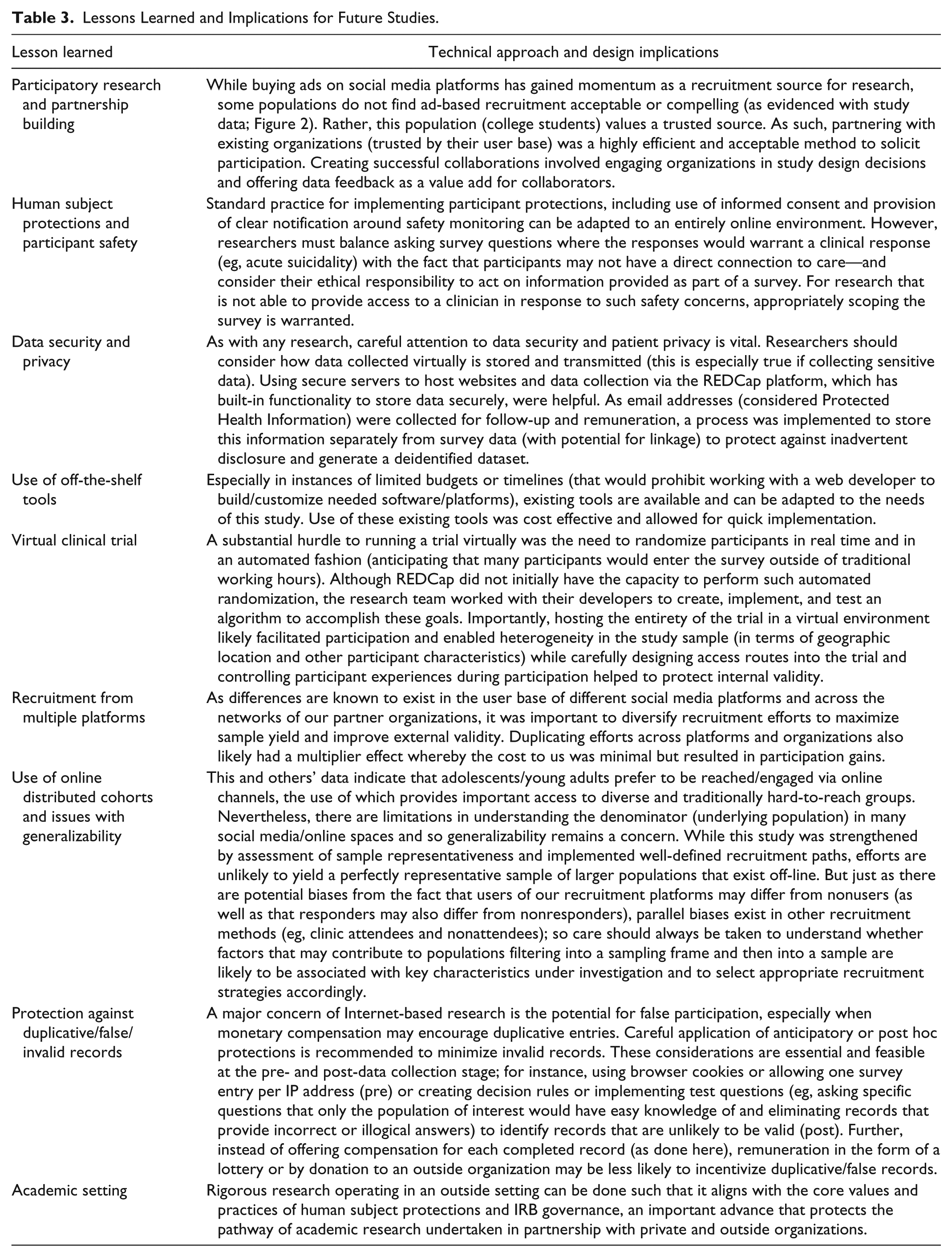

Undertaking this study in partnership with two large and very different organizations enabled highly efficient implementation of a rigorous trial with online distributed cohorts, use of online consent and safety procedures, and collection of valuable information about recruitment, health status, and health behaviors. Summaries of this information were made available to partners to “close the loop” and ensure optimal use of study information, consistent with principles of community-based participatory research within an online ecosystem. This study had limitations (Table 3), notably the high prevalence of invalid records, which likely reflects the provision of a small remuneration for participation (common in research) without a mechanism to gatekeep duplicate entries (eg, a “one IP address, one survey” algorithm or the use of browser cookies, both advisable for future studies). Although gatekeeping protections are not guaranteed to prevent all duplicate entries by motivated individuals, they likely would have reduced the number observed here. Post hoc decision rules based on process measures (eg, time to complete) enabled exclusion of invalid records and could be applied similarly in other studies.

Lessons Learned and Implications for Future Studies.
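The limitations discussion recommends gatekeeping duplicate entries with a “one IP address, one survey” rule or browser cookies. The Python sketch below is a hypothetical illustration, not a feature of REDCap or a description of the study. It admits a survey entry only if neither the client's IP address nor its cookie token has been seen before, and it stores keyed SHA-256 (HMAC) digests rather than raw addresses. How the IP address and cookie token are obtained depends on the hosting web stack and is assumed here; the class and method names are our own.

```python
import hashlib
import hmac
from typing import Optional, Set


class OneSubmissionGate:
    """Admit at most one survey entry per IP address and per browser cookie token.

    Only HMAC-SHA256 digests are retained, so raw IP addresses are not stored.
    """

    def __init__(self, secret_key: bytes):
        self._key = secret_key
        self._seen: Set[str] = set()

    def _digest(self, value: str) -> str:
        return hmac.new(self._key, value.encode("utf-8"), hashlib.sha256).hexdigest()

    def admit(self, ip: str, cookie_token: Optional[str] = None) -> bool:
        keys = {self._digest("ip:" + ip)}
        if cookie_token:
            keys.add(self._digest("cookie:" + cookie_token))
        if keys & self._seen:
            return False  # this IP or this browser token was already admitted
        self._seen |= keys
        return True
```

One caveat is that students on a shared campus or household network may present the same public IP address, so a strict IP rule like this one can turn away legitimate participants. Flagging repeat entries for later review, instead of blocking them, trades some protection for fewer false rejections.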

Conclusion

The unique challenges of studies recruiting via social media often raise concerns about safety, efficiency, generalizability, and validity. We demonstrate the feasibility of implementing a rigorous trial that engaged and retained a hard-to-reach group, using engagement tracking to quantify sample representativeness and post hoc protections to minimize invalid records. Partnering with multiple platforms and using off-the-shelf tools optimizes sample diversity, increasing external validity with no cost to internal validity. In light of our success in virtually recruiting for and conducting a comparative effectiveness trial targeting a traditionally hard-to-reach population, researchers may wish to implement similar strategies in both observational and experimental studies, the results of which can further be used to evaluate the effectiveness and acceptability of these methods.

Supplemental Material

Online_Appendix – Supplemental material for Clinical Trial Recruitment and Retention of College Students with Type 1 Diabetes via Social Media: An Implementation Case Study

Supplemental material, Online_Appendix for Clinical Trial Recruitment and Retention of College Students with Type 1 Diabetes via Social Media: An Implementation Case Study by Lauren E. Wisk, Eliza B. Nelson, Kara M. Magane and Elissa R. Weitzman in Journal of Diabetes Science and Technology

Footnotes

Abbreviations

AYA, adolescent(s) and young adult(s); CDN, College Diabetes Network; CGM, continuous glucose monitoring; GA, Google Analytics; HbA1c, hemoglobin A1c; T1D, type 1 diabetes.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We wish to acknowledge the generous funding support for this project provided by the Boston Children’s Hospital Research Faculty Council Awards Committee Pilot Research Project Funding FP01017994 (co-PIs: LEW and ERW), the Agency for Healthcare Research and Quality K12HS022986 (PI: Finkelstein), and the Conrad N. Hilton Foundation Clinical Research 20140273 (co-PIs: Levy and ERW). The trial was registered at ClinicalTrials.gov (#NCT02883829).


References
