Abstract

Purpose:

In recent years, generative artificial intelligence (AI) systems have transformed patients’ access to medical information and education. As growing shortages of general and subspecialty physicians lengthen the wait for patients to see a physician, we aim to understand how effectively different AI platforms can respond to questions asked by parents about both operative and nonoperative scoliosis.

Methods:

A survey of 31 of the most commonly asked questions regarding scoliosis, with responses from ChatGPT, Google Gemini, and Microsoft Copilot, was administered to four board-certified orthopedic surgeons with fellowship training in either pediatric or spine surgery. The reviewers rated each output on a Likert scale of 1–5, with 5 indicating an excellent response and 1 a poor response. Pairwise comparisons were used for analysis.

Results:

All three generative AI technologies performed well, with an overall mean rating of 3.4, which falls between good and very good on the Likert scale provided. ChatGPT performed best, with a mean rating of 4.0; Google Gemini ranked second with a mean rating of 3.1, and Copilot ranked third, also at 3.1 to one decimal place. Comparisons of ChatGPT with Gemini and with Copilot each revealed statistically significant differences (p < 0.001), with no statistically significant difference between Gemini and Copilot.

Conclusion:

In response to common scoliosis questions asked by parents, ChatGPT, Microsoft Copilot, and Google Gemini were scored highly by our spine team, which has important implications for their future use.

Introduction

In recent years, generative artificial intelligence systems such as ChatGPT, Google Gemini, and Microsoft Copilot have transformed the landscape of patient education. These systems allow patients to ask questions and receive real-time answers within the platform, drawing on data fed into the model over many years. Generative AI is defined as a machine-learning model that learns from existing data to generate new outputs.1 Because these interfaces are easy to access and prompt, it is thought that the population with internet access will increasingly turn to such technologies for information on health-related conditions. As growing shortages of general and subspecialty physicians lengthen the wait for patients to reach a physician and the resulting information about their medical conditions, it is natural for patients to turn to generative AI to bridge those gaps in access, particularly as AI tools increasingly replace common search engines.

Scoliosis is a difficult condition for parents to understand, especially considering the vast variety of information on surgical and nonsurgical treatment. Nonoperative interventions range from bracing and therapy to more unconventional methods such as chiropractic interventions. Conventionally, surgery is indicated for progressive curves of significant magnitude, with the threshold varying based on the patient’s degree of skeletal maturity and progression risk. Given the complexity of the condition, we wanted to evaluate how effectively different AI technologies can respond to questions asked by parents about both operative and nonoperative scoliosis. Board-certified pediatric orthopedic surgeons rated the responses to these questions to determine whether they were accurate and reliable. We also used different AI software (Gemini, Copilot, and ChatGPT) to understand whether medical accuracy varies between them. To our knowledge, no other study in the orthopedic surgery literature has made a head-to-head comparison of these three AI platforms.

Methods

A thorough internet search was done to compile 55 questions from a variety of sources, including the American Academy of Orthopaedic Surgeons, the Pediatric Orthopaedic Society of North America, Scoliosis Research Society content, different orthopedic clinics, and general search engine searches for scoliosis.2–5 With the help of board-certified orthopedic surgeons with fellowship training in pediatrics and spine, 31 questions were included in the final questionnaire. Each question was run through the three generative AI technologies (ChatGPT, Google Gemini, and Microsoft Copilot) independently, using the prompt “answer each of these questions separately” together with the question list.

Once all the outputs were generated, they were compiled into a Google Form (Figure 1). Each item in the form showed a question and one of the generated answers (Figure 2). The surgeons were instructed to rate each generated response on a Likert scale from 1 to 5, with 1 being poor, 2 fair, 3 good, 4 very good, and 5 excellent. The order of the generated responses was randomized throughout the questionnaire to reduce bias. The generative AI technologies studied were also not named; reviewers were told only that three systems were being compared.

Figure 1. Description at the top of the Google Form given to the orthopedic surgeons, with instructions on how to rate the responses.

Figure 2. Example of one of the scoliosis questions asked by parents, as shown in the Google Form.

Four surgeons, all from our institution, evaluated the responses. Once all ratings were collected, each response was matched to the AI technology that generated it, and statistical analysis was performed.
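The matching step described above can be sketched as follows. This is a minimal illustration, not the authors' actual analysis script: the item identifiers, reviewer labels, the `answer_key` mapping, and every rating value are invented placeholders. Only the 1 to 5 Likert labels come from the survey instructions.

```python
from collections import defaultdict
from statistics import mean

# Likert labels as given in the survey instructions.
LIKERT_LABELS = {1: "poor", 2: "fair", 3: "good", 4: "very good", 5: "excellent"}

# Hypothetical answer key: blinded survey item -> platform that produced it.
answer_key = {"item_01": "ChatGPT", "item_02": "Gemini", "item_03": "Copilot"}

# Hypothetical ratings as (reviewer, blinded item, score). Values are invented.
ratings = [
    ("R1", "item_01", 4), ("R2", "item_01", 5),
    ("R1", "item_02", 3), ("R2", "item_02", 3),
    ("R1", "item_03", 2), ("R2", "item_03", 4),
]


def mean_by_platform(ratings, answer_key):
    """Unblind each rating via the answer key and average per platform."""
    scores = defaultdict(list)
    for _reviewer, item, score in ratings:
        if score not in LIKERT_LABELS:
            raise ValueError(f"score {score} is outside the 1-5 scale")
        scores[answer_key[item]].append(score)
    return {platform: mean(values) for platform, values in scores.items()}


platform_means = mean_by_platform(ratings, answer_key)
```

Keeping the answer key separate from the ratings mirrors the blinding described above: reviewers only ever see item identifiers, and platform names are attached after data collection.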

Results

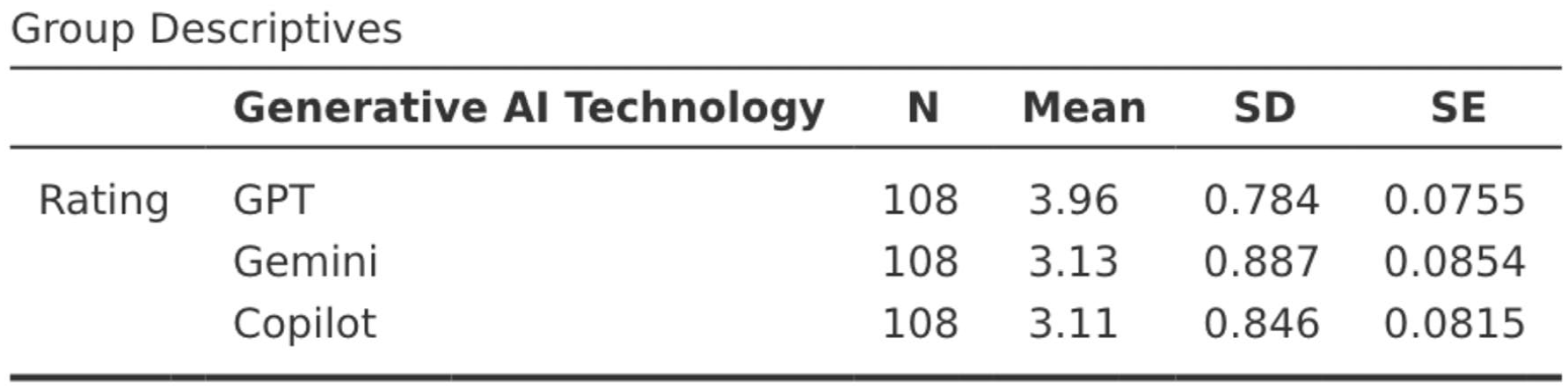

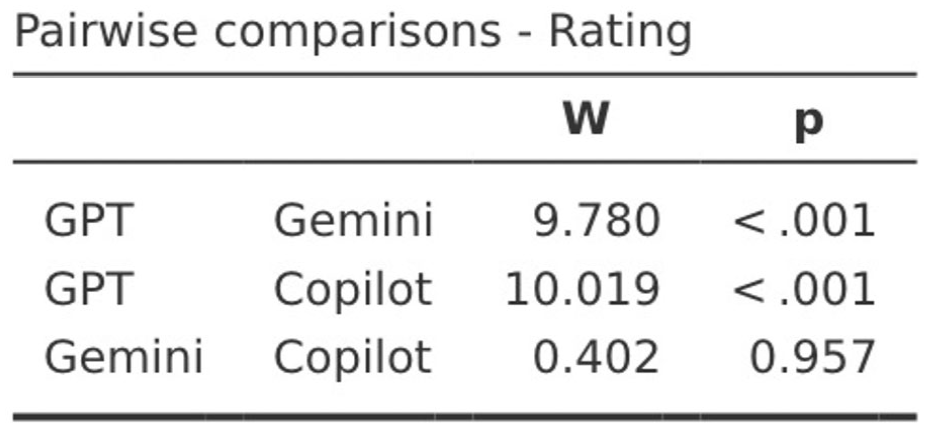

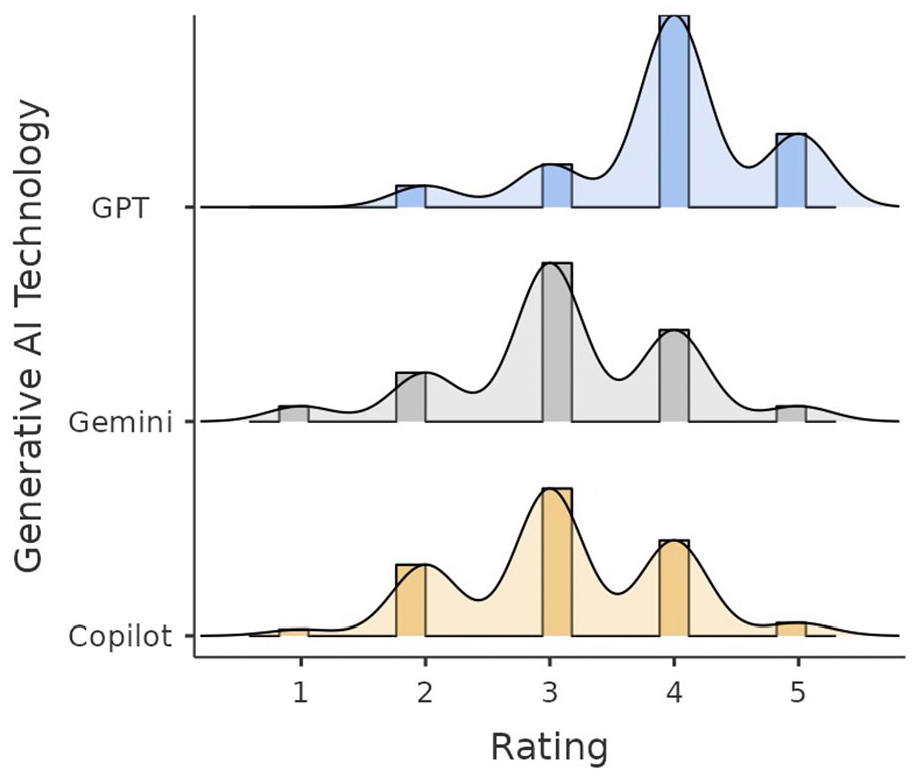

All three generative AI technologies performed well, with an overall mean rating of 3.4, which falls between good and very good on the scale provided. ChatGPT performed best of the three, with a mean rating of 4.0; Google Gemini ranked second with a mean of 3.1, and Copilot ranked third, also at 3.1 to one decimal place (Figure 3). Pairwise comparisons were conducted: ChatGPT compared with Gemini and with Copilot revealed statistically significant differences (p < 0.001), whereas very little difference was noted between Gemini and Copilot (Figure 4). The ratings for all three generative AI technologies (ChatGPT, Gemini, Copilot) had a high Shapiro–Wilk statistic, consistent with a normal distribution (Figure 5).

Figure 3. Group descriptives with mean and standard deviations for each generative AI technology.

Figure 4. Pairwise comparisons with each generative AI technology.

Figure 5. Graph that shows each generative AI technology with number of responses for each category rating.
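The manuscript does not state which statistical test produced the pairwise comparisons, so the sketch below swaps in one plausible option: an exact paired sign-flip permutation test on per-question scores. The function name `paired_sign_flip_p` and all score values are hypothetical and are not the study data. With three pairwise comparisons, a multiple-comparison correction may also be appropriate.

```python
from itertools import product


def paired_sign_flip_p(a, b):
    """Two-sided exact sign-flip permutation p-value for paired samples.

    Under the null hypothesis each paired difference is equally likely to be
    positive or negative, so every sign assignment is enumerated and the
    fraction with a summed difference at least as extreme as observed is
    returned.
    """
    if len(a) != len(b) or not a:
        raise ValueError("samples must be non-empty and of equal length")
    diffs = [x - y for x, y in zip(a, b)]
    observed = abs(sum(diffs))
    extreme = 0
    total = 0
    for signs in product((1, -1), repeat=len(diffs)):
        total += 1
        if abs(sum(s * d for s, d in zip(signs, diffs))) >= observed:
            extreme += 1
    return extreme / total


# Hypothetical per-question scores for eight questions (invented values).
chatgpt = [4, 5, 4, 4, 5, 4, 3, 4]
gemini = [3, 3, 4, 3, 3, 2, 3, 3]
copilot = [3, 4, 3, 3, 3, 3, 2, 3]

p_chatgpt_gemini = paired_sign_flip_p(chatgpt, gemini)
p_gemini_copilot = paired_sign_flip_p(gemini, copilot)
```

Full enumeration costs 2^n evaluations, so it is practical only for small n. For all 31 questions, a random sample of sign assignments or a standard paired test from a statistics library would be the usual substitute.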

Discussion

A few studies have examined generative AI capabilities in medicine. Ayers et al. found that ChatGPT provided quality and empathetic responses to various medical questions from a social media forum.6 Two studies have analyzed ChatGPT in pediatric orthopedic surgery, addressing questions on in-toeing and slipped capital femoral epiphysis.7,8

The high rating of 4.0 for ChatGPT and the ratings above 3 (good) for both Microsoft Copilot and Google Gemini have strong implications for the use of generative AI technology to help answer medical questions. With longer wait times for doctors’ appointments, especially in orthopedics, which saw a 42% increase in wait times between 2017 and 2022, AI can become a valuable resource for answering questions, helping parents feel more at ease and keeping them informed.10 Additionally, as the population grows, patients have less direct access to healthcare information. By validating generative AI as an accurate source of medical information on scoliosis, we can begin to bridge the gap in knowledge.

This study also considered different combinations to understand the variation between survey takers, namely interobserver and intraobserver reliability. Among the four reviewers recruited for the study, all board-certified orthopedic spine surgeons, there was a high level of intraobserver reliability. Four of the ninety-three total questions in the survey served as tests to see whether reviewers maintained their rating for the same inputs. This was done in different combinations, for example by showing two ChatGPT responses in place of one from Google Gemini, and was repeated for each platform. These four questions (12 total inputs) were excluded from the mean calculation. One of the four reviewers rated a repeated input one point higher on two occasions, while the other three reviewers maintained the same rating for the same input.
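As a rough worked example of the intraobserver check, the sketch below computes exact agreement on repeated inputs. It assumes, which the text does not confirm, that each of the four reviewers rated all 12 repeated inputs. The rating values and reviewer labels are invented; only the pattern (one reviewer shifting up by one point twice, three reviewers unchanged) follows the description above.

```python
def exact_agreement(first, second):
    """Fraction of repeated inputs that received the identical rating."""
    if len(first) != len(second) or not first:
        raise ValueError("rating lists must be non-empty and of equal length")
    matches = sum(1 for a, b in zip(first, second) if a == b)
    return matches / len(first)


# Hypothetical first-pass ratings for the 12 repeated inputs (invented values).
first_pass = [4, 3, 3, 5, 4, 3, 4, 2, 3, 4, 3, 3]

# Three reviewers repeat their ratings exactly; one rates two inputs a point higher.
stable_repeat = list(first_pass)
shifted_repeat = list(first_pass)
shifted_repeat[0] += 1
shifted_repeat[5] += 1

second_pass = {
    "R1": shifted_repeat,
    "R2": stable_repeat,
    "R3": stable_repeat,
    "R4": stable_repeat,
}

per_reviewer = {
    name: exact_agreement(first_pass, repeat) for name, repeat in second_pass.items()
}
overall_agreement = sum(per_reviewer.values()) / len(per_reviewer)
```

Under these assumptions, the one reviewer who changed ratings agrees on 10 of 12 inputs and the overall exact agreement is 46 of 48 repeated ratings. A chance-corrected statistic such as weighted kappa would be a more formal measure.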

The three highest-rated responses (4.5/5) by ChatGPT were to the questions “Does a leg length difference cause or worsen the curve in my child with scoliosis?”, “What happens if no treatment for scoliosis is done? Will the curve get worse?”, and “How long will my child need to wear the brace for scoliosis?” The three lowest-rated ChatGPT responses (3.3/5) were to the questions “How does sleeping work with scoliosis?”, “Can scoliosis curves get better on their own?”, and “What health problems might my child have later in life as a result of scoliosis?”

The top-rated response (4.0/5) by Google Gemini was to the question “For what degree curve of the spine do you decide to prescribe a brace for scoliosis?” The lowest-rated responses (2.0/5) by Google Gemini were to the questions “Does having scoliosis make my child more prone to osteoporosis?” and “How long will my child need to wear the brace for scoliosis?”

The four top-rated responses (3.8/5) for Microsoft Copilot were to the questions “Will scoliosis prevent my child from playing sports or doing certain activities?”, “What are conservative treatment options for scoliosis?”, “Will physical therapy help my child’s scoliosis?”, and “How much pain will my child be in after surgery, and how long is recovery?” The lowest-rated responses (2.5/5) for Microsoft Copilot were to the questions “How should I take care of a child with scoliosis?”, “What is a Cobb angle?”, “Do scoliotic curves progress?”, “How long will my child need to wear the brace for scoliosis?”, and “Can my child wear their scoliosis brace at night only?”

The difference between the highest-rated and lowest-rated responses for each platform did not follow any predictable pattern, and each response likely reflects the specifics of the underlying algorithm driving that technology. We initially hypothesized that all the platforms would share similar best and worst questions; however, our study revealed variation across the generative AI technologies.

One limitation of our current study is that we had to limit the questions to 31 in total. A more comprehensive question bank might have been even more telling of the differences between the platforms studied; however, to keep the survey concise enough to be reasonably completed, we chose a representative yet finite number. Another limitation is that generative AI technology is constantly being updated and is evolving rapidly. The versions of the platforms used in this study are in a constant state of improvement, so the quality of responses could easily differ even by the time this article is published. However, given that this is a novel study comparing these technologies, it was appropriate to make an assessment to help further the understanding of what these technologies can provide our patient population in terms of content quality and value.

This study has many implications for the future. Previous studies have had similar results, but to our knowledge, within the pediatric orthopedic space, this is the first study of its kind to examine AI in relation to scoliosis and to compare the effectiveness of different common AI technologies against each other. The hope is that future versions of these technologies will also offer validation by citing source material, an internal control to assess quality, and the opportunity to make iterative changes based on expert opinion, in order to provide more reliable and accurate responses to our patients and families. We hope that this study inspires others to understand the different AI technologies available and take advantage of their capabilities in daily practice.

Conclusion

Generative AI technology has been rising in popularity and has started to be used more often in a medical context. In response to common scoliosis questions asked by parents, ChatGPT, Microsoft Copilot, and Google Gemini were scored highly by our spine team, with ChatGPT scoring best overall in the blinded opinion of our expert panel. This technology has changed the way that patients and family members access information and has great potential for improving patients’ access to quality, evidence-based information in the future.

Supplemental Material

Supplemental material, sj-pdf-1-cho-10.1177_18632521251359098 for Comparing the effectiveness of generative AI technology in commonly asked scoliosis questions by Adarsh Suresh, Jacob Siahaan, Rex AW Marco, Eric Klineberg, Timothy Borden, Rohini Vanodia, Lindsay Crawford, Shah-Nawaz Dodwad, Shiraz Younas and Surya Mundluru in Journal of Children’s Orthopaedics

Footnotes

Author contributions

All authors contributed to the study conception and design. Material preparation, data collection and analysis were performed by Adarsh Suresh and Surya Mundluru. Jacob Siahaan, department statistician, validated the statistics. Rex AW Marco, Eric Klineberg, Timothy Borden, and Surya Mundluru completed the survey. Rohini Vanodia, Lindsay Crawford, Shah-Nawaz Dodwad, and Shiraz Younas helped with the manuscript and editing. The first draft of the manuscript was written by Adarsh Suresh and all authors commented on previous versions of the manuscript. All authors read and approved the final manuscript.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Adarsh Suresh and Jacob Siahaan have no conflicts of interest to disclose. Rex Marco has the following disclosures: DePuy, A Johnson & Johnson Company: Paid presenter or speaker. Globus Medical: IP royalties. Eric Klineberg has the following disclosures: Agnovos, AONA Spine, DePuy Synthes, IMAST Chair, Medtronic, MMI, Relateable, Seaspine, SI Bone, SRS, Stryker Spine.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Compliance with ethical standards

No human subjects or patient information was accessed, given the survey nature of the study.

The study involved no patients. It was a survey of the listed authors, who agreed to have their results published.
