Abstract

Patient satisfaction is critical to a health organization’s sustainability, making patient feedback an important source of insight for improving healthcare delivery. This study analyzes 31,054 patient responses across seven healthcare units to identify factors that influence patient satisfaction and to examine how satisfaction is reflected in qualitative comments across diverse care settings. Deep learning methods were employed to extract features related to sentiment and topics from patient comments. Linear regressions were used to evaluate the explanatory power of both quantitative ratings of healthcare service attributes and features extracted from qualitative comments, accounting for unit-specific healthcare settings. Our results reveal that trust and communication factors are the strongest predictors of patient satisfaction. However, the explanatory power of quantitatively rated survey items shows clear variations across units, suggesting that standardized survey questions inadequately capture patient experience in specific contexts. Text analysis uncovered important unit-specific priorities, such as prescription management in Medical Practice and result efficiency in OP Lab, that were absent from the rated survey items. Patients who mentioned topics not covered in the rated survey items tended to report lower satisfaction scores, indicating potential service gaps. The integration of quantitative ratings and qualitative comments in our analysis significantly improved explanatory power in six of the seven units. Based on the identified key determinants of patient satisfaction and emerging concerns from patient comments, we propose a tiered approach for healthcare practitioners to leverage both forms of feedback for more targeted, emotionally responsive, and context-aware improvements in patient care. 
As healthcare delivery continues to evolve, our findings also underscore the value of flexible, multi-modal feedback strategies—supported by an adaptive analytical framework—that can inform future research and system-level responses to the diverse and shifting expectations of patients.

Keywords

Introduction

Patient satisfaction is important for both treatment outcomes and healthcare providers’ financial performance, as satisfied patients are more likely to choose the same healthcare providers for future care and recommend the provider to family and friends.1,2 Assessment of patient satisfaction has identified multiple aspects of health service delivery that could influence it, such as provider communication, care coordination, wait times, service accessibility, and administrative rules. 3 Past patient satisfaction research has primarily relied on surveys where various health service attributes were measured quantitatively in the form of patient ratings.4,5 Although quantitative surveys allow standardized data collection and analysis, they often overlook contextual details or emerging patient concerns, especially in today’s evolving healthcare contexts shaped by rapid technological advancements. 6 Patient comments collected through open-ended survey questions can reveal contextual details missing from quantitative surveys and offer insights into why patients gave certain ratings. Indeed, analyzing qualitative comments provides a deeper understanding of patient experiences, uncovering factors that influence satisfaction beyond what quantitative measures capture. This approach helps healthcare providers better address patient needs and improve care quality by incorporating patient voices into service evaluation and development. 7

Although quantitative and qualitative patient satisfaction research has contributed greatly to our understanding of patient satisfaction, research opportunities remain. First, most studies have focused on single specialty units,8–10 thereby limiting generalizability and hindering comprehensive comparisons across diverse healthcare settings.11,12 Second, existing research has predominantly relied on either quantitative ratings13–15 or qualitative comments and reviews,11,16,17 rarely examining how these two forms of patient feedback can jointly influence patient satisfaction. We argue that integrating both data types is crucial because scale ratings may overlook nuanced patient concerns, while comments alone often lack the quantitative clarity needed for actionable insights. Combining scale ratings and patient comments could provide a holistic view, highlighting potential synergies and uncovering new service dimensions not captured by rated survey items. Third, qualitative patient satisfaction research has primarily relied on manual or software-assisted traditional coding methods, such as thematic coding, phenomenology, grounded theory, or content analysis. 18 These methods are most effective when the qualitative dataset is not overly large. Text analytics has expanded the capabilities of traditional coding methods and has been increasingly used in recent years to extract deeper insights from patients’ comments and online reviews.19,20 However, while traditional text analytics methods offer valuable baseline capabilities, deep learning approaches—such as Bidirectional Encoder Representations from Transformers (BERT)—provide more advanced means of capturing the nuanced and contextual nature of patient experiences and emerging concerns in today’s evolving healthcare environment.21,22 BERT-based models can capture contextual relationships and semantic subtleties that keyword-based approaches may overlook. 
This is particularly important in healthcare, where the same term may carry different meanings depending on its context. Specifically, sentiment analysis powered by deep learning benefits from semantic embeddings that account for contextual meaning, enabling a more accurate interpretation of the emotional tone in patient feedback than rule-based or lexicon-based approaches. 23 BERT’s bidirectional processing enhances this understanding by accounting for negations and complex sentence structures commonly found in patients’ comments. Furthermore, BERT models can identify emerging themes and sentiments without relying on predefined lexicons or rules, thereby supporting the discovery of novel patient concerns that traditional data mining techniques might miss.

The limitations in the current literature identified above highlight the need for a comprehensive, multi-unit approach that integrates quantitative ratings and patients’ comments to understand the evolving patient experience and satisfaction. To address these limitations, we analyze post-care surveys of patients concerning their patient experiences in seven healthcare units within a health system. The dataset includes both quantitatively rated questionnaire items that elicited patient responses with respect to various health service attributes as well as open-ended questions, allowing us to examine how ratings of those health service attributes and qualitative comments relate to patient satisfaction. Specifically, we investigate the following research questions:

• What key determinants drive patient satisfaction in the healthcare environment, and how do these determinants vary in importance across diverse healthcare settings?
• Do qualitative comments uncover insights not revealed in quantitatively rated survey items? If yes, what additional insights do they uncover about patient satisfaction?
• How can we improve questionnaire design and patient experience based on the analysis of patient feedback?

By combining rated survey responses with deep learning–based analysis of patient comments across multiple units, this study offers both methodological and managerial contributions. Methodologically, we demonstrate how integrating quantitative ratings with qualitative feedback can reveal both core and emerging areas of patient concern that may be overlooked or insufficiently captured by quantitatively rated survey items alone. From a managerial perspective, our findings can guide healthcare administrators in refining or redesigning patient satisfaction measurement and analytical tools that are both responsive to evolving patient needs and comparable across diverse clinical settings.

In the following sections, we detail our data collection and analytical approach, describe the results, and discuss their implications for healthcare providers adapting to a rapidly changing clinical landscape. We then acknowledge the study’s limitations, suggest avenues for future research, and conclude the paper.

Data and methods

Our dataset comprises 31,054 post-care survey responses from patients concerning their patient experiences at seven healthcare units in a university health system in the US, collected in December 2020 by the health system as part of their healthcare delivery processes. The patient experience surveys were designed by the healthcare system’s patient experience team in collaboration with National Research Corporation, a professional survey vendor specializing in healthcare feedback measurement. Data collection utilizes an invitation-based, multi-modal approach including email, text messaging, interactive voice recording, and mail distribution, rather than public online review platforms. Data privacy is a critical concern in healthcare research, especially when handling sensitive patient information. In line with best practices highlighted by Lin et al., 24 our study adheres strictly to privacy and security protocols. Our research protocol was approved by the Institutional Review Board, allowing us to use this patient survey dataset. Given the differences in service settings and the tailored design of questionnaires, we analyzed the survey responses separately for each healthcare unit. Several units included in this study have received Magnet Recognition, an award granted by the American Nurses Credentialing Center to recognize excellence in nursing practice.

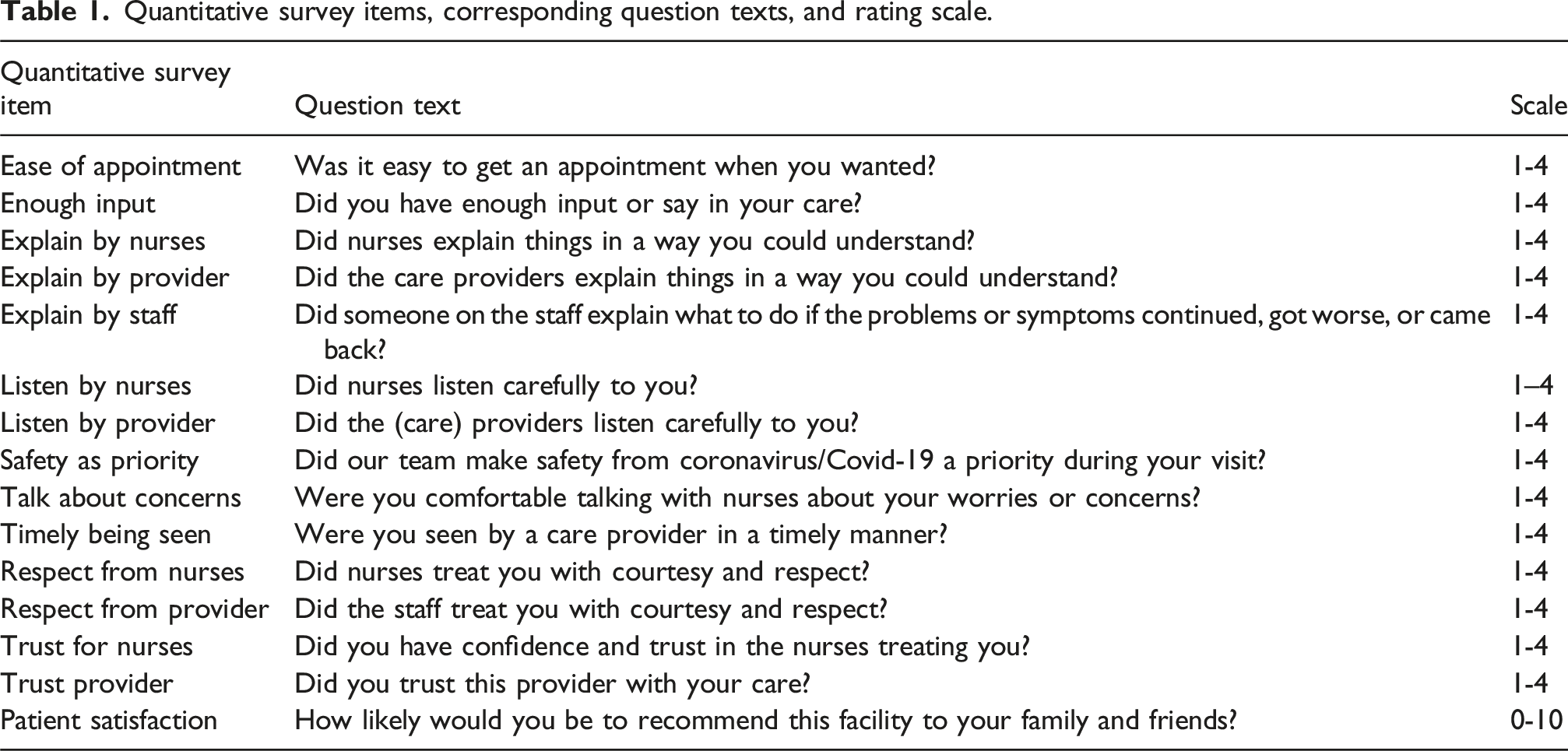

Table 1. Quantitative survey items, corresponding question texts, and rating scale.

Table 2. Characteristics of survey responses.

Note: Med, Prac, Mag, OP, and Rehab refer to Medicine, Practice, Magnet, Outpatient, and Rehabilitation, respectively.

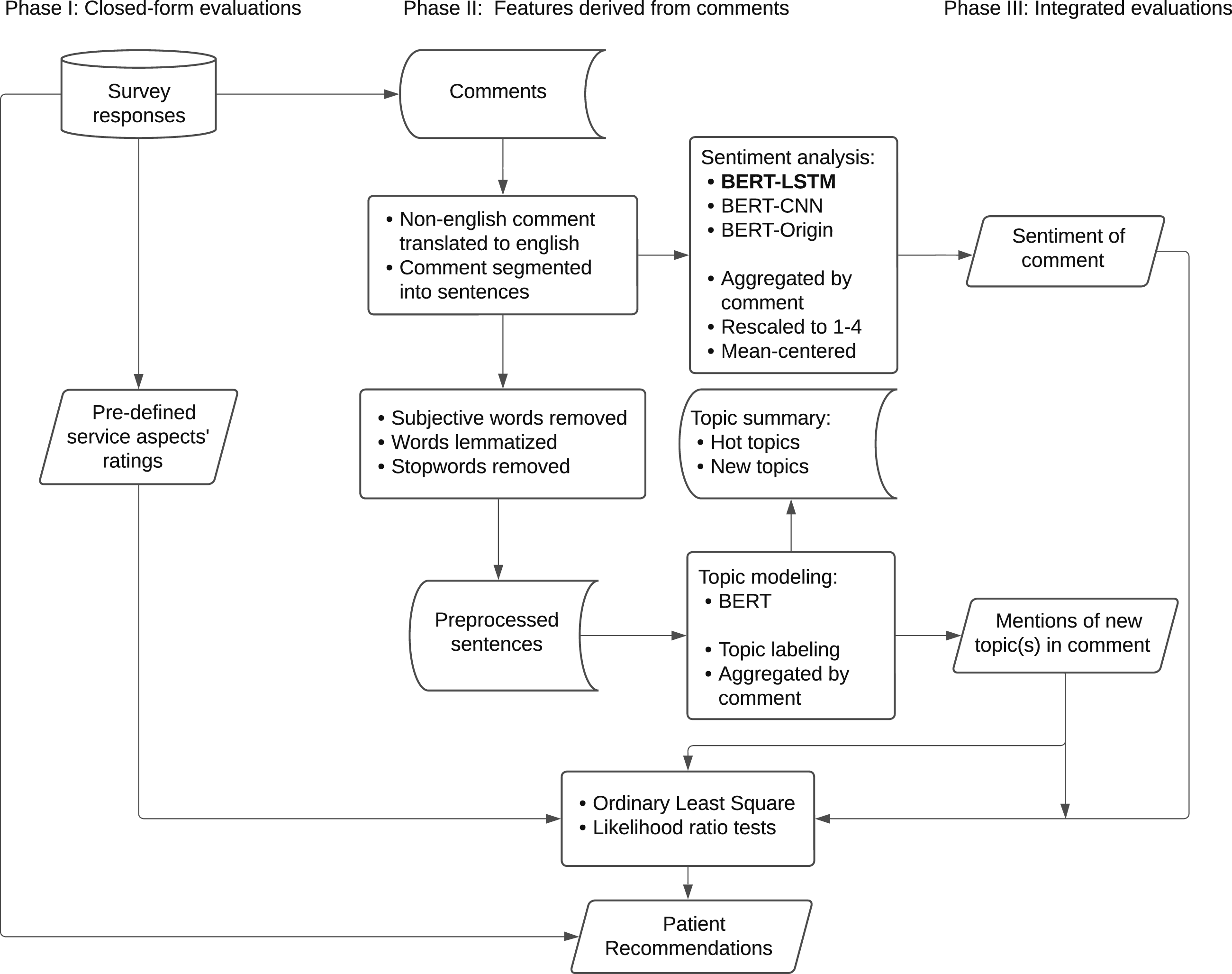

Figure 1 illustrates the three-phase analytical framework developed to address the research questions. Phase I examines the association between service attributes and patient satisfaction. Phase II focuses on extracting informative features from qualitative comments. This phase consists of three primary analytical components: text preprocessing, sentiment analysis, and topic modeling. In Phase III, we further examine how service attribute ratings and qualitative comments jointly explain patient satisfaction. Details of each phase are provided in the following subsections.

Figure 1. Analytical framework for the medical survey data.

Service attribute ratings

The existing surveys at the studied health system assess four major aspects of healthcare service: communication, trust, safety, and accessibility. Communication includes measures such as “listen carefully by nurses and/or providers” (7 of 7 units; Medical Practice Magnet includes both items (listen carefully by nurses and providers), while all other units have one), “treat with respect from nurses, staff, and/or providers” (6 of 7; not included in OP Rehabilitation), “enough input during care” (5 of 7; not included in Medical Practice or Urgent Care), “comfort in talking about concerns with nurses,” and “explanations provided understandably by nurses, staff, and/or providers” (3 of 7; included only in the Magnet units of Medical Practice, Emergency, and OP Rehabilitation). Trust is assessed by the item “trust in providers and/or nurses” (5 of 7; not included in OP Rehabilitation or OP Lab). Safety is measured through the item “prioritization of safety” (7 of 7). Accessibility evaluates factors such as “ease of scheduling an appointment” (4 of 7; not included in Emergency Magnet, Urgent Care, or OP Lab) and “timeliness of being seen” (1 of 7; only present in Emergency Magnet). Based on healthcare settings, units such as Medical Practice, Medical Practice Magnet, Emergency Magnet, and Urgent Care involve both providers and nurses/staff in patient interactions, whereas others, such as OP Rehabilitation units and OP Lab, primarily involve nurses and/or staff, with limited or no direct provider involvement.

To examine the associations between ratings of those service attributes and patient satisfaction, we fitted ordinary least squares (OLS) regressions for each healthcare unit and examined the statistical significance and coefficients of the predictors as well as the total variance explained. The analytical model employed follows the general linear form specified in equation (1):
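The equation itself is not reproduced in this text. A generic form consistent with the description above, written in notation that is assumed here rather than taken from the original, would be:

$$
\text{Satisfaction}_{i} = \beta_{0} + \sum_{k=1}^{K} \beta_{k}\,\text{Rating}_{ik} + \varepsilon_{i}
$$

where $i$ indexes survey responses within a healthcare unit, $\text{Rating}_{ik}$ is the rating of the $k$-th service attribute included in that unit's survey, $K$ is the number of rated items in that unit, and $\varepsilon_{i}$ is the error term. Because the set of rated items differs by unit, $K$ and the coefficients $\beta_{k}$ are unit-specific.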

Extracting features from qualitative comments

Our analysis of patient comments was informed by recent methodological advances in text analytics. Text mining techniques can reveal underlying patterns in qualitative data that complement quantitative metrics, providing a framework for integrating mixed data types in topic identification. 21 Meanwhile, benchmarks in sentiment classification have demonstrated the effectiveness of deep learning models, with LSTM networks achieving up to 91% accuracy in identifying sentiments of customer feedback. 23 Building on these developments, we employed deep learning methods to extract both topic and sentiment information from patient comments, identify concerns not covered in quantitative ratings in the survey, and examine how these features influence overall patient satisfaction.

Text data preprocessing

To prepare patient comments into an analyzable format for sentiment extraction and topic modeling, we implemented the following preprocessing steps. First, while most of the comments to open-ended questions were in English, some were in other languages. Therefore, all non-English texts were translated into English to ensure consistency and inclusiveness in the analysis. Next, we performed sentence segmentation, dividing each comment into individual sentences as the unit of analysis. This step is essential for both sentiment analysis and topic modeling, as it facilitates a more granular examination by accounting for variations in emotions and topics across sentences within the same comment, thereby enhancing analytical precision and coherence. We conducted additional preprocessing steps to improve data quality for topic modeling, including converting text to lowercase, removing stop words, eliminating punctuation, and excluding heavily subjective terms to ensure that subsequent topic clusters are not dominated by positive or negative adjectives. 25 These steps support the identification of more meaningful themes.
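The segmentation and cleaning steps described above can be sketched as follows. This is a minimal illustration, not the pipeline used in the study: the regex-based sentence splitter, the tiny `STOP_WORDS` and `SUBJECTIVE_TERMS` lists, and the function names are all assumptions made for the example, and the translation step is omitted.

```python
import re
import string

# Hypothetical, deliberately tiny word lists; a real pipeline would use fuller ones.
STOP_WORDS = {"the", "a", "an", "was", "were", "is", "and", "to", "of",
              "my", "i", "me", "but", "for", "very"}
SUBJECTIVE_TERMS = {"great", "good", "bad", "terrible", "excellent", "awful"}


def split_sentences(comment):
    """Naive sentence segmentation: split after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", comment.strip())
    return [p for p in parts if p]


def clean_for_topics(sentence):
    """Lowercase, strip punctuation, then drop stop words and subjective terms."""
    lowered = sentence.lower()
    no_punct = lowered.translate(str.maketrans("", "", string.punctuation))
    return [tok for tok in no_punct.split()
            if tok not in STOP_WORDS and tok not in SUBJECTIVE_TERMS]


comment = "The nurse was great. I waited two hours for my prescription refill!"
sentences = split_sentences(comment)             # unit of analysis for sentiment
tokens = [clean_for_topics(s) for s in sentences]  # extra cleaning for topic modeling
```

In this sketch the raw sentences would feed sentiment analysis, while the cleaned tokens, with evaluative adjectives such as "great" removed, would feed topic modeling so that clusters form around what was discussed rather than how positively it was described.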

Sentiment analysis

Sentiment analysis is widely applied in fields such as social media monitoring and customer feedback analysis. 26 In this paper, BERT-based approaches were chosen over traditional sentiment analysis methods (such as lexicon- or rule-based approaches) because healthcare feedback requires a deep contextual understanding of domain-specific terminology and nuanced sentiment shifts within patient narratives. Traditional methods struggle with medical terminology in context and often miss sentiment shifts within comments (e.g., “Initially concerned…but ultimately satisfied”). In other words, BERT’s bidirectional contextual representations facilitate thorough semantic understanding by simultaneously analyzing both preceding and subsequent tokens, outperforming traditional unidirectional methods in capturing sentiment based on context. 27 In this research, we evaluated three deep learning approaches related to BERT for sentiment analysis: the original BERT model (BERT-Origin), 27 BERT combined with Long Short-Term Memory (BERT-LSTM), 28 and BERT integrated with Convolutional Neural Network (BERT-CNN). 29 Using our manually annotated dataset of over 9000 sentences, we trained, fine-tuned, and tested the candidate models. The BERT-LSTM model achieved the highest performance, with accuracy around 91.45% on test folds, surpassing BERT-Origin and BERT-CNN models as it effectively captured both the contextual representations from BERT and the sequential dependencies of LSTM layers.

Topic modeling

In this study, we also adopted the BERT algorithm for the topic modeling. BERT’s pre-trained language model architecture makes topic modeling more effective in capturing contextual word relationships and semantic details.27,30 Recent research also shows its improved performance in identifying coherent and emerging topics among varied document collections. 31

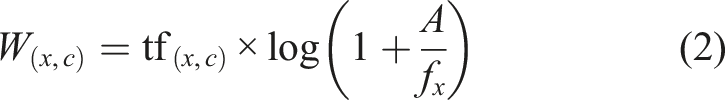

After generating sentence embeddings, we applied the Uniform Manifold Approximation and Projection (UMAP) algorithm, 32 which integrates the dimensionality reduction with the Hierarchical Density-Based Spatial Clustering of Applications with Noise (HDBSCAN) for clustering. To represent topics within the identified clusters, we adopted a bag-of-words approach, which computes the frequency of each word within a cluster. This method is particularly effective when combined with HDBSCAN, as it does not rely on predefined cluster structures, making it suitable for clusters with diverse shapes and densities. Furthermore, we refined the topic word importance using the class-based TF-IDF (C-TF-IDF) algorithm. Through this approach, each cluster is treated as a single document, and the importance of a word x within a cluster c is computed as:
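The formula is not reproduced in this text. A commonly used class-based TF-IDF weighting, the one popularized by the BERTopic library, is W(x, c) = tf(x, c) · log(1 + A / f(x)), where tf(x, c) is the frequency of word x in cluster c, f(x) is the frequency of x across all clusters, and A is the average number of words per cluster. Whether the study used exactly this weighting is an assumption. The sketch below implements it on toy clusters with raw counts and no normalization; the function names and data are hypothetical.

```python
import math
from collections import Counter


def c_tf_idf(clusters):
    """clusters: dict of cluster id -> list of tokens pooled over the cluster's sentences.

    Returns dict of cluster id -> {word: weight}, using
    weight = tf(x, c) * log(1 + A / f(x)).
    """
    counts = {c: Counter(tokens) for c, tokens in clusters.items()}
    total_freq = Counter()  # f(x): frequency of each word across all clusters
    for counter in counts.values():
        total_freq.update(counter)
    avg_words = sum(len(t) for t in clusters.values()) / len(clusters)  # A
    return {
        c: {w: tf * math.log(1 + avg_words / total_freq[w])
            for w, tf in counter.items()}
        for c, counter in counts.items()
    }


def top_words(weights, cluster, k=3):
    """Top-k words of a cluster by weight, ties broken alphabetically."""
    ranked = sorted(weights[cluster].items(), key=lambda kv: (-kv[1], kv[0]))
    return [w for w, _ in ranked[:k]]


# Toy clusters (hypothetical data, not drawn from the study).
clusters = {
    "A": ["prescription", "refill", "pharmacy", "prescription", "wait"],
    "B": ["results", "lab", "results", "wait", "portal"],
}
weights = c_tf_idf(clusters)
```

Words that are frequent within one cluster but rare elsewhere ("prescription" in cluster A) receive the highest weights, while words shared across clusters ("wait") are down-weighted, which is what makes the resulting keywords useful as topic labels.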

Extracted features

Each patient’s comment sentiment was aggregated from individual sentences into a single metric and scaled to a range of 1 to 4, aligning with the scale of quantitative ratings to ensure coefficient comparability, where higher values indicate more positive sentiment and lower values indicate more negative sentiment. Each sentence was assigned to the topic with the highest probability based on the BERT output, and topic meanings were represented using keywords and representative sentences. Topics that did not align with those service attributes were labeled as new topics, and a binary variable, “mentions of new topic(s),” was created to indicate whether a comment included a sentence introducing a new theme. An interaction term between sentiment and the new topic indicator was constructed as an additional feature.
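The construction of these comment-level features can be sketched as follows. Several details are assumptions made for illustration: sentence sentiment is taken to lie in [0, 1], sentences are aggregated with a simple mean, and the rescaling is linear. The mean-centering of sentiment before forming the interaction follows the note accompanying the integrated regression results. All names are hypothetical.

```python
from statistics import mean

# Service attributes covered by the rated items, as listed in the survey description.
KNOWN_TOPICS = {"communication", "trust", "safety", "accessibility"}


def scale_to_rating_range(score, lo=0.0, hi=1.0, new_lo=1.0, new_hi=4.0):
    """Linearly map a sentiment score from [lo, hi] onto [new_lo, new_hi]."""
    return new_lo + (score - lo) * (new_hi - new_lo) / (hi - lo)


def comment_features(sentences, known_topics):
    """sentences: list of (sentiment_score, topic_label) pairs for one comment."""
    sentiment = scale_to_rating_range(mean(s for s, _ in sentences))
    mentions_new = int(any(topic not in known_topics for _, topic in sentences))
    return {"sentiment": sentiment, "mentions_new": mentions_new}


def add_interaction(rows):
    """Mean-center sentiment across comments, then build the interaction term."""
    center = mean(r["sentiment"] for r in rows)
    for r in rows:
        r["sentiment_c"] = r["sentiment"] - center
        r["interaction"] = r["sentiment_c"] * r["mentions_new"]
    return rows


# Two toy comments (hypothetical scores and topic labels).
comments = [
    [(0.9, "communication"), (0.7, "trust")],
    [(0.2, "prescription management"), (0.6, "trust")],
]
rows = add_interaction([comment_features(c, KNOWN_TOPICS) for c in comments])
```

The second comment contains a sentence on a topic outside the rated attributes, so its indicator is 1 and its interaction term equals its centered sentiment; for the first comment the interaction term is zero.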

Integrated evaluations

To assess how quantitative ratings and qualitative comments jointly reflect patient satisfaction, we employed OLS regressions integrating both sets of measures. This approach enabled us to evaluate the additional explanatory power of variables derived from patient comments while controlling for quantitative ratings. Besides examining the significance and coefficients of individual predictors, we used likelihood ratio tests to assess the added value of textual features, including sentiment, mentions of new topics, and their interaction term. This approach also allowed us to examine the robustness of quantitative predictors when qualitative comment-derived features were included.
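For nested OLS models with Gaussian errors, the likelihood ratio statistic can be written as n · ln(RSS_restricted / RSS_full) and compared against a chi-square distribution whose degrees of freedom equal the number of added predictors. The sketch below illustrates the mechanics on toy data with a single added feature; the study adds three (sentiment, mentions of new topics, and their interaction). The pure-Python solver, the helper names, and the data are assumptions for illustration, not the authors' implementation.

```python
import math


def ols_rss(X, y):
    """Least-squares fit via the normal equations; returns the residual sum of squares.

    X is a list of rows, each already including a leading 1.0 for the intercept.
    """
    k = len(X[0])
    # Augmented normal-equation system [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    beta = [A[i][k] / A[i][i] for i in range(k)]
    return sum((yi - sum(b * x for b, x in zip(beta, row))) ** 2
               for row, yi in zip(X, y))


def chi2_sf(x, df):
    """Chi-square survival function via the series for the lower incomplete gamma."""
    a, half_x = df / 2.0, x / 2.0
    if half_x <= 0:
        return 1.0
    term = total = 1.0 / a
    for n in range(1, 10000):
        term *= half_x / (a + n)
        total += term
        if term < total * 1e-16:
            break
    lower = total * math.exp(-half_x + a * math.log(half_x) - math.lgamma(a))
    return max(0.0, 1.0 - lower)


def likelihood_ratio_test(rss_restricted, rss_full, n, df):
    """LR statistic and p-value for nested Gaussian linear models."""
    stat = n * math.log(rss_restricted / rss_full)
    return stat, chi2_sf(stat, df)


# Toy data (hypothetical): satisfaction depends on one rating and on comment sentiment.
ratings = [1, 2, 3, 4, 1, 2, 3, 4]
sentiment = [1, 1, 2, 2, 3, 3, 4, 4]
noise = [0.1, -0.1, 0.2, -0.2, -0.1, 0.1, -0.2, 0.2]
y = [1 + 2 * r + 0.5 * s + e for r, s, e in zip(ratings, sentiment, noise)]

rss_restricted = ols_rss([[1.0, r] for r in ratings], y)
rss_full = ols_rss([[1.0, r, s] for r, s in zip(ratings, sentiment)], y)
stat, p_value = likelihood_ratio_test(rss_restricted, rss_full, n=len(y), df=1)
```

A small p-value indicates that the comment-derived feature explains variation in satisfaction beyond what the rating alone captures, which is the sense in which the textual features add value.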

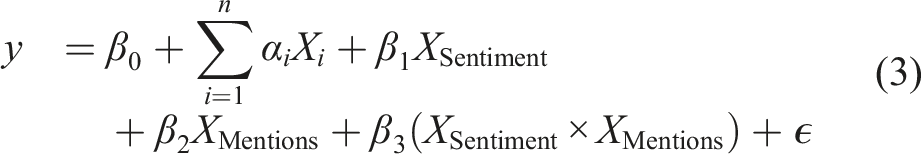

To examine the joint contribution of quantitative ratings and qualitative feedback to patient satisfaction, we extended the baseline regression model specified in equation (1) to incorporate features extracted from comments. The expanded model is formulated in equation (3):
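The equation itself is not reproduced in this text. A form consistent with the description of the comment-derived features, written in notation that is assumed here rather than taken from the original, would be:

$$
\text{Satisfaction}_{i} = \beta_{0} + \sum_{k=1}^{K} \beta_{k}\,\text{Rating}_{ik} + \gamma_{1}\,\text{Sent}_{i} + \gamma_{2}\,\text{Mention}_{i} + \gamma_{3}\,(\text{Sent}_{i} \times \text{Mention}_{i}) + \varepsilon_{i}
$$

where $\text{Rating}_{ik}$ is the rating of the $k$-th service attribute for response $i$, $\text{Sent}_{i}$ is the scaled comment sentiment, $\text{Mention}_{i}$ is the binary indicator for mentions of new topic(s), and $\varepsilon_{i}$ is the error term. Setting $\gamma_{1} = \gamma_{2} = \gamma_{3} = 0$ recovers the ratings-only model.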

Results

Quantitative ratings and patient satisfaction

Table 3. Quantitatively rated questions across different healthcare units.

*Statistical significance: p < 0.001***, p < 0.01**, p < 0.05*, and p < 0.1. Number of responses refers to the number of valid responses included in the linear regression analysis for each healthcare unit. Empty cells indicate that the corresponding quantitative survey items were not included in that particular survey pod.

Similarly, “enough input” is a consistently significant predictor across all healthcare settings that included this item (coefficients: 0.607–0.774, p < 0.001). Medical Practice and Urgent Care did not assess this item but instead measured “listen by provider”, which also demonstrates strong significance (coefficients: 0.308–0.527, p < 0.001). “Listen by nurses” was measured in Emergency Magnet (coefficient: 0.577, p < 0.001) and OP Rehabilitation Magnet (coefficient: 0.368, p < 0.01). While Medical Practice Magnet assessed both provider and nurse attentiveness, the results show that only provider attentiveness has a significant association with patient satisfaction (coefficient: 0.308, p < 0.01). The item “respect from provider” is significantly associated with patient satisfaction in Urgent Care (coefficient: 0.725, p < 0.001), whereas “respect from staff” shows strong positive effects across all units (coefficients: 0.174–1.134, p < 0.001). However, “respect from nurses” does not exhibit statistical significance in the Magnet units of Medical Practice, Emergency, or OP Rehabilitation. Additionally, “explain by provider, nurses, and/or staff” and “talk about concerns” do not show significant relationships with patient satisfaction in the Magnet healthcare units.

Among other factors, “prioritizing safety” maintains significant positive associations across most healthcare units (coefficients: 0.126–0.891, p < 0.001 – p < 0.01), except for Urgent Care. “Ease of appointment” shows weak but significant positive associations in Medical Practice, OP Rehabilitation, and OP Rehabilitation Magnet (coefficients: 0.108–0.151, p < 0.001 – p < 0.01), but is not significant in Medical Practice Magnet. “Timely being seen” also does not reach statistical significance in Emergency Magnet.

The adjusted R² ranges from 0.292 to 0.707, as shown in Table 3, with relatively lower variance explained in the OP Rehabilitation, Medical Practice, and Medical Practice Magnet departments (R² < 0.4) and higher variance explained in Emergency Magnet (R² > 0.7).

Sentiment and topics in patient comments

For each patient comment in the survey, we extracted its sentiment and identified key topics. Each key topic could correspond to an existing service attribute in the quantitative rating items, or an emerging service attribute.

Table 4. Summary NPS and sentiment statistics for responses with comments.

*NPS ranges from 1 to 10. The sentiment score (sent_score) is obtained from the BERT-LSTM sentiment analysis and then scaled to a 1-4 range (sent_scaled). N refers to the number of responses containing the comment. The first correlation is between NPS and sentiment, and the second is between NPS and sent_scaled.

Table 5. Hot topics across healthcare units.

Table 6. New topics across healthcare units.

Integrating quantitative service attributes and comment features for patient satisfaction

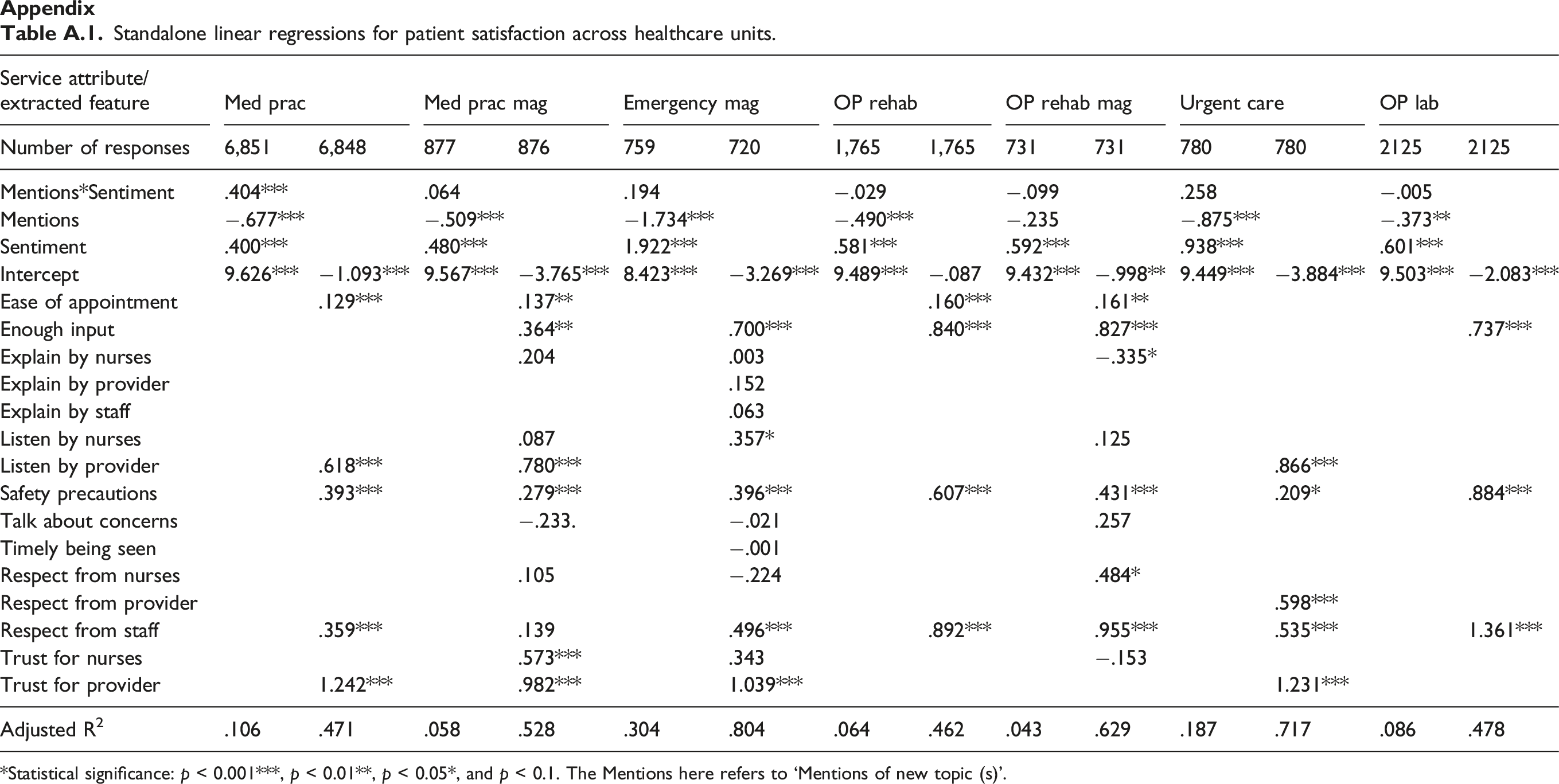

Table 7. The integrated evaluations across different healthcare units.

*Statistical significance: p < 0.001***, p < 0.01**, p < 0.05*, and p < 0.1. ‘Mentions’ refers to ‘Mentions of new topic(s)’. Sentiment was scaled and mean-centered to reduce multicollinearity given the inclusion of interaction term in models.

Based on the significance and coefficients of the quantitatively rated attributes shown in Table 7, after controlling for comment-derived features, “trust for provider” remains significant and the most important factor across the four healthcare units that measured it (coefficients: 0.958–1.186, p < 0.001). The significance of “trust for nurses” becomes stronger for Medical Practice Magnet (coefficient: 0.567, p < 0.001) and also appears for Emergency Magnet (coefficient: 0.280, p < 0.05). As in the results based on quantitatively rated service attributes from the complete survey responses in Table 3, “listen by provider” and “enough input” remain significant and important. For the Medical Practice Magnet unit, however, the influence of “enough input” weakens, with its coefficient declining from 0.774 (p < 0.001) to 0.345 (p < 0.01), while the coefficient of “listen by provider” increases from 0.308 (p < 0.01) to 0.777 (p < 0.001). In this subset of responses containing comments, the item “listen by nurses” remains significant only for Emergency Magnet, not for Medical Practice or OP Rehab Magnet. The item “respect from staff and/or provider” is consistently statistically significant across healthcare units (coefficients: 0.336–1.32, p < 0.001) except for Medical Practice Magnet. The previously insignificant item “respect from nurses” becomes significant in OP Rehab Magnet, with its coefficient increasing from −0.61 to 0.543 (p < 0.001). For OP Rehab Magnet, the coefficient of “explain by nurses” grows in magnitude while becoming more negative (from −0.086 to −0.324, p < 0.05), indicating a stronger negative association. The item “explain by provider” remains insignificant for Emergency Magnet. “Safety as priority” remains significant across units (coefficients: 0.259–0.872, p < 0.001) and becomes significant for Urgent Care as well (coefficient: 0.182, p < 0.05). “Ease of appointment” remains weakly positively associated with satisfaction and also becomes significant for Medical Practice Magnet. “Timely being seen” remains insignificant for Emergency Magnet.

Among the features extracted from patient comments, sentiment exhibits a positive association with patient satisfaction in all units, reaching statistical significance in Medical Practice, OP Rehabilitation, Urgent Care, and OP Lab, but not in Magnet departments. The feature “mentions of new topic(s)” shows a statistically significant negative association with patient satisfaction in four units: Medical Practice, Medical Practice Magnet, OP Rehabilitation, and Urgent Care (coefficients between −0.249 and −0.179, p < 0.001 to p < 0.05). The interaction term between sentiment and new topic mentions is significant in three units, but the direction of moderation varies by healthcare setting. The interaction is significantly positive for Medical Practice (coefficient: 0.194, p < 0.001) and Emergency Magnet (coefficient: 0.351, p < 0.05), and positive with marginal significance for Urgent Care (coefficient: 0.169, p < 0.1). For OP Rehab (coefficient: −0.191, p < 0.05), by contrast, there is a significant negative moderation effect, indicating that the influence of sentiment on satisfaction is weaker when new topics are present. For OP Rehab Magnet (coefficient: −0.018) and OP Lab (coefficient: −0.088), the negative moderation is small and statistically insignificant.
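To make the moderation reading explicit, assume the integrated model is linear in sentiment, the new-topic indicator, and their product, with coefficients denoted here (as an assumption) by $\gamma_{1}$, $\gamma_{2}$, and $\gamma_{3}$. The slope of satisfaction with respect to sentiment is then:

$$
\frac{\partial\,\text{Satisfaction}_{i}}{\partial\,\text{Sent}_{i}} = \gamma_{1} + \gamma_{3}\,\text{Mention}_{i}
$$

For comments that raise no new topic ($\text{Mention}_{i} = 0$) the slope is $\gamma_{1}$; for comments that do ($\text{Mention}_{i} = 1$) it is $\gamma_{1} + \gamma_{3}$. With the reported interaction coefficients, the sentiment slope is therefore 0.191 lower in OP Rehab and 0.194 higher in Medical Practice when a new topic is mentioned.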

Table 8. Model fit without and with extracted features from comments.

*Statistical significance: p < 0.001*** and p < 0.01**. The Quan model refers to regressions in Table 3 using only quantitative service attributes from the complete sample; The Quan-Comment model refers to regressions in Table A.1 using only quantitative service ratings from responses that include comments; The Both-Comment model refers to regressions in Table 7 incorporating both quantitative service attributes and comment-extracted features from responses with comments. The LR test compares the standalone model using comment-extracted features in Table A.1 with the Both-Comment model in Table 7. The Comment model refers to regressions in Table A.1 using only comment-extracted features from responses with comments.

Discussion

This study yields three primary findings. First, the analysis of quantitative ratings of health service attributes indicates that trust in the provider, provider–patient communication, and operational aspects such as accessibility and safety are central to patient satisfaction across all units. However, the relative importance of these factors varies across units, underscoring the importance of unit-specific evaluation and strategy. Additionally, ratings of service attributes do not adequately explain patient satisfaction, particularly for units whose surveys contained fewer service attribute items being rated or which delivered non-standardized care services not captured in the post-care patient satisfaction surveys. This inadequacy points to the need for additional measurement dimensions beyond existing quantitatively rated items to more comprehensively capture patients’ experiences and drivers of satisfaction.

Second, patient comments in response to open-ended questions provide valuable complementary insights. Frequently mentioned topics not only enrich the interpretation of quantitative ratings of service attributes by adding contextual depth but also suggest new areas of concern not captured by quantitatively rated items. Findings from the analysis of patient comments highlight distinct and emerging service priorities across healthcare units and underscore the importance of integrating narrative feedback into the patient satisfaction assessment process.

Third, mentions of new service attributes in patient comments are generally associated with lower satisfaction scores and can moderate the relationship between comment sentiment and satisfaction rating, with patterns varying across healthcare settings. This suggests that patient concerns raised in the comments and not already captured in the quantitatively rated items may reflect unmet expectations or service gaps that warrant closer attention. Additionally, patients who chose to leave comments tended to provide more informative quantitative ratings, as reflected in relatively higher explained variance in patient satisfaction than those who did not leave comments. This pattern suggests deeper engagement with their care experience may be fruitful and points to the potential value of paying greater attention to the responses of this subgroup in future patient experience analysis.

The relationship between rated service attributes and patient satisfaction

Trust in providers was identified as the most prominent factor associated with patient satisfaction based on the analysis of patient ratings of service attributes specified in the survey. This result highlights the uniqueness of the healthcare context, where treatment outcomes greatly depend on patients’ adherence to treatments and their openness about their health and behavior. Because patients may fear being judged or embarrassed, revealing sensitive information about topics such as mental health, substance use, and sexual health requires emotional safety, confidence in confidentiality, assurance of privacy, and confidence in the diagnoses and treatments. Both Ardito and Rabellino 34 and Hatcher and Barends 35 emphasize that therapeutic alliance, a collaborative, trusting relationship between provider and patient, is essential for fostering openness and engagement in therapy, which is viewed as a prerequisite for positive health outcomes.

Besides patient trust in healthcare providers, communication was identified as another prominent factor associated with patient satisfaction, based on the analysis of patient ratings of service attributes specified in the survey. This finding aligns with prior research by Hays and Skootsky 36 and Mehra and Mishra, 37 which emphasizes the role of provider communication, empathy, and sufficient consultation time in shaping patient satisfaction. Communication-related service attributes, including adequate input, active listening, and respectful behavior from the staff and provider, consistently demonstrated statistical significance across healthcare units. These patterns suggest that patients place considerable importance on relational and interpersonal dimensions of care. These communication behaviors are central tenets of the Patient-Centered Communication framework, which frames communication not as a soft skill but as a set of core clinical functions. 38 This is because empathy, active listening, and compassion expressed through communication help patients feel cared for, not just medically, but personally. Communication also helps patients develop an understanding of diagnoses, treatments, risks, and options, and provides needed emotional support, all of which facilitate shared decision-making in patients’ own treatment plans. Clear communication builds confidence in the treatment plans, which leads to greater trust and improved satisfaction with the provider.

Our finding that trust and communication represent the strongest drivers of patient satisfaction is consistent with the broad importance placed on these interpersonal dimensions in the context of digital healthcare devices. 39 In addition, our study extends existing research by analyzing large-scale, multi-modal patient feedback to reveal how these factors appear in patient experiences and intersect with changing service priorities, providing insights not fully captured by traditional surveys or reviews.

Given that data were collected during the COVID-19 pandemic, “safety precautions” was statistically significant across most units, reflecting the enduring influence of pandemic-related concerns on patient expectations. This finding supports previous studies such as Chekijian et al., 40 which identified personal safety and perceived risk of virus exposure as notable concerns in patient experience. Our study further shows that the impact of safety prioritization is particularly evident in OP Lab (coefficient: 0.891, p < 0.001), whereas it is not statistically significant in Urgent Care based on the overall responses. These variations likely reflect differing sensitivities to safety concerns based on patient acuity and procedural risk.

The item “appointment scheduling” was found to be a significant factor across multiple healthcare units, highlighting the importance of care access in patient satisfaction. This observation is consistent with findings from Atinga et al., 41 which highlighted access as a recurring concern in patient satisfaction. Notably, appointment scheduling was not found to be a significant concern in Medical Practice Magnet, suggesting possible operational differences in scheduling systems or patient flow management.

The total variance explained by quantitatively rated service attributes, shown in Table 3 and Table 8, suggests that additional dimensions may improve the ability to predict patient satisfaction. Across all healthcare units, 15 unique items were used to measure service attributes. Most items were related to communication, with other items covering service accessibility and personal safety. However, OP Rehabilitation Magnet measured only three items, explaining just 29.2% of the variance, indicating potential limitations in capturing the full range of patient experiences. Furthermore, Medical Practice and Medical Practice Magnet measured five and twelve attributes, respectively, yet explained only 34.2% and 38.4% of the variance in patient satisfaction. In contrast, Urgent Care (five items) and Emergency Magnet (twelve items) explained 54.5% and 70.7% of the variance, respectively, suggesting that more complex service processes or situational factors in the former units may require additional measurement dimensions beyond existing structured ratings to better capture patient satisfaction in these settings.
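Because the units above differ in how many items they rate, one quick check is whether the gap in variance explained could simply reflect the number of predictors. The sketch below applies the standard adjusted R² formula to two of the figures quoted above. It assumes that the quoted percentages are unadjusted R² values and that the sample sizes can be taken from the "Number of responses" row in the Appendix (731 for OP Rehabilitation Magnet, 720 for Emergency Magnet); neither assumption is confirmed by the text, and the function name `adjusted_r2` is illustrative.

```python
def adjusted_r2(r2: float, n: int, p: int) -> float:
    """Adjusted R^2 for a linear model with n observations and p predictors."""
    if n - p - 1 <= 0:
        raise ValueError("need more observations than predictors plus one")
    return 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)


# Assumed inputs: R^2 from the discussion, n from the Appendix (see lead-in).
op_rehab_magnet = adjusted_r2(r2=0.292, n=731, p=3)
emergency_magnet = adjusted_r2(r2=0.707, n=720, p=12)

print(f"OP Rehabilitation Magnet: {op_rehab_magnet:.3f}")
print(f"Emergency Magnet:         {emergency_magnet:.3f}")
```

With several hundred responses per unit, the penalty for three versus twelve predictors is small under these assumptions, so the difference between units is unlikely to come from item count alone.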

The value of patient feedback in response to open-ended questions

Contextualizing core service attributes and uncovering blind spots in healthcare services

The text analysis of patient comments reinforces the importance of key factors identified in the quantitative ratings part of the survey and adds contextual depth to ratings. Frequently mentioned topics, such as “listening by providers” and “respect from staff,” together with operational attributes like “appointment scheduling” and “safety precautions,” support the significance of those service attributes in patient experience. Patient comments in response to open-ended questions also shed light on why patients held certain perceptions, offering explanations that the rated questionnaire items alone cannot fully capture. Take “listening by provider” as an example: while ratings reflect the extent to which patients felt heard, comments provide insight into what patients wanted providers to hear (such as specific symptoms or concerns) and how their experiences were shaped when these were overlooked. For example, one patient noted that their provider listened attentively to their symptoms, while another felt dismissed when the provider ordered routine tests without engaging in a meaningful discussion. These descriptions deepen the understanding of communication quality, revealing variation in patient expectations and perceptions within high- or low-scoring areas.

Patient comments also revealed emerging and unit-specific priorities not captured by the quantitatively rated survey items. One salient area relates to diagnostics and treatments specific to each healthcare setting, for example, blood tests and medications in Medical Practice, and symptom checks and pain management in Emergency care. Patients also emphasized operational factors influencing their experience, including care process efficiency and the physical environment. Comments identified concerns such as wait times, result turnaround, discharge procedures, waiting room conditions, and parking, underscoring the need for patient-centered improvements beyond clinical interactions. Digital health services, including telehealth and electronic communication channels, were frequently mentioned in patient feedback from Medical Practice and Urgent Care, highlighting the emerging role of accessible, technology-supported care in shaping perceptions and experiences in these ambulatory settings. Notably, patients in Emergency Magnet and Urgent Care commented on the facility, an aspect not included in their quantitatively rated survey items, yet warranting further consideration. While sharing certain common themes, specific concerns varied across healthcare units. From “telehealth visits” in Medical Practice to the “drive-through experience” in OP Lab, these setting-specific concerns reflect the distinct characteristics of each care context and would likely remain hidden without the analysis of patient comments to open-ended questions.

Taken together, the topics identified through qualitative comments both contextualize patient ratings of service attributes and reveal critical blind spots. Our findings confirm and extend the work of Khanbhai et al., 11 underscoring the evolving dimensions of patient experience and the meaningful variation across healthcare settings.

Enhancing explanatory power through integrating comments

Beyond providing descriptive insights, patient comments demonstrate their complementary value in explaining patient satisfaction while controlling for quantitative ratings. Mentions of new topics in patient comments were negatively associated with patient satisfaction scores, and this association was statistically significant for four of seven units. This pattern may be partially explained by the direct and spillover effects observed in Xu, 42 which showed that giving lower ratings of service attributes increased the likelihood of patients writing detailed comments about those attributes, and that the lower rating of one service attribute also prompted comments expressing other concerns. Extending this logic, patients who introduced new topics in their qualitative comments could be raising concerns that were not addressed by the quantitatively rated survey items, and these unmeasured issues might have contributed to lower overall satisfaction scores.

Sentiment itself was found to be positively associated with patient satisfaction and was statistically significant across all non-Magnet units. This supports prior research linking sentiment in patient narratives to satisfaction 43,44 but also underscores its explanatory value in settings without formal nursing excellence structures, even when used alongside quantitative ratings. Furthermore, mentions of new topics moderated the relationship between sentiment and patient satisfaction in certain healthcare settings. More specifically, when comments introduced topics not measured by the quantitative survey items, the influence of sentiment on satisfaction was generally amplified, except in OP Rehabilitation (coefficient: −0.191, p < 0.05), where the association weakened. This negative moderation effect in rehabilitation settings may be attributed to the nature of their services, which involve repeated visits and sustained patient–provider interactions. 45 In such contexts, patient satisfaction may rely more on perceived care quality, recovery progress, and staff rapport than on newly introduced service attributes. This finding extends the scope of Chatterjee et al. 46 from healthcare products to services and suggests that the mentioning of new topic(s) may moderate the sentiment–satisfaction relationship, with their effects varying by care setting.
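The moderation effect described above can be made concrete with a small worked example. The sketch below assumes a linear specification in which satisfaction depends on sentiment, a 0/1 indicator for mentions of new topics, and their product; under that assumption, the slope of sentiment equals the sentiment coefficient plus the interaction coefficient whenever a new topic is mentioned. The coefficients are the standalone Medical Practice estimates listed in the Appendix (Sentiment .400, Mentions*Sentiment .404). The 0/1 coding of Mentions and the function name `sentiment_slope` are illustrative assumptions, not details confirmed by the study.

```python
def sentiment_slope(b_sentiment: float, b_interaction: float, mentions: int) -> float:
    """Slope of satisfaction with respect to sentiment for a given Mentions value.

    Assumes satisfaction = b0 + b_sentiment * S + b_mentions * M
                           + b_interaction * (S * M) + error,
    with M coded 0 (no new topic) or 1 (new topic mentioned).
    """
    return b_sentiment + b_interaction * mentions


# Standalone Medical Practice estimates from the Appendix.
B_SENTIMENT = 0.400
B_INTERACTION = 0.404

slope_without = sentiment_slope(B_SENTIMENT, B_INTERACTION, mentions=0)
slope_with = sentiment_slope(B_SENTIMENT, B_INTERACTION, mentions=1)

print(f"no new topic mentioned: {slope_without:.3f}")
print(f"new topic mentioned:    {slope_with:.3f}")
```

A positive interaction coefficient steepens the sentiment slope when a new topic is raised; a negative one, such as the −0.191 reported for OP Rehabilitation, would flatten it by the same logic.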

Patient comments further elucidated the association between quantitative ratings and satisfaction. We found that patients who left comments were more engaged and expressed stronger or more consistent opinions. After controlling for extracted features from comments, several rated service attributes in the survey, particularly those related to trust and communication such as “trust in provider,” “enough input,” “listening by provider,” and “respect from provider,” remained statistically significant across both the overall and commenting-group models. The consistency of these associations suggests that the rated service attributes in the survey represent robust dimensions of patient experience, even after accounting for additional explanatory content expressed in qualitative comments. Notably, “trust in provider” consistently emerged as the strongest predictor across all four units where it was measured, with high and stable coefficients, reinforcing its central role. “Listening by provider” showed slightly higher coefficients in the commenting group across multiple units, suggesting its heightened salience among patients who chose to elaborate on their care experiences. Together, these findings underscore the importance of provider trust and communication as foundational components of patient experience that persist across levels of engagement and feedback formats.

Taking comments into account shifted the importance of quantitatively rated service attributes, suggesting that patients who left comments might have weighed certain elements of care differently or that comment-derived features partially explained variance captured by the models that considered only quantitatively rated items. In Medical Practice Magnet, for example, “trust in nurses” became more strongly associated with satisfaction scores, while “respect from staff” lost significance. This change may reflect patients’ more refined differentiation between general courtesy and interpersonal trust, particularly among those motivated to comment. “Ease of appointment” also emerged as significant in this setting, potentially indicating that logistical barriers or access issues became more salient to those who took time to share detailed feedback. Shifts in the importance of “enough input” and “listening by provider” in the same unit may further reflect the expressive nature of the commenting group, suggesting that engaged patients were more attentive to whether their voices were heard and their perspectives genuinely considered. In Emergency Magnet, “trust in nurses” became newly significant, while “listening by nurses” declined in importance. This may indicate a focus on confidence in clinical judgment over relational communication in high-pressure healthcare settings. Meanwhile, in OP Rehabilitation and Testing, all four surveyed service attributes showed stronger coefficients while remaining statistically significant. In the OP Rehabilitation Magnet unit, “respect from nurses” gained significance as “listening by nurses” diminished, suggesting a potential overlap in how these communication-related items were interpreted by patients in long-term or follow-up care contexts. In Urgent Care, “safety as a priority” became significant in the commenting group, possibly highlighting their sensitivity to personal safety in fast-paced outpatient settings.

Interestingly, the items “explanation by nurses, staff, and/or provider” and “feeling comfortable talking about concerns” were generally not significantly associated with the satisfaction score in rated survey data. However, when integrated with patient comments, these items displayed a small but statistically significant negative relationship in the model. This pattern may suggest that among patients who provided comments, provider explanations alone, particularly when not paired with active listening or respectful engagement, were not consistently viewed as enhancing their care experience. One possible reason is that provider explanations may sometimes be perceived as insufficient or formulaic, especially when they fail to directly address the patient’s individual concerns. This highlights the importance of context-sensitive communication, where being heard and actively involved may weigh more heavily in shaping overall impressions than simply receiving care information.

The significant Likelihood Ratio Test across most units, alongside a slight increase in R² (Table 8), suggests that the extracted features contribute to explaining patient satisfaction but do not drastically enhance the variance explained. However, the R² value increases more substantially when the model based solely on rated service attributes is estimated on only the responses with both ratings and comments (n = 17,036) rather than on the full dataset (n = 31,054). This indicates that ratings of service attributes in the survey have stronger explanatory power among respondents who provided comments. These results highlight the strategic value of analyzing responses that include qualitative comments, as they offer clearer insights into patient priorities and strengthen the interpretability of rated questionnaire items among more engaged respondents.
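To illustrate how a likelihood ratio test can be significant while R² barely moves, the sketch below compares two nested Gaussian linear models through their residual sums of squares. For such models the test statistic is n · ln(RSS_restricted / RSS_full), referred to a chi-square distribution whose degrees of freedom equal the number of added terms. All numbers here are hypothetical, the choice of three added terms (sentiment, mentions, and their interaction) is an assumption, and the helper names `lr_statistic` and `chi2_sf` are illustrative; this is not the study's own code.

```python
import math


def lr_statistic(rss_restricted: float, rss_full: float, n: int) -> float:
    """Likelihood ratio statistic for nested Gaussian linear models."""
    return n * math.log(rss_restricted / rss_full)


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-square variable with integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k < df/2} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) plus a finite series of half-integer powers of x.
    total = math.erfc(math.sqrt(half))
    term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
    for k in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * k + 1)
    return total


# Hypothetical values: ratings-only model vs. ratings plus three comment features.
n = 1000
rss_ratings_only = 520.0
rss_with_comments = 510.0

lr = lr_statistic(rss_ratings_only, rss_with_comments, n)
p_value = chi2_sf(lr, df=3)
print(f"LR statistic = {lr:.2f}, p = {p_value:.5f}")
```

In this hypothetical case the residual sum of squares falls by under 2%, so R² changes little, yet the test rejects the restricted model because the statistic scales with the sample size.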

Taken together, these findings confirm and extend prior work suggesting that patient comments serve multiple functions in healthcare quality evaluation. 47,48 They provide rating responses with additional context, reveal emotional dimensions of care experiences, surface new concerns not captured by rated survey items, and enhance the explanatory power of models predicting patient satisfaction. Integrating comment-derived variables changes not only the model fit but also the interpretive landscape, helping to distinguish between patient concerns that are robust and those that shift with engagement level or care setting. These patterns reveal the limitations of relying solely on quantitatively rated surveys in capturing nuanced or emotionally charged experiences and reinforce the value of open-ended questions in advancing a more complete understanding of patient experience.

Implications for healthcare service measurement and patient experience improvement

This study offers several practical and research implications for healthcare questionnaire design, patient experience improvement, and patient feedback analysis. Our findings first highlight opportunities to enhance questionnaire design in capturing patient experience, particularly in units where quantitatively rated survey items explain only a modest proportion of the variance in patient satisfaction. To address this gap, we recommend strengthening the measurement of core dimensions, such as trust in providers and nurses, communication quality, and respect, which consistently demonstrate strong associations with patient satisfaction. For example, the Medical Practice, OP Rehabilitation and Testing, and OP Lab units currently include only three to five service attribute items, with variance explained ranging from less than 35% to 50% in the overall and commenting-group models, respectively. These limitations suggest a need to more comprehensively capture core aspects of patient experience and to broaden the scope of quantitatively rated items where appropriate.

Beyond clearly measured core healthcare service attributes, survey instruments should remain adaptable and incorporate new dimensions identified through qualitative feedback to capture evolving and context-specific patient concerns. Themes such as diagnostic and treatment experiences, as well as operational factors, were frequently raised in comments. Since these emerging topics were found to negatively impact patient satisfaction when unaddressed, they should be considered for inclusion in quantitatively rated questionnaire items. For example, questionnaire design may incorporate satisfaction measures related to telehealth in Medical Practice and Urgent Care, or drive-through services and result efficiency in OP Lab. The integration of these evolving and unit-specific dimensions into surveys would enhance sensitivity to local concerns, increase the actionability of patient feedback, and deepen cross-unit insights, enabling health systems to better align with patient needs and expectations.

Our findings also suggest a tiered approach for healthcare administrators and practitioners aiming to improve patient experience and enhance patient satisfaction. First, healthcare organizations should prioritize resource allocation and team-based training focused on communication, access to care, and safety precautions—areas consistently shown to be significant in structured ratings and frequently highlighted in open-ended comments. Second, practitioners should regularly review and analyze patient comments to proactively identify emerging patient needs and emotionally charged aspects of care, enabling more targeted and responsive improvement efforts. For example, in units where the interaction between sentiment and newly raised topics is positive—such as Medical Practice and Emergency Magnet, where patient interactions are often time-limited or high-pressure—addressing novel concerns through emotionally positive engagement strategies may amplify patient satisfaction. Additionally, administrators should pay particular attention to feedback from patients who leave comments, as this subgroup typically demonstrates deeper engagement and provides more informative evaluations.

From a research perspective, our findings support a multi-form data analysis approach that combines quantitative and qualitative patient feedback on health services to accurately capture patient experience and its link to satisfaction. The variations in importance of determinants and prevalent themes across healthcare units underscore the necessity of unit-specific survey design and contextualized comparative analysis. Additionally, our study identifies a previously unexplored negative spillover effect, wherein mentions of new topics in comments often correspond with lower satisfaction scores and can moderate sentiment–satisfaction relationships depending on departmental service settings. Furthermore, our use of deep learning methods (BERT-based models) illustrates the effectiveness of advanced text analytics in identifying nuanced, emerging themes from qualitative comments, insights often missed by conventional text analysis methods. Researchers may also consider the role of commenting behavior and examine how it influences the importance and explanatory value of quantitatively rated service attributes in predicting service outcomes. Overall, we demonstrate that the value of patient comments should not be judged solely by incremental R² increases but by their ability to provide rich contextual insights that enhance the interpretation of quantitative measures, identify evolving and unit-specific patient concerns, and strengthen the clarity of associations with outcomes of interest.

Limitations and future work

Despite its contributions, this study has three primary limitations. First, the analyses are based on data from a single month (December 2020), which limits the ability to capture temporal variation or evolving trends in patient feedback. A longitudinal study using data across a broader time span would offer a more comprehensive view of shifting patient concerns. Second, we were unable to assess the association of each identified new topic individually due to the exploratory nature of topic modeling and the unequal distribution of topic occurrences, which limited the feasibility of topic-level statistical analysis. Future research can investigate these nuanced associations further to refine measurement and intervention strategies. Third, while the multi-modal approach effectively uncovers emerging patient concerns, the findings may be influenced by the organizational structures and patient demographics of the participating healthcare units. This framework could be applied across multiple institutions or geographic regions to evaluate the robustness and generalizability of the findings.

Furthermore, while our deep learning model is highly scalable for discovering service aspects of concern, the subsequent aggregation and evaluation of qualitative information can also be viewed as a Group Decision Making (GDM) problem. A promising avenue for future work is a hybrid approach in which a panel of healthcare practitioners acts as experts to confirm and prioritize the emerging themes identified by the model. For example, Ji et al. (2021) introduce a biobjective optimization model that balances consensus and confidence, thereby enhancing the quality of GDM and the effectiveness of service resource allocation. 49

Beyond future research related to our current limitations, another direction of work could explore how artificial intelligence (AI)-driven solutions enhance patient satisfaction by optimizing service delivery processes, drawing on the theoretical framework proposed by Chiang 50 for interpreting AI applications in service operations. For example, the implementation of AI-enabled communication platforms may enable healthcare providers to offer more personalized and empathetic interactions with patients while simultaneously improving operational efficiency.

Additionally, future research could adopt a systematic methodology to enhance patient satisfaction, grounded in the unified service system framework proposed by Wang et al. 51 Specifically, leveraging work domain analysis as outlined by Wang et al. 52 would enable a comprehensive mapping of all variables associated with determinant factors such as trust and communication. This process should include an examination of organizational structures, technology interfaces, staff training programs, and environmental factors that collectively shape trust-building and communication effectiveness within healthcare service systems.

Conclusion

Given the impact of ongoing technological advancements, this study examines how patient concerns in healthcare services may be shifting and how ratings of questionnaire items and comments in response to open-ended questions jointly relate to patient satisfaction. Using deep learning and regression methods, we identified key drivers—such as trust and communication—that consistently influence patient satisfaction, while also revealing emerging, unit-specific concerns. Mentions of new aspects tend to lower satisfaction scores and may moderate the sentiment–satisfaction relationship depending on the healthcare setting. These findings suggest that qualitative patient feedback captures evolving priorities and provides complementary predictive value for patient satisfaction that standardized survey items may overlook. As healthcare services continue to evolve, our findings highlight the need for flexible, multi-modal feedback strategies supported by a context-aware analytical framework to address the diverse and changing needs of patients.

Supplemental Material

Supplemental Material for Assessing patient satisfaction in healthcare: Integrating ratings of service attributes and BERT-based analysis of comments by Lin Lu, Qiong Hu, Zhiping Walter, Donglai Huo, Hongbo Zhang in International Journal of Engineering Business Management.

Footnotes

Funding

The authors acknowledge financial support from the Business School at the University of Colorado Denver and the Dolan School of Business at Fairfield University for this research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Appendix

Standalone linear regressions for patient satisfaction across healthcare units. Statistical significance: *** p < 0.001, ** p < 0.01, * p < 0.05, . p < 0.1. “Mentions” refers to ‘Mentions of new topic(s)’. Where a cell holds two values, they are the two standalone models for that unit, in the order given in the source.

Service attribute/extracted feature | Med prac | Med prac mag | Emergency mag | OP rehab | OP rehab mag | Urgent care | OP lab
Number of responses | 6,851 / 6,848 | 877 / 876 | 759 / 720 | 1,765 / 1,765 | 731 / 731 | 780 / 780 | 2,125 / 2,125
Mentions*Sentiment | .404*** | .064 | .194 | −.029 | −.099 | .258 | −.005
Mentions | −.677*** | −.509*** | −1.734*** | −.490*** | −.235 | −.875*** | −.373**
Sentiment | .400*** | .480*** | 1.922*** | .581*** | .592*** | .938*** | .601***
Intercept | 9.626*** / −1.093*** | 9.567*** / −3.765*** | 8.423*** / −3.269*** | 9.489*** / −.087 | 9.432*** / −.998** | 9.449*** / −3.884*** | 9.503*** / −2.083***
Safety precautions | .393*** | .279*** | .396*** | .607*** | .431*** | .209* | .884***
Respect from staff | .359*** | .139 | .496*** | .892*** | .955*** | .535*** | 1.361***
Adjusted R² | .106 / .471 | .058 / .528 | .304 / .804 | .064 / .462 | .043 / .629 | .187 / .717 | .086 / .478

Attributes measured in only some units (values in source order; the unit for each value is not recoverable from the source layout):

Ease of appointment: .129***; .137**; .160***; .161**
Enough input: .364**; .700***; .840***; .827***; .737***
Explain by nurses: .204; .003; −.335*
Explain by provider: .152
Explain by staff: .063
Listen by nurses: .087; .357*; .125
Listen by provider: .618***; .780***; .866***
Talk about concerns: −.233.; −.021; .257
Timely being seen: −.001
Respect from nurses: .105; −.224; .484*
Respect from provider: .598***
Trust for nurses: .573***; .343; −.153
Trust for provider: 1.242***; .982***; 1.039***; 1.231***

References
