Abstract

Background:

Underdiagnosis and delayed diagnosis of rheumatoid arthritis (RA) and psoriatic arthritis (PsA) remain major concerns, delaying treatment and worsening outcomes.

Objective:

We hypothesize that natural language processing (NLP) may identify red flags from clinical notes in non-specialist settings during early disease phases, thereby improving patient referral.

Design and methods:

Patients diagnosed with RA or PsA were retrospectively reviewed. Clinical notes from Emergency Department visits occurring in the 12 months prior to the diagnosis were processed with an NLP model feeding a final classification layer. Propensity score-matched controls without arthritis accessing the same Emergency Department were selected for comparison.

Results:

Among 650 patients with inflammatory arthritis seeking emergency evaluations in the 12 months before the diagnosis, 294 (45.2%) had PsA and 356 (54.8%) had RA. NLP achieved modest performance in training (AUCROC 70% for RA and 69% for PsA) with a marked drop in independent test sets (AUCROC 62% and 61%, respectively), suggesting limited robustness and accuracy for routine clinical use. Further analysis suggested an overlap between terms included in notes of cases classified correctly versus incorrectly. Manual selection of notes suggestive of arthritis by Rheumatologists did not improve the model performance, which remained of limited generalizability. NLP identified demographic and clinical peculiarities of RA and PsA patients presenting to the Emergency Department, including differing temporal trends of admission between the diseases.

Conclusion:

While NLP showed potential for extracting disease-related signals from Emergency Department clinical notes, its current performance does not support day-to-day clinical implementation. These findings highlight the need for enhanced data quality, interpretability, and algorithm robustness. NLP provided insights into the characteristics of patients seeking emergency care during prodromal phases of RA and PsA.

Plain language summary

People with rheumatoid arthritis (RA) or psoriatic arthritis (PsA) often experience delays in getting diagnosed, which can lead to worse outcomes. We wanted to see if an artificial intelligence tool called natural language processing (NLP)—which analyzes text—could help spot early signs of these diseases in clinical notes written during Emergency Department (ED) visits. We looked back at patients who were later diagnosed with RA or PsA and analyzed the notes from their ED visits in the year before their diagnosis. We then compared them to people who went to the same ED but did not have arthritis. NLP was able to recognize patterns linked to RA and PsA, but its performance lacked generalizability. Even when Rheumatologists tried to manually choose more relevant notes, the tool’s accuracy didn’t improve much. Still, the analysis revealed some peculiarities of people with RA and PsA using emergency care, such as their older age. In summary, NLP shows promise for identifying early signs of arthritis from ED notes, but needs to be trained on more robust data. This approach could eventually help spot patients earlier and get them the right care faster.

Introduction

The prevalence of rheumatoid arthritis (RA) and psoriatic arthritis (PsA) ranges from 0.5% to 1% in Western countries.1,2 Nevertheless, underdiagnosis and diagnostic delay remain significant clinical challenges, 3 often hindering timely treatments which are crucial for maximizing the chance of remission and preventing irreversible joint damage, 4 and reducing the risk of permanent disability. 5 While the time from symptom onset to diagnosis has decreased,6,7 it may still span several years,8,9 with delays typically longer in PsA than in RA. 3

Non-rheumatologist physicians, including primary care and Emergency doctors, may be the first point of contact for patients with early symptoms of inflammatory arthritis.10,11 Given the complexity of these diseases, achieving early diagnosis requires maintaining a high index of suspicion, particularly in “atypical” clinical settings and in the absence of validated screening tools.

Natural language processing (NLP) is a branch of Artificial Intelligence (AI) enabling computers to process and interpret human language, including unstructured data such as free-text entries in electronic health records (EHRs). 12 In the context of immune-mediated diseases, NLP can identify patterns that may be overlooked by the human eye, 13 as demonstrated for patient classification, severity stratification, and outcome prediction in Emergency Department settings. 14 This study aims to evaluate whether NLP applied to Emergency Department clinical notes can intercept RA and PsA up to 12 months before the first rheumatological evaluation.

Patients and methods

Study design, population, and setting

This retrospective case-control study included adult patients (⩾18 years) who received a first rheumatological evaluation and confirmed diagnosis of inflammatory arthritis (RA or PsA) at the Rheumatology and Clinical Immunology Unit of Humanitas Research Hospital (Rozzano, Italy) between January 2017 and December 2023. For each case, the Emergency Department dataset from the same institution was retrospectively reviewed to identify any visit occurring within the 12 months preceding the index rheumatological evaluation. Matched controls were selected in a 1:10 ratio among adult patients who presented to the Emergency Department on the same dates as the corresponding cases but never underwent a rheumatological evaluation at our institution. Propensity score matching was applied to enhance the comparability of the control group, with scores calculated based on the priority level (triage “color code”) assigned at Emergency Department admission.
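The control selection described above can be illustrated with a minimal Python sketch. It assumes, hypothetically, that each visit is a dictionary with `id`, `date`, `triage`, and `is_case` fields; with triage level as the only covariate, the propensity score reduces to the observed fraction of cases within each triage level. The function names and the greedy nearest-score strategy are illustrative assumptions, not the authors' implementation.

```python
import random
from collections import defaultdict

def propensity_by_triage(visits):
    """Estimate P(case | triage level) as the observed case fraction per level."""
    totals, cases = defaultdict(int), defaultdict(int)
    for v in visits:
        totals[v["triage"]] += 1
        cases[v["triage"]] += v["is_case"]
    return {level: cases[level] / totals[level] for level in totals}

def match_controls(visits, ratio=10, seed=0):
    """For each case, pick up to `ratio` same-date controls with the closest score."""
    rng = random.Random(seed)
    score = propensity_by_triage(visits)
    pool = defaultdict(list)  # date -> control visits not yet matched
    for v in visits:
        if not v["is_case"]:
            pool[v["date"]].append(v)
    matches = {}
    for case in (v for v in visits if v["is_case"]):
        candidates = pool[case["date"]]
        rng.shuffle(candidates)  # random order among controls with equal scores
        # Stable sort: closest propensity score first, ties keep shuffled order.
        candidates.sort(key=lambda c: abs(score[c["triage"]] - score[case["triage"]]))
        matches[case["id"]] = [c["id"] for c in candidates[:ratio]]
        pool[case["date"]] = candidates[ratio:]  # matching without replacement
    return matches
```

Because the only covariate here is categorical, nearest-score matching behaves much like exact matching on triage level whenever enough same-level controls are available on the same date.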

Outcomes

The primary outcome was the performance of an NLP-based classification model in identifying patients who would go on to receive a first rheumatological evaluation confirming RA or PsA within 12 months, using only the free-text content from Emergency Department clinical notes. The ability to intercept RA, PsA, and the pooled “inflammatory arthritis” (i.e., RA or PsA) group was tested. Secondary outcomes included the exploratory analysis of the characteristics of identified RA and PsA patients seeking emergency care during the exposure period.

Study phases and procedures

Given the exploratory nature of the study, along with the limited evidence on the application of NLP models in this scenario, two sequential phases were followed. During the first phase, the analysis of unstructured data from Emergency Department EHRs was conducted with the Rheumatologists blinded to the content of the records. After NLP-based classification, a qualitative explainability analysis was performed to assess the interpretability and clinical plausibility of the model outputs. The aim of this first phase was to evaluate whether NLP could distinguish among disease classes and generate clinically meaningful results, with no human-based intervention, editing, or pre-processing. During the second phase, Emergency Department records of all arthritis cases were manually reviewed independently by three blinded specialists. Clinical notes were retained for subsequent analyses only if they met the following criteria: (i) no evidence of a known history of RA or PsA from Emergency Department medical records, including common Italian synonyms and abbreviations; and (ii) the Emergency Department visit was prompted by symptoms potentially suggestive of inflammatory arthritis (e.g., joint pain, swelling, and morning stiffness). Any discrepancy in the evaluation of clinical notes was resolved through discussion until consensus was reached among the three Rheumatology clinicians. The aim of this second phase was to improve clinical consistency by focusing on clinical pictures more likely to be associated with undiagnosed inflammatory arthritis, while excluding emergency notes of conditions that are common to both cases and controls (e.g., trauma, surgical emergencies, and infections).

AI tools, computational and statistical analysis

To identify RA and PsA cases, diagnoses were extracted using regular expressions applied to the outpatient EHRs from the Rheumatology and Clinical Immunology Unit database, aiming to detect mentions of the conditions of interest. 13 This enabled the identification of patients with a first outpatient evaluation for RA or PsA; these patients were then matched to Emergency Department records to identify relevant visits in the 12 months preceding the index rheumatological visit.
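As an illustration of regex-based diagnosis detection, the following minimal Python sketch flags mentions of the two conditions in Italian free text. The patterns, the `DIAGNOSIS_PATTERNS` name, and the `detect_diagnoses` helper are hypothetical: the expressions actually used in the study are not reported in the text, and a real implementation would likely cover more synonyms and abbreviations.

```python
import re

# Hypothetical patterns for illustration only; the expressions actually used
# in the study are not reported in the text.
DIAGNOSIS_PATTERNS = {
    # Full names match case-insensitively; abbreviations stay case-sensitive
    # so that lowercase tokens are not picked up.
    "RA": re.compile(r"(?i:\bartrite\s+reumatoide\b)|\bAR\b"),
    "PsA": re.compile(r"(?i:\bartr(?:ite|opatia)\s+psoriasica\b)|\bPsA\b"),
}

def detect_diagnoses(note: str) -> list[str]:
    """Return the sorted labels whose pattern occurs anywhere in the note."""
    return sorted(label for label, rx in DIAGNOSIS_PATTERNS.items() if rx.search(note))
```

For example, `detect_diagnoses("Diagnosi: artrite reumatoide sieropositiva")` returns `["RA"]`, while a note mentioning only knee pain returns an empty list.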

For subsequent classification, during both study phases, a supervised learning framework was applied to the unstructured data of Emergency Department clinical notes. First, text data were processed using “dbmdz/bert-base-italian-uncased,” a pre-trained Bidirectional Encoder Representations from Transformers (BERT) language model for Italian, accessed via the Hugging Face transformers Python library (version 4.42.3). The model was fine-tuned over five epochs using the AdamW optimizer with a learning rate of 2 × 10⁻⁵ and a batch size of 16 to extract features from Emergency Department notes. A dropout layer (rate = 0.3) was placed between the BERT base model and the final classification head to reduce overfitting. To mitigate class imbalance, class weights inversely proportional to class frequencies were incorporated into the cross-entropy loss function. Early stopping based on validation loss was applied to prevent overfitting, with training terminated if no improvement was observed for two consecutive epochs. The target variable was the diagnosis category: RA, PsA, or either disease, collectively referred to as “inflammatory arthritis.” The dataset was split into 70% training and 30% testing sets. Model performance was evaluated using log-likelihood for model fit, and classification metrics including precision, recall, and F1-score. Performance was also analyzed across patient subgroups (e.g., gender and age) to assess consistency and fairness.
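Two of the regularization choices described above, inverse-frequency class weighting and early stopping with a patience of two epochs, can be sketched in plain Python. This is a simplified illustration and not the study code: the helper names are hypothetical, and the weighting formula (`n_samples / (n_classes * class_count)`) is one common convention assumed here, since the text only states that weights were inversely proportional to class frequencies.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count) (assumed convention)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * m) for c, m in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class), normalised by the summed weights."""
    num = sum(-weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    den = sum(weights[y] for y in labels)
    return num / den

def epochs_run(val_losses, patience=2):
    """Return how many epochs are run before early stopping triggers."""
    best, stale = float("inf"), 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, stale = loss, 0  # improvement: reset the patience counter
        else:
            stale += 1
            if stale >= patience:
                return epoch  # no improvement for `patience` consecutive epochs
    return len(val_losses)
```

With a 1:10 case-to-control ratio, this weighting makes each case count ten times as much as each control in the loss, which is the intended counterbalance to class imbalance.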

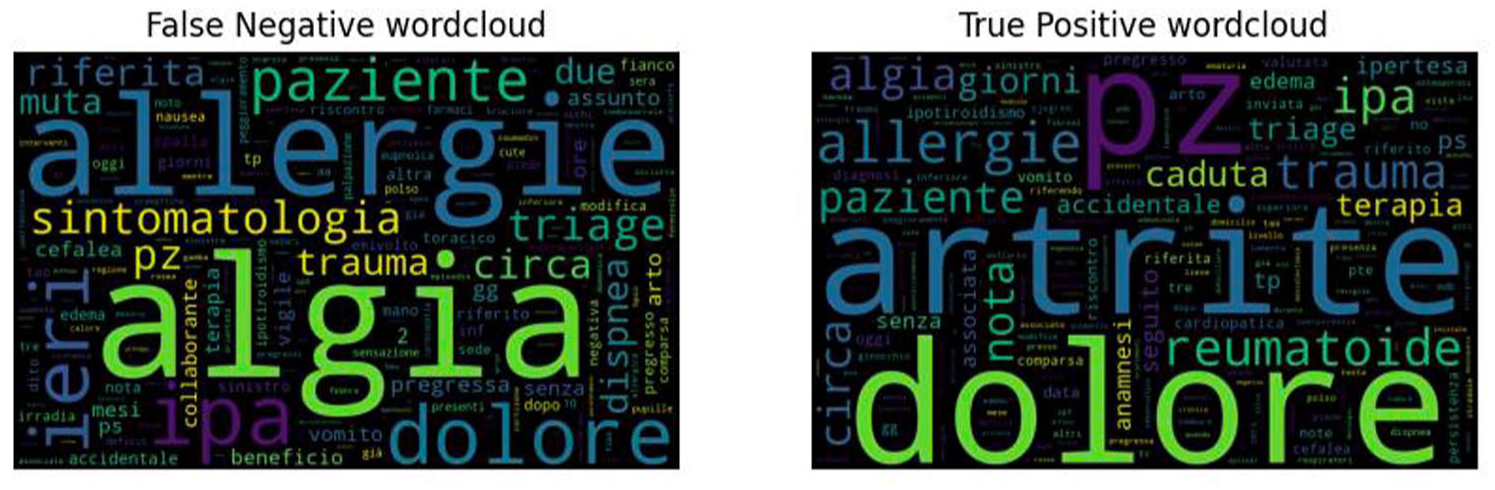

After the first phase, key terms contributing to the model’s predictions were explored through class-specific word clouds, allowing a qualitative assessment of the textual features associated with each diagnostic category. Due to poor model performance, the analysis was not repeated during the second phase (see “Results” section for details).

Continuous variables are reported as means and standard deviations (SD), and categorical variables as frequencies. Pairwise group comparisons were made using the two-tailed t-test for continuous variables and the Chi-square test for categorical variables. A p-value <0.05 was considered statistically significant. All analyses were performed using Python. Details regarding software versions and key dependencies are provided in the “requirements.txt” file, which is available in the GitHub repository associated with this project: https://github.com/pimorandi/ed-rheumatoid-classification/tree/main.

Results

Patients’ characteristics

Among patients receiving a first rheumatological evaluation for inflammatory arthritis between January 2017 and December 2023, 650 had accessed the Emergency Department of the same institution during the 12 months prior to diagnosis. Of these, 379 (58%) were females, with a mean age of 63 (SD 15) years. In comparison, the 6500 propensity score-matched controls without arthritis (1:10 ratio) had a mean age of 62 (SD 16) years, and a lower proportion of female patients (51%).

Of the 650 Emergency Department patients, 294 (45%) were subsequently diagnosed with PsA, representing 7.5% of all PsA cases evaluated at our institution during the established period. Among them, 112 (38%) were women, with a mean age of 63 (SD 15) years; the demographic characteristics of PsA patients were comparable to propensity score-matched controls (41% female; mean age 63, SD 16). The remaining 356/650 (55%) patients were diagnosed with RA, representing 10% of all RA cases seen at the institution during the study period. This subgroup was predominantly female (267/356, 75%), a higher proportion than among matched controls (58%; p < 0.001), while the mean age was 63 (SD 16) years, comparable to controls (62, SD 17 years).
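The sex comparison for RA reported above can be roughly checked with a hand-rolled Pearson chi-square statistic for a 2×2 table. The case counts (267 women out of 356) come from the text; the control counts are an assumption reconstructed from the 1:10 matching ratio and the rounded 58% figure, so the result is only an approximate plausibility check and not a reproduction of the authors' analysis.

```python
def chi_square_2x2(a, b, c, d):
    """Pearson chi-square statistic (no continuity correction) for [[a, b], [c, d]]."""
    n = a + b + c + d
    return n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))

# RA cases: 267 women and 89 men, as reported in the text.
ra_women, ra_total = 267, 356

# ASSUMPTION: 3,560 matched controls (1:10 ratio), about 58% of them women.
# These counts are reconstructed from rounded percentages, not taken from the data.
control_total = 10 * ra_total
control_women = round(0.58 * control_total)

stat = chi_square_2x2(
    ra_women, ra_total - ra_women,
    control_women, control_total - control_women,
)

# Chi-square critical value for p = 0.001 with 1 degree of freedom.
CRITICAL_P001_DF1 = 10.83
print(f"chi-square = {stat:.1f}; exceeds critical value: {stat > CRITICAL_P001_DF1}")
```

Under these assumed counts the statistic lies well above the critical value, which is consistent with the reported p < 0.001.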

Over the 12 months prior to the first rheumatologic evaluation, the frequency of Emergency Department admissions remained relatively stable among PsA patients, while showing an upward trend in the last 3 months among those later diagnosed with RA (Figure 1). According to ICD-9 classification, “musculoskeletal complaints” (ICD-9 codes 710–739) were the most common reason for Emergency Department visits in both PsA and RA groups, followed by “nonspecific symptoms and conditions” (ICD-9 780–799; Supplemental Figure 1).

Figure 1. Frequency of Emergency Department visits over the 12 months preceding the index rheumatological evaluation in patients subsequently diagnosed with PsA (a) or RA (b).

First phase: Explorative evaluation of the NLP model applied blindly to Emergency Department clinical notes

As outlined in the “Methods” section, BERT embeddings followed by deep neural network classification were blindly applied to clinical notes retrieved from Emergency Department EHRs belonging to patients later diagnosed with PsA, RA, or included in the control group. In the training datasets, the model demonstrated fair performance in distinguishing PsA (AUCROC 0.69) and RA (AUCROC 0.70) from controls, with sensitivity of 71% and 57%, and negative predictive values of 82% and 74%, respectively. However, when applied to the corresponding test sets, a marked decrease in performance was observed. Specifically, AUCROC dropped to 0.61 for PsA and 0.62 for RA, with notably low sensitivity values (63% for PsA and 58% for RA), despite maintaining similar negative predictive values (80% and 74%, respectively). Detailed results for both training and test sets of the first study phase are shown in Supplemental Figure 2.
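For readers less familiar with the metrics reported above, the following Python sketch shows how sensitivity, negative predictive value, and AUCROC can be computed from predictions. It is a generic illustration with hypothetical function names, not the evaluation code used in the study; the AUCROC is computed in its rank-based form, as the probability that a randomly chosen case receives a higher score than a randomly chosen control.

```python
def sensitivity(tp, fn):
    """Fraction of true cases that the model flags (also called recall)."""
    return tp / (tp + fn)

def negative_predictive_value(tn, fn):
    """Fraction of negative predictions that are truly controls."""
    return tn / (tn + fn)

def auc_roc(scores, labels):
    """AUC as the probability that a random case outranks a random control.

    Ties between a case and a control count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))
```

Note that negative predictive value depends on how common cases are in the evaluated sample, so it should be read alongside sensitivity and AUCROC and not in isolation.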

The model’s interpretability was then qualitatively assessed through the word clouds in Figure 2. As shown, the results were inconclusive, with a substantial overlap emerging between terms contributing to true-positive and false-negative classifications. Moreover, vocabulary contributing to the prediction included disease-related keywords such as “arthritis” (in Italian “artrite”), abbreviations of disease names, and multiple elements of limited clinical relevance, including allergy history, standard Emergency Department admission phrases, and nonspecific complaints, along with widely used descriptors such as “patient” (in Italian “paziente”). These patterns were observed consistently across both PsA and RA classification tasks, indicating limited model interpretability.

Figure 2. Word clouds of Italian terms extracted from Emergency Department clinical notes associated with false-negative (left) and true-positive (right) RA classifications. A substantial lexical overlap is evident between the two groups, contributing to misclassification. Words related to pain (e.g., algia and dolore), nonspecific symptoms and medical terms (e.g., paziente—patient, sintomatologia—symptoms, circa—approximately, and ieri—yesterday), and routinely collected emergency data (e.g., comorbidities, allergies, and medications) are commonly represented and overlapping in both scenarios. Similar results were observed for PsA (not shown).

Second phase: Evaluation of the NLP model on rheumatologist-selected emergency clinical notes

In the second phase, clinical notes from Emergency Department visits of patients subsequently diagnosed with PsA or RA were manually reviewed to select only those records deemed clinically relevant, according to the criteria defined in the “Methods” section. Specifically, notes were excluded if they mentioned a prior diagnosis of PsA or RA or if the reason for admission was unrelated to clinically suspected inflammatory arthritis. Following this selection process, notes from 151 PsA and 175 RA patients were included in the subsequent analysis, with age and sex distributions remaining comparable to those observed in the groups tested during the first phase.

In the PsA cohort, the model reached an AUCROC of 0.68 in the training subset, with a recall of 77% and a negative predictive value of 92%. However, performance on the test subset dropped, showing 0.58 AUCROC, 65% recall, and 86% negative predictive value. A similar trend was observed for RA: in the training set, AUCROC was 0.71, with 67% recall and 90% negative predictive value, whereas the test set yielded 0.57 AUCROC, 48% recall, and 87% negative predictive value.

To evaluate whether the performance could be improved by pooling PsA and RA cases into a single “inflammatory arthritis” group, patients were merged and compared to controls (Figure 3). This strategy did not yield substantial improvement. In the training set, AUCROC reached 0.76, with 68% recall and 92% negative predictive value. However, the performance on the test set remained suboptimal, with AUCROC falling to 0.61, recall to 45%, and negative predictive value to 86%, suggesting limited generalizability of the model when applied to unseen, real-world data. Therefore, further analysis on explainability was not performed in this study phase.

Figure 3. Performance of the NLP model in training and test subsets for patients with “inflammatory arthritis” (i.e., merged class “RA or PsA” vs “controls”), after manual review of Emergency Department EHRs by Rheumatologists, as described in the “Methods” section. While the model performs fairly on the training set, metrics drop on the test set, reflecting poor generalizability.

Discussion

The capacity of NLP to derive clinically relevant insights from unstructured text renders it a particularly suitable approach for automated clinical note analysis. 12 This was the first application of NLP to identify new cases of inflammatory arthritis from Emergency Department EHRs prior to the rheumatological evaluation and showed potential in pattern recognition. Of note, while a significant proportion of patients diagnosed with PsA or RA were reported to access the Emergency Department for potential rheumatologic concerns in the year preceding their first Rheumatology consultation, the tool ultimately did not succeed in reliably distinguishing patients presenting with suggestive symptoms from controls propensity score-matched by triage priority level. Moreover, patients seeking emergency care during this prodromal period showed peculiar demographic and, possibly, clinical characteristics compared to what is expected for incident RA or PsA cohorts.

In fact, among patients receiving their first rheumatologic evaluation, 7.5% of new PsA and 10.4% of new RA diagnoses visited the Emergency Department within the prior 12 months. Moreover, 3.9% of PsA and 5.1% of RA cases presented with symptoms deemed suggestive of inflammatory arthritis, according to the Rheumatologist-led review of clinical notes. Aligning with previous evidence, 11 this further suggests the existence of a diagnostic window of opportunity for both Rheumatologists and Emergency doctors. Given the single-center nature of our dataset, these data may even underestimate the true prevalence of emergency visits, and confirmation in multicenter datasets is warranted. Also, a trend toward an increase was observed in the 3 months leading up to the diagnosis in the RA subgroup, while the frequency of Emergency Department admissions remained consistent across the 12 months preceding PsA. While this pattern might be due to chance, it could reflect genuine clinical dynamics such as different severity and diagnostic delays in PsA and RA. 6 Our findings may reinforce such observations by applying an advanced, automated methodological approach in a unique clinical setting.

Consistent with established knowledge, a strong female predominance (75%) was noted among RA patients admitted to the Emergency Department, whereas sex distribution was more balanced in the PsA group. 15 Additionally, patients seeking emergency care were, on average, older than the typical age reported at disease onset in RA and PsA, possibly reflecting a greater tendency among older individuals to access emergency services. 16 We hypothesize that these characteristics contributed to clinical heterogeneity and reduced the ability of NLP to detect patterns and accurately classify patients; selection bias should also be considered. In particular, elderly-onset RA more frequently follows an acute course, involving the large joints, and is accompanied by systemic features resembling polymyalgia rheumatica, 17 and elderly-onset PsA has been similarly linked to higher disease activity, functional impairment, and more systemic inflammation, compared to young patients.18–20 Therefore, the higher disease burden, combined with the intrinsic frailty of aging people, 21 may drive older patients to more frequently resort to emergency care for new-onset inflammatory arthritis. Furthermore, it is worth noting that a proportion of patients later receiving a diagnosis of inflammatory arthritis attended the Emergency Department for unrelated reasons. Although beyond the scope of this study, unconventional putative associations may have been missed, as is the case of infectious events preceding or mimicking autoimmunity.22–25 Larger datasets and more robust follow-up could allow for deeper exploration of this aspect.

Despite the adoption of several computational strategies to mitigate overfitting (class weighting, dropout regularization, and early stopping), this issue remains a concern. The model exhibited fair performance on the training sets but showed limited generalizability, as evidenced by a marked decline in performance metrics on the test sets. Pooling PsA and RA cases into a combined “inflammatory arthritis” group did not yield substantial improvements and probably should not be used as an approach for future studies. Moreover, efforts to interpret the model’s decision-making through qualitative techniques proved inconclusive, showing substantial lexical overlap between correctly and incorrectly classified notes, and many high-weighted terms lacking proper clinical relevance. Inconclusive results were also observed after manual review of clinical notes. On the one hand, this supports the need to develop proper feature selection techniques; on the other, we cannot exclude that a proportion of false negatives was attributable to data quality insufficient to support adequate classification. These findings underscore a key limitation of complex machine learning models, namely the difficulty in interpreting their internal logic, often referred to as a “black box.” Indeed, NLP models are characterized by autoregression and lack the weighing of competing risks and the Bayesian approach to reasoning that are, and will always be, unique to human thought. 26 Implementation of cutting-edge techniques appearing on the landscape of NLP should be further evaluated to overcome these limits 27 in the proposed scenario.

This study represents the first attempt to assess NLP in detecting inflammatory arthritis from clinical documentation in a non-specialist setting. One of its strengths lies in the comprehensive sampling strategy, which aimed to review all patients receiving a first Rheumatology evaluation for RA or PsA during the specified timeframe and to include all of those admitted to the Emergency Department. The use of propensity score-matched controls, based on triage coding, helped to correct for bias associated with demographic confounders. It also helped simulate a realistic clinical scenario, approximating the population typically encountered by emergency physicians in their clinical activity. Moreover, the NLP approach leveraged state-of-the-art models: the embedding model was pre-trained on Italian texts and fine-tuned on medical notes, and classification was performed using deep neural networks.

Nonetheless, several limitations should also be acknowledged. First, although the 1:10 matching ratio between cases and controls was chosen to ensure statistical power, it introduced class imbalance, potentially affecting classification performance. Second, albeit representing a real-world scenario, the heterogeneity of unstructured data could have undermined the performance of NLP. Indeed, the model operated on clinical notes authored by many different non-Rheumatology physicians, with likely different degrees of familiarity with rheumatologic conditions, and no standardized format was adopted to record symptoms and physical findings. Routine laboratory results such as inflammatory markers were unavailable in most cases and therefore excluded from the model, which relied solely on free text. This intrinsic variability represents a core limitation. Incorporating structured data, including standardized templates for symptom documentation and systematically performed routine laboratory tests (e.g., inflammatory biomarkers), could help enhance data consistency and improve NLP signal detection in future studies. Also, a multimodal approach that includes imaging data should be explored whenever available. Third, automated selection of cases and controls may have introduced misclassification bias, since some patients could have already been diagnosed with RA or PsA at the time of emergency admission, prior to their first Rheumatology visit at our institution. Clinical note review was performed to mitigate this issue, but it is possible that some cases were still not excluded if past medical history had not been documented in Emergency Department EHRs. Fourth, the single-center design limits the generalizability of results, and multicenter datasets will be essential for validation and further exploration. Finally, as previously discussed, the demographic profile of our cohort likely reflects a degree of referral and selection bias and may not be representative of the broader population of patients with new-onset inflammatory arthritis.

In conclusion, although NLP has previously shown promising results in uncovering patterns that may escape human observation, 28 it demonstrated suboptimal performance in identifying new-onset RA and PsA from clinical notes recorded in a non-specialist setting. Nonetheless, NLP helped show that patients presenting to the Emergency Department with prodromal arthritis-related complaints may exhibit distinct demographic features from typical incident cohorts and, thus, could potentially diverge from “conventional” phenotypes. To improve the clinical utility, interpretability, and generalizability of AI-based tools, future research should focus on methodological advancements, such as adaptation to different languages and registers, enhanced quality and more comprehensive data collection, and the development of more robust and inclusive classification models.

Supplemental Material

sj-doc-1-tab-10.1177_1759720X261431361 and sj-docx-2-tab-10.1177_1759720X261431361 – Supplemental material for Natural language processing to intercept rheumatoid and psoriatic arthritis from clinical notes in the Emergency Department by Antonio Tonutti, Pierandrea Morandini, Cosimo Faeti, Nicoletta Luciano, Saverio D’Amico, Antonio Voza, Victor Savevski and Carlo Selmi in Therapeutic Advances in Musculoskeletal Disease

Footnotes

Acknowledgements

None.

Declarations

Supplemental material

Supplemental material for this article is available online.

Generative AI in the writing process

An LLM (ChatGPT-5, OpenAI) was used to assist with proofreading the manuscript text. After using this tool, the authors reviewed and edited the content as needed and take full responsibility for the content of the publication.