Abstract

Objective

To examine how artificial intelligence and machine learning methods are used to develop chronic disease case definitions using primary care electronic medical records and to evaluate their methodological transparency and clinical applicability.

Methods

A scoping review was conducted according to the Arksey and O’Malley framework and Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) guidelines across Medical Literature Analysis and Retrieval System Online (MEDLINE), Excerpta Medica Database (EMBASE), Cumulative Index to Nursing and Allied Health Literature (CINAHL), Scopus, and Web of Science (2000–2024). Eligible studies applied machine learning to define or validate chronic disease cases using primary care electronic medical record data. Twenty-three studies were analyzed for data sources, machine learning approaches, validation strategies, and knowledge translation activities.

Results

Most studies were from Canada or the United Kingdom and used supervised machine learning, primarily random forests and neural networks. Validation metrics commonly included sensitivity and specificity, although external validation was rare. Interpretable, rules-based definitions could be derived from approximately 50% of the studies, while none described formal knowledge translation.

Conclusions

Machine learning methods can improve electronic medical records-based disease identification and surveillance. However, greater transparency, validation, and clinician–machine learning collaboration are essential to translate these approaches into trustworthy, decision-support tools for primary care.

INPLASY registration number: 2025120002

Introduction

Primary care electronic medical records (EMRs) refer to the clinical records of patients receiving care from family medicine physicians. These records systematically and longitudinally document a patient’s health information, health services used, and prescribed medications, among other data. 1 The utilization of EMRs has grown rapidly with the widespread adoption of computerized documentation systems, providing a comprehensive data source for health research, surveillance, and quality improvement initiatives. Primary care EMR data capture the first point of contact and ongoing management for most health concerns, thus offering rich clinical context, enabling real-time data capture, and facilitating longitudinal follow-up across a broad patient population. 2 These features make primary care EMRs especially valuable for identifying early disease markers, understanding care trajectories, and supporting population-level decision-making.

Using these clinical data effectively for accurate identification of disease cases (EMR phenotyping) is challenging but can be accomplished.3,4 However, due to variability across healthcare systems and the data EMR systems collect, each database requires its own unique operational case definition. 5 For chronic conditions, which are particularly relevant to primary care where early detection and long-term management influence patient outcomes, disease case phenotyping can be particularly challenging because of long latency periods and unclear or evolving diagnostic criteria. 6 Traditional methods for EMR phenotyping rely on clinical documentation, diagnostic criteria, patient-reported outcomes, laboratory data, and imaging; however, they are often time-intensive to create, static, and prone to data limitations such as inconsistency in coding practices across primary care providers or clinics. 7 Recent studies have explored machine learning (ML) methods that can utilize both structured and unstructured EMR data for this purpose, 8 which differ from traditional EMR phenotyping in that they use data-driven algorithms rather than manually defined rules. 9

Despite the growing use of ML in health-related fields, there is limited discussion of its application for developing and validating EMR-based phenotyping case definitions in primary care, with no published systematic reviews currently available. Although a variety of ML techniques have been used, their documentation, rationale, and interpretation remain inconsistent and incomplete, hindering replication and making it difficult for practitioners, researchers, and policymakers with limited ML expertise to interpret and apply the study findings.

According to the World Health Organization, chronic diseases are responsible for nearly 75% of global nonpandemic deaths. 10 Although the definition of “chronic disease” varies across organizations,10–12 these conditions share features of long duration, slow progression, and the need for ongoing management in primary care. The aim of this scoping review was to provide a summary of ML methods used to develop chronic disease case definitions for primary care datasets, including the evaluation and validation metrics reported in these studies (e.g. case definition accuracy). This focused review on chronic diseases includes findings that are directly relevant to the most pressing and common challenges in primary care case identification. Additionally, given that the methods by which ML algorithms select cases may be complicated and/or opaque (e.g. black-box approaches), the secondary objective was to describe the knowledge translation (KT) and dissemination of these results to different user groups, where available. By focusing on ML approaches, we aimed to highlight the potential and limitations of these emerging tools, which may offer more scalable, adaptive, and data-driven solutions to EMR phenotyping in complex primary care settings. A scoping review approach was selected due to the relatively low, but growing, number of publications and the diversity of study designs, outcome definitions, and dataset types in this field. This enabled comprehensive mapping of methodologies and identification of knowledge gaps, 13 rather than quantitative synthesis, which is not yet supported by the current volume of literature.

Methods

A review protocol was developed internally by the research team; it was registered post-study with the International Platform of Registered Systematic Review and Meta-Analysis Protocols (INPLASY, https://inplasy.com/): registration number INPLASY202512002. The procedures for this review were guided by the established recommendations for scoping reviews proposed by Arksey and O’Malley (2005), with subsequent refinements by Levac et al. (2010),14,15 and reported using the Preferred Reporting Items for Systematic reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) guidelines. 16 Following the Population–Concept–Context (PCC) framework, 17 the population of interest was patients represented in primary care EMRs; the concept was the use of ML methods to develop case definitions for chronic diseases; and the context was primary care data, including studies based on EMRs or electronic health records (EHRs) from primary care settings.

Eligibility and exclusion criteria

This review followed strict inclusion and exclusion criteria. Studies were included if they met the following criteria: (a) use of primary care EMR or EHR data, including datasets linked to other sources, provided that the primary care component remained the focus; (b) application of ML methods to develop case definitions for chronic diseases or conditions, with the goal of identifying or refining diagnostic criteria; (c) original, data-based research articles; and (d) articles published in English. Prediction models for incident disease were excluded because their objectives and validation frameworks differ fundamentally from those of phenotyping studies, which aim to identify existing cases.

Studies were excluded if they were published before 2000; primarily used data from settings other than nonspecialty primary care (e.g. outpatient specialty clinics or biobanks); or were reviews or gray literature (e.g. newspaper articles, textbooks, editorials, commentaries, conference abstracts, or letters to the editor). Studies developing tools to predict incident cases of disease, such as predictive modeling, were also excluded. There were no restrictions on study design (e.g. cross-sectional studies, nonrandomized, or randomized trials), patient demographic characteristics (e.g. age, ethnicity, or sex), or the region or country of origin of the database(s) used.

Information sources and search strategy

A research librarian (AS) finalized the search criteria using Medical Literature Analysis and Retrieval System Online (MEDLINE), Excerpta Medica Database (EMBASE), Scopus, and Web of Science. The terms and concepts related to “neural networks/AI/ML concept,” “EHR/EMR concept,” “case selection/definition concept,” and “primary care” were used. The search was performed on 15 December 2022. A secondary search using the same strategies was performed on 5 July 2024 to identify articles published since the first search. The full search strategy is described in Appendix 1. Gray literature sources were not included because of time and human resource constraints and the focus on peer-reviewed research, which helped ensure the methodological rigor of the included studies.

Selection of evidence sources and analyses

A research librarian (AS) imported retrieved articles from the search strategy into Covidence systematic review software 18 and removed duplicate documents. Prior to formal screening, the review team conducted a brief pilot test of the title/abstract form to ensure consistency in the application of the inclusion and exclusion criteria. Two reviewers (AP and CL) then independently completed title and abstract review based on the inclusion and exclusion criteria. All disagreements were resolved through paired or group discussions.

Following the title and abstract review process, each article was assigned to two of five reviewers (AP, CL, SAH, MC, and KK). Reviews were conducted independently, and all differences in perceived eligibility were resolved through discussions among all five reviewers. Summary data from full-text extraction were also compiled by two reviewers independently. This process involved coding through an online data extraction intake form designed by the project team.

Data extraction was performed using a codebook consisting of the following categories: characteristics of the document (author, year of study, and geographic location of the research); characteristics of the study (publication status, publication type, and design); and characteristics of the sample (sample size, age, sex, and disease/condition(s)). Information pertaining to the data source and ML method (method name, number of samples, feature type, feature reduction method, ML class, reference standard, imbalanced classes, resampling of training data, cross-validation, classification and/or regression, and metrics used to optimize the ML model) was also collected. The data extraction form was piloted by all members of the review team using five articles before it was applied to the full set of included studies. Notably, we documented the case definition optimization method (e.g. accuracy, sensitivity, and specificity) and whether the number of rules was presented to the reader. To fulfill the secondary objective, we recorded whether the article described KT and dissemination of these results to knowledge users (e.g. health care practitioners, policymakers, and sponsors). The codebook is included in Appendix 2.

The aim of this review was to map existing ML-based approaches rather than assess the methodological quality of each study; therefore, in accordance with the scoping review methodology, no formal quality appraisal of individual studies was performed. Data were coded, and frequency counts were calculated for each categorical variable. We performed narrative analysis and presented the findings in summary tables.

Results

Figure 1 16 presents a flowchart of the literature search and selection processes in accordance with the PRISMA-ScR guidelines. The initial search of the databases yielded 1272 articles, of which 94 were duplicates. After title and abstract screening, 1093 articles were excluded. Overall, 85 full-text articles were reviewed, and 63 of them were excluded. According to our codebook, only articles that included primary care data, used ML methods, developed case definition(s) for one or more specific chronic conditions, and had been published in a peer-reviewed journal were included. Because our first search was performed in 2022, AP and AS performed a second search in July 2024 to identify more recent publications using the same strategies on the same databases. The second search yielded 436 additional abstracts to be screened. Ultimately, 18 additional full-text articles were reviewed, and 1 additional article was included. A complete list of 23 articles captured during data extraction is presented in Table 1.4,19–40

Flowchart of data screening and reviewing, adapted from Tricco et al., 2018. 16

Details of the included articles (n = 23).

CPCSSN: Canadian Primary Care Sentinel Surveillance Network; SAIL: Secure Anonymised Information Linkage databank; HER: Health Electronic Records; CPRD: Clinical Practice Research Datalink; MAPCReN: Manitoba Primary Care Research Network; EMRPC: Electronic Medical Record Primary Care; EMRALD: Electronic Medical Record Administrative data Linked Database; IPCI: Integrated Primary Care Information; Nivel PCD & DIS: Nivel Primary Care Database and Diagnosis Related Groups Information System; THIN: The Health Improvement Network; OSCAR: Open Source Clinical Application Resource

Characteristics of the included articles

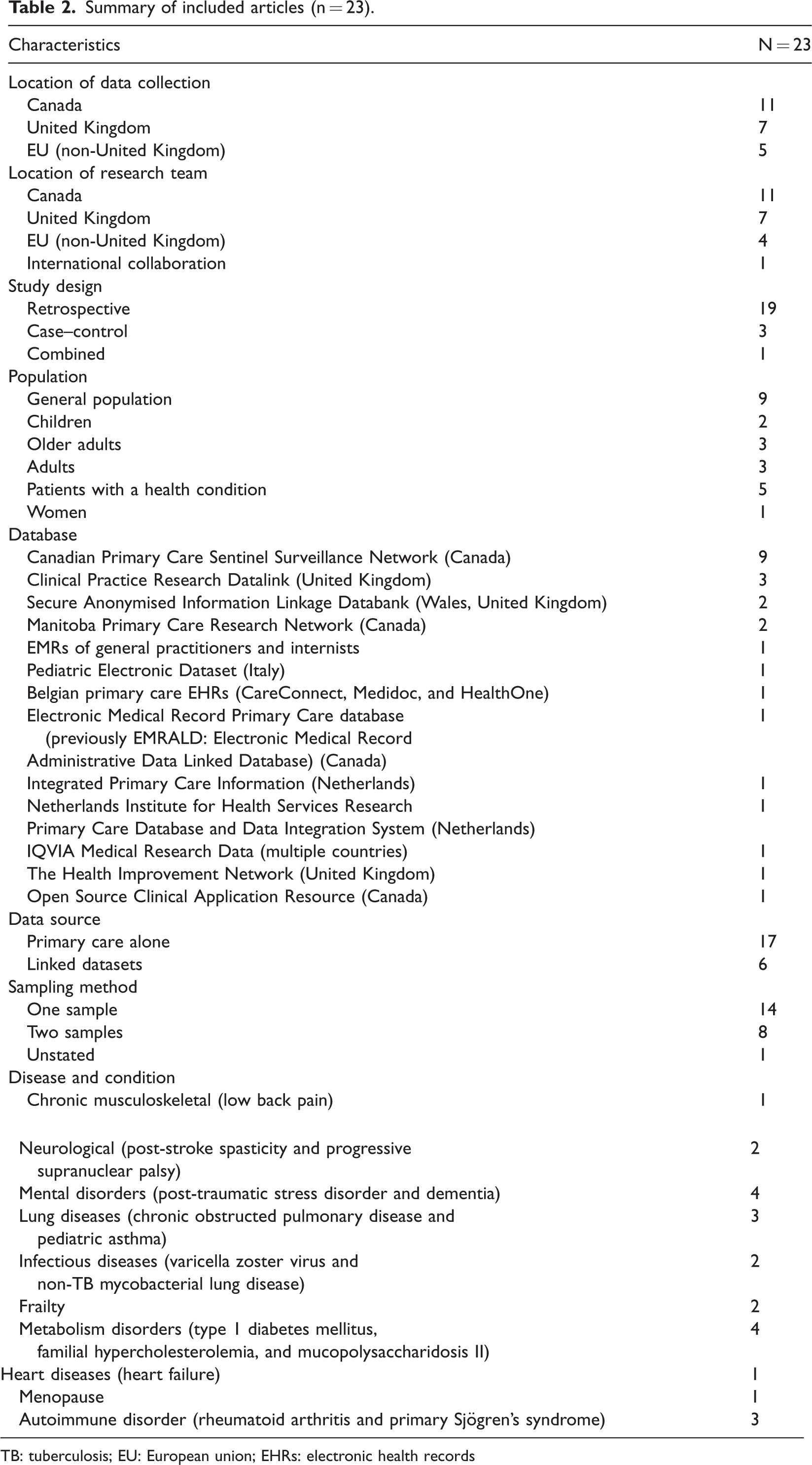

Table 1 lists the region of origin and source database for each article, while Table 2 presents the summary characteristics of each study. Overall, 11 articles were from Canada, while the other 12 were conducted in one or more European countries, with 7 from the United Kingdom. One study was a product of an international collaboration between the US and Germany. 23

Summary of included articles (n = 23).

TB: tuberculosis; EU: European union; EHRs: electronic health records

The most used database was the Canadian Primary Care Sentinel Surveillance Network (CPCSSN) (n = 8; 35%), followed by the United Kingdom’s Clinical Practice Research Datalink (CPRD) (n = 3; 13%). Other databases included the Secure Anonymised Information Linkage (SAIL) databank linked with Cellma (Wales, n = 2; 9%), Pedianet database (Italy, n = 1), Belgian primary care EHRs: CareConnect, Medidoc, and HealthOne (Belgium, n = 1), Integrated Primary Care Information (IPCI) database (Netherlands, n = 1), Nivel Primary Care Database (Nivel PCD) and Diagnosis Related Groups Information System (DIS) (Netherlands, Italy, and Spain, n = 1), IQVIA Medical Research Data (United Kingdom, Netherlands, Switzerland, and Denmark, n = 1), The Health Improvement Network (THIN, UK, n = 1), and Open Source Clinical Application Resource EMR (Canada, n = 1). One study mentioned the use of the EMRs of general practitioners and internists; however, it was unclear which specific database was used. 23

Most often, databases included data collected from the general population with no age restrictions (n = 9; 39%), although some databases included age-stratified populations as follows: adults (aged >18 years, n = 3; 13%), children (n = 2; 9%), older adults (aged >50 and >65 years, n = 3; 13%) or patients with a health condition of interest (n = 5; 22%). In terms of patient sex, one study on mucopolysaccharidosis type II included only male patients; 35 one study on menopause included only female patients; 40 in 11 studies (48%), the proportion of female participants ranged from 47% to 64%; while the other 10 studies did not mention sex distribution.

Seventeen studies (74%) used only a single primary care database, while the remaining six studies used linked databases, including four linkages with hospital and discharge datasets and one linkage with a cost utilization dataset. 31

Most studies used a retrospective cohort design where the whole dataset was used (n = 19; 83%); three studies used a case–control design, in which controls were matched to pre-identified cases based on demographic characteristics, and one study combined the two methods. 21 More than half of the studies (n = 14; 61%) used a single sample to train and test the algorithms and to validate the case definition; seven studies used two separate samples; while one study did not describe how the dataset was formed. 31

In the included articles, case definitions were developed for a range of diseases and conditions. In descending order of frequency, these were metabolic disorders (n = 5; 22%), mental disorders (n = 4; 17%), lung diseases (n = 3; 13%), autoimmune disorders (n = 2; 9%), frailty (n = 2; 9%), infectious diseases (n = 2; 9%), neurological diseases (n = 2; 9%), chronic musculoskeletal disorders (n = 1; 4%), heart diseases (n = 1; 4%), and menopause (n = 1; 4%).

Characteristics of the ML methods used in the included studies

Supplementary Table 1 presents the attributes of the ML methods from the included articles. We considered three phases of ML-based EMR phenotype development: feature generation, algorithm training, and validation. The numbers reported for each characteristic below might not add up to a total of 23 as some studies did not report specific features or methodological details.

1. Feature generation. Studies often used computer-based or literature/expert opinion-based methods to generate the feature list (n = 11 and n = 8, respectively), and four studies used a combination of these two methods.

Most studies used diagnosis codes and medication codes as their feature sets (n = 20 and n = 19, respectively). Use of demographic characteristics was also common (n = 15). Other feature types included unstructured text, laboratory results, existing validated conditions/diseases, health risk factors, biometrics, referral codes, and validated scores. No study included scanned or image-based data.

Only thirteen studies mentioned methods used to reduce the number of features. Those methods included ranking index (n = 5), truncated singular value decomposition (SVD, n = 3), backward elimination (n = 2), chi-square (n = 1), decision tree (n = 1), and code grouping (n = 1).

2. Algorithm training. All studies used supervised ML methods. The reference datasets (i.e. “gold standard”) were primarily labeled through manual review (n = 16). Two studies used ML-based automated review, and two studies used a hybrid of these two methods.

The training datasets were most often re-balanced using data-based methods (i.e. case–control design, n = 13), while two studies used model-based methods, and one study 24 used a combination of the two methods.

Training datasets were resampled using cross-validation (n = 12), bootstrapping (n = 2), or both methods (n = 1); in seven studies, the dataset was not resampled.

In the 20 studies with supervised classification, tree-based methods were the most popular and included random forest (n = 11), traditional decision trees (n = 7), and gradient boosted decision trees (n = 7). Other applied methods were neural networks (NNs) (n = 9), feed-forward NNs (n = 6), support vector machines (SVM, n = 5), naïve Bayes (n = 5), convolutional NNs (n = 1), recurrent NNs (n = 1), least absolute shrinkage and selection operator (LASSO, n = 1), and k-nearest neighbors (KNN, n = 1).

The primary metrics used to optimize the performance of the algorithms were receiver operating characteristic curve (ROC)/area under the curve (AUC) (n = 6), F1-score (n = 5), and sensitivity (recall, n = 3). Other metrics that were used included weighted binary cross-entropy log-loss (wbcell) measure, specificity, positive predictive value (PPV, precision), negative predictive value (NPV), accuracy, Youden J, and misclassification rate.

3. Validation. Three studies used a separate dataset for validation. The validation datasets were left out after feature generation in seven studies, while the same dataset was used for both training and validation in six studies.

4. The case definition. Among 23 studies, 12 developed human-readable (clear rules-based) criteria. For example, problematic menopause was defined as two occurrences within 24 months of International Classification of Diseases, Ninth Revision (ICD-9) code 627 (or any sub-codes) OR one occurrence of Anatomical Therapeutic Chemical (ATC) classification code G03CA (or any sub-codes) within the patient chart. 40 Only eight provided human-readable rules, with the number of rules ranging from 2 to 26, while nine studies produced “black-box” case definitions.

Case definition validation results of ML study (n = 23).

ML: machine learning
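To illustrate how a human-readable definition of this kind can be applied to chart data, the problematic menopause rule quoted above can be expressed as a short Python function. This is a minimal sketch and not the implementation used in the cited study: the record layout, the function name, the approximation of 24 months as 730 days, and the treatment of two entries on the same date as two occurrences are all assumptions made for illustration.

```python
from datetime import date, timedelta

# Assumption: 24 months is approximated as 730 days.
WINDOW = timedelta(days=730)


def has_problematic_menopause(records):
    """Apply the rule-based definition to one patient chart.

    `records` is a hypothetical list of (code_system, code, date_recorded)
    tuples, e.g. ("ICD9", "627.2", date(2020, 1, 15)).
    """
    # One occurrence of ATC G03CA (or any sub-code) is sufficient.
    if any(system == "ATC" and code.startswith("G03CA")
           for system, code, _ in records):
        return True

    # Otherwise require two ICD-9 627 (or sub-code) entries within 24 months.
    dates = sorted(recorded for system, code, recorded in records
                   if system == "ICD9" and code.startswith("627"))
    # After sorting, checking adjacent pairs is enough: if any two entries
    # fall within the window, so do two neighbouring entries.
    return any(later - earlier <= WINDOW
               for earlier, later in zip(dates, dates[1:]))
```

Because every condition is explicit, a clinician can check each step of such a definition against the chart, which is not possible in the same way for the black-box definitions described above.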

Overall, the methodological rigor of the reviewed studies was highly variable. Only three studies used separate datasets for external validation, while the others used a single dataset for both training and testing, which raises the risk of overfitting. Additionally, sample sizes varied widely, ranging from a few hundred to hundreds of thousands of records, with deep learning methods often being applied to the largest datasets. In addition, there was no mention of KT activities in any of the included articles.

Discussion

Geographical distribution and database usage

Our analysis showed that most studies in North America used Canadian data from the CPCSSN. European studies were spread across multiple countries, although the largest number were from the UK and used data from CPRD and THIN. We could not identify any studies from Observational Health Data Sciences and Informatics (OHDSI), Phenotype Knowledge Base (PheKB), or Health Data Research United Kingdom (HDRUK) in our literature search, despite their active involvement in the development of electronic algorithms (case definitions). A possible reason is that their work often spans a wide variety of data sources that may not be centered on primary care. Alternatively, their documentation may have been published on their internal platforms, 41 rather than in peer-reviewed journals, and was therefore not identified by our search strategies.

The absence of US-based studies stands out, given the country’s extensive use of EHRs and strong research infrastructure in both health informatics and ML. This might reflect differences in healthcare systems, data accessibility, and research priorities between the US and other countries such as Canada and the UK, where more studies meeting the review inclusion criteria have been published. The factors that create barriers to large-scale standardized EHR research in primary care settings in the US, such as proprietary data silos and complex access barriers, may differ from those in countries with centralized health systems.42,43

A study by Kwasny et al. 23 exemplifies the potential benefits of international collaboration. By integrating data from the US and Germany, this study highlights how international efforts can broaden research scopes in an effort to enhance generalizability. However, such collaborations also introduce complexities in data harmonization and analysis, which must be carefully managed to achieve reliable results. 44

Given the variation in database structures that are used in clinical practice and for administrative management, the use of linked databases in five studies indicates an encouraging movement toward more comprehensive research methods that integrate primary care with hospital or discharge datasets. This link provides a more holistic view of patient care and outcomes. The integration of diverse data sources reflects a growing recognition of the value of multi-faceted approaches to understanding patient health trajectories, although it may complicate data management and analysis processes and thus require carefully constructed data governance frameworks. 44 Although the EMR–claims linkage can support phenotyping and such studies were eligible for inclusion, none of them met our criteria.

ML methods

The analysis of ML methods employed across studies reveals several noteworthy trends and practices for feature generation, algorithm training, and validation.

Feature generation and selection

Studies used both computer-based methods (n = 11) and literature/expert opinion-based methods (n = 8) for feature generation. A combination of these two methods was used in four studies, highlighting a balanced approach to feature selection. Computer-based feature generation methods are more objective and consistent, 45 which can provide a comprehensive list of low-bias features. This is especially useful for larger, more complicated datasets; however, it may require high-performance computational resources and can result in the generation of complex case definitions without intuitive explanations. 46 In comparison, expert opinion-based methods can be cost-effective and produce clear rules for defining cases, although they can be subject to bias caused by researcher preference and experience. 47

Diagnosis codes (n = 20) and medication codes (n = 19) were the most frequently used features, demonstrating their central role in defining health conditions. Demographic characteristics (n = 15) were also commonly used, reflecting the importance of accurate contextual factors in health research; however, features derived from laboratory test results were used less often, and no study used features derived from scans or images. This suggests areas for future exploration, as integrating other data types could enhance the accuracy of case definitions. Furthermore, if data on social determinants of health and patient lifestyle are available, these variables may provide useful features for EMR phenotyping.

Algorithm training

All studies in this review employed supervised ML methods, and the majority relied on a manually reviewed reference dataset (n = 16). This reliance on manual review (or manual annotation) underscores the importance of accurate and reliable data labeling in developing effective case definitions. However, this approach can be time consuming and expensive because it depends on the availability of expert reviewers; hence, the size of the reference set may be limited. 7 Other methods that can be applied to create a reference dataset in a faster and more cost-effective way, such as automated labeling and crowdsourcing, 48 are less frequently documented in or absent from primary care research.

Most studies addressed class imbalance through data-based methods (n = 13) using a case–control design, which involves the addition of patients who are likely to be positive for the disease, thereby artificially increasing the disease prevalence. Typically, a majority of the dataset contains patients who do not have the disease, and performance of the trained model can be biased toward this majority class. Re-balancing positive and negative cases (i.e. classes) is one way to reduce this bias; however, adding or duplicating potential cases or deleting noncases in the dataset can also cause biases as it alters the frequency distribution of some features, which will affect the final ML model. 49 Other methods for tackling class imbalance, such as the use of cost-sensitive models and selection of appropriate validation metrics, can prevent some of the limitations of data-based methods.50,51
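One consequence of artificially increasing disease prevalence can be shown with a short worked example. The sketch below applies Bayes' theorem to compute the positive predictive value (PPV) of a case definition at different prevalences; the function name and the numerical values are illustrative assumptions and are not drawn from any included study.

```python
def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """PPV implied by a given sensitivity, specificity, and prevalence."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1 - specificity) * (1 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Hypothetical case definition with 90% sensitivity and 95% specificity.
enriched = ppv_at_prevalence(0.90, 0.95, prevalence=0.50)    # case-control sample
population = ppv_at_prevalence(0.90, 0.95, prevalence=0.05)  # source population

print(f"PPV in enriched sample:   {enriched:.2f}")    # about 0.95
print(f"PPV in source population: {population:.2f}")  # about 0.49
```

Under these assumed values, sensitivity and specificity are unchanged, yet the PPV observed in a 1:1 case-control sample is roughly twice the PPV expected at a 5% prevalence, which is why prevalence-dependent metrics estimated on re-balanced data need to be interpreted with care.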

Cross-validation (n = 12) was the most common resampling technique; however, the lack of resampling in seven studies could limit the generalizability of the findings. 52
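For readers less familiar with this technique, the following minimal sketch shows how k-fold cross-validation partitions a dataset so that every record is used for testing exactly once. The function name is hypothetical, and the sketch omits shuffling and stratification by case status, which are commonly applied in practice.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k folds."""
    # Distribute any remainder so that fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, test
        start += size


# Example: 10 records split into 3 folds.
for train, test in k_fold_indices(10, 3):
    print("train:", train, "test:", test)
```

Model performance is then averaged over the k test folds, which gives a more stable estimate than a single train-test split of the same data.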

The most popular classification algorithms were tree-based methods such as random forest (n = 11) and gradient boosted decision trees (n = 7). Black-box methods, including NNs (n = 9) and support vector machines (SVM, n = 5), were also employed, indicating the range of approaches that can be used in case definition development.

Although black-box ML models, including those employing deep neural networks, can be powerful tools for classification, they create significant interpretability issues in the final case definition. This can be problematic in clinical environments as they may require transparent definitions for both trust and adoption. The patient classification process of these models remains unclear, which makes it challenging for clinicians and researchers to validate or dispute their results. The lack of transparency in these models may create ethical concerns as it reduces accountability while increasing the risk of bias and reducing the ability to generalize findings, especially if the training data underrepresents the diversity of the modelled population. Tools such as Shapley additive explanations (SHAP) and local interpretable model-agnostic explanations (LIME) are explainability methods that highlight which features contribute most to a model’s case/noncase classification and may also support transparency by providing insight into decisions made by black-box models. 53

Validation practices

Only three studies used separate datasets for validation, while many studies used the same dataset for both training and validation. The limited use of separate validation datasets introduces three major limitations: (a) overfitting, wherein the model learns the specifics of the training data rather than general patterns; (b) reduced generalizability, which is a model’s ability to perform well on new, unseen data that is not part of the training process; and (c) introduction of biases, errors, or inaccuracies in a model’s predictions due to certain assumptions or flaws in data collection, processing, and/or evaluation processes. The seven studies that held out a validation subset performed the split into training/testing and validation datasets after feature generation. This method is more robust than using the same dataset for both training and validation. However, it has limitations because feature generation based on the entire dataset can lead to data leakage, potentially resulting in inflated performance estimates and less reliable generalizability assessments. 49
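The ordering that avoids this form of leakage can be sketched as follows: records are split first, and features are then ranked using the training portion only. This is an illustrative sketch rather than a method taken from any included study; the function name, the representation of each patient as a set of codes, and the simple ranking by difference in code frequency between cases and noncases are all assumptions.

```python
import random


def split_then_select(patients, labels, top_k=2, test_fraction=0.3, seed=0):
    """Hold out a test set first, then rank features on training data only.

    `patients` is a hypothetical list of sets of codes; `labels` is a list
    of 0/1 reference-standard labels in the same order.
    """
    indices = list(range(len(patients)))
    random.Random(seed).shuffle(indices)
    n_test = int(len(indices) * test_fraction)
    test_idx, train_idx = indices[:n_test], indices[n_test:]

    def rate(code, group):
        # Proportion of patients in `group` whose chart contains `code`.
        return sum(code in patients[i] for i in group) / max(len(group), 1)

    cases = [i for i in train_idx if labels[i] == 1]
    noncases = [i for i in train_idx if labels[i] == 0]

    # Candidate features come from training charts only, so the held-out
    # records cannot influence which features are selected.
    codes = set().union(*(patients[i] for i in train_idx))
    ranked = sorted(
        codes,
        key=lambda c: (-abs(rate(c, cases) - rate(c, noncases)), c),
    )
    return ranked[:top_k], train_idx, test_idx
```

Performing the ranking before the split would instead let information from the held-out records shape the feature list, which is the leakage pathway described above.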

For validation metrics, sensitivity and specificity were the most frequently reported, reflecting a focus on evaluating the accuracy of the resulting case definitions in patient classification. Sensitivity measures the ability of a case definition to correctly identify true positive cases, while specificity reveals how well true negatives are captured. These metrics are essential when assessing how well a model-defined case definition aligns with a reference standard, such as clinician adjudication or chart review. Several studies have also reported metrics that assess the performance of the ML model itself, such as ROC/AUC and F1-score, typically derived from validation or test sets during model development. ROC/AUC offers a global view of the model’s discriminative ability, assessing the functional relationship between sensitivity and specificity, while the F1-score balances precision (positive predictive value) and recall (sensitivity) to quantify performance in imbalanced datasets. Given that there are two distinct purposes of validation metrics (evaluating the ML process versus validating the final case definition), future studies should clearly report both and consider using a diverse set of metrics to capture a comprehensive picture of model and case definition performance. 54
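The metrics discussed above follow directly from the four cells of a confusion matrix comparing a case definition with the reference standard. The sketch below uses the standard formulas; the function name and the counts in the example are hypothetical, and the function assumes that no denominator is zero.

```python
def case_definition_metrics(tp, fp, fn, tn):
    """Compute common validation metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)   # recall: true cases correctly identified
    specificity = tn / (tn + fp)   # noncases correctly identified
    ppv = tp / (tp + fp)           # precision
    npv = tn / (tn + fn)
    f1 = 2 * ppv * sensitivity / (ppv + sensitivity)
    youden_j = sensitivity + specificity - 1
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "ppv": ppv,
        "npv": npv,
        "f1": f1,
        "youden_j": youden_j,
    }


# Hypothetical validation sample of 1000 charts with 100 true cases.
metrics = case_definition_metrics(tp=80, fp=10, fn=20, tn=890)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Reporting the underlying counts alongside the derived metrics allows readers to recompute any measure that a given study did not present.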

KT

The variation in case definition development, from human-readable criteria to black-box approaches, reflects differing research priorities and methodologies. Of the reviewed studies, 12 developed human-readable criteria; eight of these provided detailed rules. In contrast, nine studies employed black-box models that, while potentially offering more sophisticated case discrimination, lacked simple interpretability. As end users of these case definitions, including health researchers, physicians, and policymakers, often prioritize transparency and interpretability, 55 human-readable criteria tend to be more widely accepted. However, none of the included studies explicitly addressed KT plans or activities, underscoring a gap in ensuring the practical application of these case definitions.

Our review highlights the need for more studies that explore, develop, and validate ML approaches tailored to primary care datasets. Furthermore, the absence of contributions from the US is notable and surprising, considering the country’s large, diverse population and the widespread adoption of EHRs, and deserves investigation. Future research should also address the knowledge gap regarding data science among healthcare professionals; educating health workers about ML methods and fostering collaboration across different user groups will benefit patients and researchers while strengthening the health care system. Finally, and most importantly, our findings underscore a need for greater transparency in the documentation of ML workflows. When processes are poorly described, particularly in studies using black-box models, it becomes challenging for other researchers to interpret, apply, and/or replicate case definitions. Improving methodological transparency and standardization is essential for advancing the field and enabling the broader adoption of ML-generated case definitions across diverse healthcare contexts. Future studies should investigate interpretable ML methods in combination with explainability tools to achieve a performance–transparency balance.

Notably, none of the included studies described formal KT activities, which were the focus of our second objective, highlighting a major gap in ensuring that these findings reach clinical or policy end users. This gap may be attributable to several factors: most ML phenotyping studies prioritize methodological performance over end-user implementation, resulting in limited clinician engagement or co-design; the use of black-box models reduces interpretability and makes it difficult for clinicians and policymakers to understand how cases are identified; and the absence of standardized reporting frameworks for ML phenotyping contributes to limited reproducibility and portability across settings.

Although no consensus framework exists for reporting ML-based EMR phenotyping studies, several core elements emerged as critical for transparency and reproducibility: (a) clear description of feature sources and feature reduction methods; (b) explicit reporting of the reference standard used to label cases; (c) documentation of class imbalance and the techniques used to address it; (d) specification of model development procedures, including train–test splits and cross-validation; and (e) standardized reporting of key performance metrics such as sensitivity, specificity, PPV, NPV, F1-score, and AUC. Future methodological work could build upon existing guidance such as Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis (TRIPOD)-ML 56 and Reporting of studies Conducted using Observational Routinely collected Data (RECORD)-ML 57 to develop reporting standards tailored to EMR-based phenotyping.

Limitations

This review has certain limitations. First, there is no universal keyword for the concept of case definition or EMR phenotyping; therefore, although we included the most common terms, we may have missed relevant articles that use alternative terminology. Second, as ML is a rapidly evolving field with a growing body of literature, our secondary search in August 2024 might have missed some of the latest publications. Third, we excluded conference abstracts and non-English full texts, which could have contained valuable information. Fourth, our search criteria focused on “primary care” and “ML” in titles and/or abstracts, potentially overlooking studies where these keywords appeared only in the full text. Fifth, the substantial heterogeneity across studies with respect to design, data sources, chronic condition definitions, and validation metrics limits direct comparison of the findings. Sixth, as no formal quality appraisal was undertaken, our findings reflect the scope and reporting quality of the included literature and should not be interpreted as an assessment of study validity. Finally, post-study registration of the review may have introduced bias in our results.

Conclusion

We found that most studies that develop and validate case definitions for chronic diseases in primary care EMR data are conducted in Canada and the UK. A diverse range of ML methods, database structures, and phenotyping approaches has been used; however, no consensus has been reached regarding the preferred methods for case identification. In addition, the limited use of robust validation techniques and the lack of clear documentation are barriers that prevent ML from being widely understood and used by health care researchers, health care providers, and policymakers. More research is needed to explore the potential use of ML in primary care, with a particular focus on methodological transparency and standardization. This could facilitate the practical application of these ML techniques in primary care settings, ultimately improving chronic disease management and patient care outcomes.

Supplemental Material

Supplemental material, sj-pdf-1-imr-10.1177_03000605251412076 for Use of machine learning for chronic disease case identification in primary care settings: A scoping review of methods, validation, and clinical relevance by Anh NQ Pham, Cliff Lindeman, Michael Cummings, Sylvia Aponte-Hao and Katie Kjelland in Journal of International Medical Research

Footnotes

Acknowledgments

We thank Alison Sylvak, MLIS, PhD, University of Alberta librarian, for her assistance in refining and translating the database search strategies. We also thank her for conducting the search and contributing to content development. We acknowledge the use of AI-assisted tools for grammar and clarity refinement only; no generative AI was used for conceptual development, analysis, or interpretation of findings.

Author contributions

All authors contributed to the development of the protocol, participated in screening and reviewing of the articles, provided critical revisions to the manuscript, and approved the final version for submission.

Declaration of conflicting interests

The authors declare no conflicts of interest.

Funding

No funding was received for this work.
