Abstract

Inflammatory bowel disease (IBD) is a chronic and relapsing immune-mediated condition with a rising global prevalence. Endoscopic diagnosis, monitoring and surveillance currently depend on individual endoscopists, introducing subjectivity, variability, delays and potential diagnostic discrepancies. Artificial intelligence (AI) is poised to transform these processes. To date, most AI applications have focused on ulcerative colitis (UC) severity assessment, demonstrating promising results in replicating human evaluation, standardizing severity evaluation and facilitating the application of more complex scoring systems. Research into AI for Crohn’s disease (CD) has lagged behind UC, due to challenges such as disease heterogeneity and transmural extension; nevertheless, significant progress has been made to automate capsule endoscopy readings for CD. Beyond the grading of disease severity, AI is also being explored for tasks such as identifying dysplastic lesions, differentiating IBD from other conditions, assessing intestinal barrier permeability, guiding treatment decisions and integrating data from multiple omics, though studies in these areas remain exploratory. This review examines the current landscape of AI applications in IBD endoscopy, summarizes key studies in the field and explores the future potential of AI in IBD care.

Plain language summary

Inflammatory bowel disease (IBD), including both ulcerative colitis (UC) and Crohn’s disease (CD), is a long-term condition affecting the digestive system and its prevalence is increasing worldwide. Currently, the diagnosis and monitoring of IBD by endoscopy relies heavily on the expertise of individual endoscopists, which can lead to differences in interpretation and delays in making the correct diagnosis. Artificial intelligence (AI) has the potential to improve this process by making it more accurate and consistent. Most AI research to date has focused on ulcerative colitis. AI systems have shown promise in assessing disease severity, providing more consistent assessments and allowing for more accurate scoring methods. Research into AI for Crohn’s disease has been slower due to the disease’s complexity and the deeper tissue involvement, although significant progress has been made in automating capsule endoscopy reading for CD. Beyond grading disease severity, AI is also being explored for detecting precancerous changes, differentiating IBD from other diseases and even guiding treatment decisions. However, these applications are still at an early stage. This review looks at the current role of AI in IBD endoscopy, highlights key studies and discusses how AI could shape the future of IBD care.

Keywords

Introduction

Artificial intelligence (AI) refers to the ability of machines to perform tasks such as learning and problem-solving, typically associated with human cognition. Through continuous learning and processing of large datasets, AI systems could, at least in theory, one day surpass humans in detecting patterns, opening the door to personalized medicine.

Deep learning (DL), a prominent branch of AI, leverages multi-layered neural networks to learn complex data representations. Among these, convolutional neural networks (CNNs) are particularly effective for image and video analysis, making them a cornerstone of many DL applications in medical imaging.1,2

Gastrointestinal (GI) endoscopy is a particularly fertile ground for AI applications because of the wealth of image and video data generated and stored during endoscopic procedures. Indeed, computer-aided detection (CADe) systems for polyps were among the first AI applications approved for use in medicine.3,4

The heterogeneity of inflammatory bowel disease (IBD), its unpredictable course and the difficulty of measuring its severity have sparked interest in AI-based tools to address these issues. To date, most applications of AI in IBD have focused on detecting and assessing disease activity to reduce subjectivity and improve the prediction of disease course. However, other tasks are also being explored, such as identifying dysplastic lesions, differentiating IBD from other conditions and guiding treatment decisions (Figure 1).

Opportunities and challenges of AI in IBD endoscopy.

In this manuscript, we will review the most relevant studies using AI applications to interpret and improve endoscopy, including advanced endoscopic tools and capsule endoscopy (CE), in the setting of IBD. Finally, we will discuss future developments and limitations.

Disease monitoring

Ulcerative colitis

Endoscopy is the cornerstone of treat-to-target management of UC, and ‘mucosal healing’ is associated with reduced steroid use, fewer hospitalizations and colectomies, and improved quality of life.

Endoscopic activity in UC is assessed using various scoring systems, including the Mayo endoscopic subscore (MES) and the ulcerative colitis endoscopic index of severity (UCEIS).

However, high interobserver variability limits the reproducibility of these scores.

Agreement among physicians tends to be higher for more severe disease and lower for mild or moderate activity. For example, Travis et al. 5 found an interobserver agreement of 76% for severe disease, but only 37% for moderate disease and 27% for remission. Such inconsistencies are problematic in clinical trials, where accurate outcome measures are essential. To overcome this, most clinical trials now rely on central reading by blinded reviewers to reduce bias, although this process is expensive and imperfect. AI offers a faster, more cost-effective and more standardized solution (Table 1).

Main studies on AI-based tools for UC monitoring.

AI, artificial intelligence; MES, Mayo endoscopic score; UC, ulcerative colitis; UCEIS, ulcerative colitis endoscopic index of severity; VCE, virtual chromoendoscopy.

The first proof-of-concept application of AI to IBD endoscopy was reported in 2019 by Stidham et al. Their CNN model distinguished images showing endoscopic remission (MES 0 or 1) from those showing moderate-to-severe disease (MES 2 or 3) with an accuracy of 92.8% and strong agreement with human reviewers (κ = 0.84), nearly equivalent to the agreement between human endoscopists (κ = 0.86). 6
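The κ statistic quoted here corrects raw agreement for the agreement expected by chance. As a minimal sketch, assuming four-level MES readings as input, the code below collapses them into the binary remission (MES 0 or 1) versus moderate-to-severe (MES 2 or 3) split and computes unweighted Cohen's κ between a model and a reader. The function names and readings are hypothetical illustrations, not the study's code or data.

```python
from collections import Counter


def binarize_mes(score):
    """Map a Mayo endoscopic subscore (0-3) to 0 = remission (MES 0-1) or 1 = active (MES 2-3)."""
    if score not in (0, 1, 2, 3):
        raise ValueError(f"MES must be 0-3, got {score}")
    return 0 if score <= 1 else 1


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of categorical labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the two raters' marginal frequencies, summed over categories
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / n**2
    if expected == 1:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical readings of the same 8 images by a model and a human reviewer
model_mes = [0, 1, 2, 3, 1, 0, 2, 3]
reader_mes = [0, 1, 2, 2, 2, 0, 1, 3]
model_bin = [binarize_mes(s) for s in model_mes]
reader_bin = [binarize_mes(s) for s in reader_mes]
print(round(cohens_kappa(model_bin, reader_bin), 3))
```

Here the raters agree on 75% of images, but because half of that agreement would be expected by chance, κ is only 0.5.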

Soon after, Takenaka et al. published a neural network-based system designed to classify endoscopic images of UC in remission, defined as a UCEIS of 0, a stricter definition than MES 0 or 1. The study used a larger dataset (40,758 vs 16,514 images) and a more balanced split between training and testing sets, which may account for its slightly lower accuracy (90.1%) and reviewer agreement (κ = 0.798) but suggests greater potential generalizability.

The same study went a step further, training the model to also predict the corresponding histological activity or remission, for which it achieved 92.9% accuracy and a κ of 0.859 compared with pathologists. This high agreement with histology suggests that AI could potentially reduce reliance on invasive biopsies, streamline diagnostic processes or guide endoscopists on where to take biopsies. More importantly, it demonstrated that AI models can predict endpoints (histology) that differ from their inputs (endoscopy). 7

While both studies showed that neural network-based models could achieve high diagnostic performance, they relied on still images, introducing potential selection bias. A real colonoscopy includes moments of suboptimal vision due to poor lighting, bubbles, debris or other obstacles, whereas images captured in such conditions are typically excluded from still-image datasets.

Gottlieb et al. advanced AI applications by incorporating real-time video analysis of colonoscopies. Their model achieved accuracy rates of 97% for UCEIS-defined remission and 95.5% for the MES. Agreement with central readers was equally robust, with weighted κ values of 0.844 (95% confidence interval (CI), 0.787–0.901) for the MES and 0.855 (95% CI, 0.80–0.91) for the UCEIS. 8

Similarly, Takenaka et al. presented an updated model that processes full colonoscopy videos, evaluated in a prospective multicentre study. Their system identified endoscopic remission (UCEIS ⩽ 1) with 82% sensitivity and 95% specificity and showed strong agreement with central readers, with an intraclass correlation coefficient of 0.93. These models represented a significant innovation as the first to assess UC severity in full-length colonoscopy videos, avoiding the selection bias inherent in still images and enhancing clinical applicability through real-time functionality. 9

These studies also raise the question of whether the reference score used to train a model affects its performance. Indeed, Gottlieb’s findings suggest that the UCEIS-based model performed better than the MES-based one, perhaps because the UCEIS allows the system to anchor its classification on more specific features. This mirrors a common observation in clinical practice: more detailed scoring systems provide more accurate assessments at the cost of practicality, as with the UCEIS, which is more accurate than the MES but less widely used.10,11

Supporting these findings, a meta-analysis showed that AI models trained with the UCEIS performed significantly better, with a sensitivity of 93.6% (95% CI, 87.5–96.8%) compared with 82% (95% CI, 75.6–87%) for the MES (p = 0.003), and a higher positive predictive value (PPV; 93.6% vs 83.6%, respectively; p = 0.007). 12
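Sensitivity and PPV, like the other performance figures quoted in this section, come from a 2×2 table of model calls against the reference reading. A minimal sketch with made-up counts, taking active disease as the positive class (an assumption; studies differ in which class they treat as positive):

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    """2x2 counts of model calls versus the reference reading (positive = active disease, assumed)."""
    tp: int  # active disease called active
    fp: int  # remission called active
    fn: int  # active disease called remission
    tn: int  # remission called remission

    def sensitivity(self):
        return self.tp / (self.tp + self.fn)

    def specificity(self):
        return self.tn / (self.tn + self.fp)

    def ppv(self):
        return self.tp / (self.tp + self.fp)

    def npv(self):
        return self.tn / (self.tn + self.fn)


# Hypothetical counts for illustration only, not data from the meta-analysis
cm = ConfusionMatrix(tp=88, fp=6, fn=12, tn=94)
print(f"sensitivity={cm.sensitivity():.3f} specificity={cm.specificity():.3f} "
      f"PPV={cm.ppv():.3f} NPV={cm.npv():.3f}")
```

Unlike sensitivity and specificity, PPV and NPV depend on how common the positive class is in the test set, so they are not directly comparable across cohorts with different case mixes.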

Another study, comparing two models trained with the UCEIS and with the PICaSSO score (Paddington International virtual ChromoendoScopy ScOre), which is even more detailed than the UCEIS, found the PICaSSO-trained model to be slightly more accurate. 13 Taken together, these studies support the idea that AI could facilitate the use of more sophisticated scores, which are known to be more accurate but are less commonly used in practice because they are time-consuming. 14

While most early models focused on binary classification (remission vs active disease), recent work has aimed at building multiclass models to distinguish between degrees of severity, a task that remains clinically challenging. One notable example is the UC-Dense-Net model, which combines convolutional and recurrent neural networks. By integrating temporal information across video frames, the model achieved up to 4% higher accuracy and 3.5% better precision compared to previous methods, facilitating better detection of subtle inflammation.15,16

Another major limitation of traditional endoscopic scoring systems for UC is that severity is graded rigidly on the most severely affected segment, overlooking activity in other bowel regions. For example, a patient with severe pancolitis may achieve substantial healing throughout most of the colon but retain a single persistent ulcer in the rectum; the MES would nevertheless remain unchanged, misrepresenting the true disease burden. 17 AI has the potential to solve this, and several approaches have already been proposed, such as dividing the colon into predefined sections so that the system autonomously assesses inflammation at multiple points within each video, 19 or having the AI score activity on a continuous scale from 0 to 10. 18
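One simple way to turn a four-class MES classifier into a continuous score is to take the probability-weighted expected MES and rescale it to 0–10. The sketch below is a hypothetical illustration of that general idea, assuming per-frame class probabilities (for example, a softmax output) are available; the cited study's actual method may differ.

```python
def continuous_score(class_probs, max_class=3, scale=10.0):
    """Hypothetical continuous score: expected MES (sum of k * p_k) rescaled from [0, max_class] to [0, scale].

    class_probs: probabilities for MES 0..max_class, e.g. a classifier's softmax output.
    """
    if len(class_probs) != max_class + 1:
        raise ValueError("need one probability per MES level")
    total = sum(class_probs)
    if abs(total - 1.0) > 1e-6:
        raise ValueError(f"probabilities must sum to 1, got {total}")
    expected_mes = sum(k * p for k, p in enumerate(class_probs))
    return scale * expected_mes / max_class


# A frame the model is unsure about (split between MES 1 and MES 2)
print(round(continuous_score([0.1, 0.4, 0.4, 0.1]), 2))
```

Because the score moves smoothly as probability mass shifts between grades, partial improvement can register even when the most likely class does not change.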

The most significant advance to date has been the cumulative disease score (CDS) proposed by Stidham et al. 20 This model analyses endoscopic videos and calculates a cumulative score by summing the contributions of all colonic segments, providing a more complete representation of disease activity. While endoscopists can sum segment scores manually, this process is tedious and feasible only for a limited number of segments. Automating this task with AI substantially improves the efficiency and accuracy of clinical workflows.
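The difference between grading on the worst segment and summing over all segments can be illustrated with the rectal-ulcer example above. The segment list, the 0–3 per-segment scores and the plain sum are simplifying assumptions made for illustration; this is not the published CDS formula.

```python
# Hypothetical segment list; real systems may use finer or video-position-based partitions
SEGMENTS = ["rectum", "sigmoid", "descending", "transverse", "ascending", "cecum"]


def worst_segment_score(segment_scores):
    """Conventional approach: the overall grade is the most severely affected segment."""
    return max(segment_scores.values())


def cumulative_score(segment_scores):
    """Illustrative cumulative burden: plain sum of per-segment scores over all segments."""
    missing = set(SEGMENTS) - set(segment_scores)
    if missing:
        raise ValueError(f"missing segments: {sorted(missing)}")
    return sum(segment_scores[s] for s in SEGMENTS)


# Hypothetical patient before and after treatment: only the rectum remains severe
before = dict.fromkeys(SEGMENTS, 3)
after = {**dict.fromkeys(SEGMENTS, 0), "rectum": 3}

print(worst_segment_score(before), worst_segment_score(after))
print(cumulative_score(before), cumulative_score(after))
```

The worst-segment grade stays at 3 before and after treatment, whereas the cumulative score falls from 18 to 3, capturing the healing of the other five segments.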

The CDS correlated strongly with the MES 21,22 and was more sensitive to improvements in disease activity, a property known as responsiveness. By capturing cumulative recovery across all colonic segments, it better reflects treatment effects, which increases statistical power and reduces the sample size needed to detect a difference in clinical trials. When the CDS was applied to endoscopies from the UNIFI trial, the difference between ustekinumab and placebo was larger than with the MES, halving the sample size needed to detect a difference between the two groups. This makes AI-based quantification of severity a valuable tool for improving the efficiency of clinical trials. 20
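Why a more responsive endpoint shrinks trials follows from the standard normal-approximation sample size for comparing two means, n per group = 2 × (z(1 − α/2) + z(1 − β))² × σ² / Δ², where Δ is the expected between-arm difference and σ its standard deviation. Because n scales with (σ/Δ)², raising the standardized effect Δ/σ by a factor of √2 halves n. The sketch below assumes this simple two-sample model for a continuous endpoint and uses hypothetical effect sizes, not UNIFI data.

```python
import math
from statistics import NormalDist


def n_per_group(effect, sd, alpha=0.05, power=0.8):
    """Sample size per arm for a two-sided, two-sample comparison of means (normal approximation)."""
    z = NormalDist()  # standard normal
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sd ** 2 / effect ** 2)


# Hypothetical endpoints with the same SD; the more responsive one separates the arms by sqrt(2) more
n_less_responsive = n_per_group(effect=0.5, sd=1.0)
n_more_responsive = n_per_group(effect=0.5 * math.sqrt(2), sd=1.0)
print(n_less_responsive, n_more_responsive)  # the second is roughly half the first
```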

More recently, UC-SCALE has built on these advances. This AI-based algorithm processes colonoscopy videos, assigns an MES to each readable frame and maps it to a specific anatomical location. UC-SCALE was trained on a large and heterogeneous dataset of 4326 sigmoidoscopy videos from 1953 patients with UC, collected at 554 clinical sites as part of five clinical trials of etrolizumab. To date, this is the largest dataset used to develop an automated scoring system in IBD, more than four times larger than those used for previously published models. The diversity of this dataset, in terms of both clinical sites and patient demographics, reduces the risk of overfitting and improves the generalisability of the model to new data. To ensure quality, an automated algorithm pre-selected readable frames from the videos.

UC-SCALE showed good inter-rater agreement (quadratic weighted kappa = 0.73) with expert readings provided by a central group of five gastroenterologists. While this level of agreement is not perfect, it is consistent with the heterogeneity of the data. The model also showed significant correlations with clinical markers, including faecal calprotectin (rs = 0.50), C-reactive protein (CRP; rs = 0.45) and Physician Global Assessment (rs = 0.45), all of which were highly significant (p < 0.0001), supporting the clinical validity of UC-SCALE. 23
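Quadratic weighted κ, the statistic reported for UC-SCALE, weights each disagreement by the squared distance between ordinal grades, so confusing MES 1 with MES 2 costs far less than confusing MES 0 with MES 3. A stdlib-only sketch with hypothetical readings; scikit-learn's `cohen_kappa_score(y1, y2, weights="quadratic")` computes the same quantity.

```python
def quadratic_weighted_kappa(rater_a, rater_b, n_classes=4):
    """Quadratic weighted kappa for ordinal labels that are ints in 0..n_classes-1."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("ratings must be non-empty and of equal length")
    # Observed joint distribution of (rater_a, rater_b) labels
    observed = [[0.0] * n_classes for _ in range(n_classes)]
    for a, b in zip(rater_a, rater_b):
        observed[a][b] += 1 / n
    # Marginals, used for the joint distribution expected under independence
    marg_a = [sum(row) for row in observed]
    marg_b = [sum(observed[i][j] for i in range(n_classes)) for j in range(n_classes)]
    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            w = (i - j) ** 2 / (n_classes - 1) ** 2  # quadratic disagreement weight
            num += w * observed[i][j]
            den += w * marg_a[i] * marg_b[j]
    if den == 0:
        return 1.0  # both raters used one identical category throughout
    return 1.0 - num / den


# Hypothetical MES readings by a model and an expert panel
model = [0, 1, 2, 3, 1, 0, 2, 3]
experts = [0, 1, 2, 2, 2, 0, 1, 3]
print(round(quadratic_weighted_kappa(model, experts), 3))
```

All three disagreements in this toy example are one grade apart, so the weighted κ (about 0.83) is much higher than an unweighted κ on the same readings would be.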

Aside from CNNs, other computational methods have been used to detect mucosal inflammation. One such method, the red density (RD) score, isolates colours in endoscopic images and correlates red density with inflammation, thus quantitatively measuring mucosal hypervascularization in real time. This approach is a promising alternative to assessing inflammation through the detection of disease features such as erosions or ulcers, and it does not require human input for training. 24
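As a rough illustration of colour-based quantification, the sketch below computes the fraction of pixels in which the red channel dominates. The dominance ratio, the pixel-list input and the function name are hypothetical choices; the published RD algorithm is more elaborate and its exact computation is not reproduced here.

```python
def red_density(pixels, dominance=1.5):
    """Hypothetical red-density proxy: fraction of pixels whose red channel exceeds
    both green and blue by a factor of `dominance`.

    pixels: iterable of (r, g, b) tuples with 0-255 channel values.
    """
    pixels = list(pixels)
    if not pixels:
        raise ValueError("no pixels")
    red = sum(1 for r, g, b in pixels if r > dominance * g and r > dominance * b)
    return red / len(pixels)


# Hypothetical 4-pixel patches: pale mucosa versus hyperaemic mucosa
pale = [(200, 160, 150), (190, 150, 140), (210, 170, 160), (180, 150, 140)]
inflamed = [(220, 80, 70), (200, 90, 80), (210, 160, 150), (230, 70, 60)]
print(red_density(pale), red_density(inflamed))
```

Because the measure is computed directly from pixel colours, no annotated training images are needed, which is the property highlighted above.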

The RD score showed strong correlations with established indices, including the MES (r = 0.76, p < 0.0001), the UCEIS (r = 0.74, p < 0.0001) as well as with histological activity measured with the Robarts Histopathology Index (RHI; r = 0.74, p < 0.0001) and effectively differentiated active inflammation from histological remission (RHI of ⩽6), with 96% sensitivity and 80% specificity. However, the study did not report detailed metrics of diagnostic performance for endoscopy, which remains to be determined. 25

In a pilot study of 39 patients, the RD score was also tested as a predictor of sustained clinical remission over 5 years, yielding a disappointing AUC of 0.68. This modest performance likely reflects the difficulty of predicting outcomes, even for physicians, especially in the long term, when multiple factors come into play and reduce the relevance of the initial endoscopy. A larger study assessing RD in UC, the PROCEED trial, is ongoing (NCT04408703). 26
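An AUC of 0.68 means that a randomly chosen patient with the outcome receives a higher score than a randomly chosen patient without it about 68% of the time (ties counting half), where 0.5 is chance. This rank-based (Mann-Whitney) computation is sketched below with hypothetical scores, not data from the study.

```python
def auc(scores_pos, scores_neg):
    """AUC as the probability that a positive case outscores a negative one (ties count 0.5)."""
    if not scores_pos or not scores_neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in scores_pos:
        for q in scores_neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical baseline scores for patients who relapsed versus stayed in remission
relapsed = [0.62, 0.48, 0.71, 0.40]
remission = [0.35, 0.50, 0.45, 0.30, 0.55]
print(auc(relapsed, remission))
```

The pairwise loop is quadratic in cohort size, which is negligible for a pilot study of this scale; library routines such as scikit-learn's `roc_auc_score` give the same value more efficiently.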

The same group investigated another computer-aided diagnosis (CADx) system that uses short-wavelength monochromatic LED light to assess mucosal architecture, including crypts and peri-cryptal capillaries, at depths of 50–200 µm. In active UC, increased inter-cryptal distance and crypt wall thickness, associated with inflammatory cell infiltration, are currently difficult to quantify in vivo. Although not included in histological scores, changes in peri-cryptal vascularization are associated with the degree of inflammation. The algorithm outperformed the MES and UCEIS in detecting histological remission, achieving 86% accuracy compared with 74% and 79%, respectively, and a PPV of 0.83 for histological remission compared with 0.65 for the UCEIS and 0.59 for the MES. 27

Another innovative technique for assessing UC is endocytoscopy (EC), a contact-based optical endoscopic system offering up to 520-fold magnification. Combined with vital stains such as methylene blue and crystal violet, EC allows in vivo cellular imaging during GI endoscopy.

Maeda et al. developed a CAD system using ultra-magnification to detect histological inflammation (Geboes score >3), achieving a sensitivity of 74% (95% CI: 65%–81%), specificity of 97% (95% CI: 95%–99%) and diagnostic accuracy of 91% (95% CI: 83%–95%), as well as perfect reproducibility (κ = 1).

The system was further developed into EndoBRAIN, which classifies patients as having histological healing or histological activity. 28 A prospective study showed that EndoBRAIN can predict relapse in patients with UC in clinical remission, with significantly higher relapse rates in the AI-active group (28.4%) than in the AI-healing group (4.9%) over 12 months of follow-up. To date, EndoBRAIN is the only commercially available AI model for IBD endoscopy, although its use is limited by the need for specialised equipment and expertise, and it is available only in Japan. 29

Another system that exploits subtle vascular changes, but is compatible with a wider range of endoscopes, has also been proposed. It provides an objective binary diagnosis of ‘AI-based vascular healing’ or ‘AI-based vascular activity’; relapse rates were significantly higher in the vascular-activity group (23.9%) than in the vascular-healing group (3.0%), although the AUC for outcome prediction remained modest, suggesting that flare prediction remains elusive even with advanced imaging. 30

In summary, by automating the analysis of endoscopic images and videos, AI offers a promising solution for achieving a more standardized, accurate and cost-effective assessment of disease activity both in daily practice and clinical trials. In addition, the integration of high-resolution imaging and novel scoring systems might improve our ability to predict histological remission and therefore outcomes, although accurate flare prediction is still far from being achieved.

Crohn’s disease

While AI has been widely applied to conventional endoscopy in UC, AI applications in CD have focused primarily on CE and, more recently, intestinal ultrasound (IUS). This difference reflects the discontinuous and transmural nature of inflammation in CD and its frequent involvement of the proximal small bowel, all of which pose significant challenges for conventional endoscopy. CE is particularly useful for detecting proximal small bowel lesions, which are associated with poorer long-term outcomes, and CE findings can effectively guide a treat-to-target strategy.31,32 In a recent randomised controlled trial, patients with CD in clinical remission but a high Lewis score (>350; a CE measure of disease activity) benefited from an optimised treatment approach, with a reduced risk of clinical relapse compared with those who continued standard care. 33

Nevertheless, CE adoption remains limited by long reading times, interobserver variability, difficulty in interpreting findings and cost. These limitations present a compelling case for AI-based solutions to improve both efficiency and diagnostic accuracy.

DL models, particularly CNNs, have demonstrated high performance in identifying small bowel erosions, ulcers and strictures, with AUC values exceeding 0.94.34,35 Building on these results, research has progressively shifted towards pan-enteric CE, which allows simultaneous assessment of the small bowel and colon, to improve the clinical utility of CE in CD management. Promising results have been achieved, with a reported sensitivity of 95.7% and specificity of 99.8% for the detection of CD-related lesions. Notably, this model not only detected lesions but also classified disease severity, a critical factor in predicting disease progression and guiding therapy.36–39

However, these studies did not validate their results across different capsule devices and clinical settings. This issue was later addressed in a multicentre study that validated an AI model using data from two different CE platforms across multiple centres in Europe and the USA. Although diagnostic sensitivity (94.6%) and accuracy (86.1%) were slightly lower than in previous studies, this real-world performance underscores the robustness and cross-platform interoperability required for clinical translation. 40

Additionally, CE is emerging as a potential rapid rule-out tool for patients with suspected CD. The AXARO framework achieved a negative predictive value of 97% for IBD and a mean review time of less than 4 min per patient, while maintaining almost perfect agreement with human readers. This approach addresses the growing need to optimize the diagnostic workflow in the face of increasing workload and suggests that AI-assisted CE could serve as a non-invasive option to rule out CD.41–43

Another valuable non-invasive tool for CD monitoring is IUS. Its adoption is increasing, although operator dependence and image interpretation still limit widespread use. AI-driven solutions, including emerging vision transformers (ViTs), have shown potential for automatically detecting inflamed bowel regions on IUS, further expanding the role of AI in CD management.44,45 Significant progress has also been made in applying AI to therapeutic decision-making. Waljee et al. 46 showed that, in patients with active CD, machine learning models using the week-6 albumin-to-CRP ratio can identify long-term responders to ustekinumab by week 8, potentially reducing both costs and delays in achieving remission. These topics, however, are beyond the scope of this review.

Surveillance

The risk of colorectal cancer (CRC) is increased in patients with IBD in proportion to the extent, severity and duration of inflammation. Current guidelines recommend periodic surveillance colonoscopy starting 8–10 years after diagnosis to ensure early detection of dysplastic changes in the colonic mucosa.47,48 Endoscopic surveillance in IBD is considered among the most challenging settings in diagnostic endoscopy because inflammatory and dysplastic changes can look similar.

Various surveillance strategies are possible, including high-definition endoscopy with white light, virtual chromoendoscopy or dye-based chromoendoscopy.49–51 Advanced endoscopic imaging technologies, including confocal laser endomicroscopy and EC, are also being investigated to improve detection. However, the early detection of IBD-associated dysplasia remains a challenge. 52

While numerous CAD systems have been commercialized for the detection of colorectal lesions in the general population, none has been validated specifically for patients with IBD.53,54

For instance, in a multicentre study by Kudo et al. 55 evaluating the accuracy of EndoBRAIN, a system trained on endocytoscopic images, in differentiating neoplastic from non-neoplastic colorectal lesions, patients with IBD were excluded because there were insufficient data for adequate machine learning training. Patients with IBD were likewise excluded from the evaluation of EndoBRAIN EYE, a CAD system with over 90% sensitivity and specificity for detecting colorectal polypoid lesions. 56

Nevertheless, the use of CAD systems designed for sporadic polyps has occasionally been reported in IBD. Using EndoBRAIN, Fukunaga et al. 57 identified a flat lesion with high-grade dysplasia in a patient with long-standing UC, which was subsequently removed by endoscopic submucosal dissection. Similarly, Maeda et al. 58 reported the detection of two low-grade dysplastic lesions in a patient with long-standing UC using EndoBRAIN EYE. Although anecdotal, these cases suggest that such AI systems could be useful for detecting colitis-associated dysplasia and CRC.

However, the diagnosis of colitis-associated dysplasia remains particularly challenging due to the limited visibility of dysplastic lesions – often flat with poorly defined borders – and the high risk of false positives due to mucosal changes induced by chronic inflammation. 59

To address these issues, several CAD systems specifically designed for IBD surveillance are currently under investigation, although not yet clinically validated for routine use.

Yamamoto et al. conducted a pilot study in which CNNs were trained on 862 images to classify IBD-associated neoplasia into two categories: ‘adenocarcinoma/high-grade dysplasia’ and ‘low-grade dysplasia/sporadic adenoma/normal mucosa’. The AI achieved a sensitivity of 72.5%, specificity of 82.9% and overall accuracy of 79.0%, exceeding the overall accuracy of both expert endoscopists (sensitivity 60.5%, specificity 88.0%, accuracy 77.8%) and non-experts (sensitivity 70.5%, specificity 78.8%, accuracy 75.8%). Interestingly, non-experts showed higher sensitivity than experts, which may reflect a less conservative diagnostic approach aimed at avoiding missed lesions even at the expense of specificity.

Although preliminary, this study highlights the potential of AI to improve the accuracy of IBD surveillance, as the model achieved higher overall accuracy than both expert and non-expert endoscopists in classifying IBD-associated neoplasia. 60

In another study, Vinsard et al. first applied a model trained on non-IBD lesions to detect IBD-associated dysplasia and observed poor sensitivity (50%), illustrating the difficulty of identifying dysplasia in IBD and the need for specifically trained systems. The authors then retrained the model on IBD images, achieving promising sensitivity and specificity, particularly on selected dye-based chromoendoscopy images (95.1% and 98.8%, respectively). 61

Finally, Abdelrahim et al. developed a DL AI model for the detection and characterisation of IBD-associated neoplasia. The system was trained on over 18,000 endoscopic images, combining data from both IBD and non-IBD mucosa to improve generalizability and minimize overfitting. Specifically, the training set included images of both flat lesions and inflamed background mucosa with varying degrees of inflammation to address the two major challenges in IBD surveillance. The model was then validated on a separate dataset of 478 images from 30 IBD patients, achieving 93.5% sensitivity and 80.6% specificity for lesion detection, and 87.5% sensitivity and 80.6% specificity for lesion characterization. This approach also took into account regenerative and inflammatory lesions that may macroscopically resemble neoplasia but are histologically benign – such as pseudopolyps – and trained the system to detect and characterize them appropriately. 62

Overall, AI-based surveillance systems for IBD still face significant challenges. Nevertheless, progress is being made, and combining high-sensitivity CADe models for lesion detection with high-specificity models for confirming dysplastic findings is a realistic prospect for the near future.
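The appeal of pairing a sensitive detector with a specific confirmer can be seen from the textbook formulas for serial testing, in which a lesion is called dysplastic only if both tests are positive. Assuming the two tests err independently given the true lesion status (a strong simplification), combined sensitivity is Se1 × Se2 and combined specificity is 1 − (1 − Sp1)(1 − Sp2). The operating points below are hypothetical.

```python
def serial_testing(se1, sp1, se2, sp2):
    """Sensitivity and specificity when a lesion is positive only if BOTH tests are positive.

    Assumes the two tests are conditionally independent given true lesion status.
    """
    for v in (se1, sp1, se2, sp2):
        if not 0.0 <= v <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    sensitivity = se1 * se2
    specificity = 1 - (1 - sp1) * (1 - sp2)
    return sensitivity, specificity


# Hypothetical sensitive detector followed by a specific characterisation model
se, sp = serial_testing(se1=0.95, sp1=0.70, se2=0.90, sp2=0.90)
print(f"combined sensitivity={se:.3f}, specificity={sp:.3f}")
```

Specificity rises above either model alone, but sensitivity falls below both, which is why the first-stage detector needs to be as sensitive as possible.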

Limitations

Despite the advances in the development of AI applications for IBD endoscopy, there are still several limitations.

Most of the models available have been developed and validated in patients with UC. While their performance is promising, these tools are more realistically suited for centralized reading in clinical trials, rather than immediate integration into routine clinical practice. Wider adoption will require external validation across different clinical settings, endoscopic devices and patient populations. This need was recently emphasized in a systematic review which highlighted the lack of external validation in many published studies, limiting the generalizability and real-world applicability of AI tools. External validation should be considered a standard part of the development of robust AI models. 63 Another common limitation is the reliance on binary classification systems – active versus inactive disease – without capturing the nuanced spectrum of inflammation severity. Although recent approaches have introduced multiclass classification and continuous scoring systems, these are still at an early stage. 64

In CD, the challenges are compounded by the limited focus of AI research on conventional ileo-colonoscopy. Most AI models have focused on CE, leaving a significant gap in the development of models applicable to conventional ileo-colonoscopy, which remains the standard diagnostic and monitoring tool in clinical practice.

A further challenge lies in developing reliable AI models for the detection of colitis-associated dysplasia and cancer. These lesions are relatively rare, subtle and heterogeneous, often appearing as flat or poorly demarcated areas within an inflamed mucosal background. Their rarity limits the availability of large training datasets, and their subtle morphology makes them difficult to distinguish, much as AI performance drops in the presence of mild inflammatory changes. Moreover, their heterogeneity and frequent association with pseudopolyps and regenerative changes introduce additional noise, further complicating AI-based detection.

Although recent studies have attempted to address these challenges by including diverse datasets with inflammatory changes and flat lesions, further research and large-scale, multicentre validation are needed.

Another critical issue is the differentiation of IBD from its mimics (Table 2). Despite progress, the available data show that AI still falls short of reliably differentiating IBD from the various forms of colitis that resemble it. Endoscopic image-based algorithms, while promising, do not outperform experienced endoscopists.65,66 This limitation stems primarily from the variability of clinical presentations, which complicates algorithm training in the absence of large and diverse datasets that adequately represent different conditions. One option could be to combine clinical parameters with image-based data to enhance diagnostic accuracy, reflecting the multifaceted nature of human clinical decision-making.66,67 The main challenge in IBD diagnosis lies not in distinguishing UC from CD, where diagnostic accuracy exceeds 90%,41,68,69 but in differentiating IBD from non-IBD conditions, for which accuracy is lower and the clinical cost of misdiagnosis higher.

Main studies on AI-based tools for IBD diagnosis and differentiation from mimics.

AI, artificial intelligence; CD, Crohn’s disease; CE, capsule endoscopy; CLE, confocal laser endomicroscopy; GI, gastrointestinal; IBD, inflammatory bowel disease; UC, ulcerative colitis; WLE, white-light endoscopy.

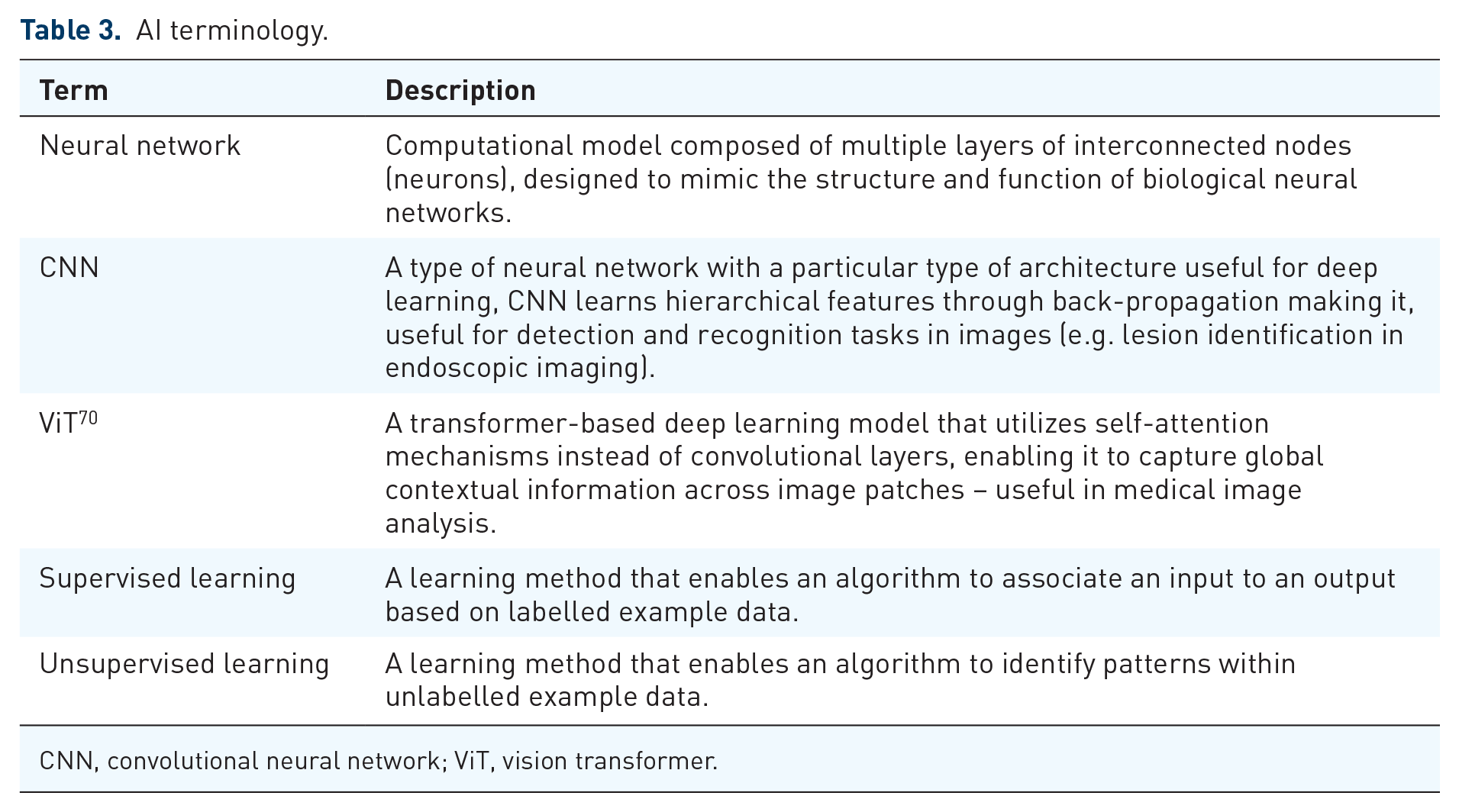

The gap between the technological potential of AI and its clinical implementation remains a significant challenge. In uncontrolled real-world settings, AI models struggle due to flaws in training data, such as selection bias, overfitting due to small datasets, data leakage, underrepresentation of certain subgroups (e.g. minorities or anatomical variants) and the lack of a universally reliable ‘ground truth’. However, advanced approaches such as self-supervised models and ViTs (Table 3) offer promising solutions to some of these challenges by enabling models to learn from large-scale unlabelled data and capture complex patterns with improved generalizability.

Table 3. AI terminology.

CNN, convolutional neural network; ViT, vision transformer.

Addressing these limitations requires a multifaceted strategy. Open data repositories can help mitigate overfitting by providing larger and more diverse training sets, while publicly accessible algorithms promote transparency and reproducibility in AI research. A particularly promising approach is federated learning, in which AI models are trained across multiple institutions without sharing sensitive patient data: each site trains the model locally and shares only the resulting model parameters, which are then aggregated into a common model. This method can help overcome data-sharing barriers, foster collaboration and preserve patient confidentiality.71–74
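To illustrate the mechanism, the Python sketch below implements federated averaging (FedAvg), one widely used federated learning scheme, on a deliberately toy problem: each hypothetical site fits a one-variable linear model to its own data, and only the fitted parameters, weighted by local sample size, are sent for aggregation. Real systems would train deep networks on endoscopic images and add safeguards such as secure aggregation; the site data and function names here are illustrative assumptions.

```python
def local_update(weights, data, lr=0.1):
    """One epoch of stochastic gradient descent on a site's private data.

    Toy model: y = w * x + b with squared-error loss. The raw (x, y) pairs
    never leave this function; only the updated (w, b) is returned.
    """
    w, b = weights
    for x, y in data:
        err = (w * x + b) - y
        w -= lr * err * x
        b -= lr * err
    return (w, b)


def federated_average(site_weights, site_sizes):
    """Average parameters across sites, weighting each site by its sample count."""
    total = sum(site_sizes)
    n_params = len(site_weights[0])
    return tuple(
        sum(params[k] * n for params, n in zip(site_weights, site_sizes)) / total
        for k in range(n_params)
    )


def federated_training(sites, rounds=200, lr=0.1):
    """Repeat: broadcast the global model, train locally at each site, aggregate."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data, lr) for data in sites]
        global_weights = federated_average(updates, [len(d) for d in sites])
    return global_weights


# Hypothetical data held by two institutions; both follow y = 2x + 1.
site_a = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
site_b = [(1.5, 4.0), (2.0, 5.0)]
w, b = federated_training([site_a, site_b])
```

The aggregated model approaches w = 2 and b = 1 even though neither site ever shares its raw data with the other.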

Beyond technical and practical considerations, AI in healthcare also raises ethical issues, particularly regarding responsibility for AI-assisted medical decisions. AI algorithms are often considered ‘black boxes’, meaning that their decision-making processes are not always transparent or understandable to healthcare professionals. If an AI system contributes to a medical error, who is responsible: the developer of the algorithm, the healthcare institution or the medical professional who relied on it? To address this issue, it is crucial to establish clear frameworks for legal responsibility in AI-assisted medical decisions and to improve the transparency of AI models through explainable AI, so that clinicians can interpret model outputs and explain to patients how a decision was made.1,75

Explainability is also important for building trust in AI models, which is necessary for their successful adoption in clinical practice. Equally important is ensuring that healthcare professionals become familiar with AI tools. This may require integrating AI education into medical training and offering continuous professional development programmes to keep clinicians informed about emerging technologies. Moreover, embracing AI in healthcare will demand a cultural shift that addresses scepticism and helps to overcome ‘technophobia’ among practitioners.76

In addition, developing globally accepted safety and efficacy standards for AI applications in healthcare could improve the consistency of AI-driven solutions and ensure the protection of patients’ rights across borders. Currently, there are significant regulatory differences between jurisdictions. The European Union, through the Medical Device Regulation and the Artificial Intelligence Act, has introduced stricter requirements to ensure shared standards on quality and data protection, but this may create additional obstacles for small and medium-sized companies and lead some to reconsider their market strategies. In contrast, the US Food and Drug Administration (FDA) is adopting a more flexible regulatory framework for AI technologies. These regulatory discrepancies can lead to inequalities in the development and deployment of AI solutions. International harmonization of standards could help overcome these challenges, ensure safety and promote innovation.77

An additional consideration is the economic feasibility of AI deployment. The costs of AI models for IBD are currently unknown, as no tool is commercially available. However, extrapolation from existing economic models of AI-assisted colorectal cancer (CRC) screening and polyp detection suggests that AI models could be cost-effective in the long term once the initial investment has been made.78

Only by addressing these challenges with collaborative approaches, appropriate regulations and technological innovations can the full potential of AI in IBD endoscopy be realized.

Future perspectives

Recent advances in AI-assisted endoscopy have seen a shift from traditional CNNs to ViTs, which use self-attention mechanisms to analyse images globally and capture complex relationships across an image (Table 3).79 Unlike CNNs, which focus on local feature extraction, ViTs provide a more comprehensive understanding of complex datasets, enhancing their robustness to image imperfections such as bubbles, debris or suboptimal lighting.
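The global view described above comes from self-attention. As a minimal illustration, the Python sketch below computes single-head scaled dot-product self-attention over a handful of patch embeddings: every patch receives a non-zero weight from every other patch, in contrast to the fixed local window of a convolution. Real ViTs use projection matrices learned from data, multiple heads, positional encodings and many stacked layers; the tiny embeddings and identity projections here are illustrative assumptions.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(patches, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    patches: n x d matrix, one embedding per image patch.
    Returns (outputs, attention_weights); row i of the weights shows how much
    patch i draws on every patch in the image, near or far.
    """
    q = matmul(patches, w_q)
    k = matmul(patches, w_k)
    v = matmul(patches, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]  # transpose of K
    scores = matmul(q, k_t)  # n x n patch-to-patch similarity scores
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Four hypothetical 3-dimensional patch embeddings and identity projections.
patches = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
outputs, attn = self_attention(patches, identity, identity, identity)
```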

A significant breakthrough in ViT applications is their integration with self-supervised learning, a paradigm that allows models to learn from unlabelled data. This overcomes a key limitation of traditional supervised CNNs, which rely on manually annotated datasets that often introduce biases and inconsistencies. A notable real-world application of this approach is the Certai model for disease assessment in UC. By combining ViTs with the self-supervised DINOv2 framework, Certai analyses large endoscopic datasets without requiring manually labelled ground truth. The model is pre-trained with DINOv2 and then refined through expert input, following a hybrid AI-human approach that enhances accuracy and clinical reliability.80,81 Despite these advances, hybrid AI-human models remain essential, as human oversight continues to play a critical role in validating AI-driven decisions. Furthermore, challenges such as class imbalance in medical datasets persist, requiring techniques such as high-frequency balancing, including up-sampling of underrepresented images (e.g. by rotating or otherwise modifying them), and generative adversarial networks that generate synthetic data, to ensure stable model performance across disease severities.79,82
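Up-sampling by rotation can be sketched as follows: images from underrepresented classes are duplicated as rotated copies until every class matches the size of the largest one. This is a minimal Python illustration assuming images stored as 2-D lists of pixel values and hypothetical severity labels; it does not cover the generative adversarial network approach, and in practice such augmentation is applied to the training set only, after the train/test split.

```python
import random


def rotate90(image):
    """Rotate a 2-D image (list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def upsample_by_rotation(dataset, seed=0):
    """Balance classes by adding rotated copies of minority-class images.

    dataset: hypothetical dict mapping class label -> list of images.
    Returns a new dict in which every class has as many images as the
    largest class; the input dict is not modified.
    """
    rng = random.Random(seed)
    target = max(len(images) for images in dataset.values())
    balanced = {}
    for label, images in dataset.items():
        augmented = list(images)
        while len(augmented) < target:
            rotated = rng.choice(images)
            for _ in range(rng.randint(1, 3)):  # 90, 180 or 270 degrees
                rotated = rotate90(rotated)
            augmented.append(rotated)
        balanced[label] = augmented
    return balanced
```

For instance, a hypothetical dataset with six ‘mild’ and two ‘severe’ images would return six images per class, four of the ‘severe’ ones being rotated copies.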

Moreover, AI is increasingly moving towards multimodal approaches that integrate multiple data sources for a more comprehensive understanding of disease, in line with the goals of precision medicine.83 A ‘Fusion Model’ that combines endoscopic and histological data to predict patient outcomes and identify early responders to therapy has recently been presented, although its benefit over assessing each modality separately has yet to be proven.84
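The architecture of the cited Fusion Model is not described here, so the following is only a generic illustration of one simple multimodal strategy, late fusion, in which each modality is scored by its own model and the resulting probabilities are combined. The modality names, probabilities and weights are hypothetical; in practice, weights would be learned or validated on held-out data.

```python
def late_fusion(probabilities, weights):
    """Combine per-modality probabilities with a weighted average (late fusion).

    probabilities: modality name -> probability predicted by that modality's model.
    weights: modality name -> non-negative weight (hypothetical values).
    """
    if set(probabilities) != set(weights):
        raise ValueError("each modality needs exactly one weight")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(probabilities[m] * weights[m] for m in probabilities) / total


# Hypothetical outputs of separate endoscopy and histology models for one patient.
fused = late_fusion(
    {"endoscopy": 0.8, "histology": 0.4},
    {"endoscopy": 3.0, "histology": 1.0},
)
```

Late fusion keeps each modality's model independent, which makes it easy to compare the fused prediction against each modality alone, the comparison needed to show a benefit over separate assessment.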

The studies discussed highlight the trajectory of AI in endoscopy, underscoring the growing importance of self-supervised learning, multimodal approaches and hybrid AI-human models (Figure 2).

Figure 2. Present and future of AI.

Conclusion

AI holds significant promise for transforming IBD endoscopy by enabling more accurate, standardized and accessible care. In the short term, its most realistic application lies in enhancing the consistency and reproducibility of clinical trials. Looking ahead, as more sophisticated and robust models emerge, AI may streamline diagnostic workflows, reduce unnecessary interventions and ultimately improve patient outcomes. However, key challenges remain, including the development of generalizable and transparent algorithms, the navigation of complex regulatory frameworks and the need for strong interdisciplinary collaboration to ensure the design of safe, effective and patient-centred human–AI systems.