Abstract

Links between musicality and vocal emotion perception skills have only recently emerged as a focus of study. Here we review current evidence for or against such links. Based on a systematic literature search, we identified 33 studies that addressed either (a) vocal emotion perception in musicians and nonmusicians, (b) vocal emotion perception in individuals with congenital amusia, (c) the role of individual differences (e.g., musical interests, psychoacoustic abilities), or (d) effects of musical training interventions on both the normal hearing population and cochlear implant users. Overall, the evidence supports a link between musicality and vocal emotion perception abilities. We discuss potential factors moderating the link between emotions and music, and possible directions for future research.

Introduction

Human social communication depends on the exchange and mutual representation of multiple social signals. Among these, vocally expressed emotions are fundamental to human interaction (Grandjean, 2020; Sauter, 2017; Scherer, 1986; Scherer et al., 2016; A. W. Young et al., 2020). While humans perceive emotions efficiently and often automatically, interindividual differences in vocal emotion perception skills have recently become a focus of scientific attention (Mill et al., 2009; Schirmer et al., 2005). For humans, voices and music are both prominent means of auditory communication of emotions. Some researchers have emphasized the similarities in the acoustic features of certain emotions in the voice and in music (Juslin & Laukka, 2003; Scherer, 1995), whereas others reported similarities in the neural circuits involved in recognizing basic emotions from music as compared to voices (Aubé et al., 2015). Accordingly, differences in vocal emotion perception skills could be associated with different levels of musicality. Music forms a central part of human culture, and appreciation of music is interconnected with intense emotional experiences (Schäfer et al., 2013). However, there is huge variation in terms of musical aptitude and musical training. Here, we integrate the currently available research that assessed possible links between musicality—defined as sensitivity and/or talent regarding music in terms of both aptitude and training effects—and vocal emotion perception.

This review adds vocal emotion perception to several previous integrative works that discussed the association of musical training with other nonmusical abilities. Compared to nonmusical peers, musicians show superior perception of pitch (referring to the perceived frequency of a sound) and timbre (referring to the perceived quality or “color” of a sound, which allows a listener to perceive that two sounds of the same loudness and pitch can be dissimilar), as well as superior temporal processing (Kraus & Chandrasekaran, 2010), and those advantages expand from the musical into the vocal domain (Chartrand et al., 2008). Beyond the auditory modality, musicians have better audiovisual and auditory-motor integration, working memory, spatial abilities, executive functioning, general intelligence, as well as speech and language skills (Elmer et al., 2018; Schellenberg, 2001, 2016). At the brain level, musicality is associated with widespread functional and structural differences such as larger grey matter volume and stronger connectivity between areas associated with auditory, motor, and visuospatial functions (Kraus & Chandrasekaran, 2010; Pantev & Herholz, 2011). Against this background of established links between musicality and comparatively distant competencies, the field remarkably lacks systematic integration of evidence concerning the related domain of voices, and vocal emotion perception in particular.

Given the aforementioned benefits of musicality, a link between musicality and vocal emotion perception seems plausible. We considered that individual musicality may be determined by a combination of genetic (“talent”) and environmental factors (“training”), the contributions of which can be difficult to determine. Similarly, underlying mechanisms for the link between musicality and vocal emotion could be determined by both nature and nurture factors. On the nature side, some people might have an innate capacity to perceive fine-grained acoustic structures of both musical and vocal sounds, alongside an inner drive to engage in musical activities. Accordingly, musical and vocal emotional capacities would be linked through genetic factors. This view is in line with Darwin’s protolanguage hypothesis, which claims that music and speech both evolved from the same origin, a musical protolanguage composed of rudimentary vocalizations (Darwin, 1871/2008; Thompson et al., 2012) in which the expression of vocal emotions was a key aspect of communication (Fitch, 2013). Indeed, expression of emotion in music and vocal channels seems to be based on similar acoustic cues, supporting the idea that emotional communication in both channels is intertwined (Juslin & Laukka, 2003). This gives rise to the possibility that capacities in both channels are driven by the same underlying genetic factors, and that there are innate forces that create transfer between musical and vocal capacities.

On the nurture side, it is typically assumed that musical training causes the differences that are observed between musicians and nonmusicians. From this perspective, the acoustic similarity between music and vocal emotions might be a reason why extensive training in the musical domain can lead to an improvement in vocal emotion perception. A more elaborate nurture-based approach is offered by the OPERA hypothesis (Patel, 2011). Although it was originally developed to explain music-to-speech transfer effects, it can also be considered in the context of vocal emotion perception. The OPERA hypothesis states that the benefits of musical training transfer to other domains only when five conditions are met: (1) overlap, (2) precision, (3) emotion, (4) repetition, and (5) attention. Overlap refers to shared neural networks between music and vocal emotion processing. Indeed, neuroimaging data suggest common neural networks for the processing of emotional sounds, including vocal, musical, and environmental sources (Escoffier et al., 2013; Frühholz et al., 2014, 2016; Grandjean, 2020; Schirmer et al., 2012). Core structures include the auditory cortex, the superior temporal cortex, frontal regions, the insula, the amygdala, the basal ganglia, and the cerebellum (Frühholz et al., 2016). Precision refers to the high auditory-motor demands that musical training places on these shared networks. The third condition, emotion, claims that the musical activity has to be perceived as rewarding. The subjective feeling of highly pleasurable experiences, such as “chills” or “shivers-down-the-spine,” is among the main reasons why humans engage in musical activities, and those experiences are associated with brain activity changes in regions involved in reward, emotion, and arousal, including the amygdala, the ventral striatum, midbrain structures, and orbitofrontal and ventromedial prefrontal areas (Blood & Zatorre, 2001; Stewart et al., 2006). Finally, the requirements of repetition and attention stress the point that training-induced benefits depend critically on how frequently and how attentively musical activity is pursued over time. It may take years of active musical engagement to observe stable differences in musicians’ brains compared to nonmusicians’ (Kraus & White-Schwoch, 2017), which can be regarded as truly reflecting training-induced changes (Elbert et al., 1995; Kraus & Chandrasekaran, 2010; Pantev & Herholz, 2011).

As it stands, the link of musicality with vocal emotion perception has received relatively little attention and is poorly understood. This seems surprising given that adequate emotion perception is crucial for well-being and perceived quality of life (Phillips et al., 2010; Schorr et al., 2009). To the best of our knowledge, there has been no attempt so far to integrate the existing evidence in a systematic manner. In this review, we aim to close this gap while including the full range of musical abilities: We survey findings from highly trained musicians but also from people with exceptionally poor musical abilities. We also assess studies that investigate the role of individual differences in terms of musical interests or psychoacoustic abilities. Finally, we include music intervention studies with normal hearing individuals as well as cochlear implant (CI) users, who have significant difficulty recognizing vocal emotions due to degraded auditory input (Jiam et al., 2017), a difficulty that might be reduced with music-based interventions (Paquette et al., 2018).

Systematic Literature Search

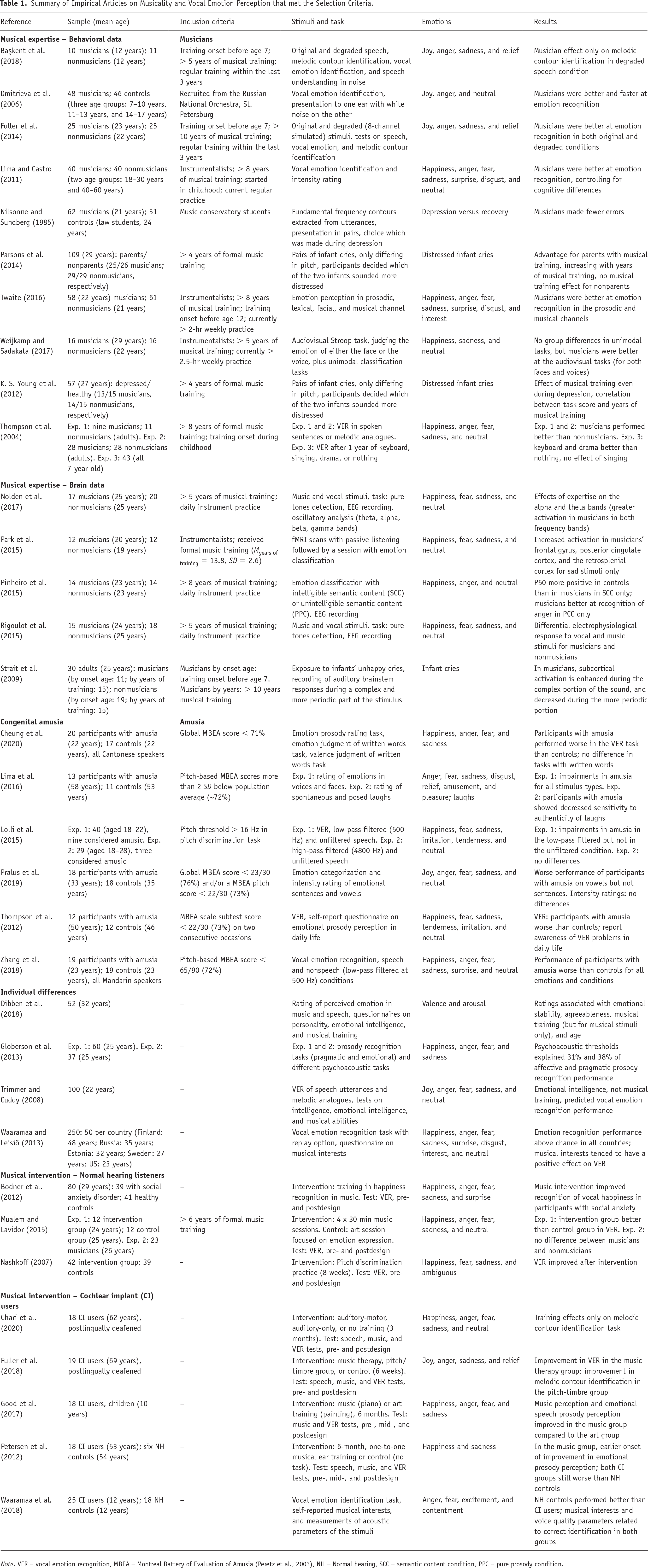

We conducted parallel literature searches on Web of Science, PubMed, and PsycInfo on March 19, 2020, using the search terms “(voice OR prosody) AND (emotion* OR affect*) AND (music* OR auditory expert* OR amusi* OR auditory training).” We restricted publication language to English and considered empirical studies only. In total, the initial search returned 1,723 articles (Web of Science: N = 755; PubMed: N = 405; PsycInfo: N = 563) to which we applied the following inclusion criteria: (a) vocal emotion perception was assessed as dependent variable, (b) musicality was assessed, manipulated, or used as a defining criterion for a group-based comparison, and (c) responses were measured at the behavioral or brain level. We also screened the reference lists of the identified articles for relevant publications. This selection procedure resulted in a total of 33 articles, which we review in what follows (for a summary, please refer to Table 1). When screening the relevant literature, we noted that a substantial proportion (27 out of 33; 82%) of identified articles were published in 2011 or later, reflecting the current attention to this topic, which contributed to motivating the present review.

Table 1. Summary of Empirical Articles on Musicality and Vocal Emotion Perception That Met the Selection Criteria.

Note. VER = vocal emotion recognition, MBEA = Montreal Battery of Evaluation of Amusia (Peretz et al., 2003), NH = Normal hearing, SCC = semantic content condition, PPC = pure prosody condition.

Review of Identified Literature

After screening the identified literature, it became apparent that studies could be grouped according to different operationalizations of musicality, which focused either on individual differences that existed before the study was conducted or on experimental interventions seeking to create such differences via controlled treatment designs. Following this structure, we first review the evidence concerning individual differences, in three sections focusing on (a) differences between musicians and nonmusicians, (b) data on individuals with congenital amusia, and (c) correlations of vocal emotion recognition with either musical interests or psychoacoustic abilities. Subsequently, (d) we discuss effects of musical training interventions on vocal emotion perception.

Differences in Vocal Emotion Perception Between Musicians and Nonmusicians

Behavioral data

Several studies found a musician effect on vocal emotion recognition in the adult population. Presumably the first study comes from Nilsonne and Sundberg (1985), in which music students outperformed law students in judging whether or not a vocal sample had been recorded during a depressive period of the speaker. Thompson et al. (2004) reported similar findings: musically trained participants performed better than untrained participants at categorizing emotional tone sequences extracted from vocal utterances. In a second experiment, however, which included additional sentences in familiar and unfamiliar languages, the effects were less straightforward: musicians’ performance was better for sad, fearful, and neutral, but not for happy and angry prosody. As a caveat, musical training was associated with differences in cognitive abilities too, limiting conclusions from this study. This issue was addressed by Lima and Castro (2011), who compared highly trained musicians with nonmusicians in two age groups (18–30 and 40–60 years). Musicians outperformed their nonmusical peers similarly across emotions and age groups, even when effects were controlled for cognitive differences. Further, similar patterns of misattributions and acoustic cue utilization were observed in both groups, suggesting that group differences were of a quantitative rather than a qualitative nature.

Evidence of a musician effect on vocal emotion perception in children and adolescents is less clear. Dmitrieva et al. (2006) tested vocal emotion perception in musicians and controls from three age groups (7–10, 11–13, and 14–17 years). Musicians outperformed their nonmusical peers, but this effect was mainly driven by the youngest group. This finding could indicate either that the limited musical experience in very young children allowed innate aptitude to be more visible or that musical experience might promote an earlier development of emotion sensitivity. Başkent et al. (2018) also studied adolescent musicians and nonmusicians. Their work was motivated by Fuller et al. (2014), who used a similar design with adults. Both studies compared emotion recognition performance for unprocessed speech and for degraded speech intended to reproduce the spectro-temporal degradation experienced by CI users (Başkent et al., 2018). Whereas Fuller et al. (2014) found a small musician advantage in both conditions, Başkent et al. (2018) did not, potentially due to limited statistical power, given a much smaller sample than that of Fuller et al. (2014). Statistical comparison of both experiments revealed significant age effects, suggesting a maturation of the auditory system during emerging adulthood (Başkent et al., 2018).

At an even earlier stage of development, sensitivity to vocal prosody is of particular relevance in parent–infant interactions, where parents’ adequate behavior crucially depends on their capacity to infer the infants’ needs from the sound of their cries. Parsons et al. (2014) found that parents’ musicality could foster a positive parent–child interaction due to enhanced sensitivity to the infants’ emotional state. K. S. Young et al. (2012) used the same paradigm with musicians and nonmusicians with and without depression to show that musicality can potentially protect against compromised auditory sensitivity towards the infant during a depressive episode.

Several studies raised the question whether emotional sensitivity in musicians is restricted to the auditory domain. Twaite (2016) compared performance of musicians and nonmusicians for prosodic, lexical, facial, and musical emotions, and reported a musician advantage for the prosodic and the musical channels only, indicating that the musicians’ advantage was limited to the auditory modality. However, Weijkamp and Sadakata (2017) reported somewhat conflicting results. In this study, musicians performed better in an audiovisual task, which might point towards more efficient cross-modal integration.

Up to now, most studies comparing musicians and nonmusicians support the hypothesis that musicians have an advantage in vocal emotion perception. This advantage seems to be of a quantitative rather than a qualitative nature (Lima & Castro, 2011; Twaite, 2016). However, the degree to which this advantage is moderated by factors such as innate musicality, the amount of musical training, age at training onset, or maturation of the auditory system remains a subject for future research.

Brain data

A small number of studies investigated differences between musicians and nonmusicians with respect to the brain basis of vocal emotional processing. Existing work on brainstem potentials and auditory-evoked responses in the electroencephalogram (EEG) suggests that effects of musicality can be observed in very early stages of vocal emotion processing. Strait et al. (2009) recorded brainstem potentials evoked by acoustically simple and complex portions of an infant’s cry, and reported an intriguing interaction between musical expertise and stimulus complexity: Compared to controls, musicians showed reduced responses in the simple, but increased responses in the complex portion of the sound. The authors interpret these findings as indicating that (a) musical expertise results in fine neural tuning to acoustic features that are important to vocal communication, and (b) subcortical mechanisms contribute to vocal emotion perception. At the cortical level, Pinheiro et al. (2015) and Rigoulot et al. (2015) recorded event-related potentials (ERPs) and found that modulatory effects of musical expertise can be observed in early stages of cortical processing within roughly the first 100 ms (P50, N100), as well as in later stages (P200). Nolden et al. (2017) reanalyzed the data of Rigoulot et al. (2015) with a focus on induced oscillatory activity, and found larger induced power for musicians in the theta (4–8 Hz) and the alpha bands (8–12 Hz).

Using functional magnetic resonance imaging (fMRI), Park et al. (2015) showed that musicians exhibited increased activation in frontal areas, the posterior cingulate cortex, and the retrosplenial cortex. However, these differences were observed for sad stimuli only. Accordingly, the authors hypothesized that sadness might be of “higher affective saliency” for musicians.

Taken together, the existing work on brainstem potentials and EEG suggests that modulatory effects of musicality can be observed in very early processing steps of vocal emotions, which are associated with a basic analysis of auditory cues and allocation of emotional significance (Schirmer & Kotz, 2006). Neuroimaging complements this by implicating brain regions associated with higher order functions, such as evaluative judgements or empathic engagement (Park et al., 2015). Overall, neuroscientific research on links between musicality and vocal emotion perception is still in its infancy, although it clearly has potential to shed light on the underlying mechanisms of musicians’ enhanced ability to process vocal emotions.

Impairments of Vocal Emotion Perception in Individuals With Congenital Amusia

To gain a full understanding of the association between musicality and vocal emotion perception, it is worthwhile to consider the entire performance spectrum by including individuals with exceptionally poor musical abilities, such as in congenital amusia. Individuals with congenital amusia display a perceptual disorder specific to the musical domain, in the presence of normal hearing and otherwise intact cognition (Ayotte et al., 2002; Stewart et al., 2006). Congenital amusia is usually assessed using the Montreal Battery of Evaluation of Amusia (MBEA; Peretz et al., 2003). Thompson et al. (2012) were the first to show poorer vocal prosody recognition in participants with amusia compared to controls, and further observed that these participants had a certain degree of awareness of their perceptual limitations in daily life. Lolli et al. (2015) suggested that a core problem could be poor pitch (F0) perception: although participants with suspected amusia performed similarly to controls on emotion perception from unfiltered or high-pass filtered (4800 Hz) utterances, they performed more poorly for low-pass filtered (500 Hz) utterances, which presumably degraded timbre while preserving pitch information. Corroborating a selective deficit in pitch perception, Pralus et al. (2019) found that controls and participants with amusia exhibited comparable emotion recognition for whole sentences, but participants with amusia performed worse for vowels. Of relevance, perceived emotional intensity was comparable in both groups for all stimuli, which was interpreted as preserved implicit processing of emotional prosody in amusia.
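The filtering logic behind the Lolli et al. (2015) manipulation can be illustrated with a minimal sketch. It is not the procedure or the stimuli used in that study: the sampling rate, the 200 Hz fundamental, the partial amplitudes, and the idealized "brick-wall" filter are all assumptions chosen for illustration, and the sketch only shows which spectral components survive each filter, not how listeners perceive the result.

```python
import cmath
import math

SAMPLE_RATE = 16000  # Hz; assumed for this sketch
N = 400              # samples, giving 40 Hz frequency resolution

# Hypothetical harmonic, voice-like tone: frequency (Hz) -> amplitude.
PARTIALS = {200: 1.0, 400: 0.6, 1000: 0.4, 5000: 0.3, 6000: 0.2}


def synthesize(partials):
    """Return N samples of a sum of sinusoids."""
    return [
        sum(a * math.sin(2 * math.pi * f * n / SAMPLE_RATE)
            for f, a in partials.items())
        for n in range(N)
    ]


def dft(signal):
    """Naive discrete Fourier transform (O(N^2), fine for short signals)."""
    size = len(signal)
    return [
        sum(x * cmath.exp(-2j * math.pi * k * n / size)
            for n, x in enumerate(signal))
        for k in range(size)
    ]


def bin_frequency(k, size):
    """Absolute frequency in Hz represented by DFT bin k."""
    signed = k if k <= size // 2 else k - size
    return abs(signed) * SAMPLE_RATE / size


def brickwall(spectrum, keep):
    """Zero every bin whose frequency fails the predicate `keep`."""
    size = len(spectrum)
    return [c if keep(bin_frequency(k, size)) else 0j
            for k, c in enumerate(spectrum)]


def amplitude(spectrum, freq):
    """Amplitude of the sinusoid at `freq` (must fall on a DFT bin)."""
    size = len(spectrum)
    k = round(freq * size / SAMPLE_RATE)
    return 2 * abs(spectrum[k]) / size


spectrum = dft(synthesize(PARTIALS))
low_passed = brickwall(spectrum, lambda f: f <= 500)    # keeps F0, 2nd harmonic
high_passed = brickwall(spectrum, lambda f: f >= 4800)  # removes F0 energy

for name, spec in [("low-pass 500 Hz", low_passed),
                   ("high-pass 4800 Hz", high_passed)]:
    kept = [f for f in PARTIALS if amplitude(spec, f) > 1e-6]
    print(name, "keeps partials at", kept, "Hz")
```

Under these assumptions, the low-pass version retains only the 200 and 400 Hz partials, so the fundamental and its contour remain while most of the spectral envelope that shapes timbre is removed. The high-pass version retains only the 5000 and 6000 Hz partials and no longer contains energy at the fundamental.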

Lima et al. (2016) took a cross-modal approach: in two experiments, they tested adults with amusia and matched controls on their ability to identify emotions in different types of vocal stimuli and silent facial expressions. Participants with amusia were found to be impaired in both the auditory and the visual domain, implying more universal emotion processing difficulties. Zhang et al. (2018) and Cheung et al. (2020) were interested in relationships between amusia and emotional prosody processing in tonal languages. They reported poorer performance in participants with amusia than in controls, both with a Mandarin-speaking and a Cantonese-speaking background, disconfirming the hypothesis that tonal language acquisition might compensate for pitch processing deficits in participants with amusia. Taken together, published findings on amusia paint a fairly consistent picture, suggesting that musical impairments transfer to vocal emotion perception, and that impairments for vocal emotions may originate in poor pitch perception.

Correlation of Vocal Emotion Perception With Musical Interests or Psychoacoustic Abilities

Complementing studies on extreme groups, other researchers measured normal interindividual variation in musicality in the general population, to link it to variability in vocal emotion perception. These studies have yielded conflicting findings. The most compelling evidence against such a link may have been provided by Trimmer and Cuddy (2008). Their correlational analysis of 100 participants revealed that musicality, as assessed via MBEA scores, was not associated with vocal emotion perception, a finding they replicated with another 92 participants. Trimmer and Cuddy (2008) concluded that emotion perception in music and the voice is not linked via auditory sensitivity but rather via a supramodal emotional processor. This finding conflicts with many of the results discussed above, and provoked considerable debate in the field. For instance, Lima and Castro (2011) argued that participants in the study had only 6.5 years of musical training on average, which might have been insufficient to observe a significant effect. However, Dibben et al. (2018) also failed to observe a relationship between musical interests and moment-to-moment reports of perceived emotion in longer (2–3 min) vocal excerpts. As a limitation, musical interests were assessed with a single dichotomous item in this study, which can hardly capture fine-grained interindividual variation in musicality.

Other studies reported positive correlations. Globerson et al. (2013) did not assess full-scale musical ability, but psychoacoustic measures of sensitivity to pitch were found to predict vocal emotion perception performance. This highlights the importance of subtle pitch variations for emotional prosody perception, in line with the impairments found in amusia discussed in the previous section. Finally, Waaramaa and Leisiö (2013) investigated the link between musical interests and emotional prosody perception in a large-scale, cross-cultural study across five different countries (Estonia, Finland, Russia, Sweden, US). Musical interests tended to have a positive effect on vocal emotion identification, but as in Dibben et al. (2018), this finding was based on very few self-reported items only.

In summary, correlational studies on the link between musicality and vocal emotion perception have yielded conflicting results. This could be due to substantial differences in the assessment of musicality across studies, ranging from musical tests to short questionnaires. Indeed, the use of standardized and validated instruments for the assessment of musical interests is desirable, as this should promote better comparability across future studies, and contribute to resolving remaining controversies.

Effects of Musical Training Interventions on Vocal Emotion Perception

Apart from comparing individual differences in musicality, the effectiveness of musical interventions was the focus of several studies, which we review in this section. Note that designs with randomized assignments to intervention and control conditions are particularly valuable in the context of the nature/nurture debate, as they make it possible to disentangle training effects from the self-selection effects that arise when people seek musical education. Our literature survey indicated that intervention studies could be grouped into interventions for normal hearing listeners, on the one hand, and interventions for hearing-impaired individuals with cochlear implants, on the other hand.

Intervention-based studies on normal hearing individuals

A few studies suggest the effectiveness of musical training interventions for normal hearing individuals. Thompson et al. (2004) randomly assigned six-year-old children to one year of training in keyboard, singing, drama, or no lesson. Postintervention, the drama and keyboard groups outperformed the no-lesson group in vocal emotion perception. Perhaps surprisingly, this effect was not found in the singing group. Thompson et al. (2004) speculated that singing may have trained vocal production of pitch contours over time that conflicts with natural prosodic use of the voice. Nashkoff (2007) reported that simple pitch perception training alone can improve speech prosody decoding skills, but only for already highly trained musicians. Another attempt to show the effectiveness of musical interventions was made by Mualem and Lavidor (2015), who assigned participants either to music-based or visual-art-based interventions, which focused explicitly on expression of emotions in the respective domain. After only four sessions, an improvement in vocal emotion recognition performance was observed in the music compared to the art group. However, when both groups were compared to a group of highly trained musicians, no performance differences were found. This could suggest that the effectiveness of the intervention partially reflected “training to the test,” as the intervention explicitly focused on emotions. Finally, Bodner et al. (2012) reported that a music-based intervention improved recognition of happiness in patients with social anxiety disorder (SAD), who often display a persistent bias towards negative emotions. Although these findings need further verification, they suggest that perceptual biases in affective disorders may be attenuated by musical interventions. Together, the body of literature on interventions suggests musical training effects, but it is still sparse for the normal hearing population. Of interest, musical training/interventions have been studied more intensely in the field of hearing rehabilitation for cochlear implant users.

Intervention-based studies on cochlear implant users

All studies reviewed in this section included vocal emotion perception as a part of larger test batteries to assess musical training effects on voice, speech, and music perception in cochlear implant (CI) users. Petersen et al. (2012) recruited CI users within 14 days after implantation. Half of them received 6 months of musical ear training. While distinct improvements were observed for musical perception, the pattern was less clear for vocal emotions: the intervention group showed an earlier onset of improvement but the endpoints were comparable. As a qualification, the freshly implanted CI users in this study were in speech therapy during the intervention, which could have interfered with the musical training. In contrast, Fuller et al. (2018) studied adult CI users with a minimum of one year postimplantation, who were randomly assigned to either (a) a pitch/timbre group that received receptive training, (b) a music therapy group with face-to-face sessions including active music production, or (c) a control group with nonmusical activities, over a period of 6 weeks. Crucially, vocal emotion recognition improved only in the music therapy group, emphasizing the importance of active musical engagement and/or social interaction for training success. Chari et al. (2020) also studied adult CI users with at least one year of implant experience, and assigned them to auditory-motor, auditory-only, or no training, over a period of 3 months. However, there was no effect on vocal emotion perception even though the intervention period was about twice as long as in Fuller et al. (2018). Notably, both studies used very small sample sizes, with fewer than 10 participants per group. While findings are intriguing and potentially important, they call for further exploration and replication with more powerful designs, particularly when effect sizes and statistical power are not (yet) routinely reported.
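To make the concern about statistical power concrete, the following minimal sketch approximates the power of a two-group comparison with small samples. It relies on assumptions that do not come from the reviewed studies: a two-sided test at alpha = .05, equal group sizes, a normal approximation in place of the exact t distribution (which slightly overestimates power for small groups), and Cohen's conventional benchmarks of d = 0.5 and d = 0.8 as hypothetical effect sizes. The function names are illustrative.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def approx_power(n_per_group, effect_size_d, alpha=0.05):
    """Approximate power of a two-sided, two-sample comparison of means.

    Uses the normal approximation, which slightly overestimates the
    power of a t test when groups are small.
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = effect_size_d * sqrt(n_per_group / 2)
    # Probability of exceeding the critical value in either tail.
    return STD_NORMAL.cdf(shift - z_crit) + STD_NORMAL.cdf(-shift - z_crit)


def n_for_power(effect_size_d, target=0.80, alpha=0.05):
    """Smallest per-group n whose approximate power reaches `target`."""
    n = 2
    while approx_power(n, effect_size_d, alpha) < target:
        n += 1
    return n


for d in (0.5, 0.8):  # hypothetical "medium" and "large" effects
    print(f"d={d}: power at n=10 is {approx_power(10, d):.2f}, "
          f"n per group for 80% power is {n_for_power(d)}")
```

Under these assumptions, a study with 10 participants per group would detect a medium-sized effect only about one time in five, and reaching 80% power would require on the order of 60 participants per group. A null result from groups of fewer than 10 is therefore difficult to interpret.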

Only one study investigated the role of musical training in children with CIs, aged between 6 and 15 years (Good et al., 2017). Improvements in vocal emotion perception were found after 6 months of piano lessons compared to visual art training. The authors concluded that musical training might be an effective supplement to auditory rehabilitation in children. In addition to intervention-based approaches, Waaramaa et al. (2018) showed that self-reported musical interests, especially a preference for dancing, predicted vocal emotion perception capacity in CI users. Taken together, findings emphasize the importance of active musical engagement, as compared to purely receptive training, in promoting recovery of emotion perception after cochlear implantation.

Discussion

While the transfer of musicality to speech perception abilities is well documented, the transfer to emotion perception has attracted substantial scientific interest only recently. Overall, while associations between musicality and vocal emotion perception ranged from strongly positive to absent, the majority of studies supported the idea that musicality is associated with better vocal emotion perception capacities. Both studies with highly trained musicians, on the one hand, and with individuals with amusia, on the other hand, suggest that musical capacities are positively associated with vocal emotion recognition. Correlational analyses with varying degrees of musicality in a normal population revealed less consistent results, presumably partly due to their methodological heterogeneity. Musical intervention studies are still sparse but illustrate great potential to improve vocal emotion perception capacities both in the normal hearing population and in cochlear implant users. In the following, we will first discuss potential moderators by evaluating the effectiveness of active versus receptive musical training, and the role of different acoustic cues signaling emotionality in both music and the voice. We will then discuss how these studies inform us about the contribution of nature and nurture factors to this link.

Active Engagement in Musical Activities Versus Receptive Training

Several studies suggest that active engagement in a musical task is a crucial factor. Two studies compared purely receptive training to auditory-motor training in CI users (Chari et al., 2020; Fuller et al., 2018). For vocal emotion recognition, a benefit emerged only after active music therapy in Fuller et al. (2018), whereas Chari et al. (2020) found no effect of either training type on vocal emotion perception. There is broad consensus in the neuroscientific literature that active engagement in music and the synchronized tuning of auditory, visual, somatosensory, and motor processes is a driving force of adaptive neuroplasticity (Kraus & Chandrasekaran, 2010; Kraus & White-Schwoch, 2017; Palomar-García et al., 2017). Specifically, it has been shown that sensorimotor musical training leads to more robust changes in the auditory cortex compared to purely receptive training (Lappe et al., 2008). This does not imply that purely receptive music training is ineffective (Bigand & Poulin-Charronnat, 2006), but motor engagement may add a boost to the auditory fine-tuning process during training. This could be of particular relevance for cochlear implant users (Lehmann & Paquette, 2015), who during rehabilitation face the challenge of massive postimplantation adaptation to the new auditory input. Here, auditory-motor interventions could be particularly efficient in fostering neuroplasticity in auditory areas, and in aiding hearing rehabilitation.

The Role of Different Acoustic Cues and Supramodal Processes

Previous literature suggests that musicians show superior processing of auditory cues (Elmer et al., 2018). The present review reveals that superior pitch processing capacities in people with high levels of musicality are particularly tightly associated with vocal emotion perception. On the one hand, pitch discrimination performance was correlated with emotion perception performance in musicians; on the other hand, there was strong agreement that impaired pitch processing was a key deficit in people with amusia, accounting for impairments in the domain of vocal emotions. However, this conclusion has its limitations since amusia was often defined only based on low scores on the pitch subsets of the MBEA (see Table 1). According to Juslin and Laukka (2003), pitch and timbre cues are highly relevant for vocal emotion perception, but timing parameters like speech rate were found to be equally important. The potential role of timing was largely neglected in all reviewed studies, despite its central role in music, therein often referred to as tempo and rhythm. Lagrois and Peretz (2019) showed that although pitch and rhythm deficits are often linked in people with amusia, they sometimes can appear as distinct disorders. In parallel, there is current evidence of different brain mechanisms processing pitch-related versus timing-related structures in music (Sun et al., 2020). In the future, it would be very informative to investigate vocal emotion perception in people with specific impairments related to the temporal domain of music.

Alongside the notion that enhanced sensitivity to acoustic cues may lead to better emotion perception in people with a higher level of musicality, it was also suggested that there might be a domain-general supramodal process that mediates the link between musicality and emotional perception across domains (Schellenberg & Mankarious, 2012; Trimmer & Cuddy, 2008). Lima and Castro (2011) suggested that musical training might increase the level of “emotional granularity,” meaning a more fine-grained conceptualization and differentiation of emotions that, in turn, could aid emotional perception in other domains. However, although the involvement of supramodal processes seems plausible, the reviewed brain data suggest that modulatory effects of musicality can be observed in very early vocal emotion processing steps, which are associated with a basic analysis of auditory cues and detection of emotional saliency (Schirmer & Kotz, 2006). Hence, the link between musicality and vocal emotion perception seems to be based, at least partially, on a more sophisticated analysis of auditory cues.

Nature and Nurture

Musicality in people emerges from a combination of genetic and environmental factors. Likewise, the observed link between musicality and vocally expressed emotions could be explained either by a dispositional sensitivity to the musical and the vocal channels or by a transfer from musical training effects into the vocal domain. Additionally, conditions of nature and nurture interact in individuals, making it difficult to estimate the degree of their respective contributions. Unfortunately, apart from a few randomized treatment studies that potentially isolated training effects, all reviewed articles employed correlational designs or studied preexisting groups, and thus cannot provide direct evidence of the relative contributions of nature or nurture conditions. Nevertheless, it is worthwhile to consider implications of certain findings for this debate.

Without exception, all the studies on people with amusia suggested that vocal emotion perception deficits can be associated with a congenital music perception impairment. In that sense, the link between musicality and vocal emotion perception seems to occur in the absence of training effects and might therefore be mediated by genetic factors. These may have evolved in parallel with acoustic similarities between vocal and musical emotions (Juslin & Laukka, 2003), and may be expressed in overlapping neural circuits involved in recognizing basic emotions in voices and music (Frühholz et al., 2016). As a qualification, Bigand and Poulin-Charronnat (2006) showed that a remarkable degree of auditory sophistication can be acquired through exposure to music only, without explicit training. Accordingly, it remains possible that these implicit musical learning processes could be limited in people with amusia if they avoid exposure to music because they enjoy it less. Thus, while the limited vocal emotion perception capacities observed in amusia could hint at a genetic predisposition, they could also result in part from selective exposure.

At the same time, a consistent set of findings in highly trained musicians suggests that explicit musical training does play a central role in the development of auditory and vocal perceptual skills. This points to an influence of environmental factors, but there is always a possible confound with natural inclination, as people with better auditory skills may be more likely to start and pursue musical training (Pantev & Herholz, 2011). Accordingly, Dmitrieva et al. (2006) observed superior vocal emotion perception capacities in a very young group of musicians, who presumably had received very little musical training so far but might have been selected for musical education based on their auditory sensitivity. Note that many authors who found the musician effect on vocal emotion perception argued that it is very unlikely that it is entirely based on predispositional differences (Lima & Castro, 2011; Strait et al., 2009; Thompson et al., 2004): On the one hand, the effect was still present when participants were matched in socioeducational variables, general intelligence, cognitive control, and personality traits (Lima & Castro, 2011). On the other hand, some studies found a correlation between emotion perception capacities and years of musical education, suggesting a clear impact of training duration (Parsons et al., 2014; Twaite, 2016; K. S. Young et al., 2012). However, this could also reflect a gene–environment interaction since people who have a dispositional aptitude might persist longer in training. Further, vocal emotion perception was found to be related to age at training onset (Strait et al., 2009). Although it is often difficult to disentangle age at onset from years of musical training, this could suggest a sensitive period for the acquisition of some music-training-induced skills. Accordingly, many studies required musicians to have started training before the age of 7 (see Table 1).

Finally, a few intervention studies with randomized assignment to treatment and control groups aimed at isolating learning effects of musical training and succeeded in improving vocal emotion perception in a healthy population. Note that these interventions were qualitatively different from more “natural” settings of musical education where the focus lies on mastery of an instrument or the singing voice. They were shorter and often particularly focused on emotion expression in music (Bodner et al., 2012; Mualem & Lavidor, 2015), except for Thompson et al. (2004), who randomly assigned children to 1 year of keyboard or singing lessons, but found mixed results. Likewise, studies on cochlear implant users showed that musical training can improve vocal emotion perception in this particular group, but, again, those interventions had an entirely different purpose than those for normal hearing participants: instead of fine-tuning a healthy auditory system, CI users have to learn how to restore perception from a severely degraded input. Music-based interventions may be particularly effective in groups with poor auditory resolution to improve sensitivity to auditory cues in the vocal domain (Fuller et al., 2018; Good et al., 2017). Overall, while it may be difficult to generalize the results of these intervention studies to settings of instrumental or vocal music lessons, they show that vocal emotion perception can be improved through musical training in some circumstances. Accordingly, it seems worthwhile to incorporate emotionally oriented teaching units in music lessons or treatment programs.

Identification of Relevant Topics for Future Research

The findings surveyed in this article highlight many relevant aspects that can guide future research on relationships between musicality and vocal emotion perception. We hope this article will inform systematic research programs with better powered designs and standardized research materials, and ultimately promote a refined understanding of the putative common mechanisms underlying musicality and vocal emotion perception. Unfortunately, neuroscientific research in this field is still sparse and unsystematic, and the heterogeneity of the previous studies illustrates the need for more systematic research on candidate subcortical and cortical mechanisms that may mediate the link between musicality and vocal emotion perception (for a recent review on subcortical and cortical mechanisms of nonverbal voice perception, see Frühholz & Schweinberger, 2021). Important questions for neuroscientific research include how perceptual neuroplasticity is induced in musicians, how this relates to motor plasticity in musicians’ brains (Elbert et al., 1995), what the relative roles of training and ongoing maintenance are (Merrett et al., 2013), and how each of these aspects relates to vocal emotion perception. Moreover, a particularly relevant comparison in the context of vocal emotion perception is the one between singers and instrumentalists. Most of the reviewed studies only included instrumentalists or did not report on that matter. Only Thompson et al. (2004) compared participants with piano and singing lessons, and their results suggested that singing lessons might even hinder vocal emotion perception, perhaps because the vocal patterns that are trained during singing lessons may conflict with natural vocal emotion expression. Another neglected but related field is the link between musicality and emotion production. It may be reasonable to assume that people who are highly trained in an emotionally expressive art have an advantage in vocal expression of emotion.

Conclusion and Outlook

In this review, we systematically identified and discussed the current state of research on the link between musicality and vocal emotion perception. Overall, the available evidence suggests that musicality is indeed associated with better vocal emotion perception performance. Since adequate perception of vocal emotions is a fundamental prerequisite for everyday social interaction, these results also may add weight to the presumed importance of music and musical education for personal development and quality of life. Musical training can provide a promising supplemental intervention for people who struggle with vocal emotion perception, and while supporting evidence can now be considered strong in the case of cochlear implant users, future applied research seems promising in the context of other target groups as well (e.g., individuals with autism or with auditory impairments compromising social communication). Although data often do not allow for causal inferences, their combined consideration can provide useful information on the question of how different factors of nature and nurture contribute to related skills of emotion perception in the domains of voice and music.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author note:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: C. N.'s research has been supported by the German National Academic Foundation (Studienstiftung des Deutschen Volkes). We thank Annett Schirmer for her helpful and critical comments. We are grateful to Susan Müller and Frank Nussbaum for their support in the preparation of the manuscript.