Abstract

The nonnegative representation-based classification (NRC) method has attracted increasing attention in the field of face recognition. Building upon collaborative representation (CR), NRC incorporates a nonnegative constraint on the representation coefficients, thereby reducing the contribution of irrelevant training samples and enhancing overall classification performance. Despite these improvements, NRC inherits the same decision-making mechanism as the CR method, resulting in a decoupling of the representation and classification stages. This separation limits the method’s classification effectiveness. Furthermore, the presence of multicollinearity in the nonnegative representation may introduce inaccuracies in classification estimates, further undermining performance. To address these limitations, this paper proposes the competitive-collaborative nonnegative representation (CCNR) model. CCNR integrates two regularization terms: A competitive constraint and a collaborative constraint. The competitive constraint adopts a residual-based strategy during the classification stage, thereby strengthening the connection between representation and classification. This approach enables training samples from different classes to compete in representing the query sample, significantly improving classification performance. In parallel, the collaborative constraint applies an

Keywords

Introduction

In recent years, face recognition technology has experienced a surge in popularity, finding diverse applications in identity verification, security surveillance, and augmented reality. To enhance classification accuracy, a multitude of methods have been proposed. 1–4 However, deep learning models often fail to deliver satisfactory performance when confronted with limited training data. In this context, representation-based classification methods (RBCM) have emerged as a prominent alternative and have been widely adopted. Distinct from conventional methods, RBCM represent a query sample as a linear combination of the entire set of training samples. The label of the query sample is then determined by the class whose training samples best reconstruct it. This strategy enhances classification accuracy, even under conditions of limited training data, thereby providing a practical solution for face recognition tasks.

Sparse representation-based classification (SRC) 5 emerged as a pioneering method, distinguished by its ability to represent a query sample as a sparse linear combination of all training samples. The sparsity constraint ensures that only a small subset of training samples, ideally from the same class as the query sample, contributes to the representation. This property significantly improves robustness against occlusion and corruption, achieving high accuracy even with limited training data. The innovative framework of SRC represents a substantial advancement in the field, demonstrating strong performance under challenging conditions. Kernel SRC (KSRC) 6 extends SRC by employing kernel functions to map original data into higher-dimensional feature spaces, capturing complex nonlinear relationships between samples and thereby enhancing classification accuracy. To address the inefficiency of SRC, Li et al. proposed local SRC (LSRC), 7 which applies the sparse representation strategy within local neighborhoods rather than the entire training set.

Unlike SRC, which employs the

Despite the advancements of SRC, CRC, and their variants, the representation coefficients in these methods inevitably include negative values. Xu et al. 13 argued that although the mathematical operation of addition and subtraction of samples is straightforward, it lacks physical interpretability. Drawing inspiration from nonnegative matrix factorization (NMF), 14 they proposed nonnegative representation-based classification (NRC), which imposes a non-negativity constraint on the representation coefficients, ensuring that all entries are nonnegative. This constraint effectively enhances the contribution of homogeneous training samples, thus improving the representational capacity of the model. However, since NRC performs classification in the original feature space, its performance may deteriorate when the samples are not linearly separable. Kernel NRC (KNRC) 15 addresses this issue by employing kernel functions to map samples into a higher-dimensional kernel feature space. Yuan et al. 16 proposed double discriminative constraint-based affine nonnegative representation (DCANR), which incorporates two discriminative constraints alongside an affine constraint, reducing inter-class similarity and enhancing classification performance. Li et al. 17 observed that the absence of estimated samples during classification may hinder the effectiveness of NRC, and proposed locally-constrained discriminative nonnegative representation (LDNR), which integrates local information and optimizes estimated samples to yield a more discriminative representation vector.

Imposing a nonnegative constraint on the representation coefficients alone is insufficient to enhance the representation capability of homogeneous samples. NRC fails to consider the relationships between the query sample and training samples from different classes, which reduces its classification performance. Moreover, the absence of further constraints on the representation coefficients results in instability during the solution process. To address these issues, we propose the competitive-collaborative nonnegative representation (CCNR) method. CCNR extends the NRC framework by introducing a competitive term and a collaborative term. Specifically, the competitive term enables training samples from different classes to compete in representing the query sample, thereby integrating classification strategies into the representation process and reducing the influence of heterogeneous samples. The collaborative term improves the stability of the solution process. The CCNR model is efficiently solved using the alternating direction method of multipliers (ADMM), 18 and this paper provides a detailed description of the iterative optimization steps. Additionally, we deployed the proposed method on the smart campus platform of Wuxi University, yielding favorable results. Comparative experiments on three challenging face recognition datasets validate the effectiveness of the CCNR method.

This paper proposes a novel CCNR model that integrates a competitive constraint into NRC, linking the representation and classification phases to enhance model performance. To address the multicollinearity problem in nonnegative representation, a collaborative constraint is also incorporated into the model. CCNR has been successfully deployed on the smart campus platform, yielding positive outcomes. Extensive comparative experiments conducted on publicly available face datasets validate the competitiveness of the CCNR model.

The structure of this paper is as follows: Section “Related works” introduces related work; Section “CCNR based classification” describes the CCNR method in detail; Section “Application for face recognition in smart campus” shows the application of CCNR in smart campus; Section “Experiments and analysis” validates the effectiveness of the CCNR method through extensive experiments; Section “Conclusion” concludes the entire paper. For convenience in describing the formulations in this paper, Table 1 summarizes the universal mathematical symbols.

The universal mathematical symbols in this paper.

Related works

Nonnegative representation-based classification

NRC has garnered significant attention in the field of face recognition. Its core idea is to represent the query sample as a linear combination of training samples, with a nonnegative constraint imposed on the representation coefficients to prevent negative values. This constraint ensures that the data representation aligns more closely with the physical characteristics observed in practical scenarios, where samples from different classes are often unrelated rather than negatively correlated. By restricting coefficients to nonnegative values, NRC enhances the representation capacity of homogeneous samples while reducing the influence of heterogeneous ones. Furthermore, the nonnegative constraint inherently promotes sparsity, resulting in a sparser distribution of coefficients and thereby improving the model’s classification performance. The NRC model can be formulated as follows:

The optimization model of NRC is formulated as a nonnegative least squares problem, which lacks an analytical solution. By introducing additional optimization variables and employing an iterative solving method, the nonnegative representation vector
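As a rough illustration of the nonnegative least-squares formulation described above, the following Python sketch represents a query sample over all training samples under a nonnegativity constraint and then assigns the class with the smallest reconstruction residual. The notation is an assumption, since the symbol table is not reproduced here: `X` holds training samples as columns, `labels` holds their class ids, `y` is the query, and `c` is the coefficient vector. It also swaps in SciPy's off-the-shelf `nnls` solver rather than the auxiliary-variable iterative scheme the text refers to, so it should be read as a sketch of the idea, not as the authors' implementation.

```python
import numpy as np
from scipy.optimize import nnls


def nrc_predict(X, labels, y):
    """Nonnegative representation followed by residual-based classification.

    X      : (d, n) array, training samples as columns (assumed layout)
    labels : (n,) array of class ids, one per column of X
    y      : (d,) query sample
    """
    # Solve min_c ||X c - y||_2 subject to c >= 0.
    c, _ = nnls(X, y)

    # Reconstruct the query from each class's own samples and coefficients.
    classes = np.unique(labels)
    residuals = [
        np.linalg.norm(y - X[:, labels == k] @ c[labels == k]) for k in classes
    ]
    return classes[int(np.argmin(residuals))], c


if __name__ == "__main__":
    # Toy data: two classes living in disjoint coordinate subspaces.
    X = np.eye(4)
    labels = np.array([0, 0, 1, 1])
    y = np.array([0.6, 0.4, 0.0, 0.0])
    pred, c = nrc_predict(X, labels, y)
    print(pred, c)
```

The residual rule at the end follows the general RBCM principle stated in the Introduction: the query is assigned to the class whose training samples reconstruct it best.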

Neighboring subspace classifier

CRC utilizes all training samples for collaborative representation of the query sample, whereas the neighboring subspace classifier (NSC) 19 reconstructs the query sample using only the training samples within each class, thereby focusing on the neighboring subspace of each class. This mechanism differs from CRC by aiming to improve classification accuracy through a more localized representation. The objective function of NSC can be expressed as:

The equation corresponds to a simple least squares problem, and its analytical solution is given by:
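For reference, the class-wise least-squares fit that NSC relies on can be sketched in Python as follows. The names `X`, `labels`, and `y` are assumed notation (training samples as columns, their class ids, and the query). The sketch uses `numpy.linalg.lstsq` to obtain each class's least-squares coefficients, which coincides with the normal-equations solution when the class matrix has full column rank. It is a minimal illustration under these assumptions, not the reference implementation of NSC.

```python
import numpy as np


def nsc_predict(X, labels, y):
    """Fit the query with each class separately; pick the smallest residual.

    X      : (d, n) array, training samples as columns (assumed layout)
    labels : (n,) array of class ids, one per column of X
    y      : (d,) query sample
    """
    classes = np.unique(labels)
    residuals = []
    for k in classes:
        Xk = X[:, labels == k]                       # samples of class k only
        ck, *_ = np.linalg.lstsq(Xk, y, rcond=None)  # min ||y - Xk ck||_2
        residuals.append(np.linalg.norm(y - Xk @ ck))
    residuals = np.array(residuals)
    return classes[int(np.argmin(residuals))], residuals


if __name__ == "__main__":
    # Columns: two samples of class 0, then two samples of class 1.
    X = np.array([[1.0, 0.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0, 1.0],
                  [0.0, 0.0, 1.0, 1.0]])
    labels = np.array([0, 0, 1, 1])
    y = np.array([1.0, 1.0, 0.0])
    print(nsc_predict(X, labels, y))
```

Unlike CRC or NRC, no coefficient is shared across classes here: each class solves its own small problem, which is what makes the representation local to that class's subspace.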

CCNR based classification

Representation

NRC has demonstrated outstanding performance by introducing a nonnegative constraint, which enhances the contribution of homogeneous samples in representation. However, because NRC does not let classes compete during representation, the connection between its representation and classification processes is weak. We believe this is a key factor limiting the performance of NRC. To address this, we propose incorporating a competition term and a regularization term into NRC to suppress the influence of heterogeneous samples and simultaneously improve the stability of the solution. The proposed CCNR model can be formulated as follows:

In equation (5), the second and third terms represent the competitive and collaborative constraints, respectively. The regularization coefficients

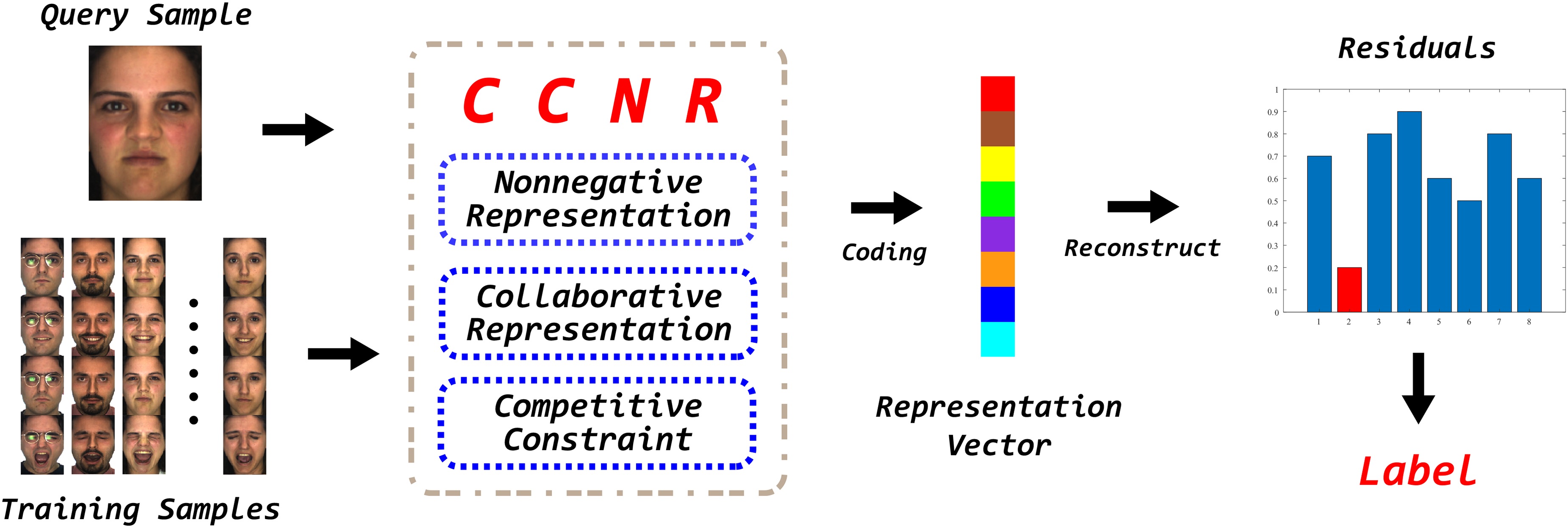

The overall classification flowchart of competitive-collaborative nonnegative representation (CCNR). The blue boxes signify the characteristics of CCNR, while the red label indicates the predicted class of the query sample.

Optimization

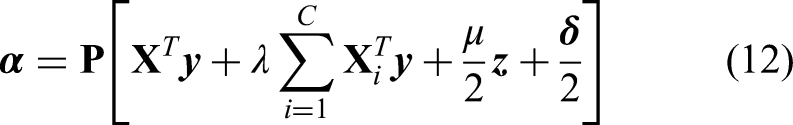

In RBCM, determining the label of a query sample critically depends on obtaining the representation coefficients. In the CCNR method, the nonnegative constraint prevents a direct analytical solution of the optimization function. To address this issue, we introduce an auxiliary variable

The problem can be solved using the ADMM method, and the corresponding Lagrangian function is:

Here,

(1) Update

To solve the subproblem and determine the optimal value of

To find the optimal

Simplifying, we obtain:

Thus, the analytical solution for

The matrix

(2) Update

Clearly, the analytical solution for

(3) Update
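The three-step update scheme above can be illustrated with a short Python sketch. Because the equations are not reproduced in this text, the objective used here is an assumption: a global fitting term, a class-wise residual (competitive) term weighted by `lam`, and a squared l2 (collaborative) term weighted by `gamma`, minimized subject to nonnegative coefficients. The variable names (`X`, `labels`, `y`, `c`, `z`, `u`, `lam`, `gamma`, `mu`), the scaled-dual form of ADMM, and the stopping rule are likewise illustrative choices and may differ from the paper's own derivation.

```python
import numpy as np


def ccnr_admm(X, labels, y, lam=0.1, gamma=0.01, mu=1.0, tol=1e-8, max_iter=500):
    """ADMM sketch for the ASSUMED objective
        ||y - X c||^2 + lam * sum_k ||y - X_k c_k||^2 + gamma * ||c||^2,  c >= 0,
    split as c = z with the nonnegativity constraint placed on z.
    """
    n = X.shape[1]
    G = X.T @ X
    # Keep only same-class entries of the Gram matrix (class-wise blocks).
    B = G * (labels[:, None] == labels[None, :])
    # The system matrix does not change across iterations, so invert it once.
    A_inv = np.linalg.inv(2 * G + 2 * lam * B + (2 * gamma + mu) * np.eye(n))
    q = 2 * (1 + lam) * (X.T @ y)

    z = np.zeros(n)   # auxiliary nonnegative variable
    u = np.zeros(n)   # scaled Lagrange multiplier
    history = []
    for _ in range(max_iter):
        c = A_inv @ (q + mu * (z - u))     # (1) closed-form coefficient update
        z_old = z
        z = np.maximum(0.0, c + u)         # (2) projection onto c >= 0
        u = u + c - z                      # (3) multiplier update
        crit = max(np.linalg.norm(c - z), np.linalg.norm(z - z_old))
        history.append(crit)
        if crit < tol:
            break
    return z, history


def ccnr_predict(X, labels, y, **kwargs):
    """Assign the class whose samples give the smallest reconstruction residual."""
    z, _ = ccnr_admm(X, labels, y, **kwargs)
    classes = np.unique(labels)
    residuals = [
        np.linalg.norm(y - X[:, labels == k] @ z[labels == k]) for k in classes
    ]
    return classes[int(np.argmin(residuals))], z


if __name__ == "__main__":
    X = np.eye(4)
    labels = np.array([0, 0, 1, 1])
    y = np.array([0.6, 0.4, 0.0, 0.0])
    print(ccnr_predict(X, labels, y))
```

In this sketch the coefficient update reduces to one matrix-vector product per iteration because the inverse is computed once up front. The returned `history` list records the stopping criterion at each iteration and can be plotted against the iteration count to inspect convergence.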

Convergence

To verify the convergence of the iterative solution procedure for the CCNR method, we conducted an empirical convergence analysis on the AR, Extended Yale B (EYB), and GT datasets. Specifically, a query sample was randomly selected from each dataset, and the evolution of the convergence criteria was plotted against the iteration count. These plots show how the iterative solution progresses toward convergence.

As illustrated in Figure 2, for the AR dataset, the convergence criteria decrease rapidly within the first 20 iterations and stabilize after approximately 150 iterations. Similar behavior is observed for the EYB dataset. In contrast, for the GT dataset, the convergence criteria quickly decrease to zero within the initial 20 iterations. These results indicate that the CCNR solution process converges in practice on all three datasets, supporting the effectiveness and stability of the iterative method.

Convergence characteristic of competitive-collaborative nonnegative representation (CCNR) on three datasets.

Classification

Complexity

In our solution, each parameter is iteratively updated to converge towards an optimal value. The computational complexity of each subproblem in Algorithm 1 is as follows. (1) For the update of

In Algorithm 2, the main computational cost stems from matrix multiplications, where the complexity of calculating the reconstruction residuals is

Application for face recognition in smart campus

Application background

As an advanced form of educational informatization, the smart campus enables deep perception and intelligent recognition of the physical campus environment through the seamless integration of cutting-edge technologies such as cloud computing, the Internet of Things (IoT), big data, and artificial intelligence. It not only precisely captures learning and working scenarios as well as personal characteristics of teachers and students, but also facilitates the seamless integration of the school’s physical and digital spaces. The construction of the smart campus cultivates an intelligent and open environment for teachers and students, significantly enhancing their living experiences and altering the interaction patterns between them and school resources and environments. Consequently, it promotes a leap in educational and teaching quality and an increase in management efficiency, laying a solid foundation for the comprehensive development of teachers and students.

Face recognition serves as a crucial component in the development of the smart campus, and its application demands are becoming increasingly prominent. Specifically, its applications are primarily manifested in the following aspects: (1) Attendance management: High-precision face recognition technology can automatically complete student attendance records, effectively avoiding the cumbersome and error-prone nature of manual attendance and significantly improving the accuracy and efficiency of attendance. (2) Consumption payment: In campus cafeterias and supermarkets, face recognition technology provides a convenient and rapid payment method, reducing the reliance on cash and bank cards and further enhancing payment efficiency. (3) Personalized services: By leveraging face recognition technology, the system can accurately identify students’ identities and characteristics, thereby offering personalized services such as book recommendations and course customization, greatly optimizing the learning experience. (4) Data analysis: By collecting data on students’ entries, exits, and consumption patterns, face recognition technology offers robust support for data analytics, assisting institutions in gaining a comprehensive understanding of students’ learning and living conditions and furnishing a scientific basis for informed management decisions. Currently, the face recognition algorithm proposed in this paper has been successfully deployed and validated within the smart campus face recognition system at Wuxi University. The system framework design achieves a high degree of integration, aiming to comprehensively enhance the efficiency of campus management.

System framework

The smart campus face recognition system framework of Wuxi University is organized hierarchically into four core layers, from the bottom up: The Perception Layer, Link Layer, Computing Layer, and Business Layer. As illustrated in Figure 3, each layer fulfills specific functions and responsibilities, collectively enabling a comprehensive enhancement of the smart campus infrastructure.

Framework of face recognition in smart campus.

Some typical hardware applied in smart campus.

Face recognition

This subsection illustrates the application of the CCNR method within the smart campus platform of Wuxi University, demonstrated through the construction of a comprehensive face recognition system. As depicted in Figure 5, the face recognition system is organized into six core components: The Recognition Image Display Area, the Captured Image Display Area, the Database Image Display Area, the Operation Button Area, the Personal Information Area, and the Message Area. When users click the “Load Image” button, they can select an image for recognition. Upon successful loading, the system immediately provides feedback in the Message Area. Subsequently, when users click the “Recognize” button, and if the recognition process executes successfully, the system automatically retrieves the matching face image from the database and displays it in the Database Image Display Area. Simultaneously, the corresponding personal information is presented clearly in the Personal Information Area.

Application process of the competitive-collaborative nonnegative representation (CCNR) method.

Experiments and analysis

Experimental setting

In this section, the paper evaluates the classification performance of the CCNR method through extensive comparative experiments conducted on publicly available face datasets, which are recognized as challenging benchmarks in face recognition. The datasets used include AR, EYB, and GT. The CCNR method is compared against several classical classification techniques, including NSC, 19 SVM, 20 SRC, 5 CRC, 8 CROC, 21 ProCRC, 10 NRC, 13 and DRC. 12 Some experimental results are adopted directly from the literature, 13 while others were obtained by reproducing experiments under the same conditions as those used for the proposed method, ensuring a fair comparison. The best results for each experiment are highlighted in bold in the corresponding tables.

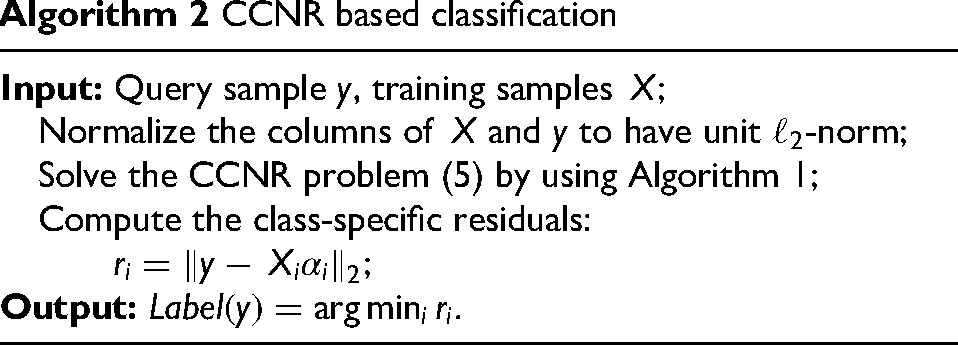

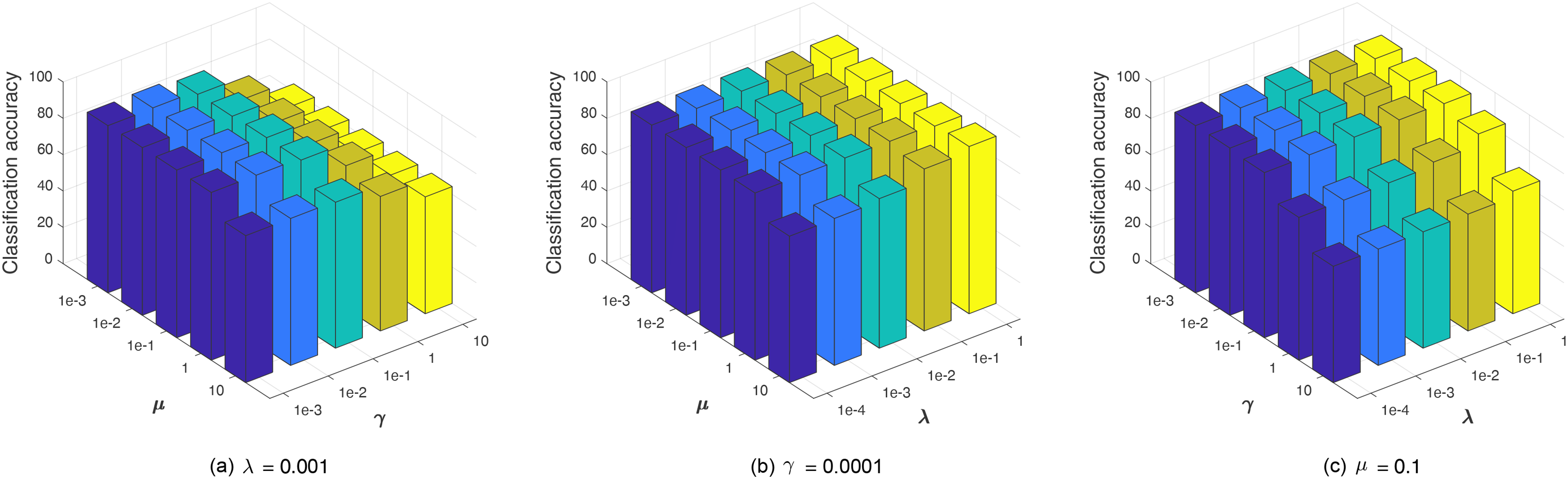

The CCNR model involves three parameters:

AR face recognition

The AR dataset is a widely recognized benchmark in face recognition research. It comprises approximately 4,000 color images of faces from 126 individuals, including 70 women and 56 men. Each individual’s images exhibit a broad range of facial variations, including different expressions and lighting conditions, making the dataset particularly challenging for face recognition tasks. Figure 6 presents typical sample images from the AR dataset. In this experiment, 14 images per individual are utilized: 7 images from session 1 for training and the remaining 7 images from session 2 for testing. The images are cropped to a standardized size of

Sample images from AR dataset.

The experimental results are summarized in Table 2. CCNR consistently outperforms both NRC and DRC in the 54- and 300-dimensional feature spaces. Although it achieves comparable performance with NRC in the 120-dimensional space, it maintains a clear advantage over DRC. Notably, in the 300-dimensional space, CCNR surpasses NRC by 0.8% and DRC by 0.3%, demonstrating a particularly significant performance gain.

Classification accuracy (%) of various methods on AR dataset (best results given in bold).

SRC: sparse representation-based classification; ProCRC: probabilistic collaborative representation-based classification; CRC: collaborative representation-based classification; DRC: discriminative representation-based classification; NSC: neighboring subspace classifier; SVM: support vector machine.

EYB face recognition

The EYB dataset comprises 2432 face images from 38 individuals, with approximately 64 images per person. These images were captured under a variety of lighting conditions. The original images have a resolution of

Sample images from Extended Yale B dataset.

The experimental results are summarized in Table 3. CCNR consistently outperforms other methods across all evaluated dimensions. Specifically, in the 84-dimensional subspace, it achieves a 1.8% improvement over NRC. In the 150-dimensional subspace, it demonstrates a 1% advantage over NRC, while in the 300-dimensional subspace, it maintains a 0.4% lead over NRC. Notably, on this dataset, CCNR showcases robust classification capabilities, particularly in lower-dimensional feature spaces.

Classification accuracy (%) of various methods on Extended Yale B dataset (best results given in bold).

SRC: sparse representation-based classification; CRC: collaborative representation-based classification; ProCRC: probabilistic collaborative representation-based classification; DRC: discriminative representation-based classification; NSC: neighboring subspace classifier; SVM: support vector machine.

GT face recognition

The GT dataset contains 750 face images from 50 distinct individuals, with an average of 15 images per person. Each image has a resolution of

Sample images from GT dataset.

The experimental results are provided in Table 4. On this dataset, CCNR achieves the same classification accuracy as DRC, while maintaining a 0.3% advantage over NRC. Collectively, the experiments conducted across the three face datasets robustly demonstrate the competitiveness of the CCNR method in the field of face recognition.

Classification accuracy (%) of various methods on GT dataset (best results given in bold).

SRC: sparse representation-based classification; CRC: collaborative representation-based classification; ProCRC: probabilistic collaborative representation-based classification; DRC: discriminative representation-based classification; NSC: neighboring subspace classifier; SVM: support vector machine; CCNR: competitive-collaborative nonnegative representation.

Parameter characteristic

The performance of a model can be significantly influenced by its parameters. The CCNR method proposed in this paper involves three key parameters:

Parameter characteristic of competitive-collaborative nonnegative representation (CCNR) on AR dataset.

Parameter characteristic of competitive-collaborative nonnegative representation (CCNR) on Extended Yale B dataset.

Parameter characteristic of competitive-collaborative nonnegative representation (CCNR) on GT dataset.

On the AR dataset, it is observed that excessively large values of both

In summary, these parameters exhibit distinct influences across the different datasets. However, the overall classification accuracy remains relatively stable across the examined parameter ranges. These findings underscore the importance of careful parameter tuning to optimize model performance for specific datasets.

Ablation study

This study introduces competitive and collaborative constraints into the NRC method to enhance model performance. This subsection analyzes the specific contribution of each of these two regularization terms to the performance improvement. To this end, we investigate the effect of each constraint by removing one while retaining the other. Classification accuracy comparisons between the original CCNR model and its variants are conducted on the AR dataset (300 dimensions), the EYB dataset (300 dimensions), and the GT dataset. The experimental results are presented in Table 5.

Classification accuracy (%) of competitive-collaborative nonnegative representation (CCNR) and its variants on the three datasets (best results given in bold).

Based on the results, the following conclusions can be drawn:

The experimental results demonstrate that the competitive constraint can effectively enhance model performance, and when combined with the collaborative constraint, the CCNR model achieves superior classification results.

Conclusion

RBCM have been widely applied in the field of face recognition. However, given the inherent limitations of the NRC approach, this paper proposes the CCNR method to further enhance the capabilities of nonnegative representation. CCNR introduces two highly effective regularization terms: A competitive term and a collaborative term. The competitive term enables training samples from different classes to compete in representing the query sample, thereby assigning higher weights to the class coefficients with stronger representation capabilities. Meanwhile, the collaborative term ensures the stability of the representation coefficients, further enhancing the model’s classification performance. CCNR has been successfully deployed on the smart campus platform of Wuxi University, and this paper provides a detailed illustration of its application workflow. Finally, the advantages of CCNR are comprehensively demonstrated through rigorous experiments conducted on three challenging face datasets, highlighting its superior classification performance and robustness.

Footnotes

Acknowledgements

The authors express their sincere gratitude to the editors and reviewers for their invaluable contributions to the publication of this paper.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key Research and Development Program of China (2021YFE0116900), the National Natural Science Foundation of China (42175157, 42475151), the General Project of Natural Science Research of Jiangsu Higher Education Institutions (22KJB520037), the "Taihu Light" Science and Technology Project of Wuxi (K20231003), and the Wuxi University Research Start-up Fund for Introduced Talents (2021r032).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and publication of this article.