Abstract

The nonnegative representation-based classification only imposes an overall nonnegative constraint on the representation coefficients but fails to apply differentiated penalties on the coefficients of different classes, which inevitably limits its effectiveness. In response, this article proposes a dual flexible competitive nonnegative representation method, which introduces two competitive mechanisms: mean competition and inter-class competition. Mean competition relies on the class-wise mean of training samples as the competitive target, encouraging the representation coefficients to accurately capture the unique features of each class and enhancing the discriminability between the representation coefficients. Inter-class competition fully considers the intrinsic relationship between the overall representation and the class representations, strengthening the competitive representation between the true class and all remaining classes, thereby improving classification performance. Meanwhile, flexible factors are ingeniously incorporated into both competitive terms to effectively reduce interference from incorrect classes in the classification decision. The alternating direction method of multipliers is employed to solve the dual flexible competitive nonnegative representation problem, with a comprehensive explanation of the iterative procedure provided. Experimental findings indicate that the dual flexible competitive nonnegative representation method demonstrates significant advantages in face recognition tasks. The source code will be made available upon the acceptance of this article at https://github.com/li-zi-qi/DFCNR.

Introduction

Face recognition occupies an indispensable position in the field of computer vision, with numerous methods achieving remarkable research outcomes and driving its continuous advancement. 1–3 Recently, representation-based classification methods (RBCMs) have garnered increasing interest within the academic community. The fundamental principle of these methods is to use the complete training dataset to represent a given test sample. More specifically, RBCM approximates a test sample by a linear combination of training samples, which yields a vector of representation coefficients. Subsequently, the test sample is reconstructed class by class, and the residual between the test sample and the reconstruction from each class's training samples is computed. The class corresponding to the smallest residual is then selected as the predicted label. Although deep learning methods have achieved excellent classification performance, the RBCM framework offers distinct advantages in terms of interpretability and data efficiency. The linear representation model provides transparent, physically meaningful decisions, contrasting with the black-box nature of deep learning, and its reliance on sample-based representations instead of complex training makes it inherently more robust in small-sample scenarios. Generally, RBCM approaches can be divided into three primary categories: sparse representation, collaborative representation, and nonnegative representation.

As a pioneering work in RBCM, sparse representation-based classification (SRC) 4 as well as its variants 5,6 employ the

Neither SRC nor CRC considers the potential presence of negative values in the representation coefficients. However, Xu et al. 13 argue that while such additive and subtractive interpretations are mathematically feasible, they do not align with the physical reality of the real world. Inspired by nonnegative matrix factorization (NMF), 14 they proposed the nonnegative representation-based classification (NRC), imposing a nonnegative constraint on the representation coefficients. Compared to SRC and CRC, NRC produces coefficients with more physically meaningful interpretations and inherent sparsity, thereby avoiding the cancelation effect between positive and negative values, enhancing the contribution of samples from the correct class, and suppressing interference from incorrect classes. This strategy ensures that heterogeneous samples exhibit no correlation, rather than negative correlations, thereby better describing the relationships among the samples.

Zhou et al. 15 integrated the kernel trick with nonnegative representation, proposing the kernel nonnegative representation-based classification (KNRC). This approach further enhances the performance of nonnegative representation models, offering greater advantages when handling complex data. Yuan et al. 16 introduced the double discriminative constraint-based affine nonnegative representation (DCANR) method. Building upon nonnegative representation, this method incorporates an additional affine constraint and two discriminative constraints, further improving the model’s representational capability. Li et al. 17 proposed the locality-constraint discriminative nonnegative representation (LDNR) method, which incorporates both locality constraint based on distance and discriminative information to enhance classification performance. To address the inability of RBCM to effectively handle imbalanced data, Li et al. 18 introduced the density-based discriminative nonnegative representation (DDNR) method. This method incorporates a class-specific regularization term to reduce inter-class sample correlations, as well as an adaptive weighting mechanism to mitigate the impact of varying sample sizes across classes on the model’s representation performance. To address the issues of the separation between the representation and decision-making stages as well as the multicollinearity issue in nonnegative representation, the competitive-collaborative nonnegative representation (CCNR) 19 model introduces a residual-based competitive constraint term. This term promotes competition among samples from different classes in representing the test sample, thereby integrating classification strategy into the representation process. Simultaneously, an

However, traditional nonnegative representation methods impose a global nonnegative constraint on the representation coefficients without introducing differentiated penalization for coefficients associated with different classes. In fact, competitive representations can effectively complement the overall collaborative representation, while the absence of such competitive constraints may hinder the classification performance of nonnegative representation.

This article proposes a novel dual flexible competitive nonnegative representation (DFCNR) method, which introduces two competitive representation mechanisms: mean competition and inter-class competition. In the mean competition, the mean sample is defined as the average of all training samples within a class and is assumed to capture the most salient features of that class. The competitive representation of the mean sample by its training samples drives the representation coefficients to capture the unique characteristics of each class more precisely. This process improves the discriminability of the representation coefficients, making it easier for the model to distinguish between different classes during classification. In the inter-class competition, DFCNR considers the intrinsic relationship between the overall representation and class-specific representations. It uses a globally reconstructed sample as the target for competitive representation, which closely resembles the test sample in feature space. The inter-class competition mechanism emphasizes competitive representation between the true class and all remaining classes, thereby contributing to improved classification accuracy. Moreover, DFCNR introduces flexible factors into both competitive terms. These factors allow for a more adaptive representation process, where not all training samples (particularly those from incorrect classes) are required to participate with equal strength. The weights of representation coefficients associated with incorrect classes are appropriately diminished, reducing their interference in classification decisions. As a result, the model becomes more focused on the discriminative features of the correct class, thereby enhancing overall classification performance. To effectively solve the DFCNR optimization problem, we employ the alternating direction method of multipliers (ADMM), 20 with a comprehensive explanation of the iterative procedure provided. 
Additionally, thorough experiments are performed on commonly used public face recognition datasets, and the results clearly indicate that DFCNR outperforms current methods in this field. The key contributions of this study can be summarized as follows:

(1) Introducing mean competition. Mean competition utilizes the class-wise mean of training samples as a competitive target, encouraging the representation coefficients to precisely capture unique features, thereby enhancing the discriminability of representation coefficients.

(2) Introducing inter-class competition. Inter-class competition fully considers the intrinsic relationship between the overall representation and class-specific representations, strengthening the competitive representation between the true class and all remaining classes, thus improving classification performance.

(3) Incorporating flexible factors into both competition terms. This allows all training samples to participate in the competition at varying intensities, where the weights of representation coefficients for incorrect classes are significantly reduced, thereby minimizing classification interference.

(4) The ADMM algorithm is utilized to address the DFCNR problem. Moreover, experiments on public face datasets demonstrate the proposed method’s superior performance.

The remainder of this article is organized as follows: the “Related works” section reviews related work; the “Dual flexible competitive nonnegative representation-based classification” section presents the DFCNR method in detail; the “Model analysis” section provides a thorough analysis of the DFCNR model; the “Experiments and analysis” section validates the DFCNR method through extensive experiments; and the “Conclusion” section concludes this article. To facilitate the description of the proposed DFCNR method, Table 1 presents the mathematical symbols utilized throughout this article.

Universal mathematical symbols used throughout this article.

Related works

Nonnegative representation-based classification

In the field of RBCM, numerous methods have improved performance by imposing various constraints on representation coefficients. However, they fail to consider the potential occurrence of negative values in these coefficients, which contradicts the actual physical properties of the data. Essentially, samples from the same class typically exhibit positive correlations, while samples from different classes are generally uncorrelated rather than negatively correlated. Thus, NRC introduces a nonnegative constraint, which effectively enhances the weights of homogeneous samples in the representation process while suppressing the weights of heterogeneous samples, thereby improving classification accuracy. The model is defined as follows:

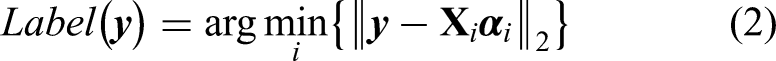

Since equation (1) is a nonnegative least squares problem without an analytical solution, the ADMM is employed to iteratively solve for the representation coefficients. Subsequently, the label of the test sample is assigned to the class with the minimum reconstruction residual as follows:
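As a rough illustration of the two steps just described, the following sketch solves a nonnegative least squares problem with a standard scaled-form ADMM splitting and then assigns the label by minimum class-wise residual. It is not the authors' implementation: the names `nrc_admm` and `classify_by_residual`, the penalty `rho`, and the fixed iteration count are assumptions made for illustration, training samples are assumed to be stored as columns of `X`, and the update rules are the textbook ones for the splitting c = z, z ≥ 0 rather than the exact iterations of the NRC paper.

```python
import numpy as np

def nrc_admm(X, y, rho=1.0, n_iter=500):
    """Approximately solve min_c 0.5*||y - X c||^2 s.t. c >= 0 (same minimizer as
    the unscaled objective) using scaled-form ADMM with the splitting c = z, z >= 0."""
    n = X.shape[1]
    # The c-update solves the same linear system each iteration, so invert once.
    P = np.linalg.inv(X.T @ X + rho * np.eye(n))
    Xty = X.T @ y
    z = np.zeros(n)  # nonnegative copy of the coefficients
    u = np.zeros(n)  # scaled dual variable
    for _ in range(n_iter):
        c = P @ (Xty + rho * (z - u))   # unconstrained least squares step
        z = np.maximum(0.0, c + u)      # projection onto the nonnegative orthant
        u = u + c - z                   # dual update
    return z

def classify_by_residual(X, labels, y, coef):
    """Return the class whose samples and coefficients reconstruct y with the smallest residual."""
    classes = np.unique(labels)
    residuals = [np.linalg.norm(y - X[:, labels == k] @ coef[labels == k]) for k in classes]
    return classes[int(np.argmin(residuals))]

# Toy example: two classes with two training samples each (columns of X).
X = np.array([[1.0, 0.9, 0.0, 0.0],
              [0.0, 0.1, 1.0, 0.9],
              [0.0, 0.0, 0.0, 0.1]])
labels = np.array([0, 0, 1, 1])
y = np.array([1.0, 0.05, 0.0])
coef = nrc_admm(X, y)
pred = classify_by_residual(X, labels, y, coef)
```

Returning `z` rather than `c` guarantees that the reported coefficients are exactly nonnegative, even if the iteration is stopped before full convergence.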

Collaborative-competitive representation-based classification

The core of CRC lies in two stages: (1) the test sample is collaboratively represented using the entire training set; and (2) it is classified by identifying the class with the smallest representation residual. In essence, the first step focuses on collaborative representation, while the second step emphasizes competitive representation of the test sample using training samples from different classes. However, traditional CRC models typically treat these two steps as independent processes, failing to fully account for their intrinsic relationship. To address this limitation, collaborative-competitive representation-based classification (CCRC) 21 introduces a competitive mechanism to enhance the representation effectiveness of the CRC model. The model is defined as follows:

In equation (3), the first term represents collaborative representation, while the third term corresponds to competitive representation. The regularization parameter
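Since equation (3) itself is not reproduced here, the sketch below works from an assumed form that matches the term ordering described above: a collaborative fitting term ||y − Xc||², a ridge penalty λ||c||², and a competitive term γ Σ_k ||y − X_k c_k||². Under that assumption, setting the gradient to zero gives (XᵀX + λI + γM)c = (1 + γ)Xᵀy, where M keeps only the within-class blocks of XᵀX. The function names, the symbols `lam` and `gamma`, and the column-wise sample layout are illustrative assumptions, not the notation of the original article.

```python
import numpy as np

def ccrc_objective(X, labels, y, c, lam=0.01, gamma=0.1):
    """Assumed CCRC-style objective: fit + ridge + class-wise competition."""
    fit = np.sum((y - X @ c) ** 2)
    ridge = lam * np.sum(c ** 2)
    competition = sum(
        np.sum((y - X[:, labels == k] @ c[labels == k]) ** 2)
        for k in np.unique(labels)
    )
    return fit + ridge + gamma * competition

def ccrc_coefficients(X, labels, y, lam=0.01, gamma=0.1):
    """Closed-form minimizer of the assumed objective above."""
    n = X.shape[1]
    G = X.T @ X
    # M holds the within-class blocks X_k^T X_k; cross-class entries are zeroed.
    same_class = labels[:, None] == labels[None, :]
    M = np.where(same_class, G, 0.0)
    A = G + lam * np.eye(n) + gamma * M
    return np.linalg.solve(A, (1.0 + gamma) * (X.T @ y))
```

Using a boolean same-class mask instead of an explicit block-diagonal matrix means the samples do not need to be sorted by class.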

Dual flexible competitive nonnegative representation-based classification

Representation

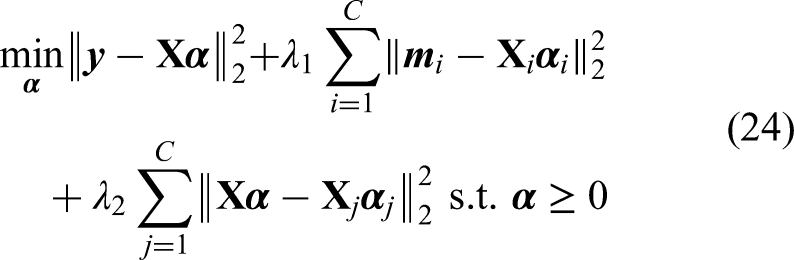

The proposed DFCNR method aims to introduce two significant competitive representation terms to improve classification accuracy. It can be mathematically expressed as follows:

In equation (4), the first component signifies collaborative representation, the second term denotes mean competition, and the third term represents the inter-class competition.

The mean competition term employs the class-wise mean of training samples as the competition target, promoting more discriminative representation coefficients by highlighting class-specific characteristics. The inter-class competition term captures the inherent relationships between the global representation and those of individual classes, thereby reinforcing the competitive representation between the correct class and the remaining classes to enhance classification accuracy. To mitigate the influence of irrelevant categories, flexible factors are integrated into both competition terms, ensuring that incorrect classes do not engage in competition with equal intensity. Importantly, when both

The overall classification flowchart of dual flexible competitive nonnegative representation (DFCNR).

Optimization

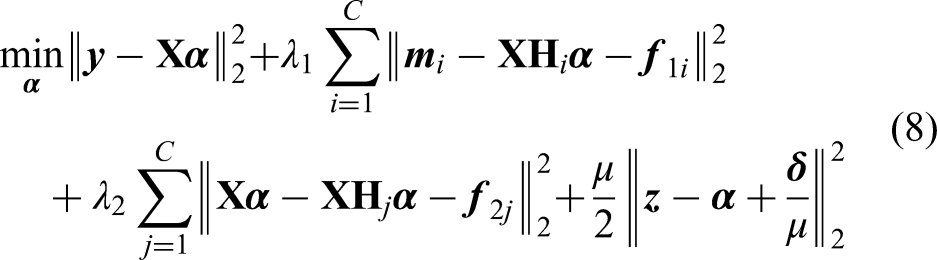

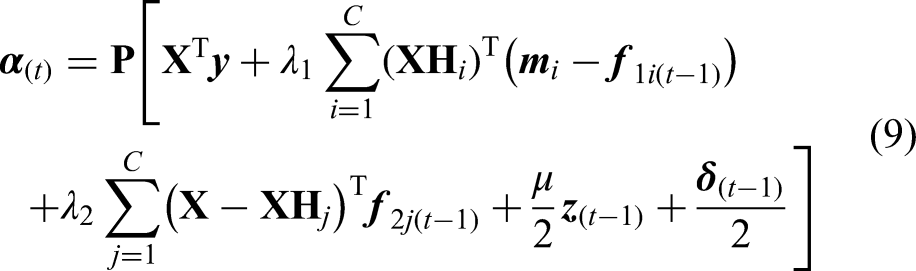

The representation coefficients serve as both the outcome of the representation phase and the basis for the classification phase, making their efficient solution crucial. Similar to NRC, the DFCNR model can be formulated as a nonnegative least squares problem, which lacks an analytical solution. To address this, we introduce an auxiliary variable

Equation (5) can be solved efficiently in an iterative manner through ADMM, and its augmented Lagrangian function (ALF) is as follows:

In equation (6),

(1) Update

We define the matrix

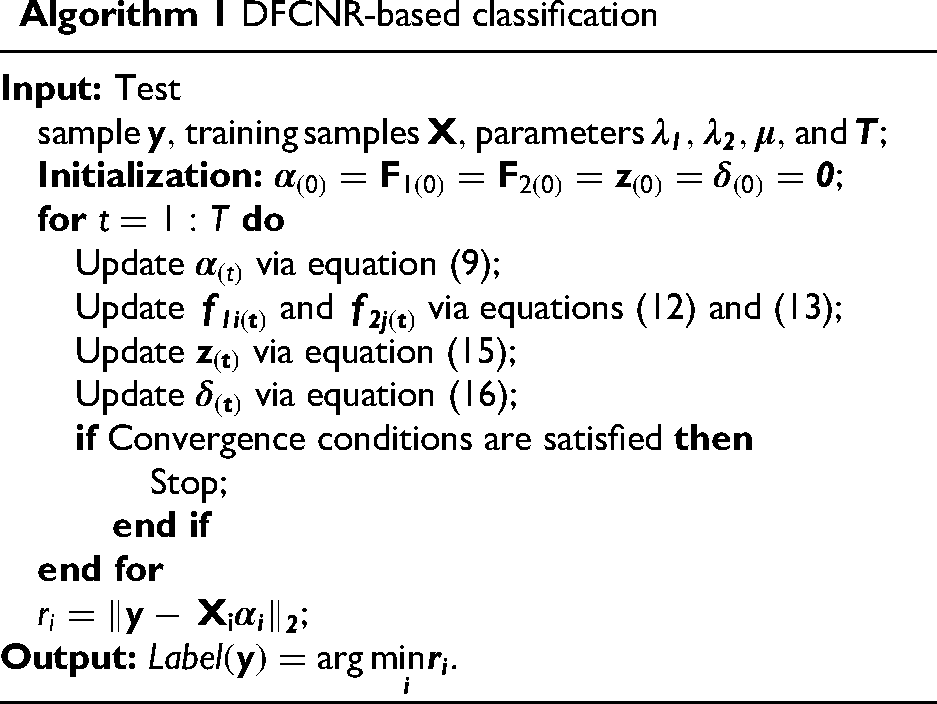

In equation (8), taking the partial derivative with respect to

In equation (9), the matrix

(2) Update

In equation (11), taking the partial derivative of

Similarly, the closed-form solution for

(3) Update

The analytical solution for

(4) Update

The iteration proceeds until convergence is achieved or the maximum number of iterations

Classification

Model analysis

Comparative analysis with baseline model

The DFCNR model builds on traditional nonnegative representation methods, aiming to enhance classification performance. Traditional methods only impose a nonnegative constraint during the representation stage, neglecting the connection between representation and classification. DFCNR introduces a mean flexible competition and an inter-class flexible competition, leveraging training samples from different classes to represent test samples in a flexibly competitive manner. The representation coefficients serve not only as the output of the representation stage but also as the basis for classification decisions. In the classification decision stage, the decisive factor is the representation contribution. The representation contribution of the

Under the RBCM framework, a test sample can be expressed as a weighted sum of contributions from different classes, where the most relevant class exhibits the largest representation contribution. To compare the differences in representation contributions between DFCNR and NRC, a test sample from the AR dataset 22 was selected, and the representation contributions across different classes were computed for both models. Figure 2 presents the top five class-wise representation contributions of the NRC and DFCNR models for a test sample among 28 classes, with the class labels and their corresponding percentage contributions indicated in parentheses.
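The ranking of classes by percentage contribution can be sketched as follows. The exact definition of representation contribution used in the article is not recoverable from the text here, so this example assumes one plausible choice: the norm of each class-wise reconstruction X_k c_k, normalized so that all classes sum to 100%. The function names and this normalization are assumptions for illustration only.

```python
import numpy as np

def class_contributions(X, labels, coef):
    """Percentage share of each class, measured by the norm of its reconstruction X_k c_k.
    Assumed definition; samples are the columns of X."""
    classes = np.unique(labels)
    norms = np.array([
        np.linalg.norm(X[:, labels == k] @ coef[labels == k]) for k in classes
    ])
    total = norms.sum()
    shares = 100.0 * norms / total if total > 0 else np.zeros_like(norms)
    return dict(zip(classes.tolist(), shares.tolist()))

def top_classes(contributions, n=5):
    """Return the n (class, percentage) pairs with the largest contributions."""
    return sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)[:n]

# Toy example with three classes and hand-picked nonnegative coefficients.
X = np.eye(4)
labels = np.array([0, 0, 1, 2])
coef = np.array([3.0, 4.0, 0.0, 2.5])
contrib = class_contributions(X, labels, coef)
ranking = top_classes(contrib, n=2)
```

In this toy case the class-wise norms are 5, 0, and 2.5, so class 0 receives two thirds of the total and class 2 one third.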

Comparison of the first five reconstructed samples between NRC and DFCNR. NRC: nonnegative representation-based classification; DFCNR: dual flexible competitive nonnegative representation.

As shown in Figure 2, the NRC model incorrectly amplifies the representation contribution of class 24, leading to a misclassification, whereas the DFCNR model accurately emphasizes the contribution of class 28, achieving correct classification. This improvement primarily stems from the flexible factors, mean competition, and inter-class competition mechanism introduced by DFCNR, which effectively enhances classification performance.

Comparative analysis with DFCNR-MFC

To investigate the significance of the mean flexible competition in the DFCNR model, this subsection removes this component from DFCNR and constructs the DFCNR-MFC (mean flexible competition) model for comparative analysis with the original DFCNR. The mathematical formulation is expressed as follows:

The DFCNR-MFC model employs the ADMM for solution, and its closed-form solution for the representation coefficients is as follows:

In equation (19), the matrix

For complex image samples, the features of heterogeneous samples have a significant impact on the overall reconstruction, particularly in RBCM. When evaluating data sample properties, the mean serves as a key indicator that summarizes the fundamental properties of a class. To address this issue, the mean of class samples is used to represent the features of that class, and training samples are employed to represent the mean sample in a competitive manner. This allows the representation coefficients to more accurately capture class-specific features and enhances the discriminability of the class-wise representation coefficients. To visually compare the differences in representation contributions between the two models, Figure 3 presents the results using a face-overlapping visualization.
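The class-wise mean that serves as the competition target can be computed directly from the training matrix. The sketch below uses hypothetical helper names and assumes that samples are stored as columns and that class labels are integers. It also checks one elementary fact: uniform nonnegative weights 1/n_k over the samples of class k reproduce that class's mean exactly, so the mean sample always admits a nonnegative representation by its own class. The actual mean competition term of DFCNR, including its flexible factor, is not reproduced here.

```python
import numpy as np

def class_means(X, labels):
    """Return {class label: mean of that class's training samples (columns of X)}."""
    return {int(k): X[:, labels == k].mean(axis=1) for k in np.unique(labels)}

def uniform_class_coefficients(labels, k):
    """Coefficient vector that weights each sample of class k by 1/n_k and all others by 0."""
    mask = labels == k
    return mask.astype(float) / mask.sum()

# Toy example: class 0 has two samples, class 1 has one.
X = np.array([[1.0, 3.0, 10.0],
              [2.0, 4.0, 20.0]])
labels = np.array([0, 0, 1])
means = class_means(X, labels)
reconstructed_mean = X @ uniform_class_coefficients(labels, 0)
```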

Comparison of the first five reconstructed samples between DFCNR-MFC and DFCNR. DFCNR-MFC: dual flexible competitive nonnegative representation-mean flexible competition; DFCNR: dual flexible competitive nonnegative representation.

As illustrated in Figure 3, given a test sample from class 28, the DFCNR-MFC model demonstrates the most prominent representation contribution from class 24. However, its reconstructed sample appears blurred and indistinguishable, ultimately leading to misclassification. In contrast, the DFCNR model correctly identifies class 28 with the highest representation contribution, producing a clear and well-resolved reconstructed sample. These results further validate the advantages of mean competition in representation.

Comparative analysis with DFCNR-IFC

To thoroughly investigate the significance of inter-class flexible competition in the DFCNR, we eliminate this mechanism and construct a new comparative model, DFCNR-IFC (inter-class flexible competition). The mathematical formulation can be expressed as follows:

The DFCNR-IFC model employs the ADMM for solution, and its closed-form solution for the representation coefficients is as follows:

In equation (22), the matrix

The DFCNR model employs collaborative representation as its primary term, where test samples are represented by the complete set of training samples. Ideally, if a test sample belongs to the

As demonstrated in Figure 4, the DFCNR model correctly identifies the 90th class sample with the highest representation contribution, whereas the DFCNR-IFC model erroneously assigns the highest contribution to the 58th class sample (with the 90th class ranking only third). The DFCNR-IFC model overemphasizes localized features (e.g. mouth expression and illumination) while neglecting global sample similarity, leading to misclassification. In contrast, the DFCNR model captures critical discriminative information by intensifying competitive representation between the correct class and competing classes, thus achieving superior classification performance.

Comparison of the first five reconstructed samples between DFCNR-IFC and DFCNR. DFCNR-IFC: dual flexible competitive nonnegative representation-inter-class flexible competition; DFCNR: dual flexible competitive nonnegative representation.

Comparative analysis with DFCNR-F

Through the DFCNR-MFC and DFCNR-IFC models, we have systematically analyzed the effects of mean competition and inter-class competition mechanisms. To further investigate the advantages of the flexible factor in our framework, we construct a variant model, DFCNR-F (flexible), with the following objective function:

The DFCNR-F model employs the ADMM approach for solution, and its closed-form solution for the representation coefficients is as follows:

In equation (25), the matrix

The competitive representation mechanism serves as the crucial link between representation and classification, enabling reconstructed samples from the correct class to better approximate test samples. Since the class labels of test samples are typically unknown, conventional methods impose constraints on competitive representations across all classes, which may introduce interference from incorrect classes during decision-making. DFCNR optimizes this process through the introduction of flexible factors. These factors allow samples to participate in competition in a more adaptive manner, rather than enforcing universal participation from all classes. Under this mechanism, samples from the correct class naturally dominate the competition due to their high feature similarity with the test sample, while samples from incorrect classes receive lower weights due to feature dissimilarity, thereby reducing their interference in classification decisions. This flexible competition strategy not only enhances the influence of the correct class but also significantly mitigates the negative impact of incorrect classes, ultimately improving the model’s classification performance.

Figure 5 presents reconstructed samples corresponding to the top five classes with the highest representation contributions. For a test sample from class 55, the DFCNR-F method incorrectly ranked class 51 as the top candidate, relegating the true class (class 55) to second place and leading to misclassification. In contrast, the proposed DFCNR approach accurately identified class 55 as the ground-truth class, yielding a representation contribution of 16.52%, significantly surpassing the 9.39% attributed to class 51. This comparison highlights the superior accuracy of DFCNR in correct class identification and underscores the effectiveness of the flexible factor strategy in enhancing the model’s discriminative capability.

Comparison of the first five reconstructed samples between DFCNR-F and DFCNR. DFCNR-F: dual flexible competitive nonnegative representation-flexible; DFCNR: dual flexible competitive nonnegative representation.

Experiments and analysis

Experimental setting

This section comprehensively evaluates the effectiveness of the proposed DFCNR method on multiple public face datasets: AR, 22 Extended Yale B, 23 and GT. 24 We conduct comparative experiments against strong baseline methods, including NSC, 10 SVM, 25 SRC, 4 CRC, 7 CROC, 9 ProCRC, 8 NRC, 13 DRC, 11 DCANR, 16 and MWCRC. 12 Some experimental results are taken from reference 13, while the other methods are tuned under identical experimental conditions to achieve their highest classification accuracy (with the best performance indicated in bold). Moreover, we conduct a thorough analysis of the convergence and parameter properties of DFCNR, discussing the model’s characteristics and examining how different parameters influence its performance.

AR face recognition

The AR dataset, a benchmark in face recognition research, comprises over 4000 images of 126 subjects with varying facial expressions and illumination conditions. Our experiments utilized 100 subjects (50 males and 50 females), with 14 images per subject, 7 for training and 7 for testing. All images were cropped to 60

Sample images on the Aleix and Robert (AR) dataset.

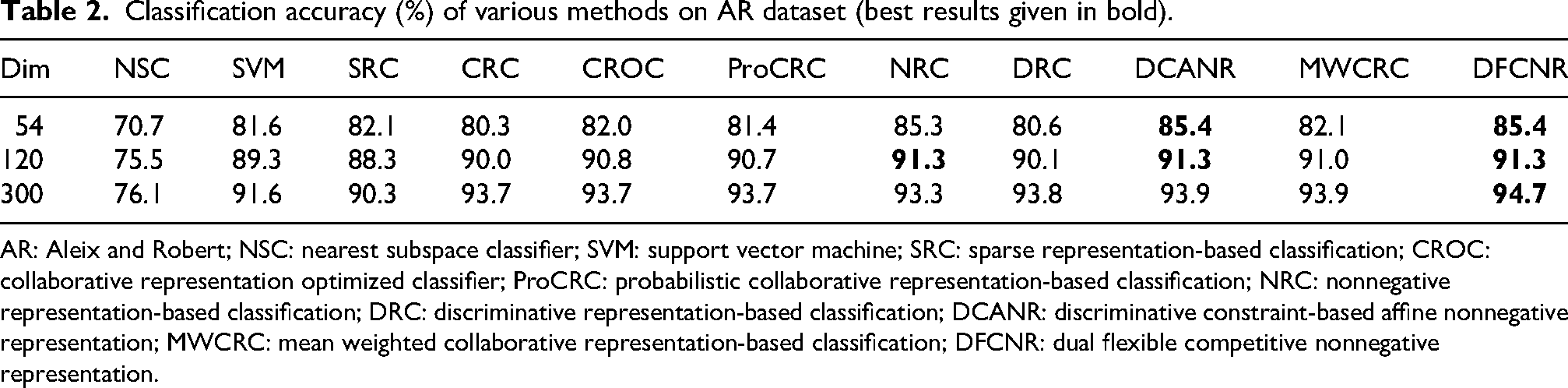

Table 2 presents the experimental results on the AR dataset. DFCNR performs excellently across all dimensions. At 54 dimensions, DFCNR outperforms NRC, DRC, and MWCRC by 0.1%, 4.9%, and 3.3%, respectively, and matches the performance of DCANR. At 120 dimensions, DFCNR achieves comparable results to NRC and DCANR, and surpasses MWCRC by 0.3%. At 300 dimensions, DFCNR achieves an accuracy of 94.7%, exceeding NRC and outperforming DCANR and MWCRC by 0.8%.

Classification accuracy (%) of various methods on AR dataset (best results given in bold).

AR: Aleix and Robert; NSC: nearest subspace classifier; SVM: support vector machine; SRC: sparse representation-based classification; CROC: collaborative representation optimized classifier; ProCRC: probabilistic collaborative representation-based classification; NRC: nonnegative representation-based classification; DRC: discriminative representation-based classification; DCANR: discriminative constraint-based affine nonnegative representation; MWCRC: mean weighted collaborative representation-based classification; DFCNR: dual flexible competitive nonnegative representation.

Extended Yale B face recognition

The Extended Yale B dataset is a widely used benchmark in face recognition, containing 2414 grayscale images of 38 individuals. Figure 7 illustrates some sample images. In the experiment, 32 images per person were selected for training, while the remaining images were used for testing. All images were cropped to 54

Sample images on the Extended Yale B dataset.

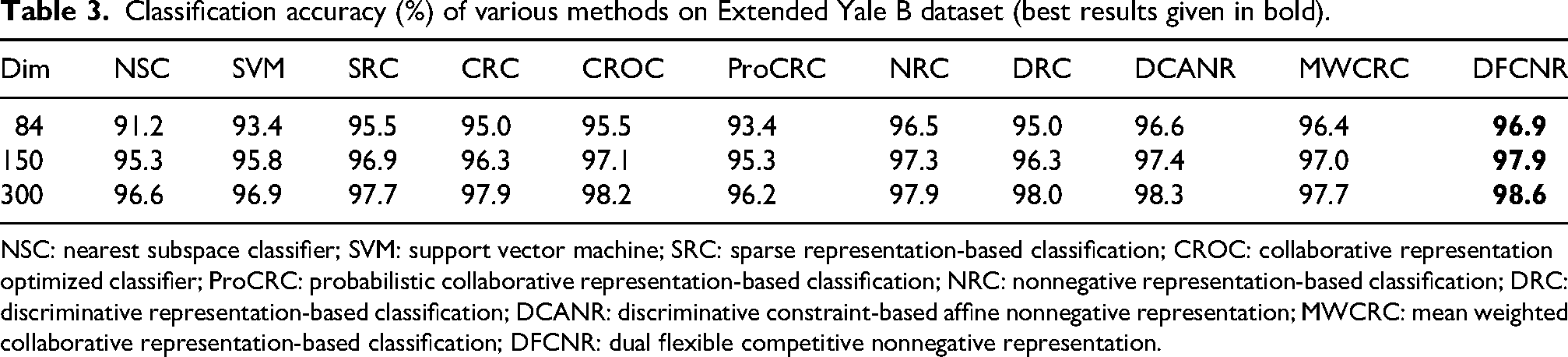

Table 3 presents the experimental results on this dataset, where DFCNR achieves the highest classification accuracy across all dimensions. At 84, 150, and 300 dimensions, DFCNR outperforms NRC by 0.4%, 0.6%, and 0.7%, respectively, while also surpassing state-of-the-art methods such as DCANR and MWCRC.

Classification accuracy (%) of various methods on Extended Yale B dataset (best results given in bold).

NSC: nearest subspace classifier; SVM: support vector machine; SRC: sparse representation-based classification; CROC: collaborative representation optimized classifier; ProCRC: probabilistic collaborative representation-based classification; NRC: nonnegative representation-based classification; DRC: discriminative representation-based classification; DCANR: discriminative constraint-based affine nonnegative representation; MWCRC: mean weighted collaborative representation-based classification; DFCNR: dual flexible competitive nonnegative representation.

GT face recognition

The GT dataset contains 750 images of 50 individuals, with an average of 15 images per person. The dataset includes a wide variety of expressions, backgrounds, and lighting conditions. Figure 8 displays several sample images. In the experiments, the first eight images of each individual are used for training, while the remaining images serve as the test set. All images are downsampled to 32

Sample images on the Georgia Tech (GT) dataset.

Table 4 presents the experimental results on the GT dataset. DFCNR achieves the same accuracy as DRC, DCANR, and MWCRC, while outperforming NRC by 0.3%. Through experiments on three challenging datasets, DFCNR demonstrates outstanding classification performance, marking a significant advancement in nonnegative representation research.

Classification accuracy (%) of various methods on GT dataset (best results given in bold).

GT: Georgia Tech; NSC: nearest subspace classifier; SVM: support vector machine; SRC: sparse representation-based classification; CROC: collaborative representation optimized classifier; ProCRC: probabilistic collaborative representation-based classification; NRC: nonnegative representation-based classification; DRC: discriminative representation-based classification; DCANR: discriminative constraint-based affine nonnegative representation; MWCRC: mean weighted collaborative representation-based classification; DFCNR: dual flexible competitive nonnegative representation.

Convergence analysis

Because the DFCNR problem is solved with ADMM, the resulting iterative algorithm is expected to converge reliably. To examine this property, we investigated the convergence of the algorithm during the iterative process on three datasets. Figure 9 illustrates how the convergence criteria of the ADMM algorithm evolve as the number of iterations increases. It shows that the algorithm converges well on all datasets.
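Convergence curves of this kind can be produced by recording a residual at every ADMM iteration. The exact criterion plotted in Figure 9 is not stated in the text available here, so the sketch below assumes the standard primal residual ||c − z|| and dual residual ρ||z − z_prev|| for a plain nonnegative least squares splitting, not the full DFCNR objective. The function name, `rho`, `tol`, and `max_iter` are illustrative assumptions.

```python
import numpy as np

def admm_nnls_with_history(X, y, rho=1.0, max_iter=200, tol=1e-6):
    """Scaled-form ADMM for min_c 0.5*||y - X c||^2 s.t. c >= 0,
    returning the solution and the per-iteration (primal, dual) residuals."""
    n = X.shape[1]
    P = np.linalg.inv(X.T @ X + rho * np.eye(n))
    Xty = X.T @ y
    z = np.zeros(n)
    u = np.zeros(n)
    history = []
    for _ in range(max_iter):
        c = P @ (Xty + rho * (z - u))
        z_old = z
        z = np.maximum(0.0, c + u)
        u = u + c - z
        primal = np.linalg.norm(c - z)          # violation of the constraint c = z
        dual = rho * np.linalg.norm(z - z_old)  # change in z between iterations
        history.append((primal, dual))
        if primal < tol and dual < tol:
            break
    return z, history

# Toy problem with a known nonnegative solution.
X = np.vstack([np.eye(4), 0.5 * np.ones((2, 4))])
c_true = np.array([1.0, 0.5, 0.2, 2.0])
y = X @ c_true
z, history = admm_nnls_with_history(X, y)
```

Plotting the two columns of `history` against the iteration index gives curves analogous to those in a convergence figure; stopping on both residuals is a common convention, and other criteria, such as the change in objective value, would work equally well.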

Convergence curves of dual flexible competitive nonnegative representation (DFCNR) on three datasets.

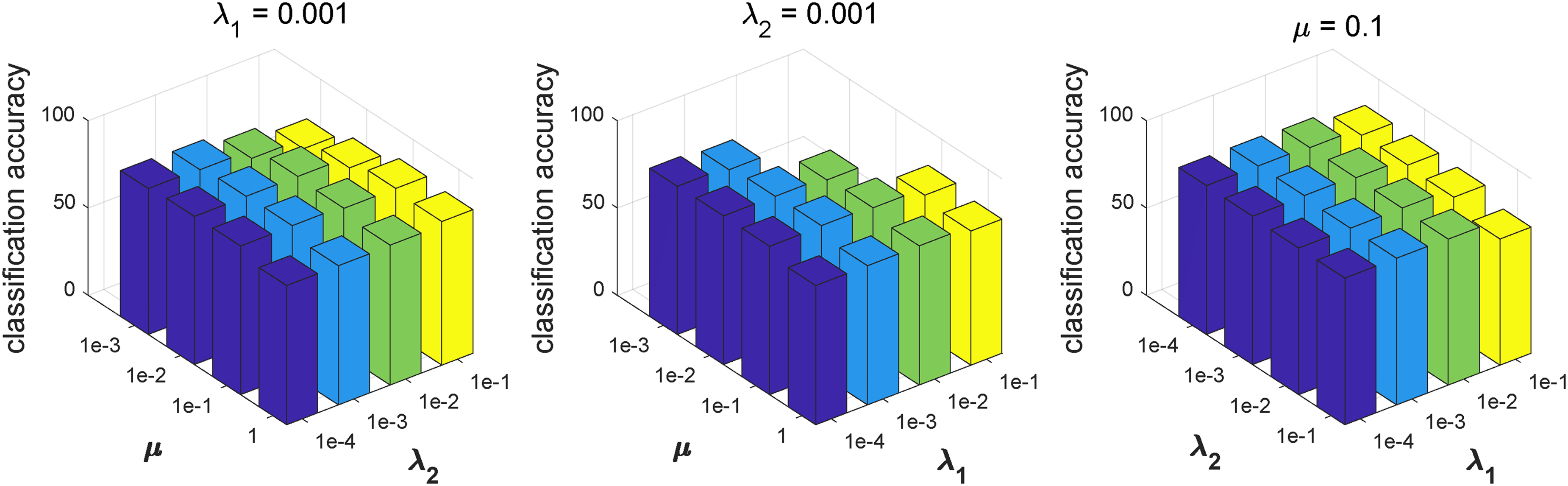

Parameter analysis

To further investigate the impact of different parameters on model performance, this subsection conducts a detailed analysis of the three key parameters of DFCNR:

The impact of parameters on classification accuracy in the Aleix and Robert (AR) dataset.

The impact of parameters on classification accuracy in the Extended Yale B dataset.

The impact of parameters on classification accuracy in the Georgia Tech (GT) dataset.

On the AR dataset, when

Conclusion

This article proposes the DFCNR method, which enhances the NRC framework by introducing a mean competition and an inter-class competition. The mean competition leverages the mean value of each class’s training samples as the competitive benchmark, guiding the representation coefficients to precisely extract the unique class-specific features. The inter-class competition links the global representation with class-specific components, reinforcing the contrast between the correct class and the others to boost classification accuracy. Moreover, DFCNR introduces flexible factors into the competition process, allowing for adaptive representation that effectively reduces the influence of incorrect classes. Extensive experiments demonstrate that DFCNR significantly outperforms existing methods in face recognition tasks.

A promising direction for future work is the integration of nonnegative representation with deep learning architectures into a hybrid model. In such a framework, a deep network would serve as a feature extractor, while nonnegative representation would act as the final decision-maker. This strategy would harness the complementary strengths of both: the strong discriminative power of deep features and the interpretability and small-sample robustness inherent to nonnegative representation.

Footnotes

Acknowledgements

The authors express their sincere gratitude to the editors and reviewers for their invaluable contributions to the publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key Research and Development Program of China (2021YFE0116900), the National Natural Science Foundation of China (42175157 and 42475151), the General Project of Natural Science Research of Jiangsu Higher Education Institutions (22KJB520037), the "Taihu Light" Science and Technology Project of Wuxi (K20231003), and the Wuxi University Research Start-up Fund for Introduced Talents (2021r032).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and publication of this article.