Abstract

In this paper, we consider performance of relaxation iterative methods for four types of image deblurring problems with different regularization terms. We first study how to apply relaxation iterative methods efficiently to the Tikhonov regularization problems, and then we propose how to find good preconditioners and near optimal relaxation parameters which are essential factors for fast convergence rate and computational efficiency of relaxation iterative methods. We next study efficient applications of relaxation iterative methods to Split Bregman method and the fixed point method for solving the L1-norm or total variation regularization problems. Lastly, we provide numerical experiments for four types of image deblurring problems to evaluate the efficiency of relaxation iterative methods by comparing their performances with those of Krylov subspace iterative methods. Numerical experiments show that the proposed techniques for finding preconditioners and near optimal relaxation parameters of relaxation iterative methods work well for image deblurring problems. For the L1-norm and total variation regularization problems, Split Bregman and fixed point methods using relaxation iterative methods perform quite well in terms of both peak signal to noise ratio values and execution time as compared with those using Krylov subspace methods.

Keywords

Introduction

Image deblurring is the process of restoring or estimating the true image from the observed blurred and noisy image. There are many mathematical models which have been proposed to solve image deblurring problems. In this paper, we consider four types of image deblurring problems which are expressed as the following minimization problems

Notice that the blurring matrix A is determined by the point spread function (PSF) and the boundary condition imposed outside of the image. In this paper, we only use the reflexive boundary condition, which means that the scene outside the image boundaries is a mirror image of the scene inside the image boundaries.

Let the operator vec denote an operator which transforms an m × n matrix C into a long vector c of size N = m n by stacking the columns of the matrix C from left to right, i.e.

Four types of image deblurring problems (1) to (4) usually use Krylov subspace iterative methods to solve large sparse linear systems arising from their computational processes. The purpose of this paper is to study efficient applications of relaxation iterative methods to four types of image deblurring problems and then to compare their performances with those of Krylov subspace iterative methods. Since convergence rate of relaxation iterative methods depends on the choices of preconditioners and relaxation parameters, it is crucial to find good preconditioners and optimal relaxation parameters. Since the order of blurring matrix A is usually very large, computation of optimal relaxation parameters is very time-consuming. So the novel contribution of this paper is to propose how to choose good preconditioners and near optimal relaxation parameters which provide fast convergence rate and efficient computation for relaxation iterative methods. The relaxation iterative methods to be used in this paper are parameterized Uzawa (PU), 5 OPR, 6 and generalized symmetric SOR (GSSOR)7,8 methods which have been proposed for solving singular saddle point problems, and the Krylov subspace method to be used is the preconditioned LSQR (PLSQR) method.9,10

This paper is organized as follows. In the next section, we briefly review relaxation iterative methods for solving saddle point problems. In the “Relaxation iterative methods for Tikhonov regularization problem” section, we study an application of relaxation iterative methods to the Tikhonov regularization problem (1) with three cases of regularization matrices D. In the “L1-norm regularization problem using relaxation iterative methods” section, we study an application of relaxation iterative methods to Split Bregman method 11 for solving the L1-norm regularization problem (2) with three cases of D described in the “Relaxation iterative methods for Tikhonov regularization problem” section. In the “TV regularization problems using relaxation iterative methods” section, we study an application of relaxation iterative methods to Split Bregman method for solving the TV regularization problems (3) and (4). In the “Fixed point method using relaxation iterative methods” section, we study an application of relaxation iterative methods to the fixed point method for solving the TV regularization problem (4) which has been proposed in Chen et al. 4 In the “Numerical experiments” section, we provide numerical experiments for four types of image deblurring problems to evaluate the efficiency of relaxation iterative methods. This evaluation has been done by comparing performances of relaxation iterative methods with those of the PLSQR method. Lastly, some conclusions are drawn.

Review of relaxation iterative methods for saddle point problems

In this section, we briefly review relaxation iterative methods for solving the following saddle point problem

Relaxation iterative methods for Tikhonov regularization problem

In this section, we study an application of the relaxation iterative methods to the Tikhonov regularization problem (1) for solving image deblurring problems. The best way to solve (1) numerically is to treat it as a least squares problem

If the size of the original image X = array(x) is m × n, then the size of blurring matrix A is N × N with N = mn, which is very large and sparse when m and n are large. So, the linear system (7), or equivalently the least squares problem (6), is usually solved using Krylov subspace methods such as preconditioned CGLS, PLSQR, and so on.2,9,10,17,18

Another existing approach for solving the linear system (7) is to solve the following generalized saddle point problem19,20

In this section, we propose a different approach for solving the linear system (7). Let

Then, the linear system (7) is equivalent to

We now consider how to solve the singular saddle point problem (9) using relaxation iterative methods efficiently. In this paper, we only consider three relaxation iterative methods, PU, OPR, and GSSOR, which have explicit formulas for computing their optimal relaxation parameters. We first introduce three cases of regularization matrices D to be used in the Tikhonov regularization problem (1).

D corresponding to the Laplacian operator −Δ (Case 1)

The first case of D is a finite difference matrix corresponding to the Laplacian −(xss + xtt) of the image X ∈ R

m

×

n

, where xss and xtt denote the second-order partial derivatives in the vertical direction and the horizontal direction, respectively. Then the matrix

D corresponding to the gradient operator ∇ (Case 2)

The second case of D is a finite difference matrix corresponding to the gradient (xs, xt) T of the image X ∈ R m × n , where xs and xt denote the first-order partial derivatives in the vertical and horizontal directions, respectively.

Let D1,

m

and D1,

n

be m × m and n × n matrices obtained by finite difference approximations to the first-order partial derivatives xs and xt. That is, when m = 4, the matrix D1,

m

is given by

Then the matrix

where

D corresponding to differential operator −(xss, xtt) T (Case 3)

The third case of D is a finite difference matrix corresponding to the differential operator −(xss, xtt) T of the image X ∈ R m × n , where xss and xtt denote the second-order partial derivatives in the vertical and horizontal directions, respectively.

Let D2,

m

and D2,

n

be finite difference approximate matrices corresponding to −xss and −xtt which are defined in Case 1. Then the matrix

Algorithms for relaxation iterative methods

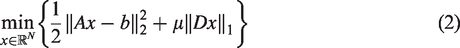

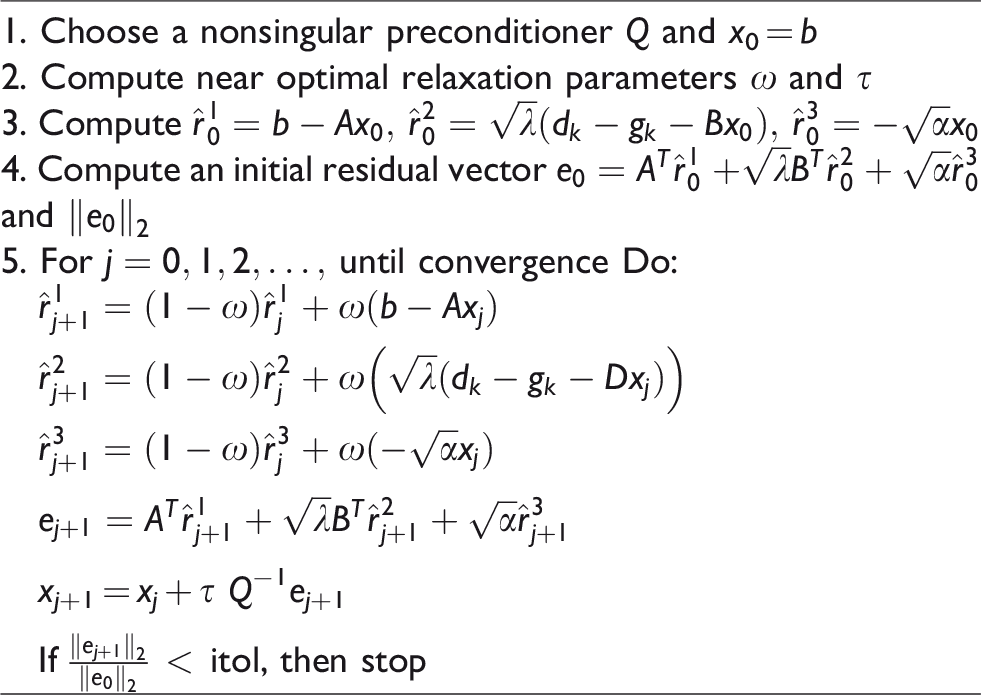

We provide efficient algorithms of relaxation iterative methods for solving the singular saddle point problems (9). Here we only provide an efficient algorithm for PU method since other two relaxation methods (OPR and GSSOR methods) can be done similarly.

In Algorithm 1, tol denotes the tolerance of stopping criterion which is set to 10−2 for test problems used in this paper.

Notice that the convergence rate of Algorithm 1 depends upon the choices of a good preconditioner Q and optimal relaxation parameters ω and τ. We first describe how to choose a good nonsingular preconditioner Q efficiently. Based on the theory of relaxation iterative methods, Q is chosen to be a symmetric positive definite matrix which approximates Step 1: Construct symmetric approximation matrices CTΛaC ≈ A and CTΛdC ≈ D using the DCT2 Step 2: Construct ≈ ATA + δ2DTD Step 3: Let If σii < Cut-Off, then σii = 1 Step 4: Construct a symmetric positive definite preconditioner Q = CTΣC which modifies the ill-conditioned matrix

Here Λ a and Λ d are diagonal matrices, C is the orthogonal two-dimensional discrete cosine transformation matrix, the DCT2 refers to the two-dimensional discrete cosine transformation, and Cut-Off is a positive small value which guarantees the well-conditioned and positive-definite properties of Q. When constructing Q, the matrix C is never computed and only the diagonal matrix Σ is computed. This is because matrix-vector multiplication with C can be easily done using Matlab function dct2 without constructing C. All the diagonal elements of Σ can be easily computed from the corresponding PSFs without constructing A and D. Hence the matrix Q is an efficient preconditioner whose construction and computation with a vector can be done easily without requiring a lot of computational amounts.

We next describe how to choose near optimal relaxation parameters ω and τ of Algorithm 1. Let μmin and μmax be the smallest and largest nonzero eigenvalues of the matrix

Since the order of A is N = m n which is usually very large, computation of μmin and μmax is very time-consuming. Thus, we want to compute near optimal parameters ω and τ instead of computing optimal parameters ωo and τo. Let νmin and νmax be the smallest and largest nonzero eigenvalues of the matrix

L1-norm regularization problem using relaxation iterative methods

In this section, we study an application of relaxation iterative methods to Split Bregman method 11 for solving the L1-norm regularization problem (2) with three cases of D described in the “Relaxation iterative methods for Tikhonov regularization problem” section. Note that the L1-norm regularization problem (2) with Case 2 of D is called the anisotropic TV regularization problem.

We first provide Split Bregman method for solving (2). Let us consider the problem (2) as a constrained minimization problem

Rather than considering (11), we will consider the following unconstrained minimization problem with a penalty parameter λ > 0

Given x0 = b and d0 = g0 = 0, the alternating Split Bregman method using an auxiliary vector g for solving (12) has the following iteration form

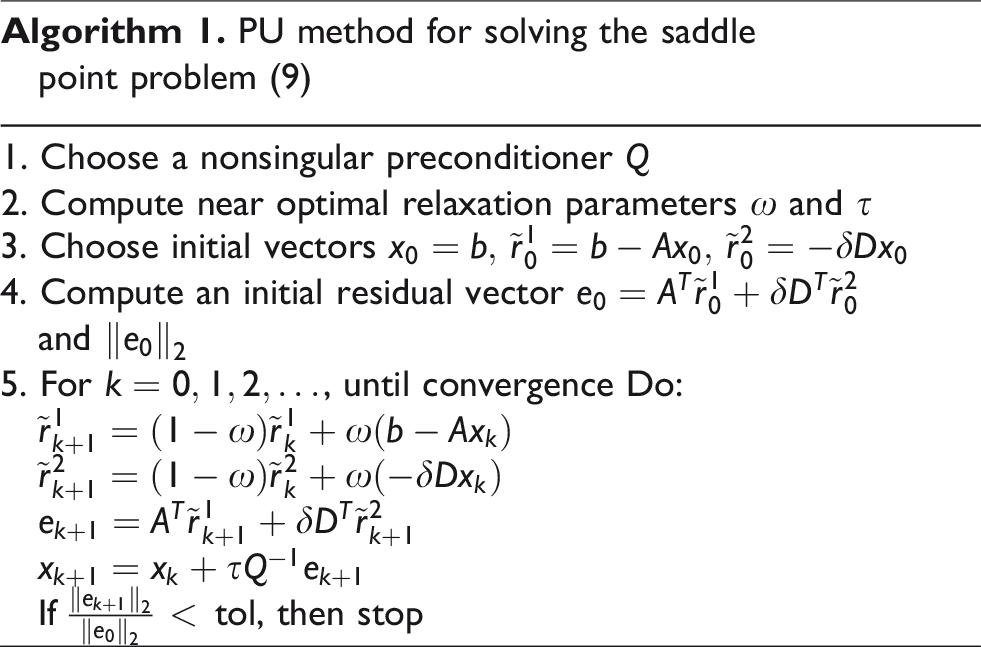

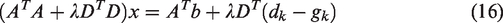

These iterations yield the following decoupled subproblems. Finding xk+1 from subproblem (13) is equivalent to solving the following linear system for x

Another subproblem (14) can be solved by applying the following shrinkage formula

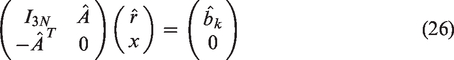

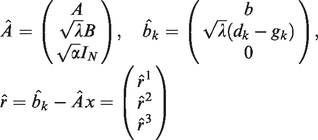

We next propose how to solve the linear system (16) using relaxation iterative methods instead of solving it directly using Krylov subspace methods. Let

Then, the linear system (16) is equivalent to

In Algorithm 2, itol denotes the tolerance of stopping criterion which is set to 10−3 for test problems used in this paper. The preconditioner Q and near optimal relaxation parameters ω and τ are computed in the same way as was done in the “Relaxation iterative methods for Tikhonov regularization problem” section. The only difference is the value of s which is set to

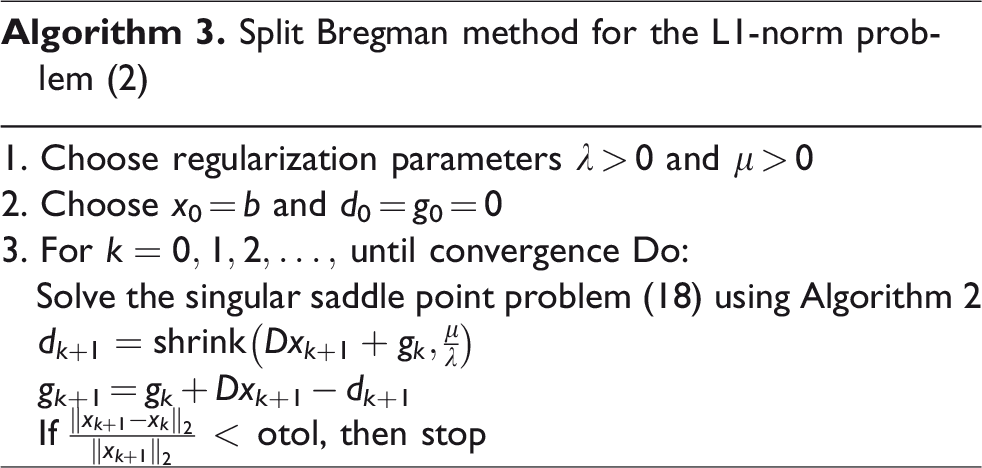

Finally, we provide the Split Bregman method using relaxation iterative method for solving the L1-norm regularization problem (2).

In Algorithm 3, otol denotes the tolerance of stopping criterion which is set to 10−3 for test problems used in this paper. When using Algorithm 2, the preconditioner Q and near optimal relaxation parameters ω and τ are computed only once at the first iteration of Algorithm 3. This is because the coefficient matrix (ATA + λDTD) is unchanged during the whole iterations.

TV regularization problems using relaxation iterative methods

In this section, we study an application of relaxation iterative methods to Split Bregman method for solving the TV regularization problems (3) and (4). We first provide Split Bregman method for solving problem (3). Problem (3) can be considered as a constrained minimization problem

Rather than considering (19), we will consider the following unconstrained minimization problem with a penalty parameter λ > 0

Given x0 = b and d0 = g0 = 0, the alternating Split Bregman method using an auxiliary vector g for solving (20) has the following iteration form

Subproblem (21) is equivalent to solving the following linear system for x

From subproblem (22),

Let

Then, solving the linear system (24) for x is equivalent to finding x from the following singular saddle point problem

Thus, we want to solve the singular saddle point problem (26), which can be done efficiently using Algorithm 2 with D = B, instead of solving (24) directly using Krylov subspace method.

We next provide Split Bregman method for solving the TV regularization problem (4). In the similar way as was done for (3), one can easily obtain the following iteration form

We only need to consider the subproblem (27) since (28) is exactly the same as (22). (27) is equivalent to solving the following linear system for x

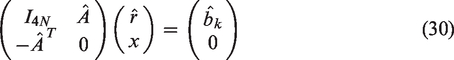

Let

Then, solving the linear system (29) for x is equivalent to finding x from the following nonsingular saddle point problem

Notice that the coefficient matrix of equation (30) is nonsingular since

method for solving the saddle point problem (30)

In Algorithm 4, itol is set to 10−3, and the preconditioner Q and near optimal relaxation parameters ω and τ are chosen in the similar way as was done in Algorithm 2. The difference is that Q is chosen to be a symmetric positive definite matrix which approximates ATA + λBTB + αIN. Since ATA + λBTB + αIN is nonsingular for α > 0, Cut-Off value is not required when choosing Q, i.e. Cut-Off is set to 0, and α is set to 10−6 for all test problems.

Combining Algorithms 2 and 4, we can obtain Algorithm 5 which is the Split Bregman method using relaxation iterative method for solving the TV regularization problems (3) and (4). Here otol denotes the tolerance of stopping criterion which is set to 10−3. When using Algorithms 2 and 4, the preconditioner Q and near optimal relaxation parameters ω and τ are computed only once at the first iteration of Algorithm 5 since the coefficient matrices are unchanged during the whole iterations.

Split Bregman method for the TV problems (3) and (4)

Fixed point method using relaxation iterative methods

In this section, we study an application of relaxation iterative methods to the fixed point method for solving the TV regularization problem (4) with bilateral constraint 0 ≤ x ≤ 255 which has been proposed in Chen et al.

4

We first introduce the fixed point method described in Chen et al.

4

Let

Fixed point method for the TV problem (4)

In Algorithm 6,

Hence, the linear system in line 3 is solved by applying relaxation iterative methods to the saddle point problem (31) instead of solving it directly using Krylov subspace methods. Note that (31) can be solved using Algorithm 2 with D = IN and appropriate changes for the input data. Also note that when using Algorithm 2, the preconditioner Q and near optimal relaxation parameters ω and τ are computed only once at the first iteration of Algorithm 6.

Numerical experiments

In this section, we provide numerical experiments for four types of image deblurring problems to evaluate the efficiency of relaxation iterative methods. This evaluation has been done by comparing performances of relaxation iterative methods with those of Krylov subspace methods. For numerical experiments, we have used one Krylov subspace method PLSQR and three relaxation iterative methods PU, OPR, and GSSOR. All of the three relaxation iterative methods have the same convergence rates, but GSSOR takes more CPU time than PU and OPR since GSSOR has more computational amounts per iteration than PU and OPR. Also note that PU and OPR methods produce exactly the same performances. For this reason, we only provide numerical results for the PU method.

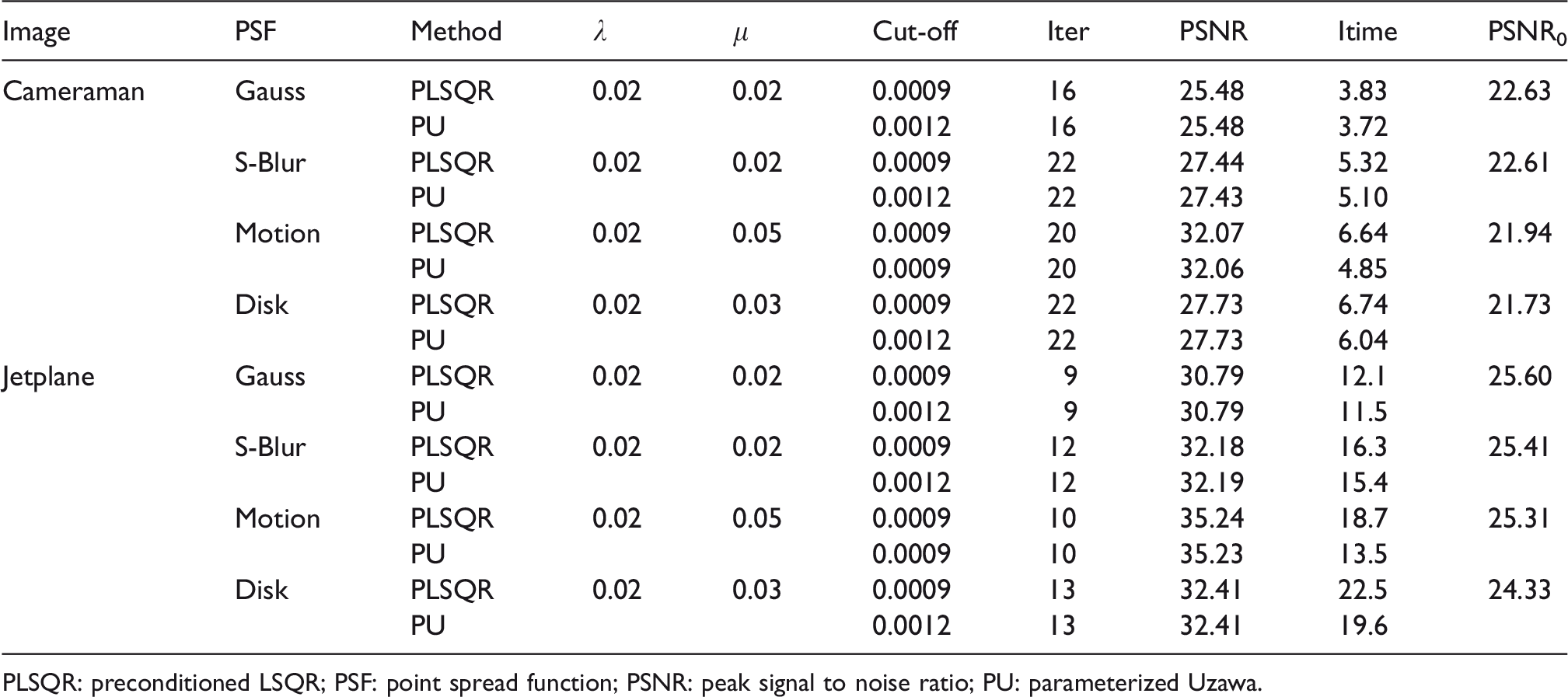

For the case of Tikhonov regularization problem (1), performance of Algorithm 1 has been compared with that of PLSQR method for solving the linear system (7) (see Tables 1 to 3). For the cases of L1-norm and TV regularization problems (2) to (4), performances of Algorithms 3 and 5 have been compared with those of Split Bregman methods using PLSQR (see Tables 4 to 8). Here the Split Bregman methods using PLSQR stand for Algorithms 3 and 5 in which computational steps of solving the saddle point problems (18), (26), and (30) are replaced by those of solving the linear systems (16), (24), and (29) using PLSQR, respectively. Also, performance of Algorithm 6 has been compared with that of the fixed point method using PLSQR (see Table 9).

Numerical results for Case 1 of Tikhonov regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results for Case 2 of Tikhonov regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results for Case 3 of Tikhonov regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results for Case 1 of L1-norm regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results for Case 2 of L1-norm regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results for Case 3 of L1-norm regularization problem.

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results of Algorithm 5 for TV regularization problem (3).

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results of Algorithm 5 for TV regularization problem (4).

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

Numerical results of Algorithm 6 for TV regularization problem (4).

PLSQR: preconditioned LSQR; PSF: point spread function; PSNR: peak signal to noise ratio; PU: parameterized Uzawa.

The preconditioners Q for the PU method are chosen as was described in the “Relaxation iterative methods for Tikhonov regularization problem” section, i.e. Q = CTΣ C, and the preconditioners

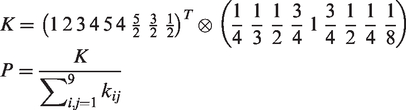

All numerical tests have been performed using Matlab R2017b on a personal computer equipped with Intel Core i5-4570 3.2 GHz CPU and 8 GB RAM. For numerical experiments, we have used four types of PSFs which are Gaussian blur, S-blur, Motion blur, and Disk blur of size 9 × 9. The PSF array P for Gaussian blur of size 9 × 9 is generated by the Matlab function

The blurred and noisy image b is generated by

We have used two test images called Cameraman and Jetplane for numerical experiments (see Figure 1). The pixel size of Cameraman image is 256 × 256, and the pixel size of Jetplane image is 512 × 512. The blurring matrix A whose size is large is not constructed since its construction is very time-consuming and matrix-vector multiplication with A can be performed without constructing A (see Hankey and Nagy 1 for details).

(a, b): Cameraman images, (c, d): Jetplane images, (b, d): Noisy images blurred by Motion Blur. (a) True image, (b) blurred and noisy image, (c) true image, and (d) blurred and noisy image.

In Tables 1 to 9, “Cut-Off” represents the Cut-Off values that are used for constructing the preconditioners Q and

Numerical results for Tikhonov regularization problem (1) are provided in Tables 1 to 3 and Figure 2, and numerical results for L1-norm and TV regularization problems (2) to (4) are provided in Tables 4 to 9 and Figures 3 and 4. As can be seen in Tables 1 to 9 and Figures 2 to 4, deblurring algorithms using PU method restore the true image as well as those using PLSQR method except for the Motion blur of Tikhonov regularization problem for which PLSQR restores the true image a little bit better than PU. As may be expected, Split Bregman methods for L1-norm and TV regularization problems (2) to (4) and fixed point method for TV regularization problem (4) restore the true image better than deblurring algorithms for Tikhonov regularization problem.

Restored images by Tikhonov regularization problem for Motion blur ((a, b, c): restored images by PLSQR for three cases of D, (d, e, f): restored images by PU for three Cases of D). (a) Case 1 (PSNR = 29.34), (b) Case 2 (PSNR = 29.10), (c) Case 3 (PSNR = 29.19), (d) Case 1 (PSNR = 29.09), (e) Case 2 (PSNR = 28.91), and (f) Case 3 (PSNR = 29.02).

Restored images by L1-norm regularization problem and TV regularization problem (3) for Motion blur ((a, b, c): restored images by PLSQR for two cases of D and TV, (d, e, f): restored images by PU for two cases of D and TV). (a) Case 1 (PSNR = 34.61), (b) Case 3 (PSNR = 35.20), (c) TV (PSNR = 35.24), (d) Case 1 (PSNR = 34.59), (e) Case 3 (PSNR = 35.20), and (f) TV (PSNR = 35.23).

Restored images by TV regularization problem (4) for Motion blur ((a, c): restored images by Split Bregman method, (b, d): restored images by fixed point method, (a, b): restored images by PLSQR, (c, d): restored images by PU). (a) TV (PSNR = 32.07), (b) TV (PSNR = 30.78), (c) TV (PSNR = 32.06), and (d) TV (PSNR = 30.79).

For Tikhonov regularization problem, deblurring algorithms using PU have less execution time than those using PLSQR for small size of Cameraman image, while deblurring algorithms using PU have more execution time than those using PLSQR for large size of Jetplane image (see Tables 1 to 3). The reason for this is that computational step of finding near optimal relaxation parameters ω and τ of the PU method requires a lot of CPU time for large size of images.

For L1-norm and TV regularization problems, Split Bregman methods using PU have less execution time than those using PLSQR except for Case 3 of L1-norm regularization problem (see Tables 4 to 8). For Case 3 of L1-norm regularization problem, Split Bregman methods using PU have more execution time than those using PLSQR. This is because convergence rate of PU method is slower than that of PLSQR method for every iteration of Split Bregman method. For Split Bregman method, near optimal relaxation parameters ω and τ of the PU method are computed only once at the first iteration, so computing time for finding near optimal relaxation parameters ω and τ is negligible since it is small as compared with the total computing time of Split Bregman method.

Algorithm 5 for TV regularization problem (4) performs almost as well as that for TV regularization problem (3) (see Tables 7 and 8). Problem (4) has one additional parameter α, but it has some advantages that Cut-Off value is not required and one value of α = 10−6 performs well for all test problems. On the other hand, problem (3) requires a Cut-Off value which varies depending upon test problems to be used. In this respect, TV regularization problem (4) may have a preference over problem (3).

Split Bregman method for problem (4) (i.e. Algorithm 5) restores the true image better and has faster convergence rate than the fixed point method for problem (4) (i.e. Algorithm 6) (see Tables 8 and 9). Since the fixed point method has slow convergence rate and computational amount of PU per iteration is smaller than that of PLSQR, Algorithm 6 using PU has much less execution time than that using PLSQR (see Table 9).

Conclusions

In this paper, we have studied efficient applications of relaxation iterative methods to four types of image deblurring problems which are Tikhonov, L1-norm, and TV regularization problems. To this end, we proposed how to find good preconditioners and near optimal relaxation parameters which provide fast convergence rate and efficient computation for relaxation iterative methods. Numerical experiments show that these techniques work well for deblurring algorithms using relaxation iterative methods.

For Tikhonov regularization problem, deblurring algorithms using PU have less execution time than those using PLSQR for small size of Cameraman image, while deblurring algorithms using PU have more execution time than those using PLSQR for large size of Jetplane image since finding near optimal relaxation parameters ω and τ of the PU method requires a lot of CPU time for large size of images.

For Split Bregman and fixed point methods solving L1-norm and TV regularization problems, near optimal relaxation parameters ω and τ of PU are computed only once at the first iteration, so computing time for finding the near optimal parameters is negligible since it is small as compared with the total computing time of Split Bregman and fixed point methods. Hence, Split Bregman and fixed point methods using PU have less execution time than those using PLSQR. In particular, the fixed point method using PU has much less execution time than that using PLSQR since the fixed point method has slow convergence rate. Overall, Split Bregman and fixed point methods using PU with near optimal relaxation parameters perform quite well in terms of both PSNR values and execution time as compared with PLSQR.

Split Bregman method for TV regularization problem (4) performs almost as well as that for TV regularization problem (3). Even if problem (4) has one additional parameter α, it does not require Cut-Off and one value of α = 10−6 performs well for all test problems. However, problem (3) requires a Cut-Off value which should be well chosen appropriately for each test problem. In this regard, TV regularization problem (4) may have a preference over TV regularization problem (3).

Footnotes

Acknowledgements

The author would like to thank the anonymous reviewers and the Editor C.H. Lai for their valuable comments and suggestions which greatly improved the quality of the paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (NRF-2016R1D1A1A09917364).