Abstract

In this work, we propose a fast iterative algorithm for the reconstruction of digital breast tomosynthesis images. The algorithm solves a regularization problem, expressed as the minimization of the sum of a least-squares term and a weighted smoothed version of the Total Variation regularization function. We use a Fixed Point method for the solution of the minimization problem, requiring the solution of a linear system at each iteration, whose coefficient matrix is a positive definite approximation of the Hessian of the objective function. We propose an efficient implementation of the algorithm, where the linear system is solved by a truncated Conjugate Gradient method. We compare the Fixed Point implementation with a fast first order method such as the Scaled Gradient Projection method, that does not require any linear system solution. Numerical experiments on a breast phantom widely used in tomographic simulations show that both the methods recover microcalcifications very fast while the Fixed Point is more efficient in detecting masses, when more time is available for the algorithm execution.

Keywords

Introduction

Digital breast tomosynthesis (DBT) is a 3D emerging technique for the diagnosis of breast tumors that has some advantages over the traditional 2D mammography suffering from the fact that the lesions can be hidden by overlaying tissues in the plane representation of a 3D object. In DBT, the breast volume is reconstructed in a stack of 2D slices and the structure is resolved in space, reducing the impact of the overlapping tissues on the tumor and making easier the tumor detection by the radiologist.

The volume image is reconstructed from 2D cone beam projections data acquired at a limited number of views over a limited angular range, in order to reduce the X-ray dose across the body. Due to the data incompleteness, DBT image reconstruction is challenging. One pass algorithms, such as Filtered Back Projection (FBP), traditionally used in complete data tomography, introduce artifacts and noise and lose the information about tissue density when used in limited angle tomography.1–3 Iterative statistical reconstruction algorithms, that maximize the similarity between the computed and measured projections at each iteration and enable the introduction of priors, have some advantages over FBP in the noise reduction and in the identification of the object borders. Investigation of iterative algorithms derived from complete data tomography and applied to DBT, such as Maximum Likelihood or Algebraic Iterative Algorithms (ART), can be found in Wu et al.

4

and Zhang et al.

5

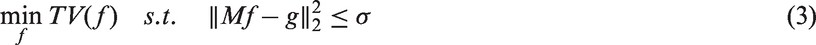

Mathematically, they can be formulated as minimization problems of the form:

As evidenced in Lu et al., 2 tomosynthesis reconstruction is an illposed problem both for the ill posedness of the continuous projection operator 6 and for the data incompleteness, that causes infinite solutions to the underdetermined least squares problem (2). 2 For this reason, the idea of using a regularization operator has been introduced in Lu et al. 2 and Sidky et al., 7 with the double purpose of loosening up the consistency with the data and of selecting an image with the prescribed regularity among the infinite possible solutions. The regularization function makes assumption on the reconstructed image and forces the choice of one of the infinite possible solutions.

Recently, the compressed sensing theory 8 has been used in Computed Tomography. If the image is supposed sparse in some domain, the minimization of the 1-norm in that domain guarantees the sparsity of the solution. For breast images, where the interest is to identify microcalcifications and/or masses, the image gradient is supposed to be sparse and the Total Variation (TV) regularization function has been successfully employed.1,7,9–12

In this case, the minimization problem can have a constrained formulation:

Some iterative algorithms for the solution of problem (3) or (4) applied to the reconstruction of 3D tomographic images have been investigated in literature.2,9,10,13–17 All the cited algorithms are first order algorithms, since they use only the gradient information of the objective function. We refer in particular to Jensen et al. 15 for a comparative analysis of first order methods for the minimization of a least-squares TV function applied to 3D tomography.

Aim and contribution

In this paper, we apply to DBT image reconstruction a Newton-like method, the Lagged Diffusivity Fixed Point (FP) algorithm by Vogel, 18 that uses second order information of the TV function. The FP algorithm is efficiently used in other imaging applications, such as deblurring or denoising, and in this paper, we show that it performs very well even in DBT imaging reconstruction. On the basis of the results obtained in Jensen et al., 15 where first order methods are applied to 3D tomography, we compare the proposed algorithm with the Scaled Gradient Projection (SGP) algorithm accelerated by Barzilai–Borwein rules, as the representative of efficient first order methods for this particular application.

The main contribution of the paper is the efficient implementation of a method with second order information for the reconstruction of DBT images, i.e. a very large size problem with unstructured matrix. The method has better performance when compared with the methods traditionally used for DBT images reconstruction both in the first iterations and at convergence, so that in the final images not only the microcalcifications but also the masses can be better distinguished. Hence, it could be potentially used in clinical applications.

The paper is organized as follows. In the section “Mathematical model of digital tomosynthesis” we present the mathematical model of DBT image formation and the numerical optimization problem for the DBT image reconstruction; in the section “Algorithms for DBT image reconstruction” we describe, with implementation details for this particular application, the proposed FP method and the SGP method considered for comparison; in the section “Numerical experiments” the numerical results on a digital breast phantom are reported and lastly in the section “Conclusions” we make some final observations.

Mathematical model of digital tomosynthesis

Digital tomosynthesis

Digital tomosynthesis is a cone-beam tomographic technique where the projections are obtained along a smaller range of incident angles.

19

Usually, 10–25 projections along a range of up to 40–45° are obtained and from these projections a pseudo-3D representation of the object is reconstructed, with lower resolution in the z-direction perpendicular to the detector plane (see Figure 1 for a schematic representation of the tomosynthesis system).

20

There are different geometries of motion of the X-ray tube, but the reconstruction algorithms can be easily adapted to each one without significant changes.

5

In our case, the source rotates in the yz plane with x-coordinate equal to zero. The detector is positioned onto the xy plane and the breast is compressed over it.

Tomosynthesis system in yz plane.

The mathematical model of image formation

If we suppose a monochromatic X-ray source, the continuous mathematical model of the image formation process is described by Beer’s law that relates the measured values Np is the number of pixels in the detector (Np is of the order of millions in the real systems);

20

The discretization of equation (5) is:

Nv is the number of voxels (few billions in the real systems) in the discretized 3D object;

If we take the negative logarithm of (6) and we reorder all the resulting projections and noise elements in vectors g and η of length

Numerical model for tomosynthesis image reconstruction

DBT images are the grayscale representation of the attenuation coefficients of each voxel of the breast. Hence, aim of the reconstruction algorithms is to compute the values of the vector f, given the projection data g.

From the previous model (6), it is clear that the problem of reconstructing f can be reduced to the solution of the linear system (7). In the real cases

Therefore, the reconstructed image f can be computed as the solution of a minimization problem of the form:

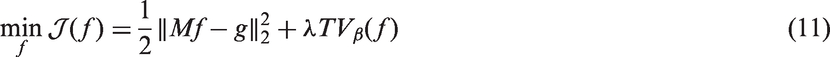

Since the TV is not differentiable, usually it is substituted by a smooth differentiable function. For example, in Jensen et al.

15

the Huber function is used instead of the TV. In deblurring and denoising applications (see for example18,24,25) a small parameter β is often added and a smoothed version of the TV function is obtained as:

Hence, the reconstructed image is the solution of the minimization problem:

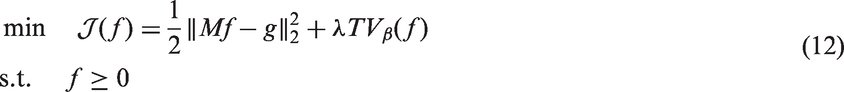

Since the reconstructed image f should have nonnegative values, the constraint

Algorithms for DBT image reconstruction

In this section, we briefly revise the algorithms that we compare for the solution of problem (11): the SGP and the FP methods. The SGP method finds a nonnegative solution of the minimization problem (11). It is a gradient-like method

26

accelerated by using a scaling matrix and the Barzilai–Borwein rules for the choice of the steplength. This algorithm has been recently proposed in imaging applications with very good results in terms of efficiency and precision.27,28 The FP method is a Newton-like method for the solution of the minimization problem (11),

26

whose descent step is computed by solving a linear system. The coefficient matrix is an approximation of the Hessian of the objective function

In this paper, we present an efficient implementation of the FP method tailored for the tomographic problem; at the same time the FP method maintains its desirable convergence properties.

Scaled Gradient Projection method

The SGP method is a gradient-like method solving the constrained minimization problem:

Avoiding to introduce significant computational costs, simple projections on

The SGP algorithm. For more details, see [27].

SGP: Scaled Gradient Projection.

Implementation notes. The step 5 of the SGP algorithm computes the most appropriate step length μk for the descent passage, with a backtracking loop. This is the most expensive point of the algorithm, because it requires one function evaluation in each inner execution plus one matrix-vector product.

For the evaluation of

Moreover, only one function assessment and one gradient evaluation are involved for each main iteration k.

We use a convergence criterium based on the difference between two successive iterates, since in imaging applications it is useless to go on with the iterations if the image does not change any more:

Lagged Diffusivity FP method

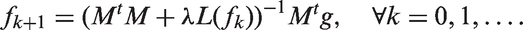

The FP method is a Newton-like method for the solution of the minimization problem (11), with an FP method that makes use of approximated second order information.

To solve the convex optimization problem (11), the first order condition

that leads to the following updating rule:

In this notation,

Following the notations used in Vogel,

18

L(f) can be written as:

The FP algorithm.

FP: Fixed Point.

Implementation notes. In step 1,

In step 2, the matrix Hk is never stored since the matrix L is made of only five diagonal vectors (of maximum length Nv) and

Step 3 is the most computationally expensive part, because a large size linear system is to be solved with the CG algorithm. We require the CG not to converge exactly, in order to get more regularized final solutions. For this reason, the CG algorithm is stopped when the residual 2-norm is smaller than a tolerance of

Finally, step 4 performs a backtracking procedure, as in the SGP algorithm, to compute the step-length αk.

The FP stopping criterion is set as:

Numerical experiments

The experiments of this section aim to show the efficiency and effectiveness of the FP method, comparing it with the SGP method. We analyze the results obtained by the two methods after few iterations of the algorithms, i.e. what can be obtained during clinical trials, and at convergence, when a posteriori executions can be performed offline. We think that both the simulations are interesting in practical use.

All the methods are implemented in Matlab R2015a and the experiments are performed on a computer equipped with two processors Intel Pentium G2120 3.1 GHz, with 3.6 GB RAM, using Linux operating system.

Test problem

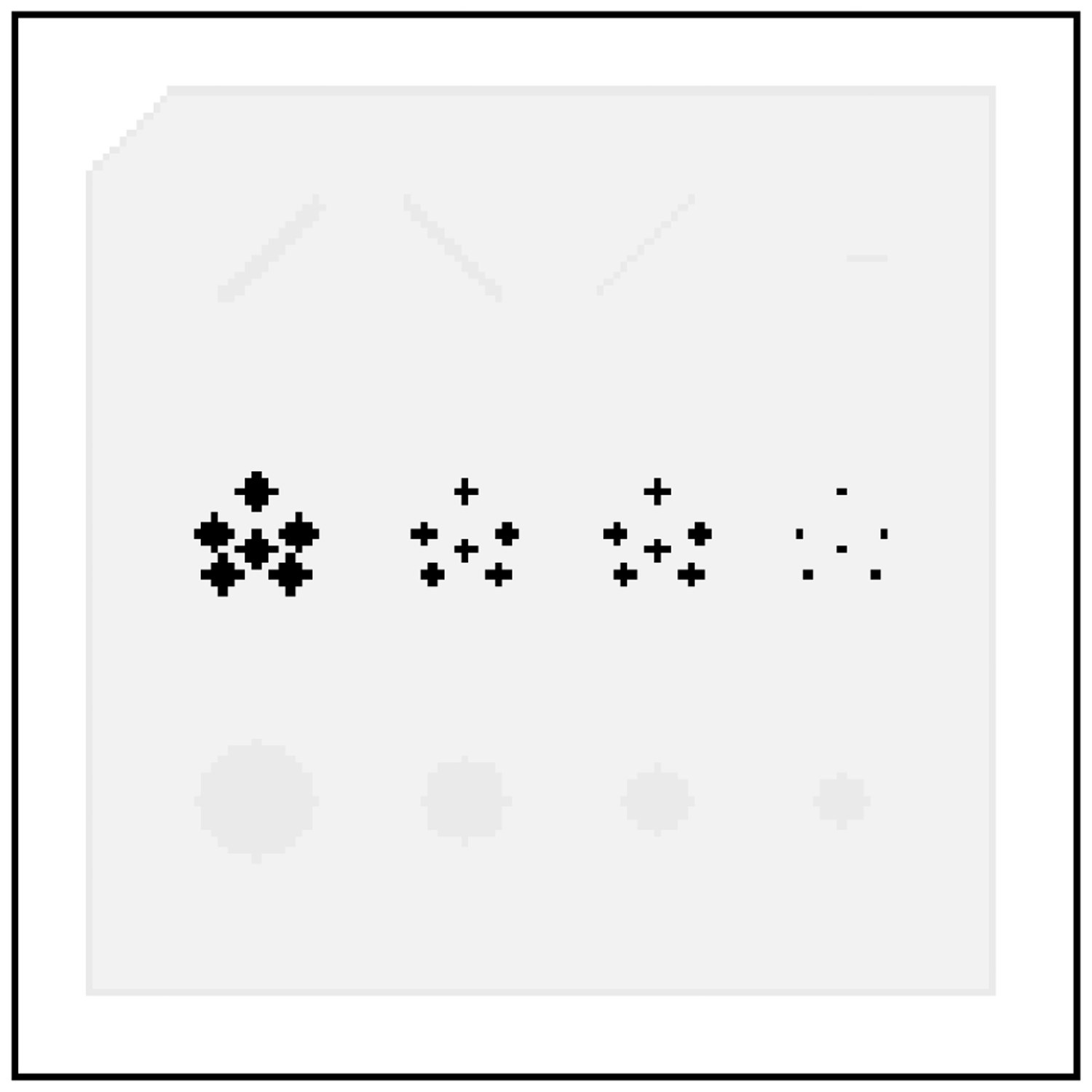

The tests are made on a digital version of a real accreditation phantom, called “CIRS mod. 015.” 29 In our tests, it is discretized in 128 × 128 × 15 voxels with a resolution of 1 × 1 × 3 mm3. These dimensions are proportional to a real case.

Figure 2 shows the central slices of this phantom: inside a voxel-thin boundary of simulated skin, objects like fibers, microcalcification and masses are neatly put in a uniform background of adipose tissue. Outside the skin-made boundary, air is simulated with null attenuation coefficient and it is part of the volume we want to reconstruct together with the phantom. This phantom is used to check the competency of mammographic systems because the included objects are very important for the early detection of a breast cancer. In more detail, they are of different dimensions and thickness, in order to analyze and compare the 3D graphic resolution of different reconstructions, and their attenuation values are performed taking into account a low X-ray scan of 20 keV.

Central layers of the digital mammographic phantom CIRS mod. 015, sliced in 15 digital planes with resolution of 128 × 128 pixels. (a–e): Layers (6–10). Layer 8, in reverse gray-scale.

Attenuation coefficients of different materials, related to a 20 keV scan.

In our tests, the detector is made of 128 × 128 pixels and its extension is the same of the volume area on the xy-plane. Moreover the volume leans on the detector, so it captures all the projections of the CIRS even from the most angled views (since it is smaller than the volume, because of the surrounding air ring). The tomosynthesis acquisition parameters that we use in our simulations are inspired by the real systems produced by IMS (Internazionale Medico Scientifico). We consider 13 angles, uniformly distributed from −17 and +17°. The central cone beam starts 64 cm above the detector and the X-ray monochrome source wheels along the x-direction, with a 59 cm ray.

The simulation problem is defined by computing the projections:

Evaluation criteria. Outputs are evaluated by computing the

Figure 4 shows profiles and objects, on the eighth layer, that we use to analyze all the outputs in more details. Denoising, in fact, is estimated taking the standard deviation value ( Objects we will focus on to analyze reconstructions deeply.

Algorithms parameters. The TV makes use of

The regularization parameter has been heuristically chosen for both algorithms as

Both the algorithms always start from an initial null vector

In the SGP code, we used L = 10 to construct the scaling matrices Dk,

The maximum number of iterations for the inner CG loop, in the FP algorithm, is 150.

Results at the algorithms convergence

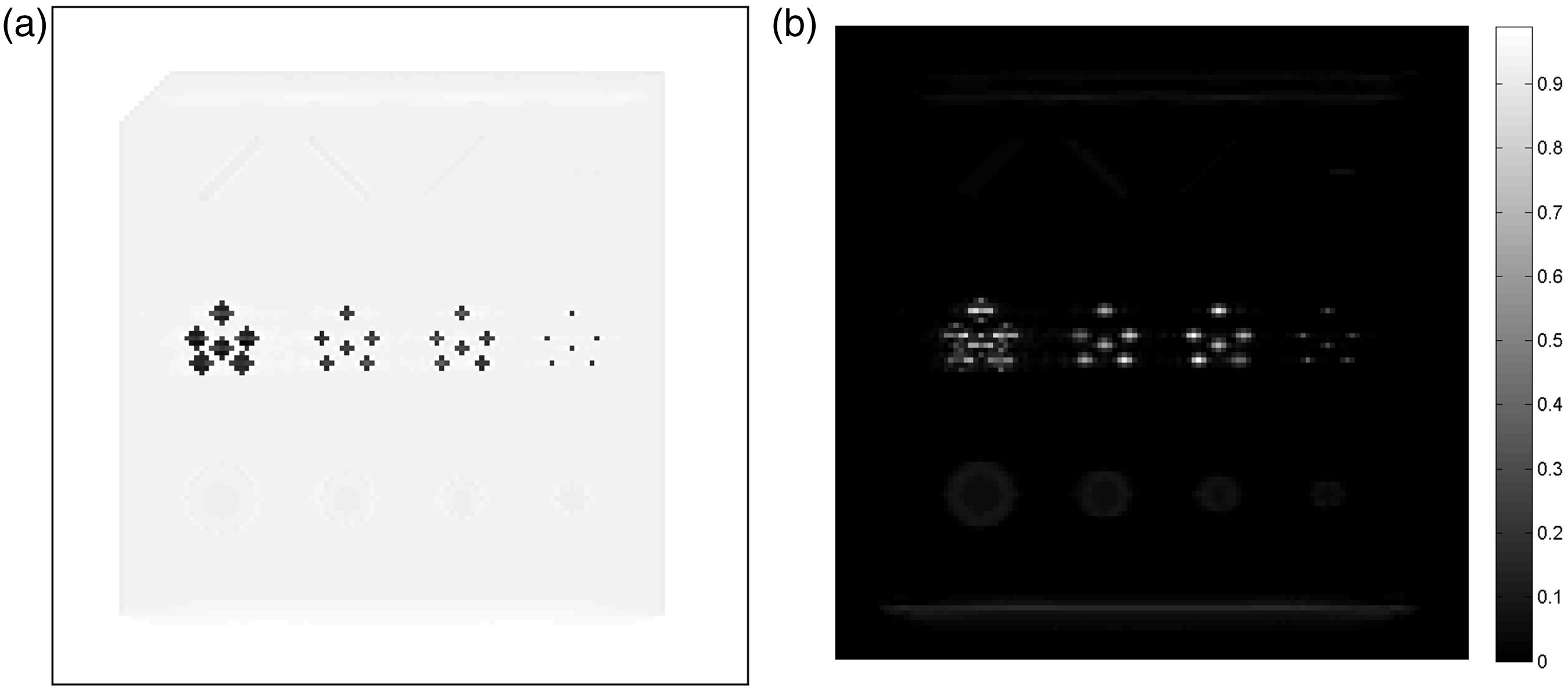

Figures 5 and 6 show the central layer (in reverse grayscale) of SGP and FP reconstructions together with the absolute errors between the original and the reconstructed images. First of all, we can appreciate that all the microcalcifications are detected, even the smallest ones, in both outputs and this is an encouraging remark. Actually speaking, the SGP is faster than the FP method, since it requires about one fifth time to get up to its convergence: more precisely, the SGP convergence takes 105 s while the FP makes use of 505 s to provide its output. On the other hand, the FP output looks much more precise than the SGP output. In fact, focusing on the central layer that has values in [0, 3], the FP pointwise absolute error is always smaller than SGP reconstruction in the eighth layer and the related absolute error. FP reconstruction in the eighth layer and the related absolute error. To notice that the color bar scale is one order smaller than that in Figure 5.

Results after few algorithms iterations

Due to the practical aim, where there is no time to wait for the convergence, it is essential to analyze how the algorithms behave during their execution, with untimely outputs. From now on, we analyze the performance in temporal windows of 5, 20, and 60 s of computations: it is interesting to see how the profiles (related to the lines plotted in Figure 4(b) and (c)) on the partial reconstructions become closer and closer to their target.

Figures 7 and 8 point out the main differences between the two methods. If we concentrate on the microcalcifications, we can realize how the SGP is really fast in its first computations: it makes the microcalcifications visible after only 5 s, while in that time the FP hardly moves from its initial null guess. On the contrary, in 20 s the FP becomes more effective, since the smallest object detection is very accurate, and later outputs show that the SGP never manage in reaching the tops, even in its convergence. On the other hand, the FP reconstructions are almost perfect.

Profiles related to Figure 4(b), taken from some early reconstructions of both methods. In black continuous lines the exact profiles, in red circles the SGP, and in blue asterisks the FP profiles. (a): 5″, (b): 20″, (c): 60″, and (d): Convergence. Profiles related to Figure 4(c), taken from some early reconstructions of both methods. In black continuous lines the exact profiles, in red circles the SGP, and in blue asterisks the FP profiles. (a): 5″, (b): 20″, (c): 60″, and (d): Convergence.

Focusing on the masses (Figure 8), the gap between the two outputs becomes more and more noticeable since the blue lines representing the FP output approach more and more to their target, as time goes by, while the SGP suffers from inaccuracy when it deals with all these low-attenuation elements.

At last, we cannot appreciate significant improvements between the 60-s and the convergent outputs.

Results for the FP and SGP methods at different execution times.

SGP: Scaled Gradient Projection; FP: Fixed Point.

The second column reports the number of performed iterations, together with the number of backtracking executions for the SGP method (between brackets), and the total number of CG inner loops for the FP method (between brackets). As we can see, each FP iteration takes more time than a SGP iteration.

Finally, the third column compares the two algorithms in terms of noise removal through the standard deviation value. We remark that the images obtained with the FP method always have a very low value of standard deviation. The values of the means of these test are not reported, since they all approximate the exact value with an error lower than

3D accuracy

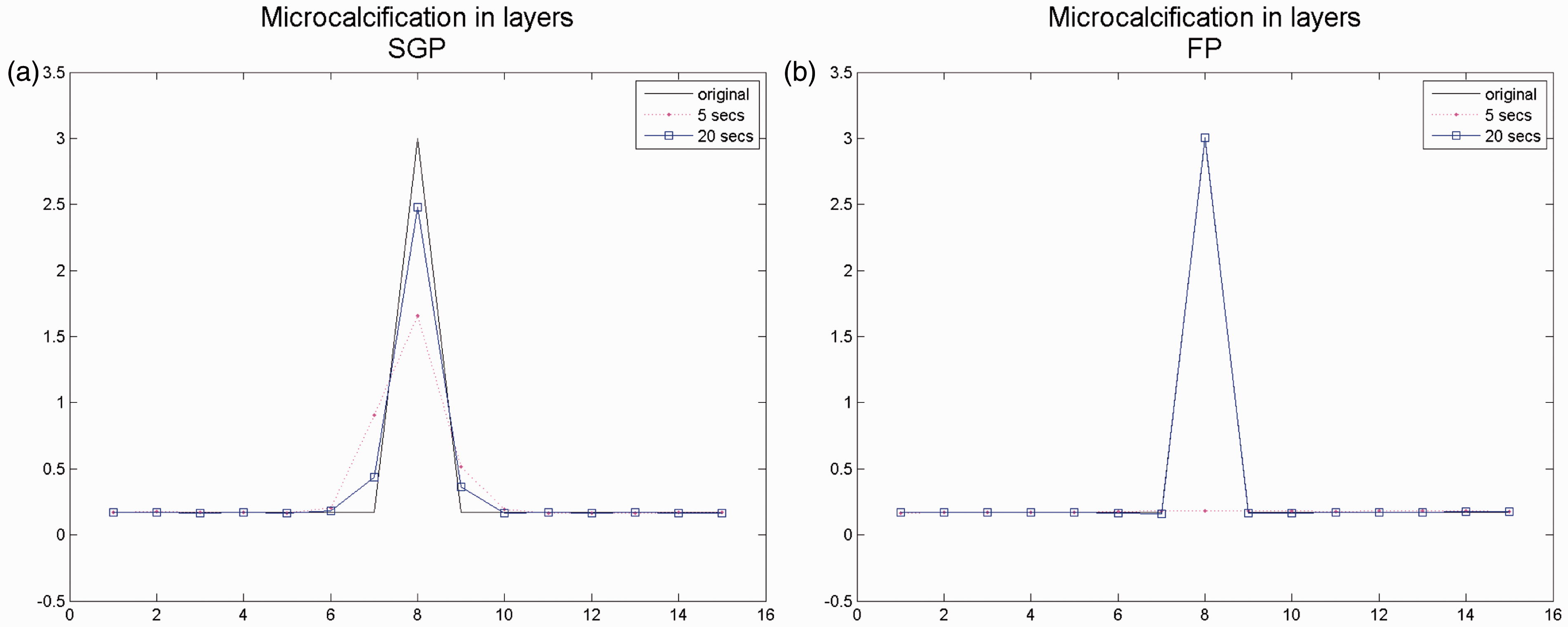

As we are interested in three-dimensional reconstructions, we now investigate the accuracy of both the methods along the z direction, in the two voxels pointed by arrows in Figure 4(d).

Looking at the plots in Figure 9 related to a thin microcalcification, we can confirm the excellent capability of both the methods to locate voxel-wise elements of high intensity in their exact positions, even in few iterations. Hence, we plot the values of the reconstructed voxels in the 15 slices along the z axis. This is an important validation for the prefixed model.

z-Profiles related to the one-voxel thin microcalcification: in black continuous lines the exact profiles, in red dots the output after 5 s, and in blue squares output after 20 s. (a): SGP and (b): FP.

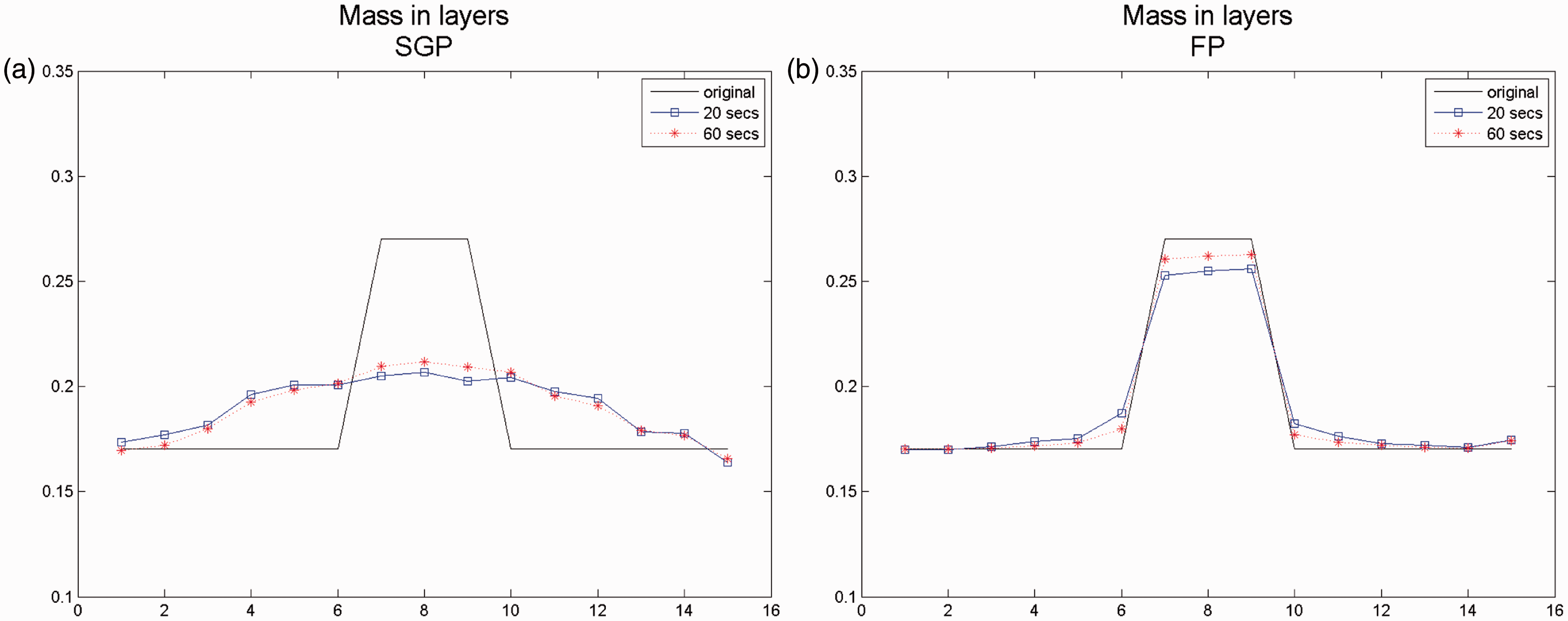

On the contrary, the plots in Figure 10 are related to the central voxel of the biggest mass on the left (that is three layers thick) and they reveal the inability of the SGP algorithm to detect lighter and plainer objects; the FP method, on the contrary, needs short time to detect smoother structures. We remark that, for what concerns the masses, in the z direction the two methods produce very different results, since the SGP method detects an object with very low intensity diffused in too many slices, while the FP method accurately finds the intensity and depth of the original structure. These profiles are concerning reconstructions obtained after 20 and 60 s. We notice that if we make many more iterations of the SGP algorithm the relative error does not decrease and the reconstructed image remains the same.

z-Profiles related to the central voxel of the biggest mass: in black continuous lines the exact profiles, in blue squares the output after 20 s, and in red asterisks the output after 60 s. (a): SGP and (b): FP.

Conclusions

In this paper, we have proposed to use an FP method for the solution of a regularized TV least squares minimization problem for the reconstruction of DBT images. In tomographic applications, only first order methods have been used up to now, because one iteration of a first order method is less expensive than one iteration of a method using approximate second order information. We proposed an efficient implementation of the FP method, suited for DBT applications, that makes it competitive with a fast first order method such as SGP.

Numerical experiments performed on a medium size phantom showed that the FP method is very well performing, both after very few iterations and at convergence. Both the FP and SGP methods detect microcalcifications in a very short time, while in a longer time the FP method recovers different structures, as masses, that cannot be obtained with SGP. For practical purposes, this is very useful when a deeper investigation is needed to make a diagnosis, if the operator has quite a lot of time or a powerful computing configuration available.

Footnotes

Acknowledgements

We thank the I.M.S. Company for the collaboration in the choice of the phantom and in the discussion of the results.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research is part of the project Nuovi aspetti della regolarizzazione nell’imaging funded by the GNCS.