Abstract

Soccer clubs use rubrics to guide scouts and coaches toward uniform rating standards, yet empirical evidence on their reliability remains limited. Therefore, this exploratory field study examined the inter-rater reliability of scouts’ assessments during an 11v11 live match using a rubric. We focused on current and potential performance, information combination methods, and scouts’ confidence and perceived difficulty. Sixteen male scouts (mean age = 55 years, mean experience = 6 years) each evaluated three to four under-13 players (yielding 23 unique players in total), rating seven rubric performance indicators on both current and potential performance: motoric/physical, technical execution, attacking on the ball, attacking positioning, defensive duels, defending positioning, and transitioning. Overall current and potential performance scores were established in two different ways: based on the scouts’ judgments (i.e., clinical combination), and by calculating the mean of their indicator ratings (i.e., actuarial combination). Across both combination methods, inter-rater reliability was moderate for overall current performance (ICC = .61 clinical, ICC = .61 actuarial), but poor for overall potential performance (ICC = .37 clinical, ICC = .42 actuarial); the combination method thus made little difference in reliability. Moreover, scouts reported similar confidence but greater difficulty when assessing potential compared to current performance. The findings suggest that evaluations of potential are especially prone to unreliability and highlight the need to further examine reliability in soccer scouting.

It is common practice for scouts and coaches to subjectively assess players during matches to identify future elite performers.1,2 To support accurate selection processes, these assessments must be both reliable and valid. 3 However, recent studies reveal low inter-rater reliability or agreement between scouts and coaches, indicating considerable differences in assessments of the same players.4–7 This is problematic because reliability sets the upper bound of criterion validity, 8 meaning that unreliability constrains the predictive accuracy of assessments. In practice, this implies that two scouts from the same club – who are expected to provide similar judgments from the organization's point of view – may arrive at different evaluations due to their individual subjective “judgment personalities”. 9 This unreliability can result in suboptimal selection decisions for clubs, raises concerns about fairness, and is increasingly recognized in athlete selection as an important source of error.3,10,11

Regarding athlete assessment practices, we observe that many clubs employ rubrics, consisting of predefined performance indicators (e.g., technical ability, speed, passing) rated on standardized scales (e.g., 1 = poor, 5 = excellent). In theory, rubrics should increase inter-rater reliability, as they guide evaluators to focus on the same criteria and apply uniform rating standards. Indeed, meta-analyses in the domains of hiring interviews and job performance evaluations have shown that approaches which increase assessment structure improve reliability.12–14 However, empirical research into rubrics and related reliability in soccer player assessment remains limited. Therefore, the main aim of the present study is to investigate the inter-rater reliability of scouts’ assessments using a rubric.

Current and potential performance

When identifying talent in children and early adolescents, there is often a gap between current and potential performance. For example, relative age and maturational differences can give older or early-developing players temporary advantages, making them appear more promising than their younger or later-developing peers.15–18 Indeed, research has shown that success at junior level is a limited predictor of success at senior level.19–21 Accordingly, scholars have emphasized the importance of distinguishing between current performance and future potential. 22 Practitioners likewise stress that their primary task is not only to evaluate present performance but also to estimate a player's long-term potential. However, research in soccer indicates that, although coaches recognize the importance of potential, they nevertheless tend to prioritize current performance in selection practices. 23 Moreover, studies demonstrate that subjective assessments of potential are strongly correlated with current ability.24,25 This raises questions about how accurately subjective assessment can capture potential.

Less is known, however, about the role of rubrics in the assessment of both current and potential performance, and the reliability of these assessments. Because potential is less observable and more open to interpretation, attempts to assess it may show reduced inter-rater reliability compared to current performance. Therefore, the first sub-aim is to explore how assessing current versus potential performance affects inter-rater reliability when using a rubric. Moreover, with potential being inherently less certain, scouts may also feel less confident and experience greater difficulty when making such assessments. Accordingly, the second sub-aim is to investigate scouts’ confidence and experienced difficulty in making current and potential performance assessments.

Clinical and actuarial combination

When completing a rubric consisting of multiple indicators, scouts produce separate ratings for each indicator. These separate ratings can be combined into an overall score either clinically, through a scout's subjective judgment in their mind, or actuarially, using a predefined decision rule or formula.26,27 Across many domains, actuarial combination of information generally yields higher reliability and validity than clinical combination,26,28–30 primarily because it ensures consistent integration of information across cases, thereby removing unreliability. 9 Surprisingly, in a soccer context, Bergkamp et al. 5 found no significant difference in inter-rater reliability between actuarially and clinically combined rubric indicator ratings. One possible explanation they provided was that participants, who stemmed from different soccer organizations, interpreted and rated the indicators differently, resulting in lower reliability for the actuarial combination. To investigate this topic further, the third sub-aim of this study is to explore how the method of combining rubric indicator ratings into an overall score – clinically or actuarially – affects inter-rater reliability with scouts stemming from a single soccer organization.

The study aims are examined in a field experiment in which scouts from a professional soccer academy assessed players during a live match. Given the limited sample size and the exploratory nature of the work, we primarily focus on describing our findings rather than on formal significance testing.

Methods

Participating scouts

Sixteen male scouts from a Dutch professional soccer academy participated (mean age = 55.13 years, SD = 12.90, range = 28–78; mean experience = 6.25 years, SD = 6.75, range = 1–22). All scouts were formally appointed by the academy as volunteers and had completed a three-session training program on talent identification provided by the HAN University of Applied Sciences. Participation was voluntary and uncompensated. The study was approved by the Research Ethics Committee of HAN University of Applied Sciences, Arnhem and Nijmegen, the Netherlands, on the 14th of April 2025 (approval no. ECO 659.04/25).

Assessed players

The assessed players stemmed from two Dutch male U13 teams who competed in a friendly 11v11 match on a regular-sized pitch. One team was from the participating academy, and the other from a comparable professional academy. Both teams played in the second national division, which is the second-highest level for their age category in the Netherlands. Players and their parents were informed about the study in advance. The match consisted of three 25-min periods separated by 5-min breaks; only the first two periods were used for assessment.

All outfield players from both teams were eligible for assessment (N = 24, including substitutes who could only enter between periods). Scouts were randomly assigned to players using a random number generator. Each player was observed in either the first or second period, ensuring that if scouts evaluated the same player, they did so simultaneously. For depersonalization, players were identified only by randomly assigned jersey numbers, and no personal data was collected. The probability that scouts had prior knowledge of the players they were assigned was low, particularly for players from the visiting team. Given the random assignment, any potential influence of prior knowledge would not have systematically influenced the assessments.

Materials and procedure

The academy's standard rubric was developed by the head scout and the head of coaching and then reviewed by the second author (SP) and another sport researcher, which led to refinements of the items. It was subsequently tested by scouts and further discussed with the head scout, head of coaching, and researchers, resulting in a finalized version. During one of the three talent identification training sessions (see Participating scouts), scouts were familiarized with the rubric by watching in-game footage, using the rubric to rate players, and collectively discussing their ratings. However, the rubric is not yet routinely used in practice by the participating scouts.

For this study, a shortened, research-adapted version of the academy's standard rubric was created (Supplementary Material 1). A subset of the rubric's items was selected through discussions between the head of scouting and the research team to fit the relatively short assessment period and the fact that scouts had to assess two players simultaneously. This selection focused on directly observable indicators (e.g., motoric/physical abilities and technical execution) rather than on mental attributes (e.g., self-regulation and coachability). The final version contained seven indicators: (1) motoric/physical, (2) technical execution, (3) attacking on the ball, (4) attacking positioning, (5) defensive duels, (6) defending positioning, and (7) transitioning after losing/winning possession, each rated on a 10-point scale with four descriptive levels (1–4 = Mediocre, 5–6 = Fair, 7–8 = Good, 9–10 = Very Good).

Data were collected during an evening session organized by the professional soccer academy. Upon arrival, scouts provided informed consent, received the rubrics and instructions, and then the match began.

During the match, each scout evaluated two players per period, completing four player assessments in total. For each player, scouts rated all seven indicators twice: once for current and once for potential performance (i.e., projected performance in two years at the U15 level). They then provided (a) overall current and potential performance scores (1–10), (b) confidence ratings for both these assessments (1 = not at all confident, 5 = very confident), and (c) experienced difficulty ratings (1 = very easy, 5 = very difficult).

Scouts observed the first two periods of the match from an elevated stand and were instructed not to communicate with each other during the assessments. A researcher was present to ensure adherence to this instruction. Afterward, scouts completed a short questionnaire, and the program concluded with a debriefing.

Statistical analyses

Data and code are available on OSF. For the reliability analyses, we focused on the overall current and potential performance scores, combined clinically or actuarially. The clinical overall scores were the overall current and potential performance scores that the scouts provided themselves. We calculated the actuarial overall scores as the unweighted mean of the seven indicator ratings given by each scout (calculated only if at least five indicators were rated).
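The actuarial rule described above can be sketched in a few lines. This is a minimal illustration rather than the code shared on OSF; the function name `actuarial_score`, the use of `None` for an unrated indicator, and the example ratings are assumptions made for the sketch.

```python
from statistics import mean
from typing import Optional, Sequence

# From the text: a score is only computed if at least five of the
# seven indicators were rated.
MIN_RATED = 5


def actuarial_score(
    ratings: Sequence[Optional[float]], min_rated: int = MIN_RATED
) -> Optional[float]:
    """Unweighted mean of the rated indicators, or None if too few were rated."""
    rated = [r for r in ratings if r is not None]
    if len(rated) < min_rated:
        return None
    return mean(rated)


if __name__ == "__main__":
    # Hypothetical ratings for one scout-player pair (None = left blank).
    print(actuarial_score([7, 6, None, 8, 7, 6, 7]))
    # Only four indicators rated, so no actuarial score is produced.
    print(actuarial_score([7, None, None, None, 6, 7, 8]))
```

Because the mean is unweighted, every indicator contributes equally, which is what makes the rule identical across scouts and players.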

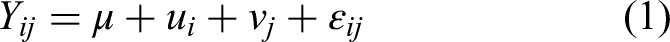

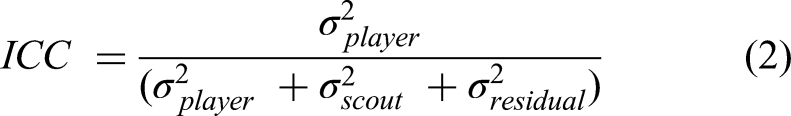

To assess inter-rater reliability, we estimated an intraclass correlation coefficient (ICC) for each of the four overall performance scores (i.e., clinical current performance, clinical potential performance, actuarial current performance, and actuarial potential performance) using linear mixed-effects models with random intercepts for both players and scouts (Equation 1). The ICCs were computed directly from the variance components of these models. This approach handles the unbalanced, partially crossed structure of the data, where each player was rated by one or more scouts and each scout rated only a subset of players. The ICC reflects the proportion of total variance attributable to differences between players (Equation 2) and represents the reliability of a single scout's rating. Conceptually, it generalizes Shrout and Fleiss's ICC(2,1) formulation to accommodate the unbalanced mixed-effects design.31

$\mu$: grand mean (fixed intercept)

$u_i \sim N(0, \sigma^2_{\text{Player}})$: random intercept for player $i$

$v_j \sim N(0, \sigma^2_{\text{Scout}})$: random intercept for scout $j$

$\varepsilon_{ij} \sim N(0, \sigma^2_{\text{Residual}})$: residual error
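Written out from the definitions above, Equations 1 and 2 presumably take the following form. This is a reconstruction from the surrounding text rather than a copy of the typeset equations, and $y_{ij}$ (the overall score that scout $j$ gives to player $i$) is notation assumed here.

```latex
\begin{align}
y_{ij} &= \mu + u_i + v_j + \varepsilon_{ij} \tag{1} \\
\mathrm{ICC} &= \frac{\sigma^2_{\text{Player}}}
  {\sigma^2_{\text{Player}} + \sigma^2_{\text{Scout}} + \sigma^2_{\text{Residual}}} \tag{2}
\end{align}
```

Including $\sigma^2_{\text{Scout}}$ in the denominator means that systematic leniency or severity of individual scouts lowers the ICC, in line with the absolute-agreement logic of ICC(2,1).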

Due to some missing data, the effective sample sizes for calculating the four ICCs varied slightly: 59–62 observed scores across 23 players (each rated by 1–6 scouts) stemming from 15–16 scouts (each rating 3–4 players). Given the limited and unbalanced sample sizes, as well as the lack of a standard method for formally comparing ICCs from partially overlapping data, we did not statistically test differences between ICCs. Instead, we focused on their descriptive interpretation, using the guidelines proposed by Koo and Li32 and the confidence intervals.
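To illustrate how an ICC follows from the variance components and how it is then labeled, the sketch below assumes the components have already been estimated by a mixed-effects model. The function names and the variance values are hypothetical; the cut-offs are the commonly cited Koo and Li guidelines (below .50 poor, .50 to .75 moderate, .75 to .90 good, above .90 excellent), with the handling of exact boundary values being our own choice.

```python
def icc_single_rater(var_player: float, var_scout: float, var_residual: float) -> float:
    """Share of total variance due to players (reliability of one scout's rating)."""
    total = var_player + var_scout + var_residual
    if total <= 0:
        raise ValueError("variance components must sum to a positive value")
    return var_player / total


def koo_li_label(icc: float) -> str:
    """Verbal label following the Koo and Li guideline cut-offs."""
    if icc < 0.50:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.90:
        return "good"
    return "excellent"


if __name__ == "__main__":
    # Hypothetical variance components, chosen only for illustration.
    icc = icc_single_rater(var_player=0.61, var_scout=0.09, var_residual=0.30)
    print(round(icc, 2), koo_li_label(icc))
    # Labels for ICC values of the size reported in this study.
    for value in (0.61, 0.37, 0.42):
        print(value, koo_li_label(value))
```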

To examine whether scouts differed in their confidence when assessing current versus potential performance, we compared their mean confidence ratings across their player assessments using paired-sample t-tests. The same procedure was applied to experienced difficulty.
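A paired comparison of this kind can be sketched as follows. The function name and the example data are hypothetical, and the effect size shown is the paired-samples variant of Cohen's d (mean difference divided by the standard deviation of the differences, often written d_z); that this is the variant used in the study is an assumption, although it is consistent with the reported values, since d_z equals t divided by the square root of n.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_and_dz(x, y):
    """Return (t, df, d_z) for paired samples, with differences taken as y - x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long samples with at least two pairs")
    diffs = [b - a for a, b in zip(x, y)]
    n = len(diffs)
    sd = stdev(diffs)  # sample SD of the paired differences
    if sd == 0:
        raise ValueError("differences have zero variance")
    d_z = mean(diffs) / sd  # paired-samples effect size (assumed variant)
    t = d_z * sqrt(n)       # equivalent to mean(diffs) / (sd / sqrt(n))
    return t, n - 1, d_z


if __name__ == "__main__":
    # Hypothetical per-scout mean difficulty ratings (not the study data).
    current = [2.5, 3.0, 2.0, 3.5]
    potential = [3.0, 3.5, 3.0, 3.5]
    t, df, d_z = paired_t_and_dz(current, potential)
    print(f"t({df}) = {t:.2f}, d_z = {d_z:.2f}")
```

The p value would then be obtained from a t distribution with n - 1 degrees of freedom, which is omitted here to keep the sketch dependency-free.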

Results and discussion

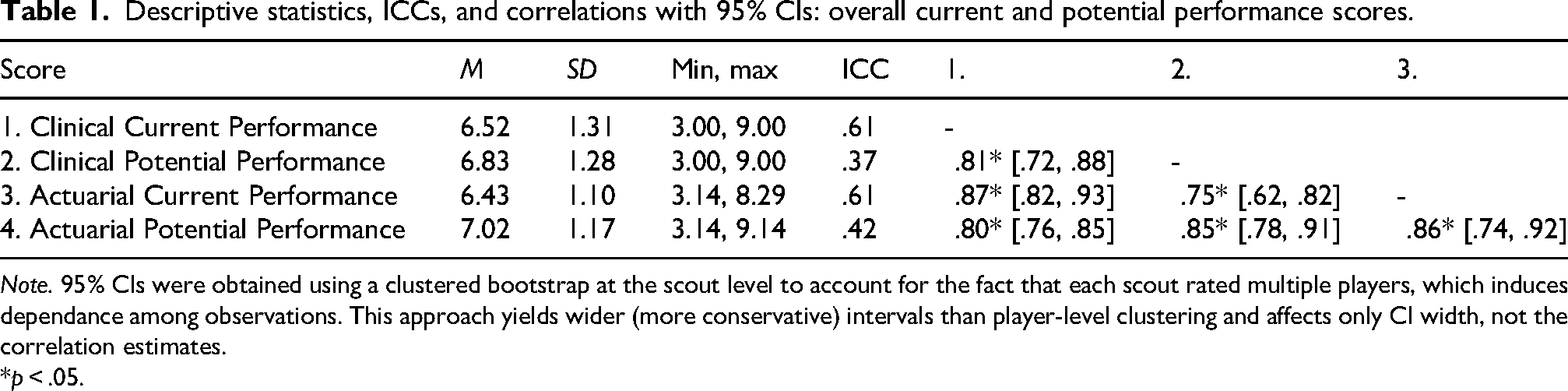

Table 1 presents the descriptive statistics, ICCs, and correlations for overall current and potential performance scores, both clinically combined (i.e., as provided by the scouts) and actuarially combined (i.e., unweighted average of the indicator ratings). In line with the study's aims, we next present and discuss the results regarding the reliability of the clinical overall current and potential performance scores, scouts’ confidence and perceived difficulty in making these assessments, and the reliability of actuarially combined overall scores.

Table 1. Descriptive statistics, ICCs, and correlations with 95% CIs: overall current and potential performance scores.

Note. 95% CIs were obtained using a clustered bootstrap at the scout level to account for the fact that each scout rated multiple players, which induces dependence among observations. This approach yields wider (more conservative) intervals than player-level clustering and affects only CI width, not the correlation estimates.

*p < .05.
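The scout-level clustered bootstrap mentioned in the table note can be sketched as below. This is an illustration under stated assumptions, not the procedure from the OSF code: the percentile interval, the number of resamples, the function names, and the example rows are all choices made for the sketch.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation, or None if either variable has zero variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return None
    return sxy / sqrt(sxx * syy)


def clustered_bootstrap_ci(rows, n_boot=2000, alpha=0.05, seed=0):
    """Percentile CI for r; rows are (scout_id, x, y) and whole scouts are resampled."""
    rng = random.Random(seed)
    by_scout = {}
    for scout, x, y in rows:
        by_scout.setdefault(scout, []).append((x, y))
    scouts = list(by_scout)
    estimates = []
    for _ in range(n_boot):
        # Draw scouts with replacement and keep all ratings of each drawn scout.
        drawn = rng.choices(scouts, k=len(scouts))
        sample = [pair for s in drawn for pair in by_scout[s]]
        r = pearson([p[0] for p in sample], [p[1] for p in sample])
        if r is not None:
            estimates.append(r)
    if not estimates:
        raise ValueError("no valid bootstrap estimates")
    estimates.sort()
    lower = estimates[int((alpha / 2) * len(estimates))]
    upper = estimates[int((1 - alpha / 2) * len(estimates)) - 1]
    return lower, upper


if __name__ == "__main__":
    # Hypothetical (scout, current score, potential score) rows.
    rows = [
        ("s1", 6, 6), ("s1", 7, 7), ("s1", 5, 6),
        ("s2", 8, 7), ("s2", 6, 7), ("s2", 7, 8),
        ("s3", 5, 5), ("s3", 6, 6), ("s3", 7, 6),
        ("s4", 8, 8), ("s4", 7, 7), ("s4", 6, 7),
    ]
    print(clustered_bootstrap_ci(rows, n_boot=500))
```

Resampling scouts rather than individual ratings keeps each scout's ratings together, so the dependence within a scout is carried into every bootstrap sample.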

Current vs. potential performance

For the clinical overall scores, the ICC for current performance was .61 (95% CI: [.33, .79]), indicating moderate inter-rater reliability. In contrast, the ICC for potential performance was notably lower, at .37 (95% CI: [.05, .62]), reflecting poor inter-rater reliability. Although the wide confidence intervals around the estimates and the lack of testing of the difference caution against strong interpretation, this pattern suggests that assessing potential results in more disagreement between scouts than assessing current performance.

Scouts’ self-reports provided further insight into this pattern. Confidence was very similar for current (M = 3.69) and potential performance assessments (M = 3.60; Cohen's d = .19, 95% CI: [−.31, .68], t(15) = .74, p = .47). In contrast, experienced difficulty was greater for potential (M = 3.08) than for current performance (M = 2.67), reflecting a large effect size (Cohen's d = 1.16, 95% CI: [.51, 1.79], t(15) = 4.63, p < .001). This combination of similar confidence and greater experienced difficulty seems paradoxical. It could indicate that scouts put more mental effort into assessing potential (e.g., by considering variables beyond observable performance) yet arrive at similar confidence in the end.

These findings relate to broader concerns in talent identification regarding the distinction between current performance and potential.21,22 This issue was also reflected in our data: overall scores on current and potential performance were strongly correlated (e.g., r = .81 for the clinical overall scores). This indicates that scouts who rated players highly on current performance also tended to rate them highly on potential performance, similar to the findings of Barraclough et al. 24 and Gilson et al. 25 This focus on current ability is consistent with well-known biases in talent identification, such as the relative-age effect and biological maturation bias.15–18 Furthermore, the lack of evidence for a difference in confidence in current and potential performance assessments could indicate an ‘illusion of validity’, where evaluators remain confident despite added uncertainty when moving from performance evaluation to performance prediction.9,33

Taken together, these findings suggest that subjective assessments of potential are unlikely to improve predictions of future performance beyond assessments of current ability. Because assessments of potential are both unreliable and highly correlated with current performance, their (incremental) validity for predicting outcomes is limited. As a result, adding them to current performance assessments may actually reduce the predictive validity of the composite, since potential assessments likely contribute more to redundancy among predictors than to the prediction of future performance.34,35 Accordingly, selection decisions may be improved by using statistical approaches to assess potential, such as modeling individualized trajectories or adjusting for biological, relative, and training age,36 which can provide less biased estimates while also mitigating the unreliability inherent in subjective judgment (cf. 9).
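The way unreliability limits validity can be made concrete with the classical test theory bound, in which $r_{xx}$ and $r_{yy}$ are the reliabilities of the predictor and the criterion. As a rough illustration only, and assuming the single-scout clinical ICCs are taken as $r_{xx}$ and the criterion is measured without error, the ceilings would be:

```latex
\begin{align}
|r_{xy}| &\le \sqrt{r_{xx}\, r_{yy}} \le \sqrt{r_{xx}} \\
\text{potential: } \sqrt{.37} &\approx .61,
\qquad \text{current: } \sqrt{.61} \approx .78
\end{align}
```

These values are upper limits rather than estimates of actual predictive validity, and they inherit the wide confidence intervals of the underlying ICCs.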

Clinical vs. actuarial combination

For the actuarially combined overall scores, reliability was moderate for current performance (ICC = .61, 95% CI: [.37, .80]) and poor for potential performance (ICC = .42, 95% CI: [.17, .65]). These estimates were identical or close to those for the clinical overall scores (current = .61, potential = .37), indicating that clinical versus actuarial combination of indicator ratings made little difference for inter-rater reliability, similar to the findings of Bergkamp et al.5 This is at odds with broader evidence that actuarial combination generally outperforms clinical combination in terms of reliability and accuracy.26,28–30

The absence of evidence for a difference between clinical and actuarial combination might be explained by the strong correlations between the clinical and actuarial overall scores (r = .87 for current performance; r = .85 for potential performance). The overall scores from the two combination methods likely converged because scouts tended to rate players similarly across indicators; the seven rubric indicators showed high average inter-item correlations (r = .70 for current, r = .77 for potential). When indicators are strongly correlated, overall scores and their reliability are likely to be similar whether they are combined clinically (in one's mind) or actuarially (as an unweighted mean), provided that scouts base their overall judgments on the indicator ratings rather than additional information and combine them in a roughly linear way.
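For readers who want to compute an average inter-item correlation on their own rubric data, a minimal sketch follows. It assumes complete ratings (no missing values) and takes the plain mean of all pairwise Pearson correlations; the function names and the three example indicators are hypothetical, and the study's own computation may differ in detail.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equally long rating lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("an indicator has zero variance")
    return sxy / sqrt(sxx * syy)


def mean_inter_item_r(columns):
    """Mean of all pairwise correlations; columns maps indicator name to ratings."""
    pairs = list(combinations(columns, 2))
    if not pairs:
        raise ValueError("need at least two indicators")
    return sum(pearson(columns[a], columns[b]) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    # Hypothetical ratings of five assessments on three indicators.
    ratings = {
        "technical": [6, 7, 5, 8, 7],
        "attacking": [6, 8, 5, 8, 6],
        "defending": [5, 7, 5, 7, 7],
    }
    print(round(mean_inter_item_r(ratings), 2))
```

When this average is high, an unweighted mean and most other roughly linear combinations of the indicators rank players in much the same order, which is the mechanism proposed above for the similar clinical and actuarial results.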

The high inter-item correlations raise important questions about what the rubric is actually capturing. They could suggest that, in practice, the indicators reflect a largely unidimensional construct such as general soccer ability (akin to the general factor ‘g’ in intelligence testing). At the same time, one might theoretically expect rubrics to capture distinct and empirically separable dimensions of performance (e.g., physical, technical, attacking). Alternatively, the observed pattern may simply result from halo effects, whereby impressions of overall quality influence ratings across indicators.37 While our limited sample precluded factor analysis, the findings suggest that the internal structure of scouting rubrics deserves investigation to determine whether they meaningfully differentiate between performance dimensions (if theoretically expected) or collapse into a single global impression. Relatedly, it would also be valuable to explore whether certain performance dimensions are (perceived to be) more indicative of current performance (e.g., physical strength) versus potential performance (e.g., coachability).

Limitations and future research

The most notable limitation of this study is its relatively small sample size (62 assessments across 23 players and 16 scouts), resulting in limited statistical power. In addition, all scouts were drawn from a single academy. While this restricts generalizability, it enabled estimation of reliability within one coherent scouting system rather than introducing variability by pooling scouts from multiple clubs, who are used to different assessment rubrics or approaches. In terms of demographics, the participating scouts were similar to the 125 Dutch soccer scouts included in Bergkamp et al.,1 suggesting that our sample is representative of the broader scouting workforce in the Netherlands. The match time was relatively short and scouts were instructed not to communicate with each other, both of which may differ from typical scouting practice. Nevertheless, a key strength is that this was a field study, with real scouts evaluating real players during a live match.

Future research could build on our initial exploration by systematically comparing the reliability of assessments with different levels of standardization, including rating standardization (i.e., the extent to which there are predefined dimensions and rating scales, such as single global judgments versus rubrics) and combination standardization (i.e., the extent to which information is combined in a standardized manner, such as clinical versus actuarial). Within such lines of research, an important area of focus is frame-of-reference (FOR) training in which evaluators are trained through practice and feedback to use common standards for the to-be-rated dimensions, 38 and which has been shown to improve rating reliability and accuracy in organizational settings.39,40 Practically, our results suggest that clubs may focus their FOR training particularly on the assessment of potential, as this area appears most vulnerable to unreliability, highlighting the importance of calibration efforts. This can be done, for example, during FOR workshops where potential and rating dimensions are defined, followed by scouts practicing and receiving feedback on their application.

Notably, the personnel selection literature, which generally shows that increasing structure improves reliability,12–14 distinguishes not only rating and combination standardization, but also task standardization (i.e., the extent to which all assessees perform the same task). For example, in structured interviews, task standardization involves asking all candidates the same questions, while rating standardization entails evaluating each response using a behaviorally anchored scale.41 In contrast, when assessing soccer players during a match, task standardization is largely unattainable because performance is highly dynamic and dependent on game context, which also complicates the development of behaviorally anchored rating scales.5 As a result, achieving high reliability in soccer scouting is inherently challenging. Rubrics, nonetheless, may serve as important tools for improving reliability by directing scouts’ attention to shared performance dimensions and supporting more consistent interpretation, particularly when used alongside FOR training and standardized approaches for combining dimension ratings into overall scores.

Conclusion

Given that reliability sets the upper bound for validity, and that scouting will continue to rely heavily on human judgment in the foreseeable future, identifying methods to improve inter-rater reliability remains a pressing challenge. Addressing this issue is essential for ensuring that athlete assessments and selection decisions are both fair and accurate. Using rubrics may be a good way forward, provided that they are well-developed, mutually understood, and critically investigated rather than assumed to be effective.

Footnotes

Acknowledgements

We would like to thank the Head of Youth Scouting and the Head of Sport Science at the participating soccer academy for their collaboration and support in facilitating this research.

Ethical considerations

This study was approved by the Research Ethics Committee of HAN University of Applied Sciences, Arnhem and Nijmegen, the Netherlands, on the 14th of April 2025 (approval no. ECO 659.04/25). All research procedures were conducted in accordance with the World Medical Association Declaration of Helsinki.

Consent to participate

Written informed consent to participate was obtained from all scouts after they were fully informed about the study design before data collection. Assessed players and their parents were fully informed about the study design and were aware that the friendly match was organized for research purposes, in which scouts would be evaluating the players. Players were given the option not to participate in the match. No personal data from the players was collected; they were completely unidentifiable to the research team and were only identified by randomly assigned jersey numbers.

Consent for publication

Not applicable.

Funding

The research was supported by the University of Groningen PhD Fund of the Faculty of Behavioral and Social Sciences.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.