Abstract

This study examines the use of artificial intelligence (AI) in communication with research participants during the recruitment process, exploring stakeholder perspectives and evaluating ChatGPT’s ability to generate participant informational texts. A mixed-methods design was applied, combining an online survey with semi-structured interviews. The survey, conducted among clinical research professionals and laypersons, investigated perceived suitability, trustworthiness, ethical concerns, and requirements for responsible implementation of AI across use cases such as generating informational texts, visual aids, translations, and chatbot-based interactions. The interviews assessed how experts from diverse disciplines responded to AI-generated versus human-written texts, with particular attention to clarity, empathy, and emotional impact. Survey findings indicated that translation and writing assistance were viewed as the most appropriate and least controversial applications of AI, whereas answering participant questions or assessing eligibility were regarded with caution. AI appears to be rather underused, partly due to regulatory uncertainty and limited trust. Trust in AI was generally lower than trust in humans, especially among professionals. Key ethical concerns included unreliability, data-protection risks, and the potential for manipulation. In the empirical comparison, AI-generated texts were frequently described as clearer, more concise, and more empathetic than human-written materials, yet concerns persisted regarding missing content and oversimplification. Interviewees emphasized the need for strong human oversight, adequate researcher training, and continued involvement of patients in developing participant-facing materials. Maintaining a human role was seen as critical not only for accuracy and accountability, but also to prevent the dehumanization of research communication and preserve communication skills within research teams. 
Overall, the study highlights both the promise and the ethical complexities of integrating AI into participant communication during recruitment and offers a foundation for future research and policy development.

Introduction

Although clinical research has advanced significantly in recent decades, it remains operationally complex and resource intensive. A well-recognized and persistent challenge that limits the efficiency and timeliness of clinical research is participant recruitment (Kadam et al., 2016; Pemberton et al., 2018). Approximately 80% of trials experience delays due to unmet recruitment targets, and more than one-third of study sites do not recruit any participants at all. These recruitment difficulties generally prolong trial timelines, driving up expenses and postponing access to new therapies (Fogel, 2018).

Effective recruitment depends on the quality of communication between researchers and potential participants during the informed consent process. This process is often hindered by the difficulty of conveying complex, technical information in a way that is accessible and meaningful to diverse audiences with varying levels of health literacy (Kadam, 2017).

Efforts to improve recruitment have increasingly turned toward technological solutions. Digital strategies such as online recruitment and mobile applications have been shown to expand outreach and help identify eligible participants more efficiently (Brøgger-Mikkelsen et al., 2020; Tomiwa et al., 2024; Uy et al., 2025). However, while these tools expand outreach, they do not inherently enhance the quality or clarity of communication. In this context, artificial intelligence (AI), and large language models (LLMs) in particular, offer a qualitative shift, as they can not only disseminate information but also tailor, simplify, and explain it in an adaptive manner.

LLMs have shown promise across medical settings by supporting clinicians in summarizing information, facilitating communication, and enhancing patient understanding (Clay et al., 2024). However, their role in clinical trial communication remains relatively underexplored (Vaira et al., 2025; Waters, 2025). In this context, LLMs could assist in drafting informational materials for research participants (Waters, 2025) or generating visual aids and pictorial content to explain study procedures, an approach shown to improve engagement, learning outcomes, and understanding in medical education (Galmarini et al., 2024). AI may also function as a translation tool (Genovese et al., 2024), and chatbots could support the screening process by providing trial information and allowing potential research participants to ask questions (Ghim and Ahn, 2023). Yet, empirical evidence supporting these applications within clinical trial environments remains limited.

More broadly, as Bernasconi and Grossmann (2025) note, the integration of AI into clinical trial management remains a distant prospect. Adoption is slowed by ethical concerns, with regulatory gaps and limited trust among stakeholders emerging as key obstacles. The authors emphasize that analyzing the ethical implications helps to map the normative challenges that must be resolved to ensure safe, responsible, and effective implementation. Such analysis can shape regulatory trajectories and foster trust, especially when stakeholders are meaningfully involved. Despite this, in the literature on the use of AI in research recruitment, ethical considerations are often underrepresented, and stakeholders are seldom included in discussions about design, governance, and implementation (Bernasconi et al., 2025). To address this gap, our study adopts an ethical lens to explore how stakeholders view AI’s role in communication during clinical research recruitment. In this context, the ethical perspective is especially relevant because the informed consent process is not merely informational, but a dynamic interaction that shapes participants’ trust, engagement, and retention throughout the study (Kadam, 2017).

Our study had two primary objectives. Through a survey, we first sought to assess attitudes of professionals and laypersons toward the use of AI in clinical research communication, with a focus on the perceived suitability and trustworthiness of different use cases, ethical implications, and requirements for responsible implementation. Second, we aimed to evaluate the ability of AI to generate written information materials, and to examine how individuals from diverse backgrounds responded emotionally to AI-generated versus human-written texts, focusing on perceived clarity, empathy, and overall impact. We placed particular emphasis on emotional responses, as decision-making in clinical trial contexts depends not only on factual comprehension but also on emotional resonance (Gregersen et al., 2022). Importantly, this phase served to validate, challenge, and refine the survey insights, by examining them within a concrete, operational scenario. The use case was selected for its broad accessibility and technical simplicity.

Overall, this work contributes to the broader shift toward more patient-centered approaches in clinical research (Sharma, 2015), where inclusive, clear, and compassionate communication is seen as fundamental.

Methods

We conducted a mixed-methods study combining findings from a survey and semi-structured interviews. The study is reported according to the Checklist for Reporting Of Survey Studies (CROSS; Sharma et al., 2021) and the consolidated criteria for reporting qualitative research (COREQ; Tong et al., 2007). Since no personal or sensitive data were collected, the study falls outside the Swiss Human Research Act and did not require ethics approval. All interviewees provided informed consent and were given the opportunity to review the manuscript.

Survey

The online cross-sectional survey was collaboratively developed by the authors and started with a definition of AI and an explanation of the study’s objectives. Initial questions collected demographic information, professional background, and participants’ experiences with AI. Subsequent items explored attitudes toward the use of language models in communicating with research participants, focusing on their suitability, trustworthiness, ethical implications, and responsible implementation. To make abstract ethical principles more accessible, we formulated questions and answers in a practice-oriented and relatable manner for our survey population. The survey focused particularly on the recruitment phase and included the following use cases: (a) creation of informational texts; (b) development of visual representations of study procedures; (c) translation of informational materials; (d) answering participant questions about the research project; and (e) screening of research participants (Supplemental File 1). The use cases were selected based on literature relevant to the use of LLMs in research participant recruitment (Galmarini et al., 2024; Genovese et al., 2024; Ghim and Ahn, 2023; Waters, 2025).

Two survey versions were created: one for clinical research professionals and another for laypersons. The surveys were identical except for questions related to participants’ backgrounds and experiences with AI. In the layperson version, some technical terminology was simplified. Additionally, the version for research professionals included one extra question regarding human oversight in AI applications.

For validation, the surveys were reviewed by three independent clinical research professionals and four laypersons. Additionally, an experienced data manager conducted a technical validation.

The survey was conducted among Swiss German-speaking participants and distributed via email. Clinical research professionals were contacted through clinical research networks and via publicly available contact details in the Swiss national clinical trials portal. Laypersons were recruited through patient organizations and the authors’ private networks. Data collection was conducted anonymously using the secure web application REDCap between February and March 2025. Multiple reminders were issued to reduce non-response bias.

Only responses from participants who answered at least four core questions related to AI use in research communication were included in the analysis. Data were compiled in Excel and results are reported as percentages. Given the limited statistical power of the lay group, no statistical comparisons between groups were conducted. Some questions used five-point Likert scales: responses at the two extreme ends were grouped for graphical display, and numerical values were assigned to responses for ranking purposes.
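The pooling and ranking steps described above can be illustrated with a minimal sketch. It is not the authors' actual workflow, which was carried out in Excel. The response labels, the 1 to 5 coding, the reading of "pooling" as merging the two categories at each end of the scale, and the use of the mean score for ranking are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical five-point labels; the real wording is in the survey instrument.
SCALE = ["very unsuitable", "unsuitable", "neutral", "suitable", "very suitable"]
# Assumed numeric coding (1 = most negative, 5 = most positive).
SCORES = {label: i + 1 for i, label in enumerate(SCALE)}


def pool(responses):
    """Collapse five-point responses into three categories, as percentages.

    Assumes a non-empty list of labels taken from SCALE.
    """
    counts = Counter(responses)
    n = len(responses)
    negative = counts[SCALE[0]] + counts[SCALE[1]]  # two lowest categories
    positive = counts[SCALE[3]] + counts[SCALE[4]]  # two highest categories
    neutral = counts[SCALE[2]]
    return {
        "negative": round(100 * negative / n),
        "neutral": round(100 * neutral / n),
        "positive": round(100 * positive / n),
    }


def mean_score(responses):
    """Average numeric value of the responses (assumed ranking criterion)."""
    return sum(SCORES[r] for r in responses) / len(responses)


def rank_use_cases(data):
    """Order use cases from highest to lowest mean score."""
    return sorted(data, key=lambda use_case: mean_score(data[use_case]), reverse=True)


# Invented toy data, not survey results.
example = {
    "translation": ["very suitable", "suitable", "suitable", "neutral"],
    "screening": ["unsuitable", "very unsuitable", "neutral", "suitable"],
}
pooled_translation = pool(example["translation"])
ranking = rank_use_cases(example)
```

Pooling simplifies the graphical display but discards the distinction between moderate and strong responses, which is why the ranking in this sketch relies on the unpooled numeric values.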

Interviews

We anonymized a protocol from a completed study and its associated participant information sheet. Using this material, we asked ChatGPT-4o to generate a prompting guideline for writing participant information based solely on the protocol. Our focus was limited to three sections: study objective, risks for participants, and data protection. Subsequently, we asked ChatGPT to refine the prompts based on the criteria for participant information issued by Swissethics, the umbrella organization for ethics committees in Switzerland (Swissethics, 2023). The resulting guidelines were applied to a second study protocol from the same research group to generate new participant information texts, to be compared with the original, human-authored texts. This approach was chosen for its reproducibility and accessibility for clinical researchers. By minimizing human input, we aimed to evaluate the current maturity of the language model in generating participant information material independently. We used study protocols written in English and corresponding participant information sheets in German. All prompts used for ChatGPT are available in Supplemental File 2.

One-hour interviews were conducted in person or via teleconference in June and July 2025. An interview guide was developed iteratively by the authors (Supplemental File 2). Initial questions focused on participants’ views regarding the use of language models in communication with research participants, particularly in the creation of informational texts. Interviewees were then asked to compare the texts generated by ChatGPT and by human authors in terms of comprehensibility, quality, and emotional impact. The texts were shared 30 minutes before the interview without revealing their origin, allowing for a blinded comparison. To ensure clarity, both the interview guide and the text comparison materials were pilot tested with a layperson beforehand.

Purposive sampling was used to recruit via email a regulatory affairs specialist (Interviewee 1), a marketing and communication expert (Interviewee 2), a patient partner advocating patients’ rights and involvement (Interviewee 3), an ethics committee member (Interviewee 4), a data protection officer (Interviewee 5), and a pharmacist (Interviewee 6). All participants were female, based in Switzerland, and had expertise in clinical research ranging from none to extensive. All interviews were conducted by ZK (female, master’s student) following initial training and audio-recorded with participants’ consent. One recording was transcribed manually at the request of the interviewee; the remaining recordings were transcribed using Amazon Transcribe. An additional interview using the same set of questions was conducted with ChatGPT-5.

ZK and LB (female, PhD candidate and senior clinical research professional with experience in qualitative research) performed an independent exploratory thematic analysis following Braun and Clarke’s (2006) framework, using a combination of inductive and deductive coding. The analysis followed a conceptual and reflexive approach and focused on identifying meaningful patterns across the data through iterative reading, coding, and interpretation. After independently generating initial codes, the researchers compared and discussed their coding to reach consensus on the final set of codes and thematic categories. Quotes in this manuscript were translated from German using ChatGPT-4o.

Results

Survey

Survey participants’ profile

A total of 199 clinical research professionals participated in the survey. The majority were female (67%) and aged 40–59 years (60%). Respondents held various roles within clinical research (multiple responses allowed,

A total of 215 individuals completed the survey for laypersons, but 21 were excluded from the analysis: 7 due to incomplete responses (fewer than 4 core questions answered), 3 for being under 18 years of age, and 11 for indicating employment in clinical research. Of the remaining 194 respondents, the majority were female (83%), aged 18–39 years (58%), and held a higher education degree (51%). The professional backgrounds were diverse; notably, 23% worked in healthcare. In terms of AI familiarity, 84% of laypersons reported prior use of AI language models, specifically for (multiple answers possible) creative tasks (70%), translations (68%), research (64%), or other tasks (18%) (Supplemental File 1, Table 1 and Figures 1–4).
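The exclusion steps for the layperson sample can be expressed as a short filter. This is a hedged sketch, not the authors' procedure: the record fields (`core_answers`, `age`, `works_in_clinical_research`) are hypothetical names, and the order in which the criteria are checked is an assumption, since a respondent meeting several criteria is counted only once.

```python
from dataclasses import dataclass
from typing import Optional

MIN_CORE_ANSWERS = 4  # inclusion threshold stated in the Methods
MIN_AGE = 18          # respondents under 18 were excluded


@dataclass
class Response:
    # Hypothetical field names; the actual REDCap export may differ.
    core_answers: int
    age: int
    works_in_clinical_research: bool


def exclusion_reason(r: Response) -> Optional[str]:
    """Return the first matching exclusion reason, or None if included."""
    if r.core_answers < MIN_CORE_ANSWERS:
        return "incomplete"
    if r.age < MIN_AGE:
        return "under 18"
    if r.works_in_clinical_research:
        return "clinical research employment"
    return None


# Consistency check on the counts reported in the text.
completed = 215
excluded = {"incomplete": 7, "under 18": 3, "clinical research employment": 11}
analysed = completed - sum(excluded.values())
```

With the reported counts, the 21 exclusions leave 194 respondents for analysis, which matches the figure given above.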

Communication challenges in clinical research recruitment

Among clinical research professionals, 80% agreed that communication with participants is challenging. The most frequently cited contributors (multiple responses allowed) were complex medical texts (77%), limited time for communication (77%), and language barriers (65%), while fewer pointed to a lack of researcher empathy (24%) or other factors (16%) (Supplemental File 1, Figures 5 and 6).

Suitability and trustworthiness of AI use cases

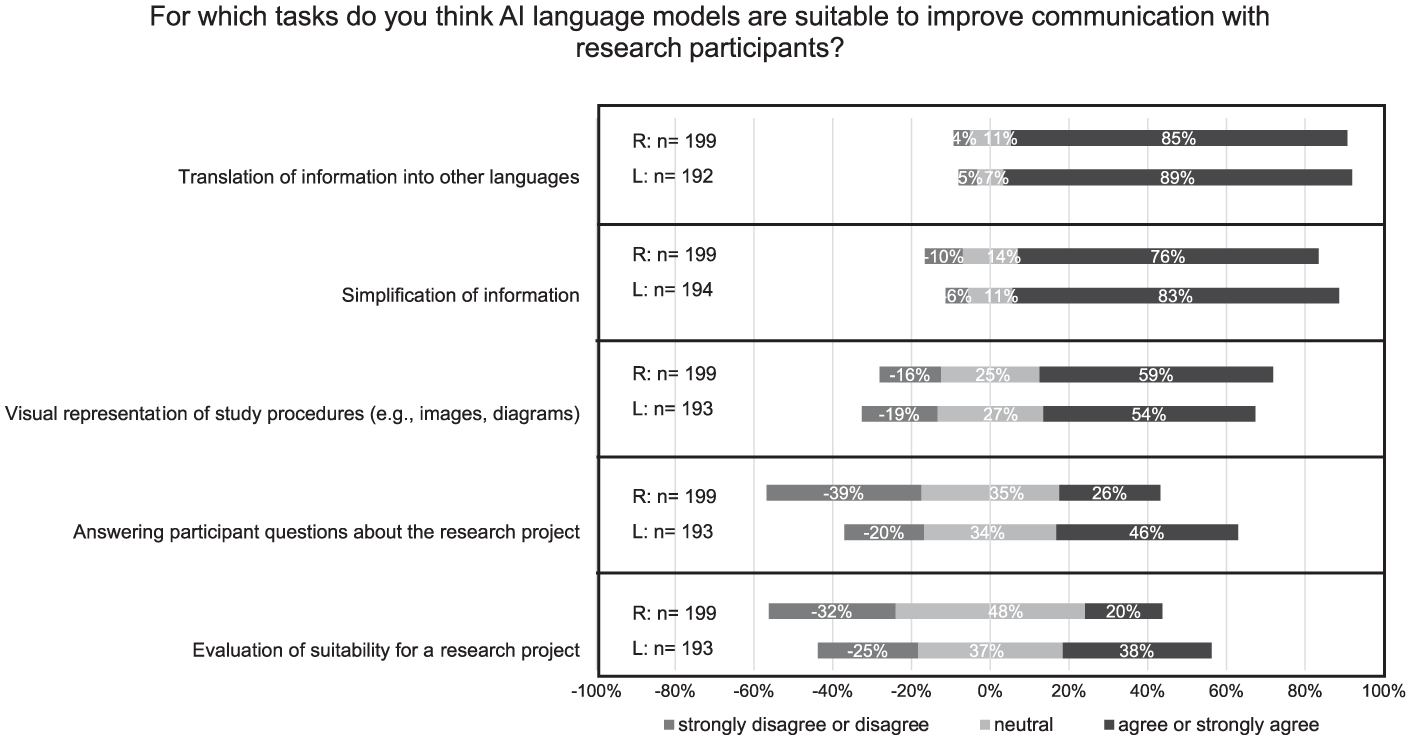

Figure 1 presents the perceptions of clinical research professionals (R) and laypersons (L) regarding the suitability of AI language models for supporting communication with research participants across different tasks. Both groups viewed AI as particularly appropriate for translations (R: 85%, L: 89%) and for simplifying complex content (R: 76%, L: 83%). The use of AI to visualize processes through diagrams or illustrations was also positively received, though to a lesser extent (R: 59%, L: 54%). Greater divergence between groups emerged regarding AI’s role in answering project-related questions, which was considered unsuitable by R: 39% and L: 20%. The same applies to the use of AI for assessing participant eligibility, considered unsuitable by R: 32%, L: 25%.

Suitability of AI use cases to improve communication with research participants. R = research professionals, L = Laypersons. A five-point Likert scale was used. Responses at the two extreme ends of the scale were pooled for graphical representation. The use cases were ranked according to the responses of laypersons.

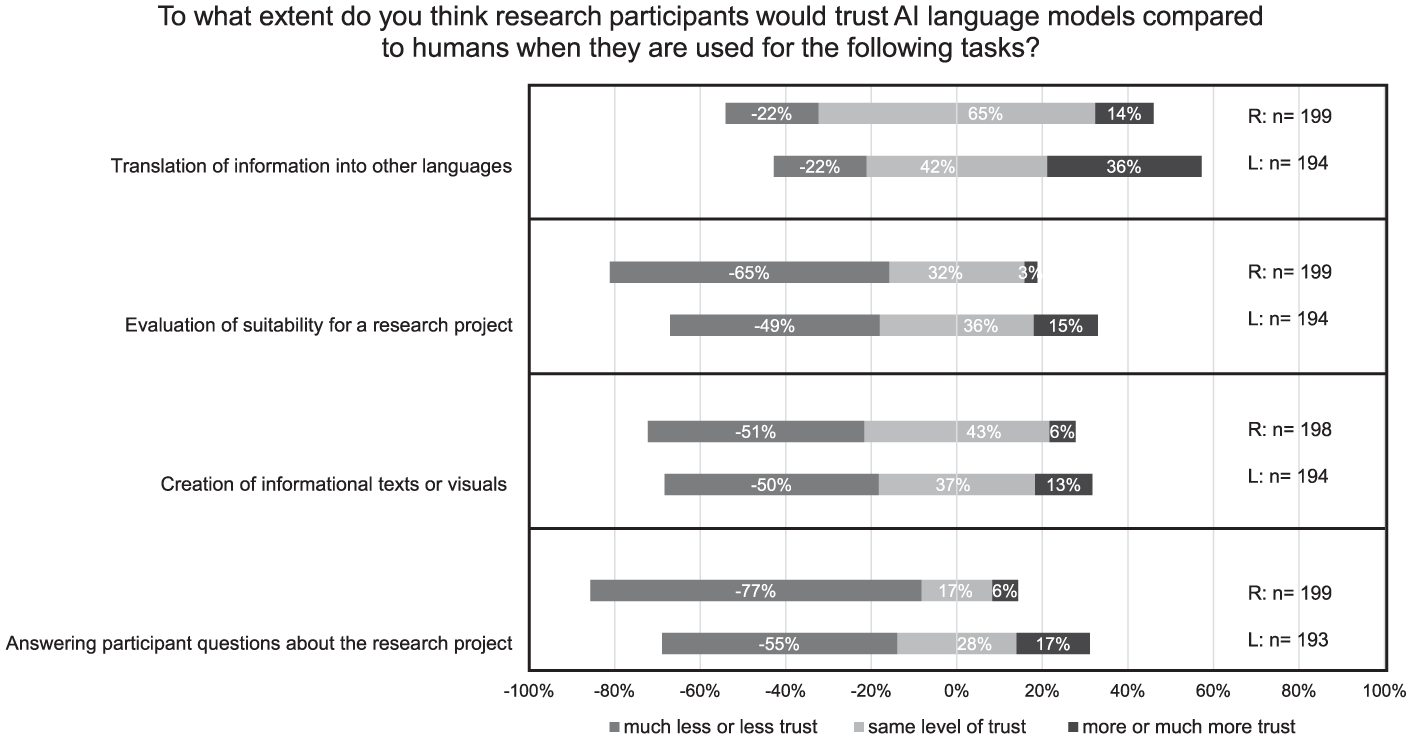

Figure 2 compares levels of trust in AI versus human agents across the use cases. The highest relative trust in AI was observed for translations, with R: 14% and L: 36% expressing even greater trust in AI than humans. In contrast, for tasks such as evaluating participant eligibility, generating project-related content (text or visuals), and answering participant questions, the majority in both groups reported less or much less trust in AI than in human agents (R: 65%, L: 49%; R: 51%, L: 50%; and R: 77%, L: 55%, respectively). Across all use cases, professionals consistently reported lower trust in AI compared to laypersons.

Trust in AI language models compared to humans across the use cases. A five-point Likert scale was used. Responses at the two extreme ends of the scale were pooled for graphical representation. The use cases were ranked according to the responses of laypersons.

Ethical implications

The primary ethical concern across both groups was unreliability of AI outputs (R: 73%, L: 68%), followed by data protection and security concerns (R: 63%, L: 65%), and lack of transparency about AI use (R: 58%, L: 61%). Group differences were more pronounced for concerns about over-simplification and a loss of communication skills within research teams, acknowledged by R: 44%/L: 58% and R: 46%/L: 54%, respectively (Supplemental File 1, Figure 7).

When asked to assess potential negative impacts of AI language models in research communication, respondents worried that AI might reduce the research team’s sense of responsibility in communicating with participants (R: 52%, L: 55%). The largest group difference concerned the risk that AI could weaken participants’ trust in research, with R: 48% and L: 38% agreeing. Fewer respondents expressed concern about AI-driven discrimination in eligibility assessments (R: 25%, L: 33%). Despite the concerns, respondents also acknowledged potential ethical benefits of AI. A strong majority agreed that AI could improve accessibility to research projects through translation, simplification, or personalization (R: 71%, L: 75%). Around half of respondents also agreed that AI could enhance clarity and support informed decision-making (R: 50%, L: 49%). Opinions were more mixed on whether AI might promote more independent decisions by reducing physician influence, with R: 31%/L: 40% agreeing, and R: 29%/L: 22% disagreeing. A considerable proportion of respondents held neutral opinions on both the positive and negative impacts of AI, with percentages ranging from 17% to 40% across all items (Supplemental File 1, Figures 8 and 9).

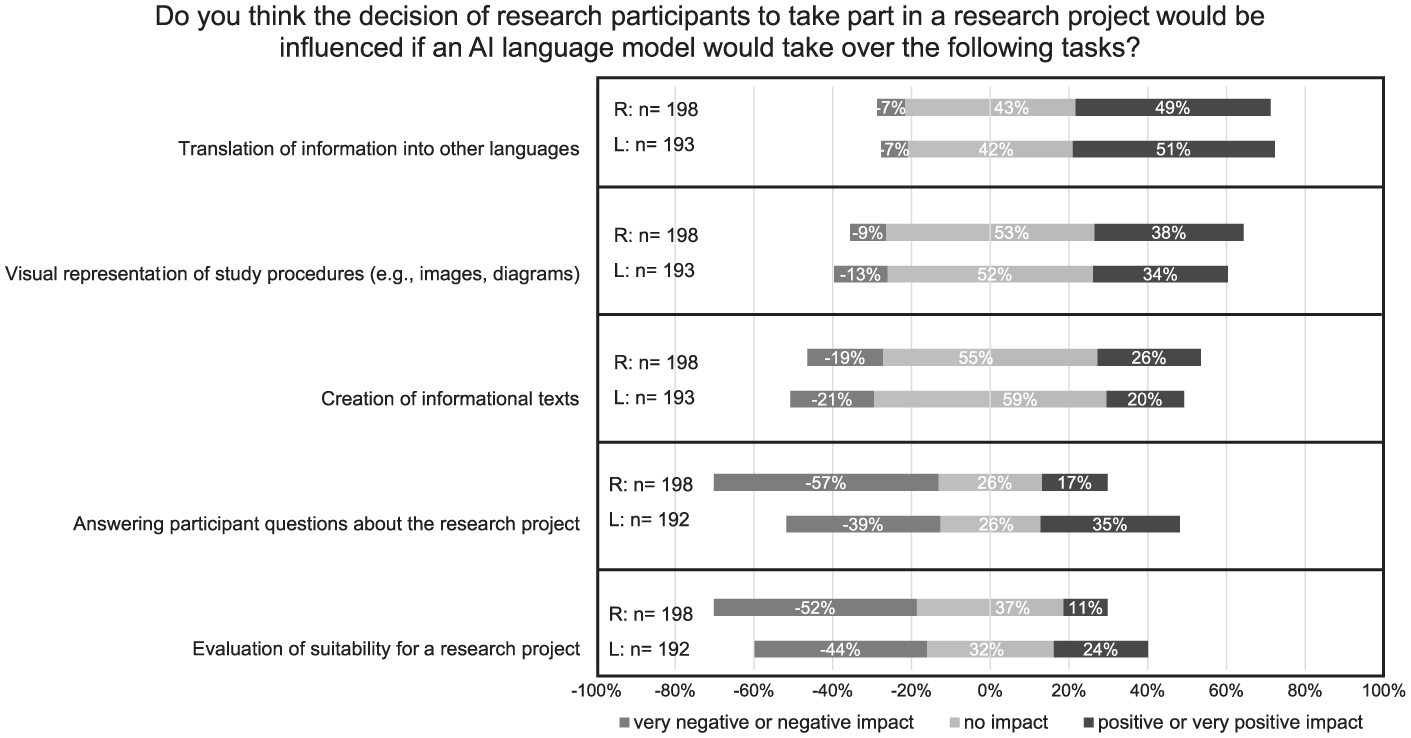

Regarding impact on research participation (Figure 3), respondents considered AI-based translation most favorably, with about half indicating a positive influence (R: 49%, L: 51%). Most felt that AI-generated visual aids or informational texts would have no impact on participation decisions (R: 53%, L: 52%; and R: 55%, L: 59%, respectively). Answering participant questions with AI was evaluated with greater skepticism, with R: 57% and L: 39% indicating a negative impact. The most controversial use case was AI-based eligibility assessment, with R: 52% and L: 44% expecting a negative impact on participation.

Influence of AI language models on the decision of research participants to take part in a research project. A five-point Likert scale was used. Responses at the two extreme ends of the scale were pooled for graphical representation. The use cases were ranked according to the responses of laypersons.

Requirements for responsible implementation

When asked which aspects of a research project should be communicated exclusively by humans (multiple answers allowed), both groups emphasized the importance of human explanation for insurance details (R: 48%, L: 67%), research risks (R: 77%, L: 66%), data protection (R: 43%, L: 58%), and purpose of the study (R: 46%, L: 47%). Differences emerged for financial compensation, which more laypersons than professionals preferred to be explained by humans (L: 38%, R: 20%), while the reverse was true for study procedures (R: 41%, L: 25%). Finally, across all use cases, between 80% and 98% of clinical research professionals considered moderate to full human oversight of AI-generated outputs to be necessary (Supplemental File 1, Figures 10 and 11).

Interviews

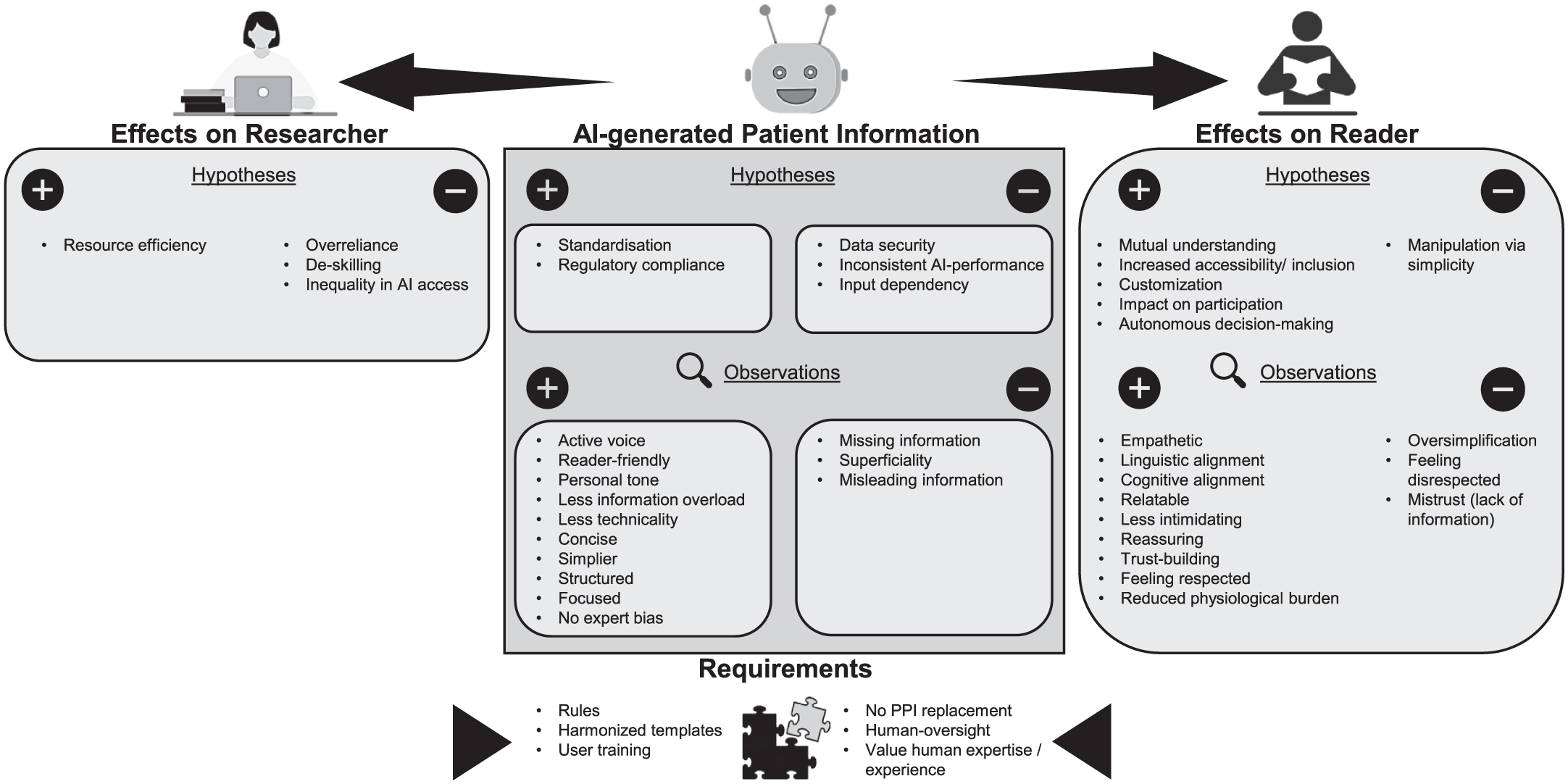

Interview analysis identified subthemes concerning the perceived benefits, risks, and prerequisites of AI-generated informational texts in research. These clustered into four overarching themes, presented in Figure 4, which can be understood in relation to the AI-generated text itself, those who interact with the text, and the overarching requirements governing its use: qualities of AI-generated information (1), implications for research participants (2) and researchers (3), and requirements for responsible implementation (4).

Thematic mind map derived from the analysis of interview data on AI-generated informational materials for research participants. The map illustrates both hypothesized and observed AI effects, categorized by their impact on the informational text, the research participants, and the researchers.

In general, the reflections of ChatGPT were largely aligned with those of the human experts.

Qualities of AI-generated informational texts

Across interviews, participants described both perceived and empirically observed qualities of AI-generated content. All judgments were made blindly: when asked at the end of the interviews to identify the AI-generated text, only two participants were certain. Three reported feeling unsure, and two, including ChatGPT, incorrectly attributed authorship.

Under “improvements in text quality” (subtheme 1.1), interviewees consistently noted that the AI-generated texts were well-structured, reader-friendly, with less technical jargon and reduced information overload. The texts were considered simpler, more focused, and more personal in tone. Interviewee 2 (marketing and communication) emphasized that ChatGPT demonstrated good communication practices, such as the use of active voice, clarity, and conciseness, which were attributed to its lack of expert bias.

Interviewees also noted “regulatory compliance” (subtheme 1.2) as a potential benefit of AI-generated texts: the standardization of language and structure could facilitate adherence to regulatory requirements and improve alignment with established templates.

Alongside these strengths, interviewees expressed concerns regarding “accuracy and reliability” (subtheme 1.3). They pointed to cases of missing or incomplete information, superficiality stemming from insufficient detail, and the risk of misleading claims or distorted meaning.

Text 1 (human) mentions ‘frequent urinary tract infection’ as potential side effect, whereas Text 2 (AI) only says ‘frequent urination’. Frequent urination doesn’t necessarily mean I could have a urinary tract infection. . . Also, Text 1 says ‘frequent hypoglycaemia’ and Text 2 says ‘rare low blood sugar’. That doesn’t make sense to me. A professional really needs to take a closer look at that. (Interviewee 3, patients’ rights and involvement)

These worries related to inconsistent AI performance, with output quality varying depending on the prompt. However, Interviewee 2 emphasized that while AI poses risks of altering content, these shortcomings are not unique to AI, as human-generated texts can likewise be ambiguous or incomplete.

Implications for research participants

Interviewees hypothesized several ways in which AI-generated texts might shape participant experience. Under “enhanced engagement and comprehension” (subtheme 2.1), they suggested that AI-generated texts could promote mutual understanding by bridging gaps between expert and lay language, thus strengthening accessibility and inclusion. Many found that the AI texts fostered cognitive and linguistic alignment: participants felt the tone “fit” them and was easier to process. Emotional resonance was also noted: several interviewees described the AI text as more empathetic and reassuring. These qualities appeared to reduce cognitive burden, making the information feel less overwhelming. Together, these features were seen as supporting autonomy and informed decision-making.

Only those who truly understand the information can grasp the consequences of participation and make an independent, informed decision. . . A personal, empathetic tone conveys respect for the individual – and signals that their decision matters. This fosters a sense of control and autonomy. In contrast, a neutral, technical tone can reinforce the feeling of being just part of a system or merely an object of research. (ChatGPT supported by Blom et al., 2025; Ng, 2024)

However, interviewees also discussed “negative informational impacts” (subtheme 2.2). Oversimplification was viewed as a substantial risk for manipulation and disempowerment.

If someone isn’t very practiced in reading this type of texts, then it’s obvious that Text 1 (AI) is much easier to read – but with a big question mark as to whether everything is really covered. Of course, I can also lead people to participate by leaving out information. (Interviewee 4, ethics committee)

For some readers, missing information could also lead to feelings of being disrespected. Interviewee 5 (data protection), for example, felt less respected and more mistrustful toward the AI-generated text due to perceived incompleteness. Several interviewees emphasized that communication must be tailored to individual personalities, knowledge levels, and needs.

Implications for researchers

From the researcher’s perspective, under “enhanced efficiency” (subtheme 3.1), AI tools were primarily hypothesized to offer resource-saving benefits. At the same time, interviewees identified some challenges under “risks to professional competence and equity” (subtheme 3.2). Interviewee 1 (regulatory affairs) cautioned against potential overreliance on AI, which could lead to deskilling over time.

I’m a bit concerned that people are relying too much on AI and forgetting that they still have abilities as humans. . .It’s important to use it only for specific purposes and not reflexively. . . I’m worried that everything will become too technical and that we’ll forget or lose sight of important, typically human things. (Interviewee 1, regulatory affairs)

Interviewee 3 (patients’ rights and involvement) also raised broader concerns about inequitable access to AI tools across institutions and regions, potentially exacerbating disparities in research capabilities.

Requirements for responsible implementation

Interviewees also highlighted several essential conditions for the ethical and effective use of AI in generating informational texts for research participants. Under “governance and standards” (subtheme 4.1), they emphasized adherence to established templates to maintain quality and consistency, as well as the need for regulatory guidance that is comprehensive enough to ensure safety and accountability yet flexible enough to avoid overregulation. Training for researchers was regarded as equally critical to ensure competent and informed use of AI tools.

In addition, “human-centric safeguards” (subtheme 4.2) were emphasized. All interviewees agreed that human oversight is indispensable and affirmed the continued value of human expertise and experience in designing and communicating research. Interviewee 3 (patients’ rights and involvement) further stressed that AI-generated content must not replace the involvement of patients themselves in developing study materials.

Whatever is produced by AI must absolutely be double-checked by the end users of such a document. And I’m not just talking about the research staff or whoever is seeking informed consent, but also those who sign it in the end, the participants. I believe both sides need to be involved in reviewing whether it’s appropriate or not. (Interviewee 3, patients’ rights and involvement)

Discussion

Communication challenges and the potential of AI

Communication with research participants is a sensitive task, particularly during the recruitment process. Clinical research professionals completing the survey indicated several persistent challenges, including the use of complex language, limited time for clear communication, and language barriers. Considering these issues, which are well documented in prior research (Godskesen et al., 2023; Pietrzykowski and Smilowska, 2021), study participants acknowledged the potential of AI to help address these barriers.

The applications of AI identified as most suitable by survey respondents were translation and writing assistance. This focus likely reflects the accessibility of such tools. It may also reflect the belief that AI-generated translations and informational texts can improve access to research, addressing a known barrier to the generalizability of study findings (Mohan and Freedman, 2023).

In the context of writing participant information, interviewees suggested that AI could contribute to greater standardization, which in turn may lead to more consistent documentation across studies and support equal treatment by ethics committees (Bernasconi and Grossmann, 2025). At the same time, interviewees emphasized that standardization should not come at the expense of personalization. They stressed that research communication should be attentive to the individual needs and preferences of research participants. However, while personalization is appealing, it also introduces several risks. As noted by De Sutter et al. (2021), if some research participants receive more or different information than others, this could introduce inequality. Moreover, developing personalized materials poses technical challenges. Finally, not all research participants may be prepared for the responsibility of managing their own preferences.

These reflections on personalization naturally extend to a broader question about tone and emotional resonance in participant communication. Empathy is a crucial element of effective communication, and its role in patient-physician interactions is well documented in the literature (Derksen et al., 2013). In the context of clinical research engagement, empathy reflects a sincere attempt to see the research process through the participant’s eyes and to respond to their needs with transparency and respect. In this view, empathy supports ethical principles by reinforcing participants’ autonomy, dignity, and trust in the research process, making communication not only informative but also meaningfully supportive (Blom et al., 2025).

In the survey, clinical research professionals did not recognize a lack of empathy as a major challenge in communication with research participants. However, interviewees, including laypersons and individuals particularly attuned to patient needs, emphasized that an empathetic tone is also important in written materials. They also perceived AI-generated texts as more empathetic and reassuring than those written by humans, a pattern previously reported in the literature (Ovsyannikova et al., 2025). The discrepancy between the survey and interview results may be explained by a tendency of health professionals to overestimate their own communication skills, as observed by Osuna et al. (2018).

Barriers to adoption: Regulation and trust

Despite the potential of AI tools, survey findings suggest that AI remains underused in clinical research. This limited adoption may be partly attributed to the absence of clear regulatory guidance, which is especially problematic in a highly regulated sector such as clinical research (Bernasconi and Grossmann, 2025). Interviewees emphasized the need for regulatory frameworks that are both comprehensive and flexible, ensuring safety without stifling innovation. The regulatory landscape for AI in healthcare is fragmented and still evolving. Leading jurisdictions such as the United States, the European Union, and the United Kingdom have begun developing frameworks, but these remain in flux as they attempt to keep pace with rapid technological advances (Busch et al., 2024). Within clinical research, the International Council for Harmonisation (ICH) Good Clinical Practice guideline E6 provides international ethical and scientific standards. However, ethical questions surrounding the practical application of AI remain unresolved, undermining trust in its use.

Figure 2 confirms a widespread skepticism toward AI, especially when compared to human performance. Trust, as a social construct, is shaped by factors such as public engagement, institutional accountability, and transparent governance frameworks. Therefore, trust in the clinical research community’s use of AI hinges on a continuous, inclusive process of ethical reflection, which must ultimately be codified into comprehensive and effective regulations (Gille et al., 2020; Lahusen et al., 2024).

Patterns of trust differed between groups. Overall, professionals showed less trust in AI than laypersons. This may reflect greater awareness of the ethical and regulatory implications of using AI in research communication. Another possible explanation is that professionals tend to prioritize accuracy and reliability, while laypersons may place more value on clarity and ease of understanding. Professionals may also be concerned that participants perceive the use of AI as a sign of carelessness or a lack of personal engagement. Supporting this interpretation, more than half of respondents in both survey groups agreed that AI could reduce the research team’s sense of responsibility in participant communication. However, this concern appears to have stronger implications for trust among professionals than among laypersons. Lastly, differences in trust may also be influenced by age. The layperson group included a higher proportion of younger participants, who are generally more accepting of AI technologies (Osnat, 2025).

Interestingly, there was one notable exception to this pattern of skepticism. The only task in which a clear majority of survey respondents expressed equal or greater trust in AI compared to humans was translation. Familiarity with widely used online translation tools may contribute to this baseline trust, along with the perception that AI provides objective, literal translations, whereas human translators might introduce personal interpretation. While literature suggests that AI holds promise for clinical translation, the complexity and nuance of medical language call for a balanced approach that combines AI capabilities with human oversight (Genovese et al., 2024).

Ethical implications of AI-mediated communication

While the survey results showed general alignment between professionals and laypersons regarding the suitability of certain AI use cases, perspectives diverged more noticeably when considering tasks involving interpretation or decision support. These include scenarios requiring active interaction between potential research participants and AI chatbots, such as answering project-specific questions or screening individuals for eligibility. Professionals expressed greater skepticism, possibly due to their familiarity with the complexity, nuance, and emotional sensitivity involved in these interactions. In contrast, lay respondents may rely on positive experiences with customer service chatbots and may not fully recognize the risks associated with applying similar technologies in research contexts.

Despite the more pronounced divergence in views, both groups ranked these interpretive use cases as the least suitable. This may reflect a shared perception that such applications are more vulnerable to what the groups identified as the principal challenges of using AI in research communication: unreliability, data protection, and lack of transparency in AI use. Regarding reliability, LLMs are known to produce inaccurate or misleading content and may express unjustified confidence in their outputs (Wachter et al., 2024). These risks are particularly concerning when laypersons interact with AI without direct oversight from professionals and may be further amplified in emotionally sensitive contexts, such as participant recruitment (Vinay et al., 2025).

Data protection was another key concern. Ensuring robust guidelines for the collection and storage of data to protect participant privacy is essential and should be considered a core ethical design principle for these tools (Garcia Valencia et al., 2023).

Transparency also emerged as a central issue. Concerns may include the possibility of research participants anthropomorphizing AI systems, which could lead to emotional manipulation or misplaced trust (Ferrario et al., 2025). Ensuring that research participants clearly understand they are interacting with an AI system, and are aware of its limitations, is vital for ethical implementation.

Empirical evidence on the use of chatbots in clinical research recruitment remains limited. One study on attitudes toward vaccination found that chatbot- and telephone-based recruitment resulted in similar consent rates among participants who responded to a contact attempt (Kim et al., 2021). Another study reported reduced time required for consent and successful knowledge transfer (Smith et al., 2023). However, these evaluations have largely focused on logistical and operational outcomes. As Rothstein (2023) notes, informed consent serves broader purposes, including building trust, affirming autonomy, and preserving the dignity of potential participants. He cautions that the use of chatbots may risk dehumanizing the consent process, particularly in sensitive or high-risk research and when involving vulnerable populations. Rothstein further emphasizes that existing ethical and regulatory frameworks are not yet fully equipped to address these developments.

Concerns about the use of AI in research communication extend beyond chatbot applications. Even for AI-generated informational texts, which were widely accepted by survey respondents, challenges emerged in our study. The primary concern raised by survey participants, namely that AI could produce incorrect or misleading information, was confirmed in the empirical experiment. Interviewee 2 (marketing and communication) noted that this issue is not exclusive to AI, as human-generated texts can also be ambiguous and inaccurate. Although such problems were not observed in our human-authored materials, a recent study by Vaira et al. (2025) found that AI-generated consent documents, particularly those produced by ChatGPT-4, even outperformed human-written versions in terms of accuracy and completeness. However, that study focused on consent for routine surgical procedures rather than clinical trials, used a simple expert-crafted prompt, and did not rely on a source document like a study protocol, as was the case in our experiment.

Regarding AI’s potential impact on trial participation, survey participants hypothesized that AI might have a positive effect in the case of translations, but a negative effect when used for screening or answering participant questions. However, empirical evidence to confirm or refute these assumptions remains limited. To our knowledge, only one relevant study, previously mentioned, compared chatbot- and telephone-based recruitment and found no significant difference in consent rates among those who responded to a contact attempt (Kim et al., 2021).

While most survey participants expected little or no impact from AI-generated informational texts on participation, there was broad agreement that improved understandability could support autonomous decision-making. This perspective was echoed by several interviewees, who viewed AI-generated texts as a means of empowering participants through clearer communication. However, full autonomy may not be achieved. First, the influence of physicians may still play a role even when AI is used, although survey participants expressed mixed opinions on this point. Second, while AI-generated texts may appear easier to read, this does not necessarily translate to better comprehension. Our study did not measure actual understanding, but prior research has shown that plain language and simplified syntax can indeed improve patient comprehension (Feinberg et al., 2024). Nonetheless, caution is needed to avoid oversimplification, which, as some interviewees warned, could risk being manipulative.

Requirements for responsible AI implementation

Taken together, the ethical concerns point to a central role for human expertise in shaping responsible AI use. Unlike traditional technologies, whose outcomes are typically predictable and transparent, AI operates through dynamic and often opaque decision-making mechanisms, weakening accountability and eroding trust in professional judgment (Amann et al., 2020). Consequently, the responsible deployment of AI systems depends on humans’ ability to interpret, apply, and contextualize AI-generated outcomes within real-world scenarios. Human expertise is thus essential, not only for evaluating AI-driven decisions but also for guiding the development and refinement of AI systems to ensure they align with professional standards, ethical principles, and practical realities in the field (Park and Langlotz, 2025).

The view that AI should not fully replace human expertise was consistently expressed by both interview and survey respondents. This perspective may also reflect concerns that overreliance on AI could lead to a gradual decline in communication competence within research teams, a concern shared by approximately half of survey participants and echoed by interviewee 1 (regulatory affairs). Recent studies provide empirical support for this possibility, showing that information and communication technologies can negatively affect various cognitive and affective processes (Duhaylungsod and Chavez, 2023; Kosmyna et al., 2025). From an ethical standpoint, recognizing such cognitive impacts is essential to safeguard personal autonomy. Individuals must be fully informed about the potential cognitive risks associated with the technologies they use (Fasoli et al., 2025).

In line with this emphasis on human oversight, Interviewee 3 (patients’ rights and involvement) further highlighted that patient involvement in developing study materials remains essential. This view reflects the broader recognition of the importance of patient and public involvement throughout all stages of the clinical research process. New recommendations and tools are emerging to gather valuable insights from patient experiences and better identify barriers and facilitators to engagement and compliance (Arumugam et al., 2023).

Ensuring meaningful human oversight, especially from professionals, also presupposes that they possess the competencies required to engage critically with AI systems. As several interviewees emphasized, there is a pressing need for ongoing investment in researchers’ training. AI literacy should be developed through a structured process. As suggested by Ng et al. (2021), this requires the establishment of clear frameworks to guide curriculum development and evaluation. Such frameworks should aim to build competencies across multiple dimensions, including understanding, applying, and evaluating AI, while also fostering ethical awareness. Ultimately, the goal is to empower researchers not just as passive users, but as informed problem-solvers and active contributors to AI-driven innovation.

Study limitations and future directions

This study has some limitations that should be acknowledged. First, certain background characteristics of the survey participants may limit the generalizability of the study findings. The survey was conducted only among the Swiss German-speaking population, and the age distribution differed slightly between laypersons and professionals. Both groups also included a higher proportion of female participants. Additionally, the lay group comprised a relatively large share of individuals with higher education and those working in healthcare. This distribution may in part reflect the survey’s circulation within the author’s private network, which possibly contributed to this bias. Second, no statistical analysis was performed, and differences between groups should be interpreted as indicative trends rather than definitive conclusions. This reflects the exploratory nature of the study, which aimed to identify relevant issues and generate hypotheses for future research. Third, the interviews were conducted with a limited number of participants. However, we included individuals with diverse professional backgrounds and varying levels of experience in clinical research, allowing for in-depth exploration of the topic and ensuring that the findings were well-rounded. Finally, some of the hypothesized effects of AI applications, such as their impact on trial participation, could not be directly assessed within the scope of this study. These effects should be examined in future interventional studies involving research participants. Moreover, the potential of AI to contribute through multimedia applications (e.g. audio, video, interactive formats) warrants exploration, as these modalities may enhance accessibility and participant engagement (Goldschmitt et al., 2025). Nevertheless, our findings offer a valuable foundation by identifying key areas of potential impact and informing the design of follow-up research.

To advance the field, future research should incorporate guidelines and practical strategies for evaluating AI applications in real-world clinical trial settings. Co-design approaches should be prioritized to actively involve patients, trial staff, and other key stakeholders throughout the development process. This ensures that AI applications are not only technically effective, but also ethically sound and aligned with user needs and the operational realities of clinical trials (Kilfoy et al., 2024). Regulatory sandboxes could offer a controlled environment where researchers, developers, and regulators collaborate to test AI tools under flexible, provisional oversight, allowing for innovation while maintaining safeguards to protect research participants (Qiu et al., 2025).

Future work may also explore the potential role of AI in evaluating communication materials used in clinical research. In our interviews, the analyses and comments provided by human participants did not differ substantially from those generated by ChatGPT when assessing both AI-produced and human-authored texts. This convergence suggests that AI could assist in reviewing informational materials intended for research participants. The possibility of AI-supported ethical review has been explored preliminarily by Nickel (2024), who suggests that such tools could promote greater consistency across review processes. However, he also cautions that moral judgment cannot be fully automated and warns that researchers might use these tools strategically to bypass ethical scrutiny, for instance by avoiding language that might otherwise raise red flags.

Conclusion

Our study highlights broad recognition of AI’s potential to improve communication with research participants by addressing persistent challenges such as linguistic complexity, limited time for interaction, and the need for translation. There is particular optimism around use cases already familiar to the public, such as writing assistance and translation, which are seen as ways to enhance equity and accessibility in clinical research. However, our findings also reveal significant concerns, notably regarding data protection and the risk of manipulation. These concerns are especially relevant for AI applications involving direct interaction with participants.

In addition to ethical issues, we believe that the lack of clear regulation and insufficient user training contribute to a continuing gap between AI’s theoretical promise and its practical readiness for responsible use in clinical research. Bridging this gap will require empirical evidence to inform policies and practices. Further research is needed across all use cases, ideally including multimedia applications, and should extend beyond logistical efficiency to examine key factors such as participant comprehension, trust, and autonomy. This will require clear evaluation frameworks, co-design approaches, and the use of regulatory sandboxes to balance innovation with participant protection.

Although not comprehensive, our empirical experiment provides valuable initial insights into the generation of information materials for research participants. While AI demonstrated some advantages over human authors, such as producing clearer, more empathetic, and better-structured texts, human oversight was found to remain essential. This includes not only professional oversight by research teams, but also the meaningful involvement of patients and the public. Our findings suggest that these groups may hold differing perspectives and priorities, both of which must be considered in the design and implementation of AI tools. Maintaining a human presence during recruitment appears important not only for ensuring reliability, but also for preserving personal connection and preventing the dehumanization of research through overreliance on automation and the potential de-skilling of research staff.

While the profile of our survey and interview participants may limit the generalizability of the findings, the perspectives gathered nonetheless provide valuable insights into expectations and concerns surrounding AI in this context.

Supplemental Material

sj-docx-1-rea-10.1177_17470161261419861 – Supplemental material for Between promise and practice: Exploring AI’s role in research participant communication

Supplemental material, sj-docx-1-rea-10.1177_17470161261419861 for Between promise and practice: Exploring AI’s role in research participant communication by Lara Bernasconi, Zeynep Kapaklikaya and Regina Grossmann in Research Ethics

Supplemental Material

sj-docx-2-rea-10.1177_17470161261419861 – Supplemental material for Between promise and practice: Exploring AI’s role in research participant communication

Supplemental material, sj-docx-2-rea-10.1177_17470161261419861 for Between promise and practice: Exploring AI’s role in research participant communication by Lara Bernasconi, Zeynep Kapaklikaya and Regina Grossmann in Research Ethics

Footnotes

Acknowledgements

We sincerely thank the clinical research professionals and laypersons who participated in the survey or contributed valuable feedback during its development. We also appreciate the kind cooperation of the Clinical Trials Center Zurich staff member involved in the survey validation. Our gratitude extends to Dr med Annette Widmann (regulatory affairs specialist), Susanne Franke (marketing and communication expert), Manuela Grüttner (patient partner advocating patients’ rights and involvement), Interviewee 4 (ethics committee member), Samira Yeganeh Shirazi (data protection officer), and Mateja Tiric (pharmacist) for participating in the interviews and providing their personal perspective on the topic. We acknowledge the use of ChatGPT-5 to enhance the manuscript text and correct sentences. No content was generated entirely by the chatbot. Prompts included requests for improvements, reformulations, and simplifications.

Ethical considerations

This study did not use any personal data from survey respondents. The study does not fall within the scope of the Swiss Human Research Act and hence did not require ethics approval.

Consent to participate

All interviewees provided informed consent.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The raw survey data and interview coding tree are available upon request. All materials are in German.

Supplemental material

Supplemental material for this article is available online.

References
