Abstract

The growing integration of Artificial Intelligence (AI) in marketing has introduced both opportunities and challenges, particularly concerning consumer trust. This paper critically examines two emerging phenomena: AI Washing, where companies exaggerate AI capabilities for marketing advantage, and AI Booing, a public backlash fueled by unmet expectations, ethical concerns, and transparency issues. By analyzing the interplay between these opposing forces, we explore the cyclical nature of AI mistrust and its implications for responsible AI adoption in marketing. Through a review of existing literature and industry examples, this study identifies key ethical, operational, and regulatory challenges in AI-driven marketing strategies. Our findings call attention to the need for transparency, human agency, stakeholder collaboration, and ethical data management to foster responsible AI practices that align with consumer trust and regulatory expectations. We conclude with recommendations for marketing professionals and policymakers to mitigate the cycle of AI mistrust and establish more credible AI integrations in marketing.

Keywords

Introduction

Artificial Intelligence (AI) is often heralded as a game-changer for virtually every industry. It is supposed to make everything it touches smarter, faster, and more efficient, yet when we look at how AI is used in business, there is often a disconnect between the hype and what is actually happening on the ground. Mentions of AI are now practically expected in any pitch or project as a signal of a forward-thinking mindset, yet a noticeable gap remains between marketers who tout AI capabilities and those who manage to deliver measurable AI-enhanced outcomes. Marketing has traditionally been quicker to embrace new technologies than to take a cautious approach of observing, researching, and tentatively testing the waters. There is no harm in marketers seeking efficient tools and shortcuts that could prove advantageous to their businesses. In fact, eschewing innovative technologies merely because they push marketers out of their comfort zone could be short-sighted.

As AI becomes more integrated into market research, it introduces concerning trends such as AI Booing, which stems from overblown promises, and AI Washing, which uses misleading claims to mask ethical issues. Chintalapati and Pandey (2022) highlight that the application of AI in marketing can often be marred by inflated expectations that lead to consumer skepticism, especially when AI’s capabilities are exaggerated without sufficient empirical support. Similarly, Wirth (2018) cautions against this hype-driven approach, emphasizing that AI’s potential in marketing must be grounded in practical, validated applications to foster sustainable consumer trust. Furthermore, the International Journal of Market Research (2018) emphasizes the value of ethical transparency, calling for a more rigorous assessment of AI applications to bridge the gap between perceived and actual outcomes, thereby preventing the erosion of trust in AI.

This paper aims to bridge the gap between the anticipated promises of AI in marketing and its actual performance, with a particular focus on the cyclical relationship between AI Washing and AI Booing. Despite AI’s transformative potential for enhancing consumer insights and optimizing strategies, significant disconnects persist between industry rhetoric and functional outcomes. This misalignment has given rise to AI Washing, in which companies overstate AI capabilities and thereby set the stage for disillusionment. That disillusionment in turn fuels AI Booing, a backlash against AI driven by issues such as embedded biases and privacy concerns. This cycle of overstatement and backlash presents critical challenges for responsible AI adoption.

Consequently, this study addresses the central research question: How do the promises and practical applications of AI in marketing align, and what ethical, operational, and regulatory considerations must be addressed to ensure responsible and transparent AI integration? By investigating this question, we aim to provide insights that will support marketers, policymakers, and other stakeholders in fostering ethical, reliable, and responsible AI practices within marketing. Conceptualizing AI Washing and AI Booing—and the cycle of mistrust between the two—requires examining their broader ramifications for various stakeholders. Through this analysis, we point out the urgent need for transparency, human agency, authentic ethical practices, and robust regulatory frameworks to ensure that the deployment of AI in the marketplace aligns with responsible and equitable standards, safeguarding the interests of all stakeholders involved.

This paper is a conceptual contribution aimed at clarifying emerging terminology and theorizing the cyclical dynamics of public trust in AI marketing. By integrating insights from trust theory, ethics, and technological legitimacy, it introduces two new constructs, namely AI Washing and AI Booing, and proposes a theoretical framework to explain their interplay. Building on this foundation, the study highlights both academic and managerial implications. Conceptually, it extends understanding of how cycles of exaggerated claims and public backlash shape debates on technological legitimacy, institutional signaling, and responsible innovation in AI-driven marketing. From a managerial perspective, it underscores that hype-driven or symbolic approaches to AI carry reputational, legal, and consumer trust risks. Responsible AI adoption therefore requires moving beyond compliance toward transparency, human agency, and stakeholder collaboration as strategic imperatives for sustaining trust and competitive advantage.

The following sections address these challenges and opportunities in detail. First, we set the scene by introducing the background and definition of key terms, then examine marketing in the era of AI, outlining how AI-driven transformations are reshaping marketing strategies, consumer engagement, and brand-consumer interactions. Next, we analyze AI Booing, exploring the social and technological factors driving public backlash against AI in marketing. We then discuss AI Washing, assessing how overstated claims about AI’s capabilities influence consumer perceptions and hinder ethical progress. Following this, we examine the impact on trust, highlighting how AI-related transparency, fairness, and accountability concerns shape consumer confidence in AI-driven marketing initiatives. This discussion leads to an exploration of the Cycle of AI Mistrust, which illustrates the recursive relationship between consumer skepticism, corporate AI misrepresentation, and the regulatory landscape. Finally, we propose a framework for responsible AI adoption, centered on ethical data management, human agency, stakeholder collaboration, and transparency. In conclusion, we discuss the need for a balanced approach that integrates AI innovation with accountability to sustain consumer trust. We also outline key research questions across these core themes to guide future studies and ensure the responsible and equitable deployment of AI in marketing.

Background

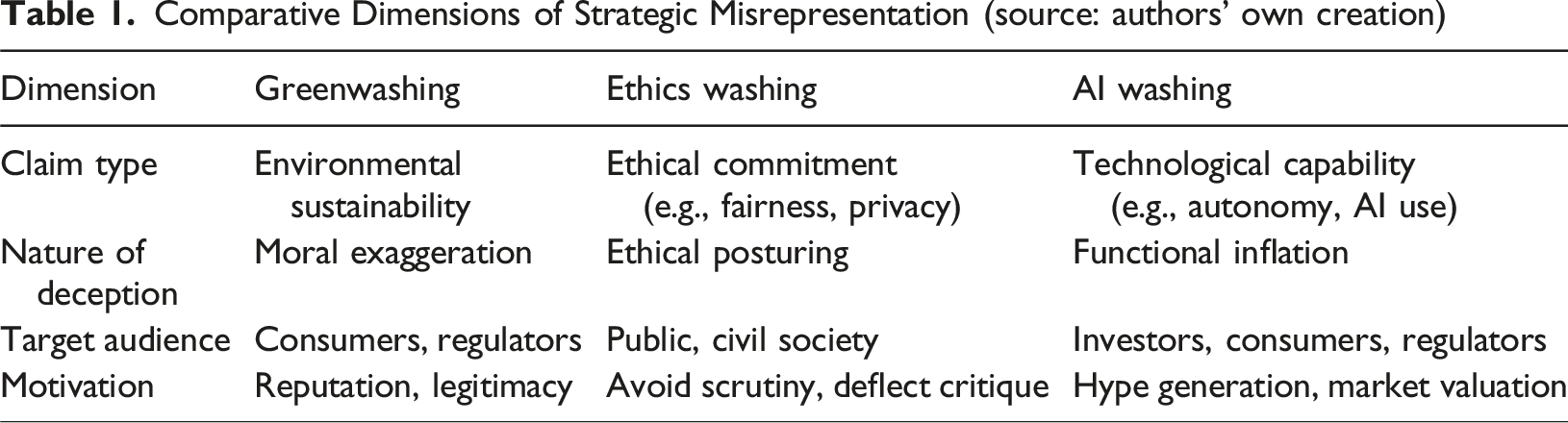

Comparative Dimensions of Strategic Misrepresentation (source: authors’ own creation)

In contrast to AI Washing, AI Booing captures the rising public backlash against perceived failures, overreach, or ethical lapses in AI applications. This concept is grounded in scholarship on the contestation of technological authority (Jasanoff, 2003) and the erosion of trust in digital technologies (Eslami et al., 2015; Mittelstadt, 2019). Backlash emerges when AI hype fails to align with real-world experiences or when opaque systems compromise user autonomy, fairness, or data privacy. Gawer and Phillips (2013) and Ferraro et al. (2015) demonstrate how field-level institutional contradictions and contested logics can catalyze public resistance to dominant technological narratives.

Definition of Key Terms

In this regard, our definitions of AI Washing and AI Booing are as follows: AI Washing is the deliberate or negligent exaggeration of a system’s artificial intelligence capabilities, typically by presenting rules-based or pre-programmed functionalities as autonomous, adaptive, or ethically governed systems. AI Booing denotes public disapproval or backlash against AI technologies, often triggered by incidents of bias, opacity, surveillance concerns, or perceived ethical breaches.

Together, these terms help articulate a recursive cycle of mistrust, wherein exaggerated claims (AI Washing) invite scrutiny, disillusionment, and critique (AI Booing), which in turn prompt firms to double down on symbolic assurances, thus perpetuating the cycle. By synthesizing insights from trust theory, technology ethics, and institutional theory, this paper clarifies these emerging constructs and lays the conceptual foundation for a broader theoretical model of responsible AI in marketing.

Marketing in the Era of AI

A significant issue is the ambiguous use of the term “AI” in marketing, where it often lacks a clear definition, leading to uncertainty and underperformance across marketing applications. AI has been defined broadly over the years. In an early formulation, McCarthy et al. (2006) described it as the science and engineering of creating intelligent machines. Subsequent definitions expanded this concept: Nilsson (1998), Legg and Hutter (2007), and Kaplan and Haenlein (2019) characterize AI as systems that process data, learn from experience, and adapt to achieve goals. Russell and Norvig (2010) view AI in terms of agents that perceive their environments and act to maximize success in their objectives, while others, such as Crevier (1993) and Poole et al. (1988), emphasize its role in automated problem-solving and intelligent agent interactions.

More contemporary views, such as those of Donath (2020) and Grewal (2014), define AI as technology that simulates intelligent behavior in areas such as language and pattern recognition, and that manages and disseminates global knowledge to provide actionable intelligence. De Bruyn et al. (2020) stress the importance of defining AI specifically as machine intelligence with established boundaries to mitigate problems in marketing; such a definition confines AI to a manageable scope and clarifies its capabilities and goals. In this manuscript, we adopt Liwicki’s (2024) broad definition, in which AI is an agent that perceives its environment, processes information through reasoning and/or learning, and acts, physically or virtually, to influence that environment intelligently.

By automating complex managerial tasks such as lead generation and customer segmentation, which traditionally depended on human expertise, AI increases efficiency and accuracy in strategic decision-making (Paschen et al., 2020). By analyzing both structured data, such as demographics and sales figures, and unstructured data, such as social media comments and customer reviews, businesses can gain faster and more accurate insights, even with unsupervised AI (Davenport et al., 2020). These capabilities not only provide real-time insights into customer behavior and market trends but also offer a competitive edge by enabling quicker and more informed decision-making (Leone et al., 2021), while their accuracy and efficiency help ensure ethical and reliable market research insights (Krugmann & Hartmann, 2024). Additionally, AI tools enable firms to analyze competitor campaign performance and identify customer expectations, both key to boosting engagement and campaign success, much faster than before (Haleem et al., 2022).

AI’s influence in marketing spans six distinct clusters (psychosocial dynamics, market strategies, consumer services, decision-making, value transformation, and ethical marketing), underscoring the potential for future research to develop a comprehensive model or theory that accurately reflects AI adoption in marketing (Labib, 2024). Notable examples include Amazon Prime Air, which aims to revolutionize delivery speeds; Stitch Fix, which personalizes shopping experiences; and RedBalloon, which uses the Albert platform to optimize marketing efforts and improve conversion rates (Kumar et al., 2024). Salesforce’s Einstein AI platform further enhances market research by analyzing customer data and interactions to refine marketing strategies (Salesforce, 2024).

The rise of personalized online marketing and its ability to target consumer vulnerabilities is deepening the power imbalance between businesses and consumers, raising concerns about consumer autonomy and privacy. Hyper-targeting with data can enhance engagement and interaction, but it also raises concerns about the exploitation of consumer weaknesses (Duivenvoorde, 2023). The vast data requirements for effective AI operation necessitate stringent privacy measures; marketers must balance the advantages of personalized marketing with the need for transparency and consumer privacy protections (Helsloot et al., 2018; Wang et al., 2018). Moreover, the opacity of AI algorithms calls for improved transparency and accountability to prevent ethical and regulatory issues, reinforcing the need for collaboration among various stakeholders to guide responsible AI integration (Campbell et al., 2022; Larsson & Heintz, 2020).

Responsible AI in marketing refers to the ethical and fair use of AI technologies to enhance marketing strategies while ensuring transparency, accountability, and fairness (Benjelloun & Kabak, 2024; Hermann, 2022; Potwora et al., 2024). This approach aims to balance the benefits of AI, such as improved customer targeting and operational efficiency, with the need to address ethical concerns and societal impacts. In line with this, there are calls for comprehensive reforms in European Union marketing laws to protect consumers against sophisticated digital-age strategies (Duivenvoorde, 2023). It is crucial that these regulations are carefully crafted to avoid stifling innovation.

Some responsible AI implementations offer powerful enhancements that are capable of addressing real-world problems and generating valuable market insights. For example, O2’s AI Granny, Daisy, not only combats phone scams by engaging fraudsters and exposing their tactics but also serves as a form of market research, analyzing scam patterns to enhance consumer protection and reinforce brand identity. Insights from over 1,000 scam interactions revealed common fraud strategies—high-pressure tactics, impersonation of trusted companies, and aggressive responses—highlighting AI’s role in fraud prevention and the critical value of real-time consumer data in refining scam detection, improving AI-driven caller ID, and strengthening fraud-blocking services (Virgin Media O2, 2025).

The AI Booing and AI Washing Cycle of Mistrust

AI Booing

AI technology is shaped by both social forces and technological developments (Pinch & Bijker, 1984). Its success and acceptance depend on alignment with societal norms and expectations, shaped by the collective input of various stakeholders, including consumers, marketers, policymakers, and community leaders. From a marketing perspective, understanding these dynamics is critical, as the adoption and diffusion of AI innovations are influenced by how well they meet market needs and user preferences (Rogers, 1962). When AI applications are rushed without considering social and market contexts, disruptions can hinder consumer trust and brand equity. Therefore, marketers play a crucial role in ensuring that AI aligns with consumer values to foster smoother adoption and sustained engagement.

AI Booing refers to the backlash against AI, driven primarily by concerns about embedded biases in models and data, discrimination, and data privacy practices that do not comply with an organization’s norms and values (Kumar & Suthar, 2024; Singh & Mishra, 2024). The characteristics of AI-generated content, such as its realism, liveliness, creativity, and composition, significantly shape how consumers feel about it by influencing their perceptions of eeriness and intelligence (Gu et al., 2024). Moreover, overhyped promises about AI’s capabilities, typically made without adequately acknowledging its limitations, may create unrealistic expectations that cannot be fulfilled. This disconnect underscores the critical need to recalibrate expectations around AI toward a more informed and realistic understanding of its capabilities and limitations, particularly in market research, where the promise of revolutionary insights frequently falls short of reality (Peukert & Kloker, 2020; Steinhoff, 2024).

The effectiveness of AI systems in marketing research heavily depends on data quality. Algorithmic bias, which can be influenced by variables such as gender, race, religion, age, nationality, or socioeconomic status, can lead to unfair customer management decisions and represents a significant challenge in AI development and deployment (Akter et al., 2021; Bansal et al., 2023). Addressing these biases requires robust data management, modeling, and deployment systems to ensure diversity, fairness, and equity (Akter et al., 2023). The principle “garbage in, garbage out” highlights that poor data quality—be it inaccurate, incomplete, or biased—leads to unreliable and potentially harmful outputs that compromise predictive models and personalized marketing strategies (Kilkenny & Robinson, 2018; Sáez-Ortuño et al., 2023). In healthcare, for instance, algorithms that prioritize organ donation recipients might unfairly assess younger patients based on biased assumptions about age and survival probabilities, which could skew the fairness of medical decisions (Financial Times, 2023).

Similarly, AI-powered crime prediction systems have been criticized for reinforcing systemic biases, as seen in UK police forces’ use of predictive crime technology, which has been reported to “supercharge racism” by disproportionately targeting marginalized communities (Amnesty International UK, 2024). Certain types of AI, such as large language models (LLMs), display covert racism through dialect prejudice against speakers of African American English, often assigning them to less prestigious jobs and harsher legal outcomes, while current bias mitigation strategies tend merely to conceal deeper levels of racism (Hofmann et al., 2024). Such biases also affect marketing communications, where AI-driven decisions can perpetuate existing disparities, necessitating stringent algorithmic hygiene and data model integrity to build unbiased and reliable AI systems (Siddique et al., 2024; Vasilopoulou et al., 2023).

Biases in AI systems stem from datasets shaped by both conscious and unconscious human prejudices, impeding the fairness and objectivity of AI technologies (Akter et al., 2021). These biases manifest in various forms, such as design, contextual, and application biases (Akter et al., 2022). For example, an AI-driven newsletter might showcase diverse content, from discounts to new product launches, but if its training draws disproportionately on data from specific groups and is further affected by contextual limitations and user interaction errors, biases become embedded in the system. The resulting content could unfairly target or mislead recipients and fail to meet the needs of the broader user base. Addressing these issues requires transparent and explainable AI systems that ensure fairness and thereby enhance consumer trust (Kaushal & Mishra, 2024; Kumar & Suthar, 2024; Sharma & Sharma, 2023).

Algorithmic bias in marketing models also stems from unrepresentative data, flawed models, and historical biases, affecting how customer value and experience are delivered and managed. For example, Google announced it would suspend the image generation capabilities of its Gemini system following criticism over misleading racial depictions in historical contexts, acknowledging the urgent need for accuracy in such representations (The Economist, 2024). Twitter (now X) faced internal investigation and public scrutiny when its image-cropping algorithm displayed a preference for lighter-skinned individuals in photo previews (Johnson, 2021). Other cases, such as racial bias in Optum’s health algorithms, gender-biased ad targeting on Facebook, Orbitz offering premium services to Mac users, and Uber or Lyft charging higher fees for rides to African-American neighborhoods, illustrate the broad ethical challenges AI presents in business management (Angwin et al., 2016; Pandey & Çalışkan, 2020).

AI’s role as a gatekeeper can raise operational costs and contribute to the dehumanization of interactions, potentially undermining consumer trust in and acceptance of AI solutions (Keegan et al., 2023; Lopez & Garza, 2023). Research indicates that biased AI recommendations, such as those from chatbots or content generators, may continue to influence people’s decisions beyond their direct interactions with the system, which could affect marketing management professionals if left unchecked (Vicente & Matute, 2023). To counteract this, marketers should implement both a priori and post-hoc bias mitigation strategies, including scientific, stakeholder, and ethical model validation before and after deployment (Akter et al., 2021). Investing in bias detection tools is also vital for sustaining ethical and transparent AI applications in marketing (Kumar & Suthar, 2024).

A striking example is the toeslagenaffaire scandal in the Netherlands, where an algorithm unfairly targeted families for childcare benefit fraud, pointing to the urgent need for transparency and accountability in algorithmic decision-making to prevent significant social and financial harm from biased government systems (Akter et al., 2023; Loohuis, 2022). In Sweden, an investigation by Svenska Dagbladet and Lighthouse Reports uncovered a similar issue within the social insurance agency, Försäkringskassan, where an AI system disproportionately flagged marginalized groups, such as women, immigrants, and low-income individuals, for welfare fraud investigations (Amnesty International, 2024). The algorithm assigned risk scores that automatically subjected individuals to enhanced scrutiny, often assuming criminal intent from the outset. Amnesty International (2024) found that the opaque nature of the algorithm led to systemic discrimination, reinforcing socio-economic disparities and raising concerns about AI-driven decision-making in public services. These cases exemplify how AI systems, when left unchecked, can exacerbate pre-existing inequalities and erode public trust. Similarly, marketers must prioritize transparency in AI practices to build consumer trust and comply with legal and organizational standards (Kumar & Suthar, 2024; Potwora et al., 2024).

Von Bertalanffy’s (1968) concepts of differentiation and equifinality help explain the AI Booing phenomenon in marketing. Differentiation describes how brands position themselves uniquely using AI and social media, adapting to technological and cultural shifts; this can provoke public backlash when such innovations fail to meet consumer expectations (Deryl et al., 2023; Hoffman et al., 2021). Inflated expectations lead to a loss of trust when AI fails to deliver on its promises, a failure that brings to the surface concerns including bias, discrimination, and privacy violations (Kumar & Suthar, 2024; Singh & Mishra, 2024; Thamik & Wu, 2022). Equifinality suggests that, despite different starting conditions, marketing strategies often provoke similar negative responses, emphasizing the systemic risks inherent in marketing and heightening consumer anxieties (Beck, 1992).

Systems theory’s concept of output suggests that AI-related marketing issues are part of broader cultural and systemic patterns rather than isolated events, reflecting the complex interplay of societal interactions (Kuhn, 1970). This highlights the need for diverse management strategies that accommodate different perspectives and effectively manage the integration of AI into marketing research. Brands are encouraged to integrate their values and identity into their strategy to enhance resilience and adapt to the complexities of consumer environments (Dibrell & Memili, 2019; García-Álvarez & López-Sintas, 2001). In this light, a holistic approach that goes beyond technical solutions is essential for aligning AI use in marketing with consumer and societal values.

AI Washing

Greenwashing, a term recognized beyond academia, describes companies misleadingly promoting their products as environmentally friendly, using buzzwords to imply greater sustainability than exists (Seberíni et al., 2024). Similarly, AI Washing involves firms overstating their AI technologies’ capabilities, falsely advertising them as more advanced or ethically sound to attract consumers and appease regulators, while obscuring transparency and functionality issues (Steinhoff, 2024). Products and services might be labeled as “intelligent” without genuine self-learning capabilities, providing vague definitions that downplay human involvement. These practices, prioritizing profit over true ethical progress, risk fostering consumer mistrust and stakeholder dissatisfaction by presenting a facade of ethical responsibility.

Self-learning from experiences, executing autonomous operations, and making unsupervised decisions are often cited as hallmarks of true AI capabilities (Buttazzo, 2023; Kumpulainen & Terziyan, 2022; Markelius et al., 2024). However, many consumer products marketed as “AI-powered” merely feature internet connectivity and basic software-driven automation, with little to no genuine self-learning or decision-making capabilities. Items such as smart fridges, electric kettles, robotic vacuums, and heating controls are often labeled as artificially intelligent despite functioning primarily through pre-programmed responses and human-managed app controls. This misleading characterization is reinforced by socio-technical dynamics, including anthropomorphism—where AI is ascribed human-like traits—and marketing narratives that exaggerate AI’s capabilities in response to competitive pressures and rapid technological advancements (Markelius et al., 2024).

In the commercial sphere, firms increasingly claim that their platforms fully automate complex tasks such as video production and market research. However, these systems frequently continue to rely on significant human oversight to maintain quality, interpret nuanced contexts, and ensure accurate outcomes. This disconnect between marketed capabilities and actual AI functionality contributes to AI Washing, fostering inflated consumer expectations while eroding trust in AI-driven innovations. By masking the extent of human involvement, companies obscure the genuine limitations of their technologies, ultimately prioritizing branding over meaningful advancements.

AI Washing, or the superficial enhancement of products to capitalize on AI hype without real technological advancement, is akin to adding “go-faster” stripes to a car without improving its engine, exploiting AI excitement without meaningful innovation (Vakkuri et al., 2022). This practice stifles genuine AI breakthroughs, erodes consumer trust, inflates expectations, and sets unrealistic goals for investors who struggle to identify valuable projects. For instance, Coca-Cola faced criticism for claiming that AI co-created a new drink, Y3000, without clearly explaining AI’s actual involvement, making the campaign seem more innovative than it truly was (Steinberg, 2023). In finance, two firms were charged by the U.S. Securities and Exchange Commission (2024) for misleadingly stating that their investment strategies were AI-driven, further exemplifying AI Washing’s risks. In insurance, findings from Sprout.ai revealed a rise in AI-altered fraudulent claims, while some insurers have overstated their reliance on AI to counter this trend, downplaying the necessity of human oversight (Fox, 2024). Additionally, the proliferation of AI ethics guidelines has drawn criticism as “ethics washing,” where high-minded principles lack enforcement and practical application, diverting attention from AI’s potential systemic harms (Munn, 2023). This pseudo-ethical positioning can lead to biased AI decisions and to false promises of “AI for good” initiatives that may, in reality, conflict with ethical standards, for instance when companies sell surveillance technology to questionable buyers.

AI Washing in marketing is often identified by several warning signs. First, claims about AI’s transformative impact frequently lack substantiated evidence or detailed case studies, relying on vague promises rather than specific, data-backed examples (Jarek & Mazurek, 2019; Koubaa El Euch & Ben Said, 2024; Potwora et al., 2024). This tendency extends to exaggerated portrayals of AI’s superiority over traditional tools, often without clear or proven functionality. When technical specifics are missing, such as explanations of the machine learning or natural language processing involved, the audience is left with an inflated impression of AI’s capabilities (Potwora et al., 2024; Sáez-Ortuño et al., 2023; Zejjari & Benhayoun, 2024). Additionally, ethical and privacy considerations are often overlooked, disregarding the need for a balance between leveraging AI’s benefits and ensuring its responsible deployment (Vlačić et al., 2021). Moreover, AI often appears only superficially integrated into operations: it is cited without genuine functionality or alignment with business practices, signaling an inflated role in marketing strategies (Jarek & Mazurek, 2019). This phenomenon is compounded by limited academic rigor, as the absence of comprehensive analyses undermines the validation of AI’s impact (Koubaa El Euch & Ben Said, 2024).

A current example is sustainability messaging in AI, where claims about AI’s role in sustainable marketing are contradicted by the technology’s considerable resource demands, such as water and energy use (Acuti et al., 2022). Here, simple and clear sustainability communication is essential, as complex messaging combined with cognitive biases and emotions like guilt can diminish consumer engagement (Antonetti & Maklan, 2014; Lima et al., 2019). Commodifying sustainability as an ethical feature without substantial backing reduces its potential for impactful change, illustrating the importance of transparent, evidence-based marketing practices (Tam, 2019). Responsible AI embodies three core principles—lawfulness, ethics, and robustness—throughout its lifecycle, guided by seven requirements: human oversight, safety, data privacy, transparency, fairness, societal well-being, and accountability (Díaz-Rodríguez et al., 2023). Practical implementation of these standards can be enhanced through audits and regulatory sandboxes, aligning AI with societal values and fostering a transparent environment. AI’s impact on consumers’ sense of agency (SoA) is a critical factor; understanding this influence can enhance consumer attitudes and engagement, although current research on AI’s adaptability to human agency remains limited (Legaspi et al., 2024).

AI tools such as Salesforce’s Einstein AI are crucial for converting theoretical insights into practical marketing outcomes, enabling real-time adaptation to market changes, customer interaction analysis, and campaign personalization. However, platforms such as Amazon Mechanical Turk (MTurk), often used to train AI systems, including Salesforce’s, expose the gap between AI’s promise of fairness and ethical practice. MTurk workers face precarious conditions characterized by low compensation, job insecurity, a lack of social protections, and income volatility; some describe the platform as a “low-wage hell” (Semuels, 2018; Socher, 2018). These conditions encourage satisficing behaviors, in which workers complete tasks with minimal effort to maximize earnings, leading to response bias, inconsistent response styles, lower engagement, and unreliable data (Aguinis et al., 2021; Gonzalez-Cabello et al., 2024; Semuels, 2018; Stephens, 2022). The platform also presents methodological concerns that threaten data validity, including self-misrepresentation (falsifying demographics to qualify), self-selection bias (participation driven by financial incentives rather than representative sampling), social desirability bias (adjusting responses for acceptance), and perceived researcher unfairness (grievances over compensation, lack of communication, and study accessibility) (Aguinis et al., 2021). Furthermore, bot-generated responses pose an increasing risk, complicating the verification of authentic human participation (Agley et al., 2022; Kennedy et al., 2020; Webb & Tangney, 2022). Disregarding human factors such as the fatigue, motivation, and cognitive biases of MTurk workers ultimately risks compromising data integrity (Crawford, 2021). These conditions challenge the ethical claims of companies offering AI solutions and contribute to AI Washing, where overstated promises of AI capabilities mask the socio-technical limitations and ethical pitfalls of its implementation (Crawford, 2021; Gonzalez-Cabello et al., 2024).

The deceptive nature of AI Washing can allow companies to evade full compliance with consumer protection laws and data privacy regulations, undermining the effectiveness of regulatory oversight and making it harder for regulators to ensure that AI technologies are used responsibly and ethically (Peukert & Kloker, 2020; Vakkuri et al., 2022). To address issues such as AI bias and opacity, it is crucial to treat AI not merely as a tool but as a teleological extension of human agency, actively aligning its development and application with human intentions and ethical responsibilities (Noller, 2024). Building a participatory marketing culture can support accountability by involving consumers in shaping brand values, transforming the traditional consumer-brand relationship into one of shared values and transparency (Pilon & Brouard, 2023). Incorporating key ethical principles such as autonomy, the right to explanation, and value alignment into AI systems helps ensure that AI operations are not only technically proficient but also ethically responsible and in harmony with human values (Bertoncini & Serafim, 2023).

Both AI Booing, driven by disillusionment when AI fails to meet exaggerated expectations, and AI Washing, which erodes trust through misleading claims about AI capabilities and ethical practices, compromise data integrity and transparency and create regulatory compliance challenges. Companies must therefore emphasize transparency, use bias detection tools, and adhere to comprehensive guidelines that ensure data integrity and foster consumer trust. Regulatory frameworks developed by bodies such as the European Commission are crucial for enforcing accountability in AI practices, but they require robust, ongoing development: they must be updated dynamically to keep pace with the rapidly expanding field of AI while being carefully designed to avoid stifling innovation. By adhering to these standards, marketing researchers and businesses can use AI responsibly, building consumer trust and complying with evolving regulations (Díaz-Rodríguez et al., 2023; Kumar & Suthar, 2024; Méndez-Suárez et al., 2023; Singh & Mishra, 2024).

Impact on Trust

Trust, as defined by Mayer et al. (1995), involves a willingness to be vulnerable based on perceptions of competence, integrity, and benevolence. It is a dynamic psychological construct shaped by values, attitudes, and past experiences (Beldad et al., 2010; Jones & George, 1998).

In marketing, trust is critical to whether consumers accept AI systems as meeting their expectations, because it facilitates behaviors such as sharing sensitive information and engaging in collaborative relationships, both of which are crucial for long-term consumer loyalty. However, trust and mistrust are not simply opposites on a linear continuum. Lewicki et al. (1998) emphasize that individuals may simultaneously trust an entity’s abilities and remain skeptical of its intentions, leading to partial trust or careful distrust, a distinction that is particularly relevant for responsible AI integration in marketing.

From an adoption perspective, the extent to which AI marketing tools fulfill their promises directly affects trust formation. Partial trust manifests when consumers are willing to engage with AI-powered services but demand proof of their reliability, fairness, and ethical responsibility (Yang & Wibowo, 2022). Conversely, repeated negative experiences, such as privacy violations or misleading claims, fuel distrust and lead to consumer disengagement (Choudhury & Elkefi, 2022; Khosravi et al., 2022). This phenomenon underscores the risk of marketers overpromising AI capabilities without delivering transparency, a key challenge in responsible AI integration.

To mitigate distrust, marketers must focus on calibrated trust, an optimal balance that prevents both over-trust (blind reliance on AI) and under-trust (excessive skepticism) (Ismatullaev & Kim, 2024). Failing to maintain this balance raises ethical concerns, as AI systems may be perceived either as deceptive or as incapable of delivering unbiased outcomes. Achieving this balance requires transparent communication, explainability, and impartiality in AI practices (Duenser & Douglas, 2023; Gerlich, 2024).

Additionally, corporate misconduct, such as concealing AI failures or marketing AI deceptively by misleading consumers about its capabilities, risks exacerbating trust erosion. Research by Davies and Olmedo-Cifuentes (2016) shows that actions such as “bending the law” and “not telling the truth” have severe consequences for consumer trust, whereas unfair or irresponsible actions, though damaging, are perceived as less severe. Individual characteristics also shape how trust is affected, with some consumers exhibiting high trust and high distrust simultaneously (Verhoest et al., 2024).

Context is crucial in trust formation. Lewis and Weigert (1985) differentiate between system trust (faith in institutional structures) and human-like trust (based on qualities such as familiarity and competence). In AI-driven marketing, trust erodes quickly when human-like qualities are overhyped and later found lacking, complicating the technology’s acceptance (Montag et al., 2024). Other industries show a similar pattern: healthcare and autonomous vehicles, for instance, have faced significant trust challenges in which non-transparency and perceived risks deter adoption (Choudhury & Elkefi, 2022; Detjen et al., 2021). Transparency, stakeholder collaboration, and regulatory oversight are pivotal in fostering responsible AI practices that align with societal expectations (Moorman et al., 1993).

To summarize, trust serves as both a barrier and an enabler of AI adoption in marketing. AI’s practical applications must align with its promises to prevent trust erosion, as gaps between expectations and real-world performance fuel consumer skepticism. Ethical risks, such as bias, deception, and lack of transparency, must be proactively mitigated to foster responsible AI engagement. Operationalizing trust requires calibrated transparency, governance mechanisms, and ethical AI practices that ensure fairness and accountability. Additionally, regulatory oversight plays a crucial role in enforcing compliance standards that protect against algorithmic discrimination and misuse. Ultimately, trust is far from an abstract concept; it is the dynamic currency of responsible AI in marketing, one that can be gained or lost in an instant and whose careful stewardship is essential for aligning technological promise with societal expectations.

The Cycle of AI Mistrust

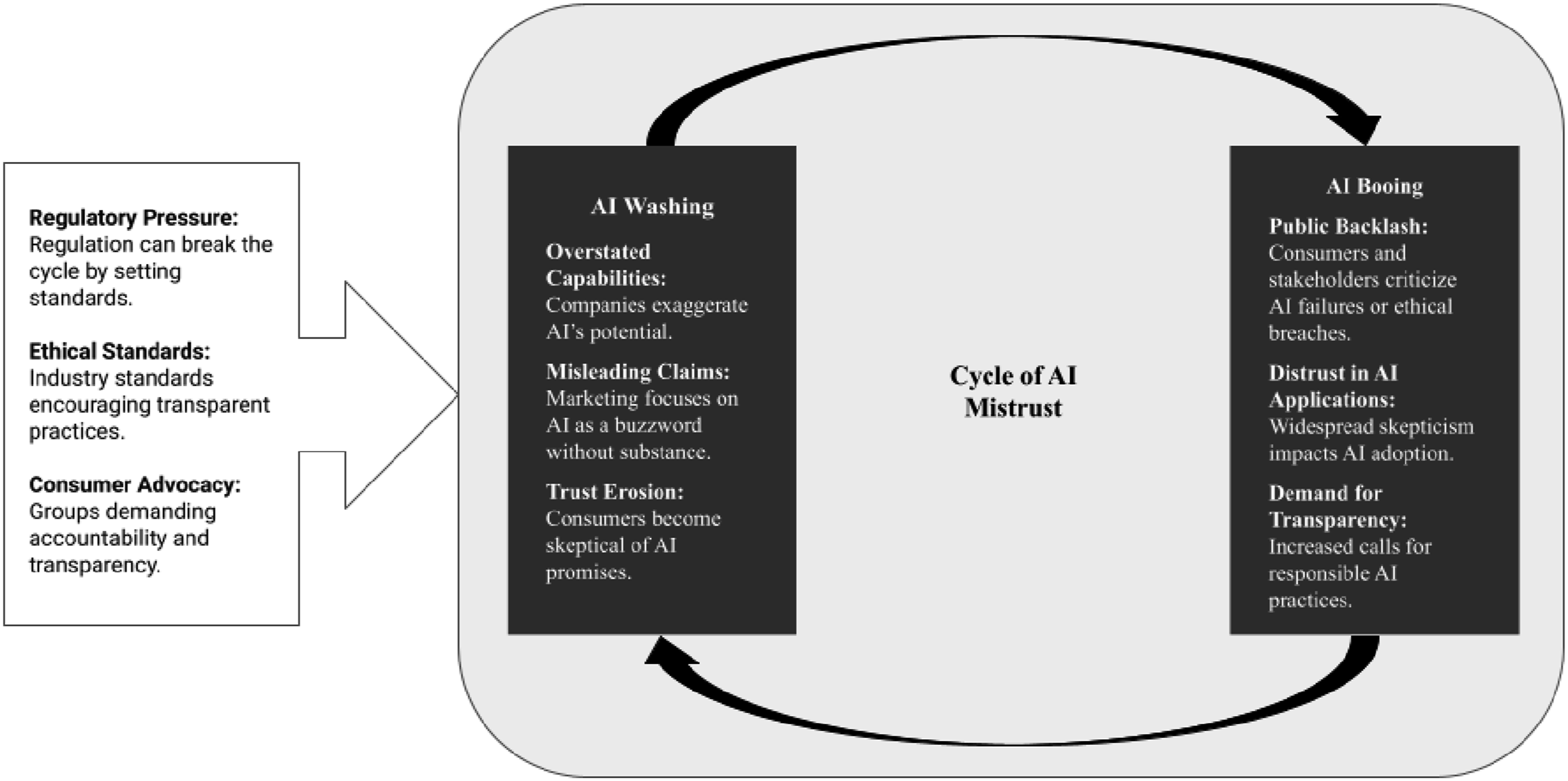

Dynamics Between AI Booing and AI Washing (Source: Authors’ Own Creation)

AI-driven marketing faces challenges not only in adoption but also in maintaining public trust. Figure 1 illustrates the cyclical nature of AI mistrust, driven by both over-promising (AI Washing) and backlash against perceived failures (AI Booing). AI Washing occurs when companies exaggerate the potential of AI technologies, leading to unrealistic expectations. This marketing approach often results in misleading claims and erodes consumer trust over time. Consumers who feel misled may lose faith in both the technology and the brand. AI Booing, on the other hand, refers to the public backlash that occurs when AI fails to meet expectations or when ethical issues arise. Such incidents trigger widespread skepticism and increased scrutiny, prompting stronger demands for transparency in AI-driven marketing practices.

As shown in Figure 1, regulatory pressure, ethical standards, and consumer advocacy are crucial to breaking the cycle of mistrust. These forces can encourage companies to adopt more responsible AI marketing strategies. By recognizing the factors that contribute to AI mistrust, marketers can develop more effective strategies to build and maintain consumer trust. Transparency and stakeholder collaboration are essential for overcoming both AI Washing and AI Booing.

AI Washing (hype) frequently leads to AI Booing (backlash), forming a boom-and-bust cycle in which initial enthusiasm for AI deteriorates into mistrust. As Abrahamson (1991) notes, influential actors such as consultancies and business media often drive hype without fully considering practical viability. This process inflates expectations, which, when unmet, create disappointment and erode trust in both the technology and associated brands.

The hype cycle is further fueled by bandwagon effects, as firms rush to adopt AI-driven marketing solutions to remain competitive. However, growing disillusionment can trigger a counter-trend of abandonment, reinforcing skepticism toward subsequent innovations. To mitigate this cycle, marketers must adopt transparent, responsible strategies that manage expectations and clearly communicate AI’s capabilities and limitations.

Rather than following a linear trajectory, the process of AI integration in marketing may be shaped by conflicting forces, such as optimism about efficiency gains versus concerns over ethical implications, which create cycles of overadoption followed by rejection (Van de Ven & Poole, 1995). These opposing perspectives generate tensions that can disrupt continuity, unless firms recalibrate their strategies by prioritizing transparency and stakeholder engagement to restore trust and stabilize innovation.

Implications for Ethical Practices

The expanding role of AI in marketing introduces both opportunities and ethical dilemmas, which are underscored by two key challenges: the knowledge gap and the implementation gap (Herhausen et al., 2020). The knowledge gap refers to the shortfall between the awareness of AI-driven marketing technologies and the deep expertise required to deploy them responsibly. This gap necessitates continuous learning to navigate the ethical and strategic complexities of AI-powered marketing. Meanwhile, the implementation gap highlights the disconnect between AI’s theoretical potential and its real-world application, where ethical risks—such as algorithmic bias, lack of transparency, and consumer manipulation—pose significant barriers to responsible deployment. These challenges emphasize that AI integration in marketing must go beyond technological efficiency and prioritize ethical, accountable, and consumer-centric practices.

Transparency, Oversight, and Human Agency

Questions of transparency and human oversight become especially salient given the opacity of AI systems. One key issue is the black box problem, in which AI’s complex decision-making processes lack transparency (Pasquale, 2015). This opacity poses risks for marketing decisions, as unexplained AI-generated recommendations can undermine consumer trust and ethical accountability. Additionally, users may over-rely on AI-generated insights, disregarding contextual factors or their own expertise (Klingbeil et al., 2024). Addressing these risks requires a balanced trust framework that enhances AI literacy and promotes human oversight in marketing operations.

Educated human agency plays a crucial role in aligning AI actions with ethical standards and societal welfare (Virvou, 2022). By maintaining transparency and collaboration between AI and human intelligence, organizations can mitigate risks, enhance accountability, and foster a symbiotic relationship where AI supports rather than substitutes human ingenuity in marketing strategy. This approach strengthens both technological innovation and consumer trust, ensuring responsible AI integration in marketing research and practice.

Commodification, Data Loops, and Bias Amplification

The concept of alienation in the Frankfurt School’s critical theory (the estrangement of individuals from their authentic selves through commodification and instrumental control) closely aligns with the dynamics of AI-driven marketing and communication (Celikates & Flynn, 2023; Marcuse, 1964). In this context, alienation emerges as individuals and their interactions are increasingly abstracted into data points, stripped of agency, and reduced to computational profiles that algorithms analyze and exploit for predictive targeting. This process reduces the rich, qualitative aspects of human communication to numbers or patterns, obscuring authentic human experience and rendering it manipulable for commercial gain. For example, Levi’s faced backlash for collaborating with Lalaland.ai to use AI-generated models in online campaigns aimed at promoting diversity. The company responded that the move was not meant to fulfill its diversity, equity, and inclusion goals, emphasizing the importance of real action and stating that AI models would not replace human models but would serve as a tool to enhance the customer experience (Levi Strauss & Co., 2023). This case illustrates how technological progress in marketing can clash with social justice and equality goals: technology is not merely a tool for supporting these values but can also become a barrier to them.

Organizations are increasingly deploying AI across various functions, including content generation, personalized marketing, consumer behavior analysis, predictive analytics, customer support automation, dynamic pricing, and social media monitoring. This integration can create a cyclical process in which over-reliance on automated systems reduces content quality and degrades the user experience because of insufficient human oversight. For instance, online stores often use AI to refine and automate manipulative dark patterns in their interfaces, such as presenting confusing choices, requiring unnecessary account creation, and using countdown timers to create undue urgency (Gao et al., 2023). These tactics, shown to influence consumer behavior across demographic groups (Zac et al., 2023), exemplify how AI can negatively affect consumer trust and decision-making. Meanwhile, the data generated from marketing activities is continually fed back into the organization’s AI systems, which reanalyze this information to further refine and personalize marketing strategies. This recursive loop not only boosts the efficiency and personalization of marketing efforts but also substantially expands the data repository.

However, this process can amplify existing biases if the initial data or algorithms are flawed, introduce data contamination that impairs decision-making, and produce overfitted models that struggle to adapt to new trends. For example, systems that rely on biased training data risk perpetuating societal biases by producing content that reinforces gender stereotypes or underrepresents diverse groups (Shrestha & Das, 2022). With extensive data collection and unchecked systems, organizations risk creating bloated data repositories that, while voluminous, hinder rather than help decision-making by clouding it with low-quality or irrelevant information. Innovation carries inherent risks; without careful management and ongoing adaptation, it can lead to long-term problems (Inthavong et al., 2023). To address these challenges, organizations should conduct thorough bias audits, maintain strict data hygiene, develop adaptable AI models, and enforce robust privacy protections at every stage of data handling. These measures help ensure that AI integration into marketing not only enhances business processes but also adheres to ethical standards and maintains consumer trust.

Stakeholders, Sustainability, and Ethical Futures

More fundamentally, the risks outlined above compel reflection on collective responsibility, the governance of stakeholder relationships, and the long-term sustainability of AI in marketing practice. The negotiation between consumers and marketers over data usage and personalized advertising can be conceptualized as a strategic interaction over a shared resource. While such practices enhance engagement and personalization, they also risk triggering a Tragedy of the Commons, in which inadequate regulatory oversight permits the overexploitation of consumer data and the erosion of trust (Hardin, 1968). Individual actors pursuing short-term advantage may collectively undermine the long-term value of the data ecosystem, compromising its integrity for all stakeholders. Environmental resources raise a parallel concern: the significant energy and resource footprints of digital marketing, particularly efforts that rely on AI and cloud computing, call for comprehensive mitigation strategies. This requires an industry-wide commitment to sustainable marketing practices that minimize environmental impact while optimizing data-driven marketing strategies (Ferrara, 2024; Li et al., 2023). Such practices not only support environmental goals but also bolster brand integrity and consumer loyalty in an increasingly eco-conscious marketplace.

From a systems perspective, organizations adapt to imbalances by reorganizing, a concept essential for managing stakeholder interests within corporate governance mechanisms. Balancing stakeholder concerns can help transform issues such as AI Washing and AI Booing into opportunities for ethical engagement and continuous improvement, positioning brands as leaders in addressing digital challenges (Pies & Valentinov, 2024). Collaborations with secondary stakeholders (e.g., media, government, and NGOs) often drive ecological innovation, whereas partnerships with primary stakeholders enhance product and process development, fostering trust and adaptability (Özdemir et al., 2023).

Understanding stakeholder perceptions is crucial for managing expectations and mitigating risks associated with AI in market research. To enhance the effectiveness of stakeholder theory in this domain, it is necessary to clarify stakeholder definitions, boundaries, and membership criteria; systematically organize information to manage conflicting interests; and ensure theoretical alignment (Bridoux & Stoelhorst, 2022; Chatterji et al., 2016; Wagner Mainardes et al., 2011). Full transparency in organizations is unattainable, but organizations can develop a clearer understanding of which information to share or use, remaining truthful without misleading stakeholders (Von Bertalanffy, 1968). This selective transparency is especially critical for ethical marketing and crisis management, underscoring the value of managing AI biases and safeguarding stakeholder trust in settings where AI is used (Valentinov et al., 2019).

AI, in its design, does more than simulate human thought—it increasingly aims to surpass it. This trajectory points to a future where AI could begin to shape, rather than just support, human decision-making. If AI can evolve to not only predict but actively influence preferences based on vast data repositories, then marketing risks edging into the dystopian territories we aim to avoid in ethical practices. This possibility calls for a radical rethink of marketing ethics and strategy within an AI-driven landscape. To balance this power, responsible AI becomes essential—setting non-negotiable ethical standards that protect human agency and dignity. The ultimate challenge for marketers and theorists, then, is not just the ethical integration of AI but guiding its development in ways that enhance rather than undermine humanity. This shift requires a new philosophical foundation in marketing: to see AI not solely as a commercial tool but as a collaborator in creating a more empathetic and inclusive marketplace.

Conclusion

Our analysis reveals that while AI has the potential to completely reinvent marketing by enhancing personalization and consumer insights, a significant disconnect remains between the capabilities touted by marketers and the outcomes achieved in practice. This disconnect has given rise to AI Washing, where overstated claims undermine consumer trust, and AI Booing, a public backlash driven by concerns over data privacy and bias. Together, these phenomena form the Cycle of AI Mistrust.

This study explores the transformative role of AI in marketing, emphasizing its advanced data analysis and predictive capabilities (Chintalapati & Pandey, 2022). However, the rise of the Cycle of AI Mistrust reveals a critical tension between AI’s potential and its actual implementation. Wirth (2018) warns that inflated expectations risk undermining trust unless grounded in practical applications. Similarly, the International Journal of Market Research (2018) highlights the importance of empirical validation to foster transparency and ethical governance. Addressing these challenges, this study proposes a comprehensive framework to guide responsible AI adoption, with the aim of enhancing both trust and methodological integrity in marketing.

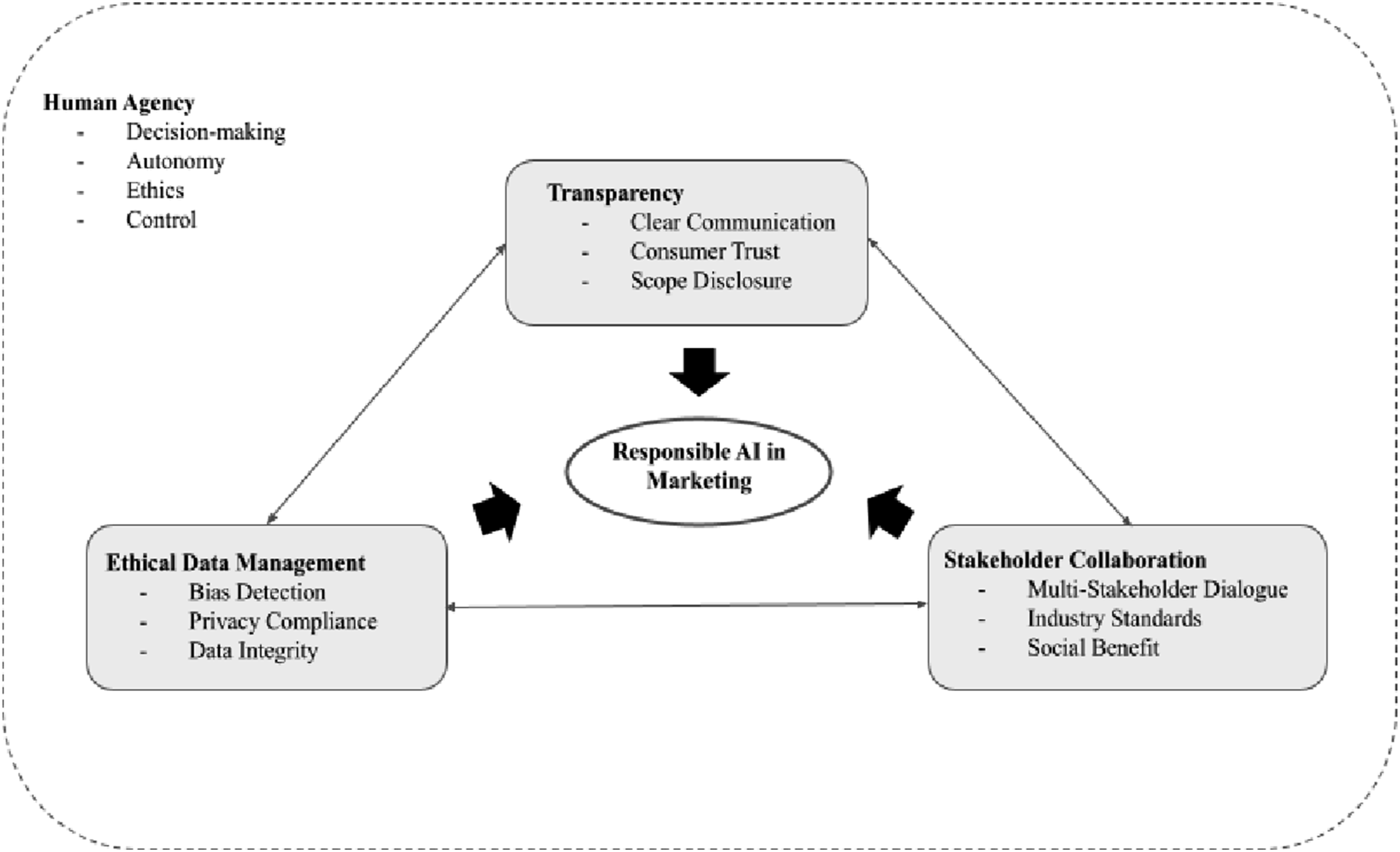

Framework for Responsible AI in Marketing

We illustrate our proposed approach for responsible AI integration in marketing in Figure 2. At the core of this framework is the principle of Responsible AI in Marketing, supported by three foundational pillars: Transparency, Ethical Data Management, and Stakeholder Collaboration. Each of these pillars plays a critical role in addressing the ethical and operational challenges identified in our analysis. We emphasize that a responsible approach to AI in marketing necessitates transparency and clear communication regarding AI’s actual capabilities and limitations. Transparency includes elements such as clear communication, consumer trust, and scope disclosure, which are essential to avoiding exaggerated claims and fostering trust. Ethical Data Management—another key pillar in the framework—requires practices like bias detection, privacy compliance, and data integrity to ensure that AI systems operate fairly and reliably. Conceptual Framework for Responsible AI Integration in Marketing (Source: Authors’ Own Creation)

Finally, Stakeholder Collaboration highlights the importance of multi-stakeholder dialogue, industry standards, and social benefit, underscoring the need for cooperation among marketers, policymakers, and technology developers to establish balanced, ethical standards. Human agency is crucial in AI, driving innovation and enhancing system interpretability by allowing AI to interact dynamically with, and adapt to, human inputs. This not only enriches AI’s learning processes with human judgment but also turns AI into an active participant in creative problem-solving that tackles real-world complexities. Integrating human agency helps maintain control over technology and aligns AI developments with complex human values, establishing a new paradigm in the human-machine relationship that emphasizes ethical decision-making and autonomy. Establishing feedback loops, as outlined in our framework and represented by the surrounding dashed lines in Figure 2, is essential for keeping the human roles within AI systems well-informed and adaptable. Such an approach enables real-time adjustments in AI operations to uphold ethical standards and human-centric practices. Our conceptual framework advocates aligning AI use with responsible standards in marketing to foster a sustainable, consumer-centric digital environment in which AI’s benefits are realized transparently and equitably for all stakeholders.

Toward a Sustainable and Trustworthy AI Future

This paper contributes to the academic literature on trust in AI and responsible AI by introducing and theorizing two interconnected concepts: AI Washing and AI Booing. While existing discussions emphasize principles such as fairness, transparency, and accountability, our framework shifts the analytical focus to the communicative and marketing dynamics that shape how AI is publicly represented and perceived. We define AI Washing as the strategic exaggeration of AI’s technical capabilities, which can mislead stakeholders and distort expectations. In response, AI Booing captures the ensuing backlash or resistance that arises when such inflated claims fail to materialize or clash with ethical concerns. In conceptualizing these dynamics, the paper advances ongoing debates by mapping how inflated claims and public reactions form a cycle of mistrust in AI. By integrating the three pillars of the proposed framework (Transparency, Ethical Data Management, and Stakeholder Collaboration), marketers can not only maximize AI’s potential but also address the ethical concerns that threaten to erode consumer trust.

Limitations and Future Research

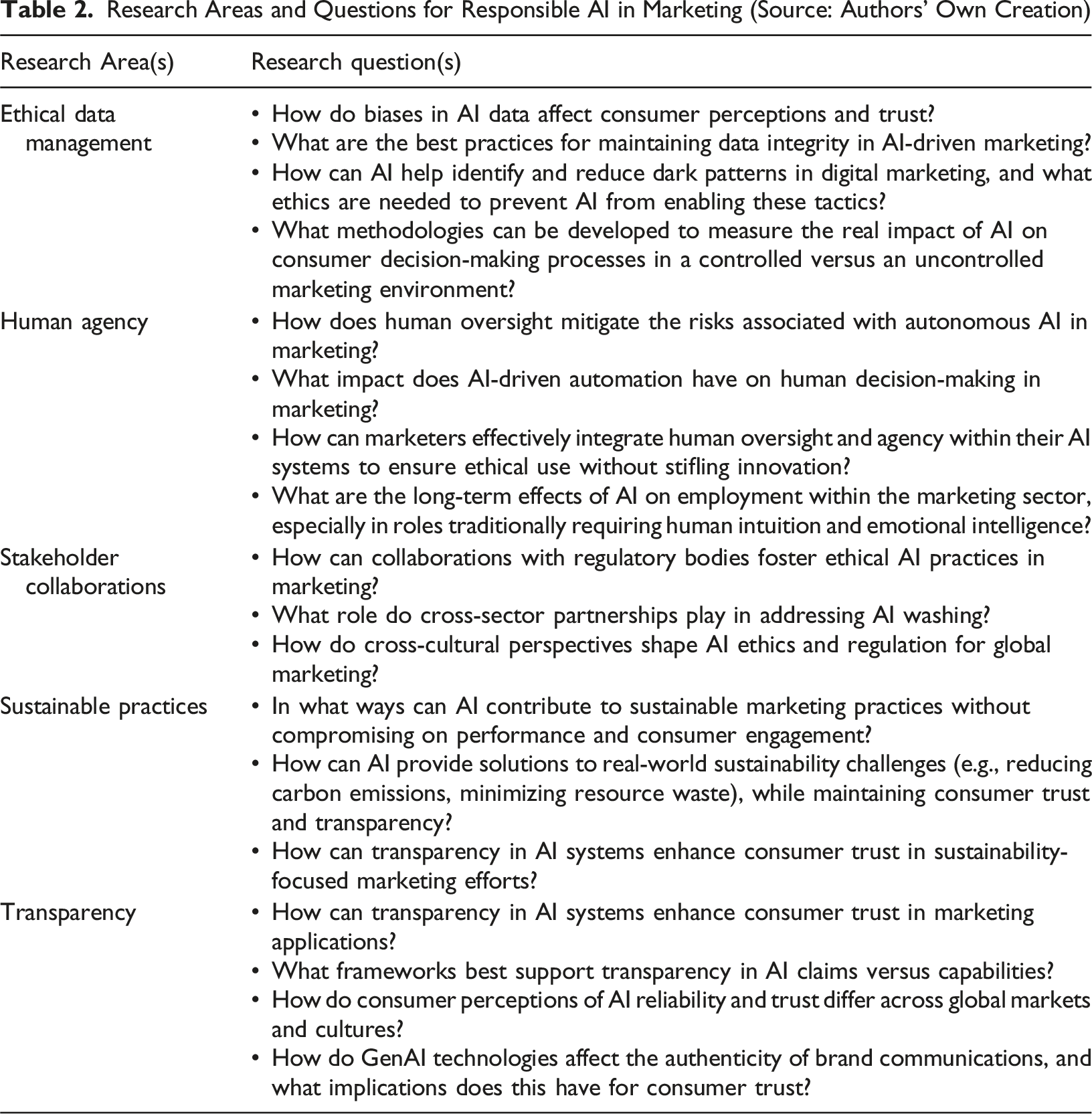

Research Areas and Questions for Responsible AI in Marketing (Source: Authors’ Own Creation)

Second, the study emphasizes broad ethical concerns—such as transparency, consumer trust, and data privacy—without exploring the nuanced impacts of AI on different demographic groups. AI’s effects may vary significantly across consumer segments, particularly in terms of vulnerability to algorithmic biases and privacy risks. Future research should examine these variations through segmented analyses, providing more tailored recommendations for marketers to safeguard diverse consumer interests.

Third, this study highlights the roles of human agency, ethical data management, transparency, and stakeholder collaboration in breaking the cycle of AI mistrust. While this broad perspective captures the interconnected nature of responsible AI, it limits the depth with which each component, and the cyclical relationship among ethical, operational, and regulatory factors, can be examined. Future research could adopt a more focused approach, particularly regarding bias detection, privacy protection, and transparency. Bias detection is critical to preventing algorithmic discrimination, which undermines trust and decision-making, especially in the absence of ethical data governance (Ashok et al., 2022; Yang et al., 2019). Privacy protection frameworks must address security risks in high-volume environments where personal data remains vulnerable (Ashok et al., 2022; Yang et al., 2019). Transparency plays a key role in sustaining consumer trust, particularly in ensuring the ethical use of AI-driven data (Ashok et al., 2022; Yallop et al., 2023). Since trust erosion is closely tied to AI-related concerns, research should also examine phenomena such as AI Booing and AI Washing, which contribute to growing consumer skepticism. Conceptual and empirical studies on these issues could provide marketers and policymakers with strategies for ethical AI adoption (Abraham et al., 2019; Yallop et al., 2023).

Fourth, given the rapid evolution of AI technology, this study’s findings may require continual reassessment. As new AI applications emerge in marketing, future research should investigate the implications of technologies such as GenAI, deep learning, and advanced sentiment analysis on consumer engagement and ethical practices. Longitudinal studies would be especially valuable for tracking how AI capabilities and consumer attitudes shift over time, helping to maintain relevant ethical standards.

Lastly, the study primarily addresses Western perspectives on AI ethics and regulation. Cross-cultural research could uncover diverse viewpoints on AI practices, influenced by different cultural norms, regulatory environments, and consumer expectations. Including perspectives from a range of regions would deepen our understanding of AI’s global impact on marketing ethics.

To address these limitations and further refine our understanding, we also suggest the following avenues for future research:

The first direction of future research could assess whether these conceptual insights can be validated empirically. Future studies should empirically investigate the ethical and operational challenges of AI in marketing across different industries and contexts. Case studies or field experiments could validate and refine the conceptual findings presented here, offering more context-specific guidance for AI implementation in marketing.

The second direction of future research could focus on the demographic impact of AI in marketing. Further research is needed to explore how AI-driven marketing practices affect various demographic groups, with a particular focus on algorithmic biases that may impact vulnerable populations. By examining AI’s influence on different consumer segments, researchers can identify specific risks and develop ethical guidelines tailored to diverse audiences.

The third direction of future research could employ longitudinal studies on evolving AI technology. To keep pace with rapid AI advancements, future research should adopt a longitudinal approach, tracking consumer attitudes, regulatory changes, and technological developments over time. This will help reveal long-term trends and emerging ethical issues, ensuring that AI standards remain responsive to ongoing changes.

Lastly, the fourth direction of future research could adopt cross-cultural perspectives on AI ethics and regulation. Developing a globally relevant framework for ethical AI use requires examining practices across diverse cultural and regulatory landscapes. Comparative studies between Western and non-Western perspectives could highlight unique challenges and insights, enriching our understanding of AI’s ethical impact on marketing worldwide.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.