Abstract

This study addresses the current lack of empirical data on the experiences and attitudes of clinical research professionals towards AI-powered clinical trial management tools. Clinical research professionals affiliated with various Swiss and international clinical research networks were invited to participate in an online survey. The survey focused on nine use cases of AI-powered clinical trial management tools. Participants were asked to share their ethical considerations, and their experiences were assessed at both the individual and institutional levels. Answers from 110 participants, primarily from Swiss academic institutions, were included in the data analysis. While participants generally held positive attitudes, mixed views were also common. Governmental attitudes were widely perceived as unclear or cautious, and the associated regulatory opacity may have contributed to the limited adoption of AI in trial management observed in this study. In addition to clear AI governance, ethical compliance and training emerged as crucial aspects. There was also broad consensus on the need to inform patients about the use of such tools and to ensure their independent certification. The widespread adoption of AI-powered clinical trial management tools remains distant, entangled in a web of complexities that require further empirical investigation. Ethical concerns appear to play a crucial role, driven by distrust and the absence of a clear regulatory framework. Urgent action is needed at both institutional and governmental levels, including the establishment of a governance framework and the provision of training.

Introduction

Over the last 30 years, scientific research has made remarkable progress; however, clinical trials remain complex, require large investments (Subbiah, 2023), and are prone to biases that can jeopardize both their successful execution and the interpretation of their results (Krauss, 2018). For future clinical trials to succeed, a fundamental transformation in their management is essential. This shift may be driven by emerging technologies and by all stakeholders continuously challenging existing models (Subbiah, 2023). Various artificial intelligence (AI) approaches have the potential to streamline clinical trial management (CTM) by enhancing trial design and conduct, improving surveillance tasks, and optimizing data analysis. Specifically, AI-powered CTM tools could improve methodological decision-making, evaluate trial feasibility, and facilitate document creation. Additionally, they could aid in patient recruitment, enhance quality and safety monitoring, and provide real-time assessment of trial progress and outcomes (AI Joint Task Force from the European CRO Federation and the eClinical Forum, 2023; Weissler et al., 2021). These AI solutions may either be specifically designed for CTM or leverage general-purpose models with broader applications, such as ChatGPT.

While AI holds significant promise across various aspects of CTM, few studies have quantitatively assessed its impact on trial planning and execution. To date, the most documented applications have been in patient recruitment (Cascini et al., 2022). Realizing AI’s potential to enhance the quality and efficiency of clinical research requires addressing both operational and ethical challenges. Key concerns include the origin of data, bias, and the transparency of development and validation processes. These issues are often interrelated; for example, inadequate training data and poor model calibration can introduce biases, and the resulting flawed models increase the risk of propagating errors across research contexts (Weissler et al., 2021). Additional challenges may include the increased complexity of informed consent processes, ensuring that AI tools are user-friendly for clinical researchers, and building sufficient AI expertise within regulatory agencies and ethics committees to effectively support evaluations (Askin et al., 2023).

While numerous guidelines on AI ethics exist worldwide (Jobin et al., 2019), they are not specific to clinical research and are challenging to implement (Goirand et al., 2021). Tools intended to support implementation are often deemed user-unfriendly, and their uptake is further hampered by organizational barriers and a lack of evaluation metrics (Morley et al., 2020). In clinical research, the International Council for Harmonisation (ICH) Good Clinical Practice Guideline E6 (ICH, 1997) sets international ethical and scientific standards. The guideline is currently undergoing major revision. The new version (R3) will comprise overarching principles and two annexes, with Annex 2 focusing on innovative trial designs and the impact of technology on clinical trials (ICH, 2021). The delayed release of Annex 2 for public consultation highlights the complexity of these subjects. At the national level, laws are struggling to keep pace with technological advancements (McKee and Wouters, 2023). In Switzerland, recent revisions to the ordinances of the Human Research Act (Federal Office of Public Health, 2011) still leave uncertainty about the requirements for clinical research involving emerging technologies such as AI. As in other countries, start-ups offering AI solutions for CTM are emerging in Switzerland. These companies tend to focus on partnerships within the industry and, as noted by Weissler et al. (2021), evidence supporting a model’s performance is often limited to promotional material, with little transparency regarding development and validation processes. In the academic context, little information is currently available on the use of AI-powered CTM tools within the Swiss clinical research environment.

It is not just the functionality of AI systems but also how they are perceived that fundamentally shapes AI governance (Cath, 2018). To fully leverage AI’s potential in supporting CTM, it is therefore crucial to engage all stakeholders in discussions about the advantages and limitations of these tools. In this study, we focused on one key group of stakeholders: clinical research professionals. Currently, there is a lack of empirical data on both their experiences with and their attitudes towards AI-powered CTM tools. This survey study aims to fill that gap, providing insights from those who are expected to interact directly with these technologies.

Methods

Participants

The reporting of this study conforms to the Checklist for Reporting of Survey Studies (CROSS; Sharma et al., 2021). The cross-sectional study did not require informed consent or ethics approval. An online survey was distributed via email through various Swiss and international clinical research networks. The survey was also published on the website of the Swiss Clinical Trials Organization. Clinical research professionals involved in the planning, conduct, and oversight of clinical trials were invited to complete the survey anonymously, with the option to provide their email addresses for further discussion on the topic. Multiple reminders were sent to mitigate non-response error.

Survey

The survey was designed collaboratively by the authors. The secure web application REDCap version 14.0.20 was used to collect the data between February and March 2024. The survey, comprising five sections, began with a definition of AI. The initial questions gathered information on the participants’ background and their level of engagement with AI and AI ethics. Subsequent questions addressed respondents’ experience with and attitudes towards AI in general and its application in CTM in particular. The following use cases of AI-powered CTM tools were covered:

(a) Trial design: AI identifies emerging trends and research gaps by analysing data from sources like patient forums (identification of relevant research questions); based on past trials and medical literature, AI optimizes trial designs by analysing study protocols and identifying potential pitfalls (trial design optimization); AI assesses trial feasibility by evaluating resources, target populations, and logistical constraints (assessment of resources and feasibility).

(b) Trial conduct: AI drafts study documents such as protocols and informed consent forms (writing of study documents); AI analyses medical records to identify individuals who meet the specific trial criteria (participants’ screening); instead of recruiting real patients for a control group, AI generates simulated patients using real-world data (creation of synthetic control arms).

(c) Surveillance tasks: AI monitors patient compliance with trial protocols, such as medication adherence and completion of self-reported assessments (monitoring of patients); AI continuously tracks patient data to detect anomalies and ensure safety (quality control and safety monitoring).

(d) Data analysis: AI assesses trial progress and outcomes in real time, enabling adjustments to parameters such as dosage and treatment arms (optimization of adaptive study designs).

The level of experience was captured at both the individual and institutional levels. To assess individual experience, composing trial documents using AI was selected as a widely accessible use case. One question also explored participants’ subjective perceptions of governmental attitudes towards AI applications in healthcare.

The questions were formulated and the use cases selected based on literature relevant to the use of AI in CTM (AI Joint Task Force from the European CRO Federation and the eClinical Forum, 2023; Askin et al., 2023; Cascini et al., 2022; Weissler et al., 2021) as well as to AI ethics in healthcare (Hashiguchi et al., 2022; McKee and Wouters, 2023; WHO, 2021; Zhang and Zhang, 2023). For validation, five independent clinical research professionals reviewed and provided feedback on the survey structure and content. Additionally, an experienced data manager conducted a technical validation.

Analysis

Only responses from participants who completed at least the first three survey sections were included in the analysis. Although the survey was distributed internationally, the uneven distribution of participants across geographical areas did not allow meaningful regional comparisons. Responses to each question were therefore pooled across all participants and compiled in Excel, with results presented as percentages.
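The inclusion rule and the percentage calculation can be illustrated with a short Python sketch. It is a hypothetical reconstruction, not the authors’ workflow (the analysis was done in Excel); the record layout and the field names `sections_completed` and `attitude` are assumptions made for illustration. For multiple-answer questions, the denominator is the number of included respondents rather than the number of selections, which is why percentages across options can add up to more than 100%.

```python
from collections import Counter


def included(records, min_sections=3):
    """Keep respondents who completed at least `min_sections` survey sections.

    `sections_completed` is a hypothetical field name used for illustration.
    """
    return [r for r in records if r["sections_completed"] >= min_sections]


def percent_selecting(records, question):
    """Percentage of respondents (not of selections) choosing each option.

    Each respondent counts at most once per option, so for multiple-answer
    questions the percentages may sum to more than 100.
    """
    n = len(records)
    counts = Counter(
        option for r in records for option in set(r.get(question, []))
    )
    return {option: round(100 * c / n, 1) for option, c in counts.items()}


# Toy data, invented for illustration (not survey results).
records = [
    {"sections_completed": 5, "attitude": ["curiosity", "enthusiasm"]},
    {"sections_completed": 3, "attitude": ["curiosity"]},
    {"sections_completed": 2, "attitude": ["fear"]},  # excluded: fewer than 3 sections
    {"sections_completed": 4, "attitude": ["curiosity", "cautiousness"]},
    {"sections_completed": 5, "attitude": []},
]
kept = included(records)
pct = percent_selecting(kept, "attitude")
print(pct["curiosity"])  # 75.0
```

Counting options per respondent through a set guards against a duplicated selection inflating a percentage.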

Five-point Likert scales were used for certain questions. For graphical representation, the two responses at each end of the scale were pooled, yielding three categories. For ranking purposes, numerical values were assigned to the answers and averages were calculated.
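A minimal sketch of the pooling and ranking step follows. It assumes the coding reported for the patient-information statements (disagree = −1, neutral = 0, agree = 1) and uses hypothetical English response labels; as in that figure, ‘I don’t know’ answers are skipped rather than scored. The other scales in the survey may have used different labels or values.

```python
# Hypothetical labels for a five-point agreement scale. The two responses at
# each end are pooled, so the scale collapses to disagree / neutral / agree.
POOLED_SCORE = {
    "strongly disagree": -1,
    "disagree": -1,
    "neutral": 0,
    "agree": 1,
    "strongly agree": 1,
}


def mean_score(responses):
    """Average pooled score; unmapped answers such as "I don't know" are skipped."""
    scores = [POOLED_SCORE[r] for r in responses if r in POOLED_SCORE]
    return sum(scores) / len(scores) if scores else None


def rank_items(responses_by_item):
    """Sort items from highest to lowest average score.

    Items without any scorable answer are dropped from the ranking.
    """
    means = {item: mean_score(rs) for item, rs in responses_by_item.items()}
    scored = [(item, m) for item, m in means.items() if m is not None]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy data, invented for illustration (not survey results).
example = {
    "statement A": ["agree", "strongly agree", "neutral", "disagree"],
    "statement B": ["disagree", "strongly disagree", "I don't know"],
    "statement C": ["I don't know"],
}
print(rank_items(example))  # [('statement A', 0.25), ('statement B', -1.0)]
```

Because pooling happens before averaging, ‘agree’ and ‘strongly agree’ contribute equally; this mirrors the three-category presentation rather than a full five-point mean.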

Results

Participants’ background

A total of 181 clinical research professionals viewed the survey, and 120 provided responses. Ten dropped out before completing the third section and were excluded from the analysis. Of the remaining 110 responses, the majority (82%) originated from Switzerland, followed by Asia (9%), other European countries (8%), and Australia (1%). Most participants (90%) reported working for academic institutions, while a minority indicated employment by companies (6%) or governmental institutions (4%).

Regarding familiarity with AI, 70% of participants indicated minimal or no engagement with the topic, while 30% reported moderate to extensive engagement. Furthermore, 81% stated they had not engaged with the topic of AI ethics, while 19% declared moderate to extensive engagement.

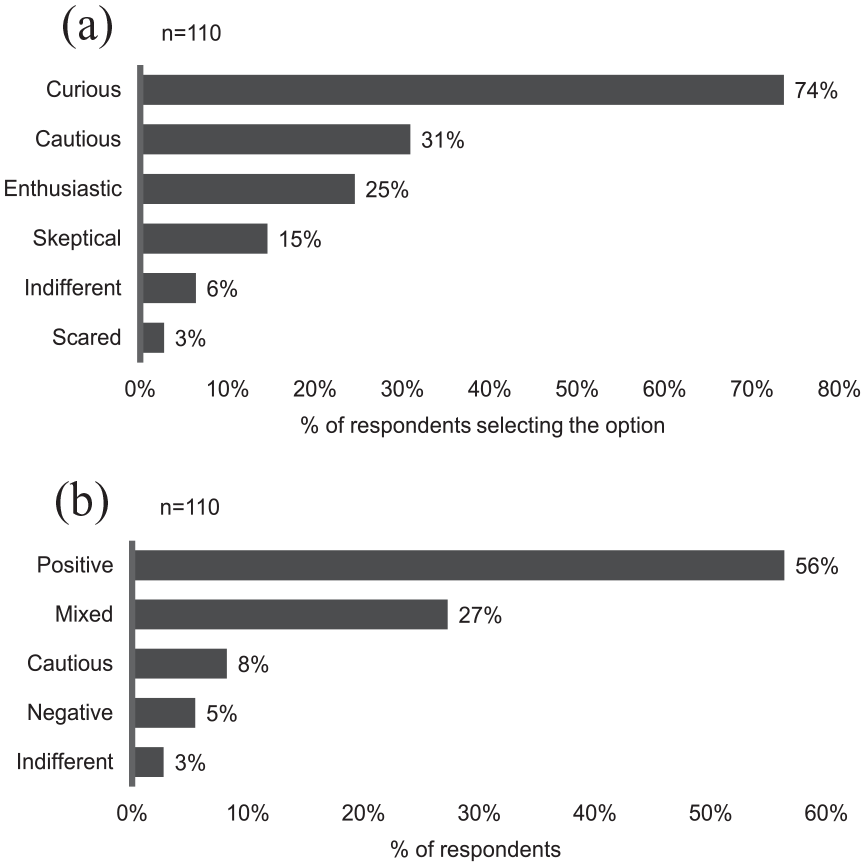

Attitudes

To express their attitudes towards AI-powered CTM tools, participants were asked to select one or more sentiments (Figure 1). ‘Curiosity’ emerged as the prevailing sentiment among the 110 respondents, selected by 74% (Figure 1a), followed by ‘cautiousness’ (31%) and ‘enthusiasm’ (25%). Negative or neutral feelings were less frequent, with ‘scepticism’ chosen by 15%, ‘indifference’ by 6%, and ‘fear’ by only 3% of respondents. When the combination of selections from each participant was analysed (Figure 1b), 56% of respondents were classified as having a positive attitude, defined as being at least curious or enthusiastic and not expressing any negative sentiments such as scepticism or fear. Additionally, 27% exhibited a mixed attitude, combining positive feelings with cautiousness or blending positive and negative sentiments. Participants who were purely cautious, negative, or indifferent were in the minority (8%, 5%, and 3%, respectively).

Personal attitudes towards AI-powered CTM tools. Multiple answers were possible. (a) % of respondents selecting each answer. (b) Respondents were classified into five categories based on the combination of their selections. Positive = at least curious or enthusiastic and no negative sentiments (scepticism or fear); mixed = combining positive feelings with cautiousness or blending positive and negative sentiments.
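The five attitude categories can be expressed as a simple decision rule. The Python sketch below works under stated assumptions. The caption defines only the positive and mixed categories, and a respondent who is curious and cautious fits both definitions, so we assume such a respondent counts as mixed. We also assume that scepticism or fear without any positive sentiment counts as negative even when combined with cautiousness, and that a respondent who selected only indifference counts as indifferent. The sentiment labels are hypothetical lowercase versions of the survey options.

```python
POSITIVE = {"curiosity", "enthusiasm"}
NEGATIVE = {"scepticism", "fear"}


def classify_attitude(selected):
    """Assign one of five attitude categories to a set of selected sentiments.

    The rule order is an assumption; only the 'positive' and 'mixed'
    definitions come directly from the figure caption.
    """
    selected = set(selected)
    positive = bool(selected & POSITIVE)
    negative = bool(selected & NEGATIVE)
    cautious = "cautiousness" in selected
    if positive and (negative or cautious):
        return "mixed"        # positive feelings plus caution or negative ones
    if positive:
        return "positive"     # curious/enthusiastic, no caution or negatives
    if negative:
        return "negative"     # assumption: includes caution + scepticism/fear
    if cautious:
        return "cautious"     # purely cautious
    return "indifferent"      # only 'indifference' (or nothing) selected


print(classify_attitude(["curiosity", "cautiousness"]))  # mixed
```

Checking the mixed case first makes the precedence explicit: any positive sentiment combined with caution or a negative sentiment is never counted as purely positive.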

At the governmental level (Supplemental Figure S1), the majority of the 110 respondents perceived the government’s attitude towards AI applications in healthcare as unclear and/or cautious. Specifically, ‘unclear’ was selected by 56% of respondents and ‘cautious’ by 40%. A ‘restrictive’ stance was perceived more frequently (selected by 11% of participants) than an ‘incentive’-oriented approach (selected by 7%). No participant perceived the government as prohibitive.

Institutional and individual experience

Of the 85 participants involved in medical writing, 33% stated that they had already used a language model to write a trial document, and only three of them had received prior training on the model.

At the institutional level (Supplemental Figure S2), 5% of the 109 respondents to this question reported occasional use of AI-powered CTM tools at their institution. Half (50%) were uncertain about their institution’s experience, while the remaining 45% indicated no institutional involvement in clinical trials facilitated by AI-powered CTM tools.

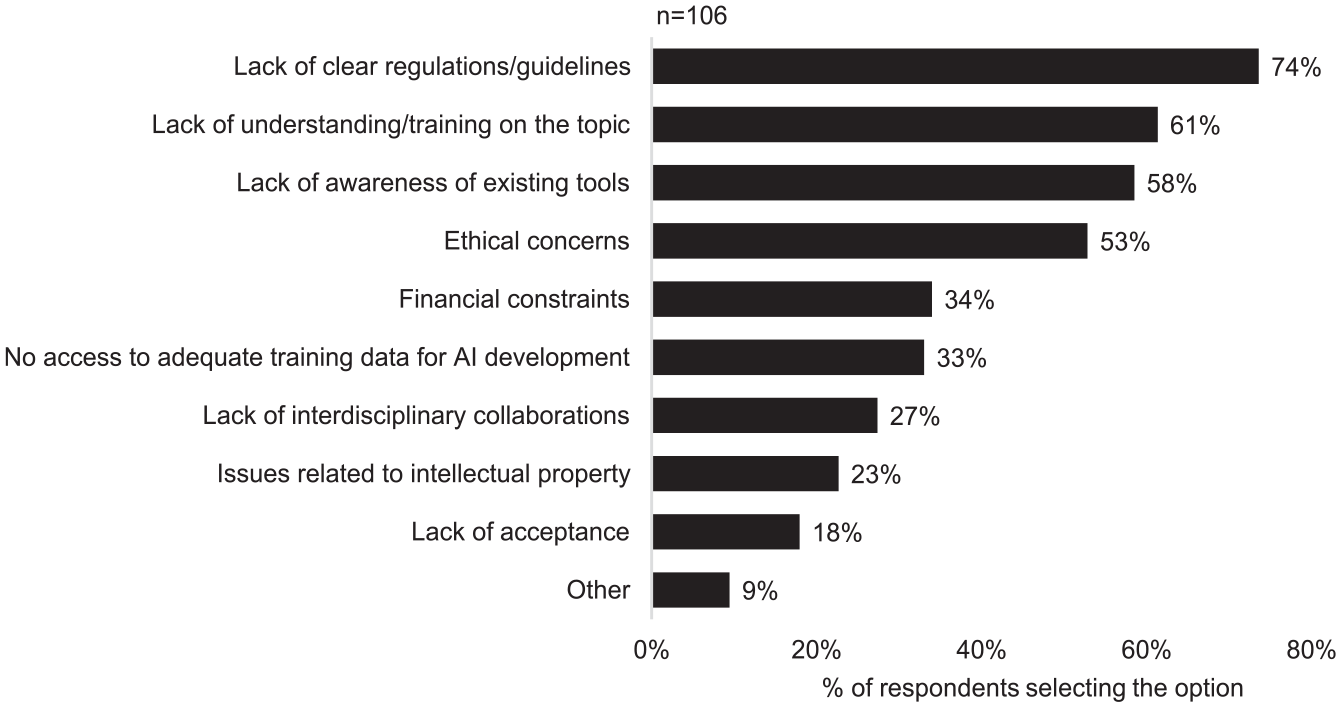

Of the 110 participants, almost all (106) agreed that AI-powered CTM tools are not receiving sufficient attention compared with other AI applications. These respondents could select multiple factors potentially contributing to the slow adoption of these tools (Figure 2). Specifically, ‘lack of clear regulations/guidelines’ was chosen by 74% of respondents, followed by ‘lack of understanding/training on the topic’ (61%), ‘lack of awareness of existing tools’ (58%), and ‘ethical concerns’ (53%). With the exception of ‘lack of acceptance’, the remaining factors were also selected by more than 20% of the participants.

Factors slowing down the adoption of AI-powered CTM tools. Multiple answers were possible. Four participants did not agree that these tools are underused and were not required to answer this question.

Ethical considerations

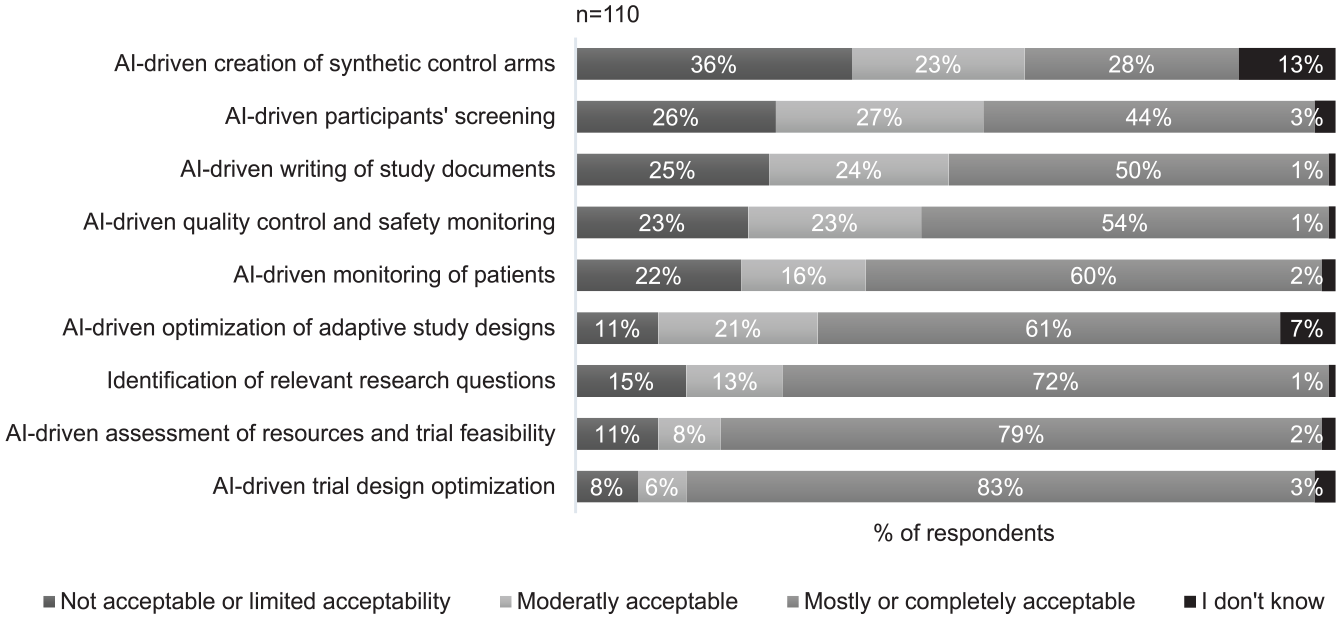

Of the nine AI-powered CTM use cases, three were rated as not acceptable or only of limited acceptability by 25% or more of the 110 participants (Figure 3). These were the AI-driven ‘creation of synthetic control arms’, ‘participants’ screening’ and ‘writing of study documents’. On average, AI-driven ‘quality control and safety monitoring’ and ‘monitoring of patients’ appeared to raise fewer concerns; however, 22% and 23% of respondents, respectively, still rated them as not acceptable or only of limited acceptability. The remaining use cases were considered mostly or completely acceptable by more than 60% of the participants, with AI-driven ‘trial design optimization’ ranking as the least problematic use case.

Acceptability of nine use cases. A five-point Likert scale was used. Responses at the two extreme ends of the scale were pooled for graphical representation.

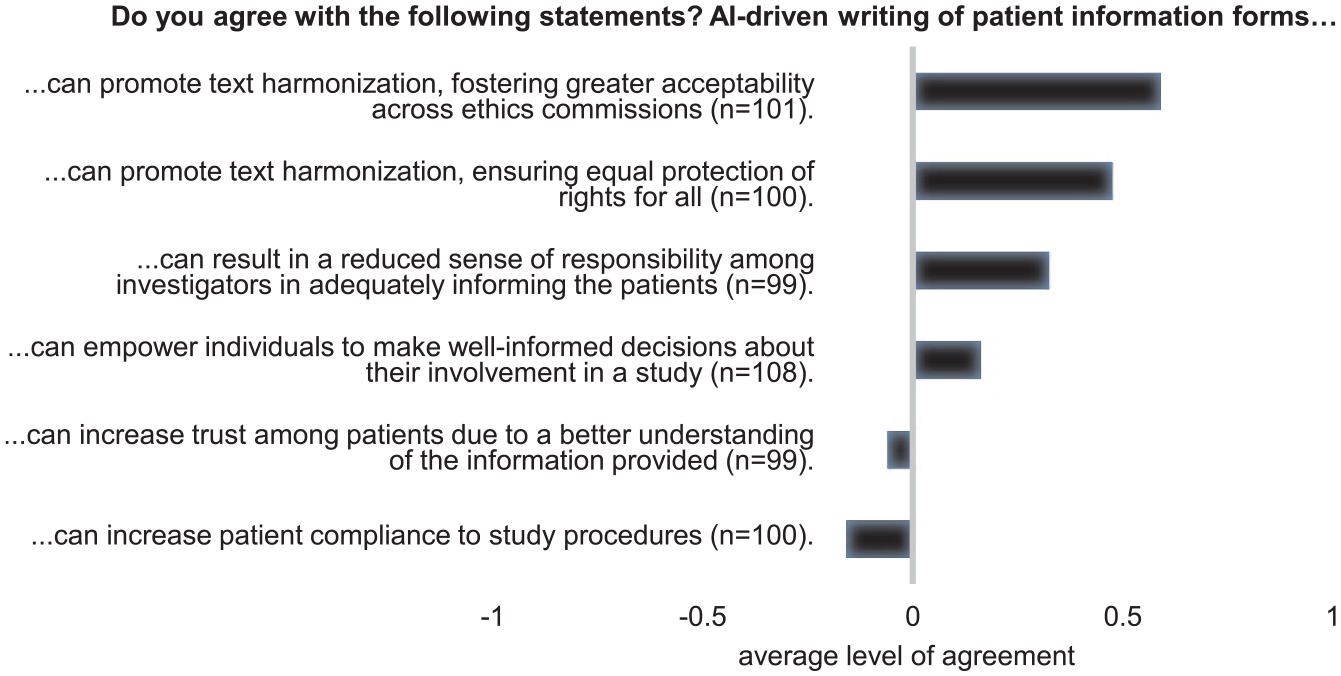

When considering the writing of clinical study documents, particularly patient information (Figure 4), the 110 participants predominantly agreed that AI could help achieve better text harmonization, which would in turn enhance the documents’ acceptability across ethics committees and ensure equal protection of rights for all patients. To a lesser extent, participants also agreed that using AI to write patient information may empower individuals to make well-informed decisions about their involvement in clinical trials. On the other hand, participants also agreed that a possible negative implication is a reduced sense of responsibility among investigators to adequately inform patients. By contrast, participants disagreed that a better understanding of the information provided would increase patient trust, and they disagreed even more strongly that AI could increase patient compliance with study procedures.

Using AI to write patient information forms. A five-point Likert scale was used to assess the level of agreement with six given statements. Responses at the two extreme ends of the scale were pooled and numerical values were assigned to the answers (disagree = −1, neutral = 0, agree = 1). Average level of agreement is displayed. Missing answers reflect those who responded with ‘I don’t know’.

In the survey, we asked whether patients should be informed when AI tools are used for CTM. Of the 110 participants, 61% agreed and 12% disagreed, while the remaining 27% believed that it depends on the type of tool. Regarding what patients should understand about the tool, four points were repeatedly cited in the free-text comments: the ‘purpose of the tool’ (mentioned 26 times), the ‘level of human supervision’ (12 times), ‘data protection-relevant information’ (10 times), and ‘risks associated with the tool’ (9 times).

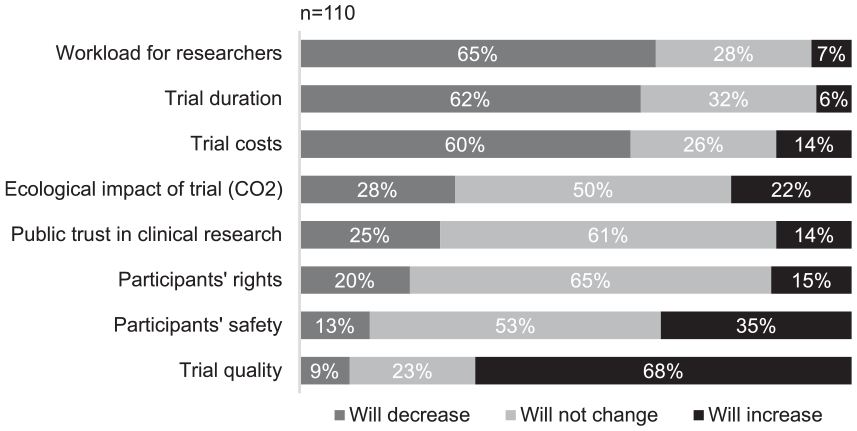

Considering the potential impact of widespread adoption of AI-powered CTM tools (Figure 5), most of the 110 participants (60% or more) anticipated a reduction in trial duration, costs, and researchers’ workload. Additionally, 68% foresaw an improvement in trial quality. For the other aspects, the predominant opinion (50% or more) was that there would be no change. However, a substantial proportion (35%) expected AI to have a positive impact on participant safety. Opinions were divided among those who anticipated a change in the ecological impact of trials, with similar shares expecting it to decrease (28%) and to increase (22%).

Impact of a widespread use of AI-powered CTM tools on various aspects. Ecological impact was defined as the consequences for the overall well-being of ecosystems, for example through the release of CO2.

Requirements

Examining the importance of six features for AI-powered CTM tools, we found that all of them were considered relevant (Supplemental Figure S3). ‘Ethical compliance’ emerged as the most crucial feature, rated as important or very important by 93% of the 110 participants. It was followed by ‘reliability’ (90%), ‘supervisability’ (87%), ‘user-friendliness’ (77%), and ‘customizability’ (56%). ‘Ecological sustainability’ ranked as the least essential feature, with 15% considering it only slightly important or not important at all.

Similar results were found when asking about the level of knowledge required for clinical researchers using AI-powered CTM tools (Supplemental Figure S4). The majority of the 110 participants considered all thematic subjects important and indicated that a competent or proficient level of knowledge is required. ‘AI ethics’ again emerged as crucial, ranking second after ‘AI tool risks’. For both subjects, more than 80% of participants considered competent or proficient knowledge necessary for clinical researchers. They were followed by ‘AI tool limitations’ (75%) and ‘AI regulatory framework’ (74%). ‘AI tool functionality’ and ‘AI key terminology’ were considered slightly less important, with 17% and 25% of participants, respectively, stating that no or only elementary knowledge is required for clinical researchers.

According to 86% of the 110 participants, tools should undergo independent assessment and certification before being implemented in a clinical trial. An additional 7% suggested assessment and certification at least for some types of tools.

Discussion

Our findings indicate that the general hype surrounding AI may foster curiosity and a generally positive attitude towards AI-powered CTM tools. At the same time, we also identified significant ambivalence and caution among clinical research professionals. While acknowledging the benefits of technology, people are also aware of the uncertainties and potential drawbacks it might bring. This duality creates confusion about technological progress (Zhang and Zhang, 2023). Additionally, given the traditionally stringent regulation of clinical trials, we suggest that these cautious and mixed attitudes may stem from the perceived risks of using tools in the absence of clear regulations.

Our results also indicate that the implementation of AI in CTM remains limited so far, at least in academic institutions. This finding aligns with previous literature-based analyses (Cascini et al., 2022; Weissler et al., 2021). We believe that governmental caution and the associated regulatory opacity contribute to hindering the adoption of these tools at both the individual and institutional levels. Appropriate regulatory frameworks are urgently needed not only to promote AI applications in CTM but also to prevent ethically questionable practices (Reddy et al., 2020). Historically, regulatory advancements in clinical research have often followed scandals revealing weaknesses in the regulatory and ethical frameworks (McKee and Wouters, 2023). To prevent such occurrences, proactive measures are imperative. Like ElZarrad et al. (2022), we advocate for an adaptive policy-making infrastructure, a skilled workforce, and interdisciplinary collaborations. For example, Korea recently took a step in this direction by establishing a multi-stakeholder organization aimed at accelerating regulatory innovation for clinical trials (Chung et al., 2024).

Survey respondents attributed the limited use of AI in CTM to several reasons. Besides the absence of clear regulations, they cited the lack of awareness of existing tools and insufficient training as probable contributing factors. Although these are personal opinions, the lack of awareness was reflected in responses about institutional experience, where the use of such tools was mostly unknown. The lack of training was illustrated by the use of language models to write study documents: only three participants had received prior training. In contrast, participants considered broad and advanced knowledge necessary for AI users in CTM. These findings underscore the importance of establishing a clear institutional AI strategy and investing in digital literacy for clinical researchers to fully leverage the benefits of AI in CTM. As previously reported for routine clinical practice, educational initiatives are crucial for building trust in AI and would enable clinical research professionals to actively engage with the technology rather than merely being passive users (Reddy et al., 2020).

For survey participants, ethical concerns represent an additional significant barrier to the widespread adoption of AI in CTM. Calls for independent certification and the expectation that researchers possess proficient knowledge of AI ethics suggest that these ethical concerns may stem from a general mistrust in the development and implementation of these tools. The literature supports this mistrust by highlighting potential biases, lack of transparency, and privacy issues, which are further exacerbated by the absence of practical recommendations and standards (Reddy et al., 2020) and the limited understanding of ethical principles among AI developers (Morley et al., 2023).

Despite these concerns, most use cases were perceived as generally acceptable, and participants anticipated potential long-term benefits such as increased safety, improved quality, and reduced costs, duration, and workload. Although speculative, this positive impact may suggest an ethical imperative to advance the implementation of these tools. However, while AI may reduce researchers’ workloads, potentially allowing more time for patients, it also raises concerns about dehumanizing clinical research. Furthermore, although trials could be streamlined, tool development, adaptation to trial settings, and the certification process desired by most survey participants require significant time and resources. Without a fundamental paradigm shift, AI tools could even complicate the clinical trial model, adding costs and complexity (Landers et al., 2021). Participants’ opinions on the environmental impact of AI in CTM were divided. Interestingly, the ecological sustainability of these tools ranked lowest in importance. This finding may be attributed to a general lack of awareness of this topic within the field, as previously noted by Hoffmann et al. (2023). A comprehensive understanding of the economic and ethical considerations requires more empirical data on the impact of these tools, as the existing literature on these subjects remains insufficient (Cascini et al., 2022).

Among the use cases, those considered most problematic were the ‘creation of synthetic control arms’, ‘participants’ screening’ and ‘writing of study documents’. Synthetic control arms may raise concerns because of their direct impact on trial results. While synthetic datasets offer the advantage of being free from identifiable personal information and can expand the available data, a key challenge is ensuring that synthetic data accurately represent the real-world phenomena they aim to emulate. Additionally, balancing privacy protection against the fidelity of synthetic data remains a complex trade-off (Shanley et al., 2024).

Regarding the application of AI in generating study documents, the survey further examined the example of patient information. Large language models have already been studied in the context of patient information and have shown promising results; however, the few available studies have primarily explored chatbot applications (Ghim and Ahn, 2023). The potential of AI to enhance informational texts for clinical trials, and the associated ethical implications, have yet to be empirically investigated. Survey participants largely agreed that AI could improve text harmonization; however, this may only be achievable with proper prompting techniques. AI outputs can vary depending on users and prompting methods (Shah et al., 2024), potentially leading to inconsistency rather than uniformity. Therefore, the expectation that AI will enhance acceptability across ethics committees and ensure equal protection of patient rights may only be realized through the development of prompting guidelines and the education of clinical researchers on the effective use of this technology.

Survey participants also expressed optimism that AI could help patients make better-informed decisions by enhancing the clarity of patient information material. Given the progress made in simplifying medical texts to empower patients (Moramarco et al., 2021), similar efforts could be applied to clinical trial documents.

While AI-driven writing of patient information was hypothesized to promote equity and autonomy, concerns emerged regarding a potential decrease in researchers’ sense of responsibility to adequately inform patients. Other aspects may have influenced the relatively negative evaluation of this use case, such as the use of unspecialized open-source tools that underperform in specific languages and contexts such as clinical trials (Li et al., 2023). Regarding responsibility, we believe that AI-generated content does not directly undermine researchers’ duty to inform patients, as this involves multiple practitioners, not just the author of the text. However, we are concerned that over-reliance on AI technology could degrade researchers’ communication skills (Jarrahi, 2019), potentially harming the quality of the informed consent process in the long term.

The third use case considered less ethically acceptable was participants’ screening. Interestingly, this is the most extensively studied application (Askin et al., 2023; Cascini et al., 2022). This raises the question of whether the tools being developed align with the preferences of the clinical research community and highlights the need for closer collaboration among all stakeholders. For this use case, concerns may be associated with a new form of paternalism in which AI makes decisions regarding trial participation, and consequently therapeutic options, on behalf of patients and doctors (Sauerbrei et al., 2023). Another issue may be that patients cannot be informed beforehand about the use of AI tools, which appears to be an essential factor for survey participants. Consent is a delicate topic in clinical research, requiring a careful balance between providing sufficient information to respect patients’ autonomy and avoiding information overload (Godskesen et al., 2023). Typically, not all tools implemented in CTM are disclosed to participants, and there is no clear legal basis for this practice. Further studies are needed to determine whether this should be different for AI tools and, if so, why and how.

In addition to the nature of the tools, survey participants considered the level of automation an important aspect to disclose. Tools’ supervisability also ranked as the third most important feature desired for AI-powered CTM tools, reflecting a growing concern about the need for clear and effective human control over AI processes. However, there is still a lack of clarity about why human control is necessary or what specific role it should play across different applications. Without this understanding, it is difficult to design institutions or systems that provide an adequate level of control. As explained by Davidovic (2022), ‘meaningful human control’ is not a one-size-fits-all solution but rather a flexible tool that must be tailored to the particular challenges and risks associated with each AI system.

This survey study has some limitations. First, not all geographical regions are represented, and the Swiss clinical research community is clearly overrepresented. However, global literature suggests that AI in CTM is still in its early stages in many regions (Askin et al., 2023; Cascini et al., 2022; Weissler et al., 2021). Therefore, the insights gathered from Swiss respondents may be indicative of challenges and opportunities likely present in other countries as well. Although the survey mainly reflects the views of clinical research professionals working in academia, most human research projects in Switzerland are conducted in academic settings (DKF Basel, 2023), making these insights particularly valuable. Respondents’ varying familiarity with AI and AI ethics might raise concerns, but we believe this does not significantly affect the study’s validity, as the focus was on capturing the spontaneous reactions of clinical research professionals. Familiarity with CTM was considered more critical for interpreting the survey, and we sought to ensure it by distributing the survey through established clinical research networks. Additionally, ethical principles were not presented in abstract terms but embedded in practical examples, allowing for a more intuitive understanding. Before distribution, the survey was validated by five individuals representing the target group. Finally, we recognize that further in-depth analysis of each use case is needed. However, the study aimed to offer a broad overview of the uncertainties surrounding AI-powered CTM tools, serving as an important first step towards understanding their challenges and opportunities.

Conclusion

To our knowledge, this study represents the first analysis of clinical research professionals’ attitudes towards AI-powered CTM tools and the first empirical evaluation of the extent of their current implementation. The findings indicate that widespread adoption of AI-powered CTM tools is still a distant prospect. Adoption seems to be entangled in a web of factors, each presenting its own complexities. Ethical concerns take centre stage, with distrust in processes and inadequate regulatory frameworks appearing to be the primary drivers. We identified a pressing need for action at both institutional and governmental levels, including the establishment of clear regulations and guidelines and the provision of necessary training. Future discussions with a broader range of stakeholders, coupled with empirical research on economic and ethical aspects, should help shape this framework and facilitate the responsible integration of AI in CTM.

Supplemental Material

Supplemental material, sj-docx-1-rea-10.1177_17470161241309347 for Navigating the future of clinical trial management – insights on the transformative role of AI by Lara Bernasconi and Regina Grossmann in Research Ethics

Supplemental material, sj-docx-2-rea-10.1177_17470161241309347 for Navigating the future of clinical trial management – insights on the transformative role of AI by Lara Bernasconi and Regina Grossmann in Research Ethics

Footnotes

Acknowledgements

We sincerely thank the participating clinical research experts for their kind cooperation. Our gratitude extends to the clinical research networks that facilitated survey distribution. Particularly, we would like to thank the Swiss Clinical Trials Organization (SCTO) and the International Clinical Trials Center Network (ICN). Lastly, we appreciate the valuable feedback provided by the employee from the Clinical Trials Center Zurich involved in survey validation. We acknowledge the use of ChatGPT 3.5 to enhance the article text and correct sentences. No content was generated entirely by the chatbot. Prompts included requests for improvements, reformulations, and simplifications.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

This study did not use any personal data from survey respondents. The study does not fall within the scope of the Swiss Human Research Act and hence did not require ethics approval or informed consent.

Supplemental material

Supplemental material for this article is available online.

References
