Abstract

Restrictions put in place during the COVID-19 pandemic necessitated a rapid shift toward remote qualitative recruitment and data collection. This shift generated considerable opportunities to conduct qualitative research, but also introduced novel challenges to data integrity in the form of inauthentic participants. While newer literature describes potential strategies that may be deployed to target concerns related to inauthentic participation, an explicit focus on equity and ethical considerations has been largely absent from current discourse. Here we describe our experiences and challenges conducting a study aimed at exploring reasons for recent firearm acquisition among Black Americans. We describe the mitigation strategies we implemented, as well as the ethical challenges and potential consequences that emerged. Our team identified three specific ethical considerations: identity and processes of categorization, trust and mistrust in research engagement, and privacy and honoring sensitive context. These considerations are critical in conducting qualitative research, especially among marginalized groups, and expand the current literature on qualitative research ethics.

Restrictions put in place in response to the COVID-19 pandemic necessitated a rapid shift toward remote qualitative research recruitment and data collection. This shift generated considerable opportunities and challenges for conducting qualitative research. Benefits of leveraging online approaches include recruitment of “seldom heard participants” (Ridge et al., 2023), reach of large geographic areas (Mistry et al., 2024), and practices that amplify inclusion of populations historically underrepresented in research (Woolfall, 2023). The benefits of online recruitment could be especially helpful to facilitate firearm suicide research, a socio-politically sensitive and sometimes intensely private topic that has had a limited focus on exploring lived experiences of historically and contemporaneously marginalized groups.

There are also challenges to conducting online qualitative research, including a novel threat to data integrity in the form of inauthentic qualitative research participants: those who falsify personal or contextual characteristics to meet study eligibility criteria and/or exaggerate their experiences in order to participate in qualitative studies (Drysdale et al., 2023; Jones et al., 2021; Mistry et al., 2024; Pellicano et al., 2024; Pullen Sansfaçon et al., 2024; Ridge et al., 2023; Roehl and Harland, 2022; Santinele Martino et al., 2024; Sefcik et al., 2023; Sharma et al., 2024; Woolfall, 2023). Newly published case reports have described post-COVID challenges with potential inauthentic participants across various content domains, including research pertaining to autism (Pellicano et al., 2024), neurodevelopmental disorders (Sharma et al., 2024), and dementia care (Sefcik et al., 2023). For example, participants reportedly have made efforts to create email accounts, complete preinterview questionnaires, engage in consent processes, answer interview and focus group questions, refer acquaintances, and solicit multiple payment-related emails (Pellicano et al., 2024; Ridge et al., 2023; Sefcik et al., 2023; Sharma et al., 2024; Woolfall, 2023). In these scenarios, participants were suspected of misrepresenting personal characteristics to gain study access and then intentionally providing fabricated data once enrolled.

While inauthentic participation is not new to research, the inclusion of such participants in qualitative, in contrast to quantitative or experimental, studies generates unique pragmatic and ethical challenges (Lawlor et al., 2021). To date, limited literature (Mistry et al., 2024; Pullen Sansfaçon et al., 2024) has explored specific ethical considerations. These ethical considerations are especially heightened for research that engages with groups experiencing marginalization due to extensive histories of harm imposed by individual researchers and institutions (Drysdale et al., 2023). If efforts are to promote research integrity, the process of navigating responses to potential inauthentic participants must be historically informed and sensitive to sociopolitical context in order to ensure ethical practices and not reinforce harm.

During the COVID-19 pandemic, firearm purchasing surged in the United States and new firearm owners were disproportionately more likely to identify as Black and women than during prior buying periods (Miller et al., 2021). Our team conducted a qualitative study to explore reasons for firearm acquisition among Black Americans in the early pandemic era. However, that work is only helpful in improving our understanding of firearm acquisition and developing intervention strategies if the findings are valid (Morse, 2015). Here, we present our experiences and describe challenges with potential inauthentic participants, the mitigation strategies that we implemented, and the ethical challenges that arose throughout our efforts to maintain research integrity.

Our experiences

Research aim

For this study, we used a qualitative approach to conduct semi-structured interviews with adults living in the United States who identified either as Black American or African American and had acquired a firearm between January 2020 and December 2022. We developed an interview guide based on the socioecological model that elicited narratives about recently acquiring a firearm, with questions and probes regarding factors that influenced acquisition. A community partner provided input on the interview guide and recruitment flier prior to study launch. We engaged in criterion-based sampling across pre-existing and new firearm owners, as well as men and women. We recruited participants via online avenues to optimize broad reach across U.S. geography, culture, and political ideology. The research team was comprised of individuals with diverse professional, racial, and gender identities. All were based at U.S. universities. The primary interviewer identified as a white cisgender woman with a background in macro social work. Interviews were audio-recorded and transcribed for data analysis. The study was classified as exempt by the University of Pennsylvania and University of Colorado Institutional Review Boards. All participants provided verbal informed consent.

Recruitment and data collection challenges

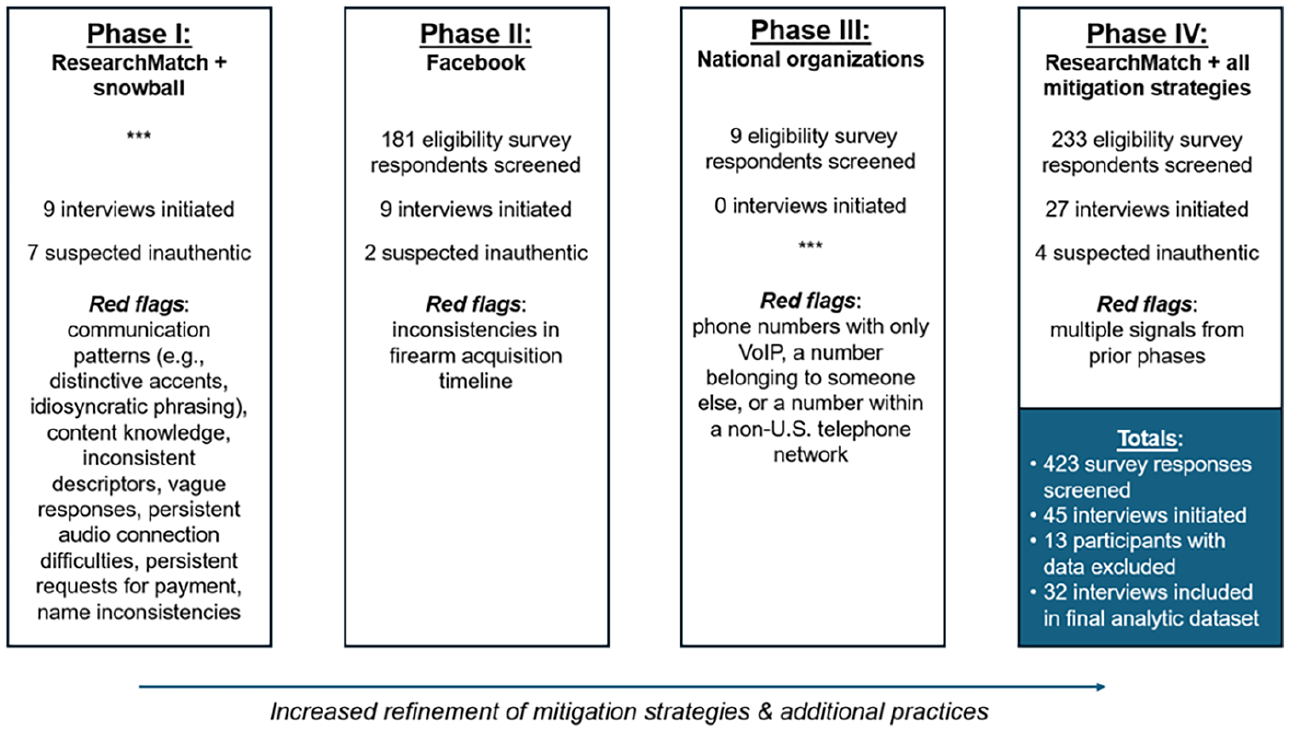

We conducted recruitment and data collection with iterative adaptations to our approach across four phases of our study from March to November 2023.

Phase I

We began recruitment on ResearchMatch (RM), a free, nonprofit online recruitment platform funded by the U.S. National Institutes of Health. RM connects volunteers with health research studies in the U.S. We set our eligibility criteria to include only volunteers whose RM profile racial category indicated “Black/African American.” Our recruitment message with eligibility criteria specified that potential participants must have acquired a firearm between January 2020 and December 2022, and participants would receive a $50 electronic Visa card for interview completion. A research team member followed up by email to schedule a phone screening for people who expressed initial interest. Phone screening attempted to establish rapport and confirm that respondents (1) identified as Black American or African American, (2) had acquired a firearm since January 2020, (3) spoke English, and (4) had no known diagnosis of dementia or condition causing cognitive impairment. We then provided additional information on the study, obtained verbal informed consent, and scheduled the interview. We offered participants the option of Zoom conference call (camera on or off) or phone interviews. To reach potential participants outside of RM, we utilized snowball sampling and invited participants to refer members of their networks to the study.

Our team encountered unexpected patterns across our nine initial interviews, which we documented in post-interview field notes. All participants elected for audio-only interviews. We noticed similarities in communication patterns, including between participants who initially enrolled through RM (n = 6) and subsequent snowball referrals (n = 3). Several participants spoke English with distinctive accents, frequently asked for repetition and clarification of interview questions, did not know the meaning of pertinent terms (e.g. “ammunition”), and used highly unique descriptive phrases (e.g. “committed homicide on himself” to communicate “suicide”). Further, some participants provided unusual accounts of their knowledge and use of firearms, suggesting that they had little experience with firearms. For example, one participant shared that he only recently learned that American civilians could own firearms. Two participants residing within a major U.S. city spoke of using firearms for recreationally hunting “wild boar.” These latter two participants also provided inconsistent accounts of their surroundings, spoke about their farmland, and described where they lived as “suburban.” The extreme similarity of the narratives and personal details suggested two attempts to participate by the same individual or attempts to coordinate enrollment among more than one individual (when asked directly, the latter interviewee emphasized that the current interview was his first and only).

Moreover, several participants were unable to describe their reasons for acquisition or their usage of firearms, even when the interviewer probed for clarity or further information. These interviews contrasted starkly with others, in which participants readily understood questions and relevant terminology, provided specific and illustrative descriptions of their motivations, and gave nuanced accounts of the impact of owning a firearm. Interviews with unusual features tended to conclude earlier than other interviews.

With the use of snowball sampling, we received eight emails from referred individuals within a 1-week period. The structure of the emails was remarkably consistent and variously listed a “friend,” a “colleague,” and a “flier” as their study information source, and the messages came from similarly formatted email addresses (i.e. consisting of similar name and number combinations). Some names included typos or spelling differences between emails and full names provided during screening.

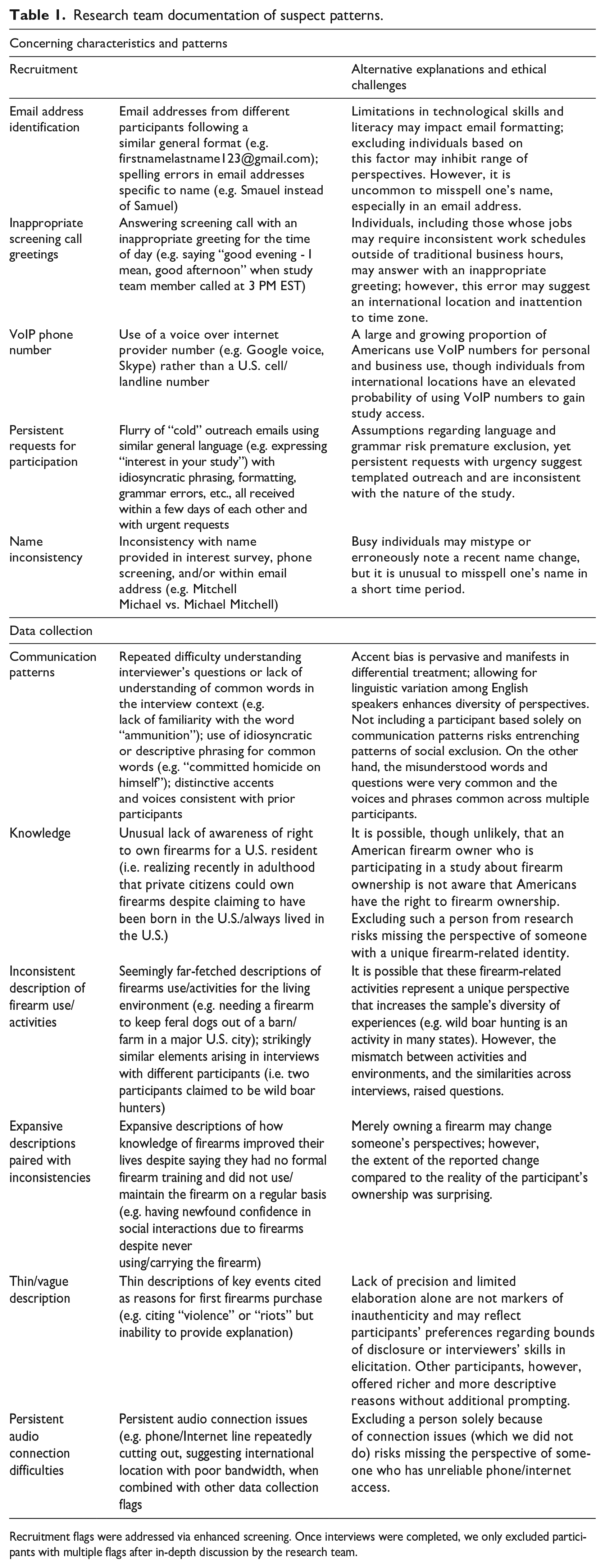

Following these initial experiences, we met as a research team to discuss our concerns that enrolled participants were potentially inauthentic. Our main concerns were the similarity in communication patterns, limited content knowledge, inconsistent descriptors, descriptions of firearm use in implausible scenarios, vague responses, repeated audio connection difficulties (suggesting, in concert with other factors, that participants may be connecting from an international location with poor bandwidth), persistent requests for payment, and name inconsistencies (Table 1). After review of emergent literature on this topic and consultation with several subject matter experts on qualitative research recruitment, we were concerned that several individuals had misrepresented their identities in efforts to gain access to our study. In response, we identified mitigation strategies, described further below.

Table 1. Research team documentation of suspect patterns.

Recruitment flags were addressed via enhanced screening. Once interviews were completed, we only excluded participants with multiple flags after in-depth discussion by the research team.

Phase II (A–E)

As an alternative to our Phase I approach, we pivoted to Facebook for recruitment. Facebook retained the potential for broad reach, with the added benefit of targeted paid advertising to a U.S.-based audience, as determined by the location selected by the user in addition to a location identifier such as the GPS signal, Wi-Fi connection, and IP address used by their device and account. On a newly created Facebook page, we removed the incentive information to increase odds of authentic participation and to protect against bots that could scrape Facebook ads for a dollar sign. The ad invited interested users to complete an electronic eligibility survey, which we developed to include more specific questions than our first verbal screener. We disclosed the incentive amount to eligible respondents during the follow-up screening phone call, prior to informed consent. Initially, we still noticed communication patterns similar to those described in our initial interviews. We engaged in five distinct advertising campaigns (A–E) with iterative updates to our mitigation strategies.

Phase III

In addition to Facebook, we attempted to recruit participants via outreach to national organizations aligned with Black communities (e.g. national civil rights and advocacy organizations). We emailed the leadership of 89 local chapters of one organization, garnering commitments from five leaders to share our study information with their local constituents. Our university-based injury science center also disseminated study information to local community organizations. Several weeks later, we experienced a substantial uptick in responses over 3 days. Nine individuals completed the electronic eligibility survey specific to this recruitment effort. Each of the potentially eligible participants used either a VoIP number, a number that belonged to someone else, a number within a non-U.S. phone network, or declined a video call.

Phase IV

In final recruitment efforts, we returned to RM given pragmatic budget constraints limiting paid advertising, significant effort required to monitor Facebook ads, and targeted recruitment based on RM profiles. For this iteration, we created an electronic eligibility survey that paralleled the one used in our Facebook campaign, which further augmented our initial phone screening and continued to enhance the rigor of our approach. We garnered 233 responses over the course of 3 months.

Our team successfully enrolled enough participants with high quality data to meet our analytic goals and close out study enrollment. Over our 1-year project, we screened 423 electronic eligibility survey responses, initiated 45 interviews, and excluded data from 13 participants due to the substantive concerns for authenticity described above. See Figure 1 for the number of interviews initiated and suspected inauthentic participants. We included 32 interviews with highly trustworthy data in our analyses.

Figure 1. Study phases and participants.

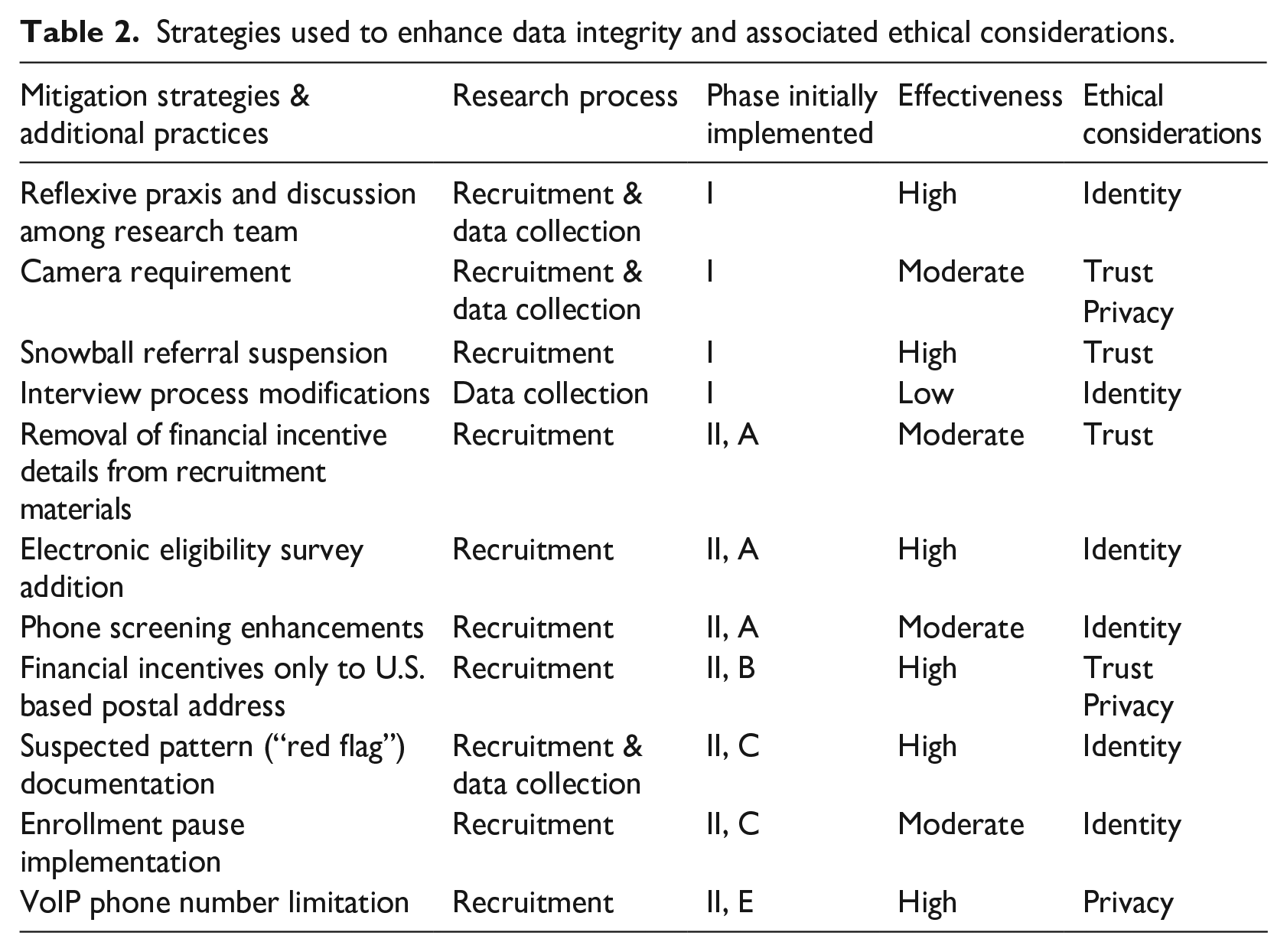

Mitigation strategies and additional practices implemented

Ultimately, our challenges with potential inauthentic participants decreased across Phases I–IV as we developed, implemented, and refined mitigation strategies. Throughout this process, we grappled with key ethical considerations, further described below. Table 2 lists the strategies and additional practices used to enhance data integrity. These are labeled as applicable to recruitment (i.e. process of identifying, screening, and selecting participants) versus data collection (i.e. gathering of narrative data) and linked to key ethical considerations.

Table 2. Strategies used to enhance data integrity and associated ethical considerations.

Engagement in continuous reflexive praxis (initial implementation Phase I)

We approached reflexivity as “an active, ongoing process that saturates every stage of the research” (Guillemin and Gillam, 2004: 274). This process involved critical reflection on our values, biases, and beliefs, but also attention to cultural, gender, and political differences, as well as the “microethics” of research practice (Guillemin and Gillam, 2004; Gunaratnam, 2003; Mistry et al., 2024).

Suspended snowball referrals (Phase I)

We elected to suspend referrals shortly after an initial early influx of suspected inauthentic participants who appeared to be connected.

Added a camera requirement (Phase I)

We modified screening procedures to include a condition that participants must switch on phone or computer cameras for the first few minutes of the interview to visually confirm unique participants.

Modified the interview process to query duration of time living in the U.S. (Phase I)

When participants’ communication patterns signaled (1) limited English language fluency, (2) native language other than English, and/or (3) idiosyncratic phrasing (e.g. “committed homicide on himself”), we attempted to gather additional contextual background in the interviews by inquiring how long they had lived in the U.S. We saw this as an opportunity to better understand Black immigrants’ experiences with firearms and obtain additional context for potential language barriers in the early interviews. Every participant to whom we posed this question replied that they had lived in the U.S. all their lives.

Removed financial incentive details from recruitment materials (Phase II, A)

As noted above, we elected to remove financial incentive details from recruitment materials in efforts to deter non-eligible individuals who may have engaged with the study team solely for material gain. We provided incentive information after initial screening and prior to informed consent. All enrolled participants, regardless of concerns for authenticity during data collection, were provided compensation after the interviews.

Increased initial screening intensity via electronic eligibility survey (Phase II, A)

In lieu of an initial phone screening, we created an electronic eligibility survey to enhance scrutiny prior to phone outreach. We required that respondents provide first and last names. We did not contact respondents who provided incomplete or celebrity names and sought clarification with any respondents who provided inconsistent names between the survey and phone screening steps. Respondents were also required to indicate their location as U.S. Northeast Region, U.S. South Region, U.S. Midwest Region, U.S. West Region, outside of the U.S., or prefer not to answer. Respondents were required to indicate whether they fluently spoke and understood English (yes/no). Respondents needed to specify the timeframe in which they recently acquired firearms. Lastly, respondents were required to provide a working phone number and email address.

Enhanced phone screening with questions specific to racial identity (Phase II, A)

The electronic eligibility survey included checkboxes aligned with federally defined race categories. Respondents could check multiple boxes to indicate multiracial identities. Instead of exclusively relying on these survey responses, we rephrased our phone screening to explicitly inquire how participants identified racially.

Required a U.S.-based postal address for mailed incentive (Phase II, B)

Our team provided incentives in the form of ClinCard Prepaid Visa cards. Initially, our team provided funds exclusively via the virtual card option to enhance accessibility. We later switched to physical cards to ensure that participants were based in the U.S. Prior to informed consent, participants were informed that the payment would be mailed after the conclusion of the interview. Participants were required to provide a mailing address, but there were no preconditions that this had to be their actual residence (i.e. it was acceptable to indicate any valid mailing address where they felt comfortable receiving an envelope). When issuing virtual cards, our team had concerns that seven of the first 10 participants were potentially based outside the U.S. After switching to physical cards, we had such concerns about only two of the remaining 35 participants.

Documented suspect patterns (Phase II, C)

We reviewed our field notes and created a table consisting of our reasons (i.e. “red flags”) for suspecting inauthentic potential or enrolled participants (Table 1). This table formally documented the patterns our team observed with specific examples, serving as a key reference point. In documenting these patterns, it became apparent that participants noted as potentially inauthentic all had multiple red flags during the data collection phase. Overall, the table enhanced the transparency of screening efforts, a critical element for reducing potential inconsistencies and bias within the process.

Added an enrollment pause into screening protocol (Phase II, C)

In addition to increasing screening intensity with the electronic eligibility survey, we built an enrollment pause (i.e. “gut check”) into our screening protocol just prior to obtaining participant informed consent and scheduling by phone. When concern about inauthentic participation arose, the research team member was encouraged to discuss reasons for their concern with another team member before proceeding with enrollment. If the second team member agreed with the reasons why the screener found the respondent’s authenticity questionable, the individual was not enrolled.

Limited voice over internet protocol (VoIP) phone numbers (Phase II, E)

We stopped accepting VoIP phone numbers from interested eligibility survey respondents. Because VoIP services use the Internet rather than phone networks to place calls, it is impossible to ensure that a VoIP user is physically within the geographic region represented by their phone number’s country and area codes. While users of the VoIP service Google Voice are required to provide an American or Canadian phone number to create a Google Voice number, one workaround is to use a U.S. phone number—including that of another Google Voice account—belonging to a friend or relative. If we placed a screening call and heard a call screening or other automated message indicating that the number belonged to a VoIP service, we ended the call and informed the respondent via email that we were unable to proceed with their participation due to our screening protocol not allowing for VoIP numbers. This strategy screened out 16 respondents who attempted to enroll but had locations that could not be verified as U.S.-based.

Of note, the strategies we employed, as outlined in Table 2, align with newly published reports (Mistry et al., 2024; Pellicano et al., 2024; Sefcik et al., 2023), suggesting the emergence of a coherent approach to navigating inauthentic participants.

Ethical considerations

A central focus of growing literature on inauthentic participants is the threat to research integrity, which is essential for maintaining the credibility of scientific research (Drysdale et al., 2023; Zhaksylyk et al., 2023). Inauthentic participants compromise research integrity by damaging studies’ scientific validity and broader social value. While research integrity is of critical ethical importance, our team identified additional ethical considerations that emerged as we navigated potential inauthentic participation: identity and processes of categorization, trust and mistrust in research engagement, and privacy and honoring sensitive context. These issues have key relevance for qualitative research methods generally, and especially among marginalized groups.

Identity and processes of categorization

Our study focused on two key axes of identity—race and nationality—imbued with political meaning. While members of our research team reflected both white and Black racial identities, the team was especially aware of our outsider positions, power asymmetry, and the sensitivity required to question the authenticity of lived experiences so different from our own (Yip, 2024). We grappled with probing the authenticity of individuals who voiced an identity of Black or African American, noting the complexity of multiple and cross-cutting social differences (Gunaratnam, 2003). This discomfort elicited broader questions of how identity is defined, categorized, and used in critical knowledge production.

In terms of definitions, our early recruitment efforts in some ways risked essentialist pitfalls by initially relying on “neutral” self-reported identity checkboxes. This approach contrasted with our broader conceptualization of race and nationality as social categories, “produced and animated by changing, complicated, and uneven interactions between social processes and individual experiences” (Gunaratnam, 2003: 7). Balancing pragmatic recruitment tasks (i.e. limiting the scope of our study to individuals identifying as a specific demographic group) amid complex conceptual considerations was challenging. We understood meanings of these social categories as constructed relationally and located within a specific context that configures power relations (Ford and Airhihenbuwa, 2010; Gunaratnam, 2003). In questioning how participants represented their identities, we were concerned about undermining the different ways in which categories may be invoked to note variation in experiences (i.e. voicing; Ford and Airhihenbuwa, 2010). For example, when confronted by distinctive communication patterns that deviated from perceived norms, we were reluctant to make assumptions regarding nationality, which may be flexible and subjective to reflect a sense of belonging. However, in all these situations, we also recognized multiple signals suggesting participation by individuals other than Black Americans who owned firearms. Consistent with Sharma et al.’s (2024) and Mistry et al.’s (2024) reflections, the accumulation of several factors raised concern.

In a broader sense, we sought to understand how experiences of discrimination may impact our phenomenon of interest and feared injecting bias into the research process itself. Systematic documentation of suspect patterns and enrollment pauses for peer consultation supported transparency and consistency in decision-making. Our concern about expressing skepticism toward participants who may have believed that their participation was indeed valid (Drysdale et al., 2023) was somewhat attenuated with implementation of the electronic eligibility survey, which practically functioned to maintain relational distance between the study and suspected inauthentic participants and screened out overt misrepresentation. As the participant-researcher relationship is a key nexus for ethical concerns (Sanjari et al., 2014), our discomfort was felt more acutely during phone screening and data collection, once we had engaged participants. Here, reflexivity served not only an analytical, but also a core ethical purpose (Guillemin and Gillam, 2004). Accounting for our values, biases, and beliefs was important for critically reflecting on the knowledge generated from the research process and recognizing that research is intertwined with broader historical and social relations (Gunaratnam, 2003; Yip, 2024).

Trust and mistrust in research engagement

Recognizing these broader historical and social relations required an examination of trust, an ethical good that is foundational for qualitative research. Extensive histories of ethical violations—including but not limited to exploitation of and extraction from Black bodies—characterize the U.S. research enterprise (Scharff et al., 2010). These historical traumas coupled with ongoing social exclusion have contributed to mistrust about research participation among marginalized groups, including Black Americans (Gilmore-Bykovskyi et al., 2022; Scharff et al., 2010). Gilmore-Bykovskyi et al. (2022) critique emphases on individual-level conceptualizations of mistrust and highlight a core paradox: excess blame and burden for marginalized groups for rational responses to past harms, and insufficient demonstrations of trustworthiness for investigators and institutions to repair these harms. As researchers working within academic institutions, we recognized our role in fostering equitable inclusion.

Part of equitable inclusion is reducing barriers to research participation. Online qualitative research presents opportunities to advance equity by reducing friction and responding to participants’ needs (Pellicano et al., 2024). However, these assets also make studies more susceptible to inauthentic participants, who generate further mistrust among researchers, participants, and consumers of research. Our mitigation strategies functioned to maintain integrity but also increased up-front labor for participants in the recruitment phase, specifically with the eligibility survey, screening calls, and pauses in scheduling. Moreover, ending snowball sampling limited referrals of potentially valuable participant perspectives, and not including compensation details in recruitment ads might have signaled lack of respect for participants’ time or lack of transparency (Pellicano et al., 2024). All of these decisions came with ethical tradeoffs not only for our study, but also potentially for future research participation (Drysdale et al., 2023).

Complicating our role as agents of institutions with obligations to restore trust in research, our early concerns related to participant authenticity generated significant unease. While prior discussions on the ethics of online research have centered on participants’ trust of the researchers, we initially faced doubts about our ability to trust the participants (Drysdale et al., 2023). We were familiar with threats to quantitative survey and experimental research, but we had not anticipated inauthentic participation in our qualitative study, particularly given the effort, intimacy, and sensitivity involved in one-on-one interview formats. Initial fractures shifted the prevailing paradigm of trust in research with important implications.

Approaching participants from a position of skepticism generated potential risks for the interaction and quality of data elicited through the interviews. Rapport, or the “harmonious relationship between research and informant that allows for the free flow of information,” is generally understood as establishing a basic sense of trust (Schmid et al., 2024: 1254; Spradley, 2016). Rapport then functions as a key communication tool for obtaining rich data. As Schmid et al. (2024) explain, building rapport can both serve as an approach for gaining access to lived experiences and enhance participants’ comfort in telling their stories. Rapport may be especially meaningful for participants on the margins, whose voices have been ignored or silenced, and may create emotional safety within the context of disclosures (Schmid et al., 2024). Recognizing the potential for research to re-traumatize is essential (Wojnicka and Nowicka, 2023). We believe that our primary interviewer deftly negotiated the tensions between reasonable doubt and positive regard while maintaining respect throughout data collection. Of note, our team observed that establishing rapport was fluid and natural during interviews with no signals of inauthentic participation. In interviews with multiple signals of inauthenticity, by contrast, the interviewer’s focus was diverted toward probing and gathering context to help explain red flags. Our overall experience exposed a difficult facet of mistrust in research.

Privacy and honoring sensitive context

Qualitative research is well-suited for exploring topics that may be sensitive (Silverio et al., 2022), and in many cases firearm ownership fits within this context. Firearms are situated within complex and often charged discourse related to rights and responsibilities in U.S. society. Even though 32% of Americans personally own a firearm (Schaeffer, 2024), ownership decisions may be considered intensely private. Firearm owners often link the right to own firearms in the U.S. to the right to privacy, specifically the right to not disclose firearm ownership (Khazanov et al., 2022). Indeed, privacy as a concept is central to the national firearm context.

Within research, privacy is the ethical obligation to protect identities and personal information. Our duty to respect privacy—especially given the sensitive context—was amplified in this study. For example, in designing the study, we specifically used language related to firearm “acquisition” instead of “purchasing,” as we were open to including participants whose exposure to firearms increased through any mechanism. We recognized that participants would present with a spectrum of comfort, and some may have specific concerns related to scrutiny, as well as a desire to decline questions considered too intrusive (Sanjari et al., 2014). As such, we emphasized voluntariness related to disclosures and our prioritization of confidentiality as a means of protecting privacy.

In navigating potential inauthentic participation, we considered the ethical tensions related to mitigation strategies that pushed privacy bounds by eliciting personal information. For example, we extensively discussed implementing video requirements in efforts to ensure unique participants, recognizing that some individuals may have concerns about showing their faces and/or environments. We attempted to minimize this concern and optimize participants’ comfort by requiring video for only a few minutes. No participants referenced lack of video access; however, important issues related to equitable accessibility remain (Mistry et al., 2024; Ridge et al., 2023). In not accepting VoIP numbers, we recognized that some participants may be reluctant to share their personal phone numbers, so we clarified that any cell phone or landline (not necessarily the participant’s own number) was acceptable. Specific to the decision to mail ClinCard Prepaid Visa cards, important considerations included unstable housing and/or reluctance to reveal residential addresses. We negotiated this tension by informing participants that any U.S. address (not solely the participant’s own), including post office boxes, would be welcomed. We proactively sought to balance privacy, sensitive context, and research integrity through design and implementation of these strategies.

Conclusion

With firearm injuries increasing among Black Americans, understanding reasons for firearm acquisition is critical for injury prevention; invalid research threatens this key objective. Our experiences align with newly published recommendations for identifying potential inauthentic participation, and we encourage other researchers to review these frameworks in efforts to address participation concerns (Mistry et al., 2024; Pellicano et al., 2024; Ridge et al., 2023; Woolfall, 2023). We expand on this literature by analyzing specific challenges related to ethics and equity that are associated with responding to potential inauthentic participants. Efforts to ensure the outcome of scientific validity and broader social value must also attend to the process of achieving these ends. As such, the ethical implications of implementing mitigation strategies and additional practices must also be considered, with particular attention to historical and sociopolitical context, as well as the potential impact on marginalized populations.

Footnotes

Acknowledgements

We appreciate the generous contributions of Dr. Meredith Vanstone.

Author contributions

KH, KS, AT, JS, JW, and GK made substantial contributions to conceptualization, analysis, and critical manuscript revisions. KS drafted the first version. All authors provided final approval and participated sufficiently in the work to take public responsibility for appropriate portions of the content.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: University of Pennsylvania Innovation in Suicide Prevention Implementation Research (INSPIRE) Center (NIH grant number P50MH127511-04). The funder had no role in the design or reporting of this manuscript.

Ethical approval

The referenced study was approved by the University of Pennsylvania IRB (#852618) and University of Colorado IRB (#22-2270), and all participants gave verbal informed consent prior to enrollment.