Abstract

The quality of a research study application sends a distinct signal to the institutional review board (IRB) about the skills, capacities, preparation, communication, experience, and resources of its authors. However, efforts to research and define IRB application quality have been insufficient. Inattention to the quality of an IRB application is consequential because the application precedes IRB review, and perceptions of quality between the two may be interrelated and interdependent. Without a clear understanding of quality, IRBs do not know how to define quality and researchers do not know how to achieve quality. This position has not been systematically studied to date, and future research could provide much-needed empirical validation. This paper lays the conceptual groundwork for future investigation into what constitutes quality in an IRB application. It includes a landscape review of multidisciplinary research on quality, as well as a discussion of quality frameworks analogous to research with human participants that exist in the published literature. It also examines the background and significance of federal research regulations, regulatory burdens, researchers’ regulatory literacy, and the roles and responsibilities of IRB professionals within this ecosystem.

Introduction

Throughout the published literature, calls can be found for a greater understanding of quality review within the field of human participant research (Abbott and Grady, 2011; Lynch et al., 2019; McDonald and Cox, 2009; Nicholls et al., 2015; Resnik, 2021; Scherzinger and Bobbert, 2017; Taylor, 2007; Tsan, 2019; Vawter et al., 2004). While this piece is written from a US perspective, a more precise grasp of research quality is a ubiquitous, global need. In this paper, the US-specific term Institutional Review Board (IRB) is used. Elsewhere, IRBs are commonly known as research ethics committees (RECs) or ethics review boards (ERBs); all are functionally equivalent terms.

The quality of IRB review has been the primary focus of the extant literature; however, the quality of IRB applications is equally significant and requires in-depth inquiry. Inattention to the IRB application itself is consequential because the application precedes IRB review, and perceptions of quality between the two may be interrelated and interdependent.

A high-quality IRB application can convey the skills and capacities of its authors, as well as their appreciation for ethical research practices. It can implicitly communicate core competencies about the research team to the IRB, including their regulatory literacy, experience conducting research, ability to communicate complex research concepts and methods, stewardship of ethical standards, and capacity to ensure research ethics compliance. On the other hand, a poor quality IRB application may signal deficits, training needs, inattention to detail, inexperience, lack of resources, inappropriate workload delegation, and possible future noncompliance.

Ultimately, whether high or low, the quality of the application will convey to the IRB how the research will be conducted. Because the IRB’s primary mandate is to protect the rights and welfare of research participants, the measure of quality is bound by how completely the researcher has described all research procedures from start to finish and how capably they have applied ethics requirements to their specific work. Without these components, the IRB cannot assess how well the proposed research study meets regulatory, ethical, scientific, and administrative requirements. To this end, the IRB is capable of, responsible for, and well-positioned to strengthen the research-practice nexus.

The literature reviewed in this paper examines the background and significance of federal research regulations, regulatory burdens, researchers’ regulatory literacy, and the roles and responsibilities of IRB professionals within this ecosystem. The roles of both research administrators and the IRB are critically important to the IRB application process and will be described operationally and relationally. Research administrators’ collective tasks in reducing the burden on researchers, providing regulatory support, and promoting research compliance will be articulated. Finally, to support future research objectives, this paper lays the conceptual groundwork for the investigation of quality in IRB applications through a landscape review of multidisciplinary research on quality, as well as a discussion of quality frameworks analogous to human participant research.

Problem of practice

Despite the availability of federal criteria and ethical principles, there are no known U.S.-based resources that provide researchers with a straightforward roadmap of how to prepare a high-quality IRB application. U.S. regulations and standards do not prescribe how research proposals are submitted to the IRB, nor do they codify the components of a standard IRB application or describe what a quality application looks like. As a result, preparing an approval-ready IRB application is a challenge. There are differing perspectives about which approach predicts the strongest IRB application: regulatory literacy, subject matter expertise, a fastidious application, or some combination thereof.

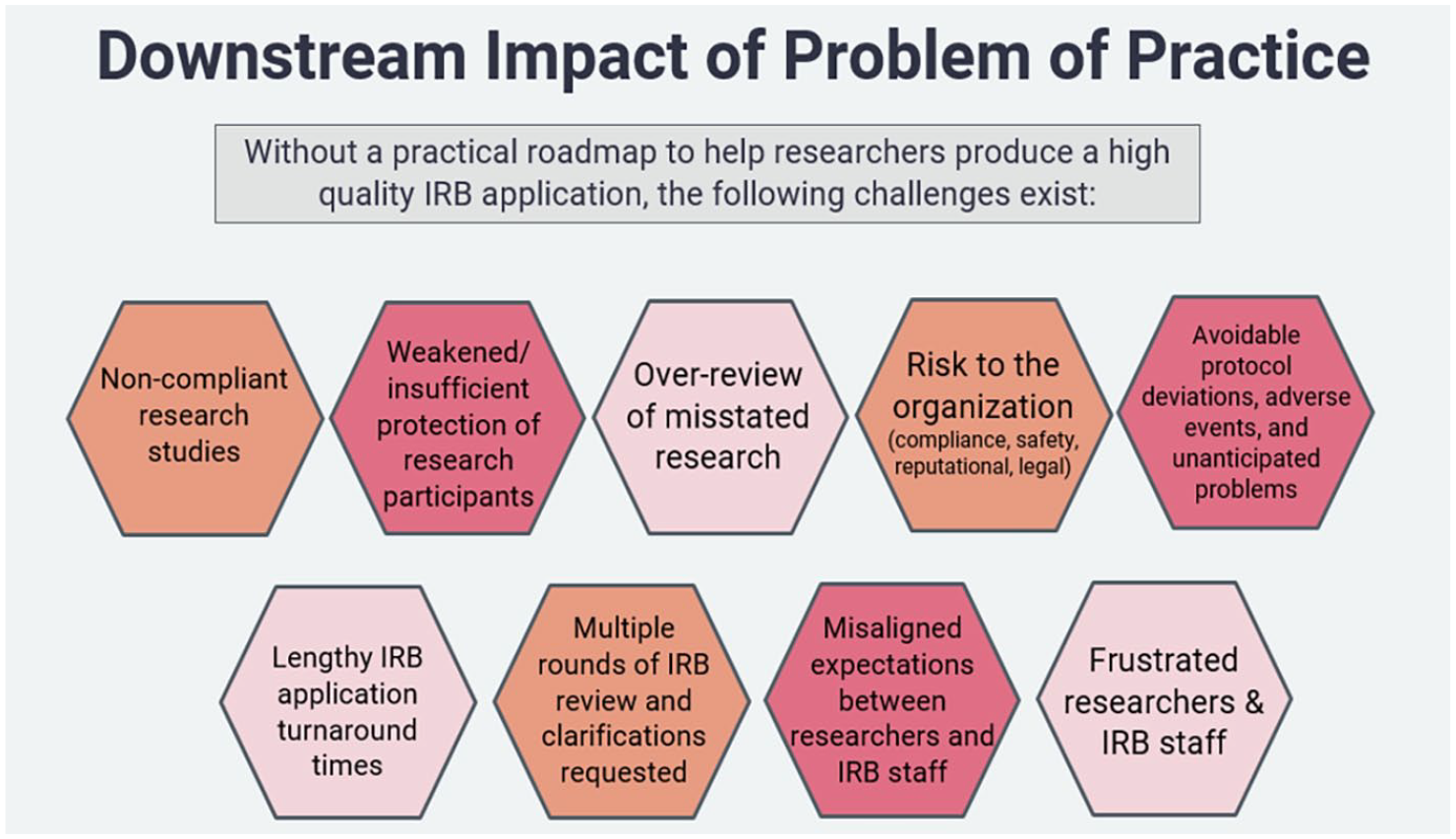

In addition, a practical and efficacious roadmap to help researchers produce high quality IRB applications is needed. Without such a roadmap, the challenges represented in Figure 1 face IRBs, researchers, and their institutions. These downstream impacts may compromise participant protection, impede compliance, undermine ethical conventions, and hinder critical research activities.

Figure 1. Downstream impact of problem of practice.

Regulatory burden and regulatory knowledge are frequently misattributed as common reasons an IRB application lacks quality (Association of American Medical Colleges [AAMC], 2016; Bozeman and Youtie, 2020; Brønstad and Berg, 2011; Decker et al., 2007; del Álamo et al., 2022; Gordon et al., 2003; Lottes et al., 2022; Mullen et al., 2008; Nichols and Wynes, 2018; Silberman and Kahn, 2011; Starokozhko et al., 2021; Wimsatt et al., 2009). Some have suggested that if researchers simply had stronger regulatory knowledge, and wrote their applications accordingly, they would produce model IRB submissions (Fullerton et al., 2015; Pech et al., 2007; Sonne et al., 2018). While the regulations are necessary to provide guardrails for the research enterprise, the IRB is solely responsible for ensuring research under review meets the specific criteria for approval outlined in the federal regulations. Contrary to the established literature, researchers do not need to be experts in those criteria to achieve a quality IRB application.

The published literature on regulatory burden is abundant, and numerous studies have been conducted to address the consequences for researchers and their institutions. However, there are gaps in the literature connecting the financial, compliance, ethical, psychological, and learning burdens for the cast of characters involved in institutional research administration. To thoroughly explore this operationally diffuse process, and its impact on IRB application quality, the literature reviewed here examines the US federal research regulations, regulatory burdens, researchers’ regulatory literacy, and the research administration model.

Federal research regulations

The United States Code of Federal Regulations (CFR) is a codified set of federal regulations whose purpose is to provide structural and legal consistency and oversight within their respective federal agencies. There are 50 titles within the CFR that represent broad areas subject to regulation by the federal government: domestic security, federal elections, agriculture, education, and public welfare, to name a few.

The federal code pertaining to research with humans falls under the purview of the U.S. Department of Health & Human Services (HHS). In 1991, the Common Rule regulations (45CFR§46) were published and adopted by 16 U.S. federal agencies. The Food & Drug Administration (FDA) is not a Common Rule agency because its regulatory jurisdiction differs; 21CFR§56 regulations pertain to clinical investigations of drugs, biological products, and medical devices. However, the FDA is required to harmonize with the Common Rule whenever permitted by law. IRB approval of research with humans can only be granted in accordance with these two sets of regulations.

The goal of federal research regulations is to provide direction on conducting compliant, ethical, valid, reliable scientific research involving human participants. Regulatory oversight and public policy implementation under Congress chart the expectations and standards for researchers to follow. However, as this oversight is implemented in practice, such regulations can pose substantial burdens on researchers and impact the quality of their work.

Regulatory burdens of research

While researchers agree on the need for the protection of research participants and clear rules for consistency, safety, transparency, and integrity in the conduct of research involving humans (AAMC, 2016; National Science Board, 2014), the regulations themselves are complex. They can be overly technical/legalistic, can be seemingly inconsistent, include domain-specific terms unfamiliar to novices, and are not organized in an intuitive way for the reader (AAMC, 2016; Hale et al., 2011; Law et al., 2014).

Deciphering the regulations is problematic for researchers because it is excessively time-consuming and may require comparative analysis across multiple regulations; such burdens could impede compliance (Law et al., 2014). As McLaughlin and Holmes (2015) point out, the word count of the entire CFR is 103,079,294 and would take 5,727 hours to read. Given both the density and volume, it is unsurprising that the current state of the regulations has caused regulatory overload and resulted in layers of additional work for researchers (National Science Board, 2014). Ensuring regulatory compliance, then, becomes costly, both in terms of time and resources, neither of which researchers typically have the luxury of.

With regulatory complexity comes a myriad of requirements necessary to ensure compliance. In their 2016 report addressed directly to the President of the United States, the Association of American Medical Colleges (2016) bluntly stated that “the unintended cumulative effect of federal regulations places significant stress on institutions and individual researchers that can impede research productivity and innovation” (p. 21).

Government agencies have received unambiguous feedback that regulatory burden is impeding innovation and research productivity, and reducing the return on investment of federal funds. For example, in 2012, the Federal Demonstration Partnership survey found that federally funded researchers spent, on average, 42% of their time performing administrative tasks—including ensuring compliance with federal regulations—instead of conducting research (AAMC, 2016; Leshner, 2008). Further, a 1999 report by the National Institutes of Health (NIH) identified that researchers are subject to roughly 60 sets of research-related regulations and, upon closer inspection, found that the cumulative impact of just five of these regulations on researchers was “interrelated and. . .synergistic in a negative sense” (Mahoney, 1999: 1). A regulatory landscape that is cumbersome and onerous to navigate precipitates poor researcher preparation and non-compliance. This situation demands intervention.

Addressing researchers’ regulatory literacy

Current literature describes why researchers lack the capacity, resources, attention, and desire to retain information about research regulations and IRB processes and policies. Overall, a researcher’s primary goal is to conduct their research. However, secondary and tertiary administrative requirements can prevent a researcher from focusing solely on this goal. For example, administrative duties, grant management, hiring study personnel, purchasing equipment and supplies, managing applications/paperwork, securing intellectual property protections, negotiating and obtaining agreements/contracts, and so on, shift valuable time and attention away from conducting one’s research activities (Mullen et al., 2008; Wimsatt et al., 2009). Further, Wimsatt et al. (2009) point out that “heightened demands for accountability, increased competition for research grants, expanded demands on faculty time. . .are among the challenges that make achieving institutions’ research missions increasingly difficult” (p. 72). Mullen et al. (2008) found in their survey of over 6,000 faculty members that 95% could spend more time on active research if they had more administrative assistance. This finding is consistent with the observable pressure and demands on faculty’s time between clinical duties, committee work, teaching, mentoring, producing scholarly work, securing grants, and their research, to name a few.

Within the literature, and anecdotally, there exist at least six root causes of researchers’ regulatory knowledge gaps (AAMC, 2016; Bozeman and Youtie, 2020; Decker et al., 2007; National Science Board, 2014; Nichols and Wynes, 2018; Silberman and Kahn, 2011; Wimsatt et al., 2009).

First, researchers may lack capacity and rely on IRB staff to inform them about specific regulatory issues or direct their attention when needed.

Second, knowledge gaps are just that: disparities in experience or understanding. Trained research administrators cannot (and should not) expect researchers to have the same depth of knowledge on the regulations or internal standard operating procedures as they have.

Third, researchers may not know where to seek education or assistance, even when they recognize a knowledge gap. This could create a perception of lack of IRB support, even though multitudinous resources exist.

Fourth, regulations and requirements can change frequently. When policies, procedures, rules, and regulations change faster than the research community can absorb them, knowledge retention suffers as a result.

Fifth, research-related administrative tasks are regularly delegated by the Principal Investigator to a research assistant or research coordinator. Frequent turnover in such roles can create barriers to retaining legacy knowledge.

Sixth, some seasoned researchers do understand and follow IRB policies and procedures with near-perfect accuracy; however, there can be specific, nuanced, complex, and highly technical areas that fall outside of the existing IRB knowledge base (e.g. reliance agreements, international data privacy regulations).

Given that top-down regulatory burden reform from the federal government is unlikely to occur, it is prudent for research institutions to address some of these burdens by providing regulatory support and promoting compliance in innovative ways. In their critique of regulatory-only education for researchers, Geller et al. (2010) report that training researchers on how to conduct ethical research may be a more practical focal point for their learning, especially if regulatory issues can be handled by the IRB.

“The abundance of rules can distract conscientious investigators from focusing on the ethical underpinnings of the regulations. . . [Researchers understand that] attention focused on compliance does not mean that the ethical underpinnings of the regulations are less important. . .Although compliance is important for both investigators and the IRB, there is confusion about the relative attention that each party should pay to it” (p. 1300).

The roles of research administrators & IRB professionals

Research administrators

The research enterprise is an intricate environment with numerous interrelated and overlapping functions, priorities, and complexities. Most research-supporting organizations include centralized research administration offices designed simultaneously to support and implement innovative and transformational research, generate and secure research funding, establish research collaborations and partnerships (both domestically and internationally), and train the next generation of research scholars (Figure 2).

Figure 2. Research administration compliance areas (adapted from University of Texas at Austin, 2018).

Staff employed within research administration offices are responsible for ensuring regulatory compliance, administering funding, establishing research oversight policies, managing conflicts of interest, responding to research misconduct, and promoting the responsible conduct of research. As such, research administrators must have an extensive body of specialized expertise required to meet the demands of the field (Atkinson et al., 2007; Wagonhurst, 2002).

A critical component of research administration is the need to navigate relationships and interactions with an extremely broad constituency within the research community (faculty, scientists, senior leadership, students, and others), and to do so while being mindful of social roles and professional culture within the institution (Atkinson et al., 2007; Greenwood, 1957). Atkinson et al. (2007), quoting Hensley (1986), affirmed that “research support personnel are essential. . .to the achievement of the specific missions of postsecondary institutions. . .yet this vital group’s value to science is largely unrecognized [in the literature]” (p. 1).

Due to this inextricable relationship, the interaction between research administrators and the research community must be collaborative, cooperative, and complementary. Wimsatt et al. (2009) and Ross (1990) agree, respectively, that “developing and maintaining effective partnerships between faculty and research administrators is a critical issue if both are to be in a position to do their best work” (p. 74) and that these relationships are “a key variable in determining the success of an organization’s research endeavor” (p. 21).

One key challenge for research administrators is to manage the differing priorities between their responsibilities and the research community’s interests. Ross (1990) identified that:

“. . .administrators are often the messengers, monitors, and enforcers of regulations; thus, they are the most convenient targets for the ire that researchers may. . .direct at the regulations and regulators. The challenge to those who implement regulations at the institutional level is to ensure compliance in a way that does not unduly interfere with the. . .research by the investigator. The task is to serve the needs of the investigators by creating an institutional environment that will help the investigator meet [their] regulatory obligations” (pp. 19–20).

Serving the needs of the researchers is paramount and a required part of research administration work. Research administration teams devote countless hours to education, training, guidance, and support services. However, one pressing concern for research administrators, specifically within the IRB, is to ensure that those efforts are fruitful and sustainable. IRBs need efficient pedagogical approaches for researchers that will yield high quality IRB applications.

IRB professionals

In the book Regulating Human Research, Babb (2020) describes the seemingly overnight professionalization of IRB work. This shift was an adaptive response to the rapid expansion of the research enterprise in the 1990s, and the concurrent need for professionals with regulatory and ethical expertise to review that research. Volunteer faculty and clinicians who loosely applied the regulations and produced sparse documentation were no longer adequate in these roles. Organizations began investing heavily in full-time IRB staff with advanced credentials. Growth in the field promised fulfilling careers ahead:

“There was a growing sense that mastering the regulations was too important to leave to the amateurs. . .Whereas filing paperwork could be carried out by clerical staff, correctly interpreting what documents regulators would want to see in an audit required considerably more skill. Not only were the regulations complex and ambiguous, but they were fragmented and inconsistent. . .As regulatory agencies issued new guidance. . .it created yet another level of esoteric complexity in need of expert interpretation” (Babb, 2020, pp. 36–37).

During this time, IRB professionals emerged as skilled regulatory and compliance experts who enhanced IRB operations and audit trails. The introduction of the Certified IRB Professional (CIP) credential elevated and validated the higher-level competencies required of IRB staff. The establishment of the field’s professional organization, Public Responsibility in Medicine and Research (PRIM&R), created a community of practice and legitimized the occupational identity of IRB professionals across the U.S.

The role of the IRB: IRB application process and review

A central criticism of IRBs throughout the literature has been their lack of transparency and failure to pair clarifications with a corresponding regulation or ethical position (Binik and Hey, 2019; Friesen et al., 2019; Lynch, 2018; Scherzinger and Bobbert, 2017). Researchers may see these kinds of opaque requests as a scrutinizing fishing expedition, or worse, research administration writ large as a burden and a barrier. Instead, researchers expect IRB review that is supported by clear explanations and regulatory standards. IRBs have historically had a reputation of hiding behind a veil of anonymity, not providing a rationale or justification for their decisions, and seeking arbitrary revisions that fundamentally change the scope of the research (Hamburger, 2004; Klitzman, 2012; Lynch, 2018; Resnik, 2021). While this view of IRBs is gradually shifting, lingering frustration caused by vague IRB directives can strain relationships and cause mistrust between researchers and the IRB, and potentially compromise research compliance as a result. IRBs that positively and proactively address these stereotypes can gain trust and increase confidence within their research communities.

To IRB professionals, ensuring compliance with federal regulations and ethics principles is a straightforward component of their day-to-day work. However, to researchers, these nuances are not so apparent and can be daunting. This disconnect is the source of most tension within the IRB application and review process: IRB professionals see the regulations as instructive, whereas researchers see them as unclear and convoluted.

Much of the established literature calling for clearer standards of evidence for IRB quality and decision-making makes little or no mention of the caliber of the IRB application submitted by the researcher. Resnik (2021) provides a helpful definition: “A standard of evidence for an IRB would be [a] rule or guideline concerning the type or amount of evidence that is needed to make an approval decision” (p. 429). In applying Resnik’s definition, it is clear that the “amount of evidence needed to make an approval decision” lies squarely within the IRB application. Resnik asserts that a high-quality IRB application is the standard being pursued. What remains unclear is the “rule or guideline” IRBs use to codify their standards. This could be resolved by operationalizing what is meant by “quality” or “good” IRB applications.

The concept of quality

Ground-laying research on quality

Much of the established literature on quality is rooted in industry: consumer-focused businesses, goods production, service sectors, consumer markets, economic modeling, manufacturing, agriculture, telephone/technology networks, and global marketplaces. Quality sub-disciplines began to emerge throughout the 1950s–1980s, including W. Edwards Deming’s management focus on statistical process control; Joseph Juran’s seminal work, the Quality Control Handbook; Armand Feigenbaum’s influential quality management book, Total Quality Control; and Philip Crosby’s “zero defects” approach to quality, which laid the groundwork for the landmark quality management framework, Six Sigma (Avci, 2017a; Chandrupatla, 2009; Reeves and Bednar, 1994).

Empirical research on quality has focused principally on the healthcare field, where healthcare communities, providers, insurers, and federal assistance programs have sought to use clinical measures, including emergency care, hospitalizations, and re-admission rates, to determine the quality of patient care (Codman, 2013; Donabedian, 1985, 1988). In addition to the medical field, the nature of quality of higher education is emergent in the empirical literature. However, the groundwork is relatively new (beginning in the 1970s) and is often studied in combination with the cost of education, institutional rankings, attrition and retention, and campus demography. In other words, defining quality in academia is fragmented among many competing interests including, but not limited to, teaching and curriculum development, learning and academic mastery, resource allocation, job/workforce preparation, and progressing research, discovery, and innovation (Green, 1994; Harvey and Green, 1993).

Initial attempts to define quality

One commonality found throughout the literature is the initial attempt to utilize the broadest definition of quality available: “degree of excellence” (Merriam-Webster Dictionary, 2021), and the swift rejection of this definition as too abstract, immeasurable, and impractical. Further, where the concept of quality is dependent on human contact and interpersonal interactions, there is even more complexity and no monolithic definition seems to fit.

Unanimously, the published literature agrees that the concept of quality is domain-specific, stakeholder-relative, and contextually bound. From service encounters, to customer satisfaction, to precision medicine, “quality” corresponds to the stakeholder’s goals, needs, expectations, and standards (Avci, 2017a; Donabedian, 1988; Harvey and Green, 1993; Reeves and Bednar, 1994). Reeves and Bednar (1994) aptly state:

“A search for the definition of quality has yielded inconsistent results. Quality has been variously defined as value (Abbott, 1955; Feigenbaum, 1951), conformance to specifications (Gilmore, 1974; Levitt, 1972), conformance to requirements (Crosby, 1979), fitness for use (Juran et al., 1974, 1988), loss avoidance (Taguchi, cited in Ross, 1989), and meeting and/or exceeding customers’ expectations (Gronroos, 1983; Parasuraman et al., 1985). Regardless of the time period or context in which quality is examined, the concept has had multiple and often muddled definitions and has been used to describe a wide variety of phenomena” (p. 419).

An interdisciplinary query of the literature on quality confirms that this position remains true.

Quality frameworks analogous to human participant research

As there are no precise definitions of IRB application quality in the existing literature, seeking an analog from other disciplines is the next best option, at least until the study of IRB application quality frameworks expands. The business, healthcare, and education sectors’ definitions of quality are useful benchmarks, but they are not close enough comparators to the quality of research applications or to the work of IRBs.

There are three papers in the available literature that approximate models of quality relevant to the questions posed in this paper. As there is not an exact or beneficial match, only a brief summary of each is provided here:

Avci’s (2017b) paper on defining the quality of bioethics education offers a validation of the state of the literature. Recognizing the same shortage within the extant literature, Avci’s work also draws on other disciplines studying quality, and helpfully corroborates the applicable antecedent examples of quality.

Pech et al.’s (2007) work attempts to provide practical guidance to researchers, but emphasizes instead how researchers should anticipate the IRB’s regulatory concerns. Even if researchers increased their regulatory literacy (as much of the literature discussed in this paper suggests they should), this overbroad advice could skew researchers’ expectations by oversimplifying success and misdirect their efforts by wagering on regulatory solutions. Expecting IRB opposition is problematic because it primes researchers toward anticipating obstacles. Increasing one’s understanding of research ethics and federal regulations should be rooted in protecting research participants, not failure avoidance. A more positive approach to the IRB application experience is necessary, and this includes the valuable learning that comes from trial and error.

Sieber and Baluyot’s (1992) paper is a short survey-based research study which, despite its brevity, appears to be the only work to explicitly define IRB application quality in the presently available literature (Figure 3). The authors’ definition of quality is limited though: it is only briefly mentioned, is not explored in any great detail, and focuses on low-quality protocols, not high-quality protocols (as this paper does).

While the Avci (2017b), Pech et al. (2007), and Sieber and Baluyot (1992) pieces are proximal to assessing IRB application quality, large gaps remain in what is understood about what a high-quality application looks like, why it matters, the barriers and bridges researchers face in producing quality applications, and how IRBs can effectively promote and support the production of quality applications.

Why the lack of a definition of quality is a problem for practice

Federal regulations establish the required criteria for approval of the conduct of research with human participants (45CFR§46.111). The IRB cannot approve a proposed research study that does not satisfy these criteria. Even so, the regulations do not prescribe how research proposals are submitted to the IRB, nor do they codify the components of a standard IRB application.

Each IRB is required to maintain written operational procedures describing its recordkeeping and decision-making, but can exercise its discretion on the precise mechanism for IRB application submission. Application templates created by IRBs provide researchers with a functional framework for describing and structuring their research studies. Such templates are the closest approximation of a roadmap, as they request information the IRB believes to be the most useful and compelling in satisfying the required regulatory criteria for approval.

Despite the head start provided by the regulations and supported by templates, preparing a quality IRB application remains a challenge. As stated, there are significant gaps in what we know, empirically and descriptively, about how to define application quality. To that end, a more nuanced and practical understanding is needed of what both IRBs and researchers consider to be a successful IRB application experience.

Future opportunities

Sociologist William Bruce Cameron wrote: “Not everything that can be counted counts, and not everything that counts can be counted” (Cameron, 1963: 13). Such is the tension between downstream effects and upstream solutions for a challenge like defining IRB application quality. The downstream effects of a poor-quality IRB application are evident, serious, and potentially innumerable (e.g. inadequate risk mitigation, misrepresented study procedures). Upstream impacts are largely invisible and can thus seem less urgent (e.g. avoiding noncompliance, acceptable turnaround times). However, characterizing quality is urgent. Without it, two consequences are guaranteed: (1) IRBs will continue reacting to downstream problems that result from poor quality rather than proactively seeking mitigation upstream and (2) researchers will continue to perceive the IRB as obstructive, regard their interactions as negative, and find the application process confusing.

For too long, issues of quality and education have been insufficiently researched. IRBs have been forced to innovate flexible and creative approaches to their work where the regulations are noticeably silent. A definition of IRB application quality, and how to accomplish it, cannot afford further delay. Empirical investigation is needed to query, design, and evaluate standards for IRB application quality. An in-depth examination of this topic also has the potential to inform educational strategies that can meaningfully facilitate quality IRB application preparation. This type of evidence-based approach would ensure IRBs are better positioned to assess the regulatory, ethical, scientific, and administrative requirements of a proposed study, to strengthen the research-practice nexus at their organization, and ultimately, protect the rights of research participants.

Footnotes

Acknowledgements

I have no acknowledgments to declare.

Declaration of conflicting interest

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.

Ethics approval

Research ethics approval was not required for this study.