Abstract

The primary purpose of Institutional Review Boards (IRBs) is to protect the rights and welfare of human research participants. Evaluation and measurement of how IRBs satisfy this purpose and other important goals are open questions that demand empirical research. Research on IRBs, and the Human Research Protection Programs (HRPPs) of which they are often a part, is necessary to inform evidence-based practices, policies, and approaches to quality improvement in human research protections. However, to date, HRPP and IRB engagement in empirical research about their own activities and performance has been limited. To promote engagement of HRPPs and IRBs in self-reflective research on HRPP and IRB quality and effectiveness, barriers to their participation need to be addressed. These include extensive workloads, limited information technology systems, and few universally accepted or consistently measured metrics for HRPP/IRB quality and effectiveness. Additionally, institutional leaders may have concerns about confidentiality. Professional norms around the value of participating in this type of research are lacking. Lastly, obtaining external funding for research on IRBs and HRPPs is challenging. As a group of HRPP professionals and researchers actively involved in a research consortium focused on IRB quality and effectiveness, we identify potential levers for supporting and encouraging HRPP and IRB engagement in research on quality and effectiveness. We maintain that this research should be informed by the core principles of patient- and community-engaged research, in which members and key stakeholders of the community to be studied are included as key informants and members of the research team. This ensures that relevant questions are asked and that data are interpreted to produce meaningful recommendations. As such, we offer several ways to increase the participation of HRPP professionals in research as participants, as data sharers, and as investigators.

The primary purpose of Institutional Review Boards (IRBs) is to protect the rights and welfare of research participants. Evaluation and measurement of how IRBs satisfy this purpose, and address other important goals including promoting justice, fostering an ethical research culture at their institutions, and maintaining public trust in research (Lynch et al., 2019), are open questions that demand empirical research. Research on IRBs, and the Human Research Protection Programs (HRPPs) of which they are often a part, is necessary to inform evidence-based practices, policies, and approaches to quality improvement in human research protections (Anderson and DuBois, 2012; Sieber, 2009).

Research questions that are relevant to IRB and HRPP effectiveness (how well they achieve their intended outcomes) and quality (how fairly, consistently, and efficiently they do so) are wide ranging. No single research question can encompass the complete picture of how well any IRB or HRPP operates, let alone how well the system is doing as a whole, but individual questions can help to address important pieces of the puzzle. For instance: Are research participants satisfied with their experience? Do they understand the information conveyed during the consent process? Do they experience avoidable harms? Do researchers find that the review process improves their capacity to protect the rights and welfare of participants? Are expert reviewers engaged in substantive deliberation about regulatory and ethical standards? Do they provide support and justification for their decisions in a manner that enhances understanding of human research protections? Additional examples abound.

In addition to conceptual and definitional challenges in evaluating IRB and HRPP quality and effectiveness, there are layers of practical challenges and barriers to studying these matters empirically (Abbott and Grady, 2011; Berry et al., 2019; Coleman and Bouësseau, 2008; Lynch et al., 2021; Nicholls et al., 2015). For research on IRBs and HRPPs to be valid, reliable, generalizable, and, most importantly, used to improve practice, it must include IRBs and HRPPs that oversee all types of research, at institutions of all sizes and types, and across geographic areas. Additionally, those IRBs and HRPPs must be committed to sharing information and conceptual insights for research. More specifically, organizational leaders must be willing to participate in formative research about how best to define and measure both quality and effectiveness. They must also provide access to relevant staff, board members, investigators, and research participants so that their perspectives and experiences can be directly examined through surveys, interviews, and other methods; gather and share policy documents, existing metrics, and performance data; and pilot and test new interventions aimed at improving IRB and HRPP quality and effectiveness. In addition, sufficient funding and resources are needed to support all of the above.

Ultimately, IRBs and HRPPs should be viewed as part of and subject to the scientific process, rather than standing apart from it. To date, however, HRPP and IRB engagement in empirical research about their own activities and performance has been limited, as reflected in our experience and in the literature (Klitzman et al., 2020; Lidz et al., 2012). For example, survey response rates among HRPP professionals (we use this umbrella term to include HRPP/IRB staff members, IRB chairs, board members, and others involved in research oversight) are often low, as is participation in research that aims to collect data on IRB practices and decisions (Clapp et al., 2017; Taylor et al., 2021). There have also been few rigorous studies testing potential research oversight policies or applied interventions that could inform future practice (Lynch et al., 2020; Nicholls, 2017; Resnik, 2015). This is not to say that HRPPs and IRBs are not engaged in extensive quality improvement efforts; they are (Lynch et al., 2021; Serpico, 2021). Rather, the concern is that these efforts are often limited to individual institutions and programs instead of being conducted as collaborative, systematic research to inform research ethics oversight more broadly.

To promote more robust engagement of HRPPs and IRBs in self-reflective research on HRPP and IRB quality and effectiveness, several barriers to their participation need to be addressed. First, HRPP/IRB staff members already face extensive workloads, and IRB chairs and board members are typically volunteering their time or fulfilling faculty service requirements. As a result, relevant quality and effectiveness research is often squeezed in around the margins rather than being viewed as a core component of HRPP and IRB work. At the same time, the information technology systems used to manage research protocol reviews may not be designed to easily facilitate the sort of data collection and sharing that could meaningfully support research on HRPP/IRB quality and effectiveness. Second, there are few universally accepted or consistently measured metrics for HRPP/IRB quality and effectiveness, making it challenging to compare data across programs or to assess the impact of policy or practice changes. Third, institutional leaders may have concerns about confidentiality risks to researchers, sponsors, IRB members and HRPP staff, and the institution that might arise from externally sharing information needed for this research. They may also have other legal and reputational concerns about sharing details of human research protection program quality, quality measures, and performance. Fourth, professional norms around the value of participating in this type of research are lacking. There is no regulatory requirement to do so, and, although quality measurement is an expectation for HRPP accreditation, that expectation is focused on individual organizations. As a result, institutional leaders may not value research on HRPP/IRB quality and effectiveness, rendering it a low priority. Lastly, despite the central role of HRPPs and IRBs in the research enterprise and the broad relevance of their quality and effectiveness, obtaining external funding for research on IRBs and HRPPs is challenging, which further limits the ability to conduct sector-wide studies.

Resolving these challenges is the central mission of the Consortium to Advance Effective Research Ethics Oversight (AEREO, www.aereo.org), which aims to improve HRPP/IRB quality and effectiveness by defining relevant outcomes, empirically evaluating them, and informing evidence-based policies and best practices. As a group of HRPP professionals and researchers actively involved in AEREO, here we identify potential levers for supporting and encouraging HRPP and IRB engagement in research on HRPP/IRB quality and effectiveness in response to the key barriers outlined above. Fundamentally, we maintain that this research should be informed by the core principles of patient- and community-engaged research, in which members and key stakeholders of the community to be studied are included as key informants and members of the research team in order to ensure that relevant questions are asked and that data inform recommendations meaningfully (Clinical and Translational Science Awards Consortium Community Engagement Key Function Committee Task Force on the Principles of Community Engagement, 2011; Frank et al., 2015). As such, we offer several ways to increase the participation of HRPP professionals in research as participants, as data sharers, and as investigators. Based on our experience with AEREO, we recommend and describe what a community-engaged approach to research on HRPP/IRB quality and effectiveness can look like. Such an approach could facilitate research on HRPPs and IRBs and positively impact empirical research on research ethics issues more broadly. Additionally, this approach has the potential to decrease barriers to conducting embedded research ethics studies, such as on innovations in informed consent.

Five levers for supporting and encouraging participation in research on HRPP/IRB quality and effectiveness

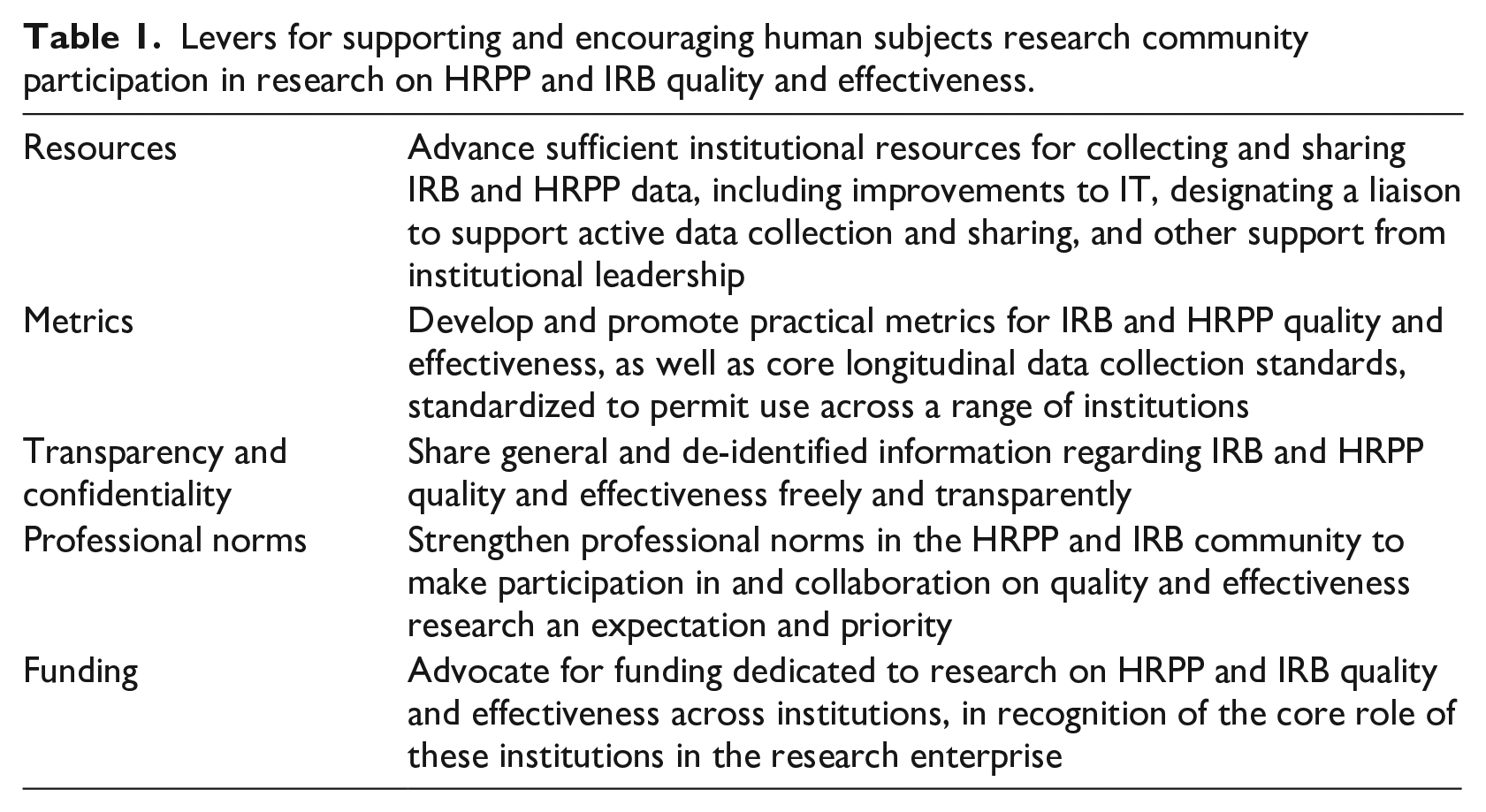

To address the barriers identified above, cooperation is needed from HRPPs, institutional leaders, professional organizations, and those conducting research on IRBs. In particular, they must work together to: (1) secure sufficient institutional resources for collecting and sharing IRB and HRPP data; (2) develop and promote practical metrics (performance indicators) for IRBs and HRPPs that are standardized as far as possible given different institutional features and portfolios; (3) clarify appropriate protections for the confidentiality of investigators, IRBs, and institutions; (4) strengthen professional norms regarding research participation; and (5) advocate for increased funding dedicated to research on HRPP/IRB quality and effectiveness (Table 1).

Table 1. Levers for supporting and encouraging human subjects research community participation in research on HRPP and IRB quality and effectiveness.

Institutions should provide sufficient support and resources for collecting and sharing data

Research on HRPP/IRB quality and effectiveness requires defining the outcome measures that relate to quality and effectiveness and then identifying metrics that reasonably relate to these outcomes. To this end, such research also requires gathering information about HRPP and IRB policies, procedures, and practices, as well as the data that these bodies collect for their own operations and compliance purposes (e.g. meeting minutes, adverse event reports, and investigator and participant complaints). However, such information may be time consuming to collect, difficult to de-identify or anonymize, and challenging to access in a way that allows for easy retrieval, analysis, and sharing (He et al., 2015; Lynch, 2018). IRB protocol management systems are set up to facilitate protocol review and manage workflows, not necessarily to facilitate research. Most do have tracking and reporting capabilities, but they can require custom-built record queries that involve manual manipulation and cleaning before the data can be meaningfully used. For example, most electronic submission systems do not allow for a simple query of all decision letters to investigators conducting greater than minimal risk research with children, or of all protocols requesting waivers of or alterations to informed consent. While collecting and collating this kind of information would be informative for understanding the extent to which IRB review identifies substantive ethical concerns and requires modifications, at most institutions doing so would require a significant number of manual person-hours, which HRPP staff members and the IT departments that support them typically cannot spare. From a technological standpoint, such challenges may be easily fixable by improving the search capacities and other features of IRB software to allow more flexibility in data retrieval, and investing in software solutions could decrease the burden on human resources in the long term. However, changes to IRB software take time to implement and may entail new costs, training requirements, the hiring of technical developers, and other inconveniences that may lead relevant stakeholders to resist them.
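To make concrete what such a "simple query" could look like if protocol records were stored in a structured, research-ready form, the following Python sketch may help. It assumes a hypothetical export in which risk level, involvement of children, and consent waiver or alteration requests are recorded as discrete fields rather than buried in free text. The `ProtocolRecord` class, its field names, and the sample records are illustrative assumptions only; they do not describe the schema of any actual IRB software product.

```python
from dataclasses import dataclass, field
from typing import List


# Hypothetical, simplified protocol record. Field names are illustrative
# assumptions and do not reflect any particular IRB software product.
@dataclass
class ProtocolRecord:
    protocol_id: str
    greater_than_minimal_risk: bool
    involves_children: bool
    consent_waiver_or_alteration: bool
    decision_letters: List[str] = field(default_factory=list)


def pediatric_gtmr_letters(records: List[ProtocolRecord]) -> List[str]:
    """Collect all decision letters for greater than minimal risk research with children."""
    return [
        letter
        for r in records
        if r.greater_than_minimal_risk and r.involves_children
        for letter in r.decision_letters
    ]


def consent_waiver_protocols(records: List[ProtocolRecord]) -> List[str]:
    """List IDs of protocols requesting a waiver or alteration of informed consent."""
    return [r.protocol_id for r in records if r.consent_waiver_or_alteration]


if __name__ == "__main__":
    # Made-up sample records for illustration only.
    records = [
        ProtocolRecord("P-001", True, True, False, ["Approved with modifications: revise assent form"]),
        ProtocolRecord("P-002", False, False, True, ["Approved"]),
        ProtocolRecord("P-003", True, False, True, ["Deferred: clarify data monitoring plan"]),
    ]
    print(pediatric_gtmr_letters(records))
    print(consent_waiver_protocols(records))
```

The point of the sketch is not the code itself but the data design: once these attributes are captured as structured fields at submission, each query becomes a one-line filter rather than hours of manual record review.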

Beyond passive data collection from submissions, decisions, and other records, responding to surveys or other requests that ask for specific information (e.g. reasons for requiring modifications on specific types of protocols) requires identifying and delegating someone to do the work. However, invitations to participate in research on HRPP/IRB quality and effectiveness may be overlooked when the appropriate respondent is not self-evident or is not the person who receives the initial invitation to participate. To address this challenge, HRPPs with the available resources should consider designating a research liaison who is clearly identified on the institution’s website. Elevating research participation as part of an HRPP professional’s job duties communicates the value of the labor involved and the institutional commitment to evidence-based practice. This role could be filled by a variety of people, such as a member of HRPP staff with research skills or appropriate training, a faculty member with an IRB appointment, or a graduate student or post-doc in a relevant field such as bioethics, education, or organizational psychology. Indeed, designating a research liaison is a good way to take advantage of the fact that many HRPP professionals have research backgrounds or are working toward advanced degrees in relevant fields.

Resources to support research engagement broadly, and data sharing in particular, may be limited because the institutional leaders who oversee budgets for HRPPs, define their priorities, and create their job positions may not recognize that involvement in such research can enhance HRPP and IRB functioning and is critical to advancing the field. As Klitzman (2012) suggests, transparent data sharing and dedication to quality improvement efforts could also generate “good PR” for IRBs that want to reduce perceptions of inaccessibility and ambiguity (Klitzman, 2012; Klitzman et al., 2020). Leaders need to see the value of, and be willing to invest in, the human capital and information technology support critical to developing protocol submission and workflow systems that also facilitate data retrieval and sharing. To be sure, there is wide variability across institutions in the resources already devoted to HRPPs/IRBs, as well as differences in staff and board size and protocol volume. We call on all HRPPs to consider how they can be more engaged in research on HRPP/IRB quality and effectiveness, while acknowledging that this will be more challenging for smaller institutions and those with fewer resources.

Professional organizations should help develop and promote practical metrics (performance indicators) for IRBs that are standardized to the extent possible

In the human research protection and oversight context, there is limited agreement regarding which outcome measures reflect or correlate with HRPP/IRB quality and effectiveness (Lynch et al., 2021; Nicholls et al., 2015). In part, this is because many of the harms that IRB review aims to prevent, such as research with undocumented informed consent, are rare or not always discovered, while others, such as inadequate informed consent, may be quite subjective, with IRBs exercising judgment about whether ethical standards have been sufficiently met. It has also been argued that a large part of the protection provided by IRBs and HRPPs lies in their mere existence, which encourages researchers to think through ethical challenges and discourages them from exploiting participants (London, 2022).

Even in the absence of comprehensive quality and effectiveness measures, prior AEREO work has emphasized efforts to measure elements relevant to participant outcomes, meaningful board deliberation, and procedures most likely to identify and mitigate risks to participants (Lynch et al., 2020), such as the quality of the consent process, robust assessment of adverse events, and overall participant experience (Lynch et al., 2021). Yet when each HRPP and IRB takes a customized approach to these factors and others, both in the metrics used and the points at which they are collected, it becomes difficult to make comparisons between institutional practices and their impact on IRB quality (Resnik, 2020; Serpico, 2021; Tsan, 2019). For example, consider attempting to evaluate the impact of 2018 changes to the U.S. Common Rule that eliminated the requirement for annual continuing review for some studies. This would require that HRPPs track which studies remain under the old Rule and which transitioned to the 2018 Common Rule (Berry et al., 2019; Nicholls, 2017). Even accreditation standards offer substantial discretion to organizations when specifying their approaches to quality measurement (Lynch and Taylor, 2022). There is no consensus among HRPP professionals regarding standards for minimum data that an HRPP/IRB should collect or definitions of key concepts relevant to quality such as justice, ethical culture, public trust, participant satisfaction, etc. (Serpico, 2021).
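As a hypothetical illustration of how customized metric definitions can undermine comparison, the Python sketch below computes "turnaround time" for the same protocol under two plausible definitions: total calendar days from submission to approval, and days the protocol spent with the IRB, excluding time spent waiting on investigator revisions. Both definitions and all dates are illustrative assumptions, not established standards or real data.

```python
from datetime import date

# Hypothetical event timeline for a single protocol (illustrative dates only).
submitted = date(2023, 3, 1)
returned_to_investigator = date(2023, 3, 15)  # IRB requests modifications
resubmitted = date(2023, 4, 14)               # investigator responds
approved = date(2023, 4, 21)


def calendar_turnaround(submitted: date, approved: date) -> int:
    """Definition A (assumed): calendar days from initial submission to approval."""
    return (approved - submitted).days


def irb_held_turnaround(submitted: date, returned: date,
                        resubmitted: date, approved: date) -> int:
    """Definition B (assumed): days the protocol sat with the IRB,
    excluding the time it was back with the investigator."""
    return (returned - submitted).days + (approved - resubmitted).days


print(calendar_turnaround(submitted, approved))  # 51
print(irb_held_turnaround(submitted, returned_to_investigator,
                          resubmitted, approved))  # 21
```

Two programs reporting turnaround times of 51 and 21 days could therefore be describing an identical review. Agreeing on a shared definition, or at least reporting the method of calculation alongside the figure, is what makes such numbers comparable across institutions.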

Leadership is needed to define and promote a collection of standardized metrics that are correlated with outcome measures of quality and effectiveness. In addition to AEREO’s ongoing relevant work in this domain (Lynch et al., 2020; Lynch et al., 2021; Lynch and Taylor, 2022), professional and accrediting organizations supporting the research oversight community, such as PRIM&R (Public Responsibility in Medicine & Research) and AAHRPP (Association for the Accreditation of Human Research Protection Programs, Inc.), are well-positioned to provide such leadership, as are federal agencies such as the Office for Human Research Protections (OHRP) and the National Institutes of Health (NIH). At a minimum, they could initiate efforts to conduct research on the specific metrics IRBs currently collect, how they are collected, and for what purposes, thereby supporting efforts to establish more uniform measurement, identify measures that should be preferred, and, ultimately, encourage their uptake. In addition, as Fernandez Lynch and Taylor (2022) note, metrics are typically envisioned as quantitative, but it could also be useful to consider “qualitative assessments that encourage organizations to broadly consider ‘how things are going’” in relevant quality domains, since “examination is better than ignoring important elements of quality simply because we are not sure precisely how to score them” (p. 11).

Researchers should ensure sufficient confidentiality protections, and the default position of institutions should favor transparency

Research on quality and effectiveness requires that IRBs and HRPPs allow themselves to be studied and observed by outsiders. The general lack of transparency about IRB decision making and processes is well documented (Lynch, 2018; Stark, 2011), but there are often good reasons for keeping IRB meeting discussions and decisions confidential. For instance, it is important to protect participants’ privacy, ensure open and frank discussion of potential concerns regarding submitted protocols, protect proprietary information and intellectual property, preserve investigators’ reputations, and maintain peer review privilege in litigation. HRPP professionals and institutional leaders may also be concerned that sharing information for research purposes might make them or the institution more vulnerable to criticism, jeopardize their employment, expose compliance issues, prompt an audit of their IRB, or even create liability (Lynch, 2018).

When addressing these concerns, it is critical to distinguish between general and protocol-specific information, as well as between identifiable and de-identified information. General information, such as HRPP and IRB policies; templates for submissions; review checklists; number and types of protocols reviewed annually; turnaround times and methods of calculation; satisfaction surveys used with participants, investigators, and board members, and aggregate results; staff leadership names and contact information; and similar details, should be posted and shared with few, if any, restrictions. Although this information could raise reputational concerns, those concerns should not be deemed serious enough to inhibit research on HRPP/IRB quality and effectiveness or to outweigh the value of the data gathered.

For information about specific protocols and related IRB decisions, we can differentiate between identifiable and de-identified materials. It is reasonable for identifiable materials to be more restricted, but it is also reasonable to expect materials to be de-identified when possible. This might include removing identifiers about the institution, investigator, sponsor, IRB reviewers, or others to minimize reputational concerns. This sort of de-identification could be resource-intensive for institutions, depending on the materials in question, but it may also help them meet confidentiality requirements. If standards similar to those for maintaining the confidentiality of human research data are followed, institutional leaders and HRPP professionals should be able to trust that shared materials will be adequately protected. We also note that some states make IRB records subject to public records requests, reflecting a commitment to transparency that should be explored more broadly to support research on HRPP/IRB quality and effectiveness. Overall, more work is needed to determine precisely which IRB/HRPP materials (with which details) would raise genuine legal concerns if shared outside the institution for research purposes. But rather than assume a position in which materials will not be shared, we recommend the alternative default: sharing as much as possible to support research on HRPP/IRB quality and effectiveness unless there is a clear, strong reason not to do so.

An important benefit of this default toward sharing is that engagement of more IRBs and HRPPs in research, either as contributors of data in observational studies or as sites in interventional studies, not only increases generalizability but also strengthens the confidentiality of published data, making it harder to identify institutions, sponsors, investigators, participants, and others. Collaborative research that gathers data from large numbers of institutions can create “safe spaces” for sharing information about challenges and problems, minimize reputational risks, and encourage transparency across the field. The more institutions that participate, the lower the likelihood that any one institution can be identified. When more IRBs participate in research, this also helps normalize the sharing of performance data that may fall short of intended goals; no institution will be perfect. Sharing information for research on HRPP/IRB quality and effectiveness also demonstrates a reputation-enhancing institutional commitment to quality improvement.

Everyone committed to protecting human research participants should promote a professional norm of participation in research on IRB quality and effectiveness

Although IRBs themselves exist to support the research enterprise, there does not appear to be a strong professional norm of supporting HRPP staff members, IRB chairs, or board members in taking part in research on IRBs and HRPPs, either as participants or as investigators. This may be largely because of institutional barriers, or perceptions of and expectations regarding the role of HRPP professionals, and not necessarily because of how HRPP professionals view themselves. Indeed, AEREO has approximately 80 members from over 50 institutions, indicating significant interest within the relevant community. Historically, institutions have considered the role of HRPP professionals to be an administrative or compliance function rather than an academic one, and thus one that carries no expectation of contributing to research and scholarship. Research experience and training also vary among HRPP professionals. But with the increasing professionalization of HRPP staff roles, in particular the emphasis on continuing education for certification (e.g. Certified IRB Professional (CIP) exam, https://primr.org/cip), as well as the expansion of empirical research and scholarship on research ethics in recent decades, HRPP professionals may be better positioned to participate in and collaborate on research related to HRPP/IRB quality and effectiveness (Babb, 2020). HRPP leaders and managers can encourage and support their staff and members (e.g. through training and resources) to participate in research when opportunities arise, to initiate research projects when they have ideas, and to present and publish their research findings. Many IRB chairs and board members conduct research in their own disciplines and, given the time they already spend on IRB work, could likely also be encouraged and supported to contribute their skills and experience to research on HRPP/IRB quality and effectiveness.

Professional norms can be influenced by expectations (such as duties articulated in job descriptions), requirements (such as regulations), incentives (such as funding and other resources, or promotion criteria), and penalties (such as loss of accreditation). We think sanctions are unlikely to be helpful here, but encouragement (such as guidance from the Secretary’s Advisory Committee on Human Research Protections (SACHRP) and regulatory bodies), resources (such as the availability of research grants), and expectations (including special recognition and designating an HRPP research lead or liaison, as noted above) could help promote more widespread participation in research on HRPP and IRB quality and effectiveness, thereby changing professional norms and creating professional opportunities. Well-resourced HRPPs, as well as professional organizations and accrediting bodies such as PRIM&R and AAHRPP, respectively, can help lead the way. Ultimately, institutional leadership must make it clear to HRPP staff and members that participation in research on IRB quality and effectiveness is valuable and should therefore be prioritized within the HRPP. Formal training can better equip HRPP professionals to contribute to research efforts. More engagement of HRPP professionals in research could build trust across the field and strengthen the legitimacy of the resulting evidence base. Persistent calls to close the gap in quality and effectiveness metrics suggest that empirical work in our field could benefit from ground-level initiatives by HRPP professionals (Scherzinger and Bobbert, 2017).

Public funding for research on HRPP/IRB quality and effectiveness is needed

In the U.S., although the portfolio of bioethics-related research funded by NIH is expanding, the majority of NIH-funded bioethics research to date has focused on genomics, international bioethics capacity building, and the ethics of biomedical research (National Academies of Sciences, Engineering, and Medicine, 2020). Given that IRB review of specific protocols and the establishment of IRBs within institutions are federally mandated in the U.S., federal funds should support initiatives that aim to promote the quality and effectiveness of these endeavors.

To be sure, federal agencies and institutions have many other funding priorities. However, funding for research on HRPP/IRB quality and effectiveness is in line with broader research goals, such as ensuring that studies meet their recruitment goals and that participants are representative of the patient population in order to promote generalizability. Failing to support such research could contribute to inadequate protection of research participants and perpetuate potentially poor-quality research oversight, with significant downstream effects across the research enterprise.

A community-engaged approach to research on HRPP/IRB quality and effectiveness

Progress on each of the five levers described above can be accelerated by a community-engaged approach to research on HRPP/IRB quality and effectiveness. Hallmarks of community-engaged research include identification by affected stakeholders of the problems to be studied and outcomes to be measured, as well as an orientation toward disseminating and using findings for action and system change. Stakeholders’ perspectives are incorporated at every stage of the research, from design through implementation, and ideally stakeholder partners are included as co-investigators (Clinical and Translational Science Awards Consortium Community Engagement Key Function Committee Task Force on the Principles of Community Engagement, 2011). In addition to HRPP professionals (staff, IRB chairs, and board members), other stakeholders such as researchers, study participants, and patient advocates should also be engaged in research on IRB and HRPP quality. Here, however, we focus on engaging HRPP professionals whose primary work is research ethics review as a critical first step.

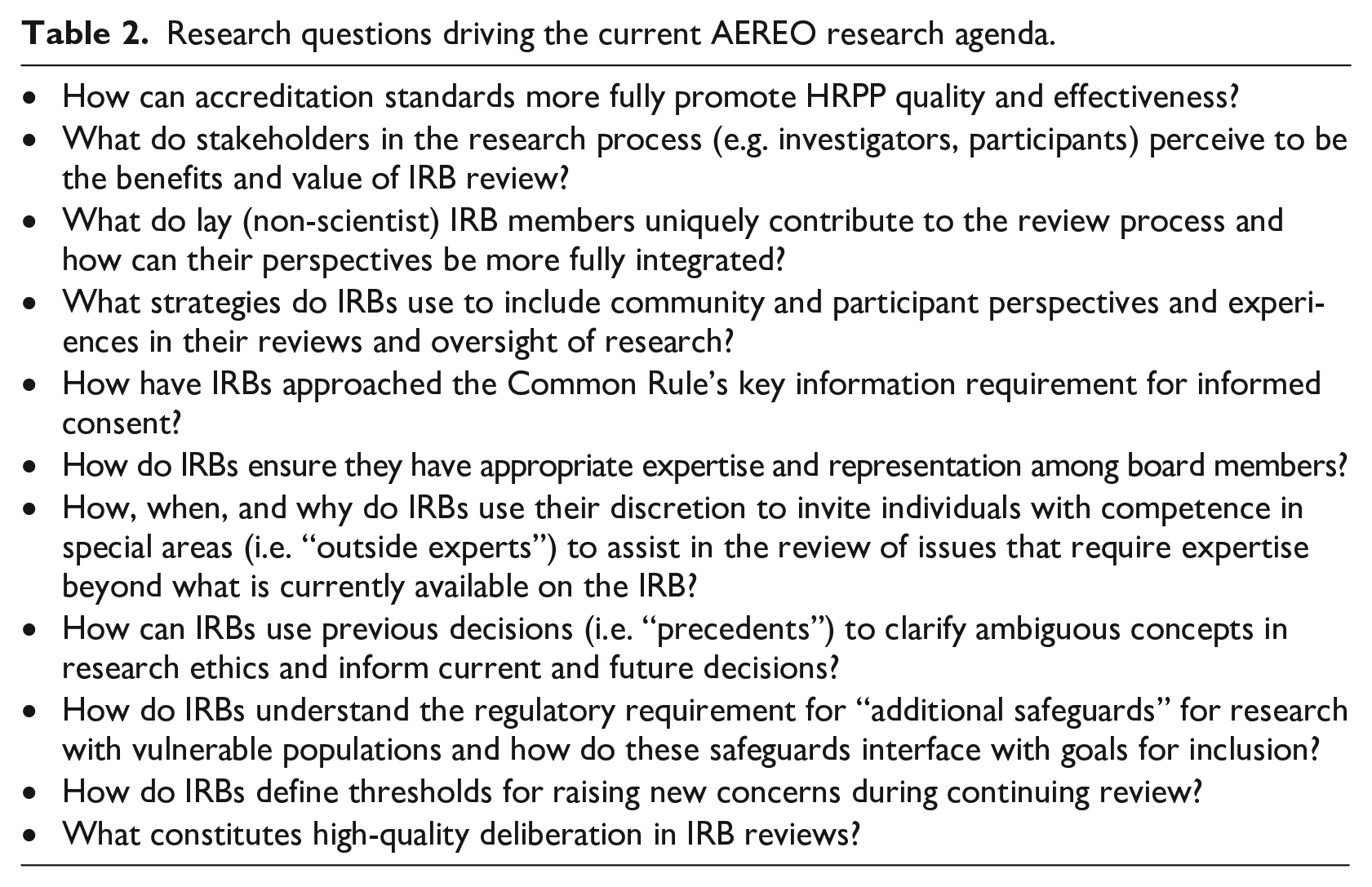

The AEREO Consortium applies the basic principles of community engagement to research on HRPP/IRB quality and effectiveness, bringing researchers/scholars and HRPP professionals from a variety of institutions together in collaboration with professional organizations like PRIM&R and AAHRPP to identify research questions, engage HRPPs at members’ institutions as sites and participants in research, and disseminate results in ways that can be applied by HRPPs to support changes in their practice. Our recommendations for increasing engagement of HRPP professionals in research come from our experience since AEREO’s founding in 2018. Any AEREO member can bring research questions and ideas for projects to the group for brainstorming. (See Table 2 for a snapshot of AEREO’s current research agenda and www.AEREO.org for a list of published research projects.) Many projects are led or co-led by HRPP professionals, including those who do not have research expectations in their job duties, but who are motivated or supported to participate in a variety of ways. Examples of AEREO projects that have been proposed and led by HRPP professionals include a pilot project to explore whether and how tracking IRB decisions to create an “institutional memory bank” could work to promote consistency (Seykora et al., 2021) and a mixed methods study of how IRBs utilize outside experts for protocol reviews (Serpico et al., 2022). Once a project is defined, regardless of the research question, method, or leadership of a particular project, our standard process involves seeking input from our HRPP professional members to determine appropriate outcomes to measure, develop and pilot data collection instruments, finalize sampling and recruitment plans, interpret results, and disseminate recommendations. As we move from data collection to developing and testing interventions, member institutions will serve as sites for interventional research.

Table 2. Research questions driving the current AEREO research agenda.

We acknowledge that our recommendations are aspirational, but we aim to stimulate discussion about how to improve research on HRPP/IRB quality and effectiveness. As we engage more institutions and more HRPP professionals, we hope to learn more about how certain barriers to research on HRPPs/IRBs (e.g. concerns about institutional privacy) can be addressed. Engaging HRPP professionals as partners in research on HRPP/IRB quality and effectiveness means they have a role in framing the questions to be asked around problems that they believe need to be addressed, and in analyzing and interpreting research findings based on their “insider” understanding of how IRBs and HRPPs work. Taking a user-centered approach to this research, with HRPP professionals engaged, fosters their buy-in for research that evaluates their work and has the potential to identify important research questions that have not been asked before. The more HRPP professionals are engaged in research on HRPP/IRB quality and effectiveness in significant and meaningful ways, the more that engagement will be supported by institutional leaders and resources. And the more HRPP professionals are recognized for their research efforts, the more they will want to participate in research. Ultimately, this will lead to more valuable research that evaluates and improves HRPP/IRB quality and effectiveness.

Acknowledgements

The authors would like to acknowledge helpful feedback from Holly A. Taylor, PhD, MPH, AEREO Co-Chair, and all AEREO consortium members for their ongoing commitment to human research protections and assessing HRPP/IRB quality and effectiveness.

Author note

Second author Elisa A Hurley declares the existence of a non-financial competing interest as she is Executive Director of Public Responsibility in Medicine & Research (PRIM&R) and several of the recommendations in the paper are directed at PRIM&R’s role as a professional membership organization for HRPP professionals.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.

Ethical approval

Not applicable.