Abstract

Qualitative fieldwork research on sensitive topics sometimes requires that interviewees be granted confidentiality and anonymity. When qualitative researchers later publish their findings, they must ensure that any statements obtained during fieldwork interviews cannot be traced back to the interviewees. Given these protections to interviewees, the integrity of the published findings cannot usually be verified or replicated by third parties, and the scholarly community must trust the word of qualitative researchers when they publish their results. This trust is fundamentally abused, however, when researchers publish articles reporting qualitative fieldwork data that they never collected. Using only publicly available information, I argue that a 2017 article in an Elsevier foreign policy and international relations journal presents anonymised fieldwork interviews that could not have occurred as described. As an exercise in post-publication peer review (PPPR), this paper examines the evidence that calls into question the reliability of the putative fieldwork quotations. I show further that the 2017 article is not a unique case. The anonymity and confidentiality protections common in some areas of research create an ethical problem: the protections necessary for obtaining research data can be used as a cover to hide substandard research practices as well as research misconduct.

Keywords

Introduction

The bestowal of a cloak of confidentiality and anonymity on interviewees allows researchers to obtain reliable qualitative data on sensitive topics. These important protections enable fieldwork researchers to gain candid responses from interviewees who are thereby free to speak without fear of reprisals. When the data from such interviews are prepared for publication, researchers should ensure that any statements made by interviewees cannot be traced back to them. Members of the scholarly community trust that the qualitative data reported under these conditions in scholarly publications are reliable, since the data set typically does not admit of verification or replication by third parties.

A violation of that trust occurs when qualitative researchers misuse the practice of confidentiality and anonymity to produce fraudulent works. Proving that a researcher has fabricated fieldwork findings under such circumstances is quite difficult. In a widely publicised case involving political anthropologist Mart Bax, the official investigating committee could only conclude that the allegations of qualitative data fraud were ‘highly plausible’ (Baud et al., 2013: 17). In the words of the committee members, ‘it has been impossible to find supporting evidence or sources’ for the basic fieldwork claims (Baud et al., 2013: 17). Bax was found to have committed scientific misconduct, and retractions have been issued for some of his works (Ferguson, 2014; Marcus, 2020). The academic community failed to recognise the problem with his research in a timely way, in part because of his use of anonymisation techniques. Bax delayed scrutiny of his research for years by claiming ‘to be protecting informants by using pseudonyms and inventing geographical names for his field sites’ (Sandberg and Scheer, 2020: 6; see also Margry, 2020).

Discrediting fraudulent data putatively collected under the conditions of confidentiality and anonymity is difficult. In this paper, I argue that a recent article published in an Elsevier foreign policy and international relations journal reports fieldwork interviews that could not have occurred as described. Using only publicly available information, I propose that the qualitative data is not reliable and that the publication of the article is a suspected violation of research and publication integrity. (I should mention that the then-Editors-in-Chief of the Elsevier journal are aware of an earlier version of the present paper and in August 2019 recommended that it be submitted for publication in a journal that considers ethics in the social sciences.) This paper is an exercise in post-publication peer review; I warn that researchers should be wary of trusting the findings and conclusions set forth in that article and argue further that this case is not an isolated one.

Post-publication peer review

Post-publication peer review (PPPR) is a general term that refers to various practices for publicly disclosing concerns about the reliability of articles belonging to the published scholarly literature. Some researchers have argued that PPPR ‘can out-perform that of the conventional pre-publication process’ in the areas of correction and fraud detection (Tennant et al., 2021). PPPR provides a crowd-sourced layer of scrutiny to the published research literature by finding errors, identifying faulty research practices and discovering cases of research misconduct that were missed by peer reviewers prior to publication.

PPPR of a given article typically occurs in a venue other than the journal that published that article. PubPeer, for example, is an online platform for PPPR that allows users to post concerns about published articles. For all postings in PubPeer, ‘facts must be restricted to those accessible to other readers’ (PubPeer, 2017). PubPeer postings not only warn researchers about potential problems with published articles but have also, in many cases, prompted editors and publishers to issue retractions, corrections and editorial notes for fraudulent or severely deficient articles (Bik, 2019). The journalists at Retraction Watch regularly report on cases of research misconduct that were first brought to light on PubPeer. The relegation of PPPR to an external online venue (such as PubPeer) means, however, that many journal readers may miss relevant comments on articles.

Some journals do support PPPR directly by publishing criticisms of articles that have appeared in their pages, and sometimes the authors of the criticised articles are invited to respond. (For recent examples, see Jung, 2019 and Dougherty, 2019a, and the authors’ replies in Lin et al., 2019 and Schulz, 2020.) These types of articles are sometimes classified as ‘refutations’. Journal-supported PPPR is the exception rather than the rule, however. Two of the founders of PubPeer have noted that ‘there is a widespread and self-fulfilling perception that journals do not welcome correspondence or comments that criticise their publications’ (Barbour and Stell, 2020: 151). They are not alone in this assessment. The authors of a recent article call for editorial courage and diligence, arguing that ‘editors are in the unique position to facilitate post-publication error correction’ but that ‘many journal editors do not fulfill this responsibility’ (Vorland et al., 2020). Some journal editors and publishers appear to operate by a loose principle termed the research incumbency rule, under which ‘once an article is published in some approved venue, it should be taken as truth’ (Gelman, 2016). Some researchers have judged the failure of journals and editors to support investigations into the quality of the articles they have published, and their failure to issue corrections in print, to be serious forms of editorial malpractice (Shelomi, 2014; Teixeira da Silva, 2016). Exercises in PPPR must sometimes appear in journals other than the ones in which the deficient articles were published (for an example, see Dougherty, 2019b and updates in Hansson, 2019b and Weinberg, 2019).

As a mechanism to improve the reliability of the published research literature, therefore, PPPR remedies two kinds of failures. First, PPPR addresses the wide range of deficient research practices that harm the quality of published research. These deficiencies range from questionable research practices (QRPs) to major acts of research misconduct, including the standard categories of fabrication, falsification and plagiarism (FFP). Second, PPPR addresses the problem that some editors and publishers fail to alert readers to faulty publications that have appeared under their aegis. Editorial inaction is a major threat to the quality of the published research literature; one of the founders of Retraction Watch has concluded that ‘it is incredibly hard to get papers retracted from the literature, or even corrected or noted in some way’ (Oransky, 2020: 141). Furthermore, ‘the suppression of self-corrective mechanisms in science has enabled a surprisingly large number of researchers to build very successful careers based upon the most dubious of research practices’ (Barbour and Stell, 2020: 152). Violation of research ethics is a major driver of increased interest in PPPR; on some accounts ‘the prevalence of misconduct varies 1%−2% and can be as high as 14%’ (Shamoo, 2019: 1).

The role of trust

Pre- and post-publication peer reviewers face many challenges when evaluating manuscripts or articles that report the completion of research activities that are not easy to verify, such as data collection, archival research or lab experiments. In short, reviewers are limited in their ability to confirm that the findings described in manuscripts or articles were obtained in the manner the authors describe. The trust that editors, reviewers and publishers extend to researchers who submit manuscripts need not be absolute or uncritical; some journals require that manuscript authors submit raw data sets, offer evidence that studies have been pre-registered, or supply copies of dated institutional review board (IRB) approval. Furthermore, some resources offer guidance to pre- and post-publication reviewers who want to be a ‘statistical detective’ (Sainani, 2020), discover plagiarism (Gipp, 2014; Weber-Wulff, 2014) or blow the whistle on plagiarism (Dougherty, 2018).

Manuscripts based on qualitative research pose particular vetting challenges for both pre- and post-publication reviewers, since the collected data is typically anonymised with identifiers removed. When researchers interview vulnerable groups on sensitive topics, assurances of confidentiality and anonymity are required to obtain candid, reliable responses. According to a standard understanding of anonymity protections, ‘data should be presented in such a way that respondents should be able to recognise themselves, while the reader should not be able to identify them’ (Grinyer, 2002: 1).

For these reasons, reviewers, editors, publishers and readers extend trust to researchers who publish articles based on anonymised qualitative research with vulnerable populations. In weighing (1) scientific transparency, accuracy, quality and detail against (2) generous anonymity and confidentiality protections, many in the research community default to favouring the latter. Relevant considerations may include the fact that historical breaches of confidentiality ‘are not uncommon in qualitative sociology and anthropology’ (Tolich, 2018: 2) as well as the prudential position that ‘anonymity cannot be completely guaranteed’ by researchers (Saunders et al., 2015: 629). A common view holds that anonymity and confidentiality protections must be supported liberally to insulate vulnerable research participants from potential harm, such as retaliation, stigma or loss of privacy. Proposals for defusing this dilemma include offering research participants more autonomy via consent forms with a range of confidentiality and anonymity options, some of which allow for the reporting of potentially identifying elements and hence more details about the data collected (Kaiser, 2009).

The trust between researcher and reader can be abused by researchers who use anonymity and confidentiality protections to produce faulty or fraudulent qualitative research. The committee investigating the Bax case emphasised that ‘academic relationships are based on trust in the scientific honesty of everyone involved’ and that ‘reciprocal trust will always be essential’ (Baud et al., 2013: 3, 45). Two editors who recently issued retractions for seven of Bax’s articles noted that the trust between researcher and reader is ‘built not only through the quality and reliability of previously published work’ but also through performances ‘during conferences, meetings and in research cooperation’ (Sandberg and Scheer, 2020: 5).

Anonymity and confidentiality protections can also be used as a cover for fraudulent studies that report quantitative research findings. The three committees that investigated the data fabrication and falsification committed by social psychologist Diederik Stapel asserted that ‘trust forms the basis of all scientific collaboration. If [. . .] there is a serious breach of that trust, the very foundations of science are undermined’ (Levelt et al., 2012: 4). Other cases where anonymity and confidentiality protections were used as cover for falsifying and fabricating qualitative as well as quantitative data continue to come to light in various fields, including historically influential studies (see Calahan, 2019: 277, 295).

The use of confidentiality and anonymity protections increases the level of trust demanded of readers. Some researchers identify their research subjects only obliquely, using pseudonyms or generic descriptions. Direct quotations might be paraphrased to hide idiosyncratic speech patterns. Furthermore, researchers are sometimes vague about where interviews took place. Since the responses by some interviewees might shed light on the identities of other interviewees, researchers must be especially careful in reporting data from research participants who know each other (Tolich, 2004). Major modifications to raw qualitative research data place the reader in a vulnerable position of trust, since verification or confirmation of what has been reported is typically not possible.

A suspected case of unreliable anonymised qualitative data

To illustrate how anonymity and confidentiality protections might be misused in the production of unreliable research, I examine a new case of suspected research and publication misconduct. This exercise in PPPR evaluates an article titled ‘Promoting the Rule of Law in Serbia: What is Hindering the Reforms in the Justice Sector?’ that appeared in the December 2017 issue of an Elsevier quarterly. The article is easily accessible; in addition to the print version, the article is currently available on Elsevier’s ScienceDirect platform as well as on a new webpage for the journal on the University of California Press website. The author of record for the article – hereafter, ‘C.’ – is a widely published professor at a European university. (Since my research focus here is on the suspected abuse of anonymity and confidentiality protections, rather than on any persons who engage in it, the names of any individuals involved do not appear in this article.)

The now-retracted 2017 article¹ purports to explain why weaknesses in the judiciary in Serbia still exist despite the passage of reform legislation. Its findings are said to be based on fieldwork research conducted by C. In a detailed method statement at the beginning of the article, C. explains that in addition to using data from published primary and secondary sources, the research includes ‘face-to-face interviews with judicial system representatives (judges and judicial servants) in Belgrade’ and that ‘all interviews were conducted in the first half of 2015’ (C., 2017: 332). Given the nature of the research, the interviewees are not identified by name in the article, but are only described generically (e.g., ‘judge in a judicial court in Belgrade’; ‘a judicial servant’). These methodological claims are important, as they establish the procedure for data collection (confidential and anonymised interviews), a location for the fieldwork research (Belgrade) and a time window for the interviews (the first 6 months of 2015). Furthermore, readers are told that the collected fieldwork data is the basis of the conclusions of the article; that is, ‘analysis of the data collected during the interviews enabled proper identification of shortcomings in judicial reforms in Serbia’ (C., 2017: 332). This claim posits an essential relationship between the fieldwork data and the critical analyses of the state of the judiciary in Serbia that follow in the rest of the article. Unmentioned in the article’s research statement, however, are (1) the language in which the interviews were conducted and (2) the total number of research participants.

The principal reason that casts substantive doubt on the reliability of the fieldwork data putatively collected by C. in Belgrade in the first half of 2015 is simple. In short: every quotation C. attributes to anonymised interviewees in the 2017 article has already appeared in print in works prior to 2015 authored by other researchers. In these undisclosed source texts, the verbatim and near-verbatim words are not fieldwork quotations but are simply sentences of scholarly analysis by other researchers. C. appears to be taking sentences of scholarly analysis found in the published writings of other scholars and then re-presenting them as confidential on-the-ground fieldwork interview quotations. What is more, the surrounding passages in which the sentences originally appear in the undisclosed source works are also apparently appropriated by C. In addition to suspected data fraud there is suspected copy-and-paste plagiarism.

Evidence of suspected data fraud

The putative words of a Serbian judge

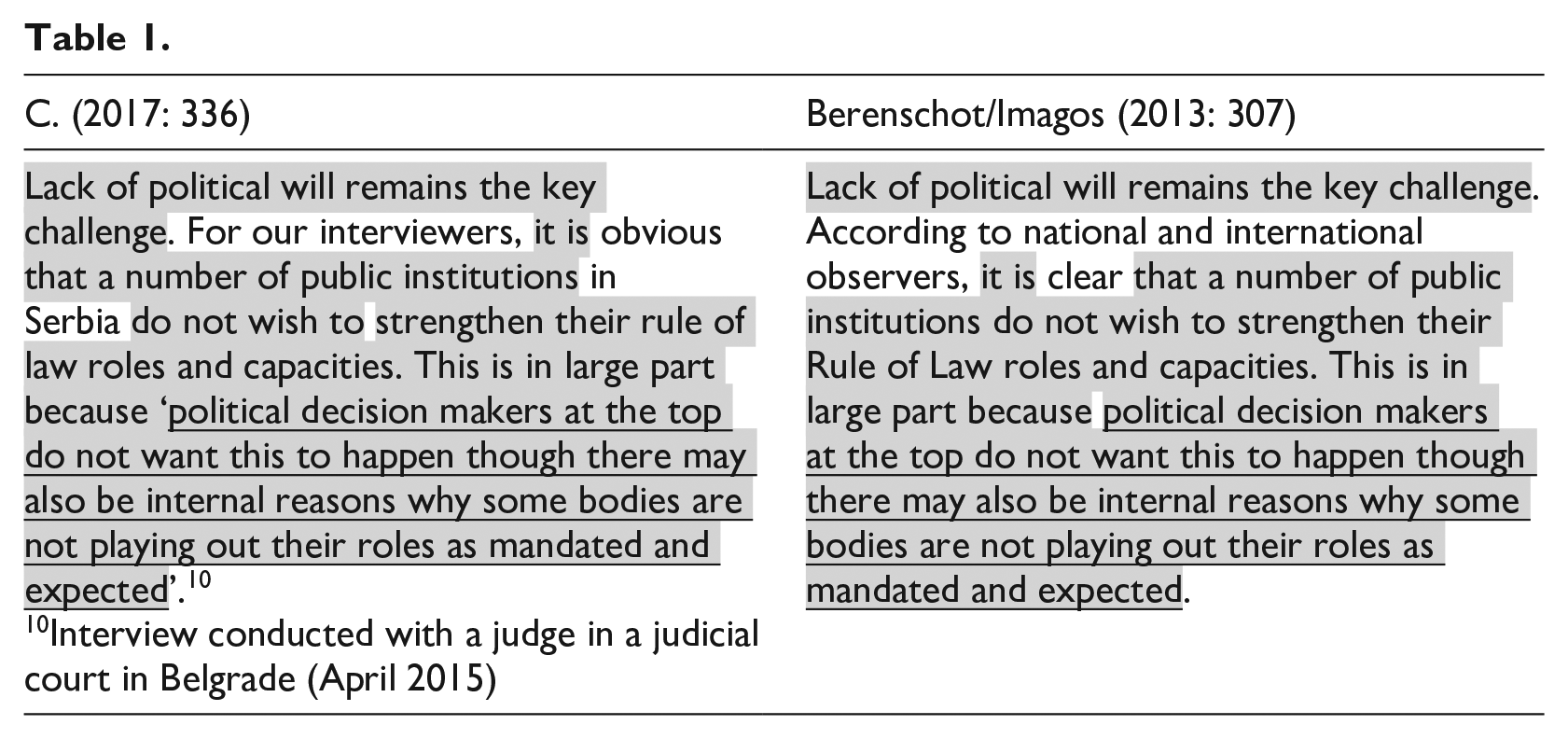

The first example of suspected data fraud in C.’s article is presented in Table 1. In the left column is a passage from C.’s (2017) article, and in the right column is the undisclosed source text. Verbatim overlap between the two passages is highlighted, and the words attributed by C. to a 2015 confidential and anonymised fieldwork interview with ‘a judge in a judicial court in Belgrade’ are underlined.

As Table 1 shows, the 32-word sentence that C. specifically attributes to a judge purportedly interviewed in Belgrade in April 2015 ‘in a judicial court’ corresponds identically with a 32-word sentence found in a 2013 consultancy report. The apparent undisclosed source text is a study funded by the EU and jointly prepared by the international consultancy groups Berenschot and Imagos for the European Union’s IPA Program for the Western Balkans. The study was completed in December 2012 and published in early 2013 under the title Thematic Evaluation of Rule of Law, Judicial Reform and Fight against Corruption and Organised Crime in the Western Balkans (Berenschot/Imagos, 2013). That report was published 2 years before the interview was said to have taken place. In the apparent undisclosed source text, the 32-word sentence is not presented as a confidential fieldwork interview statement but is simply a sentence of scholarly analysis on the part of the report writers. C. appears to have fashioned a sentence of scholarly analysis from the 2013 consultancy report into a fieldwork quotation from 2015 by supplying quotation marks, a speaker (a judge), a location (a judicial court in Belgrade), an occasion (a fieldwork interview with C.) and a general date (April 2015).

Table 1 also provides further evidence that the 2013 consultancy report is the real material for the putative fieldwork quotation: the three preceding sentences that introduce it in C.’s article appear also to be derived directly from the same consultancy study. The first sentence is identical (‘Lack of political will [. . .]’.) and the second and third sentences contain much overlap, including strings of 6 and 18 consecutive words verbatim. Additionally, there is a close synonym substitution, as the word ‘clear’ is rendered as ‘obvious’.

The results of Table 1 can be summarised as follows. The portion in Table 1 that is highlighted and underlined shows evidence of suspected data fraud, and the portion that is highlighted but not underlined shows evidence of suspected copy-and-paste plagiarism.

The plausibility of the fieldwork data

To assume that this putative fieldwork interview quotation in the article in the 2017 Elsevier journal is reliable requires that one take as true the elements of the following implausible scenario: (1) an anonymised Serbian judge, in a confidential interview in Belgrade with C. in April 2015, is unwittingly uttering words that correspond verbatim with the text of a commissioned EU consultancy report published 2 years earlier; (2) in recounting the interview with the judge, C. is unwittingly using words from the very same report to introduce the words of the judge and (3) both the words of the judge and the words of C. happen to be adjacent on the same page of a consultancy report of more than 325 pages. There is no doubt that C. is familiar with the consultancy report; it is cited elsewhere in the article and listed in the bibliography.

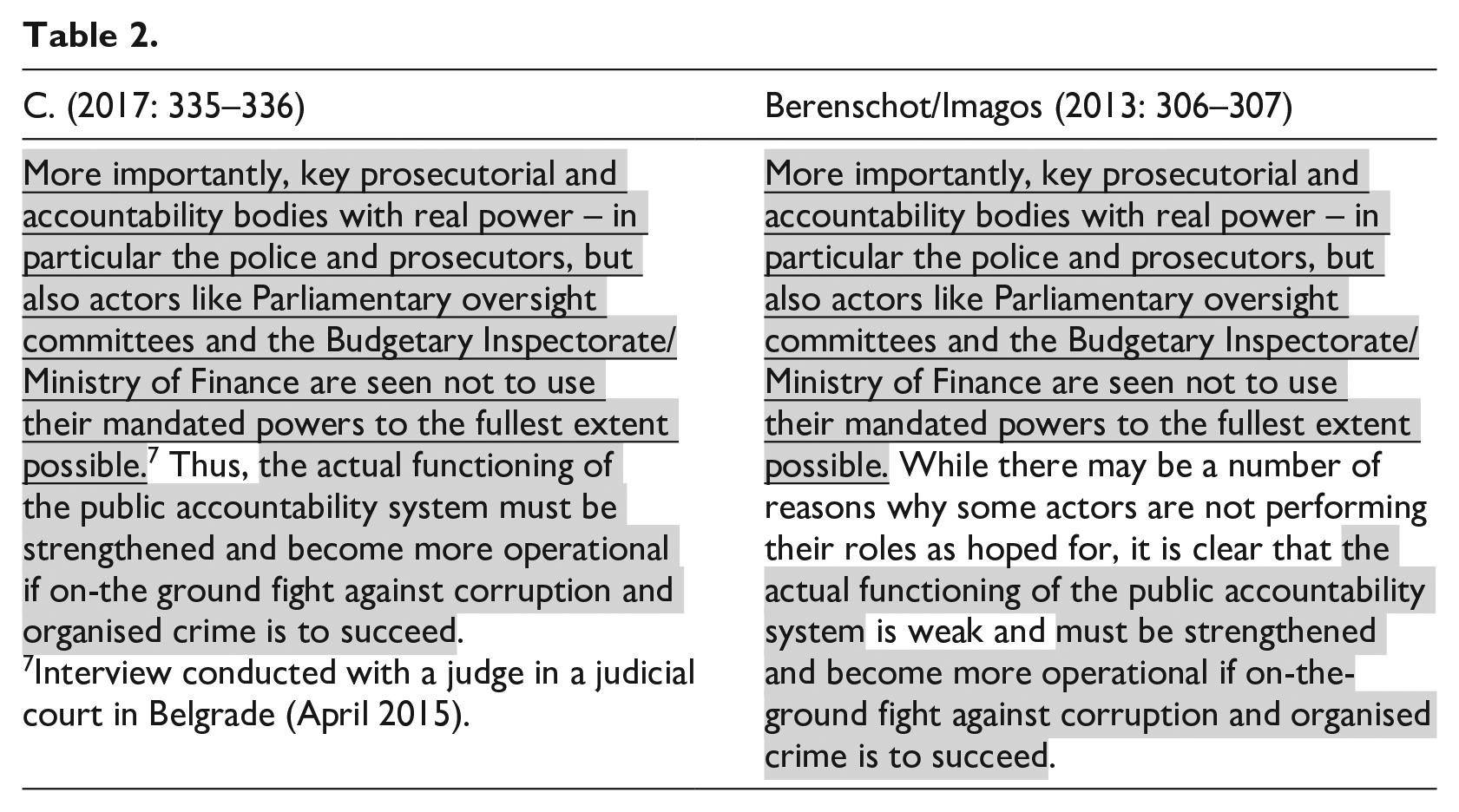

Even if one were to accept the fieldwork quote as reliable, by judging the confluence of such elements as a chance constellation of events, one is still left to account for another feature: every other fieldwork quotation in C.’s article has similar circumstances surrounding it. Table 2 shows a second fieldwork attribution in C.’s article. This time the putative interview ‘with a judge in a judicial court’ is presented in the mode of indirect discourse, where C. purports to summarise the judge’s words. Again, there is clear verbatim overlap with the same EU consultancy report that was published 2 years before the fieldwork interview was supposed to have taken place. A string of 43 verbatim words is attributed to the judge via a footnote that identifies the words as having been uttered in a judicial court in Belgrade in April 2015. These very words, however, appear in the EU consultancy report from 2 years earlier, and not as data from a fieldwork interview but merely as a sentence of scholarly analysis. The idiosyncratic punctuation marks, including the use of an en-dash surrounded by spaces, and a forward slash, are the same in both. Apart from the verbatim overlap of 43 consecutive words, these dash and slash punctuation idiosyncrasies suggest a dependency of C.’s putative interview quotation on the previously published consultancy work by Berenschot and Imagos.

The text following the 43-word putative fieldwork summary also overlaps substantively with the 2013 consultancy report. With the exception of the word ‘Thus’, all the remaining 28 words in the passage are also found in the report, and they occur in the next sentence of the report.
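The run lengths cited for Tables 1 and 2 (strings of 6, 18, 32 and 43 consecutive words) are the kind of measure that can be checked mechanically. The paper does not say which tool, if any, was used to compare the passages, so the following Python sketch is offered only as an illustration of the idea, not as that procedure. It assumes a simple whitespace tokenisation with punctuation left attached to words, and the function names and sample sentences are hypothetical placeholders, not text from C.’s article or its sources. It finds the longest run of consecutive words shared by two passages using the standard longest-common-substring dynamic programme over word tokens.

```python
def words(text: str) -> list[str]:
    # Assumed normalisation (hypothetical): lowercase, split on whitespace,
    # keep punctuation attached so spaced dashes or slashes must also match.
    return text.lower().split()


def longest_shared_run(a: str, b: str) -> tuple[int, list[str]]:
    """Return the length and content of the longest run of consecutive
    words that appears verbatim in both passages."""
    wa, wb = words(a), words(b)
    best_len, best_end = 0, 0
    prev = [0] * (len(wb) + 1)  # run lengths ending at the previous word of a
    for i in range(1, len(wa) + 1):
        cur = [0] * (len(wb) + 1)
        for j in range(1, len(wb) + 1):
            if wa[i - 1] == wb[j - 1]:
                # Extend the run that ended one word earlier in both passages.
                cur[j] = prev[j - 1] + 1
                if cur[j] > best_len:
                    best_len, best_end = cur[j], i
        prev = cur
    return best_len, wa[best_end - best_len:best_end]


# Invented placeholder passages, for illustration only.
suspect = "Thus the judge said that reform of the courts remains slow and uneven"
source = "Reform of the courts remains slow and uneven across the region"

n, run = longest_shared_run(suspect, source)
print(n, " ".join(run))  # → 8 reform of the courts remains slow and uneven
```

Because tokens are compared exactly in this sketch, a dropped hyphen (‘on-the ground’ versus ‘on-the-ground’) produces different tokens and splits a run, which is one reason such small typographic differences can be informative.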

Additional electronic evidence

There are minor differences between the passage as it appears in the 2017 article and its suspected source text, including the omission of some text and a missing hyphen, but the missing hyphen actually constitutes an additional piece of evidence that C. is taking from the report. Where the report has the dual hyphenated expression ‘on-the-ground’, in C.’s version the second hyphen is missing so that it is presented as ‘on-the ground’. This small difference is significant. In the 2013 consultancy report, the expression comes at the end of a line, so that ‘on-the-’ appears at the end of one line and ‘ground’ appears at the beginning of the next line. If one were to copy and paste the line, the second hyphen would be read as a hyphen used to divide long words at the end of a line, and so it would not be carried over when one ‘pastes’ the text. The absent hyphen is an additional piece of evidence – albeit electronic circumstantial evidence – that the passage was copied from the 2013 consultancy report. Contemporary research on plagiarism detection emphasises how subtle electronic evidence – such as spelling inconsistencies, unintentionally transferred metadata and accidental ligatures from optical character recognition programs – can assist in building a case that a text copies from an undisclosed source text (Weber-Wulff, 2014: 7).

Additional putative fieldwork quotations

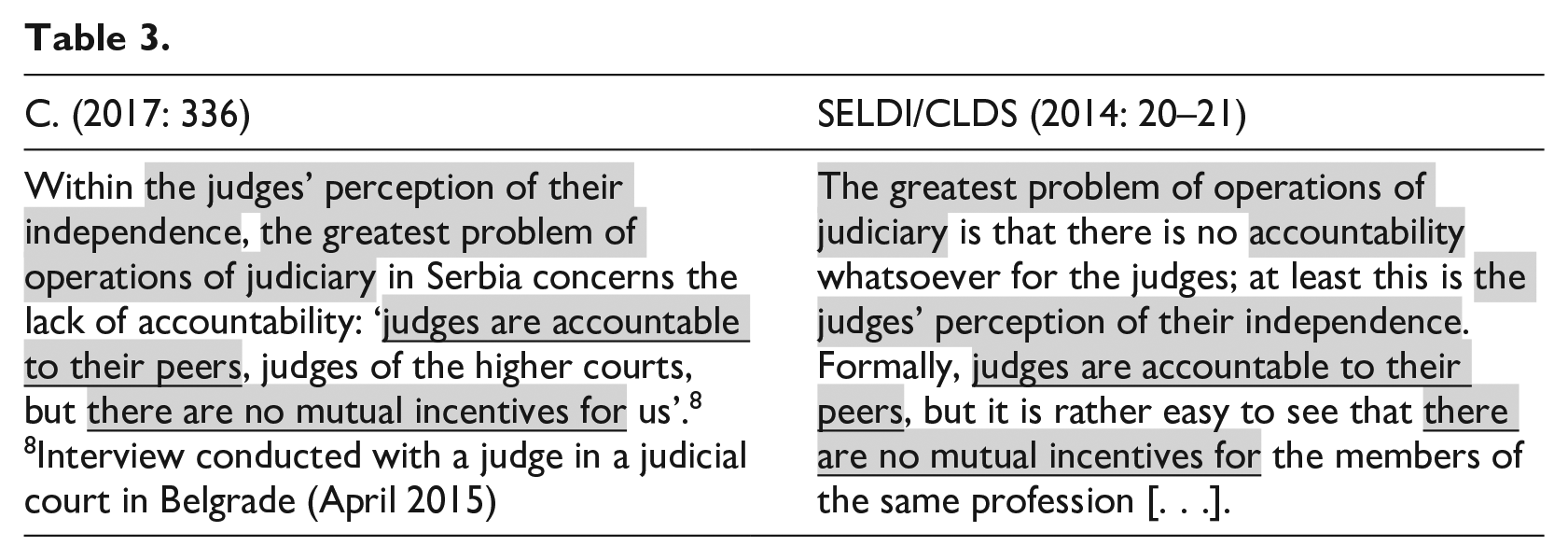

The third putative fieldwork quotation in C.’s (2017) article can also be reduced to an undisclosed and previously published source text. As shown in Table 3, this time the apparent source text is a 2014 report co-authored by researchers at the anti-corruption coalition SELDI (Southeast Europe Leadership for Development and Integrity) and the think-tank CLDS (Center for Liberal-Democratic Studies). Titled Corruption Assessment Report: Serbia, the report ‘provides an overview of the state of corruption and anti-corruption in Serbia in 2013–2014’ (SELDI/CLDS, 2014: i). The report has a publication date of 2014, a year prior to the April 2015 date given by C. for the putative fieldwork interviews in Serbia.

Again, here the substantive part of the presumed quotation ascribed to ‘a judge in a judicial court in Belgrade’ in April 2015 corresponds to a sentence of scholarly analysis found in previously published research literature. And again, the sentence introducing the putative fieldwork quotation also overlaps to some degree with the source text. There is indisputable evidence that C. is familiar with this source text, as it is cited elsewhere in the 2017 article and appears in the bibliography.

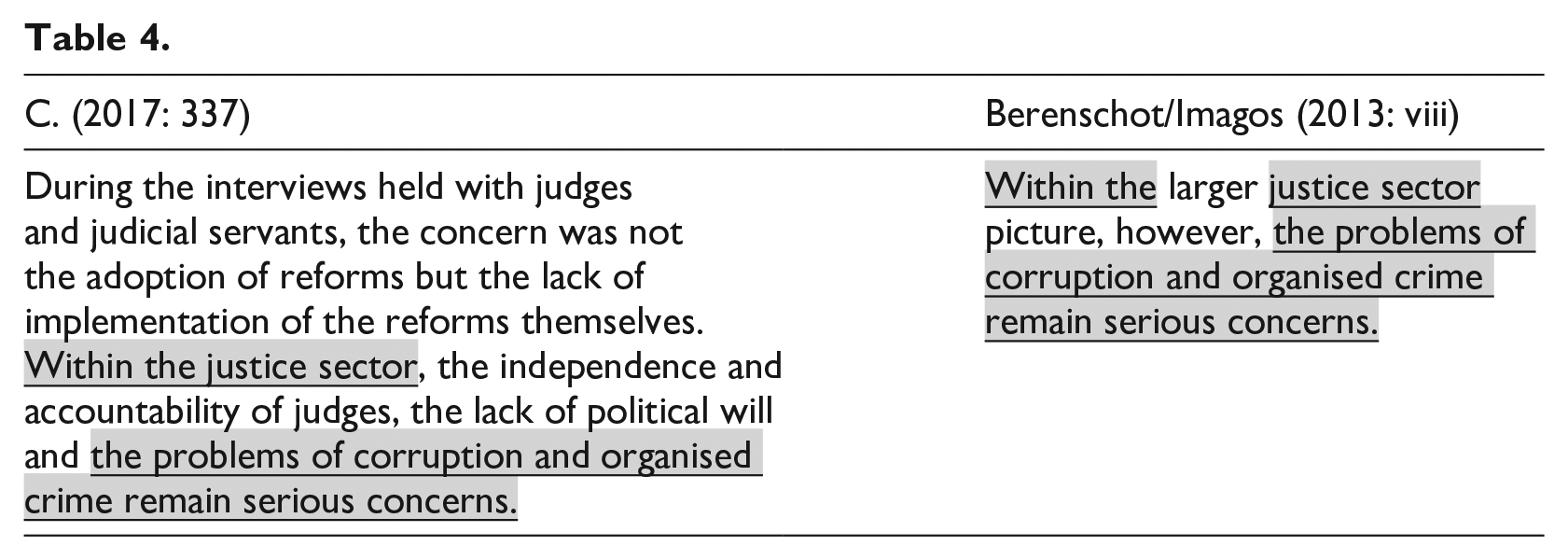

In the conclusion of the 2017 article, C. further invokes the putative fieldwork interviews from 2015. Table 4 displays the relevant passage and its undisclosed source text. The reference here is a general one, since it claims to be offering a summary of the data collected from interviews with ‘judges and judicial servants’ during the first half of 2015. This final representation of qualitative data in the article appears to have an undisclosed source text, which is the same 2013 consultancy report that appeared to serve as the basis for the fieldwork quotations presented in Tables 1 and 2. Here again the apparent source text is simply a passage of scholarly analysis that re-appears in C.’s work as the fruit of confidential and anonymised fieldwork interviews.

All the qualitative data explicitly presented in the 2017 article in the form of putative fieldwork interviews conducted by C. are reducible to passages found in the previously published research literature. The data consists of (1) direct quotations, and (2) indirect discourse summaries. The methodological statement from the beginning of the 2017 article, discussed above, claims that the qualitative fieldwork interview data supplements data from primary and secondary sources, and that the qualitative data is the basis of the critical evaluation of the state of the Serbian judiciary. If the qualitative data presented in the article really consists of sentences of scholarly analysis taken verbatim from previously published literature, then there is no justification for the conclusions of the article. If the data is from previously published primary and secondary sources, there is no genuine qualitative fieldwork data at all. Furthermore, the subject matter of the article – the current state of the judiciary in Serbia – is no longer timely if the new putative fieldwork research is really a repackaging of sentences from works published 2 or 3 years earlier. In a region marked by upheaval and transition, any conclusions based on older sources may not be reliable, even if the source texts pertain to the same region as it existed years earlier. To be sure, the readers of the 2017 article expect that it is presenting new qualitative fieldwork research, not repackaging secondary material as newly obtained on-the-ground fieldwork. If what I have argued above is correct, when readers trust that they are encountering the words of a Serbian judge uttered in a judicial court in 2015, for example, they are really hearing the words of someone else. That is, they are either hearing the words of a researcher working for Dutch or German consultancy groups (Berenschot/Imagos) or the words of a researcher working for coalitions or think-tanks (SELDI/CLDS). 
In either situation, the reader is misled.

Suspected plagiarism

Although Tables 1–4 support a finding of an undisclosed dependency of the 2017 article on earlier published works, the problem is not reducible to suspected garden-variety copy-and-paste plagiarism. The apparent representation of previously published texts of scholarly analysis by researchers as new qualitative data – anonymised on-the-ground interview quotations – transforms the apparent source texts. The addition of a speaker, location and time period to the previously published words makes this case one of suspected data fabrication. The problem with the article is not simply a failure to cite sources or to use conventional modes of sentence attribution (e.g., quotation marks, block quoting, in-text citations) but the apparent transformation of source texts into the appearance of new qualitative research findings. The qualitative data appears to be derived from undisclosed source texts that do not themselves consist of qualitative data.

A second case

One must ask how prevalent the suspected data fraud is. The 2017 article is not an isolated case. An earlier 2013 article by C. in the same Elsevier foreign policy and international relations journal has also been retracted by the editor and publisher (C., 2013). The detailed retraction statement published by the Editor-in-Chief (2019) explains that the 2013 article ‘carelessly uses parts of diverse sources (13 in total; list is available upon request) without appropriate citation methods, though without any apparent malicious intent’ (Editor-in-Chief, 2019). Although the retraction statement notes that ‘Re-use of any data should be appropriately cited’, readers are likely to infer that the problem with the now-retracted article was that it plagiarised text from 13 sources (Editor-in-Chief, 2019). Copy-and-paste plagiarism does not, however, appear to be the only problem with that article, and it may be the less serious one.

The stated conclusions of the now-retracted 2013 article are also based on putative fieldwork quotations. In a conspicuous asterisk footnote attached to the title of the 2013 article, readers are informed that ‘All interviews were conducted in confidence, and the names of interviewees were withheld by mutual agreement’ (C., 2013: 481). In the course of the article, various individuals are claimed to have been interviewed, and this time the putative location and putative time for the interviews are identified as ‘Skopje, March 2011’. The anonymised interviewees are referenced with generic descriptions (e.g., ‘a public official’; ‘a representative of a civic organisation’). The problem with all the fieldwork quotations presented in C.’s (2013) article is that, again, they can each be located verbatim and near-verbatim in the previously published research literature. In most cases, the quotations can be definitively established as having been uttered prior to the March 2011 period during which the fieldwork interviews were said to have been conducted. Furthermore, the text that introduces the putative interview quotations in the 2013 article also corresponds in some cases to sentences in the undisclosed source text, and those instances of copy-and-paste plagiarism further cast doubt on the reliability of the qualitative fieldwork data. As with the 2017 article, it is not reasonable to assume that for the 2013 article all of C.’s on-the-ground fieldwork interviewees only utter propositions that correspond verbatim and near-verbatim to idiosyncratic propositions found in previously published scholarly articles on the topic.

Of the two articles by C. in the Elsevier journal, the 2013 article appears to have exercised more influence on the downstream literature. It has received at least a dozen citations in articles by other researchers, including works published in Indonesian, Portuguese, Swedish and Turkish as well as in English. Since the 2013 article has already been retracted for plagiarism, there is no need to analyse the defects in detail here. In the Appendix below, Tables A5–A9 present all the putative fieldwork quotations and reveal the source texts from which they were apparently appropriated. In one instance, a familiar pattern seen above is repeated, where a sentence of analysis in a previously published work by a researcher re-emerges as a fieldwork interview quotation (Table A8). In other cases, however, the fieldwork quotations that C. claims to have obtained in March 2011 either correspond with fieldwork quotations obtained by other researchers in other contexts (Tables A6 and A7) or are found in other studies performed by other researchers (Tables A5 and A9). The source texts date from as early as 2001, a decade before the fieldwork quotations are said to have been obtained by C. Two of the quotations discussed in the source texts are from 2011, but from a different month (June) than C.’s putative March 2011 fieldwork research (Table A7). In short: all the putative fieldwork quotations in the 2013 article overlap substantively with sentences found in two previously published works: Cohen (2010) and International Crisis Group (2011). Again, C.’s familiarity with both works is indisputable, as each is listed in the bibliography. Readers of the 2019 retraction statement, however, will likely infer that copy-and-paste plagiarism, rather than a problem with the reported qualitative fieldwork data, is the reason for the unreliability of the article and the basis for retraction.

Diagnosing research misconduct

The creation of qualitative data by mining and transforming the previously published work of others is a distinctive form of research misconduct that requires a precise diagnosis. The phenomenon analysed here seems to overlap to some degree with each part of the standard threefold typology of research misconduct – fabrication, falsification and plagiarism (FFP) – widely used by research institutions. The phenomenon appears to be a kind of fabrication, since interviews with on-the-ground interviewees are represented as having taken place, but the evidence suggests otherwise. Furthermore, it appears to be a kind of falsification, since words already expressed months or years earlier by others in different contexts are manipulated and presented as fresh and original data. Finally, it appears to be a form of plagiarism, since the strings of words forming the data are copied without attribution to the source texts.

Reducing the suspected research misconduct to just one of the three forms is inadequate. For example, characterising this form of research misconduct as simple copy-and-paste plagiarism is an insufficient diagnosis of the phenomenon, given its overlap with fabrication and falsification. When unacknowledged source texts are used as templates to produce fabricated qualitative data, this form of research misconduct can be viewed as template plagiarism.

Reducing the suspected research misconduct to fabrication alone or falsification alone also fails to account for the fact that the research findings of others have apparently been manipulated in the production of the anonymised qualitative data. The Bax case, in contrast, involved pure fabrication, since sources (interviewees), locations (e.g., a monastery) and events (e.g., wars and mass murders) did not derive from the work of others but were entirely made up for some articles. Furthermore, falsification typically involves the manipulation of one’s own collected data through omission or addition. In the phenomenon discussed here, however, there does not appear to be an original data set collected by C.

Conclusion

This paper has examined a particular kind of research and publication misconduct, namely, the misuse of anonymity and confidentiality protections in the production of unreliable qualitative data. Analysis of this phenomenon included an exercise in PPPR that argued for the unreliability of the qualitative data in an article published in an Elsevier foreign policy and international relations journal. Venues for discussing suspected cases of research misconduct are few, yet candid discussions of suspected violations of research norms contribute to a culture in which whistleblowing is encouraged. Disclosing evidence of research misconduct can be perilous, yet such disclosures are essential to promoting a culture of research integrity (Hansson, 2019a). In the present-day context, ‘the most common outcome for those who commit fraud is: a long career’ (Oransky, 2020: 142). Without PPPR of this sort, researchers might cite and incorporate defective work in their own research, and defective research might in turn influence the decision-making of policymakers who trust the research published in respected journals.

This particular form of research misconduct vitiates the purpose of qualitative research, which is to give representation to perspectives that would otherwise be missed by more traditional forms of research. The abuse of anonymity and confidentiality protections in the production of fraudulent research data displaces authentic perspectives with counterfeit ones; the practice deprives individuals and groups of participation in research that purports to be about them. The careful delineation of this particular kind of research misconduct, the abuse of anonymity and confidentiality protections, can be useful for the community of researchers: knowing the subtle ways in which research can be unreliable can help reviewers, editors, publishers and readers remain alert to future instances.

Postscript

On 13 April 2021, I was informed by the new editor of the Elsevier journal that C. (2017) would be retracted. The retraction was published later that month. It states, ‘The author fabricates interview data in attributing quotes to respondents that previously appeared in published works by other authors and further misrepresents an interview with a single respondent as multiple interviews’ and ‘the article contains substantial unattributed material’ (Editor-in-Chief, 2021).

Footnotes

Appendix

Acknowledgements

I am grateful to the reviewers and editor of Research Ethics for helpful, detailed and insightful comments that improved this article. I am also grateful to Michelle Dougherty, Alkuin Schachenmayr, Anjel Stough-Hunter, Michael Fisher and Lawrence Masek for their help and discussions. The Galvin Family Foundation, which established the Sr. Ruth Caspar Chair in Philosophy at Ohio Dominican University, made work on this article possible.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges, as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.
