Abstract

In this paper we reflect on the looming question of what constitutes expertise in ethics. Based on an empirical program that involved qualitative, quantitative, and participatory research elements, we show that expertise in research ethics and integrity is based on experience in the assessment processes. We then connect traditional concepts of expertise as “improved performance” with deliberate practice activities and, based on our research findings, show that ethical assessment experience is a form of deliberate practice. This, in our view, has further ramifications for the design and recruitment processes of ethical assessment units performing research ethics and integrity assessment.

Introduction

Research ethics and integrity: achieving clarity, transparency and reliability through defining expertise

Research ethics and research integrity, the two strands of dealing with the ethical aspects of doing research and innovation, have been mainstreamed in the past decade (Mertz et al., 2016; Ormerod and Ulrich, 2013; Pratt et al., 2017). However, there is still discernible work to be done to improve standards on good scientific practice in order to achieve clarity and calculability for participants, transparency for users, and reliability for policymakers in ethical considerations related to research. While there are several scholars and communities working on the unification of the two conceptual approaches and their shared focus on how research behavior can be understood within the framework of responsible conduct of research (RCR) (Steneck, 2006: 55), it is also understood that the remits of research ethics units and research integrity committees are quite different. Ethics committees perform ethical evaluation of research projects and issue approvals beforehand, while research integrity offices are typically engaged in the retrospective handling of alleged cases of ethical misconduct. The different institutions for research ethics and research integrity are also committed to different regulations and have developed their separate guidelines. At the same time, they have much in common: both types of institutions are involved in the promotion of responsible conduct of research, and there are a number of overlapping issues that both institutions deal with, for example, data protection, conflicts of interest, and safeguarding research transparency, to mention but a few (Komić et al., 2015).

It is also argued that ethical reflections of all sorts need shared consideration through a process of mutual exchange and learning among those who are actively engaged in ethical reviews and are participating in and sponsoring research integrity (Mooney-Somers and Olsen, 2016; Petillion et al., 2016). Also, “users” of the two territories need to have clarity, transparency, and reliability as to how and from whom to request expert opinion in ethical issues related to research (McKenna and Gray, 2018). There is a convergence between the two fields of ethics assessment that may lead to a growing need for experts in ethics who would be able to participate in ethical assessment units (EAUs) dealing with both strands of ethical inquiries. Thus, it is important to define what constitutes expertise in ethics in scientific practice and explore who could be considered an expert in matters of research ethics or integrity issues. In this article, we bring into focus the intersection of research ethics and research integrity expertise, as the imbricated nature of the two significantly characterizes the empirical findings of the study. This paper reports on empirical findings and draws conclusions that may assist in reconceptualizing expertise in research ethics and research integrity as well as strategies for the design and recruitment of assessment units performing ethics and integrity assessments.

Hence, in order to answer the research question, “what constitutes expertise in research ethics and research integrity?” we created an empirical research program to systematically explore and develop acceptable indicators representing expertise in the heterogeneous fields of research ethics and integrity. The rationale of the empirical research was to: (a) systematically review previous research on ethical expertise in literature and ongoing research projects; (b) harvest positions on expertise in ethics from those practitioners who currently work in different strands of ethical review processes as well as determine the field via their participation in the most important organizations and networks of the territory; and (c) involve potential users of such expertise in determining what their expectations would be. This paper summarizes the findings of the empirical program and draws conclusions on the research question of what constitutes expertise within the ambits of both research ethics and research integrity. We also address how empirically grounded comprehensions of expertise within the two fields can add to a conceptual framework of understanding expertise as expert performance. We will first discuss our methods and present key empirical findings. This will be followed by a discussion of what this means for defining expertise in ethics, constitution of EAUs, and placing our results in wider epistemic developments and debates across scientific cultures and communities as to how scientific knowledge/expertise may be perceived and generated. We will close the paper with conclusions related to the design of ethical assessment processes and point to areas where further research may be required.

Specifically, in our analysis, we connect traditional concepts of expertise as “improved performance” (Ericsson, 2006) with deliberate practice activities (Ericsson et al., 1993) and, based on our research findings, show that assessment experience constitutes a form of deliberate practice. The basic assumption of deliberate practice is that expert performance is acquired gradually and that effective improvement of performance requires sequentially mastered tasks initially outside the current realm of reliable performance. Deliberate practice, as opposed to routine performance and playful engagement, requires concentration, repetition, and feedback (Ericsson, 2006: 694). In our view, assessing expertise in research ethics and research integrity in terms of deliberate practice has further ramifications for the design and recruitment processes of assessment units performing research ethics and integrity assessment.

Method

Methodology

By virtue of the vast and heterogeneous fields of research ethics and research integrity and the observation that expertise relates to diverse types of involvement spanning from participation in research ethics committees (RECs) and research integrity offices (RIOs) to legal/administrative experiences, we applied a mixed-methods research design to systematically elicit a multifaceted understanding of research ethics and research integrity expertise. The empirical program was initiated with a literature review focusing on existing literature (i.e., key EU documents, research and project findings, institutional reports, and EU network material) on research ethics and research integrity expertise. Next, we conducted a qualitative study among current experts within the two fields, complemented by a quantitative survey among a wider group of practitioners in research ethics and integrity. This was followed by a participatory research design comprising a series of one-day consensus conferences in four European cities involving assumed users of a potential European database of experts (for details, see Braun, 2019).

In general, only limited resources are available on detailing research ethics and integrity expert skills and qualifications. This is particularly evident for expertise beyond the involvement in research ethics committees and research integrity offices. In these cases, too, expert qualifications seem more often to characterize collective units of expertise rather than the individual level of expertise. To remedy this apparent knowledge lacuna, a multimethod research design was constructed to expound on the constituents of expertise and develop indicators that take the individual level as a starting point.

For the particular objective of the empirical program and, predominantly, for the focus of this article, two EU projects, SATORI (Stakeholders Acting Together On the ethical impact assessment of Research and Innovation) and MoRRI (Monitoring the Evolution and Benefits of Responsible Research and Innovation), are considered especially relevant. The ENERI (European Network of Research Ethics and Research Integrity project) research team has reviewed these research projects. Our main aim was to systematically assess the documents that deal with ethics and expertise produced during the lifetime of the projects. As SATORI aimed at developing a common European framework for ethical assessment of research and innovation, it did extensive research on which qualifications, skills, and processes are required in ethical assessment processes. We reviewed its documents, which served as an input for developing our interview guidelines and questionnaire in the qualitative and quantitative elements of our research. MoRRI, on the other hand, aimed at monitoring the benefits of a more responsible research and innovation culture and developing indicators to monitor the progress toward more responsibility; ethics formed but a small part of its research work. Nevertheless, we also reviewed its project outputs, especially the extensive literature review that provided input for our consensus conference setup.

Following the review, we conducted 12 in-depth expert interviews. Based on the literature, experts were defined as people with proven experience in the field. Experience was identified as having been part of assessment units or ethics evaluation processes, teaching or lecturing on research ethics or integrity issues, and having been part of professional networks of the two strands of ethical inquiry.

All expert interviews were carried out in September and early October 2017 and were performed by phone, Skype, or face-to-face. The interviews lasted 30–60 minutes. The selection of experts/interviewees was based on an “information-oriented” selection strategy (Flyvbjerg, 2006), as experts were carefully chosen for their significance, with the aim of reaching a broad group of research ethics and integrity experts and achieving variation according to the “criteria of maximum variation” in order to enhance in-depth understandings of potential expert criteria and qualifications (Patton, 1990). Variation was pursued according to criteria such as research ethics/research integrity focus, institutional category, geographical location, gender, and age. The institutional category endeavored to include national research ethics committees (REC); regional/local research ethics committees (REC); European network of RECs (EUREC); national research integrity committees/offices (RIO); local/university research integrity committees/offices (RIO); European network of research integrity offices (ENRIO); national funding organization (involved in ethics review); European funding organization (involved in ethics review); government agency (ministry); and industrial advisor/consultant on ethics/CSR/corporate sustainability. Interviews were recorded and subsequently transcribed verbatim by student assistants. Next, all interviews were coded thematically in the software program NVivo, which allows for a transparent and comparable management and analysis of the empirical data. The structured coding strategy was aligned with the set of focused codes derived from the key themes explored in the interviews.

Based on the analysis of the interviews and focusing on the main points of study regarding our research question, an online questionnaire for the quantitative survey was created in January 2018. It was distributed through the European Network of Research Integrity Offices (ENRIO) and shared at the EUREC members’ meeting in Berlin on 15 February 2018. As addressees were asked to forward the request within their networks, the exact number of recipients cannot be determined. However, the total number of experts reached within the ENRIO and EUREC networks has most probably not exceeded 250 people. The target sample was 100 respondents, and after intensive communication and repeated reminders, 125 respondents filled out the questionnaire. In selecting the respondents, we used nonprobability sampling, as the randomization needed to obtain a representative sample was not possible. Following up on the expert interviews and utilizing the core expert networks of research ethics and integrity, ENRIO and EUREC, we used expert sampling as a subset of nonprobability sampling. We contacted and utilized the membership of the two organizations as they have the broadest research ethics and integrity expert base with good geographic distribution.

Preliminary conclusions from the qualitative and quantitative survey were tested, discussed, and fine-tuned in a series of consensus conferences. The rationale for the consensus conferences was based on (a) the critique of a technocratic treatment of (ethics-related) techno-policy issues (Laird, 1993; Lakoff, 1977; Tribe, 1972) as well as (b) the growing concern that citizens and nonexpert users have a stake (Freeman, 1994) in the outcome of research ethics and integrity and may have important views and insights to contribute. The consensus conference design followed traditional consensus conference methodology (Einsiedel and Eastlick, 2000; Joss, 1998; Nielsen et al., 2006) altered to fit the purpose. The long, resource-intensive original consensus conference design, which involves meetings and deliberation over several successive weekends, was shortened to a one-day session. One-day consensus conferences have already been used to reach expert consensus in medical research (Grudzen et al., 2016). To fit the required timeframe and align resources with the character of the topic, we asked for consensus on questions that originated from our qualitative and quantitative research and focused only on ambiguities expressed by the experts surveyed. The method was altered to include a number of predefined consensus options to better align stakeholder decisions with the qualitative and quantitative research findings and help decide between ambiguities stemming from the research. Stakeholders were at liberty to alter potential consensus answers or reflect on anything they found to be substantial for the topic in question. The consensus conferences took place in four European cities (Aarhus, Athens, Vienna, and Vilnius) during the month of June 2018. Altogether, 50 stakeholders selected from diverse groups, such as university management, funding agencies, students, and science journalists, participated in the four cities.
In accordance with Laird (1993), “substantial education” was involved in the project, covering research ethics and integrity controversies as well as preliminary findings of the quantitative and qualitative research. All sessions followed a similar format comprising an introduction, an understanding session, and a deliberation session managed in a World Café format (Brown and Isaacs, 2005). After each session, a consensus sheet and an “impact or consensus statement” (Beighton, 2017) were created; these summarized, respectively, the questions, remarks, issues discussed, and consensual answers arrived at, and the consensus in a narrative format. Photo protocols of the discussion flip charts were created and sent to participants so that they had a further opportunity to reflect on the consensus achieved and offer further remarks, should they have had any.

Findings

In the limited literature available on ethical expertise, the focus is on expertise being “knowing what ought to be done or being better at making moral judgments” (Iltis and Sheehan, 2016); or “the ability to understand and integrate knowledge from various disciplines and viewpoints” as well as “reconcile the disparate perspectives that impinge” in a research setting (Yoder, 1998). Some scholars challenge the very concept of ethical expertise or would consider it only for focusing on procedural matters (Gordijn and Dekkers, 2008).

In this paper, we take a different path. Based on our research findings and previous literature on expertise claiming that the “amount of time an individual is engaged in deliberate practice activities is monotonically related to that individual’s acquired performance” (Ericsson et al., 1993: 368), we argue that expertise in ethics and integrity may also be theorized as acquired through de facto deliberate practice, adding an empirically grounded conceptual understanding to what constitutes expertise in research ethics and integrity. Our hypothesis for our empirical investigation was, based on our reading of the literature and findings in other research projects, that experience may be conceptualized as expertise gained through deliberate practice. Experience is de facto deliberate practice inasmuch as expert performance acquisition is not designed as such but represents in most cases what participants themselves feel and would like to learn during the process. Expertise is thus conceptualized as ethical concerns and normativities embedded in assessment processes and expertise acquired through such practices (Hankins and Von Schomberg, 2019; Rip, 2010).

Elements of such conceptualization were already present in our review of the relevant literature of previous EU project deliverables. In SATORI, the most extensive research on research ethics and integrity assessment units to date, ethics expertise was defined as having experience in ethics assessment processes. In their overview of the literature, SATORI researchers came to the conclusion that experience was valued over formal qualification, and training as a form of deliberate practice was advised for members (Brey et al., 2017). Specific knowledge and qualifications were required for “ethics specialists” and “legal experts,” to be gained through specific forms of training, which, again, may be considered as forms of deliberate practice (Ericsson, 2006: 701). A key question in reference to the skills and qualifications of assessment unit members, and thus to “expertise,” was the validation of such skills and qualifications. While certifications were seen as one potential form of validation, implementing them in practice was debated in SATORI (Reijers et al., 2018). In MoRRI, a research service set up in late 2014, the objective was “to provide scientific evidence, data, analysis and policy intelligence to directly support Directorate General for Research and Innovation (DG-RTD) research funding activities and policy-making activities in relation to Responsible Research and Innovation (RRI)” (cf. http://morri-project.eu/). Ethics is one of the keys or pillars of RRI as defined by the European Commission (EC, 2014). MoRRI focused on lay or civic participation as an extension to traditional expert-based concepts of ethics expertise. While MoRRI found that civic or lay participation may be beneficial to the social embeddedness of the ethical assessment process, the research did not specifically reflect critically on the question of what constitutes expertise in ethics.

The question of how expertise is to be defined reflects wider epistemic developments and debates across scientific cultures and communities as to how scientific knowledge/expertise should be perceived and generated. In general, within the social science research on expertise and, particularly, within the sociology of knowledge and public understanding of science literature, clear horizontal distinctions between lay and expert knowledge have long been challenged. Traditional views of expertise were reconsidered as the social construction of knowledge gained currency and contested the view of the expert as an “agent of truth and authority” (Bogner et al., 2009: 3). Whereas some refer to these developments as “crises of expertise” (Gerold and Liberatore in Felt et al., 2008), others focus on the “democratization of expertise” (Bogner et al., 2009: 3) to reflect reinvigorations of citizen–science interrelations that are often referred to as a move from “understanding to engagement” (Stilgoe et al., 2014: 4). Others again, like Bogner and Menz (2010), deliberately do not provide a definition of what constitutes expertise in ethics, as they focus on ethical assessment teams and political controversies; they also claim that the specific expertise of professional ethicists may be openly challenged altogether, as expertise in value questions is often supposed to be a basic competence of daily life. Our exploratory and critical empirical research points in similar directions. In terms of assessment processes, we also find a positive attitude toward including lay publics in ethical decision-making processes (Braun, 2019).

Based on the interviews carried out, there is broad agreement among interviewed experts that expertise in research ethics and integrity needs to be addressed with an inclusive, diverse, and transparent approach. Our interviewees are skeptical of an overall set of knowledge that may constitute a normative basis for expertise in research ethics and integrity. As an overall impression, interviewees seem to share a general consensus as to the rather nebulous notion of what research ethics and integrity expertise is, agreeing on a series of key points. There are many types of experts, such as practitioners, policy/law experts, and academic experts. Expertise can be possessed within a large number of topics, such as publication ethics, codes of conduct, ethics review, data management, falsification, fabrication, plagiarism [FFP], questionable research practices [QRPs], teaching curriculum development, etc., and may relate to one or several organizational levels (e.g., local, regional, national, European, or international areas of knowledge). Moreover, while expert interviewees provide explicit examples of core competences and skills in regard to their own position, it is also evident that no fixed definition of expertise exists and that research ethics and integrity qualifications can in many ways be regarded as intrinsic to research processes and may occur as a kind of tacit knowledge (Polanyi, 1966).

The contingent nature of research ethics and integrity and the ephemeral notion of what constitutes expertise within a given time frame and within different cultural, geographical, and epistemic contexts are clearly conveyed in the expert interviews. For instance, one expert explicitly states that the guiding principle of expertise should originate from a multidisciplinary, inclusive, and broad perspective that “gives room to other ways of showing expertise” (Braun, 2019: 70). Overall, interviewees emphasize formal education and relevant experience as the most important competences to possess as an expert. In addition, different types of experts highlight various types of experience and competences in accordance with their field of expertise. Hence, ethics assessment and review competences are accentuated for ethics research project reviewers, while knowledge of integrity guidelines and codes of conduct is mentioned as an important competence for journal editors, for instance. Despite variation, similarities in core competences and skills appear somewhat consistent across different areas of expertise. In terms of competences, experts point to the following types of acquired knowledge: ethical competences (deep knowledge of national and international regulation, cases, awareness of moral dilemmas and ethical deliberation); integrity competences (deep knowledge of national and international regulation, policy, and guidelines); research/science experience [having performed research activities in the past]; legal competences; ethics assessment/review experience [having performed ethics assessment in the past]; and integrity assessment/review experience [having performed integrity assessment in the past].

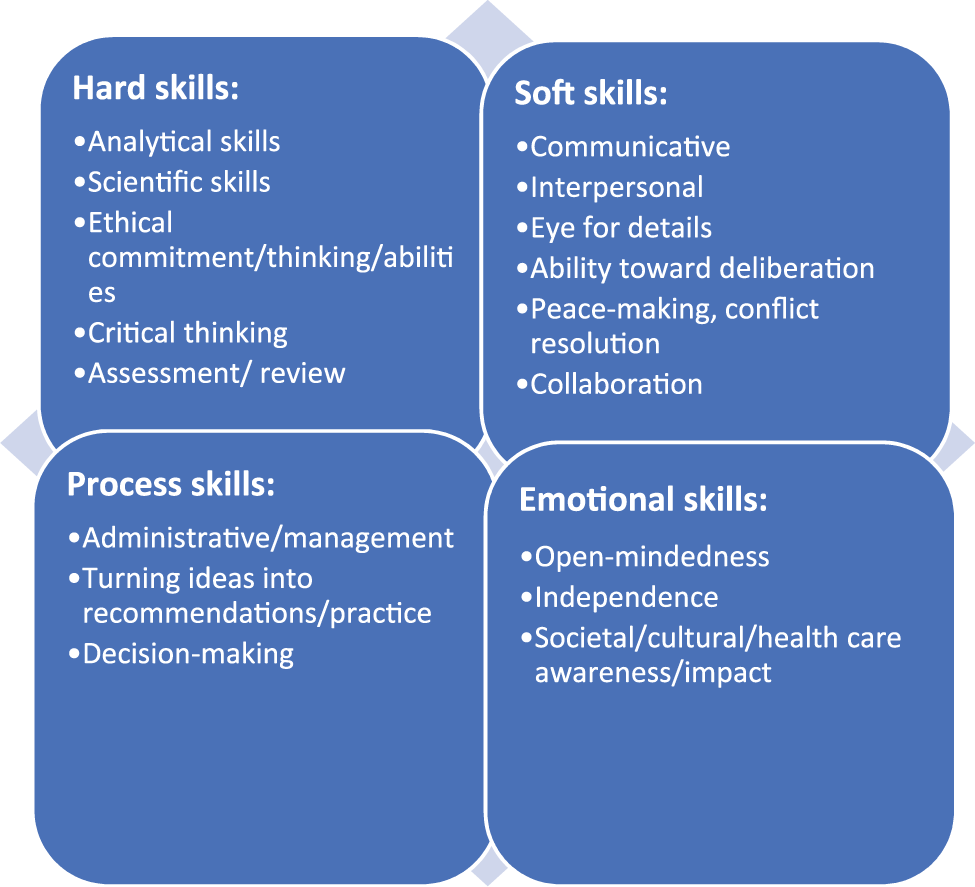

Moreover, experts agree on the importance of a number of skills related to communication, deliberation, collaboration, and management. In Figure 1, these are summarized and grouped according to hard skills (e.g., education, technical), soft skills (e.g., communicative), process skills (e.g., administrative/management), and emotional skills (e.g., commitment, open-mindedness).

Figure 1. Research ethics and research integrity skills.

Irrespective of research ethics and integrity expertise type, expert interviewees emphasize and prioritize a number of emotional skills and competencies as essential for working with and within areas related to research ethics and integrity. Being open-minded toward other perspectives as well as being able to collaborate, for instance, is seen to minimize potential frictions between different disciplinary practices/guidelines and, more broadly, between different (normative) perceptions of ethical/integrity standards across research fields, institutions, and countries (see Braun, 2019). The contingent and contextual nature of research ethics and integrity expertise confirms previous assessments in the literature arguing that expertise in ethics is not premised on one type of moral knowledge and that no specific, distinct kind of ethics expertise can be identified (Yoder, 1998). At the same time, interviewees establish a number of potentially shared skills and competencies that fall within deliberate practice activities as well, especially experience in ethical assessment processes where such skills can be acquired, cultivated, and refined.

Our quantitative survey substantiates the conceptual framework of theorizing expertise in ethics and integrity as deliberate practice and thus confirms our initial hypothesis. Respondents found “research/science” competence the most important (4.45 on a scale of 1–5, where “1” means absolutely unimportant and “5” stands for very important), closely followed by “integrity assessment” (4.39) and “ethics assessment” (4.27) competencies. This confirms our findings in both the literature review and the expert interviews that experts highly value experience in assessment as an important competence in being an “expert” in research ethics and integrity. Aside from experience, respondents consider “ethics/philosophy competences” (4.10) to be important, while “legal competences” (3.18) are rated relatively lower. When assessing the required skills of research ethics and integrity expertise, “impartiality” (4.29) and “open mindedness” (4.14) are rated as the most important skills, while “personal commitment” (4.14) is also highly valued. “Administrative” (2.57) and “technical” (2.43) skills are valued the least, while “analytical” (4.10), “problem-solving” (4.00), and “debate/deliberation” (4.02) skills are also highly valued. When it comes to specific skills and competences, respondents value research ethics and integrity experience (4.71) as well as previous experience in research ethics and integrity commissions (4.28) the most, closely followed by scientific/research experience (4.13). Specific education, a current position as research ethics and integrity expert, and research ethics and integrity teaching experience are all valued significantly lower (3.69, 3.58, and 3.31, respectively). Furthermore, respondents seem to be skeptical toward the importance of an “official research ethics and integrity certification” system that would test the availability of a normative set of knowledge on issues of research ethics and integrity (Braun, 2019).

The consensus conference series dealt with the open questions of how expertise within research ethics and research integrity should be approached and how a potential European database of experts could be designed. From an “ethics expertise” point of view, the consensus conferences mainly supported the view of the interviewed and surveyed experts. Consensus conference participants do not see a general knowledge base as applying to ethics expertise, and they suggest a broad, diverse, and inclusive approach to understanding expertise in ethics and research integrity. They suggest that both quantity measures (number of cases assessed, number of years spent with assessment, number of ethics assessment units attended) and quality measures (peer appraisal) of experience should be incorporated in evaluating expertise. Stakeholders also express the need for a diversity-sensitive approach to expertise, being vigilant to issues of gender, research field, and age while also noting that national and cultural differences and appropriate representation of all EU countries have to be considered. Participants also voice that “lay experts” [people with willingness to contribute to ethics assessment but with no field-specific experience] and “NGO/CSO representatives” could also hold valuable experience that would add to assessment expertise. In concert with some authors in the literature, participants in the consensus conferences emphasize the need for a general “code of conduct” or the inclusion of “ethical principles”/“procedural requirements” to be followed by all “experts” (cf. Gordijn and Dekkers, 2008: 125). This would not be a set of normative principles; rather, it would be procedural rules or guidelines that also point toward a unified set of deliberate practices systematizing the reflection on, acquisition of, and concentrated iteration of shared ethical expertise.

Discussion

Implications

In order to understand expertise as improved performance, a set of conditions needs to be applied according to Ericsson et al. (1993): Significant improvements in performance are realized when individuals are (1) given a task with a well-defined goal; (2) motivated to improve; (3) provided with feedback; and (4) provided with ample opportunities for repetition and gradual refinements of their performance. Deliberate efforts to improve one’s performance beyond its current level demand full concentration and often require problem-solving and better methods of performing the tasks. Ericsson (2006) notes that practice that works toward automaticity and activities that are routinized and not complex enough do not improve performance and are not to be considered deliberate practice. Our research points to the understanding that expertise in research ethics and integrity is experience-based. However, if we look at the expressed opinions more closely, we can see that what our research subjects actually meant is less an automaticity of activities measured in the time spent “practicing ethics” than, very specifically, experience related to ethical assessments. Ethical assessments involve complex problem-solving challenges and require concentration and focus as well as, to refer to one of the definitions of expertise, the ability to reconcile the disparate ethical perspectives that impinge (Yoder, 1998). This requires those carrying out the assessment to improve their skills constantly, both hard and soft. Ethical and integrity assessments have a well-defined goal of coming up with “what ought to be done” (Iltis and Sheehan, 2016), and members provide feedback to each other on ethical positions expressed during the deliberation process. Those with more occasions to do research ethics and integrity assessments are provided with continuous opportunities for repetition and refinement of performance based on such feedback from peers.

In general, experts and stakeholders in our study also valued analytical, problem-solving, and debate/deliberation skills highly as they see the questions to be dealt with as challenging and complex. The highly valued skills are all required to avoid routinized practices and address complex challenges that constantly require improved performance in a process that may be viewed as de facto deliberate practice.

Experience in research ethics and integrity assessment improves the performance of those participating in such processes. Assessments as deliberate practice activities aim at well-defined goals; participants are motivated and also challenged by their peers to constantly improve; and they are provided with peer feedback and, should their improving performance warrant it, ample opportunities for repetition and further refinement of their performance so as to become better at making moral judgments. As Yoder (1998) explains, “[i]n an era of specialization and fragmentation, one might argue that it is increasingly important to recognize a type of expertise that consists not of knowing everything about a single subject, but of seeing how a range of concerns fit together” (18). This “fitting together,” as improved moral knowledge, is something that is acquired via peer feedback and deliberation during assessment processes. Therefore, research ethics and integrity assessment is also to be seen as a primary source for creating and improving expertise.

This has implications beyond answering our research question of what constitutes expertise in research ethics and integrity. Those assembling assessment units or inviting people to take part in research ethics and integrity assessments should be mindful of the fact that through such processes, they are also creating opportunities for less experienced members to perform deliberate practice activities and acquire or improve the skills needed to become better at making moral judgments.

Conclusion

Based on findings from a mixed-methods research study, this article seeks to answer the looming question of what constitutes expertise in research ethics and integrity. Our answer is procedural and contextual. Our research points to the understanding that there is no set and general knowledge that can be defined as “ethical/integrity expertise.” Our research has also shown that expertise is complex and deliberative in nature as it aims to reconcile disparate ethical perspectives. Making better moral judgments, that is, having expertise in research ethics and integrity, is to a great extent based on experience. However, this experience is also the source of expertise. Expertise is seen as a collaborative and collective effort of creating a transformative entity originating from multidisciplinary, multiperspective approaches that may provide room for various ways of showing expertise (Braun, 2019). While formal training in ethics or law may be beneficial, it is the experience gained through the deliberate practice of actually performing ethics assessments in concert that provides ethics expertise. This is reinforced by the idea that lay or civic participation is seen as an extension of the practice provided to all participants in the assessment process.

Our findings indicate that research ethics and integrity assessment processes should also be viewed as a source of deliberate practice activity that improves ethics expertise. This would require that those entrusted with the composition of such ethical assessment units not only pay attention to the diversity of members in terms of perspectives and skills, but also invite people in need of deliberate practice to improve their levels of expertise and become more experienced as research ethics and integrity experts. At the same time, those who are tasked with designing research ethics and integrity assessment, for example, chairpersons of committees, should take special care to construct the process so that well-defined development goals support a participatory environment in which participants are motivated to improve their skills and all are provided with continuous peer feedback on their performance. People with experience should participate in assessment processes not only because they have acquired expertise but also because this provides them with ample opportunities for repetition and gradual refinement of their research ethics and integrity expert performance. Further research is called for as to how deliberate practice of research ethics and integrity expertise could purposely be built into assessment processes. In the meantime, we would encourage heightened attention to the performative, deliberative, and experiential dimensions of expertise.

Footnotes

Declaration of Conflicting Interest

No conflict of interest reported by the authors.

Funding

All articles in Research Ethics are published as open access. There are no submission charges and no Article Processing Charges as these are fully funded by institutions through Knowledge Unlatched, resulting in no direct charge to authors.