Abstract

This article argues that strong interrelations exist between methodological and theoretical advances. Progress in methods, especially comparative ones, may have an important impact on theory evaluation. Using the example of the “Varieties of Capitalism” approach and an international comparison of higher education systems, it is shown that the use of improved data and of advanced analysis techniques changes the acceptance or rejection of the theoretical approach. In conclusion, the article advocates data improvements, the use of advanced methods and, more generally, a methodologically reflexive interpretation of comparative research results, as well as closer cooperation between theory and method development.

Introduction

International comparisons in higher education have been gaining in importance over recent years. The reasons are manifold. An important one is globalization and the increase in international exchange. A second reason is the improved availability of comparative data, for example from administrative sources (e.g. the Organisation for Economic Co-operation and Development (OECD), the World Bank or the United Nations Educational, Scientific and Cultural Organization (UNESCO)) or from international surveys (Eurostudent, REFLEX). Thirdly, policy increasingly refers to findings from these data as a basis for evidence-based policymaking or for legitimation purposes. Against this background, it is becoming ever more important to be aware of the methodological problems of quantitative international comparisons. The same is, however, also true for theory development. As most methodologists argue, theories in a field of study cannot be better than the methods applied. This holds at least in empirical sciences, such as research into higher education. Improving methods and data availability is, therefore, an important ongoing requirement.

The following sections illustrate this claim using the example of the “Varieties of Capitalism” (VoC) approach (Hall and Soskice, 2001b). It will be shown that the rejection or acceptance of hypotheses derived from this theory is heavily dependent on the data and analysis techniques used. Before delving into the data in more detail, however, some general remarks on the importance of and problems with international comparisons will contextualize these data analyses.

The next section will, therefore, very briefly address some methodological issues on the relation between theories and data in general and introduce international comparison as a method, followed by some general problems of this method. This lays the ground for the empirical analyses in the subsequent section. The paper ends with some conclusions on further improvements with regard to the usage of data in international comparative research.

Theoretical and methodological advancements in international comparison

As already mentioned, research into higher education is an empirical science. Its theories and data aim to describe and adequately explain, or one could even say “make sense of”, a certain aspect of reality (higher education), and in many cases even aim to influence this reality. As such, its statements (theories, hypotheses) relate to an empirical world and may fail when confronted with it. This understanding of empirical science refers back to Karl Popper’s (1935) idea of falsification and its further development by Imre Lakatos (1970), whose idea of “research programmes” claims, inter alia, that the evaluation of a theory and its validity also requires the evaluation of the methods applied for its measurement. Following from this, many methodologists see measurement instructions as a necessary part of a theory (e.g. Kromrey, 1998). Ergo: advancements in methods can contribute substantially to advancements in our theories. On the other hand, theories are needed to make sense of empirical data, which are meaningless without interpretation. One important argument of this article is, therefore, that we need advancements in data and methods to improve our theories and vice versa, or what Marsh and Hau (2007) have called “substantial-methodological synergies”, to improve overall progress in (comparative) higher education research. It is, therefore, reasonable to look at the potential and problems of comparative methods in more detail in order to also improve theories in comparative and international education.

International comparison as a method and its problems

Comparison is one of the most important methods of empirical social research. Indeed, classics of sociology call the comparative method “the sole one suitable for sociology” (Durkheim, [1895] 1982: 147). In sociology, a comparison has to fulfil at least three requirements to be called a method in its own right (Dogan and Pelassy, 1990):

two or more units that each represent a specific context are explicitly compared;

the cognitive interest is directed towards an additional benefit through this comparison;

the methods used take into account the specific requirements of comparisons.

With regard to the first point, while one can compare quite different types of units such as organizations, regions and so on, the international comparison of countries or states is especially important in (higher) education. One reason is that (higher) education systems are still heavily regulated by their nation states (e.g. Teichler, 2007), despite globalization and internationalization trends (Hoelscher, 2012a). Another reason is the important role that education played, and probably still plays, in the creation of modern nation states (Gellner, 1983).

The second point above refers to an “additional benefit” of comparisons. Immerfall (1995) mentions four such benefits or functions. The first one is description: a higher education participation rate of 30%, for example, can only be assessed as high, medium or low when compared to other figures. However, what is often not fully understood is that such seemingly easy comparisons are often more demanding with regard to the representativeness of data than most kinds of relational analyses.

A second function, generalization, tests whether empirical relationships (approved hypotheses) found in one context can be observed in other contexts as well. If that is not the case, comparisons, third, can be used to specify hypotheses. Different contexts or their characteristics can then become moderator variables (e.g. Andersen and Van de Werfhorst, 2010). However, such tests need a clear theoretical basis to be able to reduce the number of potential influences. Last but not least, comparisons can be used for elucidation and for showing alternatives. This function is strongly related to David Phillips’ work on policy borrowing and transfer (Phillips, 2005; Phillips and Ertl, 2003; Phillips and Ochs, 2004) and is probably best served by qualitative comparisons that can elaborate in much more detail on the different alternatives and their inherent mechanisms.

Problems in quantitative comparative research

If, as argued so far, international comparisons are highly relevant for theory building as well as policy formulation in higher education, the potential problems also have to be taken into account. Because the literature on the problems of quantitative international comparisons is extensive, only two very general problems are discussed here: data availability and the question of equivalence (or invariance).

International quantitative comparisons are resource intensive, and the better they are, the more resources they normally need. A good example of such a huge research infrastructure is the European Social Survey. The first consequence is an overall lack of comparative data. Data availability has improved considerably during the last few years in higher education research (Teichler, 2008; Trahar, 2013); for example, through the additional efforts of international organizations such as the OECD, Eurostat and UNESCO, or through comparative surveys such as REFLEX (Allen and Van der Velden, 2007), CAP (Teichler et al., 2013) or EUROSTUDENT (Hauschildt et al., 2015). Nevertheless, it remains impossible to address many empirical and theoretical questions due to missing data. Many studies, therefore, resort to secondary analyses of the few available datasets. While this is in principle a useful strategy, Roose (2013) mentions two important shortcomings that arise when a research discipline has only a few data sources: error multiplication and path dependency. Error multiplication denotes the issue that problems with the data (e.g. biased samples) are reproduced over and over again if many different studies draw on the same dataset. What might look like a new and interesting research finding reported by different authors might then only be a data issue. Path dependency denotes the problem that research and related theory development progress only in a direction where data are available. Therefore, in a bad-case scenario, data availability, and not relevance or scientific curiosity, might become the driver of scientific progress.

The second problem of comparative research, equivalence or invariance, is equally severe. While we are used to comparative classifications of educational degrees (e.g. International Standard Classification of Education [ISCED], Comparative Analysis of Social Mobility in Industrial Nations [CASMIN]), many other issues are difficult to compare, and even with these classifications, it is questionable to what extent, for example, something as seemingly similar as bachelor degrees is really comparable across (or even within) countries. Similar problems exist with comparative survey data, where we are well aware that small changes in the instrument (questionnaire) or in sampling may result in huge biases (e.g. Harkness et al., 2010). Different forms of harmonization can be applied, but they have their own problems as well (e.g. Hoffmeyer-Zlotnik and Warner, 2012). While all of these problems are well known, in practice, international comparative data are too often assumed to be already comparable. However, as the next section will show, this assumption is often not met.

Testing the Varieties of Capitalism (VoC): different data, different methods, different results

As elaborated above, empirical sciences such as education use theories to describe, explain and maybe even influence phenomena observed in the real world. In the following, a specific comparative theory, the VoC approach by Hall and Soskice (2001b), will be tested using different data and different methods. By showing that the acceptance or rejection of the approach depends on the data and method used, the interrelatedness between (comparative) methodological issues and theory development becomes apparent.

Introducing the VoC approach

The VoC approach by Hall and Soskice (2001b) is the most popular theory in the field of Comparative Capitalisms (see Jackson and Deeg (2006) for an overview). Their idea is that different ways of organizing a capitalist market economy may be equally successful. The main criterion for their differentiation is the predominant means of coordination between companies and their environment (other companies, the labor force, customers, finance, etc.). In their analyses, they differentiate especially between two types, the Liberal Market Economies (LMEs) and the Coordinated Market Economies (CMEs). While in LMEs (e.g. USA, UK, Australia) prices, competition and the free market are the main instruments for coordination, in CMEs (e.g. Germany, Austria, Switzerland, but also the Scandinavian countries) contracts, networks, etc. additionally play an important role. It is not possible to do full justice to this theory here, but two more things are important to mention. First, complementarities across different societal subsystems (e.g. finance, welfare state, education) exist. “Two institutions can be said to be complementary if the presence (or efficiency) of one increases the returns from (or efficiency of) the other” (Hall and Soskice, 2001a: 17). Second, due to these complementarities, the corresponding countries gain comparative institutional advantages in certain economic fields.

While this theory is quite popular, it is by no means undisputed. Important questions refer, for example, to the number of different “varieties”, to the stability of these types and to the kind of complementarities observable (e.g. Hancké et al., 2007). It is, therefore, important to see to what extent this theory is compatible with empirical evidence and able to explain differences across countries.

If complementarities also exist within the field of higher education, which seems to be a reasonable assumption, then one should be able to differentiate higher education systems along their respective VoC (see Hoelscher, 2016). To date, research on education in the context of the VoC approach has mainly concentrated on vocational education and training (VET) (e.g. Culpepper and Finegold, 1999), but the theory also allows hypotheses about expected differences in higher education systems to be deduced, which can be tested empirically. While Graf (2009), Hoelscher (2016) and Leuze (2010) find more or less strong support for such a relationship, Wentzel (2011) is more skeptical.

The next sections cannot provide a full test of the VoC approach, but they aim to illustrate how decisions regarding this theory depend on the methods and data applied. This will be exemplified by two different hypotheses.

Student figures in international comparison

One hypothesis that can be derived from the VoC approach is that there should be more Higher Education (HE) students in LMEs than in CMEs. The main reason for this is that CMEs “typically make extensive use of labour with high industry-specific or firm-specific skills” and therefore “depend on education and training systems capable of providing workers with such skills” (Hall and Soskice, 2001a: 25). LMEs, on the other hand, encourage individuals to invest in “general skills, transferable across firms” (Hall and Soskice, 2001a: 30). Industry- or firm-specific skills are normally attributed to VET, while HE is supposed to deliver more general and transferable skills (see also Amable, 2003: chapter 4.5; Brown et al., 2001).

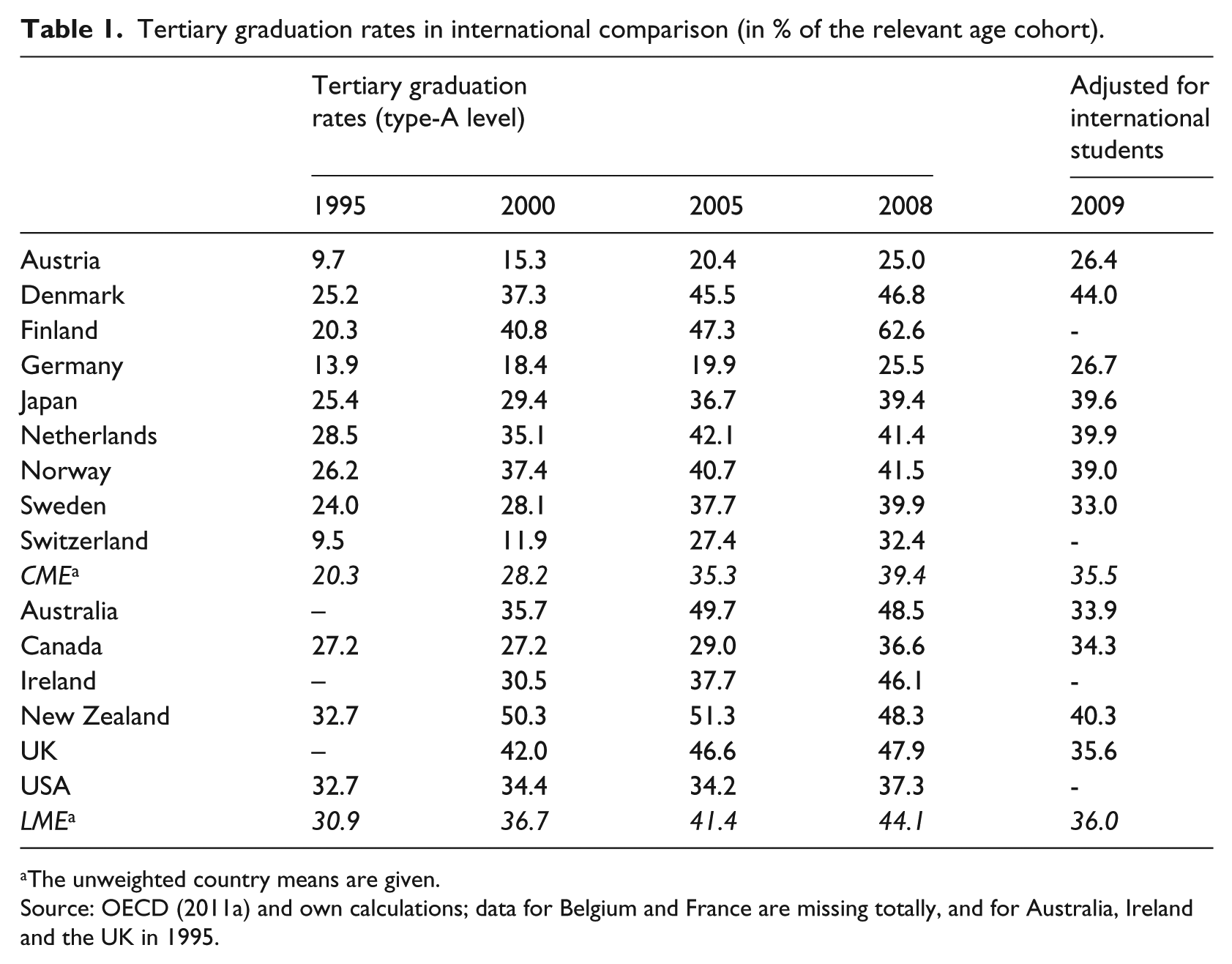

To test this hypothesis, student figures from the OECD can be used. The first question, however, is how exactly to operationalize the dependent variable, as the OECD provides many different figures. For example, data on study entrants (again differentiated into “first-time” and “first-degree” students) or on graduates are available. Because the main focus of the VoC approach is the labor market and not the educational system, it could be argued that figures on graduates are more appropriate. Table 1 presents the figures for the years 1995, 2000, 2005 and 2008 for all LMEs and CMEs that are mentioned by Hall and Soskice and for which data are available.

Table 1. Tertiary graduation rates in international comparison (in % of the relevant age cohort).

Note: The unweighted country means are given.

Source: OECD (2011a) and own calculations; data for Belgium and France are missing totally, and for Australia, Ireland and the UK in 1995.

If we examine the first three columns of Table 1, the data support the hypothesis. While there is a convergence over time (the difference decreases from 10 percentage points in 1995 to 5 percentage points in 2008) and quite some heterogeneity within the groups (especially among the CMEs), the expected differences between LMEs and CMEs persist.

Since 2009, however, the OECD has provided more specific data, allowing for the consideration of international students. As the hypothesis is mainly aimed at national students, it makes sense to use these improved data without international students. If one adjusts graduation figures in this way (shown in the last column), the difference between LMEs and CMEs disappears entirely.

The assessment of the hypothesis on graduation rates derived from the VoC approach is, therefore, dependent on whether one uses the “old” or the “new” data, with the latter leading to a preliminary rejection of the VoC approach. However, the now visible larger share of international students in LMEs would also be concordant with the VoC approach (Graf, 2009). Therefore, the new data can lead to the formulation of new hypotheses, which could be seen as a kind of theory refinement.
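The group comparison behind Table 1 can be sketched in a few lines of Python. This is only an illustration of how unweighted country means and their difference are computed: the country labels and rates below are hypothetical placeholders, not the OECD figures, and the function name is an assumption made for this example.

```python
from statistics import mean

# Hypothetical graduation rates (% of the relevant age cohort).
# These are placeholder values, NOT the OECD figures reported in Table 1.
rates = {
    "LME": {"Country A": 40.0, "Country B": 36.0},
    "CME": {"Country C": 30.0, "Country D": 34.0, "Country E": 26.0},
}

def unweighted_group_means(data):
    """Average the country rates per group; every country counts equally,
    regardless of its population size."""
    return {group: mean(values.values()) for group, values in data.items()}

means = unweighted_group_means(rates)
# Difference between the group means, expressed in percentage points.
gap = means["LME"] - means["CME"]
print(means, gap)
```

Because the means are unweighted, a small country influences the group average as much as a large one; rerunning the same calculation on adjusted figures (for instance, without international students) shows directly whether the gap persists.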

Comparing work orientations of graduates

Another hypothesis that can be derived from the VoC approach is that graduates from LMEs should emphasize “career” as a work orientation more strongly than graduates from CMEs. Without being able to explain this in more detail here, one reason is that in LMEs studying is seen much more as an investment in one’s individual human capital, which should pay off through better career opportunities.

One data source to test this hypothesis is the REFLEX study (see www.reflexproject.org). It is a large comparative survey of more than 30,000 respondents from 12 countries from the year 2005. Graduates from higher education (ISCED Level 5a) were surveyed five years after their graduation in the academic year 1999/2000 to get a picture of their route of study as well as their transition into the labor market (Allen and Van der Velden, 2007; Arthur et al., 2007; Schomburg and Teichler, 2006).

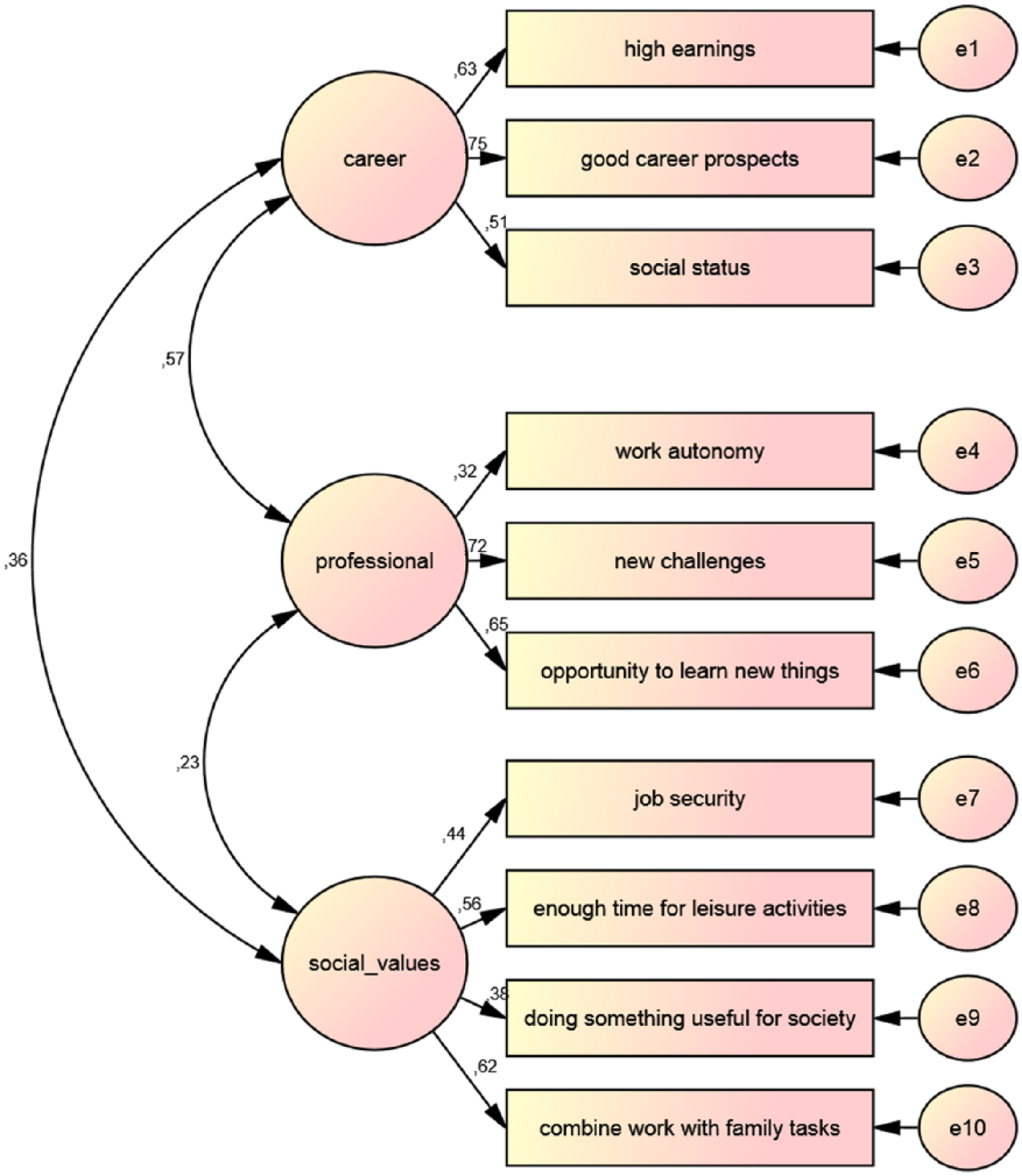

The REFLEX study contains 10 items on work orientations. Allen and Van der Velden (2007: 242 ff.) combine these into three distinct factors via an exploratory factor analysis (see Appendix 1): career and status orientation; professional/innovative orientation; and social-oriented values. This is, in principle, a useful and common way of a) reducing data complexity and b) measuring complex issues, such as values, in a more reliable way. In a second step, Allen and Van der Velden compare the countries with regard to their average scores on these three factors.

Unfortunately, the REFLEX study contains data on only one LME, the UK. On the other hand, data on seven CMEs (Austria, Belgium, Finland, Germany, Netherlands, Norway and Switzerland) are provided. Comparing these countries once more supports the hypothesis: the UK exhibits an average score of around 0.2 on the career factor, while all CME countries have below-average scores (Austria ≈ −0.07, Belgium ≈ −0.11, Finland ≈ −0.01, Germany ≈ −0.21, Netherlands ≈ −0.12, Norway ≈ −0.21, Switzerland ≈ −0.28). With regard to the other factors, Switzerland and Austria have especially high scores in professional values, and the UK has relatively low scores; Norway has relatively high scores in social values. The other values are relatively similar across these countries. On the basis of Allen and Van der Velden’s analysis, one would again accept the VoC approach.

While the OECD data in the first example stem from official administrative sources, surveys such as the REFLEX study in this second example are another important means of making international comparisons. However, survey data, and especially international comparative surveys, are prone to different kinds of error. Our hypothesis acceptance should, therefore, only be provisional.

One specific problem of comparative surveys is the question of equivalence or invariance (Deth, 1998; Harkness et al., 2010; Przeworski and Teune, 1966): does the instrument really measure the same thing in different countries? There are different techniques that allow testing for measurement invariance (Braun and Johnson, 2010). The standard procedure is a Confirmatory Factor Analysis (CFA) or Multigroup Structural Equation Model (SEM) (Byrne, 2010; French and Finch, 2006), which tests whether a set of manifest indicators (e.g. items in a questionnaire) measures a latent construct (e.g. work orientations) in a similar way across different groups (in this case, countries).

Comparing groups … with respect to hypothetical constructs requires that the measurement models are equivalent across groups. Otherwise, conclusions drawn from the observed indicators regarding differences at the latent level (mean differences, differences in the structural relations) might be severely distorted. (Temme and Hildebrandt, 2009: 138)

Equivalence is especially relevant in cases where the different contexts of the groups may potentially moderate the relationship between the manifest indicator and the latent construct (Temme and Hildebrandt, 2009), as explained previously with regard to the third function of comparisons.

Work orientations can be seen as such complex latent constructs. The question, then, is whether the REFLEX items measure the same thing in the different countries and whether mean comparisons, as described above, are permissible and appropriate.

With regard to invariance, different levels are distinguished in the context of CFA (e.g. Temme and Hildebrandt, 2009). Configural invariance means that the number of latent constructs and the assignment of manifest indicators to these latent constructs are the same in all groups. Metric invariance requires, furthermore, that the factor loadings of the manifest indicators are equal across groups. Scalar invariance additionally requires equal constants (intercepts) for each manifest indicator across countries. This is the prerequisite for mean comparisons of the latent concepts.
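The three levels can be written compactly using standard multigroup CFA notation. The symbols below are assumptions introduced here for illustration and do not appear in the original analysis: $x_{ig}$ is the vector of manifest indicators for respondent $i$ in country $g$, $\tau_g$ the vector of constants (intercepts), $\Lambda_g$ the matrix of factor loadings, $\xi_{ig}$ the latent constructs and $\varepsilon_{ig}$ the error terms, assumed to have mean zero.

```latex
\begin{align}
  x_{ig} &= \tau_g + \Lambda_g \, \xi_{ig} + \varepsilon_{ig}
    && \text{(measurement model in country } g\text{)} \\
  \text{configural:}\quad & \Lambda_g \text{ has the same pattern of fixed and free loadings for all } g \\
  \text{metric:}\quad & \Lambda_1 = \Lambda_2 = \dots = \Lambda_G = \Lambda \\
  \text{scalar:}\quad & \Lambda_g = \Lambda \ \text{ and } \ \tau_1 = \tau_2 = \dots = \tau_G = \tau \\
  \mathrm{E}(x_{ig}) &= \tau + \Lambda \, \kappa_g
    && \text{(under scalar invariance, with } \kappa_g = \mathrm{E}(\xi_{ig})\text{)}
\end{align}
```

The last line shows why scalar invariance is needed for mean comparisons: only when both $\Lambda$ and $\tau$ are equal across countries do differences in the observed item means reflect differences in the latent means $\kappa_g$ alone.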

Figure 1 shows the results of a pooled CFA with the three latent concepts career, professional, and social values and the assignment of the 10 items (corresponding to the three factors by Allen and Van der Velden). Latent concepts are represented by ellipses, the manifest indicators by rectangles. The “e1”–“e10” on the right-hand side are the error terms, as the indicators are not fully explained by the latent constructs. The factor loadings for the pooled sample (respondents from all countries put together) are given at the single-headed arrows between ellipses and rectangles. The double-headed arrows between the latent concepts display their correlations. For example, “professional orientation” is best represented by the item “new challenges” and less well represented by “work autonomy”.

Figure 1. Pooled CFA model for work orientations.

To check for configural invariance, this model is estimated in all countries separately. Factor loadings, etc. are set free and can, therefore, be different for each country. CFA offers a range of measures to evaluate whether a theoretical model fits the data. Traditionally, a chi-square test is provided. However, this test statistic is extremely sensitive to sample size (e.g. Lei and Wu, 2007: 36). Therefore, alternative goodness-of-fit indices are usually also given. Two of the most common ones are the comparative fit index (CFI) and the root mean square error of approximation (RMSEA). As a rule of thumb, values above 0.9 for the CFI and values below 0.06 for the RMSEA are seen as indicators of a good fit between model and data.
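The fit indices and rules of thumb mentioned above can be illustrated with a minimal Python sketch. It uses the common textbook formulas for the CFI and the single-group RMSEA; SEM software may apply further corrections in multigroup models, so this is not necessarily how the values reported in this article were computed. The function names and the chi-square inputs in the usage lines are assumptions made for this example.

```python
import math

def cmin_df(chi2_model, df_model):
    """Chi-square divided by degrees of freedom (CMIN/DF)."""
    return chi2_model / df_model

def cfi(chi2_model, df_model, chi2_baseline, df_baseline):
    """Comparative fit index: model misfit relative to the baseline
    (independence) model, both measured as chi-square minus df."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_baseline - df_baseline, 0.0)
    denom = max(d_model, d_base)
    if denom == 0.0:
        return 1.0  # neither model shows misfit beyond its df
    return 1.0 - d_model / denom

def rmsea(chi2_model, df_model, n_obs):
    """Root mean square error of approximation (single-group form)."""
    return math.sqrt(max(chi2_model - df_model, 0.0) / (df_model * (n_obs - 1)))

def acceptable_fit(cfi_value, rmsea_value, cfi_min=0.9, rmsea_max=0.06):
    """Apply the rules of thumb named in the text: CFI above 0.9, RMSEA below 0.06."""
    return cfi_value > cfi_min and rmsea_value < rmsea_max

# Hypothetical chi-square values, chosen only to exercise the formulas.
example_cfi = cfi(100.0, 50, 1050.0, 60)
example_rmsea = rmsea(100.0, 50, 501)
print(round(example_cfi, 3), round(example_rmsea, 3))
print(acceptable_fit(example_cfi, example_rmsea))
```

The RMSEA formula makes the sample-size dependence visible: the chi-square statistic grows with the number of respondents, whereas the RMSEA divides the excess misfit by the sample size, which is one reason such indices are reported alongside the chi-square test.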

The fit indices for the model of configural invariance are CMIN/DF = 190.8, CFI = 0.839 and RMSEA = 0.085. While the CMIN/DF ratio is, as expected, extremely high (test theory suggests that the value should not exceed 3), the CFI lies only slightly below and the RMSEA only slightly above the respective threshold. The model, therefore, does not fit the data very well, but because further inspection shows that no single parameter or modification index stands out in particular, one might accept a reasonably similar structure in all countries.

Adding equal factor loadings across all countries as an additional constraint yields the model of metric invariance. To compare different nested models, Byrne (2010: 221) recommends, with reference to Cheung and Rensvold (2002), using the change in the CFI (ΔCFI) for evaluation. The ΔCFI should not lie above 0.01. The change for the model of work orientations analyzed here is 0.012, exceeding this threshold only slightly. Taking into account the large number of countries, this might again be acceptable. Metric invariance of the model might, therefore, be assumed as well.

Scalar invariance additionally requires similar constants for each manifest indicator across all countries. Testing this model results in ΔCFI = 0.309, well above the threshold, which means that the assumption of scalar invariance has to be rejected.
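The ΔCFI decision rule can be sketched as a small Python check. The two ΔCFI values are the ones reported in the text; the function name and the strict reading of the 0.01 threshold are assumptions made for this illustration.

```python
def within_delta_cfi_threshold(delta_cfi, threshold=0.01):
    """Return True if the change in CFI between two nested models
    does not exceed the recommended threshold."""
    return abs(delta_cfi) <= threshold

# ΔCFI values reported in the text for each additional set of constraints.
steps = {"metric": 0.012, "scalar": 0.309}
results = {name: within_delta_cfi_threshold(delta) for name, delta in steps.items()}
print(results)
```

Applied mechanically, the rule flags both steps. The text nevertheless treats the metric step (0.012) as tolerable given the large number of countries, which is a judgment that goes beyond the rule itself, whereas the scalar step (0.309) exceeds the threshold by a wide margin under any reading.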

As a result, mean comparisons of the latent constructs (factors or work orientations) across countries, as presented above, are not adequate. A decision about a preliminary acceptance or rejection of the second hypothesis is, therefore, not possible on the basis of these data (or at least requires additional analyses, for example in the context of “partial invariance”).

Conclusion

The question of the special issue “Why compare?” was addressed in a double sense in this paper. First, it was argued that comparisons are necessary for theory development and improvement. Only by confronting our hypotheses with empirical data can we reject, (preliminarily) accept or refine our theoretical assumptions. (International) comparisons especially allow for transferring or specifying hypotheses to different contexts. On the other hand, observed differences between countries can best be interpreted in the light of theory. The VoC approach was used to exemplify this interrelation between theory and international comparative data in the field of higher education.

However, on a second level, it was argued that it is also necessary to compare different data sources and different techniques of data analysis, as international comparisons are especially prone to data difficulties. Two of these problems, general data availability and equivalence, were shown to have an important impact on the above-mentioned theory assessment.

With regard to the example of hypotheses derived from the VoC, in one case new and better data reversed the interpretation overall. While the old data supported the hypothesis, improved data (for a reduced sample of countries, though) suggested a rejection. However, the new data also led to the formulation of a new hypothesis. In a second case, it was argued that the availability of comparative data does not necessarily mean that the data is also comparable. While traditional techniques (exploratory factor analysis) supported the hypothesis again, improved techniques of data analysis (Multigroup SEM) found measurement inequivalence of the instrument, thereby impeding a straightforward test of the hypothesis. It is important to mention, though, that while these two examples are critical about the applicability of the VoC approach to a comparison of higher education systems, other analyses support a relationship between the VoC and their respective higher education systems (Graf, 2009; Hoelscher, 2012b, 2013, 2016; Leuze, 2010).

What are the conclusions from these considerations? Comparisons are highly important, but are also demanding with regard to theory and data. While a lot of progress has already been achieved in recent years, further improvements in data availability as well as in our capacity to analyze the data with advanced techniques are necessary. In spite of, or maybe even especially because of, the high relevance of comparisons, one should be extremely careful, and ideally combine different approaches and data sources. In the case of the above-presented assessment of the VoC, the quantitative data should be complemented by qualitative approaches to allow for the triangulation of results. Otherwise, false conclusions may be drawn; in evidence-based policymaking, for example, when US policies in the field of higher education are transferred to European systems too easily. Therefore, when interpreting (comparative) data, we should always be full of doubt, to refer back to Russell’s statement presented at the beginning of this article.

Appendix 1

This article uses data from the study REFLEX – International Survey Higher Education Graduates (www.reflexproject.org). The Swiss data was provided by the “Bundesamt für Statistik der Schweizerischen Eidgenossenschaft”. I would like to thank all primary researchers for access to this data.

The question was “Please indicate how important the following job characteristics are to you personally?”. Each item could be rated on a 5-point scale ranging from “1 = not at all important” to “5 = very important”.

The items and their respective factors were as follows:

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.