Abstract

This article addresses the time-varying leader–follower formation control problem for nonholonomic mobile robots under communication and visibility constraints. Although leader–follower formation control under visibility constraints has been studied, the elimination of the off-tracking effect has not yet been widely addressed. In this work, a new method is proposed to eliminate the off-tracking effect by treating the time-invariant formation as a tractor–trailer system, for unknown and circular tractor paths, taking the visibility constraints into account. For a time-varying formation with a noncircular tractor path, the proposed method significantly reduces the off-tracking. Only the relative position and the relative orientation, provided by the onboard monocular camera, are required. Thus, neither the leader robot’s absolute position nor its velocities are needed. Furthermore, to avoid explicit communication among the robots, an extended state observer is implemented to estimate both the translational and the rotational velocity of the leader. In this way, the desired tasks are executed in a decentralized manner. For a time-varying formation with constant leader robot velocities, the proposed control strategy, based on the kinematic model, guarantees that the formation errors asymptotically converge to the origin. The stability of the formation error dynamics is proved using Lyapunov theory. Simulation results, considering time-varying leader robot velocities, show the efficiency of the proposed scheme.

Keywords

Introduction

The problem of convoy driving can be seen as a special case of group formation. 1,2 Military applications of convoy driving are the most obvious, where a given number of autonomous vehicles follow each other while keeping a safe constant distance. The convoy-like vehicle can be seen as an emulation of the multi-steered general n-trailer with the difference that the physical link does not exist between the tractor/leader and the trailer/follower, but an additional error dynamics equation is introduced to virtually represent this physical constraint. 3 A tractor–trailer mobile robot (TTMR) is a mechanical system composed of a known number of trailers pulled by a tractor.

The off-tracking phenomenon is the major problem in TTMR. This term refers to the deviation of the path of each articulated vehicle from the paths of preceding vehicles. 4 The reduction or elimination of off-tracking results in much improved safety during turns, cornering, overtaking other vehicles (especially small cars), and backward motion. If both the tractor and the trailers can track an identical geometric path, the overall width of the system is only equal to that of the tractor or the trailer. 5 Thus, the robot can perform transport tasks in a narrow space, which is the most important problem when finding an obstacle-free path. One way to reduce off-tracking is by using the proportional navigation guidance law, more precisely deviated pursuits. The guidance laws used in Fethi and Boumediene 1 to model and control a robotic convoy are based on the geometry and the kinematics equations between two successive robots. With the deviated pursuits, the linear velocity of the follower robot is designed to keep a constant distance between robots. The rotational velocity is designed such that the follower robot moves pointing toward the leader robot, but with a small parameter added to the relative angle to increase the curvature radius of the follower robot’s path. Since a constant value is used for that small parameter, and a formal analysis is missing, the off-tracking effects are not correctly reduced. To eliminate the off-tracking effect, the approach proposed in 5 generates the desired full-state trajectory from a desired geometric path in the Cartesian plane, along a time-parameterized path. This technique is useful only when the desired path, given in the configuration space, is known. Moreover, the complexity of the trajectory generation process depends on each desired path. One way to eliminate the off-tracking on physical trailers, for circular paths only, is by using the sliding kingpin mechanism proposed in Deligiannis et al. 6 According to this technique, the kingpin hitch of the trailer slides in a direction perpendicular to the longitudinal axle of the tractor when the tractor–trailer turns. The displacement of the off-axle kingpin hitch along the rear axle increases the radius of curvature of the path that the trailer travels. Notice that implementing this technique requires a mechanical device, which increases its cost.

On the other hand, formation control approaches commonly assume that each robot in the formation can obtain accurate global position information from global positioning sensors. To address this issue, some researchers have focused on the use of alternative onboard sensors (e.g. laser, cameras, and sound ranging technologies). Compared to other traditional sensors, visual cameras (monocular, stereo and omnidirectional cameras, Kinect device) can provide richer information at lower cost, making them a very popular option for formation control using only relative onboard sensing. 7 However, the practical drawbacks of incorporating additional sensors include increased cost, increased complexity, decreased reliability, and increased processing burden. 8 Taking these disadvantages into account, many authors have chosen visual servo strategies that use a monocular camera and rely on analytic techniques to address the lack of depth information. Visual servoing is classified into two groups: position-based visual servoing (PBVS) and image-based visual servoing (IBVS). PBVS control methods 9 –12 use three-dimensional scene information that is reconstructed from image information. That is, the camera acts as a “Cartesian sensor,” where pose estimation algorithms use camera data to generate an error signal in Cartesian space. This error signal is then used in a feedback control law. The main advantage of the position-based approach is that the robot’s trajectory can be controlled directly in the Cartesian space using well-known path tracking techniques. As a drawback, certain trajectories defined in the Cartesian space can lead the target out of the field-of-view (FOV) of the camera. 13 In IBVS methods, 14 –18 the controlled states are image features of the target, which means that the image data are used directly in the control loop. The major drawback of these methods is that they can only regulate the pose of the camera with respect to a reference pose where some reference image was taken.

Related works

Dani et al. 10 proposed a control law that requires only the knowledge of a single known length on the leader. The relative pose and the relative velocity are obtained using a geometric pose estimation technique and a nonlinear velocity estimation strategy, respectively. A Lyapunov analysis indicates asymptotic tracking of the leader vehicle. Xinwu et al. 19 proposed a time-invariant leader–follower formation tracking control scheme in the image space for nonholonomic mobile robots with an onboard perspective camera. Measurements of the position and the translational velocity of the leader robot are not needed. An adaptive observer is used to estimate the linear velocity. The stability proof shows that the system is stable. The relative orientation angle between mobile robots is obtained by using a compass sensor. In Hasan et al., 12 a time-invariant, state-feedback control law that allows one differential drive robot to follow another, at a constant relative distance, is presented. The proposed control law does not require measurement or estimation of the leader robot’s velocity and has tunable parameters that allow one to prioritize the error bounds of either the relative polar angle or the relative orientation. In Dimitra and Vijay 20 and Xiaomei et al., 21 the visibility constraints are defined to address the cooperative motion coordination of leader–follower formations of nonholonomic mobile robots in known polygonal obstacle environments. In Jie et al., 13 an adaptive image-based visual servoing control strategy is proposed following the prescribed performance control methodology. First, the leader–follower visual kinematics in the image plane and an error transformation with predefined performance specifications are presented. However, the off-tracking effect is not addressed. In Qun et al., 22 a distributed leader–follower formation for nonholonomic mobile robots using only local interactions among the robots is proposed to solve the trajectory tracking problem. A distributed estimation strategy is presented for each follower robot to estimate the leader’s states; since the dynamic model is used, the leader velocity is also considered as a state. In Fabio et al., 23 a method for estimating the relative distance between leader and followers by a reduced-order nonlinear observer is introduced. The leader mobile robot’s velocities are not estimated since both the leader robot’s and the follower robot’s velocities are considered as the control inputs of the system. Also, the off-tracking is not addressed. In Sida et al., 24 a formation controller based on a model predictive control scheme is proposed to ensure the desired formation and the position consistency of followers. Afterward, a dynamic controller based on an adaptive terminal sliding mode control scheme is developed for the leader to observe and then compensate the external disturbance as soon as possible. However, no vision strategy is addressed. González-Sierra and Aranda-Bricaire 4 proposed the emulation of the so-called kingpin mechanism to reduce the off-tracking effects exhibited by the standard and generalized n-trailer systems. In turn, both systems are emulated by a group of differential-drive mobile robots using the leader–follower scheme. It is assumed that the absolute positions of the robots and the leader robot’s velocity are known. Also, visibility constraints are not addressed.

The main advantage of this work is that the absolute posture of the leader robot, its velocities, and the knowledge of its path are not needed. Only a monocular camera is used to estimate, on board the follower robot, both the relative position and the relative orientation. Thus, no additional sensor, such as an IMU or a compass, is necessary. In addition, an extended state observer is implemented to estimate both the translational and the rotational velocity of the leader robot. Therefore, the proposed time-varying leader–follower formation scheme is decentralized, computationally inexpensive, and simple to implement on board. The main difference between this work and the related works that address vision-based leader–follower formation control is that the off-tracking effect is also significantly reduced for a time-varying formation. Furthermore, communication constraints are considered, since a velocity estimator of the leader robot is implemented.

This article presents the following contributions. First, a new method to eliminate the off-tracking effect for a time-invariant formation, considering a circular leader robot’s path, is proposed. With this method, the off-tracking is significantly reduced for either a time-varying formation or time-varying leader robot velocities. To guarantee that the time-varying formation control problem can be solved, the minimum curvature radius of the leader robot is defined. Also, this method considers both the visibility and the communication constraints. Second, a control strategy is proposed to solve the time-varying formation control problem with reduction of the off-tracking effect, using only the relative measurements and the estimated leader velocities. Based on Lyapunov theory, the proposed control law guarantees that the formation errors asymptotically converge to the origin, for the time-varying formation with a circular leader robot’s path. To our knowledge, the reduction of the off-tracking for a time-varying leader–follower formation of nonholonomic mobile robots under communication and visibility constraints, using relative position/orientation measurements only, has not been studied.

The article is organized as follows: In the second section, the vision-based algorithm is described. The third section presents the formation kinematics, the visibility constraints, the minimum curvature radius condition, and the strategy to reduce the off-tracking. In the fourth section, the observer to estimate the leader robot’s velocities and the controller design are described. Simulation results are shown in the fifth section. Finally, some conclusions are mentioned in the sixth section.

Vision-based relative position/orientation reconstruction

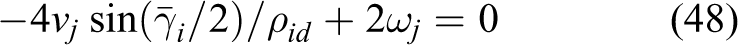

In this section, the vision-based relative posture reconstruction algorithm is briefly described. For simplicity in the notation, consider one robot only, with an ideal perspective camera, without distortion, with reference frame

Projection of the 3D feature points onto the image plane.

Relative distances and relative orientation in the camera’s frame.

Similar to Hasan et al., 12 from Thales’ theorem, the distance of each vertical line that composes the pattern, along the camera’s optical axis (Zc), is given by

From the Thales’ theorem as well, the lateral offset of points

The relative orientation between mobile robots is obtained from the knowledge of W,

Similar to Gans et al., 9 the presented method is also distinguished by the fact that knowledge of two geometric lengths is much less restrictive than complete geometric knowledge of all lengths. Furthermore, initialization is not required, and errors due to large motions are not propagated because measurements are computed from the present frame only.
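The reconstruction described in this section can be illustrated with a minimal sketch based on similar triangles for an ideal pinhole camera. It is only an assumption-laden illustration, not the exact algorithm of this article: the function names, the pixel focal length `focal_px`, the principal point column `cx_px`, and the choice of recovering the relative orientation from the depth difference of the two vertical lines and the known width W are all hypothetical.

```python
import math


def line_depth(focal_px, height_m, height_px):
    """Depth along the optical axis of a vertical line of known metric
    height, from similar triangles (ideal pinhole camera, no distortion)."""
    return focal_px * height_m / height_px


def lateral_offset(focal_px, cx_px, u_px, depth_m):
    """Lateral offset of the image column u_px for a point at depth_m."""
    return (u_px - cx_px) * depth_m / focal_px


def relative_pose(focal_px, cx_px, height_m, width_m, left, right):
    """Relative pose of a pattern made of two vertical lines.

    left and right are (column_px, apparent_height_px) pairs measured in
    the current frame. Returns (x, z, yaw) of the pattern center, where
    yaw is recovered from the depth difference and the known width.
    """
    z_left = line_depth(focal_px, height_m, left[1])
    z_right = line_depth(focal_px, height_m, right[1])
    x_left = lateral_offset(focal_px, cx_px, left[0], z_left)
    x_right = lateral_offset(focal_px, cx_px, right[0], z_right)
    # Clamp to guard against measurement noise pushing the ratio past 1.
    ratio = max(-1.0, min(1.0, (z_right - z_left) / width_m))
    yaw = math.asin(ratio)
    return 0.5 * (x_left + x_right), 0.5 * (z_left + z_right), yaw
```

Because every quantity is computed from the current frame only, the sketch needs no initialization, which matches the property stated above.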

Preliminaries and strategy to reduce the off-tracking

In this section, the leader–follower formation kinematics and the visibility constraints are described. Also, the measurement of the off-tracking, the minimum curvature radius condition, and the proposed strategy to reduce the off-tracking effect are presented.

Kinematics of the leader–follower formation

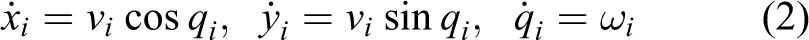

Let us consider a set of n unicycle mobile robots moving in a plane,

The coordinates

The representation of separation-bearing parameters is often used by many formation control approaches. In addition, in many formation control approaches for mobile robots, the relative position between the mobile robots is defined with respect to the leader robot’s frame. As done in Xinwu et al. 19 and Consolini et al., 25 the position of the leader robot, with respect to the follower robot’s frame

with

Therefore, from Figure 3, it is implied that

Leader–follower formation.

By differentiating (4) with respect to time, taking into account (2), (6), and using the following trigonometric identities:

where

Measurement of the off-tracking

For a unicycle mobile robot, the instantaneous curvature radius of its path is given by

Remark 1

Notice that

Note that

From (11), the maximum deviation, when no reduction action is implemented, is given by

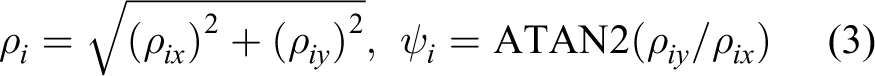
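As a rough numerical companion to this subsection, the sketch below computes the instantaneous curvature radius of a unicycle path as the ratio of translational to rotational velocity, and the steady-state off-tracking of a follower that keeps a separation d while pointing straight at a leader moving on a circle of radius R. The second formula is the standard on-axle tractor–trailer geometry and is used here as an assumption; it is not claimed to reproduce equations (11) and (12) term by term. The function names are hypothetical.

```python
import math


def curvature_radius(v, w, eps=1e-9):
    """Instantaneous curvature radius of a unicycle path (signed).

    Returns math.inf for straight-line motion (w close to zero).
    """
    if abs(w) < eps:
        return math.inf
    return v / w


def steady_state_off_tracking(leader_radius, separation):
    """Deviation between leader and follower circles in steady state.

    Assumes the follower heading points at the leader (zero bearing), so
    the follower radius is sqrt(R^2 - d^2), as for an on-axle trailer.
    """
    r = abs(leader_radius)
    if separation > r:
        raise ValueError("separation must not exceed the leader radius")
    return r - math.sqrt(r * r - separation * separation)
```

For example, with R = 2 m and d = 1 m, `steady_state_off_tracking(2.0, 1.0)` is about 0.268 m, and the deviation vanishes as R grows.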

Modeling of the visibility constraints

In Dimitra and Vijay 20 and Xiaomei et al., 21 the visibility constraints are introduced. It is assumed that each follower robot is equipped with a fixed on board camera of limited angle of view

where

It is important to note that, in the original work, both the relative orientation (

Visibility constraints.

Therefore, in this work, the visibility constraints are modified so that the size of the mobile robots and the maximum relative orientation are also taken into account. Notice that these modifications apply not only to the proposed vision-based algorithm but also to any vision-based strategy that uses a perspective camera.

Assume that the camera’s FOV is given by

The minimum separation between a pair of mobile robots takes into account both the minimum separation under visibility constraints and the size of the mobile robots. Thus, the minimum separation between a pair of leader–follower robots is given by

where

Therefore, in this work, the leader robot’s pattern can be detected if and only if the following visibility constraints are satisfied

where

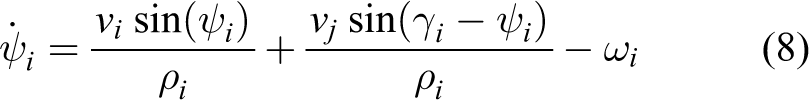

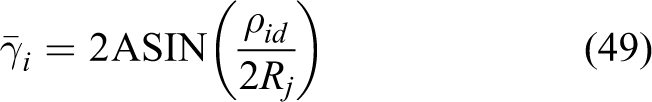
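The kind of test implied by the visibility constraints of this subsection can be sketched as follows. This is a simplified illustration under stated assumptions, not the exact constraint set of this article: the bounds `d_min`, `d_max`, and `beta_max` are hypothetical parameters, the bearing is assumed to be measured from the optical axis, and the pattern-width bound assumes a pattern that is centered in the image and parallel to the image plane.

```python
import math


def min_distance_for_pattern(pattern_width, fov):
    """Smallest distance at which a centered, front-parallel pattern of
    the given width fits entirely inside a horizontal field of view."""
    return 0.5 * pattern_width / math.tan(0.5 * fov)


def leader_visible(d, alpha, beta, fov, d_min, d_max, beta_max):
    """True if the leader satisfies simple visibility constraints.

    d     : separation between the robots
    alpha : bearing of the leader with respect to the optical axis
    beta  : relative orientation between the robots
    """
    in_range = d_min <= d <= d_max
    in_fov = abs(alpha) <= 0.5 * fov
    pattern_readable = abs(beta) <= beta_max
    return in_range and in_fov and pattern_readable
```

A follower controller could evaluate `leader_visible` at every frame and fall back to a safe behavior when it returns `False`; that fallback is outside the scope of this sketch.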

Minimum curvature radius condition

Let us now consider the steady state of a time-invariant pair of leader–follower robots. This implies that both the leader robot and the follower robot move along the same circular path, defined by the constant curvature radius Rj, with a desired constant separation, denoted as

According to the

Due to the visibility constraints, to guarantee that the formation control problem can be solved, the leader robot’s path is restricted by the minimum curvature radius condition. Thus, to define the minimum curvature radius condition taking into account visibility constraints, from Figure 5, substituting

For the steady state, the follower robot moves along the leader robot’s path with a constant radius of curvature.

To solve the formation control problem considering the reduction of the off-tracking effect, the instantaneous curvature radius of the leader robot’s path (Rj) must satisfy the following condition

Remark 2

In this work, to guarantee that the time-varying formation control problem, with reduction of the off-tracking effect, can be solved, the minimum curvature radius of the local leader robot is defined. Visibility constraints only guarantee that the local leader’s pattern can be detected by the local follower’s camera. The minimum curvature radius condition guarantees that the pair of mobile robots can fit on the leader’s circular path. Otherwise, the off-tracking effect cannot be eliminated for a time-invariant formation in the steady state even using absolute positions.
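The geometry behind Remark 2 can be made concrete with a short sketch. When two robots travel on the same circle of radius R separated by a chord of length d, the tangent–chord angle theorem gives a bearing of asin(d / 2R) between the follower heading and the line of sight to the leader, and a heading difference equal to twice that angle. The bound below follows from requiring the bearing to stay within half the field of view and the heading difference within a maximum relative orientation. It is a simplified stand-in under these assumptions and does not include the robot size terms of condition (21); all names are hypothetical.

```python
import math


def steady_state_bearing(separation, radius):
    """Bearing of the leader seen from the follower when both robots move
    on the same circle (tangent-chord angle). Signed with the radius."""
    return math.asin(separation / (2.0 * radius))


def steady_state_relative_orientation(separation, radius):
    """Heading difference between leader and follower on the same circle,
    equal to the central angle subtended by the chord."""
    return 2.0 * steady_state_bearing(separation, radius)


def min_curvature_radius(separation, half_fov, beta_max):
    """Smallest leader radius for which the bearing stays within half_fov
    and the relative orientation within beta_max (angles in radians,
    both assumed smaller than pi / 2 and pi respectively)."""
    limit = min(half_fov, 0.5 * beta_max)
    return separation / (2.0 * math.sin(limit))
```

For instance, with a separation of 1 m, a half field of view of about 31 degrees, and a maximum relative orientation of 40 degrees, the limiting angle is 20 degrees and the sketch returns a minimum radius of roughly 1.46 m.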

Proposed strategy to reduce the off-tracking

For simplicity of notation, consider the auxiliary variable

Remark 3

Notice that there are two problems if equations (22) and (19) are used to compute

(1) the desired curvature radius of the local leader robot (

From (22), the desired bearing angle and its time derivative are chosen as

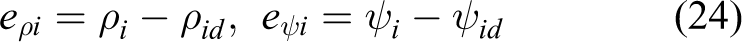

The formation errors of a pair of mobile robots are given by

where

The considered input vector of a pair of mobile robots is

In this work, the following assumptions are considered:

Assumption 1

The desired separation between a pair of mobile robots,

Assumption 2

The control inputs, (

Assumption 3

The global leader robot is able to move in an obstacle-free environment. Furthermore, its velocities (

Assumption 4

The initial states

Assumption 5

Each local follower can reliably detect its local leader which lies within a limited region with respect to the forward-looking direction.

Problem statement. Given an instantaneous global leader robot’s velocities (

Remark 4

When only the instantaneous local leader robot velocities are used, in the transient state of a time-invariant formation, that is, for a time-varying curvature radius of the leader robot, the local follower robot will try to converge to the instantaneous curvature radius of the leader, neglecting the path already traveled by the leader robot. This occurs because the proposed method to eliminate the off-tracking is based on the steady state, and the local leader robot’s path is omitted. Notice that it is assumed that

Observer and controller design

In this section, the extended state observer (ESO), implemented to estimate the local leader robot’s velocities, is described. Also, the proposed control law to solve the time-varying formation control problem with reduction of the off-tracking is shown.

Extended state observer

The ESO was first proposed in the context of the active disturbance rejection control (ADRC). 26,27

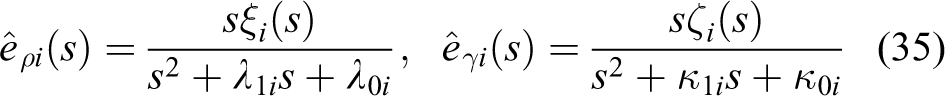

The main ability of ESO is to estimate both the internal dynamics and external disturbances of the plant. Thus, in this work, an ESO is implemented to estimate the translational velocity and the rotational velocity of the local leader robot. Now, consider the

with

Any state observer will estimate the state and the external disturbance since the latter is now a state in the extended state model. Such an observer is known as ESO. A particular ESO of (25) and (26) is, respectively, given as

where

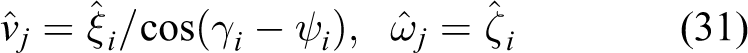

From the estimation of the disturbances, both the translational velocity and the rotational velocity of the local leader robot can be obtained. That is

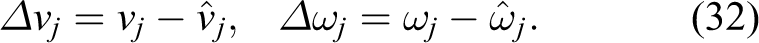

Then, the velocities estimation errors are given by

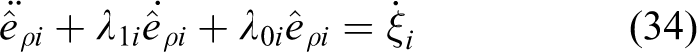

By differentiating the state estimation error of

By differentiating once (33), and using (29), the second-order differential equation is obtained

In a similar manner for the other state estimation error (

where gains
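The idea of treating an unmeasured leader velocity as an extended state can be sketched with a generic second-order linear ESO. The example below is an assumption-based illustration, not the observer (27)–(35) of this article: it uses the relative orientation as the measured output, the negative follower rotational velocity as the known input, the leader rotational velocity as the unknown disturbance, and a bandwidth parametrization of the gains. The function name and all numerical values are hypothetical.

```python
def estimate_leader_rotational_velocity(w_leader, w_follower, beta0=0.3,
                                        dt=0.001, t_end=3.0, bandwidth=10.0):
    """Estimate the leader rotational velocity with a linear ESO.

    Plant (relative orientation): d(beta)/dt = w_leader(t) - w_follower(t).
    Observer states: z1 estimates beta, z2 estimates the disturbance
    (here, the leader rotational velocity). Both the plant and the
    observer are integrated with the forward Euler method.
    """
    l1 = 2.0 * bandwidth      # gains place both error poles at -bandwidth
    l2 = bandwidth ** 2
    beta = beta0              # true relative orientation (measured output)
    z1, z2 = 0.0, 0.0         # observer starts with no prior knowledge
    for k in range(int(round(t_end / dt))):
        t = k * dt
        u = -w_follower(t)    # known input: own rotational velocity
        err = beta - z1       # output estimation error
        z1 += dt * (z2 + u + l1 * err)
        z2 += dt * (l2 * err)
        beta += dt * (w_leader(t) + u)
    return z2
```

With these gains the estimation error obeys a second-order equation driven by the time derivative of the disturbance, so the estimate converges for a constant leader velocity and remains bounded for a slowly varying one; a larger bandwidth shrinks that bound at the cost of amplifying measurement noise.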

Controller design

Similar to Ricardo et al.

11

and Hasan et al.,

29

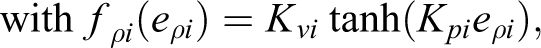

the proposed control law is based on a control strategy which linearizes the dynamics of the variables (

where

Unlike related works, since the reduction of the off-tracking is addressed, the proposed control law (36)–(37) requires not only the estimation of the local leader’s translational velocity

It is important to note that the proposed controller requires both the states of the system,

Differentiating the formation errors (24) once yields

By substituting the proposed control signals (36)–(37) into (39) and (40), the time derivative of each formation error, in closed-loop, is given by

The necessary and sufficient conditions to solve the stated problem, using the proposed control law with the ESO, are summarized in the following lemma.

Lemma 1

Consider n unicycle mobile robots (2), which compose

Proof

Considering the Lyapunov function candidate given by

with

Remark 5

Since a time-varying formation is addressed (

Notice that, when following a straight line (

Now, according to the proposed controller (37), replacing

Every particular case will be discussed next. Consider the time-invariant formation problem in the steady state, that is

Substituting (47) into (9) yields

Notice that (48) can be rewritten in a similar manner to (20), that is

The measurement of the off-tracking of a time-invariant leader–follower formation is given by (12). Since

Finally, from (10) and (49), the convergence to zero for all three variables (

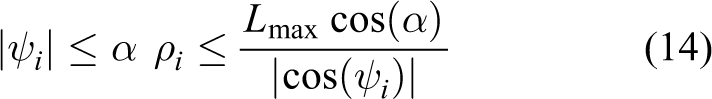

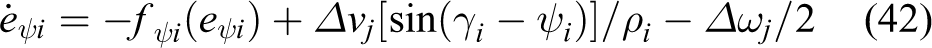

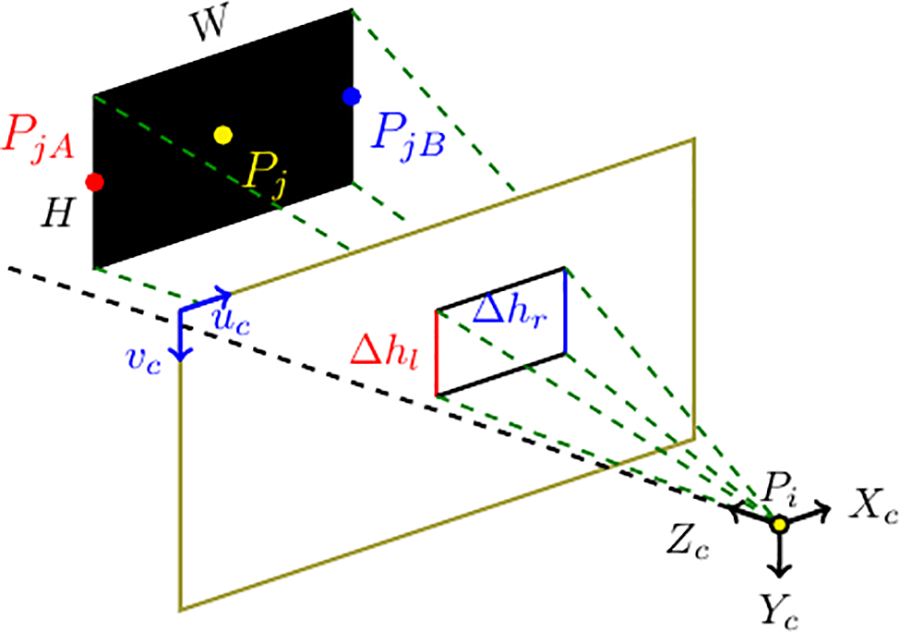

Figure 6 shows the information flow of the proposed scheme.

Control block diagram of the proposed decentralized scheme.
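The closed-loop behavior discussed in this section can be explored with a small numerical sketch. It uses a generic input–output linearizing law on the separation and the bearing, written for a follower-frame polar model derived under the usual unicycle assumptions; it is not the control law (36)–(37), whose exact terms are not reproduced here. The true leader velocities stand in for the ESO estimates, the formation is time-invariant, and the desired bearing is set either to zero or to the tangent–chord angle asin(d / 2R). All names, gains, and initial conditions are hypothetical.

```python
import math


def wrap(angle):
    """Wrap an angle to the interval (-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def follower_control(d, alpha, beta, v_l, d_des, alpha_des, k_d=1.0, k_a=1.0):
    """Linearizing law for the assumed model
       d'     = v_l cos(beta - alpha) - v_f cos(alpha)
       alpha' = -w_f + (v_l sin(beta - alpha) + v_f sin(alpha)) / d
    so that both errors decay exponentially (constant references)."""
    v_f = (v_l * math.cos(beta - alpha) + k_d * (d - d_des)) / math.cos(alpha)
    w_f = ((v_l * math.sin(beta - alpha) + v_f * math.sin(alpha)) / d
           + k_a * (alpha - alpha_des))
    return v_f, w_f


def follower_path_radius(compensate, v_l=0.2, w_l=0.1, d_des=1.0,
                         dt=0.01, t_end=120.0):
    """Simulate a leader on a circle centered at the origin and return the
    final distance of the follower from that center."""
    radius = v_l / w_l
    alpha_des = math.asin(d_des / (2.0 * radius)) if compensate else 0.0
    xf, yf, thf = radius, -1.2, 0.5 * math.pi   # follower starts behind
    for k in range(int(round(t_end / dt))):
        phi = w_l * k * dt                       # exact leader pose
        xl, yl = radius * math.cos(phi), radius * math.sin(phi)
        thl = phi + 0.5 * math.pi
        d = math.hypot(xl - xf, yl - yf)
        alpha = wrap(math.atan2(yl - yf, xl - xf) - thf)
        beta = wrap(thl - thf)
        v_f, w_f = follower_control(d, alpha, beta, v_l, d_des, alpha_des)
        xf += dt * v_f * math.cos(thf)           # forward Euler unicycle
        yf += dt * v_f * math.sin(thf)
        thf += dt * w_f
    return math.hypot(xf, yf)
```

In this sketch, `follower_path_radius(True)` settles close to the leader radius of 2 m, whereas `follower_path_radius(False)` settles close to sqrt(3) m, which is the off-tracking expected when the follower simply points at the leader.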

Simulation results

In this section, to validate the proposed time-varying leader–follower formation control scheme, simulation results are shown.

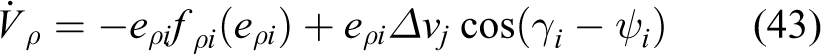

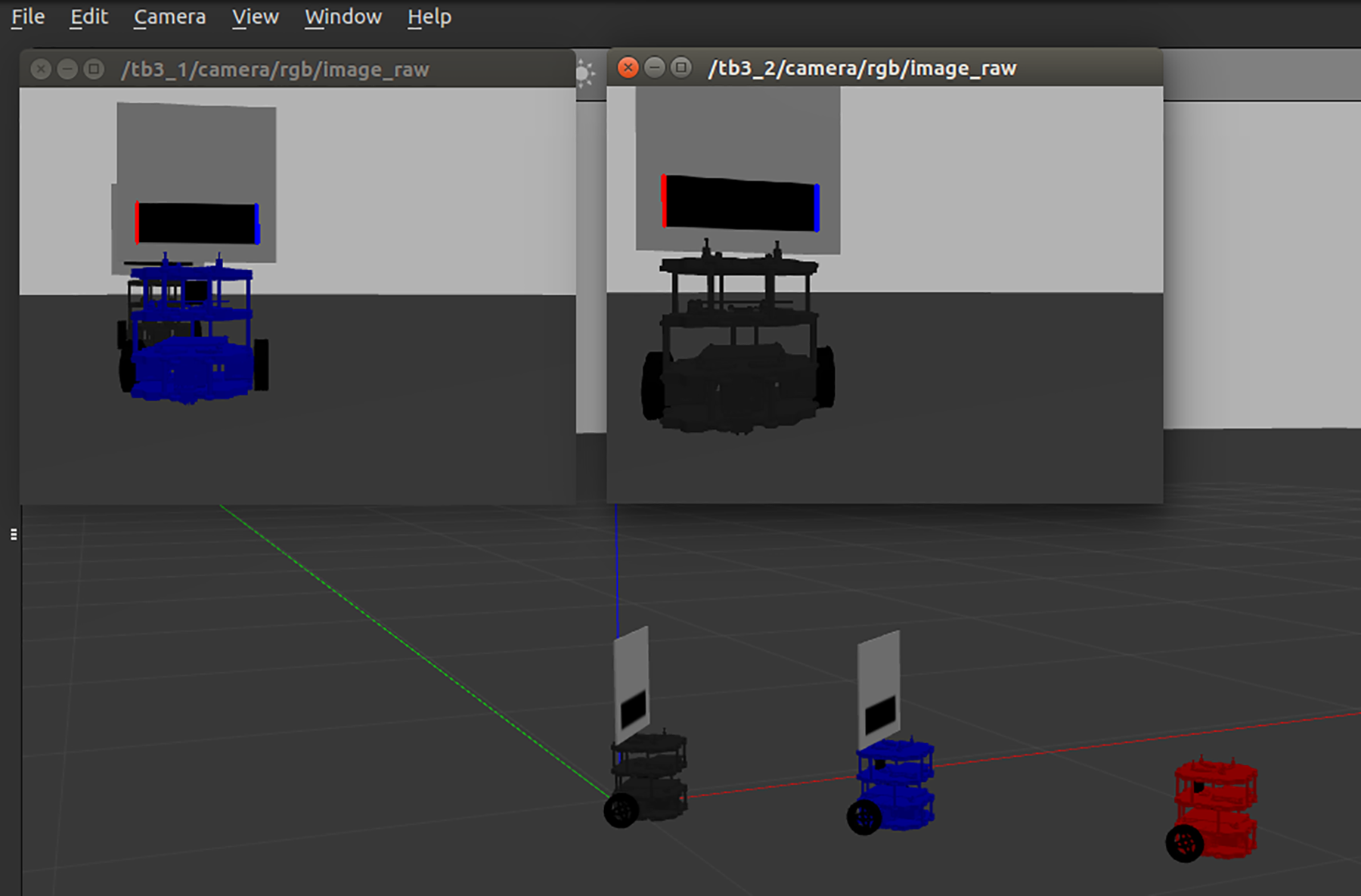

The simulation platform for the formation control of mobile robots was developed in C++ using the Gazebo simulator on the Robot Operating System (ROS). 31 To study the performance of the control strategy presented in the fourth section, as well as the vision-based pose estimation algorithm introduced in the second section, a simulation interface was designed, as shown in Figure 7, with two subwindows showing the camera view of each of the two followers and a main window showing the motion of the three mobile robots in the environment. Thus, two pairs of leader–follower mobile robots are used. The red mobile robot (r 1) is the local follower of the blue robot (r 2). The blue mobile robot (r 2) is the follower of the global leader robot, shown in gray (r 3), which has knowledge of the desired trajectory.

Simulation interface.

Simulations were performed with three TurtleBot3 mobile robots, 32 using a Raspberry Pi Camera Board V2.1 on each follower robot, with a horizontal field of view

Two simulation experiments were developed. For both simulation experiments, the desired separation between robots 1 and 2 was chosen as

where

Simulation 1

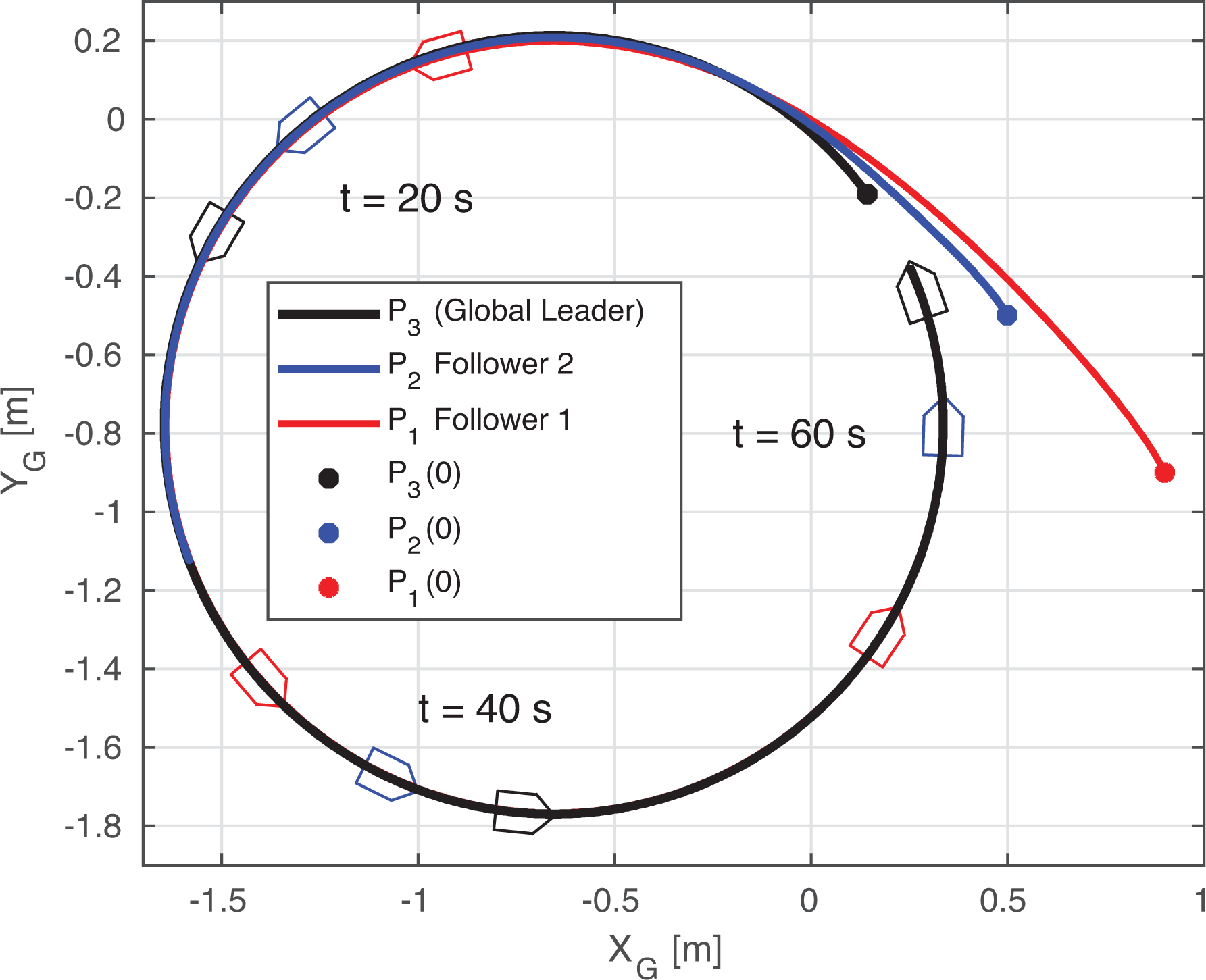

Similar to related works which address the vision-based formation control problem, the first experiment was carried out considering a circular path. 21,29,33

In open-loop, the global leader robot’s velocities were given as

Figure 8 illustrates the trajectory of each mobile robot. As can be seen, the off-tracking phenomenon of the pair composed of robot 2 and robot 3, after a transient, is eliminated since both a time-invariant formation and a circular leader robot’s path are required. In Remark 5, it is demonstrated that for this particular case,

Trajectories of all mobile robots (Simulation 1).

To show the efficiency of the proposed scheme, in Figure 9, the trajectories of all mobile robots without reducing the off-tracking, are depicted. To avoid the reduction of the off-tracking, it is necessary to consider

Trajectories of all mobile robots without reducing the off-tracking (Simulation 1).

In Figure 10, the formation errors and the relative orientation of each pair of robots are shown. As demonstrated in Lemma 1, the formation errors asymptotically converge to zero, regardless of whether a time-varying formation is required. Also, according to the visibility constraints, the relative orientations are kept bounded by the maximum relative orientation,

Formation errors and relative orientations (Simulation 1).

Figure 11 shows the translational velocity of each mobile robot for the first simulation. Because the desired separation between r 2 and r 3 is constant, v 2 converges to the global leader robot’s velocity (v 3). Since the desired separation between r 1 and r 2 is time-varying, the linear velocity of the follower robot 1 (v 1) oscillates around its local leader robot’s velocity (v 2), according to (45).

Translational velocities of the mobile robots (Simulation 1).

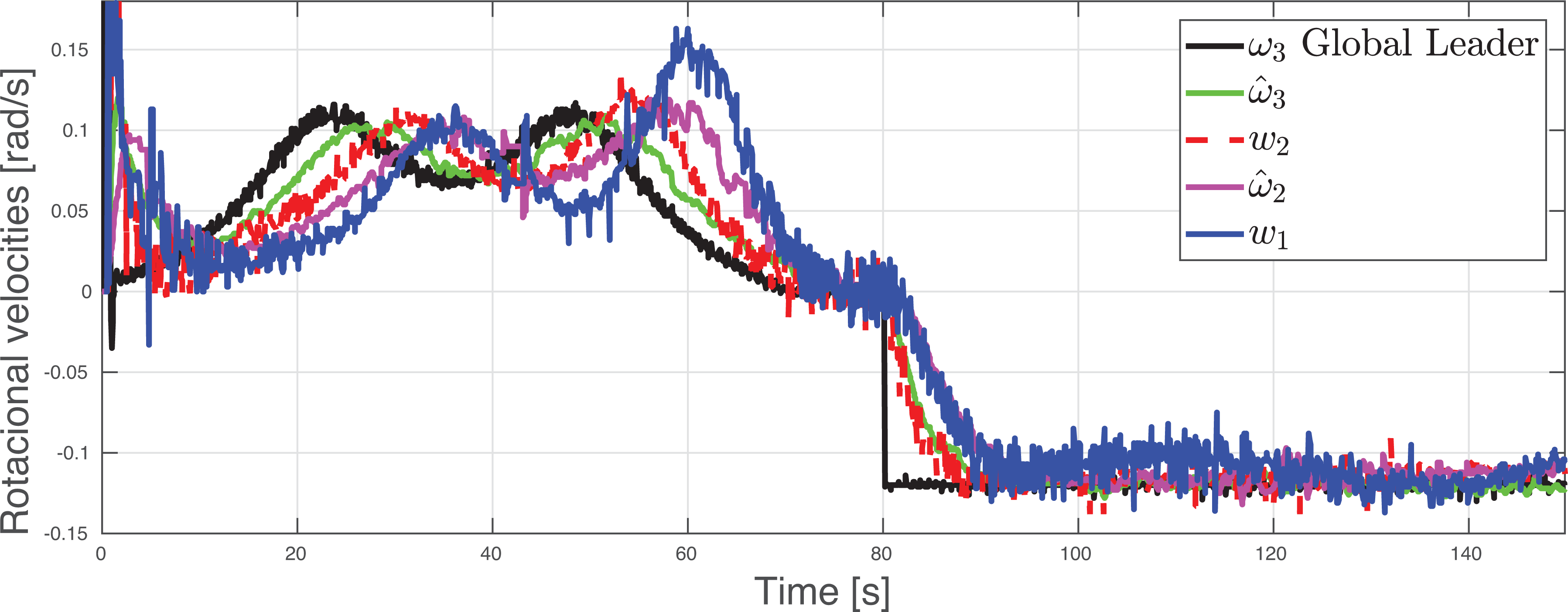

In Figure 12, the rotational velocity of each mobile robot, for simulation 1, is shown. In a similar manner to the translational velocities, the rotational velocity of the follower robot 2 (

Rotational velocities of the mobile robots (Simulation 1).

Simulation 2

Unlike related works which address the vision-based formation control problem, the efficiency of the proposed scheme was tested on a difficult scenario, that is, one not limited to straight lines, 12 circular/parabolic segments, 10 –12 or circular paths with constant radius of curvature. 21,23,29,33 Similar to González-Sierra and Aranda-Bricaire, 4 where no camera is used and only the reduction of the off-tracking is addressed, a lemniscate trajectory is considered to show that the time-varying formation control problem can be solved while significantly reducing the off-tracking effect. Additionally, to test many time-varying curvature radii down to the minimum radius allowed by the visibility constraints, a spiral path is chosen for the global leader robot. Also, to test a sudden change between two different curvature radii, a straight line segment is added to connect both paths. According to the maximum relative angle (

Figure 13 illustrates the trajectory of each mobile robot. As can be seen, although the off-tracking effects are significantly reduced throughout the test, the largest deviation between the paths occurs when the global leader robot suddenly changes its path from the straight line to the circular path (at 80 s). As mentioned before, this deviation cannot be eliminated by the proposed scheme, since the proposed control law only requires the instantaneous velocities of the leader robot; the path traveled by the leader robot in the configuration space is unknown to the local follower robot. Furthermore, the proposed strategy to reduce the off-tracking is based on the steady state for a time-invariant leader–follower formation.

Trajectories of the unicycle mobile robots (Simulation 2).

In Figure 14, both the formation errors and the relative orientation of the pairs of robots are shown. Formation errors converge to a small band within 20 s. As is mentioned in section “Extended state observer,” the estimation errors (27), will be bounded by the time derivative of the disturbances (35), which are the global leader robot’s velocities (

Formation errors and relative orientations (Simulation 2).

Figure 15 shows the translational velocity of each mobile robot, for Simulation 2. In Figure 16, the rotational velocity of each mobile robot is shown. Since the global leader robot’s velocities are not constant, from (35), the velocities estimation errors (

Translational velocities of the mobile robots (Simulation 2).

Rotational velocities of the mobile robots (Simulation 2).

Due to the reduced camera’s FOV, and taking into account the visibility constraints, to guarantee that the time-varying formation problem can be solved, it is necessary to start either with a small relative orientation between the mobile robots and small formation errors, or on a straight line.

From the simulation results, it can be concluded that the performance obtained with this time-varying leader–follower formation scheme, with reduction of the off-tracking under communication and visibility constraints, is similar to the one that can be obtained by using both the absolute positions and the absolute orientation of the mobile robots with explicit communication. However, due to the reduced camera’s FOV, to guarantee that the time-varying formation control problem can be solved, the desired trajectory of the global leader robot must satisfy the minimum curvature radius condition (21), which implies that the desired trajectory must be large enough compared to the desired separation between the mobile robots.
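A desired leader trajectory can be screened against a minimum curvature radius before it is used, as in the sketch below. The parametrization is an assumption: a lemniscate of Gerono, x = a sin t, y = a sin t cos t, is used only as an example of a figure-eight path and is not necessarily the lemniscate of Simulation 2. The required radius is passed in as a parameter rather than computed from condition (21), and the function names are hypothetical.

```python
import math


def path_curvature_radius(dx, dy, ddx, ddy):
    """Unsigned curvature radius of a planar parametric curve from its
    first and second derivatives. Returns math.inf at inflection points."""
    cross = dx * ddy - dy * ddx
    if cross == 0.0:
        return math.inf
    return (dx * dx + dy * dy) ** 1.5 / abs(cross)


def min_radius_gerono_lemniscate(a, samples=2000):
    """Smallest curvature radius along x = a sin t, y = a sin t cos t,
    found by dense sampling of the parameter t over one period."""
    best = math.inf
    for i in range(samples):
        t = 2.0 * math.pi * i / samples
        dx, dy = a * math.cos(t), a * math.cos(2.0 * t)
        ddx, ddy = -a * math.sin(t), -2.0 * a * math.sin(2.0 * t)
        best = min(best, path_curvature_radius(dx, dy, ddx, ddy))
    return best


def path_is_feasible(path_min_radius, required_min_radius):
    """True if the tightest turn of the path respects the required radius."""
    return path_min_radius >= required_min_radius
```

For this parametrization the tightest turn has a radius of roughly one fifth of the scale a, which illustrates why the desired trajectory must be large compared to the desired separation.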

Conclusion

In this article, a time-varying leader–follower formation control scheme for unicycle mobile robots to reduce the off-tracking effect, using only a perspective camera, is presented. The off-tracking effect is eliminated in the steady state for a time-invariant leader–follower formation, using only feedback from the monocular camera, and it is significantly reduced for a time-varying formation. Based on Lyapunov theory, it is demonstrated that the formation errors converge to zero even for a time-varying formation, considering a circular leader robot’s path. Also, the relative orientation dynamics is stable. To guarantee that the formation control problem can be achieved, both the visibility constraints and the minimum curvature radius condition are defined. Simulation results, tested on a difficult path, with a time-varying curvature radius, show the efficiency of the proposed time-varying formation control with reduction of the off-tracking effect under communication and visibility constraints.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by CONACyT, Mexico, through scholarship holder No. 553972, and also by the Project CB-2015-01, 254329.