Abstract

Unmanned ground vehicles are usually deployed in situations where it is too dangerous or not feasible to have an operator onboard. One challenge faced when such vehicles are teleoperated is maintaining high situation awareness, which is hampered by aspects such as the limitations of cameras, the characteristics of network transmission, and the lack of other sensory information, such as sounds and vibrations. Situation awareness refers to the understanding of the information, events, and actions that will impact the execution and the objectives of the tasks, both at present and in the near future of the operation of the vehicle. This work investigates how the simultaneous use of immersive telepresence and mixed reality could impact the situation awareness of the operator and the navigation performance. A user study was performed to compare our proposed approach with a traditional unmanned vehicle control station. Quantitative data obtained from the vehicle’s behavior and the situation awareness global assessment technique were used to analyze such impacts. Results provide evidence that our approach is relevant when the task requires a detailed observation of the surroundings, leading to higher situation awareness and navigation performance.

Introduction

Unmanned vehicles 1 are used in situations that require human action in an inaccessible or dangerous place, where there is a need to keep human beings distant. For example, a location can store toxic or explosive material, present a risk of collapse, be in a zone of urban violence, be a possible target for terrorists, or even be located in a war zone.

These vehicles have capabilities that allow the execution of activities that would be performed by a human being if he were at the remote location. Such activities include moving around the place, observing it, collecting data, or manipulating and collecting material. 2,3

One possible solution for the control of these vehicles is the automatic calculation of their trajectories, using, for example, mathematical linguistics and relational algebra, 4 probabilistic approximations like simulated annealing 5 or assisted control, as in the case of the work of Pivarčiová. 6

Another approach is the remote human control of the vehicle. In this case, the required separation between the human operator and the device makes it necessary for the latter to be controlled from a distance. Considering that the device is controlled in real time and continuously, the operator and the device need to be in constant communication. In this case, it is said that the device is teleoperated because the operation happens through the use of telecommunication features.

Among the different types of unmanned vehicles, the unmanned ground vehicle (UGV), 7,8 constrained to movements over a terrain, is the focus of this work. In general, this type of vehicle has an embedded camera, which captures images from the remote location and transmits these images in real time to its operator. At the same time, the operator can specify commands to be sent to the vehicle for it to move or execute other operations. This is done from a control station, 9,10 defined as a room, equipped with a joystick, keyboard, and mouse to control the vehicle, in which the operator can observe the remote location where the UGV is located through one or more monitors that reproduce the images captured by cameras installed in the vehicle.

The main challenges regarding unmanned vehicle technology refer to the design of new capabilities that allow the operator to perform their work in a correct, precise, and safe way. The location of the operator outside of the vehicle leads to lower situation awareness, 11 due to aspects such as the narrow field of view of cameras, delays in network transmission, and the lack of sensory information, such as sounds and vibrations. Endsley 11 defines situation awareness as the understanding of the information, events, and actions that will impact the execution and the objectives of the tasks, both at present and in the near future of the operation of the vehicle.

The present work proposes an approach that combines immersive telepresence with mixed reality in the design of a control station to manage the operation of a UGV. We investigate how the combination of these technologies impacts the situation awareness of the operator and the navigation efficiency. Through the combination of cameras on the UGV and the use of a head-worn display (HWD), the operator obtains an immersive visualization as if they were inside the vehicle. By displaying virtual objects over the video feed based on information from multiple sources, the operator could have a better understanding of the environment. We performed a user study with the objective of understanding the impacts of using such an approach when compared against a traditional control station.

This work contributes to the design of control stations for unmanned vehicles by providing an approach that implements the combination of immersive telepresence and mixed reality, and a user study that presents evidence of how this approach impacts situation awareness and navigation performance.

Teleoperation

The teleoperation of machines can be used in situations where it is desired to carry out work in a given place without exposing the human to the risks associated with the task to be performed, the place where the machine is, and the operation of the machine itself. For example, robots are used in tasks such as the search and rescue of victims of urban disasters 2,12 and the handling and cleaning of toxic material. 13

For Sheridan, 14 the main advantage that teleoperation offers is the safety that it provides for the human being. For Durlach and Mavor, 15 in turn, the “teleoperated system” is characterized as being a machine that takes the form of a teleoperator, containing sensors and actuators, which extends the motor and sensorial capacity of its operator, allowing them to manipulate and “feel” the environment in an alternative way. As far as producing sensations is concerned, the use of the image obtained from the remote location and the sensation of depth that can be generated during its visualization can be highlighted. Generating these sensations in the operator increases their perception of the remote location and their degree of immersion in that environment.

Another important aspect for teleoperation to be successful is the creation, in the operator, of some sense of presence at the remote location. Sheridan 16,17 and Martinez-Hernandez 18 call this sense of presence “telepresence” and define it as the sensation of being in a place different from the one in which one actually is. The place where the human operator is, in turn, is called a control station. For these authors, telepresence occurs when the operator receives sufficient information regarding the teleoperator (the machine) and the environment where it is, and this information is presented in a way that the operator feels physically present at the remote location.

A teleoperator is generally characterized as being a humanoid robot, a vehicle, or even equipment that has sensors and actuators that can be used to perform manipulations in the remote environment or in its own mobility, besides having a means of communication with its operator. In the work of Rakita et al., 19 a method to effectively teleoperate robots is introduced, enabling a robot arm to mimic human arm motions with ease.

The work of Maeda et al. 20 uses teleoperation as an interface for robot operation. It couples image processing and teleoperation to account for obstacles and occlusion from objects at the scene, which helps when planning a route for the robot. At the same time, Zhang et al. 21 use imitation with both a hand and a head tracker to naturally teleoperate robots by teaching.

Situational awareness

During a teleoperation involving a UGV, the operator needs to be aware of the situation of the vehicle at the remote location (where it is and what it is doing), the events taking place at that location, and the relationship between the situation of the vehicle and those events in the context of the execution of the tasks, in the present and the future, and how this impacts the objectives of the mission. 11 This is called situational awareness. A design oriented toward maximizing it seeks to deliver the right information to the operators at the right time and in an effective way, so that they can respond accordingly. 22

For Endsley, 11 there are three situational awareness levels: perception (level 1), comprehension (level 2), and projection (level 3). In level 1, the perception of the elements within a volume of space and time by the operator is fundamental. The lack of this ability to perceive can generate an incorrect understanding of the present situation, inducing serious errors of operation. Level 2 is related to how humans combine, interpret, and retain information. It concerns the integration of information from various sources and the determination of its relevance in the context of the objectives to be achieved. Finally, level 3 is linked to the ability to predict future events and the understanding of the related dynamics. This level is present in individuals who hold the highest level of understanding of a situation.

Mixed reality

Mixed reality is a continuum that is composed of physical reality at one extremity, virtual reality at the other, and augmented reality (AR) in the middle. 23 In the context of teleoperation, virtual reality can act as a tool that synthesizes virtual worlds with which the operator can interact. 24 In these environments, data from the real world alter the virtual environment, seeking to change the state of the human operator. In the opposite direction, operator actions in the virtual world can make the vehicle change the real world or change its own configuration in relation to it. 25

AR, in turn, can enrich the operator’s sensations of the remote environment by the superimposition of computer-generated content on images originating from the remote environment. 26 In these cases, the place where the information should be presented is an important aspect to be considered. In the case of information related to specific objects, such as the height of a wall, or the temperature of a boiler, the presentation of the information must be performed close to the object to which it refers. On the other hand, global information, such as wind speed or room temperature, can be provided in fixed positions of the visual display, always being available and updated, regardless of where the operator is looking.

Immersive telepresence

Related to the concept of telepresence, presented in the second section, immersive telepresence 27,14 is defined as the experience in which a human operator can perceive the remote environment as if they were there, disconnecting their senses from the physical location where they are. In this context, perception refers mainly to the senses of sight, hearing, and touch. The same authors also define virtual telepresence as the sensation of being in a remote location that does not actually exist, which is generated by graphic and sound processing.

In other words, immersive telepresence is defined as the experience in which a human operator wears an HWD, which controls a remote video camera system according to the human operator’s head movement, based on the data collected by a position tracking device installed on the HWD. Thus, the user of the HWD visualizes the images captured by the remote cameras as if he had his eyes in the same position in which the cameras are and looking in the same direction in which the cameras are pointing.

Proposed approach

We propose a control station for a UGV that aims to increase the operator’s situation awareness and navigation efficiency. The concept developed seeks to achieve these objectives through a strategy to compensate for lost information, in which remote location visualization is enriched by cognitive resources resulting from a fusion process of immersive telepresence and mixed reality techniques. This system consists of hardware and software for control of a remote vehicle that, when installed, transforms a conventional control station into an immersive control station.

While a conventional station provides basic features, such as a monitor and a joystick, used, respectively, for the visualization of the remote location and for commanding the movements of the UGV, the immersive station includes an HWD and a head tracker, which increase the field of regard, and displays virtual elements over the video feed that improve the understanding of the environment. In the opposite direction, the head movements of the operator are captured by the head tracker and define, in real time, the movement and orientation of the camera installed in the UGV.

Like Pretlove 28 and Mollet et al., 29 we believe that the interface for telerobotic and telepresence systems can be enhanced by immersive techniques, 25 as these increase the sense of presence. 30 In addition, through AR, the understanding of the remote environment can be improved, as the operator can see virtual information superimposed on the real images without having to shift his attention to a console or panel.

We designed our application in two components: an immersive control station based on known mixed reality techniques and technologies, and an unmanned vehicle simulator. This allowed us to evaluate our approach using simulated AR, which is the use of a virtual environment to simulate an AR system without problems such as tracking and the small fields of view of AR devices. 31 In this case, it allows us to focus on the control station rather than on the design of a UGV.

Control station

A UGV control station is composed of a computer, a data communication interface, and control and navigation software. The “C/C++” programming languages were used in conjunction with the OpenGL and GLUT libraries. On the immersive station, OpenGL is also used to implement the features directly over the video feed sent from the vehicle. GLUT provides support for the keyboard and joystick interface in both stations. Figure 1 shows the control station built in this work.

Physical components of the control station. The equipment includes an HWD, head-tracker, and joystick. HWD: head-worn display.

In addition to the possibility of moving the UGV, the control station user receives information from sensors installed in the vehicle. These include: the inclination of the vehicle on its longitudinal axis relative to the ground; the inclination of the vehicle on its transverse axis relative to the ground; the orientation of the vehicle about its vertical axis relative to the earth’s magnetic north; the inclination of the onboard camera in relation to the transverse axis of the vehicle; and the inclination of the onboard camera in relation to the vertical axis of the vehicle.

In this arrangement, immersive telepresence intends to help the operator to better and more intuitively observe the place where the vehicle is, and even the vehicle itself in that place, contextualizing the image obtained by the camera installed in the UGV. It seeks to achieve this goal by having the operator feel as if he or she is immersed, in real time, in the remote environment where the vehicle is, or even within the vehicle itself. This can result in a better understanding of the situation of the vehicle at the remote location, reducing the operator’s chance of causing collisions, for example.

However, the use of an HWD makes it impossible for the operator to interact with other controls and displays that may exist in the control station because their vision is restricted to the image presented in the closed display. For example, pushing buttons becomes an unreliable procedure because the operator is not able to see their hands or the buttons.

On the immersive station, AR is employed in the form of a visual resource presented on the image obtained from the remote location. The objective is to help the operator to keep the UGV navigating along a predefined trajectory, in the sense that the longer it remains on this trajectory, the lower the chance of exposing the vehicle to risky places or situations.

With this feature, the operator simultaneously observes the image coming from the remote vehicle (simulated real world) and virtual information, with the image of the virtual world superimposed on the simulated real-world image. Through AR, information is provided about the remote location where the UGV is located and the navigation to be performed. This information takes the form of georeferenced virtual objects that are presented in the HWD and appear to be part of the image captured by the camera, that is, of the location of the vehicle. These objects (Figure 2) can take two forms: a vertically oriented cone, called “pointer,” and a vertical plane, called “wall.” The pointer indicates a reference point in the terrain, a point that is part of a trajectory to be followed, or a point to be reached. The wall has the objective of delimiting a region.

Augmented reality content over video feed. (a) The pointers over the path to be followed are shown and (b) the walls showing areas that the UGV should not go are shown. UGV: unmanned ground vehicle.
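Because the pointers and walls are georeferenced, whether one of them appears in the HWD depends on where the camera is pointing. The following is a minimal sketch of that dependency, not the implementation used in this work: it assumes a flat 2D terrain, headings measured counterclockwise from the +x axis, and a hypothetical horizontal field of view of 60°. The function names and parameters are illustrative only.

```python
import math


def relative_bearing_deg(vehicle_xy, vehicle_heading_deg, camera_pan_deg, target_xy):
    """Signed angle (degrees) from the camera's optical axis to the target.

    Angles are counterclockwise from the +x axis, so a positive result
    means the target lies to the left of the optical axis.
    """
    dx = target_xy[0] - vehicle_xy[0]
    dy = target_xy[1] - vehicle_xy[1]
    bearing = math.degrees(math.atan2(dy, dx))
    camera_heading = vehicle_heading_deg + camera_pan_deg
    # Wrap the difference into the interval [-180, 180).
    return (bearing - camera_heading + 180.0) % 360.0 - 180.0


def is_in_view(rel_bearing_deg, horizontal_fov_deg=60.0):
    """True if the target falls inside the (assumed) horizontal field of view."""
    return abs(rel_bearing_deg) <= horizontal_fov_deg / 2.0


# A pointer straight ahead is visible; after panning the camera 90 degrees
# to the left, the same pointer leaves the view.
ahead = relative_bearing_deg((0.0, 0.0), 0.0, 0.0, (10.0, 0.0))
panned = relative_bearing_deg((0.0, 0.0), 0.0, 90.0, (10.0, 0.0))
print(is_in_view(ahead), is_in_view(panned))
```

Under these assumptions, an operator who looks far to one side while driving forward can lose sight of the pointers even though they are still present in the scene.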

Unmanned ground vehicle simulator

Connected to the station is a virtual environment consisting of a 3D scenario and a simulated UGV, such as a four-wheel vehicle (Figure 3).

Simulated unmanned ground vehicle. Camera is located on the top of the vehicle, initially pointing forward. In the back, beacons are used for one of the tasks.

This vehicle has been implemented using the OpenGL graphics library. It is able to move forward or backward, turn right and left, and move the camera 45° up or down (rotation relative to the transverse axis) and 90° to the right or left (rotation relative to the vertical axis). In the centered position, the camera points in the direction in which the vehicle moves when going forward, parallel to its longitudinal axis.

In the implementation of the simulator, the response time of the servomotor mechanical actuators employed in the UGV was taken into account for the purpose of realism, both to move the camera onboard and to define the direction of the front wheels. In this way, a command to change the direction of the front wheels is issued at a slower speed than the one used in the movement of the joystick itself. Likewise, the speed of movement of the embedded camera is not equal to the speed with which the operator moves his own head.
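The actuator lag described above can be sketched as a rate-limited update toward the commanded angle. This is an illustration under stated assumptions rather than the simulator's actual code: the ±90° pan range comes from the text, while the maximum rate of 60°/s, the time step, and the function names are hypothetical.

```python
def clamp(value, low, high):
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def step_actuator(current_deg, commanded_deg, max_rate_deg_s, dt_s, low_deg, high_deg):
    """Advance an actuator by one time step toward the commanded angle.

    The command is first clamped to the mechanical range, then the change
    applied in this step is limited to max_rate_deg_s * dt_s.
    """
    target = clamp(commanded_deg, low_deg, high_deg)
    max_step = max_rate_deg_s * dt_s
    delta = clamp(target - current_deg, -max_step, max_step)
    return current_deg + delta


# Camera pan: range of +/-90 degrees (from the text), assumed rate of 60 deg/s.
pan = 0.0
for _ in range(4):
    pan = step_actuator(pan, 120.0, 60.0, 0.5, -90.0, 90.0)
    print(pan)
```

With these values, a head turn beyond the mechanical limit is followed gradually and the camera settles at the 90° limit instead of jumping to the head orientation.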

Communication between the UGV simulator and the control station is performed using sockets. The images obtained from the virtual camera installed in the UGV are compressed using LibJPEG before being transmitted via TCP/IP.
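Since TCP delivers a byte stream without message boundaries, the receiver needs some way to tell where one compressed image ends and the next begins. The text does not describe the framing that was used; the sketch below assumes a simple 4-byte length prefix, which is one common choice, and uses a local socket pair and a placeholder payload in place of the real simulator, station, and JPEG data.

```python
import socket
import struct

# Assumed framing: a 4-byte big-endian payload length, followed by the payload.
HEADER = struct.Struct("!I")


def send_frame(sock, payload: bytes) -> None:
    """Send one length-prefixed frame."""
    sock.sendall(HEADER.pack(len(payload)) + payload)


def recv_exact(sock, n: int) -> bytes:
    """Read exactly n bytes, looping because recv may return fewer."""
    chunks = []
    remaining = n
    while remaining > 0:
        chunk = sock.recv(remaining)
        if not chunk:
            raise ConnectionError("socket closed before the frame was complete")
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def recv_frame(sock) -> bytes:
    """Receive one length-prefixed frame and return its payload."""
    (length,) = HEADER.unpack(recv_exact(sock, HEADER.size))
    return recv_exact(sock, length)


# Local demonstration with a connected socket pair and a placeholder payload.
sender, receiver = socket.socketpair()
send_frame(sender, b"placeholder for compressed image bytes")
print(recv_frame(receiver))
sender.close()
receiver.close()
```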

User study

We performed a user study with the objective of understanding the impact of applying concepts of mixed reality and immersive telepresence on a control station of an unmanned vehicle. This evaluation is important to provide evidence of whether or not this approach is suitable to be used in a real-world setting. The question that we aimed to answer was: How does the use of mixed reality and immersive telepresence influence the operation of a UGV in terms of situation awareness and navigation efficiency?

Experimental design

We conducted our study within-subjects, with the type of control station as the independent variable.

We recruited 11 users with age ranging from 18 to 45 years. Eight participants were graduate students and three were professionals. Nine participants had experienced VR before and had used an HWD prior to this test.

We compared two systems: a baseline station, which does not use an HWD and does not provide virtual artifacts over the video feed to support the user, and the proposed immersive control station. The ordering of the systems was counterbalanced to minimize any learning effects. For each system, we conducted a training session on its use and then presented the main task to the participant.

The following dependent variables were analyzed: total steering change (right/left), total steering greater than 10°, average speed, sum of distances traveled, average distance traveled, longest distance traveled, shortest distance traveled, average battery consumption, highest battery consumption, lowest battery consumption, overall total collisions, and overall total contamination.
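As an illustration of how two of these variables might be derived from a simulator log, the sketch below computes distance traveled and average speed from timestamped 2D positions. The log format (a list of positions in meters and a matching list of timestamps in seconds) and the function names are assumptions; the text does not specify how the simulator recorded its data.

```python
import math


def path_length(points):
    """Sum of straight-line distances between consecutive logged positions."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


def average_speed(points, timestamps):
    """Distance traveled divided by the elapsed time of the log."""
    elapsed = timestamps[-1] - timestamps[0]
    if elapsed <= 0:
        raise ValueError("the log must span a positive amount of time")
    return path_length(points) / elapsed


# Hypothetical log: three positions (meters) sampled at the given times (seconds).
positions = [(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)]
times = [0.0, 5.0, 11.0]
print(path_length(positions), average_speed(positions, times))
```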

The situation awareness global assessment technique (SAGAT) 32 was also used. In this technique, while a given piloting task is taking place, the system halts the simulation at random moments and asks the pilot a series of questions. By comparing the pilot’s answers with the quantitative data gathered, we obtain an objective measure of the pilot’s situation awareness. The questions asked to the participant are shown in Figure 4.

SAGAT questionnaire. SAGAT: situation awareness global assessment technique.

These questions were created based on both the SAGAT methodology and the works of Steinfeld 10 and Yanco and Drury 33 that deal with human–robot interaction.
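The random halts used by SAGAT can be sketched as drawing freeze times over the duration of a trial. The sketch below is only an illustration: the number of halts, the minimum gap between them, and the function name are assumptions, and the 3600 s duration simply mirrors the 60-min limit of the simulation.

```python
import random


def schedule_freezes(duration_s, count, min_gap_s, rng):
    """Draw `count` sorted freeze times in [0, duration_s].

    Consecutive freezes are at least min_gap_s apart: offsets are sampled
    in a shortened interval and then shifted by multiples of the gap.
    """
    slack = duration_s - (count - 1) * min_gap_s
    if count < 1 or slack < 0:
        raise ValueError("the requested freezes do not fit in the trial duration")
    offsets = sorted(rng.uniform(0.0, slack) for _ in range(count))
    return [offset + i * min_gap_s for i, offset in enumerate(offsets)]


# Five halts in a 60-min trial, at least 2 min apart (assumed values).
freeze_times = schedule_freezes(3600.0, 5, 120.0, random.Random(0))
print([round(t) for t in freeze_times])
```

Passing a seeded `random.Random` instance makes a schedule reproducible, which can be convenient when a trial needs to be replayed.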

Hypothesis

From our research question, we proposed two hypotheses.

We hypothesize that, by providing the operator with an immersive visualization that highlights some of the characteristics of the environment, we can raise the situation awareness. While it is mostly reduced due to the lack of sounds, vibrations, or other sensory information, we believe that enhancing the amount of visual information could compensate for that loss, taking advantage of the predominance of visual information over other human senses. 24

We hypothesize that a higher level of immersion and data provided using mixed reality technologies will also lead to a more efficient performance of the operator while navigating. This means that the environment will be better understood by the operator, who will be able to make the correct choices more often.

Procedure

The participant arrived in the area where the study took place, a private room at the university, used exclusively for this study, and the investigator greeted them. They signed a consent form, which explained their rights and the study. Upon completion, the investigator presented the equipment to them. The participant used a VTV i-Glasses HWD, a CyberTrack II CT-4.0 Sourceless head tracker, and a Genius MaxFighter F-23U joystick.

A training session was performed before the main task. In the 5-min session, the investigator invited the participant to command the remote vehicle to understand how to use the equipment and how the vehicle behaved. During this session, the investigator also demonstrated how the SAGAT technique would occur during the main task, with the display going blank and the investigator asking sample questions to the participant. The training session lasted until the participant was comfortable with the use of the equipment.

Once the participant had understood how to operate the vehicle, the investigator instructed them on the main task and handed them a map of the scenario, as seen in Figure 5. The map provided the participant with an idea of the physical structure of the remote location. The starting point, prohibited zones, obstacles, and arrival point were present on it. This map was fixed to the table where the joystick was located and could be consulted by the participant at any point of the task.

Map given to participants. Starting point located at the center; cluster of barrels on the bottom right; and arrival point on top left.

After explaining all the details of the test, the participant was invited to perform the teleoperation mission. In the case of the traditional station test, the participant wielded the joystick and observed the teleoperation through a monitor placed in front of them. In the immersive station case, the participant wore the helmet with both the HWD and the head-tracker, gripped the joystick, and observed the teleoperation through the HWD. In the latter, the participant was instructed to follow the AR cones and avoid the regions indicated by the virtual walls.

Environment and task

The environment consists of a remote location and a type of mission that can be accomplished by employing a UGV. The two main aspects taken into account in the preparation of the mission to be carried out were the need to have mechanisms to measure situation awareness in its three levels and the measurement of navigation efficiency. For this, it was considered that the simulation should generate situations in which the operator could: navigate at the remote location; observe the remote location; understand what is happening at the remote location; and predict what happens next.

Therefore, we designed an environment that consisted of the locations shown in Figure 5. In this environment, the participant had to perform five consecutive tasks:

Navigate and find a cluster of barrels;

Find and count the leaks (shown in Figure 6);

Navigate and find the beacons;

Contour the beacons (shown in Figure 6);

Find the arrival point.

Obstacles that were used on tasks. (a) User had to contour the beacons and (b) count the leaks on the barrels.

The operator of the UGV should not collide the vehicle with the obstacles and should take it to the point of arrival, a place where the vehicle can be picked up. To measure situation awareness at its highest level (level 3, predicting future events), two leaks may end up blocking the exit of a closed region formed by the barrels; if the operator does not notice this, they will not be able to leave this closed region without contaminating the vehicle, as can be seen in Figure 7. The simulation has a time limit of 60 min, which is related to the battery capacity set for the virtual UGV.

Toxic leak. Operator will not be able to leave the region without contaminating the vehicle if they do not notice the leak spreading.

Results and discussion

We present our results and discussion based on our hypotheses. We first address the effect of using the immersive system on navigation efficiency, and then its effect on the situation awareness of the participant. The data consist of quantitative measurements and questionnaires, as explained in the “Experimental design” section.

Navigation efficiency

The evaluation of navigation efficiency was based on the data generated by the simulator. In this evaluation, we analyzed the graphs generated from the data, all of the variables that described the performance of the vehicle and the operator, and the trajectories described by the remote vehicle in the accomplished missions.

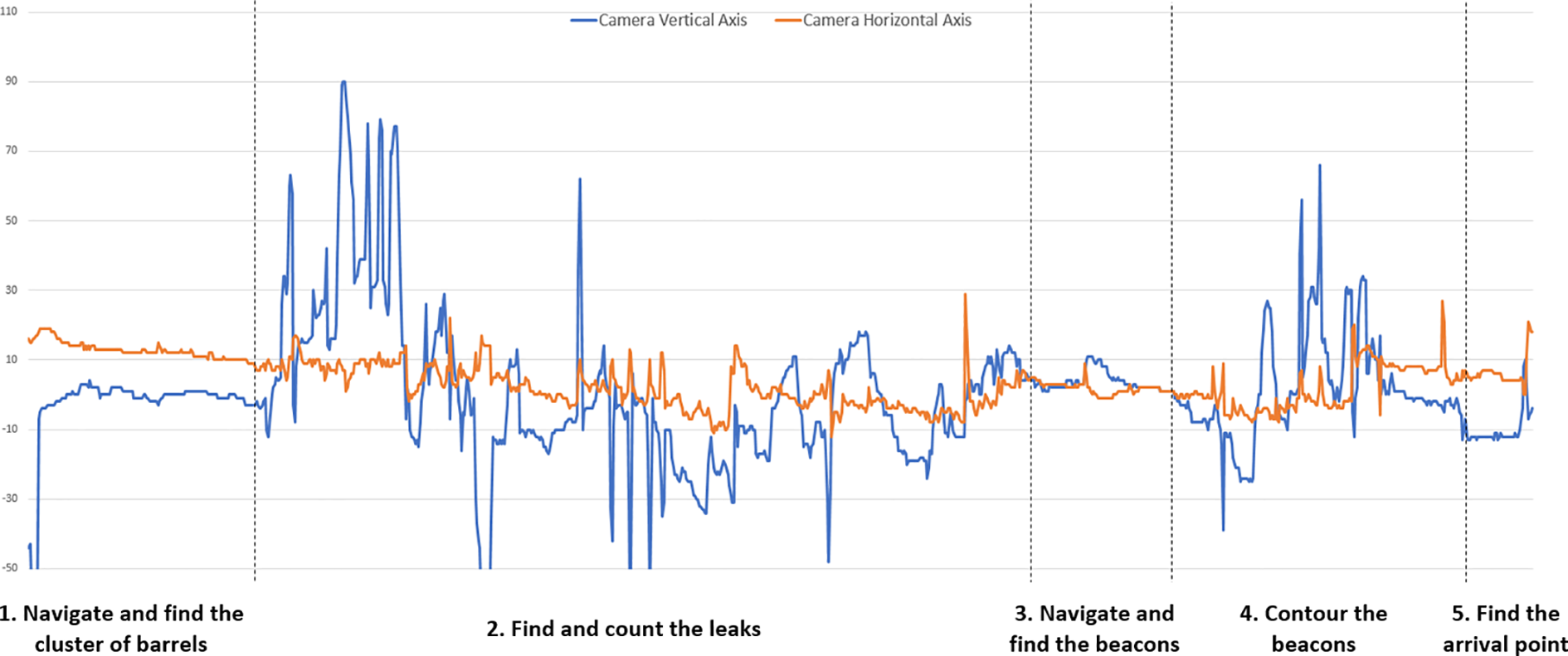

As a result of these analyses, behavior patterns and performance trends were identified. A behavioral pattern related to the employment of the immersive station was identified that explained the relationship between the tasks that had to be performed and the use of both the camera on the UGV and the joystick. The intensive use of these resources was evidenced in two specific moments of the mission: the search for leaks and the contouring of beacons.

This intensive use can be observed in the graph in Figure 8, which presents the data of one user at the various moments of the mission. In it, the greatest use of the camera is evidenced by the largest variation in its rotation angles during the tasks of finding and counting the leaks and contouring the beacons. This indicates that a user of the traditional station will have greater difficulty performing these tasks, since they will be obliged to rotate the whole vehicle instead of the camera.

Changes in rotation angles of the camera during the five tasks in the immersive station (the dotted lines divide each task).

On the other hand, in tasks in which navigation along a planned path predominates (navigate and find the cluster of barrels, navigate and find the beacons, and find the arrival point), one can perceive less movement of the onboard camera. This occurs because the operator is more interested in following a certain path than in locating some object or navigating more precisely due to obstacles in the terrain. This indicates that in missions in which navigation of the “go through a planned path” type predominates, using only a fixed camera attached to the vehicle, as in the traditional station, could allow the mission to be carried out satisfactorily.

With regard to the trajectories described by the remote vehicle, taking Figure 9 as an example, we can see that the trajectories tend to be more concentrated when the vehicle is operated from the immersive station. This can be an effect of the use of AR resources: in this case, the cones indicating the path to be covered. If navigational accuracy is one of the safety items in the mission, this greater concentration of trajectories may indicate greater safety of operation, provided that the operator of the remote vehicle obeys the virtual element indicators.

Comparison between the trajectories using the immersive and the conventional stations. Immersive station shows a less spread-out result. (a) Immersive station and (b) traditional station.
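The concentration of trajectories was assessed visually in this work. One possible way to quantify it, offered here only as a sketch and not as the analysis that was performed, is the mean distance between the logged vehicle positions and the planned path, taking the path as a polyline through the pointer positions. The function names and sample coordinates below are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Shortest distance from point p to the line segment from a to b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    abx, aby = bx - ax, by - ay
    seg_len_sq = abx * abx + aby * aby
    if seg_len_sq == 0.0:
        # Degenerate segment: a and b coincide.
        return math.hypot(px - ax, py - ay)
    # Projection parameter of p onto the segment, clamped to [0, 1].
    t = ((px - ax) * abx + (py - ay) * aby) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * abx), py - (ay + t * aby))


def mean_path_deviation(trajectory, waypoints):
    """Mean distance from each logged position to the nearest path segment."""
    if not trajectory or len(waypoints) < 2:
        raise ValueError("need at least one position and two waypoints")
    segments = list(zip(waypoints, waypoints[1:]))
    deviations = [
        min(point_segment_distance(p, a, b) for a, b in segments)
        for p in trajectory
    ]
    return sum(deviations) / len(deviations)


# Hypothetical planned path and logged positions (arbitrary units).
planned = [(0.0, 0.0), (10.0, 0.0)]
logged = [(0.0, 1.0), (5.0, -1.0), (10.0, 3.0)]
print(mean_path_deviation(logged, planned))
```

A lower value would correspond to trajectories that stay closer to the pointers, which is the pattern seen for the immersive station in Figure 9.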

There are also other aspects that differentiate the two sets of data. The first of them is that invasions of prohibited areas occurred only with the traditional station. This is another indication that the immersive station can increase teleoperation safety. The second aspect is related to the case of two participants who got lost using the immersive apparatus, even with mixed reality resources, a fact that did not occur using the traditional station. One possible cause of this situation is the combination of two factors. The first is the inherent difficulty of navigating with the UGV in one direction while observing the remote location in another direction, a situation that may confuse the operator. The second factor is that, depending on the direction from which the operator is observing the remote location, the mixed reality resources may not be displayed in the HWD, causing the operator to forget the instruction to follow the cones and inducing a sensation of disorientation when they also do not know what task to perform. One possible solution would be for the operator to be constantly reminded that mixed reality features are available. Periodic presentation of mission data, such as a map, a list of tasks, and tasks by region, could also help.

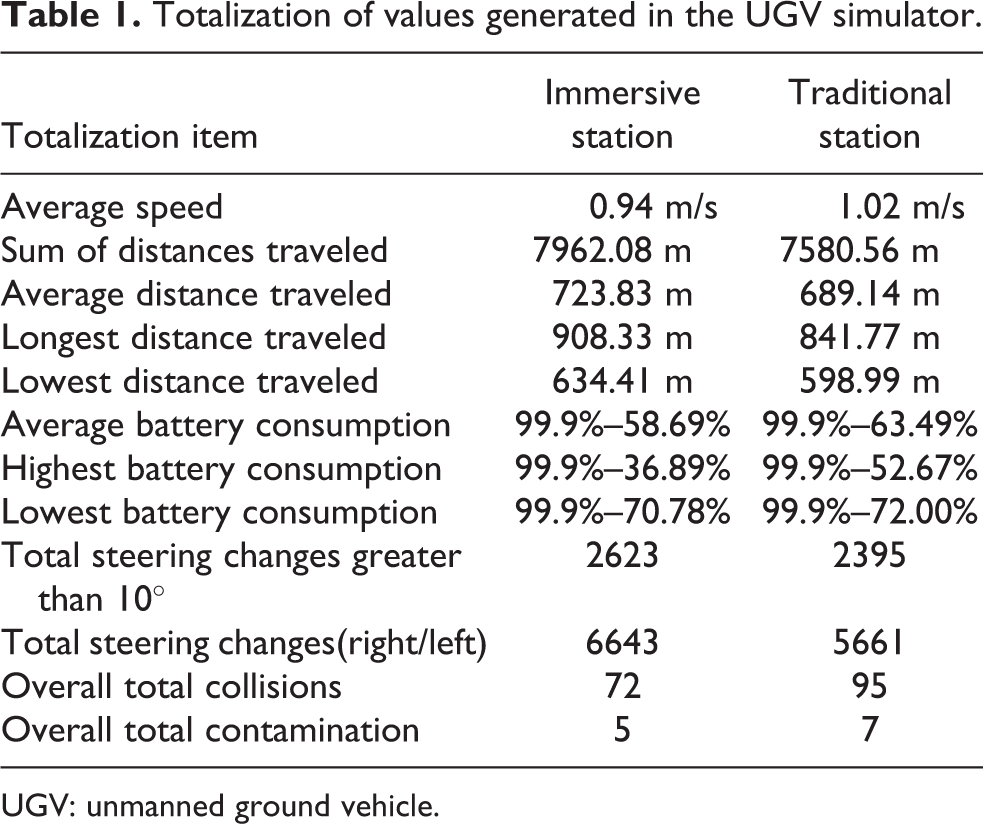

Four participants using the immersive station solved the leak-search task by driving in circles around the cluster of barrels (Figure 10), which required constant head movement, something that is simpler when using an HWD. This circular movement continued until the participant was certain about how many leaks there were. Thus, these participants avoided driving the vehicle in among the barrels, which could increase the chances of collision and contamination. In any case, they were able to count the number of leaks with a functionality that only the immersive station provided, which indicates that it could perform better in search tasks. Finally, the totals presented in Table 1 show that the traditional station tends to present better overall performance when its use is related to navigation. However, when what is being considered is the performance of maneuvers during the execution of specific tasks, the immersive apparatus tends to be of greater utility. This division of advantages between them is reinforced by the fact that the traditional station performed better in all aspects related to navigation efficiency.

Four participants solved the problem by navigating in circles. On the top left of each map, the participant circles the cluster of barrels to avoid leaks. (a) Participant 1, (b) participant 2, (c) participant 3, and (d) participant 4.

Totalization of values generated in the UGV simulator.

UGV: unmanned ground vehicle.

Situational awareness

During the trials, the number of SAGAT questionnaire applications was randomly generated and therefore differed between trials. The questionnaire data were filtered according to the applicability of each question to the simulation situation in which it was asked.
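As an illustration of how a random number of questionnaire applications could be scheduled within a trial, the following is a minimal sketch and not the procedure used in this study. The function name, the bounds on the number of freezes, and the minimum gap between freezes are all assumptions introduced for the example.

```python
import random


def schedule_sagat_freezes(duration_s, rng, min_freezes=2, max_freezes=5,
                           min_gap_s=30.0):
    """Return sorted freeze times (seconds) for one trial.

    All parameters are hypothetical: the paper only states that the
    number of questionnaire applications was randomly generated.
    """
    count = rng.randint(min_freezes, max_freezes)
    # Reserve one gap before each freeze and one after the last freeze.
    usable = duration_s - min_gap_s * (count + 1)
    if usable < 0:
        raise ValueError("trial too short for the requested freezes and gap")
    # Draw offsets in the remaining time, then shift each one so that
    # consecutive freezes are at least min_gap_s apart.
    offsets = sorted(rng.uniform(0.0, usable) for _ in range(count))
    return [offset + min_gap_s * (i + 1) for i, offset in enumerate(offsets)]


if __name__ == "__main__":
    # A seeded generator makes the schedule reproducible across runs.
    print(schedule_sagat_freezes(600.0, random.Random(0)))
```

Passing an explicit `random.Random` instance keeps each trial's schedule reproducible, which may help when questionnaire data are later matched to the simulation situation at each freeze.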

Although the situational awareness results are similar for both stations, as can be seen in Table 2, the traditional station obtained more hits when the questions are related to navigation and guidance, as shown by the results for questions 5, 6, 7, and 8. However, when the questions are related to observation and the execution of searches, the greatest number of hits falls to the immersive station, as shown by the results for questions 9, 10, 11, and 12. Regarding the use of the SAGAT method, bodily expressions of tiredness and discontent were observed in the participants on several of the occasions when the teleoperation mission was interrupted to apply the questionnaire. One probable cause is that the questionnaire demands a repetitive reasoning effort from the participant: with each interruption, he had to strive to remember the situation of the vehicle and of the mission a moment before the teleoperation was interrupted.

Totalization of the values related to the situation awareness.

This method was also found to cause great variation in the total test time across participants, since each participant can take as long as necessary to answer the questionnaire each time the simulation is stopped and the questionnaire is applied.
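The comparison of hits by question group described in this section can be sketched as a simple tally. This is only an illustrative sketch: the grouping of questions 5 to 8 and 9 to 12 follows the text, but the record layout, the function name, and the sample answers are assumptions, and the sample answers are not the study's data.

```python
from collections import defaultdict

# Question groups as described in the text.
QUESTION_GROUPS = {
    "navigation": {5, 6, 7, 8},      # navigation and guidance
    "observation": {9, 10, 11, 12},  # observation and searches
}


def tally_hits(answers):
    """Count correct answers per (station, question group).

    `answers` is an iterable of (station, question_number, is_correct)
    tuples, a hypothetical layout. Questions outside both groups are
    ignored.
    """
    hits = defaultdict(int)
    for station, question, is_correct in answers:
        for group, numbers in QUESTION_GROUPS.items():
            if question in numbers and is_correct:
                hits[(station, group)] += 1
    return dict(hits)


# Made-up answers, only to show the call.
example_answers = [
    ("traditional", 5, True),
    ("traditional", 9, False),
    ("immersive", 9, True),
    ("immersive", 12, True),
    ("immersive", 6, False),
]

if __name__ == "__main__":
    print(tally_hits(example_answers))
```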

Conclusion and future work

This work addressed the teleoperation of unmanned vehicles. A control station configuration characterized by the simultaneous use of mixed reality and immersive telepresence was proposed and evaluated.

In real applications where the vehicle position and a video stream can both be obtained in real time and the user can wear an AR helmet, the evaluation strategy of this work can be used to compare efficiency with and without AR, as well as the efficiency of a helmet with a tracker in observation operations.

The strategy for evaluating the performance of this control station was to compare it with a baseline that has a so-called traditional configuration. In this comparison, we analyzed the efficiency of the navigation performed with the vehicle and the level of situational awareness that each type of station generates in the operator.

For the user study, a UGV simulator was built. It interacts in real time with the control station and generates images similar to those the operator would see at the control station if he were using a real vehicle capturing images from a real remote location. With this simulator, it was possible to create a teleoperation mission in which the operator may experience difficulties similar to those of a real deployment.

Navigation efficiency was measured by analyzing the data generated by the simulator, which continuously recorded the values of variables that describe the operator’s actions and the resulting behavior of the vehicle. The situational awareness of the operator was measured with the SAGAT method, which interrupts the simulation at random intervals and collects from the operator data that seek to evaluate the instantaneous and near-future mental model that the operator has of the overall teleoperation situation.
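To make the idea of deriving navigation measures from continuously recorded variables concrete, the sketch below computes two such measures from a logged trajectory. It is a minimal example under stated assumptions and not the simulator's actual implementation: the `(t, x, y)` sample layout, the function names, and the rectangular shape of a prohibited area are all hypothetical.

```python
import math


def path_length(samples):
    """Sum of straight-line distances between consecutive (t, x, y) samples."""
    return sum(
        math.hypot(x1 - x0, y1 - y0)
        for (_, x0, y0), (_, x1, y1) in zip(samples, samples[1:])
    )


def count_zone_entries(samples, zone):
    """Count transitions from outside to inside a rectangular zone.

    `zone` is (x_min, y_min, x_max, y_max), an assumed representation of
    a prohibited area. A trajectory that starts inside the zone counts
    as one entry.
    """
    x_min, y_min, x_max, y_max = zone
    entries = 0
    was_inside = False
    for _, x, y in samples:
        inside = x_min <= x <= x_max and y_min <= y <= y_max
        if inside and not was_inside:
            entries += 1
        was_inside = inside
    return entries


# Made-up trajectory, only to show the calls.
example_log = [(0, 0.0, 0.0), (1, 3.0, 4.0), (2, 3.0, 10.0),
               (3, 3.0, 4.0), (4, 0.0, 0.0)]

if __name__ == "__main__":
    print(path_length(example_log))
    print(count_zone_entries(example_log, (2.0, 8.0, 4.0, 12.0)))
```

Counting outside-to-inside transitions rather than samples inside the zone keeps the result independent of the logging rate, so a single long incursion is reported as one invasion.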

In a real setting, the same software infrastructure can be used to assess the performance of the vehicle’s controller.

The analysis of the collected data showed that the traditional station can perform well in navigation tasks. The immersive station proved more advantageous at moments of teleoperation in which a greater capacity to observe the remote site is required, during the execution of tasks other than navigation. This work can serve as a basis for research related to teleoperation, such as the evaluation of different configurations of control stations and vehicles, simulators of unmanned vehicles, and data communication. On the application side, this work can be used in the construction of land inspection environments, such as industrial plants or areas of difficult access affected by conflicts or natural disasters.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is partially funded by the Brazilian Coordination for the Improvement of Higher Education Personnel (CAPES).