Abstract

A lack of Situation Awareness in maritime navigation has been shown to be a recurring contributory cause of vessel collisions at sea. Vessel navigation and operational tasks have progressively moved towards screen-based interactions and monitoring, leading to increased navigator Head-Down Time and decreased navigator performance. Augmented Reality enables new and different opportunities to display operational data, which may reduce Head-Down Time and better support navigator Situation Awareness. However, research and development of Augmented Reality solutions in maritime operations are still in the preliminary stages. This paper presents a multidisciplinary approach towards developing and testing Augmented Reality solutions for maritime applications. We describe a methodology for investigating the effects of Augmented Reality on human performance during maritime navigation scenarios and how this data feeds back into an iterative design and development process using a user-centred approach for maritime digitalization and new technology initiatives.

Introduction

Maritime operations are complex safety-critical activities carried out by highly skilled workers located both onshore and offshore. The responsibility and organization of seafarer positions and shipboard tasks are divided across differing functional areas and levels of responsibility in a structured framework (IMO, 2011). Overall, maritime navigators are responsible for the safety of their vessel, the crew and the environment (GOC, 2021). Maritime navigation is highly codified, with navigation officer training and competency requirements regulated and standardized through international codes. This includes knowledge of the standardized “rules of the road”, not unlike automobile driving regulations, for how to operate a vessel in varying environments and traffic situations (IMO, 1977; 2011).

A good navigator, like a good automobile driver, must constantly monitor their surrounding environment and its elements to make informed decisions that ensure safety and operational efficiency and ultimately avoid unwanted events, such as collision or grounding. Contemporary maritime navigation tasks are aided by differing electronic and automated systems, such as RADAR, autopilot, GPS and electronic charts. However, the practice of navigation still requires fundamental knowledge and skills that were relevant to navigators decades and centuries ago.

Highly sophisticated systems allow for increasingly automated navigation, leading to more passive monitoring tasks. Navigation training and best practices continue to stress the importance of human navigators having adequate visual coverage of their external environment (Hareide, 2018; IMO, 1977). Simply put, navigators must remember to look out the window. Visual coverage and observation of the area surrounding the ship continue to be a critical aspect of safe navigation in order to make a full appraisal of the operational situation and risk of collision (van Dokkum, 2011).

Despite vast improvements made in maritime safety over the past decades, mainly attributed to advances in technology, regulation and training, accidents at sea continue to occur (Allianz, 2012). Between 2012 and 2021, 892 vessel losses were registered globally (Allianz, 2022). In a review of maritime accidents between 2002 and 2016, Sampson et al. (2018) found that approximately 25% of the collisions analysed could be attributed to “inadequate lookout”. Investigations of vessel collisions at sea have found that contributory root causes involved a lack of navigator Situation Awareness (SA) (de Maya & Kurt, 2020; Jaram et al., 2021), including cases where navigators failed to properly perceive the situation in the time leading up to the collision (Sandhåland, 2015).

As more systems became installed within the bridge work environment to improve operational efficiency and functionality, the Head-Down Time (HDT) of navigators looking down at consoles and screens began to increase. Correspondingly, Head-Up Time, where navigators are looking directly out at their surrounding environment, reduced. Hareide & Ostnes (2017) reported that maritime navigators can spend more than 50% of their time looking down at the instruments within a bridge during active navigation tasks. While occasionally looking down at differing instruments, such as a RADAR or electronic charts, can help build SA, too much HDT during operational activities is detrimental (Liu & Wen, 2004).

Inadequate work environment design and poor equipment usability that lacks a user-centred approach have been shown to be contributory root causes to accidents at sea (Nordby et al., 2019; USFFC, 2017). Increased time spent monitoring and interacting with systems located on physical consoles and screens rather than what is happening outside the vessel has been shown to distract navigators and lead to collisions and deaths at sea (Mallam et al., 2020). Thus, investigating differing solutions to combat excessive HDT may facilitate improved SA, operator performance and vessel safety in maritime operations.

Scope of Work

As part of the exploration to reduce HDT and improve navigator SA, the application of Augmented Reality (AR) and Head-Up Displays in maritime navigation is an under-explored area we are currently investigating. AR can be defined as allowing “the user to see the real world, with virtual objects superimposed upon or composited with the real world” (Azuma, 1997). AR can be presented across a variety of mediums, for example, head-mounted wearables, integration into handheld mobile phones and tablets, or traditional screen-based overlays (e.g. CCTV, live video feeds, static images, etc.).

AR development and application has shown potential across differing domains, including transportation, medicine, military, manufacturing, education and general consumer electronic and entertainment products (Woodward & Ruiz, 2022). Hareide & Porathe (2019) state that one of the potential benefits of AR in maritime navigation specifically is the shortening of HDT due to opportunities to place and access information in differing locations besides the traditional screen-based systems. However, there are still gaps in the understanding and deployment of AR which is a relatively novel technology, particularly in the maritime domain.

Several studies have shown that AR increases users’ workload by providing too much information (Woodward & Ruiz, 2022). Kim & Gabbard (2022) found that overlaying AR data can impair a user’s Field of View (FOV). A clear FOV is essential for transportation sectors that rely on operators’ visual coverage and assessment of their surroundings in order to make decisions. Furthermore, Kim & Gabbard (2022) report that AR overlays lead to higher levels of distraction in automobile drivers.

In the maritime domain, AR solutions currently have little real-world uptake and are still in the early stages of research and development (Laera et al., 2021). There are no standards or best practices for adopting AR for maritime use, whether in design, training or operations (Nordby et al., 2020). Different AR concepts for maritime applications remain untested, and largely in conceptual phases in order to demonstrate and visualize the future potential of the technology (Gernez et al., 2020). Additionally, there is a lack of human performance testing of maritime AR concepts, both at the individual and team levels. Limited empirical data exists on the effects of AR on operator SA (Kim & Gabbard, 2022), as well as the known and unknown risks and benefits of utilizing AR across differing applications.

This paper presents a multidisciplinary approach towards developing and testing Augmented Reality solutions for maritime navigation applications. We describe a methodology for investigating the effects of an AR Head-Up Display of maritime navigation information on human performance during differing simulated maritime navigation scenarios. We further describe how this human performance data feeds back into the design and development process in a user-centred approach for maritime digitalization and new technology initiatives.

AR Solutions for Maritime Navigation

A fundamental advantage of using AR in maritime navigation involves the ability of an operator on a vessel’s bridge to visually assess their surroundings whilst still being supported by data presented via AR which would typically only be found in traditional screen-based systems located on physical consoles (Grabowski et al., 2018). This theoretically reduces the amount of time required for a navigator to extract relevant information from a traditional screen-based system while still maintaining good SA, thus decreasing HDT and increasing time looking outside at their surrounding environment (Wilkins, 2018).

Holder & Pecota (2011) found that a Head-Up Display within a vessel’s bridge reduced perceived navigator Head-Down Time. The navigators in this study also expressed a preference for the Head-Up Display solution over the traditional system but noted the potential for distraction. Rowen et al. (2019) collected empirical data supporting this perspective, finding that operators using head-mounted AR devices in maritime navigation tasks had lower responsiveness. AR has been found to increase navigator SA and performance, but also to increase their workload (Hong et al., 2015; Rowen et al., 2019).

Several examples of the application of AR solutions in the maritime domain currently exist, with information incorporated for maritime navigation typically limited to basic navigation data, including heading, speed, compass, route waypoints and danger area identification (Laera et al., 2021; Takenaka et al., 2019). Specialized applications for maritime navigation AR have also been developed, including for ice navigation (Frydenberg et al., 2021; Okazaki et al., 2017), high-speed vessels (Hareide & Porathe, 2019), and recreational boaters and pleasure craft operations (Porathe & Ekskog, 2018). The majority of the focus of maritime navigation AR applications is on data and visualization that relate to general wayfinding and anti-collision. However, Laera et al. (2021) note that most of the maritime AR solutions they reviewed were untested and the technology readiness levels for real-world deployment of the solutions were currently low.

New Technology Design & Integration – Our Approach

A review of the current body of literature reveals that one of the barriers to unlocking AR’s full potential is the lack of integration with existing systems onboard vessels, including the lack of a common design framework (Gernez et al., 2020). The majority of vessels are designed, tendered and built within a multi-vendor framework, meaning that the differing systems found on a bridge and throughout a vessel are supplied by different vendors that have varying design approaches and solutions. This can introduce conflicts in design across divergent operational systems, including inconsistencies across graphical user interfaces, unintuitive usability, and poor information access and flow, all of which can ultimately have negative effects on end-user performance (Nordby et al., 2019). Laera et al. (2021) comment that the relatively small number of maritime AR solutions currently realized is most likely due to the difficulty of integrating new technologies into complex legacy systems.

New technology integration cannot be viewed as adding new solutions “on top” of existing systems. Rather, a more holistic approach to the process of system design, organization and integration is necessary to manage and mitigate known and unknown effects of evolving and modernizing work systems. The move towards digitalization and new technologies in maritime systems has had an overwhelmingly positive effect on the domain; however, if implemented improperly, it can have negative effects on navigators and system safety (Mallam et al., 2020).

Grabowski (2015) presents a conceptual model for investigating wearable head-mounted AR, noting the need to take a multidisciplinary approach in evaluating the technology within the context of task-based, simulation-based and shipboard environments. Nordby et al. (2019) created a common, open framework for maritime equipment graphical user interfaces to fill a gap in maritime equipment design guidance. As an extension of this, our work is exploring how this design framework could be extended to the new medium of screen-based and head-mounted AR, for which there are currently no maritime standards or design guidelines (Nordby et al., 2020).

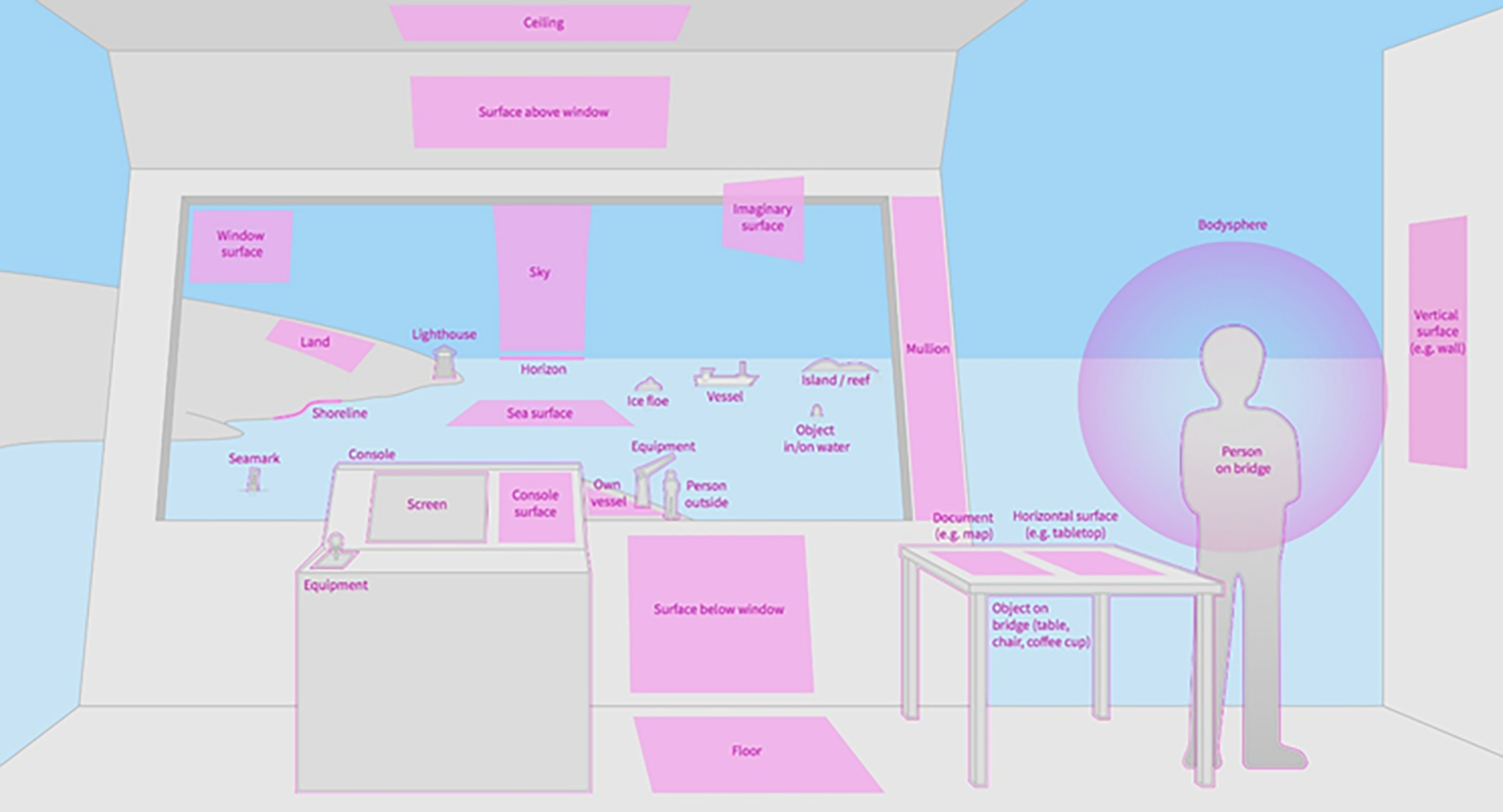

The design of an AR interface is crucial to ensure that its benefits are realised and should be based on a user-centred approach to avoid common risks of AR implementation, such as cognitive overload, distraction and visually blocking elements in the real world. AR creates new opportunities to place information outside of the traditional console and screen restrictions. Each function can be represented in different display formats to maximise the specific strength of a particular format for differing data and visualization across varying types of work tasks and operations (see Figure 1).

Figure 1. Mapping differing areas (both internal and external to the bridge/ship) where digital information can be placed using AR.

Our development process follows user-centred design guidelines (ISO, 2019) and is organized around case-based scenario development. This process is designed to capture relevant use cases and define areas where AR could add value to differing aspects of maritime operations. First, differing use cases are generated through SME inputs and stakeholder interests for potential solutions to known issues in specific operations, for example ice-breaking (Frydenberg et al., 2021) and Dynamic Positioning (Carho et al., 2022). From this initial data collection and scoping work, fundamental knowledge relating to user and operational needs is captured and organized (Mallam et al., 2021). AR demonstrators are then developed to showcase and explore AR implementation across differing work tasks. These AR demonstrators are used as a platform to test and refine the designs through a multi-method user-centred process to provide inputs for further iterative developments.

Additionally, this work contributes to the development of user interface architecture and design guidelines to support future AR development for both a minimum level of quality, as well as a level of standardization across AR applications and navigation systems.

Head-Down Time, Head-Down Occurrences & Situation Awareness

Our initial approach for empirical human performance testing focuses on the basics of what constitutes best practices for navigation: ensuring that navigators are looking out the window at their surrounding environment (IMO, 1977). Thus, in order to have a fundamental understanding of how AR impacts maritime navigation we first investigate the effects of AR on navigator:

(i) Head-Down Time: total time spent looking down at navigation instruments in relation to time spent looking out of the bridge window

(ii) Head-Down Occurrences: number of times a navigator moves their eyesight between the desktop console screens (i.e. navigation instruments) and the bridge window

(iii) Navigator Situation Awareness of a specific traffic scenario

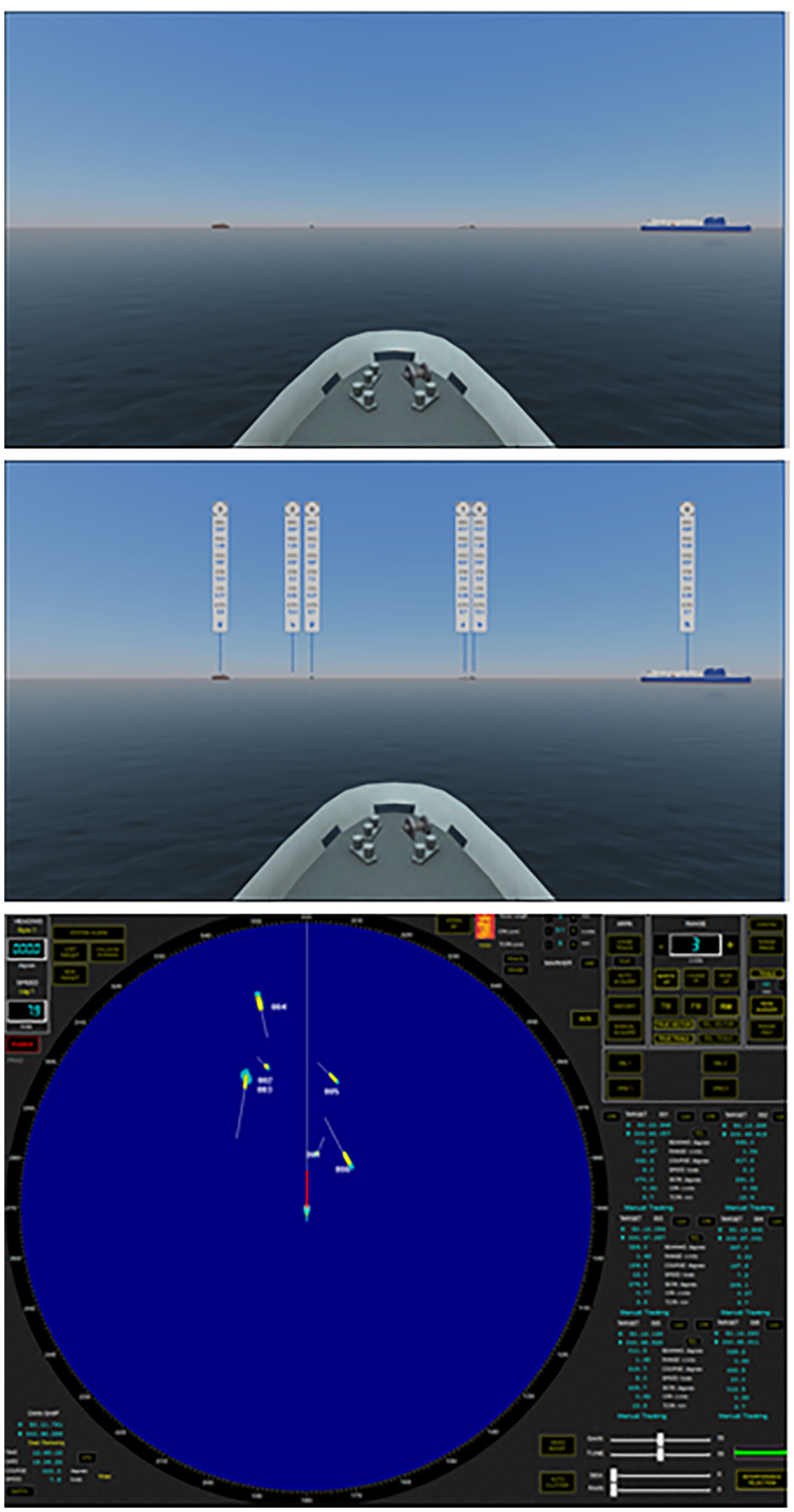

In order to measure these variables, twelve static navigation scenarios were created in which trained navigators are asked to perceive the situation and report back information they gather through a RADAR plot and a corresponding own-vessel bow view presented on a 3D maritime training simulator. This setup was intended to recreate part of the bridge working environment used for active navigating, which includes an external visual (i.e. looking out of the bridge window at the surrounding external environment of one’s ship) and a console-based RADAR plot, presented on a desk, thus requiring the participant to look down at it the same way a navigator would onboard a bridge (see Figure 2).

Figure 2. Lab set-up where participants sat with bridge bow view of ship on screen (left), corresponding RADAR plot (centre) and video recorder.

Six scenarios included an AR overlay on the bridge bow view with relevant navigation-related information on the status of surrounding vessels (experimental group), and six scenarios presented only the bridge bow view with no AR overlay (control group) (see Figure 3). The AR interface provided the same essential information as the RADAR: ship target number, distance, bearing, course, speed through water, Closest Point of Approach and Time to Closest Point of Approach. In both the control and experimental scenarios, participants had access to the corresponding traditional console-based RADAR plot. Each scenario was set to a standardized 60-second time period for participants to appraise the traffic scenario. Scenarios were designed to have similar, but not identical, traffic situations with between five and seven vessels, each of which was uniquely identifiable visually. The completion of each navigation scenario was followed by two SA measures (SAGAT and an SA-SWORD questionnaire).
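The paper does not describe how the simulator or RADAR derives the Closest Point of Approach (CPA) and Time to Closest Point of Approach (TCPA) values shown in the overlay. As an illustration only, the sketch below uses the textbook constant-velocity definition. The function name `cpa_tcpa`, the flat east/north coordinate frame and the units (nautical miles and knots, giving TCPA in hours) are assumptions made for this example and are not taken from the study.

```python
import math


def cpa_tcpa(own_pos, own_vel, tgt_pos, tgt_vel):
    """Return (cpa, tcpa) for two vessels holding course and speed.

    Hypothetical helper: positions are (east, north) in nautical miles,
    velocities are (east, north) in knots, so tcpa is in hours.
    """
    # Position and velocity of the target relative to own ship.
    rx, ry = tgt_pos[0] - own_pos[0], tgt_pos[1] - own_pos[1]
    vx, vy = tgt_vel[0] - own_vel[0], tgt_vel[1] - own_vel[1]

    rel_speed_sq = vx * vx + vy * vy
    if rel_speed_sq == 0.0:
        # No relative motion: the range never changes.
        return math.hypot(rx, ry), 0.0

    # Time at which the relative range is minimal.
    tcpa = -(rx * vx + ry * vy) / rel_speed_sq
    # If the closest approach is already in the past, report the present.
    tcpa = max(tcpa, 0.0)

    cpa = math.hypot(rx + vx * tcpa, ry + vy * tcpa)
    return cpa, tcpa


# Own ship steering north at 10 kn; target 4 nm east and 5 nm north,
# steering west at 10 kn.
cpa, tcpa = cpa_tcpa((0.0, 0.0), (0.0, 10.0), (4.0, 5.0), (-10.0, 0.0))
```

In this example the two vessels pass within roughly 0.7 nm of each other after 0.45 hours, which is the kind of value a navigator would read from either the RADAR or the AR overlay.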

Figure 3. External bow view of own ship with traffic of six other ships in view (top); identical traffic situation with AR overlay of relevant navigation information (middle); corresponding RADAR interface of traffic situation (bottom).

All scenarios were video recorded for post-hoc analysis of the amount of time each participant spent with their head down looking at the RADAR screen compared to looking out at the bow view of the ship. As each scenario was standardized at 60 seconds, the ratio of time a participant spends in the head-down and head-up positions can be compared directly (Total Time = HDT + HUT). The outputs give the percentage of each 60-second scenario spent head-down and head-up, which is compared between scenarios with and without AR information and then related to the participants’ SA scores. Furthermore, Head-Down Occurrences measure how many times the navigators transitioned between the head-down and head-up positions.
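The relationship Total Time = HDT + HUT can be sketched as a short calculation over coded video data. The example assumes that each head-down episode has been annotated from the recording as a start and end time in seconds. The names `HeadDownInterval` and `head_down_metrics`, the annotation format, and counting one Head-Down Occurrence per coded episode are illustrative assumptions, not the authors' analysis tooling.

```python
from dataclasses import dataclass

SCENARIO_SECONDS = 60.0  # standardized scenario length used in the study


@dataclass
class HeadDownInterval:
    """One coded head-down episode (hypothetical annotation format)."""
    start: float  # seconds from scenario start when gaze drops to the RADAR
    end: float    # seconds from scenario start when gaze returns to the bow view


def head_down_metrics(intervals, total=SCENARIO_SECONDS):
    """Summarise Head-Down Time, Head-Up Time and Head-Down Occurrences."""
    hdt = sum(i.end - i.start for i in intervals)
    hut = total - hdt  # Total Time = HDT + HUT
    return {
        "hdt_s": hdt,
        "hut_s": hut,
        "hdt_pct": 100.0 * hdt / total,
        "hut_pct": 100.0 * hut / total,
        # Assumption: each coded episode counts as one occurrence.
        "head_down_occurrences": len(intervals),
    }


# Illustrative (not measured) coding of a single 60-second scenario.
example = [
    HeadDownInterval(5.0, 12.0),
    HeadDownInterval(20.0, 26.5),
    HeadDownInterval(40.0, 51.0),
]
metrics = head_down_metrics(example)
```

For this made-up coding, the participant is head-down for 24.5 of the 60 seconds (about 40.8%) across three occurrences. Computing the same summary for each AR and non-AR scenario gives the per-condition values that are then set against the SA scores.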

Additional insights from participants regarding their experiences attaining SA of differing navigation scenarios with and without the AR solution are gathered through semi-structured interviews. This provides descriptive feedback and helps contextualize the empirical data, while also providing data for ways to improve the design and deployment of AR in maritime navigation.

Conclusions & Future Work

As introducing new technologies into pre-existing systems has foreseen and unforeseen impacts, a more holistic and user-centred approach to how systems are organized helps mitigate unwanted effects when and if new technologies, procedures and practices are applied in the real world. Empirical investigations into human performance with and without AR support will help the domain better understand the effects of AR on maritime navigation. Data can then be fed back into the design process and iterated for more effective application within a user-centred design cycle. These outputs have relevance not only for designers of AR equipment, but also for the larger network of relevant stakeholders in the maritime industry, including maritime equipment manufacturers, training and education institutions, operators, ship designers and builders, and regulators, as well as the larger Human Factors and wearables communities.

Acknowledgements

This work was partially funded by the Research Council of Norway through OpenAR: Framework for Augmented Reality in Advanced Maritime Operations (project no. 320247) and OpenVR: Next Generation Virtual Reality for Human-Centered Ship Design (project no. 310043).