Abstract

In this article, an application for object segmentation and tracking for intelligent vehicles is presented. The proposed object segmentation and tracking method is implemented by combining three stages in each frame. First, based on our previous research on a fast ground segmentation method, the present approach segments three-dimensional point clouds into ground and non-ground points. The ground segmentation is important for clustering each object in subsequent steps. From the non-ground parts, we continue to segment objects using a flood-fill algorithm in the second stage. Finally, object tracking is implemented to determine the same objects over time in the final stage. This stage is performed based on likelihood probability calculated using features of each object. Experimental results demonstrate that the proposed system shows effective, real-time performance.

Introduction

Object tracking is a multimedia application that is widely applied in intelligent vehicles 1–2 such as autonomous robots. For each autonomous robot, we often employ one or more multichannel laser sensors. In each frame, the laser sensor scans the surrounding environment and returns a point cloud. Using the object tracking system, robots can sense their environments and automatically perform particular tasks without human engagement. From the tracking results, autonomous mobile robots can detect their surroundings, identify navigation paths, distinguish traversable ground regions from impassable obstacles, and identify relevant areas. To enable successful navigation in outdoor environments, segmenting from a three-dimensional (3-D) point cloud is important. Object segmentation is a preprocessing step for detecting and tracking moving objects. Many studies on object segmentation have been conducted in recent years. However, these methods tend to be affected by certain factors, such as terrain conditions, while having long processing times.

Hernández and Marcotegui 3 presented a ground segmentation method that only works well with flat terrains; it is ineffective for non-flat terrains. Besides, Moosmann et al. 4 proposed a segmentation approach using graphs and local convexity features to solve the segmentation problem in non-flat terrains. Generally, this approach is simple and easy to implement; however, it is also ineffective at handling rugged terrains. Furthermore, in some cases, point losses occur, which cause difficulties in graph building and defining local convexity features. In addition, Douillard et al. 5 proposed a segmentation method by combining some segmentation methods including cluster-all, base-of, Gaussian Process Incremental Sample Consensus and mesh-based for both sparse and dense 3-D point clouds. Nevertheless, the approach is not easy to implement in real time.

Furthermore, Bogoslavskyi and Stachniss 6 presented a fast and easy segmentation approach with small computational demands. The method is based on the angle between two laser beams. Moreover, Himmelsbach et al. 7 proposed a method that is performed on occupied grid cells. However, neither of the two above methods is effective when the terrain contains many objects with various shapes, such as trees, vehicles, and buildings.

Although significant improvements have recently been made in this research area, problems remain with respect to object segmenting, detecting, tracking of moving objects, and so on. Hence, Manh and Lee 8 presented an object segmentation method based on band-pass filtering and a Gaussian mixture model (GMM). This method segments small-sized objects and determines the object regions in a 2-D image. The results showed efficiency in object segmentation; nonetheless, the method cannot be applied for a multichannel range sensor in a 3-D environment.

Meanwhile, several methods using speeded-up robust features for object detection with mobile robots were introduced. 9,10 Their methods showed good results for object detection through the massive descriptors extracted from each image. However, these methods have issues relating to a high computational complexity while processing a large amount of data.

In addition, Truong and Kim 11 proposed an object tracking method using a particle filter through a condensation algorithm to track object movement. They addressed the issue of high-complexity computation by applying parallel programming. Their approach showed a new means of handling difficult problems in terms of performance and time consumption. However, this algorithm is not easily applied in real situations on account of its weakness in addressing light conditions.

Lee et al. 12 presented an object tracking algorithm similar to that proposed by Truong and Kim 11 using a small region. They proposed a “home alone fainting” detection system by combining a Kalman filter and a continuously adaptive mean shift (CAMshift) algorithm using thermal imaging cameras. Basically, their algorithm produces a good effect in tracking moving persons; however, it has difficulty producing good results in complicated environments and it cannot be applied in real time. In other more sophisticated processes, such as for driving assistance and autonomous driving, detecting and tracking moving objects is indeed a fundamental component. In particular, detection of the moving objects is an important step that must be handled before implementing tracking. The difficulty of tracking of moving objects is separating moving objects and associating in real time the detection corresponding to the same object over time.

The remainder of this article is organized as follows. The second section presents related works. In the third section, the proposed object segmentation and tracking algorithm approach is presented. The fourth section summarizes the results from experiments. Our conclusions are presented in the fifth section.

Related work

Today, technology has changed almost all aspects of our lives. In particular, the technology of autonomous mobile robots that operate without human intervention has rapidly advanced. Many studies conducted in the last few years have focused on detection and tracking of moving objects (DATMO) problems. 13 –16 Various research groups have proposed techniques for handling DATMO in other techniques. We roughly categorize these techniques in relation to our present research as outlined below. The categorization is based on the type of sensors in these studies.

Hwang et al. 17 presented a DATMO framework based on a color camera and 3-D light detection and ranging (LIDAR). They increased the speed of their framework by implementing a fast segmentation method. Basically, their approach is efficient in some cases in practical environments; however, it is not efficient in real time on account of its high computational complexity compared to iterating ground plane estimation by using both 2-D and 3-D information. In their work, Wang et al. 18 proposed a DATMO method based on a Bayesian formula. That method is effective in almost all terrains, while accurately detecting and tracking dynamic objects at high speeds. Zhang et al. 19 proposed a method by combining a multiple hypothesis tracking (MHT) algorithm and dynamic point cloud registration to handle dynamic environments. The algorithm shows good performance; nevertheless, it is not adequate for real-time speed and accuracy.

In addition, Monteiro et al., 20 Mahlisch et al., 21 Premebida et al., 22 and Spinello and Siegwart 23 proposed methods for respectively tracking vehicles and pedestrians tracking. Both methods employ an approach fusing LIDAR measurements with vision outputs of sensors. Meanwhile, Monteiro et al. 20 presented a method that employs a single-layer LIDAR and a monocular camera to detect, track, and classify objects. Furthermore, they applied a GMM classifier and an AdaBoost classifier for object classification. Mahlisch et al. 21 presented a method focusing on cross-calibration of two sensors for vehicle tracking. Premebida et al. 22 used a multilayer LIDAR and a monocular camera for pedestrian detection. The algorithm showed improved accuracies compared to existing methods.

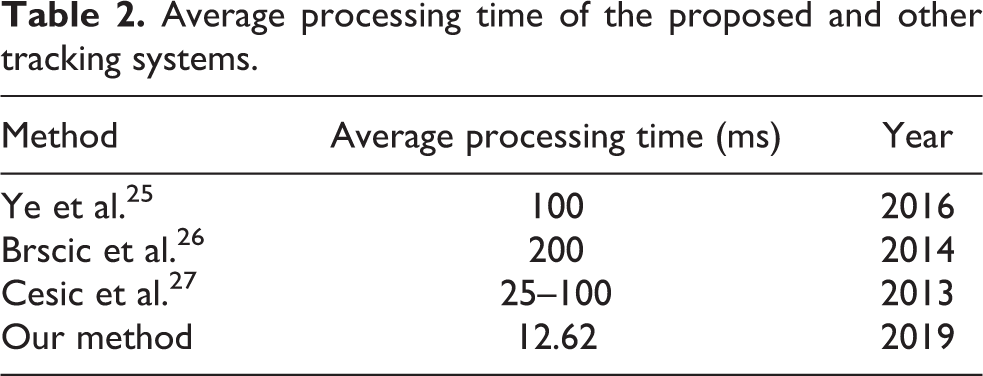

Spinello and Siegwart 23 used a multilayer LIDAR for pedestrian hypotheses detection for which they applied a vision-based pedestrian detector. In addition, Hernández et al. 24 presented an object detection method by combining a support vector machine (SVM) algorithm for classification while using Red-Green-Blue (RGB) and depth images as the system inputs. The method can detect and locate objects. However, it cannot be applied in outdoor environments; it can only be used in static environments. In the works of Ye et al., 25 Brscic et al., 26 and Cesic et al., 27 several methods were presented for tracking object movement using the same type of multichannel laser sensor used in the present research. The methods quality showed good quality; however, their processing times were not adequate.

Most previous DATMO methods have had difficulty in real-time applications owing to a high computation complexity. They are not efficient in an application to autonomous mobile robots in outdoor environments. Therefore, the above limitations motivated us to develop an approach that combines three stages to achieve a higher quality and performance. In this method, we apply an enhancement of the ground segmentation method proposed by Chu et al. 28 as the first stage to separate ground and non-ground parts. We then continue to implement high-accuracy object segmentation from the non-ground parts. At this stage, we apply a flood-fill algorithm to segment each object in non-ground parts. The final stage of the detecting and tracking of moving objects is detailed in the third section. We employ the Point Cloud Library 29 to visualize the results.

Proposed method

The main purpose of our study was to design a real-time object segmentation and tracking system. The system framework is illustrated in Figure 1. The input of our system is 3-D global point cloud data received frame by frame. In the first stage, each frame of the point cloud data is segmented into two groups: ground and non-ground points. The first stage is divided into three smaller steps. The stage is adapted and improved from our previous study. 28 In the second stage, the fast object segmentation algorithm is applied to divide non-ground points into separate objects. From the list of objects in the current frame, we apply our object tracking algorithm in the third stage. Finally, a list of tracking objects is obtained and used for processing in subsequent frames.

Overview of the proposed object segmentation and tracking system.

Ground segmentation

In the ground segmentation stage, we adapt and enhance the results of our previous research. 28 First, the single-frame data are processed based on features in the vertical direction, including the gradient, missing points, and abnormal distance between the sensor and a point. In this step, we divide all points into vertical lines. All lines begin with a ground point at the sensor location and end at the points in the furthest scanline. In each vertical line, we find all starting ground points and threshold points. The first start-ground point is obtained by orthogonal projection of the sensor location on the ground. From the first start-ground point, we then identified first threshold point by considering each pair of consecutive points. The details of the method to detect starting ground points and threshold points are shown in our previous study. 28 Next, we assign a temporary ground label from the starting ground point to the next threshold point. After the threshold point, we assign temporary non-ground labels until we identify the next starting ground point. We repeat this process for all vertical lines in the point cloud. After this step, the temporary labels will be reconsidered in subsequent steps.

In the second step, we consider the points gained by each channel in the horizontal direction. If we employ an m-channel laser sensor, we will obtain m scanlines in the horizontal direction. First, each scanline is divided into smaller “level-2” lines based on the distance between each pair of consecutive points. Next, we classify and label all level-2 lines and reduce the number of line types from four to two. Each line is then either a ground line or a non-ground line. Lines in which all points have the ground label are ground lines; those in which all points have the non-ground label are non-ground lines. After that, we consider each level-2 line in relation to the other level-2 lines in the horizontal direction. All abnormal level-2 lines will have updated labels. If the label of one level-2 line changes, the labels of all points on the line change accordingly.

In the next step, we process the data that depend on features in both directions. In general, we reconsider each level-2 line in a relationship with two level-2 lines in the next and previous scanlines. If the current line is determined to be incorrectly labeled, we change the labels of its points. After the third step, all labels are fixed. Finally, we obtain two groups: ground points and non-ground points. All non-ground points become the input for the next stage.

Object segmentation using flood-fill algorithm

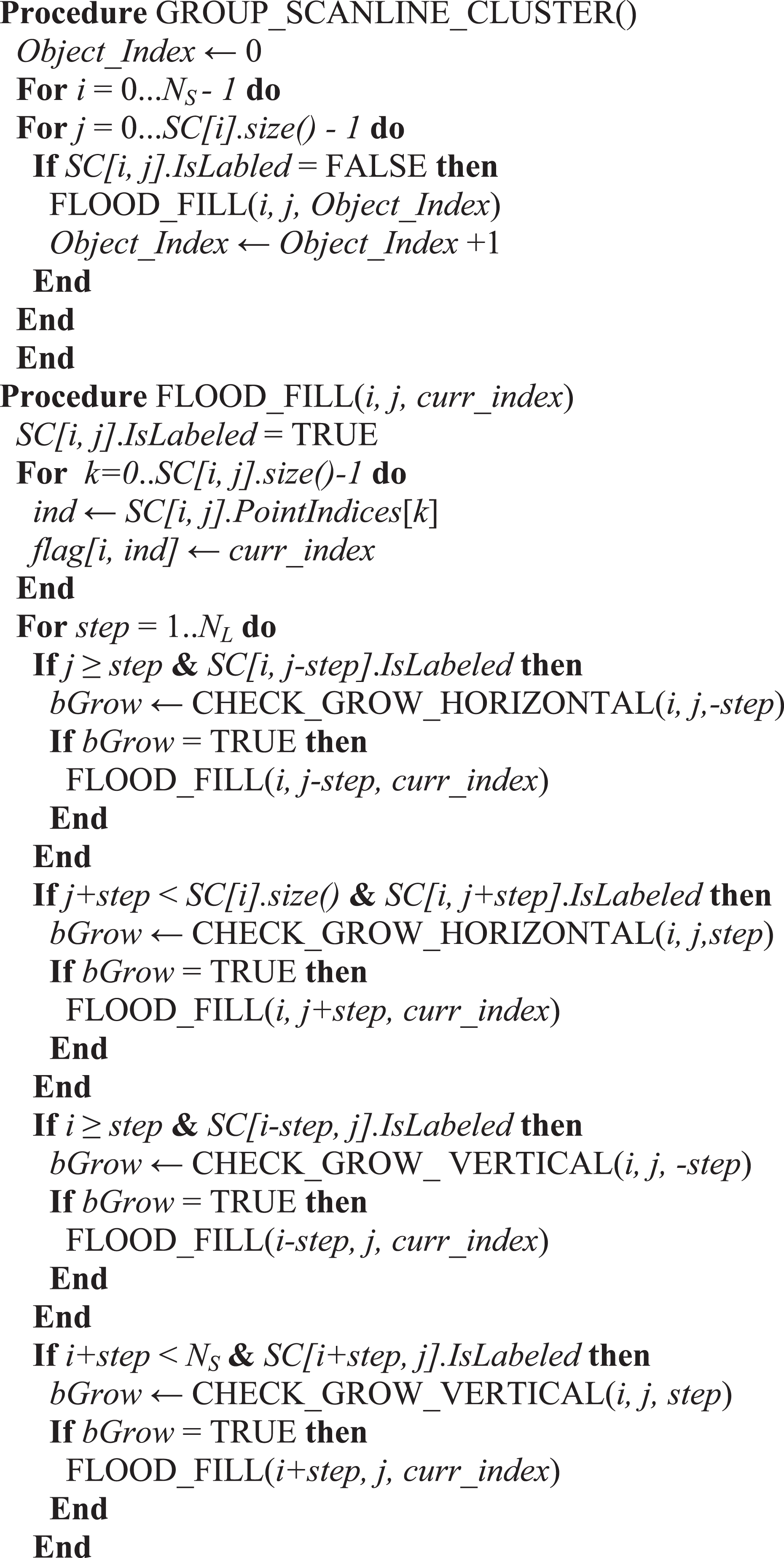

In the object segmentation stage, we apply a proposed object segmentation method based on the flood-fill algorithm. First, we segment clusters in each scanline using Algorithm 1. The basic idea of the algorithm relies on the observation of real life. In a scanline, if two non-ground points are separated by some ground points or lost points, they have a high probability of being placed in two different objects. However, this conclusion is incorrect in some special cases. Even if there are some lost points or ground points located between two non-ground points, they are placed in one object. We correct these cases by using the flood-fill algorithm as shown in Algorithm 2.

Scanline cluster segmentation.

Grouping scanline clusters.

In addition, the distance between two consecutive non-ground points placed in the same object is smaller than that between two consecutive non-ground points placed in two separate objects. We use a threshold value, dmh , to make the decision. In the “Distance2D” function, we use the Euclidean distance in the x–z plane to reduce the computational cost. If the distance is smaller than dmh , we push both points in a cluster. Otherwise, we create a new cluster and push the next point to a new cluster. “New_scanline_cluster” is an initial step to create new cluster S. At this time, S is an empty cluster. Ns and NP[i] are number of scanlines and number of points in scanline i, respectively.

In the second step, we employ a flood-fill algorithm for grouping the clusters in all scanlines into separate objects. The details of this step are illustrated in Algorithm 2. Each object is separated from other objects by the Object_Index value. All non-ground points belonging to one object have the same Object_Index value in the flag array. We grow the flood-fill algorithm in four directions: left, right, front, and back. For checking the growing condition in left and right directions, we employ the CHECK_GROW_HORIZONTAL function. Based on the distance between current cluster and neighbor cluster in the horizontal direction, we either merge them or ignore growing in this direction. In this function, we use same threshold, dmh , as in Algorithm 1. Similarly, for front and back directions, we use CHECK_GROW_VERTICAL function and define a new threshold, dmv . In general, dmv is greater than dmh because, as mentioned above, the resolution of the scanner in the vertical direction is smaller than the resolution of the scanner in the horizontal direction. In reality, because of errors due to scanners or complex environments, we lose many points and the point cloud is quite sparse. Therefore, if we check only one step in four directions for growing, one object is sometimes segmented into several objects. To avoid this case, we grow it in four directions with NL steps. Value NL is chosen depending on the sensor type.

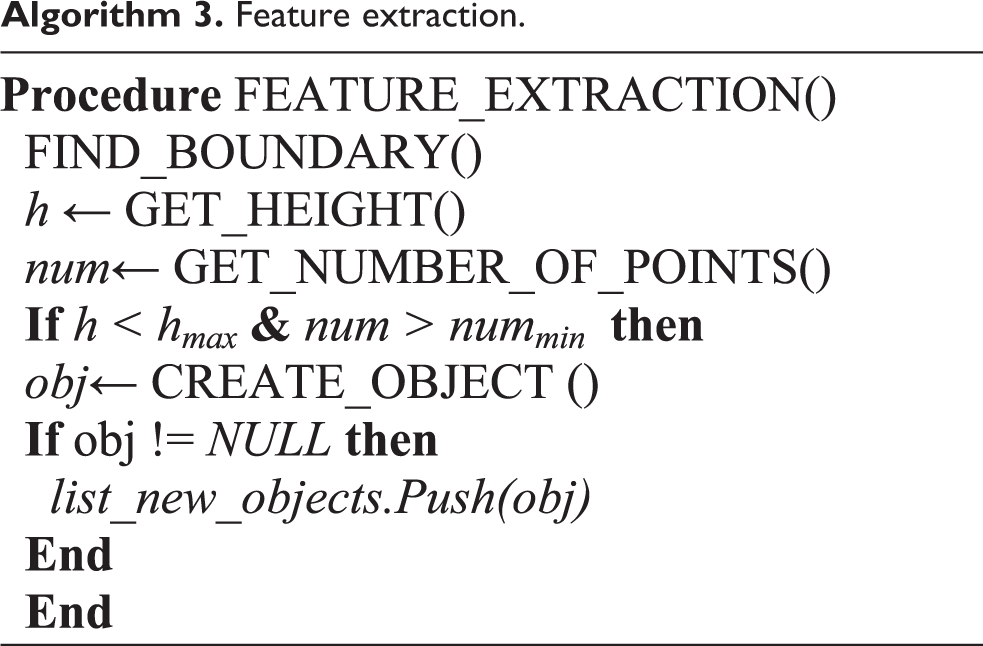

Object tracking based on likelihood probability

In the object tracking stage, we implement three steps. First, we extract the features of each object in the current frame. These features include the boundary points, direction, length, width, height, center point, number of points, and list of all previous center points. These features are obtained using list of 3-D points of each object gained in the object segmentation stage. For processing each object obtained in the second stage, we use Algorithm 3. In the FIND_BOUNDARY function, we estimate the boundary and count the number of points of the object. From the boundary, we estimate the height of each object. We ignore all high objects, such as trees and lights. If the height value is larger than threshold hmax , the object is ignored. We additionally use threshold nummin for decreasing noise. In the CREATE_OBJECT function, we calculate the width, length, direction, and center point. The very large objects such as houses and buildings are static ones. Therefore, we ignore large objects based on length and width values. We do not estimate directions for small objects, such as pedestrians. After passing through all filters, the object is pushed into the list of new objects. This step is considered object detection.

Feature extraction.

In the second step, we calculate the similar probability of each new object obtained in current frame with each object in the tracking list. At the start time, the tracking list is empty. After implementing the first step, all objects obtained in the first frame are pushed into the list of tracking objects. From the second frame, we calculate the likelihood probability of each new object i with a tracking object j using formula (1)

Here,

Assume that we have n factors that affect the probability computation. In our study, we employ four factors: length, width, direction, and distance between the centers of two objects. The height factor is ignored because almost all objects, such as pedestrians, cyclists, and vehicles, have no significant height difference. For factor k, we use the function

In the next step, we update the object information in the tracking list using Algorithm 4. The updating information includes points, length, width, direction, and center location of each tracking object. If an object in the tracking list is not updated in a few frames, we consider it as out-of-range object. From that point, we remove this object from the tracking list to reduce computational space.

Update list of tracking objects.

Experiments and analysis

We conducted experiments on real data sets from Velodyne, Inc. (Morgan Hill, California, USA). These data sets included many moving objects, such as pedestrians and vehicles. The data were obtained from a Velodyne HDL-32E sensor attached to both static and moving vehicles. The first data set, named HDL_32_PoliceCar, was obtained by a static sensor placed on the top of a car. The car was parked at a crossroad. We gained the second data set, named HDL32-V2_MontereyHighway, by a sensor attached to a moving car in an urban environment. For the first data set, the local and global coordinates were the same. However, for the second data set, the global coordinate differed from the local coordinate because of the dynamic sensor location. We, therefore, converted and extracted each frame from a local to global coordinate using the Veloview 3.1.1 tool from Velodyne, Inc.

To run the application, we employed a computer with an Intel Core i7-6700 CPU (3.4 GHz) and 16 GB of RAM. For the ground segmentation stage, we employed the same parameters that were used in our previous study. 28 In the object segmentation stage, we set NL = 3, dmh = 50 cm, and dmv = 100 cm. We chose these parameters based on the resolution of the Velodyne HDL-32E sensor. We made several experiments and concluded that these parameters help us to get the best results. For other sensors, different parameters can be chosen. In the third stage, we set nummin = 10, hmax = 3 m, and minprob = 0.5.

Experimental results

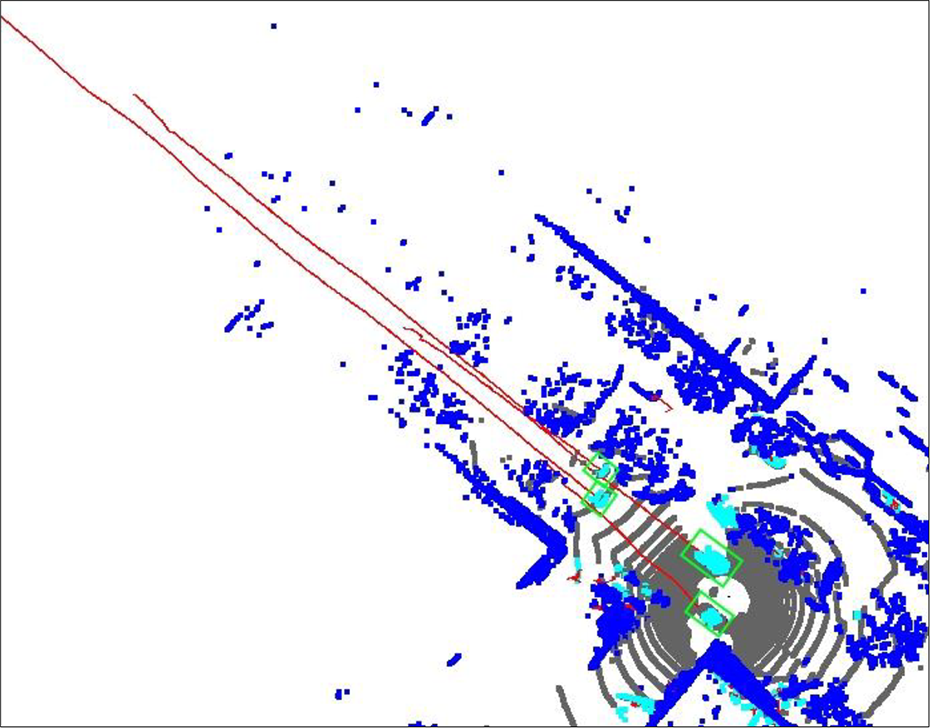

In the first experiment, we segmented and tracked multiple objects from the point cloud obtained by the static sensor. Figure 2(a) shows the result after the proposed ground segmentation and object segmentation. All ground points are represented in black. Other objects are illustrated by random colors. Figure 3 shows the object tracking results. For better visualization, we set all ground points in gray, static objects in dark blue, and tracking objects in light blue. The path of each tracking object is demonstrated by the red line, which connects all center points of an object in many frames. We conducted a second experiment for the point cloud received from the moving sensor. The results of our object segmentation algorithm for moving sensor are illustrated in Figure 2(b) and (c). In addition, Figure 4 shows the object tracking results, and Figure 5 depicts the result after approximately 30 s. Both experiments showed good results. Therefore, the proposed object segmentation and tracking methods are effective.

Results of the proposed object segmentation algorithm using a (a) static sensor (perspective view), (b) dynamic sensor (perspective view), and (c) dynamic sensor (top view).

Results of the proposed object tracking system with a static sensor from the top view: (a) frame 10 and (b) frame 60.

Results of the proposed object tracking system with a dynamic sensor from the top view: (a) frame 5 and (b) frame 33.

Results of the proposed object tracking system with a dynamic sensor at frame 300 from the top view.

Experimental analysis

When we applied our ground segmentation method to an urban environment, the accuracy was 94.71%. For complex terrain, the quality was greater than 80%. From the non-ground points, we performed effective segmentation in separate objects, as shown in Figure 2. Vehicles, pedestrians, trees, bushes, lamps, traffic lights, signposts, and buildings are distinguished in different colors. For the sake of clarity, we create labels for several representative objects. However, for pedestrians walking together with a small distance between them, they are considered one object. This case is shown in Figure 6(a).

Results of the proposed method for a group of people: (a) object segmentation (perspective view) and (b) object tracking (top view).

In both data sets, there were many trees and bushes with various shapes. These objects can easily cause noises when tracking moving objects. However, Figures 3 and 4 show the good quality of the proposed approach. In Figure 3, two moving cars and one pedestrian are well tracked. Figure 4 shows the results of tracking six moving cars using a dynamic sensor. Cars 1–5 are well tracked. Car 6 was moving at a higher speed than the others; hence, the dynamic laser sensor could not scan it in some frames. Therefore, this car was deleted from the tracking list in few frames. When this car emerged again, its tracking continued. In Figure 5, four moving cars were tracked with long paths. Figure 6(b) shows the case when a group of pedestrians was tracked as one object. In addition, the tracking system will fail if there is a crowd of people in a small area. We will solve these problems in future work.

Furthermore, we calculated the processing time in each step, as illustrated in Figure 7 and Table 1. The total processing time according to the frame is denoted by the red line. Other lines represent the processing time of each stage in the object segmentation and tracking system. Figure 8 shows the number of new objects and that of tracking objects in each frame. In addition, the green line shows the number of moving objects with the trajectory length longer than 10 m.

Processing time of the proposed object segmentation and tracking system.

Average processing time of the proposed object segmentation and tracking system.

Number of new objects in the current frame and number of tracking objects.

From frame 20, we obtained many long moving objects. In each frame, we received and processed approximately 60,000 points. The average processing time for each frame was 12.62 ms. Hence, we could process 79 frames per second (fps). Moreover, the Velodyne sensor returned only 10 fps. It can thus be concluded that the proposed object segmentation and tracking system can effectively operate in real time at a rate eight times faster than that of the sensor. In Table 2, we compare the performance of our method with other methods using the same Velodyne HDL-32E sensor. The table shows that our method is the fastest. We also verified the quantitative results of our method as shown in Table 3. This table illustrates the quantitative results of the proposed method in two data sets named “HDL_32_PoliceCar” and “HDL32-V2_MontereyHighway.” In both data sets, the accuracy is more than 80%.

Average processing time of the proposed and other tracking systems.

Quantitative results of the proposed tracking system.

Conclusions

In this article, we proposed a fast and efficient segmentation and tracking method for multiple objects using a multichannel LIDAR sensor. In the proposed method, we implement three stages for each frame to achieve our targets. First, we apply a ground segmentation algorithm. Next, we employ a proposed fast object segmentation method based on the flood-fill algorithm. Finally, we track the moving objects in the non-ground group. The experimental results demonstrated that our method could achieve high-quality results in all evaluated aspects, showing real-time and accurate performance. For the Velodyne HDL-32E sensor, the presented approach required only 12.6 ms for processing each frame data item. However, sometimes a group of pedestrians was detected as one object. In future work, we will examine and upgrade our system to enhance the quality and enable it to classify object types. In addition, we have a plan to compare the accuracy of the proposed method to other existing methods using public data sets.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIP) (2018R1A2B2007934).