Abstract

When service robots work in human environments, unexpected and unknown moving people may deteriorate the convergence of robot localization or even cause failure localization if the environment is crowded. In this article, a multisensor observation localizability estimation method is proposed and implemented for supporting reliable robot localization in unstructured environments with low-cost sensors. The contribution of the approach is a strategy that combines noisy laser range-finder data and RGB-D data for estimating the dynamic localizability matrix in a probabilistic framework. By aligning two sensor frames, the unreliable part of the laser readings that hits unexpected moving people is fast extracted according to the output of a RGB-D-based human detector, so that the influence of unexpected moving people on laser observations can be explicitly factored out. The method is easy for implementation and is highly desirable to ensure robustness and real-time performance for long-term operation in populated environments. Comparative experiments are conducted and the results confirm the effectiveness and reliability of the proposed method in improving the localization accuracy and reliability in dynamic environments.

Introduction

Service robots have been increasingly designed to act autonomously over time in human environments. 1,2 Human environments have objects that are either permanent like walls, movable like tables and chairs, or moving like humans. Going beyond robot localization, it is recognized that when service robots are deployed to perform long-term operation in populated areas, unexpected moving objects will pose a challenge to the reliability and accuracy of mobile robot self-localization.

Autonomous navigation has been extensively studied in the robotics literature, especially on applications in unstructured environments. It is an essential part of the realization of fully autonomous robotic systems. In the last decades, many researchers 3 –5 have placed emphasis on improving the Monte Carlo localization (MCL)-based self-localization algorithms, in order to accommodate dynamic environments.

Implementations of such algorithms with single laser range finder (LRF) have shown its feasibility in dealing with dynamic environments. Rowekamper et.al. 4 propose a method that incorporates Kullback–Leibler Distance algorithm sampling, 5 scan matching 6 with the MCL method, and hence the accuracy of robot localization is improved. Valencia et al. 7 propose to establish a short-term map based on the static map information, and further perform normal distribution transform-based MCL. The method can compensate the disturbance of environmental dynamics and thus is more robust to be applied in dynamic environments. In the study by Peng, 8 a real-time achievable localization algorithm is proposed by combining the natural scene recognition method with the MCL method. Wang et al. 9 propose an approach to estimate the observation localizability for robot localization, which is proved to have more robustness against unexpected disturbances in the robot’s neighborhood. In fact, the observation localizability evaluates the effective observations using Cramér-Rao bound (CRB), 10 and the covariance lower bound is related to the inverse of Fisher’s information matrix (FIM). The effectiveness of evaluating the observations through CRB has been analyzed and verified in the studies by Censi10 and Censi et al. 11 . In Wang’s method 9 , the prior probability of a moving person in the environment is assumed to be known, based on which a factor that models unexpected observations is computed. Thus, this method may not be that reliable in different situations that a known number of people are present. Intuitively, measuring how the observed data is affected by unexpected and unknown objects (e.g. moving people) directly can better accommodate actual changes in the environment.

All of the abovementioned approaches resolve to improve the robustness of self-localization algorithm but with a single LRF only. Although laser data provide explicit distance measurements, distinguishing humans in LRF data is not an easy endeavor since human legs are scanned as arcs and lines which are usually confused with cluttered background. Identifying typical leg patterns can be beneficial to leg detection in LRF, 12 compared with other leg detection methods such as distance minima and motion detection. However, laser segments of human legs are still not discriminative in cluttered environments, if people walk naturally in the environment and their leg postures vary. It is noteworthy that most leg detection algorithms 12 require accurate LRF sensors (e.g. a SICK LMS 200 LRF with 80 m maximum sensing range and 0.25° angular resolution) to be employed. In contrast, we consider applying HOKUYO URG-04LX (https://www.hokuyo-aut.jp), a less accurate and low-cost LRF sensor (4 m maximum sensing range, 0.36°angular resolution), which will thereby make laser-based leg detection incompetent and inappropriate in our system implementation.

Meanwhile, vision systems for robot localization are very common due to the ability to obtain rich information about the environment. However, real-time monocular-based navigation in unstructured environments using front or omnidirectional cameras is difficult, because image processing for unstructured scenes is complex and computationally heavy. In the study by Carreira et al. 13 and Rodrigues et al., 14 an image-based navigation system is proposed for localization of mobile robot in indoor unstructured environments, but the system is restricted to work in buildings with static ceilings containing rich visual information. Visual odometry (VO) has been widely applied for rotation and translation estimation of mobile robots. 15,16 In general, due to the variation in illumination and image noises in real-world environments, VO methods are not appropriate for applying in long-term localization. 17

This work is motivated by the observation that fusing multisensor information is often an imperative measure to this problem, 12,18 especially when only low-cost sensors are available. Li et al. 17 have proposed a method for estimating the position of a mobile robot with high accuracy in an unknown and unstructured environment by fusing images of an omnidirectional vision system with measurements of odometry and inertial sensors. In the study by Bellotto and Hu, 12 the authors propose a human-tracking algorithm that fuses a vision-based face detector with a leg detector based on the laser scans of an LRF. Assuming that the major causes of unexpected and moving objects in robot’s working environment are humans, it’s desirable to apply RGB-D sensors (Kinect) for human detection 18 –20 and autonomous robot navigation. 21,22 If we compare the characteristic of laser sensors and Kinect sensor in this context, RGB-D-based human detection is much more efficient 20 than using LRF. However, laser data is more accurate for computing the robot’s position compared with RGB-D-based robot localization methods. Thus, a fundamental problem is that we must resort to an elaborate technique to properly combine the advantages of LRF and RGB-D sensors for fast and reliable robot localization in dynamic environment.

For this purpose, in this article a real-time observation localizability estimation method for robot localization in unstructured environments is proposed that uses noisy and multi-cue information. Our approach is along the line of the localizability estimation theory described in the study by Wang et al. 9 But to the best knowledge, this is the first study to apply low-cost multisensor in the observation localizability estimation. The novelty of the approach is in a strategy to combine inaccurate LRF data and RGB-D data in the computation of the dynamic localizability matrix (DLM). The proposed method efficiently estimates the unreliable part of laser readings that hit unexpected moving people, while avoiding the necessity of detecting legs from the inaccurate laser data of low-cost LRF sensors. The method is competent to work at very high frame rate on a standard laptop without the need for a graphics processing unit implementation.

The article is organized as follows. The “Method overview” section introduces the outline of the proposed method, “Estimating localizability using multisensor information” section describes the estimation of DLM using the combination of low-cost LRF and RGB-D sensors, followed by “Robot pose update” section, where the update of robot pose estimates is described. The experimental results in “System implementation and experiment” section validate the effectiveness and performance of the proposed method in several scenarios.

Method overview

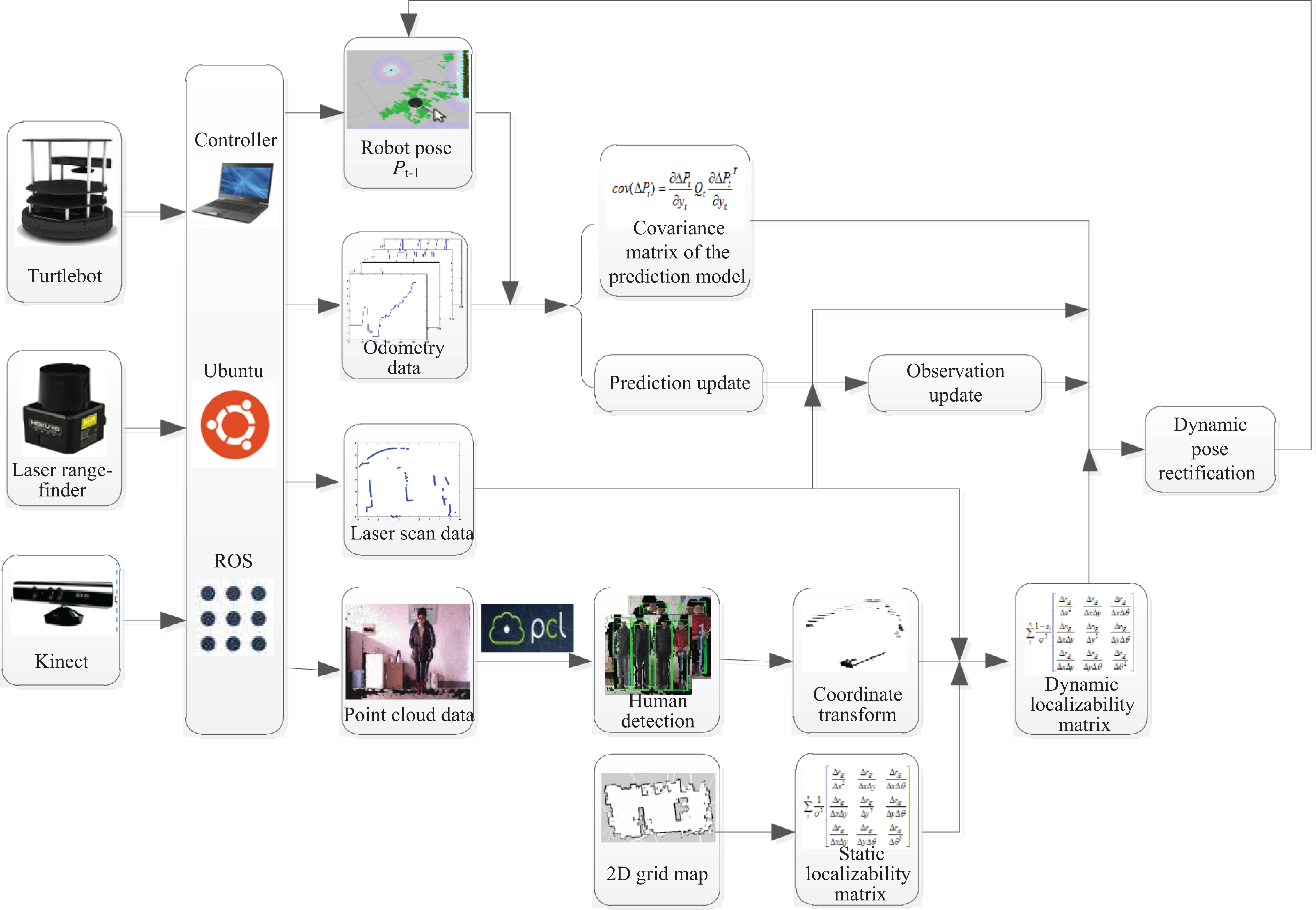

In this article, moving people are viewed as the major cause of environmental dynamics compared with a previously built metric map. The proposed method incorporates Kinect sensor data and LRF data in the estimation of observation localizability. By aligning data acquired from a Kinect sensor and a LRF equipped on the robot, the outputs of a RGB-D-based human detector are utilized for estimating the probability of laser scanner data that corresponds to unexpected objects. Hence, the DLM and the covariance matrix of the prediction model are computed for rectifying the distribution of particles. Figure 1 shows the flowchart of the algorithm.

Outline of the proposed method.

The LRF and the Kinect sensor were installed according to the geometrical relation as shown in Figure 2, which indicates that two known rotational matrix R and translational matrix T can be measured to represent the transformation between the laser sensing coordinate and the Kinect sensing coordinate

Positional relationship of laser and RGB-D sensors.

Estimating localizability using multisensor information

Dynamic localizability matrix

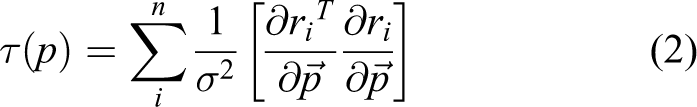

Static localizability matrix (SLM) 23 is computed based on the concept of FIM, which is defined as a function of the expected readings and of the orientation of the environment’s surfaces at the sensed points. In the situation of laser range-finder-based mobile robot localization and environmental mapping, Censi 10 defines FIM using the first derivatives of the ray-tracing function r

where

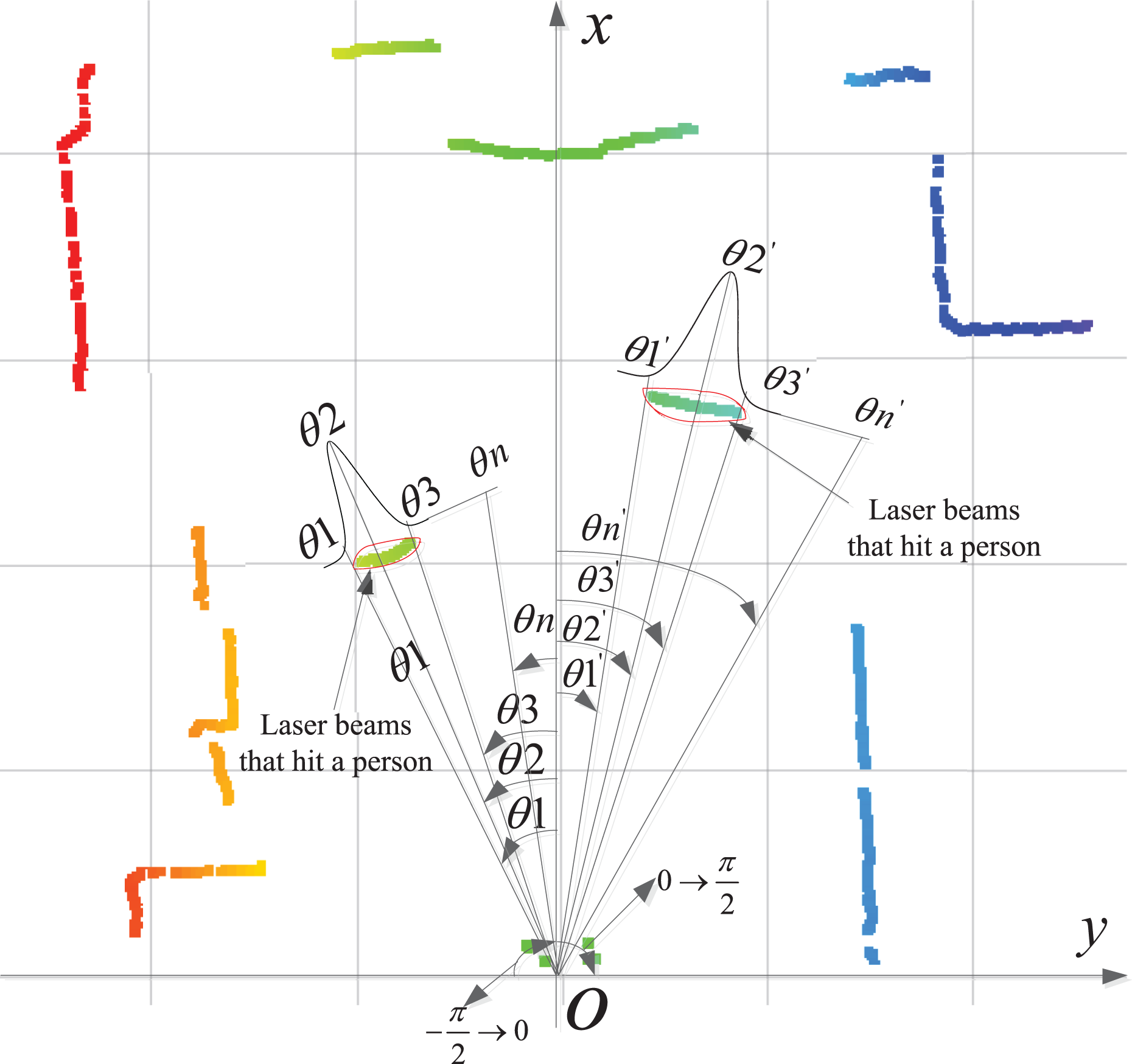

The principle of DLM is introduced in the study by Wang et al, 23 which can be briefly described as follows. As an example shown in Figure 3, the robot’s current pose is assume to be P0 = [x0, y0, θ0] and the ith beam of the LRF reading is denoted as ri. For clarity purposes, we denote A as the event of the laser beam hits a known obstacle, and B as the event of the laser beam hits an unexpected obstacle. The probability of the laser beam ri hits a known obstacle can be computed according to the Bayes law

Mobile robot model.

The i th laser beam data (xi, yi) can be represented in the X–Y coordinate as

p(ri|A) can be computed according to p(ri|A) = μxiyi, in which μxiyi is the occupancy probability at the point (xi, yi) in the original grid map.

Computing DLM using RGB-D and laser sensors

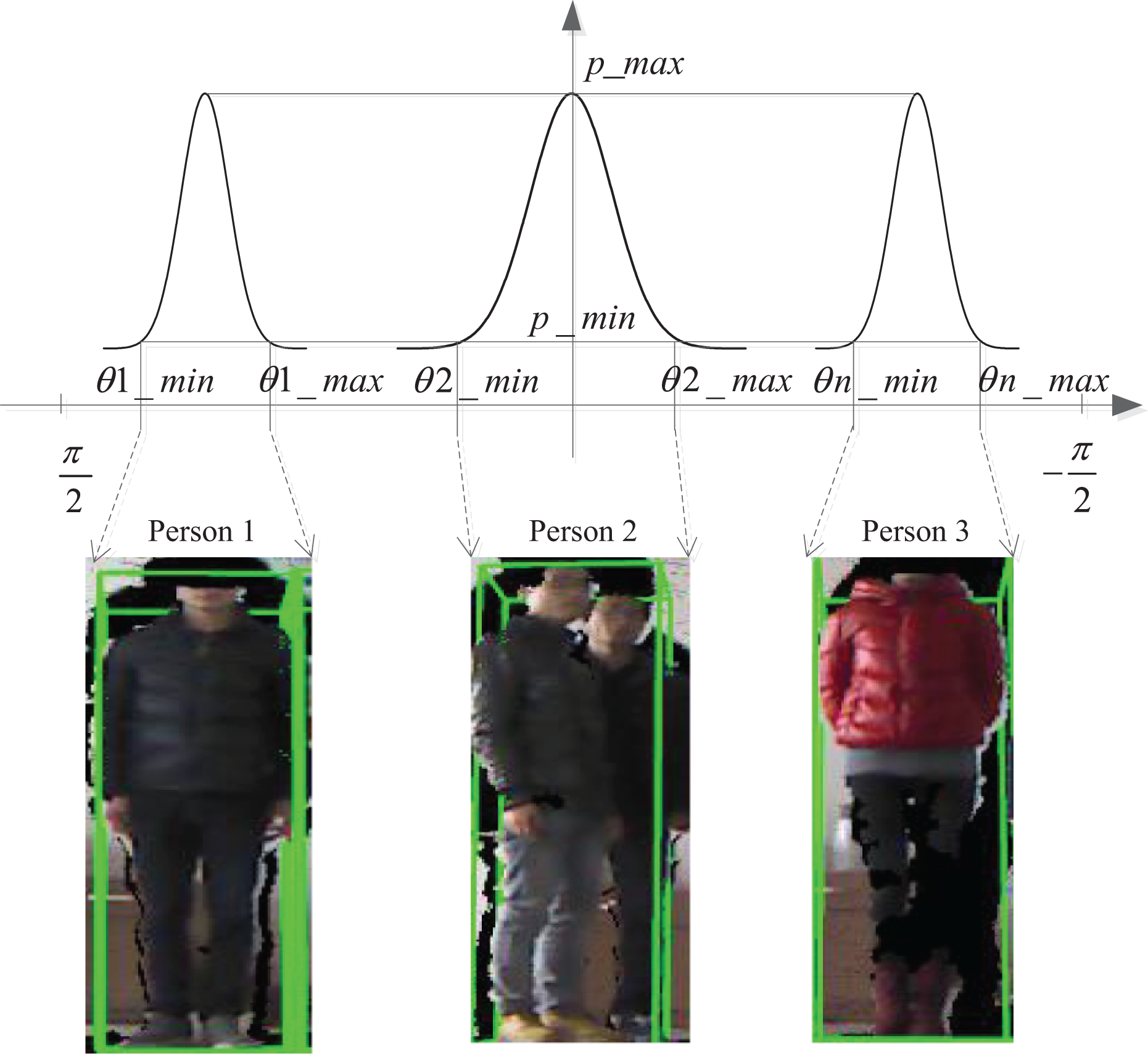

Since the laser data and RGB-D data have already been aligned in the same coordinate frame, the output of Kinect-based human detector can be directly transformed to the laser data coordinate. In this article, an implementation of histograms of oriented gradient 24 (HOG)-based people detection algorithm as part of the open source Point Cloud Library (PCL) is utilized. The human detector applies HOG people detector on segmented clusters of three-dimension (3-D) point clouds, resulting in 3-D bounding boxes that should contain whole persons. The boundary of the bounding box in Kinect sensor coordinate is then projected onto the ground plane and transformed into the laser sensor coordinate, which help to determine the section of laser beams that correspond to the persons’ legs.

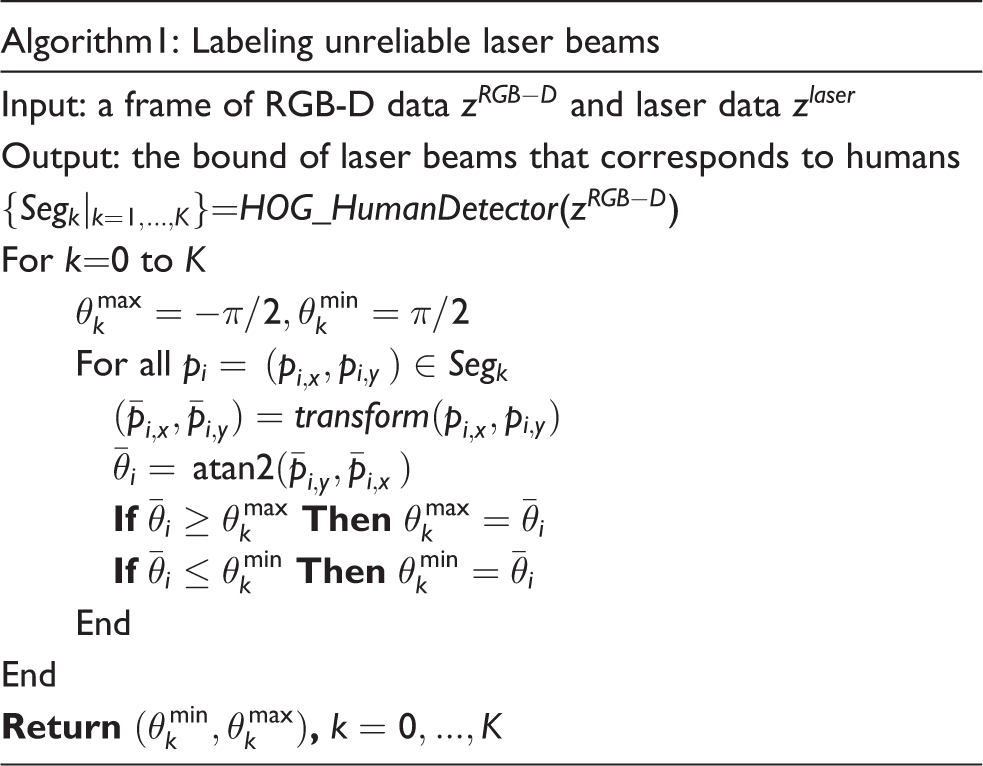

Denote Segk as a segment of point cloud that corresponds to an extracted person, in which pi = (xi, yi), i = 1 ⋯ n is a point and n is the total number of point in the segment. The segment is bounded by a rectangle (left, right, top, and bottom). Algorithm 1 determines in the polar coordinate the boundary of laser beams that correspond to the persons. When the RGB-D-based human detector outputs a number of K persons, the boundaries of laser beams that correspond to different persons can be all computed according to Algorithm 1, resulting K boundary pairs

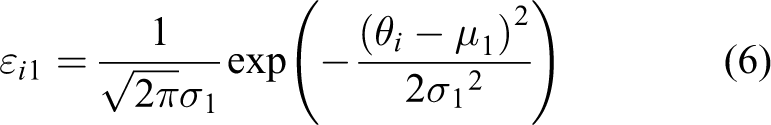

Due to the sensor noise, for the kth detected person, a Gaussian distribution

Modeling the uncertainty of observing unexpected objects.

An example of uncertainty of observing two people in laser readings.

As a result, for any laser beam the probability of hitting an unexpected object (person) can be computed. Denote θi as the angle of the ith laser beam, then the probability of the laser beam hits an unexpected object is computed as εi

In a special situation that the Gaussian distributions that correspond to two people partially overlay due to the fact that the two people are standing close to each other, the probability of a laser beam hitting an unexpected object can be computed according to Figure 6. In this case, two Gaussian distributions

Situation that two Gaussian distribution partially overlays.

In equation (3), p(ri|B) denotes the probability of a laser beam reading ri hits an unexpected obstacle. The computation of p(ri|B) can be approximated by discretizing the p(ri|rB) using a step value ΔrB

where rB ∈ (0, riE) is the real distance between the robot and the obstacle, and we further assume Gaussian error in laser reading

Note that p(B) denotes the prior probability of unexpected objects in the environment. Since εi represents the probability of the ith laser reading should ideally hit humans, according to what the RGB-D sensor reports, it is utilized to replace p(B) in this article to represent the prior probability of unexpected humans in laser readings.

Therefore equation (3) can be rewritten as

in which p(A) = 1 − p(B). After p(A|ri) is calculated according to equation (11), the DLM can be computed as 25

The DLM accommodates impact of both static obstacles and moving objects in the environment on the robot’s localization task. The time complexity of computing the DLM is O(n) and thus the real-time localization is ensured. In this article, the prior probability p(B) is evaluated by running a RGB-D-based human detector, which allows real-time update of the DLM according to the geometrical relation between the robot and people. As a consequence, it better reflects the reliability of current laser observations for being incorporated into the probabilistic self-localization algorithm, although the moving people are unexpected compared with the previously established grid map of the environment.

The covariance of every unbiased estimator is bounded by the CRB lower bound, which is the inverse of FIM. Hence the achievable localization error covariance is given by the inverse of the localizability matrix

The determinant of D(P) well reflects the physical meaning of the robot’s laser observation localizability

In other words, the higher value d(p) is, the lower amount of unexpected objects are in the robot’s neighborhood, and thus the higher localizability the robot’s current laser observation will have.

Robot pose update

The popular classic MCL algorithm uses odometry-based motion model to predict the sample distribution and laser observation model for perception update. As is a known problem, the importance sampling technique employed in the conventional MCL algorithm may cause the impoverishment effect of samples in cluttering and dynamic indoor environments, since a large majority of samples will be settled in areas with lower density value of the posterior, and will be abandoned as the iteration proceeds.

In contrast, in this article the DLM together with the covariance matrix of the prediction model is used to balance the effect of motion prediction and perception update. According to “Estimating localizability using multisensor information” section, the determinant of the DLM reflects how discriminative the laser observation is for determining the robot’s current position. Intuitively, the effect of observation update should be attenuated when the observation localizability is low, and vice versa. In addition, the covariance matrix of predication model is utilized for evaluating the reliability of odometer, which reflects the systematic error and accidental error of using the odometry as the robot motion model.

DPF,t denotes the DLM at time t, which is computed by the method described in “Estimating localizability using multisensor information” section. fodo(Δut) denotes the additional samples brought by the motion prediction step at time t, that is

in which Pt−1 is the sample set at time t − 1, Δ ut is the amount of odometry input, fodo is the motion model with a covariance matrix Codo,t and “+” is an adding operation defined on two sets. The ideal sample set after performing the assumed ideal perception update is {pPF,t, wt}, in which pPF,t is the sample set and wt is the corresponding weights. Hence the amount of samples modified by perception update is

in which “−” is a subtraction operation defined on two sets.

Combing the DLM DPF,t and the covariance matrix of the prediction model, the distribution of particles is rectified by a weighing function. A weighting function 25 is applied to adjust the effect of motion prediction and observation update on the distribution of the particles

Equation (17) describes a tradeoff between the two effects and k1 is a constant that can be chosen to adjust the proportion of the two effects. In a highly dynamic environment, the observation localizability is low and thus is unfeasible to evaluate the effectiveness of each particle. Thus, in this case the effect of observation update is reduced and the major factor is chosen as motion prediction, so that the wrong evaluation of particles can be avoided significantly. In situations in which the observation localizability is sufficient for observation update, the particles can be rectified according to the observation model so that the accumulated error of odometry can be corrected.

System implementation and experiment

The experiment is conducted in a lab environment using a mobile robot navigation control system developed for verifying the proposed method. The system entails the use of a Turtlebot robot platform with an onboard Hokuyo URG-04LX-UG01 LRF and a Kinect sensor, as shown in Figure 7. The proposed method is implemented using robot operating system 26 to encapsulate the odometry, laser, RGB-D, and different types of sensory data, and to ensure communication among separate threads or processes via message interface. An example of laser data interface is provided in Table 1. In addition, OpenNI is utilized for RGB-D data acquisition and PCL 27 is employed to implement the HOG-based human detector for assisting the DLM-based self-localization algorithm.

Turtlebot platform.

Parameters of LRF.

LRF: laser range-finder.

Figure 8 shows the diagram of the system, in which the implementation of the proposed method for robot localization in dynamic environment is depicted in detail.

Diagram of the system implementation.

A testing environment 12.2 m long and 7.5 m wide is shown in Figure 9. The static environmental grid map was established by applying an elementary Gmapping 28 algorithm. The result of global localization process with no people walking around the robot is given in Figure 10, in which the robot was controlled to navigate through the environment along a predefined path of about 10 m ahead. Figure 10 indicates the convergence of the robot’s positional covariance using the proposed method. The number of particles was set at 3000 in the experiments.

Environment model and robot trajectory.

Convergence of global localization error.

In order to compare the performance of the proposed method with a classic Monte Carlo method, the robot was controlled to follow predefined paths through the environment across a spatial range, in both static and dynamic situations. Localization accuracy was assessed by the mean square deviation of the robot’s pose in the X–Y space.

Structural environment scenario

In the first structural environment scenario, the robot navigated with no moving objects in its proximity. Figure 11(a) and (b) shows the mean square deviation of the robot’s estimated pose in X and Y space, respectively.

Mean square errors in static environments.

It’s obvious that the two methods achieve almost the same amount of localization error during navigation, in which most errors in X and Y space are between 3 cm and 5 cm. That’s because in static environment the proposed algorithm achieves the same effect with classic MCL algorithms. Note that between 200th to 300th cycles, the classic MCL algorithm outputs an increasing localization error in Y space because the robot was moving in a cluttering area along the Y direction in the testing environment, as is depicted by the “region A” in Figure 9. In contrast, our proposed method achieves less fluctuation in Y space because the estimated DLM was low in this area and thus the effect of observation update in the particle filter scheme is lowered to inhibit the variance in the localization error.

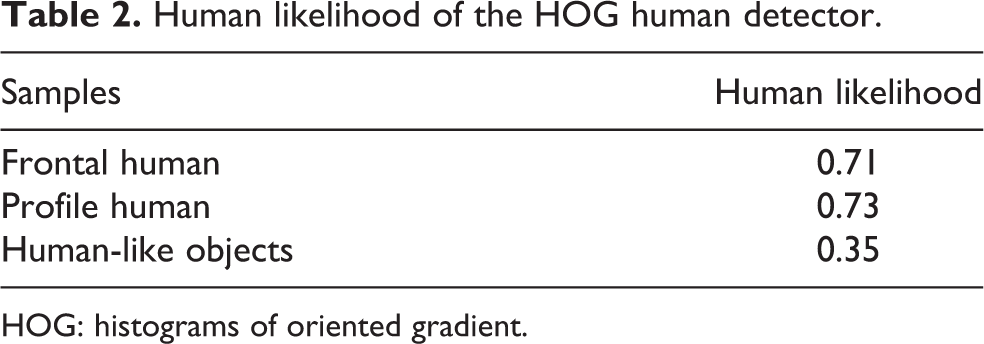

Unstructured environment scenario

The next scenario was the robot’s self-localization with human participants walking around. The performance of the RGB-D-based human detector in our application is firstly tested with a comparison of laser-based detection methods. In the study by Bellotto and Hu, 12 the accuracy of laser-based leg detection was evaluated by experiments, which controlled a robot to follow a person at a relatively low and nearly constant speed, in which the person’s leg patterns were mostly frontal and profile. However, in this article we address the problem of natural human–robot coexistence in navigation rather than just following. Hence, we tested the performance of the RGB-D-based people detector in different types of sequence performed, as indicated in Table 2.

Human likelihood of the HOG human detector.

HOG: histograms of oriented gradient.

According to our trial tests, the minimum confidence and maximum confidence for people detection are taken as −1.5 and 2.0, respectively. Since the HOG human detector reports an online person confidence value in person_confidence, a person’s likelihood is defined in our system as

Trial tests performed with humans and other human-like objects (e.g. humanoid robots with similar head–shoulder shape) suggest that the two can be distinguished by a threshold of human likelihood that takes the value 0.7.

The comparison in Table 3 indicates that the RGB-D-based human detector achieves low false positive rate and false negative rate, and thus is reliable and indispensable for implementing our method.

Human detection results from moving positions.

LRF: laser range-finder; FP: false positive; FN: false negative.

Figure 12 shows a snapshot of the testing scenario in which three people were in the robot’s proximity. Figure 13 shows the laser scan data that corresponds to Figure 12, in which the point clouds that belong to the three person segments detected by the RGB-D sensor are projected onto the laser frame according to the coordinate transform relation.

Unstructured scenarios.

Corresponding laser reading with projected RGB-D detection of people.

Figure 14 demonstrates the comparison between classic MCL methods and the proposed method in dynamic scenes. The mean squared errors of both methods are more or less the same in the first 100 iterations, because no people were close to the robot at that time. From the 100th iteration to the 250th iteration, moving people appeared in the scene and thus the localization mean square error of the classic MCL method increased to as high as 100 mm, which reflects the fact that the particle distribution diverged with the robot moving forward. In contrast, the localization mean square error of the proposed method remained below 50 mm and its fluctuation was less significant. The result suggests that the proposed method improves the accuracy and reliability of robot localization in dynamic scenes, because the method can estimate the part of unreliable laser readings caused by unexpected moving objects and thus modify the sample distribution using less observation information. Thus, the proposed method can better accommodate unexpected moving people. In addition, during the 250th to 350th iteration, the localization mean square error produced by the two methods both decreased, because moving people gradually moved out of the robot’s field of view.

Mean square errors in dynamic environments.

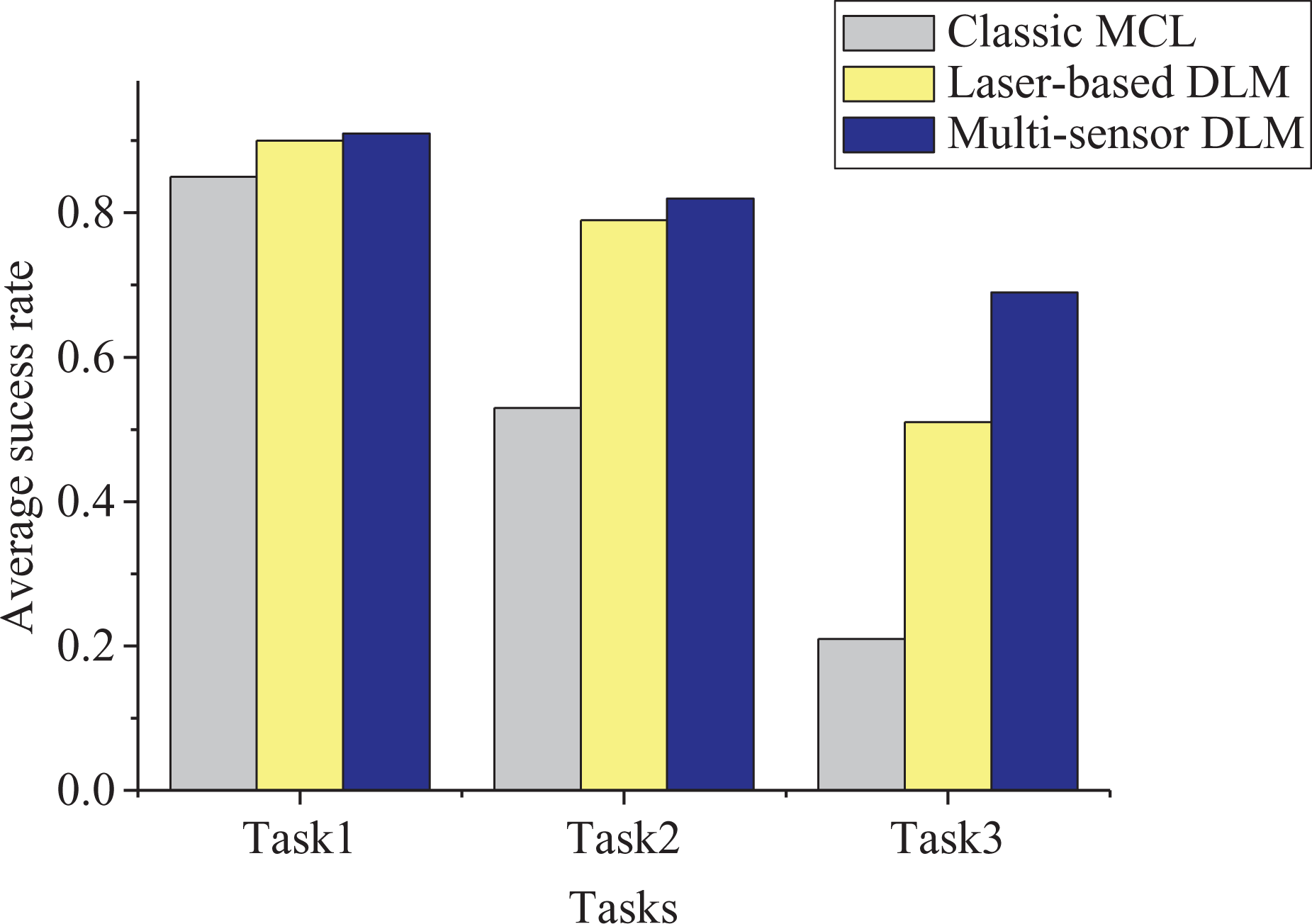

In addition, reliability is usually more of a priority than accuracy in many service robot applications. A 24-h long-term trial study involving eight participants was conducted in a large-scale office environment. The participants were asked to perform random walking, running, and back-and-forth movements during the robot’s operation. The robot was assigned with daily object delivery tasks and it was controlled using the proposed navigation method with a regular obstacle avoidance function to autonomously navigate in the human environment. The numbers of walking people involved in task 1, task 2, and task 3 are 2, 4 and 8, respectively. A total number of 180 trials were recorded during the 24-h test and the results of success per action were analyzed. In the navigation trial, following situations were counted as failure: Robot localization failure: once the robot failed to localize itself because of the divergence of particles, a localization failure event is recorded. In this case, the global localization process will be re-initialized. Inefficient navigation: the robot spent more than two times the time to reach the goal place, compared with the situation when navigating with no human presence; this usually happened when the robot lost track of its position and thus the time-consuming global localization process is conducted frequently.

The success rates result is given in Figure 15, in which the performances of three methods are compared, including the classic MCL method, the DLM estimation method using a single sensor only and the proposed multisensor DLM estimation method. The DLM estimation method using a single sensor was implemented by utilizing a leg detector with predefined leg patterns. The reliability of laser-based DLM method is moderate as people walked or run randomly, but it is not satisfactory because the success rate will fall below 25% as the number of people rises, since discriminating legs from many people with partial occlusions are often problematic. The statistical result indicates the superior performance of the proposed method for ensuring higher possibility successful navigation. It’s clearly evident that the proposed method is more reliable and efficient in empowering robots to perform daily navigational tasks in human environments.

Average success rate.

Conclusion

In unstructured environment that human and robots coexist, unexpected moving people may violate the convergence of robot pose samples in the classic probabilistic localization framework, or even cause failure localization in cluttered situations. In this article, a real-time observation localizability estimation method for robot localization in unstructured environments is proposed that entails the use of noisy and multi-cue information from low-cost sensors. The contribution of the approach is a strategy that combines inaccurate LRF data and RGB-D data for computing of the DLM. By aligning two sensor frames, the unreliable part of the laser readings that hit unexpected moving people is fast extracted according to the output of a RGB-D-based human detector, so that the impact of unexpected moving people on observations can be explicitly and accurately estimated. SLM is obtained from a priori probability grid map. A factor of dynamic obstacles influence is computed online, and then is combined with SLM to implement dynamic estimation of the multisensor observation localizability. The method avoids the need for leg detection in inaccurate laser data, which is proved unreliable in cluttered environments. The method is also easy for implementation and is therefore highly desirable to ensure robustness and real-time performance for long-term operation in populated environments.

Footnotes

Author’s note

Authors are also affiliated to Key Lab of Measurement and Control of Complex Systems of Engineering, Ministry of Education, China.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: Natural Science Foundation of China (Grant 61573101 and 61573100), the research project of Electric Power Science Research Institute of Jiangsu Electric Power Company (Grand 5455HT160027) and the Fundamental Research Funds for the Central Universities(No. 2242013K30004).