Abstract

Humanoid robots are increasingly expected to act in the real world and to complete high-level tasks in a human-like, intelligent manner. This is difficult because the real world is complicated and full of variation. For a robot acting in the real world, precisely perceiving environmental changes is therefore an essential prerequisite. Unlike human beings, a humanoid robot usually has far fewer sensors with which to gather information from the real world, which makes environmental perception even more challenging. Although the problem can be tackled by establishing direct sensory mappings or by adopting probabilistic filtering methods, the nonlinearity and uncertainty caused by both the complexity of the environment and the high number of degrees of freedom of the robot make the modeling difficult. In this study, an alternative learning approach based on the Gaussian process regression framework is proposed and discussed to address this modeling problem. To reduce the influence of limited sensing, information from multiple sensors is also fused. To evaluate its effectiveness, the proposed approach is applied to a humanoid equipped only with a three-axis gyroscope and a three-axis accelerometer on two representative environment-changing tasks, namely, suffering unknown external pushes and suddenly encountering sloped terrain. Experimental results show that the proposed Gaussian process regression-based approach is effective in coping with the nonlinearity and uncertainty of the humanoid environmental perception problem. Further, a humanoid balancing controller is developed, which takes the output of the Gaussian process regression-based environmental perception as the trigger for activating the corresponding balancing strategy. Both simulated and hardware experiments consistently show that the approach is valuable and provides a good basis for a successful balancing controller for humanoids.

Introduction

Environmental perception is one of the most elementary functionalities of intelligent robots. Like humans or animals, an intelligent robot acting in the real world must follow some online control mechanism that is driven by feedback information. For example, a walking humanoid robot must deal promptly with any large unexpected environmental change, such as suddenly appearing uneven terrain or unknown external disturbances, in order to maintain its current state. The robot continuously gathers environmental information with its onboard sensors and, from this primitive sensory information, perceives how the environment is changing. It then responds to the encountered situation by evaluating its past behavior and determining its next action. The response may be either to keep performing the task at hand or to start a new strategy that copes with the external event. To succeed, accurate environmental perception is essential.

Although many attempts involving different kinds of sensors and several approaches have been investigated in past years, environmental perception is still a challenging task. First, although robots are increasingly expected to have motion skills similar to those of humans or animals, their sensory facilities are still far more limited. For example, whereas humans gather a large amount of environmental information through the nervous system in every second of their lives, the sensors of common humanoids are only inertial measurement units (IMUs) located near the center of mass (COM), force sensors on the feet, and cameras on the head. Such limited sensing can hardly provide the desired measurements of many environmental changes. The major problem of limited sensing is the lack of direct measurements of the environment. As a result, the robot has to derive the environmental information indirectly from limited observations. Generally speaking, the fewer the sensory functionalities, the harder these derivations become. Second, for complex robot systems such as humanoids, the high number of degrees of freedom (DOF) may lead to stronger nonlinearity and uncertainty. These effects make the sensory signals noisier and less predictable, which further increases the difficulty of determining the relationship between the observed sensory information and the real environmental changes.

To deal with these environmental perception problems, mechanisms that characterize the relationship between the primitive sensory signals and the state variables of the environment are necessary. One possible approach is to build a mapping from the sensory signals to the state variables so as to directly reveal the changes of the environment. 1 Another approach is to introduce probabilistic filtering techniques, such as the Kalman filter, which build the transition function from state variables to sensory signals and establish the system dynamic model. 2,3 Both approaches are appropriate solutions for efficient online environmental perception. However, for robot systems with a large number of DOF, such as humanoid robots, the actual dynamics are heavily nonlinear and high dimensional. Because the sensory mappings or system dynamics can hardly be described by linear transformations or simple differential equations, developing the corresponding models may be quite difficult. To tackle such nonlinearity and uncertainty, methods within the probabilistic learning framework are a suitable choice, since they learn the above models from data, and several past works have addressed this topic. 4–6

In this article, we focus on how Gaussian process (GP) regression can be used to settle the above modeling problems for humanoid environmental perception by integrating inertial sensory information. The inertial sensors, which are usually integrated into an IMU placed near the COM of the humanoid, provide measurements of body tilt angles, angular velocities, and accelerations. Although each of them reveals only indirect, noisy, primitive information about environmental changes, jointly utilizing multiple sources of sensory information may significantly enhance their representational power. GP regression is a general probabilistic approach commonly used for representing high-dimensional nonlinear functions 7 and has been successfully employed for many tasks in robotics. 6,8,9 Considering its strong representational power in describing nonlinear functions and its ability to quantify the uncertainty of its estimates, GP regression is an appropriate choice for this research. Moreover, the tasks specified for studying robot environmental perception in this article are the detection of unknown external pushes and the detection of sloped terrain while walking, which have been solved in past works by designing dynamical models or by utilizing specific sensory facilities. 2,10 With GP regression, unnecessary assumptions, such as simplifications or constraints introduced into the perceptual models, can be avoided. In the work by Plagemann et al., 11 GP regression was successfully introduced for estimating sloped terrain for quadrupedal walking with laser sensing, and its usefulness in learning such complicated sensory mappings was demonstrated. Sharing a similar idea, in our work, GP regression is further extended to humanoid robots with inertial sensors. 12 Taking into account the integration of multiple sources of sensory information, a GP regression-based humanoid environmental perception approach is proposed. On the abovementioned tasks under two typical environment-changing situations, that is, unknown external pushing and suddenly encountering sloped terrain, the proposed approach is shown to be a promising candidate for coping with nonlinearity and uncertainty in humanoid environmental perception.

Detecting environmental changes is always the first step for a robot to carry out its assigned tasks robustly or to take an emergency response when the change is unexpected. In this research, humanoid balancing control is further studied, involving several bio-inspired mechanisms shown to be effective by previous researchers. 13–15 With the output of the GP regression-based environmental perception, an effective humanoid balancing controller is achieved.

The rest of the article is organized as follows. Section “Perception tasks under two typical environment changing cases” briefly describes the inertial sensors and analyzes the pushing force and sloped terrain detection tasks. Section “Environmental perception with GP regression” discusses the learning and online estimation methods based on GP regression. Section “Experimental results” presents the experimental results. Concluding remarks are given in the “Conclusions” section.

Perception tasks under two typical environment changing cases

Since environmental perception is a broad topic, the task specification is introduced first. This includes how the robot is configured, what kinds of sensors are used, and what kinds of situations are encountered.

Robot configuration

This subsection describes the sensors the robot is assumed to be equipped with and how the motion of the robot is controlled.

Sensors equipped on the robot

The sensors involved in this research are a three-axis gyroscope and a three-axis accelerometer, integrated into the IMU mounted near the COM of the robot. This setting is common for humanoids. The gyroscope and accelerometer measure three-axis angular velocities and accelerations, respectively. With a fixed sampling frequency, the body tilt angles of the robot can be calculated by integrating the angular velocities based on the chain multiplication rule of the rotation matrix. 16
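The integration step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names, the choice of the y-axis as the pitch (sagittal) axis, and the ZYX Euler convention used to read off the tilt angle are all assumptions made for the example.

```python
import math

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

def rotation_from_rate(omega, dt):
    """Rotation matrix for turning at angular velocity omega (rad/s) during dt
    seconds, built with Rodrigues' rotation formula."""
    wx, wy, wz = omega
    rate = math.sqrt(wx * wx + wy * wy + wz * wz)
    angle = rate * dt
    if angle < 1e-12:  # no measurable rotation in this sample
        return [row[:] for row in IDENTITY]
    kx, ky, kz = wx / rate, wy / rate, wz / rate  # unit rotation axis
    c, s = math.cos(angle), math.sin(angle)
    v = 1.0 - c
    return [
        [c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s],
        [ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s],
        [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v],
    ]

def matmul3(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def integrate_gyro(samples, dt):
    """Chain-multiply the per-sample rotations to get the body orientation."""
    R = [row[:] for row in IDENTITY]
    for omega in samples:
        R = matmul3(R, rotation_from_rate(omega, dt))
    return R

def pitch_angle(R):
    """Pitch (tilt about the y-axis), assuming a ZYX Euler convention."""
    return math.atan2(-R[2][0], math.sqrt(R[2][1] ** 2 + R[2][2] ** 2))

# Usage: a constant pitch rate of 0.1 rad/s sampled at 100 Hz for one second.
tilt = pitch_angle(integrate_gyro([(0.0, 0.1, 0.0)] * 100, 0.01))
```

In practice, gyroscope bias makes the integrated angle drift over time, so a real system would typically correct it with the accelerometer; that correction is outside the scope of this sketch.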

As suggested in the study by Luo et al., 12 for simplicity, it is assumed that environmental changes take place only in the forward direction of the sagittal plane, that is, the direction a standing robot faces, so only the sensory information corresponding to this direction is used. Thus, at each time step, the sensory information received by the robot forms a three-element vector

$$ z = [a, q, \dot{q}]^{\mathsf{T}} \tag{1} $$

where a denotes the acceleration, and q and $\dot{q}$ denote the body tilt angle and the angular velocity, respectively.

Humanoid robot motion controller

For a bipedal humanoid robot, the most basic and important skill is walking, which is also the motion behavior selected for studying environmental perception in this research. To establish a normal walking motion controller for the humanoid robot, a pendulum-based model is adopted in this article, as in the study by Yi et al., 17 and the relative displacement of the COM can be calculated according to the following equation

where p is the planned zero moment point (ZMP) trajectory, x is the displacement of the COM, and b is a parameter. Since no force sensors are assumed for detecting ground contact forces, the ZMP trajectories and the foot positions are prescheduled rather than controlled. The joint angles are then obtained through inverse kinematics.

First task: Unknown external pushing

This task is the detection and rejection of pushing forces, which are the subjects of push recovery, one of the fundamental topics in humanoid research. 14,15,17

The case in which a humanoid suffers an external pushing force is illustrated in the upper subfigures of Figure 1. To react efficiently to unpredicted pushing forces, it is essential to estimate them accurately as soon as they act on the robot. In dynamical model-based approaches, the forces are often modeled as horizontal accelerations acting at the COM, so that, in theory, they can be measured independently by accelerometers. In practice, however, this is usually unrealistic. First, actual accelerometers are seldom ideal. Their sensitivity leads to strong sensory noise and hence inaccurate measurements. Second, simply modeling the forces as linear accelerations is incomplete. Since the robot usually falls down after being pushed, a torque may arise about the foot in contact with the ground because gravity is not compensated in time, which makes the robot rotate around its foot. Thus, measuring only with accelerometers is insufficient. Considering that an individual sensor usually has limited performance because of complicated disturbances and large sensory noise, the idea of estimating pushing forces from multiple sources of sensory information is investigated, as in the study by Luo et al., 12 by introducing a mapping or transformation function, which is usually nonlinear, that is

$$ f = \varphi(z) \tag{3} $$

where f is the estimate of the pushing force and φ is the mapping function. In this research, for simplicity, only the case in which the pushing forces are applied instantaneously to the COM from the back of a standing robot is considered. The objective is to detect the strength of the forces as early as possible.

Illustration of two environmental perception tasks. The upper left subfigure illustrates the robot being pushed by an instantaneous force. The upper right subfigure illustrates the robot falling down as the result of pushing. The lower left subfigure illustrates the robot walking normally. The lower right subfigure illustrates the robot meeting a sloped terrain.

Second task: Suddenly meeting sloped terrains while walking

Without sensors such as infrared sensors, laser scanners, or cameras, suddenly meeting sloped terrain is a challenging issue for bipedal humanoid robots that have only IMU sensory information. Meanwhile, meeting sloped terrain is one of the most representative cases of environmental change for a humanoid walking on a flat surface, as illustrated by the lower subfigures of Figure 1. Ideally, the angle of the sloped terrain that the humanoid encounters can be calculated from both body tilt angle changes and proprioceptive measurements by employing simplified models and assumptions. 10

However, difficulties remain in giving precise estimates. As in the task of detecting pushing forces, building simple models that reveal the relationships between individual sensory information and terrain slope angles is not easy in practice. As discussed in the study by Luo et al., 12 beyond the problems stated in the first task, another important problem that needs to be considered, even ignoring the effects of sensory noise, is how to reasonably handle the touching moment, as illustrated in Figure 2. Without visual feedback, the robot can only passively “run into” a sloped terrain. The touching moment issue states that, because the moments of touching differ, the evolution of the sensory information after the first touch also differs. This shows that detecting environmental changes when meeting sloped terrain is not only a matter of modeling sensory information but also closely involves the current internal walking state of the robot. That is to say, the following simple mapping from the sensory observations z to the angle of the sloped terrain θ

$$ \theta = \psi(z) \tag{4} $$

is no longer sufficient, since the sensory measurements z alone cannot give unambiguous estimates of the terrain slope angle without information about the robot's current state at the moment of touching the sloped terrain. As in the work by Luo et al., 12 a discrete phase variable tph is thus introduced to represent the current motion status of the robot, which is expected to synchronize with the evolution of the walking process. As a consequence, in the task of detecting sloped terrain while the robot is walking, the sensory mapping ψ becomes well defined when the phase variable tph is taken into account, as formalized by the following modified version of equation (4)

$$ \theta = \psi(z, t_{ph}) \tag{5} $$

Illustration of different moments for touching the sloped terrain. The left subfigure illustrates the robot touching the terrain near the end of a left step. The middle subfigure illustrates the robot touching the terrain earlier than in the left subfigure during a left step. The right subfigure illustrates the robot touching the terrain during a right step. This phenomenon reveals that the slope of the terrain cannot be identified from the inertial sensory feedback alone. The effect of the current robot walking state, which can be determined by the phase variable tph, should also be involved.

Note that the choice of walking control strategy is not restricted to the model characterized by equation (2). In fact, any controller whose walking control state can be identified by a small set of discrete variables is appropriate for building such a sensory mapping.
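As one way to picture a discrete phase variable, the sketch below maps elapsed walking time to an integer phase index. It is only an illustration under stated assumptions: the function name `phase_index`, the idea of deriving the phase from a periodic walking clock, and the parameter `cycle_period` are hypothetical and are not taken from the walking controller used in the paper.

```python
def phase_index(t, cycle_period, num_phases):
    """Map elapsed walking time t (seconds) to a discrete phase in
    {0, ..., num_phases - 1}, assuming the gait repeats every cycle_period
    seconds (hypothetical clock-based definition of tph)."""
    if cycle_period <= 0 or num_phases <= 0:
        raise ValueError("cycle_period and num_phases must be positive")
    fraction = (t % cycle_period) / cycle_period  # position within the cycle, in [0, 1)
    return min(int(fraction * num_phases), num_phases - 1)

# Usage: with 75 phase values, the midpoint of a 0.5 s cycle falls in phase 37.
current_phase = phase_index(0.25, 0.5, 75)
```

Any controller that exposes such an index, whether derived from a clock or from its internal state machine, would allow the sensory mapping to be conditioned on the walking state.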

Environmental perception with GP regression

In this section, the basic idea of the GP regression-based environmental perception approach is first described, and then the principle of GP regression is discussed. Finally, the procedure for perceiving environmental changes with GP regression is outlined.

The basic idea of GP regression-based environmental perception

The basic objective of robot environmental perception is to give accurate and prompt estimates of environmental changes. Generally, there are two kinds of candidate approaches. One is based on probabilistic filtering algorithms, such as the Kalman filter, which can provide efficient and accurate performance in practice. To deal with the modeling problem described in section “Introduction,” GP regression can be used to develop the sensory and dynamical models required for filtering. 6 However, when this kind of approach is employed to estimate environmental state variables, it faces nontrivial obstacles. In filtering, it is more common to model environmental changes as action variables, which are assumed to be known by the system. If they are instead modeled as state variables to be estimated, the complex environment itself has to be analyzed and modeled beforehand, which is difficult and costly.

The other candidate approach is based on supervised learning, which directly learns the mappings from sensory observations to environmental change variables. With these learned mappings, online estimates of environmental changes can then be obtained. Since this approach avoids the challenge of modeling the environment, it is followed in this research. That is, within the proposed GP regression-based environmental perception approach, the direct sensory mappings are taken as the targets to be learned. Specifically, the sensory mappings in equations (3) and (5) are first learned with GP regression, and the learned models are then used to estimate the environmental changes online.

The principle of GP regression

GP regression applies the GP, an infinite set of random variables, to regression for modeling a target process, and it can also be regarded as a general kernel-based supervised learning framework. As a nonparametric model, the only constraint within a GP is that any finite subset of its variables is jointly Gaussian, that is, a GP makes only minor assumptions about the function to be learned. Moreover, as a kernel method, a GP is suitable for modeling highly nonlinear functions. Thus, it is a desirable model for learning the sensory mappings considered in this research.

In the following parts, as in the work by Luo et al., 12 GP regression is first formalized, and then the model learning is discussed.

GP regression

Given an observation set,

where X is the n by l input data matrix with l being the input dimension, and

holds, where y* is the output of the test sample. Thus, the following conditional distribution is also a normal distribution

Therefore, with this GP regression model, the output of a test sample can be estimated according to equations (9) and (10). Equation (9), the mean function, gives the estimated output value, while equation (10), the variance function, gives the uncertainty of that estimate.
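For reference, the textbook form of the GP predictive mean and variance is given below. This is the standard result for GP regression with Gaussian noise and is stated here under the assumption that equations (9) and (10) follow it; the symbols $K$, $k_*$, and $x_*$ are notation introduced for this note. Here $K$ is the n by n matrix of kernel values between training inputs (with the noise term on its diagonal), $k_*$ is the vector of kernel values between the test input $x_*$ and the training inputs, and $y$ is the vector of training outputs.

$$
\begin{aligned}
\bar{y}_* &= k_*^{\mathsf{T}} K^{-1} y, \\
\operatorname{var}(y_*) &= k(x_*, x_*) - k_*^{\mathsf{T}} K^{-1} k_*.
\end{aligned}
$$

The mean is a weighted combination of the training outputs, and the variance shrinks toward zero when the test input is close to training inputs and returns to the prior variance when it is far from all of them.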

As mentioned earlier, GP regression is a kernel-based method with kernel function k. A commonly used kernel function, the squared exponential, is given by

$$ k(x_p, x_q) = \sigma_f^2 \exp\left( -\frac{1}{2 l^2} \lVert x_p - x_q \rVert^2 \right) + \sigma_n^2 \delta_{pq} \tag{11} $$

where δpq is the Kronecker delta, and σf, l, and σn are hyperparameters that can usually be learned from the training data through maximum likelihood estimation (MLE).
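To make the kernel and the resulting estimate concrete, the following sketch implements a squared exponential kernel and a GP prediction using only the Python standard library. It assumes the standard GP predictive equations and a single shared length scale; the function names and the default hyperparameter values are illustrative choices, not values from the paper, and the returned variance is that of the noise-free function value.

```python
import math

def se_kernel(xp, xq, sigma_f, length):
    """Squared exponential kernel without the noise term."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(xp, xq))
    return sigma_f ** 2 * math.exp(-0.5 * sq_dist / length ** 2)

def cholesky(A):
    """Lower-triangular L with A = L L^T for a symmetric positive definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_upper_t(L, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_predict(X, y, x_star, sigma_f=1.0, length=1.0, sigma_n=0.1):
    """Predictive mean and (noise-free) variance at the test input x_star."""
    n = len(X)
    # Gram matrix with the noise variance added on the diagonal (Kronecker delta term).
    K = [[se_kernel(X[i], X[j], sigma_f, length) + (sigma_n ** 2 if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, y))          # alpha = K^{-1} y
    k_star = [se_kernel(x, x_star, sigma_f, length) for x in X]
    mean = sum(k * a for k, a in zip(k_star, alpha))     # k_*^T K^{-1} y
    v = solve_lower(L, k_star)
    var = se_kernel(x_star, x_star, sigma_f, length) - sum(t * t for t in v)
    return mean, var

# Usage: three noisy-free samples of a sine; query near and far from the data.
X = [[0.0], [0.5], [1.0]]
y = [math.sin(x[0]) for x in X]
mean_near, var_near = gp_predict(X, y, [0.5])
mean_far, var_far = gp_predict(X, y, [5.0])
```

The Cholesky factorization is used instead of an explicit matrix inverse because it is the numerically stable way to solve the linear systems involving the kernel matrix.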

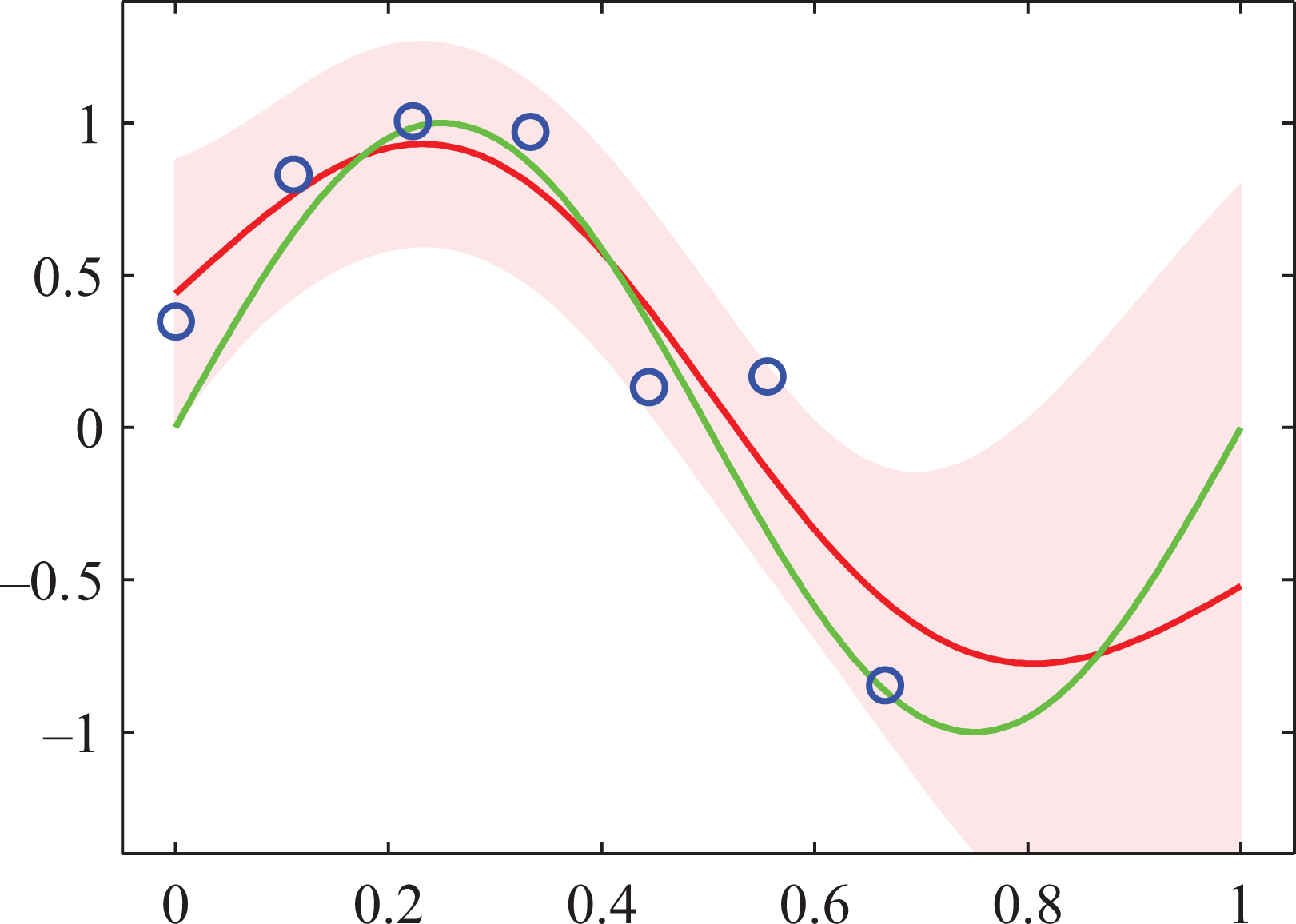

It is worth mentioning that the uncertainty of a GP regression estimate is explicitly expressed by the variance function (10), which is one of the major advantages of GP learning and can be used to guide the learning process. For example, the uncertainty for a new test input is affected by the number of training samples near it, and it decreases if sufficient training samples are given. This property is illustrated in Figure 3. On the other hand, as can be observed in equations (9) and (10), the mean and variance calculations of GP regression involve the inversion of an n by n matrix, which means

An illustration of GP regression. 18 The estimated function (red curve) is based on points (blue circles) sampled from a sinusoid (green curve) with Gaussian noise. The uncertainty (pink region) increases where training data are absent. GP: Gaussian process.

GP model learning

From the above description, the GP model learning process involves the MLE procedure to optimize the hyperparameters of the kernel function. That is to say, the training data set for GP regression is used for both model learning and output prediction. For the two environmental perception tasks selected in this research, the model learning process is detailed below, as in the study by Luo et al. 12

For the first task (unknown external pushing), n training sample pairs should be first collected and noted as

where z represents the sensory inputs as formalized in equation (1), and f denotes the corresponding labeled forces taken as the outputs. With the whole training set, the hyperparameters of the GP model listed in equation (11) are learned first. Then the estimate of the function given by equation (3) can be obtained according to equation (9).
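The hyperparameter learning step can be illustrated by evaluating the log marginal likelihood of the training data and picking the hyperparameters that maximize it. The sketch below is a simplified stand-in for MLE: it searches only over a list of candidate length scales instead of running a gradient-based optimizer, and the function names and default values are assumptions for the example.

```python
import math

def gram(X, sigma_f, length, sigma_n):
    """Squared exponential Gram matrix with noise variance on the diagonal."""
    n = len(X)
    K = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            sq_dist = sum((a - b) ** 2 for a, b in zip(X[i], X[j]))
            K[i][j] = sigma_f ** 2 * math.exp(-0.5 * sq_dist / length ** 2)
        K[i][i] += sigma_n ** 2
    return K

def cholesky(A):
    """Lower-triangular L with A = L L^T."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def chol_solve(L, b):
    """Solve (L L^T) x = b by forward then back substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def log_marginal_likelihood(X, y, sigma_f, length, sigma_n):
    """log p(y | X) = -0.5 y^T K^{-1} y - 0.5 log|K| - (n/2) log(2 pi)."""
    L = cholesky(gram(X, sigma_f, length, sigma_n))
    alpha = chol_solve(L, y)
    data_fit = -0.5 * sum(yi * ai for yi, ai in zip(y, alpha))
    complexity = -sum(math.log(L[i][i]) for i in range(len(y)))  # equals -0.5 log|K|
    return data_fit + complexity - 0.5 * len(y) * math.log(2.0 * math.pi)

def fit_length_scale(X, y, candidates, sigma_f=1.0, sigma_n=0.1):
    """Pick the candidate length scale with the highest marginal likelihood."""
    return max(candidates,
               key=lambda l: log_marginal_likelihood(X, y, sigma_f, l, sigma_n))

# Usage: two nearby inputs with equal outputs favor the longer length scale.
best = fit_length_scale([[0.0], [0.1]], [1.0, 1.0], [0.01, 1.0])
```

A toolbox such as GPML optimizes all hyperparameters jointly with gradients of this same objective; the grid search here only keeps the example short.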

However, for the second task (meeting sloped terrain while walking), the above model learning steps are no longer adequate. As discussed at the end of section “Perception tasks under two typical environment changing cases,” the mapping function to be estimated depends not only on the sensory inputs but also on the phase variable tph, as indicated by equation (5). To deal with this problem, a simple strategy is introduced that segments the whole training data set into bins according to tph. That is, as in the pushing force detection task, n training sample pairs are first collected and denoted as

Then, the whole training data set is segmented into m bins, with m being the number of possible values of the phase tph

where ni is the size of i th data bin and

Online environmental perception with GP regression

Based on the learned GP regression models, noted as GP1 and

For the sloped terrain detection task, the estimate for a new test input is given by the model with the same phase variable tph. Therefore, before evaluating equations (9) and (10), the phase variable tph representing the current walking status of the robot for the new test input should first be identified.

In summary, the overall procedure for online estimation within the duration T is presented in Algorithm 1.
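Since the algorithm listing is not reproduced in the text, the following is only a hedged sketch of what such an online procedure could look like for the sloped terrain task. The names `bin_by_phase` and `estimate_online`, the tuple layout of the samples, and the representation of each learned model as a callable returning a (mean, variance) pair are assumptions made for illustration.

```python
from collections import defaultdict

def bin_by_phase(samples):
    """Group training samples (z, t_ph, theta) into one bin per phase value."""
    bins = defaultdict(list)
    for z, t_ph, theta in samples:
        bins[t_ph].append((z, theta))
    return dict(bins)

def estimate_online(sensor_stream, phase_of, models):
    """For each time step, identify the current phase, select the model learned
    for that phase, and record its mean and variance estimates."""
    estimates = []
    for t, z in enumerate(sensor_stream):
        t_ph = phase_of(t)          # identify the current walking phase first
        predict = models[t_ph]      # model learned from the matching data bin
        mean, var = predict(z)
        estimates.append((t, t_ph, mean, var))
    return estimates

# Usage with stand-in models; real models would be per-phase GP predictors.
training = [((0.1, 0.0, 0.2), 0, 5.0), ((0.3, 0.1, 0.1), 1, 5.0),
            ((0.2, 0.0, 0.3), 0, 10.0)]
bins = bin_by_phase(training)
models = {0: lambda z: (sum(z), 0.1), 1: lambda z: (0.0, 0.2)}
result = estimate_online([(1.0, 2.0, 3.0), (4.0, 5.0, 6.0)], lambda t: t % 2, models)
```

For the pushing force task, the same loop applies with a single model and no phase lookup.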

Experimental results

Experiments to evaluate the GP regression-based environmental perception approach are performed on the simulation platform Webots 6.0, developed by Cyberbotics Ltd., together with experiments on the performance of the presented humanoid robot balancing controller.

Experimental setting

To evaluate the contribution of this research, the DARwIn-OP humanoid robot model 21 is employed within the Webots simulation environment. 20 The robot model is a biped with a head, a torso, two arms, and two legs, and has a total of 20 DOFs: two in the head, three in each arm, and six in each leg. It is also equipped with a three-axis gyroscope and a three-axis accelerometer that are integrated into the IMU mounted near its COM. Through the built-in functionality of Webots, the gyroscope and accelerometer readings are obtained and then arranged according to equation (1).

For the first task (unknown external pushing), the pushing force ranging from

For the second task (meeting sloped terrain while walking), a sloped terrain is set in front of the robot, and the robot then starts walking from a fixed distance and keeps walking straight toward the terrain. In developing the training samples, three sloped terrains with three angles of

In this research, the Gaussian processes for machine learning (GPML) Matlab toolbox developed by C. E. Rasmussen and H. Nickisch 22 is adopted for GP model learning and estimation. The squared exponential given by equation (11) is employed as the kernel function for both tasks. When using the GPML package, the mean function and covariance function of a GP as well as a likelihood function need to be specified first. Here, the corresponding hyperparameters are initialized as 0 for the mean and 0.1 for the variance, and the likelihood function is specified to be Gaussian. In the first task, a single GP model is learned with the whole training data set. In the second task, a total of 75 GP models are learned, one for each of the 75 values of tph. With Algorithm 1, the performance of the proposed environmental perception approach is evaluated on the test samples of the two tasks. In the experiments, to tune the relative weights of the input features, the angular velocity values are scaled by a discount factor of 0.02.
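The feature scaling mentioned above can be pictured with the short sketch below. The function name and the ordering of the inputs (acceleration, tilt angle, angular velocity) are assumptions for illustration; only the factor 0.02 comes from the text.

```python
def scale_features(a, q, q_dot, velocity_scale=0.02):
    """Build the input vector with the angular velocity down-weighted.

    With a single shared length scale in the squared exponential kernel,
    a feature with a large numeric range would dominate the distance between
    inputs, so the angular velocity is scaled before it enters the kernel.
    """
    return (a, q, q_dot * velocity_scale)

# Usage: an angular velocity reading of 50 contributes 1.0 after scaling.
z = scale_features(1.0, 0.2, 50.0)
```

An alternative with the same effect would be to learn a separate length scale per input dimension, at the cost of more hyperparameters to optimize.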

With the GP regression-based environmental perception, a humanoid balancing controller is developed by employing bio-inspired balancing strategies according to the studies by Stephens and Luo et al. 15,23 For the first task, once an external pushing force is applied to the robot, the balancing controller takes a sequence of actions to recover the robot's original status, where the pushing force is estimated by the learned GP model. For the second task, once a new slope angle is estimated, the balancing controller responds by shifting to a new corresponding walking pattern. To evaluate the performance of the presented balancing controller, further experiments are performed. For the first task, two additional pushing forces (31 N and 37 N) are applied to the standing robot. For the second task, two unseen slope angles (8° and 13°) are involved, and the corresponding sloped terrain is placed in the path of the walking robot. It is worth mentioning that, without the balancing controller, the robot falls down when suffering either of these two pushing forces or when meeting the sloped terrain with an angle of 13°.

Results and discussions

Figures 4 and 5 illustrate the experimental results for humanoid environmental perception under the two tasks. To make this clearer, the sensory inputs are detailed using the top-left subfigure of Figure 4 as an example. This subfigure illustrates the sensory inputs for a 27 N pushing force. The tilt angle (in blue) first decreases, right from the moment the push happens, and then increases, which means that after the robot is pushed with the given force of 27 N, its body first bends forward and then leans back, since in this case the robot does not fall down. During the whole process, the corresponding angular velocity (in green) also first decreases and then increases slowly (note that the velocity values are scaled by a discount factor of 0.02, as mentioned above), and right at the point where the tilt angle reaches its minimum, the velocity changes its sign from negative to positive. It is also worth mentioning that the robot always has a small negative bias in tilt angle (meaning a slight forward bend), which better suits the robot's physical configuration for keeping balance.

The experimental results for pushing force detection. Two upper subfigures illustrate the sensory inputs of test data for two pushing forces (27 N and 32 N). Curves in blue, green and red denote the body tilt angles q, the angular velocities

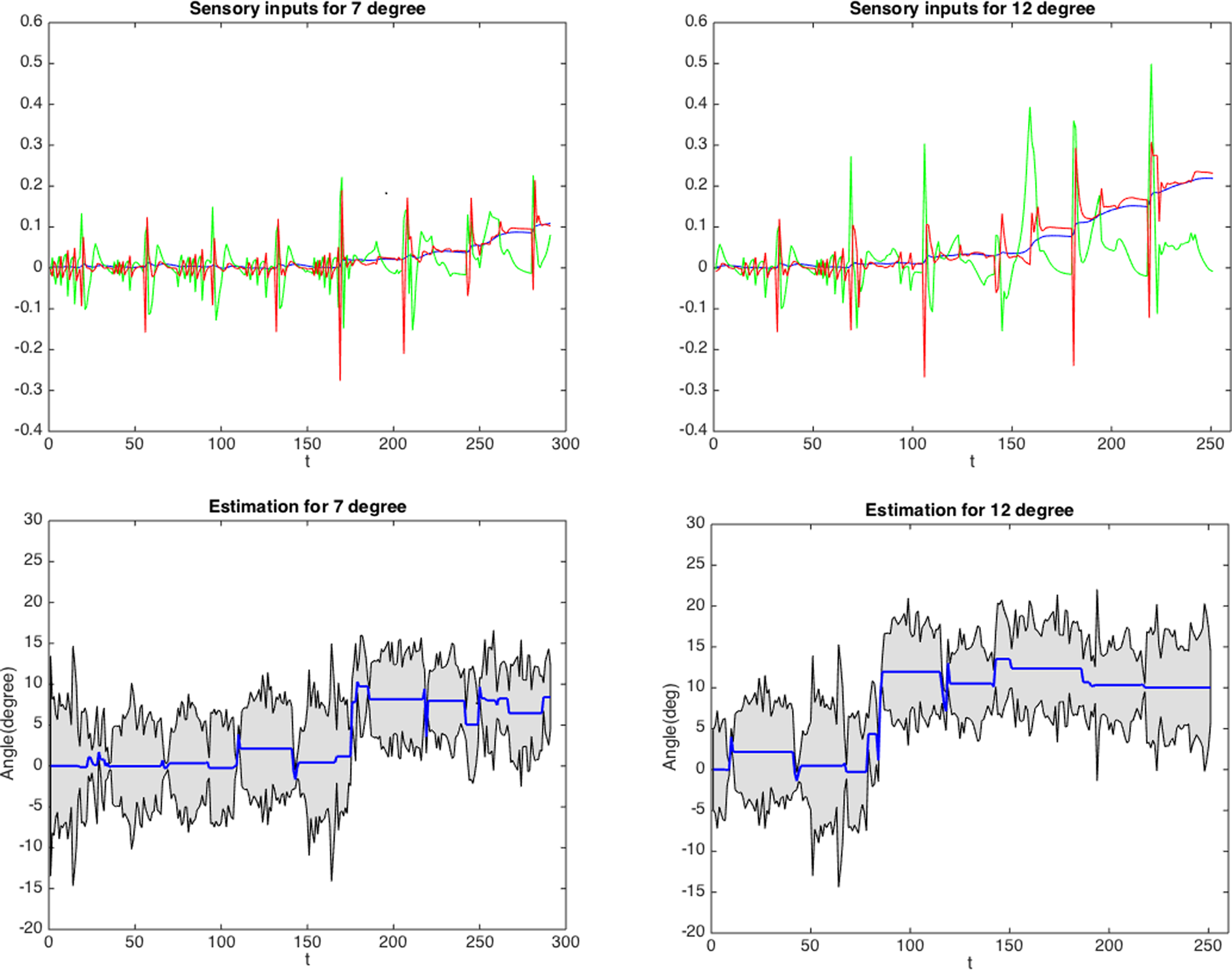

The experimental results for sloped terrain detection. Two upper subfigures illustrate the sensory inputs of test data for two sloped terrain angles (7° and 12°). Curves in blue, green and red denote the body tilt angles q, the angular velocities

The acceleration (in red), which is not the angular acceleration but the linear acceleration in the horizontal direction, is read directly from the accelerometer integrated in the IMU mounted near the COM of the robot. In the simulation platform employed in this research, unlike the tilt angle and the corresponding velocity, for which bending forward is negative, the forward direction of the acceleration is positive. As a consequence, in this subfigure, the acceleration shows roughly the opposite behavior, that is, it first increases to a positive value and then decreases to a negative value. After that, it remains negative for a period of time but increases slowly, which indicates a decreasing backward acceleration.

From Figures 4 and 5, it can be seen that the proposed detection approach gives prompt estimates for both external pushing forces and terrain angles, and the outputs appear to have satisfactory accuracy. Comparing the sensory inputs with the estimated results, for the first task on pushing force detection in Figure 4, the promptness of the estimation is not surprising, since the sensory data exhibit significant variation as soon as the pushes are applied. In contrast, for the second task on sloped terrain detection in Figure 5, even though the sensory inputs change rather gradually, the promptness of the GP-based estimation is still preserved. This suggests that GP regression can utilize multiple sources of sensory information to give accurate and prompt estimates.

It can be further observed that, for the first task on force estimation in Figure 4, the variances are consistently rather high for all of the test points, while for the second task on terrain angle estimation in Figure 5, the variances fluctuate more significantly and become rather small for a few test points where the mean estimates change. This observation is interesting, since intuitively the second task on sloped terrain estimation is the more difficult one. It seems that more training samples are required to reduce the estimation variances. Nevertheless, the cause of uncertainty is not only the lack of sample points for estimation but may also relate to the training of the GP models. It can be reasoned from equations (9) to (11) that the variances may be incorrectly calculated if the kernel function is not well tuned. In addition, as shown in the second task on sloped terrain detection in Figure 5, because the GP models are learned separately with local data bins corresponding to the phase variable tph, the number of data samples required for learning each individual model is significantly reduced. This is consistent with the general understanding that learning a local model is easier than learning a global one. Furthermore, it shows that introducing the phase variable tph into the modeling is not only necessary but also beneficial to efficiency.

Both sets of experimental results also show that the biases or errors in the estimates cannot be ignored. One major source of this problem is ambiguity in the training data. For example, in the initial stages when the robot first touches the sloped terrain, the sensory information may be hard to distinguish from that of normal walking. Incorrect training data points then degrade the accuracy of the estimates, which may lead to incorrect estimation for noisy test inputs from both normal walking and walking on sloped terrain. The problem arises not only from the weak significance of the input features but also from the difficulty of labeling the training data. It is often difficult to discriminate the early stages of environmental changes from normal states, which is an interesting topic for future study.

The experimental results for humanoid balancing control are illustrated in Figures 6 and 7. Since robot balancing control is an action process, as mentioned earlier, a sequence of key frames is drawn from the balancing process in both figures. In Figure 6, the upper subfigure, Figure 6(a), shows the robot balancing process under an external pushing force of 31 N, while the lower subfigure, Figure 6(b), shows the case of 37 N. In Figure 7, the upper subfigure, Figure 7(a), shows the robot balancing process when meeting sloped terrain with an 8° angle, while the lower subfigure, Figure 7(b), shows the case of 13°.

Illustration of the humanoid robot balancing sequence with the established bioinspired motion controller under external pushing forces. The two subfigures show that, for two unseen external pushing forces (31 N and 37 N), the robot successfully and consistently recovers to its original status once the environmental changes are detected correctly and the corresponding actions are subsequently executed. (a) Humanoid robot balancing under a 31 N external pushing force and (b) humanoid robot balancing under an unseen 37 N external pushing force.

Illustration of humanoid robot balancing control when meeting sloped terrain. The two subfigures show that, for two unseen sloped terrains (8° and 13°), the robot successfully keeps walking under the new circumstances once the environmental changes are detected correctly and the corresponding walking pattern is subsequently called. (a) Humanoid robot balancing when meeting sloped terrain with an 8° angle and (b) humanoid robot balancing when meeting sloped terrain with a 13° angle.

Both subfigures of Figure 6 show that the humanoid robot recovers to its original status (quiet standing) successfully and consistently with the GP regression model-based balancing controller. The balancing process in the lower subfigure, Figure 6(b), involves a much greater degree of bending than that in the upper subfigure, Figure 6(a), because the external pushing force in the former case (37 N) is larger than that in the latter case (31 N). The external pushing force of 37 N seems to be almost beyond the capability of the balancing controller. Similarly, both subfigures of Figure 7 show that the robot can keep walking under the new circumstances provided that (i) the environmental changes are detected correctly and promptly and (ii) the corresponding walking controller is executed immediately afterwards. The lower subfigure, Figure 7(b), is the more representative case, since here the robot will fall if the new, suitable walking controller is not called in time. In Figure 7(b), an obvious forward-bending motion can be observed in the fourth key frame, which indicates that a new, suitable walking controller has been brought into use in time.

The success of the robot balancing control is owed to both effective balancing strategies and accurate, timely detection of the pushing force or sloped terrain. In other words, the experimental results further show that the proposed approach is effective in feeding correct detection output to the balancing control strategies.

To further evaluate the proposed approach, hardware experiments are performed on a real bipedal humanoid robot, PKU-HR5. PKU-HR5 is the fifth-version kid-size humanoid robot (Peking University, China); it weighs 2.65 kg and is 47.8 cm tall. It has 20 DOFs: 6 DOFs for the hips, 1 DOF for each knee, 2 DOFs for each ankle, 2 DOFs for the head, and 3 DOFs for each arm. It uses 20 RX-28 servo actuators manufactured by ROBOTIS Co., Ltd. Similar to the DARwIn-OP humanoid robot model used in the simulations above, PKU-HR5 is also equipped with a three-axis gyroscope, a three-axis accelerometer, and a magnetic compass, which are integrated into the IMU mounted near its COM.

For the hardware experiments, two cases are considered: PKU-HR5 standing quietly and PKU-HR5 walking forward. The environmental changes are induced by a simple hand-push from the back. As in the simulated experiments, the status of the robot is monitored continuously during the hardware experiments. As signals describing the robot status are perceived, online estimation is carried out with the learned GP regression models. Once a hand-push is detected by the estimation process, the corresponding predesigned balance controller is triggered according to the specific estimated results. Figure 8 illustrates the experimental results for the two cases, showing the perceived signals representing the robot status together with the corresponding estimated results. Consistent with the simulated experiments, Figure 8 shows that the proposed approach gives prompt estimates for the hardware robot PKU-HR5 in both the quiet standing and forward walking cases. In detail, the two upper subfigures show the perceived signals for the two experimental cases when hand-pushes are applied from the back; in both cases the tilt angle (in blue) first decreases and then increases back to its normal level. This means that PKU-HR5 undergoes a successful balancing control process in each case. It is worth mentioning that, unlike in the simulated experiments, the real physical robot PKU-HR5 always has a small positive bias in tilt angle (in blue, meaning a slight backward lean) due to its physical configuration, as shown in the top-left subfigure.
It can also be observed that the signals in the upper-right subfigure are much more complicated than those in the upper-left one. This is reasonable, since the components of the perceived signals, that is, the tilt angle (in blue), the angular velocity (in green, discounted), and the acceleration (in red), naturally vary more for a walking robot than for a standing robot. The two lower subfigures illustrate the estimated results for the corresponding perceived signals. In the first experimental case, where a hand-push is applied while PKU-HR5 is standing quietly, a push force of about 40 N is estimated, as shown in the lower-left subfigure. Before this push force is correctly estimated, a push force of about 14 N is first detected; this is regarded as a false alarm because a larger push force (about 40 N) is detected soon after. In the second experimental case, where a hand-push is applied while PKU-HR5 is walking forward, a push force of about 30 N is estimated, as shown in the lower-right subfigure.
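
One possible way to keep an early, small estimate such as the 14 N reading from triggering a controller is to confirm a detection only after the estimate has stopped rising. The following is a hypothetical sketch of such logic, not the procedure used in the experiments; the class name `PushDetector`, the parameters `threshold_n` and `hold_steps`, and the sample sequence are all illustrative assumptions.

```python
class PushDetector:
    """Confirm a push once the force estimate has stopped rising.

    Hypothetical logic: threshold_n and hold_steps are illustrative
    parameters, not values from the paper.
    """

    def __init__(self, threshold_n=10.0, hold_steps=3):
        self.threshold_n = threshold_n
        self.hold_steps = hold_steps
        self.peak = None      # largest estimate seen in the current event
        self.since_peak = 0   # samples elapsed since the peak last increased

    def update(self, force_n):
        """Feed one force estimate (in N); return a confirmed force or None."""
        if self.peak is None:
            # No event in progress: start one when the threshold is crossed.
            if force_n >= self.threshold_n:
                self.peak = force_n
                self.since_peak = 0
            return None
        if force_n > self.peak:
            # Estimate is still growing: the earlier value is superseded.
            self.peak = force_n
            self.since_peak = 0
            return None
        self.since_peak += 1
        if self.since_peak >= self.hold_steps:
            confirmed, self.peak = self.peak, None
            return confirmed
        return None


# Illustrative estimate sequence: a small early reading, then a larger one.
estimates = [0.0, 0.0, 14.0, 14.0, 40.0, 38.0, 20.0, 5.0, 0.0]
detector = PushDetector(threshold_n=10.0, hold_steps=3)
confirmed = [f for f in map(detector.update, estimates) if f is not None]
print(confirmed)  # [40.0]
```

The design trades promptness for robustness: confirmation is delayed by `hold_steps` samples after the peak, so in practice the hold window would have to be short relative to the time available for balance recovery.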

The experimental results on the physical humanoid robot PKU-HR5 when suffering hand-pushes from the back. The two upper subfigures illustrate the perceived signals of the robot status in the two cases of quiet standing and forward walking, where the curves in blue, green, and red denote, respectively, the body tilt angles q, the angular velocities

Figure 9 illustrates the sequences of key frames drawn from the balancing control processes when the robot is pushed from the back by a human hand in the two experimental cases of quiet standing and forward walking. Both subfigures of Figure 9 show that the PKU-HR5 humanoid successfully maintains its balance, which is consistent with the results of the simulated experiments discussed above.

Illustration of balancing control of the physical humanoid robot PKU-HR5 when suffering hand-pushes from the back. The two subfigures show that, in the two cases of quiet standing and forward walking, the robot successfully keeps its balance and recovers its original status (either standing or walking) once the environmental changes are detected correctly and the corresponding actions are executed immediately afterwards. (a) A hand-push from the back while PKU-HR5 is standing quietly and (b) a hand-push from the back while PKU-HR5 is walking forward.

Conclusions

Humanoid environmental perception is an essential and challenging issue in robotics, especially when the robot is equipped with few sensors. In this research, an alternative approach based on a learning paradigm employing a GP regression model is detailed and analyzed, aiming to solve the problem of modeling the nonlinear and noisy indirect mapping from sensory information to environmental changes. To extract the potential cues conveyed in feedback signals from limited sensors, the idea of integrating inertial sensory information is adopted. The proposed approach is evaluated and discussed on two typical environmental perception tasks with multiple inertial sensory inputs, namely pushing force detection and sloped terrain detection.

In particular, owing to the complexity of the second task, a walking phase variable is introduced to establish the detection model. It is shown that this technique is not only necessary but also helpful in improving learning efficiency.

Furthermore, building on the proposed GP regression-based environmental perception, a humanoid balancing controller with several strategies is developed, and its performance on two specific tasks is evaluated. Experimental results on the two environmental perception tasks and on humanoid balancing control consistently reveal the effectiveness and potential of the contribution of this research. Future work lies in exploring further improvements to reduce the ambiguities in environmental perception.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work is supported in part by the National Basic Research Program (973 Program) of China (no. 2013CB329304), the National Natural Science Foundation of China (nos 11590773 and 61421062) and the Key Program of National Social Science Foundation of China (no. 12&ZD119).