Abstract

3D object instance segmentation plays a vital role in various applications such as autonomous driving, robotics and virtual reality. However, tabletop scenes exhibit diverse object complexities and size variations, which makes it challenging to segment multiple object instances accurately. This difficulty directly limits robots’ ability to grasp and manipulate objects effectively. In this paper, we propose a multi-scale deep learning and clustering-based approach for object instance segmentation in tabletop scenes. Our approach incorporates a multi-scale neighborhood feature sampling (MNFS) module specifically designed to extract local features, and a clustering algorithm to eliminate noise and preserve instance integrity. Furthermore, we combine the strengths of both methods through ScoreNet and non-maximum suppression (NMS). We conducted extensive experiments on TO-Scene, the first large-scale dataset of 3D tabletop scenes, and observed an average mIoU improvement of approximately 4.07% compared to existing methods. In addition, we tested our algorithm on a real-scene robotics platform and showed that it performs and generalizes well enough to support future applications such as robot grasping.

Introduction

3D object instance segmentation is a fundamental computer vision task that aims to recognize and delineate individual objects in a 3D scene. It plays a vital role in applications such as autonomous driving, robotics, healthcare, augmented reality and virtual reality. As robots become widely used in industry and daily life, intelligent robot operation increasingly requires robots to complete a variety of human-like manipulation tasks autonomously, in place of humans, in complex and unknown tabletop scenes.

However, most current research focuses on large-scale scenarios such as autonomous driving or remote sensing, and small-scale scenarios such as tabletop objects remain under-studied. Tabletop scenes may contain a wide variety of objects of different categories and sizes (such as pens, cups, fruits, computers, desks, etc.). Even objects within the same category can exhibit diverse shapes (e.g., Cup, Mug, Travel Tumbler, Bowl Cup, Goblet, Champagne Flute, etc.), which adds complexity to the problem. Additionally, objects may suffer from boundary ambiguity, further complicating instance segmentation. As a result, improving the accuracy of multi-object instance segmentation in tabletop scenes is a challenging problem and a crucial component of intelligent perception and flexible grasping for robots. Instance segmentation for tabletop scenarios helps industrial and service robots understand their working environment more deeply and facilitates subsequent high-level decision-making.

Traditional instance segmentation methods have focused on 2D images, with the task of segmenting objects in a single image plane.1,2 With the rapid development of 3D sensing technologies such as depth cameras and LiDAR, the 3D data acquired by these sensors can provide richer geometric, shape, and scale information than 2D data. Consequently, there is an increasing demand for accurate and efficient instance segmentation in 3D scenes. Point clouds, as a common representation of 3D data, have received extensive research attention for 3D object instance segmentation,3–5 which faces new challenges due to the extra spatial dimension and the complexity of dealing with occlusions, cluttered scenes and object shapes.

Instance segmentation of point clouds is mainly categorized into clustering and deep learning methods. Clustering methods 6 can extend many existing 2D instance segmentation methods directly to 3D and offer improved judgment of object contours. However, accurate clustering is particularly challenging for several reasons. (1) The point cloud scene contains a lot of interference from background points. (2) The sizes and point densities of instances vary widely. (3) The semantic gap between point and instance identities is huge. Over-segmentation and under-segmentation are therefore common. In recent years, advances in deep learning, the availability of large-scale labeled datasets, and improved 3D perception capabilities have driven significant progress in 3D object instance segmentation.7–9 However, some problems remain. (1) Point processing by deep networks is susceptible to noise, which causes some points to be misclassified, leaving instances incomplete and reducing accuracy. (2) The object shapes in the datasets are limited, and insufficient generalization means that trained models transfer poorly to real-life scenarios. We therefore investigate combining the strengths of both families of methods so that each compensates for the other's weaknesses.

In this paper, we propose a tabletop-aware learning and clustering-based approach to address these challenges and improve the accuracy and generalization of 3D object instance segmentation for robots in realistic tabletop scenes. Our approach builds on the popular Point Transformer network, to which we add a multi-scale neighborhood feature sampling (MNFS) module that takes into account the object sizes in tabletop scene datasets and the density contrast between objects and background. The network extracts features directly from the 3D point cloud, the extracted features drive a clustering segmentation method designed for tabletop objects, and the two results are then fused through ScoreNet and non-maximum suppression. We conducted extensive experiments on TO-Scene, 10 the first large-scale dataset of 3D tabletop scenes, to demonstrate the superior performance of our method compared to existing methods. It achieves mIoU scores of 82.90%, 81.16%, and 73.58% on the TO-Vanilla, TO-Crowd, and TO-ScanNet datasets, respectively. In addition, we test instance segmentation on a robot in a realistic scenario, provide an in-depth analysis of our method, and discuss potential future directions for instance segmentation of 3D objects.

In a nutshell, our contributions are as follows:

(1) We propose a 3D instance segmentation framework based on the fusion of deep learning and clustering. Through our algorithm and ScoreNet, we can leverage the advantages of both and effectively achieve object instance segmentation.

(2) A local information extraction module, multi-scale neighborhood feature sampling (MNFS), is designed to effectively extract the features of small-scale objects on the tabletop.

(3) The algorithm is tested on datasets and on a robot platform in a realistic scenario. The results show that it has excellent performance and generalization capabilities, which support future applications such as robot grasping.

The rest of the paper is structured as follows: section “Related work” provides an overview of related works in the field. In section “Methods,” we delve into the comprehensive explanation of our methodology. The experimental results and analysis are thoroughly presented in section “Experiments.” Lastly, section “Conclusion” encompasses our summary.

Related work

Deep learning on 3D point clouds

Point clouds are widely used as a common format for 3D data in 3D scene understanding. Deep learning methods for point clouds are mainly categorized into projection-based, voxel-based and point-based networks. Projection-based methods project 3D point clouds onto various image planes and then use 2D CNN-based networks to extract feature representations,11–13 but the choice of projection planes strongly affects performance, and the occlusion of objects in the projection reduces accuracy. Voxel-based methods turn irregular point clouds into regular representations by voxelization,14,15 and their efficiency has been improved by sparse convolution, 16 but geometric information may still be lost when the point cloud is quantized into a grid. Point-based methods, such as PointNet and PointNet++,3,4 extract features directly from unstructured point sets. With the recent popularity of the Transformer17,18 in the NLP field, some researchers have introduced the Transformer and self-attention mechanisms to 3D point clouds. Point Transformer 5 is a classic example, which has achieved excellent results in several point cloud tasks such as recognition and segmentation. In this paper, Point Transformer is chosen as the backbone of tabletop-aware learning.

Clustering-based instance segmentation

The basic principle of clustering-based 3D point cloud segmentation is to learn a mapping from points to a discriminative representation space, where points belonging to the same instance exhibit similar features while points on different objects have different features, and then to group the points in that space. This is similar to earlier pixel grouping in the 2D domain; for example, Fathi et al. 6 compute the likelihood of pixels and group similar pixels together in the embedding space. In the 3D domain, SGPN 19 proposes a similarity matrix to represent the pairwise similarity between points, and generates instances by merging highly similar points through a grouping algorithm. OccuSeg 20 employs learned occupancy signals to guide the clustering. MTML 21 learns feature and directional embeddings, performs mean-shift clustering of the feature embeddings to generate the target segments, and then scores the segments by the consistency of their directional features. A fundamental problem arises from the wide variation in size and point density of object instances in 3D scans, which can result in over- and/or under-segmentation when fixed clustering parameters are used. 22 The problem is especially evident in tabletop scenarios, where the contrast between the background and the objects is large.

There have therefore been some attempts to change the clustering steps. 3D-MPA 23 predicts the centers of the instances and then aggregates the points into candidate instances. PointGroup 7 proposes clustering points on dual coordinate sets and introduces ScoreNet to predict the scores of the instance candidates. HAIS 8 and SoftGroup 9 obtain point cloud features through 3D-UNet and then also follow the clustering paradigm, introducing set aggregation and intra-instance prediction to refine instances at the object level. In this paper, we fuse tabletop-aware learning with a clustering-based algorithm by predicting instance scores using ScoreNet.

Instance segmentation for robot manipulation

The ability to perceive the geometric space of three-dimensional objects is crucial for robot manipulation, and instance segmentation is widely used in robot grasping. Researchers24,25 used an instance segmentation network to segment and localize objects in a logistics sorting scene before grasping them. The core idea of both works is to improve the efficiency of target pickup by designing a joint learning method for semantic and instance segmentation based on RGBD images. However, because objects in logistics scenes are relatively well organized, these methods are difficult to apply to complex and chaotic industrial scenes. Abbeloos et al. 26 use point pair feature matching between model points and scene points to solve the instance segmentation problem in highly cluttered scenes, while introducing a heuristic to reduce the complexity, but the computation time is long due to the large number of points. PPR-Net 27 and FPCC 28 infer instance centers in the feature space and then quickly generate instance segmentation results based on point cloud clustering. This approach greatly improves computation speed, but can only be applied to a single class or to specific industrial objects. Currently, due to the variety and complexity of objects in tabletop scenarios, there is a shortage of research specifically focused on object instance segmentation in tabletop scenes, even though such scenes are frequently encountered in robotic manipulation and are therefore particularly relevant to robot grasping.

Methods

The general framework of this paper is shown in Figure 1. The inputs to the learning network are divided into coordinate information coord and RGB information feat. Given the significant disparities between tabletop objects and the background in terms of density and size, an MNFS module is incorporated. The network produces the feature F, which then passes through a two-branch structure that outputs semantic labels and predicted points. Po denotes the points that the network's semantic segmentation assigns to tabletop objects in the input point cloud. The point set Po then undergoes a clustering algorithm to yield the cluster set Pc. The semantically labeled predicted points Pso and the clustering results Pc are jointly fed to ScoreNet, whose output Sc gives the scores used to evaluate the two. Finally, non-maximum suppression (NMS) is applied to generate instance predictions.

Figure 1. The diagram of the algorithmic framework. It is composed of four key components: network input, deep learning backbone, the two-branch structure, and clustering fusion output.
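The last stage of the framework, NMS over scored instance candidates, can be illustrated with a minimal sketch. It assumes each candidate is a set of point indices and that overlap is measured by IoU on those sets; the function names and the threshold value are hypothetical and are not taken from our implementation.

```python
def mask_iou(a, b):
    """IoU between two candidate instances given as sets of point indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def instance_nms(candidates, scores, iou_threshold=0.3):
    """Greedy NMS: keep the best-scoring candidate, drop those overlapping it.

    candidates: list of sets of point indices (one set per candidate instance)
    scores:     list of floats, for example ScoreNet outputs
    iou_threshold is an assumed value chosen only for illustration.
    Returns the indices of the kept candidates, best score first.
    """
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    kept = []
    for i in order:
        if all(mask_iou(candidates[i], candidates[j]) <= iou_threshold for j in kept):
            kept.append(i)
    return kept
```

With candidates `{0, 1, 2, 3}`, `{1, 2, 3, 4}` and `{10, 11, 12}` scored 0.9, 0.6 and 0.8, the second candidate overlaps the first with IoU 0.6 and is suppressed, while the disjoint third candidate is kept.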

Points feature extraction network

The backbone of our points feature extraction network is Point Transformer, and an MNFS module is designed for small objects such as those in tabletop scenes to fully extract global and local information. In the implementation, we first pre-voxelize the point cloud inputs so that we can obtain more regular structural and contextual information.10,29,30 We set the voxel size to 4 mm³ to match the small size of tabletop objects. Random sampling of different segmented regions through the MNFS module conveys 60,000 points for training. All points are used for testing. The transformer block within the Point Transformer enables the exchange of information among these local feature vectors and generates new feature vectors as its output. Specifically, the transformer processes input feature vectors using a self-attention mechanism, enabling each vector to consider connections with other vectors in the sequence. This information is then employed to update the feature vectors, capturing correlations and correspondences in the data. The newly generated, more discriminative feature vectors from the transformer contribute to extracting complex spatial correlations and semantic relationships among objects in the tabletop scene. Information aggregation adapts both to the content of the feature vectors and to the layout of the feature vectors in three dimensions. The network output feature vectors

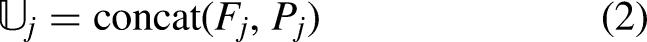

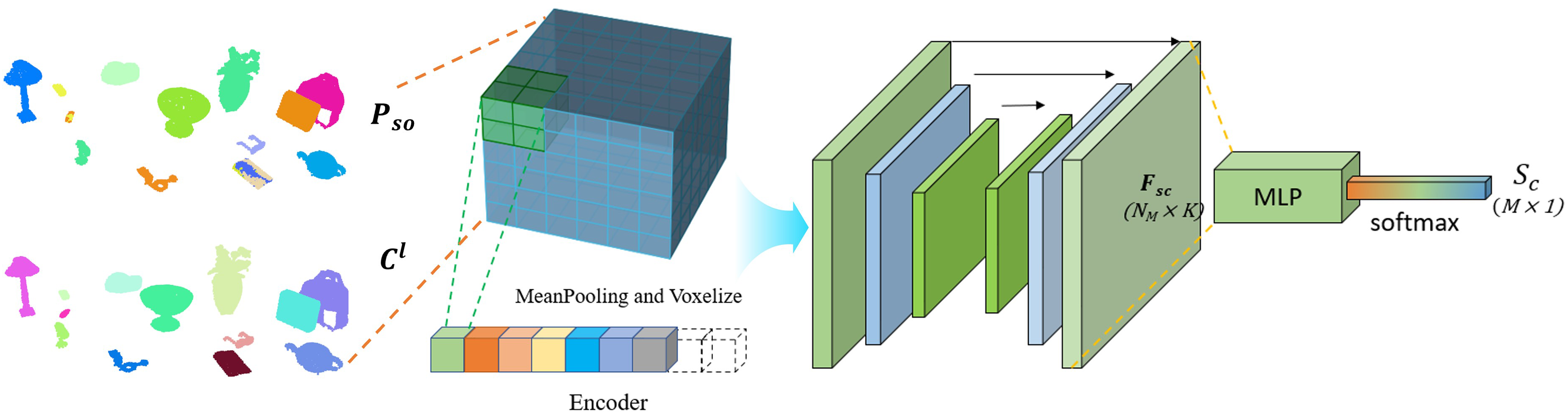
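The pre-voxelization and point-count cap described above can be sketched as follows. This is a simplified illustration under stated assumptions: coordinates are in metres, the voxel edge is 4 mm, one point is kept per occupied voxel, and the subsampling is plain uniform random sampling rather than the region-aware sampling performed through MNFS. The function names are hypothetical.

```python
import random


def voxelize(points, voxel_size=0.004):
    """Keep one point per occupied voxel (the first one encountered).

    points: list of tuples whose first three entries are x, y, z in metres
    (an assumed unit); extra entries such as RGB are carried along unchanged.
    """
    seen = {}
    for p in points:
        key = tuple(int(c // voxel_size) for c in p[:3])
        seen.setdefault(key, p)
    return list(seen.values())


def sample_for_training(points, max_points=60000, seed=0):
    """Uniformly subsample a scene to at most max_points points."""
    if len(points) <= max_points:
        return list(points)
    return random.Random(seed).sample(points, max_points)
```

At test time the second step would simply be skipped, since all points are used for testing.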

Multi-scale neighborhood feature sampling

In order to finely segment tabletop backgrounds and objects, we need further aggregation of features. The MNFS module is designed to efficiently extract the local features of a point using the density information of its neighborhood space. Inspired by multi-scale local feature aggregating (LFA),31,32 the coordinate and feature information of joint points are encoded and combined to provide efficient discriminative region feature extraction. However, our approach is different from the former in that we dynamically adjust the scale and sampling of the point set region by learning the density features from the neighborhood, taking into account both the intricacies of large-scale features and the nuances of smaller-scale features.

The large-scale features present in 3D point clouds highlight the positional and structural relationships among objects throughout the entire scene. In contrast, small-scale features focus on local information, encompassing geometric normals, local point density distribution, subtle shape variations, and other attributes. These elements are crucial in discerning objects that are either in close proximity or similar to one another. Integrating multi-scale neighborhoods is an effective means of aggregating these basic local features, which helps segment small objects and finely detailed instances.

Given a set of points Points = {pi}, a preliminary step involves employing Farthest Point Sampling (FPS) to acquire a subset of sampled points {p1, p2, ……, pn}. We construct the multi-scale neighborhood

Figure 2. The multi-scale neighborhood feature sampling (MNFS) module.
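The two geometric ingredients named above, farthest point sampling and neighborhoods at several scales, can be sketched in a few lines. This is only an illustration: the radii are fixed here (0.08 is the grouping radius reported in our implementation details, while 0.04 and 0.16 are assumed values), whereas MNFS adjusts scale and sampling from learned density features. The function names are hypothetical.

```python
import math


def farthest_point_sampling(points, n_samples):
    """Return indices of n_samples points chosen by farthest point sampling."""
    if not points or n_samples <= 0:
        return []
    n_samples = min(n_samples, len(points))
    chosen = [0]  # start from the first point (an arbitrary choice)
    # min_dist[i] = distance from point i to its closest already-chosen point
    min_dist = [math.dist(p, points[0]) for p in points]
    while len(chosen) < n_samples:
        nxt = max(range(len(points)), key=min_dist.__getitem__)
        chosen.append(nxt)
        for i, p in enumerate(points):
            d = math.dist(p, points[nxt])
            if d < min_dist[i]:
                min_dist[i] = d
    return chosen


def multi_scale_neighborhoods(points, center_idx, radii=(0.04, 0.08, 0.16)):
    """For one sampled centre, list neighbour indices inside each radius."""
    c = points[center_idx]
    return {r: [i for i, p in enumerate(points) if math.dist(p, c) <= r] for r in radii}
```

The brute-force distance loops keep the sketch dependency-free; a spatial index would be the usual choice for scenes with tens of thousands of points.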

Semantic labels branch

A multi-layer perceptron (MLP) with a softmax layer is applied to the feature F to get the initial predicted semantic scores
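As a small illustration of the final step of this branch, the sketch below turns per-point class logits (assumed to be the MLP outputs) into softmax scores and an arg-max label. The function names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one point's class logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def semantic_labels(per_point_logits):
    """Return a (scores, label) pair per point; label is the arg-max class index."""
    out = []
    for logits in per_point_logits:
        scores = softmax(logits)
        label = max(range(len(scores)), key=scores.__getitem__)
        out.append((scores, label))
    return out
```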

Predicted points branch

We apply a 2-layer MLP to generate gap-shifted prediction that separates different instances that are in contact or very close together. It contains point coordinate information

Clustering algorithm

Based on the Objects output from the semantic labeling branch and the point set result

Our clustering algorithm estimates the watershed of instance boundaries by calculating the distance between the nearest neighboring points, and then predicts the point set of instances by region growing. First, the input predicted point coordinates :

In the points predicted by the learning network, small instances may be split into multiple instances, and instances may contain considerable noise. Our clustering algorithm, on the other hand, maintains the integrity of the instances well, as analyzed in Section “Evaluation on TO-Scene.”

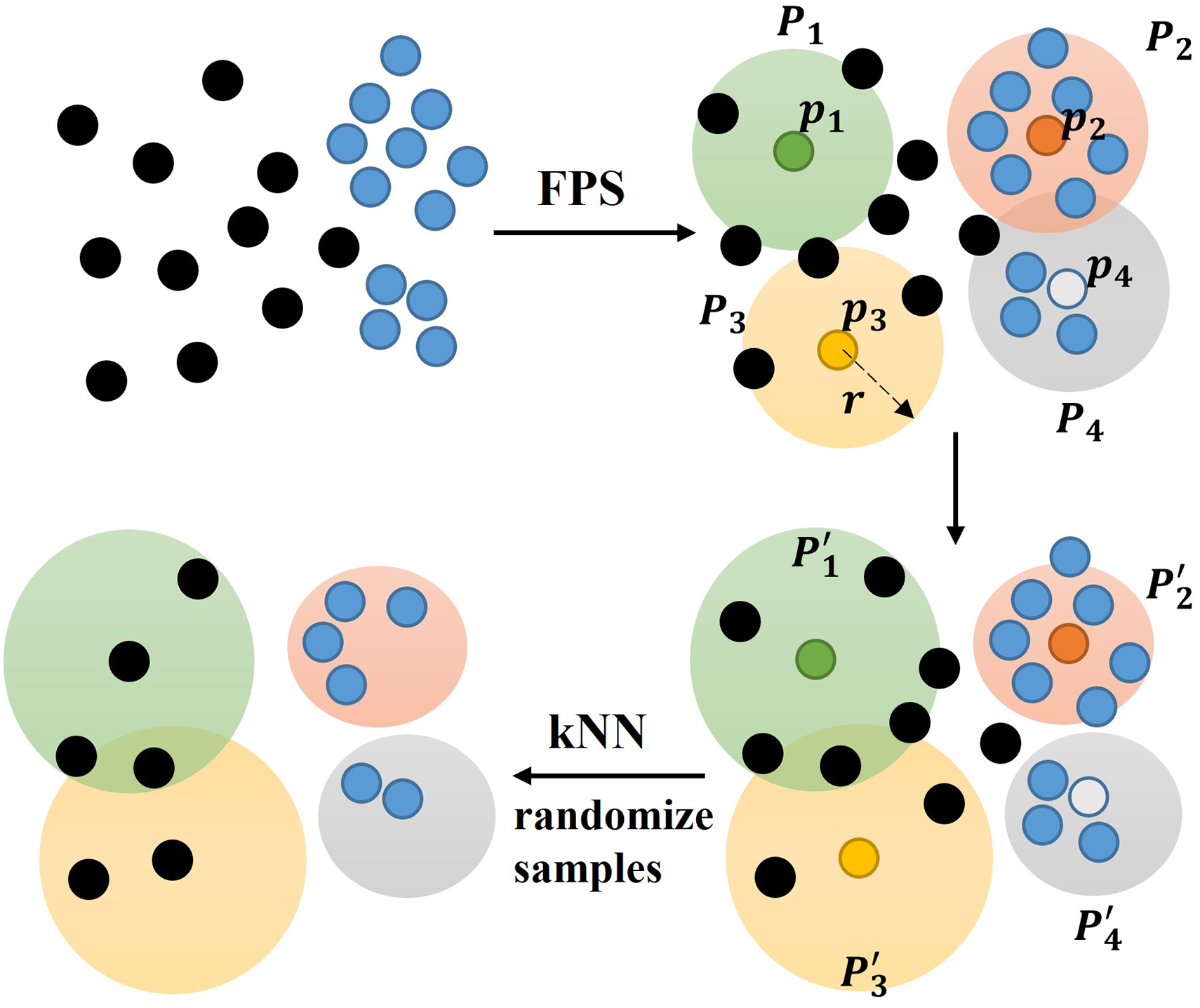

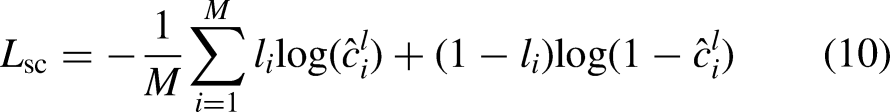
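The general idea of the clustering step, estimating a boundary threshold from nearest-neighbor distances and then growing regions, can be sketched as below. This is an illustrative stand-in rather than our exact algorithm: the threshold rule (a multiple of the median nearest-neighbor distance), the default values and the function names are all assumptions.

```python
import math
from collections import deque


def nearest_neighbor_distances(points):
    """Distance from every point to its closest other point (brute force)."""
    return [
        min(math.dist(p, q) for j, q in enumerate(points) if j != i)
        for i, p in enumerate(points)
    ]


def region_growing(points, scale=2.0, min_cluster_size=1):
    """Group points whose spacing stays below a data-driven threshold.

    The threshold is `scale` times the median nearest-neighbour distance;
    both the rule and the defaults are assumptions for illustration.
    Returns a list of clusters, each a sorted list of point indices.
    """
    if len(points) < 2:
        return [[0]] if points else []
    nn = sorted(nearest_neighbor_distances(points))
    threshold = scale * nn[len(nn) // 2]
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = min(unvisited)
        unvisited.remove(seed)
        queue, cluster = deque([seed]), [seed]
        while queue:
            cur = queue.popleft()
            # Collect neighbours first, then mutate the set.
            near = [i for i in unvisited if math.dist(points[cur], points[i]) <= threshold]
            for i in near:
                unvisited.remove(i)
                queue.append(i)
                cluster.append(i)
        if len(cluster) >= min_cluster_size:
            clusters.append(sorted(cluster))
    return clusters
```

Raising `min_cluster_size` is one simple way to discard isolated noise points, at the risk of also discarding very small objects.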

ScoreNet

We use a ScoreNet inspired by PointGroup 7 to evaluate the clustering results in order to better fuse the results of the deep learning and clustering algorithms. In contrast to PointGroup, our approach combines the strength of the tabletop-aware learning strategy in extracting semantic information from tabletop objects with the completeness of the clustering algorithm to further enhance the accuracy of instance segmentation. The structure of ScoreNet is shown in Figure 3, where the inputs to the network are Pso, Cl, and Lso. Specifically, Pso represents the predicted object points with their semantic labels, Cl denotes the instance labels predicted by clustering, and Lso is the ground truth.

Figure 3. The structure of ScoreNet. The input is voxelized and then encoded into the network to predict scores.

Since there is a correspondence between semantic labels and ground truth, it is necessary to establish a mapping relationship between clustered labels and ground truth.

The loss function is defined using the cross-entropy loss.
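One plausible reading of the mapping and the loss is sketched below: each cluster is matched to the ground-truth instance with which it has the largest IoU, that IoU serves as a soft target, and a binary cross-entropy is taken between predicted scores and targets. The use of IoU as the target and the function names are assumptions made for illustration, not a statement of our exact formulation.

```python
import math
from collections import Counter


def match_clusters_to_gt(cluster_labels, gt_labels):
    """Map each cluster id to (best ground-truth id, IoU with that instance).

    cluster_labels and gt_labels are per-point label lists of equal length.
    """
    cluster_sizes = Counter(cluster_labels)
    gt_sizes = Counter(gt_labels)
    overlap = Counter(zip(cluster_labels, gt_labels))
    best = {}
    for (c, g), inter in overlap.items():
        iou = inter / (cluster_sizes[c] + gt_sizes[g] - inter)
        if c not in best or iou > best[c][1]:
            best[c] = (g, iou)
    return best


def binary_cross_entropy(pred_scores, targets, eps=1e-7):
    """Mean binary cross-entropy between predicted scores and targets in [0, 1]."""
    total = 0.0
    for s, t in zip(pred_scores, targets):
        s = min(max(s, eps), 1.0 - eps)  # clamp to keep log() finite
        total -= t * math.log(s) + (1.0 - t) * math.log(1.0 - s)
    return total / len(pred_scores)
```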

Experiments

We conducted extensive experiments to evaluate the performance of our method on the first large-scale tabletop scene dataset, TO-Scene, as well as on a robotics platform for realistic scenes. Additionally, we tested our algorithm in ablation experiments and explored its applicability to grasping scenarios. The results demonstrate the effectiveness of our method.

Experimental settings

Dataset

The dataset for the experiments is TO-Scene, the first large-scale tabletop scene dataset. 10 TO-Scene contains a total of 16,077 tabletop scenes and 52 common tabletop object classes, and is subdivided into three sub-datasets. TO-Vanilla, the vanilla dataset, contains 12,078 tabletop scenes and 60,174 tabletop instances belonging to the 52 classes. TO-Crowd, which has a higher density of tabletop objects in a single scene, contains 3999 tabletop scenes and 52,055 instances. TO-ScanNet, which is cropped from whole-room scans in the ScanNet dataset and preserves the semantic labels of the original ScanNet room furniture, covers 4663 scans and holds approximately 137k tabletop instances. Each sub-dataset is divided into a training set, a validation set, and a test set. Since the authors have not yet published the annotated labels for the test set, we train on the training set and report results on the validation set.

Evaluation metrics

Following state-of-the-art methods,5,33,34 we adopt the mean of class-wise intersection over union (mIoU) as the main evaluation metric. The mIoU quantifies the extent of overlap between predicted and ground truth segmentation masks for each class. It provides a comprehensive measure of segmentation accuracy by considering both false positives and false negatives. The 52 object classes and the background, totaling 53 classes, are evaluated on the validation sets of the TO-Vanilla, TO-Crowd, and TO-ScanNet datasets.
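For concreteness, a minimal sketch of the metric on per-point labels is given below. It assumes that classes absent from both prediction and ground truth are skipped when averaging, which is a common convention but an assumption here; the function name is hypothetical.

```python
def mean_iou(pred, target, num_classes):
    """Mean of class-wise IoU over per-point integer labels.

    Classes that appear in neither pred nor target are skipped.
    """
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union:
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0
```

For predictions `[0, 0, 1, 1]` against ground truth `[0, 1, 1, 1]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mIoU is 7/12. In our setting `num_classes` would be 53.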

Implementation details

Our model is trained on four RTX 4090 (24 GB) GPUs with a batch size of 32. We use the stochastic gradient descent (SGD) optimizer with momentum and weight decay set to 0.9 and 0.0001, respectively. To speed up convergence, we employ a MultiStepLR learning rate schedule with milestones every 10 epochs and a gamma value of 0.5. The initial learning rate is 0.1. Because of the small size of the objects in the tabletop scene, we set the radius of the spherical grouping in MNFS to r = 0.08. Due to memory limitations, the maximum number of points per scene after random sampling is capped at 60k.
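The learning rate schedule stated above reduces to a simple closed form, sketched here in plain Python so that the values are easy to check. The function name is hypothetical, and epochs are assumed to be counted from zero.

```python
def multistep_lr(epoch, base_lr=0.1, step=10, gamma=0.5):
    """Learning rate at a 0-indexed epoch when it is multiplied by gamma every `step` epochs."""
    return base_lr * gamma ** (epoch // step)
```

Under these settings the rate is 0.1 for epochs 0 to 9, 0.05 for epochs 10 to 19, 0.025 for epochs 20 to 29, and so on.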

Evaluation on TO-Scene

Results

We compared our proposed method with several widely used point cloud segmentation techniques. Among these, PointNet++ 4 is a classical deep learning network for 3D point clouds. PointTransFD 10 builds upon Point Transformer by incorporating feature vectors and dynamic sampling strategies, and currently holds the highest score on the TO-Scene dataset. As shown in Table 1, our method achieves an mIoU of 82.90% on the TO-Vanilla dataset, 81.16% on the TO-Crowd dataset, and 73.58% on the TO-ScanNet dataset. Notably, this is on average 4.07% higher than the current highest result on the TO-Scene dataset, which demonstrates the effectiveness of our method. Furthermore, we list the category-wise mIoU values for a selection of objects, as detailed in Table 2.

Table 1. Test results of different methods for object segmentation tested on the TO-Scene dataset.

Table 2. Category-wise mIoU values for our method tested on the TO-Scene dataset.

Analysis and discussion

We selected several typical examples from the dataset to visualize the intermediate and final results of our algorithm, as shown in Figure 4. The output of the tabletop-aware learning network distinguishes objects by category well, but some regions may contain considerable noise or be misclassified. Our clustering algorithm maintains the integrity of the instances better, but it may split an instance with poor connectivity (for example, due to partial occlusion) into two or more parts, or merge stacked objects into a single part. The final instance prediction combines the advantages of both: ScoreNet scores the clustering results, and NMS is then applied. The figure shows that the resulting instance segmentation is more accurate.

Figure 4. Visualization of test results on the TO-Scene dataset for our algorithmic process. Objects are the semantic output of the two-branch structure. Cluster is the visualization of the clustering result. Instance Pred represents the final instance segmentation outcome produced by the algorithm.

Table 2 lists the scores of individual categories for our method on the three datasets. The scores differ for several reasons, which we analyze below. Firstly, variations in shape and appearance among object categories are crucial factors. The complexity of an object's shape, specifically the regularity of its geometric surface, directly influences the segmentation difficulty. For instance, a nearly cylindrical object like a can is likely to achieve a higher score than a complexly shaped item such as a camera. Flat objects, including notebooks and keyboards, pose additional challenges because they are susceptible to noise from the surrounding desktop during segmentation. Moreover, while object size may be a contributing factor, the integration of MNFS has significantly improved results in this respect. In addition, categories with a high number of variations, such as vegetables, are harder to segment, which lowers accuracy. Finally, factors like data labeling quality and proximity to other objects can introduce variations in the results.

In Figure 5, we present a comparison of the outputs of different methods. Comparing PointNet++ and Point Transformer, we can clearly see that the latter, with its self-attention mechanism, has better scene understanding ability and classifies objects correctly much more often. Furthermore, the introduction of our MNFS module (without clustering) refines local details, leading to more precise segmentation of small objects such as erasers, pencil holders, and books. In brief, the deep learning network paired with the MNFS module performs strongly, and because the clustering method maintains object integrity well, our full method has an advantage over the other methods.

Figure 5. Visual comparison of test results on the TO-Scene dataset for different methods. Different colors represent separate semantic categories of objects. Key regions are circled in red to indicate cases of misclassification and mis-segmentation.

Ablation study

As shown in Table 3, we performed ablation experiments on the MNFS module and the clustering part for object segmentation. The results show that MNFS improves mIoU by 6.5% on the TO-Crowd dataset compared to the backbone model and by approximately 2% on the TO-Vanilla and TO-ScanNet datasets, which indicates that it is most beneficial in dense scenes. The clustering module improves mIoU by 5.03% on the TO-Vanilla dataset and by about 1.8% on the other two datasets, indicating that it is most effective in scenes with sparse objects. Both the MNFS and clustering components contribute significantly to the overall performance of the algorithm.

Table 3. Test results of the ablation study for the MNFS and clustering.

Experiments in real-world scenes

Experimental scenes setup

Our experiments are conducted on a self-designed robot platform equipped with a ROBOTIQ three-finger gripper on the robot end-effector. 3D reconstruction is performed using an Intel RealSense D435i camera to acquire RGBD images of the scene from multiple angles. The computational processor for the robot is the official Nvidia Jetson AGX Xavier Developer Kit. The robot adopts a humanoid configuration with seven degrees of freedom in one arm, comprising three shoulder joints, three wrist joints, and one elbow joint. Given the general shape of the working objects in a desktop scenario, the direct operating load at the end of the robot arm is set at 5 kg, and the arm's working space spans 914 mm. To address accuracy issues in end-effector localization, hand-eye calibration is performed between the depth camera and the robot coordinate system. To enhance stability during object grasping and transfer, we employ harmonic reducers as joint reducers, benefiting from their low backlash and high reduction ratio. Additionally, the connecting rods are designed with a large safety margin to ensure stiffness and improve positioning accuracy. We also implement a force control program for the robotic arm's rotating joints. Force sensors are mounted on the joint output axes for joint force perception, allowing the joint controller to close the torque control loop. This approach enables impedance control at both the joint and end-effector levels. The relative accuracy of the end force control is within 0.2 N force and 0.1 Nm torque, ensuring precise and stable gripping and operation. The experimental scene is illustrated in Figure 6. On the left is the structural diagram of our self-designed robotic arm. The robot platform with a depth camera applies the algorithms discussed in this paper to generate instance segmentation results for tabletop objects.

Figure 6. Diagram of the experimental scene and the robot platform.

Semi-autonomous experiments

Semi-autonomous grasping experiments are conducted in a realistic setting using a robotic platform equipped with the algorithms outlined in this paper. Initially, the depth camera captures RGBD images of the scene to enable 3D reconstruction. Real-time 3D point cloud reconstruction is achieved through truncated signed distance function (TSDF) spatial fusion, global feature matching, and local optimization algorithms. Subsequently, once instance segmentation with our algorithm is complete, researchers select targets through mouse clicks. The point cloud corresponding to the grasping target is extracted from the instance segmentation results. The trajectory planning process employs the sampling-based planning methods of the Open Motion Planning Library (OMPL) integrated into MoveIt!. Specifically, the URDF model of the physical robot is configured, and obstacle constraints are extracted from the point cloud of the current scene. Through OMPL's rapidly-exploring random tree (RRT) algorithm, smooth and collision-free motion trajectories are generated for the robotic arm. The geometric center point and rotation angle are employed to derive the desired end pose, facilitating grasping experiments conducted with a strategy based on shape primitives and pose estimation.35–37 For arm control, we implement position control in the end Cartesian space, using the moveLToPose function in the robot development API. Figure 7 offers a clearer view of the sequence in some experimental processes, while the experimental flow is shown in Figure 8.
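As an illustration of how a geometric center point and a rotation angle can be derived from a segmented instance, the sketch below computes the centroid of the instance points and the orientation of their dominant horizontal axis from the 2x2 covariance of the x/y coordinates. The choice of a planar principal axis for the rotation angle, and the function name, are assumptions made for illustration and may differ from the strategy used on the robot.

```python
import math


def grasp_pose_from_points(points):
    """Return (centroid, yaw) for one object's points.

    centroid: mean of the (x, y, z) coordinates.
    yaw: angle in radians of the dominant axis in the x/y plane, obtained
    from the second-order moments of the points. Treating this as the
    grasp rotation angle is an assumed convention.
    """
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    cz = sum(p[2] for p in points) / n
    sxx = sum((p[0] - cx) ** 2 for p in points) / n
    syy = sum((p[1] - cy) ** 2 for p in points) / n
    sxy = sum((p[0] - cx) * (p[1] - cy) for p in points) / n
    yaw = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    return (cx, cy, cz), yaw
```

For points lying along the diagonal of the x/y plane, the function returns their midpoint and a yaw of 45 degrees.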

Figure 7. Real-world scenarios of robot grasping experiments. The scene in Experiment 2 is denser than that in Experiment 1.

Figure 8. Flowchart of the semi-autonomous robot grasping experiments based on the object instance segmentation algorithm in realistic scenes.

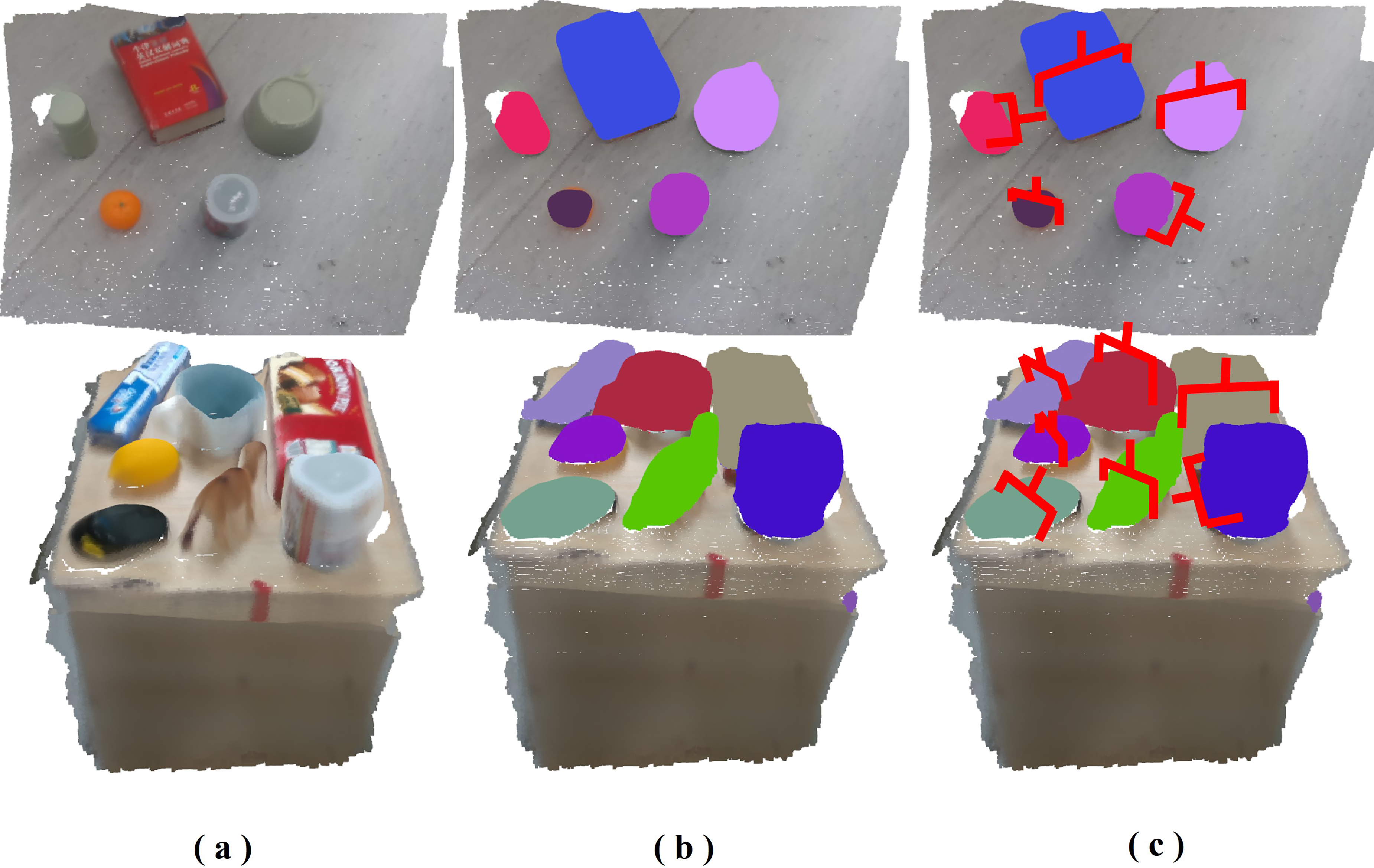

Realization results and analysis

We evaluate the algorithm using the robot's reconstructed results of a realistic tabletop scene. The algorithm is tested in both sparse and crowded tabletop scenarios. The test results demonstrate instance segmentation of tabletop objects in various situations, as shown in Figure 9. The point cloud of realistic scenes generated using depth camera 3D reconstruction on our robot platform is presented on the left, followed by the instance segmentation results for tabletop objects displayed in the middle, and the corresponding grasping strategy depicted on the right.

Figure 9. (a) 3D reconstruction of realistic scenes. (b) Instance segmentation. (c) Grasping strategy.

The time to completion (TOC) is defined as the duration from issuing the algorithm execution instructions to completion, that is, the interval between receiving the input point cloud scene and obtaining the instance segmentation result. According to our experiments, the average time for single-scene segmentation is approximately 317 ms. We convert the contour center of each object instance into the world coordinate system and compare it with the actual measured values. Repeated experiments show an average error of about 1 cm. These results highlight the algorithm's effectiveness in achieving improved object instance segmentation in realistic tabletop scenes.
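The localization check described above amounts to a rigid transform followed by a distance average, which can be sketched as follows. The sketch assumes the hand-eye calibration result is available as a 4x4 homogeneous matrix mapping camera coordinates to world coordinates; the function names are hypothetical.

```python
import math


def to_world(point_cam, T_world_cam):
    """Apply a 4x4 homogeneous transform (nested row lists) to a 3D point."""
    x, y, z = point_cam
    return tuple(
        T_world_cam[r][0] * x + T_world_cam[r][1] * y + T_world_cam[r][2] * z + T_world_cam[r][3]
        for r in range(3)
    )


def mean_center_error(estimated, measured):
    """Average Euclidean distance between paired estimated and measured centres."""
    return sum(math.dist(e, m) for e, m in zip(estimated, measured)) / len(estimated)
```

With coordinates in metres, an output of 0.01 from `mean_center_error` corresponds to the 1 cm average error reported above.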

We encountered some challenges during the experiments, which will be the focal point of our future work. It is worth noting that the accuracy of our results is significantly influenced by the performance of the 3D reconstruction algorithm, as evidenced by the superior segmentation performance in sparse scenes compared to dense scenes. The missing or distorted reconstructions can have a direct impact on the segmentation accuracy. In particular, the portion of an object in contact with the tabletop may exhibit sparser representation than the top during the reconstruction process, which could impact the segmentation accuracy of small objects to a certain extent. Moreover, instance segmentation errors in object contours or incorrect grasping strategies have the potential to result in failures during the grasping operation.

These aspects require further research on our part, including testing on more robot platforms. Overall, our instance segmentation algorithm achieves a success rate of up to 91.7% in repeated trials of robot grasping application.

Conclusion

We design an accurate and effective instance segmentation algorithm for tabletop scenes. Our tabletop-aware learning approach incorporates a multi-scale neighborhood feature sampling (MNFS) module within the Point Transformer, enabling the extraction of features from 3D point clouds, particularly for small objects. In addition, we design a clustering algorithm that preserves instance integrity. Furthermore, we enhance the segmentation accuracy of the objects by combining the advantages of the network and the clustering algorithm through ScoreNet.

We have conducted comprehensive experiments to evaluate our algorithm on the TO-Scene dataset. These experiments show an average mIoU improvement of approximately 4.07% compared to existing methods. The ablation study demonstrates the effectiveness of our MNFS module and clustering algorithm. In real-world scenes, we explore the application of our algorithm to robotic grasping. The results indicate a single-scene segmentation time of approximately 317 ms and a grasping success rate of up to 91.7%. We are actively exploring the application of instance segmentation in robotics. In future work, we aim to further improve accuracy through multimodal fusion and to investigate small-sample or unsupervised instance segmentation of objects in tabletop scenes.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the National Natural Science Foundation of China (Grant No. U22B2079, 62103054, 62273049 and U2013602), Beijing Natural Science Foundation (Grant No. 4232054 and 4242050), Foundation of National Key Laboratory of Human Factors Engineering (Grant No. HFNKL2023WW06), Beijing Institute of Technology Research Fund Program for Young Scholars (Grant No. XSQD-6120220298).