Abstract

To improve neurological recovery in hand neurorehabilitation, target-oriented, intensive, and repetitive activities of daily living are practiced, for example training with hand gesture recognition during robot-aided exercise. In this article, a cascade control algorithm that integrates electromyography (EMG) bio-feedback into hand gesture recognition is proposed. The outer loop tracks the motion trajectory using a Kinect-based gesture decoding classifier, and the inner loop performs torque control with real-time electromyography bio-feedback. The proposed method improves tracking accuracy: in the simulation experiment, the tracking error is reduced from 70.56 to 28.07. This initial test indicates that the proposed method, with its additional torque control, could provide active assistance at the human–machine interface of other rehabilitation robots in the future.

Keywords

Introduction

The hand is one of the most frequently used body parts in activities of daily living (ADLs) and is therefore important for quality of life and working career.1,2 However, once the hand is damaged, it is not easy to regain functional motion and re-establish the precise fine-control neurological loops. An intensive and long rehabilitation training process must be carried out with the help of clinical professionals and occupational therapists (OTs) to recover the delicate and subtle movements of the hand and fingers.3,4 The clinicians involved must pay full attention to identifying correct hand gestures while providing manual aid as needed, with awareness, verbal guidance, and assistance throughout the training process. This training is effective when it is conducted repetitively. Because the process is tedious and time-consuming, many researchers and engineers have worked to mimic it by building intelligent robots.

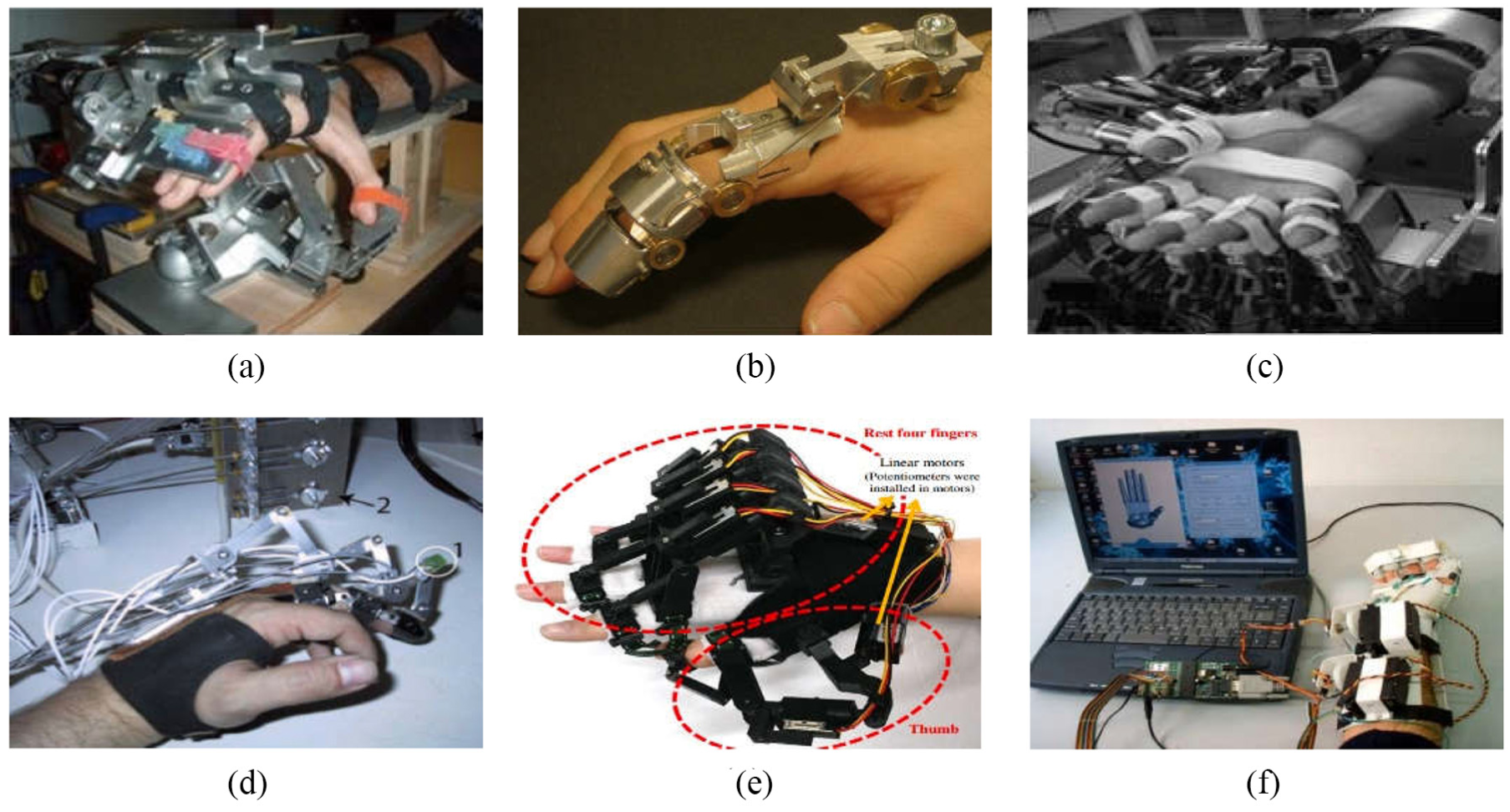

Most current robot-aided neurorehabilitation systems can assist during hand training by mimicking motions such as flexion and extension.5,6 Several representative hand rehabilitation robots are shown in Figure 1. As shown in Figure 1(a), the hand EXOskeleton rehabilitation robot (HEXORR) 7 can move the hand’s digits through the entire range of motion (ROM) with physiologically accurate trajectories and is at least able to track finger extension. Scholars have developed a hand functional rehabilitation robot 8, shown in Figure 1(b), which overcomes the limited range of hand extension and flexion in hemiplegic patients so that rehabilitation training can be performed within the safe range of the hand. A modular design has been adopted in an electromyography (EMG)-controlled rehabilitation exoskeleton 9, shown in Figure 1(c). This device can be worn in different ways, and its active joint angle has a nonlinear relation with the finger joint angle. Another hand exoskeleton rehabilitation robot has been combined with virtual reality (VR) technology 10, as shown in Figure 1(d); it provides visual operation instructions based on VR to improve the efficiency of rehabilitation training. Other successful hand rehabilitation robots offer potentiometer-based motor feedback, 11 remote control, 12 or other technologies.

Research achievements of typical hand rehabilitation robots: (a) HEXORR: 7 a modular exoskeleton; (b) HANDEXOS: 8 a simple finger exoskeleton robot; (c) an exoskeleton controlled by EMG; 9 (d) a rehabilitation exoskeleton combined with virtual reality (VR); 10 (e) a wearable hand exoskeleton for exercising flexion/extension of the fingers with potentiometers; 11 and (f) a hand exoskeleton capable of remote control. 12

Although current hand rehabilitation robots have improved greatly, robot-aided rehabilitation cannot yet completely replace manual assistance and free clinicians from this work. One common unsolved problem is the lack of bio-feedback. Without bio-feedback, it is impossible to recognize gestures and hand force with speed and accuracy comparable to manual assistance. Researchers have explored various methods to detect hand gestures and improve recognition accuracy. Broadly, two categories of recognition are used: vision-based and wearable-device-based. Vision-based recognition works on images captured by a charge-coupled device (CCD) and extracts features for classifiers to identify gestures: a finite state machine (FSM) 13 or a SOM-Hebb network 14 is used with a single regular camera and recognizes typical hand gestures with satisfactory accuracy. Instead of regular cameras, a three-dimensional (3D) camera that incorporates depth information 15 is used to exclude disturbances from the background and lighting changes. A high-speed camera 16 is also used to train hidden Markov models (HMMs), which improves their effectiveness. However, the hand is easily occluded in the captured image, which makes recognition difficult, so multiple cameras have been introduced to improve identification accuracy. Kinect is a popular product that has been widely used in this area and has improved recognition accuracy. Because Kinect successfully separates the gesture from the background, many machine learning algorithms have been combined with it, such as neural networks (NNs), 17 support vector machines (SVMs), 18 K-means, 19 Markov models, 20 and AdaBoost, 21 with promising recognition accuracy.

All of the gesture recognition methods mentioned above achieve high accuracy when identifying relatively large movements of the hand and fingers. In this article, we also use Kinect to recognize gestures for rehabilitative use. It works well for detecting the movement trajectory of fetching a cup; however, the “slight” motions of a patient are hard to recognize because the gesture images are almost identical. Taking picking up a cup in Figure 2 as an example, finger and palm movements are intricate and easily occluded.22,23 Furthermore, post-stroke patients are prone to exerting excessive force, or even entering spastic quivering, during the picking process, which clinicians describe as a major problem. The picking force cannot be identified by the vision-based method because the hand gestures look similar while holding the cup. However, “too much” force or spastic quivering is itself a neurological problem that should be detected.

Typical hand rehabilitative training activities: (a) relatively large movements of the hand and fingers and (b) grabbing an object with proper and excessive hand force.

Wearable devices are another way to detect movement, including gesture recognition and force feedback. Using the EMG signal, some wearable devices obtain myoelectric bio-feedback on muscle strength and activation/inactivation.2,24 Typical classifiers such as NN, SVM, and principal component analysis (PCA)25–29 have been combined with captured EMG signals to recognize gestures. Other sensors, such as accelerometers and inclinometers, have also been integrated into wearable devices to identify gestures. Among these forms of bio-feedback, we select EMG because it directly reflects muscle activation during rehabilitation.

In this article, large gesture movements are recognized by Kinect, while slight movements and stationary holding are identified from the EMG signal. By fusing the two signals, we identify both the gesture and the hand force at the same time. A cascaded structure is proposed for the gesture decoding classifiers to achieve high recognition accuracy and reduce the tracking error.

Initial experimental system

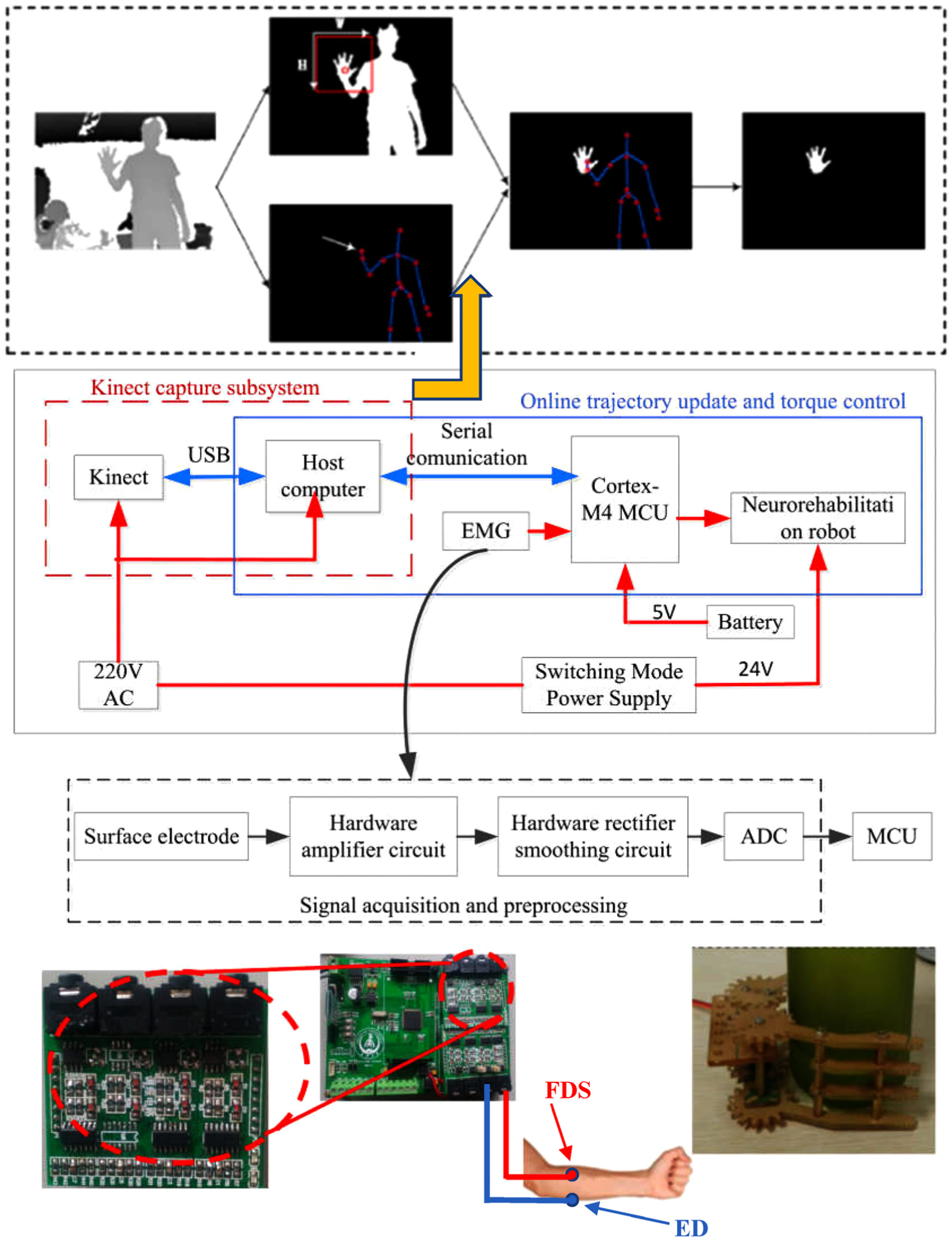

An initial robot-aided hand neurorehabilitation system that performs hand movements is designed and simulated to test the gesture recognition accuracy of the proposed algorithm. Large changes in hand gesture and the finger movement trajectory are captured by Kinect, and a rectangular area is placed around the hand and fingers within the captured image. Decoding algorithms are then used to recognize the overall gesture. The more specific and exact finger motion is obtained from the EMG, which identifies the intention or early movement of the fingers. The recognized gestures are then converted into hierarchical control commands for the hand robot to track hand movements. The main components of the experimental system are the Kinect and EMG acquisition system, a microprocessor-based embedded controller, the signal processing and trajectory tracking control algorithms, a graphical user interface (GUI) operation system, and a motion control board for the actuator that drives the hand robot. All these components are illustrated in Figure 3 and explained in turn below.

Overall processing and control methodology for the target-oriented hand robot. An experimental system is designed and fabricated to test control algorithms that use EMG and gesture identification, covering signal processing and the derived motion trajectory that mimics flexion and extension of the interphalangeal joints of the hand.

Kinect capture and gesture decoding classifier

Because Kinect is designed to identify limb motion, the skeleton linkage with its joint nodes is easily identified. 30 Fingers are small compared with limbs, so a rectangular virtual box is used to zoom in on the hand. Gestures are recognized within this rectangular area for the finger movements normally performed in daily life. Taking picking up a cup as an example, reaching for the cup is the first step before holding it. The reaching movement involves both the upper limb and the hand. The approach trajectory of the trunk and upper limb is easily identified by Kinect. The preparatory movement of the fingers is captured and zoomed in on using the rectangular virtual box. A K-nearest neighbor (KNN) classifier is used to classify the gesture against a gesture library stored in advance. Various hand gestures are selected, classified, and stored in the host computer. The gesture decoding algorithm is presented in the next section.

EMG signal acquisition modular system

AgCl patch electrodes are placed on the muscles; this material has the significant advantage of small polarization and quickly transmits the raw EMG data. The amplifier and preprocessing stages are integrated in an electronic acquisition modular system with a sampling time of 0.015 s. In this application, electrodes are attached to the intended locations on the forearm to detect myoelectric information. The EMG signal is collected and conveyed through an electronic circuit, where it is amplified, rectified, and smoothed, and then passes through the analog-to-digital converter (ADC) to form the output of this modular system. The EMG signal acquisition and conditioning hardware is shown in Figure 3. The amplifier is the INA128, a low-power, high-precision, low-noise instrumentation amplifier. Its input offset voltage is 50 µV, and its common mode rejection ratio is 120 dB. The rectifier circuit uses the 1N4148, a small high-speed switching diode. The filter circuit is a fourth-order band-pass filter consisting of a second-order multiple feedback (MFB) high-pass filter and a second-order MFB low-pass filter. The passband is 1 to 1000 Hz; the lower cutoff of 1 Hz filters out low-frequency and DC interference. The conversion resolution of the ADC module is 12 bits. Self-adhesive silver chloride dual-snap electrodes are attached to the upper-limb muscles flexor digitorum superficialis (FDS) and extensor digitorum (ED).

Embedded controller based on microprocessor

The embedded controller is implemented on an STM32F407VET6 microcontroller, which is based on the ARM Cortex-M4 core. The microcontroller obtains the EMG signals from the EMG signal acquisition modular system and transmits them to the host computer over a USB cable for further processing and analysis. The host computer sends control signals back to this main control board. The microcontroller also controls the motor that flexes and extends the hand robot according to the processing results.

EMG signal processing

Because the EMG signal is very small, acquisition is prone to instability and vulnerable to many kinds of disturbance, such as noise on the acquisition cable, and the adhesive electrodes on the skin surface and their surroundings introduce adverse effects and crosstalk. Even after hardware preprocessing, the signals remain noisy and are hard to use directly. Therefore, to extract a meaningful and accurate EMG signal, further algorithms are needed for noise cancellation, filtering, and smoothing. In this article, online processing algorithms written in MATLAB (MathWorks, USA) are developed and incorporated into the GUI system. This unit runs on the host computer: the acquired EMG signal is processed and analyzed to extract a myoelectric bio-feedback signal, which is sent back to the microprocessor for online control of the robot-aided hand rehabilitation.

GUI operation system and motion control panel for the hand robot

To facilitate displaying, operating, and debugging the system, a customized GUI is developed. The GUI operation system consists of two panels: one monitors the Kinect and EMG signals, and the other shows the generated motion trajectory and torque commands that are sent out for control. A two-phase four-wire stepper motor is applied to the moving joint to mimic the flexion and extension of the finger joints. The STM32F407VET6 is used as the controller, and the YKD2405M is used as the motor driver with a 24 VDC power supply. The YKD2405M provides 16 externally selectable constant-torque subdivision (microstepping) settings, up to 200 subdivisions. Driving the stepper motor through a subdivision driver increases its positioning accuracy, which matters when items must be fetched relatively precisely.

A cascaded Kinect and EMG gesture decoding algorithm

After establishing the initial experimental system, gesture decoding algorithms for flexion and extension of the wrist and interphalangeal joints on the hand robot are investigated. Grabbing an object has two stages: before touching the object, and holding the object to pick it up. In the first stage, hand gestures are captured by Kinect and classified with KNN into the typical gestures that occur while grabbing objects. EMG signals from the FDS and ED, which work as an agonist–antagonist pair, are collected at the same time. The FDS contracts while the ED relaxes to flex the wrist joint; extension requires the opposite contraction and relaxation of this muscle pair. This activation and inactivation, as reflected in the processed EMG signals, can be represented by contraction and relaxation forces. In the second stage, there is no significant hand movement, and the hand gesture remains the same while holding the object, so Kinect cannot distinguish different grabbing forces. This force can instead be estimated from the EMG signals of the FDS and ED, which are activated to make the interphalangeal joints hold the object. To solve this problem, Kinect and EMG signals are fused to control the hand robot. EMG analysis provides motion information including hand force, duration, and movement intention. Therefore, a cascaded Kinect and EMG gesture decoding algorithm is proposed that uses images captured by Kinect and a two-channel EMG collection system, as shown in Figure 4. The baud rate for serial communication in the experiment is 9600. All historical and current Kinect and EMG signals are monitored simultaneously, and the control algorithm is implemented in software with cable-connected or wireless communication.

EMG and Kinect gesture decoding algorithms applied on the hand robot. The modular system is presented including Kinect motion capture system, two-channel EMG collection system, motorized hand robot, and online monitoring and control system.

The Kinect-based gesture identification algorithm

To identify the gesture against the background with high accuracy, a host computer with a 2.5-GHz central processing unit (CPU), a Microsoft Xbox 360 Kinect camera, and a programming environment of Visual Studio 2010 with OpenCV 2.4.0 and Kinect for Windows SDK v1.8 are used. Based on the experiments,

Step 1. Normalize gesture figure into pixel of

Step 2. Compare the gesture extracted from the Kinect system and normalized to

where I(i, j) is the pixel in row i and column j of the input gesture image, Lt(i, j) is the pixel in row i and column j of the tth stored image, and the difference between the test gesture and each gesture in the database is

Step 3. Sort D from minimum to maximum and define the k nearest gestures

where M is the total number of gestures and Cj is the class j.
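The three steps above can be illustrated with a minimal Python sketch. The display equations are not available here, so the distance measure (a sum of absolute pixel differences) and the majority vote are assumptions, and the names `image_distance` and `knn_classify` are hypothetical, not taken from the original implementation.

```python
from collections import Counter


def image_distance(img_a, img_b):
    """Sum of absolute pixel differences between two equally sized images.

    Assumed distance measure; the article's own definition of D may differ.
    """
    return sum(
        abs(a - b)
        for row_a, row_b in zip(img_a, img_b)
        for a, b in zip(row_a, row_b)
    )


def knn_classify(test_img, library, k=3):
    """Return the majority label among the k stored gestures nearest to test_img.

    library is a list of (image, label) pairs, i.e. the stored gesture library.
    """
    # Step 3: sort the stored gestures by distance D, smallest first.
    ranked = sorted(library, key=lambda item: image_distance(test_img, item[0]))
    # Vote among the k nearest neighbors.
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]


# Tiny 2x2 binary "gesture images" used only for illustration.
gesture_library = [
    ([[1, 1], [0, 0]], "open"),
    ([[1, 1], [1, 0]], "open"),
    ([[0, 0], [1, 1]], "fist"),
    ([[0, 1], [1, 1]], "fist"),
]
predicted = knn_classify([[1, 1], [0, 1]], gesture_library, k=3)
```

In practice the images would first be normalized to a common size (Step 1) so that the pixel-wise comparison is meaningful.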

Gesture extracted after image segmentation by Kinect.

A hand-node approximation method is applied: the hand node, taken as the center of the palm, serves as the origin, and the distance between the hand node and the wrist node serves as the radius of a circle. The mean position of the white pixels within this circle is obtained as (xp, yp)

where T is the number of white pixels within the circle, xi is the x-coordinate of the ith white pixel, and yi is the y-coordinate of the ith white pixel.
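As a rough illustration of this palm-center estimate, the sketch below averages the coordinates of the white pixels that lie within the circle around the hand node. It assumes a binary mask indexed as `mask[y][x]`; the function name `palm_centroid` is hypothetical.

```python
import math


def palm_centroid(mask, hand, wrist):
    """Mean (x, y) of white pixels within the circle centered on the hand node.

    mask  : 2D list of 0/1 values, indexed mask[y][x] (1 = white pixel)
    hand  : (x, y) of the hand node, used as the circle center
    wrist : (x, y) of the wrist node; its distance to hand is the radius
    """
    radius = math.dist(hand, wrist)
    xs, ys = [], []
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value and math.dist((x, y), hand) <= radius:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None  # no white pixel inside the circle
    # T = len(xs) white pixels; average their coordinates.
    return (sum(xs) / len(xs), sum(ys) / len(ys))


example_mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 1],  # this pixel lies outside the circle and is ignored
]
center = palm_centroid(example_mask, hand=(2, 2), wrist=(2, 4))
```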

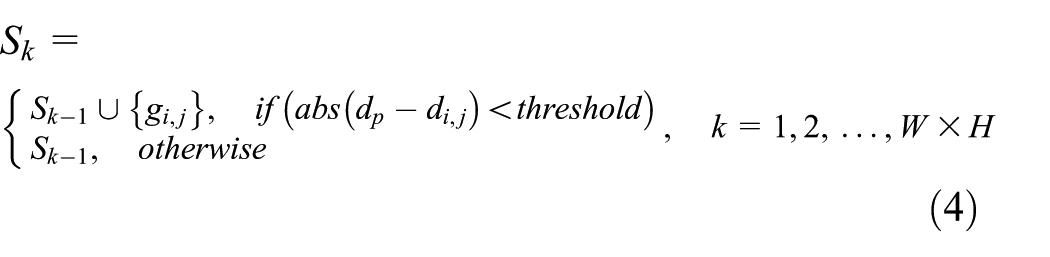

Let dp be the distance from the hand node extracted by Kinect to the Kinect camera and let the palm node be (xp, yp); the search is performed within a rectangle of width W and height H,

where k is the search iteration, threshold is the depth threshold relative to the palm node, and abs(dp − di,j) is the absolute depth difference between the palm node and the gesture pixel at (i, j). Sk is the final extracted set of gesture points.
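The depth-based extraction can be sketched as follows. This is a simplified single-pass version under stated assumptions: the rectangle is centered on the palm node, a pixel is kept when its depth differs from dp by less than the threshold, and the iterative search over k is omitted. The function name `segment_hand` and the sample depth values are hypothetical.

```python
def segment_hand(depth, palm, d_p, width, height, threshold):
    """Collect pixels near the palm whose depth is close to the palm depth.

    depth     : 2D list of depth values, indexed depth[y][x]
    palm      : (xp, yp) palm node in pixel coordinates
    d_p       : depth of the palm node
    width     : W, width of the search rectangle (assumed centered on the palm)
    height    : H, height of the search rectangle
    threshold : maximum allowed abs(d_p - d_ij) for a pixel to be kept
    """
    xp, yp = palm
    rows, cols = len(depth), len(depth[0])
    points = set()
    for y in range(max(0, yp - height // 2), min(rows, yp + height // 2 + 1)):
        for x in range(max(0, xp - width // 2), min(cols, xp + width // 2 + 1)):
            if abs(d_p - depth[y][x]) < threshold:
                points.add((x, y))
    return points


# Hand at roughly 800 mm, background at 1500 mm (illustrative values only).
depth_map = [
    [800, 805, 1500],
    [810, 800, 1500],
    [1500, 1500, 1500],
]
hand_pixels = segment_hand(depth_map, palm=(1, 1), d_p=800,
                           width=3, height=3, threshold=20)
```

Thresholding on depth rather than color is what lets the hand be separated from a cluttered background.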

EMG signal processing algorithms

EMG signal processing consists of two parts: preprocessing and processing. Preprocessing, performed during collection, includes absolute value processing, root mean square (RMS) processing, and Butterworth low-pass filtering. Processing includes median filtering, moving-average smoothing, and normalization.

The absolute value processing is shown in equation (5)

where A(t) is the original collected EMG signal and B(t) is the signal after absolute value processing.

The RMS processing is shown in equation (6)

where n is the number of EMG signals collected in 50 ms and BRMS is the RMS value.

After the RMS processing, the resulting RMS values are transformed into the frequency domain and filtered with a Butterworth low-pass filter, as shown in equation (7)

where n is the order of the filter; according to trials, we find that the fourth-order Butterworth low-pass filter can achieve satisfactory results.
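The preprocessing chain can be sketched in plain Python. The forms used here are the textbook ones and are assumptions about equations (5) to (7): B(t) = |A(t)|, the RMS of n samples as the square root of their mean square, and the Butterworth magnitude response 1/sqrt(1 + (f/fc)^(2n)). Non-overlapping RMS windows and the function names are also assumptions.

```python
import math


def rectify(signal):
    """Absolute value processing: B(t) = |A(t)|."""
    return [abs(a) for a in signal]


def window_rms(signal, n):
    """RMS over consecutive non-overlapping windows of n samples."""
    return [
        math.sqrt(sum(b * b for b in signal[i:i + n]) / n)
        for i in range(0, len(signal) - n + 1, n)
    ]


def butterworth_gain(freq, cutoff, order=4):
    """Magnitude response of an ideal Butterworth low-pass filter.

    order=4 matches the fourth-order filter reported as satisfactory.
    """
    return 1.0 / math.sqrt(1.0 + (freq / cutoff) ** (2 * order))


raw = [3.0, -4.0, 3.0, -4.0]
rectified = rectify(raw)               # [3.0, 4.0, 3.0, 4.0]
rms_values = window_rms(rectified, 2)  # one RMS value per 2-sample window
gain_at_cutoff = butterworth_gain(10.0, 10.0)
```

At the cutoff frequency the gain is 1/sqrt(2) regardless of order; a higher order only steepens the roll-off above the cutoff.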

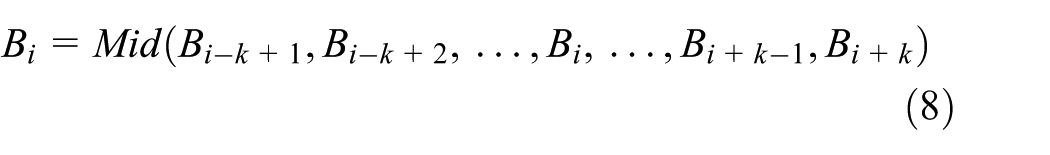

Step 1. Median filtering replaces the current value B(i) with the median of a section of the original signal of length 2k around B(i) (interval [i − k + 1, i + k]), that is, Bi−k+1, Bi−k+2, …, Bi, …, Bi+k−1, Bi+k. The median filter is shown in equation (8)

Step 2. Moving-average smoothing takes B(i) as the center, selects the n data points before and after it, that is, Bi−n, Bi−n+1, …, Bi, …, Bi+n−1, Bi+n, and replaces the current value B(i) with their average; this is shown in equation (9)

Step 3. Normalization converts the amplitude to [0, 1] using a linear function, that is
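A minimal Python sketch of the three processing steps follows. The window definitions come from the text; clipping the windows at the ends of the signal and using min-max scaling for the linear normalization are assumptions, since the original equations are not reproduced here.

```python
import statistics


def median_filter(b, k):
    """Replace each B(i) with the median over [i - k + 1, i + k], clipped at the ends."""
    out = []
    for i in range(len(b)):
        lo, hi = max(0, i - k + 1), min(len(b), i + k + 1)
        out.append(statistics.median(b[lo:hi]))
    return out


def moving_average(b, n):
    """Replace each B(i) with the mean of B(i - n) .. B(i + n), clipped at the ends."""
    out = []
    for i in range(len(b)):
        window = b[max(0, i - n):min(len(b), i + n + 1)]
        out.append(sum(window) / len(window))
    return out


def normalize(b):
    """Linearly map the amplitude to [0, 1] (min-max scaling, assumed)."""
    lo, hi = min(b), max(b)
    if hi == lo:
        return [0.0] * len(b)
    return [(v - lo) / (hi - lo) for v in b]


spiky = [1, 100, 2, 3, 2]             # 100 is an isolated artifact
despiked = median_filter(spiky, k=2)  # the spike is suppressed
smoothed = moving_average([0, 3, 6], n=1)
scaled = normalize([2, 4, 6])
```

The median filter is applied first because it removes isolated spikes without smearing them into neighboring samples, which a moving average alone would do.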

EMG signal preprocessing: (a) the raw EMG data and (b) the result of the EMG preprocessing.

The result of processing is shown in Figure 7.

Result of proposed signal processing algorithms: (a) result for EMG signal processing of Flexor Digitorum Superficialis (FDS), (b) result for EMG signal of Extensor Digitorum (ED), and (c) normalization of grabbing force.

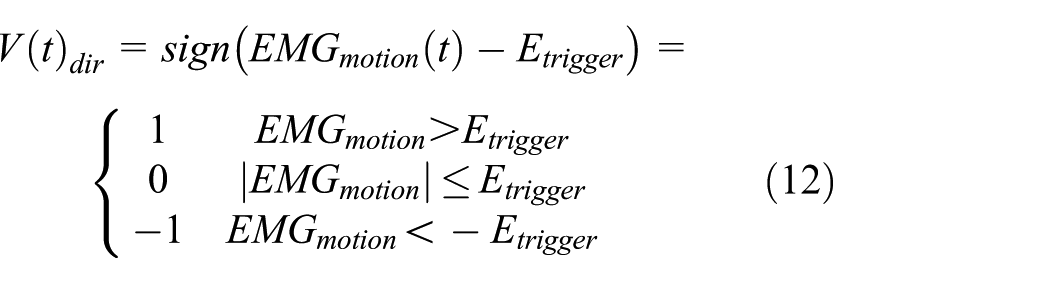

After real-time acquisition and processing of the neuromuscular EMG signal, the control law applied to the wrist joint is given by

where V(t) dir is the direction of hand movement, B(t) is the processed EMG signal obtained from the FDS, T(t) is the processed EMG signal obtained from the ED, and Etrigger is the trigger threshold. The case V(t) dir = 0 applies while the object is being held.
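One plausible reading of such a threshold-triggered control law is sketched below. This is an assumption, not the article's equation: the direction is taken as flexion when the FDS signal exceeds the ED signal by more than Etrigger, extension in the opposite case, and zero (holding) otherwise. The sign convention and the function name `movement_direction` are hypothetical.

```python
def movement_direction(b_fds, t_ed, e_trigger):
    """Return +1 (flexion), -1 (extension), or 0 (hold).

    b_fds     : processed EMG amplitude of the FDS, B(t)
    t_ed      : processed EMG amplitude of the ED, T(t)
    e_trigger : trigger threshold, Etrigger

    Assumed rule: the agonist must exceed the antagonist by more than
    the threshold before the joint is commanded to move.
    """
    difference = b_fds - t_ed
    if difference > e_trigger:
        return 1
    if difference < -e_trigger:
        return -1
    return 0


flex = movement_direction(0.8, 0.1, e_trigger=0.2)
extend = movement_direction(0.1, 0.7, e_trigger=0.2)
hold = movement_direction(0.5, 0.45, e_trigger=0.2)
```

A dead band of this kind keeps small co-contraction or residual noise from triggering unintended motion while the object is held.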

A cascaded control based on Kinect and EMG

Combining Kinect recognition with EMG signal analysis yields the motion of the interphalangeal joint while holding the object, which allows fine movement of the hand to be controlled

where Control(t) is the target-oriented trajectory of the rehabilitation robot effector, VKinect(t) is the corresponding action obtained by Kinect recognizing gestures, and

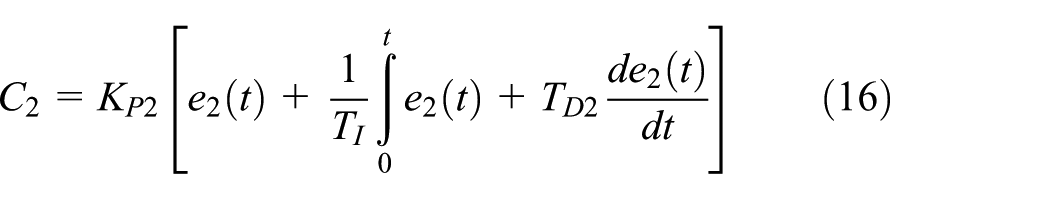

The proposed control algorithm uses a double closed-loop cascade structure. The outer loop performs tracking control of the joint angle, and the inner loop performs torque control to realize the force required to grab the object. The reference joint angle is obtained by Kinect capture, and the difference between the desired angle and the output angle of the final actuator generates an error signal e1. The gesture recognition angle controller C1(s) acts on this error signal to keep it decreasing. The inner loop uses the hand force to regulate the torque error. Taking holding a cup as an example, the desired hand torque is obtained by EMG analysis, and the EMG torque controller adjusts the torque output of the motor according to the torque error. The control block diagram of the cascade control system for the initial hand rehabilitation training robot incorporating EMG and gesture is shown in Figure 8, and the expected input of the system is shown in equation (14). It consists of two parts: the first is the angle of the interphalangeal joint obtained by Kinect, and the second is the torque obtained by EMG analysis. The transfer function of the inner loop control system is shown in equation (15)

where Y1(s) is the output of inner loop controller, Gv(s) is the actuating motor, G2(s) is the system torque model, and e2(s) is the error signal of torque.

Expression for EMG torque controller C2(s) is shown in equation (16)

where Kp2 is the proportionality coefficient and e2 is the error of torque.

The transfer function of the outer loop control system is shown in equation (17)

where Y1(s) is the output of the system and e1(s) is the tracking error. Expression for gesture recognition angle controller C1(s) is shown in equation (18)

In the hand movement process, Kinect captures the hand motion trajectory, which drives the overall robot-aided movement; this is then combined with the movement intention of the five fingers and the muscle contraction strength obtained by EMG analysis.
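The double closed-loop structure can be illustrated with a small discrete-time simulation. It is only a sketch under strong assumptions: the outer controller C1 is taken as a pure proportional gain, the motor and joint are simple first-order and inertia-damping models with made-up parameters, and the torque setpoint comes from the outer loop alone rather than from EMG analysis. Only the proportional form of the inner controller C2(s) = Kp2 follows the text.

```python
def simulate_cascade(theta_ref, kp1=0.5, kp2=5.0, dt=0.001, t_end=3.0,
                     tau_motor=0.02, inertia=0.01, damping=0.1):
    """Simulate an angle (outer) and torque (inner) cascade with Euler steps.

    All plant parameters are hypothetical values chosen for a stable demo.
    Returns the joint angle at every time step.
    """
    theta = omega = torque = 0.0
    history = []
    for _ in range(int(t_end / dt)):
        e1 = theta_ref - theta      # outer loop: joint angle error
        torque_ref = kp1 * e1       # C1: proportional gain (assumed form)
        e2 = torque_ref - torque    # inner loop: torque error
        u = kp2 * e2                # C2(s) = Kp2, proportional torque control
        # Motor modeled as a first-order lag from command u to output torque.
        torque += dt * (u - torque) / tau_motor
        # Joint modeled as inertia with viscous damping.
        omega += dt * (torque - damping * omega) / inertia
        theta += dt * omega
        history.append(theta)
    return history


angles = simulate_cascade(theta_ref=1.0)
final_angle = angles[-1]
```

Because the inner torque loop is much faster than the outer angle loop, torque disturbances are corrected before they show up as angle error, which is the usual motivation for a cascade arrangement.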

Control block diagram of cascade control system integrating EMG and Kinect gesture recognition.

Simulation and test results

With this combined Kinect and EMG decoding algorithm, satisfactory tracking results are obtained. The gesture recognition accuracy is listed in Table 1. Before the object is touched, the accuracy with Kinect alone is satisfactory. However, recognition accuracy decreases for the holding gesture, in which the hand is not moving. With the cascaded inner EMG loop, the recognition accuracy increases to 91.23%. Furthermore, the cascaded EMG loop provides torque control. Without torque control, grabbing an object is difficult: if the torque is too small, the grasp cannot be maintained, and if it is too large, the object will be damaged. With the EMG signal integrated, a torque control loop is added to the system, so that fine motion control is realized on top of the goal-oriented movement. The proposed cascaded Kinect and EMG gesture decoding algorithms are simulated and tested on the initial robot-aided rehabilitative hand.

Comparison of gesture recognition accuracy with and without the cascaded EMG feedback loop.

The movement of the interphalangeal joint is studied while the hand gesture remains unchanged to hold the object. To verify the effectiveness of the algorithm, an experimental model is tested. Driving the rehabilitative robot with the integrated EMG and Kinect gesture recognition algorithm is compared with driving it by Kinect gesture capture alone in Figure 9.

Comparisons of the proposed method and Kinect tracking system: (a) the result of tracking driven only by Kinect, (b) the result of tracking driven by Kinect and EMG, and (c) the result of the tracking error; the solid line is the result of Kinect, and the dotted line is the result of Kinect and EMG.

Driving an exoskeleton to grasp items using Kinect gesture recognition alone is very difficult. The cascade control that integrates EMG signal analysis with Kinect achieves the grasping action, although gesture identification, which starts the outer loop, introduces a response delay of 1.5 s. The simulation results show that a rehabilitation robot driven by Kinect gesture recognition alone can achieve the motion, but with a total error of 70.5601. Driving the robot with the combined EMG and Kinect gesture recognition algorithm reduces the tracking error to 28.0744, a reduction of 60.21%. Compared with Kinect-only gesture recognition, the algorithm integrating EMG and Kinect responds to the error signal earlier and reduces the error sooner, which gives more accurate motion control of the robot hand.
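The reported reduction can be checked directly from the two total errors. The short sketch below computes the relative reduction; the function name `relative_reduction` is introduced here only for illustration.

```python
def relative_reduction(before, after):
    """Percentage by which `after` is lower than `before`."""
    return (before - after) / before * 100.0


reduction = relative_reduction(70.5601, 28.0744)
print(f"{reduction:.2f}%")  # prints 60.21%
```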

Concluding remarks

An active-assistive control algorithm has been developed to achieve steady tracking of target-oriented movement for a rehabilitation training hand robot, which has been built as an initial experimental platform in this article. The hand gesture is first captured and identified by Kinect, and EMG signals from the forearm are collected and analyzed for an agonist–antagonist muscle pair that drives the flexion and extension of the wrist and interphalangeal joints of the hand. Based on the interpreted gesture and the EMG analysis, a cascaded control loop is proposed for the rehabilitation training hand robot, in which both position and torque are fused into the control loop. This is a potential way to improve target-oriented rehabilitation training and to address the ineffective passive training of traditional robot-aided rehabilitation. Initial application of Kinect gesture recognition combined with the EMG signal has shown higher recognition accuracy. The target-oriented, active-assisted control methodology enables the robot to follow the movement of the human hand. It is expected to provide an alternative to manual therapy in the near future and is an important investigation into the use of robotics in rehabilitation medicine. This initial exploration links clinical science and engineering more closely. Although the initial experimental platform and the proposed algorithm have been verified to be effective, further research is still needed.

Footnotes

Acknowledgements

The authors would like to express their appreciation to Mr Sheng Yi, Miss Ni Zhang, and Miss Qian Zhang for their help with various aspects of the present work.

Handling Editor: Francesco Aggogeri

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China (grant no. 51505037); Key Science and Technology Program of Shaanxi Province, China (grant no. 2016JM6059); China Postdoctoral Science Foundation (grant no. 2016M600814); and the Fundamental Research Funds for the Central Universities (grant nos 3102017zy023 and 310832173702).