Abstract

Vision-guided telerobots are often used to execute tasks such as grasping and classification in varied environments, which may contain unfamiliar objects that are absent from the matching library. Hence, new templates must be created dynamically for the unfamiliar objects. However, this procedure is inconvenient for traditional template matching algorithms. In this article, a novel map-based normalized cross correlation algorithm is proposed. It consists of two phases. In the learning phase, map-based normalized cross correlation dynamically creates a new template and map with the superpixel-based GrabCut method, which distinguishes it from previous template matching algorithms. In the matching phase, a map-based similarity evaluation is designed to determine the position and rotation angle of the object, where the map is used to eliminate the interference of the background. Experiments demonstrate that the superpixel-based GrabCut method is more robust against noise than the traditional GrabCut algorithm and can separate the object from a texture-rich background with fewer iterations and less time. Additionally, the map-based normalized cross correlation algorithm locates objects in texture-rich images more accurately than the polar transformation and image pyramids normalized cross correlation algorithm, especially for irregularly shaped objects.

Keywords

Introduction

Telerobots are a special type of robot widely applied in space stations, undersea detection, telemedicine facilities, and other remote control settings.1,2 To improve the efficiency of telerobots and relieve the workload of the operator, smart systems have been designed and applied to tasks including force feedback teleoperation,3–5 autonomous obstacle avoidance, and so on.6,7

Vision-based positioning, an important machine vision technology, is increasingly applied in telerobot systems.8–10 It enables a telerobot to grasp accurately and classify efficiently without manual intervention. Pixel-based template matching is one of the most popular methods for determining the target position and rotation angle.11–13

In the past decades, various pixel-based template matching algorithms have been investigated. These algorithms work as follows: given a template image

In addition, if the object in the target image is rotated with respect to the template, a polar transformation is used to reduce the computing load.25–27 A polar transformation requires a circular template. However, a circular template is problematic for elongated or irregularly shaped objects such as bolts, rivets, and chips. If the incircle region is selected, the template does not contain all of the pixels belonging to the object. If the excircle region is selected, some pixels outside the object are included. Both situations lead to inaccurate matching. To solve this problem, some researchers proposed the region-based normalized cross correlation (RB-NCC) algorithm,28 but this algorithm is not robust against texture-rich backgrounds because it works with a rectangular bounding box derived from the binary image.

Moreover, most traditional template matching algorithms work as depicted in Figure 1: the template image must be created manually from a reference image in offline mode. This procedure is inconvenient, especially for irregularly shaped objects, so a library containing all objects is usually established before matching. In practice, it is difficult to ensure that all objects are included in advance for a telerobot that often works in unfamiliar environments. Consequently, traditional template matching algorithms are not flexible enough for telerobots. Yet if large numbers of tasks such as grasping and classification are carried out without the assistance of template matching algorithms, the workload of the operator increases considerably.

Procedure for traditional template matching algorithms.

In this article, a novel template matching algorithm named map-based normalized cross correlation (MB-NCC) is proposed. Our contributions can be summarized as follows: (1) The MB-NCC algorithm dynamically creates a new template for an unfamiliar object, which distinguishes it from traditional template matching algorithms. It can take over repetitive tasks such as grasping and classification from the operator and thus relieve the operator's workload. (2) The SB-GrabCut method, which combines superpixels with the GrabCut algorithm,29–34 is applied to create the template. It separates the object from a texture-rich background with fewer iterations and less time than the traditional GrabCut algorithm. (3) A map is used in the similarity evaluation to eliminate the interference of the background, which makes MB-NCC more robust against noise.

The remainder of this article is organized as follows. The overview of MB-NCC algorithm is presented in section “The overview of MB-NCC algorithm.” Then, the approach of MB-NCC is introduced in section “The approach of MB-NCC.” Implementation details of MB-NCC are described in section “The implementation of MB-NCC” and relevant experiments are provided in section “Experimental results and discussions.” Finally, we conclude the article in section “Conclusion.”

The overview of MB-NCC algorithm

In this section, the overview of our MB-NCC algorithm is described. Figure 2 depicts the brief procedure for MB-NCC algorithm. MB-NCC is summarized into two phases, and both of them work in the online mode:

In the learning phase, the SB-GrabCut method is used to create the map and template image. The polar-transformed template image

In the matching phase, the target image

Brief procedure for our algorithm.

The approach of MB-NCC

According to the two phases as described in section “The overview of MB-NCC algorithm,” the approach of MB-NCC is divided into two parts: (1) the creation of map and template by SB-GrabCut method and (2) the map-based similarity evaluation.

SB-GrabCut

As mentioned in section “Introduction,” a polar transformation requires a circular template for the object. For a square or round object, the circular template includes most of its pixels, as shown in Figure 3(a) and (b). But when the object is elongated or irregular, selecting the circular template is problematic. If the incircle region is selected, as shown in Figure 3(c), the circular template cannot contain all of the pixels of the object. If the excircle region is selected, as shown in Figure 3(d), some pixels outside the object are also included. Both situations lead to inaccurate matching. In traditional template matching algorithms, such as the NCC algorithm using polar transformation and image pyramids, the template image is created in offline mode. In that case, the useless pixels in the circular template can be eliminated manually. However, manual elimination is impractical when templates must be created dynamically. To overcome this shortcoming, a novel SB-GrabCut method is applied to create the template from the excircle region of the unfamiliar object by separating the object from the background.
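To make the role of the circular template concrete, the following is a minimal sketch, in Python with NumPy, of resampling a circular region onto a radius-by-angle grid. The function name `to_polar`, its parameters, and the nearest-neighbour sampling are assumptions for illustration and are not taken from the implementation used in this article.

```python
import numpy as np


def to_polar(image, center, radius, n_radii=32, n_angles=360):
    """Resample a circular region of a 2-D image onto a (radius, angle) grid.

    Hypothetical helper: rows index the radius from 0 to `radius`,
    columns index the angle from 0 to 360 degrees (exclusive).
    Nearest-neighbour sampling is used to keep the sketch short.
    """
    cy, cx = center
    radii = np.linspace(0.0, radius, n_radii)
    angles = np.deg2rad(np.arange(n_angles) * 360.0 / n_angles)
    rr, aa = np.meshgrid(radii, angles, indexing="ij")
    # Convert each (radius, angle) sample back to image coordinates.
    ys = np.clip(np.rint(cy + rr * np.sin(aa)).astype(int), 0, image.shape[0] - 1)
    xs = np.clip(np.rint(cx + rr * np.cos(aa)).astype(int), 0, image.shape[1] - 1)
    return image[ys, xs]
```

When `radius` is set to the excircle radius of an elongated object, every background pixel inside the circle is sampled along with the object, which is the interference that a foreground mask is meant to remove.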

Different shapes of objects: (a) circular template for a circular object, (b) circular template for a square object, (c) circular template based on the incircle region of the irregularly shaped object, and (d) circular template based on the excircle region of the irregularly shaped object.

SB-GrabCut is the most crucial component of MB-NCC. Briefly, SB-GrabCut separates the foreground (the object) from the background and obtains the foreground mask. In MB-NCC, this mask is used to create the map and the background-free template. SB-GrabCut is composed of two procedures: superpixel-based simplification and GrabCut-based segmentation.

Superpixel-based simplification works by gathering similar, neighboring pixels into the same block and then averaging them. Compared with the traditional K-means algorithm, our method simplifies the image based on local pixel blocks, which ensures that the pixels in the same region are more similar and that the boundaries between different regions are smoother.
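The averaging step can be sketched as follows, assuming that a superpixel label map is already available. How the labels are obtained (the histogram-based grouping of this article) is not reproduced here; the function name `average_by_superpixel` and its arguments are hypothetical.

```python
import numpy as np


def average_by_superpixel(image, labels):
    """Replace every pixel by the mean colour of its superpixel.

    image:  (H, W, C) array of colour values.
    labels: (H, W) integer array with superpixel ids 0..K-1 (assumed given).
    """
    flat_labels = labels.ravel()
    counts = np.bincount(flat_labels)
    out = np.empty_like(image, dtype=float)
    for c in range(image.shape[2]):
        # Sum each channel per superpixel, then divide by the pixel count.
        sums = np.bincount(flat_labels, weights=image[..., c].ravel())
        means = sums / np.maximum(counts, 1)
        out[..., c] = means[labels]
    return out
```

Because every pixel inside a block receives the same value, texture within a block disappears while the block boundaries remain.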

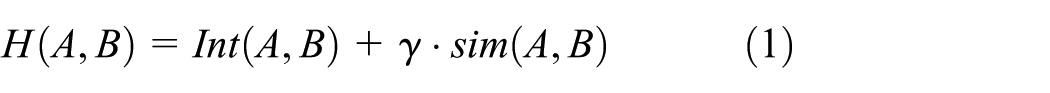

Considering the superpixel transformation as a histogram intersection and histogram similarity problem,35 a novel function is proposed to evaluate the similarity between two histograms as described in equation (1), which is composed of two terms.

Let

Let

Regarding

The segmentation of partitioned image.

Superpixels with different boundaries: (a) superpixels with sharp boundaries and (b) superpixels with smooth boundaries.

Figure 6 shows the effect of superpixel-based simplification. Similar pixels are gathered into the same superpixels. The simplification reduces texture but clearly retains the boundaries between the object and the background, which benefits the GrabCut segmentation.

The effectiveness of superpixel-based simplification.

In the next procedure, the GrabCut algorithm is used to separate the object from the background. GrabCut is an image segmentation method based on the Gaussian mixture model (GMM). It relies on a Gibbs energy function, defined as follows, which consists of one data term and one smoothness term
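For orientation, the two-term Gibbs energy of the original GrabCut formulation is commonly written in the form below. The symbols follow that standard formulation and are an assumption with respect to this article's own notation.

```latex
\begin{aligned}
E(\alpha, k, \theta, z) &= U(\alpha, k, \theta, z) + V(\alpha, z), \\
U(\alpha, k, \theta, z) &= \sum_{n} D(\alpha_n, k_n, \theta, z_n), \\
V(\alpha, z) &= \gamma \sum_{(m,n) \in C} [\alpha_n \neq \alpha_m]\,
                \exp\!\left(-\beta \lVert z_m - z_n \rVert^{2}\right).
\end{aligned}
```

In this form, $U$ is the data term, which sums the negative log-likelihood $D$ of each pixel colour $z_n$ under the GMM component $k_n$ of its label $\alpha_n \in \{0, 1\}$ with parameters $\theta$. $V$ is the smoothness term, which penalizes neighboring pixel pairs in $C$ that receive different labels, with a penalty that shrinks as the colour difference grows; $\gamma$ and $\beta$ are constants.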

In the above equation, the image is considered as an array

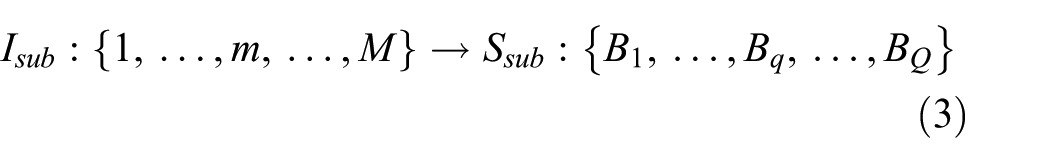

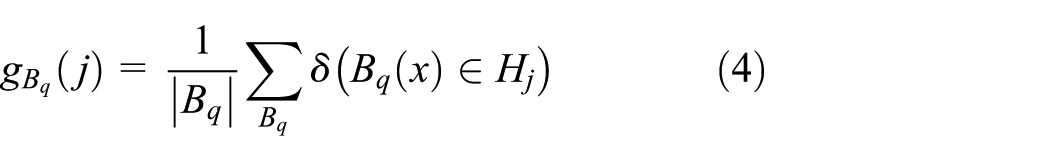

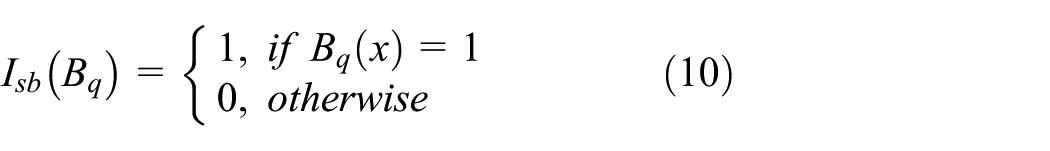

In our SB-GrabCut method, all of the pixels are divided into two sets

Benefiting from the superpixel-based simplification, which makes the difference between the object and the background clearer, the value of the energy function

The map-based similarity evaluation

As mentioned in section “Introduction,” NCC is often used in template matching. It is described as follows
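As a point of reference, the widely used zero-mean variant of the NCC score between a template and an equally sized image patch can be sketched in Python with NumPy. The function name `ncc` and its arguments are assumptions for illustration, and the normalization may differ in detail from the equation used in this article.

```python
import numpy as np


def ncc(template, patch):
    """Zero-mean normalized cross correlation of two equally shaped arrays.

    Returns a score in [-1, 1]; 0.0 is returned when either input is constant.
    """
    t = template.astype(float) - template.mean()
    p = patch.astype(float) - patch.mean()
    denom = np.sqrt((t * t).sum() * (p * p).sum())
    if denom == 0:
        return 0.0
    return float((t * p).sum() / denom)
```

The score is unaffected by a positive gain or an offset applied to the patch, which is why NCC tolerates linear brightness changes.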

However, there is an issue when NCC is used to match polar-transformed images. If the polar-transformed target image is based on the excircle region of an irregularly shaped object, as mentioned in section “SB-GrabCut,” it contains both the object and the background, so the background interferes with the NCC similarity evaluation. In our MB-NCC, the map-based similarity evaluation is used to solve this issue as formulated in equation (9), where the map

The implementation of MB-NCC

As mentioned in section “The overview of MB-NCC algorithm,” MB-NCC algorithm is separated into two phases. The details of each step are provided in the following sections.

Learning phase: creation of map-based template

Generally, the procedure of the learning phase is executed in the following four steps as described in Figure 7. In this phase, the operator controls the telerobot to grasp an object and put it aside, and then the polar-transformed template

Procedure for learning phase.

Mark the approximate region of object

An object in current image

Optimize the region of object with superpixels

Superpixel-based simplification is used for

Acquire the map and template image

In this step, SB-GrabCut is used for the separation of the object and background in

Acquire the polar-transformed map and template image

In this step, polar transformation is performed for the map

Taking the map

Matching phase: matching with MB-NCC

In this phase, the target image

The correlation between

Experimental results and discussions

In this section, experiments on different images are conducted to evaluate the effectiveness of the proposed template matching algorithm. The telerobot system is depicted in Figure 8. It is composed of a 6-degree-of-freedom (DOF) robot with a force feedback holder and a multi-axis force sensor, two cameras, a PHANTOM 7-DOF force feedback controller, and a PC. Camera1, which is mounted perpendicular to the platform, takes the target image, and Camera2 monitors the robot. The force feedback controller is connected to the PC. In the learning phase of MB-NCC, it is used to control the telerobot to grasp the object from which the template and map are created.

The telerobot system for experiments: (a) 6-DOF robot, platform, and cameras and (b) 7-DOF controller and PC.

All experiments were done using a PC with an Intel Core i5-4460 CPU operating at 3.20 GHz with 8 GB of memory and running on the Windows 7 Professional 64-bit OS. We do not use any GPU or dedicated hardware.

Effectiveness test for SB-GrabCut method

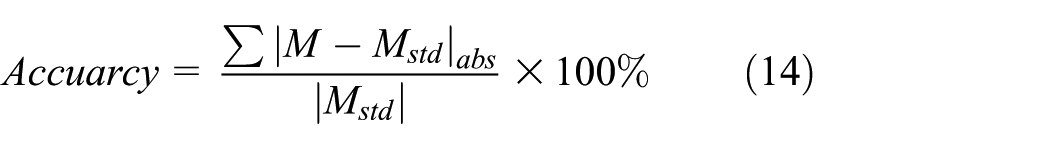

We compare the performance of our SB-GrabCut method with that of the traditional GrabCut algorithm when they are applied to crop objects from texture-rich backgrounds. Objects are cropped and maps are created by our SB-GrabCut method and by the traditional GrabCut algorithm, as shown in Figure 9.

Sample image and some different methods: (a) a sample image, (b) SB-GrabCut initialized by superpixels’ region mask

Six objects are cropped from different images and the results are presented in Figure 10. Additionally, the standard map

Objects and their maps created by different methods: (a) objects with different backgrounds and (b)–(e) maps of the objects created by the methods described in Figure 9(b)–(e).

Considering that the map is a binary image, we take the absolute difference between the maps to evaluate the accuracy of the created map as described in equation (14), where

The relationships between accuracy and the number of iterations, and between accuracy and time consumption, are recorded for the different methods in Figure 11. We conclude that our SB-GrabCut method achieves higher accuracy with fewer iterations and less time. In the comparison experiments with the six objects, our SB-GrabCut method creates the maps within two iterations, which takes less than 10 s. The reason is that our SB-GrabCut method has simplified the target image. This processing reduces texture but retains the boundaries between the object and the background. In other words, the boundaries are more pronounced than before, and it becomes easier to reach the minimum of the total energy

Results of compared tests for different methods about the creation of map: (a) the relationship between the iteration times and the accuracy of created maps and (b) the relationship between the time consumptions of different methods and the accuracy of created maps.

It is worth mentioning that although the maps created by the GrabCut algorithm with manual scribbles are more similar to the standard maps, this process takes much more time than the others. Most of the time is spent selecting the objects and drawing the manual scribbles. Moreover, the scribbles must be redrawn after each iteration to correct the segmentation, and many iterations are needed to get the best result, which is very inconvenient for the dynamic creation of templates.

Accuracy test for MB-NCC and PP-NCC algorithm

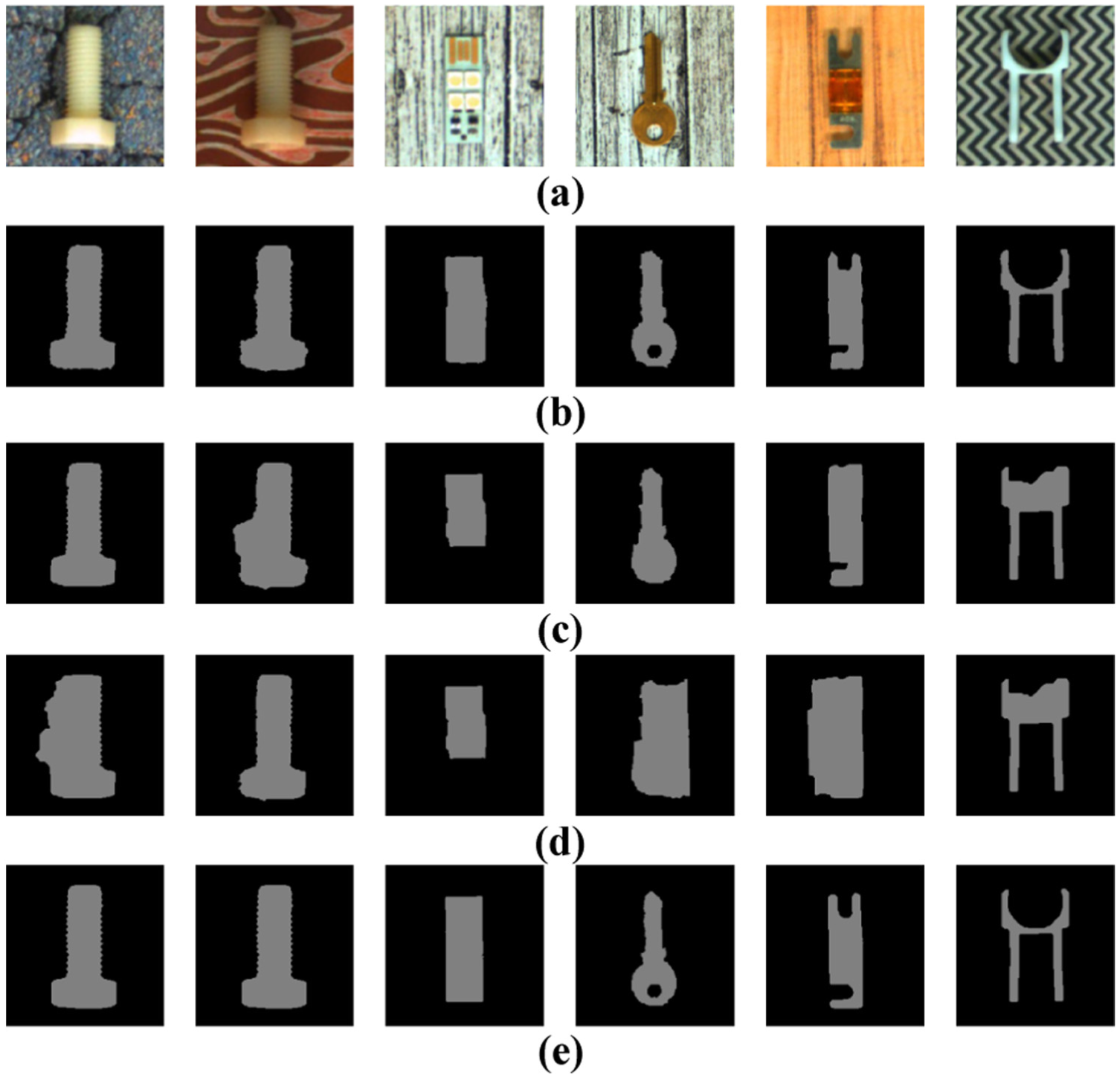

We compare the performance of the polar transformation and image pyramids normalized cross correlation (PP-NCC) algorithm with that of our proposed MB-NCC algorithm when they are applied to the matching of square, elongated, and irregularly shaped objects.

First, comparison experiments are performed between PP-NCC, using a circular template based on the excircle or incircle region, and our MB-NCC, using the map-based template. As shown in Figure 12, three kinds of objects are sought in several different images using the two algorithms. The results are recorded in Figure 13. For the images in Figure 12(a)–(f), which contain the same square object in different backgrounds, there is no obvious difference between the results of the two algorithms. For the square object, PP-NCC can work with the template based on the incircle region, since it contains most of the pixels of the object and thus avoids the interference of the background during matching. Nevertheless, the two algorithms perform differently on the images in Figure 12(g)–(r), where a good matching algorithm should still return a high similarity score. Specifically, when the shape of the object is complex, PP-NCC becomes unreliable, whereas our MB-NCC still locates the object accurately in texture-rich images.

Three kinds of objects in different backgrounds: (a)–(f) a square object in different backgrounds, (g)–(l) an elongated object in different backgrounds, and (m)–(r) an irregularly shaped object in different backgrounds.

Results of tests for the objects shown in Figure 12.

Then, the images in Figure 14(a) and (b) are rotated from 0° to 200°, and the similarity scores are calculated to evaluate the tolerance to rotation deviation. Figure 15 shows that the similarity score calculated by MB-NCC decreases more sharply than that of PP-NCC when a rotation deviation occurs. PP-NCC, on the other hand, does not behave consistently: another peak appears when the rotation deviation reaches 180°. This means that MB-NCC can estimate the rotation angle more accurately.
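The reason a sharp score profile helps angle estimation can be illustrated with a minimal sketch. It assumes that both images are already in polar form with angle along the columns, so that a rotation becomes a circular shift of the columns. The function name `estimate_rotation` is hypothetical, and the plain zero-mean NCC used here stands in for whichever similarity measure is being compared.

```python
import numpy as np


def estimate_rotation(polar_template, polar_target):
    """Find the column shift of the template that best matches the target.

    Both inputs are (n_radii, n_angles) arrays. Returns (shift, score),
    where shift * 360 / n_angles is the estimated rotation in degrees.
    """
    n_angles = polar_template.shape[1]
    t = polar_template.astype(float) - polar_template.mean()
    g = polar_target.astype(float) - polar_target.mean()
    norm = np.sqrt((t * t).sum() * (g * g).sum())
    if norm == 0:
        return 0, 0.0
    # A circular shift changes neither the mean nor the norm of the template,
    # so only the cross term has to be recomputed for each candidate angle.
    scores = np.array(
        [(np.roll(t, s, axis=1) * g).sum() for s in range(n_angles)]
    ) / norm
    best = int(np.argmax(scores))
    return best, float(scores[best])
```

If the score profile has a second peak, as reported above for PP-NCC near 180°, the `argmax` can jump to the wrong angle under small disturbances; a single sharp peak avoids this.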

A texture-rich target image containing one object.

Result of rotating deviation test for Figure 14(a) and (b) by PP-NCC and MB-NCC: (a) the result corresponding to Figure 14(a) and (b) the result corresponding to Figure 14(b).

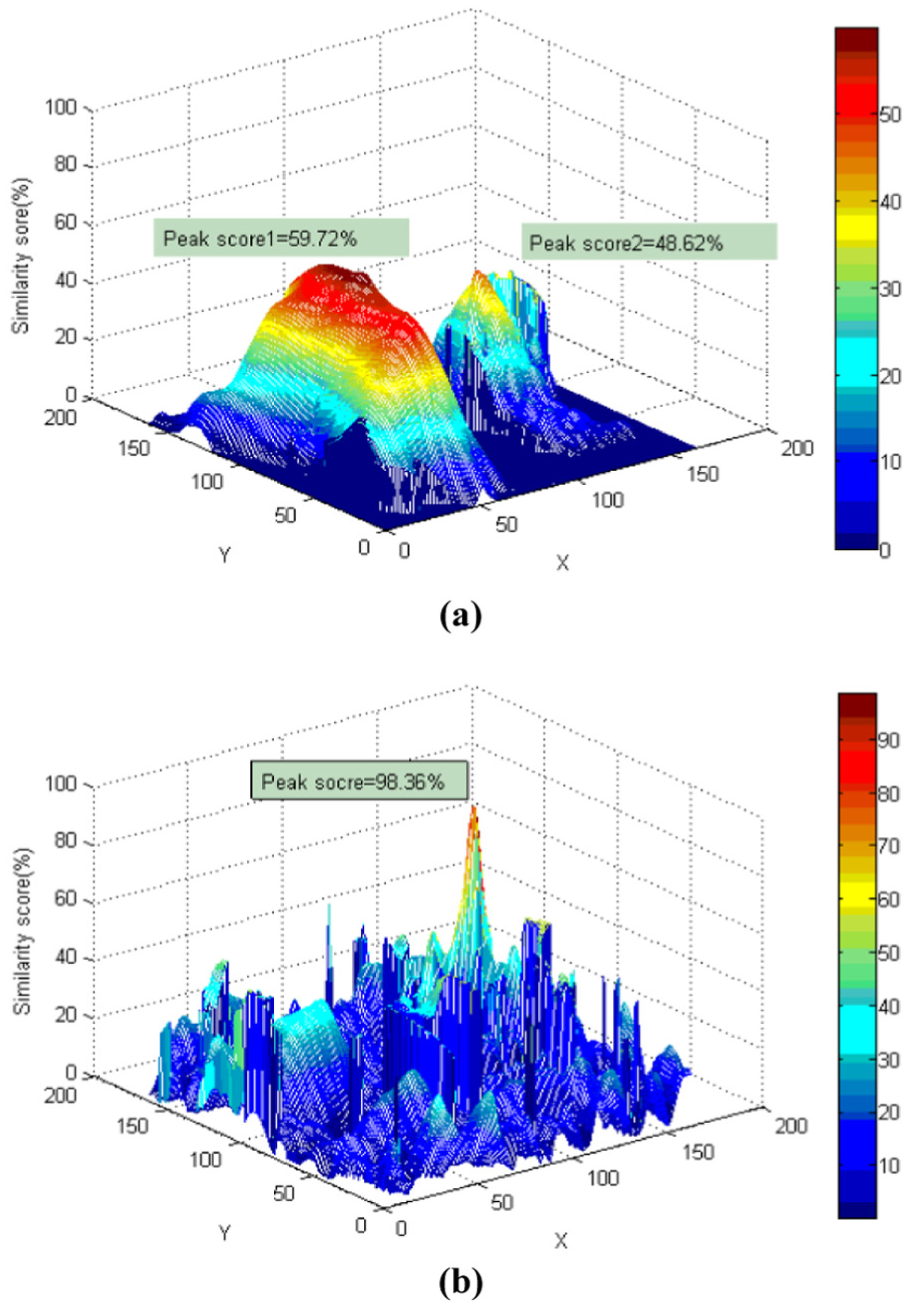

Finally, a comparison experiment is carried out to evaluate the robustness of MB-NCC. Figure 14(c) shows a texture-rich image that contains one object next to a piece of wastepaper. Both algorithms are used to locate the object. For every coordinate in the target image, the maximum similarity score over rotation angles from 0° to 360° is recorded. Figure 16(a) shows two peak similarity scores, and the higher peak corresponds to the coordinate of the wastepaper. This means that PP-NCC returns an incorrect position when matching in a texture-rich image. In contrast, the similarity scores of MB-NCC make it easy to obtain the accurate coordinate of the object. As shown in Figure 16(b), there is only one peak similarity score as the coordinate moves. The peak value is distinctly higher than the other values, and the similarity score changes more sharply than that of PP-NCC, both of which benefit the matching.

Similarity scores obtained by PP-NCC and MB-NCC: (a) similarity scores obtained by PP-NCC with the circular template based on excircle region and (b) similarity scores obtained by MB-NCC with the map-based template.

In summary, our MB-NCC is more reliable than the traditional PP-NCC, especially for matching in texture-rich images. The reason is that our MB-NCC algorithm creates a map-based template and effectively eliminates the interference of the background. When the PP-NCC algorithm is used, by contrast, the contribution of the background pixels to the matching remains substantial. In practice, a similarity score threshold is established before matching. Since the similarity score acquired by MB-NCC distinguishes the object from the background more effectively, it becomes easy to establish a suitable threshold.
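The effect of restricting the similarity evaluation to the object can be illustrated with a minimal sketch of a mask-restricted NCC. This is an illustration under stated assumptions, not the article's equation (9): the function name `masked_ncc` is hypothetical, the map is treated as a binary mask, and the zero-mean normalization is assumed.

```python
import numpy as np


def masked_ncc(template, patch, mask):
    """Zero-mean NCC computed only over pixels where `mask` is nonzero.

    template, patch, mask: arrays of identical shape.
    Pixels outside the mask (the background) do not enter the score.
    """
    m = mask.astype(bool)
    if not m.any():
        return 0.0
    t = template[m].astype(float)
    p = patch[m].astype(float)
    t -= t.mean()
    p -= p.mean()
    denom = np.sqrt((t * t).sum() * (p * p).sum())
    if denom == 0:
        return 0.0
    return float((t * p).sum() / denom)
```

With such a score, replacing the background of a patch by arbitrary texture leaves the result unchanged as long as the masked object pixels agree, which is consistent with the single sharp peak reported for MB-NCC.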

Conclusion

We proposed a novel MB-NCC algorithm. Unlike previous template matching algorithms, our MB-NCC algorithm dynamically creates a new template and map for an unfamiliar object. The procedure of MB-NCC consists of two phases. In the learning phase, the SB-GrabCut method is applied to create the template image and map by separating the object from the background. In the matching phase, a map-based similarity evaluation is designed to determine the position and rotation angle of the object, where the map is used to eliminate the interference of the background. The experimental results demonstrate that our SB-GrabCut method is more robust against noise than the traditional GrabCut algorithm. It can separate the object from a texture-rich background with fewer iterations and less time. Moreover, our MB-NCC algorithm locates objects in texture-rich images more effectively than the PP-NCC algorithm, especially for irregularly shaped objects.

When our MB-NCC is used for a vision-guided telerobot, it can take over repetitive tasks such as grasping and classification from the operator and thus relieve the operator's workload. Since the MB-NCC proposed in this article is robust against noise and resistant to the interference of the background, it could find wider application.

Footnotes

Acknowledgements

The authors sincerely thank the editor and all the anonymous reviewers for their valuable comments and suggestions.

Academic Editor: Chenguang Yang

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This paper is supported by the National Key Research and Development Program of China under grant number 2016YFB1001301, the Natural Science Foundation of China under grant number 61325018, and the Technique Support Project of Jiangsu Province under grant number BK2014132.