Abstract

The presented research investigates the accuracy of a localization algorithm using lidar (NAV-350) pose data in a GPS denied environment. A template matching solution is presented using the corners of the workspace area as markers. Results are also compared against the lidar NAV-350's own software solution which are based on average reflector distance. The presented algorithm has shown to have a higher accuracy in most cases. Data is analyzed for approximately 100 different locations in a workspace of 8.9 m by 10.7 m using an industry standard forklift with a base dimension of 1 m × 1.3 m. This paper shows how the algorithm can successfully determine the x, y coordinates and heading angle of the forklift in real time.

Introduction

Autonomous mobile robots (AMRs) are riding a prosperous market due to multinational manufacturers who are successfully including them in their warehouse automation plans. Especially because of the trend of Industry 4.0, the concept of autonomous forklift vehicles in real factory environments is of particular interest to researchers. Modern facilities are attracted by this digital trend as it increases the technological intelligence in the manufacturing industry. This leads to more efficient labor distribution and novel infrastructure design of industrial autonomous systems and automated warehouse management. 1 In this respect, a particular area of interest is in the navigation or localization of the forklift. Researchers have already shown success in path planning strategies. For small freight, they have used the shortest path algorithm and A* algorithm based on vision. 2 There is also published work on robotic forklifts for intelligent warehouse, but it has not been experimentally validated with multiple forklifts. 3 Research has been published on time-varying feedback control of unmanned autonomous industrial forklifts where sonar, laser range finder, etc. was used. 4 The control algorithm in such research forced the forklift to travel on a trajectory given by a path planner. Past research using image-based approaches utilized specific features captured by camera to differentiate between the forklift's pallets and its environment. 5 In other approaches, some used a radio frequency identification (RFID) tag, others used wireless sensors and laser pointers and also laser sensors to differentiate between the pallets and the environment.6,7 Researchers have also used a combination of ultrasound chirp and RF. 8 Indoor positioning is quite successful using ultra wide band (UWB) technology. Here multiple positioning markers with fixed coordinates are placed in specific locations and the timing of the pulses from these markers are used to calculate the three-dimensional position using the least square method. 9

There are many other similar applications using UWB with accuracy of position at centimeter-level making it a very promising field of research.10,11 However, it faces challenges from non-line-of-sight as well as attenuation of signal.12,13 Using multiple integrated sensors with UWB has also produced promising results. 14 One research claimed success using an integration of UWB anchors, IMU, and template matching algorithm, but the authors were able to achieve an error of 33.4 cm. 15 Here the template matching involved recording an image (using a monocular camera) at a particular frame and then calculating the highest similarity of a rectangular area for the next frame to determine its position.

This research is aimed at minimizing errors in a part of the navigation process of an AMR, or to be more specific, the localization of a forklift. The scope of the research in this paper will be on the localization only. The environment for this navigation is expected to be similar to an industrial warehouse where the ground will be smooth, and the presence of moving people will make the mapping of the warehouse somewhat dynamic. The ability to correctly predict the vehicle's exact location for enabling error free automated navigation requires a large amount of data evaluation from the lidar. The real challenge lies here, and this research is aimed at solving this problem with a reduction in error compared to established methods and tools. The control algorithm will be responsible for reducing the position and heading error.

The next section discusses the “Conceptual settings” involved in location and mapping. The following two sections after that will explain the methodology through the “Experimental hardware setup” and the “Mathematical model for localization of the forklift.” This will be followed by the “Results and discussion” section which also includes comparisons to other work. The paper will then end with the “Conclusion” section which also addresses future work that can be pursued in this area.

Conceptual settings

Just as we need to understand our location before navigation, so too do mobile robots. To understand their process, a few introductory concepts must be addressed first. If a robot's location is given by a pair of coordinates (x, y), they can describe its relative location (from start position) or an absolute position. But in neither case can the robot's orientation be determined. For this reason, another parameter, θ, is necessary to express its facing direction. Now the Pose vector (x, y, θ) can be used as a data structure expressing all aspects of its localization. For a three-dimensional localization, the z-coordinate can be calculated using the transpose of the two-dimensional Pose. Some research on robot behavior successfully showed the location estimation without knowing the robot's exact pose, but its accuracy was limited. 16 Mobile robot localization is the problem of determining a robot's pose relative to a given map of the environment. It is often called position estimation. The forklift's localization is an instance of the general localization problem, which is the most basic perceptual problem in robotics.

Mapping is a process of storing extracted environmental information in a data structure. This can be represented in 2D (easier to create and evaluate) or 3D form (increased accuracy). Computer vision solutions and robotics solutions have already been proposed over the years.17,18 The robot can use the sensors to measure its relative bearing to a prominent landmark on the map. Another method is to create the map in the form of an occupancy grid. The grid contains information such as which regions are free, and which are occupied. The robot's sensors measure the directional bearing from the nearest occupied region. The hypothesis space is quite big with a very high number of variables (e.g., approximately 1015 variables with such a discrete technique) and the process is quite complex when neither the robot's location nor the map is known. It is necessary to measure the uncertainty associated with the estimation of the robot's location. A proven powerful technique such as the Bayesian filtering can also be used to calculate this associated uncertainty. 19

In 2003, Carnegie Mellon's Groundhog was used to explore and generate a map of an abandoned coal mine with the intention of working and navigating in conditions that are too toxic. This was one of the main causes for an exponential growth in the demand for robot localization, and ever since then researchers have been interested in mobile robots trying to construct a map while traveling. Simultaneous localization and mapping (SLAM) algorithms have been successful in generating a map of uncharted territory and are used by self-driving cars, planetary rovers and UAVs and AUVs. The process of SLAM involves controls and sensor readings at specific times to compute an estimate of the robot's location and a map of its surrounding environment. Research over the years has shown different approaches in robot navigation using maps and SLAM methods using maps.20,21 Some of these were topological and metric representations while others were probabilistic forms.22,23 But most research faces challenges when the maps are for large areas, possibly due to computational demands and prohibitive uncertainties associated with this.

Experimental hardware setup

The forklift used in this research as shown in Figure 1 is a Xilin Counterbalanced Electric Stacker with a Load capacity of 1500 kg and an overall service weight of 2020 kg. The forklift also has a maximum lifting height of 3 m, and a turning radius of 1.75 m. It should be noted that the base dimension of the forklift is 1 m by 1.3 m and the workspace for this experiment was 10.7 m by 8.9 m as shown in Figure 2. The lidar sensor used in this experiment is SICK NAV-350 as shown in Figure 3.

Xilin counterbalanced electric stacker.

The workspace.

SICK NAV-350 lidar mounted on the forklift.

This is an indoor sensor that is mostly used with AGVs in determining their position using reflectors and its surrounding contour. It can extrapolate its own position (distance and angle) through a computer which can then be used to keep the AGV on course for a predetermined route. 24 Using a maximum of 36 W, the laser operates at the wavelength λ = 905 nm for a 360-degree scan angle. It can use up to 12,000 reflectors to determine its position although the device claims that a minimum of three reflectors is sufficient to ascertain its position and the accuracy of the device is reported to be dependent on its distance from the reflectors mounted on the walls of the workspace. 24

Mathematical model for localization of the forklift

The forklift's localization can be seen as a problem of coordinate transformation. Maps are described in a global coordinate system, which is independent of the forklift's pose. Localization is the process of establishing correspondence between the map coordinate system and the forklift's local coordinate system. Knowing this coordinate transformation enables the forklift to express the location of objects of interest within its own coordinate frame – a necessary prerequisite for its navigation. It is clear that knowing the pose xt of the forklift is sufficient to determine this coordinate transformation, assuming that the pose is expressed in the same coordinate frame as the map.

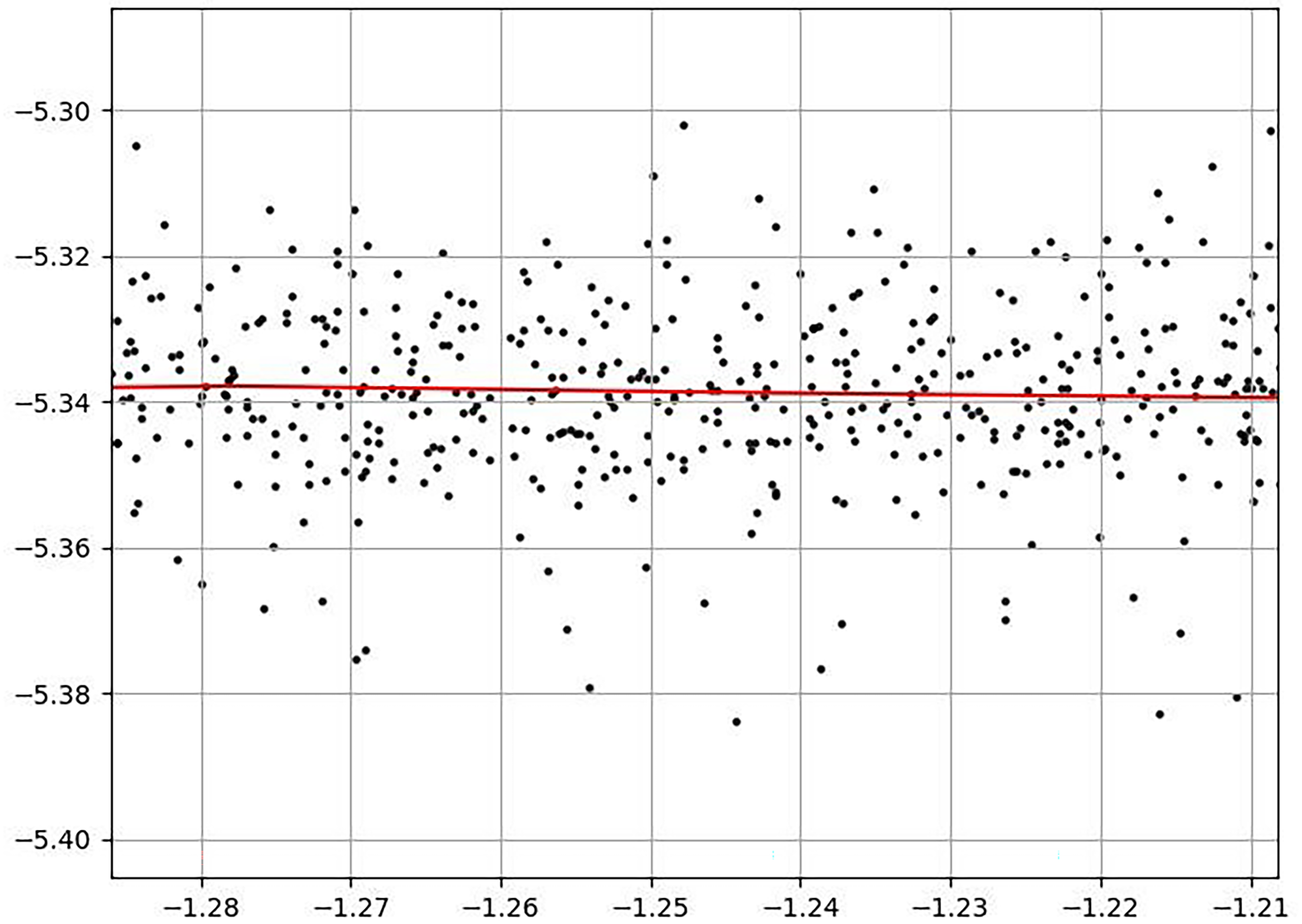

Suppose that a forklift moves within a room with straight walls. Then the lidar data will register each wall as a straight line in polar coordinates. Corners are then the intersection between such lines and introduce discontinuities into the data where one line becomes visible and the other becomes invisible. This simple process was not yielding the desired accuracy. Although most simulations yielded accuracy of around 5 cm, some were off by as much as 8 cm from the measured data. As the preliminary pose estimation needed to be refined, a few additional steps were required. Firstly, a reference room was applied, and the filtered data was projected back onto the raw data. After this, a local search was performed over the raw data to identify the corner coordinates. From the location of the corners, the pose could then be determined. After refining the initial pose estimation, many frames were aligned against a reference frame to gather statistics about the exact shape of the room. All the points were then statistically aggregated to compute a reference room, allowing any frame to be aligned to the room thereafter. Because the room is computed from many frames, the noise washes out errors in measurement. The uncertainty in this alignment then represents the uncertainty in the forklift's position.

In the following sections, a brief review of the relevant mathematics is given.

Mathematical descriptions of parameters used in algorithm

The detailed steps of the algorithm will be discussed in the “Data analysis” section and their corresponding effects will be shown in the “Results and discussion” section. A table showing the processing time for each step of the algorithm will be presented in Table 1. The major steps of the algorithm are:

Convert raw sensor data to usable form. Discover the boundary and landmarks for the room from this data. Create an aligned reference room from the sensor data as well as the room shape and size discovered by step 2. As the forklift moved, new live data along with the results from step 3 were used to determine its current position with the help of a Neural Network classification as well as some statistical tools.

Processing cost time by each step of algorithm.

The rest of this section will focus on all relevant mathematical descriptions of the key parameters used in the algorithm.

Assume that a forklift is placed within a closed boundary that is denoted by B. The forklift's position is described by the vector

Schematic showing the coordinate systems.

The figure shows that the

The scan captures the shape of the boundary of the room B as seen from the forklift's perspective from pose

If it is approximated that the forklift does not move throughout the duration of the scan, then this equation is written as:

To make these definitions a little clearer, the concept of

When the forklift performs a scan and is not moving, or moving slow enough that its movement can be neglected over the duration of the scan, a series of data

The goal of the analysis is to determine the pose of the forklift

In this presented approach, a combination of geometric and machine learning methods is employed to obtain an approximate solution. Near the solution the problem is approximately convex, enabling numerical optimization to be performed via stochastic gradient descent to obtain the final pose estimate.

Data analysis: two phases

This method involves two phases. In the first phase, we acquire some information about the surroundings. This phase is referred to as offline since the forklift must perform some initial analysis about the room before it is ready to be deployed. In the second phase, the forklift is ready to be deployed and to navigate the room. In this phase, there are three possible settings corresponding to three categories of available information which will be discussed in detail in the following section.

Phase 1: offline analysis

Boundary discovery: determine the boundary of the room

Landmark identification: determine landmarks L on the boundary

Phase 2: online analysis

Lidar data: Use triangulation method to align any frame.

Phase 1: offline analysis

This initial phase consists of two steps that provide the forklift with information about the room and the room's landmarks. Each step must be performed once every time the forklift is introduced into a new room. Each step takes a small amount of time, but the steps have not been optimized.

To compute the boundary, at least around

Since the forklift has no prior information about the room, it must collate and align the frames using a brute force method, which performs slower than the optimized methods described below. For this reason, the initial scan and analysis may take a few minutes. (Also note that this step has not been optimized, so the minimum amount of time / data to accomplish this step is unknown for now). The mathematics of this step follows the mathematics of alignment. What is different is that a frame of reference is chosen that constitutes the standard orientation (or “home position”). The forklift will obtain an exploratory dataset of measurements which we denote by D.

The algorithm accomplishes this in a straightforward manner:

The current algorithm accounts for automatically detecting the corners, however it is unlikely that this will work on general rooms. In practice, a simple manual procedure can be introduced by having the user verify that the forklift points in the correct direction of each corner, or by whatever method communicate to the forklift the corner locations. In addition to corners, other reference points can be labelled. The corner locations can then be passed to the dataset that was used to generate the boundary, which are then passed to neural networks. The neural network is trained to recognize the corners. Again, while this step is not strictly necessary, it improves the performance of the algorithm.

Phase 2: online analysis

Filter raw radial data: use statistics obtained from the exploratory dataset D to identify outlier points, or parts of the data that correspond to room doors, etc. Compute curvature of boundary: use curvature to detect corners and landmarks. Identify corners / landmarks with a neural network: is a two-step procedure which first consists of identifying the peaks in the curvature by simple signal processing tools and then feeding these potential peaks into the neural network to classify them.

Using the corner locations, a geometrical alignment is performed.

To obtain the center, the mean of the corner vectors averages to the center of the room. This is true for any rectangle. To obtain the angle, several approximations are used. Simplifying the procedure somewhat, (a) a landmark is used to label the walls and then (b) the average angle of the walls with the standard frame is used to estimate the angle as seen in Figure 5.

The room from the forklift's perspective.

In the robot frame, the frame in which the data is collected, the room appears translated and rotated. However, the total extent still identifies the geometric center of the room.

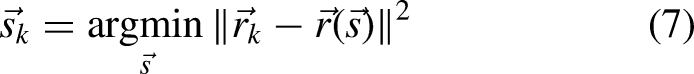

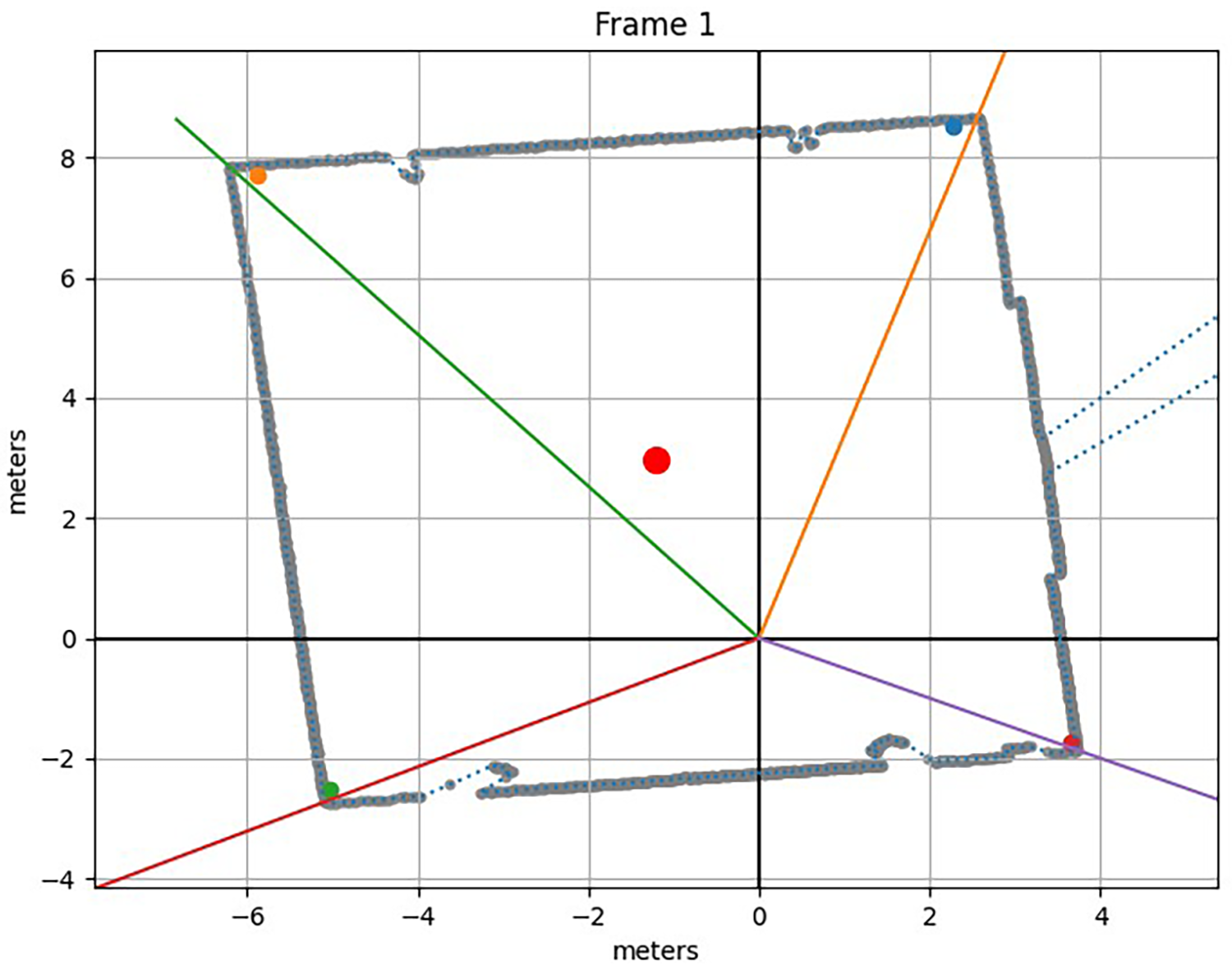

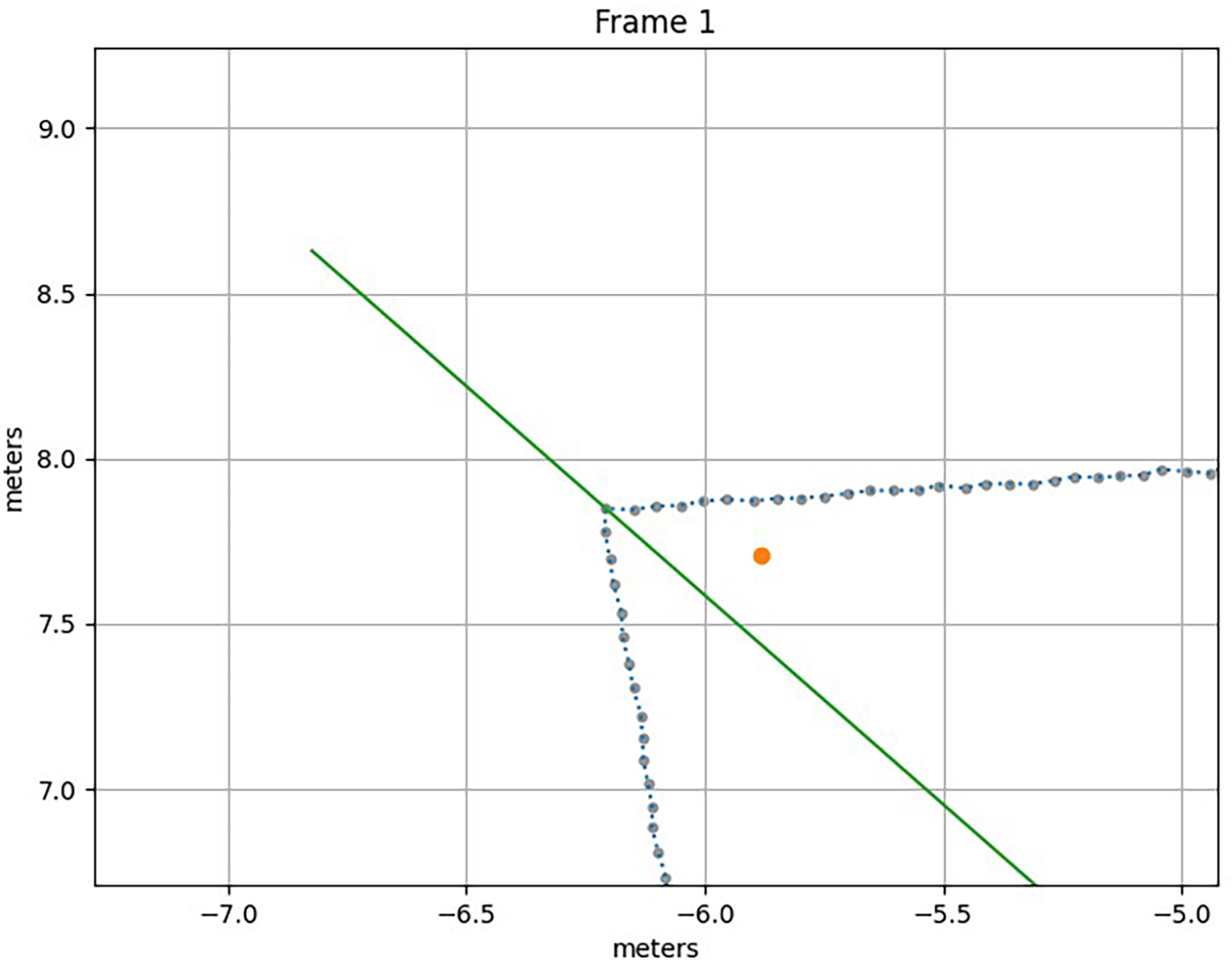

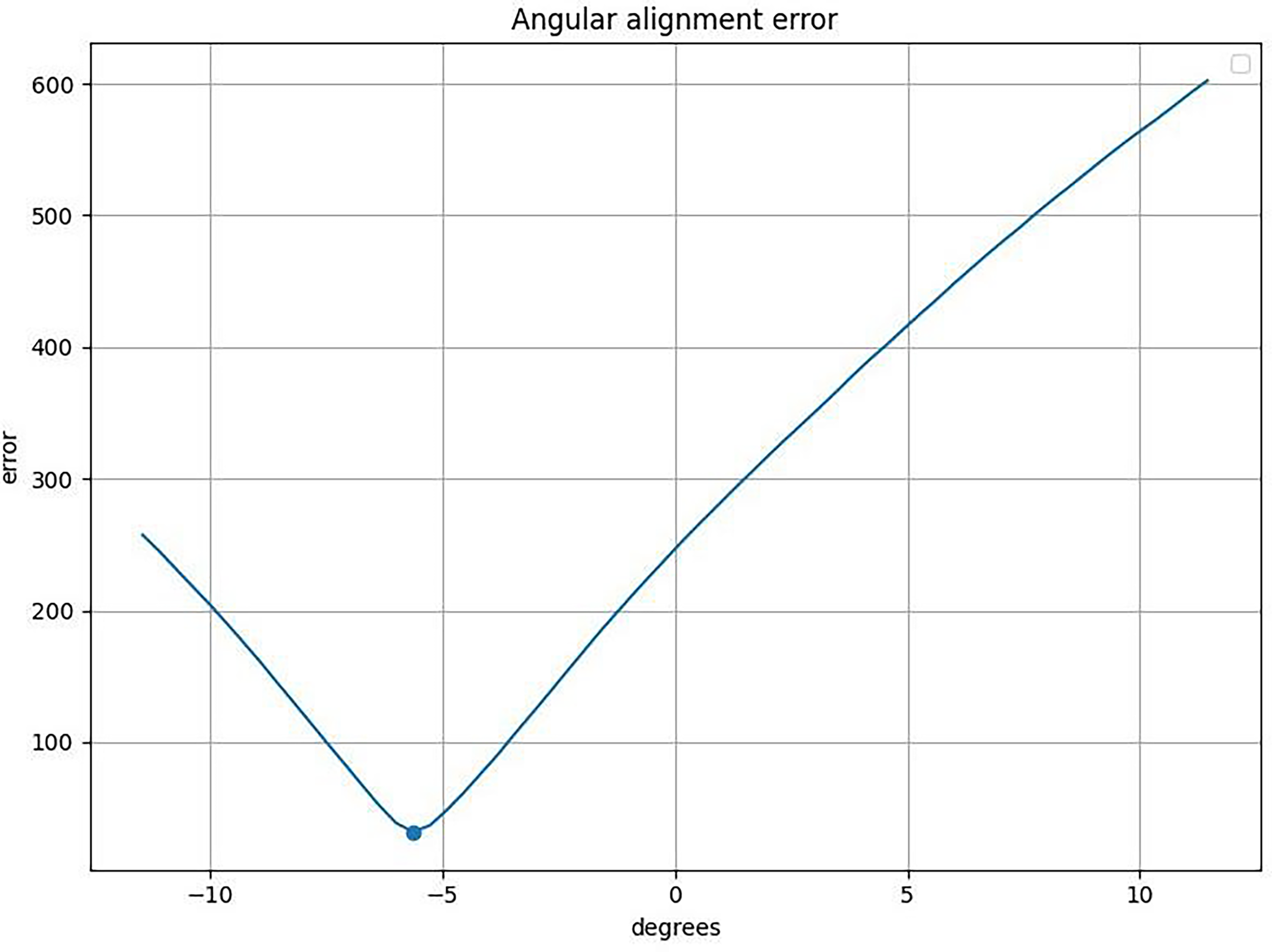

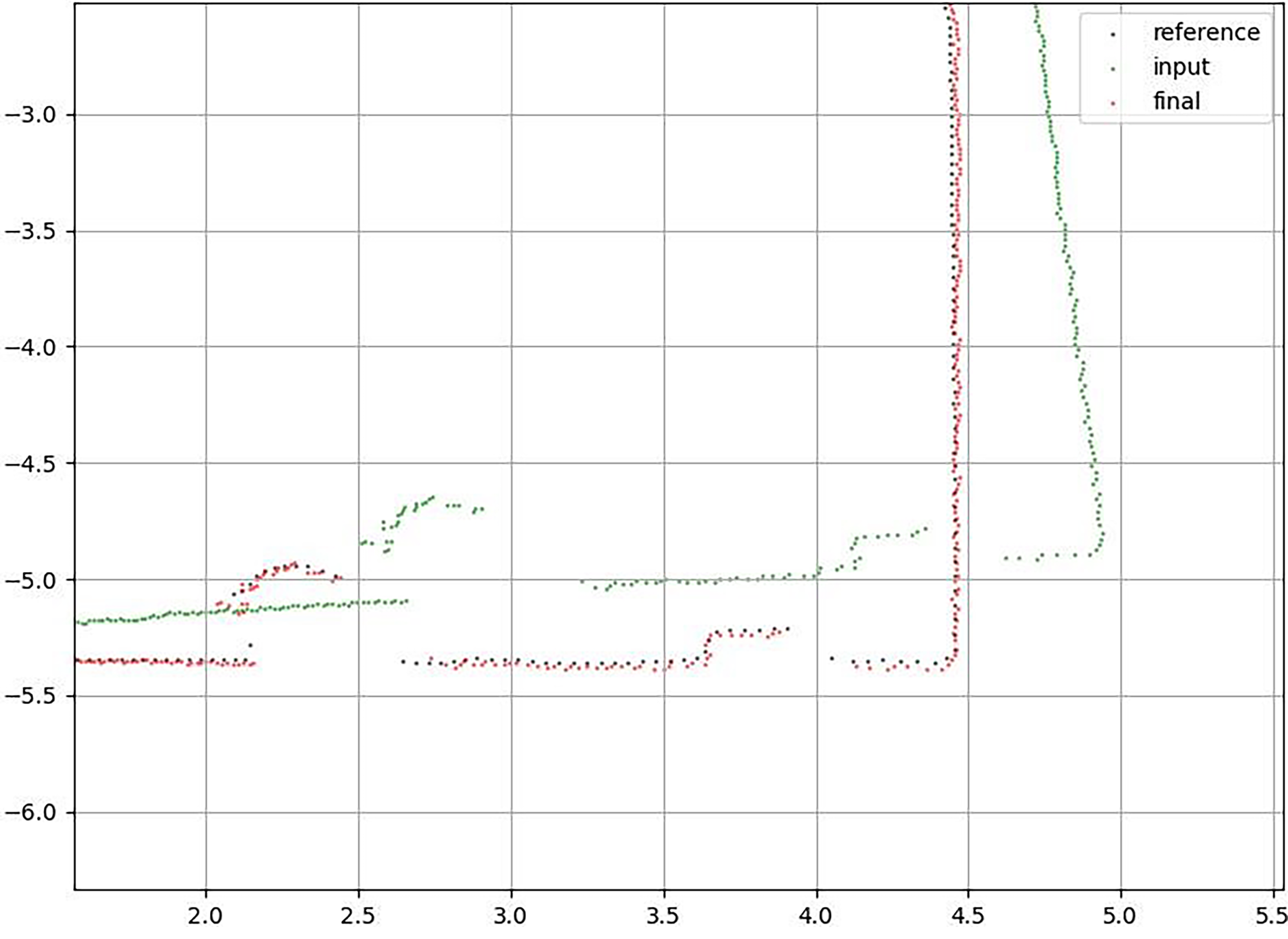

The reference boundary estimate Rotational alignment via SGD: first align the forklift by SGD in rotation. Spatial alignment via SGD: then align the forklift by SGD in space. Now, having constructed a reference room, the error between the reference room and any frame and its proposed orientation can be computed. In other words, a given frame can be re-oriented to the reference room and its degree of alignment to the reference room can be computed. This error computation is performed via brute force over a large range of possible positions and orientations and the optimization is also performed via Monte Carlo. The results are then compared. In Figure 6, the x- and y-axes are in meters. Note the considerable statistical noise between the frames which hinders the pose estimation. Superposition of 100 aligned frames showing a corner, a marker, and a room detail. It was determined that it is essential to build a reference room to optimize the forklift pose estimation. Figure 6, which shows a detail of the superposition of 100 frames, indicates that there is substantial variation in the location of the landmarks between frames. This will be discussed in a later section. In order to align these frames, an initial alignment was required. The initial alignment uses the previously developed method based upon the neural network. An additional algorithm was developed for refining the corner position obtained from the neural network back onto the raw data. See Figures 7 and 8. The refinement of the four corners for a single frame. A detail magnification of Figure 7 showing the corner refinement. The initial corner estimates are shown as dots and the refined corner position via the four colored lines. The x- and y-axes in both Figures 7 and 8 are in meters. The determination of the corner position allows us to estimate the forklift's pose. Now the measurement of the room can be matched against a reference frame. To achieve this, a reference orientation is defined. In the reference orientation, the room is centered (i.e., the center of the room aligns with the origin of the coordinate system), and the angle of orientation is set at 0 degrees. This means that the markers are at the bottom and the walls are vertical as in Figure 8. This allows to build up a collection of frames to compute the reference room as seen in Figures 9 and 10. In Figure 9, the black dots are the input data, the red dots are the reference data, and the green dots are the output data (i.e., the aligned data). Alignment of a single frame to the reference frame in the reference orientation. The error function for the frame alignment. In Figure 10, the left panel shows the error for small shifts of the preliminary alignment to the right and to the left. The color indicates the degree of error (darker color means less error). The center panel plots the central cross sections along x and y to give an idea of the variance of the fit. Note that the error function shows a high confidence of the fit for +/–1 to 2 cm. Many frames are then combined into one dataset. Each of these frames has been aligned with respect to a reference frame and can be an estimate of the true room structure. Thus, a statistical procedure to combine the frames is used to determine the reference room. In Figure 11, note that there is wide variation for the marker. It seems that at some forklift positions (e.g., near the corner), the marker is not clearly visible to the forklift. The x- and y-axes are in meters. A scatter plot of the aligned frames for a marker. In Figure 12, note the large degree of variation compared to the reference room about the markers. It seems that at some forklift positions (e.g., near the corner), the marker is not clearly visible to the forklift. The x- and y-axes are in meters. In Figure 13, note that 1 standard deviation is about 1 cm. A closeup of the reference room (red) along with the data points (black) from which it was computed. A closeup of the reference room along with the data points from which it was computed. Once the reference room is acquired, any frame can be aligned to it. This takes several steps: first, the reference room is put in the reference orientation (see previous section); then the frame is put in the reference orientation as best step using the data from previous section. It is then compared to see how much the frame agrees with the reference room. This error computation has some important complications which are not discussed in detail. Intuitively, the minimum distance between the points of one frame and the points of the next frame are computed. The intriguing factor is determining which points should be compared. Now knowing the forklift orientation in any frame, the room measurement can be transformed into the reference orientation and then compared to the reference room. This is the basic calculation behind the results in the section below. In Figure 14, the markers are at the bottom and the walls adjacent to the markers are vertical. The x- and y-axes are in meters. The reference room in the reference orientation. In principle, the procedure for refining this alignment is simple. In practice, the computation becomes expensive when computed by brute force. First a spatial increment and a rotational increment must be defined. Then the frame-to-be-aligned must be moved and rotated by these increments, computing the error of the alignment at each one. When the scan is complete, the orientation with the minimal error will be evaluated. This is the “optimal alignment.” While simple in principle, in practice there are several complications. The first is the scale of the optimization. There are three degrees of freedom (two spatial coordinates and one angular coordinate), so the number of orientations that must be explored grows with the 3rd power of the number of increments. That is, if there are 64 angular and spatial increments, then there are 262,144 orientations to consider. In practice, that is too many to implement in real time. Other issues involve (a) the optimal step size (b) other search conditions that may allow reducing the number of states. Neither issue has been addressed in the present note. The simplest way to accelerate the search is via Monte Carlo. In simple terms, this means that instead of evaluating every possible orientation, a subset of these orientations is randomly sampled. A variety of probabilistic methods can be used to improve the performance of the algorithm. In the following sections, Stochastic Gradient Descent will be applied to enable mathematical optimization. In Figure 15, note that the black dots are the reference room, the red dots are the aligned room, and the green dots are the input. The x- and y-axes are in meters. Alignment by brute force. In Figure 16, the rotational alignment of the frames shows the error between the frame and the reference as a function of rotation (from the starting position of the frame). The dot shows discovered minimum at about −5 degrees. Rotational alignment of the frames. In Figure 17, the left panel shows the entire search grid over which the room was shifted in x and in y. The right panel shows the 1D plots through the minimum of the alignment grid. Note the uncertainty is about +/–1 cm. Spatial alignment. Next the brute force is compared to the Monte Carlo method. In the Monte Carlo method, the entire space of possible orientations is not sampled, but instead only a random subset is considered. Figures 18 and 19 show that comparable accuracy is obtained versus the brute force method. Alignment by Monte Carlo. Spatial alignment. The black dots in Figure 18 are the reference room, the red dots are the aligned room, and the green dots are the input. Note that the x- and y-axes are in meters and that accuracy is comparable to brute force. The left panel in Figure 19 shows the Monte Carlo search grid over which the room was shifted in x and in y. The right panel shows the 1D plots through the minimum of the alignment grid. Note that the uncertainty is about +/–1 cm. Also note that accuracy is comparable to brute force. It has been shown that Monte Carlo performs comparably to the brute force method. It has also been shown that a reference room built from many frames statistically aids in the rapid alignment of frames. A variance of about 3 cm in the accuracy of the pose estimation is seen repeatedly. This range comes up in several contexts: (1) when aggregating the frames for alignment (2) when comparing the reference room to the aggregated frame (3) when comparing any frame to the reference room. It also seems that forklift velocity impacts the accuracy of the frame. The faster the forklift is moving or spinning, the more that error is introduced into the measurement. This issue has not yet been studied quantitatively. It should certainly be addressed. It is evident that the forklift accelerates during the frame acquisition, causing an apparent bend in a straight wall. This is not to say that the accuracy is limited to 2–4 cm. However, it is roughly the current accuracy for high confidence: 2 cm when the forklift is stationary and obtains a good measurement, 4 cm when the forklift is moving / accelerating. Note that by 2 cm, it is implied plus or minus 1 cm. And by 4 cm, it is implied plus or minus 2 cm. So, it might be more accurate to report this as plus or minus 1–2 cm accuracy. The next change explored was on the optimization of forklift pose estimation using a vectoral reference. This method is based upon the following facts:

The shape of the room does not change. If we can determine the (vectorial) location to the center of the room, or any other room landmark, then we can entirely determine the forklift's pose. From fact 2, the measurement and the reference room are used to align the forklift. This can be divided into two further steps:

First, simple geometric properties of the room are used to estimate the center. The details are omitted here, but some simple math enables us to roughly estimate the forklift's location and orientation in the room. Using the reference room, the alignment of the lidar data is optimized to the reference room. A major method of assessment would be to check for the time needed to assess the forklift's location. Table 1 shows the time of the computation for each step of the alignment. The results here show that the spatial align process was sped up by a factor of nearly Another major method of assessment would be to check for accuracy of this method against a standard. A sampling of 100 sets of pose data (x, y, θ) were collected and analyzed for both the template matching algorithm and the lidar NAV-350's own software solution based on eight reflectors. At the time of data collection, the orientation angle θ was kept fixed at ten specific predetermined positions. These data sets were then compared against the True Data measured physically in the workspace of 8.9 m by 10.7 m. The average error (Δx, Δy) for the Template Matching algorithm versus the true data is (0.75, 0.79) cm. This number was reduced to (0.62, 0.64) cm when data from close to the walls (within 2 m) were not considered. The average error for the lidar NAV-350's own software was (0.88, 0.89) cm, and this was consistent even when compared against data from close to the walls (within 2 m). The experimental setup was not tested for ranges less than 2 m because the forklift has a base dimension of 1 m × 1.3 m. The proposed algorithm in this research yields better accuracy when compared against other path planning algorithms using conventional lasers and visual SLAM for indoor tracking. Published research has shown the results of three different SLAM based technologies: SLAMMER, NAVIS and Matterport. The errors reported were 1.7 cm, 3.2 cm, and 4.7 cm, respectively.

25

Results and discussion

Refine preliminary pose estimation

Align against a reference frame

Aggregate reference frames to compute a reference room

Use reference room to align any frame

Alignment optimization via brute force and Monte Carlo

Vectoral reference w.r.t center of the room

From fact 1, a reference room is computed which consists of the entire room, including walls, corners, and reflectors. This room is aligned into what is called standard orientation. It is the room from the perspective of the forklift assuming that the forklift is located at the center of the room with the sensor facing the wall containing the reflectors.

Processing time of algorithms

Comparison of final results

Conclusion

This paper has presented a novel method of localization that was successfully implemented on an industrial sized forklift. The process of taking sensor data from the lidar and applying the necessary mathematics has been described in detail. The results at each step have been discussed in each section of the paper.

The research was performed with one forklift only. Due to budget and logistic reasons, multiple forklifts were not tested. In the presence of any dynamic obstacles, the forklift would need to stop, and the data would have to be acquired at the point where it stopped instead of its initial planned stopping point.

Past research has successfully used SLAM in tracking model robots indoors. The proposed method was also successful in doing the same but using an industry standard forklift. However, it is limited to rooms having corners or angles in the boundaries. Since the markers used were wall corners, any workspace which is circle or oval or devoid of corners would not be applicable to this method.

The results are quite promising as the error is comparable to the industry standard software that uses multiple reflectors to achieve the same level of accuracy. This experiment can be further investigated in the future to attempt better accuracy in results. Use of multiple additional sensors and statistical tools may reduce the error even more. There is also the uncharted area of investigating this concept using multiple forklifts especially in an actual warehouse environment with dynamic obstacles.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data availability statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.