Abstract

This article presents a puncture classification method for prostate cancer seed implantation robots. To address the limitations of seed implantation surgery, punctures of different tissues in the human body are classified to improve the precision and success rate of seed implantation. This paper discusses an information fusion sensing technique for seed implantation surgery that perceives the real-time state of the puncture needle by fusing the force and image modalities, thereby improving puncture accuracy and the fault tolerance of the procedure. In addition, to address the multiscale feature problem in image recognition, this paper improves the YOLOv8 model by adding a global attention mechanism (GAM_Attention) to the model backbone, which significantly improves the image recognition success rate. Datasets of puncture force and real-time puncture images were then produced through puncture experiments. Preliminary puncture experiments with different models were completed, and the results showed that the proposed method improved classification accuracy. By improving the accuracy of seed implantation, this method may offer a new approach to deep-learning-based multimodal perception in seed implantation.

Introduction

Prostate cancer (PCa) is a prevalent disease of the male reproductive system, especially in men older than 60 years. Population aging and changes in living standards are contributing to an increase in its incidence. The International Agency for Research on Cancer (IARC) of the World Health Organization reported 1.41 million new cases of prostate cancer worldwide in 2020, accounting for 7.3% of all new cancer cases and exceeding the numbers for stomach and liver cancer. At present, radioactive seed implantation (brachytherapy) is an established treatment for localized prostate cancer: radioactive seeds are implanted into the prostate after accurate localization of the diseased tissue, which significantly improves the cure rate. Many clinical evaluations have confirmed the advantages of this procedure in terms of precise targeting, minimal trauma, rapid efficacy, and fewer side effects. 1

Transrectal ultrasonography (TRUS) is low-cost, minimally invasive, and provides real-time visualization, so it is generally used to guide prostate seed implantation procedures. 2 However, TRUS images of the prostate are difficult to interpret, and their ability to distinguish between human tissue types is only moderate. A force-sensing feedback mechanism has therefore been proposed to improve the success rate of seed implantation, which greatly improves procedural safety and the accuracy of determining the surgical position. Currently, puncture robots acquire the puncture force in real time using one of two types of sensors: fiber-optic force sensors or multidimensional force sensors. Perceiving the state of a needle as it punctures multilayer tissue, which primarily involves acquiring multimodal sensor information, fusing it, and translating it into high-level commands that the robot can understand, is a prerequisite for the robot's local autonomous needle path planning. After fusion processing and feature extraction of the raw sensing data, the mechanical signals and image features differ noticeably across tissue layers when the needle pierces the dura mater, blood vessels, bones, and other tissues.

Park et al. at the Korea University of Science and Technology (KUST) developed an electrical impedance needle to measure the electrical conductivity of muscle and fat tissues. In puncture trials on pork, the needle's conductivity was measured in different tissues, including the muscle and fat layers, during the puncture procedure, and these measurements were used to classify the tissues. 3 Kumar et al. from the Indian Institute of Technology used the fiber-optic Fabry-Perot principle to build a fiber-optic stress sensor integrated into the needle tip and collected force data. They then used the central difference approach to detect five different soft tissue types. 4 Using multispectral tissue analysis and principal component regression, Brian et al. of Johns Hopkins University achieved online classification of needles inserted into nine soft tissues and concluded that their fiber-optic probe spectrometer system could be used in autonomous robotic needle insertion tasks. 5

As artificial intelligence, computer vision, and medical imaging technologies mature, many researchers have begun to study signal processing and iterative algorithms based on deep neural networks, and this work has revealed a connection between neural networks and iterative algorithms. 6 Deep learning is developing rapidly in medicine. Li et al. of Qingdao University proposed a multimodal medical image fusion method that obtains fused images in two steps: model learning and fusion testing. Fusing multimodal medical images can greatly improve the efficiency of image fusion and may enable automatic diagnosis in the future. 7 Yan et al. proposed a technique for real-time needle tip tracking in two-dimensional ultrasound (US), improving tracking accuracy and robustness with an improved compressive tracking algorithm (ICT) and an improved Sage-Husa adaptive Kalman filter (SHAKF). 8 Because commonly used object detection algorithms are limited in recognizing small targets, Yi et al. studied an improved YOLOv8 algorithm, LAR-YOLOv8. By designing a bidirectional feature pyramid network, adding top-down path-guided feature fusion, and proposing the RIOU loss function, they improved the detection accuracy of small targets in remote sensing images. 9 Pande and Agarwal of VIT-AP University trained the YOLOv8 model on kidney CT images and identified cysts, tumors, stones, and normal kidneys with 82.52% accuracy, providing a new approach to kidney disease diagnosis. 10 Haq et al. from Telkom University used the YOLOv8 model to recognize the position and trajectory of spinal needles with a real-time detection accuracy of 95%, further improving the accuracy of spinal needle placement. 11

Description of the puncture classification methods

Single-image recognition approaches are typically sensitive to variations in viewing angle (such as rotation, scaling, and tilting), which reduce recognition accuracy and reliability when the target is presented at different angles. 12 Noise and distortion in the image can also degrade the accuracy of single-image recognition methods.13–15 Seed implantation methods can embed external information into the target in an image or video and thereby enable tracking and state estimation of the target. It is therefore more appropriate to study an information fusion perception method for needle insertion into soft tissue in robot-assisted intervention.

Force-mode-based puncture classification methods

The stiffness force increases steadily as the needle presses on the tissue and reaches its maximum just before the tissue is punctured. After the tissue is punctured, the force on the needle consists of the friction and cutting forces, both functions of the needle tip position, which are modeled as a combined force:

$$f_{\text{needle}}(z) = f_{\text{friction}}(z) + f_{\text{cutting}}(z)$$

where z denotes the tip position of the puncture needle.

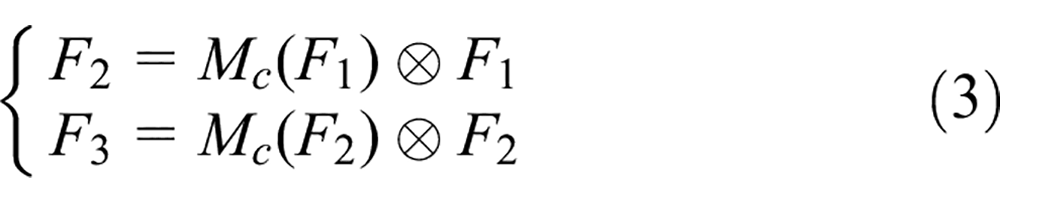

During soft tissue puncture, the tissue deforms, ruptures, and cracks propagate as the needle advances. At different puncture stages, the needle is subjected to different forces, and the puncture force pattern changes accordingly. In particular, the puncture force changes abruptly at the critical point of tissue deformation and after the needle pierces the tissue, so this paper focuses on the analysis of this stage. The mechanical model of the puncture process can be divided into four stages: (a) prepuncture, (b) deformation, (c) puncture, and (d) needle withdrawal, as shown in Figure 1:

Quantitative analysis of the soft tissue puncture procedure, where A, B, C, and D are needle tip positions.

Thus, a complete mechanical model of the puncture process is derived:

where z denotes the location of the tip of the needle,

Different puncture force patterns occur when a needle is inserted into the human body because of the interaction between the needle and the tissue and the differing tissue geometries. 11 Using image processing and deep learning, each puncture force pattern in a particular model can be identified and classified, allowing us to assess the current puncture state and plan a response. Because the models are made of different materials, each produces a different puncture force pattern.

The four stages of the puncture process shown in Figure 2 (begin puncture, deformation, insertion, and withdrawal) exhibit different puncture forces. Before puncture, the force is small and stable. In the deformation phase, the needle contacts the surface of the liver tissue and the force rises until it reaches the critical value needed to pierce the tissue; the needle then enters the tissue and the force drops. In the insertion phase, the needle continues into the tissue at a constant speed, and the force increases slowly with puncture depth. Finally, in the withdrawal stage, the reversal of the needle's direction of motion causes the force to drop rapidly, after which it increases slowly.
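The force behaviour described above (a rise to a critical value, then a sharp drop once the tissue is pierced) suggests a simple way to locate the puncture event in a sampled force signal. The sketch below is an illustration under stated assumptions, not the method used in this paper: it estimates the derivative with a central difference (the approach Kumar et al. used for tissue detection) and flags the first sample where the force falls faster than a hypothetical threshold `drop_threshold`; the function names are also hypothetical.

```python
def central_difference(force, dt):
    """Approximate dF/dt at interior samples i = 1..n-2 with a central difference."""
    return [(force[i + 1] - force[i - 1]) / (2 * dt) for i in range(1, len(force) - 1)]


def detect_puncture(force, dt, drop_threshold):
    """Return the sample index of the force peak preceding the first puncture, or None.

    A puncture is flagged where the force derivative falls below
    -drop_threshold (a hypothetical tuning value in N/s), i.e. where the
    force drops sharply after the deformation-stage peak.
    """
    deriv = central_difference(force, dt)
    for i, d in enumerate(deriv, start=1):  # deriv[0] corresponds to sample 1
        if d < -drop_threshold:
            # Walk back to the local force maximum just before the drop.
            peak = i
            while peak > 0 and force[peak - 1] >= force[peak]:
                peak -= 1
            return peak
    return None


# Synthetic force trace (N) sampled every 10 ms: deformation, then puncture.
trace = [0.0, 0.1, 0.2, 0.4, 0.8, 1.2, 0.5, 0.55, 0.6]
print(detect_puncture(trace, dt=0.01, drop_threshold=10.0))
```

On the synthetic trace the force peaks at 1.2 N (sample 5) and then falls, so the function reports sample 5 as the puncture point. On real data the signal would normally be low-pass filtered first, because a central difference amplifies sensor noise.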

Penetration force of human tissue.

This paper uses the YOLOv8 object detection model for learning and recognition. The YOLOv8 backbone uses the C2f module, the detection head is anchor-free with decoupled classification and regression branches, the loss function combines binary cross-entropy (BCE) for classification with CIoU and distribution focal loss (DFL) for regression, and the box assignment strategy changes from static matching to task-aligned assignment. Mosaic augmentation is turned off in the last 10 training epochs, and the total number of training epochs is increased from 300 to 500.
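For reference, the CIoU regression term mentioned above combines the IoU with a centre-distance penalty and an aspect-ratio consistency term. The following is a minimal standard-library sketch for a single pair of boxes in (x1, y1, x2, y2) form; it follows the published CIoU definition rather than any particular YOLOv8 source code, and the function names are hypothetical.

```python
import math


def ciou(box_a, box_b):
    """Complete IoU between a predicted box_a and a ground-truth box_b, both (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Intersection and union areas.
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih
    wa, ha = ax2 - ax1, ay2 - ay1
    wb, hb = bx2 - bx1, by2 - by1
    iou = inter / (wa * ha + wb * hb - inter)
    # Squared centre distance, normalised by the squared diagonal of the
    # smallest box enclosing both boxes.
    rho2 = ((ax1 + ax2) / 2 - (bx1 + bx2) / 2) ** 2 + ((ay1 + ay2) / 2 - (by1 + by2) / 2) ** 2
    cw = max(ax2, bx2) - min(ax1, bx1)
    ch = max(ay2, by2) - min(ay1, by1)
    c2 = cw ** 2 + ch ** 2
    # Aspect-ratio consistency term and its trade-off weight.
    v = (4 / math.pi ** 2) * (math.atan(wb / hb) - math.atan(wa / ha)) ** 2
    alpha = v / ((1 - iou) + v) if v > 0 else 0.0
    return iou - rho2 / c2 - alpha * v


def ciou_loss(pred, target):
    """Regression loss: 1 - CIoU (0 for a perfect match)."""
    return 1.0 - ciou(pred, target)


print(ciou_loss((0, 0, 1, 1), (0, 0, 1, 1)))
print(ciou_loss((0, 0, 1, 1), (2, 0, 3, 1)))
```

For two identical boxes the loss is 0. For the disjoint boxes (0, 0, 1, 1) and (2, 0, 3, 1) the IoU is 0 and the distance penalty is 4/10, so CIoU = -0.4 and the loss is 1.4, which shows how CIoU still provides a useful training signal when boxes do not overlap.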

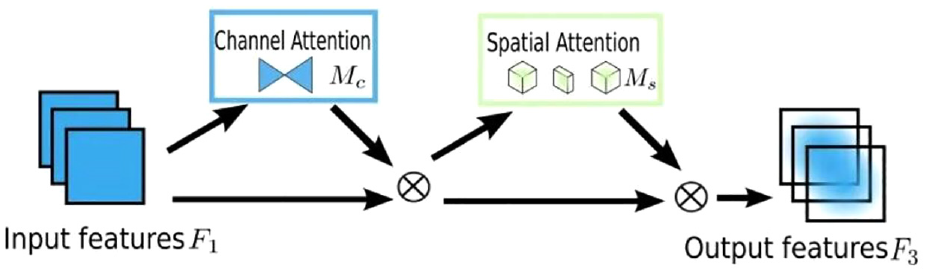

Because force waveform maps have particular characteristics, the detection performance of the baseline YOLOv8 model alone is not fully satisfactory, so we optimize the YOLOv8 model by adding a global attention mechanism (GAM). The GAM preserves the correlation between spatial and channel information and thus improves the model's prediction performance; by efficiently capturing the correlation between information channels, it better differentiates the detection targets. 16

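Given an input feature map $F_1 \in \mathbb{R}^{C \times H \times W}$, the intermediate state $F_2$ and the output $F_3$ are

$$F_2 = M_c(F_1) \otimes F_1, \qquad F_3 = M_s(F_2) \otimes F_2$$

where $M_c$ and $M_s$ are the channel and spatial attention maps, respectively, and $\otimes$ denotes element-wise multiplication.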

Diagram of the GAM network structure. Reprinted with permission from Liu et al. 16 Copyright 2021.

As shown in Figure 4, we add a GAM layer to the backbone of YOLOv8. The GAM contains two submodules: channel attention and convolutional spatial attention. In channel attention, the input feature maps first undergo max pooling and average pooling, are then processed by a multilayer perceptron (MLP), and are finally passed through a sigmoid activation function. In convolutional spatial attention, the resulting features undergo convolution and are finally passed through a sigmoid activation function.
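The two submodules described above can be sketched on a tiny feature map stored as nested lists (channels × height × width). This standard-library sketch follows the pooling, MLP, convolution, and sigmoid steps as written in this section; note that Liu et al.'s original GAM avoids pooling (it uses a permutation-based channel MLP and two 7 × 7 convolutions), so this is closer to a CBAM-style block. The weights, kernel size, and function names are hypothetical, and a real model would use a deep learning framework.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def mlp(vec, w1, w2):
    """Shared two-layer perceptron with ReLU; w1 is hidden x C, w2 is C x hidden."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def channel_attention(fmap, w1, w2):
    """Max- and average-pool each channel, pass both through the shared MLP,
    add the results, apply a sigmoid, and rescale each channel."""
    maxp = [max(max(r) for r in ch) for ch in fmap]
    avgp = [sum(sum(r) for r in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    weights = [sigmoid(a + b) for a, b in zip(mlp(maxp, w1, w2), mlp(avgp, w1, w2))]
    return [[[v * wc for v in r] for r in ch] for ch, wc in zip(fmap, weights)]


def spatial_attention(fmap, kernel):
    """Pool across channels (max and mean), convolve the two pooled maps with a
    k x k kernel each (zero padding), apply a sigmoid, and rescale every position."""
    c, h, w = len(fmap), len(fmap[0]), len(fmap[0][0])
    maxm = [[max(fmap[k][i][j] for k in range(c)) for j in range(w)] for i in range(h)]
    avgm = [[sum(fmap[k][i][j] for k in range(c)) / c for j in range(w)] for i in range(h)]
    pad = len(kernel[0]) // 2
    attn = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            s = 0.0
            for m, pooled in enumerate((maxm, avgm)):
                for di in range(-pad, pad + 1):
                    for dj in range(-pad, pad + 1):
                        y, x = i + di, j + dj
                        if 0 <= y < h and 0 <= x < w:
                            s += kernel[m][di + pad][dj + pad] * pooled[y][x]
            attn[i][j] = sigmoid(s)
    return [[[fmap[k][i][j] * attn[i][j] for j in range(w)] for i in range(h)] for k in range(c)]


# Two channels, 2 x 2 spatial size, one hidden MLP unit, 3 x 3 kernels (all hypothetical).
fmap = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, -1.0], [2.0, 2.0]]]
w1, w2 = [[0.1, -0.2]], [[0.3], [0.5]]
kernel = [[[0.05] * 3 for _ in range(3)] for _ in range(2)]
out = spatial_attention(channel_attention(fmap, w1, w2), kernel)
```

Applying the channel step before the spatial step mirrors the order F2 = Mc(F1) ⊗ F1, then F3 = Ms(F2) ⊗ F2; the output has the same shape as the input, with every value rescaled by attention weights between 0 and 1.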

Structure of the model.

Image pattern-based puncture classification method

During seed implantation surgery, contact between the puncture needle and soft tissue generates friction and compressive forces on the needle surface, causing the needle to bend and deviate from the intended trajectory. 17 The YOLOv8 model can identify and classify each organ model while dynamically tracking the position of the needle tip, to assess whether the needle travels along the predetermined path and reaches the intended location. The input image is preprocessed to extract features; during training, these features are used to build a classification model, and during testing, they are matched against the category features in the database, and the category with the best match is output (Figure 5). 18

Silicone puncture model.

In our comparison, YOLOv8 performed better at object recognition because it replaces the C3 structure of YOLOv5 with the C2f structure, which has richer gradient flow. This keeps the model lightweight while giving it richer gradient flow information, substantially improving its performance (Figure 6).

C2f structure diagram.

YOLOv8 abandons the earlier IoU-based matching and side-length-ratio assignment methods, adopts the task-aligned assigner for positive and negative sample matching, and introduces distribution focal loss (DFL). The DFL term of the regression loss consists of a left-side term and a right-side term. The continuous regression target is rounded down to obtain the left integer, and 1 is added to obtain the right integer. Each term is the cross-entropy between the predicted distribution and the corresponding integer target, weighted by the distance between the continuous target and the other integer.

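This gives

$$\mathrm{DFL}(S_i, S_{i+1}) = -\big((y_{i+1} - y)\log S_i + (y - y_i)\log S_{i+1}\big)$$

where $S_i$ and $S_{i+1}$ are the network's softmax probabilities for the two bins, $y_i$ and $y_{i+1}$ are the integers bracketing the label ($y_i \le y \le y_{i+1}$, with $y_{i+1} = y_i + 1$), and $y$ is the label value.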
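The left/right rounding procedure described above can be written out for a single box side. In this sketch (an illustration, not YOLOv8's source code), the network outputs one logit per integer bin, a softmax turns these into probabilities S, and the loss is -((y_{i+1} - y) log S_i + (y - y_i) log S_{i+1}) with y_i = floor(y) and y_{i+1} = y_i + 1. The number of bins and the function names are assumptions.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dfl(logits, y):
    """Distribution focal loss for one box side.

    logits: raw scores over integer bins 0..n-1 (n = len(logits)).
    y: continuous target distance, 0 <= y < n - 1.
    """
    probs = softmax(logits)
    left = int(math.floor(y))  # y_i: round the target down
    right = left + 1           # y_{i+1} = y_i + 1
    w_left = right - y         # weight of the left bin
    w_right = y - left         # weight of the right bin
    return -(w_left * math.log(probs[left]) + w_right * math.log(probs[right]))


print(dfl([0.0, 0.0, 0.0, 0.0], 1.5))
```

With four uniform logits and y = 1.5, both bins receive probability 0.25 and weight 0.5, so the loss equals ln 4 ≈ 1.386. The loss shrinks as the predicted distribution concentrates its mass on the two bins that bracket the target.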

Given the advantages of a dynamic assignment strategy, YOLOv8 directly adopts the task-aligned assigner from TOOD, which selects positive samples according to a weighted combination of the classification and regression scores:

$$t = s^{\alpha} \cdot u^{\beta}$$

where s is the predicted classification score, u is the IoU between the predicted and GT boxes, and α and β are weighting exponents (Figure 7).
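The task-aligned selection described above can be sketched as follows. TOOD's alignment metric is t = s^α · u^β, where s is the classification score and u the IoU with the ground-truth box; candidates with the largest t become positives. The exponents α = 0.5 and β = 6.0, the top-k value, and the function names are assumptions chosen for illustration, and the sketch omits the additional constraint used in practice that a candidate's anchor point lie inside the ground-truth box.

```python
def alignment_metric(score, iou, alpha=0.5, beta=6.0):
    """Task-alignment metric t = s**alpha * u**beta (exponents are assumed values)."""
    return (score ** alpha) * (iou ** beta)


def select_positives(candidates, top_k):
    """candidates: list of (anchor_id, cls_score, iou) for one ground-truth box.
    Returns the ids of the top_k candidates ranked by the alignment metric."""
    ranked = sorted(candidates, key=lambda c: alignment_metric(c[1], c[2]), reverse=True)
    return [c[0] for c in ranked[:top_k]]


candidates = [("a", 0.9, 0.5), ("b", 0.4, 0.9), ("c", 0.8, 0.8)]
print(select_positives(candidates, top_k=2))
```

Candidate b is ranked first despite its lower score, because u^β with β = 6 strongly rewards well-localized predictions (t_b ≈ 0.336, t_c ≈ 0.234, t_a ≈ 0.015). This is the intended effect: positives are samples that are good at both classification and localization.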

Model inference process.

Compared with earlier YOLO versions, the main change in the YOLOv8 inference process is that the integral (distribution) bbox representation used by DFL must be decoded into a conventional four-dimensional bbox. The head outputs six feature maps, namely a classification branch and a bbox prediction branch at each of three scales, which are concatenated across scales and reshaped to (b, 8400, 80) and (b, 8400, 4), where b is the batch size, 8400 is the number of candidate positions for a 640 × 640 input (80² + 40² + 20²), and 80 is the number of classes in the default COCO configuration. The classification branch is passed through a sigmoid, while the bbox branch must first be decoded.
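A sketch of the decoding step described above: each of the four box sides is predicted as a distribution over integer bins, the expected value of that distribution gives the distance from the anchor point, and multiplying by the feature-map stride converts it to pixels. The helper also checks the 8400 figure, assuming a 640 × 640 input and strides 8, 16, and 32. The function names and the convention of giving the anchor point in pixels are assumptions for illustration.

```python
import math


def expected_distance(logits):
    """Softmax over integer bins 0..n-1, then the expectation sum_i p_i * i (in grid units)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return sum(i * e / total for i, e in enumerate(exps))


def decode_box(anchor_xy, side_logits, stride):
    """side_logits: four bin-logit lists for the left, top, right, bottom distances.
    anchor_xy: anchor point in input-image pixels. Returns (x1, y1, x2, y2) in pixels."""
    l, t, r, b = (expected_distance(s) * stride for s in side_logits)
    ax, ay = anchor_xy
    return (ax - l, ay - t, ax + r, ay + b)


def num_predictions(img_size=640, strides=(8, 16, 32)):
    """Number of anchor points summed over the three output scales."""
    return sum((img_size // s) ** 2 for s in strides)


print(num_predictions())
print(decode_box((100.0, 100.0), [[0.0, 0.0, 100.0, 0.0]] * 4, stride=8))
```

With all four distributions peaked on bin 2 and stride 8, each side lies 16 pixels from the anchor, giving the box (84, 84, 116, 116). Taking the expectation, rather than the most likely bin, lets the decoded distance fall between integer bins.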

Training effectiveness is generally measured using the mean average precision (mAP), which is derived from the precision (P) and recall (R) curves. In this paper, the AP of a class is computed as follows:

$$AP = \frac{1}{m}\sum_{i=1}^{m} P_i$$

Because AP is defined for a single class and a dataset usually contains multiple classes, the per-class AP values are averaged to obtain the mAP:

$$mAP = \frac{1}{C}\sum_{j=1}^{C} AP_j$$

where AP is the average precision of a particular class, m is the number of positive examples, C is the number of categories in the dataset, $P_i$ is the maximum precision at the i-th positive example, and mAP is the mean average precision over the dataset.
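The AP and mAP definitions above can be computed directly from a ranked list of detections. The sketch below assumes that the detections for one class are already sorted by confidence and marked as true or false positives at a fixed IoU threshold (for example 0.5 for mAP50). It uses all-point interpolation, which is one common variant, so its values may differ slightly from those reported by a given toolkit; mAP50-95 would repeat the computation at IoU thresholds from 0.50 to 0.95 in steps of 0.05 and average the results. Function names are hypothetical.

```python
def average_precision(tp_flags, num_positives):
    """tp_flags: detections sorted by descending confidence, True for a true positive.
    num_positives: m, the number of ground-truth positives for this class.
    AP = (1/m) * sum of the interpolated precision at each true positive."""
    precisions = []
    true_positives = 0
    for rank, is_tp in enumerate(tp_flags, start=1):
        true_positives += int(is_tp)
        precisions.append(true_positives / rank)
    # Interpolation: the precision at rank k becomes the maximum precision at
    # any later rank, i.e. at any recall greater than or equal to the current one.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    return sum(p for p, is_tp in zip(precisions, tp_flags) if is_tp) / num_positives


def mean_average_precision(ap_per_class):
    """mAP = (1/C) * sum of the per-class AP values."""
    return sum(ap_per_class) / len(ap_per_class)


ap_a = average_precision([True, False, True], num_positives=2)
ap_b = average_precision([True, False, True], num_positives=3)
print(ap_a, ap_b, mean_average_precision([ap_a, ap_b]))
```

With ranked detections [TP, FP, TP] and two ground-truth positives, the interpolated precisions at the two hits are 1 and 2/3, so AP = 0.833. If a third positive was never detected, it contributes zero precision and AP drops to 0.556.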

Experiments

Silicone rubber was used to represent muscle tissue for the penetration studies in this research (Figure 8).

Experimental model.

The material is a 1:1 mixture of gels A and B. The main ingredients of gel A are 40.1% vinyl-terminated dimethyl, 30% polydimethylsiloxane, and 29.9% hydrated silica, and those of gel B are 30.1% vinyl-terminated dimethyl, 30% polydimethylsiloxane, 29.9% hydrated silica, and 10% hydrogen-terminated dimethyl. Because this material has properties similar to those of human tissue, we embedded models of blood vessels, tumors, bladders, and prostates in the gel for the puncture experiments. The embedded models are made of different materials, so each produces a different puncture force pattern. From the force-mode and image-mode experiments, we produced two datasets, each split into 80% for training and 20% for validation.
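A minimal sketch of an 80/20 split is given below. Because the datasets are small, splitting each class separately (stratification) keeps every category represented in the validation set in the same proportion; this stratification, the fixed random seed, and the function name are our assumptions, not details reported for the experiments.

```python
import random
from collections import defaultdict


def stratified_split(samples, train_ratio=0.8, seed=0):
    """samples: list of (sample_id, class_label).
    Splits each class into train/validation parts separately and returns (train, val)."""
    by_class = defaultdict(list)
    for sample_id, label in samples:
        by_class[label].append(sample_id)
    rng = random.Random(seed)  # fixed seed -> reproducible split
    train, val = [], []
    for label in sorted(by_class):
        ids = by_class[label]
        rng.shuffle(ids)
        cut = round(len(ids) * train_ratio)
        train += [(i, label) for i in ids[:cut]]
        val += [(i, label) for i in ids[cut:]]
    return train, val


# Hypothetical example: 50 vessel images and 50 tumor images.
samples = [(f"img{i}", "vessel" if i < 50 else "tumor") for i in range(100)]
train, val = stratified_split(samples)
print(len(train), len(val))
```

Each class contributes 40 training and 10 validation samples here, so a rare category cannot disappear from the validation set by chance.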

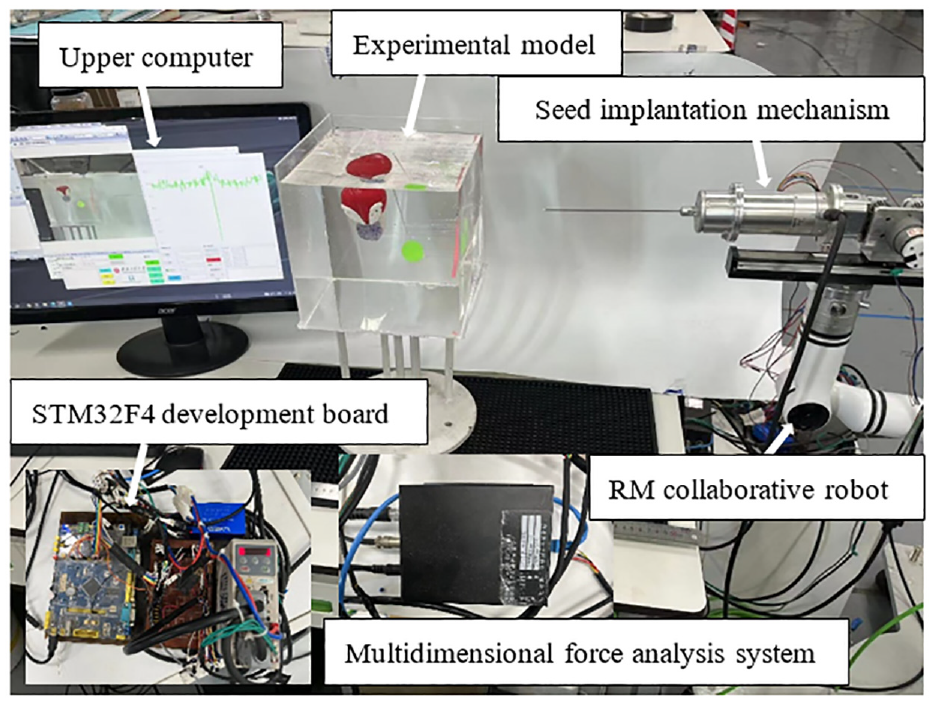

To complete the puncture experiment, we designed and developed a radioactive seed implantation robot as the experimental equipment (Figure 9).

Puncture experiment platform.

The experimental platform consists of an experimental model, an RM collaborative robot, a seed implantation mechanism, a multidimensional force analysis system, an STM32F407 development board, and host computer software. 19

Experimental verification of force-mode-based puncture classification

To produce the force pattern dataset, the sensor in this paper uses resistance strain gauges to measure the strain of an elastic beam. We designed the corresponding acquisition circuit, which uses a constant-current-source bridge conversion circuit, a high-precision signal amplification circuit, and an analog-to-digital conversion circuit to acquire the puncture force accurately online (Figure 10).20,21
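Because the bridge, amplifier, and analog-to-digital stages are approximately linear over their working range, raw ADC codes can be mapped to force with a two-point calibration (zero load and a known reference load). The sketch below is illustrative: the 12-bit resolution matches the STM32F407's on-chip ADC, but the 3.3 V reference, the calibration readings, and the function names are assumptions.

```python
def adc_code_to_voltage(code, vref=3.3, bits=12):
    """Convert a raw ADC code to the amplifier output voltage (assumed 3.3 V reference)."""
    return code * vref / (2 ** bits - 1)


def two_point_calibration(code_zero, code_ref, ref_force_n):
    """Return (scale, offset) such that force = scale * (code - offset).

    code_zero: reading with no load on the needle.
    code_ref: reading with a known reference load of ref_force_n newtons.
    """
    scale = ref_force_n / (code_ref - code_zero)  # newtons per ADC count
    return scale, code_zero


def code_to_force(code, scale, offset):
    """Map a raw ADC code to force in newtons using the linear calibration."""
    return scale * (code - offset)


scale, offset = two_point_calibration(code_zero=2048, code_ref=3048, ref_force_n=10.0)
print(code_to_force(2548, scale, offset))
```

For example, if the unloaded reading is 2048 and a 10 N reference load reads 3048, the scale is 0.01 N per count, so a reading of 2548 corresponds to 5 N. A calibration with more than two loads and a least-squares fit would also expose any nonlinearity in the chain.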

Puncture force signal detection and amplification circuit composition.

To highlight the improvement, we conducted three control experiments: YOLOv8n trained for 200 epochs, YOLOv8n trained for 300 epochs, and the improved YOLOv8-GAM trained for 200 epochs. Because training of the YOLOv8-GAM model already stopped at epoch 199 of the 200-epoch run, a 300-epoch control run was not performed for it. After averaging over all categories, the best validation-set scores were as follows: (a) precision = 0.986 ± 0.001, (b) recall = 0.916 ± 0.001, (c) mAP50 = 0.995 ± 0.001, and (d) mAP50-95 = 0.895 ± 0.001. The experimental parameters and performance scores are shown in Table 1, and the precision–recall and F1 curves are shown in Figure 11.
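The F1 curves in Figure 11 plot the harmonic mean of precision and recall, F1 = 2PR / (P + R), against the confidence threshold. As an arithmetic illustration only, combining the averaged precision and recall reported above gives F1 ≈ 0.950; this is not a value read from the figure, because the averages need not correspond to a single threshold. The function names and the sample curve points below are hypothetical.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def best_f1(points):
    """points: iterable of (confidence_threshold, precision, recall).
    Returns (threshold, f1) at the peak of the F1-confidence curve."""
    return max(((c, f1_score(p, r)) for c, p, r in points), key=lambda x: x[1])


print(round(f1_score(0.986, 0.916), 3))
print(best_f1([(0.1, 0.5, 1.0), (0.5, 0.9, 0.9), (0.9, 1.0, 0.3)]))
```

The peak of the F1-confidence curve is a common choice of operating threshold for deployment, since it balances missed detections against false alarms.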

The performance scores of the three object detection models.

F-measure confidence curve and precision–recall curve: (a) F-measure confidence curve for YOLOv8n, (b) F-measure confidence curve for YOLOv8-GAM, (c) precision–recall curve for YOLOv8n, and (d) precision–recall curve for YOLOv8-GAM.

The following shows the actual detection of puncture force patterns. In testing, the model recognized puncture force patterns in static images and maintained high accuracy on dynamic video. The improved YOLOv8n model distinguishes different puncture force patterns with high accuracy, improving the reliability of seed implantation (Figure 12).

Penetration force pattern detection: (a) vascular penetration force pattern and (b) tumor penetration force pattern.

Experimental verification of image-pattern-based puncture classification

We similarly conducted three comparison experiments for image-pattern puncture classification, using the YOLOv7, YOLOv8n, and improved YOLOv8-GAM models. Training of the YOLOv8-GAM model stopped at epoch 198 because there had been no significant improvement in the preceding 50 epochs. After averaging over all categories, the best validation-set scores were as follows: (a) precision = 0.939 ± 0.01, (b) recall = 0.966 ± 0.001, (c) mAP50 = 0.912 ± 0.001, and (d) mAP50-95 = 0.554 ± 0.001. The experimental parameters and performance scores are shown in Table 2, and the precision–recall and F1 curves for the YOLOv8-GAM model are shown in Figure 13.
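The early stop described above (no significant improvement in the last 50 epochs) follows a patience rule. The sketch below is a hypothetical illustration of such a rule, not the training framework's own implementation: it tracks the best validation fitness and stops once `patience` epochs pass without a new best. Epochs are counted from 0 here, and the function name is an assumption.

```python
def early_stop_epoch(fitness, patience=50):
    """fitness: validation fitness per epoch (higher is better).
    Returns the epoch index at which training would stop, or None if it runs to the end."""
    best, best_epoch = float("-inf"), 0
    for epoch, f in enumerate(fitness):
        if f > best:
            best, best_epoch = f, epoch  # new best: reset the patience window
        elif epoch - best_epoch >= patience:
            return epoch  # `patience` epochs without improvement
    return None


print(early_stop_epoch([0.1, 0.2, 0.3, 0.3, 0.3, 0.3], patience=3))
```

In this toy run the best fitness is reached at epoch 2, and training stops at epoch 5 after three epochs without improvement. The weights saved at the best epoch, not the last one, would then be used for evaluation.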

The performance scores of the three object detection models.

(a) Precision–recall curve and (b) F-measure confidence curve.

The data show that adding the attention mechanism gives the improved YOLOv8-GAM model higher training accuracy and better results. We therefore discarded the YOLOv8 model in favor of the more accurate YOLOv8-GAM model. In testing, the model recognized the different experimental models under dynamic conditions with higher accuracy and no recognition errors (Figure 14).

Image pattern recognition.

Information fusion perception

By fusing the two perception modes, we can identify and pinpoint the location of the puncture needle in real time throughout the procedure. The fused system also senses the puncture state from the feedback data. We can monitor the needle through real-time imaging and respond quickly (stopping or withdrawing the needle) when the puncture force changes unexpectedly.22,23 If real-time imaging fails (for example, because of damage to the camera or other hardware), we can still assess the needle's state from the puncture force to determine whether it has reached the seed implantation point.
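The fallback logic described above can be summarized as a small decision rule. This is a hypothetical sketch: the observation fields, the force-jump threshold, the target label, and the three actions are illustrative assumptions and do not describe the controller actually used on the experimental platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    force_n: float              # latest puncture force reading (N)
    prev_force_n: float         # previous reading (N)
    image_class: Optional[str]  # label from the camera detector; None if imaging failed
    force_class: Optional[str]  # label from the force-pattern detector; None if unavailable


def decide(obs, target="prostate", jump_threshold_n=0.5):
    """Return 'stop', 'implant', or 'advance' under a hypothetical fusion policy."""
    # Safety first: an unexpected jump in force halts the needle.
    if abs(obs.force_n - obs.prev_force_n) > jump_threshold_n:
        return "stop"
    # Prefer the image channel; fall back to the force channel if the camera fails.
    state = obs.image_class if obs.image_class is not None else obs.force_class
    if state is None:
        return "stop"  # neither modality available: do not continue blindly
    return "implant" if state == target else "advance"


print(decide(Observation(1.0, 0.9, None, "prostate")))
```

Here the camera has failed, so the decision falls back to the force-pattern label and the needle is judged to have reached the implantation region. Keeping the safety check ahead of the classification step means a sudden force change overrides both modalities.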

Because the two datasets have multiscale features, we merged them and retrained the improved YOLOv8-GAM model on the fused dataset. The GAM_Attention mechanism reduces the loss of cross-dimensional information and improves accuracy. After averaging over all categories, the best validation-set scores were as follows: (a) precision = 0.875 ± 0.01, (b) recall = 0.857 ± 0.001, (c) mAP50 = 0.995 ± 0.001, and (d) mAP50-95 = 0.810 ± 0.001. The experimental parameters and performance scores are shown in Table 3, and the precision–recall and F1 curves for the YOLOv8-GAM model are shown in Figure 15.

Size of the performance scores of the detection models.

(a) Precision–recall curve and (b) F-measure confidence curve.

As the above data and Figure 16 show, the model still classifies and recognizes well after the two modes are fused, which meets our expectations.

Information fusion perception experiment.

Conclusion and outlook

In this paper, we propose an information fusion situational awareness concept. Using a deep learning object detection technique (YOLOv8), we fused force and image pattern recognition for the first time for recognition and prediction. To verify the feasibility of the technique, we collected force and image data through puncture experiments and built our own datasets for simulation experiments, through which we implemented two situational awareness functions. The model was strengthened by adding a global attention mechanism (GAM), which maintains the correlation between spatial and channel information and enhances the model's predictive capacity. In the image-processing experiments, mAP50 improved by 41% and 49% and mAP50-95 by 68% and 9%, respectively, compared with the YOLOv8n model. The fusion experiments reached 0.995 for mAP50 and 0.810 for mAP50-95. Because the datasets were self-constructed, some limitations remain: the experimental dataset is small, which affects the training results.

Footnotes

Handling Editor: Chenhui Liang

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Key Laboratory of Advanced Manufacturing and Intelligent Technology (Ministry of Education), Harbin University of Science and Technology (Grant No. KFKT202209); Anhui Engineering Research Center on Information Fusion and Control of Intelligent Robot (Grant No. IFCIR2024014); National Natural Science Foundation of China (Grant No. 61741101); Anhui Provincial Department of Economy and Information Technology Industry University Research Project (Grant No. JB22031); Anhui Future Technology Research Institute Enterprise Cooperation Project (Grant No. 2023qyhz35); Anhui Province Higher Education Natural Science Research Project (Grant No. KJ2021A1208); Wuhu Science and Technology Plan Project (Grant No. 2022jc41); 2023 Collaborative Innovation Program for Universities in Anhui Province (Grant No. GXXT-2023-076).