Abstract

Collecting and analyzing qualitative research with large datasets is inherently complex and often difficult to convey in traditional manuscripts. Contemporary guidance on managing such datasets, especially those with multiple data sources, is limited. Implementation science, which studies and tests methods to promote uptake of evidence-based practices into routine use, often requires complex qualitative data and analysis and faces unique challenges. These challenges include multiple data collection time points and the need for rapid analysis and feedback to sites. In this paper we reflect on the data collection, management, and analysis of over 400 pieces of qualitative data from three different sources (semi-structured interviews, meeting minutes, and open-ended survey responses) as part of a large implementation study. The data discussed are derived from a type 3 Hybrid-design study of H-HOPE (Hospital to Home: Optimizing the Preterm Infant’s Environment) in six diverse neonatal intensive care units (NICUs). We first thoroughly outline the methods our team used in collecting and analyzing our large qualitative dataset. We then discuss four key processes that aided our data collection, management, and analysis: structure and consistency, prompt responsive alterations to site specific procedures, an iterative and extensive analysis approach led by case summaries, and utilization of both an initial ‘quick’ and more in-depth traditional qualitative analysis approaches. Recommendations for future studies working with large qualitative data sets with multiple sources of data are discussed.

Conducting qualitative research, particularly with large datasets, is inherently complex. It is often difficult to convey the complexity and rigor of qualitative research in traditional manuscripts. There is a dearth of contemporary guidance on managing large qualitative datasets (Devonald & Jones, 2023; Hodgson et al., 2024), especially with multiple sources of qualitative data (Lichtenstein & Rucks-Ahidiana, 2021). Due to the increased complexity, detailed discussion on managing large qualitative datasets is important.

Large datasets may involve collecting data at multiple time points which allows for relationship building with participants and time for deeper reflection on the data (Devonald & Jones, 2023). Yet rapport-building could blur into ‘friendship’ or personal information being shared. This brings about concerns with maintaining appropriate researcher-participant boundaries (Batty, 2020). In addition, matrixes and framework-guided codebooks are common in managing large datasets as they can help guide data collection and analysis. Maintaining consistency across all team members over time through detailed training and oversight is thus important (Devonald & Jones, 2023). However, that can be challenging over time as many research teams include students, who may turnover multiple times during a longitudinal study, and who each bring vast differences in experience and skill level. For example, if the codebook is not closely followed, codes will not be consistently applied the same way by all coders. Inadvertently, minor shifts in data management or interpretation may threaten the validity and rigor of the results. Further, throughout data collection, new research questions may emerge or new relevant analysis approaches may be identified. This has the potential to further complicate the research process if researchers get sidetracked by assisting in secondary analyses led by students, for example. Delays could also occur if the team decides to learn and/or test a potential new analytical approach (Busch, 2014). Thus, thoughtful and meticulous approaches and oversight are imperative.

Implementation science, which studies and tests methods to promote uptake of evidence-based practices into routine use, commonly uses qualitative approaches to make sense of the implementation process and identify barriers and facilitators to implementation (Bauer & Kirchner, 2020; Hamilton & Finley, 2019). But qualitative research in implementation science faces unique challenges. One common challenge is that multiple sites are often involved. If, for example, six sites are included, there may be a total of 30 interviews with personnel, but only five interviews per site. And possibly only one or two personnel type (e.g., nurse, leader). There may also be ongoing pressure from sites for the research team to analyze and return their own site-specific results quickly.

It is common for a final dataset of qualitative data to be large as data are commonly collected from more than one site and at more than one time point. Often, the same participants are interviewed at several time points. This increases the need to develop and maintain trust (Batlle & Carr, 2021). However, site specific sample sizes are often small as qualitative interviews or focus groups are typically conducted with a handful of individuals in various roles at each site through purposive sampling (Hamilton & Finley, 2019). The small site sample size creates challenges in confidentiality when results are shared back with the site (Campbell et al., 2023). While data are aggregated and de-identified, the perspective of particularly vocal individuals or discussion of particular events might still be recognizable by individuals at the site.

In addition, rapid data collection and analysis are often crucial for making adaptations to the intervention or implementing new strategies to address barriers in ‘real time’ (Glasgow & Chambers, 2012; Nevedal et al., 2021). For that reason, rapid qualitative analysis approaches are becoming more popular (Kowalski et al., 2024). Rapid analysis typically involves taking detailed notes during interviews and immediately filling out a matrix summarizing predetermined themes sometimes based on a specific conceptual model. Areas that need clarification are noted and checked with audio recording (Nevedal et al., 2021). While rapid analysis approaches are becoming more common, this process requires a structured, well-organized approach with dedicated and well-trained team members (Holdsworth et al., 2020; Nevedal et al., 2021). However, more traditional in-depth coding approaches for obtaining high levels of details are still commonly used as well (Neal et al., 2015; Nevedal et al., 2021). Further, frameworks are frequently used in implementation science to guide data collection and analysis. However, many implementation frameworks are limited because they focus on identifying and delineating common factors rather than deeper qualitative understanding (Kirk et al., 2016; Nilsen, 2015). Finally, site recruitment and data collection often must occur relatively quickly to capture perspectives at the same time point or at specific intervals in a sequential implementation approach.

These challenges are present in most implementation studies. However, they are more salient when working with large datasets. In this paper we reflect on the data collection, management, and analysis of three different sources of qualitative data as part of a large implementation study. We aim to 1) thoroughly outline the methods our team used in collecting and analyzing our large qualitative dataset, which included interviews, meeting minutes, and open-ended survey responses; and 2) discuss four key processes that aided our data collection, management, and analysis. Recommendations for future implementation studies working with large qualitative data sets with multiple sources of data are also discussed.

Research Team

An experienced team is essential as it brings diverse complementary expertise than any single researcher would lack. Our team brings extensive experience in qualitative research. Collectively, we bring decades of experience in qualitative research and over a decade of experience in implementation science. Further, the Multiple Principal Investigators (M-PIs) (RWT, DB, KN) and Co-Investigator (Co-I) (KK) have a long history of collaboration. KN and KK co-led the qualitative research team.

Description of the Intervention and Implementation Study

The data discussed in this article are derived from a large implementation study of H-HOPE (Hospital to Home: Optimizing the Preterm Infant’s Environment), an evidence-based early behavioral intervention that consists of an infant-focused (Massage+) and a parent-focused (Parents+) component (White-Traut et al., 2021). H-HOPE (originally termed ATVV; Auditory, Tactile, Visual, and Vestibular) was developed to provide mothers of preterm infants with a guided opportunity to interact with their preterm infants (Parents+) while using Massage+, which promotes infant development. In addition to being developed and tested in the US (White-Traut et al., 2013, 2015), H-HOPE has been tested in Malawi, Africa (Kapito et al., 2023). Investigators in the US (Bell et al., n.d.) and other countries (Gaffari & Jindal, 2020; Gualdrón & Villalobos, 2019; Rodovanski et al., 2023; Séassau et al., 2023) have also tested the ATVV and H-HOPE.

The study employed a type 3 Hybrid design which simultaneously examined the implementation process and effectiveness of H-HOPE in six diverse neonatal intensive care units (NICUs) in the United States using an incomplete stepped wedge design.

Theoretical Framework

The Consolidated Framework for Implementation Research (CFIR) guided qualitative data collection and analysis (Damschroder et al., 2009, 2022). The CFIR is a meta-theoretical framework that guides researchers to identify and understand diverse contextual factors that influence implementation process and success and is one of the most commonly cited frameworks in implementation science (Kirk et al., 2016). CFIR guided the development of the semi-structured interviews. In addition, our team created a CFIR codebook tailored for this specific implementation context and process which was utilized throughout the analysis process.

NICU Sites

We considered many variables when selecting the NICUs for this study. We wanted to include NICUs providing diverse levels of care (e.g., levels 2, 3 and 4) and with a wide range of sizes (based on annual admissions). We also aimed for geographic diversity in the United States, including urban, rural, and suburban based NICUs and considered the diversity of patients, including racial/ethnic distribution of each NICU. Finally, as NICUs were asked to take on such a large endeavor, we had to consider their interest and ability (e.g., leadership, resources, motivation) to implement H-HOPE.

Six diverse NICUs across four health systems in the United States (four in the Midwest and two in the South) participated in this study. In two of the health systems, two different NICU units participated. At each NICU, an H-HOPE implementation team was developed, typically consisting of NICU managers, clinical leaders, and administrators (White-Traut et al., 2021). The external facilitator (implementation project director) met with the implementation team regularly, led site team meetings and assisted with implementation issues. For each pair of sites within the same health system, the H-HOPE teams met together for their implementation team meetings, yet each implementation process was modified to meet the unique needs of each site (e.g., how training was organized and facilitated, what strategies would facilitate implementation).

Qualitative Data

Qualitative data included three sources: semi-structured interviews, several open-ended survey responses from a larger quantitative questionnaire, and H-HOPE implementation team meeting minutes. Qualitative interviews were conducted with both parents and hospital/NICU personnel (staff, H-HOPE team members, managers, clinical leaders, hospital administrators) to better understand their experience with H-HOPE, including barriers and facilitators to engagement, implementation, and sustainment. NICU nurses also completed quantitative surveys with an open-ended qualitative survey component to further capture their perspectives on implementation. Finally, H-HOPE implementation team meeting minutes from each NICU provided further insight into the implementation process, including how the team incorporated H-HOPE into the nurse workflow and adaptations necessary to improve reach and sustainability.

Sampling and Recruitment

Semi-Structured Interviews

Hospital personnel and parents were recruited for semi-structured interviews. We purposively selected hospital personnel at each site, including: a hospital leader (administrator), NICU leadership (e.g., medical director, nurse manager, clinical leader), H-HOPE implementation team members, and NICU nurses and other staff (e.g., occupational therapist, physical therapist, speech therapist, social work) who delivered H-HOPE or assisted in its implementation. Among parents, we originally planned to randomly select and interview parents who had participated in H-HOPE and consented for larger data collection of their preterm infant and themselves. However, due to logistical issues, such as being able to promptly identify eligible families prior to discharge and difficulty in connecting with parents, the sample became a convenience sample.

Two research leaders (project director and implementation center PI) identified hospital leaders, NICU leaders, and implementation team members for semi-structured interviews. NICU staff received an interest form to complete via QR code during H-HOPE training. In addition, staff identified as particularly enthusiastic or unenthusiastic by the implementation team were purposefully recruited. Our team contacted hospital personnel via email or text for two to three interviews: one pre-implementation (but post-training), one following approximately six months of implementation, and in sites that were able to sustain, one following two months of sustainment. Interviews lasted on average 30 minutes.

Parents were identified through REDCap when a planned discharge date of two weeks or less was entered. Parents were contacted by text message or phone call for two interviews: one approximately two weeks prior to their infant’s planned discharge, and one approximately six weeks post NICU discharge. Interviews lasted on average 20 minutes. To ensure parents did not feel pressured to participate, parents were only contacted three times before no further contact was attempted. Additionally, the parents received a $75 gift card for participation in the larger study but did not receive any additional monetary compensation for the interview. In the informed consent document, it was clearly stated that the interview was optional and not necessary. Further, the clinician providers were not involved in recruitment. Finally, parents were assured that the clinicians who cared for their infants would not know whether they participated or not and would not know what they shared.

Open-Ended Survey Responses

As part of the larger study, all NICU nurses who worked at least .50 FTE were emailed an anonymous quantitative survey at three points: pre-implementation, during implementation, and post-implementation. The survey was originally designed to assess burnout, work satisfaction, and the work (practice) environment. Due to difficulty in recruiting NICU nurses for interviews (discussed in ‘Prompt Responsive Alterations to Site Specific Procedures’ section below), after the first site, open-ended qualitative survey questions regarding nurses’ uptake of H-HOPE and associated barriers and facilitators to implementation were added to the quantitative survey. The entire survey was designed to take less than 20 minutes.

H-HOPE Team Meeting Minutes

The external facilitator (KG) led regular meetings with the implementation team at each NICU. Meetings typically lasted one hour and followed a structured agenda.

Both hospital personnel and parents completed written informed consent, signed via REDCap, prior to conducting semi-structured interviews. Nurse open-ended survey responses were anonymously collected and thus no informed consent was collected. Implementation team members were exempt from consenting for meeting minutes but approved the use of meeting minutes for analysis. All recruitment materials, data collection methods, and consent forms were approved by the Medical College of Wisconsin’s Institutional Review Board (IRB).

Data Collection

Qualitative Data by Type and NICU

Note. Sites are not listed in order of implementation to increase confidentiality. Sites 1 and 2 and 5 and 6 were part of the same hospital system and held team meetings together, which is why their total is combined. In addition, NICUs 5 and 6 had frequent infant transfers. While we tracked where parents were initially recruited, we combined parent interviews for those sites.

Data Management and Analysis

Data Collection and Management Steps for Personnel Interviews

Coding approach

All qualitative data, aside from parent interviews (discussed at the end of this section), were coded in MAXQDA. A codebook was created with a sub-set of CFIR constructs believed to be most relevant for this project. These constructs were collaboratively identified through discussions with implementation leaders at each NICU and team conversations based on prior research, clinical, and implementation experience.

Directed content analysis (Hsieh & Shannon, 2005) began with team coding in weekly meetings to get everyone comfortable with the codebook and to refine the codebook for clarity and consistency. After the team coded two full transcripts together, team members were comfortable with the codebook and coding process. We then progressed to double coding. While double coding, we discussed differences in weekly coding meetings and made minor updates to the codebook as necessary. We double coded all transcripts from the first site, at which point agreement was very high, demonstrating high coding reliability. Finally, we moved to individual coding for most interviews with coding verification during case summary development. We purposefully identified transcripts with greater detail and richness of data to code. We were able to do this due to our rich data immersion.

Case summary approach

After an individual team member coded an interview, a second team member created a case summary for each interview by summarizing the coded data into the relevant CFIR construct in an Excel file (each construct had its own row). The individual case summary was at a basic descriptive level, summarizing yet keeping close to the raw data. Interviews not coded also had a case summary created through reading the transcript without detailed coding. Consistency or changes in the interviewee’s perspective over time (from one interview to the next) were also noted. Coding verification was done by the person creating the case summary. They would review the coded transcript in MAXQDA and make additions/deletions as necessary. All changes were tracked in a separate file and discussed during team meetings to ensure we maintained a high level of consistency across multiple sites and time points.

Individual case summaries were then combined by participant type (i.e. Nurse, H-HOPE Team, Nurse Manager, Administration, Medical Director, Therapists) while keeping unique details for each participant but also noting patterns within the participants. Open-ended responses from nurse questionnaires were coded with the same CFIR codebook and added to the combined nurse case summary at each site. Implementation meeting minutes were also coded with the same CFIR codebook and added to the H-HOPE team case summary at each site.

Our team then created within site summaries, which required a deeper comparative analysis than the individual summaries. We started at the role level within each site, looking at divergences or convergences. We iteratively combined roles one at a time (e.g., combined nurses and therapies, then added managers to that combined summary, then H-HOPE team, then administration, and finally medical director). Then across all roles, we combined agreement but noted variation.

Once site summaries were created, as a team, we rated each CFIR construct for each site from -2 (Major Barrier) to +2 (Major Facilitator) based off our summary for each construct. This rating scale was adapted from Damschroder & Lowery (2013). This rating system considers both the valence (positive or negative effect) and magnitude (strength of effect) (Damschroder & Lowery, 2013).

Finally, we entered each case summary into a single Excel document for across site comparison. CFIR constructs were listed in rows and each site had its own column. We compared the CFIR constructs and their ratings to identify patterns, or convergence and divergence, across all sites. We first identified common constructs (barriers and facilitators) across all NICUs. We then identified distinguishing constructs of ‘successful’ sites (those that were able to sustain H-HOPE) in comparison to sites that were unable to sustain H-HOPE. Finally, we re-examined constructs that didn’t play a large role in implementation.

Parent interviews

Parent interviews were focused on their experience with H-HOPE and barriers or facilitators to participation. They were not included in the case summaries. We did not use the CFIR codebook which is more suited to implementation within and across sites or organizations. Instead parent interviews were double coded more broadly for perceived barriers and facilitators to participation in H-HOPE, as well as benefits from participation (Schmitt et al., 2025).

Key Processes

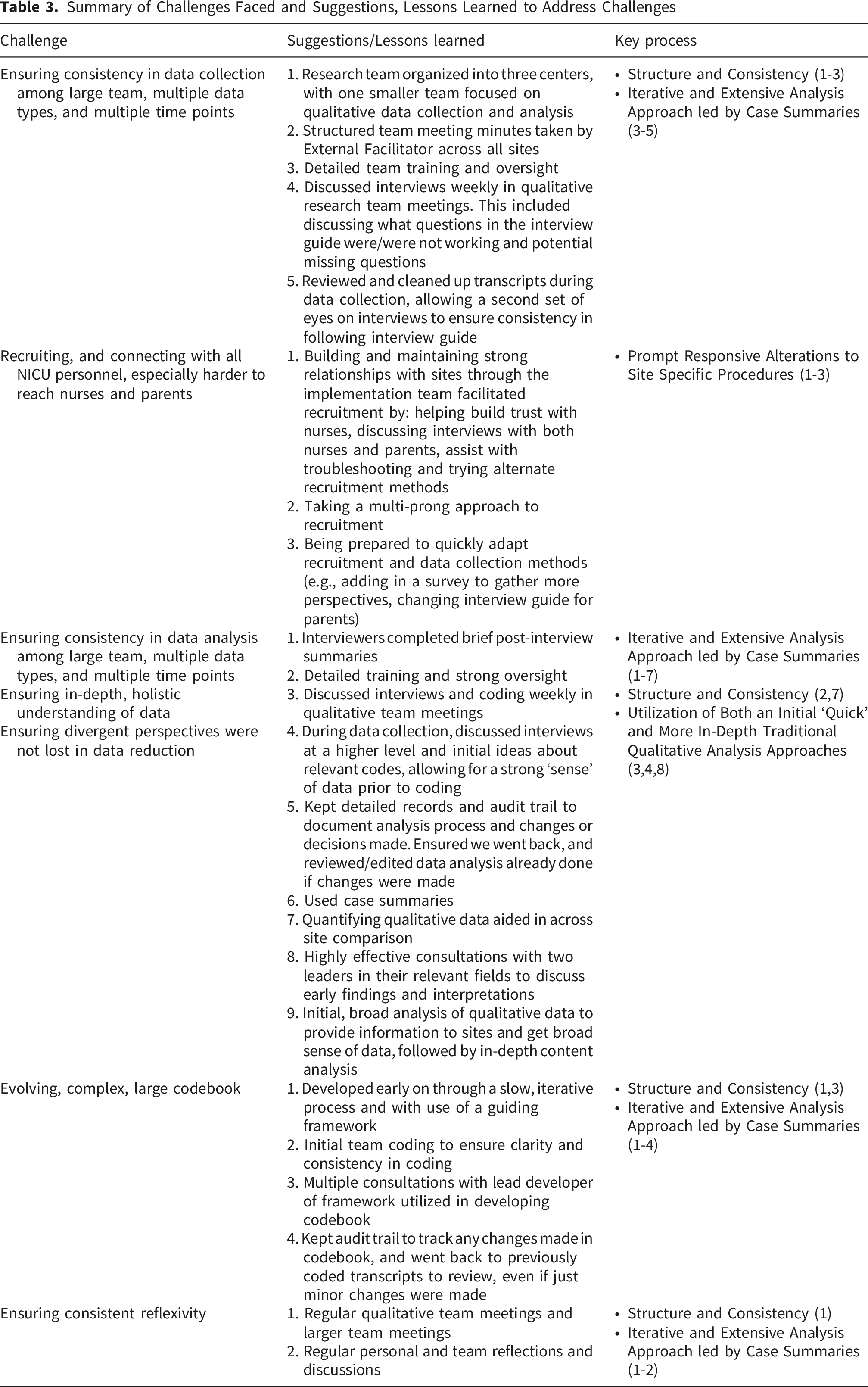

We have identified four key processes that aided our approach for this study (Figure 1): structure and consistency, prompt responsive alterations to site specific procedures, an iterative and extensive analysis approach led by case summaries, and utilization of both an initial ‘quick’ and more in-depth traditional qualitative analysis approaches. To support discussion of the four key processes, Table 3 shares many of the challenges we faced and that we believe are common in managing large qualitative datasets. It also provides lessons learned/recommendations that align with our key processes. Key processes organized by major research domains Summary of Challenges Faced and Suggestions, Lessons Learned to Address Challenges

Structure and Consistency

We benefited from structure and consistency in three ways: 1) internal research team organization; 2) use of theory (CFIR), along with external consultation, to guide data collection and analysis; and 3) a consistent structured format for implementation meeting minutes across all NICUs.

Internal Research Team Organization

Our research team for the implementation study consisted of three M-PIs organized around three centers, with one M-PI leading each center: the qualitative and mixed methods center, the implementation center, and the quantitative center. While work certainly overlapped centers at times, by organizing in this way, we used team members’ expertise to focus on specific aspects of the study, allowing for greater data immersion and insight.

Use of Theory (CFIR) with External Consultation

CFIR provided a starting off point on what to focus on and how to make sense of our findings (Wise & Shaffer, 2015). In addition, we were fortunate to have Laura Damschroder, the lead developer of CFIR, join us virtually for two consultations. During our first consultation, as we discussed our codebook development, she shared information about an impending updated CFIR publication (Damschroder et al., 2022). Once the updated CFIR was published, we finalized our codebook with constructs we identified as relevant from both the original and the updated framework, allowing us to utilize new constructs that were particularly relevant, such as ‘Critical Incidents’ (e.g., COVID-19 pandemic) and ‘Teaming’ (e.g., nurses working together). During the second consultation, we discussed early findings, broader implications, and ideas for how to organize the data. This expert consultation was especially advantageous by identifying how to best use the CFIR for our study, by helping us get past the initial struggle of how to organize the vast amounts of qualitative data, and by improving our confidence in our approach.

Structured Implementation Team Meeting Minutes

Our external facilitator (KG) organized the implementation team meetings with the NICU clinical site leader in the same fashion for each NICU. This consistent, structured manner for meeting agenda and minute taking saved our team an immense amount of time in coding. As one person facilitated meetings across all NICUs, we also benefitted from having one resource to contact for clarification. Our external facilitator recorded meticulous notes and reflection throughout the implementation process at each NICU and thus was able to fill in gaps or clarify inconsistencies in the data as well.

Prompt Responsive Alterations to Site Specific Procedures

Overall, at each NICU, we were able to obtain a diverse sample of personnel to participate in interviews, allowing for a nuanced understanding of the implementation process and related barriers and facilitators. However, we faced challenges recruiting staff nurses and parents for interviews, which required prompt alterations to recruitment procedures.

Nurse Recruitment

Initially, we had more difficulty recruiting NICU nurses for interviews than we anticipated. This was in part due to the high stress and burnout many nurses were facing during/post the COVID-19 pandemic (Catarelli et al., 2026; Gralton et al., 2025; Shaw et al., 2021). We also found many nurses were extremely concerned about confidentiality and whether their leaders would know they participated in interviews and/or what they said. We talked with the larger research team about these challenges and brainstormed solutions. We also talked with the implementation team at each site to gather their perspectives on nurses’ concerns and how to address them. While we were unable to address recruitment challenges due to burnout, we were able to quickly alter our recruitment and data collection methods to address confidentiality concerns by clarifying more extensively and repeatedly how we were maintaining confidentiality. We created a recruitment video and provided more detailed information during staff training that 1) discussed the value of the interviews, not just for this project but for their NICU in learning about how to better implement projects in the future, 2) what types of questions would be asked, and 3) how seriously we took confidentiality. For example, access to raw data was limited to our qualitative team and not available to the other MPIs. Other changes we made on site included: having a member of the implementation team and/or leader talk with nurses during rounds and staff meetings to encourage participation, distributing paper interest forms for nurses to fill out, and sending email reminders and links to the interest form.

To further aid in capturing diverse perspectives, as mentioned above, open-ended responses regarding the nurse’s uptake of H-HOPE and associated barriers and facilitators to implementation were added to the anonymous questionnaires sent to nurses. Through our ability to rapidly respond, we were successful in obtaining a sample of nurses with diverse perspectives – some very enthusiastic supporters, some indifferent, and others who were frustrated or disappointed with the program and the implementation process.

Parent Recruitment and Data Collection

Through our previous research, we greatly respected the stress and busyness of parents with a preterm infant in the NICU. However, we still faced greater difficulty in recruiting parents than initially anticipated. It was very difficult to reach parents and be able to talk uninterrupted at our scheduled interview time. Our initial approach of waiting for a Research Coordinator or Clinical Practice Leader to enter a planned discharge date in REDCap was not feasible. At many sites, the coordinator would only have time to enter these dates once a weekly or sporadically. This led to instances where the planned discharge date wasn’t entered until after the infant was already discharged. Moreover, at times we wouldn’t know about a planned discharge until the day of or day before, at which point it was nearly impossible (and inappropriate) to reach the parents.

We adapted our protocol so we would not have to rely on these planned discharge dates. We started recruiting any parents who had been enrolled for at least a week and whose infants were at least 33-34 weeks post-menstrual age (with the idea that they would have been able to have their first H-HOPE session). We also adapted the interview guide to first ask general questions focused on their NICU stay and then more focused questions about participation in H-HOPE. If we started an interview and learned they had not been able to participate in H-HOPE, we would continue with general questions and not discuss H-HOPE. We changed this focus for a couple of reasons. First, we didn’t want to abruptly end the interview and/or make the parent feel like they were missing out on something they should have received already or were not contributing to this aspect of the study. In our prior research with parents of preterm infants, we have found the approach of letting parents tell their story to be essential. This is in part because of their vulnerability when recalling their NICU experience. Second, this general information was still helpful to sites regarding parents’ experiences in the NICU, even if it didn’t directly pertain to the intervention.

Another adaptation we made was creating a combined first and second parent interview guide. While we ideally wanted to interview parents once prior to discharge and once post-discharge, this combined guide allowed us to capture more information if we were only able to reach parents post-discharge. While this relied more on parents recalling their experience in the NICU, the information gained in these interviews was similar to those collected in the two separate interviews, increasing our confidence in their validity. All these adaptations allowed us to capture more parents who had immersive experiences with H-HOPE and who we would not have been able to capture if we waited for data to be entered into REDCap.

Iterative and Extensive Analysis and Data Reduction Approach Led by Case Summaries

Due to the large quantity of data, familiarization with the data were vital to support trustworthiness and rigor. Throughout data collection and analysis, we took numerous small steps in becoming familiar with the data, combining data through case summaries (from individual to role to site), ensuring divergent perspectives were maintained, and then finally reducing the data into a manageable and useful interpretation. This also included multiple external consultations that aided data analysis and interpretation.

To support familiarization and validity, as mentioned above, data were regularly discussed at qualitative team meetings throughout the collection and analysis process. This included detailed recordkeeping and audit trail to document our analysis process and changes or decisions made throughout. This was particularly helpful as we compared and combined data across participant type and site to ensure we were analyzing and interpreting data consistently. In addition, during weekly large research team meetings, implementation center updates were provided, giving more detail on dynamics occurring within each NICU context. This helped frame our interview questions and development of the codebook.

During data collection, interviews conducted in the prior week would be discussed in team meetings. This discussion focused on the perspective of the interviewee, but also helped identify if any interview questions needed to be altered, added, or removed, as well as if other key personnel were identified to interview. We also made initial notes of divergent and convergent perspectives across interviews. This helped develop a strong ‘sense’ of what the data looked like prior to coding. Further data immersion occurred as we carefully checked each transcript for accuracy by listening to the audio recording. This meant at least one person would end up listening to and reading each transcript multiple times.

During coding, we also regularly discussed the relationship between the different CFIR constructs as we found ourselves regularly coding certain aspects of the transcripts with few, closely related codes that were theoretically similar. We tried to identify the most critical construct to avoid over-coding as much as possible. This included adding some rules about when to use more than one code to the codebook. This allowed us to reduce duplication to focus our analysis, which aided greatly in combining, and then reducing data.

The rigorously developed case summaries were invaluable because of the numerous times we went to them during our analysis. While creating them was more work up front, due to our deep data immersion, they were not too difficult or time consuming. Specifically, having individual, role, and site case summaries aided in ensuring rigor and accuracy within and across sites of this large dataset. They also saved us from the tedious task of re-checking transcripts to verify information. Further, we each personally took time to reflect on the data collection and analysis process and discussed our perspectives regularly. This allowed for us to identify any biases, be more thoughtful and intentional in framing our summaries, and ensuring we maintained integrity in all stages of data management.

Quantifying of the qualitative data through rating each construct from -2 (Major Barrier) to +2 (Major Facilitator) also greatly facilitated across site comparison (Damschroder & Lowery, 2013; Sandelowski et al., 2009). This rating schema allowed us to first compare constructs at a macro level. Once these comparisons were made, it was easier to go back to the qualitative summaries and focus on the nuances, or what really made the sites similar or unique. For example, we rated ‘Opportunity’ (defined as the nurse/staff having the availability, scope, and power to do H-HOPE) as a barrier or major barrier for all six sites. However, in reviewing the more detailed qualitative summaries, we found that lack of time was a major barrier for all sites, but other aspects such as the influence of assignments and staffing impacted sites differently. At some sites, the consensus was that it was difficult but still doable, while at other sites nurses and other staff rarely had the opportunity to provide H-HOPE.

Finally, in addition to the consultation with Laura Damschroder mentioned above, we had two consultations with Eileen T. Lake, PhD, RN, FAAN, who has conducted groundbreaking work surrounding the impact of the work environment on nurse work capacity and well-being. We initially reached out to her for consultation to help us interpret and make sense of data we collected using a questionnaire she developed, the Practice Environment Scale of Nursing Work Index (Lake, 2002). However, as we proceeded with analysis, a second consultation with her was extremely valuable as she brought her expertise to assist us to connect how constructs interacted with each other and make meaning of our findings in the larger context of the NICU.

Utilization of Both Initial ‘Quick’ and More In-Depth Traditional Qualitative Analysis Approaches

For each site, we utilized an initial qualitative analysis prior to in-depth coding. This was done to provide the implementation team at each NICU site with findings. This analysis mainly focused on a deductive approach of identifying barriers and facilitators to implementation broadly, without worrying about which CFIR construct it most closely aligned with. We organized barriers and facilitators into sub-themes to share summaries and quotes with each NICU. Once data collection was complete, we also conducted more in-depth directed content analysis through coding of transcripts, meetings minutes, and qualitative question responses (as detailed above) (Hsieh & Shannon, 2005).

The initial analysis helped us become more familiar with the data and CFIR. While we were not organizing our findings into CFIR constructs at that time, our team still discussed which constructs appeared more frequently. This built on our discussions following each interview and led us to be more familiar with the data prior to in-depth coding. However, we also had to acknowledge potential biases we may have developed through our initial analysis. We were very reflective by writing memos and discussing openly in team meetings to ensure our codes and analysis were an accurate reflection of the data. This also included going back and verifying information repeatedly. This is again where the case summaries were invaluable.

The more in-depth thematic data analysis was particularly helpful in identifying key CFIR constructs that impacted implementation and how these constructs interacted with each other. This led our analysis beyond simply identifying barriers and facilitators but examining the relationship between them. Without this deep level of immersion with the data, we do not believe we would have been able to pinpoint the complex relationships between CFIR constructs to the level that we were.

Discussion

This article used an example from a Hybrid Type 3 implementation study of a behavioral intervention in NICUs to discuss four key processes that facilitated collection, management and analysis of a large qualitative dataset. These key processes allowed us to stay organized, get useable data back to each site to provide real-time adaptations and decision making, and find nuance in an otherwise overwhelming amount of data. Our approach, and these key processes, were consistent with the seminal work of Renata Tesch; specifically viewing the process as systematic and orderly but not rigid, as analysis was concurrent and cyclic, using an organizing scheme to identify and sort text, and using reflectivity throughout the entire process (Tesch, 1990). Many of our processes also mirror other published work on large qualitative datasets. For example, Devonald & Jones (2023), in discussion of their longitudinal study of adolescent development in low- and middle-income countries, noted that their codebook was guided by a conceptual framework, they utilized both initial and in-depth coding, and mentioned having frequent team meetings to discuss coding/transcripts.

Our method of data management, particularly the development of case summaries, was key to our success. This allowed us to efficiently re-examine data to identify and validate the common and distinguishing constructs we identified during analysis. We revisited these case summaries frequently. For example, we reviewed them when rating each construct, throughout analysis, and in development of our findings/manuscripts. We had multiple levels of data to backtrack for verification: from site case summaries, role case summaries, individual case summaries, and then finally original transcripts. There is a dearth of literature on the use of case summaries in qualitative research. This approach is similar to narrative summaries as it can account for complex dynamics and processes between participants and topics (Bayoumi, 2004; Dixon-Woods et al., 2005).

Many of the key processes we discussed here are not novel or unique to large qualitative datasets or implementation science, such as use of theory (Collins & Stockton, 2018) and iterative analysis (Srivastava & Hopwood, 2009). However, they have not been discussed in detail, especially pertaining to large datasets or implementation science. We aimed to identify and share what processes we found particularly helpful and those that would be widely applicable, even outside of implementation science. For example, the use of CFIR to guide coding and analysis was especially helpful in data reduction (Horwood et al., 2022). While the use of a framework is common (Moullin et al., 2020; Nilsen, 2015), there has also been some critique that over-reliance on conceptual frameworks in data analysis may inhibit deep engagement with the data (Sale & Carlin, 2025). However, in our case, knowing how to integrate the CFIR was key in high level analysis (including multiple consultations with the lead developer of the framework).

As discussed, we had more difficulty recruiting NICU nurses for interviews than initially anticipated. In retrospect, we should have 1) focused more on the open-ended responses in the nurse surveys and then asked in the questionnaire if nurses would be interested in a more in-depth interview and 2) found a way to compensate them for their interview time. By recruiting immediately after they provided their initial open-ended questionnaire responses, nurses may have had a better idea of the types of questions that would be asked. They may also have been more motivated to participate immediately following reflection initiated by the questionnaire. Moreover, we were not able to offer any nurses compensation for their participation because some NICUs had policies that nurses could not be compensated for research participation, and for ethical reasons, we wanted to be consistent across all sites.

Finally, artificial intelligence (AI) was not used in this study; however, it is increasingly being utilized in qualitative research to assist with thematic and content analysis. Yet with AI, there also comes ethical concerns and questions about its validity (Christou, 2023; Marshall & Naff, 2024). As AI is likely to become more common in qualitative research, especially due to its recent integration into ATLAS. ti, NVivo, and ChatGPT, researchers should examine how AI could most effectively aid in the handling of large amounts of qualitative data. In particular, using AI as an ‘additional team member’ may aid in an initial round of analysis or data consolidation (Christou, 2023; Marshall & Naff, 2024).

Implications for Future Research

Research teams overall, and especially in implementation science, are becoming increasingly interdisciplinary. While this brings about unique challenges, it also facilitates diverse experiences and perspectives that can aid in research design and analysis (Guerrero et al., 2017). We recommend relying on these diverse teams in regularly discussing methodological challenges and ethical concerns. In addition, in our project, we had two significant external consultations with topic experts that impacted our analysis and interpretation in a much more significant way than initially anticipated. Through our experience, we know some consultations are much more logistical or surface level discussions and do not always occur throughout all phases of a study, especially data management and analysis. However, we were fortunate to have two consultants (Laura Damschroder and Dr. Eileen Lake) who clearly dedicated significant time to preparing for our consultations and thus provided extremely valuable insight. We developed detailed plans and agendas for these consultations as well, including sending materials for review ahead of time. We recommend seeking external consultations when feasible and taking the time to properly prepare and being clear in expectations regarding time commitments (and compensation) of consultants through all phases of the study. Finally, we found the use of case summaries especially useful with a large dataset. It helped create connections between the individual and the whole. It also provided an efficient and accurate way to go back to the data without having to review the coded transcripts.

Qualitative data are commonly used in implementation science and extensive datasets will become more common with large trials across multiple sites. Thoughtful and rigorous approaches are necessary in managing these datasets, including systematic approaches to the qualitative components of implementation studies. The rigor of qualitative approaches is not always detailed and truly appreciated in publications, yet it is equally important to quantitative analysis. Qualitative approaches are vital for understanding how to make interventions work in complex settings, yet continues to face criticism as being unscientific or unreliable (McAleese & Kilty, 2019). Thus, it is important that we as investigators show the rigor of our research. It is also important for investigators to detail our approaches, learn from each other, and openly discuss the many real, but manageable, challenges we face. In summary, we highlighted here four key processes that facilitated our implementation study across six NICUs. We suggest continuing this conversation to ensure that qualitative research in implementation science maintains high levels of rigor and trustworthiness to reach its potential of improving care and services across diverse communities.

Footnotes

Acknowledgements

We would like to thank Laura Damschroder and Dr. Eileen Lake for their invaluable support through numerous consultations throughout our research project.

Ethical Considerations

Our study was approved by Medical College of Wisconsin (approval no. PRO00035600). All participants provided written informed consent prior to enrollment in the study.

Author Contributions

MS, KN, and KK conceptualized this manuscript. MS wrote the original draft preparation. All authors contributed significantly to review and editing of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by National Institute of Child Health and Human Development, National Institutes of Health [1R01HD098095].

Declaration of Conflicting Interests

The authors declared no potential conflicts of interests with respect to the research, authorship, and/or publication of this article.