Abstract

During COVID-19, social distancing regulations hindered traditional in-person child research. To study children’s lived experiences of resilience during COVID-19, a novel, arts-informed online interview protocol was developed and evaluated for utility and feasibility. In total, 27 children (ages 7–10) participated in an eight-stage semi-structured interview: (1) Introductions and assent; (2) Icebreaker activity; (3) Agenda; (4) Resilience video; (5) Child survey; (6) ME/WE activity; (7) Visioning storytelling video; and (8) Wrap up/Thank you. The online mixed-media, multi-sensory protocol proved effective for building rapport, engaging children, and collecting high-quality data. Future research should consider online arts-based approaches to enhance child engagement.

Introduction

The evolution of COVID-19 into a global pandemic prompted governments across the world to implement public health regulations to help prevent the spread of the virus (World Health Organization, 2020). In Ontario, Canada, such regulations included the declaration of a state of emergency that mandated the temporary closure of in-person schools, childcare centres, non-essential businesses, and most other face-to-face settings (Government of Ontario, 2021; Nielsen, 2020). Ontario initially responded to COVID-19 by closing schools from March 14, 2020 to June 30, 2020. The following school year, Ontario adopted a phased, staggered approach, where schools either returned in-person or reverted to emergency remote learning based on COVID-19 data from their public health region. From March 2020 to May 2021, Ontario closed schools for a cumulative 20 weeks – a duration longer than any other Canadian province or territory (Gallagher-Mackay et al., 2021). These regulations were necessary to promote public safety, but had complex, profound, and multi-level consequences, often unintended, throughout society. While all citizens were impacted by these regulations, children were subjected to some of the most severe disruptions to daily life (Van Lancker & Parolin, 2020). The vast majority of the two million school-aged children in Ontario were forced to abruptly transition from the traditional classroom to an online learning environment (Duffin, 2020). This posed significant challenges for young children, as it compromised their engagement with school, familial routines, community connections, emotional well-being, and social relationships – all of which are common resilience factors central to child development (Masten & Motti-Stefanidi, 2020; Van Lancker & Parolin, 2020).
Despite the anticipated magnitude of socio-emotional consequences stemming from the pandemic-related disruptions, children’s voices about their lived experiences of resilience have been underexplored in the context of COVID-19.

Children are believed to be one of the most excluded and marginalized groups relative to participation in decision-making (Cornwall & Fujita, 2012), and this problem is known to be exacerbated during disaster and emergency situations (Martin, 2010). Decision-makers and researchers justify the silencing of children by designating them a vulnerable social group, implying that they are virtually defenseless and in need of assistance and protection in the event of a crisis (Bennouna et al., 2017; Martin, 2010; Rodríguez-Giralt et al., 2017). In reality, children are competent agents in their own lives with valuable insights that can help formulate protective responses, including during times of great difficulty (James & James, 2012; World Vision International, 2021). Indeed, the United Nations Convention on the Rights of the Child states that children have the right to express their perspectives and to be heard regarding any matter affecting them (Lundy et al., 2011). Children have made valuable, well-documented contributions to disaster resilience work in the past and have inherent potential to add value to pandemic-related decision-making as well (Rodríguez-Giralt et al., 2017). For the benefit of children, as well as broader society, it is critical to learn about children’s lived experiences of the COVID-19 pandemic from children themselves (Armitage & Nellums, 2020), as a lack of understanding hinders opportunities to respond to children’s needs and to improve supports in the event of future public health emergencies (Prime et al., 2020; Wade et al., 2020).

To prioritize the ethical, effective, and meaningful inclusion of children in research, child-centred participatory research methods are considered ideal (Barker & Weller, 2003; Grant, 2017; Woods, 2000). Such techniques aim to ground children’s voices at the centre of the research process, empower them to clearly articulate their lived experiences, and trust them as experts of their own world (Baumann, 1997). When conducting research with children, individual interviews that incorporate innovative, task-based research methods are recommended to promote confidence in the reliability and validity of the data (Baumann, 1997). On average, children have a much shorter attention span than adults and experience a steeper decline in their attention over time when engaging with a task (Simon et al., 2023). Thus, techniques such as rapid feedback, progression, and storytelling can help sustain children’s attention by promoting their interest and curiosity in active participation (Topsakal & Topsakal, 2022). Further, in acknowledgement of the diversity in skills and competencies among elementary school-aged children, using a variety of research methods has been recommended to gather comprehensive data (Baumann, 1997). Another particularly effective means of promoting children’s active participation in research is employing arts-informed methods, which combine traditional and innovative artistic research techniques (e.g., drawings, photographs, diaries, in-depth interviews, and surveys; Barker & Weller, 2003; Coad, 2007; Kutrovátz, 2017). Arts-informed methods are widely considered an effective means of promoting children’s agency in the research process by conducting research with them – as an equal partner – rather than on them (Barker & Weller, 2003; Gibson, 2012).

Before the COVID-19 pandemic, arts-informed, child-centred research was almost exclusively conducted in-person. Face-to-face interviews have long been considered the ‘gold standard’ for qualitative research (McCoyd & Kerson, 2006). This perspective stems from the perceived increased availability of non-verbal and contextual data and low risk of data loss or distortion considered exclusive to in-person research (Novick, 2008; Roberts et al., 2021). However, the COVID-19 pandemic and associated public health regulations precluded in-person interviews. Thus, to gather data from children and to ensure compliance with public health measures, it was necessary to meet participants where they were during the pandemic – at home (Soule, 2021). This required an abrupt adaptation of arts-based research methods to suit the digital context (Donison et al., 2023). There was an immediate need for a study design that was child-centred, compatible with the online context, conducive to building rapport, and capable of collecting data of comparable quality to those gathered in an in-person context. Unfortunately, literature at the beginning of the pandemic failed to provide a suitable alternative, as best practices for conducting online arts-informed research about children’s lived experiences had not yet been established. In the absence of an existing protocol, the study aimed to create and evaluate a novel, child-centred, arts-informed online interview protocol that sought to understand children’s experiences of resilience during the COVID-19 pandemic.

Methods

The purpose of the mixed-methods, cross-sectional Surviving or Thriving?: Exploring how Children and Caregivers in Ontario Cultivate Resilience in Response to the COVID-19 Pandemic (SOAR) study was to explore resilience among primary caregivers and elementary school-aged children during the COVID-19 pandemic in Ontario, Canada. Thorne’s interpretive description, an inductive qualitative framework useful for addressing research aims that are driven by practical intent, was employed to develop conceptual understandings of thematic patterns and individual variation alike (Thorne, 2016; Thorne et al., 2004). Ethical approval was granted by the Non-Medical Research Ethics Board at the host institution (NMREB #119509). Data collection began in February 2022, when the Ontario government was allowing children to attend school either in-person or remotely (following a period of emergency remote learning from January 3rd to 19th, 2022; Government of Ontario, 2022). Data collection for the SOAR study concluded in October 2022.

Recruitment

Participants were recruited using purposive and snowball sampling techniques; recruitment ads were posted on social media (e.g., Facebook, Twitter) and online community platforms (e.g., Kijiji), and participants were invited to circulate the study advertisements within their social networks. Advertisements invited primary caregivers to express their and their child(ren)’s interest by emailing the secure study inbox, upon which they were asked to confirm their eligibility, provided with the letter of information and survey link, and invited to schedule their interviews. Each participant (caregiver and child(ren), respectively) was asked to book a separate interview (i.e., one time for caregivers, and a distinct time for each eligible child). Caregivers were asked to book their interview prior to their children’s to allow the interviewer to learn about the context of their children’s experience of COVID-19 prior to child interviews. After completing the survey and interviews, each dyad member (primary caregiver and child, respectively) was provided with a $40 Amazon e-gift-card in recognition of their time and contributions.

Eligibility and Sampling

A total of 27 primary caregiver-child dyads were recruited, where a primary caregiver was understood as an individual who identified as being primarily responsible for caring for a child. This sample size was considered sufficient based on previous interpretive description research (Scheibelhofer, 2023). To be eligible to participate, dyads were required to: (a) include a primary caregiver of at least one child aged 7–10 years; (b) reside in Ontario, Canada; (c) have access to an audio-based conferencing platform and provide assent to be audio-recorded; and (d) be able to communicate in English. There were no exclusion criteria for the study. Primary caregivers of more than one child aged 7–10 were eligible to enroll in multiple dyads; for example, a caregiver of two eligible children would compose two distinct dyads (one per child). The current paper pertains exclusively to the child-related methods and data in the SOAR study. Additional details about caregiver methods and overall results are available in Orchard et al. (2025).

Data Collection

The research team developed a novel one-on-one, semi-structured, mixed-media (including arts-informed), multi-sensory child interview guide, designed for approximately 30 minutes of conversation between the child and a research team member in an online setting. Interviews were audio-recorded using the Zoom web-conferencing platform, transcribed verbatim, and de-identified by the research team prior to analysis.

The child interview guide included eight stages: (1) Introductions and assent; (2) Icebreaker activity; (3) Agenda; (4) Resilience video; (5) Child survey; (6) ME/WE activity; (7) Visioning storytelling video; and (8) Wrap up and thank you. All stages of the interview process were qualitative in nature except for the survey. The guide was rooted in work conducted by Molina and colleagues (2009) regarding child-friendly, participatory research tools; namely, that successful research methods are focused on having fun, are iterative and based on the child’s ideas/preferences, involve mixed sensory experiences (i.e., oral, visual, and written activities), and are beneficial for the child. These principles were adapted to the online environment. The overall interview was designed to meet children where they were (Soule, 2021) – that is, in a space in their home with basic remote learning materials (i.e., a drawing utensil and medium and an internet-enabled device), using verbal and written communication at an age-appropriate level of literacy. Further, in recognition of the potential stress involved with discussing experiences of COVID-19, interviews were designed to cultivate a supportive and comfortable atmosphere.

A presentation was also created using Canva design software and displayed to participants throughout the interview to assist children in describing their experiences (Lindsay & Schwind, 2016; Schwind et al., 2014). The visual materials are presented in Appendix A. Communication included verbal, visual, and written forms to improve children’s comprehension of their participation in the research (Baumann, 1997; Molina et al., 2009). In participatory research with children, it is critical that researchers document “self-reflexive accounts of practice evaluating what works and what does not” (Cahill, 2007, p. 299). Accordingly, interviewers made detailed memos throughout each interview, inclusive of a post-interview summary fieldnote, to promote their immersion in the data (Birks et al., 2008).

Before beginning the activities, the interviewer employed honesty demands, a technique used to diminish social desirability bias, by stating the following: “There are no right or wrong answers, we are only looking for the answers that are true for you” (Bates, 1992). During interview activities, interviewers also used follow-up questions to limit social desirability responses (e.g., asking the child to tell them an example or story about their response; Bergen & Labonté, 2019). To help determine acceptability of the protocol, after each activity-based component (i.e., all but the introduction, agenda, and wrap-up), children were asked how they liked the activity, whether they wished any part of it were different, and if they would like to move on to the next activity. This approach was adopted to help promote children’s agency in participation, support flexible participation, and continuously adapt the research conditions to their needs, with the overall intention of helping to mitigate power imbalances in the interviewer-child relationship (Baumann, 1997; Kutrovátz, 2017). Revisiting the child’s choice to participate was also useful in mitigating perceived influence from caregivers or the interviewer (Baumann, 1997).

Introductions and Informed Assent (2–5 Minutes)

It has been recommended that researchers begin any online interview by explaining the project and data collection process to the participant, as this is considered an effective means of developing trust and rapport (Ali et al., 2020; Mani & Barooah, 2020). Accordingly, to begin building trust, the interview began with the interviewer greeting the child, inclusive of a welcome graphic on-screen, and introducing themselves, the purpose of the study, and the purpose of the child’s participation. The interviewer then described each section of the interview and expressed that the child could take a break, ask a question, or stop the interview at any time. Next, the interviewer presented the Letter of Information to the child by verbally describing its contents using age-appropriate language and on-screen visualizations. Providing children with comprehensive information to request their informed assent (alongside their primary caregiver’s informed consent) is important to mitigating the unequal power relationship between interviewer and child (Baumann, 1997). Before asking for assent, children were asked if they had any questions about the study. Then, the interviewer asked the child whether they would like to participate in the study, explicitly expressing that it was okay to say no. Only one child declined to participate during the assent process (they are therefore not represented in this sample). The interviewer ended the interview accordingly and the recording was immediately destroyed. It is important to support children’s freedom of choice in research participation and adapt to their needs to dissolve power inequalities (Baumann, 1997). To conclude the introduction, children were asked what motivated them to participate in the interview.

Icebreaker Activity (3 Minutes)

The icebreaker activity was purposefully designed as an easy way for children to engage while building rapport between the child and interviewer at the start of the interview process. Arts-based icebreakers (i.e., activities aimed at building rapport when first meeting another person) have been employed for decades, including with children, to help them feel more comfortable in the research process (Morrow, 1998). In child research specifically, icebreakers have been found to improve energy, confidence, and a sense of safety in participatory research settings (Molina et al., 2009; Soule, 2021). An activity, like drawing, also helps to form a sense of collaborative effort with children, with an aim towards extinguishing the unequal power relationship between interviewer and child (Baumann, 1997).

In the SOAR study, the icebreaker activity consisted of a drawing activity for the child participants. This activity was explained to the child, and they were asked if they would like to participate in a drawing game with the interviewer. Children were asked to gather drawing supplies in case they had not prepared them before the interview; caregivers were also advised prior to the interview that drawing supplies of any kind would be helpful for the child to have ready for the interview. A photo with various cartoon animals was displayed on-screen and the child and the interviewer each picked an animal. Then, both the child and interviewer drew what a combination of the two animals might look like while a 2-minute timer was displayed on-screen; this was inspired by Soule’s (2021) warm-up activity. Once time ran out, the child was asked if they would like to name their ‘hybrid’ animal and whether they would like to show their work (via Zoom video). The interviewer also named their animal and asked if the child would like to see their drawing. Interviewers took a moment to appreciate each child’s drawing and offer specific, genuine praise about their creation. Children were encouraged to keep their drawing close to them for the remainder of the interview, as the visioning and ME/WE activities would include questions involving their animal (e.g., “If [animal name] was with you at school during COVID-19, who would they see hanging out with you?”). To conclude, children were asked about whether they enjoyed the drawing activity and what made them like or dislike this interview component (Figure 1).

Figure 1. Icebreaker activity screen

Agenda (2 Minutes)

To continue building trust and rapport, the interviewer then reiterated the data collection process for the participant by outlining the remaining activities (Ali et al., 2020; Mani & Barooah, 2020). In keeping with Baumann’s (1997) and Molina et al.’s (2009) recommendations, children were reminded that they could end any activity at any time by notifying the interviewer they wanted to stop.

Resilience Video (3 Minutes)

The resilience video was a cartoon created by the research team and an elementary school educator and was intended to equip children with a conceptual understanding of the construct before being asked about their personal experiences of resilience. Prior to the video, children were asked if they had heard of the word resilience. Children who answered no were asked if they would like to watch the video together, and after viewing the video, were asked about what they learned about resilience. Children with prior knowledge of resilience were first asked to describe resilience in their own words before being asked if they would like to watch the video, and after viewing the video, were asked to compare their perspective of resilience to what they learned (if anything). The video was approximately 2 minutes in length, was animated using Powtoon software, and included on-screen visuals, music, and voice-over narration. The content was inspired by an introductory resilience video created by the UK organization Health for Kids (2019), designed to define resilience in an age-appropriate, child-friendly manner. Accordingly, resilience was exemplified using three superheroes named Concentration (to stay focused), Determination (to keep going), and Learning (to keep improving). The video introduced the superheroes individually, and then brought them together to reiterate the definition of resilience at the end of the video. Finally, children were asked if they enjoyed watching and discussing the video together and their reasoning behind their perspective (Figure 2).

Figure 2. Screenshot from resilience video

Child Survey (5 Minutes)

Questionnaire surveys are not typically considered child-friendly, despite children being fully capable of participating in this method of data collection (Barker & Weller, 2003). Surveys are effective in providing valuable insights into children’s lives and helping to situate their experiences within the wider socio-politico-economic context (Barker & Weller, 2003). In recognition of the age range of children included in this study (i.e., ages 7–10) and varying levels of literacy among elementary school-aged children, this online survey was embedded into the interview, such that each question was asked verbally by the interviewer and accompanied by on-screen visuals with images and text. Before beginning, the interviewer described the activity, explained what a questionnaire is and how to participate, and assured the child that there were no right or wrong answers. The survey included demographic questions about the child’s age, gender, and school grade, as well as the True Resilience Scale for Children (RS10; Wagnild, 2009). The RS10 includes ten “I” statements (e.g., “I finish what I begin”), and respondents must select from the following four responses: “Not at all like me”, “Not much like me”, “Somewhat like me”, and “A lot like me”. A visual was displayed with a pictorial Likert scale to assist children in structuring their response (Lindsay & Dockrell, 2000). The RS10 has demonstrated good internal consistency (α = 0.82) and reliability among samples of children aged 7–11 years (Taber, 2018; The Resilience Centre, 2021). Total scores range from 10 to 40, where higher scores are indicative of higher levels of child resilience. Once completed, children were asked whether they enjoyed completing the survey and what factors informed their perspective (Figure 3).

Figure 3. Pictorial Likert scale
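The RS10 scoring described above can be sketched in a few lines of Python. This is a minimal illustration only: it assumes the four response options are scored from 1 (“Not at all like me”) to 4 (“A lot like me”), which is consistent with the stated total range of 10 to 40 but is not spelled out in the text. The function name and the example responses are hypothetical and are not study data.

```python
# Response options from the RS10, mapped to item scores.
# Assumption: options are scored 1-4, consistent with the stated 10-40 total range.
RESPONSE_SCORES = {
    "Not at all like me": 1,
    "Not much like me": 2,
    "Somewhat like me": 3,
    "A lot like me": 4,
}

N_ITEMS = 10  # the RS10 has ten "I" statements


def score_rs10(responses):
    """Return the total score for one child's ten responses (hypothetical helper)."""
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses, got {len(responses)}")
    return sum(RESPONSE_SCORES[r] for r in responses)


# Illustrative responses only; not study data.
example = ["A lot like me"] * 6 + ["Somewhat like me"] * 3 + ["Not much like me"]
print(score_rs10(example))  # 6*4 + 3*3 + 1*2 = 35
```

Under this assumed mapping, a child who chose “Not at all like me” for every item would score 10 and a child who chose “A lot like me” for every item would score 40, matching the range given above.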

Visioning Storytelling Video (5 Minutes)

The visioning storytelling video was designed by the research team and an elementary school teacher, using Powtoon, to explore how children self-identified with Masten and Barnes’ (2018) resilience factors for child development. Before beginning, children were asked if they wished to watch the video. The video began by introducing a young Black girl named Lucy who displayed traits associated with resilience (e.g., perseverance). In the video, Lucy was shown wanting to play with her parents but feeling unsure whether to ask them because they seemed busy. The video concluded with Lucy pondering the problem and wondering what she should do next. Children were then asked to reflect on what they might do if they were in Lucy’s situation, what decision their animal would see them make, and what decision they thought Lucy would make next. Probing questions were used to explore children’s perspectives of Masten and Barnes’ (2018) resilience factors for child situational development, particularly close relationships, emotional security, and belonging (given direct linkages with social isolation due to COVID-19 regulations). To conclude this activity, children were asked whether they liked watching the video and discussing their thoughts about it, inclusive of probing about specific elements they liked or disliked (Figure 4).

Figure 4. Screenshot from visioning storytelling video

ME/WE Activity (5–10 Minutes)

Relational mapping is a well-established, arts-informed participatory research tool used to explore interpersonal relationships, as well as their importance and social dynamics (Bagnoli, 2009; Coad, 2007). Mapping is considered to have wide applicability with young children (Clark & Moss, 2011) and is purported to facilitate an empowering, nuanced process of self-reflection that is largely unachievable via text-based methods (Literat, 2012). Although traditionally implemented in an in-person setting, maps, with their visual, structured organization of information, have effectively provided insights into children’s social networks and perceptions, including details that may be emotionally challenging to articulate (Bagnoli, 2009; Molina et al., 2009; Thomas & O’Kane, 1998). This method is useful in empowering children to challenge adult assumptions by exploring how children use and perceive spaces in their lives (Young & Barrett, 2001). For the purpose of the present study, the ME/WE map activity was adapted to an online environment to provide insights into how children perceived their social networks (i.e., ME) and their experiences within them (i.e., WE) during the COVID-19 pandemic.

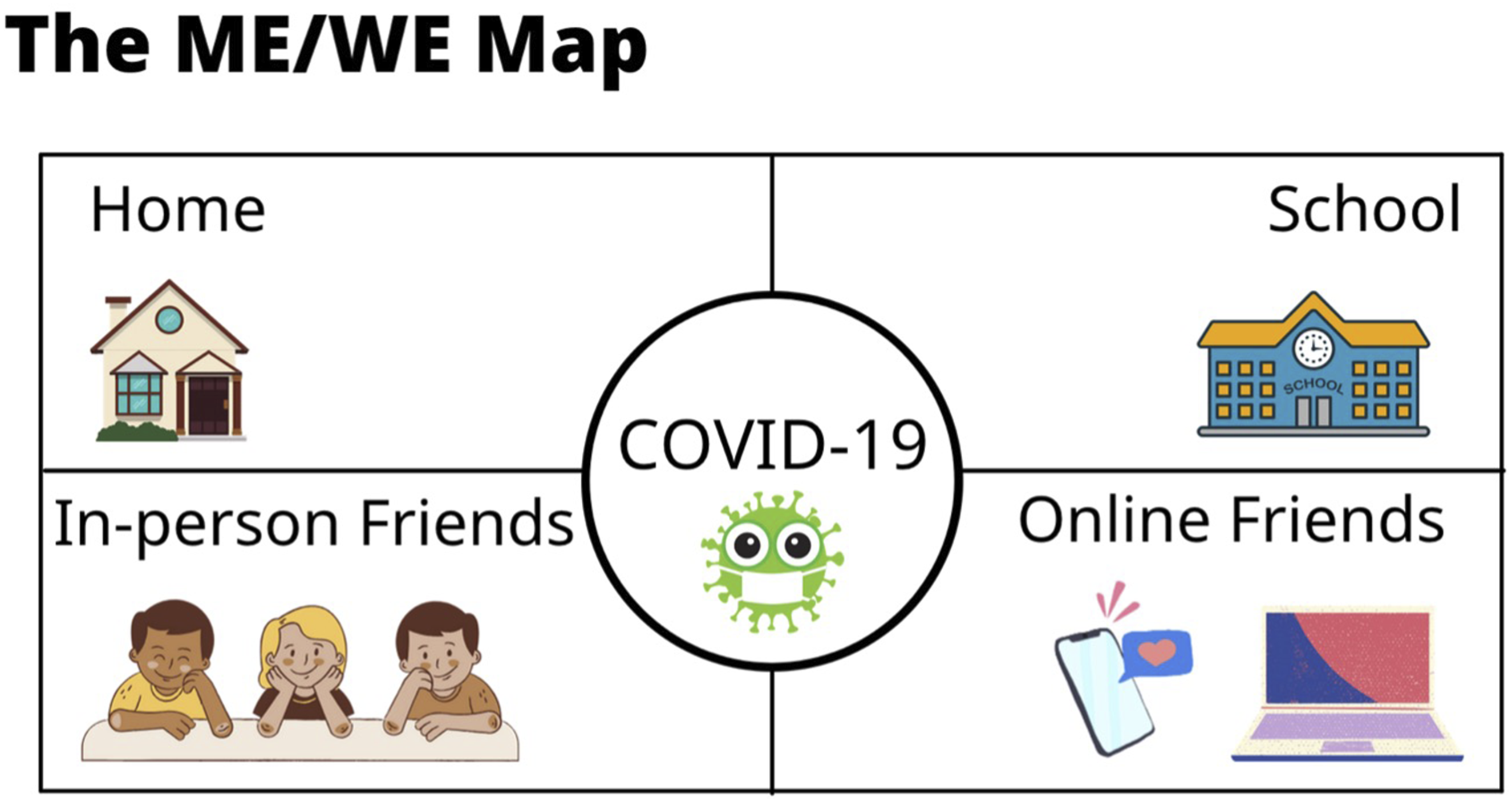

The ME/WE Activity was introduced to the child using a visual map (presented on-screen; Figure 5) that included four quadrants: home, school, in-person friends, and online friends, with COVID-19 set in the middle to contextualize these settings/persons.

Figure 5. ME/WE template

The map was described verbally by the interviewer prior to asking the child questions (see Appendix B for examples) informed by narrative inquiry (i.e., questions that help to interpret and experience the world of the participant rather than trying to explain or predict that world; Wang & Geale, 2015). To begin, the child was asked to reflect on the people in their lives for each quadrant (i.e., the mapping of their social network). In addition, a mood board visual was displayed on-screen to depict examples of what might be helpful (e.g., “listens to me”) or unhelpful (e.g., “ignores me”) to support children’s responses. Children were then asked to describe their interactions and sentiments surrounding the people in each quadrant during the COVID-19 pandemic (i.e., exploring how the “ME” perceives the “WE” in their social networks). Interviewers mapped the child’s responses on the ME/WE graphic and the child was offered a copy of the map at the end of the activity. Finally, children were asked whether they liked this activity and what aspects specifically informed their opinion.

Wrap Up and Thank You (2 Minutes)

The provision of feedback and validation is important to children when concluding an arts-informed research process, as this helps to show that their work is highly valued and will be used for an important cause (Coad, 2007). Accordingly, children were thanked and praised for their efforts, and asked whether they would like to share anything they had not had a chance to. Then, the interviewer explained how their conversation would be used for research, including publication in a paper. Finally, the interviewer thanked the child once again, and confirmed that their honorarium would be sent to them via their primary caregiver.

Data Analysis

Data collection and analysis for this qualitative, cross-sectional, arts-informed method were guided by Thorne’s (2016) interpretive description. Interview audio recordings were transcribed in their entirety by research assistants affiliated with the research team. Few participants consented to video recording; therefore, video recordings were not analysed. However, interviewer memos were reviewed to inform visual findings (e.g., body language, background elements).

Following the completion of interviews, the research team immersed themselves in the data through repeated readings of the transcripts, and subsequently developed a preliminary coding structure. To test the coding structure, a subset of transcripts was randomly selected and assigned to a coding dyad (i.e., two members of the research team), who then independently applied open and axial coding (Williams & Moser, 2019). Next, each coding dyad met to discuss their preliminary coding structure, followed by a meeting with the broader research team to discuss applicability and fit, including refining the structure as needed. This analysis process was repeated until the research team believed the coding structure accurately and comprehensively covered the dataset. The final coding structure was then applied to each interview transcript, with two researchers coding each transcript using Quirkos (an online software for qualitative analysis). After all transcripts were analysed, analysis files were merged and reports were run on codes related to the interview protocol (as was determined by group consensus on codes reflecting the protocol’s structure and children’s experiences of each activity). The codes used to generate reports included Child’s perception of activity, Questions, and Inconsistencies (each of which was coded distinctly for each protocol element), as well as the global codes Reasons for participation and Parental intervention. The reports were then examined using Levitt et al.’s (2017) recommendations from the Task Force on Resources for the Publication of Qualitative Research of the Society for Qualitative Inquiry in Psychology, a section of Division 5 of the American Psychological Association. Accordingly, the utility (i.e., functionality of the protocol during implementation) and fidelity (i.e., gaining access to authentic lived experiences) were evaluated in the context of identified themes within reports (Levitt et al., 2017).
Child demographic data were extracted from the survey completed during interviews, and measures of central tendency, dispersion, and frequency were computed. Interviewer memos/fieldnotes were also consulted to consider unique influences of the at-home research setting (e.g., caregiver presence) during transcript analysis and interpretation (Baumann, 1997).

Results

In total, 27 children (mean age = 8.19 years; SD = 1.14) participated in this study. Thirteen children self-identified as female (48.15%), 12 as male (44.44%), and two as non-binary (7.41%). The majority of children were in Grades 2 or 3 (n = 17, 62.96%); however, grade level ranged from Grade 1 to Grade 6. Children’s RS10 scores ranged from 22.00 to 39.00 (M = 33.04, SD = 3.96).
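The frequency percentages above follow directly from the reported counts and the sample size of 27. The short Python sketch below reproduces that arithmetic as a check; it uses only the counts stated in the text, and the variable and function names are illustrative rather than taken from the study’s analysis files.

```python
# Counts as reported in the Results; the sample size is 27 children.
N = 27
gender_counts = {"female": 13, "male": 12, "non-binary": 2}
grade_2_or_3 = 17


def percent(count, total):
    """Percentage of the total, rounded to two decimals."""
    return round(100 * count / total, 2)


# The three gender categories should account for the full sample.
assert sum(gender_counts.values()) == N

gender_pct = {label: percent(n, N) for label, n in gender_counts.items()}
print(gender_pct)
print(percent(grade_2_or_3, N))
```

Rounding to two decimals gives 48.15%, 44.44%, and 7.41% for the gender categories and 62.96% for Grades 2 or 3; truncating instead of rounding would give 48.14% and 7.40% for the first and last gender categories.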

Qualitative findings are presented below inclusive of illustrative quotes selected to highlight key insights and provide concrete examples of the protocol in-action.

Interview Guide Utility

The authors operationalized utility as the functionality of the protocol during implementation. This was explored by considering the volume of activities, the design of the interview, capacity to build rapport, and accessibility of participation.

Volume of Activities

The protocol included eight sequential stages with time allotments for each stage, ranging from 2 to 10 minutes. Although each interview included a large number of activities, this was intentional, both to hold the attention of the child participants (Topsakal & Topsakal, 2022) and to offer variability in data collection methods, such that if one activity did not resonate with a child, the desired data could be collected using a different activity. However, during implementation, interviewers did feel pressed for time due to the high volume of activities to be completed within a 30-minute interview. Timing was clearly identified for each protocol element prior to the interviews; however, flexibility in timing was not adequately considered during construction. As a result, some interviewers struggled to balance understanding children’s ideas with maintaining the planned pace of activity progression. Further, probing is a vital component of the semi-structured interview format (Bryman, 2016). In the present study, the interviewers often had to spend more time on activities than originally allotted to fully explore a child’s train of thought. Overall, interviewers were able to manage time constraints, as they successfully completed all interview components with all children.
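The time pressure described above can be illustrated by tallying the planned allotments. The sketch below is a rough check rather than part of the study’s analysis: the minute values are taken from the stage headings in the Data Collection section, the 30-minute target comes from the interview guide description, and the variable names are illustrative.

```python
# Planned time allotments in minutes as (minimum, maximum), from the stage headings.
STAGE_MINUTES = {
    "Introductions and informed assent": (2, 5),
    "Icebreaker activity": (3, 3),
    "Agenda": (2, 2),
    "Resilience video": (3, 3),
    "Child survey": (5, 5),
    "ME/WE activity": (5, 10),
    "Visioning storytelling video": (5, 5),
    "Wrap up and thank you": (2, 2),
}

TARGET_MINUTES = 30  # intended interview length

shortest = sum(low for low, _ in STAGE_MINUTES.values())
longest = sum(high for _, high in STAGE_MINUTES.values())

print(f"Planned total: {shortest}-{longest} minutes")
print(f"Slack at minimum pace: {TARGET_MINUTES - shortest} minutes")
print(f"Overrun at maximum pace: {longest - TARGET_MINUTES} minutes")
```

On these figures the planned stages sum to between 27 and 35 minutes, leaving at most 3 minutes of slack within a 30-minute interview and none once the longer allotments are used, before any additional probing is taken into account.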

Mixed Media and Multi-Sensory Design

This protocol benefitted from the inclusion of presentation visuals, including image- and video-based aids. Video-based activities were strategically implemented between text- and image-based activities to provide sensory variety to children. Interviewers noted that children were highly engaged in each video, as evidenced by their lack of interruption during the video and interest in discussing the video after watching. For example, one participant paid such close attention that they recognized their interviewer’s voice: Child: And was it your voice in the video? Researcher: Yes, it was [laughs]. Does it sound like me? Child: Uh-huh... Researcher: Mm hmm. Yeah, it was me. That's good ears. Yes, I did I did voice over that one. (Child 16, 9 years old)

The videos were designed to optimize child interest by being short in length (∼1–2 minutes) with vibrant animated characters and upbeat, well-paced narration. However, an unanticipated finding was that the appearance of animated video characters influenced how participants were able to relate to the video. For example, the visioning activity included a scenario featuring a young girl, which made it difficult for some participants of diverse genders to put themselves in the female character’s situation. This prevented some children from using abstraction to reflect on activities, as exemplified by one child’s experience with the visioning video: Researcher: Yeah. Sounds good, so to me it sounds like you and Lucy would be very similar, do you think that’s true? Child: Uhm, kinda. Researcher: Kind of, OK, so kind of suggests to me that you think you might also be different, so can you tell me more about how you and Lucy might be different? Child: That I’m like a boy and she’s a girl... Researcher: OK, that’s true. What about your actions? Your resilience in your growth mindset? Do you think? Or can you tell me how those might be different between you two and your actions? Child: Not really a lot. (Child 2, 9 years old)

Non-video, ‘still’ presentation visuals also proved effective in promoting participant engagement during the interview. In particular, the mood board presented during the ME/WE activity was a positive inclusion that aided children’s participation. This was particularly important because the ME/WE activity was the final data collection tool in the protocol, and some children were beginning to show signs of fatigue (e.g., shortened responses, visible disengagement) at this stage of the interview. For example, one participant described enjoying the ME/WE map because of the mood board as a visual aid: Researcher: OK, that’s OK. That means we did a good job then, we were thorough. Um, how did you like this activity? Child: It was cool. I like the little mood board. I got to read all the little expressions. (Child 9, 10 years old)

The most time-efficient single element of the protocol was the child questionnaire; this also happened to be the only quantitative element of the interview. When questions were posed in a closed format, most children were able to reflect and verbalize answers quickly, as well as provide valuable information pertaining to the scope of the research question. Two children expressed difficulty in having to choose a single response, while three children requested clarification about some of the terminology employed (e.g., “statement,” “not a lot like me”). Five children took the initiative to direct their participation in the activity. For example, one child had the idea to reply to the resilience scale questions by simply stating the number corresponding with their response, instead of saying their response in full: Researcher: So, I have the first question for you. It is I finish what I begin. Is that not at all like you, not much like you, somewhat like you, or a lot like you? Child: Can I just say one, two, three or four? Researcher: Yeah, if that's easier for you! (Child 3, 9 years old)

Closed-ended survey questions were unexpectedly efficient in providing detailed insights into children’s lived experiences of resilience, as five children volunteered additional information and context during the survey to justify their responses. For example, when presented with the survey question, “When I get upset, I know how to calm down,” one child indicated that this was a lot like them, explaining “Because when I get upset there, I have dolls in my bed, so I just go grab one” (Child 21, 7 years old).

Early Rapport Building

The icebreaker activity was found to be an effective means of initiating children’s interest in the interview given the fun nature of the activity, and because it served to promote comfort with sharing ideas. The interviewers felt that offering the child a choice in the direction of the activity (i.e., selecting an animal to draw) was particularly useful in encouraging their buy-in before moving on to more serious topics. While only 2 minutes were allotted to this activity, many children enjoyed the activity so much that they wanted to spend more time with it.

Participants were also asked to name their animal creations in the icebreaker activity, which appeared to further enhance their interest. This was noted early in the data collection phase, when a child spontaneously shared an idea during the icebreaker about how their animal could be incorporated into their life, saying, “I think like what will what Doel do?” (Child 2, 9 years old). Interviewers then began integrating the drawing into subsequent interview activities to help promote engagement. For example, in the visioning activity, children were asked what their animal might say if they saw the child in the situation described by the video. This appeared to support the ability of some children to reflect on the concept of resilience later in the interview. For example, one child, who named their Tiger-Elephant drawing Tiphant, drew upon their animal later in the visioning activity when discussing resilience: Researcher: Lucy would wait, you would wait, and Tiphant would agree... Child: Yeah, I hope he does. Researcher: [laughs]. Child: Because he is a tiger. He can handle stress. (Child 7, 7 years old)

Interviewer fieldnotes consistently documented the high level of engagement initiated by the drawing game and how this appeared to enhance rapport throughout the broader interview as a result. This also improved the ability of the interviewer to navigate the imbalanced power relationship between child and researcher and seemed to help mitigate bias in responses.

Accessible Materials and Modes of Delivery

Another practical element of the protocol was that it was entirely online, making it easily accessible to participants. This was key in promoting the participation of children with immunocompromised family members (i.e., due to long-COVID-19 and other conditions; see Orchard et al., 2025 for a detailed description). Further, participation required only a few materials (i.e., a drawing utensil and medium, device, and Internet connection) that most children during COVID-19 had access to; the pandemic resulted in virtually all school-aged children in Ontario being provided with an internet-enabled device to facilitate remote schooling, as well as Wi-Fi access. All children in this study had access to the art supplies and technology required to fully participate in the interview.

Across all 27 interviews, there were no technical difficulties that prevented children’s participation. However, five interviews included minor technical issues, such as the child needing to change devices, audio ‘cutting out’, and video buffering. Overall, conducting interviews using the Zoom web-conferencing platform was highly practical, as most children were already familiar and comfortable with its use from their experiences in virtual school. For example, one child already knew how to navigate a recorded Zoom meeting, as demonstrated by the following interaction: Researcher: So, you’re going to see that pop up on your screen, do you see that it’s recording? Child: Yeah, so do I say got it? It says it says this meeting is being recorded by the host or the participant, got it or leave meaning. And I think I’m gonna press got it. (Child 1, 7 years old)

Children were audio-recorded to record assent; however, it was optional for children to turn on their camera. Camera use varied across participants: the majority of children (n = 19) used their camera throughout the interview, and six children did not use their camera at all. Two children chose to selectively use their camera during the icebreaker activity to show their drawing to the interviewer before turning their video off again. As such, the amount of non-verbal feedback available to interviewers varied between interviews.

When children did not use video, interviewers were unable to read non-verbal communication cues, as is exemplified in the following fieldnote: “No video for the whole interview – found it hard to gauge interest or if the mother was there or not” (Child 23, 8 years old). Interviewers noted that non-verbal cues provided a convenient indicator of child engagement and comfort, especially in cases where child participants had more difficulty articulating their perspectives. It was evident to the researchers when children using video became disengaged with an activity, as they would look away from the screen and appear antsy, while children who were highly engaged in the interview would smile, make eye contact, and nod their heads. For example, one participant was clearly uncomfortable and shy, which was evidenced by them sitting on their caregiver’s lap and cuddling them throughout the interview; this was noted in the researcher’s fieldnotes: “Shy child - very dependent on his mother who was in the room the whole time giving the child answers” (Child 14, 9 years old). Similarly, in another interview, the researcher identified that the child was very nervous despite a lack of verbal input, as they continued looking towards their caregiver for assistance replying to questions. The summary fieldnote stated, “Very shy child - dad was in room with him the entire time. […] Didn’t have a lot to say for many of the questions and asked dad/looked to dad for help with responses” (Child 15, 7 years old).

As a whole, the authors found that the protocol functioned effectively to facilitate children’s participation in an online arts-based interview. Utility was strongly informed by how interviewers negotiated efficiency throughout each interview, rather than being an inherent characteristic of the study design. On one hand, prioritizing timeliness risked data richness and the interviewer-respondent relationship but preserved space for each protocol activity (and therefore, promoted data breadth); on the other hand, deprioritizing timeliness created an opportunity to encourage child engagement, build rapport, and more deeply explore children’s lived experiences. Ultimately, interviewers were able to ensure the protocol’s utility by carefully balancing the competing priorities of timeliness, data quality, and interviewer-participant rapport.

Interview Guide Fidelity

The authors operationalized fidelity as the ability of the protocol to generate meaningful insights into children’s authentic lived experiences. Accordingly, the age-appropriateness of the design, quality of child participation, and degree of caregiver engagement were evaluated.

Age-Appropriate Design

The protocol was designed to engage children aged 7–10 years; however, in the present study, children within this age range appeared to vary significantly in their engagement, comprehension, and responses. While the interview protocol was designed to last approximately 30 minutes, the attention span of children and their ability to focus was often shorter in duration, especially among younger children. Commonly, as the interview progressed, children would begin to give shorter and one-word answers, and some appeared distracted and bored as the duration increased. This was exemplified in interviewer fieldnotes, for example: “Child seemed pretty outgoing/into the interview. [...]. Once we got to the ME/WE interview it was like pulling teeth trying to get anything out of him – definitely seemed to get bored by the end.” (Fieldnote excerpt from Child 22, 8 years old).

The language used within the letter of information and assent procedures was found to be beyond the level of literacy of most child participants. During the development of child-facing study materials, the research team aimed to meet an appropriate literacy level for school-aged children. Unfortunately, some of the ethics requirements in the informed assent process required the inclusion of language that exceeded children’s level of literacy, resulting in confusion during interviews. One such example was the unidentified quotes question in the assent procedure, which was a common source of confusion for younger children, as exemplified in the following interaction: Researcher: Umm, and do you agree to me using unidentified quotes in the dissemination of our research. So that’s just, I would be quoting what you say but I wouldn’t say it came from you, I would just, I would call you something else. [Pause 2:53–3:02] Child: Mmm. I don’t know. Researcher: You don’t know? Okay. Umm, do you understand the question? Child: No. (Child 25, 8 years old)

Children who were confused by terminology benefitted from additional clarification by the interviewer. Interviewers explained complex phrasing using language that was more age-appropriate and provided examples of how the questions manifested in ‘real-life’. For example, the same child asked for two different explanations of the quote dissemination question before indicating they understood: Researcher: No? Okay, so, basically after we finish this interview, my team and I are going to analyse the data and then we’re going to write a paper about it. And in that paper, we might quote something that you said, but we won’t say that it came from you, we will say that it came from participant one for example. Does that make sense? Child: A little bit. Researcher: A little bit? Okay, is that alright with you or do you want me to explain it in a different way? Child: Mmm. Maybe explain it in a different way. Researcher: Okay. So, you know how you just said maybe explain it in a different way? I would quote that, so I’d put it in quotation marks and put it in a paper that we’re writing about this study, and I would say, maybe explain it in a different way, then I’d put a little dash, and I would say like, child one, said this. So, you would be referred to as child one but it wouldn’t actually have your name anywhere. Does that make sense? Child: Yes. (Child 25, 8 years old)

In another example, a child’s caregiver identified that their child was confused by the sheer volume of information presented to them immediately after the letter of information was read to them: Researcher: OK, so you're going to see the screen change for you because we're going to read something that's called a letter of information. OK, did that screen change for you as well? Child: Yeah, it says letter of information. Researcher: There you go. [reads entire letter of information]. Do you have any questions? Child’s Caregiver: Do you have any questions? She has no idea what you’re talking about. (Child 1, 7 years old)

Later, the same child was further confused by an ethical requirement of the child interview letter of information, where the interviewer identified the study’s primary investigator as a contact for any questions post-interview. The child was uncertain about the addition of another person in the interview context, inquiring:

Child: So um, I have another, what’s what’s, who’s Dr. [primary investigator] … Researcher: Dr. [primary investigator]! She is my boss. She's in charge of this study, so I report back to her. But if you have any questions that I can't answer, I will direct you and you can talk to Dr. [primary investigator]. Does that make sense? Child: Mhm. (Child 1, 7 years old)

While the interviewer clarified the role of the primary investigator and the child verbally indicated they understood the explanation, it did not seem practical to expect a child to initiate emailing the primary investigator, an adult stranger, should they have questions about the study.

The drawing activity was received positively by all participants; however, younger participants (i.e., those aged 7–8) tended to respond more strongly in terms of enjoyment. Older children (i.e., those aged 9–10) did not typically display the same level of enthusiasm in naming their animal and discussing it in subsequent interview activities. Younger children were also generally more interested in having their ME/WE map shared with them after the interview (although only two of the 27 children interviewed requested a copy).

It was also discovered that participants closer to 10 years of age were generally more capable of abstract thinking and sustained attention throughout the interview than their younger counterparts. This was evident during the ME/WE map activity where some of the younger participants struggled with understanding the mechanics and vocabulary used in the activity. For example, one child stated that they “forgot what social media was” (Child 1, 7 years old) when asked about online friends. Interviewers made note of potential age-related differences in fieldnotes; for example, one fieldnote described how a participant “Was able to differentiate herself from Lucy in the visioning activity – might have been due to age (9 years old).” (Fieldnote excerpt from Child 16, 9 years old).

Aligning Children’s Engagement with Research Objectives

Children were often most verbose when recounting niche experiences (i.e., storytelling), which complicated the interviewer’s ability to keep the conversation aligned with research objectives. Participant enthusiasm and engagement seemed to peak during less data-rich elements of the protocol (e.g., the icebreaker drawing activity, indirectly useful as a support in later activities) and waned during more data-driven elements (e.g., the ME/WE map, where all questions directly pertained to research objectives). Across virtually all interviews, the icebreaker activity initiated the liveliest responses from children; for example, one child felt inspired to brainstorm how to incorporate their drawing into their life: Researcher: You're also, you can keep Doel [name of the child’s dog-elephant animal drawing] as long as you like. He stays with you and... Child: Can I like, can I like cut him out? So, then it's not like a paper... Researcher: Of course, you can do whatever you'd like. That's all yours OK. OK... Child: And then like on the like key chain I made; I can write his name on that. Then when I cut out the Doel I can just put his name on that key dog key chain... Researcher: That's a great idea. Yeah, I think you should totally do that... Child: I could, I would keep, keep it in my room mostly. Researcher: Mhm… Child: … and like uh, I can put it like when I'm doing something I can just like leave it on the side. I think like what will what Doel do? (Child 2, 9 years old)

It was also identified that children appeared highly engaged with sharing personal stories with the interviewer. Across interviews, 11 participants initiated verbose storytelling about their lived experiences that did not directly pertain to the study purposes. In such circumstances, it was important for interviewers to genuinely and enthusiastically listen to children’s stories to continue building rapport. For example, one shy child began to open up during the ME/WE activity when discussing Roblox (a children’s online video game), and the interviewer actively listened to their story to ensure they felt heard: Child: Uh, last year my Papa got me Roblox… Researcher: Oh, OK. Child: … 'cause our friend wanted to play with us, so we would play it and then and then we will play it again and then we would play games and uh and uh yeah. She has a very big personality and then she we would have sleepovers, but online sleepovers. Researcher: Oh. Child: So basically uhm she would be like, hey, I can't go to sleep, guys. Hey, wanna play a game? Maybe like you know, you have to go to sleep now, it's ten o'clock and she’d be like OK and then [sister’s name] would send her like uhm my sister would...I forgot what I was saying. Researcher: Oh, that's OK. You were telling me about your online sleepovers and when it would get late, what would happen? Child: Uhm, we would send her a very long video and she would be like, watch this, watch this, OK, it's very cool. Watch it until the end and she'd watch it, she'd be like this is so weird. Why is did you send me this video? It's just some person singing and then we'd be like sleeping already. Researcher: [laughs]. So, you would do online sleepovers and people would fall asleep on each other? Child: Yeah. Researcher: [laughs]. That does sound like fun though. I haven't heard of online sleepovers before, but I think that's super cool. Child: Yeah. (Child 9, 10 years old)

Often, active listening built sufficient rapport to motivate children to share additional personal stories with the interviewer later in the session. However, the relationship between some stories and the interview topic was not always immediately clear, requiring delicate investigation by the interviewer. For example, later, as the interviewer was wrapping up the same interview, the child interrupted to say, “I forgot to tell you about my toad” (Child 9, 10 years old). While unsure of the relevance of the toad (named Strawberry), the interviewer responded positively by creating space for the child to share more, eventually inviting them to reflect on the toad in a manner consistent with the research objectives: Researcher: Was, I know that you had Strawberry [the toad] during the pandemic, were they helpful to you? Child: Yeah, like. We were online, so like I would like get like a little container. And put it, fill it with, I would dampen it with like, we had this like, it was just water for like humidity for her tank and stuff. And then we would, I would put her in the little container and then I would play with her while I was, when I finished my work. (Child 9, 10 years old)

Children sharing their personal stories, often in great detail, was indicative of the interviewer’s ability to build strong rapport and trust with participants in an online environment using this protocol.

In some cases, however, interviewers noted that the imbalanced power relationship between child and researcher may have influenced responses. Some children seemed aware of when their response may be perceived as socially undesirable and seemed to avoid responding in ways that could potentially upset the interviewer.

Caregiver Engagement

The involvement of caregivers in child interviews was an unexpected outcome of the protocol, as it was not designed for caregiver input. However, 13 child interviews included the presence of a caregiver who chose to contribute to their child’s participation and responses, typically through probing, translating non-verbal communication, or helping their child refocus.

Caregiver interruptions were particularly common when children were asked about their motivation for participating in the interview. For example, one caregiver interrupted to highlight the importance of the gift card for the child: Researcher: Thank you. I know that was a lot of big and unfamiliar words probably, so thank you for bearing with me. And my last question before we get to the fun stuff is, before we begin, can you tell me why you wanted to participate in this interview? Child’s Caregiver: Do you remember what we talked about? What are you, what are we going to do with the money from the Amazon gift card? We going to buy daddy's birthday present? Yeah, we thought it was kinda cool that you could earn some money for daddy's birthday present. Ya. You gotta use your words kiddo because she cannot see you. [Laughs]. So, you say yes. Child: Yes... (Child 12, 7 years old)

Similarly, four children appeared to be directed to participate by their caregiver. This made it difficult to ascertain whether some children were feeling pressured into participation by their caregivers or truly understood what was happening, such as in the case of this participant: Researcher: Exactly [laughs]. Exactly that. Alright, and just before we get started, can you tell me why you wanted to participate today? Child: Uhm…. Child’s Caregiver: [laughs]. ‘Cause mommy made you [laughs]... Researcher: [laughs]. That's OK, you can be honest. Child’s Caregiver: Or a forty-dollar gift card. Woo hoo! (Child 26, 7 years old)

Another child was not completely aware of the study or their participation until moments before the interview, which seemed to be confusing for them: Researcher: OK, so before we get started, can you tell me why you wanted to participate in this interview? You can be honest. Child: I don't know. Researcher: [laughs]. That's OK. That's totally fine. I'm just glad you're here. Child’s Caregiver: He didn't even know about it, until he came down the stairs. [laughs]. (Child 27, 7 years old)

Conversely, in some cases, a caregiver’s interest sparked genuine interest in their child, as one child stated: Child: Um, I heard my mom, like she was participating… Researcher: Mm hmm. Child: …and I also wanted to participate. (Child 20, 10 years old)

Caregiver engagement also extended to interview activities, with caregivers sometimes acting as a second interviewer to promote their child’s participation. For example, one participant’s mother noticed the brevity of their child’s responses and initiated some probing to help the interviewer gather more details: Researcher: Can you think about anybody else that um was maybe helpful for you during the pandemic. So maybe um some other people in your family, or maybe people that don’t really fit onto this map, but that come to mind for you. Child: Um, my uh. Child’s Caregiver: What are you saying, what are you trying to say? Then just say that, say nanny. Child: My nanny... Researcher: Your nanny. Awesome. And how was your nanny able to help you? Child: Um... Child’s Caregiver: Well what's um, what's something that you did when you went to nanny's house? Child: Um. I um, taking my computer... Child’s Caregiver: Yeah, but why were you taking your computer? Child: I was taking my computer to like, for online learning at nannies... Researcher: Online learning. Awesome, so nanny was able to help you with your online learning when you were, had to do school on the computer? Child: Yeah... (Child 23, 8 years old)

In another example, a child’s mother was able to remind them to provide clear verbal answers to the interviewer because their camera was off, saying “Do you want me to turn the camera back on so that she can see? Okay, so then just use your words” (Child 12, 7 years old). Later in the interview, the same participant’s caregiver assisted the child in reflecting on their social activities and helped them refocus when their body language appeared distracted: Researcher: Okay, and when you hang out with L at the playground, at your house, and even at McDonalds, what do you like to do together? Child: Umm. Lots of things. Child’s Caregiver: Like? Think about the last time you saw L, what did you guys do together when you were here? Child: Climb on the monkey bars. Child’s Caregiver: Okay, keep going. Child: Play with stuffies. Child’s Caregiver: Alright, keep going. Child: Umm. Child’s Caregiver: Don’t lick your foot. Child: I’m not. (Child 12, 7 years old)

In other interviews, however, children appeared uncomfortable sharing their honest thoughts in front of their caregivers or looked to the caregiver to answer on their behalf. This was largely documented in interviewer fieldnotes, such as in the following example: “Child was quite shy and hesitant to provide her own answers. Mom was in the room and whispering answers in child’s ear” (Child 10, 8 years old). Overall, caregivers appeared to act as gatekeepers to their child’s participation, suggesting that the inclusion of caregivers in data collection with children is a practical means of recruiting child participants. However, this also raised concerns regarding the genuine interest and comfort level of children in participation, as well as the potential for biased responses and coerced assent.

Maintenance of Child Engagement

The protocol’s mixed-methods, multi-sensory design (i.e., ongoing verbal, visual, and written forms of communication) was a practical means of promoting participant engagement among children aged 7–10 years. Specifically, the combination of verbal descriptions by the interviewer complemented by images, videos, and on-screen text was found to be useful for promoting understanding. It was also effective to employ a variety of activities during the interview, as this allowed the interviewer to use different methods of data collection instead of dwelling on an activity that might have been perceived by a child as disengaging.

Videos were particularly well-received by participants. Most children were unfamiliar with the meaning of the word resilience prior to the interview, which was expected, and the animated resilience video was discovered to be a practical and effective means of conveying this concept in an age-appropriate, engaging manner. Post-video, the majority of participants were capable of describing the concept in basic terms: Researcher: Now that we watched this video together, can you tell me what resilience means to you? Child: Resilience means when you're feeling negativity and when you not wanna do something, you keep trying. (Child 9, 10 years old)

Some participants were even able to apply what they learned from the video to give detailed examples of resilience from their lives. For example, one child articulated their understanding of resilience using an analogy of swinging at the park, sharing, “Uhm, I don't know. One day we were at the park, and I was on the swing, but my dad was pushing me and then when I came off and then next time I went on and all of a sudden, I was swinging on my own” (Child 21, 7 years old).

Other children described difficult experiences they had gone through. For example, one child described how they overcame unkind comments from their classmates at school: Child: When I was…uhm…when I was… I kept on trying to draw a picture of a fish and I couldn't draw it because I felt really like I felt upset because people were saying and my classmates were saying that I couldn't draw a fish because uhm I had COVID… Researcher: Mm hmm. Child: …so then I would I didn't listen to them. Researcher: Good, you didn’t listen to them and… Child: [unintelligible 11:21] Researcher: …sorry, go ahead. Child: And then I just drew the fish. (Child 16, 9 years old)

Discussion

The arts-informed, online interview protocol designed to explore school-aged children’s experiences of resilience during the COVID-19 pandemic was largely an effective means of eliciting the active, meaningful participation of children in qualitative research. Children were most engaged in arts-focused activities, and their attention was typically well-maintained by the mixed-methods and multi-sensory interview design. In some cases, caregivers served as gatekeepers to children’s participation, which was expected; however, it was surprising that many caregivers participated in their child’s interview without prompting or invitation. The online format of participation seemed to promote accessibility and comfort for school-aged children, but also presented some challenges for the researchers regarding the visibility of children’s non-verbal communication and environmental contexts. Overall, the process of the arts-informed, online interview appeared to be well-received by child participants and was found to be useful in capturing meaningful insights about the pandemic from children in an online setting.

Feasibility of Arts-Based Online Research With Children

To the authors’ knowledge, this is the first time an arts-based online interview protocol has been used to explore resilience among school-aged children. The arts-based nature of this protocol proved to be central to engaging young participants in research and well-suited to yielding high-quality data from school-aged children. In comparison to in-person data collection, online research has long been viewed as subpar due to the perceived lack of non-verbal and contextual data and high risk of data loss or distortion (Novick, 2008; Roberts et al., 2021). However, the comprehensiveness and quality of the data collected in this study challenge this perspective. Globally, other researchers have documented transitions to virtual research conducted with children during the COVID-19 pandemic, and found similar success (although not focused on resilience). For example, in a United Kingdom-based study of school-aged children by Lomax and Smith (2022), the use of creative visual arts (e.g., drawing, comic, film) about lived experiences of COVID-19 was found to be a viable means of empowering children (ages 9–11) and uplifting their voices in an emergency context.

Importantly, the protocol was effective in building trust and rapport between children and the interviewer in an online setting. The drawing icebreaker was fundamental to this success, as all children appeared to enjoy engaging in the activity. Prior in-person research has documented the utility of arts-informed methods in motivating child engagement (Barker & Weller, 2003; Coad, 2007; Kutrovátz, 2017), and based on our findings, this appears to translate to online settings. In a similar study employing online video interviews with children during the COVID-19 pandemic in Ontario, researchers were surprised to discover children’s avid interest in artwork and self-expression beyond verbal communication (Donison et al., 2023). While arts-informed methods were not a part of Donison et al.’s (2023) original data collection plan, once they discovered that some children were eager to draw and share artwork, they adapted their design to include drawing retroactively. Future research conducted online with children may benefit from including drawing as a component of arts-based research.

Influence of Contextual Factors

Interviewers in this study noted the importance of contextual factors that affected children’s participation; namely, the presence of a caregiver and the use of video. This observation is consistent with prior literature in child research that recommends the careful consideration of subjective, environmental factors in the context of children’s participation to enhance data interpretation (Baumann, 1997; Kutrovátz, 2017).

Primary Caregiver Presence

In this study, the most influential subjective factor affecting child participation seemed to be the presence of a caregiver, which has been a consistent finding in similar child research (Koller et al., 2023; Thompson et al., 2021). It has been established that a caregiver’s presence during the research process can exert exceptional influence on a child’s verbal competence, sense of social power, and vulnerability, which can change how they choose to participate in the research (Greene & Hill, 2005). This study identified that the presence of a caregiver often positively affected child participation when the caregiver acted as a supportive partner in the research process. This manifested in caregivers enhancing children’s verbal competence (e.g., helping to clarify questions), promoting their social power (e.g., offering words of encouragement), and reducing their vulnerability (e.g., providing a source of comfort/safety). These findings are consistent with evidence from Dodds and Hess (2020), who conducted online group interviews with families (including young people ages 12–22) during the COVID-19 pandemic and identified that younger participants typically felt more comfortable participating online due to being in a safe environment (i.e., at home) with family members close by for support. Similarly, in online interview-based COVID-19 research with children, Donison et al. (2023; ages 5–16) and Graber et al. (2024; ages 3–10) described having a parental presence during data collection as supportive and reassuring for children.

Another important observation was how the presence of primary caregivers in the online environment introduced the potential for children’s coerced assent and compromised agency in participation. For example, one caregiver shared that their children were unaware of the study until the very moment they were asked to begin the interview. Similarly, when children were asked about their motivations for participation, some simply indicated that their caregiver had instructed them to. The large incentive ($40 per participant) may have introduced potential for children to feel coerced into participation. However, it was also identified that some children felt personally motivated to participate because of the opportunity to have their own money. It was extremely difficult for the research team to discern whether coercion was present due to a lack of familiarity with each dyad’s relationship dynamics and children’s personalities in new social contexts. For example, when a caregiver was speaking on behalf of their children at the beginning of the interview, it may have been because the child felt shy in the presence of a stranger and gained confidence through their caregiver speaking first. Alternatively, the child may not have been interested in participating in the interview and only did so because of their caregiver. Future research should examine how best to meaningfully recognize children’s research contributions through incentives without introducing undue pressure on the child to participate. Equally important is the identification of strategies for interviewers to reliably detect children’s coerced participation without disproportionately excluding children who are naturally quiet and shy when meeting strangers. To be effective, such strategies must be able to compensate for the interviewer’s lack of contextual knowledge regarding a child’s personality and their relationship with their caregiver.
Extant COVID-19 interview-based child research has documented the potential impacts of a family member’s presence during a child’s interview (Donison et al., 2023; Koller et al., 2023) and suggested that interviewers take care to identify the presence of caregivers and carefully consider their potential influence on children’s participation (Thompson et al., 2021). Strategies have also emerged to help mitigate caregiver interference with data collection. For example, Lim and Kaveri (2024) suggested that interviewers clearly communicate the caregiver’s role in the study to them ahead of time. However, there is a need for research that considers children’s agency in pre-interview contexts – for example, in the processes of recruitment, caregivers’ informed consent, and scheduling interviews – to better mitigate the potential for coercion and parental influence.

Online Environment

The comfort of children in navigating the Zoom environment and the overall lack of serious technical difficulties noted in this study suggest that the online environment was a feasible means of conducting arts-based research with children. This finding is consistent with the dramatic increase in public use and confidence with use of audio/video conferencing software, especially among online learners, due to the COVID-19 pandemic (Batat, 2020).

This study also granted children the option of an audio-only interview in an effort to promote their agency by letting them select their method of participation; when given the choice, many children chose to keep their videos on, which indicates that child agency in mode of online participation and the availability of non-verbal data are not mutually exclusive. In turn, the use of video provided valuable contextual information to interviewers, such as whether children were in a private setting or appeared distracted. Similarly, emerging COVID-19 research has identified that video-facilitated interviews provide unique contextual data that can be used to enhance data quality and interpretation. When working with child populations, video-based interviews offer unique opportunities to observe their real-time behaviour and body language (Lim & Kaveri, 2024), and glimpse into the child’s environmental context (e.g., presence of other family members; Donison et al., 2023). A potentially useful contextual factor not considered in this study was the child’s visible environment. Prior literature indicates that a child’s environment can be leveraged to build rapport and learn about a child’s life (e.g., through visible toys, photographs, pets, etc.; Bichard et al., 2023). In hindsight, this study may have been enriched by asking children to pick a strategic location in their home to conduct the interview (e.g., the room they spent most of their time in during COVID-19) and including environment-oriented prompts in the interview guide (e.g., if board games are visible, asking the child who they played those games with during COVID-19). Overall, additional research is required to determine how to best capture and leverage contextual factors in online research with children whilst respecting children’s agency and comfort in participation.

Children’s Agency

This research highlighted challenges with promoting children’s agency during some elements of the assent procedure. This was largely a result of the language and procedures required to secure ethical approval being primarily adult-centred and ill-suited to the needs of elementary school-aged children. For example, the research team was obligated to provide children with the name and email of the primary investigator in case they had questions post-interview. This procedure ‘checked the box’ of children’s informed assent on paper, but was not a realistic, accessible support in practice; children may lack access to an email account, lack the requisite digital literacy skills to seek help in this manner, or simply feel unsafe or uncomfortable communicating with an adult stranger on the Internet. This finding is consistent with those presented in Oulton et al.’s (2016) scoping review, which identified an overemphasis on terminology and legal concerns in children’s research, noting that this ultimately compromises their informed, voluntary assent. Recent research has begun to move away from literacy-focused assent by considering non-verbal communication (Huser et al., 2022), but there is no consensus about how to best modify assent procedures to be child-centred while also maintaining ethical standards. Despite these challenges, in the context of this study, interviewers were able to establish informed assent with children. This was the result of interviewers spending time describing confusing aspects in creative and age-appropriate ways (e.g., by providing ‘real-life’ examples). Future research should explore how to better promote children’s agency in practice: for example, how considerations of dissent can be integrated into assent procedures, how to ascertain whether children feel pressured into participation, and how creativity in language and structure can modify assent procedures to better promote children’s understanding.

Limitations

There are some important limitations that should be considered alongside the results of this research. First, the demographic details collected in the child interview were limited to age, gender, and school grade, which prevented us from determining whether the protocol was considered acceptable and effective across a diverse sample of children. An unexpected challenge was the potential for visual supports to impede discussion, as some children did not see themselves reflected in the cartoon characters. Unfortunately, we did not ask children to self-identify their race during interviews, so we could not explore this phenomenon; this is an area for future research. Insights may be limited from children who identify with demographics different from the visioning video’s main character (a young Black girl). In addition, an eligibility requirement was access to an internet-enabled device, which excluded children without digital literacy skills, device access, or Internet access. As noted by Dube (2020), marginalized and rural participants may rely on external sources of internet (e.g., internet café) that were closed during the COVID-19 pandemic, precluding their participation. In addition, while interviewers tried to mitigate social desirability bias, they inevitably could not catch or correct all instances, which may mean negative thoughts and feelings (e.g., dislike for activities, frustration with caregiver presence) could be underrepresented in these data. Finally, interviews were conducted in 2022, when COVID-19 was exceptionally prevalent in Ontario. During this time, videoconferencing applications (e.g., Zoom) were widely used to communicate with others. At the time of writing, however, many people have strived to return to normal by participating in in-person activities whenever possible, with some even disliking Zoom because of Zoom fatigue (i.e., videoconferencing for great lengths of time resulting in fatigue and stress; Riedl, 2022).
It is unclear whether this online protocol would be similarly effective and well-received in a climate where Zoom is not as widely used. It would be beneficial to validate this protocol in additional, diverse samples and consider how to better enable the participation of under-resourced children. Future research would also benefit from evaluating children’s perceptions of online arts-informed interviews in comparison to in-person protocols.

Conclusion