Abstract

Online interviewing has enabled cost-effective data collection and access to diverse, geographically dispersed populations. However, these benefits come with significant risks to research integrity, particularly the risk of attracting scammer participants: individuals who volunteer to take part in research by creating a false identity that fits a study's eligibility criteria. This paper explores our experiences of conducting online interviews for a qualitative health research study involving expectant parents with a rare genetic condition. We outline the strategies we implemented to deter fraudulent interest and participation at different stages of recruitment and data collection, and share our critical reflections on how we managed these challenges. The paper highlights how Artificial Intelligence (AI)-assisted software may help scammers evade detection, representing a new external threat to the validity of online qualitative research. We provide practical recommendations for safeguarding qualitative research in the digital age, along with a checklist for the design stage informed by recent technological advances and the lessons learned from our study.

Background

Conducting online interviews via video conferencing tools has become a popular and accessible way to gather research data, offering flexibility and broader reach compared with in-person interviews (Shapka et al., 2016). This is particularly beneficial when eligible participants have caring responsibilities or are geographically dispersed because of the relative rarity of the phenomenon under study. Participants may find it easier to discuss sensitive topics in online interviews than in person (Sipes et al., 2022), as the online format can offer greater comfort by allowing them to remain in familiar surroundings, rebalance power through a sense of control over the interaction, and create psychological distance that makes disclosing personal or emotionally charged topics feel less intimidating (Adams-Hutcheson & Longhurst, 2017). Furthermore, evidence suggests that researchers can obtain data of similar quality and depth through online interviews as through in-person interviews (Guest et al., 2023; Shapka et al., 2016).

The COVID-19 pandemic accelerated the shift to online data collection. Researchers and potential participants have become comfortable using online tools for work, attending social events and keeping in contact with family, friends and colleagues remotely, which has made online interaction with participants routine. Technological features such as video recording and automated transcription have further made online interviews an attractive option for researchers (Keen et al., 2022). However, this technology may be open to abuse by individuals who apply to participate in order to obtain rewards or vouchers despite knowingly not meeting the eligibility criteria. Such abuse challenges the integrity and inclusiveness of research and may also damage researcher–participant relationships (Drysdale et al., 2023; Husted et al., 2025).

Scammer Participants in Online Research

Scammer participants, also termed ‘fraudulent’ (Jones et al., 2021; Mistry et al., 2024; O’Donnell et al., 2023; Woolfall, 2023) or ‘impostor’ participants (Drysdale et al., 2023; Husted et al., 2025; Ridge et al., 2023; Roehl & Harland, 2022), are individuals who volunteer to take part in research by creating a false identity that fits a study's eligibility criteria. Their motive may be to obtain gift vouchers or other small rewards offered in recognition of participants' ‘contribution’ and time. The Code of Human Research Ethics (2021) highlights the potential harm of disseminating inaccurate or misleading information, including research results and conclusions, which can severely undermine the validity of research.

The problem of scammer respondents is relatively well established in quantitative methods such as online surveys. For example, ‘bots’, defined as computer software designed to perform automated tasks for users (Eslahi et al., 2012), can be created or downloaded within minutes and deployed to complete simple automated functions or to find surveys offering incentives (Godinho et al., 2020; Teitcher et al., 2015; Yarrish et al., 2019). Some respondents may deliberately complete the same survey multiple times to obtain additional incentives (Bowen et al., 2008; Griffin et al., 2021). Recommended safeguards against fraudulent responses include using reCAPTCHA (a CAPTCHA, or completely automated public Turing test to tell computers and humans apart), adding screening questions, setting up a brief video or phone call before the interview, collecting IP addresses and allowing only one response per IP address, and using bot-detection software (Bowen et al., 2008; Bybee et al., 2022; Cimpian et al., 2018; Davies et al., 2024; Griffin et al., 2021; Panicker et al., 2024; Teitcher et al., 2015).
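As an illustration of two of the survey safeguards listed above (allowing one response per IP address and spotting identical submissions), the following is a minimal Python sketch for flagging screening-form responses. It assumes the responses have been exported as records with hypothetical `id`, `ip` and `text` fields. The function name `flag_screening_responses` is ours for illustration only and is not part of any survey platform's API.

```python
from collections import defaultdict


def flag_screening_responses(responses):
    """Flag screening responses that share an IP address or repeat identical text.

    Assumption: each response is a dict with hypothetical keys "id", "ip" and
    "text" (an assumed export format, not a real platform schema).
    Returns a dict mapping each flagged response id to a sorted list of reasons.
    """
    by_ip = defaultdict(list)
    by_text = defaultdict(list)
    for r in responses:
        by_ip[r["ip"]].append(r["id"])
        # Normalise case and whitespace so trivially edited copies still match
        key = " ".join(r["text"].lower().split())
        by_text[key].append(r["id"])

    flags = defaultdict(set)
    for ids in by_ip.values():
        # One response per IP address: keep the first, flag any later ones
        for rid in ids[1:]:
            flags[rid].add("duplicate IP")
    for ids in by_text.values():
        if len(ids) > 1:
            # Identical texts: flag every copy, since none can be presumed genuine
            for rid in ids:
                flags[rid].add("identical text")
    return {rid: sorted(reasons) for rid, reasons in flags.items()}
```

The sketch leaves the first response from each IP address unflagged but flags every copy of an identical text, because there is no way to tell which copy, if any, is genuine. A shared IP address can also be legitimate (for example, a shared household connection), so a flag is best treated as a prompt for follow-up rather than grounds for exclusion.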

The British Psychological Society (BPS) Ethics Guidelines for Internet-Mediated Research (2021) suggest that procedural variables are less critical in qualitative approaches, reducing the likelihood of issues such as ‘bots’ or artificial intelligence (AI) interference. However, qualitative research remains vulnerable to deception, as scammer participants may provide responses that do not reflect genuine lived experiences. This risk is heightened by the difficulty of verifying identities and by the sensitivity of many topics, which can make interviewers hesitant to probe further. Discussion of scammer participation in the qualitative literature remains limited to a handful of recent publications. Some are brief reports intended to warn researchers (Hewitt et al., 2022; Hoskins et al., 2025; O’Donnell et al., 2023; Ridge et al., 2023; Woolfall, 2023), while others offer more detailed reflections on collaborative experiences across diverse disciplines (Drysdale et al., 2023; McLachlan et al., 2024; Mistry et al., 2024; Roehl & Harland, 2022). A recent scoping review by Husted et al. (2025) further highlights the emerging nature of this issue, noting a lack of empirical evidence and formal guidance despite growing concern about its ethical and methodological implications. Technological advances, including AI software, point to the need for further exploration of researchers’ experiences with these tools.

AI technologies, including advanced language models, have transformed various aspects of research and communication. While these tools can generate sophisticated and contextually relevant responses, they also pose risks of misuse (Fitria, 2023; Yu, 2023). Scammers may exploit AI to produce convincing yet fabricated information, complicating the task of distinguishing genuine participants from dishonest ones (Mistry et al., 2024). The challenge lies in effectively identifying and managing AI-generated responses to maintain research integrity. Gibson and Beattie (2024) describe encountering AI-generated responses, likely produced by bots, during a qualitative survey, an experience that led them to reflect on the idea of AI-as-participant in online research. In contrast, our paper highlights a different but equally important concern: the use of AI tools by human participants during interviews to present themselves as eligible members of a specific population.

Scammer participants provide fabricated or inconsistent information that distorts findings and undermines data quality (Drysdale et al., 2023; O’Donnell et al., 2023). Common indicators of scammer participation include vague responses to screening questions, unusually rapid expressions of interest, reluctance to share identifying information, and a preference for keeping cameras off during interviews (Mistry et al., 2024; Ridge et al., 2023; Roehl & Harland, 2022). These challenges are exacerbated by the growth of online recruitment and the use of social media platforms, which increase the risk of bot or fraudulent participation (Drysdale et al., 2023; Woolfall, 2023). Woolfall (2023) reported that, in some cases, up to 80% of participants were scammers, highlighting the severity of the issue. To address these concerns, researchers have proposed several deterrents. Enhanced screening can help verify participant authenticity: for example, Qualtrics can collect IP addresses and use AI tools such as ExpertReview to flag potentially fraudulent responses, REDCap can be used to collect demographic data, and researchers can conduct pre-interview video or phone calls (Drysdale et al., 2023; Gibson & Beattie, 2024; O’Donnell et al., 2023). Asking participants to provide institutional or professional email addresses can help verify identities, especially in studies involving professionals (Ridge et al., 2023). Limiting the mention of financial incentives in recruitment advertisements and reviewing data across multiple researchers to identify patterns of fraud have also been suggested as effective measures (Mistry et al., 2024; Ridge et al., 2023; Woolfall, 2023). Together, such measures help safeguard the integrity of qualitative research by ensuring that the data collected are valid and trustworthy (Drysdale et al., 2023; Ridge et al., 2023).

Although awareness of scammer participants is growing among qualitative researchers, approaches to addressing the issue remain varied. Some researchers learn through peer discussion, institutional meetings, or ethics panel feedback, while others develop strategies independently. In the absence of formal guidance, practice remains inconsistent across studies.

In this paper, we use the term “scammer”, as recommended by Pellicano et al. (2024). We made this choice because our research project focuses on individuals with Neurofibromatosis 1 (NF1), a rare genetic condition that affects approximately 1 in 3,000 people and is associated with a higher likelihood of neurodevelopmental conditions, including autism and ADHD (Garg et al., 2013). These conditions may be self-diagnosed later in life, and alternative terms could unintentionally imply doubt about the sample group’s self-identified experiences or even provoke feelings of self-doubt (Pellicano et al., 2024). While the term may suggest intent, our focus is not on individual motivation but on the impact such cases have on the integrity of qualitative data and the research process. This case report outlines our recent research experiences with scammer participants.

Case Report: the Pregnancy Experiences of Expectant Parents with NF1

Our Research Study

Our qualitative study focused on the experiences of expectant parents with NF1, a rare autosomal dominant genetic condition in which a baby with one affected parent has a 50% chance of inheriting the condition (Friedman, 2022). We aimed to explore expectant parents’ experiences of reproductive decision-making, how they navigate pregnancy and how they bond with their future child. Because the study focused on a specific experience (pregnancy in the UK) in a relatively rare genetic condition, the target sample was narrow. The inclusion criteria were (a) having NF1 or having a partner with NF1, (b) currently being pregnant or having a pregnant partner, and (c) living in the UK. Online interviews were chosen for geographic flexibility and cost-efficiency. As a token of appreciation for their time, we provided participants with £20 vouchers. Ethical approval was obtained from the NHS North West – Greater Manchester West Research Ethics Committee (reference 21/NW/0346). Recruitment initially took place through clinical referrals and patient charities known to the research team. When participant numbers were lower than required, recruitment was expanded to social media following approval of an ethics amendment.

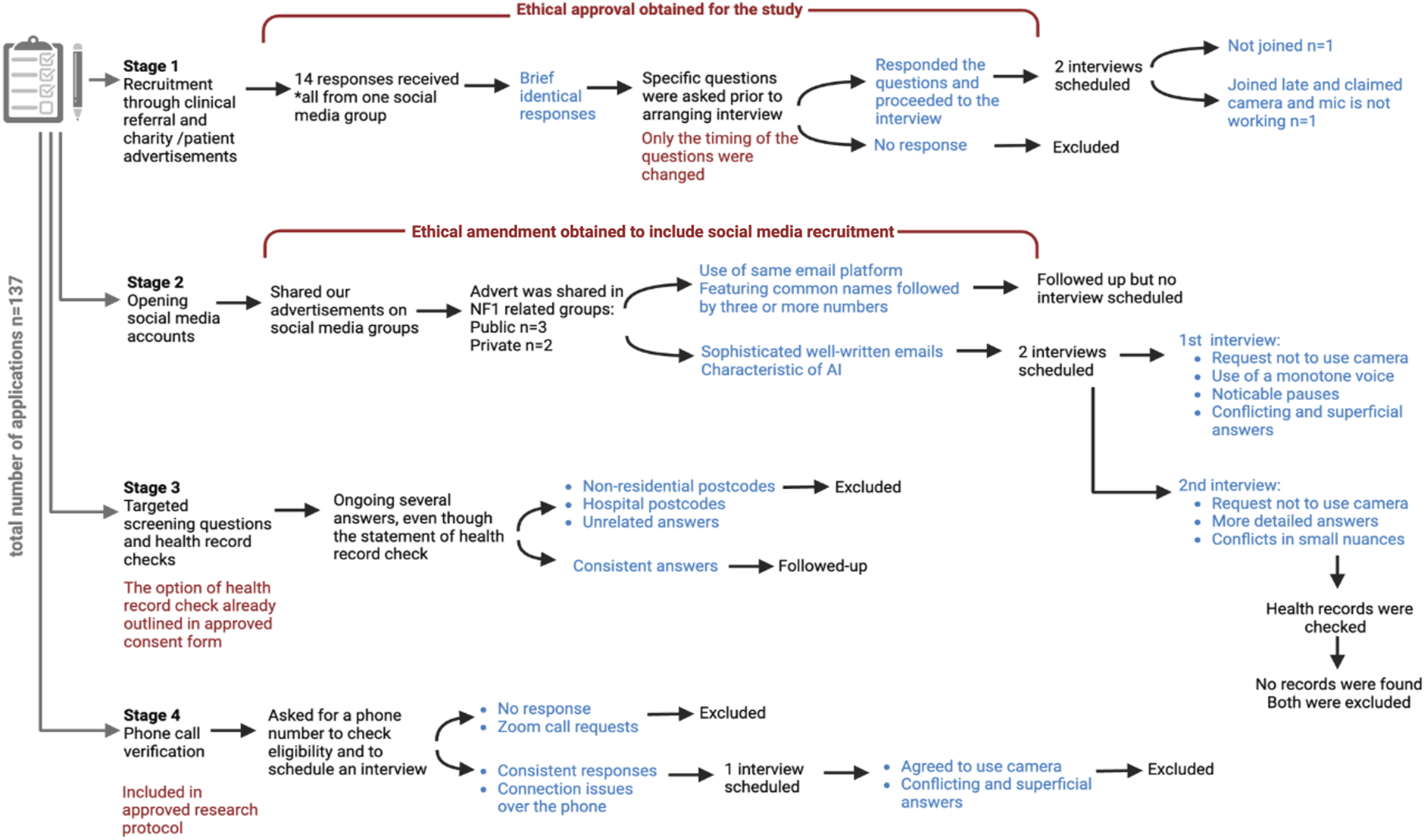

Figure 1. The Key Stages in the Study Process that Alerted Us to Scammer Participants.

Issues in the recruitment and interviewing processes unfolded across four distinct phases, each revealing how potential scammer participants managed to evade escalating measures designed to verify eligibility (Figure 1). These phases illustrate the suspicions raised, the ethical considerations encountered, and the decisions we made to address these challenges.

Stage 1. Recruitment through Clinical Referral and Charity/Patient Advertisements

Initially, study recruitment combined targeted advertisements shared through patient charities with clinical referrals. Charities were selected as recruitment partners because of their close connections with both healthcare professionals and patients living with the condition, including patients who engage with their services or are part of their wider networks. The advertisement was distributed via email and through the charities’ social media platforms. Recruitment via these routes was consistent with the approved ethics protocol, which covered outreach through clinical and charity networks. After a brief description of the study, potential participants were asked four screening questions via email or an online form:
– Do you live in the UK?
– Are you currently pregnant?
– If yes, when is the baby due?
– Could you please provide your email address?

One patient who had contact with the charity, and who was also the administrator of a public social media group for people with NF1, independently reposted the advertisement within that group. We were informed of this repost. Although we had received no responses to our original advertisement before this, we received 14 responses within 24 hours of the repost. We began contacting the respondents by email, and most replied immediately. Several responses were identical to one another, and the responses were typically very short, such as “I would be happy to participate.”

After receiving many similarly worded responses within a short period, we began to suspect scammer participation. When we contacted potential participants for further information, the pattern of short, rapid replies continued. Eligibility screening questions had been planned for immediately before the interview, but to address our suspicions, we decided to ask them earlier, by email. These questions covered details such as gestational age, location within the UK, and the clinic where the applicant was receiving care.

Roehl and Harland (2022, p. 2475) recommend that researchers tell potential participants that “The responses to these eligibility screening questions will remain confidential and will not be used as data in the study but only to confirm your identity and to discourage scammer participants.” In line with this recommendation, our email also referred to “scammer participants” to show applicants that we were aware of this possibility and were taking action to prevent it. However, we kept our emails personalised so that they did not read as impersonal or automated.

After we moved the screening questions earlier in the recruitment process, several applicants stopped responding to emails. Of the 14 email responses received in the 24-hour period, two led to scheduled interviews after informed consent was obtained via email. The first participant did not attend the interview or respond to any further emails. The second interview could not be conducted either, as the participant muted their audio and covered the camera with their hand. When a phone call was suggested instead, the participant did not respond, further raising strong concerns about their authenticity.

Stage 2. Opening Social Media Accounts as a Recruitment Tool

Because of slow recruitment, we submitted an ethics amendment to expand our strategy to social media. This allowed us to engage directly with potential participants by posting in relevant social media groups, rather than relying on charities or healthcare professionals to share recruitment materials through their own communication channels. After we shared our advertisement on social media aimed at the target group, the response resembled that in Stage 1: we received approximately 40 responses through the application form or directly via email. We suspected that these were scammer participants, as they had generic email addresses (e.g.

Some emails were more detailed, highly formal and polite, written in a letter style that was more sophisticated than the earlier short responses; these characteristics were reminiscent of text generated by AI tools. Although AI tools can easily generate false information, AI use alone could not justify excluding potential participants: such tools may be especially helpful for people whose first language is not English or who have learning or communication difficulties associated with NF1 (Cohen et al., 2020). We therefore introduced the next stage, asking screening questions. We followed up with the respondents who answered all of the screening questions and scheduled interviews with two potential participants.

From the start, scammer participant 1 asked to keep their camera off, citing the participant information sheet and consent form to show their understanding that the research procedure required only audio, not video. While this request is a common scammer tactic described in the literature (Davies et al., 2024; Drysdale et al., 2023; McLachlan et al., 2024; Panicker et al., 2024; Pellicano et al., 2024; Ridge et al., 2023; Roehl & Harland, 2022; Woolfall, 2023), citing the information sheet and consent form to justify keeping the camera off has not previously been highlighted. However, participants may also make this request for genuine reasons, such as privacy concerns or discomfort with video communication, so we responded with understanding.

Meaningful discussion required participants to have some knowledge of the specific genetic aspects of the condition. This participant delivered their responses in a monotone voice, with notable pauses before answering questions. The pauses raised suspicion that they might be using AI tools such as ChatGPT to look up answers during the interview, and prompted repeated checks that they were still present. For example, when asked about their understanding of preimplantation genetic testing (PGT) and natural pregnancy, they initially struggled with the question but ultimately claimed to have undergone PGT. Later in the interview, when asked about the inheritance of the condition, they stated, “They had been tested to understand if there was any risk of the condition, and they learnt that there is a 50% chance.” Given inconsistencies such as these, we suspected the participant was a scammer. To verify this, we checked their medical records (to which they had consented) but were unable to locate any, suggesting that the information they had provided about their medical team was false.

We had also scheduled an interview with potential scammer participant 2, whose email closely resembled that of the previous participant. Medical record checks found no records for this participant either, indicating another likely scammer. Nonetheless, we proceeded with the interview to gather additional insights. Potential scammer participant 2 also declined to use the camera, citing the information sheet, which indicated that only audio recording was required. Unlike the previous participant, they provided more detailed responses related to NF1, such as having café-au-lait spots, described as “light brown skin patches”, and accounts of bullying consistent with experiences reported by genuine participants in the literature (Williams, 2018). They also connected their history of NF1 with their expectations for their child, demonstrating a sophisticated approach and high confidence during the interview. However, some information they provided is not reflected in the scientific literature, such as an increase in patches during pregnancy. While increases in neurofibromas (skin tumours) during pregnancy in NF1 have been reported (Nastac et al., 2025), changes in patches such as café-au-lait spots have not. Such small nuances may be crucial in identifying scammer participants. In our study, the research team included a clinical geneticist and a child psychiatrist, and the early interviews were co-conducted with a child psychiatrist experienced in working with children and families. This multidisciplinary input helped the team identify potential red flags and clinical inconsistencies in participant accounts.

Quotes From the Interviews With Three Scammer Participants With Credibility Concerns and Convincing Statements

Following Flicker’s (2004) cynic approach, we adopted the ontological position that if participant authenticity cannot be reasonably verified, their account cannot be treated as valid qualitative data. On this basis, we excluded these interviews from the analysis. However, participants were still remunerated, as our ethics protocol had not anticipated such cases, and no institutional guidelines were in place to guide decisions in these situations.

Stage 3. Targeted Screening Questions and Check for Health Records

In response to the challenges encountered with scammer participants during interviews, we amended our initial contact email to include a set of eligibility screening questions asking for additional details, such as date of birth, postcode and the name of their local geneticist, alongside the information collected in Stage 1. We also asked applicants to read and sign the consent form. Together, this information and consent enabled us to check their medical records.

Although medical record checks were not part of the formal screening criteria, the consent form, which had been approved by the ethics committee, included a standard clause allowing review of medical information. The revised email was primarily intended to deter scammers and, where possible, confirm participant identity. When participants listed clinics outside our institution, we were unable to locate their records. Participants were informed that their responses would remain confidential and would not be used as data in the study, and they were offered the option of sharing this information via a phone call if they preferred not to do so over email. Although these requests could feel burdensome, we made efforts to keep our emails respectful and personable, using a warm tone and personalising each message with the participant’s name and details relevant to their enquiry.

Each advertisement continued to attract several responses. We identified apparent scam responses, particularly those from generic addresses on common email platforms that used celebrity names, and stopped further contact with these respondents because of clear concerns about authenticity. Among those who answered our screening questions, the critical question was “Who is your local geneticist?”, as the answer allowed us to check medical records. Some scam responses included irrelevant details in answer to these questions, such as “X-linked dominant” (NF1 is autosomal dominant, not X-linked). We followed up 17 such responses by asking the unanswered question again. Where there was strong suspicion about authenticity for other reasons, such as a postcode belonging to commercial premises, we replied, “Unfortunately, we couldn’t find a medical record with your name. That’s why we are unable to proceed to the next stage.” The replies were typically either a simple thank you or no response at all.

Stage 4. Phone Call Verification

While screening questions and medical record checks partially discouraged some potential scammer participants, they also risked inadvertently discouraging genuine participants. We successfully checked the medical records of some participants; however, we were unable to verify records when participants listed a centre outside our institution. To streamline the process, we began asking participants for a phone number to call (Jones et al., 2021; Ridge et al., 2023), an option already included in our ethics procedure. Genuine participants generally found this easier and willingly shared their numbers, whereas scammers typically avoided providing one. Phone calls also allowed us to introduce ourselves and explain the purpose of the checks, which made the process feel more personal, helped build trust and positioned the researcher as approachable rather than procedural.

Although phone calls distinguished genuine participants from scammers in most cases, scammer participant 3 willingly shared their phone number. At the start of their interview, they asked whether they could turn off the camera; we explained that keeping it on would facilitate better communication, and they agreed. Initial connection issues and several inconsistencies during the interview raised concerns about their authenticity. They initially claimed to have been diagnosed with NF1 during pregnancy but later claimed a childhood diagnosis. In contrast, genuine participants were typically consistent and detailed about their medical history, even when discussing a recent diagnosis. The participant expressed a desire to prevent their baby from experiencing “abdomen pain”, which they described as their primary symptom. Because abdominal pain is not a typical feature of NF1 per se, this response was another red flag. They mentioned that they were taking medication; when asked which medication, they hesitated briefly before saying, “It is not a big thing, a painkiller for my NF1 pain”, which was also ambiguous. At the end of the interview, the participant asked about the incentive and when they would receive it, which can indicate inauthenticity (Ridge et al., 2023). The inconsistencies in the participant’s narrative, particularly regarding their diagnostic history and symptoms, together with their emphasis on the incentive, strongly suggested insincerity. Thus, the phone verification strategy, while effective in some cases, did not eliminate scammer participation.

Reflections on Managing Scammer Participants and Recommendations

Key Challenges, Ethical Considerations, and Recommendations for Managing Scammer Participants in Online Qualitative Research

Checklist for Designing Online Interview Studies to Prevent Scammer Participants (C-PSP)

Balancing Trust and Scepticism

Adopting a stance that balances trust and scepticism as part of the reflexive process is essential for researcher-interviewers to uphold their role as qualitative scientists conducting online interviews. While caution is wise, overly strict measures to eliminate scammer participants and excessive scepticism may cost researchers the trust of genuine participants. Some cues did nonetheless differ between groups. For instance, scammer participants in our study frequently asked about incentives during or immediately after the interview, whereas genuine participants generally did not: only two genuine participants inquired about the incentive, and in both cases this was because of delays in the university's processing system. While incentives are intended as fair compensation, a strong focus on rewards may be a red flag (McLachlan et al., 2024; Pellicano et al., 2024; Ridge et al., 2023; Woolfall, 2023). Similarly, requests to keep the camera off can raise concerns (Davies et al., 2024; Drysdale et al., 2023; McLachlan et al., 2024; Mistry et al., 2024; Panicker et al., 2024; Pellicano et al., 2024; Ridge et al., 2023; Roehl & Harland, 2022; Woolfall, 2023), but these must be understood in context. Our sample included pregnant women, some of whom may have been in their final trimester; they may reasonably request to turn off the camera because of physical fatigue, a need that must be accommodated. Such requests may also arise for other reasons, such as technical issues; for example, one genuine participant had a poor internet connection and requested a phone interview instead. Conversely, having the camera on does not guarantee authenticity, as scammer participant 3 demonstrated.
Some scammer participants also showed unusual familiarity with research procedures, confidently referencing the information sheet and consent form, behaviour not typically observed among genuine participants unless they had research experience (Davies et al., 2024; Drysdale et al., 2023; McLachlan et al., 2024; Panicker et al., 2024; Pellicano et al., 2024; Ridge et al., 2023; Roehl & Harland, 2022; Woolfall, 2023). Researchers should consider developing protocols that allow thorough verification without compromising participant comfort and engagement.

Ethical Dilemmas in Implementing Verification Measures

Introducing measures such as screening questions, medical record checks and follow-up calls reduced scammer applications but also created an ethical dilemma: should we require additional steps and mandatory verification from all participants? Hoskins et al. (2025) reported that strategies such as requiring cameras, removing financial incentives from advertisements, and suspending snowball referrals may reduce fraud but also raise concerns about participant trust. In our study, we took a more flexible approach. We invited the final participant to turn their camera on only if they felt comfortable, and did not make this a requirement. We included the incentive in our advertisement without stating the amount and used a smaller font to avoid discouraging eligible participants. All participants were approached with trust and screened using the same process. When red flags emerged, we assessed them in context and excluded cases that raised strong concern. Mandatory verification, especially when it requires sensitive information, may deter genuine participants, particularly those who are vulnerable or from marginalised backgrounds, thereby increasing mistrust (Drysdale et al., 2023). AI-generated emails further complicate verification, as non-native English speakers may use AI tools to communicate more effectively. While AI use alone should not be grounds for exclusion, it may be a red flag warranting follow-up (O’Donnell et al., 2023; Ridge et al., 2023). Considering these factors at the design stage can help minimise scam risks while maintaining trust and supporting researchers' critical judgement of participant authenticity.

Context-Dependency and the Role of the Researcher

One key advantage of qualitative research is the depth of detail in the data set, such as word choices and story completion tasks, which can help researchers identify scammer participants during interviews (Gibson & Beattie, 2024; Jones et al., 2021). However, as discussed by Mistry et al. (2024), the use of AI may make the prevention and identification of scammer participants challenging. AI tools can generate well-detailed, rapid responses that may mislead researchers, giving the false impression that participants are genuine representatives of a community, even when the topic requires specific knowledge and experience, as exemplified in our experience. AI-generated responses often include more generalised details, leading participants to focus on aspects of their condition repeatedly. However, this confusion could also be due to a genuine participant’s lack of awareness or knowledge about their condition. We also observed that some participants paused frequently during specific questions, raising the possibility that they were using AI tools to generate or support their answers. In contrast, conversations with genuine participants were generally smoother. Some genuine participants also paused, but these tended to occur in more natural contexts, often marked by meaningful reflection. While pauses alone are not a definitive red flag, researchers need to consider their quality and context, distinguishing between thoughtful hesitation and attempts to retrieve external information. Thus, researchers should be skilled in conducting qualitative interviews and the use of probes to clarify ambiguous details. In our experience, researchers’ familiarity with the participant group, developed through interactions with patients and charities during an extended recruitment period, was key to differentiating between outlier experiences and potentially fabricated accounts. 
The multidisciplinary team also contributed clinical insights based on their long-standing experience of working with children and adults with NF1. Although we did not embed a formal PPIE structure throughout the study, we recommend involving patients and the public in future research, particularly for researchers with less clinical experience. This can help build understanding of the subject area and support more confident and informed interpretation during data collection.

Having the option to check health records and make phone calls as part of the consent process and research protocol was advantageous in our study. This is especially significant when recruiting participants with rare conditions or from marginalised groups, where recruitment is already time-consuming; in such studies, it is critical for researchers to anticipate the challenge of scammer participants and to implement appropriate verification strategies from the outset. However, these options are context-dependent. Health record checks are only possible in medical studies or when patients are recruited through the NHS, and phone calls may not be feasible if recruitment extends beyond the country. Researchers can also design detailed, context-specific screening questions to better differentiate between genuine participants and scammers. Furthermore, it may be beneficial for ethics committees to review researchers’ measures for protecting the study from fraudulent data, so as to safeguard both the research process and the researchers involved. These preventive measures can enhance research processes and strengthen the overall credibility of online data collection.

AI in Qualitative Research: Risks and Opportunities

This report contributes to the growing discussion about the role of AI in qualitative research. As highlighted by Gibson and Beattie (2024), AI-generated responses, likely produced by bots, can be unintentionally included in datasets. In contrast, our study focused on human participants who intentionally used AI tools during interviews to present false or misleading accounts. Although the source of AI involvement differs, both cases raise important ethical and methodological challenges. This does not imply that AI should be avoided in research; instead, researchers should become literate in detecting AI-generated content and stay up to date with technological advances. For example, platforms such as Qualtrics offer features like ExpertReview, which can help flag suspicious responses, although these tools are not always accurate and still require researcher judgment (Gibson & Beattie, 2024). AI may also be useful for researcher training. Simulated interviews using AI could help early career researchers build reflexive skills and learn to recognise features of inauthentic responses. However, this should be paired with real interactions with patients to build familiarity with lived experiences and with the nuanced variation within genuine accounts. Engaging patients and the public in the research process can help researchers better understand participants’ experiences and more confidently distinguish genuine responses from potentially fraudulent ones.

Moving Forward

Given the growing complexities of online tools and AI technologies, researchers must adopt a cautious but adaptive approach. While online methods offer valuable flexibility, in-person interviews may sometimes be necessary to protect research integrity. As challenges evolve, ethical guidelines should be regularly reviewed to safeguard participants and researchers alike. Balancing innovation with robust ethical practice will remain central to the future of qualitative research.

It is important to acknowledge that scammer participants exist and to remain aware of them throughout recruitment and data collection. This awareness may help researchers stay alert to signs that reveal fabricated identities. While existing guidelines and frameworks (McLachlan et al., 2024; Mistry et al., 2024) provide valuable support, we recommend a practical checklist, based on both the literature and our research experience, for researchers designing studies and for committees evaluating study designs. However, it is also important not to begin with a negative bias, as this may make researchers hesitant to approach genuine participants. Keeping reflective research journals and holding regular research meetings can help researchers recognise their existing assumptions and remain open-minded while staying mindful of potential scammers.

Footnotes

Ethical Considerations

This paper presents a case report based on a qualitative research study. Ethical approval for the research study was obtained from NHS North West - Greater Manchester West Research Ethics Committee with reference number 21/NW/0346. All participants mentioned in this paper provided written informed consent to participate in the study.

Author Contributions

Gamze Kaplan, Debbie M. Smith, and Shruti Garg conceptualised the study. Gamze Kaplan drafted the initial manuscript, and Debbie M. Smith, Ming Wai Wan and Shruti Garg contributed to revising and refining it. Debbie M. Smith, Ming Wai Wan and Shruti Garg provided supervision and guidance throughout the research process and offered critical feedback on the manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Gamze Kaplan is a PhD student, and their study was supported by the Republic of Türkiye Ministry of National Education. This research was supported by the NIHR Manchester Biomedical Research Centre (NIHR203308). The views expressed are those of the author(s) and not necessarily those of the NIHR or the Department of Health and Social Care.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.