Abstract

Across sectors, including education, there is a confluence of pressures towards the integration of research and evidence into practice. These include a focus on evidence quality in practice and policy, the inclusion of research and evidence evaluation in professional training, and the development of implementation standards and translational resources to support evidence mobilisation. However, while current approaches to evidence synthesis support many purposes, there is a gap in approaches to identify and design for evidence-informed practice ‘from within’ those practices. That is, current approaches may not adequately reflect the ways that practices: emerge and may be seen as ‘in need of evidence’; make use of proto-theories; and give rise to a need for clear alignment (or relevance) with evidence, and with designed features of intervention contexts. This narrative review draws on an instrumental case to describe a novel method for Pragmatic Evidence Synthesis Matrices (PESM). PESM emerged from a body of work, particularly in educational technology (edtech), with one instrumental case briefly described to motivate its development. PESM: draws on extant evidence synthesis approaches, particularly best-fit realist synthesis; integrates design artefacts, including theory of change or logic model approaches and persuasive design; and is stakeholder oriented through its use of narrative scenario-based methods. Through approaches such as PESM, evidence syntheses, and systematised approaches to their development and use, may be more readily developed across domains.

Introduction: Understanding Evidence in Practice

Across sectors, the integration of research and practice to drive forward knowledge and achieve positive social impact is increasingly under the spotlight. This impetus, and the focus of this paper, lies at the confluence of three key demands: (1) to build capacity for, and with, high quality evidence; (2) to motivate evidence-informed practice; and (3) to create opportunities for high quality evidence use through evidence mobilisation strategies such as synthesis and translation. This push is paralleled across fields with shared concerns around the use of evidence, research-practice gaps, and the complexity of implementation. It is illustrated here (and throughout the paper) in the context of school education and educational technology (edtech), which has seen: (1) an increasing focus on ‘standards of evidence’ in analysis of the sector and its policy landscape (see, e.g., Puttick, 2018); (2) expectations that research and evidence form part of teacher professional induction and practice (see, e.g., Mills et al., 2021), through practices such as learning conversations (Earl & Timperley, 2009); and (3) recognition that, to achieve quality of evidence use (alongside quality of evidence) (Rickinson et al., 2022), greater attention is needed to evidence mobilisation, including via implementation guidance (Sharples et al., 2018).

The paper is motivated by a practical need, characterised in the following two vignettes, for rigorously grounded stakeholder-oriented evidence syntheses. As the literature review elaborates, the vignettes express a need in research-practice interaction that may not be well addressed via existing characterisations of evidence synthesis. Through a discussion of existing approaches, and a practical case, this paper therefore sets out (a) a novel methodological approach to (b) creating artefacts that represent pragmatic stakeholder-oriented evidence synthesis.

These vignettes reflect experiences over a number of projects designing and deploying learning technologies in higher education (HE), through which the approach reported in this paper was developed. A case report regarding a specific technology to support wellbeing in schools provides further examples of these challenges and of how the approach may address them.

Across contexts, practitioners, including both educators and edtech developers, experience a range of challenges in evidence use. These include considering how to identify the evidence that underpins their existing practices, the consistency of this evidence and corresponding theories, the gaps in that evidence, and the ways in which to continuously integrate and maintain evidence-informed practices in their existing work. The vignettes illuminate the ways that local contexts may include explicit and implicit (or proto) theories that are implicated in everyday professional practices of design and implementation. These practices may represent early-theorised, planned-out strategies, recently referred to as ‘Practice[s] in Need of Evidence’ (PINE) (Hayes, 2019; Murphy & Speer, n.d.; Sanders et al., 2022); they may represent longer-standing practices (perhaps long problematised for their lack of theorisation); or they may be emergent practices, or practices adapted from evidence and syntheses of interventions. Gaps in the ability of evidence syntheses to speak to these contexts of practice may result in (1) relatively less guidance on continued improvement of existing approaches (intra-intervention improvement); and (2) relatively more guidance on selecting macro-level interventions, via comparisons that may not account for existing local structures and transition costs (inter-intervention comparison). Developing approaches to address this concern, while responding to local design needs and context, is important, given that innovations are most likely to succeed when they connect, with well-grounded rationale, to existing practice (Knight et al., 2020; Zhao et al., 2002). Correspondingly, research is stymied by this lack of clarity regarding how to connect existing practices to theory, limiting its ability to draw on and learn from practice and practical design in research and theory building.
But “interventions are theories” (Pawson et al., 2005), and practices and technologies may be treated as forms of intervention, with rich underlying theories. Seen this way, practices and technologies express ‘designs’ both as products or things and as the ways practitioners engage designerly knowledge in their processes of interaction (Langley et al., 2018).

If we want to understand and support theory and evidence use in practice, we must develop methods to: identify evidence-informed practice, in practice; distinguish evidence-grounded from non-evidence-grounded practices¹; and identify where theory and research uptake occurs, implicitly and explicitly. This marks a shift to supporting stakeholders in addressing their questions: “What’s working here, why, and how might it be developed?” and “What does the evidence say about this practice?” From a research perspective, it marks a shift towards addressing the research question: “What is the role of evidence synthesis approaches in probing, developing, and learning from practice?”

This paper is structured as follows (by section, §): §Literature Review describes the drivers for evidence-informed practice, highlighting educational literature; §Method outlines a specific case description which was the impetus for this work, and which exemplifies the common challenge described; §Drawing Case Lessons from the Literature, in its first two subsections, reviews a number of approaches to evidence synthesis and their limitations with regard to this case and challenge, and, in its latter two subsections, reviews intervention design and design-based research approaches to inform a model; and §Discussion and Conclusion proposes a model that can be adopted, through pragmatic evidence synthesis matrices (PESM).

Literature Review: a Confluence of Pressures: Capacity for Quality Evidence; Evidence-Informed Practice; Evidence Synthesis and Translation

Building Practitioner Capacity through Quality Evidence

A body of literature is devoted to claims regarding the need for high quality evidence (and the deficits of poor quality evidence) for policy making and practice, often using hierarchical models of evidence quality (for a variety of approaches, see, e.g., Carrier, 2017; Hoeken, 2001; Petty, 2015; Sharples et al., 2018). Perhaps the best known of these takes a ‘what works’ approach (Slavin, 2004) to evidence quality and its dissemination, summarising the effects of various educational interventions (Hattie, 2008) and providing toolkits from these summaries (Higgins et al., 2016). Although not without merit, these approaches have been criticised for their treatment of both evidence quality and teacher practice (e.g., Biesta, 2007; Hempenstall, 2014; Wrigley & McCusker, 2019), with a systematic review of the kinds of evidence evaluation systems used in these models indicating significant variation in approaches to rating evidence, and “little reporting of rigorous procedures in the development and dissemination of evidence rating systems” (Movsisyan et al., 2018, p. 224).

Moreover, rather than particular methods having independent quality, quality research evidence involves the appropriate selection of systematic methods for the purposes of addressing specified questions (Doucet, 2019; Hoadley, 2004). Thus, emerging approaches to evidence development in educational technology provide practitioner research training emphasising that the type of evidence does not necessarily reflect its quality, and that different types of evidence have different advantages and disadvantages (Cukurova et al., 2019; drawing on Cox & Marshall, 2007)². Indeed, some have suggested the term “best available evidence” (Slocum et al., 2012; Spencer et al., 2012) may be useful to distinguish practices for which there is not yet enough quality evidence for a systematic review, but that may nevertheless be well supported; transparency of this evidence is key to making judgements regarding the practices and the different types of evidence synthesis (Hempenstall, 2014).

More radically, a range of authors have posited a shift in evidence evaluation, to propose “relevance to practice as a criterion for rigour” (Gutiérrez & Penuel, 2014). That is, “consequential research on meaningful and equitable educational change requires a focus on persistent problems of practice, examined in their context of development, with attention to ecological resources and constraints, including why, how, and under what conditions programs and policies work.” (Gutiérrez & Penuel, 2014, p. 20). Going further, Ming and Goldenberg ask: “How can we conceptualize quality in ways that engage practitioners and policymakers, to make our highest quality work accessible and relevant?” (Ming & Goldenberg, 2021), proposing five key dimensions of “research worth using”: “(1): Relevance of question: alignment of research topics to practical priorities; (2) Theoretical credibility: explanatory strength and coherence of principles investigated; (3) Methodological credibility: internal and external credibility of study design and execution; (4) Evidentiary credibility: robustness and consistency of cumulative evidence; (5) Relevance of answers: justification for practical application” (Ming & Goldenberg, 2021). Crucially, these shifts recognise that evidence quality is assessed in context, by people, and thus requires a pragmatic practice-oriented analysis (Farrell et al., 2022), which further seeks to centre what research can learn from practice (Farley-Ripple et al., 2018; Penuel et al., 2015).

Motivating Evidence-Informed Practice

Correspondingly, there has been increasing pressure for evidence-informed practice, that is, practices for which there are explicit warrants drawn from scholarly literature and evaluation. These calls encompass a broad range of approaches that may be in tension: from more bottom-up, engaged evidence use in influencing policy planning and evaluation decisions, and diffuse models of grassroots practitioner evidence uptake, through to more top-down, ‘imposed’ use of direct “evidence based” practices (e.g., state mandates for pedagogies restricted to synthetic phonics), or other political aims (e.g., directives to be ‘evidence based’) (Ming & Goldenberg, 2021).

Grassroots or bottom-up evidence use by practitioners is sometimes addressed through ‘close-to-practice’ research that focuses on, informs, and builds on (or emerges from) practice (Wyse et al., 2021, p. 5), and through related work on practitioner enquiry (see discussion in Wyse et al., 2021). This research often builds on Lewin’s models of action research and the mantras that ‘nothing is as practical as a good theory’ and ‘the best way to understand something is to try to change it’ (see Greenwood & Levin, 2007; Wyse et al., 2021). However, aspects of this work have been critiqued for a lack of rigour or poor theorisation (Wyse et al., 2021).

In parallel, more ‘top down’ approaches present significant concerns regarding evidence-informed policy, and approaches to integrate research into practice (Cairney, 2016; Hallsworth et al., 2011; Oliver et al., 2022; Verhagen et al., 2014), including in educational policy (Rickinson et al., 2017, 2018, 2022). These concerns arise in part from the challenge that evidence may be treated as too abstracted or ‘from nowhere’ (Shapin, 1998), providing an idealised intervention into malleable systems; but policies are rarely implemented onto blank canvases, and evidence rarely speaks to the nuanced design required to integrate into existing systems and local contexts. Moreover, there are concerns regarding the imposition of ‘evidence informed pedagogy’ by policy instrument – such as standard curricula – for reducing teacher (and pre-service teacher) professionalism both regarding their own pedagogic practice, and ability to critically engage with said evidence (Brooks, 2021; Wrigley, 2015; Yoshizawa, 2022).

Fostering Opportunities to Use Evidence through Evidence Synthesis and Translation

Finally, the burgeoning research literature creates new needs for methods to synthesise this evidence into forms that may be used by practitioners to inform their practice. Evidence synthesis methods targeting practice thus reflect pressures including that: the large amount of literature makes it impossible for practitioners to ‘keep up’; research often requires ‘weighing up’ to understand complex and sometimes contrasting findings; and, most crucially, research often requires translation that highlights implications for practice, to support its uptake and implementation. Educational research faces an underlying challenge in this regard: despite recent attention to systematic reviews in education (Zawacki-Richter et al., 2020), a recent analysis of educational technology evidence syntheses (Buntins et al., 2023) indicates that (1) there are gaps in reporting from such syntheses, which may reflect both methodological deficiencies and deficiencies in methodological guidance suitable for the field; and (2) only a relatively narrow set of the available synthesis approaches is adopted³.

Alongside synthesis and translation research, there has been a parallel recognition of the importance of co-production approaches of various kinds in identifying problems, developing evidence-informed interventions, and evaluating these in practice. There are emerging approaches to synthesis and development of theory through this work (see §Drawing Case Lessons from the Literature). However, these approaches commonly focus on local contextual features of the research site (e.g., a particular school or district), rather than on the broader evidence base. In contrast, repositories that collect and synthesise evidence may foreground issues that are prioritised by researchers (such as methodological validity) over those of practitioners (Ming & Goldenberg, 2021). Indeed, in developing stakeholder-oriented syntheses, as in other types of research, there is an “often undiscussed key challenge with regard to stakeholder involvement in systematic reviews: that responding to stakeholders can mean reconsidering what makes a review rigorous.” (Haddaway et al., 2017, p. 111).

Crucially, “High-quality evidence is necessary, although not sufficient, for high-quality use.” (Ming & Goldenberg, 2021, p. 130), with those authors arguing for “potential for use” (ibid.) as a criterion for quality research, and for the inclusion of stakeholder perspectives in this assessment. However, while their ‘Research Worth Using Framework’ (Ming & Goldenberg, 2021, p. 154) offers an important shift in the evaluation of research quality, it nevertheless remains focused on the research production side, rather than on approaches to rigorously identify research practices in practice, and to adapt research into practice and vice versa.

It is for this reason that the compelling title of a special issue’s concluding article is so troubling: “The research we have is not the research we need” (Reeves & Lin, 2020, p. 1991). The special issue provided “A Synthesis of Systematic Review Research on Emerging Learning Environments and Technologies”; however, the authors ask, “What guidance do systematic reviews provide practitioners?”, highlighting some kernels and noting that the reviews were not written for this purpose, but nevertheless flagging a paucity of practical insight, and thus encouraging researchers to focus on “serious problems related to teaching, learning, and performance, collaborating more closely with teachers, administrators, and other practitioners in tackling these problems, and always striving to make a difference in the lives of learners around the world.” (Reeves & Lin, 2020, p. 1991). This, they suggest (Reeves & Lin, 2020, p. 1998), is in part due to a focus on “things”, such as tools, rather than “problems”, such as low educational engagement or poor outcomes.

Reeves and Lin thus suggest a move from “what works” to “what is the problem, how can we solve it, and what new knowledge can be derived from the solution?” (Reeves & Lin, 2020, p. 1998). To do this, they propose design research as an approach “in which the iterative development of solutions to complex educational problems through empirical investigations are pursued in tandem with efforts to reveal and enhance theoretical understanding. Such efforts can serve to guide educational practitioners as well as other researchers.” (Reeves & Lin, 2020, p. 1998). This family of approaches (Penuel et al., 2020) corresponds to Gutiérrez and Penuel’s call for “a shift in focus of research and development efforts, away from innovations designed to be implemented with fidelity in a single context and toward cross-setting interventions that leverage diversity (rather than viewing it as a deficit)” (Gutiérrez & Penuel, 2014, p. 19), with increased focus on organisational contexts and infrastructure to address “how to make programs work under a wide range of circumstances” (Gutiérrez & Penuel, 2014, p. 19). Synthesis, in this approach, could thus support practitioners in reflecting on and problematising their practice, while also challenging the uni-directional research-practice model of systematic reviews (Suri, 2013). Given this increased diversity of contexts, and the limitations of systematic reviews, it is therefore important to understand the role of different kinds of review (and evidence) in providing indications of ‘best available evidence’, as Slocum et al. (2012) and Spencer et al. (2012; 2012; as cited in Hempenstall, 2014) put it. In this context, this paper thus addresses the research question: What is the role of evidence synthesis approaches in probing, developing, and learning from, and for, practice?

Method: Case Description and Approach

This paper arose from work by the lead author across a number of projects that share our core impetus, and that thus act as a collective instrumental case (Stake, 2003). To illustrate the need for, and development of, the proposed approach, this paper focuses on a particular case in which a collaboration was established between an academic group (led by the author) and an industry startup (which funded the project, with matched government funding). This example thus illustrates the issues in developing and deploying evidence synthesis. In this case, the funder was also a practitioner: an entrepreneur in the education and training sector with a background working in youth organisations, who wanted to:
• understand the evidence base of the organisation’s existing tool, including both key directions for development and the tool’s existing alignment with evidence;
• design and build evidence-based resources into the tool; and
• develop a strategy for ongoing evaluation of the tool-in-use.

An evidence synthesis approach was therefore needed that would be:
• stakeholder relevant, in order to guide the practitioner and to support their user base in understanding the evidence underlying the tool’s use;
• rigorously grounded in evidence, to provide a formal theory of change for the tool in use and direction for evaluation, and to highlight high quality evidence that could be used to ground/support or destabilise/critique design decisions made in the tool for its intended purpose;
• rapid, given both the level of resourcing available and the focus of the work, which did not require a comprehensive review but rather an information-criterion oriented heuristic, reviewing enough literature to inform existing and ongoing design;
• design oriented:
  o to connect to existing design features of the tool (i.e., recognising that there was an existing tool, being used in contexts with established routines and practices, and thus providing a contextual review around the existing situation, not ‘from nowhere’); and
  o to point to potential for development of the tool, including both its integrated resources and guidance for its use. This design focus included specific design questions being faced, and evidence regarding which choices to make.

In the following section, we first outline some common approaches to evidence synthesis, including those specifically targeting stakeholders and problems of implementation. We then discuss some approaches to develop program theory, and how these have been used to support stakeholders in evidence-based thinking. Finally, design-based research is drawn on to outline some approaches to involving stakeholders, and developing evidence syntheses in synchrony with stakeholder engagement. (A visual overview of the paper is provided in Supplement 1).

Through each of the subsequent sections, key features and proposals for a model are drawn out. The Discussion and Conclusion integrates these, showing how these components complement each other to point to some final components of a model, and ultimately to address the needs set out above. The model is demonstrated through some template resources, and a practical example, with key comparisons to other tools provided.

Drawing Case Lessons from the Literature

Evidence Synthesis for Translating Quality Evidence

Overview of Synthesis Methods and Their Selection

Across reviews, narrative syntheses, and methodological descriptions of evidence synthesis approaches, a number of methods are identified (9, 14, 25, 25, and 10 approaches respectively in Cook et al., 2017; Grant & Booth, 2009; Kastner et al., 2012; Tricco et al., 2016; Wickremasinghe et al., 2016), with inconsistency regarding both the number of approaches and their operationalisation (Grant & Booth, 2009; Paré & Kitsiou, 2017; Tricco et al., 2016), and “a lack of guidance on how to select a knowledge synthesis method” (Tricco et al., 2016, p. 4).

This lack of guidance for selecting a knowledge synthesis method presents a challenge in addressing the aim for evidence syntheses to identify and bring together evidence from across sources, in ways that can provide novel insights and theories, including for particular contexts of use (Cook et al., 2017). For syntheses to provide these functions, selection of their method of production should consider both the purpose for which it is being developed (e.g., exploratory or confirmatory purposes), and any key requirements (“e.g., the level of certainty required”, Cook et al., 2017, p. 136).

Review types identified in the Wickremasinghe et al. (2016) review (durations from their Figure 2; reflections regarding benefits and challenges drawn from Wickremasinghe et al., for the specific target of this paper).

Thus, selecting an evidence synthesis approach requires understanding stakeholder needs (such as those identified in Table 2) against features of synthesis approaches, including “readability, relevance, rigour, and resources” (Wickremasinghe et al., 2016, p. 527), and their technical characteristics: (1) quality appraisal of evidence: limited versus essential; (2) how evidence is usually presented: reference list, graphics, tables, narrative (and combinations thereof); (3) systematic documentation of evidence: comprehensive or limited; (4) replicability; (5) periodic updating; and (6) limitations, of which a range is given; the most salient for us are a limited focus on readily available evidence and existing reviews of relevance, the possibility of bias, and resources determining scope.

Wickremasinghe et al.’s ‘Users’ Knowledge Needs’ collation (Wickremasinghe et al., 2016, p. 528), under a CC-BY licence.

Section Lessons: Common Models of Evidence Synthesis

Returning to our case requirements, we sought synthesis methods that: present ‘best available’ evidence, or evidence that would point to design implications, particularly where design decisions are required; relate evidence to the kind of tool and context of use, specifically Australian schools where possible; and can be disseminated in a form that is readable (and usable). Specifically, synthesis outputs should be usable for (1) positioning the tool in the current evidence base; (2) the ongoing design and evaluation of the tool-in-use; and (3) disseminating this to stakeholders. Thus, our interest is particularly in methods that target professionals and practitioners, who sometimes fulfil an advocacy and policy role (using the language of Wickremasinghe et al.).

However, as Table 1 summarises, although there are benefits for the purposes described in §Method: Case Description and Approach (column 2), the range of approaches discussed does not provide a clear method for the case and general problem discussed in this paper. Notably, Wickremasinghe et al.’s model excludes end-users, who may also wish to understand the background evidence for the interventions (such as curricula or technologies) that they are using.

Moreover, in the context introduced in the §Introduction, §Literature Review, and §Method, the purpose of the synthesis is generally not to test hypotheses but to serve configurative purposes (i.e., using existing studies to apply existing theories to different contexts, with the possibility of generating hypotheses). This purpose excludes many synthesis methods (summarised in Table 1) for reasons of resourcing, rigour, and/or relevance to practice. While methods such as systematic maps, conceptual models, and narrative reviews offer some flexibility (see discussion in Cook et al., 2017), they nevertheless present challenges: systematic maps aim to be complete or exhaustive, and are thus more expensive and less targeted to the specific questions; narrative reviews lack systematicity; and conceptual models focus, as the name suggests, on visual and narrative depictions of systems to model their relationships, rather than on developing or probing designed interventions. There is thus a challenge in navigating between approaches that are too exhaustive at the ‘systematic’ end (with high recall, but less specificity) and approaches at the more narrative end that are not systematic enough.

As Table 1 shows, some approaches seek to maximise rigour (but may reduce relevance and readability), while others seek to maximise relevance but may reduce rigour and readability (literature reviews), or rigour (e.g., evidence maps)⁴. A range of features across approaches may be drawn on, including inclusion of grey literature, currency, clear narrative and visual distillation, and emphasis on quality evidence. However, no approach discussed in Table 1 combines these, and there is thus little guidance on, for example, how to take a more systematic approach to a literature review.

Realist, Qualitative, and Stakeholder Engaged Evidence Synthesis

Overview of Stakeholder-Oriented Synthesis Methods

One approach that may be promising for developing practice-oriented and stakeholder-engaged evidence synthesis is mixed methods research synthesis, which Wickremasinghe et al. (2016) conflate with realist reviews (mixed methods research syntheses receive the only mention of qualitative data in their review). Alongside approaches to stakeholder engagement in evidence synthesis, these review types are central to the aims of our case.

Overview of Processes of Scoping, Familiarisation, Search and Synthesis, and Dissemination in realist synthesis, qualitative evidence synthesis, best-fit and rapid best-fit realist synthesis, and stakeholder engaged synthesis.

ᵃ Page numbers given refer to the location in the source text where key steps are set out. Where direct quotes (rather than brief step outlines) are given, they are quoted and sourced.

Qualitative evidence synthesis provides an approach to the synthesis of qualitative research evidence, in recognition that (1) most evidence synthesis approaches focus on quantitative methods, and (2) qualitative research may provide important insights, while presenting distinct challenges for the purpose of synthesis. As Flemming and Noyes (2021) note, Qualitative Evidence Synthesis (QES) can help us to explore a range of questions around the experiences of interventions and their complexity, from the perspective of stakeholders including those implementing and those receiving a particular intervention. As they further describe, while in quantitative syntheses PICO (Population, Intervention, Comparison, Outcome) is often used to map interventions, in QES question formulation may be guided by concerns of both local and wider context, via structures such as Booth et al.’s (2019) “PerSPecTIF (Perspective, Setting, Phenomenon of interest/ Problem, Environment, Comparison (optional), Time/ Timing, Findings)” (Flemming & Noyes, 2021, p. 5).

As with realist evidence synthesis, qualitative evidence reviews provide a useful insight into varying approaches to evidence synthesis, with alignment of rigour, relevance, and output readability to the purposes of the review (see Table 3). Importantly, methodological filters may be used based on the particular focus, providing a mechanism for ‘methodological alignment’ (Hoadley, 2004) in selecting items to synthesise, grounded in the purposes being addressed. Moreover, variants of QES (e.g., best-fit models, outlined in detail in Table 3) align with models of realist synthesis in the ways they develop and select questions and generate synthesis outputs.

A separate body of work has sought to engage stakeholders in the evidence synthesis process, including specific targeting of rapid reviews (Garritty et al., 2023), scoping reviews (D. Pollock et al., 2022), systematic reviews (Boote et al., 2012; Cottrell et al., 2015; A. Pollock et al., 2018, 2019), and realist reviews (Abrams et al., 2021), although with significant variation in detail regarding methods of engagement. As Haddaway et al. highlight, this engagement seeks to address a range of concerns: to “increase the quality of research and decision-making; broaden understandings of context and drivers of change; increase legitimacy and acceptance of research; increase research impact; empower stakeholders and facilitate the sharing of information” (Haddaway et al., 2017, p. xiii).

Involvement of stakeholders in reviews can produce tensions “between their calls for locally-specific, often rapidly-produced evidence syntheses for policy needs and the production of unbiased, generalisable, globally-relevant systematic reviews. This tension raises the question of what is a ‘gold standard’ review.” (Haddaway et al., 2017, p. 111). These authors also set out a number of steps for conducting a quality review (outlined in Table 3); of particular note here is their focus on storytelling as a distinctive tool in participatory review processes (Figure 1). These stories may be used to connect to the existing concerns of stakeholders, situate evidence in context, and identify potential for action, supporting both identification of key issues in scope and dissemination of results (Haddaway et al., 2017). A further body of work has adopted design and co-design processes to engage stakeholders in and with reviews, particularly those adopting a realist synthesis method (Langley et al., 2018, 2020; Law et al., 2020, 2021b, 2021a); §Design for Change and §Design-based Research: Things and People focus on two considerations also present in this work.

Conceptual framework for the integration of storytelling in systematic reviews and systematic maps (Haddaway et al., 2017, p. 152; article published under a CC-BY licence).

Section Lessons: Developing a Model of Evidence Synthesis

Returning to our case requirements, as outlined in the preceding section, there are challenges in applying many common evidence synthesis methods to stakeholder-engaged contexts in which active (but perhaps implicit or proto) theories are being investigated. Realist, qualitative, and stakeholder-engaged synthesis approaches seek to address this challenge. As Table 3 indicates, a range of approaches exists for the development, execution, and dissemination of evidence syntheses, which may inform various aspects of a design-focused, stakeholder-oriented approach. Although no single existing approach meets our needs, lessons can be drawn across approaches, with further insight coming from design theories (§Design for Change) and design-based research (§Design-based Research: Things and People).

In the scoping stage, all of the approaches provide clear guidance on engaging stakeholders to identify the purpose and topics of the evidence synthesis. This stage may be guided by methods for developing research questions, although care should be taken that such questions do not scope the synthesis narrowly around outcomes or contexts when issues of implementation or practical design characteristics may be more relevant to stakeholders.

Familiarisation describes the researcher’s initial engagement with the topic of the evidence synthesis, grounding subsequent searches. Where familiarisation is discussed in synthesis methods, it typically involves a combination of initial literature and grey-literature searches, sometimes focusing on existing frameworks or models. These can be shared with stakeholders to develop an initial model, to be iterated through the process. Of note is that some stakeholders may have existing models, or may (claim to) draw on theories or literature, and this may be a consideration in developing the model and synthesis. Indeed, this may be an important reference point where initial stakeholder models diverge from the evidence synthesised, or where the theories that existing practice claims to draw on appear not to be operationalised in ways that are consistent with those theories.
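Where stakeholder and evidence models are recorded in a lightweight structured form, the divergence described above can be made explicit with simple set operations. The sketch below is illustrative only and is not part of PESM as described here: it assumes each model is a set of (feature, outcome) links, and the function `compare_models` and the example links are hypothetical. It assumes Python 3.9 or later.

```python
from typing import NamedTuple

# Hypothetical representation: a model is a set of (feature, outcome) links.
Link = tuple[str, str]


class Divergence(NamedTuple):
    agreed: set[Link]         # claimed by stakeholders and found in the evidence
    unsupported: set[Link]    # claimed by stakeholders but not found in the evidence so far
    unanticipated: set[Link]  # found in the evidence but absent from the stakeholder model


def compare_models(stakeholder: set[Link], evidence: set[Link]) -> Divergence:
    """Compare an initial stakeholder model with links supported by synthesised evidence."""
    return Divergence(
        agreed=stakeholder & evidence,
        unsupported=stakeholder - evidence,
        unanticipated=evidence - stakeholder,
    )


# Illustrative links only; not drawn from any real synthesis.
stakeholder_model = {("daily check-in", "self-awareness"), ("badges", "engagement")}
evidence_model = {("daily check-in", "self-awareness"), ("teacher dashboard", "help-seeking")}

result = compare_models(stakeholder_model, evidence_model)
print(sorted(result.unsupported))  # [('badges', 'engagement')]
```

An ‘unsupported’ link here means only that no evidence has yet been synthesised for it, which is a prompt for further search or stakeholder dialogue rather than a finding that the link does not hold.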

Evidence synthesis methods vary in how much structure they provide for the search, appraisal, and synthesis stages, typically favouring purposive search, with appraisal targeted at model refinement and the aims of the synthesis. The approaches share a recognition of the need for evidence appraisal that is consistent with the needs of the synthesis, while also being structured, transparent, and independent of stakeholder demands.

At the dissemination stage, the models emphasise “explaining why [it] works” (Rycroft-Malone et al., 2012, p. 2), “lines of action” (Hannes & Lockwood, 2011, p. 1639), identification of theories that provide a ‘best fit’ to practice, and the use of multiple outputs where appropriate, which may serve different stakeholders, provide guidance on confidence in the findings, and indicate potential future directions.

These lessons are drawn on and elaborated in §Design for Change, which provides further insights regarding approaches to model development and the identification of ‘claims’ made in design (including intervention designs) and their role in evidence synthesis, and in §Design-based research: Things and people, which discusses approaches to stakeholder engagement through question elicitation and scenario use. §Discussion and Conclusion returns to these lessons to provide an overview model.

Design for Change

Mapping Theories of Change for Evidence Informed Practice

Across the approaches that may be used for design-focused, stakeholder-oriented synthesis described in Table 3 (and, notably, not in Table 1), a common first step is to “make explicit the programme theory (or theories) – the underlying assumptions about how an intervention is meant to work and what impacts it is expected to have” (Pawson et al., 2005, p. 1). These theories may be framed as theories of change, which can be used to make clear how learning technology innovations are designed to produce their desired outcomes in a given context (Century & Cassata, 2016; Cukurova et al., 2019; Weatherby et al., 2022).

A range of approaches exists for developing models (often visual) that help express, or make conjectures about, how an intervention or tool will work to achieve particular ends. These include logic models (Coldwell & Maxwell, 2018), driver diagrams (Bryk et al., 2015), models for mapping features of technologies to desired changes, such as the outcome/change design matrix (Langrial et al., 2013; Tikka & Oinas-Kukkonen, 2019), and models for mapping features of learning design to learning outcomes, such as conjecture mapping (Sandoval, 2014) or design patterns (Goodyear et al., 2006). Applying these approaches generally involves drawing on both extant evidence and engagement with stakeholders, with a view to bridging gaps in evidence synthesis approaches regarding relevance to local context (Bryk et al., 2015; Coldwell & Maxwell, 2018; Langrial et al., 2013). Domain-specific practices for these design artefacts have emerged in education that aim to bring theory into alignment with practice (e.g., Goodyear et al., 2006; Sandoval, 2014), drawing on a lineage of design-based research approaches (Cobb et al., 2003; Easterday et al., 2016; McKenney & Reeves, 2013; The Design-Based Research Collective, 2003; Wilson et al., 2017).⁵ For example, ‘design patterns’, adopted from architectural practice into many disciplines, provide abstractions of existing practices, with the aim of supporting adoption across contexts while remaining tied to practical context (Goodyear et al., 2006). Similarly, conjecture mapping (Sandoval, 2014) aims to express how designs come to produce outcomes that are mediated by tasks and contextual configurations, through the expression of design and theoretical conjectures: testable, improvable propositions about how a learning interaction should achieve its outcomes.

As artefacts that inform, represent, and help develop models for tools and technologies, this range of models can serve a number of purposes for practitioners and designers, both within the target local context and in broader practice. A dual purpose of these models is thus that they make explicit and transparent the theory of change for (1) evidence synthesis and product evaluation, while also (2) supporting understanding of the tool or intervention between (and within) stakeholder and researcher groups, acting as a boundary object (Star & Griesemer, 1989) for shared reasoning and model improvement (Cukurova et al., 2019; Weatherby et al., 2022).

This range of purposes is captured in the following taxonomy, drawing on models of the value of design research and logic models (Edelson, 2006; Rehfuess et al., 2018), which indicates that such artefacts can: (1) Make explicit how tools/interventions are connected to existing evidence (defined either a priori, with the evidence synthesis testing this initial model, or, in iterative or staged approaches, defined prior to a review and then updated to produce a final output model); (2) Shape product development, by making clear how proposed product changes influence desired outcomes; (3) Drive evaluation, by clearly defining desired outcomes, the observable indicators and outputs we may measure to evaluate progress on these outcomes, and the features of the tool-in-use that may be producing outcomes (and could be systematically varied).

However, common approaches to producing theory of change models typically focus on the theoretical mechanisms that tie aspects of program outcomes to overarching program impact. In contrast, design approaches give greater attention to both design material (i.e., the tools we design with) and the material design (i.e., the artefacts that we produce through design, for intended purposes). This is a significant strength of design-based approaches, not least because design artefacts provide a further material source for probing theories of program logic, including through analysis of the ‘claims’ that our artefacts make. That is, claims analysis, an approach from human-computer interaction research, provides a lens for understanding the implicit model of a user through analysis of the tool and its apparent intended use (J. M. Carroll & Rosson, 1992; for a critical review, see McCrickard, 2012). While this analysis of implied claims certainly provides only one lens into the mechanisms of a tool-mediated intervention, alongside other approaches it provides an important tool for theory development and a way to probe designers’ assumptions and knowledge of what has (and has not) worked in their practice (Moran & Carroll, 1996).

Section Lessons: Feature-Outcome Matrix

One pragmatic approach to mapping the conjectures or claims that interventions make regarding target outcomes is a matrix that sets out the key material features of an intervention (sites and modes of interaction) against outcomes. This approach is inspired by a matrix design that is largely absent from academic literature⁶ but used in aspects of software and communications development, for example the ‘feature-benefit matrix’. In these matrices, features of an intervention or program (software or social program) are mapped to target outcomes. As such, this model can be used to map features that target particular behavioural or attitudinal changes to outcomes that reflect longer-term changes in users or audiences. These matrices provide an additional approach to mapping evidence that connects features of interventions to desired outcomes. Here, we adopt the term ‘feature-outcome matrix’ to draw alignment with logic models, while using the structure of a matrix to simplify the expression of the theory of change. The sample grid (Figure 2) provides an example matrix of four features that ‘work towards’ sets of secondary drivers, which are associated with our primary drivers or outcomes in achieving our overall aim or impact. The number of features here is arbitrary: some interventions may be simpler, others more complex, and in some cases the mapping may reveal that some features are not connected to a particular outcome (or, more concerningly, to any outcome). The matrix is intended as an improvable object for stakeholder dialogue (Twiner, 2011), used to iteratively develop a theoretical model and to support evidence synthesis and triangulation.

Figure 2. Blank feature-outcome model for mapping design propositions made regarding the mechanisms connecting features to outcomes.
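A feature-outcome matrix of this kind can also be kept as a small data structure, which makes the check for unconnected features mechanical. The following is a minimal sketch under stated assumptions rather than a prescribed implementation: the class `FeatureOutcomeMatrix`, its methods, and the feature and outcome labels are hypothetical, and the secondary/primary driver hierarchy is collapsed into a single list of outcomes for brevity. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field

Cell = tuple[str, str]  # (feature, outcome)


@dataclass
class FeatureOutcomeMatrix:
    """Hypothetical sketch: rows are intervention features, columns are target
    outcomes, and each filled cell records a design proposition linking the two."""
    features: list[str]
    outcomes: list[str]
    cells: dict[Cell, str] = field(default_factory=dict)

    def propose(self, feature: str, outcome: str, proposition: str) -> None:
        """Record the proposition that `feature` works towards `outcome`."""
        if feature not in self.features or outcome not in self.outcomes:
            raise KeyError(f"unknown feature or outcome: {feature!r}, {outcome!r}")
        self.cells[(feature, outcome)] = proposition

    def unconnected_features(self) -> list[str]:
        """Features with no proposition linking them to any outcome."""
        linked = {feature for feature, _ in self.cells}
        return [f for f in self.features if f not in linked]

    def render(self) -> str:
        """Plain-text grid; 'x' marks a cell that holds a proposition."""
        width = max(len(f) for f in self.features)
        lines = [" " * width + " | " + " | ".join(self.outcomes)]
        for f in self.features:
            marks = ["x".center(len(o)) if (f, o) in self.cells else ".".center(len(o))
                     for o in self.outcomes]
            lines.append(f.ljust(width) + " | " + " | ".join(marks))
        return "\n".join(lines)


# Four illustrative features, echoing the four-feature example grid.
m = FeatureOutcomeMatrix(
    features=["feature A", "feature B", "feature C", "feature D"],
    outcomes=["outcome 1", "outcome 2"],
)
m.propose("feature A", "outcome 1", "A prompts reflection, which supports outcome 1")
m.propose("feature B", "outcome 1", "B makes progress visible, supporting outcome 1")
m.propose("feature C", "outcome 2", "C connects students with staff, supporting outcome 2")
print(m.render())
print(m.unconnected_features())  # ['feature D']
```

Storing the proposition text in each cell, rather than a simple tick, keeps the matrix usable as an improvable object: each cell carries the claim that later questions and evidence are aligned against.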

Design-Based Research: Things and People

People and Stories

Across design research there is significant attention to the role of stakeholders in understanding and addressing the problem space. A range of approaches (e.g., Cukurova et al., 2019; Weatherby et al., 2022; Wilson et al., 2017) suggest engaging stakeholders in: (1) identifying the intended outcomes of the tool or intervention, or the challenge being addressed; (2) scoping how the work will address that challenge, and any staged iteration; and (3) testing the implications for practice and implementation of any review or development research, including consideration of the kinds of resources and processes required for change. The models described in the preceding section offer a tool throughout this process. Alongside these resources, in recent work connecting design approaches to implementation science, Lyon et al. (2021) outline the potential of integrating cognitive walkthroughs (an approach not previously applied to the evaluation of implementation strategies) to develop the ‘Cognitive Walkthrough for Implementation Strategies (CWIS)’. CWIS is intended as a pragmatic approach to assessing the usability of implementation strategies, following the broad approach outlined in Figure 3.

Figure 3. Lyon’s ‘Overview of the Cognitive Walkthrough for Implementation Strategies (CWIS) methodology’ (Lyon et al., 2021, p. 4), published under a CC-By license.

In CWIS, users are asked questions to investigate their expectations within target scenarios or tasks, which help to probe assumptions and develop models for synthesis and implementation. These include (1) analysis of the preconditions or implementation contexts for the intended use of a tool or intervention (an approach that might be complemented by identifying situations in which implementation might be ‘challenging’); (2) analysis of the tasks and subtasks required to use a tool, at a level that is meaningful to users; and (3) analysis of how important each of these tasks is and how likely users are to experience challenges with it. Crucially, this analysis is then converted into scenario form to represent the key context of implementing a tool or intervention and the key role-specific tasks a user might engage in. Scenarios provide key information, such as the task objective and the materials available, alongside a visualisation such as a user interface or a representation of one. These scenarios are then tested with users (via semi-structured interviews or survey instruments), and the results are analysed to identify key issues.
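Step (3) implies some way of deciding which tasks warrant scenarios. The sketch below shows one simple option, assuming 1 to 5 ratings for importance and for the likelihood of users experiencing challenges, combined as a product score. The rating scale, the product rule, and the names `Task` and `prioritise` are assumptions for illustration; they are not taken from Lyon et al. (2021), and teams may prefer to discuss ratings rather than combine them numerically. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    importance: int  # assumed 1 (low) to 5 (high) rating
    likelihood: int  # assumed 1-5 rating of how likely users are to experience challenges

    @property
    def priority(self) -> int:
        # Assumption: a simple product score; CWIS does not mandate this rule.
        return self.importance * self.likelihood


def prioritise(tasks: list[Task], top_k: int) -> list[Task]:
    """Return the top_k tasks by priority, breaking ties by importance, then name."""
    for t in tasks:
        if not (1 <= t.importance <= 5 and 1 <= t.likelihood <= 5):
            raise ValueError(f"rating out of range for {t.name!r}")
    return sorted(tasks, key=lambda t: (-t.priority, -t.importance, t.name))[:top_k]


# Hypothetical teacher-facing tasks for an illustrative wellbeing tool.
tasks = [
    Task("log in and find class", importance=3, likelihood=1),
    Task("interpret student check-in data", importance=5, likelihood=4),
    Task("follow up with a flagged student", importance=5, likelihood=3),
    Task("export a report", importance=2, likelihood=2),
]
for t in prioritise(tasks, top_k=2):
    print(t.name, t.priority)
```

Under these assumptions, the two highest-scoring tasks (interpreting check-in data, then following up with a flagged student) would be the first candidates for scenario development.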

The scenarios developed through CWIS serve two purposes: (1) to help identify the key tasks and stages of tool use; and (2) to gain design information regarding the implementation of these tasks, including stakeholder feedback on possible issues and tool use. In this way, the CWIS approach tests the assumptions underlying theories of change, and their evidence, both by operationalising these theories into practical scenarios and by user testing with those scenarios. This approach draws on a body of design research on the use of task scenarios for purposes ranging from design rationales, through requirements elicitation and specification into designs, to evaluation (Rosson & Carroll, 2009, p. 28, identify 11 example uses of scenarios throughout system development).

Section Lessons: Claims Analysis and Scenarios; Connecting Abstractions to Situations

Beyond the established uses of CWIS (or the underlying cognitive walkthrough approach), adaptations of CWIS may hold additional benefits. As highlighted by Haddaway et al. (2017), storytelling can play a set of particular roles in evidence synthesis, outlined in Figure 1. Developing scenarios using an approach such as CWIS is one method of creating stories that help to identify stakeholder needs and questions. It also provides a way to connect these stories to specific aspects of a synthesis, and to triangulate the evidence synthesis through user interviews. Moreover, scenario approaches may be useful in multi-stage interviews, where design changes emerging from an evidence synthesis may be piloted using prototypes that reflect the results of an earlier round of interviews and synthesis. That is, scenarios can serve two key purposes: (1) Guide evidence synthesis. Scenarios provide a way to situate evidence in contexts relevant to stakeholders, including by creating ‘typical’ and ‘complex’ cases, or variations on implementations. Expressing scenarios in these terms helps to identify the key features that must be sought in the literature. (2) Test and triangulate the synthesis and its implications with stakeholders, through interviews (perhaps in iterations) that probe how users would engage with an intervention, triangulate responses with evidence, and test design variations.
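To illustrate how scenarios expressed in these terms can point to the features that must be sought in the literature, the sketch below records scenarios with the elements named in the CWIS description (role, task objective, materials) plus a ‘typical’ or ‘complex’ variant label, and indexes them by the intervention features they depend on. The `Scenario` fields, the function `features_to_search`, and the example content are hypothetical. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """Hypothetical scenario record: role, task objective, context variant,
    available materials, and the intervention features the scenario relies on."""
    title: str
    role: str
    objective: str
    variant: str  # e.g. "typical" or "complex"
    materials: list[str] = field(default_factory=list)
    features: set[str] = field(default_factory=set)


def features_to_search(scenarios: list[Scenario]) -> dict[str, list[str]]:
    """Map each feature to the scenarios that depend on it, giving a
    checklist of features that the literature search should cover."""
    index: dict[str, list[str]] = {}
    for s in scenarios:
        for f in sorted(s.features):
            index.setdefault(f, []).append(f"{s.title} ({s.variant})")
    return dict(sorted(index.items()))


scenarios = [
    Scenario(title="Morning check-in", role="teacher",
             objective="Review class wellbeing before first period", variant="typical",
             materials=["class dashboard"],
             features={"daily check-in", "teacher dashboard"}),
    Scenario(title="Student disclosure", role="teacher",
             objective="Respond to a concerning check-in response", variant="complex",
             materials=["class dashboard", "school referral policy"],
             features={"daily check-in", "alert"}),
]
for feature, used_in in features_to_search(scenarios).items():
    print(feature, "->", used_in)
```

A feature that appears only in ‘complex’ scenarios (here, the alert) may signal that the search needs to target evidence on edge cases and safeguarding, not only typical use.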

Scenarios also help to define the purpose of the synthesis and to support evidence appraisal with ‘relevance’ in mind, using the “research worth using” approach (Ming & Goldenberg, 2021) (§Building Practitioner Capacity through Quality Evidence). One way to align scenarios with the design propositions (§Design for Change), and to develop a clear mapping of stakeholder concerns to the evidence synthesis that can also serve other outputs (such as overview ‘Frequently Asked Questions’ (FAQ) documents), is to map the propositions to questions.

That is, for each cell in the feature-outcome matrix, identify the key questions that underpin it (the relationship between cells and questions need not be 1:1). It may be useful to group these questions, for example with respect to key users or constructs, contexts and systems, or implementation concerns, as indicated in the Figure 4 template (see also Supplement 4). Some questions (e.g., implementation questions) may lend themselves to scenario development more than others.

Figure 4. Mapping questions to a feature-outcome matrix.
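Because one question may underpin several cells, and one cell may need several questions, the mapping is many-to-many. The sketch below is one hypothetical way to hold that mapping: each question lists the matrix cells it underpins and a group label (following the groupings suggested above), so that questions can be viewed by cell or by group, and cells that no question yet addresses can be flagged. The `Question` type, the helper functions, and the example questions are illustrative assumptions. It assumes Python 3.9 or later.

```python
from collections import defaultdict
from dataclasses import dataclass

Cell = tuple[str, str]  # (feature, outcome) cell of the feature-outcome matrix


@dataclass(frozen=True)
class Question:
    text: str
    group: str               # e.g. "users/constructs", "contexts/systems", "implementation"
    cells: frozenset[Cell]   # a question may underpin several cells (not 1:1)


def questions_by_cell(questions: list[Question]) -> dict[Cell, list[str]]:
    """Index question texts by the matrix cells they underpin."""
    index: dict[Cell, list[str]] = defaultdict(list)
    for q in questions:
        for cell in sorted(q.cells):
            index[cell].append(q.text)
    return dict(index)


def questions_by_group(questions: list[Question]) -> dict[str, list[str]]:
    """Group question texts by their label, e.g. for an FAQ-style output."""
    groups: dict[str, list[str]] = defaultdict(list)
    for q in questions:
        groups[q.group].append(q.text)
    return dict(groups)


def cells_without_questions(cells: list[Cell], questions: list[Question]) -> list[Cell]:
    """Matrix cells that no question yet addresses."""
    covered = {c for q in questions for c in q.cells}
    return [c for c in cells if c not in covered]


q1 = Question("Does a brief daily check-in support students' emotional self-awareness?",
              "users/constructs", frozenset({("daily check-in", "self-awareness")}))
q2 = Question("What school routines make daily check-ins sustainable?", "implementation",
              frozenset({("daily check-in", "self-awareness"), ("daily check-in", "help-seeking")}))
cells = [("daily check-in", "self-awareness"), ("daily check-in", "help-seeking"),
         ("teacher dashboard", "help-seeking")]
print(cells_without_questions(cells, [q1, q2]))  # [('teacher dashboard', 'help-seeking')]
```

Keeping the cell references on each question, rather than nesting questions inside cells, means a shared implementation question is stored once and appears wherever it is relevant.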

Discussion and Conclusion: Pragmatic Evidence Synthesis Matrices

Model Outline

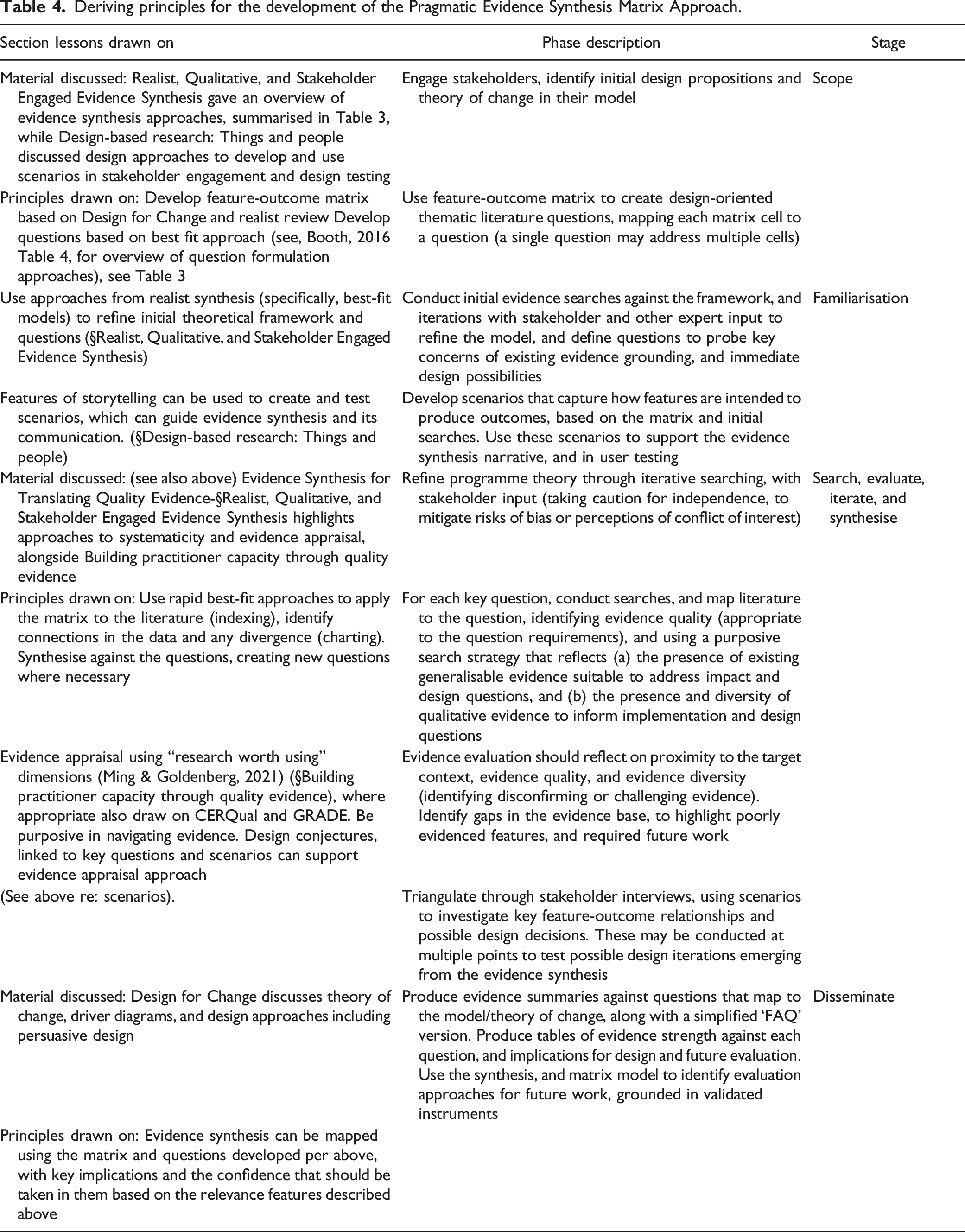

Deriving principles for the development of the Pragmatic Evidence Synthesis Matrix Approach.

Drawing on methodological lessons from the evidence synthesis literature and its material tools, Supplement 2 provides a checklist model for the PESM approach, alongside a comparison with extant checklist models (drawing on Booth, 2006, 2016; Rethlefsen et al., 2021; Tong et al., 2012). Supplement 3 further elaborates the model, adapting the RAMESES item template (Wong et al., 2013) for an individual synthesis and providing a template and guidance on issues such as evidence strength (see van der Bles et al., 2019). PESM thus involves the iterative development of (1) key questions, developed via the feature-outcome matrix (Supplement 4); (2) evidence syntheses, which are informed by (and can inform) the key questions, and which should be addressed via Supplements 2 and 3; (3) scenarios that help to focus both the key questions and the synthesis, and to frame these for practice; and (4) stakeholder interviews that triangulate synthesis outputs. This approach may be facilitated by tools that help to manage the disaggregated elements of an evidence synthesis and to aggregate these into a suitable final output when required.⁷
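As one illustration of managing disaggregated elements, each key question can hold its own synthesis entry (summary, sources, and a confidence rating), with entries aggregated into an output such as an FAQ document only when needed. The sketch below is hypothetical: the `SynthesisEntry` fields, the confidence labels, the placeholder source names, and the functions `to_faq` and `gaps` are assumptions for illustration and do not describe the tooling referred to in the note. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class SynthesisEntry:
    """One disaggregated element: the evidence summary for a single key question.
    Field names and confidence labels are illustrative assumptions."""
    question: str
    summary: str = ""
    sources: list[str] = field(default_factory=list)
    confidence: str = "not assessed"  # e.g. "low", "moderate", "high"


def gaps(entries: list[SynthesisEntry]) -> list[str]:
    """Questions for which no evidence has yet been identified."""
    return [e.question for e in entries if not e.sources]


def to_faq(entries: list[SynthesisEntry]) -> str:
    """Aggregate entries into a plain-text FAQ, flagging questions without evidence."""
    blocks = []
    for i, e in enumerate(entries, start=1):
        if e.sources:
            answer = f"{e.summary} (confidence: {e.confidence}; sources: {', '.join(e.sources)})"
        else:
            answer = "No evidence identified yet; flagged as a gap for further search."
        blocks.append(f"Q{i}. {e.question}\nA: {answer}")
    return "\n\n".join(blocks)


# Placeholder content only; "Source A" and "Source B" stand in for real citations.
entries = [
    SynthesisEntry("Does a brief daily check-in support students' emotional self-awareness?",
                   "Evidence suggests modest benefits when check-ins prompt reflection.",
                   ["Source A", "Source B"], "moderate"),
    SynthesisEntry("What school routines make daily check-ins sustainable?"),
]
print(to_faq(entries))
```

Flagging unanswered questions explicitly in the aggregated output keeps visible where evidence does not (yet) connect to the design propositions.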

The PESM approach draws on design approaches, scenarios, and narrative to integrate and synthesise evidence that is clearly appraised in a manner appropriate to the claims it is evaluated against within a theory of change model. It aims to be pragmatic both in providing insight into action (qua pragmatism) and, in the everyday sense, in being practically oriented towards stakeholder needs and resource-constrained environments where lengthy systematic reviews may not be feasible or appropriate.

Discussion and Conclusions

Across sectors, including education and educational technology, the call for evidence-informed practice is growing. The benefits of evidence use, and the risks of not being evidence-informed, are recognised, and pressures to use evidence have accordingly emerged in policy, professional practice, and research translation. However, top-down strategies for evidence dissemination may result in policies that impose strategies without engagement with the underlying evidence, or in reviews and translational pieces that do not connect effectively with practice. Moreover, there is a gap between approaches to developing evidence syntheses that, on the one hand, are targeted but may lack wider relevance (e.g., literature reviews, or industry reports in that genre) and, on the other, are abstracted, without connection to site-specific context, local proto-theorisation, or practices in need of evidence. The approach set out in this paper is intended to address this gap. It draws on existing approaches in evidence synthesis, intervention design and evaluation, and design-based approaches to model intended outcomes against features, to understand this model in terms of design conjectures or propositions that can be expressed as questions, and to draw on evidence, using a best-fit approach, to make clear where evidence does (and does not) connect to these propositions. These syntheses inform design, evaluation, and stakeholder engagement by making feature-outcome relationships explicit, and through scenarios that help navigate these relationships and possible design changes.

This PESM approach builds on realist synthesis approaches and their strengths, while also sharing their limitations; neither is intended to replace or substitute for systematic reviews or other forms of synthesis where that level of systematicity is required. Developing any kind of evidence synthesis requires particular skills, although PESM has an advantage here insofar as it builds on models and tools that are relatively familiar to many researchers, rather than on specialised software or review procedures. In developing the approach, it is hoped that effective use and mapping of evidence can be supported in a wider range of research engagements than might traditionally be served by systematised evidence synthesis approaches, contributing an additional tool in the drive for evidence-informed practice.

Supplemental Material

Supplemental Material - Identifying and Designing Evidence-Informed Practice, in Practice: The Case for Pragmatic Evidence Synthesis Matrices (PESM)

Supplemental Material for Identifying and Designing Evidence-Informed Practice, in Practice: The Case for Pragmatic Evidence Synthesis Matrices (PESM) by Simon Knight in International Journal of Qualitative Methods

Footnotes

Acknowledgements

The model described in this paper is connected to, among other projects, the funded research project ‘Developing the Evidence Base for a School Wellbeing App’, funded by iyarn with matched funding from the NSW Government Tech Voucher scheme. The author is grateful to Lachlan Cooke (iyarn founder and director) for the opportunity to collaborate. The author is also grateful to Peter Lee, Monique Potts, and Clara Mills (of UTS), and Paula Robinson (of APPLI) for their work on the project. The work described in this paper is the culmination of thinking over a number of projects, which coalesced around this project as a productive space for thinking. The paper also draws on thinking with colleagues in the Connected Intelligence Centre, including Professor Simon Buckingham Shum, Associate Professor Kirsty Kitto, Dr. Shibani Antonette, Dr. Sophie Abel, and Dr. Andrew Gibson, to whom I extend my thanks. Aspects of the paper draw on work conducted in my capacity as co-editor-in-chief of the Journal of Learning Analytics, and on productive discussions with Professors Alyssa Wise and Xavier Ochoa regarding impact in the field, and whether the research we have is the research we need. My thanks to Kristine Deroover and Dr. Hossai Gul of the UTS TD School for useful discussions regarding evidence synthesis approaches. An example of a report generated using the method described is available (Knight et al., 2020), with thanks to the instigators of that project. As noted above, aspects of the approach have both informed, and been informed by, other projects.

Author Contributions

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: iyarn, with matched funding from the NSW Government Tech Voucher scheme (‘Developing the Evidence Base for a School Wellbeing App’); the Australian Technology Network (ATN) Excellence in Teaching and Learning Grants (‘Building ATN Institutional Capacity for Text Analytics’); and an Australian Research Council (ARC) Discovery Early Career Researcher Award (DECRA) Fellowship (DE230100065), held by Associate Professor Simon Knight.

Supplemental Material

Supplemental material for this article is available online.

Notes

References
