Abstract

The study aimed to provide a detailed description of a process for conducting a phased principle-based concept analysis and to introduce a quality criteria assessment for this approach. Concept analysis explores how a concept is described, used and measured in the literature. This conceptual understanding is important to guide translational research and to direct the development of evidence-based practice. Principle-based concept analysis is one approach to concept analysis used in published work, but the literature lacks articles that clearly describe how to conduct it in practice. This article provides a methodology that uses a phased approach and advances previous work by combining a systematic search, quality criteria and qualitative analysis with principle-based concept analysis. Quality criteria for a phased principle-based concept analysis are introduced to critically assess articles against the four principles: epistemological, pragmatic, linguistic and logical. These improvements to the methodology promote transparency, rigour and replicability. This comprehensive systematic approach will aid future phased principle-based concept analyses and enable future comparisons of concept development, advancement and related concepts to improve the evidence base.

Introduction

Concepts are mental abstractions or units of meaning derived to represent some aspect or element of the human experience (Chinn & Kramer, 1995; King, 1988; Penrod & Hupcey, 2005). The purpose of a concept analysis is to analyse, define, develop and evaluate a concept (Delves-Yates et al., 2018) and should be undertaken to achieve a better understanding of the concept (Foley & Davis, 2017). It is an activity where a concept’s characteristics and relations to others are clarified (Nuopponen, 2010), with the aim to provide a definition (e.g. Penrod & Hupcey, 2005; Rodgers, 1989).

Deciding on which concept analysis approach to use can be difficult due to the various approaches developed over the years (Hupcey & Penrod, 2005). A scoping review conducted by Rodgers et al. (2018) listed the various concept analysis approaches used. The top three methods were the Wilson Method (Walker & Avant, 2005), the Evolutionary Method (Rodgers, 1989) and principle-based concept analysis (Morse et al., 1996).

The Wilson Method follows a step process and has been critiqued for a lack of rigour and for failing to describe how the steps are integrated (Hupcey et al., 1996). Despite adaptations, it has also been critiqued for not essentially producing documentation of a scientific nature (Hupcey & Penrod, 2005). Yet, it has been noted to enhance critical thinking (Hupcey & Penrod, 2005). The traditional step-by-step linear approach of the Evolutionary Method (Rodgers, 1989) has been described as limiting (Smith et al., 2020). However, recent publications have adapted the method using fluid phases to promote clarification, rigour and transparency (e.g. Delves-Yates et al., 2018; Smith et al., 2020; Tofthagen & Fagerstrøm, 2010). These phases follow an iterative process that gives researchers the flexibility to return to a previous stage or phase, reconsider decisions based on new data and alter them if required (Delves-Yates et al., 2018). The principle-based concept analysis is an appealing method because it analyses evidence found in the scientific literature, and is thus evidence-based, to determine what is known about a concept (Hupcey & Penrod, 2005). Penrod and Hupcey (2005) stated that its use has been limited, and the method continues to be used less than other methods such as the Wilson Method (Walker & Avant, 2005) and the Evolutionary Method (Rodgers et al., 2018). This has prompted an exploration of the principle-based concept analysis method.

Descriptions of the Four Principles.

According to Hupcey and Penrod (2005), the principle-based concept analysis is a method that requires the researcher to analyse scientific meaning (not everyday notions) and to think critically (not imaginatively), points on which other methods have been critiqued (e.g. the Wilson Method). To accept a concept as probable truth, it is acknowledged that there are multiple realities and worldviews, with history, context and perspective shaping knowledge (Russell, 2013). This probable truth reflects the state of science surrounding a concept at a particular point in time: as science evolves, so does the scientific concept. Therefore, a concept analysis is not a static product (Waldon, 2018). Any lack of conceptual understanding highlights where further research is required to advance the definition of the concept of interest. Penrod and Hupcey (2005) stated that this enables the researcher to determine how to strategically advance the concept of interest by addressing identified gaps or inconsistencies. This process also identifies suitable measures for a concept or, if none can be identified, provides evidence to develop such an instrument, thus encouraging the development of valid and reliable instruments to capture a concept.

The principle-based concept analysis has been noted to be robust (O'Malley et al., 2015) and is claimed as one of the most thorough methods to conduct concept analysis available for analysing the state of science (Bernard, 2015; Penrod & Hupcey, 2005). Yet, it has been noted to have indistinct guidelines (Smith et al., 2020). Various published principle-based concept analyses highlight the lack of empirical examples on how to operationalise this method with many unclear presentations. For example, some of the principle-based concept analysis studies include brief descriptions of the ways in which each of the principles were evaluated or critically analysed, but it remains unclear how this was achieved with each of the principles (e.g. Bicking Kinsey & Hupcey, 2013; Fenstermacher & Hupcey, 2013; O'Malley et al., 2015; Smith et al., 2013), highlighting a gap in the literature. In support, Rodgers et al. (2018) stated that many concept analyses have a possible lack of rigour, restricted scope and fail to approach conceptual work in a systematic way that leads to more useful and relevant concepts and theories. Similarly, Beckwith et al. (2008) argued that few concept analysis frameworks have the necessary analytical depth, rigour and replicability to enable the theoretical development claimed for them. Currently, the principle-based concept analysis lacks rigour and transparency due to poor systematic description of its methods.

Tofthagen and Fagerstrøm (2010) highlighted the importance of using quality criteria in Rodgers’ evolutionary concept analysis approach to determine the inclusion of material and stated it should be a requirement for further development of the method. More recently, principle-based concept analysis studies have been conducted using systematic searches, screening, data extraction and analysis strategies. This has also included being guided by questions to review the literature (e.g. Jaruzel & Kelechi, 2016) and using data extraction sheets or tools (e.g. Nevin & Smith, 2019; Ruel & Motyka, 2009; Salehian et al., 2016; Waldon, 2018). Waldon’s (2018) data collection and Nevin and Smith’s (2019) data extraction tools ask questions about the principles, which not only helps to understand the principles but also to review and extract the data in relation to the principles. Thus, combining principle-based concept analysis and advancing current questioning approaches to a quality criterion has the potential to strengthen this approach further and increase rigour and transparency.

Given its declining use and the risk of abandoning a robust method with the potential to advance scientific concepts, there is a need to explore the principle-based method in more detail and to improve the explicit description of its methods, rigour and use. Combining a systematic search, quality criteria and qualitative analysis with principle-based concept analysis will make this approach more rigorous and transparent, thus potentially increasing its use.

Aim

The study aimed to improve and describe the methodological procedures used to conduct a phased principle-based concept analysis.

Method

Design

An explorative and descriptive process was used to advance existing guidelines and improve the principle-based concept analysis methodology. Previous principle-based concept analyses were reviewed regarding methods for systematic search, quality criteria, data analysis, conceptual components and maturity in order to provide improvements and a detailed description.

Systematic Search

The original guidelines from Penrod and Hupcey (2005) mention collecting the scientific literature from disciplines that are considered applicable to the inquiry but do not clearly advise how to do this. They also mention recording the search parameters and databases used, but not how to do so. In addition, limiting the search to disciplines that the researchers consider applicable may exclude disciplines that have not been considered but could provide insight into the concept of interest.

More recent principle-based concept analyses have used systematic searches and screening processes (e.g. Beecher et al., 2020; Jordan et al., 2020; Nevin & Smith, 2019; Solli et al., 2012; Waldon, 2018), with most not referring to existing procedures that are available (e.g. Nevin & Smith, 2019; Solli et al., 2012; Waldon, 2018). For example, many principle-based concept analyses reported filtering and search results in a way that resembled the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Moher et al., 2009; Page et al., 2020) without naming them. Using recognised structured approaches may improve the transparency, rigour and recognition of principle-based concept analysis.

Principle-based concept analysis uses scientific literature not lay literature or other forms of representation (Hupcey & Penrod, 2005). The majority of principle-based concept analyses only focus on using data retrieved from peer-reviewed databases, with many excluding grey literature and reviewing only scientific literature in line with the tenets of principle-based concept analysis (e.g. Fenstermacher & Hupcey, 2013). Yet, recent publications have included grey literature (e.g. Nevin & Smith, 2019). Grey literature can consist of reports, theses, conference proceedings, technical specifications and standards, translations, bibliographies, technical and commercial documentation, and official documents (Alberani et al., 1990). It is therefore possible to use grey literature which covers concepts that may be less researched and that require relying on literature that has not been peer-reviewed.

Quality Assessment

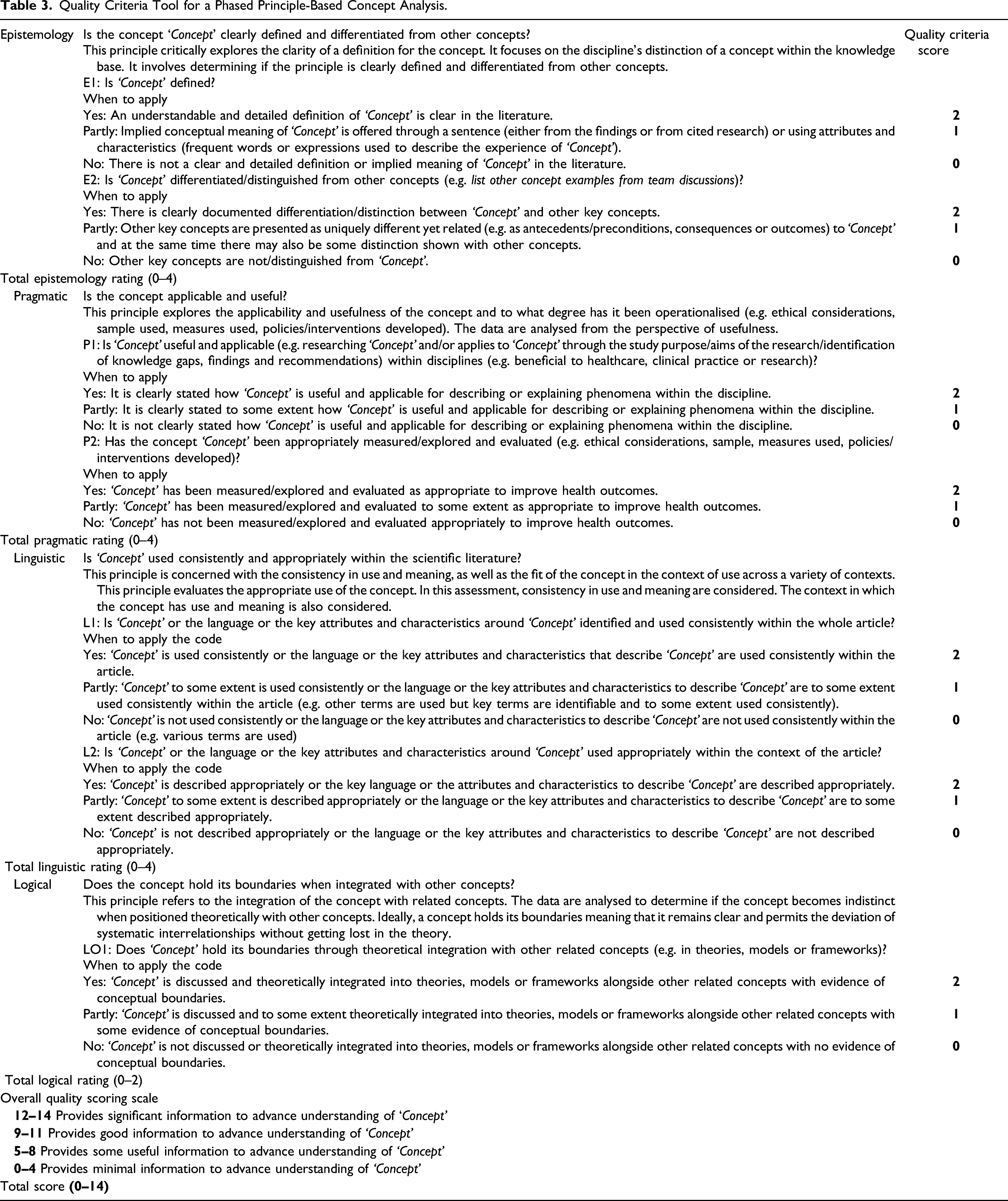

Penrod and Hupcey (2005) stated that evaluative criteria must be considered, especially when facing large data sets, but do not mention how to do this. Studies that quality assessed the final articles selected for a principle-based concept analysis using recognised tools were reviewed, such as the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) checklist (von Elm et al., 2007) for quantitative studies and the Consolidated Criteria for Reporting Qualitative Research (COREQ) checklist (Tong et al., 2007) for qualitative studies (e.g. Dehghani et al., 2018). Yet, existing tools are not suitable or developed for principle-based concept analysis. The principle-based concept analysis method asserts that concept analysis must be held as a separate and unique research endeavour, and critical appraisal is therefore not required (Penrod & Hupcey, 2005). However, it can be argued that principle-based concept analysis itself includes an appraisal assessment. For example, the pragmatic principle critically assesses whether a concept is useful, applicable and appropriately measured/explored (see Table 1).

Data collection and extraction tools have more recently been used in principle-based concept analysis studies. Waldon’s (2018) data collection tool and Nevin and Smith’s (2019) data extraction tool were reviewed, and it was noted that combining the two could advance them because each tool covered different aspects. For example, Waldon’s (2018) tool includes examples of the data in relation to each question, which supports transparency, and Nevin and Smith’s (2019) tool includes an overall quality rating, which highlights whether an article is strong in a particular principle or not. This provided an opportunity to develop a quality criteria assessment tool for principle-based concept analysis based on these tools.

Developing a Tool for Quality Criteria

Questions to the principles from Waldon’s (2018) and Nevin and Smith’s (2019) studies were combined, any noticeable repetition of questions removed, and the remaining questions were added to an Excel spreadsheet to be piloted. Similar to Nevin and Smith (2019), the type of report, country of origin and study aim were recorded. Additional information including the first author’s surname, year of publication, the method used, setting, number of participants in the study were recorded in the tool.

Three researchers piloted the tool over three online meetings. Reviewing a small number of articles at each meeting enabled any queries to be covered to aid the following meetings, comparison of findings and development of the tool. The researchers’ expertise included one having explored the principle-based concept analysis method in detail, the second researcher is experienced in conducting and leading a concept analysis and the third researcher is experienced in clinical research. Articles included in the pilot test attempted to cover research conducted in different countries and disciplines, and a mixture of qualitative, quantitative and mixed-methods research to ensure a variety of research was reviewed.

The three researchers were initially emailed the articles to be piloted. Before each meeting, the results of the reviews were collated and emailed to the researchers. Similarities and differences of findings against the questions were discussed and compared at each meeting and relevant revisions to the tool were made. Over the meetings, the tool was simplified and similar questions within and across each principle were removed and the most appropriate questions were agreed upon and retained under the most suitable principle as it was noticed that some principles were interrelated.

Questions were merged when appropriate, examples added to the questions, and ‘when to apply the code’ statements were added for all three answers (Yes, Partly or No) to enhance clarity. Similarly, some of the wording was simplified, for example, ‘well’ from ‘well-defined’ and ‘well-differentiated’ was removed. The overall quality rating using a four-point alphabetical score proposed by Nevin and Smith (2019) was found to be subjective and the rating would benefit from a score rating to differentiate articles. The scores for each question were decided as yes - 2 points, partly - 1 point and no - 0 points. The scoring scale was based on the researcher’s subjective rating using the alphabetical rating and linked to a score rating. Due to the new scoring system, the alphabetical rating was removed.

Scoring each question gives each principle a total based on its number of questions, so that each principle can be rated individually, which highlights whether an article is strong in a particular principle or not. Penrod and Hupcey (2005) stated that evaluative criteria must be considered when facing large data sets and when judging the appropriateness of the derived sample, but do not describe how to do this. The quality criteria tool may be used to address this issue.

Data Analysis

The analysis sections of many principle-based concept analyses were found to be poorly described. In some, the process is briefly mentioned, or at times qualitative methods are often referred to but the process is not described. Gilmore-Bykovskyi et al. (2019) used a mixture of concept analyses methodologies including principle-based concept analysis and used both content and thematic analysis. In other methods of concept analysis which have been advanced, Smith et al. (2020) adapted Rodgers (1989) Evolutionary Method with a systematic integrative review and used a descriptive thematic synthesis (Thomas et al., 2004). Having a qualitative method to follow is important for the transparency and rigour of the findings.

Maturity

Morse’s proposed term ‘maturity’ has been used to label a concept’s level of development (e.g. Fenstermacher & Hupcey, 2013; Mikkelsen & Frederiksen, 2011; Solli et al., 2012); however, its use varies in the literature. The level of maturity ranges on a continuum from immature to mature, with few descriptive labels to describe the variation (Penrod & Hupcey, 2005). Confusion arises because Penrod and Hupcey (2005) stated that, rather than relying on a label of maturity, the focus should be on the evaluation of the state of the science and the best estimates of probable truth surrounding the concept at that point in time. Yet, later in the same article, they describe the four principles in relation to their maturity. In other work they also advocate dismissing maturity, stating that concept analysis is an integration of what is known, not an evaluation of the quality or maturity of the concept (Hupcey & Penrod, 2005), but in a more recent article maturity has been used again by one of the authors (Fenstermacher & Hupcey, 2013). From the published literature, it appears that maturity can be either used or dismissed. Hupcey and Penrod (2005) stated the need to establish criteria for the evaluation of the level of maturity of concepts, with maturity defined as ‘a concept which is defined, has clearly described characteristics, delineated boundaries and is based on the four principles’ (Morse et al., 1996, p. 387).

Conceptual Components

The conceptual components are not included in the original guidelines of Penrod and Hupcey (2005) but have been used later by various published concept analyses (e.g. Bicking Kinsey & Hupcey, 2013; Fenstermacher & Hupcey, 2013; Mikkelsen & Frederiksen, 2011; O'Malley et al., 2015; Russell, 2013; Smith et al., 2013; Solli et al., 2012; Steis et al., 2009; Waldon, 2018). In the studies that include the conceptual components, more commonly, the conceptual components are presented after the review of the principles (e.g. Bicking Kinsey & Hupcey, 2013; Fenstermacher & Hupcey, 2013; O'Malley et al., 2015; Russell, 2013; Smith et al., 2013; Steis et al., 2009; Waldon, 2018). Other literature has presented the conceptual components before the principles (e.g. Mikkelsen & Frederiksen, 2011) or incorporated with the linguistic principle (e.g. Solli et al., 2012). Similar terms for the conceptual components are used and vary from, for example, preconditions, characteristics and outcomes (e.g. Smith et al., 2013; Steis et al., 2009; Waldon, 2018), boundaries, preconditions, and outcome (e.g. Mikkelsen & Frederiksen, 2011), preconditions and outcomes (e.g. Nevin & Smith, 2019; Solli et al., 2012) or antecedents, attributes and outcomes (e.g. Fenstermacher & Hupcey, 2013). Whereas other published principle-based concept analyses do not include conceptual components (e.g. Jaruzel & Kelechi, 2016; Ruel & Motyka, 2009; Sadlon, 2018; Salehian et al., 2016). Smith et al. (2013) stated that they were guided by previously published work by Steis et al. (2009) and note that they go beyond the summative conclusions presented in the four principles and the conceptual components of the concept are important for how the construction of the concept is considered. 
Fenstermacher and Hupcey (2013) highlighted this process is informed by the findings from each precept of the principle-based concept analysis and the conceptual components of the concept can be organised to include antecedents, attributes and outcomes and that these conceptual insights contribute to the final product of the principle-based concept analysis: a theoretical definition.

In summary, the principle-based concept analysis approach represents a reduction of the data (the literature). Initially, the four principles (Table 1) are reviewed and summarised. This is followed by the conceptual components (the construction of the concept), where the preconditions (phenomena or events that precede an instance and that influence the concept), characteristics/attributes (frequent words or expressions used to describe the experience of the concept) and outcomes (the consequences that follow the occurrence of the concept) are reviewed (Waldon, 2018). Finally, a theoretical definition is produced.

Results

A Phased Approach to Conducting a Principle-Based Concept Analysis.

Phase 1: Preparation Phase

Phase 1; Stage 1: Determine the Concept of Interest

As noted with the original method, the starting point of a principle-based concept analysis is to determine the concept of interest to collect the scientific literature (Penrod & Hupcey, 2005). However, additional considerations include what the gaps are related to the definition of the concept, if the concept has already had a concept analysis conducted before, and if a previous concept analysis requires being updated.

Previous principle-based concept analyses include women’s experiences of their maternity care (Beecher et al., 2020), telecare (Solli et al., 2012), perinatal bereavement (Fenstermacher & Hupcey, 2013), recognition in the context of nurse–patient interactions (Steis et al., 2009), advanced practice nursing (Ruel & Motyka, 2009), relief from anxiety using complementary therapies in the perioperative period (Jaruzel & Kelechi, 2016), frailty in older people (Waldon, 2018) and non-specialist palliative care (Nevin & Smith, 2019) to name a few.

Phase 1; Stage 2: Develop a Protocol

Developing a protocol has been added to the original guidelines. A protocol should be developed outlining the study’s inclusion/exclusion criteria and the search strategy, quality criteria and analysis procedures.

Phase 1; Stage 3: Systematic Literature Search

Principle-based concept analysis can include qualitative, quantitative and mixed-methods research and grey literature. Guidelines such as those from the Centre for Reviews and Dissemination at the University of York, UK (CRD, 2009), which have been used in a previous principle-based concept analysis (Dehghani et al., 2018), can be followed. Other suitable guidelines include Cochrane (e.g. Higgins et al., 2020) for quantitative studies or Joanna Briggs Institute (e.g. Lockwood et al., 2020) for quantitative and qualitative studies. Following such procedures also enables future updates and comparisons of the concept.

Phase 1; Stage 4: Screen Articles

Screening articles requires clear inclusion/exclusion criteria as outlined in the protocol (Phase 1; Stage 2). The screening process can follow recognised guidelines such as the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) (Moher et al., 2009; Page et al., 2020). Results from the databases can be exported into software such as EndNote (The EndNote Team, 2013) and Covidence (Covidence, 2021). After duplicates are removed, articles should be screened by two reviewers independently to enhance the reliability and validity of the screening process. Screening can be conducted in, for example, EndNote (The EndNote Team, 2013) or Rayyan (Ouzzani et al., 2016), depending on the researchers' preferences. Initially, the title and abstract of each article will be reviewed and articles not meeting the inclusion criteria will be removed. At this stage, the reviewers will meet to compare their results. The resultant articles will undergo a full-text review to determine if they meet the inclusion criteria. The reviewers will again compare the results of their full-text review. The reference lists of the resultant articles will be hand searched for any further articles meeting the inclusion criteria. The reviewers will meet again to compare their results. Any articles on which the reviewers disagree should be reviewed by a third reviewer, who will make the final decision on inclusion.
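The comparison of the two reviewers' screening decisions, with disagreements passed to a third reviewer, can be sketched in a few lines of Python. This is a minimal illustration only, not part of the published method: the function names, the article identifiers and the representation of a decision as `True` (include) or `False` (exclude) are all assumptions made for the example.

```python
from typing import Dict, List, Tuple


def reconcile_screening(
    reviewer_a: Dict[str, bool],
    reviewer_b: Dict[str, bool],
) -> Tuple[List[str], List[str], List[str]]:
    """Compare two independent sets of screening decisions.

    Each dictionary maps a (hypothetical) article ID to True (include)
    or False (exclude). Returns three sorted lists of article IDs:
    agreed inclusions, agreed exclusions and conflicts.
    """
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("Both reviewers must screen the same set of articles")

    included: List[str] = []
    excluded: List[str] = []
    conflicts: List[str] = []
    for article_id in sorted(reviewer_a):
        decision_a = reviewer_a[article_id]
        decision_b = reviewer_b[article_id]
        if decision_a != decision_b:
            conflicts.append(article_id)
        elif decision_a:
            included.append(article_id)
        else:
            excluded.append(article_id)
    return included, excluded, conflicts


def resolve_conflicts(
    included: List[str],
    conflicts: List[str],
    third_reviewer: Dict[str, bool],
) -> List[str]:
    """Add the conflicts that the third reviewer decided to include."""
    undecided = [a for a in conflicts if a not in third_reviewer]
    if undecided:
        raise ValueError(f"Third reviewer has not decided on: {undecided}")
    return sorted(included + [a for a in conflicts if third_reviewer[a]])
```

The same two functions could be applied at each comparison point (title and abstract, full text, hand-searched references), so that every disagreement is recorded before the third reviewer decides.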

Phase 2: Analysis Phase

Phase 2; Stage 1: Initial Note-Taking

Each eligible article will be reviewed. At least two reads are recommended. On the first read, notes or text can be highlighted in the article itself on anything of interest. Definitions and/or terms or associated terms/characteristics to the concept should be noted. For example, Ruel and Motyka (2009) stated that three characteristics distinguished advanced practice nursing from basic nursing: advancement, specialisation and expansion. Notes should also be made separately and consist of the definitions and/or terms used and associated with the concept and points made about each principle. Brief notes under each principle consist of (1) Epistemology – if any definitions or part definitions about the concept were made and/or characteristics/attributes used, (2) Pragmatic – tools and methods used to measure or explore the concept, (3) Linguistics – notes on consistency and use of terms and (4) Logical – any theories mentioned around the concept. Notes should also be made for the conceptual components identified in the articles that will be covered in Phase 3; Stage 3.

Phase 2; Stage 2: Adapt and Pilot Test the Quality Criteria Tool

Quality Criteria Tool for a Phased Principle-Based Concept Analysis.

We recommend that the team members have at least three team meetings to pilot test the tool. The number of articles to review in the pilot test is up to the team’s discretion, however, we recommend reviewing at least two articles for each meeting. This process is flexible, and the following provides suggestions on the process.

Meeting 1 is recommended to focus on completing the tool with the concept of interest and discussing suitable examples for the questions in relation to the concept. In reviewing the articles with the tool, we recommend following three review steps: (1) Read each article twice to ensure full understanding of the content. (2) Complete the tool by answering the questions for the four principles for each article, as outlined in Table 3, and provide a brief explanation/evidence for each decision. Answers to the questions are ‘Yes’ (a score of 2), ‘Partly’ (a score of 1) or ‘No’ (a score of 0). (3) Provide an overall rating for the article. The overall score will fit into one of four categories, indicating that the article provides ‘significant’, ‘good’, ‘some’ or ‘minimal’ information to advance the understanding of the concept of interest, as outlined in Table 3.

Meeting 2 is recommended to review the tool’s examples and findings. Discussions can cover the suitability of the examples, the similarities and differences in findings between team members and a comparison of the scores. Any amendments to the tool arising from the discussions will be made by the facilitator of the team, and the team members will be provided with a revised tool, if necessary, for the next meeting. Meeting 3 and any additional meetings will follow the process outlined for Meeting 2 to finalise the tool. It is recommended to include a variety of articles to be reviewed, for example, to cover research from different countries, using different methods (qualitative, quantitative, mixed method) and research from different disciplines.
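To prepare for the comparison of findings at each pilot meeting, the facilitator could collate the team's answers and flag the questions on which reviewers differ. The sketch below is one possible way to do this, under the assumption that each reviewer's answers for an article are recorded as ‘Yes’, ‘Partly’ or ‘No’ against a question identifier. The identifiers and reviewer labels are hypothetical and do not come from the tool itself.

```python
from typing import Dict, List

VALID_ANSWERS = {"Yes", "Partly", "No"}


def find_disagreements(answers: Dict[str, Dict[str, str]]) -> List[str]:
    """Return the question IDs on which the reviewers gave different answers.

    `answers` maps a reviewer label to that reviewer's answers for one
    article, keyed by a (hypothetical) question ID. Questions are returned
    in the order used by the first reviewer.
    """
    reviewers = list(answers)
    if len(reviewers) < 2:
        raise ValueError("At least two reviewers are needed for a comparison")

    questions = list(answers[reviewers[0]])
    for reviewer in reviewers:
        if set(answers[reviewer]) != set(questions):
            raise ValueError(f"{reviewer} answered a different set of questions")
        for question, answer in answers[reviewer].items():
            if answer not in VALID_ANSWERS:
                raise ValueError(f"Invalid answer {answer!r} for {question}")

    flagged: List[str] = []
    for question in questions:
        distinct_answers = {answers[reviewer][question] for reviewer in reviewers}
        if len(distinct_answers) > 1:
            flagged.append(question)
    return flagged
```

Flagged questions are only prompts for discussion. Whether a disagreement leads to a change in wording, an added example or a merged question remains a team decision.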

Phase 2; Stage 3: Quality Criteria Assessment

A quality criteria assessment for a phased principle-based concept analysis has been added to the original approach. The quality criteria assessment is a short tool that helps researchers become familiar with the four principles and review the relevance of the literature to the principles. The tool provides an overview of each of the four principles and includes two brief questions each for the epistemological, pragmatic and linguistic principles and one brief question for the logical principle. Each question includes a choice and score of Yes (2), Partly (1) or No (0). A score for each principle and an overall score are provided. See Table 3 for the tool and the overall rating guidelines. All the articles should be reviewed against the tool. Completing the tool in practice will involve reading through the article again and answering the questions in each principle.
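The scoring arithmetic described above can be illustrated with a short Python sketch. It assumes only what the text states: two questions each for the epistemological, pragmatic and linguistic principles, one question for the logical principle, and scores of Yes (2), Partly (1) and No (0), which gives a maximum overall score of 14. The names used are hypothetical, and the cut-offs for the overall rating categories in Table 3 are deliberately not reproduced here.

```python
from typing import Dict, List, Tuple

# Number of questions per principle, as described in the text.
QUESTIONS_PER_PRINCIPLE = {
    "epistemological": 2,
    "pragmatic": 2,
    "linguistic": 2,
    "logical": 1,
}

# Score attached to each possible answer.
ANSWER_SCORES = {"Yes": 2, "Partly": 1, "No": 0}

# Highest possible overall score: 7 questions x 2 points.
MAX_OVERALL = 2 * sum(QUESTIONS_PER_PRINCIPLE.values())


def score_article(answers: Dict[str, List[str]]) -> Tuple[Dict[str, int], int]:
    """Return the score for each principle and the overall score.

    `answers` maps each principle to the list of answers given to its
    questions for one article.
    """
    principle_scores: Dict[str, int] = {}
    for principle, n_questions in QUESTIONS_PER_PRINCIPLE.items():
        given = answers.get(principle, [])
        if len(given) != n_questions:
            raise ValueError(
                f"{principle}: expected {n_questions} answers, got {len(given)}"
            )
        for answer in given:
            if answer not in ANSWER_SCORES:
                raise ValueError(f"{principle}: invalid answer {answer!r}")
        principle_scores[principle] = sum(ANSWER_SCORES[a] for a in given)
    return principle_scores, sum(principle_scores.values())
```

For example, an article answered Yes and Partly (epistemological), Yes and Yes (pragmatic), Partly and No (linguistic) and Yes (logical) scores 3, 4, 1 and 2 respectively, giving an overall score of 10 out of 14. The per-principle scores show that this hypothetical article is strongest on the pragmatic principle and weakest on the linguistic principle.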

The decision to include or exclude articles should be outlined in the protocol. The research team will need to consult on the eligibility of articles. For example, the team may decide to include all articles or only higher-scoring articles based on the overall rating scale as outlined in Table 3.
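Whichever rule the team agrees on, applying it consistently is straightforward once each article has an overall score. The following is a minimal sketch under stated assumptions: `min_overall` is a hypothetical protocol parameter standing in for whatever cut-off the team derives from the Table 3 rating scale, and the article identifiers and scores are invented for illustration.

```python
from typing import Dict, List, Optional


def select_articles(
    overall_scores: Dict[str, int],
    min_overall: Optional[int] = None,
) -> List[str]:
    """Return the article IDs retained for analysis.

    With `min_overall=None` every article is kept (the 'include all'
    option). Otherwise only articles scoring at least `min_overall`
    are kept. The threshold itself must come from the protocol.
    """
    if min_overall is None:
        return sorted(overall_scores)
    return sorted(
        article_id
        for article_id, score in overall_scores.items()
        if score >= min_overall
    )
```

Recording the chosen value in the protocol before scoring begins keeps the inclusion decision transparent and repeatable.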

Phase 2; Stage 4: Integrate Data

This section adds to the original approach of Penrod and Hupcey (2005) to describe how the data is managed and analysed.

The relevant data from the included studies should be coded to the four principles using a deductive approach with the four principles as a framework. Qualitative data management software such as NVivo (QSR International Pty Ltd, 2018) is recommended to manage the data. Data can also be coded to preconditions and outcomes to assist the conceptual components. If the researcher is including characteristics in the conceptual components, this will be captured in the epistemological principle that can be referred to for the conceptual components which will avoid double coding and repetition. It is recommended to also code any recommendations made that may be included in the pragmatic principle and discussion.
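The deductive coding frame described above can be pictured as a fixed set of codes to which extracts are assigned. The sketch below is not a substitute for qualitative data management software; it only illustrates the structure, assuming seven codes (the four principles plus preconditions, outcomes and recommendations) and hypothetical class and function names. Characteristics are intentionally not a separate code, reflecting the point that they are captured under the epistemological principle to avoid double coding.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Deductive coding frame: four principles plus the additional codes
# mentioned in the text. 'characteristics' is deliberately absent.
FRAMEWORK_CODES = (
    "epistemological",
    "pragmatic",
    "linguistic",
    "logical",
    "preconditions",
    "outcomes",
    "recommendations",
)


@dataclass(frozen=True)
class CodedExtract:
    """One extract from an included article, assigned to one code."""
    article_id: str
    code: str
    text: str


def group_by_code(extracts: Iterable[CodedExtract]) -> Dict[str, List[CodedExtract]]:
    """Group extracts under each framework code, rejecting unknown codes."""
    grouped: Dict[str, List[CodedExtract]] = {code: [] for code in FRAMEWORK_CODES}
    for extract in extracts:
        if extract.code not in grouped:
            raise ValueError(f"Unknown code: {extract.code!r}")
        grouped[extract.code].append(extract)
    return grouped


def characteristics_source(
    grouped: Dict[str, List[CodedExtract]],
) -> List[CodedExtract]:
    """Return the extracts to consult for characteristics.

    These are the epistemological extracts, reused rather than recoded.
    """
    return list(grouped["epistemological"])
```

Grouping by code in this way mirrors the later step in which each principle is reviewed individually and its data reduced to themes.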

During coding, the initial notes, key terms and associated terms noted can be referred to. Once all the articles are reviewed each principle will be reviewed individually and data reduced to common themes and defining theme labels. To ensure rigour, researchers should follow an inductive qualitative approach in this stage, for instance, reflexive thematic analysis (e.g. Braun & Clarke, 2019) or content analysis (e.g. Krippendorff, 2013), or Framework Analysis (e.g. Ritchie & Spencer, 1994) depending on the concept to be defined.

Stages 1–4 in Phase 2 are iterative: if information relevant to an earlier stage is noted during a later stage, it can be recorded under the earlier stage.

Phase 3: Results Phase

Phase 3; Stage 1: Quality Criteria Findings of the Included Articles

A quality criteria assessment for a principle-based concept analysis has been added to the original approach. The results for each article against the four principles and their relevant questions should be presented in a table in the results section, including each principle’s score and the overall score for each article.

Phase 3; Stage 2: Summative Conclusions of the Four Principles

As with the original method, presenting the findings includes presenting each principle as a summative and integrated conclusion of the data as part of the analysis. The chosen qualitative method will guide how the findings are presented. For example, if using reflexive thematic analysis (Braun & Clarke, 2019), each principle may be presented using the themes and/or sub-themes.

Phase 3; Stage 3: Conceptual Components

Informed by the findings from the principles, the data will be further explored through the conceptual components. We recommend using the terms preconditions, characteristics and outcomes. The preconditions describe the phenomena or events that precede an instance and that influence the concept (Waldon, 2018). The characteristics are the frequent words or expressions used to describe the experience of the concept (Waldon, 2018). The outcomes highlight the consequences that follow the occurrence of the concept (Waldon, 2018).

Phase 3; Stage 4: Theoretical Definition

As with the original method proposed by Penrod and Hupcey (2005), the final product of the principle-based concept analysis is a theoretical definition. Penrod and Hupcey (2005) stated the theoretical definition integrates an evaluative summary of each of the principles. The summaries of the four principles and the conceptual components contribute to the theoretical definition (Fenstermacher & Hupcey, 2013). The analysed data of the summaries from each principle and the conceptual components will be integrated to develop the definition.

The overview of the principle-based concept analysis along with the strengths and limitations, further development of the concept and conclusions can be outlined in the discussion.

Discussion

This article aimed to provide a detailed description of, and improved guidelines for, a phased principle-based concept analysis. The principle-based concept analysis has been advanced by providing a combination of a systematic literature search, quality criteria and qualitative analysis to guide the process and enhance transparency and rigour. Triangulation of methods has been stated to enhance the understanding of data findings (Rapport & Braithwaite, 2018; Smith et al., 2020) and to strengthen a study’s validity and the accuracy of its working techniques (Rapport & Braithwaite, 2018; Teddlie & Tashakkori, 2003), which this methodology now addresses. These additions improve the quality of data integration and synthesis. The search strategy and the data extraction process using the quality criteria tool, qualitative software management and qualitative methods make the phased principle-based concept analysis more replicable, rigorous, comprehensive and systematic. In previous principle-based concept analyses, the analysis sections are often unclear or not described. Using rigorous qualitative methods such as reflexive thematic analysis (e.g. Braun & Clarke, 2019), content analysis (e.g. Krippendorff, 2013), or framework analysis (e.g. Ritchie & Spencer, 1994) will improve the quality of data integration and synthesis processes.

The quality criteria tool for a phased principle-based concept analysis poses questions to each article, targets the data, promotes transparency by listing the evidence for each question and enables familiarisation with each principle.

This proposed approach attempts to highlight and address the integration of the principles, which was not clear previously in existing data collection (Waldon, 2018) and extraction tools (Nevin & Smith, 2019). For example, the linguistic and logical principles draw on the epistemological principle, and some of the questions in the logical principle were also covered in the pragmatic principle. When it comes to writing up the findings for both the linguistic and logical principles, the other relevant principles will be referred to. This process also prevents repetition of similar questions in the tool. The tool can be viewed as a multipurpose tool that aids familiarisation with the data, data extraction and analysis.

This proposed tool is adaptable to future phased principle-based concept analyses. Carter et al. (2014) stated that investigator triangulation, in which two or more researchers in the study provide observations and conclusions, can confirm findings and add breadth through the different perspectives. The combined expertise of the researchers involved in developing the tool was beneficial in deciding the questions and wording to use. It is recommended that future researchers adapting the tool to their concept of interest pilot test it on a sample of eligible articles, in a team with the expertise to decide on the examples and comparison concepts to use to aid data extraction.

The scoring of the quality criteria tool allows researchers to identify whether articles are strong or weak in a particular principle. In cases where a large number of articles are eligible for the concept analysis, a decision may be made to include only the higher-rated articles for analysis, or only the articles rated higher in the relevant principles. Similarly, comparison of the same concept as it is updated over time, or of associated concepts, will be possible using this approach. As Waldon (2018) stated, concept analysis is not a static product and as research evolves so does the concept. Therefore, this methodology enables replicability for future updates of a concept. Future uses of the tool may assist in reducing the number of articles when a search returns a large number of results.
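The selection decision described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the cut-off values, the article labels, the scores and the use of a summed overall score are all hypothetical, and any real threshold would need to be decided and justified by the research team.

```python
# Hypothetical sketch: narrowing a large set of eligible articles by score.
# Scores, thresholds and the summed overall score are assumptions for
# illustration, not rules defined by the quality criteria tool.
PRINCIPLES = ("epistemological", "pragmatic", "linguistic", "logical")


def overall_score(by_principle):
    """Sum the four principle scores (assumed rule for the overall score)."""
    return sum(by_principle[p] for p in PRINCIPLES)


def select_by_overall(scores, min_overall):
    """Keep articles whose overall score meets the chosen cut-off."""
    return [a for a, s in scores.items() if overall_score(s) >= min_overall]


def select_by_principle(scores, principle, min_score):
    """Keep articles that meet the cut-off in one principle of interest."""
    if principle not in PRINCIPLES:
        raise ValueError(f"Unknown principle: {principle}")
    return [a for a, s in scores.items() if s[principle] >= min_score]


# Invented example data (not drawn from any real review).
example_scores = {
    "Article A": {"epistemological": 3, "pragmatic": 2, "linguistic": 1, "logical": 2},
    "Article B": {"epistemological": 1, "pragmatic": 3, "linguistic": 3, "logical": 1},
    "Article C": {"epistemological": 1, "pragmatic": 1, "linguistic": 0, "logical": 1},
}

print(select_by_overall(example_scores, min_overall=6))
print(select_by_principle(example_scores, "linguistic", min_score=2))
```

Separating the overall cut-off from the per-principle cut-off reflects the two options in the text: including only higher-rated articles overall, or only those rated higher in the relevant principles.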

Tofthagen and Fagerstrøm (2010) highlighted the issue of quality appraisal tools where quantitative randomised meta-analysis is considered to be on a higher scientific level than qualitative meta-analyses. The benefit of this quality criteria tool is that it treats methods equally due to its focus on principle-based concept analysis. It is noted that Hupcey and Penrod (2005) argued that principle-based concept analysis is about the integration of what is known and not an evaluation of the quality of the concept. Yet, it can be argued that the pragmatic principle is about the quality of the research around the concept (e.g. whether a concept has been appropriately measured) and thus quality is important to principle-based concept analysis. Through this quality criteria tool, it is possible to highlight those articles that may be stronger in certain principles than others. An additional strength of the quality criteria tool is that it can be used to review conceptual papers to assess how much information on the concept is provided.

The quality criteria tool could also be adapted to reflect the maturity of the concept within the scoring scale. This requires further review in future studies using the phased principle-based concept analysis.

Given the lack of clear accounts of how to use principle-based concept analysis, it is hoped that this article contributes to explaining the process of a phased principle-based concept analysis and helps other researchers use this method.

Conclusion

Concepts guide a discipline by forming the units that comprise and link theory, research and practice (Nevin & Smith, 2019). Having a clear definition encourages the development of valid and reliable instruments to capture the concept and ensures that we are all talking about the same thing. With clinical studies and the development of instruments requiring clarity in the definition of a concept, this approach has the potential to impact future research and provide more valid and reliable research due to a clear definition and process.

This comprehensive approach to conducting a phased principle-based concept analysis is systematic and enhances transparency, rigour and replicability. The description of this process will benefit future researchers interested in using the phased principle-based concept analysis and perhaps increase its use as a method of choice. A quality criteria tool has been introduced and is available for future researchers to use to enable future comparisons of a concept and related concepts, thereby improving communication between various disciplines and shaping future research to improve the evidence base.

Footnotes

Acknowledgements

We would like to thank Dr James Smith and Dr Charlotte Angelhoff for their involvement and consultation in developing the quality criteria tool for phased principle-based concept analysis.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research is supported by the School of Nursing and Midwifery at Edith Cowan University and the Nursing Research Department at Perth Children’s Hospital in Western Australia. The professorial position is supported by Perth Children’s Hospital Foundation.