Abstract

In this article, the authors hope to shift the debate in the practice disciplines concerning quality in qualitative research from a preoccupation with epistemic criteria toward consideration of aesthetic and rhetorical concerns. They see epistemic criteria as inevitably including aesthetic and rhetorical concerns. The authors argue here for a reconceptualization of the research report as a literary technology that mediates between researcher/writer and reviewer/reader. The evaluation of these reports should thus be treated as an occasion in which readers seek to make texts meaningful, rather than as an exercise in the rigid application of standards and criteria. The authors draw from reader-response theories, literature on rhetoric and representation in science, and findings from an ongoing methodological research project involving the appraisal of a set of qualitative studies.

Introduction

Over the past 20 years, reams of articles and books have been written on the subject of quality in qualitative research. Addressing such concepts as reliability and rigor, value and validity, and criteria and credibility, scholars across the practice and social science disciplines have sought to define what a good, valid, and/or trustworthy qualitative study is, to chart the history of and to categorize efforts to accomplish such a definition, and to describe and codify techniques for both ensuring and recognizing good studies (e.g., Devers, 1999; Emden & Sandelowski, 1998, 1999; Engel & Kuzel, 1992; Maxwell, 1992; Seale, 1999; Sparkes, 2001; Whittemore, Chase, & Mandle, 2001). Yet after all of this effort, we seem to be no closer to establishing a consensus on quality criteria, or even on whether it is appropriate to try to establish such a consensus. Garratt and Hodkinson (1998) questioned whether there could ever be “preordained” (p. 517) “criteria for selecting research criteria” (p. 515). Sparkes (2001) stated it was a “myth” that qualitative health researchers will ever agree about validity. And Kvale (1995) suggested that the quest for quality might itself be an obsession interfering with quality.

The major reason for this lack of consensus is that no “in principle” (Engel & Kuzel, 1992, p. 506) arguments can be made that uniformly address quality across the varieties of practice designated as qualitative research. As Schwandt (2000, p. 190) observed, qualitative research is “home” for a wide variety of scholars across the disciplines who appear to share very little except their general distaste for and distrust of “mainstream” research. Indeed, these scholars are often seriously at odds with one another. It is therefore not surprising that these different communities of qualitative researchers have emphasized different quality criteria. Standards for qualitative research have variously emphasized literary and scientific criteria, methodological rigor and conformity, the real world significance of the questions asked, the practical value of the findings, and the extent of involvement with, and personal benefit to, research participants (e.g., Emden & Sandelowski, 1998, 1999; Heron, 1996; Lincoln & Reason, 1996; Richardson, 2000a,b; Whittemore, Chase, & Mandle, 2001).

Moreover, we have found in our own work that even when ostensibly the same criteria are used, there is no guarantee that reviewers will use them the same way, agree on whether a study has met them, or, if they agree, have the same reasons for agreeing. Indeed, we recognized, from our own efforts to review approximately 70 published reports and dissertations on women with HIV/AIDS, how little we consistently relied on any one set of criteria for evaluating qualitative studies, but how much we relied on our own personal readings and even “re-writings” of the reports themselves. While one of us tends to assume an “aesthetic” stance toward research reports, responding in terms of her total engagement with texts, the other tends to assume an “efferent” stance, reading primarily for the clinically relevant information they provide (Rosenblatt, 1978).

In this article, we hope to shift the debate in the health-related practice disciplines concerning quality in qualitative research from a preoccupation with epistemic criteria toward consideration of aesthetic and rhetorical concerns. Indeed, we see epistemic criteria as inevitably including aesthetic and rhetorical concerns. We argue here for a reconceptualization of the research report as a dynamic vehicle that mediates between researcher/writer and reviewer/reader, rather than as a factual, after-the-fact account of events. More specifically, we propose that the research report is more usefully treated as a “literary technology” (Shapin, 1984, p. 490) designed to persuade readers of the merits of a study than as a mirror reflection of that study. We further propose that the evaluation of these reports be treated as occasions in which readers seek to “make meaning” from texts (Beach, 1993, p. 1), rather than as exercises in the rigid application of standards and criteria.

To make our case, we draw from reader-response theories (Beach, 1993), which emphasize the interactions between readers and texts by which “virtual texts” (Ayres & Poirier, 1996) are produced, and from studies of rhetoric and representation in science and ethnography, which emphasize the writing practices intended to produce appealing texts (e.g., Clifford & Marcus, 1986; Geertz, 1988; Hunter, 1990; Latour & Woolgar, 1986; Lynch & Woolgar, 1988). We describe key problems in using existing guides for evaluating qualitative studies and offer a reading guide that addresses these problems.

The Qualitative Metasynthesis Project

We draw also from the findings of an ongoing research project, the purpose of which is to develop a comprehensive, usable, and communicable protocol for conducting qualitative metasyntheses of health-related studies, with studies of women with HIV/AIDS as the method case. We began the project in June of 2000 and expect to complete it by June of 2005. The reading guide we feature here is a product of that project, a key component of which is the development of a tool that will allow the systematic appraisal of a set of qualitative studies while also preserving the uniqueness and integrity of each individual study in the set. To enhance the validity of the findings of this project, we chose an expert panel composed of six scholars who have had experience conducting qualitative metasynthesis and/or quantitative meta-analysis projects. Their role is to provide peer review of our procedures: that is, to help us “think/talk aloud” (Fonteyn, Kuipers, & Grobe, 1993) the often inchoate moves that make up so much of qualitative work. They will also evaluate the contents, relevance, and usability of the various tools we will develop (and the protocols for using them) for conducting each phase of a qualitative metasynthesis project, from conceiving such a project to disseminating its findings to various audiences.

We convened the members of the expert panel in March of 2001 for a two-day discussion (which was transcribed) of the version of the guide we had at that time, which was based on our intensive analysis of the reports of qualitative studies on women with HIV/AIDS we had retrieved by then. To prepare for this meeting, the panel members used the guide with a study we had purposefully selected from our bibliographic sample as an example of what we then thought was a methodologically “weak” albeit informative study. After this meeting, we revised the guide again, used it on all of the studies we had retrieved to date, and then asked the expert panel members to use this latest version with a purposefully selected sample of five other studies from our bibliographic sample. This time, we chose studies to represent variations in medium (e.g., journal article, book chapter), complexity (Kearney, 2001) (e.g., from low-complexity descriptive summaries to high-complexity grounded theories and cultural interpretations), style of presentation (e.g., traditional scientific, alternative), and author affiliation (e.g., nurse, social scientist). We asked the panel members to use the guide with each of these five studies, to comment on its contents and usability for each, and then to rank, for each study, the relevance to them of each category of information listed in the guide. We refer to the results of this work later in this article.

Reading and Writing Qualitative Studies

Readers of research reports bring to these texts a dynamic and unique configuration of experiences, knowledge, personality traits, and sociocultural orientations. Readers belong to one or more “interpretive communities” (Fish, 1980) (e.g., qualitative researchers, academic nurses, social constructionists) that strongly influence how they read, why they read, and what they read into any one text. The members of these communities differ in their access and attunement to, knowledge and acceptance of, and participation in, for example, references and allusions in a text, the varied uses of words and numbers, and various genres or conventions of writing. Because of their varying reading backgrounds, experiences, and expectations, readers will vary in their interaction with texts (Beach, 1993; Lye, 1996a,b). Indeed, even when the same reader returns to the same text, the interaction will vary as factors such as the passage of time and different reasons for reading alter the reading. Moreover, reading is cumulative, as each new reading builds upon preceding readings of this and other texts (Manguel, 1996).

Researchers/writers, in turn, employ various writing conventions and literary devices to appeal to readers and to shape and control their readings. Shape is a property of information that includes not just the informational content per se but also the very physical form in which it appears (Dillon & Vaughan, 1997). Indeed, the research report is itself better viewed not as an end-stage write-up but as a dynamic “literary technology” (Shapin, 1984) whereby writers use literary devices, such as correlation coefficients, p values, metaphors, and coding schemas, rhetorically to persuade readers to accept their study procedures and findings as valid. As Shapin (1984, p. 491) conceived it, this technology is intended to make readers “virtual witness(es)” to what they have never seen: namely, the conduct of the project itself. Researchers/writers “deploy…linguistic resources” (Shapin, 1984, p. 491), such as the correlation coefficient and the emotive quote, to appeal to communities of scholars that will find such appeals convincing. In the case of the correlation coefficient, the appeal is to stability and consensus; in the case of the quote, the appeal is to “giving voice.” These devices contribute to the illusion that write-ups of research are reflections of reality and that readers are witnessing the study reported there. Graphs, charts, tables, lists, and other such visual displays are powerful rhetorical devices that function “manifestly” to reduce large quantities of data into forms that readers can more readily apprehend, but also “latently” to shape findings and to persuade readers of their validity (McGill, 1990, p. 141). They are components of the literary technology of science, not only because they evoke images of the research that has taken place, but also because they themselves constitute a visual source of information. They are part of the “iconography” of science, offering “visual assistance” to the virtual witness (Shapin, 1984, pp. 491–492).

In a similar rhetorical and representational vein, statistics are not merely numeric transformations of data, but “literary…displays treated as dramatic presentations to a scientific community” (Gephart, 1988, p. 47). In quantitative research especially, the appeal to numbers gives studies their rhetorical power. Statistics are a naturalized and rule-governed means of producing what is perceived to be the most conclusive knowledge about a target phenomenon (John, 1992). John (1992, p. 146) proposed that statistics confer the “epistemic authority” of science. The power of statistics lies as much in their ability to engender a “sense of conviction” (John, 1992, p. 147) in their “evidentiary value” (p. 144) as in their capacity to provide actual evidence about a target phenomenon. Statistics authorize studies as scientific and contribute to the “fixation of belief” whereby readers accept findings as facts and not artifacts (Amann & Knorr-Cetina, 1988, p. 85). They are a display of evidence in the “artful literary display” (Gephart, 1988, p. 63) we know as the scientific report, and they are a means to create meaning. Statistical meaning is not “inherent in numbers,” but rather “accomplished by terms used to describe and interpret numbers” (Gephart, 1988, p. 60). Indeed, quantitative significance is arguably less found than created, as writers rhetorically enlist readers, with the use of words such as

Whereas tables and figures provide much of the appeal in quantitative research, tableaux of experience and figures of speech provide much of the appeal in qualitative research. Writers seeking to produce appealing qualitative research reports tend to use devices such as expressive language, quotes, and case descriptions to communicate that they have recognized and managed well the tensions, paradoxes, and contradictions of qualitative inquiry. Qualitative writers desire to tell “tales of the field” (Van Maanen, 1988) that convey methodological rigor but also methodological flexibility; their ability to achieve intimacy with, but also to maintain their distance from, their subjects and data; and their fidelity to the tenets of objective inquiry, but also their feeling for the persons and events they observed. They want their reports to be as true as science is commonly held to be, and yet as evocative as art is supposed to be.

In summary, the only site for evaluating research studies, whether they are qualitative or quantitative, is the report itself. The “production of knowledge” cannot be separated from the “communication of knowledge” by which “communities” of responsive readers are created and then come to accept a study as valid (Shapin, 1984, p. 481). Whether a study convinces depends on how well it meets the needs and expectations of readers representing a variety of interpretive communities. Indeed, although we tend to distinguish between epistemic and aesthetic criteria, they are in practice indistinguishable, because the sense of rightness and feeling of comfort readers experience in reading the report of a study constitute the very judgments they make about the validity or trustworthiness of the study itself (Eisner, 1985). As Eisner (1985) observed, all forms, whether novel, pottery, or scientific report, are evaluated by the same aesthetic criteria, including coherence, attractiveness, and economy. Quantification and graphical displays are common ways to achieve these goals in science texts (Law & Whittaker, 1988), while conceptual renderings, quotes, and narratives are common ways to achieve these goals in ethnographic texts. The aesthetic is itself a “mode of knowing” (Eisner, 1985); both scientific and artistic forms are judged by how well they confer order and stimulate the senses. Whether a reviewer judges study findings as vivid or lifeless, coherent or confusing, novel or pedestrian, or as ringing true or false, s/he is ultimately making a communal, but also a personal (Bochner, 2000; Richardson, 2000a) and an “aesthetic judgment” (Lynch & Edgerton, 1988, p. 185).

The Problem With Existing Guides for Evaluating Qualitative Studies

Although useful, existing guides for evaluating qualitative studies (variously composed of checklists and/or narrative summaries of criteria or standards) tend to confuse the research report with the research it represents. They also do not ask the reviewer to differentiate between understanding the nature of a study-as-reported and estimating the value of a study-as-reported, nor do they allow that any one criterion might be more or less relevant for any one study and to any one reviewer. We prefer the word

Form is Content

Because the only access any reader/reviewer typically has to a study is through a report of it, what a reader/reviewer is actually reading/appraising is not the study itself but its representation in some publication venue, usually a professional journal. The research report is an after-the-fact reconstruction of a research study, and generally one that makes the inquiry process appear more orderly and efficient than it really was. In the health sciences, reports of research generally conform to what Bazerman (1988) described as the experimental scientific report. This literary style is a “prescriptive rhetoric” (Bazerman, 1988, p. 275) that conceives the write-up as an objective description of a clearly defined and sequentially arranged process of inquiry, beginning with the identification of a research problem and research questions or hypotheses, progressing through the selection of a sample and the collection of data, and ending with the analysis and interpretation of those data (Golden-Biddle & Locke, 1993; Gusfield, 1976).

The standardization of form evident in the familiar experimental scientific report does not so much reflect the procedures of any particular study as it reinforces and reproduces the realist ideals and objectivist values associated with neo-positivist inquiry. Written in the third-person passive voice; separating problem and questions from method, method from findings, and findings from interpretation; and representing inquiry as a linear process and findings as truths that anyone following the same procedures will also find, these texts reproduce the neo-positivist assumption of an external reality apprehensible, demonstrable, and replicable by objective inquiry procedures. The reader/reviewer knows what to expect in the conventional science write-up, and the fulfillment of this expectation alone constitutes a major criterion by which s/he will evaluate the merits of study findings. A write-up that fails to meet reader/reviewer expectations will jeopardize the scientific status of the study it represents (McGill, 1990). Although standardization of form is actively sought in the belief that form ought not to confound content, form is inescapably content. Researchers/writers are expected to report their studies

Complicating the critique (that is, appreciation plus appraisal) of qualitative reports is that many of them do not conform to the conventional experimental style. A hallmark of qualitative research is “variability,” not “standardization” (Popay, Rogers, & Williams, 1998, p. 346), including in the reporting of findings. Many qualitative researchers do not adhere to neo-positivist tenets and thus seek to write in ways that are more consistent with their beliefs. Moreover, it is a commonplace in qualitative research that “one narrative size does not fit all” (Tierney, 1995, p. 389) in the matter of reporting qualitative studies. Indeed, there is a burgeoning effort, itself a target of criticism (e.g., Schwalbe, 1995), to experiment with different forms for communicating the findings of qualitative studies, including novels, poems, drama, and dance (Norris, 1997; Richardson, 2000b).

Accordingly, in order to appraise a qualitative study fairly, readers/reviewers have to appreciate the various forms and “narrative sizes” that qualitative reports come in so that they will know what they are looking at, what to look for, and where to find it. For example, we found in our review of studies of women with HIV/AIDS that some reports had no explicit description of method either in sections devoted just to this topic or anywhere else in the report, nor any explicit statement of research questions. Yet method was still discernible in the findings. Some reports had no explicit statement of a problem, which was instead implied in the research purpose and/or literature review, or became evident in the findings. Although some readers will not mind having to read method into the findings or the problem into the literature review, other readers will insist that writers explicitly address these categories of information.

Reporting Adequacy Versus Procedural or Interpretive Appropriateness

Another problem with existing guides for evaluating qualitative studies is that they do not clearly separate for the reader what a writer reported that s/he did or intended to do from what s/he apparently did, to the extent that this can be discerned in the research report. They confuse the adequacy of a description of something in a report with the appropriateness of something that occurred in the study itself, as represented in the report.

A case in point is the sample. Existing criteria frequently do not ask reviewers to differentiate between an informationally adequate description of a sample and a sample adequate to support a claim to informational redundancy. In the first instance, the writer has given either enough or not enough information about the sample to evaluate it. In the second instance, the reader makes a judgment that the sample is or is not large enough to support a claim the writer has made. In the first instance, a judgment is made about adequacy of reporting. In the second instance, a judgment is made about the appropriateness of the reported sample itself (that is, its size and configuration) to support the findings. In other words, a judgment of reporting adequacy has to be made before a judgment of procedural or interpretive appropriateness can be made. Before a reader can make a judgment about anything, the writer must have given enough information about it in her/his report. A judgment of procedural or interpretive appropriateness (i.e., is it

Actual Versus Virtual Presence or Absence

Yet the reader must also have an appreciation for the reporting constraints that may have been placed on the writer, such as page limitations and journal and disciplinary conventions concerning what needs to be explicitly said, what can be implied, and what can be omitted. The absence of something in a report does not mean the absence of that thing in the study itself.

Moreover, readers themselves will vary in their willingness to accept a reporting absence. While reviewing the five studies we had selected for the expert panel, one panel member reported that she was most influenced by the absence of information in a report. As she explained it, if a researcher said nothing about method, then method became highly influential in how she viewed the study. Another reviewer suggested a presence/absence calculus in that the presence of findings “with grab” could favorably offset for her the absence of a well-defined problem or method.

Just as the absence of something in a report does not necessarily mean it was absent in the study itself, so too the presence of something in a report does not necessarily mean it was present in the study itself. A writer may have reported that s/he used phenomenological methods, but the reader — in her or his judgment of what constitutes phenomenology — finds no discernible evidence of the use of those methods in the findings. Instead, the reader finds discernible evidence that the technique used to analyze the data was a form of content analysis. Accordingly, a more appropriate way for the reader to read the study is as a content analysis, not as a phenomenological analysis, despite what the writer reported. The reader is here rewriting the report to conform to her/his reading of it and is arguably, in the process, giving the report a better reading and the writer of the report a reviewing break. Read as a content analysis, the report may be judged a good example of its kind; read as a phenomenological analysis, it may not be.

The description of a procedure may be judged informationally adequate but informationally and/or procedurally inappropriate. A writer may adequately describe the inter-rater reliability coding technique used to validate study findings, but the reader may judge the rendering of the technique itself as inaccurate and/or the actual use of such techniques as inappropriate to the narrative claims made in a study. In addition, a writer may be forced to discuss matters inappropriate to a qualitative study. The best case in point is the frequent discussion of the so-called limitations of qualitative research, where writers may be forced by peer reviewers or editors to state that their sample was not statistically representative or that their findings are not generalizable. Such statements suggest that a researcher/writer does not understand the purpose of sampling in qualitative research, nor the fact that idiographic and analytic generalizations are outcomes of qualitative research. Yet such statements may not be reflective of any error on the part of the researcher.

In summary, readers must have a keen grasp of the diversity of qualitative research and of writing styles and constraints. A critical error would be to exclude from consideration studies with valuable findings for reasons unlikely to invalidate those findings. A study presented as a phenomenology that is evidently a qualitative descriptive study may still be a “good” study: that is, a study with credible and useful findings. Another critical error would be to accept what a writer says at face value without looking behind the face. The appraisal of qualitative studies requires discerning readers who know and take account of their own reading preferences and who are able to distinguish between non-significant representational errors and procedural or interpretive mistakes fatal enough to discount findings. The appraisal of qualitative studies also requires discerning readers able to recognize a report that says all of the right things but contains no evidence that these things actually took place.

Development, Purpose, and Use of a Guide for Reading Qualitative Studies

The reading guide that follows is the latest version of a tool we developed to assist us in apprehending those features of any one research report most relevant to understanding and ultimately combining its findings with those from a set of reports. We developed it by using the iterative process we described previously involving our own and expert panel members' use and appraisal of successive versions of the guide. We intend the guide to be used with exclusively qualitative and not mixed methods studies, which present distinctive challenges to reading and writing that have been addressed elsewhere (Sandelowski, in press). Although the primary purpose of the guide is to help readers/reviewers read write-ups of qualitative research on health-related topics, it may also be useful to researchers/writers wanting to write up their studies in ways that will appeal to the varied readers in the health sciences.

The purpose of the guide is to make more visible those features of qualitative reports that readers in the health-related practice disciplines are likely to want to see, but that the form of reporting may make harder for them to see. Readers in the health-related practice disciplines typically want information in 13 categories: research problem, research purpose(s)/question(s), literature review, orientation to the target phenomenon, method, sampling, sample, data collection, data management, validity, findings, discussion, and ethics. Each of these categories is defined in the reading guide shown here. The guide directs readers/reviewers to look for information in these 13 categories wherever it appears in the report. A 14th category, form, asks the reader to consider the general style of the report and, especially, the shape of the findings.

Sometimes bits of information that writers insist are “there” in a report are not seen by the reviewer because they are located in places where reviewers are not looking for them. For example, we noticed in our review of studies on women with HIV/AIDS that information concerning the ethical and credible conduct of a study was often nested in information provided about the sample, data collection, and data analysis, and/or in the findings and discussion sections. Typically, no sections of the report were devoted to the topics of ethics and validity. Accordingly, the guide helps readers identify what they want to find without rigidly linking that information to any one place in a research report, a linkage that might cause a study to be inadequately appraised.

Yet it offers a systematic way to dissect and organize a report and, therefore, to obtain all of the information available from it that might subsequently be useful for the varied purposes readers will have for reading it. These purposes may include a systematic and comprehensive review of the findings and/or methodological approaches of a set of studies for a state-of-the-science paper or a research proposal, or the conduct of a metasynthesis. Alternatively, the guide can be used in a more focused way to target key features of qualitative studies in a domain, such as the kinds of participants who have been included or the kinds of recruitment strategies used.

Although the guide is arranged to reflect the topics and order both apparent in most qualitative research reports in the health sciences and expected by most readers of these reports, we intend it to be used dynamically to reflect the purpose of the reading and the nature of the report itself. As the sections of the guide artificially separate and arrange what are integral elements of a whole, some reviewers may want to begin their reading of a report with the findings and sample, some will want to read in the given order of the report, and some will want to read in the given order of the guide. Some categories of information cannot be fully understood until all of the report is read, while other categories may be evident in more self-contained sections of a report. Moreover, any one statement from a research report can be placed in more than one category as it may carry information applicable in more than one category. A statement about how a researcher coded data may be relevant to both the

The purpose for reading a report will determine how the guide is used. Reviews will likely be more detailed when the purpose is to determine whether a study meets criteria for inclusion in a qualitative metasynthesis, but may be less detailed when the purpose is to survey methodological approaches in a field of study. For very detailed work, we recommend using a hard copy of the guide, along with a scanned copy of the research report, from which words, phrases, and/or paragraphs can be copied directly into the template shown in Figure 4.

The guide is thus a reconstruction (of a report) that is itself a reconstruction (of a study); it asks readers to re-shape a report to conform to its logic. Through this reshaping, the guide makes visible how features common to reports contribute differently to each unique whole. As one panel member suggested, the research report is a “gestalt,” with the different components comprising that gestalt variously operating in the foreground or background. Two other panel members suggested that the very same features of a report can enhance or detract from the value assigned to the study it represents. As we learned from our expert panel and from using the guide ourselves, this reshaping has at least two effects. One effect is for the reviewer to feel as if s/he were taking a research report apart and rearranging its parts so that it no longer resembles what it was (as Picasso did in many of his paintings of human beings and animals). A contrasting effect is for the reviewer to feel as if s/he were taking a work not immediately recognizable as a research report and rearranging its parts so that it more clearly resembles a scientific research report. Whichever of these or other effects the guide has on readers, it will compel them to see a report in ways they had not before.

The guide also asks readers/reviewers to consider the presence and, even more importantly, the relevance of specified appraisal parameters. A reader may judge that a category of information has been addressed (and/or addressed well or badly) but decide that, whether or how it was addressed, it did not matter to the overall value of the report. Accordingly, the guide helps readers see what is in a report, where it is, and what is missing from it; one panel member, for example, noticed how often information about sampling and analysis was missing from reports. The guide also helps readers better understand themselves as readers: it makes visible what a reader's inclinations are and whether and how they figure into that reader's reviews.

The expert panel members demonstrated this point, as well as the futility of efforts to create a quantitatively reliable tool for appraising qualitative studies. We asked the panel members to rank how important (1 as most important and 14 as least important) each of the 14 categories of information was to them in reviewing each of five qualitative studies on women with HIV/AIDS. The panel members uniformly agreed that it was virtually impossible to rank all 14 categories reliably, with one member specifically objecting to any effort to quantify what for her is ultimately a qualitative assessment. As the panel members observed, it was difficult to disaggregate parts that were not only integrally connected to each other but also connected in different ways in each of the five studies. They did, however, find it easier and more acceptable to select the three categories that influenced them most, and the three that influenced them least, in appraising each study. Their rankings are summarized in Figures 1–3.

Figure 1. Expert panel member rating of most and least influential information categories

Figure 2. Most and least influential rankings of 14 categories in five studies (n=5)

Figure 3. Frequency of ratings of 14 information categories in five studies (n=5)

Figure 4. Template of reading guide for on-screen work

As these figures indicate, reviewers showed relatively low inter-rater and intra-rater consistency in the categories of information they selected as most and least influential, both across and within ranking positions and across studies. They did, however, show some reading/reviewing tendencies or preferences. As shown in Figure 1, Reviewer A tended to select

In summary, we see the guide as itself a literary technology designed to enhance appreciation, and thereby promote more informed appraisal, of the literary technology we know as the qualitative research report. We offer it as a “conceptual and presentation device” (Maxwell, 1996, p. 8), not as a set of rules to be slavishly followed. We agree with Maxwell (1996, p. ix) that “a guide… is best when those guiding you are opinionated.” This guide certainly derives from our opinions, but these opinions are grounded in our current research and in years of experience reading and writing qualitative research. We fully anticipate, and invite, the readers/reviewers who use this guide to exercise their own opinions and, in the process, to enhance the quality and utility of the guide. Any guide is always a work-in-progress.

Footnotes

Acknowledgments

We thank the members of our Expert Panel (named in Note 2) and our research assistants, Janet Meynell and Patricia Pearce.

1. This study, entitled “Analytic Techniques for Qualitative Metasynthesis,” is supported by grant # R01 NR04907 from the National Institute of Nursing Research.

2. They are Cheryl Tatano Beck, Louise Jensen, Margaret Kearney, George Noblit, Gail Powell-Cope, and Sally Thorne.

3. One panel member could not attend because of inclement weather.

4. Another panel member is currently on sabbatical and did not participate in this exercise.

5. Material in this portion of the text also appears, in a different and expanded form, in a chapter prepared for a forthcoming anthology (Sandelowski, in press), cited in the reference list.

A Guide for Reading Qualitative Studies

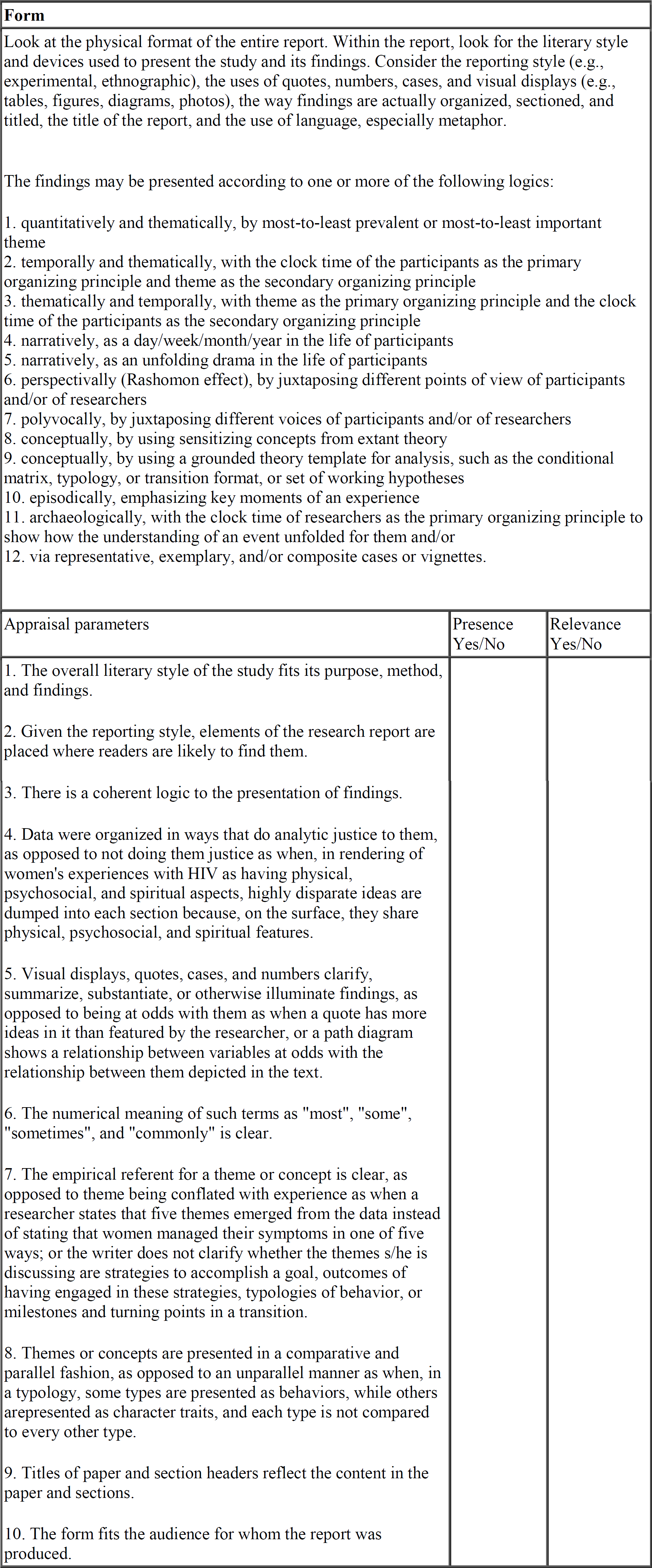

| Form | | |
|---|---|---|
| Look at the physical format of the entire report. Within the report, look for the literary style and devices used to present the study and its findings. Consider the reporting style (e.g., experimental, ethnographic); the uses of quotes, numbers, cases, and visual displays (e.g., tables, figures, diagrams, photos); the way findings are actually organized, sectioned, and titled; the title of the report; and the use of language, especially metaphor. Findings may be organized, for example: quantitatively and thematically, by most-to-least prevalent or most-to-least important theme; temporally and thematically, with the clock time of the participants as the primary organizing principle and theme as the secondary organizing principle; thematically and temporally, with theme as the primary organizing principle and the clock time of the participants as the secondary organizing principle; narratively, as a day/week/month/year in the life of participants; narratively, as an unfolding drama in the life of participants; perspectivally (Rashomon effect), by juxtaposing different points of view of participants and/or of researchers; polyvocally, by juxtaposing different voices of participants and/or of researchers; conceptually, by using sensitizing concepts from extant theory; conceptually, by using a grounded theory template for analysis, such as the conditional matrix, typology, or transition format, or a set of working hypotheses; episodically, emphasizing key moments of an experience; archaeologically, with the clock time of researchers as the primary organizing principle to show how the understanding of an event unfolded for them; and/or via representative, exemplary, and/or composite cases or vignettes. | | |
| Appraisal parameters | Presence (Yes/No) | Relevance (Yes/No) |
| The overall literary style of the study fits its purpose, method, and findings. | | |
| Given the reporting style, elements of the research report are placed where readers are likely to find them. | | |
| There is a coherent logic to the presentation of findings. | | |
| Data were organized in ways that do analytic justice to them, as opposed to not doing them justice, as when, in rendering women's experiences with HIV as having physical, psychosocial, and spiritual aspects, highly disparate ideas are dumped into each section because, on the surface, they share physical, psychosocial, and spiritual features. | | |
| Visual displays, quotes, cases, and numbers clarify, summarize, substantiate, or otherwise illuminate findings, as opposed to being at odds with them, as when a quote contains more ideas than the researcher features, or a path diagram shows a relationship between variables at odds with the relationship between them depicted in the text. | | |
| The numerical meaning of such terms as “most”, “some”, “sometimes”, and “commonly” is clear. | | |
| The empirical referent for a theme or concept is clear, as opposed to theme being conflated with experience, as when a researcher states that five themes emerged from the data instead of stating that women managed their symptoms in one of five ways, or when the writer does not clarify whether the themes s/he is discussing are strategies to accomplish a goal, outcomes of having engaged in these strategies, typologies of behavior, or milestones and turning points in a transition. | | |
| Themes or concepts are presented in a comparative and parallel fashion, as opposed to an unparallel manner, as when, in a typology, some types are presented as behaviors while others are presented as character traits, and each type is not compared to every other type. | | |
| The title of the paper and the section headers reflect the content of the paper and its sections. | | |
| The form fits the audience for whom the report was produced. | | |

© Sandelowski and Barroso, 2001