Abstract

Health professionals deliver a range of health services to individuals and communities. Evaluating these services is an important component of service delivery, and health professionals should have the requisite knowledge, attributes, and skills to evaluate the impact of the services they provide. However, health professionals are seldom adequately prepared by their training or work experience to do this well. In this article we provide a framework and guidance to enable health professionals to undertake useful program evaluation. We introduce and discuss “Easy Evaluation” and provide guidelines for its implementation. The framework distinguishes program evaluation from research and encourages health professionals to apply an evaluative lens so that value judgements about the merit, worth, and significance of programs can be made. Examples from our evaluation practice illustrate how program evaluation can be used across the health care spectrum.

Introduction

Integral to health professional practice is the development and delivery of initiatives aimed at improving the health and wellbeing of individuals and communities. Because many of these initiatives are publicly funded, they should include an evaluation phase. For many people working in the health professions, in both clinical and non-clinical roles and including those providing medical education, facilitating program evaluation can therefore be considered an essential responsibility (Frye & Hemmer, 2012; Kemppainen et al., 2012).

Health professionals can use program evaluation to determine the impact and quality of the initiatives they deliver and whether these initiatives are achieving the desired health-related outcomes for individuals and communities. Identifying whether programs are successful is likely to help secure their funding. Additionally, funders and managers are often interested in opportunities for program improvement, which program evaluation activities can identify (Curry et al., 2009; Trombetta et al., 2020).

We propose that health professionals are ideally positioned both to contribute to and to lead program evaluation activities. To be effective in this role, health professionals require appropriate understanding, knowledge, skills, and confidence in program evaluation (Dickinson & Adams, 2012; Taylor-Powell & Boyd, 2008). However, in our experience, program evaluation is often misunderstood and the term is applied inconsistently. This article provides an overview of program evaluation and considers what it is (and is not). We detail a clear, practical framework for health professionals to use when planning and completing a program evaluation and illustrate it with examples from our work.

What is Program Evaluation?

Providing a precise and universally accepted definition of evaluation is difficult, in part because the discipline is extremely diverse (Gullickson et al., 2019). Patton (2018) observed that professional evaluators “are an eclectic group working in diverse arenas using a variety of methods drawn from a wide range of disciplines applied to a vast array of efforts aimed at improving the lives of people in places throughout the world” (p. 186). Despite this diversity, the foundational description of evaluation as the systematic determination of the merit, worth, and significance of a program, project, or policy endures (Scriven, 1991). More recently, Davidson (2014) stated that “evaluation, by definition, answers evaluative questions, that is, questions about quality and value. This is what makes evaluation so much more useful and relevant than the mere measurement of indicators or summaries of observations and stories” (p. 1). Further, findings from program evaluations are characterized as being of particular use to inform decisions and identify options for program improvement (Patton, 2014).

In contrast, research is typically described as producing new knowledge through systematic enquiry (Gerrish, 2015). Researchers are often broadly characterized as seeking to understand and interpret meaning (qualitative research), or as seeking to identify relationships between variables in order to explain or predict (quantitative research) (Braun & Clarke, 2013). These approaches can also be combined in multi-method and mixed-methods projects (DePoy & Gitlin, 2016). Research is thus primarily “valued” for the contribution it makes to the body of knowledge (Levin-Rozalis, 2003; Patton, 2014).

In general, health professionals are unaware of the distinctiveness of program evaluation as a discipline (Davidson, 2005). As a result, many reported “program evaluations” show no evidence of any evaluation-specific methodology, and accordingly make no robust and clear determination of the program’s quality or value. One example is an evaluation of a structural competency training program for medical residents that aimed to raise awareness of how social, political, and economic structures affect illness and health (Neff et al., 2017). Among the qualitative results, “residents reported that the training had a positive impact on their clinical practice and relationships with patients. They also reported feeling overwhelmed by increased recognition of structural influences on patient health, and indicated a need for further training and support to address these influences” (Neff et al., 2017, p. 430). While these results appear insightful, no evaluative conclusions are provided that explicitly address how good, valuable, or worthwhile the training was in terms of changing clinical practice or relationships. Using criteria established by Davidson (2013), the presented results are most appropriately considered “descriptive research facts” rather than evaluation results, because no explicit evaluative methodology or process of evaluative reasoning is reported.

While the findings of this type of research study are undoubtedly useful, the core purpose of this article is to present a framework that allows claims to be made about a program’s merit, worth, and significance. Such a framework requires specific skills and training in program evaluation over and above standard research skills.

Preparation to Undertake Program Evaluation

While training in research practice and methods is common in the undergraduate and graduate preparation of many health professionals, exposure to program evaluation is much less prominent and in some cases absent. A review of several research-focused nursing textbooks found that they provide minimal information about program evaluation compared with other research techniques and skills. For example, only one of the 29 chapters in Nursing Research: An Introduction (Moule et al., 2017) focuses on program evaluation, including two pages outlining generic steps in conducting an evaluation. Similarly, in The Research Process in Nursing (Gerrish & Lathlean, 2015), one chapter of 40 (12 of 605 pages) addresses program evaluation. The information about program evaluation in most textbooks is broad and explores core concepts but does not provide sufficient information to guide the conduct of an evaluation. Consequently, many health professionals develop research skills and conduct research projects, while their evaluation skills and practice remain undeveloped.

Undertaking program evaluation requires a solid foundation in research knowledge and skills, as well as specific evaluation knowledge and skills. As Davidson (2007) noted, researchers may need to unlearn some of their research habits and develop new skills and ways of thinking: “Training in the applied social sciences provides a wonderful starting toolkit for a career in evaluation, albeit one that needs topping up with several essentials” (p. vi). The ability to apply evaluative reasoning is essential. Evaluative reasoning is the process through which evidence is collected (typically using standard research data collection methods) and assessed as the basis for well-reasoned evaluative conclusions about the program being evaluated (Davidson, 2005, 2014).

As noted previously, much of the published information available to those wishing to undertake program evaluation remains generic, focusing on theory and concepts while lacking the specificity required to guide the conduct of an evaluation. In the remainder of the article we provide a practical and proven framework for program evaluation of health-related programs. If followed, the framework enables health professionals to plan and undertake program evaluation.

The framework, branded “Easy Evaluation,” has been widely taught to the public health workforce in New Zealand since 2007 (Adams & Dickinson, 2010; Dickinson & Adams, 2012). We bring the perspectives of a program evaluation specialist and public health researcher (JA) and a nurse researcher and educator (SN). We have used the framework to guide a number of program evaluations across a variety of health-related settings and topics. These include projects examining diabetes care (Wilkinson et al., 2011, 2014), sonography training (Dickinson et al., 2016), sexual health promotion (Adams & Neville, 2013; Adams et al., 2013, 2017), and the implementation of age-friendly community initiatives (Neville et al., 2018).

Easy Evaluation

Easy Evaluation is a hybrid framework drawing on several established evaluation approaches. The central theoretical grounding is program theory-based evaluation. This approach centers people’s understanding of what is required to develop and implement a successful program (Mertens & Wilson, 2019). These understandings form the basis of the program theory, which is typically shown in a logic model (Donaldson, 2007). Easy Evaluation also emphasizes the importance of the valuing tradition in evaluation, which requires value judgements about the merit and worth of a program to be made (Davidson, 2005). The framework also incorporates a participatory dimension through the active involvement of key stakeholders at all stages.

Easy Evaluation comprises six key phases (Figure 1). An explanation of these phases follows, along with examples from completed evaluation studies. Further detail about implementing Easy Evaluation is available in Dickinson et al. (2015).

Figure 1. Easy Evaluation framework.

Logic Models

Logic models are a fundamental tool for evaluators using a theory-driven approach (Bauman & Nutbeam, 2014; Renger et al., 2019). The development of the model helps to set the boundaries of the project, program, strategy, initiative, or policy to be evaluated (sometimes generically referred to as the evaluand) (Bamberger & Mabry, 2020; Davidson, 2005).

Logic models represent the causal processes through which the program is expected to bring about change and produce outcomes of interest (Donaldson, 2007; Hawe, 2015; Mills et al., 2019). Logic models provide an illustration of “an explicit theory or model of how an intervention, such as a project, programme, a strategy, an initiative, or a policy, contributes to a chain of intermediate results and finally to the intended or observed outcomes” (Funnell & Rogers, 2011, p. xix). In other words, the rationale or theory underpinning a program is provided in the model, and the expected outcomes are identified before the program is implemented (Mertens & Wilson, 2019).

Logic models represent stakeholders’ views of how and why a program will work. When developing the model, it is therefore beneficial to include a wide range of stakeholder input. Stakeholders who have an interest are likely to include the program implementers and funders, as well as participants in the program. Logic models are often developed in facilitated workshop sessions designed to enable stakeholders to exchange ideas on program activity and the changes (outcomes) the program is likely to achieve. The process of working together to develop a model promotes a shared understanding of the program, what it is trying to achieve and the rationale underpinning it (Oosthuizen & Louw, 2013).

Logic models can be drawn in many ways. One key type is the outcome chain model, which represents the intervention and the consequences of its implementation (Funnell & Rogers, 2011). Models drawn in this tradition show the causal pathway as a series of linked outcomes (short-term outcome → medium-term outcome → long-term outcome) that result from the program’s implementation. When developing a logic model, it is crucial to identify the key problem or overarching issue of concern. This problem or issue can then be “reversed” and written in a positive way, and would typically be shown as one of the medium- or long-term outcomes on the model. Doing this ensures the central issue or concern underpinning the program being evaluated remains prominent.

A logic model may already have been developed in the planning phases of a project, and existing models may need refining if they are not up to date. However, in many cases programs will not have a logic model, and one will need to be developed for the evaluation. For the sexual health social marketing program we evaluated (Figure 2), the logic model was newly developed for the evaluation.

Figure 2. Logic model for Get it On!

The overarching health issue of concern in this program was the incidence of HIV infection among men who have sex with men (Adams et al., 2017). A reduction in the incidence of HIV was thus included as the long-term program outcome. While a logic model is read in the direction of the arrows, it is typically developed in the opposite direction, working backward from the long-term outcome. To achieve reduced incidence of HIV, the project team identified the need for increased condom use, and this was included as an outcome. Because increased condom use requires broad community commitment and support (Adams & Neville, 2009; Henderson et al., 2009), the maintenance and development of such a condom culture was included as a medium-term outcome. To achieve this outcome, the team determined that target audiences needed to understand the key message of using condoms and needed a tangible way to engage with the messages and program activities; these are expressed as the immediate short-term outcomes. Finally, a social marketing program was seen as an appropriate means of achieving these short-term outcomes.

The completed model can therefore be “read” as follows: If a high-quality social marketing program is developed and implemented, then the target audiences will understand the key messages and they will engage with the social marketing program. If the audiences understand and engage with the program, this will contribute to the maintenance and development of a positive condom culture. If this condom culture is maintained and developed, there will be increased condom use for anal sex, which will lead to a reduced incidence of HIV. This model represents the program team’s understanding of how the program would work. The evaluation was planned to test this rationale by assessing the program’s development and implementation and the extent to which the outcomes were achieved. In that regard, the program evaluation examined the quality of the intervention and tested the program’s rationale or theory.
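Because an outcome chain is simply an ordered sequence of stages, the “if … then” reading above can be generated mechanically from it. The sketch below encodes the Get it On! chain as a list of stages (stage wording paraphrases the article; the data structure and the function name `read_chain` are hypothetical, not part of the Easy Evaluation materials) and prints one causal statement per link.

```python
from typing import List

# Hypothetical encoding of the Get it On! outcome chain (Figure 2).
# Each inner list is one stage; boxes at the same stage are joined with "and".
GET_IT_ON_CHAIN: List[List[str]] = [
    ["a high-quality social marketing program is developed and implemented"],
    ["target audiences understand the key messages",
     "target audiences engage with the social marketing program"],
    ["a positive condom culture is maintained and developed"],
    ["condom use for anal sex increases"],
    ["the incidence of HIV is reduced"],
]


def read_chain(chain: List[List[str]]) -> List[str]:
    """Render each causal link (stage -> next stage) as an 'If ..., then ...' sentence."""
    sentences = []
    for cause, effect in zip(chain, chain[1:]):
        sentences.append(f"If {' and '.join(cause)}, then {' and '.join(effect)}.")
    return sentences


if __name__ == "__main__":
    for sentence in read_chain(GET_IT_ON_CHAIN):
        print(sentence)
```

Writing the chain out this way makes each link an explicit claim the evaluation can test; a link that reads implausibly in sentence form is often a sign the model needs an intermediate outcome.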

In making decisions about program elements to be represented in the model, the team drew on relevant theory and their own experiences to determine the causal links between the elements. The key theory drawn on is that consistent (and correct) use of condoms for anal sex by gay and bisexual men can lead to a reduced incidence of HIV (Shernoff, 2005; Sullivan et al., 2012). At other points when developing the model, the program team drew on their knowledge of the importance of peer support to encourage condom use (McKechnie et al., 2013; Seibt et al., 1995), and the effectiveness of social marketing approaches based on direct community engagement for reducing barriers to participation in the desired activity (McKenzie-Mohr, 2000; Neville & Adams, 2009; Neville et al., 2014).

It is imperative to recognize that logic models need to be considered within the context in which they are developed. In this case the campaign was developed based on evidence that promotional activities that engage with communities at all levels of development and implementation are more likely to be successful (Neville et al., 2014, 2016). The campaign was also delivered at a time when condom use for anal sex in New Zealand was relatively high compared with elsewhere and HIV diagnoses among gay and bisexual men were relatively low by international standards (Saxton et al., 2011), and before biomedical HIV pre-exposure prophylaxis (PrEP) was used to prevent HIV infection (Adams et al., 2019, 2020).

In another example, the evaluators were tasked with examining the initial 12-week, intensive, full-time course designed to build core competencies in sonography trainees, and developed a logic model for it (Figure 3) (Dickinson et al., 2016). The aim of this initial training was to ensure trainees were “work ready,” thus reducing the burden on their supervisors when trainees returned to the workplace and continued their studies. Trainees were thus expected to be well equipped with essential skills and well prepared in sonography fundamentals for the remainder of their postgraduate course.

Figure 3. Logic model for 12-week sonography training.

Logic models are not static entities. They represent thinking at one point in time and can be regenerated and redrawn as needed throughout the implementation of the project and/or the course of an evaluation as familiarity with the program develops (Patton, 2011). A robust logic model will be plausible and sensible and clearly communicate the causal processes that lead to the identified outcomes (Donaldson & Lipsey, 2011; Funnell & Rogers, 2011).

Evaluation Priorities and Questions

The Easy Evaluation framework focuses the program evaluation on the identified interventions and the expected outcomes. In other words, each box on the logic model can be evaluated. For each intervention depicted in the logic model, the key question is: What is the quality of the intervention? In turn, for each outcome, the key question is: To what extent has the outcome (of interest) been achieved? The benefit of this approach is that it gives the program evaluation a specific focus. The aim of the evaluation is to provide direct and succinct answers to these intervention and outcome questions.
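The one-question-per-box rule can be expressed as a small function. This is a minimal sketch, assuming each logic model box is tagged as either an intervention or an outcome; the `Box` class and the example labels are hypothetical illustrations.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Box:
    """One box on a logic model (hypothetical representation)."""
    label: str
    kind: str  # expected to be "intervention" or "outcome"


def evaluation_question(box: Box) -> str:
    """Return the key evaluation question the framework attaches to a box."""
    if box.kind == "intervention":
        return f"What is the quality of {box.label}?"
    if box.kind == "outcome":
        return f"To what extent has the outcome '{box.label}' been achieved?"
    raise ValueError(f"unknown box kind: {box.kind!r}")


def questions_for(boxes: List[Box]) -> List[str]:
    """Generate one key question per logic model box, in model order."""
    return [evaluation_question(box) for box in boxes]


if __name__ == "__main__":
    model = [
        Box("the social marketing program", "intervention"),
        Box("Target audiences understand Get it On! key message", "outcome"),
    ]
    for question in questions_for(model):
        print(question)
```

The generated list is only a starting point: the next step, prioritization, decides which of these questions the evaluation will actually answer.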

When determining the evaluation priorities, decisions need to be made about which interventions and outcomes will be evaluated. In the cases of Get it On! and sonography training, it was possible to evaluate all components of the models. However, in many instances it is neither practical nor useful to evaluate all the interventions and outcomes on a logic model, and only the most important ones need to be prioritized for evaluation. These prioritizing decisions must meet stakeholder needs within the resources and budget available for the evaluation. In general, the short- and medium-term outcomes of a program are expected to be more fully achieved than long-term outcomes, and it typically makes sense to prioritize them. In a theory-driven evaluation it is essential to understand whether the short- and medium-term outcomes are being achieved, because the logic modeling exercise will have identified these outcomes as theoretically necessary to produce the desired long-term outcomes. If the short- and medium-term outcomes are not sufficiently achieved, the program is unlikely to achieve its long-term outcome(s).

A number of mechanisms are available to assist in the prioritization process and ensure evaluation decisions are informed by the interests and needs of the stakeholders (Dickinson et al., 2015). Typically, this will involve a formal facilitated discussion with stakeholders. A useful process for setting priorities is to have stakeholders vote on what they see as the most important elements of the logic model. One technique is to give stakeholders “sticky dots” to place on the logic model to represent their interests and priorities. The evaluators and stakeholders then discuss the results to reach agreement on what will be evaluated. This type of process helps align the evaluation with the stakeholders’ views, interests, and expectations.
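Tallying a sticky-dot exercise is simple counting, and the tally is an input to the facilitated discussion rather than a replacement for it. The sketch below assumes each stakeholder’s dots are recorded as a list of box labels; the stakeholder names and votes in the example are invented for illustration.

```python
from collections import Counter
from typing import Dict, List, Tuple


def tally_dots(votes: Dict[str, List[str]]) -> List[Tuple[str, int]]:
    """Count sticky dots per logic model box, most-voted first.

    `votes` maps each stakeholder to the boxes on which they placed a dot;
    placing several dots on the same box counts each dot.
    """
    counts = Counter(box for dots in votes.values() for box in dots)
    # Sort by descending count, then alphabetically so ties are reported consistently.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical votes, for illustration only.
    votes = {
        "funder": ["Condom culture", "Condom use"],
        "implementer": ["Understand key message", "Condom culture"],
        "participant": ["Engage with program", "Condom culture"],
    }
    for box, dots in tally_dots(votes):
        print(f"{box}: {dots}")
```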

Evaluation Criteria and Performance Standards

Each prioritized intervention and outcome requires the establishment of criteria and performance standards. In developing these criteria, the aspects of an intervention or an outcome that are important within the evaluation are identified. These criteria and standards represent the values that will be used to determine program performance (Gullickson & Hannum, 2019; Peersman, 2014). The foregrounding of these values is something not undertaken in research, highlighting a key difference between research and evaluation practice.

At their broadest level, criteria represent concepts to be addressed in the evaluation (Peersman, 2014). To be useful, criteria should be specific, so that the evaluation is targeted (Dickinson & Adams, 2017). In the Easy Evaluation framework, criteria reflect stakeholder views about the important dimensions of an intervention or an outcome. Most interventions and outcomes require several criteria to ensure a well-rounded understanding of the intervention or outcome.

Once criteria are established, performance standards for them need to be developed. A standard refers to a level of performance in relation to the quality of the intervention or the degree to which an outcome has been achieved. Rather than providing a single standard, such as the minimum acceptable level of achievement, we advocate the use of rubrics setting out a range of performance standards. Rubrics can be considered a rating table or matrix that provides scaled levels of achievement. They set out an agreed understanding and provide a transparent basis for making evaluative judgements about aspects of a program (King et al., 2013). Through the process of developing rubrics, stakeholders make it clear what is valued about a program.

An evaluation rubric comprises two key components—the criteria to be rated and performance standards for the criteria. Depending on the scope of the project and stakeholder needs there can be any number of categories of merit or levels of standards. Different labels can be employed to accompany the description of performance (e.g., Excellent, Very good, Good, Poor; or Highly effective, Minimally effective, Not effective). Alternatively, a numbered rating scale can be used to depict various levels of performance (e.g., 1–5). Six levels of standards should be the maximum as extra precision is not typically gained from having more levels (Davidson, 2005).
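For criteria measured as a percentage of respondents, a prescriptive rubric can be represented as an ordered list of labelled levels, each with a minimum value. The sketch below enforces the six-level maximum suggested above; the class name and every threshold in the example are hypothetical placeholders, not the standards used in the Get it On! rubric (Table 1).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Rubric:
    """Performance standards for one criterion, as (label, minimum percent) pairs.

    Levels run from best to worst; a result earns the first level whose
    minimum it meets, and the worst level if it meets none.
    """
    criterion: str
    levels: List[Tuple[str, float]]

    def __post_init__(self) -> None:
        if not self.levels or len(self.levels) > 6:
            raise ValueError("a rubric needs between one and six performance levels")
        minimums = [minimum for _, minimum in self.levels]
        if minimums != sorted(minimums, reverse=True):
            raise ValueError("levels must be ordered from best to worst")

    def rate(self, percent: float) -> str:
        for label, minimum in self.levels:
            if percent >= minimum:
                return label
        return self.levels[-1][0]


if __name__ == "__main__":
    # Hypothetical thresholds, for illustration only.
    rubric = Rubric(
        "Participants identify the key message of Get it On!",
        [("Excellent", 90), ("Very good", 75), ("Good", 50), ("Poor", 0)],
    )
    print(rubric.rate(93.0))
```

Agreeing the thresholds with stakeholders before data are collected is what makes the later rating transparent; the code only applies standards that people have already set.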

In the Get it On! evaluation, a workshop was held with key stakeholders to develop criteria and standards. The discussion drew on stakeholders’ expertise, along with evidence and practice from other similar evaluations and the views and advice of topic area experts. Four criteria were developed for the outcome “Target audiences understand Get it On! key message”:
Participants identify the key message of Get it On!
Participants identify condom use as an important issue for gay and bisexual men
Participants report the message as clear
Participants report the message as instantly recognizable

In addition, four levels of performance incorporating these criteria were identified (Table 1) (Adams & Neville, 2013; Adams et al., 2017). Developing four criteria meant the evaluation was able to take into account four different views or aspects when determining performance on the outcome. Having several views or data points is much stronger than relying on a single indicator.

Table 1. Rubric for Outcome: Target Audiences Understand Get it On! Key Message.

The Get it On! evaluation also incorporated a significant qualitative component exploring the planning and design of the program. To assess the quality of the intervention, evaluation sub-questions were developed. One of these was: How well does Get it On! reflect best practice in social and behavior change marketing? A rubric stating the performance standards for this sub-question was also developed collaboratively (Table 2).

Table 2. Rubric for Intervention: Get it On! Reflects Best Practice in Social and Behavior Change Marketing.

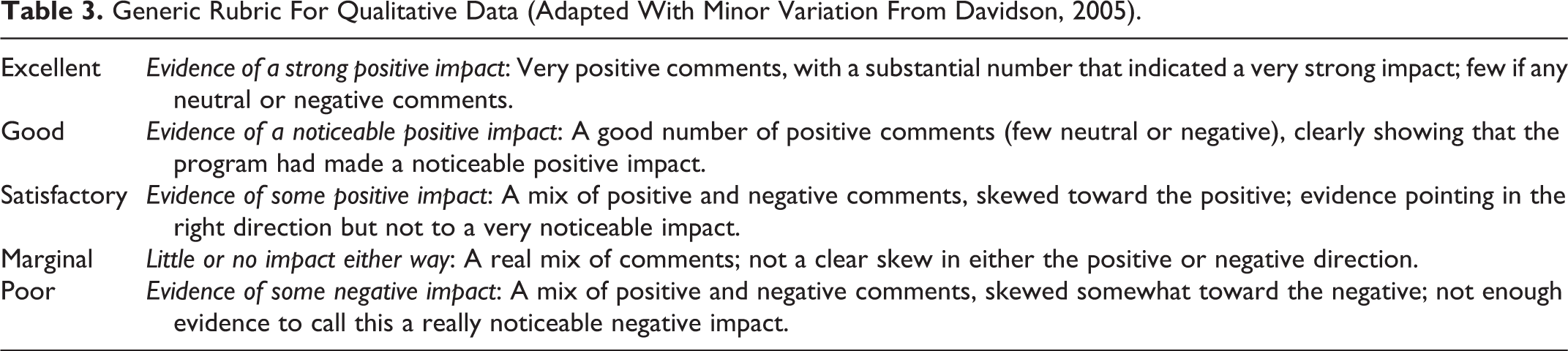

Rubrics can vary in their level of detail and precision. The rubrics suggested as a starting point within the Easy Evaluation framework are reasonably prescriptive. Specific standards (e.g., “Almost all (>90%) of men identify the key message of Get it On!”; see Table 1) reduce ambiguity when determining performance. More holistic rubrics can be developed as the evaluator’s experience and confidence grow. Holistic rubrics require more interpretation by the evaluator and stakeholders to determine how well a program has performed. An example of a holistic rubric is a generic rubric developed by Davidson (2005) for use with qualitative data (see Table 3). This generic rubric can be customized for a range of evaluation projects.

Table 3. Generic Rubric for Qualitative Data (Adapted With Minor Variation From Davidson, 2005).

Collect, Analyze and Interpret Data

Data collection should be driven by the needs of the evaluation (Bauman & Nutbeam, 2014). In the Easy Evaluation framework, the criteria and standards determine the areas for data collection. For each area, specific methods and tools for gathering data need to be developed, and each criterion requires at least one data collection method. Where feasible, combining qualitative and quantitative methods is recommended, because this is more likely to support a comprehensive understanding of the intervention or outcome being examined.
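The two rules above (at least one method per criterion, and mixed methods where feasible) can be checked against a draft data collection plan. This is a minimal sketch, assuming the plan maps each criterion to (method, data type) pairs; the function name and the example plan are hypothetical.

```python
from typing import Dict, List, Tuple

Method = Tuple[str, str]  # (method name, "qualitative" or "quantitative")


def review_plan(plan: Dict[str, List[Method]]) -> Dict[str, List[str]]:
    """Flag criteria with no data collection method, and criteria that
    rely on only one type of data (qualitative or quantitative)."""
    missing, single_type = [], []
    for criterion, methods in plan.items():
        if not methods:
            missing.append(criterion)
            continue
        data_types = {data_type for _, data_type in methods}
        if len(data_types) < 2:
            single_type.append(criterion)
    return {"missing": missing, "single_type": single_type}


if __name__ == "__main__":
    # Hypothetical plan, for illustration only.
    plan = {
        "Key message is clear": [
            ("survey rating item", "quantitative"),
            ("open-ended survey question", "qualitative"),
        ],
        "Key message is instantly recognizable": [("survey rating item", "quantitative")],
        "Program objectives": [],
    }
    print(review_plan(plan))
```

A criterion flagged as single-type is not necessarily a problem; it simply prompts the team to ask whether a second source of evidence is feasible within the budget.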

As an example, the Get it On! evaluation used an online and paper-based survey to seek the views of gay and bisexual men about the program and their sexual behavior and practices. The survey was largely quantitative, but qualitative data were also collected using a series of open-ended questions (Neville et al., 2016). For the criterion Knowledge of key message of Get it On!, survey participants were asked: “What does the Get it On! message mean to you? (Please select one option closest to your understanding of what it is promoting or associated with): Sports participation; Unsafe sex; Use of condoms for sex; I’ve seen it, but don’t know what it means; I’ve never seen or heard of Get it On!; Other (please specify).”
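Responses to a single-choice item like this one reduce to the percentage of respondents selecting each option, which is the form a percentage-based rubric standard needs. The sketch below assumes non-respondents have already been excluded and that answers are stored as the option text; the function name and the example responses are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable

# Answer options as listed in the Get it On! survey item.
OPTIONS = (
    "Sports participation",
    "Unsafe sex",
    "Use of condoms for sex",
    "I've seen it, but don't know what it means",
    "I've never seen or heard of Get it On!",
    "Other (please specify)",
)


def response_shares(responses: Iterable[str]) -> Dict[str, float]:
    """Percentage of respondents choosing each option (one option per respondent)."""
    counts = Counter(responses)
    unexpected = set(counts) - set(OPTIONS)
    if unexpected:
        raise ValueError(f"unexpected responses: {sorted(unexpected)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses supplied")
    # Counter returns 0 for options nobody chose.
    return {option: 100 * counts[option] / total for option in OPTIONS}


if __name__ == "__main__":
    # Hypothetical responses, for illustration only.
    sample = ["Use of condoms for sex"] * 9 + ["Unsafe sex"]
    print(response_shares(sample)["Use of condoms for sex"])
```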

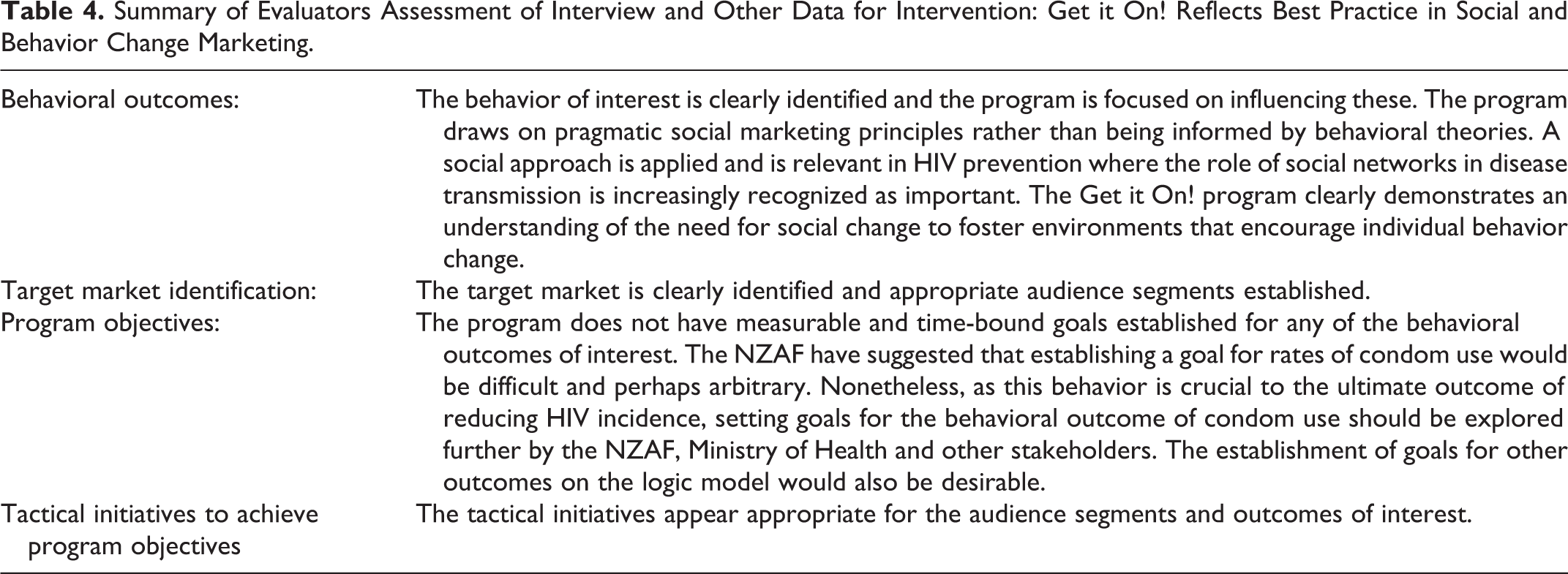

Qualitative key informant interviews (along with a review of documents) were also used in the Get it On! evaluation. The data collected from the interviews were used to assess the evaluation sub-question: How well does Get it On! reflect best practice in social and behavior change marketing? (For a summary of the qualitative and document review findings, see Table 4.)

Table 4. Summary of Evaluators’ Assessment of Interview and Other Data for Intervention: Get it On! Reflects Best Practice in Social and Behavior Change Marketing.

In general terms, the data collection methods and analysis used in program evaluation are the same as those used in a standard research project. Surveys, key informant interviews, and focus groups are common in program evaluation. Where possible, existing data collected by the program or by others should be reviewed to establish whether they are suitable and relevant to use. All data collection centers on providing relevant data for the evaluation. After analysis, the data are used in the process of drawing evaluative conclusions.

Draw Evaluative Conclusions

In this phase the analyzed data (or the descriptive research “facts”) are viewed through a process of evaluative reasoning so that evaluative conclusions can be developed. Drawing conclusions is the process by which the values of the project are made explicit in determining program performance. This centering of values is an important feature of program evaluation and sets it apart from research.

A key step in formulating evaluative conclusions is holding a sensemaking session or “data party.” This is a participatory process to involve stakeholders and evaluators in interpreting findings and establishing common understandings (Patriotta & Brown, 2011). Integral to this process is the “mapping” of shared understandings of the data to the rubric to determine and agree the level of performance.

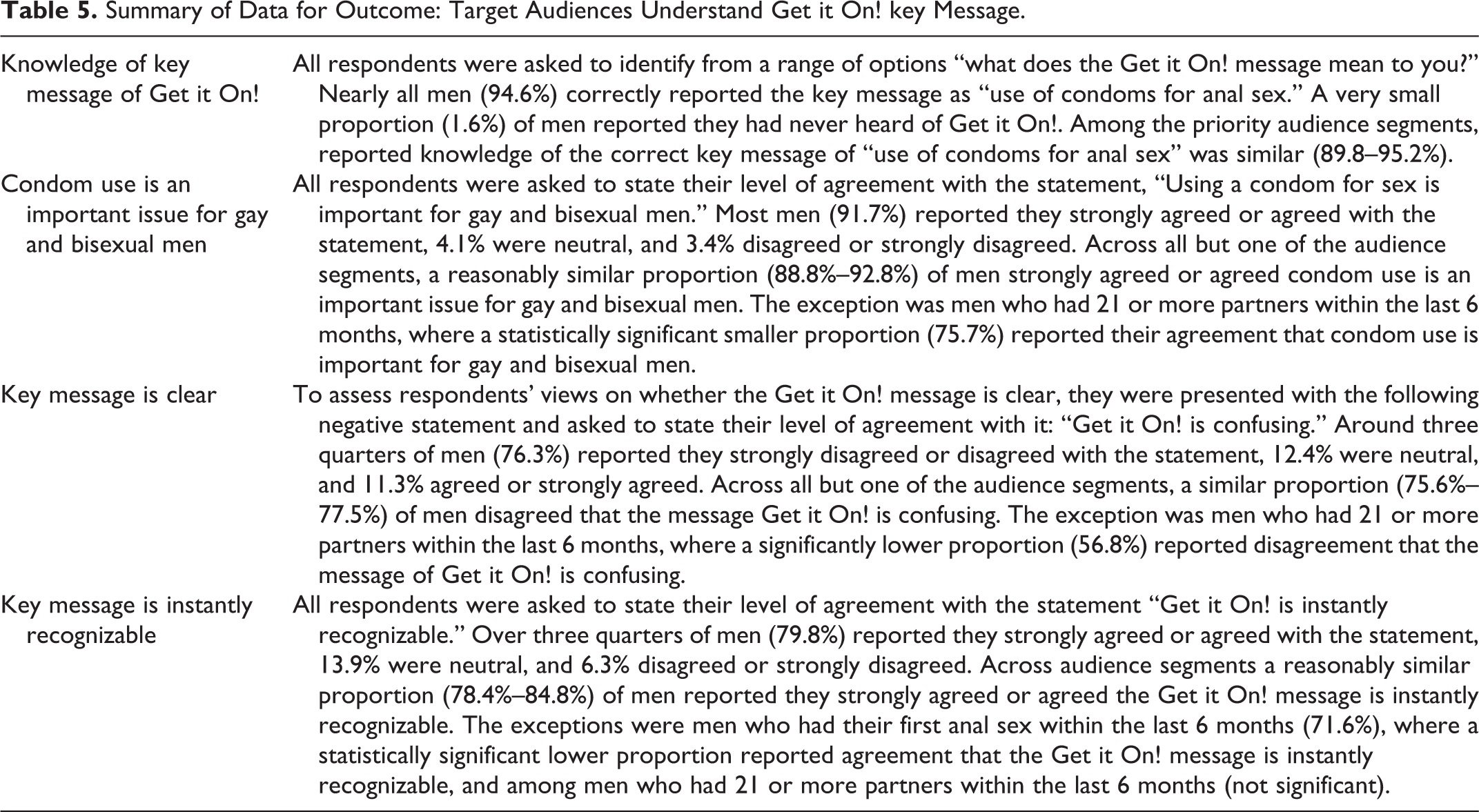

In the Get it On! program evaluation, data for the outcome “Target audiences understand Get it On! key message” were collected via a survey and summarized (Table 5). These data were then mapped to the rubric (Table 1), and an assessment was determined for each criterion:
Knowledge of key message of Get it On! (identify key message): Excellent
Condom use is an important issue for gay and bisexual men: Excellent
Key message is clear: Very good
Key message is instantly recognizable: Very good

Table 5. Summary of Data for Outcome: Target Audiences Understand Get it On! Key Message.

Following the process outlined above, a short descriptive paragraph providing a concise summary of performance should be written. For the outcome question “How well have the targeted audiences understood the Get it On! program’s key message?,” performance was rated as excellent to very good. This determination was made because almost all men had knowledge of the key message of Get it On! and reported strong agreement that condom use is an important issue for gay and bisexual men. Additionally, most men reported the key message was clear and instantly recognizable.

Similarly, assessing the quality of the intervention (How well does Get it On! reflect best practice in social and behavior change marketing?) involved comparing the evidence from the qualitative data and document review (Table 4) with the standards detailed in the rubric (Table 2). An assessment was determined for each criterion, listed below, leading to an overall determination of very good performance in relation to how well best practice in social and behavior change marketing was reflected.
Behavioral outcomes: Excellent
Target market identification: Excellent
Program objectives: Good
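The per-criterion ratings can be condensed into the kind of range statement used above (“excellent to very good”). This is a minimal sketch, assuming a fixed four-level scale built from the labels in the article; the function name is hypothetical. It deliberately stops at reporting the range: the overall determination for the intervention (very good, from ratings of Excellent, Excellent, and Good) came from evaluative reasoning with stakeholders, not from a formula, and the code does not attempt to reproduce that judgement.

```python
from typing import List

# Assumed scale, best to worst, using rating labels that appear in the article.
SCALE = ["Excellent", "Very good", "Good", "Poor"]


def performance_range(ratings: List[str]) -> str:
    """Summarize per-criterion ratings as 'best to worst', or one label if all agree."""
    if not ratings:
        raise ValueError("no ratings supplied")
    positions = [SCALE.index(rating) for rating in ratings]  # ValueError if a label is not on the scale
    best, worst = SCALE[min(positions)], SCALE[max(positions)]
    if best == worst:
        return best.lower()
    return f"{best.lower()} to {worst.lower()}"


if __name__ == "__main__":
    outcome_ratings = ["Excellent", "Excellent", "Very good", "Very good"]
    print(performance_range(outcome_ratings))
```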

Share Lessons Learned (Reporting)

The Easy Evaluation framework supports the development and use of succinct reports. These reports are based around the notion of providing direct answers to specific evaluation questions about the quality of the intervention and its success in achieving predetermined outcomes. Sharing the results of completed evaluation projects widely is important, but often overlooked. Formal reporting will usually involve a written report for project funders and selected key stakeholders (e.g., Adams & Neville, 2013; Wilkinson et al., 2011).

Unlike reports prepared for traditional research projects, evaluation reports increasingly employ innovative data visualization (Azzam et al., 2013; Evergreen, 2016) and reporting practices (Hutchinson, 2017). It is often useful to present summaries of the data within the body of the report (e.g., Tables 4 and 5), with a fuller analysis of results provided in appendices. This helps to shift the thinking from reporting descriptive research findings to considering how data are used in an evaluative sense to tell the “story” of the merit, worth, and significance of the program.

In addition to formal reporting, creative ways of providing feedback to stakeholders should be developed. The media used should be appropriate for each stakeholder group and might include a PowerPoint presentation, an oral report at a meeting, various media channels (print, radio, Facebook, webpage), or an exhibition or display in a public place. Where possible, findings should also be disseminated more widely to academic and practice audiences through journal articles (e.g., Adams et al., 2017; Wilkinson et al., 2014) and conference presentations.

Discussion

Health professionals, in both clinical and non-clinical roles, deliver health services to individuals and communities. Integral to the successful delivery of these services are the knowledge, attributes, and skills needed to evaluate their impact. Consequently, the ability to undertake program evaluation is an important addition to the skill set of all health professionals. Although health professionals have for some time been expected to plan and implement evaluations of small-scale projects (Stevenson et al., 2002), training and support for this group have been lacking.

In this article we have presented a framework to guide health professionals in conducting program evaluation. In doing so, we have highlighted that research and evaluation are not the same, and that it is evaluative reasoning that sets these endeavors apart. We are not suggesting that following this framework will turn health professionals into “professional evaluators.” The framework can, however, inform “non-evaluation professionals” about how to undertake useful evaluation activity (Gullickson et al., 2019) and contribute to building evaluation capacity and capability among health professionals (King & Ayoo, 2020). Small projects, such as student theses, or the application of tools such as logic models may be an appropriate way to begin using the framework. With increased confidence, larger projects may become possible, as may more informed involvement with external evaluators where relevant.

The strength of the Easy Evaluation framework is that its processes offer health professionals a way to embed evaluative thinking into program evaluation. Applying an evaluative lens sets program evaluation apart from research projects that are evaluations in name only: such projects show no evidence of evaluative thinking and lack robust systems for making value judgements about the merit, worth, and significance of programs. An evaluation-specific framing allows evaluators to move beyond simply reporting data to telling a meaningful “story” about a program (Hauk & Kaser, 2020).

Some limitations should be noted. This framework is one way to approach program evaluation, but there are many alternative approaches (for a survey of approaches see Mertens & Wilson, 2019). However, Easy Evaluation has been successfully taught to and used by health professionals and has proved suitable in many circumstances. Further, evaluation approaches that use logic models have been critiqued on the grounds that they are not always suitable for evaluating complex programs (Brocklehurst et al., 2019; Renger et al., 2019). While this criticism is valid in particular circumstances, the approach offered here is well suited to the simpler projects in which health professionals starting out in program evaluation are most likely to be involved.

Conclusion

Providing a way for health professionals to undertake program evaluation will support a better understanding of the merit, worth, and significance of clinical and non-clinical health initiatives. In our view, health professionals have valuable expertise that can enhance program evaluation activity. Easy Evaluation is a framework that will allow health professionals to conduct robust program evaluation.

Footnotes

Acknowledgments

We acknowledge colleagues (Dr Pauline Dickinson, Dr Lanuola Asiasiga, Dr Belinda Borell) who were involved in the development of the Easy Evaluation framework and those who led or contributed to the evaluation projects referenced in this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The development of Easy Evaluation and the teaching of it to the public health workforce is funded by the Ministry of Health, New Zealand. The views expressed here are those of the authors and not those of the Ministry of Health.