Abstract

To the detriment of qualitative health services research, study design is too often presented as a logical consequence of aims or questions. Limited space is afforded to describing the critical processes we go through to devise research for the ever-complex services we seek to understand. This article offers an in-depth examination of qualitative health services research design and the considerations inherent in the process. To illustrate, we present a worked example of our experience developing an investigation to characterize and explore multidisciplinary cancer service provision in hospital outpatient clinics. We map the development of our investigation from the a priori conceptualization of the phenomena of inquiry through to the detailed research plan, explicating the design choices made along the way. We engage with key issues for qualitative health researchers, including how we make sense of and account for context; address multisite research considerations; design with and for stakeholder engagement; ensure epistemological, ontological, and methodological coherence; and select analytical and interpretative strategies. We arrive at a complex staged investigation that employs mixed and multi-methods to be conducted across a range of settings. Our purpose is to stimulate thinking about many of the contemporary design challenges researchers negotiate.


Introduction

This article explores an under-described aspect of the work of qualitative researchers; methodological scholarship rarely focuses on how we actually design studies for health services research. Design is the process of coherently aligning what we wish to learn with what is conceptually and practically possible to glean. A range of theoretical, methodological, and pragmatic determinations must be made at the outset and modified ad hoc. In practice, for researchers, this often means negotiation and compromise, but it also affords an opportunity for innovation. We believe that sharing our experiences of developing novel designs for implementation of health services research in the real world can promote textured reflection upon how we practice. This belief stems from a shared unease about the dearth of published literature “concerning how we think about” the design and conduct of research (Cheek et al., 2015, p. 752). The call to lay bare, examine, and learn from these processes has largely gone unanswered (Cheek et al., 2015; Morse, 1999, 2008).

There are several reasons why sharing our experiences is needed to address this gap. First, we risk promulgating a superficial understanding of qualitative research. To the detriment of our knowledge of the actual qualitative research we do, discussion of the process tends to occupy minimal space in common outputs such as study protocols, articles reporting findings, and reports for funding bodies. In such texts, the rationale for the research aims (RAs) and questions is often described in painstaking detail, while the methodological justification for the design can read as if it is self-evident or as a simple logical outcome of these aims. As captured by Morse (2008) “the plain hard thinking—and the more the better for our research—is hidden behind the elegance of the end product” (p. 1311). In other words, the complex array of often difficult methodological decisions is rendered invisible.

Second, failing to reflect on how we develop designs not only risks stagnation but leaves us under-equipped to adapt when necessary. The bulk of guidance for design comes from texts targeting novice research audiences (e.g., Bourgeault et al., 2010), yet mastery of design is an ongoing pursuit for practitioners—a deep dialogue between researchers and design considerations. As researchers, our understanding is enhanced as we adapt our methodologies to address diverse health settings that are ever-changing, participant population considerations that are a moving target, diverse clinical experiences and opinions, constraints of time and resources, and a landscape of shifting health priorities. Flexibility and adaptive thinking are core items in our qualitative research toolbox. Offering accounts that highlight the emergent and iterative processes of design can demonstrate how our thinking evolves.

Third, by examining such accounts, we can enhance our understanding of many methodological considerations, learning from the ways other researchers negotiate challenges we share. When fellow researchers employ approaches such as the “armchair walkthrough” of a research proposal, posing “what if” questions along the way (Morse, 1999, 2008), or dynamic reflexivity to uncover the thinking which changed the methodological trajectory of an investigation (Cheek et al., 2015), we all benefit. We wish to contribute to these efforts.

Our contribution takes the form of an in-depth examination of an investigation developed within a multimillion-dollar program of research at a Centre for Research Excellence in Implementation Science in Oncology funded by the Australian National Health and Medical Research Council. The research program was conceptualized by research leaders in the fields of implementation science, oncology, social science, and health services and focused on service provision in two geographically defined “health districts.” One of the Centre’s ultimate aims is to develop and implement tailored interventions for public hospital oncology services to support better, more evidence-based, multidisciplinary care (MDC). Before identifying intervention targets, we need to establish a foundational understanding of multidisciplinary oncology care and the service context of its delivery. The Centre therefore begins with a project to characterize cancer MDC, the design of which serves as our worked example.

In Part One of the article, we map the design process for the MDC project, commencing with the a priori conceptualization of the phenomena and culminating in the generation of a detailed investigation plan. We discuss the issues that researchers encounter when they engage with the context of research. This includes how we make sense of context and how we study phenomena in a context where multiple sites are being researched in a complex, multi-method design.

In Part Two, selected methodological considerations are identified as critical to the design process and are explored more deeply. Determinations about stakeholder involvement in research development are explored: Whose input should be sought? By what means? And to what extent? We then consider issues of epistemological, ontological, and methodological coherence. Guided by Willig (2013), we are prompted to think about the philosophical assumptions, the type of knowledge desired, and the conceptualization of the role of the researcher within a given methodology. Finally, we take up the issues to be negotiated when we engage in collective “sense-making.” How can we make sense of different types of data, coherently articulate their in-setting situatedness, and establish their relevance across the studied settings and beyond? Throughout, we highlight possible alternative pathways among the large number of design options from which researchers may choose.

Part One

A Priori Conceptualization of the Phenomena

We began the task of characterizing MDC in hospital outpatient cancer services across the two local health districts by consulting the literature to develop an initial conceptualization of the elements of this care. The principles of MDC in the Australian health context consist of the delivery of equitable, evidence-based care by a team that combines relevant expertise and facilitates active patient involvement in the process (Cancer Australia, n.d.; National Breast Cancer Centre, 2003). Via the literature, we conceptualized constitutive, interconnected elements of MDC as addressing patients’ needs, diverse professional expertise, and decision making. As depicted in Figure 1, these key elements must be coordinated for the effective delivery of MDC, and these elements collectively offered us a working conceptual framework.

Figure 1. Study conceptualization of multidisciplinary care.

A multidisciplinary approach is internationally regarded as best practice in the provision of cancer care (e.g., American College of Surgeons, 2016; Borras et al., 2014), but there is variation in how it is defined, modeled, and practiced. We noted that this diversity is apparent at various scales, not only regionally but also within individual hospital services (Rankin et al., 2018). Our study focused on service provision in five public hospitals serving a resident population of over 1.8 million people; some of these hospitals provide care only for common cancers, while others also cater for rarer tumor types. Given this, we were prompted to consider questions such as: What form does MDC take in these cancer outpatient clinics (OPCs)? How is it organized? What types of practice does it involve? We reasoned that efforts to engage with MDC must be informed by knowledge of local procedures and practices. Thinking about the implications of potential diversity in the organization and practice of MDC helped us to progress toward a focus for the investigation.

Adopting a problem-sensitive lens, we then undertook a more detailed examination of the elements of MDC provision. We may think about this as the identification of a problem, constructed through a mismatch between expectation and outcome; through a poverty of effective means to address an issue; or, as was the case for us, through a limited understanding of the detailed, nuanced world of care delivery across our diverse sites. The literature pointed to critical gaps between optimal evidence-based care and current care in relation to the key pivot points of clinical decision making and addressing patients’ supportive needs (e.g., Dilworth et al., 2014; Hamilton et al., 2016; Rankin et al., 2018; Walsh et al., 2011). Further, these aspects of care were targeted in national health sector reports and local health district plans, marking them as salient areas of focus for our investigation.

While this approach was useful for our purposes, where the literature offered conceptualizations of MDC and an associated evidence base, it may be instructive to contrast it with an alternative in which primacy does not lie with the literature for the conceptualization of a problem or phenomenon. For example, Hack and McClement (2016) document the origins of “dignity therapy,” an oncological psychosocial intervention for palliative care that was derived from grounded theory approaches. Researchers sought to “explicate the construct of patient dignity” through extensive, in-depth interviews with patients who had advanced cancer (Hack & McClement, 2016, p. 501). Analysis of these data informed a theoretical framework that was subsequently developed and validated via quantitative approaches (Chochinov et al., 2002). Grounded theory approaches offer a rigorous process for developing new conceptualizations and theoretical frameworks (e.g., a framework for MDC could be generated and refined on the basis of exploratory interviews with health professionals). A grounded theory approach would be freer from dominant framings in the existing literature, which is appealing when investigating unexplored, emerging, or poorly theorized phenomena.

Engaging with the Context of Practice

Progressing from a preliminary conceptualization, in which we fashioned individual researchers’ views into a team-agreed perspective, led us to document a viable research plan. This was aided by an increasing understanding of the local environments of service provision and the phenomena of interest within these contexts, largely based on the published literature and supplemented by scoping discussions with key managers. In this section, we chart the development of the design from the areas of potential focus already identified, highlighting key considerations to be negotiated along the way. Owing to the nonlinearity of the design process, we used the following questions to structure this discussion: How do we make sense of the health services context? How do we develop a focus that is locally salient and relevant? And how might we then study phenomena in context(s)?

In our investigation, the research settings were prespecified: two local health districts with a combination of large and small hospitals that collectively offer an extensive range of cancer outpatient services. Each local health district contained one large hospital in which the outpatient clinics provided a comprehensive range of consultation and treatment services. The smaller hospitals offered a more limited range of services but were closely linked with the larger outpatient clinics to facilitate access to centralized services (e.g., radiation therapies).

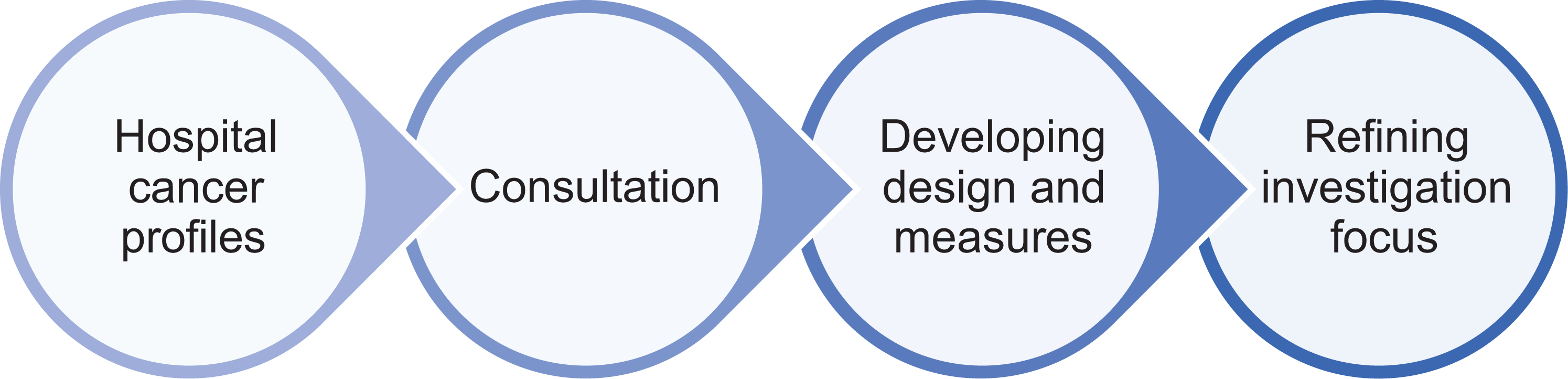

To gain a basic knowledge of cancer OPCs, we sought to generate profiles of each that would function as living documents, in which descriptions would be accumulated over the course of the investigation. Basic structural descriptions were derived from publicly available information such as local cancer plans, reports by peak state bodies, government-reported data sets, and hospital websites. We aimed to extend this through scoping of the OPCs and consultation with managers and other key hospital staff (see the Consultation and Codesign section for details). Core preparatory activities are depicted in Figure 2.

Figure 2. Preparatory activities.

As we engaged with the sites and key staff, our appreciation of the distinction between the research settings and research context deepened (Nilsen, 2015). A textured understanding of the local context of MDC provision was a key basis for formulating the RAs. As a first step in the development process, we found it useful to consider how we may define and operationalize the context for the purposes of our work.

How do we make sense of the context?

What we take to constitute the research context (e.g., the boundaries, attributes, or significant features in a given environment—the health ecosystem under investigation) and the extent to which we need to engage with the context to address the aims of the research have profound implications for design. We surveyed theoretical resources that would equip us with a useful framework to explore these issues. For the purposes of our work, we found complexity science to offer a rich lens through which we can characterize the delivery of MDC (Braithwaite et al., 2017; Holland, 1992; Walker et al., 2009).

Within complexity thinking, health services settings, including oncology OPCs, are considered to be embedded within complex adaptive systems; they are dynamic and responsive to internal (e.g., a new patient-reported measures system) and external (e.g., closure of a nearby service) disruptions (Braithwaite et al., 2013). Critical within these systems are the interactions among multiple interconnected agents (such as members of the medical oncology team, the clinical support team, patients, and their families) that can generate unanticipated outcomes (Holland, 2002). Complex adaptive systems often follow their own internalized rules (not necessarily those prescribed by top-down authorities) and respond to competing demands by exercising discretion and judgment (Braithwaite et al., 2018). Agents who are interconnected through organizational structures also self-organize through other networks, often to achieve shared goals or bridge gaps in formal systems of service delivery (Long et al., 2013). As interconnected networks of agents interact and respond to environmental demands, they not only generate social structures but also communicate, shape ideas, and produce rules by which to live and practice, which may or may not, over time, become formalized into policy (Clay-Williams et al., 2015). While a complex adaptive system is ever-changing, patterns of structure, rules, and behaviors emerge that influence and are influenced by frontline practices (for a comprehensive discussion, see Braithwaite et al., 2013, 2017). This lens informed our understanding of context and in turn had implications for shaping the aims of our investigation.

How do we develop a focus that is locally salient and relevant?

When we engage with complexity thinking, we are trying to counteract our natural tendency toward linear causal assumptions, but this does not make research any easier. We are obliged to appreciate the limitations of relying upon formalized work prescriptions (e.g., guidelines, structural descriptions, policy, or management views), what is termed “work-as-imagined” (Hollnagel, 2012). In providing the best care for oncology patients, for example, clinicians must make myriad small and large decisions, choices, and adjustments in their day-to-day work to accommodate shifting conditions and unanticipated demands while aligning care with the needs of the individual patient. This real-world activity is labeled “work-as-done” (Hollnagel, 2012). Complexity science allows us to appreciate the relevance of the gaps between work-as-imagined and work-as-done in real-world practice (Braithwaite et al., 2018). This thinking enabled us to refine the first RA:

RA 1: To characterize the organization and practice of MDC.

The focus areas identified above, clinical decision making and addressing patients’ supportive needs, may also be explored more deeply through a complexity science lens. In the case of clinical decision making, the literature suggested that while particular care pathways may be envisioned or recommended, actual pathways frequently vary. Multidisciplinary team meetings (MDTMs) are a core component of most MDC service provision, creating a vehicle for relevant oncology and allied specialties to meet and review patients at specified times in the care pathway, but they are never standardized, nor can they be. Although MDTMs offer a formal decision-making mechanism, the literature revealed that this mechanism may not be used consistently or in the way envisioned (Rankin et al., 2018). Rather, clinical decisions are routinely made outside MDTMs, either without any consultation during routine care provision or in consultation with other clinicians who may or may not be part of the multidisciplinary team. Our conceptualization of this process therefore becomes more complex, as represented in Figure 3.

Figure 3. Potential for collaboration in clinical decision making. Source: Authors’ adaptation of Fennell et al. (2010).

This prompts us to consider whether such extra-MDTM collaborations are facilitated by interconnections among agents and with what effects, whether sustaining current rules and practices or promoting new ones. This presents an important opportunity to open up our question to capture clinical decision making as work-as-done as well as work-as-imagined. Thus, we develop this as an aim of the research:

RA 2: To explore, in depth, selected key elements of MDC: (i) to explore decision making in MDC and how clinicians engage with decision-making support mechanisms in practice

As we turn to consider the second specific element, addressing patients’ supportive needs, we found the work of McDaniel et al. (2009) on designing research for complex adaptive systems instructive. They propose research design as the “development of tentative guides for action,” an ongoing activity that yields a capacity to adapt. This is not to say that the process should lack focus or specified purpose; rather, “as researchers pay attention and learn during the research process, they allocate and reallocate attention in a continuous way; they participate in a process of design” (McDaniel et al., 2009, p. 4). Researching in a dynamic context by responding and adapting to perturbations is especially relevant to our purpose, as during the course of our investigation a model of patient-reported measures will be rolled out in some of the OPCs in which we are working. Patient-reported measures involve the collection of routine feedback from patients about their well-being (Girgis et al., 2017). The adoption of patient-reported measures represents a significant shift from current models of supportive needs care. Rather than viewing this as a confounder, we take the implementation of patient-reported measures as an opportunity to explore a natural experiment occurring in the setting. Thus, we extend RA 2 to include:

(ii) to examine how health professionals engage with patients’ supportive needs in MDC (including patient-reported measures)

Planning for this flexibility has implications for the design process. Specifically, as we want to study this care process both before and after implementation, we are restricted to a subset of the hospitals being studied, as the remaining hospitals have already been exposed to patient-reported measures in a pilot program.

How do we study phenomena in context(s)?

We wish to create a design that simultaneously addresses major challenges and takes advantage of opportunities. From the previous discussion, three principles emerged that are instructive for designing the investigation.

The first principle arises from the need to situate the work sufficiently within the different OPCs, each with its own context for work-as-imagined and work-as-done. This raises the well-known trade-off between depth and breadth of information. The risk is not limited to adequacy of resourcing (it is entirely possible to under-resource a small-scale, single-site study) but also relates to context-stripping, such that the output is devoid of features and detail crucial to meaningful interpretation. The emergent principle, therefore, is that our design should incorporate strategies that sustain connection with the setting-specific descriptions that emerge in the collection of data (i.e., per OPC) as part of the process of analysis (see the Making Sense of Findings section).

The second principle derives from the insight that the separate aims of the research are ultimately interconnected, presenting an opportunity. For example, the more we learn about the delivery of MDC, the better positioned we will be to understand practices of identifying and addressing supportive care needs. A useful design principle emerges: we should facilitate a process of accumulative learning. That is, as we grow our understanding through multiple sources of data over time, we can bring new understandings into differing settings.

Third, we have made the case that maintaining openness to a dynamic and changing environment of service delivery is beneficial (as with the patient-reported measures study), and as a result, we should embody the concept of adaptive flexibility within the design. Within the confines of feasibility, we seek to take a broad view initially and reallocate our attention as we learn (McDaniel et al., 2009). Consistent with this principle, we should maintain the possibility of constant iterative revision as a legitimate stage in planning the research.

The design, shown in Figure 4, comprises an exploration of MDC in OPCs using rapid ethnography (RA 1) that feeds into two embedded sub-studies focusing on clinical decision making (RA 2i) and patients’ supportive care needs (RA 2ii). The key elements are described below in terms of their coherence with the RAs and design principles.

Figure 4. Study flowchart.

Addressing the first aim begins with the generation of hospital profiles and is elaborated through the data collected in the following stages. In Stage 1, interviews will be conducted with navigators such as cancer care coordinators, nurse unit managers, and managers of clinical streams, as they are well positioned to provide an overview of the organization of practice, including models of care. We will undertake general observations of MDC delivery in the OPCs and individual observations of professionals from the core multidisciplinary team (MDT) professions to access this care in practice (RA 1). In this first stage, we will also conduct pilot interviews intended to form part of the focused studies in the second stage. At the end of Stage 1, we will consolidate what we have learned, identify any emerging directions in the research, and reflect upon the process as a team. The principle of flexibility underpins this design choice. Amendments to approvals will be sought as required before Stage 2.

We continue to grow familiarity with these settings and enrich our accounts of them via ongoing characterization (including continued observations) in parallel with two focused studies in Stage 2 of the investigation, consistent with the third design principle. Study 2.1 will explore clinician decision-making practices and their engagement with support mechanisms available through the MDT. This will involve interviews with oncology doctors, whose treatment planning may be informed by MDTs, as well as observations of cancer MDTMs (RA 2i), in one group of three hospitals. Study 2.2 will examine engagement with patients’ supportive needs in current practice through interviews with health professionals in OPCs, observations of cancer MDTMs, and surveys of clinician readiness in the other two hospitals. A second phase of the study will examine the implications of the introduction of patient-reported measures through interviews with health professionals and a post-implementation survey (RA 2ii). A range of multidisciplinary professionals will be invited, given the potential OPC-wide implications of practice change. Consistent with the first design principle, rigor and richness will be maintained through a reflective and team-based approach to data collection, the examination of multiple sources of data, and the use of analytical and interpretative approaches formulated for multisite data collection.

Part Two

In this part, selected methodological issues emerging from the design process are engaged with more deeply. The following issues will be considered in turn: “consultation and codesign,” “philosophical coherence and method selection,” and “making sense of findings from across sites and their relevance beyond sites.”

Consultation and Codesign

While the development of research is often assumed to be a shared enterprise, in this section, we tackle the issues surrounding the collaboration of stakeholders as a formal element of the research. We reflect upon whose input is sought, in what ways, and to what extent in the development process.

Greenhalgh et al. (2004) note that: “Locally owned and driven programs produce more useful research questions and data that are more valid for practitioners and policymakers” (p. 616). In other words, rich and locally relevant knowledge may be gained from the perspectives of stakeholders (those with experience and expertise and/or who may be impacted by the research). In developing our design, we drew on expertise from OPC leaders, senior managers, and clinicians within the local settings. This input is congruent with this provider-focused study; for other studies, stakeholder expertise could equally include those in receipt of care and their personal supporters or other groups as appropriate to the research. For example, in oncology, Shaw et al. (2018) drew upon a wide range of such expertise to identify and establish priorities for care coordination in cancer services. This involved eliciting stakeholder submissions and workshop attendance by patients, experts, and cancer service health professionals. A nominal group technique was used to refine and establish measurable, consensus-based priorities for cancer care coordination (Shaw et al., 2018). The expertise of patients and carers was not sought in the design of our studies, as we wish to learn about elements of MDC from the viewpoints of service providers. While this investigation is undertaken alongside patient-centered studies within the Centre’s program of research, we will nonetheless need to reflect carefully upon the implications of this decision as we develop our findings.

The value of researcher–stakeholder collaboration is widely recognized (Wikman et al., 2018), but a strategy is required to put it into practice. To aid clarity, we distinguish the decisions about stakeholder involvement in the design of research from designing for stakeholder involvement in the research. We can draw upon our experiences to illustrate the latter.

We were interested from the outset in establishing meaningful relationships with hospital stakeholders and viewed their input as essential to developing research that was relevant and made sense in the OPCs. To this end, we consulted with on-site clinicians and managers at the early conceptual stages of the research, allowing us to refine the investigation’s focus and the means nominated to achieve it. We developed a better appreciation of the scope of cancer services, interdependencies between facilities in the provision of certain treatments, and the external factors that impact hospital cancer services (e.g., the statewide rollout of patient-reported measures). These discussions drove the development of the studies and helped us anticipate potential ethical and pragmatic issues that could arise. For example, it was useful to discuss the ways in which the research could minimize its impact upon staff time and disruption to routine service delivery.

We sought to extend the engagement of the research team with professional groups (e.g., oncology nursing staff) working within oncology services by providing research information sessions and offering feedback tailored to these groups upon completion of the analysis. We viewed the sharing of expertise and skills as mutually beneficial and elected to offer support and training to eligible professional health care staff who had an interest in joining the research team in Stage 2 studies.

Our research illustrates some engagement strategies that may be embedded when designing provider-focused research but does not fully address other options for stakeholder involvement in the design of research. Participatory action research approaches, for example, highlight alternative design choices (Wong & Chow, 2006). Wikman et al. (2018) illustrate this approach in addressing a deficit of evidence-based psychological interventions for parents of children treated for cancer. They undertook a collaborative research process in which researchers worked with a parent research partner group to codesign an online cognitive behavior therapy intervention for this group. These approaches are characterized by moving beyond stakeholder input at selected points to invite codesign, which may involve partnership, shared ideas, and control over the orientation and focus of the research. The lived experience and expertise of those who may use the intervention are central to research efforts (Wikman et al., 2018). While different studies will have varied aims and input needs, we hold that decisions regarding stakeholder collaboration at any point during the research should be consciously and explicitly engaged with, even where a determination is made not to invite collaboration.

Philosophical Coherence and Method Selection

What of the philosophical underpinnings of the research? Here, we found Willig’s (2013) introductory text helpful in offering conceptual clarity without oversimplification. Willig (2013) poses three questions that guided our efforts to achieve epistemological, ontological, and methodological coherence: What kind of knowledge does the methodology aim to produce? What kinds of assumptions does the methodology make about the world? And how does the methodology conceptualize the role of the researcher in the research process?

Interventional and knowledge translation research is often reported to be embedded in positivist paradigms or to rest on naive or direct realist theoretical positions (Hack & McClement, 2016). However, these foundations are often not explicated, including in some qualitative studies. Unless otherwise stated (e.g., “an interpretative phenomenological approach was used”), an absence of these details may lead the reader to assume, perhaps inaccurately, the aforementioned philosophical bases by default. Qualitative approaches offer an array of strategies for inquiry, which occupy (to differing extents) a diverse and rich tapestry of epistemological (how we might know), ontological (assumptions about what there is to know), and methodological (the strategic framework for uncovering this knowledge) positions. These basic assumptions help to coherently thread together the aim, methodological framework, and methods. A lack of detail regarding the basic assumptions of the inquiry makes it difficult for the reader to interpret and evaluate the findings (including against the internal objectives of the study) and to assess the appropriateness of the selected methods of data collection and analysis. Thus, here, we examine our methodological choices and their justification for each of the questions posed (Willig, 2013).

The first question concerns what the research seeks to know and the means through which we believe it can best be known. Aims may range from learning how patients come to experience recovery to comparing outcome indicators following treatment; each would be best served by different methods.

For us, an account of MDC is needed that reflects on-the-ground practice, including practices that may be innovative or divergent. Scholarship delineating the space between work-as-imagined and work-as-done alerts us to the fact that while hospital policies and procedures specifying the organization of care are important, they are limited (Clay-Williams et al., 2015). To explore everyday practices and the organizational relations that shape them, we must select methods allowing observation of formal collaborations (such as multidisciplinary cancer care meetings), observation of health professionals in the process of delivering care, and general observations to understand the activities of the OPCs (see Figure 5).

Methods summary.

We also wish to deepen our understanding of selected elements of practice by harnessing the perspectives of health professionals through surveys and interviews. We draw on their knowledge to guide our understanding of the processes and organization of care (e.g., by mapping typical and atypical patient journeys during interviews) and to gain insight from their experiences of delivering care.

The second question, centered on methodological assumptions, concerns the ontological conventions that underpin the methodology. Positions adopted by researchers range along a spectrum from direct or naive realism to relativism (i.e., there are as many realities as there are participant perspectives). Illuminating real-world provision necessitates adherence to some form of realist ontological position. This is further cemented by the desire to provide a basis to inform interventional research. In other words, we begin with the assumption that there are discernible factors that may shape the trajectory of practice. For example, to gauge the implications of introducing patient-reported measures into routine practice, we take clinicians' attitudes to be a potentially influential factor in their adoption; measuring these attitudes can provide information about preparedness and change over time.

However, a consistently applied direct realist position would require us to believe that we have directly accessed the unfiltered and "true" reality through observations and accounts from health professionals. We do not hold this to be the case. Where we diverge from a direct realist framework is in our epistemological assumptions, that is, in how we can know this reality (Bentall, 1999; Harper & Thompson, 2011). From this position, neither the accounts of health professionals nor what we capture in observations can be assumed to mirror reality perfectly (Bentall, 1999; Harper & Thompson, 2011). As Willig (2012) puts it, "the underlying structures that generate the manifest, observable phenomena are not necessarily accessible to those who experience them (i.e., the research participants)" (pp. 13–14). As a result, the investigation adopts a critical realist position (Bentall, 1999; Harper & Thompson, 2011). The experience and expertise of health professionals are valuable, if not essential, for characterizing care and identifying those aspects that impede or promote adoption of particular practices. These accounts offer a partial insight into complex phenomena, mediated by specific roles, situated in settings, and derived from professional experience.

Willig's (2013) third question challenges us to consider the researchers' role. A social constructionist framework might assume that researchers inevitably bring their subjectivity to bear in actively constructing the findings and may treat this subjectivity as a resource rather than a burden to be reflexively mitigated. This contrasts with a direct realist framework, in which we may wish to minimize the influence of the researcher because bias could distort the fidelity of the findings. We recognize the challenges in capturing the reality of MDC provision and see ourselves as actively interpreting what we observe and learn through interviews. Furthermore, our interpretations may shape the trajectory of the research, as we have built in the flexibility to choose which OPCs, or which interview participants, receive further sessions in the second stage of our work. That said, we maintain a desire to provide the most grounded and comprehensive account possible. We use a team-based approach, which is often advisable where rapid or intense data collection occurs (Charlesworth & Baines, 2015; Millen, 2000); this will allow us to learn from each other's insights and to build consensus through ongoing team discussion and reflection.

In our approach to methodology, we emphasize the need for coherence across the selected methods in anticipation of the findings informing the intervention studies to follow. Not all researchers would agree that such coherence is a requirement (Duggleby & Williams, 2016). An alternative position that some researchers may find worth considering is that taken by Duggleby and Williams (2016) in relation to intervention development. They contemplated the diversity of philosophical assumptions and epistemological considerations that correspond with qualitative and mixed methods approaches; in doing so, they suggested that diversity may in itself be advantageous, as "diversity broadens the understanding of the human experience and situations upon which the intervention is based to effect change" (Duggleby & Williams, 2016, p. 152).

Making Sense of Findings From Across Sites and Their Relevance Beyond Sites

The final section of this article, before concluding, addresses the sense-making process; that is, the means through which we derive findings from collected data and ascertain their implications. This raises the following question: How do we make sense of different types of data, coherently articulate their in-setting situatedness, and derive their relevance between and beyond settings?

Working with multiple and diversely sourced data originating in separate settings has tremendous potential to yield rich and interesting insights (and, in our case, to optimally address the RAs). But these benefits can only be realized where analytical strategies are sufficiently sophisticated.

It is useful to establish the rationale for using multiple or mixed methods. Clarity is needed about whether the methods allow for multiple insights into the same phenomena of inquiry (e.g., studying MDTMs through observations of meetings and interviews with professionals who take part), whether the methods target differing components of a phenomenon, akin to assembling it from its parts, or whether they do both. The last is the case for the MDC project: we selected differing methods that provide the best view of differing aspects of the process (e.g., direct observation of formal and informal mechanisms) and that allow us to explore the key processes and ideas from multiple vantage points (combining interviews with these observations).

As a conscious choice, we make no attempt to integrate these data and instead regard them as complementary. While this is a minor component of our work, a body of mixed-methods scholarship highlights more sophisticated possibilities (and their conceptual bases) for integrating these data points, along with the accompanying risks (O'Cathain et al., 2010).

Qualitatively driven research that includes a quantitative component must additionally consider how this component relates to the associated qualitative data and what role it plays in the overall sense-making process. We collect a small, discrete quantitative component in the form of a clinician readiness survey. The 8-item survey will be administered before the implementation of patient-reported outcomes to examine preparedness and again after their introduction to examine any change in attitudes (Willis et al., 2009). The survey offers distinct information about a factor (the attitudes of health professionals) that may be useful to measure, in tandem with other data, to understand shifts in supportive care delivery.

The next set of issues encountered when developing an analytical strategy stems from the desire both to recognize the site specificity of data and to articulate a collective account of the provision of MDC in the hubs. Even if we temporarily sidestep this issue, we are eventually faced with gauging the relevance of findings for settings beyond this research, confronting a different facet of the same issue: the generalizability of findings. The nuances and specificities of research context underlie these issues. Engagement with the particulars of context within the analytical and interpretive processes is well traversed within qualitative methodological scholarship and even offers a means through which quality may be assessed (e.g., see Elliott et al., 1999, on situating the sample). Clearly, we need to devise an analytical strategy that allows us to ground our interviews and field notes in their settings while carefully eliciting the important strands of meaning that thread through the corpus of data. Relatedly, transparency about the selected analysis would help us to better gauge how our findings may be relevant beyond the immediacy of the settings studied.

Contextualization and generalizability are often pitted against each other, a tension that requires unpacking to inform the design process. Generalizability is not typically or necessarily a useful standard for qualitative researchers and may be fundamentally incongruent with the theory, aims, and methods used in qualitative investigations. This does not mean that qualitative findings lack relevance beyond the study; rather, the underlying premises diverge, creating the need for appropriate conceptual tools and standards. These and related ideas have been explored in a number of ways: "transferability" (Henwood & Pidgeon, 1992; Lincoln & Guba, 1985), "applicability," or, as may be particularly relevant for knowledge translation research, "analogical generalization" (Duggleby & Williams, 2016). Regardless of the terminology, in essence, we are invited to question whether we conceptualize context as wholly unique or as possessing characteristics that may be confidently identified in similar settings. To make sense of the data, we should reflect further on the extent to which context may be regarded as productive of findings (e.g., Are the findings irreducible from their context, or merely related to or influenced by it?). Of course, such reflection is not always required, as some qualitative approaches (e.g., autobiographical or interpretative phenomenological analysis) may be employed without any desire to consider implications beyond the individuals or settings studied.

The matters raised are not only conceptual but also pragmatic when it comes to planning. As Jenkins et al. (2018) observed, a rich body of scholarship exists to guide researchers in achieving richer contextualization of a single site; however, little guidance exists on how to accomplish this in multisite, multi-method research beyond what is specified for the single site.

The "qualitative rapid appraisal, rigorous analysis" approach, developed specifically for multisite analysis, is one of the few available frameworks (Phillips et al., 2014). We selected this approach because of its track record with similar methods and its coherence with our previously described methodological position concerning the retention of site specificity (Phillips et al., 2014). In basic terms, the approach begins with an analysis of like data (e.g., all interview data); it then takes a particular practice (setting) as the unit of analysis in an intra-site analysis, developing a rich picture of that site. Finally, it moves to an intersite analysis that uses the distinct sites to develop a comparison (for full details, see Phillips et al., 2014).

Given the lack of scholarship proposing multisite analytical frameworks, we point to an alternative approach. Jenkins et al. (2018) tackled the issue of multisite analysis using interviews and field notes derived from ethnographic observations at two sites. In their within-site stage of analysis, they analyzed each site's interviews as guided by the field notes derived from observations. Eschewing the term cross-site analysis, which they regarded as devaluing site-specific context, they conducted a between-site analysis in the next stage. Here, the researchers examined commonalities and differences and facilitated the development of spaces between sites where new insights might emerge. Instead of then summarizing the overall findings from the sites, they undertook a second within-site analysis to reengage with the site-specific data. This served as a reconsideration and exploration of the fidelity of their interpretations. Because this publication is relatively recent, no other published examples are yet available to evaluate the feasibility of the approach across larger numbers of sites.

Discussion and Reflection

As with Morse's (1999, 2008) work on the armchair walkthrough or Cheek et al.'s (2015) dynamic reflexivity, we too found the process of developing qualitative research to be anything but simple and linear. Here, we reflect on critical points in the process and share what we found helpful in advancing our thinking. The first critical point concerns the progression from tentative ideas about what is important to investigate toward finalized RAs. Given the anticipated contribution of this research to informing implementation in local health districts, local relevance and salience ought to be key attributes of these aims. While these requirements will vary between researchers, this progression can bring uncertainty, as it forecloses other avenues of exploration and may privilege certain methodologies as better placed to address the aims.

We found certain strategies that sensitized us to the context of inquiry helpful in this regard. Consultation with hospital stakeholders confirmed some of our assumptions and relieved us of others, while compelling us to speak in more concrete terms: that is, what each method would require of health professionals at the hospitals. Learning about issues of local importance prompted consideration of what meaningful role the research could play and, in turn, of the design implications. This helped us to think about how we might align the ideal, the possible, and the pragmatic in our investigation.

We also recognized that while we were becoming increasingly convinced of the need to account for the variation and complexity of our sites, we lacked a frame of reference through which to do so. We found it useful to engage with theoretical resources, which led us to a lens through which we refined the focus of our RAs and which provided guidance concerning the form the investigation might take. We are not advocating the widespread applicability of complexity theory for others, but we do contend that design is better informed where we make efforts to crystallize our conceptualization of health services contexts and their bearing on the given phenomena of study.

Another critical point stemmed from our efforts to bring the aims, methodological framework, and methods into coherence. In reality, we find that underpinning philosophical positions are rarely determined solely on the basis of a researcher's view of the nature of knowledge. Rather, they are fashioned through interaction with a range of specifications and concerns: in our case, the overarching aim of characterizing MDC. We found that the coherence of our investigation benefited from teasing out and grappling with these concerns. For example, the desire to inform future implementation lends itself to a realist ontological position, but as we thought this through, we reasoned that our efforts to understand MDC would be best served by a critical divergence in our epistemological position.

Further, through these efforts, we enhance our understanding of tensions and pressing issues in qualitative methodology (e.g., negotiating in-setting situatedness with applicability beyond settings). As qualitative researchers, we have much to learn as we seek this coherence when developing designs for new health settings, problems, and populations.

The final critical point concerns our experience formulating the three design principles. In essence, these are the culmination of what we learned through an iterative and interactive process of engagement with theory, literature, stakeholders, and methodological issues. While we found this challenging, we would encourage researchers to articulate the requirements and desirable properties of a given study. Doing so not only offers a template for exploring methodological approaches but also helps to illustrate the trajectory between what we seek to learn and the detailed procedure through which it will be investigated.

We describe a complex staged investigation that employs mixed and multi-methods across a range of settings, offered to stimulate thinking about many of the contemporary design challenges researchers negotiate. We considered strategies for developing contextually relevant RAs, deriving design principles, and addressing the challenges of multisite research. How we design with and for stakeholder engagement, ensure philosophical coherence, and make sense of the findings are key considerations. In this article, we do not propose that the specifics of our design be instructive or our process prescriptive, nor do we claim to have invented anything new. Rather, we have sought to contribute to the project of how we think about qualitative research design, a project that is, in our opinion, well worth investing in.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian National Health and Medical Research Council [grant numbers APP9100002 and APP1135048].