Abstract

Recent methodological work that draws on a ‘constructionist’ approach to interviewing conceptualizes the interview as a socially-situated encounter in which both interviewer and interviewee play active roles. This approach takes the construction of interview data as a topic of examination. This article adopts the view that close examination of how particular interactions are accomplished provides additional insights into not only the topics discussed, but also how research design and methods might be modified to meet the needs of projects. Focus is specifically given to investigation of sequences observed as puzzling or challenging during interviews, or via interview data that emerged as problematic in the analysis process. How might close analyses of these sorts of sequences be used to inform research design and interview methods? The article explores (1) how problematic interactions identified in the analysis of focus group data can lead to modifications in research design, (2) an approach to dealing with reported data in representations of findings, and (3) how data analysis can inform question formulation in successive rounds of data generation. Findings from these types of examinations of interview data generation and analysis are valuable for informing both interview practice and research design in further research.

Although Charles Briggs in his 1986 book, Learning How to Ask, urged researchers to attend to the communicative structure of interviews, it has taken some time for his recommendations concerning the design, conduct and analysis of interview data to be seriously addressed by qualitative researchers. Holstein and Gubrium (Gubrium & Holstein, 2002; Holstein & Gubrium, 1995) have contributed significantly to this work through their conceptualization of the active interview in which researchers attend to both ‘what’ is said and ‘how’ data are co-constructed in interviews. More recently, researchers in a wide range of fields have discussed how constructionist theorizations of interviewing might be used to accomplish more complex readings of interview data (for example, see the 2011 special issue of Applied Linguistics, volume 32, issue 1).

In this paper, I use a ‘constructionist’ approach to interviewing, in which interviewers and interviewees are seen to “generate situated accountings and possible ways of talking about research topics” (Roulston, 2010, p. 60). As Holstein and Gubrium (2004) comment: “Both parties to the interview are necessarily and unavoidably active. Meaning is not merely elicited by apt questioning, nor simply transported through respondent replies; it is actively and communicatively assembled into the interview encounter” (p. 141).

Much methodological writing on qualitative interviewing indicates that interviews often do not proceed as planned, and that researchers must continuously deal with challenges as they arise during interviews. For example, Schwalbe and Wolkomir (2002) talk about various challenges that have arisen in interviews with men and suggest strategies that might be used by interviewers; Riessman (1987) discusses troubles encountered in interviewing a Puerto Rican woman that resulted in misunderstandings on the part of the interviewer; Johnson-Bailey (1999) examines the schisms of color and class that emerged in her interviews with African-American women; Roulston, deMarrais and Lewis (2003) review challenges in conducting interviews reported by novice researchers; and Adler and Adler (2002) describe a range of “reluctant respondents” (p. 515) who might confound interviewers' purposes. This paper adds to this body of literature by exploring a range of challenges that arose both during qualitative interviews and in analyzing and representing data for a qualitative evaluation study. Specifically, I demonstrate how findings from a methodological analysis of how interview data were generated might inform both the design process and interview practice.

Research Design and Methods

This paper draws on data from a qualitative evaluation of a Health Resources and Services Administration Curricular Grant Implementation at a Family Medicine Residency Program in the United States that was conducted from 2007 to 2010. The aim of the study was to describe stakeholders' perspectives of a training program in Mind Body/Spirit (MB/S) interventions for residents in Family Medicine preparing for careers as family care physicians. Although the study included field notes of observations, surveys and documentary data, this paper addresses the challenges that occurred in interviews. Over a three-year period, three focus groups and 77 individual interviews with 57 people were conducted. Interviewees included 47 residents, six faculty members, and four instructional faculty members. All but four interviews were conducted face-to-face by the author and all were audio-recorded. Interviews averaged 40 minutes in length and were transcribed by a research assistant, a professional transcriber and the author. The author listened to all audio-recordings that she did not transcribe to check the accuracy of transcriptions.

Theoretical perspectives and data analysis

For the four evaluation reports delivered to stakeholders in January 2008, 2009 and 2010, and August 2010, all data were analyzed inductively (Coffey & Atkinson, 1996) in order to generate themes to represent emergent issues in program implementation. The author also drew on conversation analysis (CA) as a method of investigating interactions that were puzzling or problematic, although these analyses were not included in evaluation reports. CA as a method of examining talk-in-interaction was developed by the sociologist Sacks and his colleagues (Sacks, 1992; Schegloff, 2007) and focuses on examining the conversational resources used by members in everyday interaction (Psathas, 1995; Have, 2007). Although CA was originally used to analyze mundane talk rather than research interviews, scholars have used methods drawn from CA and ethnomethodology (EM) (Garfinkel, 1967; Have, 2004) to investigate the generation and analysis of interview data (Baker, 1983, 2002, 2004; Bartesaghi & Bowen, 2009; Mazeland & Have, 1998; Rapley, 2001, 2004; Roulston, 2006; Schubert, Hansen, Dyer & Rapley, 2009).

This paper draws on both thematic analyses of data and methodological analyses using CA. In sections in which the focus of analysis is on the turn-taking, sequential organization, and preference organization – i.e., examination of how participants select utterances from a range of “non-equivalent” alternatives (Liddicoat, 2007, pp. 110–111) – transcription conventions developed by Gail Jefferson (Psathas & Anderson, 1990) are used. These conventions provide additional information concerning how talk is produced – including pitch, re-starts, elongations, pauses, gaps and overlaps in talk. Through analysis of these features of talk, additional insight into how speakers make sense of one another's talk may be gained. For example, speakers' delays in providing an answer may indicate that the response is ‘dispreferred,’ or one that is routinely avoided. For those excerpts in which the analytic focus concerns the substantive topic of the talk, these features of talk are omitted from transcriptions.

Findings

When attention is paid to how participants of interviews construct their accounts, insights may be gained into whether the research design is effective in generating data to inform the research questions, as well as whether or not the formulation of questions asked of interviewees facilitates interaction that contributes to a study's purpose. In this paper, I address several issues. First, with respect to questions of research design I discuss the use of focus groups, dealing with reported data in representations of findings, and working on question formulation to inform further fieldwork. Second, I look specifically at how interview questions might be posed in order to facilitate interaction with participants who resist cooperating with the interviewer.

Questions of research design

Using focus groups

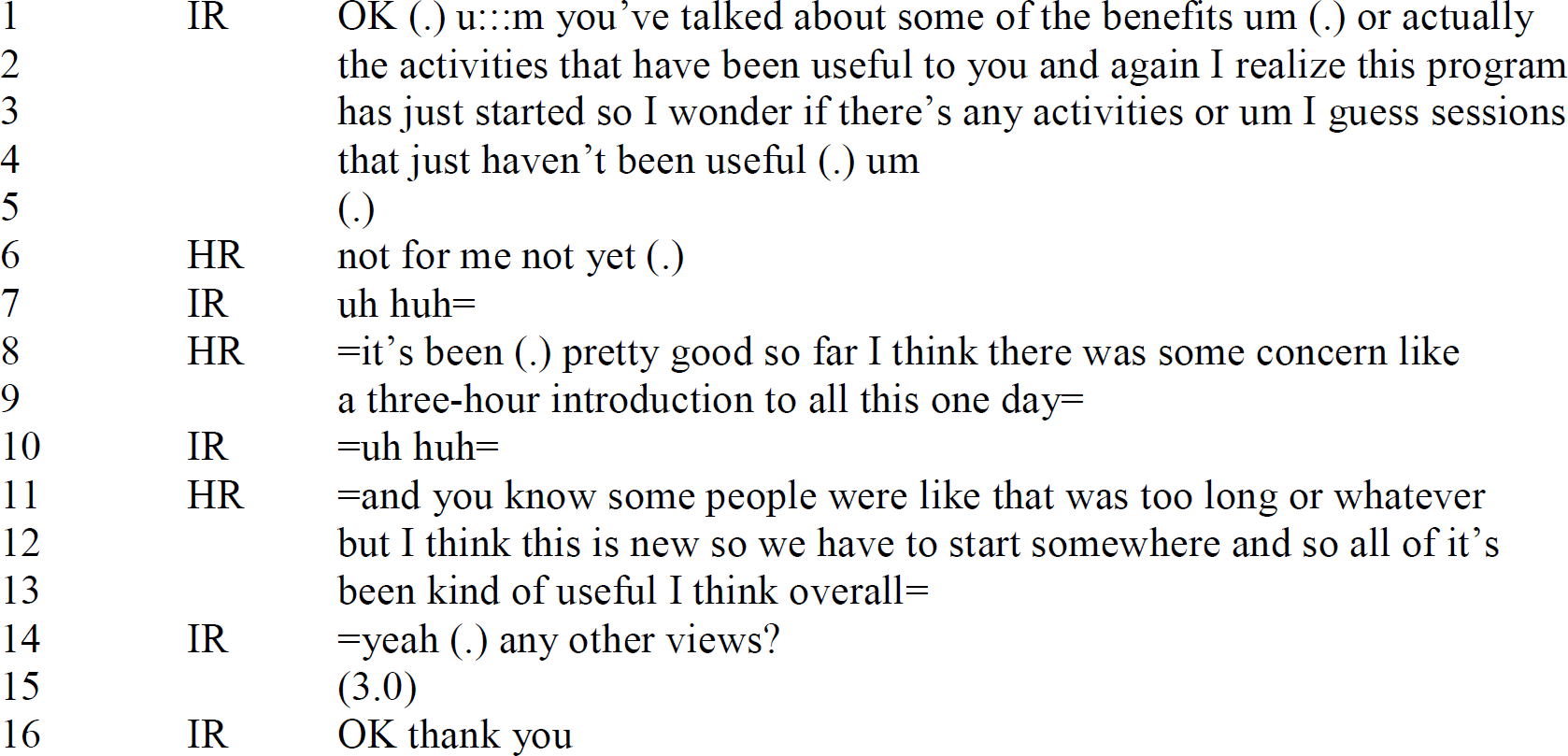

In the first round of data collection for the study conducted in October 2007, some residents expressed reservations about participating in the evaluation study, were reserved in discussing their views, had little to say, or chose to remain silent in focus groups. While the reasons for the reluctance expressed by some residents were not completely apparent, it appeared that some participants did not feel comfortable talking about their personal views about MB/S with the two moderators, particularly in the presence of peers in the focus groups. For example, in Excerpt 1, a sequence of interaction is drawn from a focus group with 6 of ten 3rd year residents.

Focus group with 6 of ten 3rd year residents, October 2007

This was the first occasion that the researcher had met with this group, and questions focused on learning about how residents defined the key concepts represented in the training program. The program had begun two months prior to this meeting, and the stakeholders (i.e., faculty involved in program delivery, program consultants and the director of the residency) were interested in learning about initial responses to the program activities. In Excerpt 1, one of two Head Residents (HRs) provided a positive opinion on the training sessions that downplayed suggestions that there might have been any problematic aspects (lines 8, 12–13). This assertion is made in a way that both notes concerns expressed by others (line 11), and minimizes (line 8, “there was some concern”; line 11, “that was too long or whatever”) and disagrees with these (line 12, “but I think this is new”). In the next turn, the moderator responds with “yeah”, and asks for other views (line 14). Here, the moderator's “yeah” functions as a response token to the prior turn, rather than agreement, since it is immediately followed by a question asking whether there are “other views.” The moderator's question is followed by a three second silence (line 15) from the group. Conversation analytic work on turn-taking has shown that when the first pair part of an adjacency pair (in this case the question posed at line 14) is followed by silence or a gap, then the missing second pair part (in this case an answer and speaker change that is not forthcoming at line 15) is problematic (Liddicoat, 2007). The lack of either a response to the moderator's question or any agreement or orientation to the prior speaker's turns at lines 8–9 and 11–13 suggests that others in the group did not endorse the HR's positive assertions that the program had been “pretty good so far” (line 8) and “all of it's been kind of useful” (lines 12–13).

This interpretation was supported by other data examined in this phase of the study. For example, other residents had expressed a number of criticisms in the anonymous written evaluations of the two sessions that the HR referred to in Excerpt 1. Further, some of the 1st year residents critiqued various activities in the training sessions during individual interviews. Examination of the negative evaluations that were expressed in the focus groups showed that these focused more on concerns that physicians might have in applying skills covered in the training sessions in their future practice than on criticism of program activities. Although there were few instances of overt criticism within the focus groups conducted in the first round of data generation, residents from all three years expressed the following criticisms of program activities in individual interviews and written evaluations:

Participants commented that they would like to learn more about concrete applications of MB/S rather than abstract principles. Residents specifically wanted to know how they would apply MB/S in 15-minute consultations in their work as family medicine physicians, would like to have access to practical examples of physician-patient interaction with respect to MB/S and would like to have practice in applying MB/S interventions (e.g., role plays).

Some participants found the 3-hour lectures too long, especially after lunch.

Some participants wanted access to specific evidence that supported the use of MB/S interventions in patient care.

Some participants commented that the material in the training sessions could be delivered more efficiently; or that the information included was “repetitive,” or already known.

Two residents expressed the view that the MB/S training program should be an optional part of their residency requirements.

When asked for evaluations of what activities in the training program had not been useful, both 2nd and 3rd year residents refrained from offering negative evaluations of activities in the focus group setting. As Excerpt 1 shows, when asked for evaluations of what had not been useful in a focus group context, 3rd year residents refrained from offering negative evaluations even though they were invited to comment further. In fact, only one resident expressed a criticism of program implementation in either of the focus groups conducted in the initial round of data generation.

In a second example, Excerpt 2 shown below, a speaker (R15) who provided a positive evaluation of the training program is supported by another speaker (R2). However, when the moderator of this group followed up on R15's positive claims about the training program to question whether there was agreement from others on this viewpoint (lines 6–10), R15 resisted commenting on others' viewpoints within the group setting (lines 11, 13, 15–16). These co-present residents had not expressed their opinions, and did not do so within the subsequent group interaction.

Focus group with 7 of ten 2nd year residents, October 2007

Excerpt 2 opens with R15, an active participant throughout this focus group, beginning an assertion on behalf of “all of us here” (line 1). This assertion is immediately self-repaired by R15 to “ones who have spoken up.” This utterance orients to co-present parties who had not expressed any opinions thus far (two of the participants did not participate in the focus group at all). R15's assertion is overlapped by a supportive utterance from another group member: “well it's important right” from R2 (line 4). In this excerpt, R15, supported by R2, claims a positive view for several speakers in the group (lines 1–5). In response to this collaborative assertion, the moderator orients specifically to R15's self-repair at line 1 by asking “is there an assumption that those who have not speak spoken up in the focus group that they are not interested?” (lines 8–10). This is a rather unusual move by the moderator, in that she asks R15 to openly evaluate the “interest” of two co-present speakers who have thus far remained silent. (An alternative move on the part of the moderator would have been to nominate those speakers who had remained silent for the next turn.) The moderator's utterances at lines 6–10 contain both a formulation of prior talk (Heritage & Watson, 1979), in which she repeats what R15 has said, and a closed question asking her to assess the moderator's formulation of prior talk. This question is both formulated to prefer a yes (or agreeing)/no (or disagreeing) response, and includes a presupposition on the part of the interviewer (that R15 has asserted that “there is an assumption that those who have not speak spoken up in the focus group are not interested”) (cf. adversarial question formulation by news interviewers in Clayman & Heritage, 2002). Here, R15 is faced with a dilemma. Agreement with the moderator's formulation is a preferred response – that is, one in which no further explanation would be necessary.
Yet, in this instance, for R15 to agree with the moderator's formulation of her talk (that “those who have not speak spoken up in the focus group that they are not interested”) would be to also assert a viewpoint on behalf of others who have remained silent – a risky position open to immediate refutation by co-present parties. R15 manages this interactional difficulty by vigorously disagreeing with the moderator's formulation (line 11), and re-stating the position presented earlier. Interestingly, R15 repeats the self-repair made earlier, beginning the assertion with “from what ev-” (cutting off “everyone”, line 11), restarting with “from we've all said,” and then self-repairing with “from those who have spoken.” R15's response to the moderator's follow-up question re-emphasizes her positive assessment on behalf of the “ones who have spoken”; and declares that “I can't speak for the ones who haven't spoken” (lines 15–16).

Later in the day, the moderator who conducted this focus group spoke to one of the two residents who had chosen not to speak during the group. In this interaction, this resident expressed concerns about the training program and the way in which it had been implemented. During the focus group these views were not expressed, and in speaking to the moderator on an individual basis, the resident commented that the choice to not speak during the focus group was based on wanting to avoid presenting contrasting and disagreeing views. Close analysis of how interaction took place in these two focus groups indicated a need to modify the initial study design, and discontinue the use of focus groups in favor of conducting individual interviews. This modification was made in the next round of data generation to allow residents who had little to say in focus groups a venue to discuss their views privately with the researcher, and was effective in generating responses from those residents who had been reluctant to discuss their views in the presence of peers. Examining these excerpts in detail makes clear that participants orient both to interactional problems in the focus groups and to the implications of what they say for overhearing audiences. These sorts of puzzling interactions are deserving of close inspection, yet may be lost during typical coding-based analysis aimed at generating topical themes.

Dealing with reported data

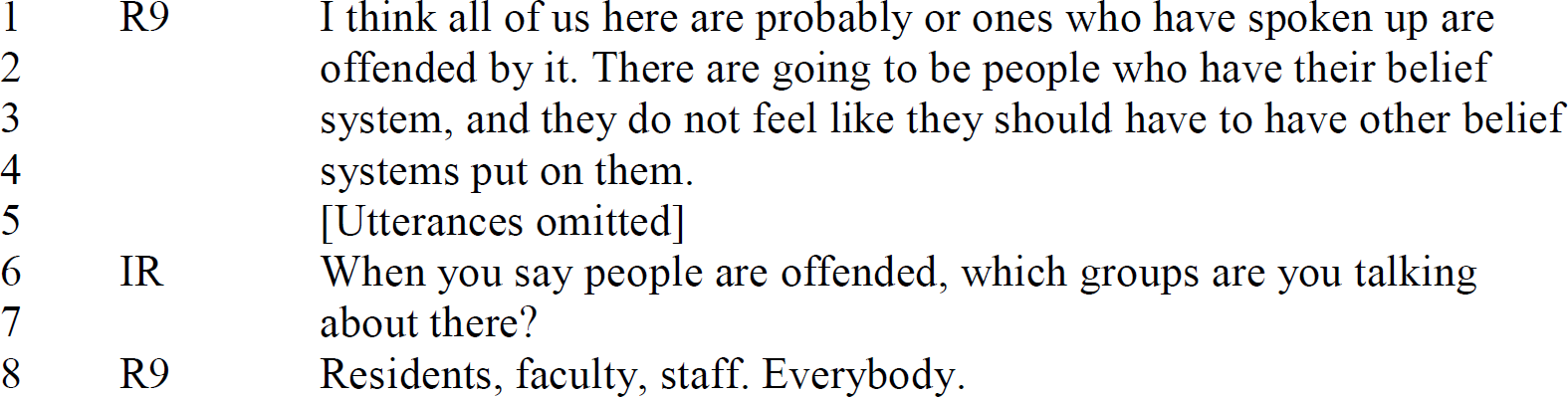

In interviews, some participants reported on “other people's beliefs.” These included the opinions of staff, other residents, faculty and so forth. In the first evaluation report to stakeholders, these viewpoints were clearly identified as “reported views of others.” For example, in the initial interviews conducted in October 2007, a first-year resident expressed a negative view of the training program, rejecting any value in learning about MB/S interventions for professional or personal purposes. R9 stated a number of times during the interview that the MB/S training program would have no impact whatsoever on his views or practice, and asserted that many others in the residency were “offended” by the program.

Individual interview with first-year resident, October 2007

R9 linked the offense described specifically to the very first session in which the program had been introduced. This session was an open meeting for all faculty, staff and residents involved in the Family Residency program. R9 claimed that audience members were “blind-sided” by the presentation. When the interviewer asked for further detail about this event, it appeared that a particularly problematic issue in R9's view was the inclusion of a guided meditation.

Individual interview with first-year resident, October 2007

When the interviewer later asked R9 to provide examples of the kinds of things each of these groups would typically say, the assertion regarding faculty seen in Excerpt 3.1 was modified:

Individual interview with first-year resident, October 2007

R9 provided a forthright evaluation of the MB/S training program — in R9's view it was not beneficial, was not valuable in terms of any learning outcomes, and would not promote any change in practice. R9 went further to assert that what had been presented in the MB/S training program had “offended” many within the Residency, including faculty, residents, and staff. These kinds of claims suggested that further information from other members of the residency was needed in order to verify the claims that many were offended by the training program in the way R9 described.

As a result of these accounts, at the end of the first year, faculty members who were not involved in program implementation in instructional roles were interviewed. An informal interview was also conducted with an administrator knowledgeable about the residency as a whole. By modifying the design of the evaluation study to include other people involved in the residency who were not residents or instructors in the MB/S training program (an administrator and six faculty members), further perspectives were gained concerning the views of others. Analysis of data showed that R9's claims about “others' views” could not be substantiated. For example, all six faculty members interviewed claimed to be supportive of the MB/S training program, even though they had different degrees of involvement and were aware of resistance to the program among some of the residents. Attendance records indicated that the mean number of training sessions attended by faculty members not involved in program instruction at the residency in the first year of implementation was 3.07 (mode = 1). For the same period, one faculty member had attended 8 sessions, and another 6.

These examples demonstrate how claims made by interviewees can be examined further via other sources of data (in this case, attendance records), as well as by modifying the research design to include more participants. Of course, this process is commonly referred to as data triangulation (Seale, 1999). In this particular study, multiple sources of data in the form of documents, written evaluations, attendance records and interviews were part of the original research design for the evaluation. Since the focus of the study was on residents' perspectives and experiences in the program, the initial design had not included interviews involving faculty members not directly involved with the program. Given the forthright assertions with respect to the faculty made by R9, it was worthwhile modifying the research design in order to capture viewpoints of those to whom he had referred. Thus, this resident's claims that the program offended “everybody,” while not supported in other data generation as an accurate portrayal of others' viewpoints, may be viewed as a powerful rhetorical device used within the interview to portray his personal responses to the program.

Working on question formulation

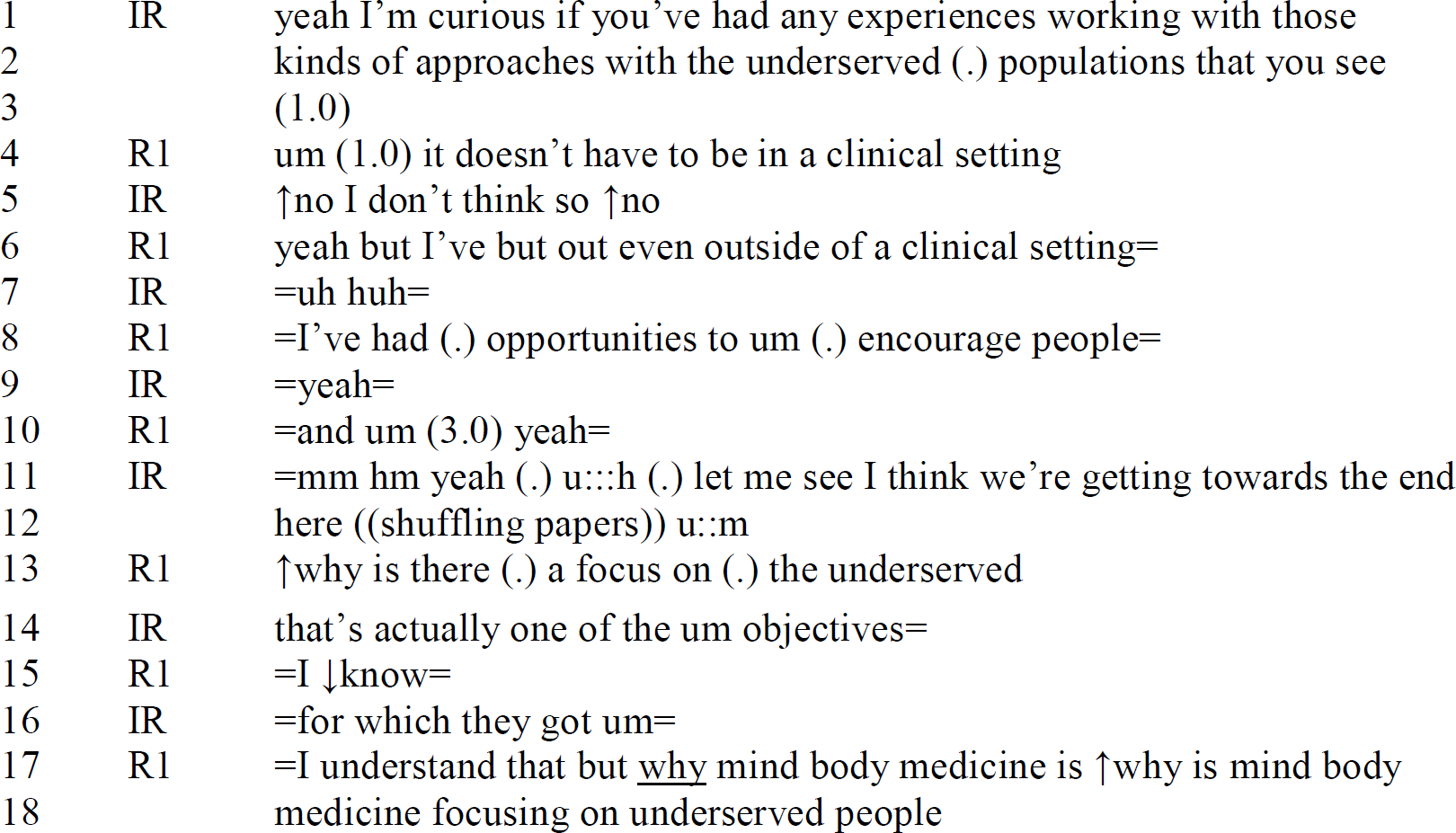

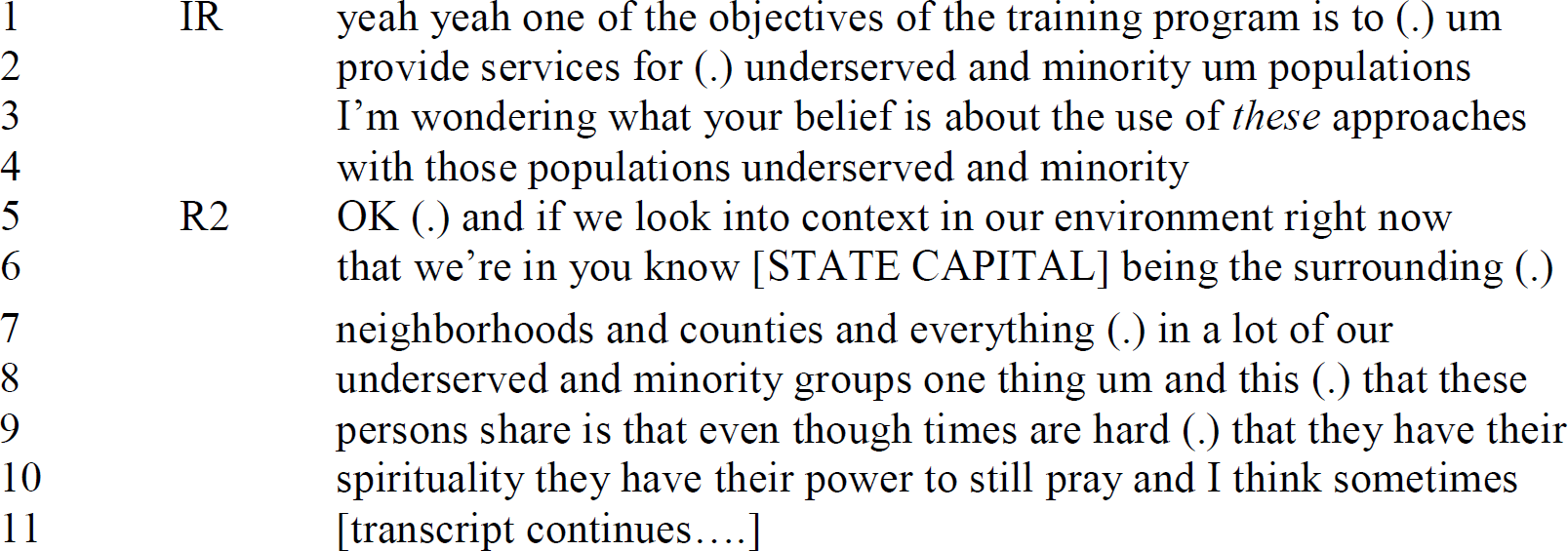

Two of the objectives of the MB/S training program related to raising residents' awareness of the needs of underserved populations, cultural minorities and diverse populations, and how MB/S interventions might be used in patient care with these defined groups. In order to describe residents' perspectives concerning the needs of underserved and minority populations, in interviews conducted in 2008, they were asked to characterize the populations served by the residency, and how MB/S interventions might be used with these populations. Of all topics discussed, this one elicited perhaps the widest variety in responses, and answers showed this to be a sensitive topic. For example, some participants did not answer the question, or responded by questioning the interviewer about the purpose of the question. Excerpt 4 provides one example of this kind of response.

Individual interview with second-year resident, 2008

Excerpt 4 shows the opening of a lengthy sequence (not shown) in which R6 provided an account of how she provided “equitable” care for patients — irrespective of race or socioeconomic status. Below, I include another example of how a resident oriented to a follow-up question concerning “underserved populations.”

Individual interview with third-year resident, 2008

In Excerpt 5, R1's responses are marked by delays (lines 3, 4, 10), and she seeks permission from the interviewer to orient to the question not in terms of clinical practice, but in terms of her experience “outside clinical practice.” Later, after the interviewer has signaled the closing of the interview (lines 11–12), R1 pursues the interviewer for a response to the question of why the topic of “underserved populations” is of interest (lines 13–18), finding the interviewer's initial response (line 14) unsatisfactory. Excerpts 4 and 5 show the openings of lengthy and complicated sequences in which the interviewer and interviewees struggled to accomplish mutual understanding. Sequences are replete with repairs, pauses, restarts, and clarification questions posed by both speakers – all characteristics of trouble.

Thus, while some speakers provided descriptions of the kinds of populations served by the residency without prompting, others did not. Different question formulations were used in the second round of data generation in order to learn what question formulation would most effectively elicit descriptions from participants. If residents initiated descriptions of patient populations, the interviewer posed follow-up questions regarding their opinions with respect to the use of MB/S with patients. For example, R23 introduced the topic of the population served by the residency without being prompted in Excerpt 6.

Individual interview with first-year resident, June 2008

Later in this sequence, the interviewer formulated the sense of R23's talk at lines 29–30, and asked for more detail:

Individual interview with first-year resident, June 2008

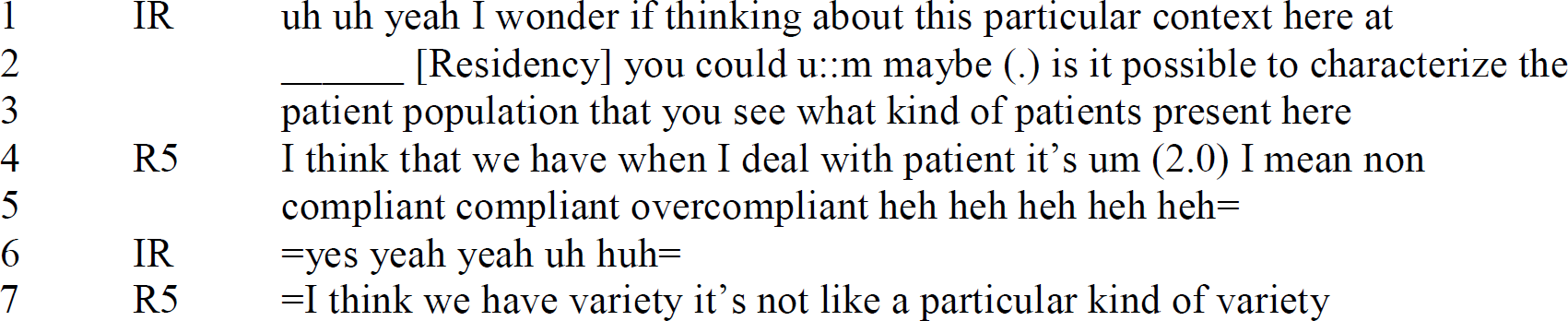

In Excerpt 6.2, at line 5, R23 oriented to the description offered by the interviewer unproblematically, thereby agreeing with the interviewer's formulation of prior talk about the patient population at the residency as “underserved and minority.” R23 went on to provide an in-depth description of her perspectives (not included here). This did not happen in all cases, however. If interviewees did not introduce the topic, residents were asked to characterize the kinds of populations served by the residency. In Excerpt 7 below, for example, R5 gave a short description in response to this question formulation that provided information on how she characterized various patient “types.” Here, the issue that R5 focused on was that of “compliance,” that is, whether or not patients take up advice provided by their physicians. R5 presents the categories of “non compliant,” “compliant” and “over compliant” in a joking fashion (line 5), and the interviewer accepts this as a serious response by not sharing in the laughter.

Individual interview with first-year resident, June 2008

In this interaction, R5's depiction of patients in terms of compliance provided an important clue to a central issue in her practice. Her straightforward response to the question posed indicates how an indirect question with wide parameters for responding in this case provided insufficient guidance as to what kind of information the researcher was seeking. Although some interviewees did make a link between underserved and minority patients without guidance, others did not. For the purposes of this study, Excerpt 7 shows the question to be ineffective for generating data relevant to the topic of “underserved and minority populations.”

Given that not all physicians oriented to the interview questions in the same way (for example, another resident responded to the question posed in Excerpt 7 by discussing insurance and reimbursement for the physician's services), a second question formulation was used. Here, the topic was introduced through reference to the objectives outlined in the original grant proposal. That is, given that one of the objectives of the training program was to serve underserved and minority populations – what were residents' opinions about that? An example of this kind of question formulation is included in Excerpt 8, along with the opening utterances provided by the interviewee.

Individual interview with third-year resident, June 2008

Through thematic analysis, residents' responses to these various question formulations were characterized in the second evaluation report as: (1) recognition of specific benefits of using MB/S interventions with underserved and minority populations; (2) claims by residents that they treated underserved and minority populations no differently than other patients; (3) a focus on the challenges of applying MB/S interventions with underserved and minority populations; and (4) responses that did not address the intent of the question. Given the range of responses elicited via the different approaches to questions posed in interviews conducted at the end of the first year of program implementation, in the third round of interviews the interviewer used the approach shown in Excerpt 8 systematically to examine this topic with incoming residents. This question had been shown to be most effective in eliciting further information from interviewees.

Because this topic was taken up in multiple ways by interviewees, when interviewing residents at the end of the 2nd year of the program, in addition to presenting the findings listed above and asking for residents' feedback, the researcher began by asking residents to define how they understood the term “underserved.” This resulted in gaining further insight into interviewees' reasoning practices concerning the topic of underserved populations, and clarification of interpretations of earlier interview data. In succeeding rounds of data analysis, for example, further insight was gained into the various ways in which residents did and did not link the ideas of (a) “non-compliance” to “underserved” populations; (b) “low-income” with “underserved”; and (c) “underserved” with the patient population served by the residency. Analysis of these descriptions provided information concerning the reasoning practices used by physicians in formulating treatment plans and descriptions of whether and how they incorporated MB/S modalities with underserved and minority patients. Thus, close analysis of puzzling interactions, in this case both residents' descriptions of underserved and minority patients and those sequences in which residents either refused to answer a question or answered a different question, provided insight into the kinds of questions that might be asked in succeeding rounds of data generation, and issues about which the researcher might check interpretations with residents.

Facilitating interaction

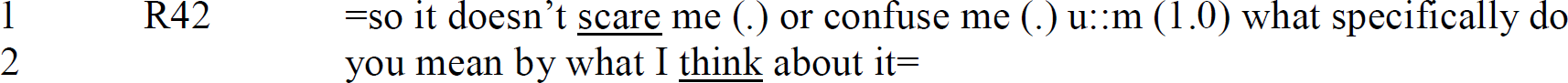

Participants sometimes orient to questions in ways that interviewers do not anticipate, or resist answering the questions posed by the interviewer. For example, in the fifth round of interviews, one interviewee repeatedly asked questions to clarify the topical intent of questions posed by the interviewer. In an extended sequence (not shown here) following the opening interview question, the following interaction occurred:

Individual interview with first-year resident, October 2009

In Excerpt 9.2, below, I examine a sequence that followed shortly after this turn in order to examine the methods that speakers use to manage problematic interaction.

Individual interview with first-year resident, October 2009

This sequence took place just over three minutes into the interview, and as noted above R42 had already provided minimal responses to earlier questions and asked the interviewer what she meant by questions posed (see Excerpt 9.1). In responding to R42's earlier refusal to understand questions posed at the beginning of the interview, in Excerpt 9.2 the interviewer formulated three possible questions that R42 might respond to (lines 2–3). The topical foci of these questions orient directly to the kinds of responses that had routinely been provided by other interviewees in interviews conducted over the previous two years. While this might be read as poor interview practice in that several questions were posed at lines 2–3, rather than one, it also demonstrates the interviewer's orientation to earlier talk in this particular interview context. Rather than using the question formulation outlined on the interview guide (“What do you think of when you hear the term ‘spirituality’ in relation to patient care?”), here the interviewer provides multiple ways in which the question might be understood. Seen in relation to earlier talk, this question formulation is a preemptive move on the part of the interviewer that corresponds to earlier clarification questions asked by the interviewee. Yet, rather than answer, R42 treated the questions at lines 1–3 in Excerpt 9.2 as problematic by delaying a response (line 4), and asking “what do you mean by that?” This question invites the interviewer to answer her own question. Instead of providing possible answers, the interviewer's clarification of the intent of the question is prefaced by a review of possible “viewpoints” that physicians might have towards spirituality in patient care (lines 7–10, “there's a huge variety of opinions of whether it's useful or ?not or uh in what kinds of instances it might be uh::: ?useable”).
This description of the spectrum of “what others might think” is framed in a general way, and by introducing the question with “I'm just wondering,” the interviewer invites R42 to comment on what he has observed of others in his practice, rather than asking him to provide statements about personal beliefs or knowledge. The interviewer's prefatory remarks to the restated question are marked by disfluencies, including a pause (line 6), delays (lines 6, 8 “so:::” “uhh:::”), and restarts (line 7). The formulation of a question as “just wondering” – that is, among many possible opinions about the “usefulness” of spirituality in patient care, had R42 encountered anything to do with spirituality? – works to downgrade the question from seeking statements about belief and knowledge to seeking observations about others. In response, R42 interrupted the interviewer by orienting to the notion of “usefulness” of spirituality (line 9). In his response R42 indicated that he is open to a wide variety of opinions in clinical practice (“I'm not afraid of (.) of different opinions when it comes to religion and spirituality”, lines 12–13). R42 went on to share his perspectives concerning spirituality, via a specific example of his dealing with end-of-life issues and informing a patient to prepare for death.

When interactions with participants do not unfold in straightforward ways, as in these examples, interviewers need to be able to facilitate interview interaction in ways that prompt interviewees to provide further detail concerning their perspectives and reasoning. A useful strategy for doing this is to sum up or formulate the sense of prior talk in order to gain participant feedback concerning the accuracy of the interviewer's interpretations of what has been said. Twenty-five minutes into this interview, the interviewer formulated the sense of the views that had been expressed by R42 concerning the training program as follows.

Individual interview with first-year resident, October 2009

In this interview the interviewer was faced with the problem of eliciting descriptions and opinions from a participant who had provided minimal responses, and asked the interviewer repeatedly to answer the very questions she had posed. In Excerpt 9.3, the interviewer re-oriented the topic of talk in a way that invited R42 to expand on what he had said without evaluating his responses. In this instance he did so, this time providing extended responses (not included here) and in fact returning to the topic of spirituality first mentioned in Excerpt 9.2 and discussing this in considerable detail. How the interviewee responded to questions in this interview — by refusing to make sense of the interviewer's questions — is also analyzable, in that the interviewee used the interview context to immediately alert the interviewer to his reluctance to provide data for the evaluation, thereby demonstrating his perceptions of the program as problematic in some way.

Discussion

There are numerous guides within the field of qualitative inquiry to what researchers might do in interviews in order to generate quality data (see for example, Briggs, 1986; deMarrais, 2004; Douglas, 1985; Hermanowicz, 2002; Kvale, 1996; Kvale & Brinkmann, 2009; McCracken, 1988; Mishler, 1986; Patton, 2002; Rubin & Rubin, 2005; Seidman, 2006; Wengraf, 2001). Much of the advice literature, however, avoids providing concrete examples of how to deal with challenges in interview contexts. Perhaps this is partly because the range of challenges that might occur in interview contexts is as wide and varied as the sorts of qualitative studies conducted by innumerable researchers. One exception is Nairn, Munro and Smith's (2005) article in which the authors use Pillow's (2003) notion of “uncomfortable reflexivity” (p. 188) to examine how an apparently ‘failed’ group interview of high school students, conducted by one of the researchers, provided ways to reconsider the design and methods used for the study.

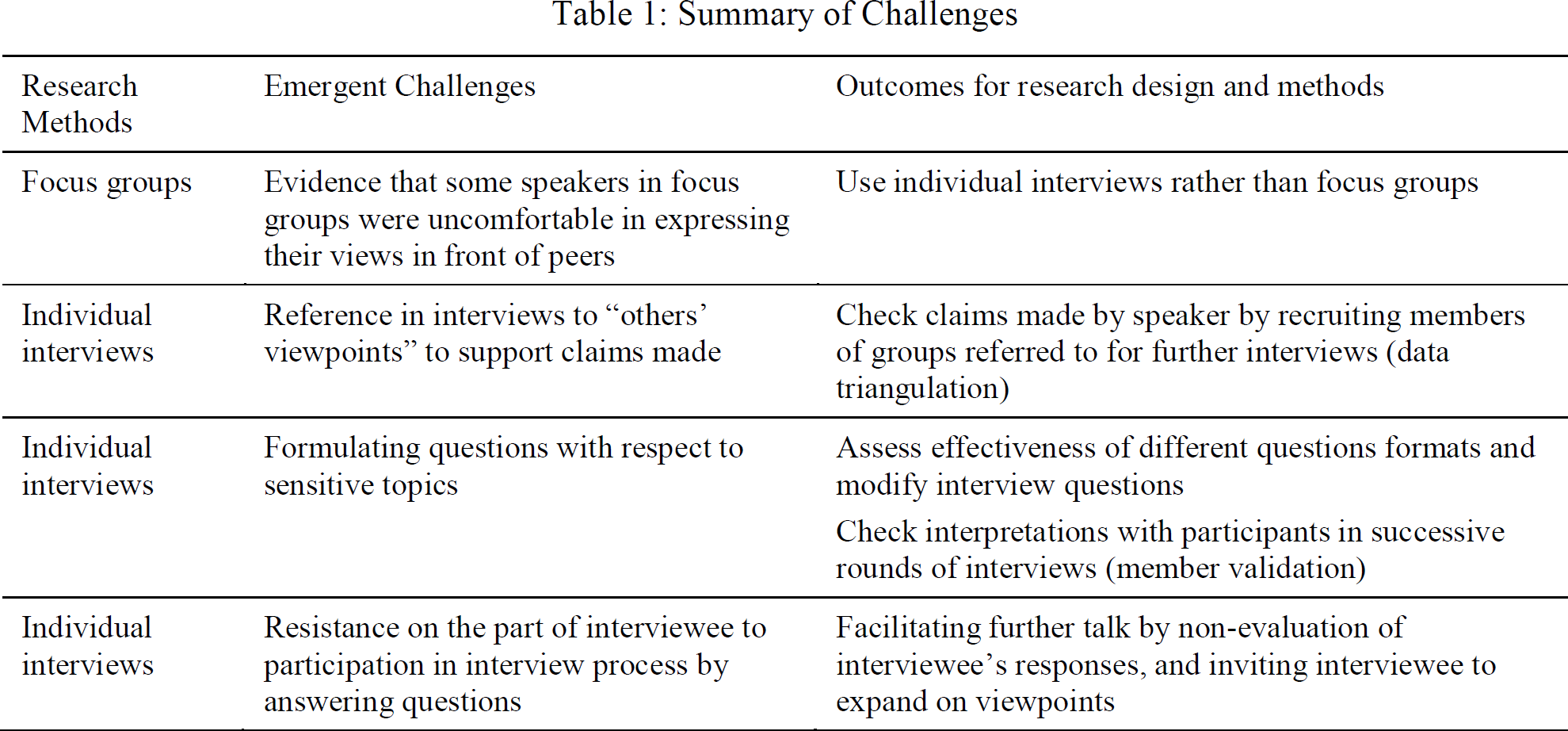

In this paper I have examined challenges that occurred in interview interactions in one study in which I was involved (see Table 1 for summary).

Table 1. Summary of Challenges

I began by showing how close analysis of how focus group interaction unfolded demonstrated that participants were reluctant to candidly discuss their views in front of peers. In this case, this interactional problem led to modification of the research design to drop the use of focus groups in further rounds of data generation. Second, I showed how a participant's reports concerning “others' views” were used to inform further data generation and data analysis (i.e., via interviewing other people and examining other forms of data). Third, I showed how topics of talk that emerge as “sensitive” may be analyzed to inform the formulation of interview questions in succeeding rounds of data generation. Fourth and finally, I showed a specific strategy for facilitating interaction in interviews in which participants may refrain from answering questions as posed.

Jonathan Potter and Alexa Hepburn (2005, Forthcoming) argue that “interviewing has been too easy, too obvious, too little studied and too open to providing a convenient launch pad for poor research” and make recommendations to researchers for improving the quality of interview research – both in analysis and representation. This paper aligns with several of their recommendations, specifically:

Improving the transparency of the interview set-up;

More fully displaying the active role of the interviewer;

Using representational forms that show the interactional production of interviews;

Tying analytic observations to specific interview elements.

By combining insights from conversation analysis with thematic analysis of data, I hope to have shown more about specific interview contexts in which data were generated, the actions of the interviewer, and the details of the interactional sequences in which communicative difficulties were worked out by speakers. My observations of how these data were generated are grounded in a constructionist conceptualization of interviewing (Roulston, 2010), although this is but one of many analytic possibilities. Like Talmy and Richards (2011), I argue that qualitative researchers may gain significant insights concerning their research topics by taking a constructionist conceptualization of qualitative interviewing. Talmy and Richards (2011) argue, “[t]he analytic concern with both interview product (the whats) and process (the hows) grounds the interview as an interactional event, thus opening up for analysis how the interview is achieved” (p. 2).

By examining in detail challenging, puzzling or problematic sequences in interview interaction, qualitative interviewers and researchers might consider ways to enhance their practice as interviewers and analysts, and consider how particular interactions can inform research design. This kind of work involves purposeful reflection concerning the details of interaction and encompasses focusing on how interaction is accomplished by speakers. Questions that might be posed include: Did interviewees answer questions posed? If not, what happened? How might the methods used and questions posed be modified in order to attend to interactional difficulties that occur in field work? These kinds of examinations of the co-construction of interviews are confronting, since researchers are usually invested more in reporting findings than in examining interactional problems encountered, which may well be interpreted as ‘failures’ or ‘poor practice.’ Nevertheless, I encourage other researchers to investigate their own practice for problematic and puzzling sequences that will inform the way questions are posed as well as the research design of projects.

Footnotes

1. Transcription conventions used are included in Appendix 1.

Transcription conventions

| Symbol | Meaning |
| --- | --- |
| IR | Interviewer |
| R | Resident |
| M | Moderator |
| ( ) | words spoken, not audible |
| (( )) | transcriber's description |
| [ | two speakers' talk overlaps at this point |
| = | no interval between turns |
| ? | interrogative intonation |
| (2.0) | pause timed in seconds |
| (.) | small untimed pause |
| ye::ah | prolonged sound |
| why | emphasis |
| YEAH | louder sound to surrounding talk |
| heh heh | laughter |
| -hhh | in-breath |
| hhh- | out-breath |
| °yes° | softer than surrounding talk |
| ↑ | upward intonation |
| ↓ | downward intonation |
| <this thing> | faster than surrounding talk |