Abstract

Common texture feature extraction methods operate only in the spatial domain or only in the frequency domain, which yields insufficient texture information and low accuracy. This paper presents a novel texture feature analysis method based on the gray level co-occurrence matrix (GLCM) and the Gabor wavelet transform to extract the texture features of cashmere and wool fibers more completely. First, the gray level co-occurrence matrix is constructed to calculate four texture features in the spatial domain: contrast, angular second moment, dissimilarity, and energy. Four texture features in the frequency domain (contrast, angular second moment, mean, and entropy) are obtained through the Gabor wavelet transform and the gray-scale difference statistics method. Then, because contrast and angular second moment describe the fiber image in both the spatial and frequency domains, each pair is fused by a weighted linear addition, so that the eight feature values form a 6-dimensional feature vector. Finally, these feature vectors are fed into the Fisher classifier. The experimental results show that the identification accuracy of the proposed algorithm is 0.682% higher than that obtained when 8-dimensional feature vectors describe the sample image. This verifies that fusing texture features from the spatial and frequency domains is an effective approach to identifying cashmere and wool fibers.

Introduction

Because the structure and morphology of cashmere and wool fibers are very similar, the two are difficult to distinguish. Cashmere is a rare animal fiber that is expensive and soft, which has made it one of the most significant raw materials in the textile industry. However, some lawbreakers pass off wool as expensive cashmere products to obtain high profits. A reliable way to distinguish the two fibers is therefore urgently needed.

In recent years, identification methods have fallen into five categories: physical, chemical, biological, image-based, and deep convolutional network methods. Common methods in the first three categories include the microscope method,1 the DNA detection method,2 and the solution method.3 Their shortcomings are complex operation, poor stability of the detection results, and low accuracy. The deep convolutional network method4 is time-consuming and requires a massive sample size and expensive experimental devices. By contrast, the image-based method, with its small experimental error and high accuracy, is frequently applied in the field of identifying similar animal fibers. Jiao 5 proposed using the gray level co-occurrence matrix (GLCM) to extract texture features and a Support Vector Machine (SVM) model to classify them, reporting an accuracy of over 90%. Yuan et al. 6 extracted six texture feature indexes from fiber images with an improved Tamura texture feature extraction method and then used a BP neural network for identification, obtaining an accuracy of 81.17%. Xing et al. 7 proposed a wavelet multi-scale analysis based on the Gaussian random field model and adopted an SVM model for identification, achieving an accuracy of 90.07%. Lu et al. 8 introduced SURF features into the field of similar animal fiber identification and reached an accuracy of 93%. In these approaches, the texture information used to describe the fiber image is obtained only in the spatial domain or only in the frequency domain, resulting in inadequate texture information and lower accuracy. Effectively fusing texture features from the spatial and frequency domains can therefore provide more complete texture information and improve accuracy.

In this paper, we put forward an identification method based on the gray level co-occurrence matrix and the Gabor wavelet transform. First, the gray level co-occurrence matrix is constructed to calculate four texture features in the spatial domain: contrast, angular second moment, dissimilarity, and energy. Four texture features in the frequency domain (contrast, angular second moment, mean, and entropy) are obtained through the Gabor wavelet transform and the gray-scale difference statistics method. Then, because contrast and angular second moment describe the fiber image in both the spatial and frequency domains, each pair is fused by a weighted linear addition, so that the eight feature values form a 6-dimensional feature vector. Finally, these feature vectors are fed into the Fisher classifier. The experimental results show that the identification accuracy of the proposed algorithm is 0.682% higher than that obtained when 8-dimensional feature vectors describe the sample image. This verifies that fusing texture features from the spatial and frequency domains is an effective approach to identifying cashmere and wool fibers.

Research methods

System framework process

This paper proposes a texture feature identification method based on the gray level co-occurrence matrix and the Gabor wavelet transform. The algorithm mainly involves image pre-processing, constructing the gray level co-occurrence matrix to extract texture information in the spatial domain, and applying the Gabor wavelet transform and the gray-scale difference statistics method to extract texture features in the frequency domain. The fused texture feature vectors are then identified by a Fisher classifier. Figure 1 shows the flow chart of the system framework.

Step 1: Image pre-processing. The wool and cashmere images are pre-processed to obtain clear texture information, using image processing operations that include grayscale conversion, Sobel edge detection, morphological filling, edge extraction, and background removal.

Step 2: Fiber texture feature extraction in the spatial domain. The texture information of the fiber image is extracted in the spatial domain with the gray level co-occurrence matrix, yielding four second-order statistics: contrast (CON), angular second moment (ASM), dissimilarity (IDM), and energy (ENG).

Step 3: Fiber texture feature extraction in the frequency domain. The Gabor wavelet transform and the gray-scale difference statistics method are used to obtain texture features in the frequency domain. First, the optimal parameters for constructing the Gabor filter are selected. Then, four feature values, namely contrast (CON), angular second moment (ASM), mean (MEAN), and entropy (ENT), are calculated with the gray-scale difference statistics method.

Step 4: Texture feature fusion. The texture feature values in the spatial and frequency domains are obtained in Steps 2 and 3, respectively. Because contrast and angular second moment exist in both domains, each pair is fused by a weighted linear addition, so that the eight values form a 6-dimensional feature vector.

Step 5: Identification. All fiber images are divided into training samples and testing samples and fed into the Fisher classifier, which determines the threshold Y0 of the decision surface from the inter-class scatter matrix and the mean values of the fiber texture features. Finally, the classification performance is verified and the accuracy is obtained on the test set.

Figure 1. Flow chart of the system framework.
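The spatial-domain statistics of Step 2 can be sketched in a few lines of Python. This is a minimal illustration rather than the implementation used in this article: the function names `glcm` and `glcm_features`, the quantisation to `levels` gray levels, the single non-negative pixel offset, and the convention that energy is the square root of the angular second moment are all assumptions, and the formulas are the textbook definitions.

```python
import numpy as np


def glcm(image, dx=1, dy=0, levels=8):
    """Symmetric, normalised gray level co-occurrence matrix for one offset.

    Assumes an 8-bit gray image and non-negative offsets (dx, dy).
    """
    img = np.asarray(image)
    # Quantise 0..255 gray values into `levels` bins (an assumed choice).
    q = (img.astype(np.int64) * levels) // 256
    h, w = q.shape
    # Pair every pixel with its neighbour at offset (dy, dx).
    a = q[: h - dy, : w - dx]
    b = q[dy:, dx:]
    m = np.zeros((levels, levels), dtype=np.float64)
    np.add.at(m, (a.ravel(), b.ravel()), 1.0)
    m = m + m.T  # count each pair in both directions
    return m / m.sum()


def glcm_features(p):
    """Return [CON, ASM, dissimilarity, ENG] from a normalised GLCM."""
    i, j = np.indices(p.shape)
    con = np.sum((i - j) ** 2 * p)   # contrast
    asm = np.sum(p ** 2)             # angular second moment
    dis = np.sum(np.abs(i - j) * p)  # dissimilarity
    eng = np.sqrt(asm)               # energy, assumed to be sqrt(ASM)
    return np.array([con, asm, dis, eng])
```

A multi-directional variant would repeat the computation for several offsets and average the four statistics; how the directions are combined in this article is not specified here.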

Improved algorithm

Texture feature description methods mainly fall into five types: signal processing, statistical, geometric, structural, and model-based analysis methods.9–11 The first two, which make it possible to combine the spatial and frequency domains when analyzing texture features, are the ones chiefly applied in our algorithm. To obtain sufficient and effective texture information from the image and thereby improve identification accuracy, it is critical to construct the Gabor filter with optimal parameters and to determine the fusion weight of the two kinds of texture features.

Selection of parameters based on Gabor

The extraction of texture features in the frequency domain is based on the Gabor wavelet transform,12–14 which convolves the Gabor filter with the original image. Texture feature values are then extracted from the transformed fiber image with the gray-scale difference statistics method.15–17 Consequently, constructing the optimal Gabor filter is one of the vital factors in obtaining texture features in the frequency domain. The Gabor filter with frequency f and orientation θ, obtained by coordinate rotation, is given by

where x and y represent the initial coordinates, while x′ and y′ represent the coordinates after rotation. The space constants σx and σy define the Gaussian envelope along the x and y axes.
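For orientation, one widely used real-valued form of a Gabor filter that is consistent with the symbols defined above is sketched below. It is an assumption about the general shape of the filter, not a reproduction of the equation used in this article; in particular, the normalisation constant and the choice between a cosine carrier and a complex exponential may differ.

```latex
\begin{aligned}
g(x, y; f, \theta) &= \exp\!\left[-\frac{1}{2}\left(\frac{x'^{2}}{\sigma_x^{2}} + \frac{y'^{2}}{\sigma_y^{2}}\right)\right]\cos\left(2\pi f x'\right),\\
x' &= x\cos\theta + y\sin\theta,\\
y' &= -x\sin\theta + y\cos\theta.
\end{aligned}
```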

First, we use Gabor filter banks with five frequencies and four orientations, f = {1, 2, 3, 4, 5} and

It is therefore critical to select the frequency f and orientation θ of the Gabor filter. To reduce the amount of calculation, this article selects the two parameters one at a time. The orientation θ is determined first: 20 cashmere and wool fiber images are randomly selected, the frequency is fixed at 3, and the orientation θ is set in turn to 0,

Feature fusion

To make full use of the texture information in the gray image, the GLCM and Gabor features of the fiber are extracted to construct the texture feature vector.18–21 Suppose that the feature vector obtained by the GLCM in the spatial domain is

Where

Where cij was defined as

In formula (2), a weight

Experimental results and analysis

A total of 200 fiber sample images, comprising 100 cashmere fibers and 100 wool fibers, were captured for the study with a scanning electron microscope at 1000× magnification. All images were stored on the computer at a size of 275 × 275 pixels. Some of the original cashmere and wool fiber images are shown in Figure 2.

Figure 2. Examples of the original cashmere and wool fiber images: (a) cashmere and (b) wool.

Experimental result of image pre-processing

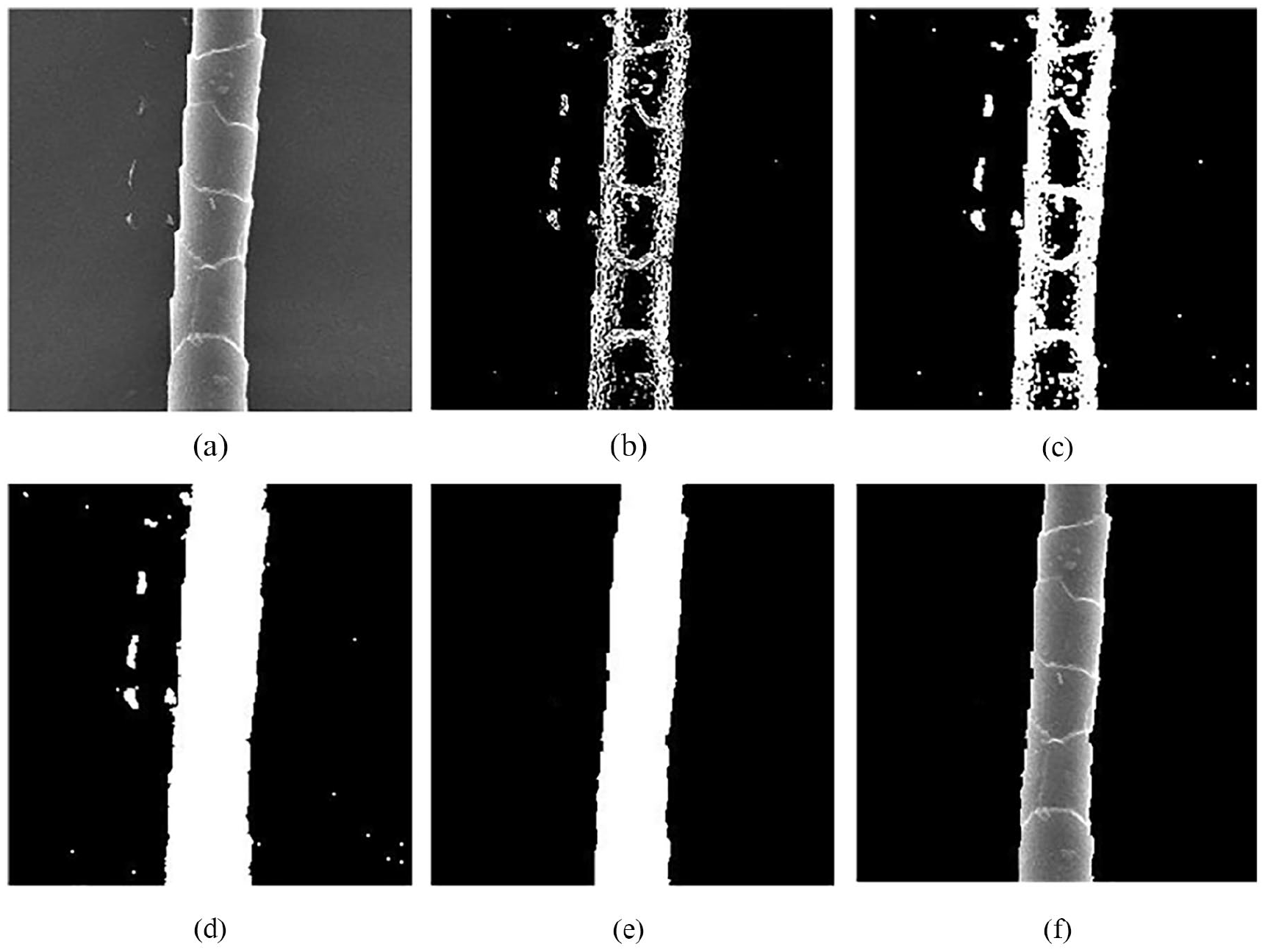

Figure 3(a) shows one original cashmere fiber image. The captured fiber images are processed to remove the background. In Figure 3(b), the skeleton outline of the fiber is obtained through Sobel edge detection and image binarization. In Figure 3(c), a closed fiber area is obtained by dilating the fiber edge, and in Figure 3(d) the holes in the fiber are filled using mathematical morphology. Figure 3(e) shows the result of removing noise by erosion. The original image with the background removed is shown in Figure 3(f).

Figure 3. Cashmere image pre-processing: (a) original image, (b) Sobel edge detection, (c) dilation, (d) hole filling, (e) noise removal, and (f) background removal.

Identification

After a series of pre-processing operations on the captured images, the first step is to extract four statistics in the spatial domain with the gray level co-occurrence matrix algorithm. The second step is to extract texture feature values from the frequency-domain image produced by the Gabor wavelet transform, using the gray-scale difference statistics method. Finally, the 200 groups of texture feature vectors are fed into the Fisher classifier.
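The second step above, Gabor filtering followed by gray-scale difference statistics, can be illustrated with the following sketch. It is not the code used in this article. The helper names (`gabor_kernel`, `filter_image`, `glds_features`), the kernel size, the space constants, the use of a real cosine carrier, the frequency expressed in cycles per pixel, the FFT-based circular convolution, and the rescaling of the filtered image to 0..255 are all assumptions made for illustration. The four statistics follow the usual textbook definitions of the gray-scale difference method.

```python
import numpy as np


def gabor_kernel(freq, theta, sigma_x=3.0, sigma_y=3.0, half_size=10):
    """Real (cosine) Gabor kernel; freq is in cycles per pixel (assumed unit)."""
    y, x = np.mgrid[-half_size:half_size + 1, -half_size:half_size + 1].astype(float)
    xr = x * np.cos(theta) + y * np.sin(theta)    # rotated coordinates
    yr = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-0.5 * (xr ** 2 / sigma_x ** 2 + yr ** 2 / sigma_y ** 2))
    return envelope * np.cos(2.0 * np.pi * freq * xr)


def filter_image(image, kernel):
    """Circular convolution via the FFT, rescaled to integer gray levels 0..255."""
    img = np.asarray(image, dtype=float)
    padded = np.zeros_like(img)
    kh, kw = kernel.shape
    padded[:kh, :kw] = kernel
    # Move the kernel centre to the origin so the output is not shifted.
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    out = np.real(np.fft.ifft2(np.fft.fft2(img) * np.fft.fft2(padded)))
    span = out.max() - out.min()
    if span < 1e-9:  # flat response: nothing to rescale
        return np.zeros(img.shape, dtype=np.int64)
    return np.round(255.0 * (out - out.min()) / span).astype(np.int64)


def glds_features(gray, dx=1, dy=0, levels=256):
    """Gray-scale difference statistics [CON, ASM, MEAN, ENT] for one displacement."""
    g = np.asarray(gray, dtype=np.int64)
    h, w = g.shape
    diff = np.abs(g[: h - dy, : w - dx] - g[dy:, dx:])
    # p[i] is the probability that two displaced pixels differ by i gray levels.
    p = np.bincount(diff.ravel(), minlength=levels) / diff.size
    i = np.arange(p.size)
    con = np.sum(i ** 2 * p)           # contrast
    asm = np.sum(p ** 2)               # angular second moment
    mean = np.sum(i * p) / p.size      # mean, scaled by the number of levels
    nz = p[p > 0]
    ent = -np.sum(nz * np.log2(nz))    # entropy in bits
    return np.array([con, asm, mean, ent])
```

With these helpers, a frequency-domain feature vector for one image would be obtained as `glds_features(filter_image(img, gabor_kernel(0.1, np.pi / 4)))`, where the frequency and orientation values are placeholders rather than the optimal parameters selected in this article.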

Besides the Gabor wavelet transform, the Fourier transform is the most common spatial-frequency transform. Table 1 shows the recognition results under different transform methods. With the Gabor wavelet transform, the recognition rate reaches 86.41%, which is 26% higher than that of the Fourier transform. When the features are fused into an 8-dimensional feature vector, the recognition rate of combining the gray level co-occurrence matrix with the Gabor transform is 23% higher than that with the Fourier transform. Moreover, the identification rate of cashmere and wool is highest when the ratio of the training set to the testing set is 7:3. Hence, unless otherwise stated, the ratio of the training set to the testing set is set to 7:3.

Table 1. Comparison of different transform methods.
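The 7:3 split and the two-class Fisher classification described above can be sketched as follows. This is a generic Fisher linear discriminant, not the classifier code of this article: the function names, the small ridge term added for numerical stability, and the choice of the threshold Y0 as the midpoint of the projected class means are assumptions.

```python
import numpy as np


def split_70_30(x, rng):
    """Shuffle the rows of x and split them 7:3 into training and testing parts."""
    idx = rng.permutation(len(x))
    cut = int(round(0.7 * len(x)))
    return x[idx[:cut]], x[idx[cut:]]


def fit_fisher(x0, x1):
    """Fisher linear discriminant for two classes; returns (w, y0)."""
    m0, m1 = x0.mean(axis=0), x1.mean(axis=0)
    # Within-class scatter matrix, summed over both classes.
    sw = (x0 - m0).T @ (x0 - m0) + (x1 - m1).T @ (x1 - m1)
    # Small ridge term (an added assumption) keeps the solve well conditioned.
    sw += 1e-8 * np.trace(sw) / sw.shape[0] * np.eye(sw.shape[0])
    w = np.linalg.solve(sw, m1 - m0)
    # Threshold Y0: midpoint of the projected class means (one common choice).
    y0 = 0.5 * (w @ m0 + w @ m1)
    return w, y0


def predict_fisher(x, w, y0):
    """Label 1 if the projection exceeds the threshold, else 0."""
    return (x @ w > y0).astype(int)
```

In use, the cashmere and wool feature vectors would each be split with `split_70_30`, the discriminant fitted on the two training parts, and the accuracy computed from `predict_fisher` on the two testing parts.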

A 6-dimensional feature vector, obtained by applying the weight, describes the texture features of a single image. The identification results for different weights are shown in Figure 4. Different weights clearly lead to different identification accuracies. When the weight value is less than 0.8, the identification result tends to be stable. In the figure, each colored line represents the recognition results for a different ratio of training set to testing set. The green line, which corresponds to a ratio of 7:3, gives the best accuracy. Moreover, when the weight is set to 0.18, the accuracy is highest regardless of the ratio of training set to testing set, so the optimal identification result is achieved at this weight.

Figure 4. Comparison of different weights.
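The weighted fusion that turns eight feature values into a 6-dimensional vector can be sketched as below. The exact fusion formula is not reproduced here, so the rule used in the sketch is an assumption: the weight is applied to the spatial-domain value and its complement to the frequency-domain value. The function name `fuse_features` and the ordering of the output vector are likewise illustrative.

```python
import numpy as np


def fuse_features(spatial, frequency, weight=0.18):
    """Fuse [CON, ASM, IDM, ENG] and [CON, ASM, MEAN, ENT] into six values.

    Assumed rule: fused = weight * spatial + (1 - weight) * frequency
    for the two features (CON, ASM) that exist in both domains.
    """
    s = np.asarray(spatial, dtype=float)
    f = np.asarray(frequency, dtype=float)
    con = weight * s[0] + (1.0 - weight) * f[0]
    asm = weight * s[1] + (1.0 - weight) * f[1]
    # The remaining four features are passed through unchanged.
    return np.array([con, asm, s[2], s[3], f[2], f[3]])
```

Sweeping `weight` over a grid and re-running the classifier for each value would reproduce the kind of comparison described for the weight selection, under the assumed fusion rule.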

Different fusion methods, namely fusion into an 8-dimensional or a 6-dimensional feature vector, can give quite different identification results. The comparison between feature vectors of different dimensions is shown in Figure 5. The solid line represents fusion into a 6-dimensional feature vector, and the dotted line represents fusion into an 8-dimensional feature vector. The recognition result for the 6-dimensional feature vector is clearly better regardless of the ratio of training set to testing set, improving the average accuracy by 0.682%.

Figure 5. Comparison of feature vectors of different dimensions.

Table 2 shows the identification results when the ratio of cashmere to wool sample sizes is set to 4:5, 5:5, and 5:4, for the spatial domain, the frequency domain, and the weighted fusion of texture features. The recognition rate reaches 90% in the frequency domain and 93.33% with the fused texture features. The best classification performance is achieved only when the numbers of cashmere and wool fiber images are equal.

Table 2. Accuracy for different numbers of samples.

Although previous identification methods based on texture features can identify cashmere and wool fibers reasonably well, some issues remain in the experimental process, and the identification accuracy still needs to be improved. It is therefore necessary to improve the design of identification algorithms based on texture feature analysis. The method in this paper achieves an obvious improvement, as shown in Figure 6. A better result is obtained by combining texture features from the spatial and frequency domains.

Figure 6. Comparison with existing methods.

Conclusion

In this paper, we present a texture feature identification method based on the gray level co-occurrence matrix and the Gabor wavelet transform. The fiber images are first processed with image processing operations, including Sobel edge detection, morphological filling, and edge extraction, to obtain clear texture information after the background is removed. Then, the multi-directional gray level co-occurrence matrix is constructed to calculate the GLCM features in the spatial domain, and texture feature values are extracted from the frequency-domain image produced by the Gabor wavelet transform with the gray-scale difference statistics method. Finally, the 6-dimensional feature vectors, constructed through linear addition, are fed into the Fisher classifier. The experimental results show that the identification accuracy of the proposed algorithm is 0.682% higher than that obtained with 8-dimensional feature vectors. However, shortcomings remain, including the small number of samples, the rough surface of some fibers, and some blurred images. In the future, we will therefore collect more high-quality fiber images and expand the experimental data to improve the identification accuracy.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the program general projects of Shaanxi Provincial Department of Science and Technology Key R & D (No.2019GY-098), the service local science research plan of Shaanxi Provincial Department of education (No.18JC012), Science and technology innovation new town project of Yulin science and Technology Bureau (No.2018-2-24), Shaoxing Keqiao District West Textile Industry Innovation Research Institute Project (No.19KQYB10) and Science and Technology plan project of Yulin City (No.CXY-2020-052).