Abstract

The productivity of the textile industry is positively correlated with the efficiency of fabric defect detection. Because manual inspection suffers from low accuracy and high cost, it has gradually been replaced by deep learning algorithms based on cloud computing. Nonetheless, these cloud computing-based methods are still suboptimal because of the data transmission latency between the end devices and the cloud. To detect defects more efficiently, this work proposes an automatic visual inspection system based on edge computing that offers low latency, low power consumption, and easy upgrades. Firstly, the method uses EfficientDet-D0 as the detection algorithm, which is lightweight and scalable and therefore suits resource-constrained edge devices. Secondly, we performed data augmentation on five fabric datasets and verified the adaptability of the model to different types of fabrics. Finally, we transplanted the trained model to the edge device NVIDIA Jetson TX2 and optimized it with TensorRT to speed up detection. The performance of the proposed method is evaluated on five fabric datasets. The detection speed reaches 22.7 frames per second (FPS) on the Jetson TX2. Compared with the cloud-based method, the response time is reduced by a factor of 2.5, which enables real-time industrial defect detection.

Introduction

From the perspective of image analysis, traditional image recognition inspection falls into three classes: 5 statistical analysis, 6,7 frequency domain analysis, 2,8–10 and model analysis. 5,11,12 One such method used a Gabor filter bank and lattice segmentation to extract image patch features, which were then used for defect detection. Jing et al. 10 proposed a fabric defect detection method based on the CIE L*a*b* color space using a 2-D Gabor filter. Xiaobo et al. 11 applied the Gaussian-Markov random field (GMRF) model to extract fabric features and detect defects. All of these methods require manually designed feature extraction schemes, and with the wide variety of textiles today, their generality is greatly restricted. In recent years, with the substantial improvement in the computing capability of Graphics Processing Units (GPUs), deep learning methods have made significant progress. Unlike traditional image processing algorithms, deep learning methods use convolutional neural networks (CNNs) to extract image features automatically, which integrates feature learning into the whole process of model building and dramatically improves the generality of the model. Cha et al. 13 applied a CNN to wall crack detection, and the proposed method was considerably more robust than well-known traditional edge detection algorithms (i.e. Canny 14 and Sobel). Jing et al. 15 proposed a fabric defect detection method based on YOLO-V3, 16 which improves detection by clustering the datasets and adding detection layers to the network, and applied it to gray cloth and lattice fabric.

In the textile industry scenario, an automatic fabric defect detection system needs to meet three requirements. 5 First, a high-speed production line requires the detection system to operate in real time with low response latency. Second, the power consumption of the production line should be as low as possible. Finally, so that the production line can be upgraded conveniently, the detection system must scale well. In response to these requirements, this paper proposes a fabric defect detection method based on edge computing. The main contributions of this work are as follows:

This paper proposes an automatic fabric defect detection system that combines EfficientDet with edge computing and applies an edge device to fabric defect detection. Deploying a lightweight network on the edge device NVIDIA Jetson TX2 reduces the overall response time of the system, and the scalability of the model makes the production line easier to upgrade.

In order to improve the robustness of the model, we augmented the training datasets and compared EfficientDet with mainstream one-stage detection networks on five different fabric datasets.

To adapt to the limited computing resources of edge devices, we optimized the model with TensorRT so that it satisfies the speed requirements of industrial detection. In addition, the response latency of cloud computing and edge computing is compared.

The rest of the paper is organized as follows: Section 2 introduces the related work of this paper, including traditional defect detection methods and detection methods based on deep learning, as well as the current status of edge computing. Section 3 describes the details of our proposed method. Section 4 briefly introduces five different fabric datasets, and subsequently discusses the experimental results, including the test results of the model, TensorRT optimization after model transplantation, and the comparison between cloud computing and edge computing. Section 5 concludes this paper.

Related work

In this section, the computer vision based detection method is briefly reviewed from the following three perspectives: traditional defect detection methods, detection methods based on deep learning, and the status quo and applications of edge computing.

Traditional defect detection methods

According to the direction of image analysis, detection models can be divided into three categories: statistics-based methods, spectrum-based methods, and model-based methods. Latif-Amet et al. 6 proposed the sub-band co-occurrence matrix (SBCM) to detect defects in textile images. It combines wavelet theory and co-occurrence matrices, decomposes the gray-level images into sub-bands, and then divides the image into non-overlapping windows to extract co-occurrence features. Nevertheless, the reliability of the method and its applicability to other fabrics are not clear. Tsai and Molina 7 proposed a morphological method with an arc structuring element (SE) for machined surface inspection. This morphological method can effectively remove tool-marks and highlight local defects, but it is limited to surfaces with circular tool-marks. Given the strong texture characteristics of some fabric images, some scholars have adopted spectrum analysis to detect fabric defects. Anandan and Sabeenian 2 used curvelet transform information at adjacent scales to distinguish significant edges from noise, and used the Curvelet Transform (CT) and Gray-Level Co-occurrence Matrices (GLCM) to find potential texture defects. Nevertheless, the real-time performance of the algorithm is not mentioned. Jing et al. 8 combined a genetic algorithm with the Gabor filter to match the texture information of defect-free fabric images, and then used the tuned Gabor filter to detect defects on the fabric. However, selecting the parameters of the Gabor filter remains a challenging task in defect detection. When dealing with fabrics with intricate textures, model-based methods are more suitable. The complex texture can be modeled as a stochastic process, and defect detection is then treated as a statistical hypothesis-testing problem on the statistics derived from the model. Xiaobo 11 and Allili et al. 12 respectively applied the Gaussian-Markov random field (GMRF) model and the Gaussian mixture model to extract fabric features and detect defects. However, these methods are computationally expensive and have low accuracy for small defects.

Deep learning methods for defect detection

Because of the limitations of traditional methods, more and more scholars have begun to study detection methods based on deep learning in recent years. For example, Quyang et al. 17 proposed a deep learning-based on-loom defect detection method that combines pre-processing, fabric motif determination, candidate defect map generation, and CNNs. Li et al. 18 proposed a compact CNN architecture and applied it to some common fabric defects. Liu et al. 19 proposed a multistage GAN framework, which generates defect samples by training a multistage GAN and detects defects with a semantic segmentation network. It performs well in terms of accuracy on various fabric datasets. However, the paper does not discuss the behavior of the model in practical applications, such as whether the detection speed meets the requirements. Zhao et al. 20 proposed a multi-defect detection pipeline and applied it to Fuxing Electric Multiple Units. It improves the anchor and feature fusion mechanisms of the Region Proposal Network (RPN) and combines a super-resolution strategy with a CNN in the classification stage to improve classification performance. Although deep learning methods are superior to traditional methods in generality, deploying them often requires substantial computing resources. When computing resources are insufficient, the detection performance of the system is seriously reduced.

Edge computing and its application

Most current deep learning methods are combined with cloud computing. To meet heavy computing demands, cloud computing relies on powerful data centers for intensive processing. However, in this cloud-centric approach, congestion caused by the transmission of massive data (i.e. images and videos) greatly reduces production efficiency. 21 To alleviate this problem, the combination of edge computing and deep learning came into being. Edge computing is an open platform that moves computing capability and storage facilities to the side close to users or data sources and integrates the core functions of network, computing, storage, and applications. 22 The deployment diagram of edge computing is shown in Figure 1. Edge computing exploits distributed deployment and proximity to data sources, which can effectively mitigate problems such as high bandwidth cost, transmission latency, and data congestion. 23 This pattern enables computation-intensive and latency-critical applications. With the accelerated rollout of 5G, the applications of edge computing will continue to expand to areas such as the industrial Internet, smart cities, and smart transportation. 24 Lin et al. 25 combined an edge computing framework with the deep Q network (DQN) to solve complex job shop scheduling problems (JSP), which performs better than other methods that use only one dispatching rule. Liu et al. 26 proposed a video recognition system for dietary assessment based on edge devices. Liu et al. 27 proposed applying edge computing to public intelligent monitoring and tracking.

Edge computing deployment view.

Proposed method

To satisfy the requirements of an industrial automatic fabric defect detection system, we propose an automated fabric defect detection method based on edge computing.

The overall framework of our method is shown in Figure 2. In Section 3.1, we first discuss the feasibility of the proposed method with respect to the three requirements of industrial defect detection. Section 3.2 then introduces the detection algorithm used in this paper. To give the model the capability of real-time industrial defect detection, we optimize the trained model with NVIDIA TensorRT, as described in Section 3.3.

Defect detection method.

Design basis

In the industrial scenario, automatic fabric defect detection needs three attributes. First, the detection system needs to keep pace with the high-speed production line and respond to defective fabric promptly. This requires not only a fast detection algorithm but also a fast system response. Second, the production line should have low power consumption to reduce production cost. Third, so that the production line can be upgraded conveniently in the future, the detection system must be scalable. In response to these requirements, we adopt a detection system based on edge computing that uses the EfficientDet-D0 28 algorithm. As described above, edge computing offloads computing tasks from the cloud to the edge device, which not only improves the detection speed but also protects the privacy of enterprise production data. Likewise, the reduced network bandwidth lowers the production cost of enterprises. EfficientDet uses a lightweight, scalable backbone and feature extraction network. EfficientDet-D0 has only 3.9 M parameters and 2.54 B FLOPs, which gives it a clear advantage in detection speed. Adding edge equipment can effectively reduce the power consumption and response time of the production line, as well as the production investment of enterprises. At the same time, the multi-scale family of EfficientDet models meets the requirement that an industrial production line be upgraded in a timely and convenient manner.

To enhance the robustness of the model in real industrial scenarios, we augmented the training datasets with random cropping, flipping, blurring, brightness gain, and noise. We applied these augmentations to five different fabric datasets to verify the detection effect. Random cropping simulates samples collected from various camera perspectives. Random flipping expands the data to improve the quality of the trained model. 29 A random brightness gain mimics different lighting conditions. Noise and blur enhance the robustness of the model.
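The five augmentation strategies can be sketched with NumPy as follows. This is a minimal illustration, not the authors' pipeline: the function names, the 80% crop size, the gain range, the noise level, and the mean-filter blur are all assumptions chosen for the example, and the image is assumed to be a 2-D grayscale array.

```python
import numpy as np

def random_crop(img, crop_h, crop_w, rng):
    """Cut a random crop_h x crop_w window, mimicking a shifted camera view."""
    h, w = img.shape[:2]
    top = rng.integers(0, h - crop_h + 1)
    left = rng.integers(0, w - crop_w + 1)
    return img[top:top + crop_h, left:left + crop_w]

def random_flip(img, rng):
    """Flip horizontally or vertically with equal probability."""
    return img[:, ::-1] if rng.random() < 0.5 else img[::-1, :]

def box_blur(img, k=3):
    """Blur with a k x k mean filter (an assumed stand-in for any blur kernel)."""
    pad = k // 2
    padded = np.pad(img.astype(np.float32), pad, mode="edge")
    out = np.zeros(img.shape, dtype=np.float32)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return np.clip(out / (k * k), 0, 255).astype(np.uint8)

def brightness_gain(img, gain):
    """Scale pixel intensities to mimic a different lighting condition."""
    return np.clip(img.astype(np.float32) * gain, 0, 255).astype(np.uint8)

def add_gaussian_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise with standard deviation sigma."""
    noisy = img.astype(np.float32) + rng.normal(0.0, sigma, img.shape)
    return np.clip(noisy, 0, 255).astype(np.uint8)

def augment(img, rng):
    """Return one augmented variant per strategy for a 2-D grayscale image."""
    h, w = img.shape
    return {
        "crop": random_crop(img, int(h * 0.8), int(w * 0.8), rng),  # assumed 80% crop
        "flip": random_flip(img, rng),
        "blur": box_blur(img, k=3),
        "brightness": brightness_gain(img, rng.uniform(0.7, 1.3)),  # assumed gain range
        "noise": add_gaussian_noise(img, sigma=10.0, rng=rng),      # assumed noise level
    }
```

In a detection setting, the geometric transforms (crop and flip) would also have to be applied to the annotated bounding boxes, which this sketch leaves out.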

After the model was trained on the local workstation, we transplanted it to the edge device NVIDIA Jetson TX2. To further improve the detection speed, we used TensorRT to optimize and accelerate the model. The response latencies of the detection system under cloud computing and edge computing were compared to verify the feasibility of the proposed approach.

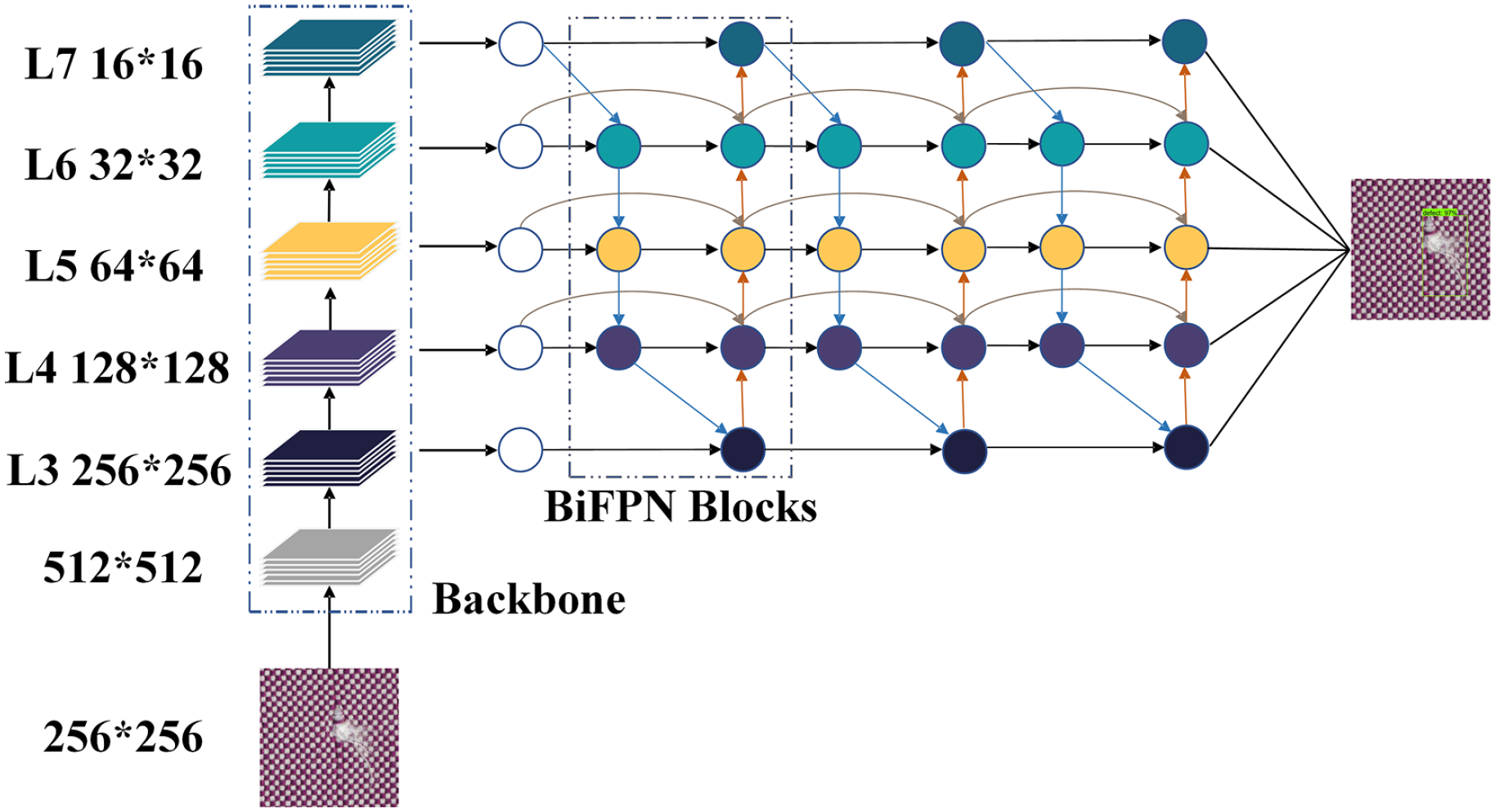

EfficientDet architecture

EfficientDet is a lightweight, scalable detection network that comprises eight models, D0–D7. From D0 to D7, the accuracy and time complexity of the model increase with the model size, so the eight models can meet a broad spectrum of resource constraints. The backbone of EfficientDet is EfficientNet, 30 which makes heavy use of depthwise separable convolution 31 to keep the model lightweight. The neck of the network uses the bidirectional weighted feature pyramid network (BiFPN). Starting from the path aggregation network (PANet), 32 BiFPN removes the nodes that have only one input edge and then adds a shortcut between the input and output nodes if they are at the same level. The purpose is to fuse more features without increasing the computation cost. Because the input features have different resolutions, an additional weight is added to each input feature during feature fusion. This weighted fusion lets the network learn the importance of different features. We use the feature at level 5 as a concrete example to describe the fusion mechanism:

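$$P_5^{td} = \mathrm{Conv}\left(\frac{w_1 \cdot P_5^{in} + w_2 \cdot \mathrm{Resize}(P_6^{in})}{w_1 + w_2 + \epsilon}\right)$$

$$P_5^{out} = \mathrm{Conv}\left(\frac{w_1' \cdot P_5^{in} + w_2' \cdot P_5^{td} + w_3' \cdot \mathrm{Resize}(P_4^{out})}{w_1' + w_2' + w_3' + \epsilon}\right)$$

where $P_5^{td}$ is the intermediate feature at level 5 on the top-down pathway, $P_5^{out}$ is the output feature at level 5 on the bottom-up pathway, $w_i$ and $w_i'$ are the learnable non-negative fusion weights, and $\epsilon$ is a small constant that avoids numerical instability, following the fast normalized fusion defined for BiFPN in EfficientDet. 28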

EfficientDet-D0 architecture.
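The weighted fusion in BiFPN can be illustrated with a small pure-Python sketch of fast normalized fusion as defined in the EfficientDet paper. The function names are hypothetical, features are reduced to 1-D lists for readability, and the convolution that follows each fusion in the real network is omitted.

```python
def fast_normalized_fusion(features, weights, eps=1e-4):
    """Fuse same-length features with non-negative, sum-normalized weights.

    Each weight is clamped at zero (ReLU) and divided by the sum of all
    weights plus eps, so every normalized weight lies between 0 and 1.
    """
    if len(features) != len(weights):
        raise ValueError("need exactly one weight per input feature")
    w = [max(0.0, x) for x in weights]
    total = sum(w) + eps
    length = len(features[0])
    return [sum(wi * f[k] for wi, f in zip(w, features)) / total
            for k in range(length)]

def resize_nearest(feature, factor=2):
    """Upsample a 1-D toy feature by repeating each value (nearest neighbor)."""
    return [v for v in feature for _ in range(factor)]

def top_down_level5(p5_in, p6_in, w1, w2):
    """Intermediate level-5 feature: fuse P5_in with the upsampled P6_in."""
    return fast_normalized_fusion([p5_in, resize_nearest(p6_in)], [w1, w2])
```

For example, `top_down_level5([4.0, 4.0, 4.0, 4.0], [0.0, 8.0], 1.0, 1.0)` weights both inputs equally, so each output value is close to the average of the level-5 value and the upsampled level-6 value.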

TensorRT optimization

TensorRT is an optimizer provided by NVIDIA specifically for the neural network inference stage. It optimizes the model mainly in two ways: layer and tensor fusion, and weight and activation precision calibration. When a neural network performs inference, the computation of each layer is carried out by Compute Unified Device Architecture (CUDA) kernels on the GPU, so much time is spent on launching kernels and on reading and writing the tensors of each layer. TensorRT greatly reduces the number of layers by merging them vertically or horizontally. Vertical merging combines convolution, bias, and ReLU into a single layer, called a CBR structure, which needs only one kernel launch. Horizontal merging combines CBRs that have the same structure and take the same input but have different weights into one wider layer, which also needs only one kernel launch. As a result, the computation graph has fewer layers and launches fewer CUDA kernels, so the overall model becomes smaller, faster, and more efficient.
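The arithmetic behind the two kinds of merging can be shown with a toy example. The sketch below does not use the TensorRT API. It models a 1 × 1 convolution at a single pixel as a matrix-vector product in plain Python, with hypothetical function names, and only demonstrates that the fused computations give the same result as the separate layers.

```python
def conv1x1(weights, x):
    """1x1 convolution at one pixel: a matrix-vector product over channels."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def add_bias(y, bias):
    """Add a per-channel bias."""
    return [a + b for a, b in zip(y, bias)]

def relu(y):
    """Clamp negative activations to zero."""
    return [max(0.0, a) for a in y]

def unfused(weights, bias, x):
    """Three separate layers, each producing a full intermediate result."""
    return relu(add_bias(conv1x1(weights, x), bias))

def fused_cbr(weights, bias, x):
    """Vertical merge: convolution, bias, and ReLU computed in a single pass."""
    return [max(0.0, sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def horizontal_merge(w_a, b_a, w_b, b_b, x):
    """Horizontal merge: two CBR branches on the same input become one wider CBR."""
    merged = fused_cbr(w_a + w_b, b_a + b_b, x)  # stack the output channels
    return merged[:len(w_a)], merged[len(w_a):]  # split back per branch
```

On a GPU the benefit comes from launching one kernel instead of several and from skipping the intermediate reads and writes. The sketch only mirrors the data flow, not the speedup.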

Experiments and discussion

In this section, we evaluate the performance of the proposed method through a series of experiments. All local experiments were performed on a workstation configured with an Intel i7-5930K processor (3500 MHz), 64 GB of memory, and an NVIDIA GeForce GTX TITAN X GPU. The software environment consisted of the Windows 10 operating system and the TensorFlow 2.1 deep learning framework. All experiments on the edge were performed on an NVIDIA Jetson TX2, an efficient and fast embedded artificial intelligence (AI) computing device. Its GPU adopts the NVIDIA Pascal architecture with 256 CUDA cores and 8 GB of memory. The processor cluster consists of a dual-core Denver 2 processor and a quad-core ARM Cortex-A57, and the power consumption is only 7.5 W, which makes the device suitable for edge computing scenarios. Figure 4 shows the Jetson TX2 and its detection device.

Detection device based on Jetson TX2.

Datasets and augmentation

In this experiment, we used five different fabric datasets, in which all images come from a real-world fabric production line and are manually annotated. The camera used to capture the textile images is a Basler acA2500-14gm with a GigE interface. The lens is a Basler Lens C125-0618-5M-P with a resolution of 5 megapixels and a fixed focal length of 6 mm. According to the characteristics of the textiles, we chose an Opt white ring LED light source, model OPI-RI5000-W. When collecting images, the distance between the textile and the lens is 320 mm, and the distance between the light source and the textile is 270 mm. The fabric types of the five datasets are dark red fabric (DRF), grid fabric (GF), camouflage fabric (CF), digital printed fabric (DPF), and light blue fabric (LBF), as shown in Figure 5.

Five fabric datasets: (a) DRF, (b) GF, (c) CF, (d) DPF, and (e) LBF.

In fabric defect detection, available data are often scarcer than expected, and the lack of samples is a challenge for detection methods based on deep learning. Therefore, we augmented the five datasets to improve the training effect and industrial robustness of the model. Taking the DRF dataset as an example, we applied random cropping, blurring, brightness gain, flipping, and noise to each original image, as shown in Figure 6.

Data augmentation: (a) original, (b) crop, (c) blur, (d) brightness, (e) flip, and (f) noise.

We divided all images into two parts: 90% as the training set and 10% as the test set. The numbers of training and test samples in the five fabric datasets are shown in Table 1. The five datasets cover fabrics with different types of textures, including both regular-textured and random-patterned fabrics, and the defects span an extensive range (such as large-area defects in DRF and tiny defects in CF), so they can verify the performance of the proposed method on different types of fabrics and defects. Note that before training, we resized all images to 256 × 256.

Distribution of augmented datasets.
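A 90%/10% split of this kind can be sketched as below. The helper name and the fixed shuffling seed are assumptions for illustration. The text does not state whether the images were shuffled before splitting.

```python
import random

def split_dataset(items, train_fraction=0.9, seed=0):
    """Shuffle reproducibly, then cut the list into training and test parts."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]
```

Calling `split_dataset(image_paths)` on a list of 100 hypothetical file names would give 90 training items and 10 test items with no overlap.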

Evaluation metrics

The evaluation metrics in this experiment cover two aspects: detection speed and detection accuracy. We used frames per second (FPS) to evaluate detection speed and mean Average Precision (mAP) to evaluate the detection accuracy of the proposed method. The evaluation metrics FPS and mAP are defined as follows:

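$$\mathrm{FPS} = \frac{N}{T}$$

where $N$ is the number of detected images and $T$ is the total detection time in seconds, and

$$\mathrm{mAP} = \frac{1}{C} \sum_{i=1}^{C} AP_i$$

where $C$ is the number of defect classes and $AP_i$ is the average precision of class $i$, i.e. the area under its precision-recall curve.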
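As a hedged illustration of the two metrics, the sketch below converts a per-image latency into FPS and computes average precision as the area under a precision-recall curve. The function names are hypothetical, and the all-point interpolation used for the area is an assumption, since the text does not say which interpolation scheme was used.

```python
def fps_from_latency_ms(latency_ms):
    """Frames per second for a given per-image detection time in milliseconds."""
    return 1000.0 / latency_ms

def average_precision(recalls, precisions):
    """Area under the precision-recall curve (all-point interpolation).

    recalls must be sorted in ascending order, with one precision per recall.
    """
    r = [0.0] + list(recalls) + [1.0]
    p = [0.0] + list(precisions) + [0.0]
    # Make precision monotonically non-increasing from right to left.
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    # Sum the rectangles between consecutive recall values.
    return sum((r[i + 1] - r[i]) * p[i + 1] for i in range(len(r) - 1))

def mean_average_precision(ap_per_class):
    """Mean of the per-class average precisions."""
    return sum(ap_per_class) / len(ap_per_class)

# 23.8 ms per image corresponds to about 42.0 FPS.
print(round(fps_from_latency_ms(23.8), 1))
```

The latencies quoted in the experiments agree with this conversion to within rounding: 23.8 ms gives about 42 FPS, 72.8 ms about 13.7 FPS, and 43.9 ms just under 22.8 FPS.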

Experiment on the workstation

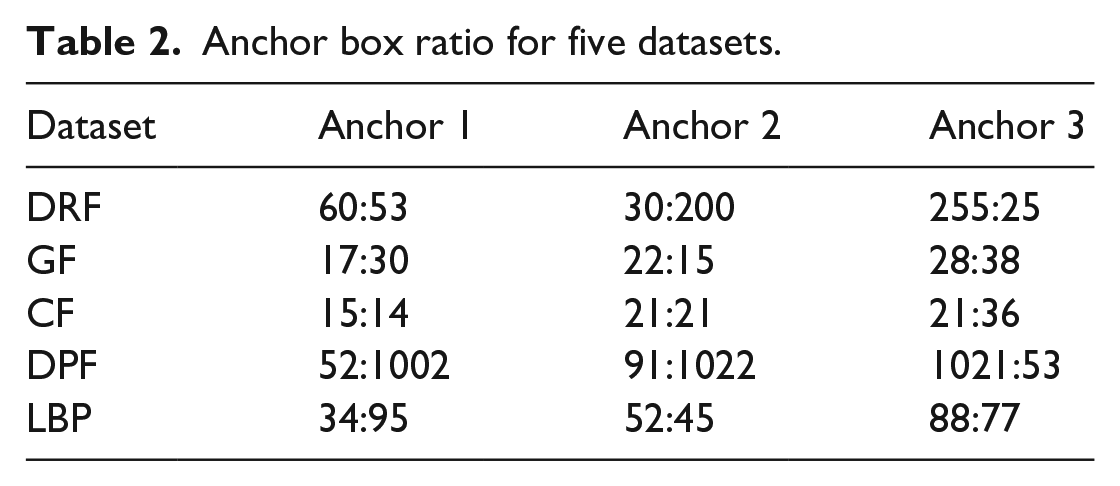

Given the limited computing capability of the edge device, we trained the model on the local workstation and then transplanted the trained model to the edge device, which shortens the training cycle. The box prediction network of EfficientDet is based on anchors, so we followed the anchor clustering method of YOLO-V2 33 to achieve better training results. According to the network settings, we performed k-means clustering on the training data, and the final anchor box ratios are shown in Table 2. We also compared against current mainstream one-stage object detection methods, including YOLO-V3, SSD, 34 and RetinaNet. 35

Anchor box ratio for five datasets.
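The YOLO-V2 style anchor clustering can be sketched as k-means over box widths and heights, using IoU as the similarity measure (equivalently, 1 − IoU as the distance) instead of Euclidean distance. The code below is a minimal pure-Python illustration with hypothetical names. The initialization, iteration limit, and conversion to aspect ratios are assumptions, not details taken from the paper.

```python
import random

def iou_wh(box, centroid):
    """IoU of two (width, height) boxes that share the same top-left corner."""
    inter = min(box[0], centroid[0]) * min(box[1], centroid[1])
    union = box[0] * box[1] + centroid[0] * centroid[1] - inter
    return inter / union

def kmeans_anchors(boxes, k, iters=100, seed=0):
    """Cluster (width, height) pairs; each box joins the centroid with highest IoU."""
    rng = random.Random(seed)
    centroids = rng.sample(boxes, k)  # assumed initialization: k random boxes
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for box in boxes:
            best = max(range(k), key=lambda j: iou_wh(box, centroids[j]))
            clusters[best].append(box)
        new_centroids = []
        for j, members in enumerate(clusters):
            if members:
                mean_w = sum(w for w, _ in members) / len(members)
                mean_h = sum(h for _, h in members) / len(members)
                new_centroids.append((mean_w, mean_h))
            else:
                new_centroids.append(centroids[j])  # keep an empty cluster in place
        if new_centroids == centroids:
            break  # assignments have stabilized
        centroids = new_centroids
    return sorted(centroids, key=lambda c: c[0] * c[1])

def aspect_ratios(anchors):
    """Width-to-height ratio of each clustered anchor."""
    return [w / h for w, h in anchors]
```

With six toy boxes forming a small square group and a wide flat group, `kmeans_anchors(boxes, 2)` settles on one centroid per group, and `aspect_ratios` then reports a ratio near 1 for the squares and a larger ratio for the wide boxes.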

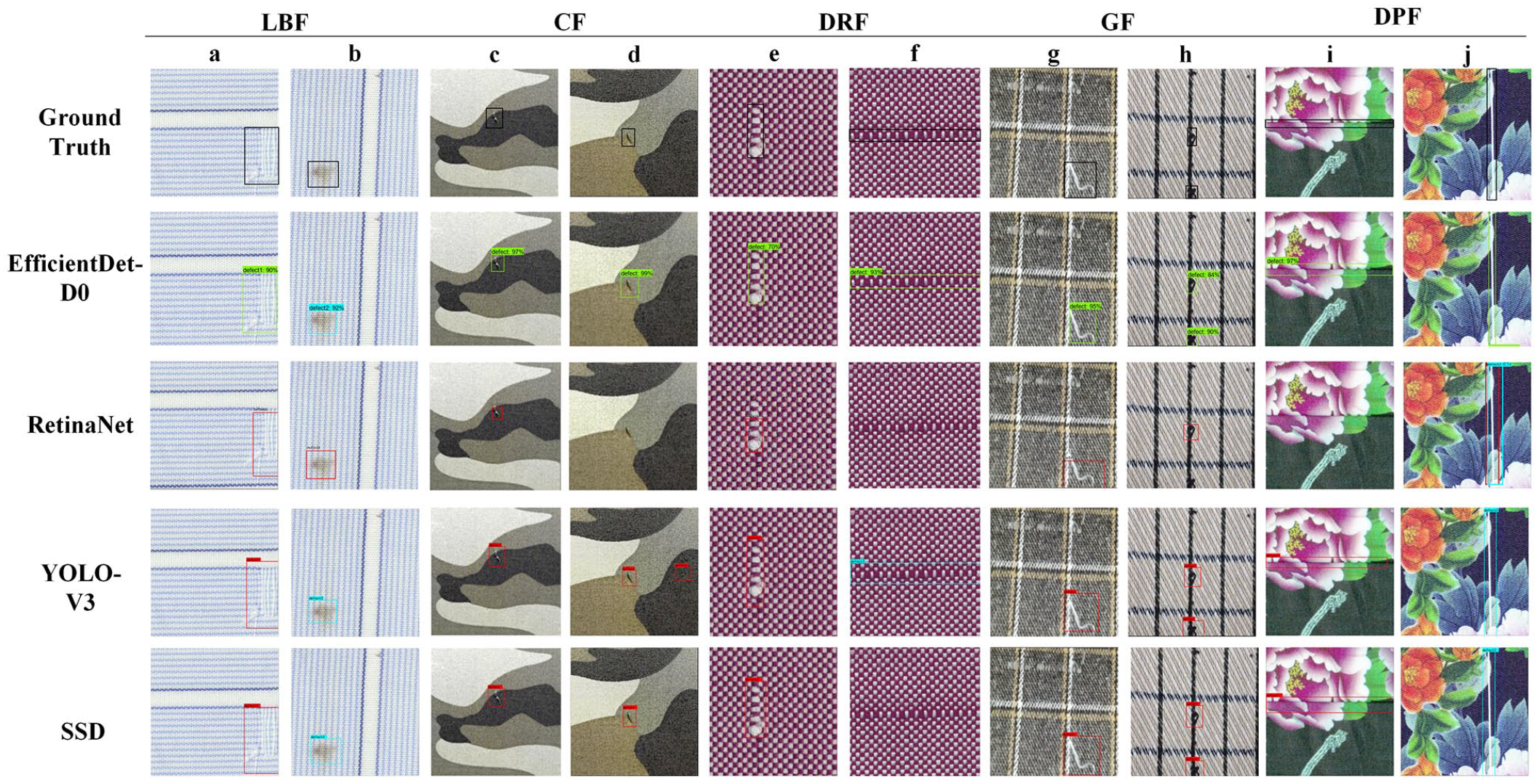

Qualitative analysis

All models are compared on the five fabric datasets listed in Table 1. The test results of the different models are shown in Figure 7, where Figure 7(a) and (b), (c) and (d), (e) and (f), (g) and (h), and (i) and (j) are the test results on the LBF, CF, DRF, GF, and DPF datasets, respectively. For the LBF dataset, all four models can effectively detect defects, but their localization accuracy differs. In column (a), the detection results of all models except EfficientDet-D0 contain more non-target areas. For the tiny defects in the CF dataset, RetinaNet misses defects and YOLO-V3 produces false detections, as shown in the columns of Figure 7(c) and (d). For defects that span the entire sample, as in the DRF and DPF datasets, the detection results of all models are not optimal. Neither RetinaNet nor SSD can detect this type of defect, and although YOLO-V3 detects the defects, it also includes too many defect-free areas. In column (e) of the DRF dataset, RetinaNet bounds only half of the defect area. In the GF dataset, the defects are small, and there are multiple defects in a single sample. All models except RetinaNet detect multiple defects, but the predicted bounding boxes differ considerably from the ground truth for every model except EfficientDet-D0. Judging from the overall visual comparison, the EfficientDet-D0 model achieves better detection results on the five fabric datasets: it detects the sample defects, and its predicted bounding boxes are relatively accurate.

Visual comparison of detection results by different models on five datasets. Panels (a) to (j) are representative samples of the datasets.

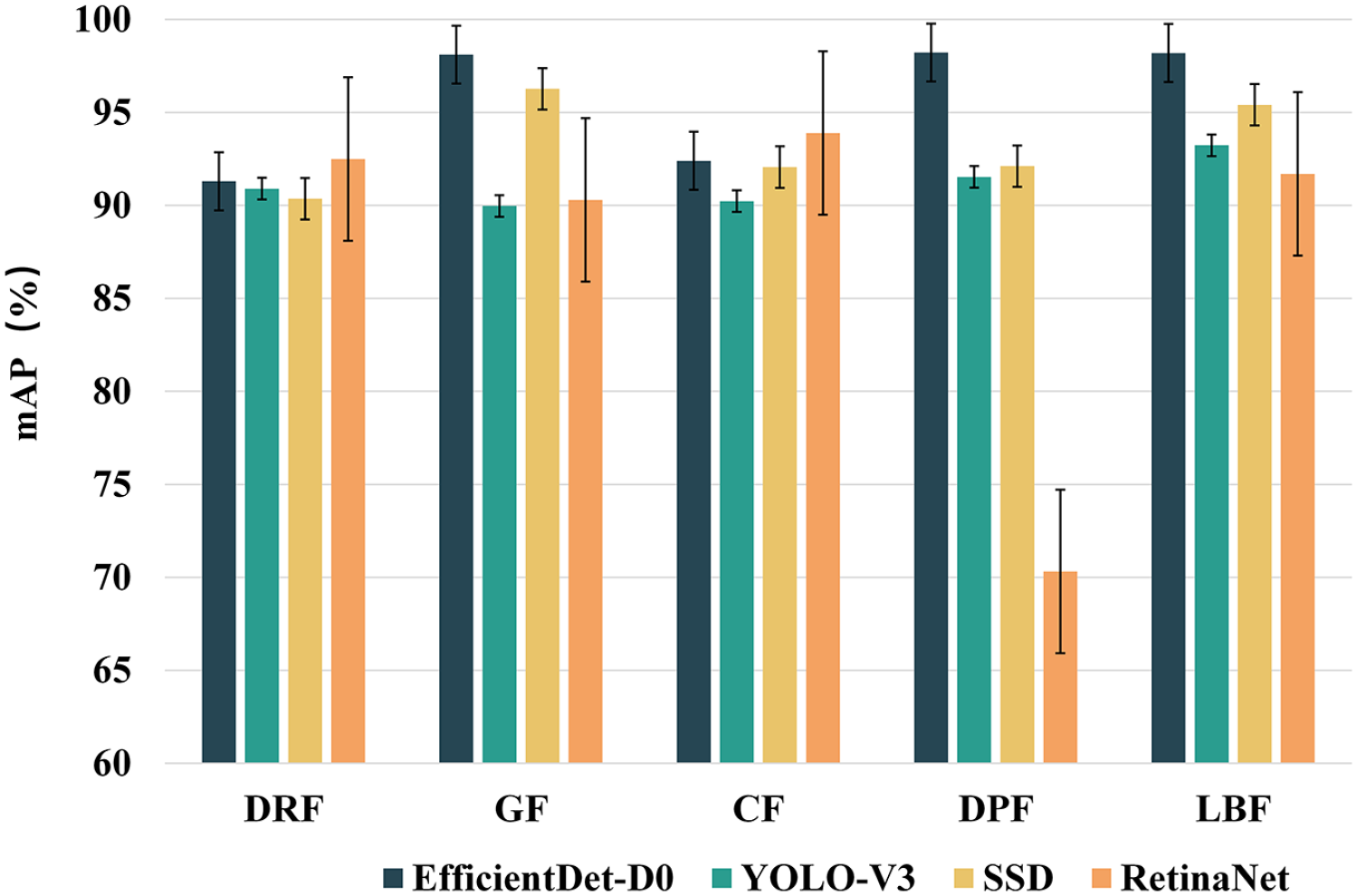

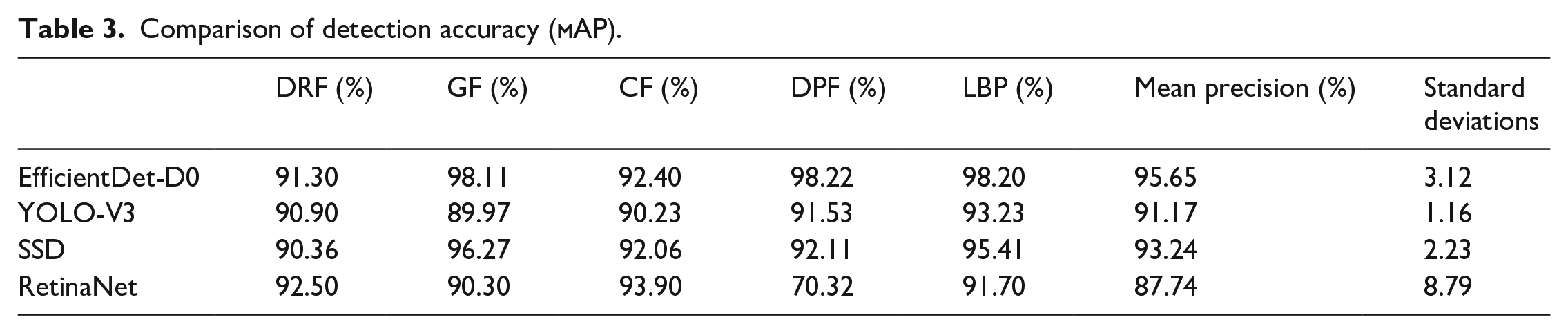

Quantitative analysis

mAP and FPS were used to quantitatively analyze the accuracy and speed of the models, respectively. The detection accuracy on the five datasets is shown in Figure 8 and Table 3. By comparison, the EfficientDet-D0 model achieves better performance on all five datasets. In the longitudinal comparison, the GF dataset has a changeable sample background and many small defects. The detection accuracy of EfficientDet-D0 there reaches 98%, which is higher than that of the other three models and indicates its strong ability to detect tiny defects. In the DPF dataset, the aspect ratio of the defects is large, and most of the defects span the entire sample. In the detection of such defects, EfficientDet-D0 is better than the other models. In the horizontal comparison, EfficientDet-D0 obtains a high mAP on all five datasets, showing its high robustness. We also compared the mean precision and standard deviation of each model over the five datasets. EfficientDet-D0 has the highest mean precision with a lower standard deviation, which shows its excellent generalization.

Comparison of detection accuracy of different datasets.

Comparison of detection accuracy (

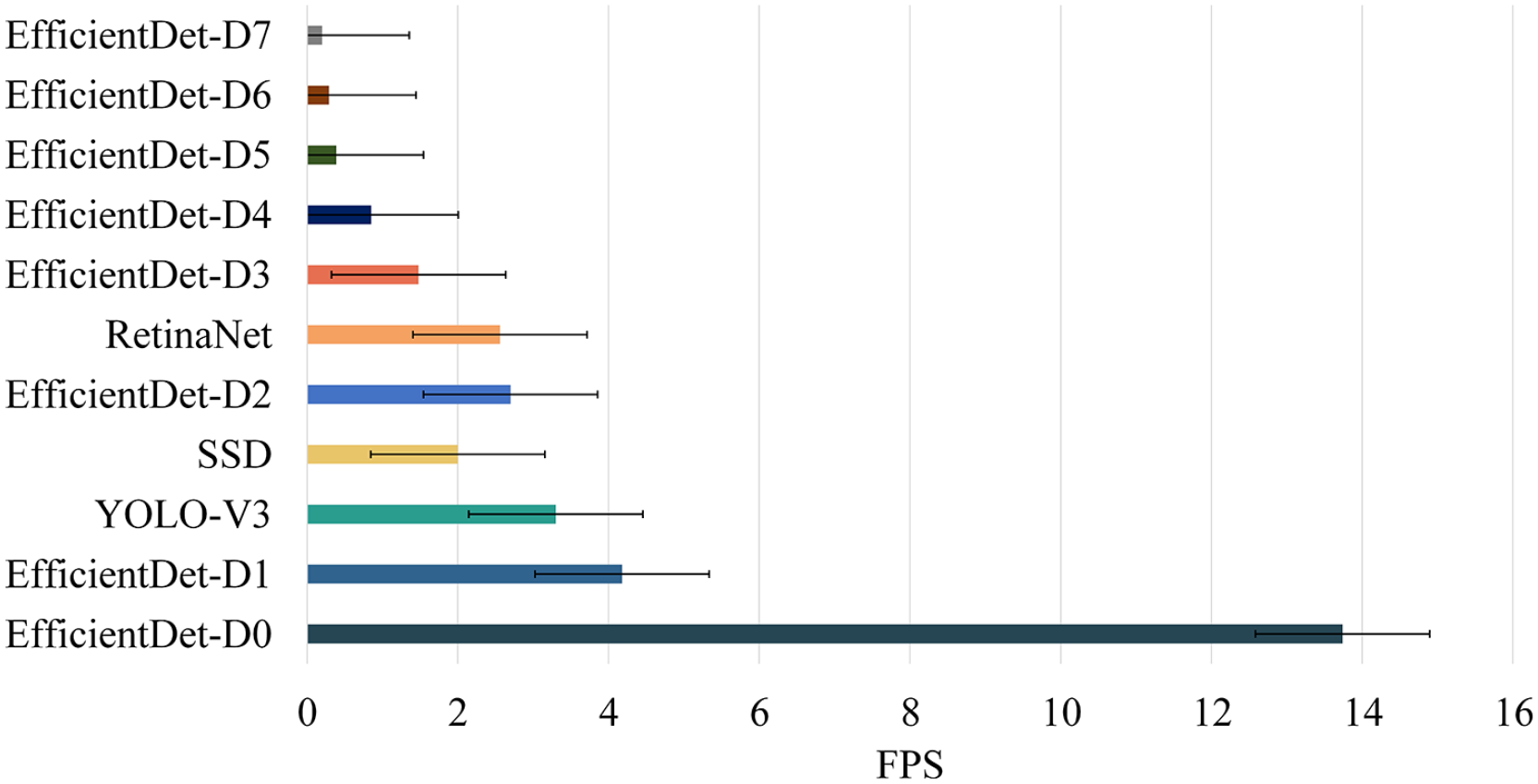

Figure 9 and Table 4 show the detection speed of the compared models on the same hardware platform. The fastest model is EfficientDet-D0, with a detection speed of 42 FPS and a single detection time of 23.8 ms. The detection speeds of YOLO-V3 and SSD are close, at 23 FPS and 24 FPS, respectively, with a single detection time of about 41 ms. RetinaNet is the slowest, at only 12.8 FPS.

Comparison of inference time for different models on workstation.

Inference time on workstation.

Experiment on the edge device

Taking into account the real-time and low-power requirements of industrial defect detection, we transplanted the model trained on the local workstation to our edge computing device, the Jetson TX2. We compared the inference time of the models mentioned above on the edge device, as shown in Table 5. Note that the size of the image we sent to the network for inference is 256 × 256, and the final output size is 512 × 512. As shown in Figure 10 and Table 5, because of the limited computing resources of edge devices, the detection speed of all models decreased after they were transplanted to the Jetson TX2. To improve the speed of the model, we applied TensorRT optimization to EfficientDet-D0. The optimized model has a shorter inference time, which is more in line with the speed requirements of industrial detection. In this experiment, we adopted a single-precision optimization strategy for the model. The comparison of the model before and after acceleration is shown in Figure 11.

Comparison of inference time for different models on Jetson TX2.

Inference time on Jetson TX2.

Comparison before and after EfficientDet-D0 model optimization.

After the model was optimized by TensorRT, its inference time was shortened from 72.8 to 43.9 ms, and the FPS increased from 13.7 to 22.7. The detection time is thus reduced by about 40%, and the speed is improved by a factor of about 1.66, which gives the model the capability of industrial real-time detection.
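The size of the TensorRT gain follows directly from the two reported inference times. The short check below uses hypothetical helper names and only the figures quoted above.

```python
def latency_reduction(before_ms, after_ms):
    """Fraction of the original inference time that was saved."""
    return (before_ms - after_ms) / before_ms

def speedup(before_ms, after_ms):
    """How many times faster the optimized model runs."""
    return before_ms / after_ms

BEFORE_MS = 72.8  # EfficientDet-D0 on Jetson TX2 before TensorRT (from the text)
AFTER_MS = 43.9   # after TensorRT optimization (from the text)

print(f"time saved: {latency_reduction(BEFORE_MS, AFTER_MS):.1%}")
print(f"speedup:    {speedup(BEFORE_MS, AFTER_MS):.2f}x")
```

The same ratio can be read from the FPS figures, since 22.7 / 13.7 is also roughly 1.66.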

We used the optimized model to test the five datasets, and the detection results are shown in Figure 12. The accelerated model performs well on all five datasets.

Detection results: (a) DRF, (b) GF, (c) CF, (d) DPF, and (e) LBF.

To verify the advantages of edge computing in industrial defect detection, we compared the response latency of edge computing and cloud computing. In this experiment, we measured the data transmission latency between the local site and the cloud server. Although cloud computing takes less time to compute, data transmission consumes much time, which slows down the overall detection speed. In edge computing, edge devices can process received images directly without uploading data to the cloud and downloading detection results, which saves most of this time and improves the overall response speed. We used an Ali cloud server as the test platform and measured the data transmission latency between the local site and the cloud server over the HTTP protocol. The data transmission latency is shown in Table 6.

Latency comparison.

The local bandwidth used in this experiment is 300M, and the Ali cloud server has 1M. There are seven hops from the local site to the cloud. In Table 6, the latencies for uploading original images and returning results include resource scheduling, connection establishment, and the time needed to complete the data transfer. Excluding resource scheduling and connection establishment, the latency for uploading data is 48.39 ms, and the latency for returning results from the cloud is 35.70 ms. If we exclude the connection establishment time (that is, after the first connection is established), the time from uploading the original images to receiving the results is 2.5 times shorter with edge computing than with cloud computing. This comparison shows that edge computing has a significant advantage in detection speed and is a feasible detection solution for industrial applications that require high detection speed.
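The comparison can be made concrete with a rough latency budget. The transfer times and the edge inference time below come from the text, but the cloud inference time is not stated in this section, so the sketch substitutes the 23.8 ms workstation time as a stand-in. That substitution is an assumption made for illustration, and the resulting ratio is only indicative.

```python
def cloud_response_ms(upload_ms, inference_ms, download_ms):
    """End-to-end cloud latency once the connection is already established."""
    return upload_ms + inference_ms + download_ms

UPLOAD_MS = 48.39    # image upload latency (from the text)
DOWNLOAD_MS = 35.70  # result return latency (from the text)
EDGE_MS = 43.9       # TensorRT-optimized inference on Jetson TX2 (from the text)

# ASSUMPTION: the cloud GPU is taken to be as fast as the local workstation.
ASSUMED_CLOUD_INFERENCE_MS = 23.8

cloud_ms = cloud_response_ms(UPLOAD_MS, ASSUMED_CLOUD_INFERENCE_MS, DOWNLOAD_MS)
ratio = cloud_ms / EDGE_MS
print(f"cloud: {cloud_ms:.2f} ms, edge: {EDGE_MS:.1f} ms, ratio: {ratio:.2f}")
```

Under this assumption the ratio comes out slightly below 2.5. Even with instantaneous cloud inference, the two transfer steps alone (about 84 ms) would exceed the full edge response time.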

Conclusions

In this paper, we propose a fabric defect detection method based on edge computing that satisfies the requirements of an industrial defect detection production line for low response latency, low power consumption, and convenient upgrades. Combining the lightweight and scalable EfficientDet algorithm with the NVIDIA Jetson TX2 addresses the latency and power consumption issues. Meanwhile, data augmentation is used to improve the detection performance of the model. Furthermore, TensorRT optimization is used to accelerate the model for fast defect detection. The comparative experiments indicate that the response time of our method is 2.5 times shorter than that of the cloud computing-based detection method. Therefore, the proposed edge computing-based detection approach shows significant potential and advantages in real-time industrial defect detection.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the Shaanxi Provincial Education Department under Grant 19JC018.