Abstract

Computational social science research, particularly online studies, often involves exposing participants to the adverse phenomenon the researchers aim to study. Examples include presenting conspiracy theories in surveys, exposing systems to hackers, or deploying bots on social media. We refer to these as “social challenge studies,” by analogy with medical research, where challenge studies advance vaccine and drug testing but also raise ethical concerns about exposing healthy individuals to risk. Medical challenge studies are guided by established ethical frameworks that regulate how participants are exposed to agents under controlled conditions. In contrast, social challenge studies typically occur with less control and fewer clearly defined ethical guidelines. In this paper, we examine the ethical frameworks developed for medical challenge studies and consider how their principles might inform social research. Our aim is to initiate discussion on formalizing ethical standards for social challenge studies and encourage long-term evaluation of potential harms.

Introduction

In computational social science research, particularly in studies exploring online phenomena or technological systems, researchers often employ methodologies that involve exposing individuals or systems to potentially harmful conditions to gain insights into behaviors or vulnerabilities. Examples include exposing participants in misinformation surveys to conspiracy theories they might not have previously encountered, deploying automated bots on online social networks that expose users to synthetic content, and exposing technological systems to attackers to test their resilience through adversarial interventions. Despite involving similar risks, the studies in these examples do not operate within a standardized ethical framework across disciplines. This absence creates a gap in addressing potential harms, assessing benefits, and ensuring accountability.

A notable example of this tension arose when researchers submitted intentionally flawed patches to the Linux kernel without disclosure or consent to evaluate vulnerabilities of this open-source community, which led to calls for new ethics boards in computer science research (Dirksen et al., 2024). This study exemplifies the methodological and ethical challenges inherent in these types of studies. Although this approach can yield valuable insights, it also raises significant ethical questions about consent, harm, and societal benefit in computer science and computational social science.

A recent case study further highlights the importance of considering ethics in social challenge studies. In 2024, researchers from the University of Zurich conducted a large-scale experiment on Reddit’s r/ChangeMyView forum (Koebler, 2025). They used AI-generated bots that pretended to be real people to persuade others in debates. These bots posed as rape survivors and therapists and tailored their responses to what users had shared online. Over the course of a month, they posted approximately 1,700 AI-generated comments across 404 threads without users’ knowledge or consent. After the study, the moderators and Reddit officials criticized the research, even bringing legal action. In the Linux kernel case noted above, researchers at the University of Minnesota sent seemingly harmless but flawed code patches to the Linux kernel project in 2021 to expose weaknesses in how open-source code is reviewed (Holz & Oprea, 2021). They did this without informing the maintainers or obtaining proper approval first, which raised concerns in the open-source community and led to a push for changes to research ethics rules. Although the Reddit and Linux studies differed in their methods and communities, both revealed how research interventions conducted without consent or transparency can undermine trust and raise questions of safety and legitimacy. These cases highlight the need for more robust ethical frameworks that take into account the risks associated with studying real-world online and technical communities.

Ethical questions related to exposing participants to psychological, social, or informational risks have long been examined within the social sciences, including sociology, psychology, and political science, where researchers have developed discipline-specific ethical frameworks and debated the limits of consent, deception, and acceptable harm (Hilbig et al., 2021; Marzano, 2021; Phillips, 2021). Even so, these frameworks may benefit from complementary insights from other research traditions.

Challenge studies are a well-established method in medical research, providing critical insights into vaccine efficacy, drug development, and mechanisms of infectious disease transmission. In clinical research, challenge studies involve the deliberate exposure of healthy participants to pathogens with controlled risks, necessitating robust ethical frameworks to balance scientific progress with participant safety. Over decades, these frameworks have evolved to include rigorous standards for assessing risks, benefits, and long-term impacts on participants. This precedent has helped to ensure both the advancement of public health and the protection of the individuals involved in such research.

Our paper specifically examines a subset of computational social research which we call “social challenge studies.” While our paper draws heavily on medical challenge studies, we also recognize that the key ethical dilemmas, such as deception, exposing participants to psychological stress, and observing sensitive behaviors, have long been part of classic social science research (Humphreys, 1970; Kelman, 1967; Milgram, 1963). These classic studies illustrate the long-standing challenge of balancing valuable insights with the risk of harm. Recent work in psychology and research ethics has also shown that deception, debriefing, and consent remain active areas of debate, particularly in misinformation research and studies that involve manipulation without prior disclosure (Bohns, 2022; Murphy & Greene, 2023; Verbeke et al., 2023). Informed consent, even in these complex studies, is a crucial part of the ethical process. In parallel, internet-research ethics communities have developed guidelines for studying behavior in networked environments, addressing platform consent, contextual integrity, and privacy expectations. They also highlight the practical limits of debriefing in large-scale or anonymous online studies (Franzke et al., 2020). Understanding social-science debates through the lens of contemporary internet-research ethics provides important context for digital research and highlights the limits of relying solely on the medical analogy. Accordingly, we examine the ethical implications of these studies in light of established frameworks developed for medical challenge studies as a complementary analytic perspective rather than a normative replacement for existing social science ethics frameworks.

The use of medical challenge studies as a point of comparison is not intended to privilege biomedical ethics over social science frameworks, but rather to draw on a well-developed ethical vocabulary for reasoning about intentional exposure to risk, proportionality of harm, and spillover effects on third parties. The purpose of this work is not to create generalizable guidelines across all disciplines or methodologies, nor is it to review all existing ethical frameworks across fields, but to begin a discussion about what multidisciplinary ethical lessons can be learned from the medical field. Drawing on the ethical principles that govern medical challenge studies, we propose preliminary guidelines to address gaps in ethical oversight and risk assessment in social challenge studies. This effort aims to promote dialogue on the development of ethical practices that ensure both participant protection and responsible research. We start with the understanding that, even though the risks in these fields may look different (e.g., physical injury vs. psychological harm), the core ethical responsibility of balancing risk and benefit remains the same. In fact, several scholars argue that the traditional ethical distinctions between biomedical and social science research ethics are often overstated, since both areas share many core ethical concerns (Emmerich, 2016; Wassenaar & Slack, 2016).

The structure of this paper is as follows. The section titled Medical Challenge Studies reviews ethical frameworks for these studies. The section entitled Social Challenge Studies examines case studies that illustrate the challenges of social research with controlled adverse conditions. The section entitled Lessons Learned: Applying Medical Recommendations to Social Challenge Studies explores how principles from medical challenge studies can inform the development of ethical guidelines for social research. Finally, in our conclusion, we will consider the broader implications of these proposals and identify areas for future research.

Medical Challenge Studies

Definition

A challenge study (also called a human challenge trial) can be defined as a clinical trial in which healthy participants are intentionally exposed to a test agent or pathogen in a controlled environment (Hope & McMillan, 2004; Jamrozik & Selgelid, 2020c). Human challenge studies frequently raise ethical concerns because they deliberately expose participants to risks that they would otherwise not face (Callaway, 2020). Although these studies offer significant scientific benefits, they require careful planning and rigorous ethical oversight to balance participant risks with potential public health benefits (Eyal et al., 2020). It is important to note that many of the standards we discuss here are not unique to medical challenge studies; rather, they originate from broader medical research ethics. Principles such as scientific justification, benefit and risk evaluation, informed consent, and independent ethical review were developed for human subjects research in general and were later applied to challenge studies. Acknowledging this broader foundation helps show that challenge studies build on an existing ethical framework rather than creating one from scratch.

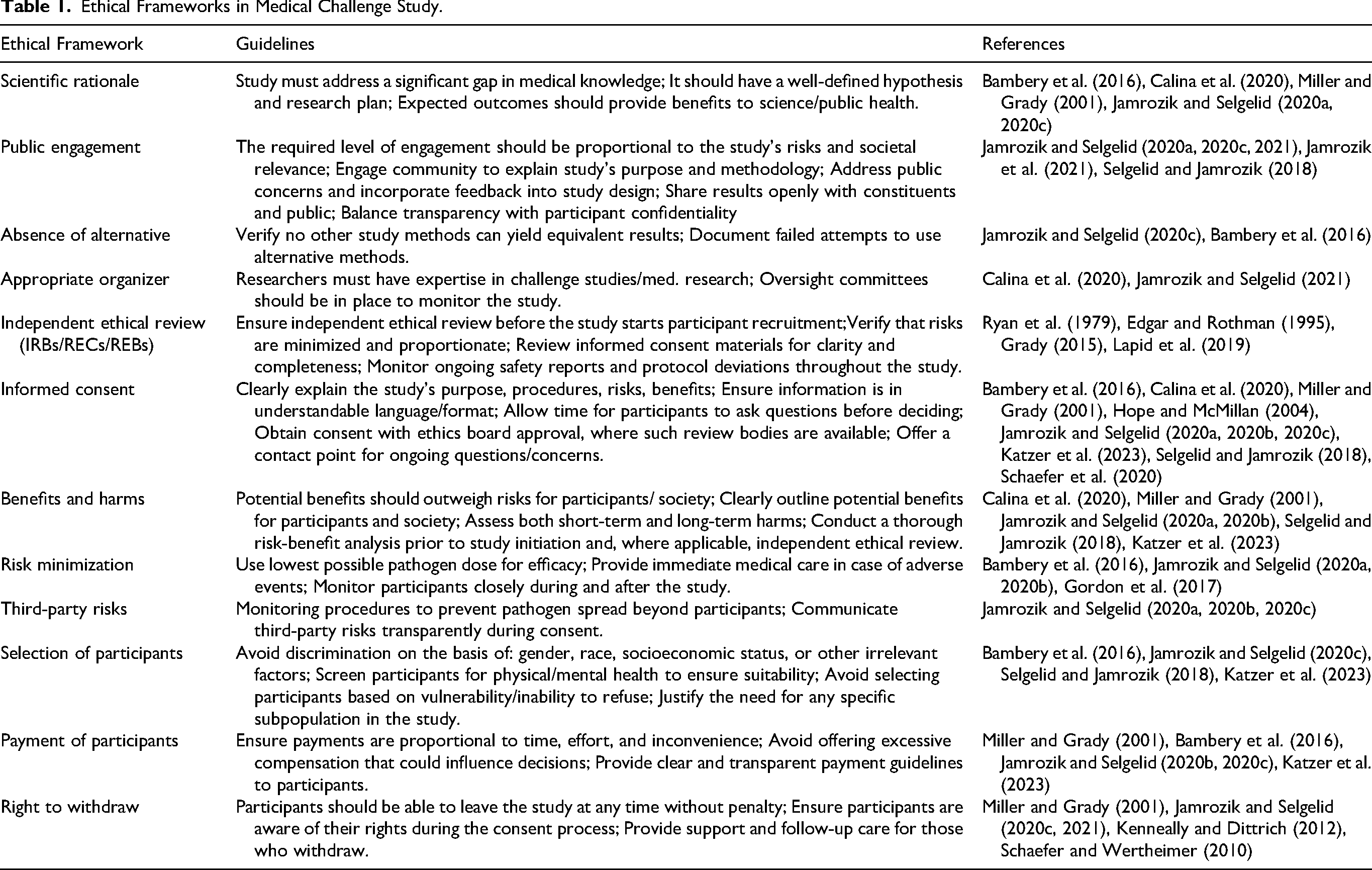

We review some of the key components of ethical frameworks relevant to medical challenge studies, drawing on multiple sources. The standards discussed are not meant to be exhaustive; rather, they succinctly synthesize a large conceptual framework. We also outline these concepts in Table 1.

Table 1. Ethical Frameworks in Medical Challenge Studies.

Standards

Scientific Rationale

A standard of scientific justification requires sufficient evidence from researchers that the benefits of research, such as public health advancement or policy information, outweigh the associated risks (Bambery et al., 2016; Calina et al., 2020; Miller & Grady, 2001). This standard also guides the design of reliable and impactful studies that yield findings of value to both the scientific community and society at large (Jamrozik et al., 2021).

Public Engagement

Public engagement is essential in medical science, particularly in challenge studies, as it fosters transparency, collaboration, and trust (Jamrozik & Selgelid, 2020a). Early public participation aligns the research process with societal realities and interests, ensuring that community needs are considered in the design and conduct of the study (Jamrozik et al., 2021; Selgelid & Jamrozik, 2018). Transparent and open communication about the aim, approaches, and potential risks and benefits of the study promotes understanding and trust (Jamrozik & Selgelid, 2020c). The impact of these activities would be further maximized by regularly updating community representatives with newly available information that reflects broader research initiatives and public health strategies (Jamrozik et al., 2021; Jamrozik & Selgelid, 2020a). While public engagement plays an important role in many challenge studies, it is not necessary for all of them. The degree of public engagement required for challenge studies should be proportional to the study’s associated risks and its public health relevance.

Absence of Alternatives

The justification for a medical challenge study often depends on the lack of other plausible options to achieve the research objectives. Researchers must therefore provide evidence that no reasonable alternative would achieve the objectives of the study, which helps ensure that its ethical and scientific merits exceed the associated risks (Bambery et al., 2016).

Appropriate Organizer

Medical challenge studies should be conducted by institutions with the organizational capacity to ensure participant safety and the validity of the study. These institutions should have the necessary specialized infrastructure (Calina et al., 2020), such as containment units or emergency care resources, to manage the risks of exposing participants to pathogens, as well as the means to handle personally identifiable information securely.

Independent Ethical Review

Independent ethical review represents an important mechanism for supporting ethical research involving human participants. This process is carried out by an impartial body, often called a Research Ethics Committee (REC), Institutional Review Board (IRB), or Research Ethics Board (REB) internationally, whose role is to assess whether a study meets accepted ethical standards. The primary purpose of this committee is to ensure the research design aligns with fundamental ethical principles, specifically: maximizing potential social value, minimizing harm to participants and communities, and ensuring the fairness and voluntariness of the informed consent process (Edgar & Rothman, 1995; Ryan et al., 1979).

Before a study can begin recruiting participants, the committee usually performs a thorough review to confirm that the risks are as low as possible and that any remaining risks are reasonable with respect to the potential benefits of the study (Grady, 2015). As part of this process, they also check informed-consent materials to ensure that they clearly explain the study, its possible risks, and the rights of participants in a way that anyone can understand (Lapid et al., 2019). While access to such independent ethical review varies across institutional, disciplinary, and geographic contexts, this form of oversight remains a valuable though not universal component of research governance, complementing researchers’ responsibility to adhere to established ethical frameworks and professional norms.

Informed Consent

One of the most important ethical principles in research is informed consent, which ensures that participants understand and voluntarily accept the risks of taking part (Jamrozik & Selgelid, 2020b; Schaefer et al., 2020). It is even more pertinent for challenge studies, in which participants are deliberately exposed to risks. Challenge studies require clear and detailed explanations of the purpose of a study, along with a description of the risks involved and a discussion of their potential long-term consequences (Jamrozik & Selgelid, 2020b; Miller & Grady, 2001). Obtaining valid informed consent involves not only providing thorough explanations of the research but also ensuring that participants understand the possible health risks and the potential broader societal benefits that might result from it. Participants must agree voluntarily to be part of the research, understand the possible consequences, and have enough time to make an informed decision without coercion or undue pressure (Hope & McMillan, 2004).

However, it can be argued that in certain cases where the public interest is at stake, it is ethically permissible to involve study subjects without obtaining informed consent. Such an approach may also be viable if obtaining informed consent is not possible and if participation does not impair the subject's autonomy. In all cases, the ethical justification of such decisions must be carefully evaluated (Gelinas et al., 2016; Resnik & Finn, 2018). In certain situations, researchers may need a waiver of informed consent from an Institutional Review Board (IRB) before beginning their study. It should be noted that IRBs (or their equivalents) do not exist to provide ethical oversight in all research contexts; indeed, they are far more common in North America than in other parts of the globe. In many cases, disciplinary associations also provide ethical guidance.

When an IRB is available, researchers can apply for a consent waiver. The waiver review is intended to protect the rights and interests of participants and to ensure that the research is conducted ethically even without individual consent. To obtain a waiver of informed consent, researchers have to show that: (1) the research involves no more than minimal risk to the subjects; (2) the waiver or alteration will not adversely affect the rights and welfare of the subjects; (3) the research could not practicably be carried out without the waiver or alteration; and (4) whenever appropriate, the subjects will be provided with additional pertinent information after participation (Department of Health and Human Services, 2009, 45 CFR 46.116(d); Regulations, 2009).

Benefits and Harms

In human challenge studies, the potential benefits must outweigh the risks of harm to both subjects and society. Participants may benefit from these interventions if they receive the most modern medical treatment and the option of possible early treatment. Society, on the other hand, may benefit from the rapid advancement of research, such as the development of treatments or interventions (Jamrozik & Selgelid, 2020a; Schaefer et al., 2020). It is important to note that while some challenge studies may offer individual benefits (e.g., acquired immunity or access to high-quality care), others do not provide direct benefits to participants. The primary anticipated benefits of these studies are societal rather than individual.

However, it is also important to consider short- and long-term adverse effects. In medical research, short-term harms include adverse reactions to exposure to the pathogenic agent, whereas long-term consequences could be permanent functional disabilities or death resulting from an infection.

In addition to physical harm, participants may also face burdens that affect their well-being, such as time commitment, inconvenience, and psychological stress. Ethical frameworks for human subjects research emphasize that both harms and burdens should be incorporated into risk-benefit assessments (Bambery et al., 2016; Emanuel et al., 2000). Therefore, it is imperative to perform a detailed risk-benefit analysis before any study is approved to minimize the risk of harm and to justify the expected benefits to participants and society (Bambery et al., 2016; Miller & Grady, 2001). It is also essential to evaluate risk longitudinally and to implement strategies for following up with challenge study participants after the study to assess any harm they may have experienced.

Risk Minimization

Minimization of risk to participants means incorporating measures that reduce the risk of harm to participants while not compromising the quality of research. This can be achieved by appropriate participant selection, such as healthy subjects with particular and defined eligibility criteria, and by excluding vulnerable groups (Schaefer et al., 2020). More specifically, strict safety measures, including continuous surveillance of participant health and rapid availability of medical care in the event of side effects, should be ensured (Hope & McMillan, 2004). Research design also needs to be driven by reducing contact with pathogens and by using the least invasive techniques for research purposes. Informed consent procedures should allow participants to understand the possible risks and participate of their own free will (Jamrozik & Selgelid, 2020a, 2020b).

Third-party Risk

Third-party risks in challenge studies involve possible harm to people or communities who do not participate in the research. These risks can arise from unexpected outcomes, such as pathogens that escape controlled settings (Jamrozik & Selgelid, 2020a). These concerns are more pressing in endemic settings or low- and middle-income countries, where public health networks might not be able to manage and contain unexpected outbreaks (Jamrozik & Selgelid, 2020c). In endemic settings with high levels of background risk, risk assessment is complicated because the marginal risk from participation in challenge studies is likely low compared to the background, yet the absolute risks for participants remain high. In low-resource settings, measures to mitigate additional risks might not always be available. To address these issues, researchers need to put strong safeguards in place. These include keeping participants isolated while they are infectious, following strict biosafety rules, and making sure that laboratories are well-contained. Ethical guidelines require a thorough assessment of the risk of harm to third parties during planning and approval, with clear plans to reduce risks built into the study design (Jamrozik & Selgelid, 2020b).

Selection of Participants

The selection of participants for human challenge studies should emphasize fairness and minimize harm, particularly by focusing on young healthy adults (18-30 years old). These criteria can, of course, change depending on the study design and risk factors (Calina et al., 2020; Jamrozik et al., 2021). These participants would have a low probability of background infection, minimizing any additional risks related to the study (Jamrozik & Selgelid, 2020b). Participants who are at high risk for infection due to social injustice should be excluded from the study, because including them would be unethical and could exploit their vulnerabilities (Jamrozik et al., 2021). Vulnerable populations, such as children, pregnant individuals, and those with preexisting health conditions, are often excluded from studies to prevent exacerbating inequalities or causing additional harm to these groups (Bambery et al., 2016; Jamrozik & Selgelid, 2020a). Ensuring equity in participant selection is essential to prevent the exploitation of disadvantaged groups, especially in low- and middle-income countries where socioeconomic inequalities might undermine voluntariness of consent (Jamrozik & Selgelid, 2020c; Selgelid & Jamrozik, 2018).

Payment of Participants

When considering the payment of participants in human challenge studies, it is important to ensure that financial incentives do not unduly influence their decision to participate. Compensating individuals for their time and the inconveniences they face during the study is ethical (Calina et al., 2020). However, payment amounts should not be so high that they create pressure to participate, particularly for people from vulnerable or socioeconomically disadvantaged backgrounds (Bambery et al., 2016). Compensation should be aligned with the risks and demands of the study, ensuring fairness and avoiding exploitation (Katzer et al., 2023; Miller & Grady, 2001). Researchers must avoid using payments to exploit economically vulnerable groups and ensure that compensation does not encourage participants to conceal risks to receive payment (Jamrozik & Selgelid, 2020b; Selgelid & Jamrozik, 2018).

Right to Withdraw

In challenge studies, participants must have the freedom to withdraw from the study at any time, without any harmful consequences or loss of any benefit (Jamrozik & Selgelid, 2020c; Kenneally & Dittrich, 2012; Miller & Grady, 2001). They should be well informed that they can withdraw from the study whenever they want, even after giving their consent. Such decisions should not involve coercion or disadvantage. The right of withdrawal respects the autonomy of the participants and protects them from undue pressure to continue with the study. They should also be assured that withdrawal from the study will not affect their access to medical care, compensation, or eligibility for future studies (Schaefer & Wertheimer, 2010). In addition, researchers should maintain open communication so that participants feel comfortable sharing their concerns or requesting to withdraw without worrying about negative consequences (Kenneally & Dittrich, 2012; Schaefer & Wertheimer, 2010).

Social Challenge Studies

We define social challenge studies as research that deliberately introduces structured risks (for example, risks of deception, privacy loss, or the potential for perturbing group dynamics or institutional processes) to individual participants or to communities or social groups, in order to generate scientific knowledge. Here, “risk” refers to the possibility of harm arising from research interventions, recognizing that such risks may or may not materialize as actual harms during the course of a study. This includes, but is not limited to, studies of misinformation and conspiracy theories, studies that use bots in online platforms, some studies of open-source software, and certain cybersecurity studies.

In this section, we outline areas of research we believe fit the criteria of a social challenge study. These studies typically adhere to the ethical guidelines of their field, which are often developed by professional organizations (AAS Code of Ethics, 2023; European Sociological Association, 2022; Ethics, 2024). At the same time, these research domains are inherently multidisciplinary and may raise ethical questions, particularly regarding intentional exposure to risk, that are not always addressed in a systematic manner within existing disciplinary codes. For this reason, social challenge studies could benefit from a more comprehensive ethical framework that complements established social science and internet research ethics guidelines by incorporating insights from medical challenge studies, without supplanting those existing frameworks.

Misinformation and Conspiracy Theories

Research on misinformation focuses on factually incorrect (or sometimes simply unproven) information that is actively spread by people who believe it to be true. Online social media platforms have become central channels for the rapid diffusion of conspiracy theories, while simultaneously providing researchers with large-scale data to analyze their spread and evolution. Conspiracy theories can be broadly defined as unproven explanations of historical events or other aspects of reality based on the role of secretive small groups with harmful goals (Brian, 1999; Nera & Schöpfer, 2023).

Because there is an important social signaling function related to sharing and broadcasting these beliefs (Bergamaschi Ganapini, 2023), misinformation can spread widely on online platforms. Progressive exposure to a social group and its related concepts, ideas, and stories leads people to adopt beliefs in conspiracy theories (Williams et al., 2022). Susceptibility to misinformation has been shown to be positively correlated with a lack of interpersonal trust and employment security (Goertzel, 1994), as well as a lack of trust in experts (Imhoff et al., 2018; Nera et al., 2024). However, susceptibility has also been shown to depend on personal demographics (Lovato et al., 2024) and, in the case of conspiratorial thinking, it is sometimes even positively correlated with historical knowledge (Adams et al., 2006; Nelson et al., 2010). Therefore, given the broad range of potential misinformation, the structure of the problem is not simply a minority intrinsically prone to believing falsehoods, but is instead a complex system in which some beliefs can spread from one individual to another in surprising ways.

Misinformation Challenge Studies

Research on misinformation has developed in multiple directions in recent years, and much of the empirical work has involved exposing human subjects to misinformation in various forms. By exposure, we mean here that participants are presented with stories or claims that may or may not be true and that can influence their personal beliefs. First, research in social psychology has explored how marginal online communities have facilitated the spread of conspiracy theories as traditional social ties and norms break down (Klein, 2018). Many of these studies, including those cited above, rely on traditional survey methods and expose participants to different belief statements. Importantly, because these studies often aim to analyze the role of conspiratorial thinking, education, related beliefs, and other factors, they often intentionally target different demographics. However, these studies are rarely longitudinal, so little is known about the risk associated with this level of exposure.

Second, research in computer science and sociotechnical systems has explored ways to classify and moderate content, or even actively help inform users of online social networks. The detection, verification, and mitigation of online misinformation have all been the subject of numerous recent reviews, given the vast number of contemporary studies covering these topics (Aïmeur et al., 2023; Alghamdi et al., 2024; Rohera et al., 2022). These systems can take many different forms, but very often include human intervention or supervision in their algorithm. Humans can be included in the labeling of training data for the automated classification of information. They can also be included later in the algorithm, either as experts or as standard users, to flag information in real-time on online social networks (Micallef et al., 2020). In either of these tasks, individuals can be exposed to misinformation to which they might be susceptible, and once again, little is known about the associated risks.

Third, and indirectly related to misinformation, social media bots have been designed to mimic human behavior and support the study of online social networks (Boshmaf et al., 2013). These social bots are known to be able to amplify certain messages or narratives (Aïmeur et al., 2023). Researchers may deploy bots to simulate behaviors like retweeting or liking posts and then record how real accounts interact with the bots and their posts in order to study how bots influence the spread of information (Mønsted et al., 2017). These real accounts are not controlled by researchers and potentially contain personally identifiable information. More importantly, this method raises concerns about the ethical implications of research that might unintentionally contribute to the spread of false or misleading content. We revisit this topic in more detail later in the paper.

Unintended Harms and Recommendations

Human subjects are involved in many key research areas on misinformation: understanding the susceptibility of individuals, understanding the spread of misinformation, and designing interventions to reduce its harmful impacts on online social media. The risk that a subject adopts certain beliefs because they were exposed through the study platform is likely low, given that the individual may not be part of the relevant social group (Bergamaschi Ganapini, 2023) and may not have been systematically exposed to relevant concepts (Williams et al., 2022), but it is also likely non-zero.

A recent paper titled “Do Survey Questions Spread Conspiracy Beliefs?” tackles this exact issue and measures the potential harms of misinformation challenge studies (Clifford & Sullivan, 2023). The study is motivated by the fact that misinformation is known to spread through repeated exposure, yet research on misinformation routinely exposes participants to it. The authors conducted a pre-registered experiment embedded in a panel survey. The survey included 18 conspiracy questions whose content and format were consistent with previous studies in the field and, importantly, the panel design allowed the researchers to follow participants over several weeks. The results show a significant 3% increase in conspiracy beliefs following exposure to the survey. Interestingly, this effect is mainly observed if the conspiratorial statements are presented in a simple agree-disagree format. The effect mostly disappears if participants are offered an explicit choice between a conspiracy theory and an alternative explanation.

These results show that exposing participants to misinformation can affect them, but also that simple steps can be taken in survey or labeling tasks to reduce potential risks. In addition to concerns about misinformation, there is a more comprehensive methodological concern that surveys can often reinforce categories and social outcomes that shape the realities they seek to measure. Researchers have shown that surveys can reproduce descriptive constructs of race (Martinez, 2025), sexuality (Westbrook et al., 2022), and other identity categories (Law, 2009). This raises questions about whether the surveys are truly fair and accurate. Even in regular online surveys, it is still important to consider whether they are accurate and whether they may cause any unexpected consequences (Evans & Mathur, 2018). These studies show that exposure effects are not limited to conspiracy theories; they also concern how survey questions can create, strengthen, and normalize sensitive categories. This can have long-term implications for the survey participants and for society as a whole.

Accordingly, we can follow several recommendations. First, as we just saw, recent results show that presenting factual information as an alternative to misinformation can help reduce the effect of exposure (Clifford & Sullivan, 2023). Second, other research methods could be considered, such as ethnographic or observational studies of online communities to find individuals involved in conspiratorial thinking rather than exposing random participants to misinformation (Polleri, 2022). This can also serve to minimize risk. Third, in both surveys and labeling tasks, participants could be screened to ensure suitability and avoid selecting participants with known susceptibility, such as a lack of job security or other risk factors (Goertzel, 1994). Fourth, longitudinal studies should be considered in order to assess long-term risks and enable meta-studies on the potential impact of misinformation research (Clifford & Sullivan, 2023). Finally, researchers should be aware of the risks of making social categories seem more real than they are when designing surveys. They need to carefully think about the categories they use, avoid simple yes/no questions or formats that reduce complexity, and interpret the survey results with a broader understanding of social identity based on qualitative and historical contexts.

Online Social Media Research and Bots

Research on social media platforms involves studies of user-generated content and user behavior. Social challenge studies in this area may include content created by researchers to monitor user reactions (for example, to gauge the perceived credibility of posts (Li et al., 2023)). Bots present a particularly compelling opportunity for such studies due to their scalability and ability to simulate human-like behavior on a large scale. This unique capability makes bots valuable tools for studying phenomena such as information spread, but it also raises complex ethical considerations.

Bot Challenge Studies

Bots are commonly used in research to study how information spreads through online social networks (Mønsted et al., 2017). They can simulate behaviors like retweeting or commenting. But in general, bots can also greatly affect the platform that hosts them. Bots can amplify divisive messages, causing them to spread more quickly and broadly (Ferrara et al., 2016). Bots can also contribute to the spread of misinformation by making false stories appear more credible through extensive sharing, creating a frequency illusion (Shao et al., 2017). Bots are also valuable for examining user behavior. Bots can influence the polarization of online discussions, emphasizing their role in the formation of digital echo chambers (Grimme et al., 2017). Furthermore, since bots act as pseudohumans, they intrinsically influence perceptions and trust dynamics in online interactions (Cresci et al., 2017).

Ethical Issues in Research Involving Bots

Informed consent is a crucial ethical consideration in online social media research involving bots for two reasons. First, the methodology often relies on the fact that users will be unaware that they are interacting with bots (Krafft et al., 2017). Second, bot-generated content is usually public, which means that researchers cannot control who interacts with it (Krafft et al., 2017). If individuals do not know they are engaging with a bot, they cannot truly consent to the interaction, and their autonomy is being compromised. This raises concerns as respecting autonomy and informed decisions is a fundamental ethical principle in research (Brock, 2008).

Moreover, it is also a significant challenge to determine who is ethically and legally responsible for the actions of intelligent technologies when they cause harm (Coghlan et al., 2023). In the case of online social media research that integrates social challenge studies, such as bot research, does liability lie with the researchers who created the bot, the platform that hosts it, or both? This challenge increases when bots are designed to function autonomously, making decisions in real-time based on user input or adapting to changing conditions, which may also lead to issues of interaction bias.

Defined accountability frameworks are crucial for establishing responsibility for bot actions and addressing potential harm. Ethical oversight helps researchers identify and mitigate risks before deployment, while legal frameworks ensure accountability for any resulting harm. However, the lack of clear and consistent rules for using bots on social media creates significant ethical and legal challenges for researchers. Different social media platforms have varying policies: for example, X, which was formerly known as Twitter (Twitter, 2023), permits some forms of automation, while Facebook (Facebook, 2019) and YikYak (Yik Yak, 2025) strictly forbid it. These inconsistencies make it more challenging for researchers to assess the ethical implications of using bots in their studies. Violating Terms of Service raises ethical concerns and potential legal liabilities. According to the Computer Fraud and Abuse Act (CFAA) in the United States, accessing a platform in violation of its terms can be considered illegal (Vaccaro et al., 2014).

To address this issue, researchers should carefully consider whether they are adhering to platform policies and Terms of Service regarding consent. Researchers also need to ensure that ethical oversight boards, such as Research Ethics Committees (RECs), Institutional Review Boards (IRBs), or Research Ethics Boards (REBs), understand and approve this type of research.

Cybersecurity and Honeypots

Cybersecurity Challenge Studies

Cybersecurity research focuses on protecting digital environments by studying vulnerabilities and malicious behaviors. Not unlike the open-source software example given in the Introduction, social challenge studies in cybersecurity often involve controlled exposure to threats to understand and mitigate risks. One common method is the use of honeypots: deliberately exposed systems or resources designed to lure attackers so that their behaviors can be observed and analyzed. Unlike traditional cybersecurity measures designed to deter threats, honeypots encourage interactions to gather information on attacker strategies and techniques (Provos et al., 2004; Spitzner, 2003). These resources can include physical systems, virtual environments, or abstract entities such as files and online accounts (Lazarov et al., 2016; Onaolapo et al., 2016).

Honeypots do not create new malicious actors or necessarily increase attacker activity. Rather, they make existing malicious behavior more visible by presenting a system with which attackers are likely to interact. The intentional design and deployment of the honeypot to lure, isolate, and attract malicious actors is why we characterize this line of research as a social challenge study. By exposing these systems to potential threats (attackers), we can better understand attackers’ behavior and strategies in a controlled setting, but we also risk compromising the security of the environment. This is a unique case where human participants are the challenge, and the study environment itself is being challenged. It is therefore necessary to ensure that honeypot systems are designed, built, and deployed to minimize harm; e.g., by isolating potentially harmful honeypot environments.

Recommendations for Honeypots

Using honeypots presents significant ethical and technical challenges, particularly in terms of informed consent, deception, and risk management. In this line of research, the researcher must keep all recorded activity traces safe and avoid deanonymizing visitors to honey assets. Furthermore, if experiments involve running live malware samples (as seen in Onaolapo et al., 2016), adequate care must be taken to ensure that the malware samples do not harm any internal or external parties (Rossow et al., 2012). It is also important to protect the researcher responsible for honey assets. Honey assets and honeypot systems must be designed to minimize the potential harm that the researcher may face, e.g., if their identity were to become known during experiments. Hence, it is important to take advantage of Virtual Private Networks (VPNs) and proxies and to incorporate them into the honeypot infrastructure when necessary. Moreover, there can sometimes be conflicts between the requirements of minimizing harm (e.g., via sandboxing honey assets) and ensuring that those honey assets appear realistic. In line with the Menlo Report (Kenneally & Dittrich, 2012), the right balance between these requirements must be found by the researcher.
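One way to keep recorded activity traces from directly identifying visitors is to store a keyed pseudonym instead of the raw source address. The following is a minimal sketch under stated assumptions, not a description of any cited study's infrastructure: the function names, the log format, and the example key are hypothetical, and the IP address comes from a range reserved for documentation. Keyed hashing is pseudonymization rather than full anonymization, so the key itself must be protected and, where linkage is no longer needed, destroyed.

```python
import hashlib
import hmac
import json


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Return a keyed SHA-256 digest of an identifier (e.g., a visitor IP)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def make_log_record(source_ip: str, action: str, secret_key: bytes) -> str:
    """Build a JSON log line that stores a pseudonym instead of the raw IP."""
    record = {"visitor": pseudonymize(source_ip, secret_key), "action": action}
    return json.dumps(record, sort_keys=True)


# Hypothetical key for illustration only; a real deployment would generate it
# randomly and load it from secure storage rather than hard-coding it.
key = b"replace-with-a-randomly-generated-secret"
line = make_log_record("203.0.113.7", "login_attempt", key)
```

Because the same address always maps to the same pseudonym under a given key, repeated visits can still be linked for analysis without the raw address appearing in the stored traces.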

Honeypot studies necessarily conflict with the informed consent norms that are enforced by several regulations and professional codes (Emanuel et al., 2000; Regulations, 2009), because attackers cannot be notified or debriefed without undermining the study. Consent is typically waived in accordance with ethical guidelines such as the Menlo Report, which emphasizes minimizing harm and maximizing social benefit (Kenneally & Dittrich, 2012). Such a waiver can be considered justifiable when the risks are minimal, rights are not significantly violated, and the research addresses substantial public interest (Gelinas et al., 2016). For example, studies that expose malicious actors to controlled honeypot environments seek to protect broader systems without unduly affecting the attackers themselves (Regulations, 2009).

In addition, honeypot studies raise ethical questions that challenge even the most adaptable guidelines. What is the researchers’ duty to attackers? If attackers interact with fake assets, do standard ethical rules still apply? These scenarios intentionally elicit hostile actions with no opportunity to explain them later, creating an uneven ethical situation. Unlike medical research, where people agree to controlled harm for knowledge and safety, cybersecurity studies create ethical traps to study participants’ actions without their approval, which goes against their best interests. Because of this, researchers must carefully measure possible harm to systems, themselves, and even the attacker.

Another challenge comes from research ethics committees (such as IRBs/REBs). Many research ethics committees are not equipped to evaluate honeypot or malware studies. As a result, ethical review can become overly cautious or insufficiently critical. This gap highlights an opportunity for cooperation and knowledge sharing between cybersecurity experts and ethics reviewers, supported by guidelines like the Menlo Report, to better assess digital risks.

Ethical honeypot research faces several challenges, including insufficient education on computer ethics and technical risks. The lack of training and qualified instructors to teach ethics in computer security contributes to knowledge gaps among cybersecurity researchers, leaving many unaware of appropriate ethical frameworks (Stavrakakis et al., 2022). Ethical oversight boards are essential for reviewing research that involves human subjects. However, their limited understanding of advanced technological methods may make these studies difficult to review, which highlights the need for improved collaboration between researchers and review boards. This collaboration is crucial for ensuring compliance with ethical protocols. Technical risks also present significant concerns; for example, cybercriminals may exploit honeypot servers to access sensitive data, or participants may unintentionally expose personal information by linking their accounts to research systems (Onaolapo, 2019).

Considering the aforementioned issues, we propose that honeypot studies actually align with our ethical model. These studies often involve deception, consent waivers, potential harm to individuals or communities, and complexities in ethical oversight. Similar to social bot experiments, honeypot studies raise questions about maintaining participant anonymity, what they are exposed to, how their data is used, and who is responsible. This means that they should be included in an ethics framework that draws on lessons from medical challenge trials.

Adaptability of Medical Ethics in Social Challenge Studies

When translating ethical lessons from medical studies to social studies, researchers must consider the conceptual similarities and differences in how studies are designed, the risks involved, and the necessary oversight. Ethical research with human participants, whether conducted in clinical settings or online, must balance the benefits of scientific discovery with the risks of potential harm to individuals and groups. In this section, we draw clear comparisons between medical challenge studies and social challenge studies, pointing out how the ethical issues of medical research apply to social research and how to adjust for digital environments. Both types of studies involve exposing participants to potential risks: medical risks from pathogens in health studies and risks from bots, misinformation, or manipulated online experiences in online studies. Although the fields are different, the core ethical issues, such as exposure control, informed consent, and harm minimization, are closely related. These principles help researchers put ethical protections in place, even when they are not in a formal medical trial setting.

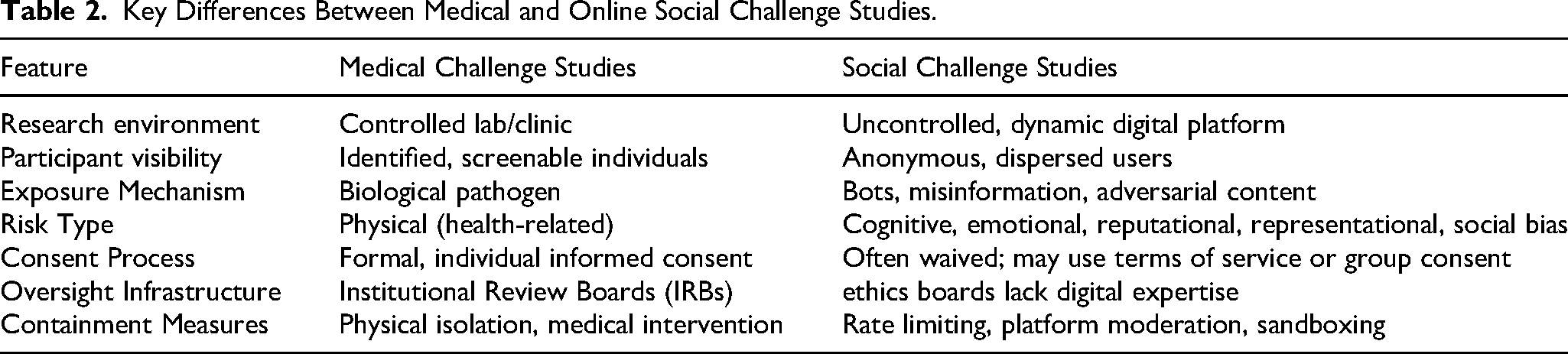

To support this framework, Table 2 presents a comparison of medical and social challenge studies, highlighting the particular ethical considerations required for online research. Understanding these differences makes it easier to see where adjustments are needed and where we can learn from similar situations.

Key Differences Between Medical and Online Social Challenge Studies.

Controlled Exposure

In medical challenge studies, people are intentionally exposed to a virus or bacteria under controlled settings. This usually means that they receive a specific dose in a sanitized environment and that medical professionals are keeping a close eye on everything. The idea here is that this exposure is done for a clear scientific reason, with careful administration, and the dose amount is kept to what is necessary. Similarly, social challenge studies put participants in situations where they encounter harmful content, such as online bots, fake news, hate speech, or misleading information. Interestingly, some researchers use epidemiological methods to explain how misinformation spreads through people and communities, borrowing terminology from the study of viruses (Govindankutty & Gopalan, 2024).

Like medical challenge studies, where the amount of pathogens given to participants is carefully managed, social studies must also carefully quantify the amount of misinformation or online research content participants encounter. This involves determining how often, how intensely, and for how long participants are exposed to harmful messages. For instance, if someone sees a mild false statement just once, it might not be a major ethical issue. But if they are exposed to repeated, emotionally charged lies, that would be unethical. This highlights the need for a more structured and systematic approach, similar to the one used in medical studies for dose-response relationships.
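To make the idea of quantifying exposure concrete, the sketch below computes a simple exposure "dose" from the three dimensions named above: how often, how intensely, and for how long. Everything in it is an assumption for illustration: the `Exposure` fields, the 0-to-1 intensity scale, the additive intensity-times-duration formula, and the cap value are hypothetical and are not a validated dose-response model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    """One exposure event in a hypothetical study protocol."""
    intensity: float      # assumed scale: 0.0 (mild) to 1.0 (highly emotionally charged)
    duration_min: float   # minutes the participant spends with the content


def exposure_dose(events: list[Exposure]) -> float:
    """Sum intensity-weighted minutes across all exposure events.

    Frequency enters through the number of events. The additive form is an
    illustrative assumption, not an empirically derived relationship.
    """
    for e in events:
        if not 0.0 <= e.intensity <= 1.0 or e.duration_min < 0:
            raise ValueError("intensity must be in [0, 1] and duration non-negative")
    return sum(e.intensity * e.duration_min for e in events)


def within_protocol_cap(events: list[Exposure], cap: float) -> bool:
    """Check a planned schedule against a cap agreed in advance with reviewers."""
    return exposure_dose(events) <= cap


# A single mild statement versus repeated, emotionally charged content.
single_mild = [Exposure(intensity=0.2, duration_min=1.0)]
repeated_charged = [Exposure(intensity=0.9, duration_min=2.0)] * 5
```

Under these assumed numbers, the repeated schedule accumulates a far larger dose than the single mild exposure, which is the kind of contrast a pre-specified cap would be meant to flag during study design.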

These similarities do not imply equivalence; exposure in online social systems is less physiologically risky but often more diffuse and less predictable. The ethical relevance of the medical analogy lies in its articulation of how intentional exposure imposes specific types of ethical considerations on researchers, regardless of domain.

Informed Consent

In medicine, informed consent is typically an individual act: participants are informed of the risks, given the opportunity to ask questions, and allowed to withdraw without penalty. Social research, however, often complicates this model. Data may be drawn from online platforms or group interactions where individuals are not directly approached, identities may be obscured, and researchers sometimes rely on terms of service as a proxy for consent. Yet, as both legal scholars and review boards point out, simply using a platform does not constitute meaningful agreement to participate in research (Straub & Burton, 2025). Controversial cases, such as the Reddit manipulation study, underscore how the absence of explicit consent, especially when deception is involved, raises serious ethical concerns (Koebler, 2025). It is important to note that even though some content or online ecosystems are publicly accessible online, ethical obligations differ when researchers enter semi-private or membership-restricted spaces. Community-specific norms, privacy expectations, and barriers to entry shape the forms of consent or moderator approval that are appropriate. Our framework treats online data as context-dependent rather than uniformly public. Users’ contextual expectations of privacy, the norms of a particular community, and the presence of barriers to entry (e.g., application-only forums, moderated spaces, closed groups) all affect whether additional consent, community consultation, or platform-level approval may be appropriate.

For networked or group-based data, the problem is sharper still. Consent is rarely confined to a single person: identifiable nodes can reveal information about others in the network, including secondary participants who never agreed to participate. This poses a fundamental tension between the individualistic model of consent and the relational nature of network data (Lovato et al., 2022; Neal et al., 2024).

There is no perfect solution, but several practices from the social sciences can guide ethical approaches. In closed populations, researchers can seek consent not only for individuals to share their own information but also permission for others to share information about them. In some contexts, consent may be gathered from a representative, such as a household head or community moderator, while still allowing space for individuals to opt out. When direct consent is impractical, researchers can provide clear public notice, establish straightforward opt-out mechanisms, and minimize risks to those indirectly implicated.

Across all cases, researchers must act as stewards of the people represented in their datasets. This means limiting deception, protecting privacy, and actively reducing the potential harms that fall on secondary participants. Group consent and representative consent should not be seen as substitutes for ethical responsibility but as part of a layered model of transparency, risk minimization, and respect for the relational character of social data.

Risk Minimization and Mitigation

In medical challenge studies, having solid plans in place to keep participants safe is essential. Researchers take a close look at who they want to include, often leaving out anyone who has existing health problems that could make things riskier. After participants join the study, they are closely monitored in a controlled setting in case anything goes wrong. If complications appear, researchers are ready with emergency treatments like antiviral drugs or oxygen to help right away.

Similarly, social challenge studies should have the necessary protections in place for online settings. The risks in these studies often revolve around how people think and feel and what they might lose in terms of reputation, but they can also extend to physical safety, because the consequences of online challenges can spill over into offline contexts. Unlike medical challenge studies, which primarily address physical risk, social challenge studies must carefully consider broader social harms, including the creation or reinforcement of stereotypes, bias, or prejudice against certain groups (Cecchini, 2019). When researchers study sensitive issues like racism or sexism in real online environments, their work can unintentionally make these problems worse by normalizing biased behavior or spreading harmful ideas (Cecchini, 2019; Glaser & Kahn, 2005). For example, showing participants biased news could strengthen stereotypes, or the study’s results could be misunderstood in ways that unfairly stigmatize a group (Arendt, 2023). Therefore, ethical guidelines for social challenge studies should encourage researchers to think carefully about how their methods or findings might contribute to collective harm. In addition, to reduce bias and potential harm, researchers should include protective strategies, such as:

- Fact-checking tools that let users know when content might not be reliable.
- Alerts that inform users they are part of a study or interacting with something that is not real.
- Resources for psychological support, like options to opt out if users feel upset.

However, not every study allows participants to access these protections immediately. Studies that rely on complete deception, in which participants do not even know they are being tested, and studies that keep participants anonymous raise specific ethical issues. In these situations, participants cannot take protective measures, such as avoiding certain content or asking questions, leaving them exposed until the study is over or the truth emerges. Things get even more complicated in big online spaces like social media, where participants are often anonymous and unaware of their participation. This makes it difficult for researchers to follow up after the study to explain what happened, clear up any misunderstandings, or let people know they can pull their data. This violates ethical expectations established in both biomedical and behavioral research, where post-study debriefing is essential when deception is involved (Kenneally & Dittrich, 2012). When it is not possible to provide safeguards during the study, researchers should consider the following duties:

- Justify using deception and anonymity to an oversight board.
- Show that there is no less harmful way to conduct the study.
- Keep the exposure as limited in scope, frequency, and emotional weight as possible.
- When possible, prepare for a thorough debriefing after the study and let participants withdraw their data if they choose to, following ethical guidelines for digital research.
- Get approval from the community if you cannot get individual consent, for example, getting permission from the platform, platform admins, or moderators.
- Be open about how the study was done and its limits so that people can trust the findings.

In summary, reducing risk involves the researcher being aware and responsible during their study design. This is especially true when participants cannot give their consent, opt out, or be informed later. In studies that involve deception or keep identities anonymous, researchers must make sure they minimize risks and harm, and they should follow up with proper accountability after the study.

Lessons Learned: Applying Medical Recommendations to Social Challenge Studies

Objective

Building on the earlier discussion about how medical ethics can inform social challenge studies, this section turns those ideas into practical guidance. Here, we lay out clear standards for designing, reviewing, and running these studies, drawing on both medical challenge research and established practices in social and computational work. Through this exploration, we aim to contribute to the ongoing dialogue on creating multidisciplinary ethical frameworks that balance scientific innovation with accountability and the well-being of participants.

Although medical challenge studies provide a natural reference point, we do not assume that standards developed for clinical research can be transferred wholesale to online social science research. Instead, the analogy rests on a narrower and more general normative premise: any form of research that deliberately introduces controlled adverse conditions in order to produce knowledge must satisfy a set of obligations concerning risk–benefit justification, harm minimization, informed consent or its justified waiver, and independent oversight. These ethical considerations reflect broad traditions in research ethics that span many fields. Medical challenge studies, however, operationalize these principles in particularly explicit ways by managing risk through deliberate exposure. Social challenge studies share this structural feature, even though the sources of harm, types of participants, mechanisms of exposure, and relevant communities differ. The medical challenge study framework, therefore, functions as a starting point for reasoning about how to structure protections in online social contexts, while requiring careful reinterpretation to account for digital settings, informational harms, autonomy constraints, and community-level effects.

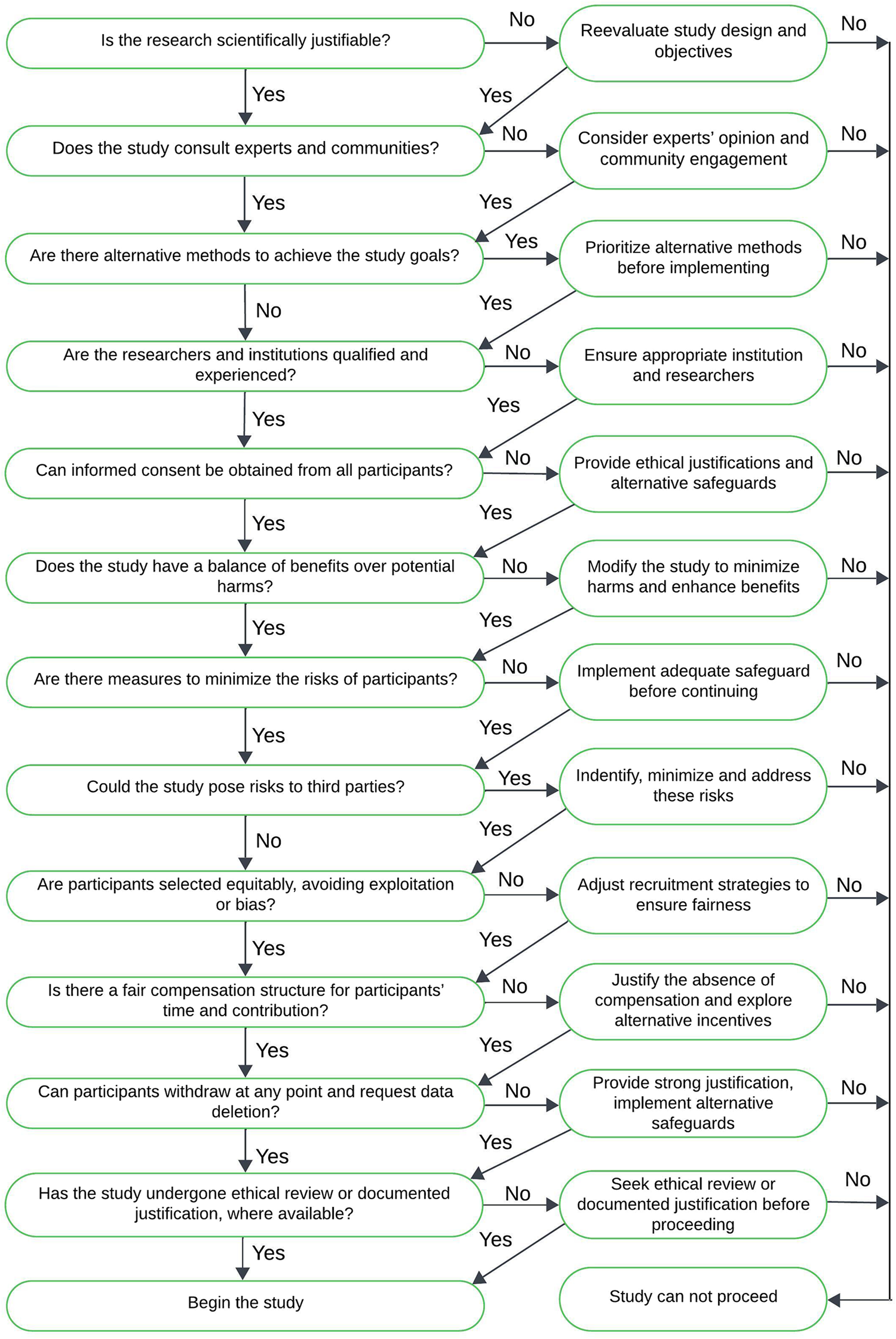

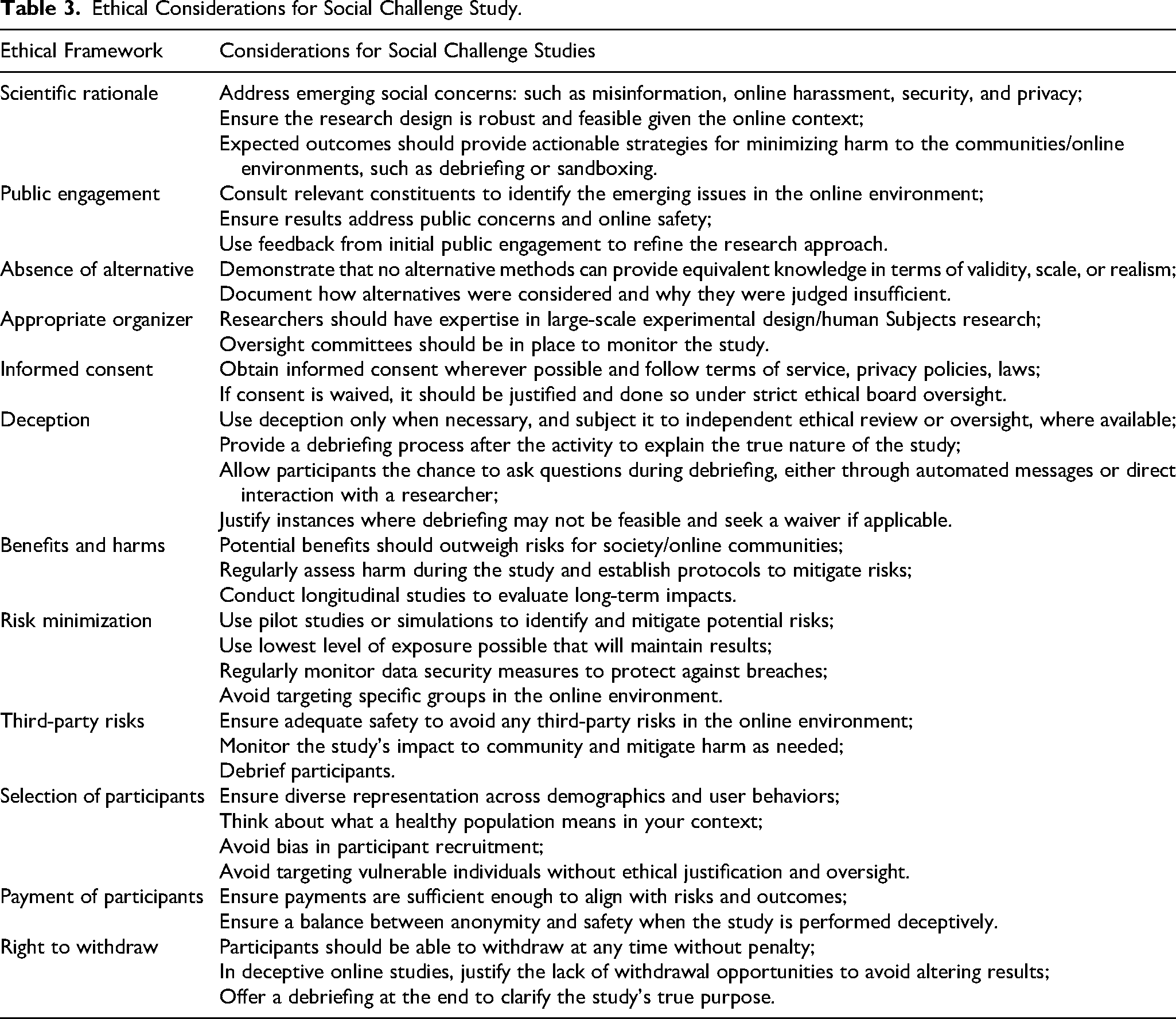

We have summarized the applicable ethical frameworks in Table 3 and provide a decision tree (Figure 1) in the Appendix to support researchers in applying these frameworks in practice. This decision tree guides researchers through key questions including whether their study involves intentional exposure to risk, whether deception is used, whether informed or group consent is feasible, and whether participants are vulnerable or likely to experience long-term risks. This design helps researchers identify when additional safeguards, such as longitudinal follow-up, post-exposure debriefing, or platform-level review, are ethically required. This decision tree translates broad ethical principles into a practical framework for diverse audiences.

Decision tree outlining steps in the social challenge study ethical framework. This decision tree supports researchers in systematically evaluating key ethical considerations when designing or reviewing social challenge studies. It outlines steps, including assessment of exposure risks, consent pathways, participant vulnerability, use of deception, and harm mitigation strategies. Drawing analytically on medical challenge frameworks, the tree is designed to guide ethical decision-making for studies involving bots, misinformation, platform interventions, honeypots, and other controlled-risk designs in digital environments.
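For readers who want to see how such a decision tree might be encoded, the sketch below expresses the key questions as a small function. It is an illustrative assumption, not a reproduction of Figure 1: the `StudyProfile` fields, the order of the branches, and the mapping from answers to safeguards are hypothetical and use only the questions and safeguards named in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StudyProfile:
    """Hypothetical answers to the key questions for one planned study."""
    intentional_exposure: bool
    uses_deception: bool
    individual_consent_feasible: bool
    group_consent_feasible: bool
    vulnerable_participants: bool
    long_term_risk_likely: bool


def recommended_safeguards(study: StudyProfile) -> list[str]:
    """Walk the questions in a fixed (assumed) order and collect safeguards."""
    if not study.intentional_exposure:
        # Not a social challenge study under this sketch's definition.
        return ["standard ethical review"]

    safeguards = ["risk-benefit justification to an oversight board"]
    if study.uses_deception:
        safeguards.append("post-exposure debriefing")
    if not study.individual_consent_feasible:
        if study.group_consent_feasible:
            safeguards.append("group or community consent")
        else:
            safeguards.append("platform-level review")
    if study.vulnerable_participants or study.long_term_risk_likely:
        safeguards.append("longitudinal follow-up")
    return safeguards


# Example: a deceptive bot study where only community-level consent is feasible.
bot_study = StudyProfile(
    intentional_exposure=True,
    uses_deception=True,
    individual_consent_feasible=False,
    group_consent_feasible=True,
    vulnerable_participants=False,
    long_term_risk_likely=True,
)
plan = recommended_safeguards(bot_study)
```

Encoding the questions this way mainly serves as a checklist that can be versioned alongside a study protocol; the ethical judgment behind each answer still rests with researchers and reviewers.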

Ethical Considerations for Social Challenge Study.

Standards Revisited

Scientific Rationale

Drawing on both medical and social research ethics, social challenge studies require a robust scientific rationale that justifies, to the research team and to any external ethical review, the importance of the study and its associated risks and benefits for both participants and the general public. Such studies usually examine issues of system security, privacy, misinformation, and online manipulation, with the broader objective of promoting the public good through enhanced cybersecurity and improved user experience. Researchers need to be transparent about their research goals and outcomes, enabling accountability among broader societal communities and ethical oversight boards. The scientific knowledge resulting from social challenge studies must be scientifically rigorous, impactful, and ethically defensible, ensuring that progress respects the responsibilities to individuals and society.

Public Engagement

Public engagement through community consultation is an important ethical consideration in social challenge studies. Although it should not replace individual informed consent, community consultation fosters trust and supports the ethical conduct of research by allowing investigators to engage with local constituents and subject matter experts and explain study objectives, gather feedback, and address concerns (Richardson et al., 2006). Community consultation highlights the importance of transparency and accountability, demonstrating a commitment to justice and beneficence. It can also lead to better research questions, help mitigate bias, and provide a clearer understanding of the research context, ultimately yielding more effective research results (Marshall & Rotimi, 2001). By actively involving representatives from the groups most likely to be affected by the study, researchers can more effectively identify and address potential risks or ethical issues that they (and even ethics review boards) might overlook. Ultimately, including diverse perspectives during the study planning phase can lead to a more equitable distribution of risks and benefits.

Defining what makes a “community” can be challenging because there is often no straightforward method to identify legitimate community members or guarantee meaningful participation. However, effective communication and collaboration can help address these issues. Researchers should provide background education on relevant health, social, or technological topics to empower community members to engage meaningfully in discussions about study design and the risks and benefits involved (Hintz & Dean, 2020). Collaborating with local organizations or community leaders can enhance engagement, fostering mutual respect and shared decision-making. These partnerships also acknowledge the cultural sensitivities of the groups involved and help to build long-term trust in the research enterprise (Casari et al., 2023; Hintz & Dean, 2020). Such collaborative approaches not only strengthen trust but also enable more informed and accurate research by uncovering otherwise hidden or neglected forms of “dark data,” thereby broadening the evidence base for ethical and effective study design (Hand, 2020).

Beyond the consultative phase, there is growing recognition that participants have the right to learn about study results, both as a form of respect for their autonomy and as an acknowledgment of their contributions (Casari et al., 2023; Fernandez et al., 2003). Researchers should aim to communicate these results in clear, accessible language whenever possible, as long as it does not create undue risks to participants or investigators (Carroll et al., 2023; Hintz & Dean, 2020). In certain contexts, sharing of findings can be harmful or stressful if the information is unreliable, misrepresents the community, or exposes individuals to risks such as trauma, discrimination, or financial harm (Fernandez et al., 2003). Furthermore, researchers studying sensitive or high-risk subjects (e.g., hate speech or cybercrime) must consider the potential for personal harm if they are identified and targeted by adversarial groups. Thus, decisions about how and when to disclose study outcomes should strike a balance between the ethical imperative of transparency and the duty to prevent harm to participants, communities, and investigators.

Absence of Alternatives

Social challenge studies should be conducted only when no reasonable alternative can achieve similar research objectives. Before launching a full-scale study, researchers can use simulations, models, existing observational data, archival corpora, or controlled experiments to evaluate the potential effectiveness of the proposed intervention and to predict possible external effects. By first working in a controlled environment (through computational modeling, sandboxes, or pilot studies), researchers can more accurately predict outcomes, refine methods, and uncover unexpected risks before engaging real-world communities.

Industry partnerships can foster an environment that minimizes risks for participants and third parties. Collaborations with online platforms, for example, enable researchers to conduct experiments in “sandboxed” accounts or restricted spaces that isolate the investigation from broader user populations (Leckenby et al., 2021; Onaolapo et al., 2021). This approach allows for the testing of novel or potentially disruptive interventions without exposing full-scale user communities to potential harm.

Mathematical, computational, and agent-based models can incorporate established theories and empirical parameters to explore how an intervention might unfold, offering insights into potential dynamics before any real-world implementation. Integrating emerging technologies, such as artificial intelligence simulations, provides an additional layer of potential risk mitigation. In certain cases (particularly those with potential adverse mental health implications), AI-driven modeling can help researchers anticipate and address potential harms, or perform potentially harmful experiments on AI subjects rather than human subjects (Park et al., 2024). Using generative AI in research, on the other hand, introduces additional ethical issues, including consent, attribution, and bias, that should be carefully considered (Davison et al., 2024; Schlagwein & Willcocks, 2023).
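As a rough illustration of how an agent-based model might be used to anticipate exposure before any live intervention, the following minimal sketch simulates an independent-cascade style spread of a single piece of content over a random network. The network size, tie probability, sharing probability, and all function names are hypothetical choices made for this sketch. They are not empirical parameters from any of the cited studies.

```python
import random


def build_random_network(n_agents, tie_prob, rng):
    """Return an undirected random graph as {agent: set(neighbors)}."""
    neighbors = {a: set() for a in range(n_agents)}
    for a in range(n_agents):
        for b in range(a + 1, n_agents):
            if rng.random() < tie_prob:
                neighbors[a].add(b)
                neighbors[b].add(a)
    return neighbors


def simulate_exposure(neighbors, seeds, share_prob, rng):
    """Independent-cascade spread: each newly exposed agent gets one
    chance to pass the content to each not-yet-exposed neighbor."""
    exposed = set(seeds)
    frontier = list(seeds)
    while frontier:
        next_frontier = []
        for agent in frontier:
            for nb in sorted(neighbors[agent]):
                if nb not in exposed and rng.random() < share_prob:
                    exposed.add(nb)
                    next_frontier.append(nb)
        frontier = next_frontier
    return exposed


def mean_exposure(n_runs, n_agents, tie_prob, share_prob, n_seeds, seed=0):
    """Average fraction of agents exposed across repeated simulated runs."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_runs):
        net = build_random_network(n_agents, tie_prob, rng)
        seeds = rng.sample(range(n_agents), n_seeds)
        exposed = simulate_exposure(net, seeds, share_prob, rng)
        total += len(exposed) / n_agents
    return total / n_runs
```

Sweeping `share_prob` or `n_seeds` in a model of this kind gives a first, assumption-laden estimate of how far an intervention might travel beyond its intended recipients. Any such estimate would still need to be checked against observational data before it informs a study design.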

In medical challenge studies, the “absence of alternatives” principle typically requires showing that no other method (e.g., animal models, in vitro studies, or simulations) can achieve the same results. For social challenge studies, the assessment is more complex. Because researchers often have access to simulations, observational data, or archival corpora, it is rare that no alternative exists. Instead, the relevant ethical question is whether alternatives can provide knowledge that is equivalent in validity, scale, and ecological realism to what a live challenge study would reveal. For example, while survey experiments can measure whether exposure to misinformation shifts individual beliefs, they cannot capture how repeated exposures through social networks interact with platform algorithms to drive large-scale diffusion. Researchers should therefore document how they evaluated alternatives (such as simulations, observational studies, or smaller pilot interventions) and explain why these approaches were inadequate to meet the objectives of their study.

Appropriate Organizer

Institutions conducting social challenge studies should have the necessary specialized infrastructure, such as research ethics boards. In addition, individual researchers should possess expertise and training in conducting large-scale, hypothesis-driven experiments, understand what challenge studies entail, and receive training on the ethics of working with human subjects.

Informed Consent

Informed consent is one of the most important ethical issues in social challenge studies. The preceding section highlights how online and networked contexts complicate traditional medical models. Informed consent is a process in which potential study participants are provided with clear information about the research study’s purpose, procedures, risks, and potential benefits, after which they may choose to agree to participate or decline (Kenneally & Dittrich, 2012; Largent et al., 2024). The purpose of the informed consent procedure is to respect the autonomy of the study participants (Kenneally & Dittrich, 2012). However, in some cases, when the public interest is at stake, involving study subjects without informed consent may be ethically justifiable. Such an approach may also be viable if obtaining informed consent is not possible and if participation does not compromise the subject’s autonomy. In all cases, the ethical justification of such decisions should be carefully evaluated and reviewed by a research ethics committee, where such review is available (Gelinas et al., 2016; Resnik & Finn, 2018).

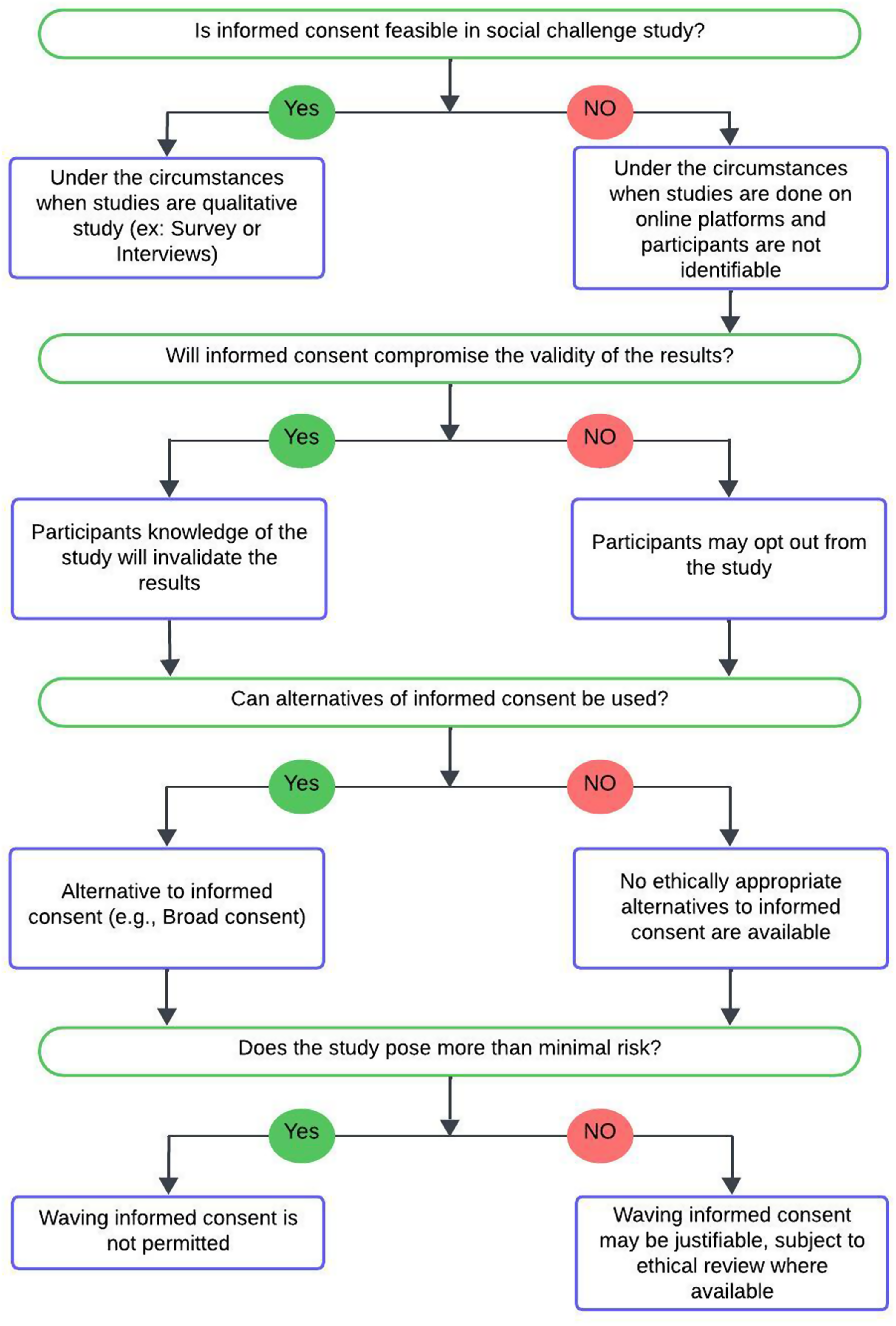

To guide researchers through the complexity of informed consent, we developed a decision tree (Figure 2 in the Appendix) that outlines the ethical consent pathways for social challenge studies. The flowchart begins by asking whether individual informed consent is possible. If so, researchers are expected to obtain it from participants. If not, whether because of study scale, anonymity, or the nature of public platforms, the decision tree outlines structured alternatives. These include obtaining consent from a group or community, issuing public notices, or seeking a consent waiver through independent ethical review (e.g., IRB/REB), where such mechanisms are available. The tree also guides researchers through cases involving deception or delayed debriefing. It asks them to consider whether participants could be identified, whether they could be harmed, and whether they should be informed about the study later or allowed to opt out.

Figure 2. Decision tree outlining steps in the social challenge study ethical framework related to informed consent. This tree helps researchers assess how informed consent should be obtained, waived, or substituted in studies involving deception, public interactions, or group-level exposure. It highlights the role of terms of service, the feasibility of direct consent, the importance of debriefing when possible, and the need for ethical oversight or documented ethical justification for waivers, where applicable. It supports researchers conducting studies in environments where traditional consent is difficult or impossible to implement.
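The consent pathways described in the text can be loosely sketched as a function. This is only an illustrative reading of the prose description, not a transcription of the figure. The field names, the ordering of the fallback options, the debriefing rule, and the returned labels are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StudyContext:
    # All field names are hypothetical and introduced only for this sketch.
    individual_consent_feasible: bool
    community_consent_feasible: bool = False
    public_notice_feasible: bool = False
    ethics_review_available: bool = False
    uses_deception: bool = False
    participants_identifiable: bool = False
    risk_of_harm: bool = False


def consent_pathway(ctx: StudyContext) -> List[str]:
    """Return suggested steps in an assumed order (not the authors' exact tree)."""
    steps = []
    if ctx.individual_consent_feasible:
        steps.append("obtain individual informed consent")
    else:
        # Structured alternatives when direct consent is not possible.
        if ctx.community_consent_feasible:
            steps.append("seek group or community consent")
        if ctx.public_notice_feasible:
            steps.append("issue public notice with opt-out information")
        if ctx.ethics_review_available:
            steps.append("request consent waiver from independent ethics review")
        else:
            steps.append("document ethical justification for the waiver")
    # Deception or absent consent raises the question of later disclosure.
    if ctx.uses_deception or not ctx.individual_consent_feasible:
        if ctx.participants_identifiable or ctx.risk_of_harm:
            steps.append("plan debriefing and offer opt-out after the study")
        else:
            steps.append("consider whether later notification is warranted")
    return steps
```

A function like this can serve only as a checklist aid. The judgment calls it encodes as booleans (feasibility, identifiability, risk of harm) are the questions an ethics review would need to examine in substance.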

In these contexts, it is essential to comply with online terms of service, robots.txt files, privacy policies, and applicable regulations and laws. Terms of service and privacy policies are the baseline agreements that outline how participants’ data can be used, providing a foundational level of consent for online studies.
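As one concrete piece of this compliance, a data-collection script can check a site's robots.txt rules before requesting a page. The minimal sketch below uses Python's standard `urllib.robotparser` module on an inline example file so that it runs offline. The example rules, the `research-bot` user agent, and the `example.org` URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content used only for illustration.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/public/thread", "/private/profile"):
        url = "https://example.org" + path
        print(url, allowed_by_robots(EXAMPLE_ROBOTS, "research-bot", url))
```

For a live site, the same parser can load the file directly with `set_url(...)` followed by `read()`. A permissive robots.txt result addresses only automated access. It does not replace a review of the platform's terms of service or privacy policy.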

Many social challenge studies, especially those conducted online, proceed without informed consent, so that participants are unaware that they are taking part in a study. Developing ethical frameworks for social challenge studies that waive consent due to impracticality is a complex issue that requires careful consideration of participants’ autonomy, privacy, and potential harm. Researchers conducting such studies also need to ensure that the data is not identifiable and that human subjects are observed only in spaces where data collection is permissible (Willis, 2019).

Another approach is to request broad consent from participants. This means obtaining consent in advance for a variety of research activities without defining exactly which types of data or behaviors may be examined. For example, participants might agree to contribute to “research involving social behavior on platforms” without being informed about the specifics of each individual study. It remains an open question, however, how to obtain effective consent from groups or participants on a large scale. One option for enhancing transparency before a study begins is to run widespread public awareness campaigns outlining the purpose, methods, and opt-out options. If waiving consent is necessary, the researcher must provide a strong justification by demonstrating that obtaining direct individual consent is not feasible. They must also show that the research offers significant social value and explain how risks to participants are minimized and justified, typically as part of an independent ethical review where available.

Deception

In some cases, revealing the purpose of a research study can compromise the validity of the results (Johnson et al., 2011). Consequently, researchers may opt to use deception, provided that an ethics oversight board approves it before the study begins. This approval is necessary to protect the interests of the participants and to ensure that the research is conducted ethically.

Furthermore, studies that include deception are generally followed by a debriefing process rather than a one-time statement. Once the activity is over, the participants can be informed about the true nature of the study and the reason why deception was necessary. This can be done automatically or with a researcher present to discuss the details and address any questions or concerns. The goal of this process is to ensure that participants leave with a clear picture of their role in the study and a solid understanding of its purpose. However, there has been some criticism of studies that rely on self-discovery as a means of learning about participants’ behavior or social interactions (Sommers & Miller, 2013). In computer security research, if revealing the actual research protocol might harm the participant and the study involves minimal risk, a review board can grant a waiver of debriefing if adequately justified (Finn & Jakobsson, 2007; Johnson et al., 2011).

Benefits and Harms

Clear metrics for assessing the benefits and drawbacks of research are crucial to maintaining ethical integrity, particularly in studies that address social challenges. While social challenge studies offer insights into online behavior, misinformation, and policy effects, their outcomes may not always be direct or linear. They nonetheless have significant potential to improve societal outcomes. To maximize societal benefit, researchers should actively engage with key constituents, including platforms, communities, policymakers, and other relevant parties. By establishing these connections, researchers can ensure that their findings are communicated effectively, thereby increasing their likelihood of impacting decision-making and driving meaningful change.

Additionally, by involving communities early in the research process, researchers can better align their objectives with the public’s needs and expectations. This collaborative approach makes the findings more applicable and fosters trust and transparency, ensuring that the research positively contributes to the broader social context. Responsible storytelling about research outcomes represents another critical balancing act: results should be presented with humility and exactness, while remaining accessible and comprehensible to diverse audiences beyond academia.

Assessing risks in social challenge studies should also consider the possibility of participant or community burdens, such as privacy disruptions or unintended exposure to sensitive content, even when no direct harm occurs. These burdens may be less visible, but they are still important for ethical review and should be weighed together with the study’s potential social benefits. Researchers must navigate the complexities of understanding how participation in these studies affects individuals, groups, or systems, both in the short term and over time. Longitudinal studies that follow up with participants after the conclusion of a study can offer valuable insights into long-term consequences. These retrospectives can help identify unforeseen harms or benefits and improve the overall understanding of the risks associated with such studies. Currently, there are too few longitudinal studies in this area to fully understand their ripple effects. Expanding retrospective analyses and conducting meta-studies across various domains could significantly improve our understanding of these risks and help shape ethical guidelines.

Unlike medical challenge studies, social challenge studies often take place in digital, dynamic, decentralized, and only partially controllable environments. As a result, risk–benefit assessment cannot always rely on precise prediction or tightly bounded exposure. The relevant ethical principle is therefore not a utilitarian calculus but a structured and transparent justificatory process. Researchers should justify why an intervention is warranted, how they have bounded the scope of exposure, what uncertainties remain, and how emergent effects will be monitored and addressed over time. Risk–benefit reasoning becomes iterative and adaptive rather than determinative.

While fully definitive metrics may not be immediately feasible, our aim is to call for the development of transparent, evolvable, and accountable approaches for assessing the benefits and potential harms of social challenge studies. Further work is needed to create approaches that support ethical justification and lay a foundation for formalizing these evaluations.

Risk Minimization

In social challenge studies, potential risks to participants can be minimized by carefully designing the study to limit risks to their privacy and well-being. Sensitive study data can be protected from misuse through appropriate data anonymization (Majeed & Lee, 2020; Zhou et al., 2008) and by maintaining confidentiality among team members (Nosek et al., 2002). To avoid unintended consequences, it is necessary to monitor the study continuously and adapt cybersecurity protocols as needed. The study should be justified ethically to ensure that the benefits of the study outweigh the potential risks.
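One widely used way to reason about whether released records remain identifiable is k-anonymity, which requires every combination of quasi-identifying attributes to be shared by at least k records. The sketch below checks this property for a small table. It is offered as an illustration of one anonymization criterion, not as the specific method of the cited works, and the function names and the choice of quasi-identifiers are assumptions made for this example.

```python
from collections import Counter


def smallest_group_size(records, quasi_identifiers):
    """Size of the smallest group of records sharing the same
    quasi-identifier values (0 if there are no records)."""
    groups = Counter(
        tuple(record[q] for q in quasi_identifiers) for record in records
    )
    return min(groups.values()) if groups else 0


def is_k_anonymous(records, quasi_identifiers, k):
    """True if every quasi-identifier combination appears at least k times.
    An empty dataset is treated as trivially k-anonymous."""
    if not records:
        return True
    return smallest_group_size(records, quasi_identifiers) >= k
```

If a dataset fails the check for the chosen k, typical responses include coarsening attributes (for example, wider age bands) or suppressing rare records before sharing data within or beyond the team.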

Third-party Risks