Abstract

Online recruitment and data collection in qualitative research grew significantly during the COVID-19 pandemic, revealing a host of benefits including cost and time savings for researchers and participants. However, significant risks and limitations exist when recruiting and interviewing participants online. ‘Imposter participants’ have emerged, seemingly incentivized by study honoraria. These imposter participants impose significant administrative burdens and call into question data integrity and researcher commitment to equitable and inclusive sampling. This article features insights drawn from experiences of conducting online recruitment for a Canadian photovoice study of men’s mental health and peer support in three themes: (1) Gone Phishing: Detecting and Deterring Imposters, (2) Screening for Subterfuge: Balancing Integrity and Inclusivity, and (3) Fraud Fatigue: Researcher Strain and Drain. The first theme, Gone Phishing: Detecting and Deterring Imposters, outlines processes for identifying imposter participants, including technological tools and human strategies. Screening for Subterfuge: Balancing Integrity and Inclusivity chronicles ethical implications and researcher adaptations for ensuring that authentic eligible participants are not inadvertently excluded. The third theme, Fraud Fatigue: Researcher Strain and Drain, details the workload and distress that researchers can face in dealing with imposter participants, while thoughtfully considering avenues for reducing these potential harms. Findings across these themes underscore the potential for imposter participants to increase project costs and compromise data integrity for online qualitative research. Recognizing the need for mitigation strategies, we recommend supporting researchers and upgrading university systems to improve security and risk management guidelines for managing imposter participants, especially in the wake of artificial intelligence–generated scams.

Introduction

The COVID-19 pandemic fundamentally reshaped how qualitative research is conducted (Oliffe et al., 2021). Social distancing measures and travel restrictions necessitated rapid shifts to online methods, especially participant recruitment and interviews (Husted et al., 2025). This transition, while initially driven by unprecedented circumstances, highlighted the immense potential of doing qualitative research virtually (Oliffe et al., 2023). Online platforms eliminated the need for physical travel and in-person data collection, leading to significant cost savings for researchers (Ryan, 2013). Furthermore, compared to traditional methods including in-person interviews and paper-based demographic surveys, online platforms allowed researchers to reach a wider pool of participants with diverse locales and backgrounds more quickly and efficiently (Arman, 2023). However, these benefits are not without concessions, and the online environment increases the risk of bot-initiated phishing attempts (Oliffe et al., 2023), amplifying concerns about study recruitment strategies and data integrity. Conversely, limited Internet access, lack of computer resources (Oliveri et al., 2021), and the complexity of technologies and communication methods used in qualitative research can be barriers for potential participants (Ferlatte et al., 2022). The problematics of online qualitative research demand careful consideration and strategic efforts to ensure inclusive, ethical, and methodologically sound practices (Oliffe et al., 2023).

A critical concern centres on data integrity, with studies showing that a non-trivial segment of online research participants misrepresent their identities (Hydock, 2018). In this context, the term ‘imposter participants’ refers to individuals who deliberately fabricate identities to infiltrate studies, often motivated by financial incentives offered as an honorarium (Chandler & Paolacci, 2017). The rise of imposter participants in online research poses ongoing challenges and reveals important recruitment, sampling, and methodological considerations in qualitative research (Oliffe et al., 2023). Herein lies an ethical tension: while honoraria in qualitative research are given to compensate participants’ time and expertise, they may inadvertently attract people who are primarily interested in the reward and not the research topic itself (Husted et al., 2025). Additionally, financial incentives may disproportionately attract individuals from lower socioeconomic backgrounds (Gelinas et al., 2018), increasing the risk of misrepresentation as participants contrive experiences or characteristics to qualify for the study – and the honoraria. While online anonymity can aid imposter participants, the problem of participant misrepresentation is not unique to virtual research. Dishonesty has been reported in face-to-face studies as well (Flicker, 2004); yet, the crucial difference lies in verification. Traditional in-person settings allow researchers to use visual cues and physical presence to confirm identities. Online environments lack these optics and safeguards. These online vulnerabilities are compounded by qualitative research’s reliance on and epistemological commitments to participant subjectivities and self-reported experiences. 
Imposter participants can indeed craft elaborate narratives to appear legitimate, and the presence of such participants not only threatens to undermine the trustworthiness and integrity of the data being interpreted but also raises ethical concerns. Researchers have a responsibility to ensure data integrity and protect legitimate participants’ privacy. When imposter participants infiltrate a study, they violate this trust and potentially skew (or obscure) the representation of genuine voices and authentic experiences. Furthermore, the presence of imposter participants can erode trust within the qualitative research community itself. Researchers who unknowingly include imposter data in their analyses risk scepticism and having their work questioned or even redacted. This can have dire effects on future endeavours, discouraging researchers from venturing into online methods for fear of reporting compromised data and swindling narratives which, by extension, misrepresent their study findings (Ridge et al., 2023).

Concerns about participant misrepresentation intersect with long-standing ethical debates within qualitative inquiry, where trust and participant–researcher relations and dynamics shape knowledge generation and interpretation (Aluwihare-Samaranayake, 2012; Hewitt, 2007). These scholars argue that ethical decision-making is an ongoing, situated process that requires researchers to balance vigilance and responsibility to avoid undermining relational integrity (Aluwihare-Samaranayake, 2012; Hewitt, 2007). Qualitative research methods work with rich, in-depth narratives and personal experiences, and the analyses and representations of that data are reliant on trustworthiness comprising credibility, dependability, and confirmability (Agius, 2013; Lincoln & Guba, 1985). The focus is on understanding participants’ perspectives and lived experiences, and this purposefully subjective data can present distinct challenges for identifying (and calling out) imposter participants. By contrast, identifiers used in quantitative research, such as straight-lining responses to survey items, are not available for evaluating the quality of qualitative data (Kim et al., 2019). Imposter participants can craft seemingly plausible narratives that align with and even build upon the research topic. Additionally, qualitative research processes ideally involve rapport-building and fostering open dialogue with participants. As a result, researchers may be hesitant to over-scrutinize or check narratives that seem farcical or false for fear of alienating genuine participants (Husted et al., 2025). Lengthy and complex screening processes can deter participation; yet, researchers must develop screening methods that balance sampling rigour with inclusivity. Incorporating open-ended questions that probe participants’ experiences is a staple of qualitative work (Oliffe & Mróz, 2005). 
Similarly, utilizing video conferencing for brief introductory meetings can allow researchers to observe non-verbal cues and gain a sense of participants’ eligibility. The rise of imposter participants necessitates a critical re-evaluation of online recruitment, screening, and honoraria incentive practices in qualitative research. For example, targeted recruitment strategies that engage with trusted online communities may help attract authentic participants.

The current article builds on earlier insights (Ridge et al., 2023; Roehl & Harland, 2022) and a systematic scoping review (Husted et al., 2025) by offering project-informed methodological considerations for identifying and managing imposter participants in online qualitative research. Specifically, we detail insights gained from managing imposter participants during online recruitment for a Canadian photovoice study of men’s mental health and peer mutual help.

Positioning the Current Study

We come to this article having recently encountered imposter participants in a photovoice study exploring men’s perspectives and experiences of peer support and mutual help for mental health challenges (Sharp et al., 2025). By way of background for this study, there are known factors that contribute to men’s low uptake of professional mental health services, including gender norms and mental health stigmas (Gough & Novikova, 2020; Mursa et al., 2022; Rice et al., 2020). Recent efforts in men’s mental health promotion and suicide prevention have focused on encouraging men to be more emotionally expressive and supportive with their peers (Sharp et al., 2024). Within these contexts, we used photovoice, a qualitative method that utilizes participant-taken photographs and narratives, to elicit rich descriptive data about men’s community-based strengths and challenges. The study was purposefully designed to be conducted online, motivated by both practical and methodological considerations. The research team sought to develop a flexible approach that could continue despite possible COVID-19 lockdowns, accommodate geographically dispersed staff, and take advantage of the perceived efficiencies and travel cost savings of online qualitative research (Oliffe et al., 2023). Recruitment occurred via social media platforms X (formerly Twitter), Facebook, LinkedIn, Reddit, and Instagram. We also posted the study on e-bulletin boards and sent email correspondence to individuals and organizations (e.g., non-profit men’s groups, male-dominated workplaces) who helped promote the study through their networks. Eligible participants were English-speaking men (18+ years) who lived in Canada. To incentivize participation, we offered a $100 CAD honorarium to acknowledge participants’ time and contribution to the study. 
Recruitment materials included the study aims and eligibility criteria as well as an email contact for the study coordinator, along with a link to a Qualtrics-hosted study information sheet. The information sheet gave potential participants additional details about the research, including the study procedures, and an opportunity to provide their name and email to register their interest in taking part in the study.

Potential participants who contacted the study coordinator (via email or the Qualtrics information sheet) were provided a unique study ID number and assigned to one of four researchers (3 men and 1 woman) to schedule a brief (approximately 15 minutes) intake Zoom meeting. The purpose of the intake meeting was to confirm eligibility and discuss study procedures, which involved Qualtrics-hosted consent, demographics and survey questions, and uploading photographs illustrating their perspectives about and experiences of peer support and mutual help for men’s mental health challenges. Participants had two weeks to take photographs, after which a semi-structured individual Zoom interview (approximately 1–2 hours) was scheduled to review and discuss their photographs.

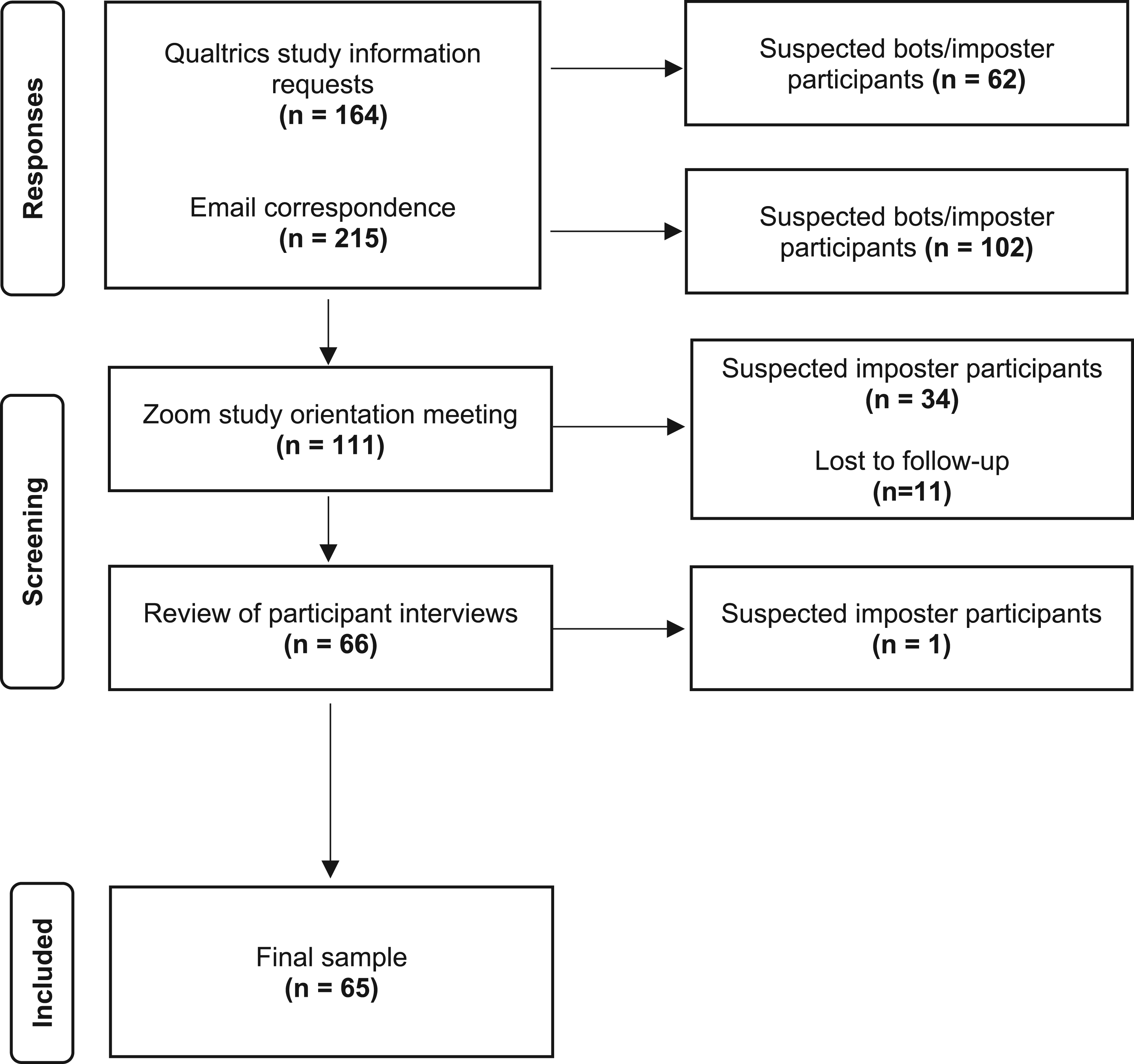

Rolling recruitment for the study occurred over four months, between June and September 2023. The study coordinator received 215 emails and 164 study information requests from potential participants. Of these 379 contacts, 111 individuals scheduled an orientation meeting, 66 submitted photographs, and 65 completed a follow-up interview (see Figure 1). Throughout this process, we became increasingly aware of imposter participants attempting to take part in the study. Of the 164 study information requests, 62 (38%) were identified as bots or imposter participants. Of the 215 emails directly received, we suspected that at least 102 (47%) were from bots or imposter participants. From the 111 scheduled Zoom orientation meetings, we identified 34 (31%) as imposters. Follow-up interviews were conducted with 66 participants, and after data screening, we excluded the data from one suspected imposter (1.5%). In this article, we outline our experiences identifying and managing imposter participants at different stages of the study to address the research question: What methodological and ethical challenges arise from imposter participants in online research, and how can qualitative researchers detect and respond to these encounters? Participant flow diagram
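The stage-wise proportions reported above can be re-derived directly from the counts. The following is a minimal Python sketch for readers who wish to check the arithmetic or adapt it to their own recruitment logs; the function name `flagged_share` and the stage labels are illustrative and were not part of the study procedures.

```python
# Counts as reported in the text: (stage, flagged as bot/imposter, total at stage)
stages = [
    ("study information requests", 62, 164),
    ("direct emails", 102, 215),
    ("scheduled orientation meetings", 34, 111),
    ("follow-up interviews", 1, 66),
]


def flagged_share(flagged: int, total: int) -> float:
    """Return the percentage of contacts at a stage that were flagged."""
    return 100 * flagged / total


# Total contacts received by the study coordinator (emails + information requests)
total_contacts = 215 + 164

for name, flagged, total in stages:
    print(f"{name}: {flagged}/{total} = {flagged_share(flagged, total):.1f}%")
```

Tracking these proportions per stage, rather than as a single overall figure, makes it easier to see where in the recruitment pipeline suspected imposters were filtered out.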

The findings were derived from multiple qualitative data sources, including post-interview researcher debriefs, reflexive memos, and detailed notes from bi-weekly team meetings during data collection. These materials captured researchers’ immediate impressions and reflections about participants’ demeanour, communication style, and interview settings (e.g., background environment and time of day) (Phillippi & Lauderdale, 2018). They also recorded the emotional and ethical tensions collectively experienced by the team as they navigated issues of authenticity, trust, privacy, inclusion, and integrity. Beyond supporting the identification of potential imposter participants, these materials offered rich contextual and reflexive data that formed the foundation of our thematic analysis. Guided by Braun and Clarke’s (2021) reflexive thematic analysis framework, the analytic process was iterative and interpretive. Initial coding focused on capturing challenges and considerations that arose during different stages of the research (e.g., recruitment, screening, and data collection). These codes were then grouped conceptually to identify recurring patterns of actions and meaning related to methodological decision-making, ethical tensions, and researcher emotions. Through ongoing team discussions, these patterns were reviewed, refined, and interpreted to move from descriptive summaries toward more conceptual and abstract understandings about our collective experience. Triangulation was achieved by integrating insights across data sources and through collaborative interpretation among the four researchers involved in data collection. 
Through this iterative and reflexive engagement, three overarching themes were developed that represent shared insights about detecting and managing imposter participants in online qualitative research: (1) Gone Phishing: Detecting and Deterring Imposters, (2) Screening for Subterfuge: Balancing Integrity and Inclusivity, and (3) Fraud Fatigue: Researcher Strain and Drain. Illustrative data including email correspondence and participant quotes are provided to contextualize salient points within each theme.

Findings

Gone Phishing: Detecting and Deterring Imposters

Online recruitment and data collection have long been lauded for their cost-effectiveness and capacity to broaden participation across geographical and social determinants (Fenner et al., 2012). However, these advantages were increasingly undermined in our research by the rise of phishing emails (i.e., fraudulent or deceptive messages designed to impersonate eligible participants or gain access to research incentives and data). Here, the persistent and evolving attempts by imposter participants to take part in the study strained administrative resources and threatened data integrity. What began as a promisingly efficient online methodology quickly became entangled with the complexities of detecting and deterring fraud. These challenges demanded substantial adaptation on our part and exposed critical gaps in institutional infrastructure for supporting researchers who were trying to navigate the threat of imposter participants. This theme illustrates how detecting and deterring imposter participants in online qualitative research could not be rendered entirely foolproof through technological safeguards and instead required sustained judgement, staff time, and iterative procedural adaptation.

From the outset, we employed a range of technological tools and human strategies to safeguard the study. Our Qualtrics-hosted study information sheet became one of the most reliable assets for identifying and deterring imposters. Drawing on features predominantly used in quantitative research, we applied a suite of fraud-detection tools to the study information sheet and consent form, including reCAPTCHA, RelevantID, duplicate submission detection, bot detection, and geolocation. Together, these features provided a layered system for flagging suspicious responses. For example, reCAPTCHA helped filter out bot-generated entries, though it did little to prevent human imposters from submitting multiple registration requests. RelevantID offered deeper scrutiny, examining respondent metadata including browser type, operating system, and location to detect duplicate and fraudulent submissions. We also leveraged Qualtrics’ multiple submissions detection, which used browser cookies to identify repeat attempts by the same user. Notably, many submissions flagged as fraudulent used auto-generated email formats that echoed patterns found in suspicious email correspondence. Manual review of geolocation data further aided our vetting process, although this feature had precision limitations which located some participants improbably in public parks or bodies of water. Geolocation data suggested that 110 study information requests were from Canada, 29 from the United States, 20 from Nigeria, and 5 from other countries including Australia, England, New Zealand, and Brazil. While these features were helpful, we soon learned that technologically adept imposters could simply circumvent such safeguards by clearing their browser cookies, masking IP addresses, or using virtual private networks.
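The layered approach described above, in which several imperfect signals are combined and then reviewed by a person, might be sketched as follows. This is an illustrative Python example only: the `Submission` fields and the `review_flags` function are hypothetical, do not correspond to a Qualtrics export schema or API, and assume that the platform's fraud-detection results have already been exported as simple yes/no values.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """Hypothetical row from an exported information-sheet response.

    Field names are illustrative, not a real Qualtrics schema.
    """
    email: str
    passed_captcha: bool   # assumed export of a reCAPTCHA-style check
    duplicate_flag: bool   # assumed export of a duplicate/metadata check
    country: str           # country suggested by geolocation


def review_flags(sub: Submission, seen_emails: set,
                 eligible_country: str = "Canada") -> list:
    """Collect reasons a submission warrants manual review.

    Flags are prompts for human judgement, not grounds for automatic
    exclusion: geolocation is imprecise and can be masked by a VPN.
    """
    flags = []
    if not sub.passed_captcha:
        flags.append("failed_captcha")
    if sub.duplicate_flag:
        flags.append("duplicate_metadata")
    if sub.email.lower() in seen_emails:
        flags.append("repeat_email")
    if sub.country != eligible_country:
        flags.append("outside_eligible_country")
    return flags


# Usage: a repeat address that also fails two automated checks
earlier = {"a@example.com"}
print(review_flags(Submission("A@example.com", False, True, "Nigeria"), earlier))
```

Returning a list of reasons rather than a single pass/fail verdict keeps the decision with the research team, which matters because each signal can be circumvented or can misfire on a genuine participant.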

Email correspondence provided another vital but labour-intensive line of defence for detecting fraud. Almost immediately after launching our social media recruitment ads, we began receiving suspicious emails, including many with empty subject lines or minimal text such as ‘I’m interested’. These messages often came from similarly structured email addresses (i.e., first name, last name, and numerical sequence at Gmail or similar domains) which we suspected were being generated en masse to register participation interest. Emails arrived in clusters, typically following recruitment posts on high-traffic platforms including Reddit and X. Although social media increased the visibility and reach of the study, it also drew the attention of opportunists seeking to access the study’s honorarium. Despite consultations with our university’s information technology department, no specific solutions emerged for filtering or blocking these phishing attempts, leaving us to manually manage the growing influx. Initially, we responded to each inquiry, mindful of not prematurely excluding genuine participants. However, as the volume of suspicious contacts escalated, this approach became untenable. We began triaging emails, choosing not to respond to messages that bore obvious markers of phishing.
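The email markers described above (empty subject lines, minimal text, and addresses built from a name followed by a numerical sequence) lend themselves to a simple triage aid. The Python sketch below is hypothetical and was not used in the study; the regular expression, the list of webmail domains, and the set of minimal message bodies are assumptions chosen for illustration. Many genuine participants have addresses of this form, so the markers are intended only to help prioritize manual review.

```python
import re

# Letters (optionally split by "." or "_") followed by two or more digits at a
# common webmail domain, e.g. johnsmith4821@gmail.com. Domain list is assumed.
NAME_DIGITS = re.compile(r"^[a-z]+[._]?[a-z]+\d{2,}@(gmail|outlook|yahoo)\.com$")

# Illustrative examples of near-empty messages, compared after normalization
MINIMAL_BODIES = {"i'm interested", "im interested", "interested"}


def triage_markers(sender: str, subject: str, body: str) -> list:
    """Return the phishing markers present in one email enquiry."""
    markers = []
    if NAME_DIGITS.match(sender.strip().lower()):
        markers.append("name_plus_digits_address")
    if not subject.strip():
        markers.append("empty_subject")
    # Normalize curly apostrophes and trailing punctuation before comparing
    normalized = body.strip().lower().replace("’", "'").rstrip(".!")
    if normalized in MINIMAL_BODIES:
        markers.append("minimal_body")
    return markers


# Usage: an enquiry showing all three markers
print(triage_markers("johnsmith4821@gmail.com", "", "I’m interested"))
```

A message with several markers arriving in a cluster after a high-traffic post could be queued for a screening question rather than a full reply, which mirrors the triage approach the team adopted manually.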

Research staff noted that imposter correspondence became more sophisticated over time. Messages began including vague but plausibly relevant details, such as having heard about the study on ‘a website’ or describing themselves as ‘an adult man in Canada’. Some emails contained significant grammatical errors that hinted at automated or low-effort composition, while others were overly detailed and formal, suspected to be produced by generative artificial intelligence (AI). These messages typically incorporated extraneous details paraphrased from the study information sheet, which paradoxically raised suspicions. By triangulating multiple data sources (e.g., email patterns, geolocation, and metadata), we were reasonably confident in our identification of imposter participants, yet the potential for misrepresentation remained ever present. To address this shifting landscape, we adapted our process to include additional email screening questions, asking potential participants to explain how they heard about the study and why they were interested in participating. While some suspected imposters provided no response or faltered when asked for additional details, others supplied elaborate backstories tailored to align with the study aims. These narratives could be convincing enough to warrant progressing to a Zoom orientation meeting to evaluate eligibility.

The Zoom meetings, designed originally to obtain consent and orient participants to study procedures, became critical to our fraud-detection efforts. While many suspected imposters failed to attend, others joined and engaged in what appeared to be authentic dialogue, further complicating our assessments. One illustrative case involved a participant who, during the orientation meeting, experienced multiple technical difficulties, including camera malfunction and dropping from the call for an extended period before returning to complete the session. His follow-up interview proceeded without issue, and the transcript revealed no immediate concerns as responses were detailed and thematically consistent with the study focus. However, upon review of the submitted photographs, several anomalies emerged. The images were of notably low resolution, making it difficult to discern identifying features, and they appeared to depict different individuals. Among the photos were screenshots of a Facebook group and a men’s support group, both from areas geographically distant from the participant’s self-reported location. When contacted for clarification and to obtain consent for images containing other identifiable individuals, the participant did not respond. Consequently, we removed this individual’s data from our final sample, highlighting the importance of triangulating multiple data sources to detect potential fraud.

The administrative burden associated with detecting and deterring imposters was considerable. Each suspected imposter typically required multiple rounds of correspondence, careful review of submitted materials, and team discussions to assess authenticity. Rescheduling missed Zoom appointments, tracking patterns across email accounts, and corroborating narratives with geolocation and metadata added further layers of complexity and time. The research team convened frequently to devise strategies and share emerging patterns, which diverted attention from other aspects of the study. We estimate that addressing imposter participants consumed approximately 450 staff hours, representing roughly 10% of the study’s total labour allocation, and resulted in costs exceeding $40,000 CAD. These unforeseen expenditures challenge the assumption that online research is inherently cost-saving and efficient. By comparison, pre-pandemic in-person photovoice studies led by our team required similar staff time and travel expenditure for participant coordination, consent, and scheduling. The savings in travel and transcription were largely offset by the additional administrative and verification workload of research staff. These figures highlight that while online methodologies may appear efficient, the unanticipated costs of verifying participant authenticity can erode expected financial and time savings. This underscores the need for institutional recognition and support through funding, infrastructure, and training to ensure these burdens are equitably managed.

Our exposure to phishing underscores the need for greater institutional support for managing the risk of imposter participants. Despite the clear threat to data integrity, we found limited guidance or best practices for identity verification that balanced inclusivity. The absence of robust institutional protocols left our team to develop ad hoc solutions, often navigating tensions between data quality, participant privacy, and the ethical imperative not to overburden or alienate genuine participants, an issue we discuss in the following theme.

Screening for Subterfuge: Balancing Integrity and Inclusivity

As imposter participants became increasingly adept at circumventing our initial safeguards, we were compelled to introduce additional screening measures aimed at protecting the integrity of the study. These adaptations centred on refining our intake processes and deepening interactions with potential participants to identify inconsistencies or signs of fraud. Yet, as we increased participant scrutiny, we became acutely aware of the ethical tensions arising from that focus. Efforts to screen for subterfuge risked creating barriers for genuine participants, including people who may already face barriers to research participation by virtue of their social locations and marginalizing living conditions. This theme explores how we navigated these competing demands, which tested our commitment to inclusivity and equitable participation, and reflects on some emergent methodological and ethical complexities.

As the volume of potential imposter participants grew, the Zoom orientation meetings became a crucial step in our efforts to protect the integrity of the study. These meetings offered a space to build rapport and establish trust while providing an opportunity to further assess participant authenticity. We quickly observed patterns in behaviour that signalled potential fraud. Most suspected imposters were late or missed their scheduled orientation times, requiring research staff to follow up, extend meeting durations, and reschedule appointments. In one case, an individual joined a password-protected meeting using the display name of another (likely imposter) participant. They left the meeting abruptly before re-joining minutes later under a new name. Incidents such as these underscored the challenges of managing security while upholding a welcoming environment. Our university ethics board approval required that participants be permitted to leave their cameras off during interviews to protect their privacy. However, given the increasing number of suspected imposters, we requested that participants briefly activate their camera during the orientation meeting to verify identity. This strategy was met with mixed responses. Some participants, both genuine and fraudulent, resisted the request, citing privacy concerns or technical limitations. In several instances, suspected imposters joined meetings whilst lying in bed and/or being shirtless. Whether due to time zone differences or intentional efforts to unsettle research staff, this resistance along with poor audio and video quality made it difficult to detect visual or verbal inconsistencies that might have helped determine legitimacy.

During orientation meetings, research staff were encouraged to foster conversational engagement. We routinely asked participants where they lived, how they had heard about the study, and why they wished to take part. These seemingly simple questions often revealed important discrepancies in participant responses. Some suspected imposters struggled to name the current season or time where they claimed to live, while others provided answers that conflicted with earlier email correspondence or information submitted through their Qualtrics form. When asked to elaborate, suspected imposters tended to give vague or hurried responses. Many suspected imposters were also focused on details about the honorarium, including the amount, payment method, or potential alternatives to gift cards such as Venmo (an online money transfer service). Requests for expedited interviews were also common, as were instances where participants became confrontational or aggressive toward staff when their narratives were gently probed. These patterns pointed to the evolving nature of fraudulent attempts. As we adjusted procedures to address specific tactics, imposters appeared to share information and adapt their strategies accordingly. In one case, a participant arrived prepared with a detailed street address and descriptions of local landmarks visible on Google Street View, reinforcing our suspicion that private networks were disseminating tips on how to bypass our safeguards.

Our screening measures, while necessary, created unintended tensions with principles of equity, diversity, and inclusion. Research staff routinely recorded information such as participants’ city and province, how they heard about the study, and any noteworthy features of the interaction, including refusal to activate the camera or inconsistencies in responses. Over time, these practices shaped a defensive posture, where vigilance became entwined with scepticism. Authentic participants were inevitably asked to do more to verify their identity. Additional email correspondence, requests for further information during orientation, and encouragement to activate cameras all required greater effort from genuine participants. Some legitimate participants expressed discomfort or frustration at the scrutiny, which risked eroding rapport. The potential impact on participants was not only practical but emotional, as efforts to detect imposters strained the atmosphere of trust central to qualitative inquiry.

The risk of exclusion was particularly acute for participants from underrepresented groups. Many of the strategies we used to detect imposters (e.g., probing for precise location details or observing reluctance to disclose personal information) may have inadvertently disadvantaged participants who were less familiar with technology, spoke English as a second language, had discomfort with the subject matter, or had concerns about confidentiality. In one instance, a participant was initially suspected to be an imposter due to hesitancy in sharing personal information or activating their camera during orientation. Only later during their interview was it revealed that the participant was fearful of compromising their privacy and endangering future employment opportunities:

Interviewer: I am interested in your experiences with the military. What does this photo represent to you?

Participant: Yeah. So, I think I mentioned last time that I knew somebody that was… (pause). Yeah, you know what? That person was me. It was not a friend; that person was me. When I enlisted, I went through everything. You know, medical, all that, but they found that I had a history of depression, and they stopped my application. It is very frustrating because, ironically, being rejected might be even worse for my depression than, you know, being accepted.

This example highlighted the delicate balance between safeguarding data integrity and honouring participant preferences. It also signals the ethical complexities of screening practices that, however well intentioned, may replicate the very harms of exclusion that the study sought to avoid and to counter through participation in the study itself. These experiences required us to take stock and reflect on the risk of becoming so suspicious and defensive that the voices qualitative research aims to amplify become alienated and stigmatized. Screening for subterfuge, while vital, demanded careful consideration of its impact on inclusivity, particularly when working with participants for whom trust in research can be hard won and easily lost. The tensions for researchers juggling these seemingly incompatible goals grew over time, and the effects of those challenges are discussed in the next theme.

Fraud Fatigue: Researcher Strain and Drain

Despite our best efforts to detect and deter imposter participants, their persistent and evolving tactics placed significant strain on the research team. The administrative and emotional burden of trying to manage suspected fraud, coupled with the moral uncertainty of potentially excluding genuine participants, took a cumulative toll over the course of the study. Herein, we examine the impact of fraud fatigue on the researchers, detailing how ongoing exposures challenged the resilience of the study team.

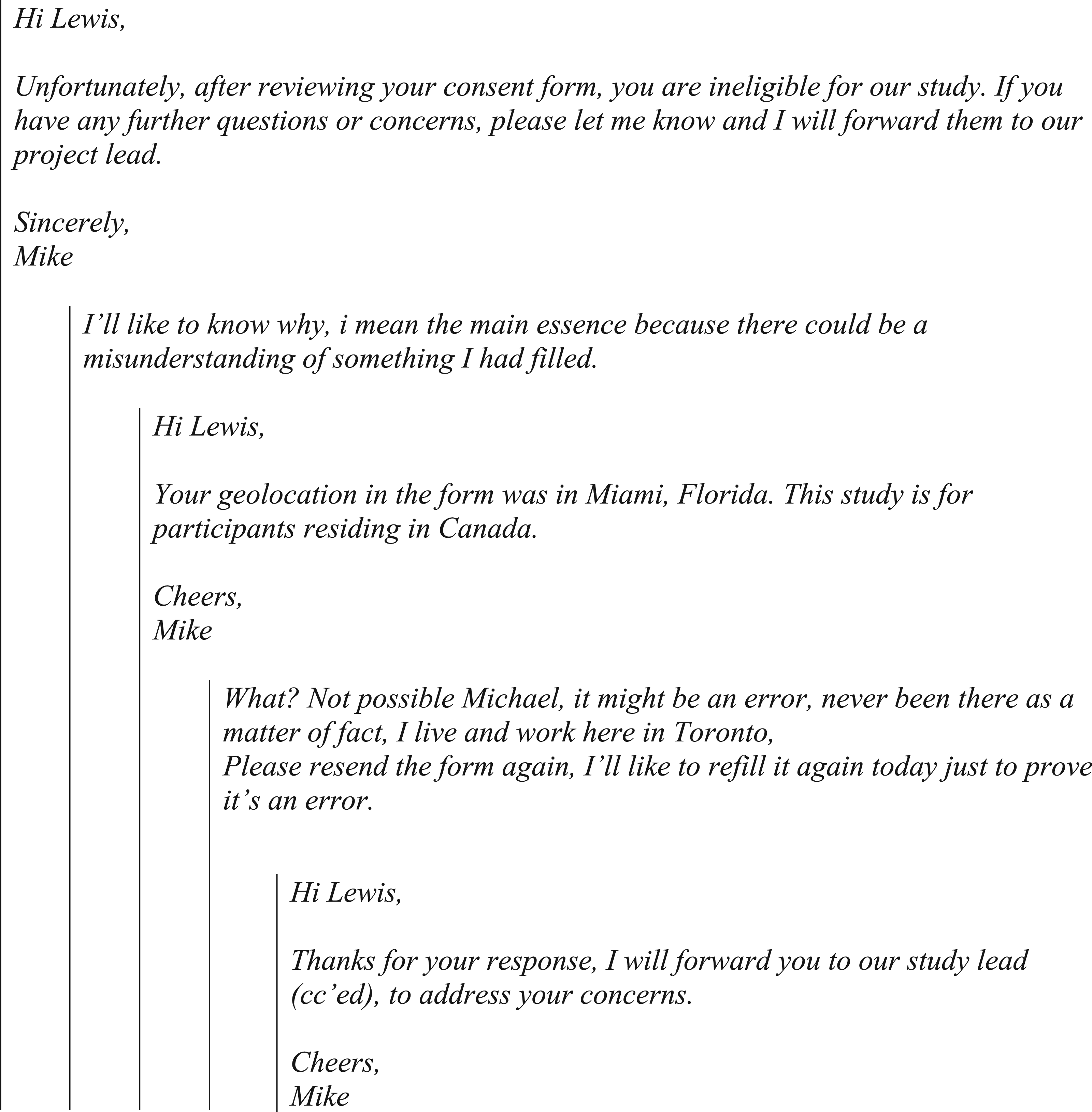

Most interviewers were drawn into a potential conflict with at least one or two participants, a stance antithetical to the positionality prescribed for and pursued by qualitative researchers. A particularly illustrative case involved a participant who originally reported living in Toronto, Canada, but whose consent form metadata indicated they were in Florida, USA. Based on several indicators including a vague initial email, no video and minimal detail provided during the orientation interview, and the mismatched metadata, the research team decided not to proceed with the interview. The email interaction that followed our exclusion of this individual further illustrates the strain and drain these situations placed on research staff. After we informed the participant, pseudonymized here as Lewis, of his ineligibility due to location, the following email exchange ensued:

Illustrated here are the tensions and time demands that pervaded our work, including the challenge of calling out ineligibility while managing the discomfort of confronting someone who might, however unlikely, have been genuine. These exchanges placed research staff in awkward and uncomfortable positions which risked provoking the resistance or defensiveness of would-be participants. Staff had to maintain professionalism and courtesy even when confronted with implausible claims or, in some cases, overt pressure. The emotional labour involved in managing these interactions was considerable. Some team members, especially students and newer researchers, reported a growing dismay at the frequency with which they encountered imposter participants and noted that this began to erode their enthusiasm for the work. The administrative demands added to this fatigue as each suspected fraud case required additional correspondence, documentation, and internal research group consultations, diverting time and energy from the many other study tasks.

Contrasting the emotional toll of policing participant narratives was the reprieve of genuinely connecting with legitimate participants and ensuring they felt heard through the research processes. In one instance, a participant reached out after his interview to thank the research team and express how meaningful it had been to articulate his (often difficult) experiences of trying to socially connect with other men. Herein, the interview and his broader participation had afforded him the rare opportunity to be vulnerable, reflect, and feel validated in what he shared and how he shared it. Through his follow-up correspondence, the participant sought suggestions for how to meet new people, ultimately inquiring if the researcher would like to exchange information and meet up socially. The gesture spoke to the therapeutic value of his participation and to the interview itself, which he had clearly interpreted as a genuine social connection. Navigating this invitation required careful and compassionate professional boundary setting without eroding the participant’s trust or making him feel rejected. This experience stood in stark contrast to the scrutiny involved in uncovering suspected imposter participants. Especially evident were the tensions between empathy and interrogation as extremes feeding our experiences of fraud fatigue.

There was also an ongoing cognitive burden associated with these uncertainties and policing. While we could be reasonably confident in most decisions to exclude participants based on the eligibility criteria, there was always the possibility of error. The risk of mistakenly excluding a legitimate participant weighed heavily on the team, especially given the study’s focus on mental health, peer support, and mutual help, where trust and rapport are foundational. The potential for harm, however unintended, contributed to a sense of fatigue that compounded the logistical strain. In some cases, imposters became hostile when their claims were scrutinized, adding to the emotional toll. Research staff described the stress of being pressured by individuals who appeared intent on exploiting the study’s design for financial gain, while simultaneously worrying about inadvertently alienating genuine participants.

This cumulative burden ultimately contributed to our decision to close recruitment earlier than originally planned, with fatigue among the researchers primarily responsible for recruitment, screening, and data collection being a significant factor. The team’s resilience had been tested over months of navigating fraudulent attempts, adapting procedures, and working to protect the study’s integrity without compromising inclusivity. The emotional and administrative toll of these efforts also put researchers at risk of burnout. Repeated exposure to suspected fraud can heighten scepticism that, however justifiable, risks compromising the openness and empathy that are hallmarks of qualitative research engagement. The more defensive posture that researchers adopted in response to persistent fraud attempts undoubtedly impacted their relationships with genuine participants. The administrative and emotional costs borne by our team were significant, and they underscore the importance of developing institutional policies and resources that acknowledge and address these challenges.

Discussion and Conclusion

Imposter participants present a considerable threat to the integrity of and budget for doing qualitative research online, and researchers must implement strategies to detect and deter their involvement. Previous research has suggested that researchers act as ‘gatekeepers’ to confirm the eligibility of participants and safeguard the integrity of the research process throughout recruitment and data collection. Several techniques have been proposed including the use of technology (e.g., reCAPTCHA, limiting to one response per IP address), targeted sampling techniques (e.g., snowball sampling), identity verification (e.g., requiring participants to upload documentation), and data screening (e.g., reviewing and removing suspicious data) (Sophus & Mitchell, 2025). Our experiences suggest that no single strategy is guaranteed to be effective, and that researchers must employ a combination of approaches to identify and deter imposter participants. Key indicators of fraudulent or scam participants have been outlined in the literature including unusually high volumes of emails within short time periods, similarly structured email addresses and generic message content, reluctance or refusal to activate cameras, recurring technical difficulties, and strong financial interests (Pullen Sansfaçon et al., 2024; Sharma et al., 2024). Aligning with Ridge and colleagues’ (2023) assertion that detecting fraud in online qualitative research is perhaps an even more complex endeavour than that of online surveys, considerations for implementing deterrents are emergent. Recent research has called for proactive approaches that embed imposter screening protocols within study designs (Kumarasamy et al., 2024), as well as the active involvement of research ethics boards (REBs) in helping guide researchers to prevent and manage these issues (McLachlan et al., 2025). However, Jones et al. (2021) suggested that the availability of study software and increased administrative requirements may impact and limit researchers’ ability to detect imposter participants in asynchronous online qualitative research. The current study extends this growing body of work by highlighting additional considerations relating to administrative costs, researcher fatigue, participant burden, and the equity, diversity, and inclusion considerations shaping rigour-rich qualitative research.

In relation to administrative costs, the 10% ($40,000 CAD) lost to our research team’s hypervigilance with study inclusion was likely equivalent to the savings afforded by conducting the study online compared to in-person. Specifically, travel costs and associated staff time, professional transcription fees, and hardcopy scans and uploads were all cost-savings made possible by doing the study virtually. Indeed, interviewing participants in the diverse locales across Canada where they resided would have easily cost >$40,000. Thus, it might be reasonably anticipated (and argued) that some study budget lines shift when qualitative studies are conducted online, rather than that doing the work virtually lacks overall feasibility. That said, institutions must work centrally to improve security and provide guidelines and tangible supports for all their researchers. Recent work has shown that researchers often turn to research ethics committees for advice on withholding compensation and/or redacting data collected from suspected imposter participants (Andrew et al., 2024). In this context, McLachlan et al. (2025) suggest that REBs develop standard operating procedures and template scripts for communicating with suspected imposters, which may reduce procedural uncertainty and ease administrative burden. Research indirect costs collected by universities, for example, might be usefully directed to reducing the unnecessary administrative costs being absorbed by every online research project. Worrisome also is the lure of participant honoraria and the likelihood that these benefits are a key motivator for imposter participants wanting to take part in research (Kumarasamy et al., 2024). Honoraria were traditionally offered as a gesture of respect for participants’ time and contribution, but they have become a double-edged sword, serving both as an incentive for genuine engagement and a lure for fraudulent actors. 
Offering $100 CAD may be problematic because of the amount itself, and more nominal amounts of $10–$30 CAD might reduce the potential for imposter participants. In a time of great geopolitical economic disparities, there are also relevant debates about the ethics of payments for research participants (Różyńska, 2022), especially regarding what amount might be assured as not overly attractive or coercive. While not suggesting that the participant research honorarium is (or should be) dead, we must consider that the ultimate costs of incentivizing online samples likely far exceed the face value of the honoraria themselves. Recent recommendations suggest retaining honoraria to support equitable participation while avoiding explicit mention of financial incentives in recruitment materials to reduce fraudulent interest (Andrew et al., 2024). Such adjustments, however, require careful ethical consideration to ensure transparency and fairness, underscoring the need for ongoing dialogue between researchers and ethics committees about responsible incentive practices in online qualitative research.

Researcher fatigue and distress are an ever-growing concern, prompting contingencies to optimize the well-being of those working on qualitative studies (Andrew et al., 2024; Wray et al., 2007). It is fair to say that universities have increasingly defaulted administrative work to faculty and research teams (Woelert, 2023). Indeed, many centralized services have defaulted to directing what needs to be done to ensure employee alignment with institutional policies, with the responsibilities for that increasingly residing with individual researchers, and ultimately the principal investigator leading the research team. There might be institutional opportunities (and obligations) for staff health promotion services within the university to prevent the harms associated with such administrative challenges rather than focussing downstream on treating (or compensating) the effects, including staff burnout (Forrester, 2023). Practical considerations for addressing fatigue and distress in research teams include ensuring that staff and students have adequate training and induction, appropriately spacing data collection, and employing ‘buddy’ and debriefing systems where team members talk through challenging research experiences (Silverio et al., 2022). Our experience also highlights the importance of preparing research teams, particularly junior members (Heller et al., 2011), to navigate complex and unexpected virtual interactions, including how to end a research session safely, recognize red flags in real time, and maintain professional boundaries under social pressure. Providing practical tools such as standardized screening scripts, troubleshooting guides for suspicious situations, and escalation pathways can support decision-making during live interviews (McLachlan et al., 2025). 
Training and education in research communication and digital ethics should also be incorporated into research capacity building to equip teams with the skills to manage methodological and ethical uncertainty in online environments (Sefcik et al., 2023). Additionally, there is a need to build in generic strategies to equip employees to articulate their challenges and solicit tangible assistance, from immediate personal supports to centrally making system changes that address emergent and established research security issues (Kumar & Cavallaro, 2018).

The experiences shared in this article provoke fundamental questions about the future of sampling and participation in qualitative research conducted online. As imposter participants become more skilled through AI, the equity, diversity, and inclusivity drivers of convenience sampling online demand a nimble re-think. Ever-growing sophistication in AI technologies, including deepfakes and generative video, threatens to amplify all the issues detailed in the current article and previous related literature. Emerging scholarship reveals generative AI as having the potential to fabricate convincing personal narratives and simulate human interaction in research contexts (Burleigh & Wilson, 2024), increasing the challenges for verifying participant authenticity. At the same time, explicit and increasing scrutiny, under the guise of security risk, can reproduce practices of exclusion and surveillance, particularly for structurally marginalized and/or stigmatized groups. Scholars have cautioned that approaching participants from a starting point of distrust may damage relationships with communities and community-based organizations (Drysdale et al., 2023) and risk exclusion of legitimate participants who already face barriers to research participation (Pullen Sansfaçon et al., 2024; Santinele Martino et al., 2024). Ethical responses must therefore extend beyond detection to consider how fraud mitigation strategies are designed and communicated, ideally tailored to specific study populations (Wright et al., 2024) and developed in collaboration with trusted community partners (Andrew et al., 2024). Herein, a complex ethical landscape opens up a chasm of considerations (and uncertainties) for what counts as the truth(s) in unregulated online and AI worlds.

Future research is needed to better understand the contextual factors that make some studies especially vulnerable to imposter participation, including the influence of honoraria, study topic and population, and recruitment platform. Project-specific investigations might usefully examine how these factors interact to shape the prevalence and detection of imposter participants. At a broader level, meta-institutional analyses are needed to guide university and research ethics committees’ policy and coordinated strategies including centralized infrastructure and protocols to reduce the risk of research fraud and support teams conducting online qualitative research. Beyond policing imposters, the fidelity of these policies and practices must ensure the inclusion of legitimate, equity-owed voices in research without negatively impacting the experience of participating in online qualitative research.

In conclusion, as new technologies emerge, especially AI-generated identities, we anticipate that imposter participants in online qualitative research will become increasingly common and difficult to detect. Beyond ongoing training for research staff to identify imposter participants and procedures for reducing the associated administrative burdens, institutions must invest in specific protections to ensure the integrity of qualitative research conducted online. Ethics committees are also key to guiding risk management procedures for protecting researchers and participants from these emergent challenges in online qualitative research.

Footnotes

Ethical Considerations

This study obtained ethics approval from the University of British Columbia Behavioural Research Ethics Board (H23-00301).

Consent to Participate

All participants provided informed written consent to participate in the Zoom interview and/or for their photographs to be used in the online resources and academic publications. This consent was provided via Qualtrics, a University of British Columbia Survey Tool that complies with the BC Freedom of Information and Protection of Privacy Act (FIPPA).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Government of Canada’s New Frontiers in Research Fund (NFRF) [NFRFR-2022-00308]. Sharp is supported by a Michael Smith Health Research BC Research Trainee award, Canadian Institutes of Health Research Fellowship, and SSHRC project grant (NFRFR-2022-003080). Oliffe is supported by a Tier 1 Canada Research Chair in Men’s Health Promotion.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Research data are not shared.