Abstract

We propose a wearable sensor-based approach to human physical activity recognition and extend it to an Internet-of-Things (IoT) platform built around a web-based application that integrates wearable sensors, smartphones, and activity recognition. In this platform, a smartphone collects data from the wearable sensors and sends it to a server, which processes the data and recognizes the physical activity. We collect a novel data set of 13 physical activities performed both indoors and outdoors by participants of both genders; the number of participants varies per activity. During these activities, the wearable sensors measure various body parameters via accelerometers, gyroscopes, magnetometers, and pressure and temperature sensors. These measurements and their statistics are represented as feature vectors, which are used to train and test supervised machine learning algorithms (classifiers) for activity recognition. On this data set, we evaluate a number of widely known classifiers, such as random forests and support vector machines, among others, using the WEKA machine learning suite. Using the default WEKA settings of these classifiers, we attain a highest overall classification accuracy of 90%. Such a recognition rate is encouraging and indicates that the approach is reliable and effective enough to be used in the proposed platform.

Keywords

Introduction

The Internet of Things (IoT) has gained significant importance in daily life in recent years. The IoT combines sensors, actuators, and communication networks to sense and collect information from the environment and the human body for further computing and processing.1,2 The proliferation of smart physical objects enables the development of human-centric pervasive applications that facilitate people’s daily lives. Diverse and powerful embedded sensors form a ubiquitous platform for acquiring and analyzing data. This creates greater potential for efficient resource management and utilization, and for monitoring human activities for health and wellness. 3 The light weight and small size of these devices allow them to be worn and carried on the move. In recent years, wearable sensors have emerged in various fields, such as entertainment, medicine, and security, shifting the IoT trend toward the wearable IoT (WIoT). 4 Mobile, wearable, and IoT devices provide a large amount of important personal data and help elucidate the context of the user. 5 Mining this big data provides new approaches to measuring and/or tracking welfare problems. Wearable sensors provide reliable and accurate information on human activities and behavior that helps ensure a sound and safe living environment. 6 The WIoT permits observing, tracking, and measuring individual functions in daily life. This article extends this line of application by proposing a wearable sensor IoT platform that acquires the data and automatically recognizes activities.

Physical activity is any bodily movement produced by skeletal muscle contractions that results in energy expenditure above the resting level. 7 Physical activity plays a major role in a person’s mental and physical health, and a lack of it can negatively affect physical fitness. 8 Recent studies show that physical inactivity (along with poor nutrition, smoking, and alcohol use) is a significant cause of premature death. 9 Physical activity tracking platforms can help alleviate this problem: the data they acquire can be used to recognize activities, and with such tracking, health issues related to physical inactivity can be reduced significantly. This concept can also be extended to other healthcare applications, such as tracking a patient or an elderly person to determine whether they are performing prescribed activities.

Based on the type of data, physical activity recognition and tracking systems can broadly be divided into two classes. The first class comprises video/image-based systems,10,11 which use images or videos to recognize and track physical activities. The second class relies on data generated by wearable sensors.12,13 Image-based systems face challenges due to image variations caused by occlusion, background clutter, and various object deformations. Apart from that, video- and image-based systems may invade privacy. 5 Wearable sensor-based systems do not face such challenges, because the sensors are worn close to the body and require no visual contact with the user. For this reason, the data generated by these sensors are relatively more accurate and reliable, and less intrusive, than images.

A human physical activities recognition (HPAR) system can be used to recognize and track activities of various types: short, simple, and complex. A short activity is one with a short duration or a transition from one state of activity to another, such as a change from standing to sitting. Simple activities, such as walking, standing, and reading, are periodic in nature and can be recognized using a single sensor. Complex activities, such as cooking and giving presentations, are non-periodic in nature, and multiple sensors are required to recognize them accurately. Recognizing complex activities is challenging because of their similarity, concurrency, and interleaved nature. Two different activities can be similar (such as sitting and reading while sitting). Concurrent activities occur when a person performs more than one activity at the same time, such as standing while brushing teeth. Interleaved activities are ones that can be interrupted while being performed (e.g. someone who is running might stop to drink some water and then start running again). Our proposed platform can recognize physical activities ranging from simple to complex.

HPAR is divided into several steps.13–15 First, a single sensor or a combination of body-worn, wrist-worn, and smartphone motion sensors collects activity time-series data. Various sensors, such as the triaxial accelerometer, the gyroscope, and the global positioning system (GPS), are used at sampling rates from 1 to 100 Hz. In some cases, the acquired data are pre-processed (e.g. by Kalman filtering) to remove signal noise. The time-series data are then segmented using windows of 1 to 30 s for feature extraction.14,16,17 From each segment, various time-domain features, such as the mean, variance, and root mean square, and frequency-domain features, such as the magnitude of the highest frequency, are extracted. The labeled feature data are used to train supervised learning algorithms (classifiers). More recently, deep learning has also been used to recognize activities for problems such as health care. 18 Activities can be recognized in two different ways. One approach is the client/server model;19,20 the other uses a worn device or smartphone locally for both data collection and activity recognition.20,21 In the client/server architecture, the mobile phone is used for data collection and functions as a client node. It collects data from the mobile phone’s sensors or collects body-worn sensor data through Bluetooth. The collected data are sent to the server over the Internet, either in raw form or in some pre-processed format. At the server, features are extracted from the data, and a trained classifier is used to classify the activity. The mobile-based local approach uses the smartphone for data acquisition, pre-processing, feature extraction, and classification. In the local scheme, the classifier is trained either offline or online. In offline training, the classifier is trained on a desktop PC and then used on the mobile device for activity recognition.
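As an illustration of the segmentation step described above, the following Python sketch splits a sampled signal into fixed-length windows. The function name `sliding_windows` and the optional `overlap` parameter are illustrative assumptions; the works cited above differ in window length and overlap.

```python
def sliding_windows(samples, fs, window_s, overlap=0.0):
    """Split a sampled signal into fixed-length windows (illustrative sketch).

    samples  : sequence of sensor readings
    fs       : sampling rate in Hz
    window_s : window length in seconds (the literature uses 1 to 30 s)
    overlap  : assumed fraction of each window shared with the next, in [0, 1)
    Incomplete trailing windows are dropped.
    """
    if fs <= 0 or window_s <= 0 or not 0.0 <= overlap < 1.0:
        raise ValueError("invalid sampling rate, window length, or overlap")
    size = int(round(fs * window_s))  # samples per window
    if size < 1:
        raise ValueError("window shorter than one sample")
    step = max(1, int(round(size * (1.0 - overlap))))  # hop between window starts
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]
```

For example, with an assumed rate of fs = 50 Hz and a 3 s window, each segment holds 150 samples; features are then computed per segment.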

Concretely, the main challenges in our proposed platform are as follows:

Collection of various physical activities data from subjects of different ages and genders using wearable sensors.

Representation of data acquired from wearable sensors in the form of feature vectors and physical activity classification using these features.

Deployment of a real-time framework to assess and recognize users’ physical activities.

To address these challenges, we make the following contributions:

We collect a novel data set, named “CUI-HPAR-Dataset,” of physical activities performed by participants of various ages and genders. These activities range from simple to complex; some of them, such as presentation and laundry folding, are performed outdoors, whereas others, such as cooking and reading, are performed indoors.

The collected data are then represented as feature vectors computed from various statistical measures of the input data, such as the mean and standard deviation. We then perform a detailed performance evaluation of supervised learning-based classifiers on these data to determine the one with the best recognition rate.

Finally, we propose a novel real-time, web-based framework that collects data from wearable sensors and sends it to the server via a smartphone. Based on the received data, the activity is recognized and the result is sent back to the user.

The rest of this article is organized as follows. Section “Background and related works” briefly introduces the background and related works on the WIoT, activity data collection using ambient sensors, on-body wearable sensors and smartphone sensors, feature extraction, and recognition algorithms. The proposed WIoT platform, data collection, feature extraction, and classifiers are explained in section “The proposed WIoT platform for HPAR.” Section “Simulation results and discussion” introduces the properties of the acquired data set and includes a performance comparison of various classifiers using different features with different window sizes. Finally, we conclude the proposed work in section “Conclusion.”

Background and related works

This section briefly introduces the background and related work on the platforms used to collect sensor data, the kinds of sensors, sensor placement, the features extracted from raw data, the recognized activities, and the classifiers used for recognition.

Platforms and sensors used for HPAR

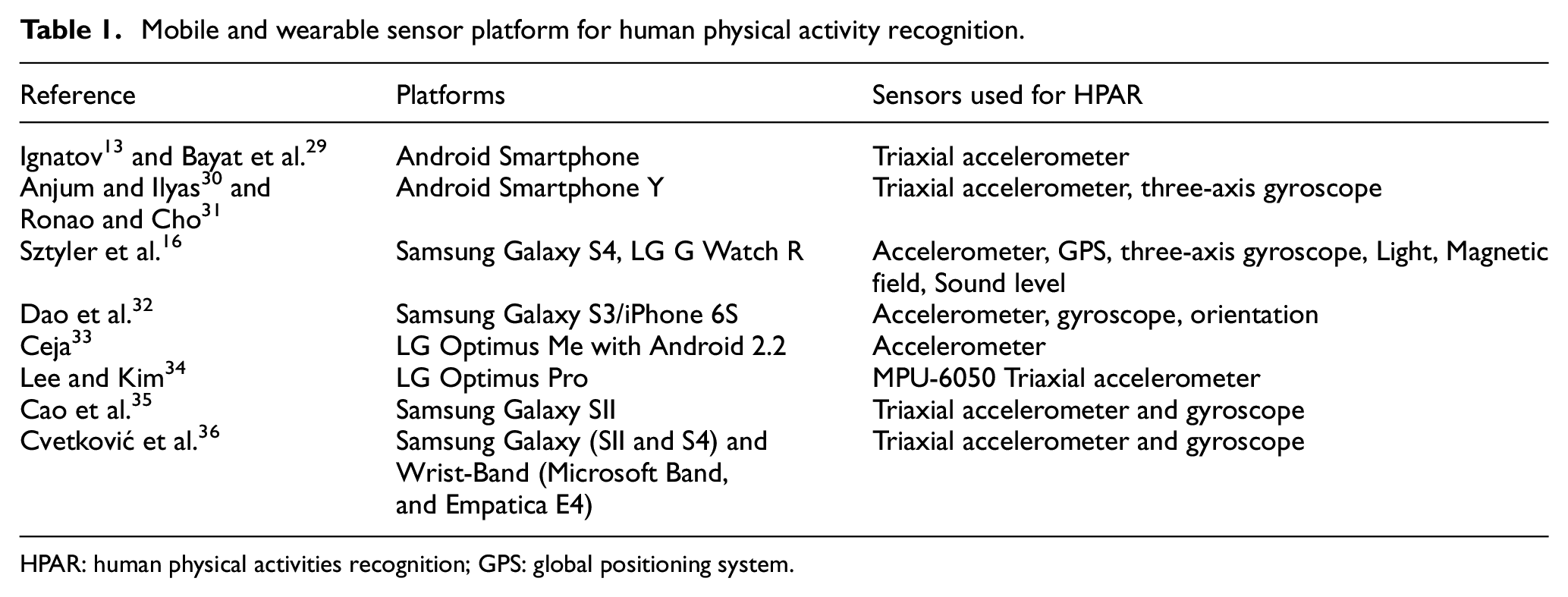

Human physical activities can be recognized using either ambient or body-worn sensors. The ambient sensors used for activity recognition include camera-based platforms, 9 passive infrared sensors,22,23 ultrasonic sensors, 23 radio frequency identification, 24 and WiFi- and vision-based sensors. 25 These sensors are installed in offices and homes. Ambient sensor-based HPAR has the drawback of being static: it cannot operate outside the area where the sensors are installed. Wearable sensors overcome this problem. Kumari et al. 26 proposed a WIoT architecture that interfaces accelerometer, gyroscope, and magnetometer sensors with a Particle Photon board. The Particle Photon board connects via WiFi to a Raspberry Pi, which collects the data and sends it to the ThingSpeak cloud for storage. A smartphone is used to start recording and to control storage (sampling). However, the authors did not provide any statistical analysis or describe the placement of the sensor module on the body. Moreover, since both the Raspberry Pi and the Particle Photon have WiFi connectivity, the architecture could be reduced to a single device that transfers data to the cloud. Gia et al. 27 proposed an energy-efficient WIoT sensor node for fall detection and analyzed its energy consumption with respect to parameters such as sampling rate, communication interface, transmission rate, and transmission protocol. They showed that Bluetooth (specifically, Bluetooth Low Energy) consumes about 70% less energy than WiFi. A remote patient monitoring system has also been proposed to track a person’s physiological data and detect specific disorders, which can aid early intervention practices. 28 Most people use smartphones, and their number is increasing steadily. Modern smartphones and smartwatches are equipped with sensors such as gyroscopes, accelerometers, magnetometers, and GPS. The Android™ smartphone is the most common platform used to collect activity data.
Table 1 provides detailed insight into mobile platforms and wearable sensors for HPAR.

Mobile and wearable sensor platform for human physical activity recognition.

HPAR: human physical activities recognition; GPS: global positioning system.

Sensor placement for HPAR

Sensor placement on the human body mainly involves the sensor’s position relative to the body and its orientation. In the HPAR literature, single or multiple sensors are attached to or worn on the body in a predefined position and orientation. The number of recognized activities and the recognition accuracy increase as more sensors are placed at various positions. 37 Research shows that the best sensor locations for recognizing an activity depend on the activity performed. Bao and Intille 38 stated that accelerometers placed on the hip, thigh, and ankle are good indicators for activities that involve some kind of ambulation, movement, or posture, whereas accelerometers placed on the wrist, elbow, and arm are good indicators for activities that mostly involve the upper body. Cleland et al. 39 placed six triaxial accelerometers at six different positions and found that the sensor on the hip achieved higher recognition accuracy than those at other positions. Figure 1 shows a graphical illustration of sensor placements for HPAR found in the literature.

Graphical illustration of sensor placement for HPAR from the literature review.

Features computation, extraction, and selection for HPAR

In HPAR, the recognition step is generally preceded by feature extraction from the acquired sensor data. Features are characteristics of the sensor signals in the time and frequency domains, and they are extracted from segments of these signals whose length ranges from 1 to 30 s. However, selecting significant features is an important and non-trivial step, as increasing the number of features does not necessarily improve classification accuracy. 40 Sztyler et al. 16 used features such as correlation, entropy, gravity, mean, absolute deviation, standard deviation, and the Fourier transform. According to them, these features help recover the orientation of the device by calculating angles. Li 41 proposed a two-step feature selection process: in the first step, features are extracted, and in the second step, unnecessary features are eliminated by a specialized filter. In this way, the 37 initially extracted features are reduced to just seven. Bayat et al. 29 employ a low-pass filter to estimate and remove the DC (gravity) component and then perform feature extraction. They extract various features such as the mean, MinMax, standard deviation (STD), average peak frequency, and root mean square (RMS). Table 2 shows the features used for activity recognition in the literature.
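A common way to obtain the DC (gravity) component of an accelerometer signal is a first-order low-pass filter whose output is subtracted from the raw signal, leaving the body-motion component. The sketch below assumes this particular filter and a hypothetical smoothing factor `alpha`; it is not necessarily the filter used by Bayat et al.

```python
import math


def separate_gravity(samples, alpha=0.9):
    """Split one accelerometer axis into gravity (DC) and body components.

    Assumes a first-order IIR low-pass filter; alpha in [0, 1) is a
    hypothetical smoothing factor (larger alpha = lower cut-off frequency).
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must be in [0, 1)")
    gravity, body = [], []
    g = samples[0] if samples else 0.0  # start the estimate at the first sample
    for x in samples:
        g = alpha * g + (1.0 - alpha) * x  # low-pass: slowly tracks the DC level
        gravity.append(g)
        body.append(x - g)  # residual (high-pass) part = body motion
    return gravity, body


def synthetic_z_axis(n=200, fs=50.0, freq=2.0):
    """Hypothetical test trace: 9.81 m/s^2 gravity plus a 2 Hz oscillation."""
    return [9.81 + 0.5 * math.sin(2 * math.pi * freq * t / fs) for t in range(n)]
```

For instance, filtering `synthetic_z_axis()` yields a gravity estimate whose average over the last second is close to 9.81 m/s², while the body component averages close to zero.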

Literature summary of physical activities, selected features, window size for feature extraction, and classifiers for HPAR.

HPAR: human physical activities recognition; RF: random forest; NB: Naïve Bayes; KNN:

Activities: A1: Slow Walking, A2: Jogging, A3: Going up stairs, A4: Going down stairs, A5: Sitting, A6: Standing, A7: Fast walking, A8: Dancing, A9: Lying, A10: Bending, A11: Getting Up, A12: Laying Down, A13: Putting a hand back, A14: Stretching a hand, A15: Jumping, A16: Answering a phone call, A17: Typing, A18: Reading, A19: Drinking, A20: Eating, A21: Talking, A22: Smoking, A23: Biking, A24: Cooking, A25: Washing dishes, A26: Brushing teeth, A27: Combing hair, A28: Scratching the chin, A29: Washing hands, A30: Taking medication.

Classifiers for HPAR

Classifiers are supervised learning algorithms used to perform physical activity recognition. The most widely known and used classifiers are the

The proposed WIoT platform for HPAR

In this section, we briefly describe the proposed platform, the acquisition of physical activity data from sensors, feature vector construction, and the classifiers used for physical activity recognition.

Hardware and software of the proposed HPAR framework

Figure 2(a) depicts the hardware of the proposed HPAR framework, which consists of three main components. The first is a sensing device with a Bluetooth link to a smartphone for data transmission. In this article, we used the MetaWear MetaMotion R 64 module, which includes several sensors: a triaxial accelerometer, a gyroscope, a magnetometer, and temperature and pressure sensors. The second component is a smartphone that collects data from the sensor and transmits it to a web server over the Internet. It also receives the physical activity recognition and analysis report from the web server over the Internet for the user’s information and monitoring. The last component is the web server itself, which receives the physical activity data from the smartphone, recognizes and analyzes the activity, and then sends feedback to the user’s smartphone via the Internet.

An overview of the (a) hardware and (b) software architecture for human physical activities recognition.

Figure 2(b) shows the software architecture of the proposed framework, which consists of two main parts. The first part consists of PHP scripts that transfer data between the smartphone and the web server. The second part is the recognition web server (a Bottle server), which is interfaced with a MySQL database through Python. It receives the sensor time-series data, extracts various features from them, and uses the trained classifiers and labels to recognize the physical activity; the result is then stored on the server for further use and analysis.
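The server-side flow described above (receive the data, extract features, classify, store the result, return feedback) can be outlined as follows. This is a hypothetical sketch: the JSON payload fields, the two-feature extractor, and the threshold "classifier" are placeholders, and Python’s built-in `sqlite3` stands in for the MySQL database. In the actual framework, a function like `handle_upload` would be invoked from a POST route of the Bottle server.

```python
import json
import math
import sqlite3


def extract_features(acc):
    """Hypothetical minimal feature set: mean and max acceleration magnitude."""
    mags = [math.sqrt(x * x + y * y + z * z) for x, y, z in acc]
    return {"mag_mean": sum(mags) / len(mags), "mag_max": max(mags)}


def classify(features):
    """Placeholder for the trained classifier (made-up threshold and labels)."""
    return "active" if features["mag_max"] > 15.0 else "still"


def open_db():
    """In-memory SQLite database standing in for the MySQL store."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE activity (user TEXT, t0 REAL, label TEXT)")
    return db


def handle_upload(payload, db):
    """Process one JSON upload from the smartphone and store the result.

    Assumed (hypothetical) payload: {"user": str, "t0": float,
    "acc": [[ax, ay, az], ...]} holding one window of accelerometer samples.
    """
    msg = json.loads(payload)
    acc = msg["acc"]
    if not acc or any(len(s) != 3 for s in acc):
        raise ValueError("acc must be a non-empty list of [x, y, z] samples")
    label = classify(extract_features(acc))
    db.execute(
        "INSERT INTO activity (user, t0, label) VALUES (?, ?, ?)",
        (msg["user"], msg["t0"], label),
    )
    db.commit()
    # Feedback returned to the smartphone.
    return json.dumps({"user": msg["user"], "label": label})
```

Keeping the handler free of web-framework code makes it easy to test in isolation and to attach to whichever route the server exposes.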

Data collection in the HPAR

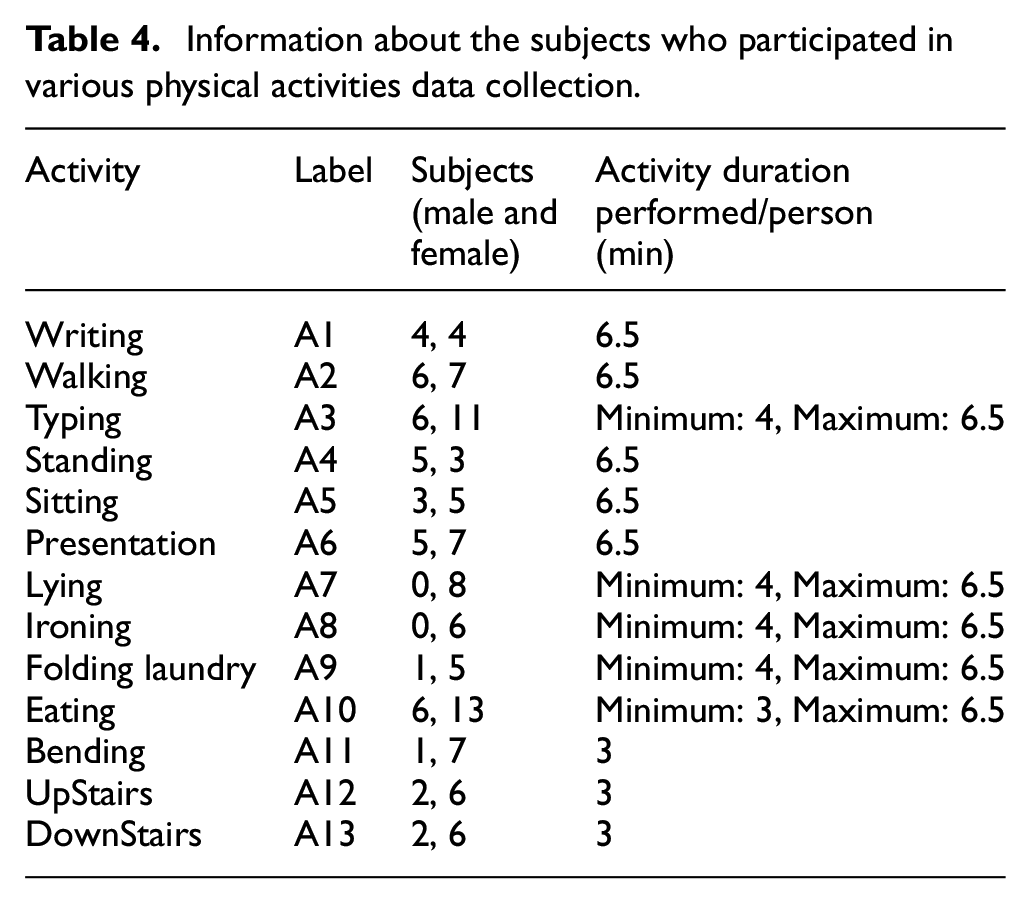

In this article, we collected data from sensor modules worn at various body locations, such as the wrists and arms. Table 3 shows the technical specifications of the individual sensors in a module, such as sampling frequency, quantization levels, and range. The data were collected in both indoor and outdoor environments from participants of both genders for 13 different activities. Since activity parameters vary with an individual’s fitness, age, height, weight, and physical movements, the participants for each activity were chosen so that they differ from one another in these parameters. For each activity, the number of participants varies from 6 to 19. The ages of participants are

Sensors configurations for data collection.

Information about the subjects who participated in various physical activities data collection.

Feature extraction and selection in the proposed HPAR system

The features extracted from sensor time-series data depend on the window size. To find the best window size, we divided the data into segments using empirically selected window sizes of 2, 3, and 4 s. We calculated both time-domain and frequency-domain features of the activity data. Details of the features are as follows:

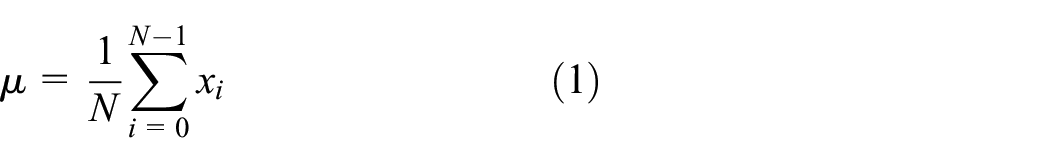

Mean value: the mean value is calculated for accelerometer (

where

Standard deviation (STD): it measures the spread of the sensor data around the mean value. It can be calculated as

we calculate the STD of accelerometer (

Entropy: entropy helps differentiate between activities that have similar power spectral densities but different movement patterns. 65 Equation (3) gives the entropy of the accelerometer signal along each axis

Cross correlation: we find the correlation between the accelerometer axes such as

where

RMS: the

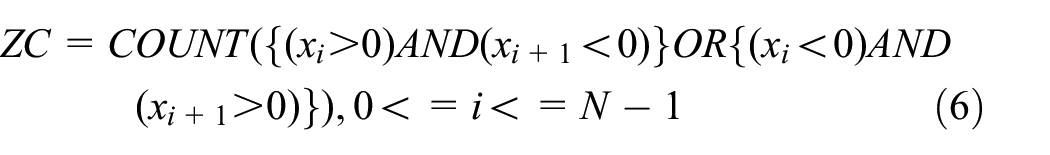

Zero-crossing (ZC): Chang et al. 66 defined ZC as the number of times the signal crosses zero, that is, changes its sign. In this article, we consider ZC for the accelerometer along all three axes. Mathematically, it is given by equation (6)

Maximum value: we calculate the maximum value of accelerometer (

Frequency-domain features: in this article, we consider six frequency-domain features based on the fast Fourier transform (FFT) of the acceleration data. Since the FFT is double-sided, we first shift it so that the zero-frequency component lies at the center. The six features are the FFT magnitude:

Equation (10) finds the
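The time-domain features listed above can be sketched for one window of triaxial accelerometer data as follows. Because the original equations are not reproduced here, standard textbook definitions are assumed (population STD, Pearson correlation as the cross-correlation between axes, sign changes between consecutive samples for ZC); the authors’ exact formulas may differ.

```python
import math


def time_domain_features(x, y, z):
    """Per-window time-domain features for one triaxial sensor (sketch).

    Assumes standard definitions; the article's equations may differ.
    """
    axes = {"x": x, "y": y, "z": z}
    n = len(x)
    if n == 0 or len(y) != n or len(z) != n:
        raise ValueError("axes must be non-empty and of equal length")
    feats, means = {}, {}
    for name, s in axes.items():
        mu = sum(s) / n
        means[name] = mu
        feats[f"mean_{name}"] = mu
        feats[f"std_{name}"] = math.sqrt(sum((v - mu) ** 2 for v in s) / n)
        feats[f"rms_{name}"] = math.sqrt(sum(v * v for v in s) / n)
        feats[f"max_{name}"] = max(s)
        # Zero crossings: sign changes between consecutive samples
        # (samples that are exactly zero are not counted as crossings).
        feats[f"zc_{name}"] = sum(1 for a, b in zip(s, s[1:]) if a * b < 0)
    # Pearson correlation between each pair of axes (0.0 if an axis is constant).
    for a, b in (("x", "y"), ("x", "z"), ("y", "z")):
        cov = sum((u - means[a]) * (v - means[b])
                  for u, v in zip(axes[a], axes[b])) / n
        denom = feats[f"std_{a}"] * feats[f"std_{b}"]
        feats[f"corr_{a}{b}"] = cov / denom if denom > 0 else 0.0
    return feats
```

Each window thus contributes 18 per-axis values plus three pairwise correlations; in practice these would be computed for every window produced by the segmentation step.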

Process of features vector construction. The time-series data are collected from various sensors. The statistical measurements of each sensor output are then calculated and summarized to make the final features vector.
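The frequency-domain step can be sketched in the same way. The DFT is computed naively to keep the example dependency-free, and `fftshift` mirrors the reordering performed by `numpy.fft.fftshift`. The specific features returned (peak frequency, peak and mean magnitude, spectral entropy) are an illustrative assumption, not a reproduction of the article’s six FFT features.

```python
import cmath
import math


def dft_magnitudes(s):
    """|X_k| for k = 0..n-1 via a naive O(n^2) DFT."""
    n = len(s)
    return [abs(sum(s[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
            for k in range(n)]


def fftshift(v):
    """Move the zero-frequency bin to the center, like numpy.fft.fftshift."""
    h = len(v) // 2
    return v[-h:] + v[:-h] if h else list(v)


def frequency_domain_features(s, fs):
    """Illustrative spectral features for one axis sampled at fs Hz."""
    n = len(s)
    if n < 2:
        raise ValueError("need at least two samples")
    mu = sum(s) / n
    mags = dft_magnitudes([v - mu for v in s])  # remove DC before the DFT
    one_sided = mags[1:n // 2 + 1]              # positive-frequency bins 1..n//2
    k_peak = 1 + max(range(len(one_sided)), key=one_sided.__getitem__)
    power = [m * m for m in one_sided]
    total = sum(power)
    probs = [p / total for p in power] if total > 0 else []
    # Spectral entropy (bits) of the normalized one-sided power spectrum.
    entropy = -sum(p * math.log2(p) for p in probs if p > 0)
    return {
        "peak_freq_hz": k_peak * fs / n,
        "peak_mag": one_sided[k_peak - 1],
        "mean_mag": sum(one_sided) / len(one_sided),
        "spectral_entropy_bits": entropy,
    }
```

A pure sinusoid concentrates its power in one bin and therefore has a spectral entropy near zero, whereas irregular movement spreads power across bins and yields a higher value.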

Classification algorithms for the proposed HPAR

The task of HPAR is to assign a label from A1 to A13 to a given recorded activity. To perform such labeling, we use supervised machine learning algorithms, commonly known as classifiers. The first stage is training, in which the activities, represented as feature vectors along with their labels, are used to train the parameters of a given classifier. Afterward, the trained model is evaluated by using it to predict the label of a test activity that is disjoint from the training set.
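As an example of this train-then-predict procedure, the sketch below implements a basic k-nearest-neighbor (KNN) classifier, one of the classifier families evaluated in this article. It is a minimal illustration, not WEKA’s implementation: features are not normalized, and `k = 3` is an arbitrary choice.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, x, k=3):
    """Label x by majority vote among its k nearest training vectors.

    Uses Euclidean distance; ties are broken in favor of the tied label
    whose member is closest to x.
    """
    if not train_X or len(train_X) != len(train_y):
        raise ValueError("training data and labels must be non-empty and aligned")
    ranked = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))
    nearest = [train_y[i] for i in ranked[:k]]  # labels ordered by distance
    counts = Counter(nearest)
    best = max(counts.values())
    return next(lbl for lbl in nearest if counts[lbl] == best)
```

"Training" a KNN model amounts to storing the labeled feature vectors; all of the work happens at prediction time, which is why feature scaling matters so much for this family of classifiers.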

A multitude of classification algorithms have been studied in the HPAR literature. However, no universal classifier exists that outperforms all others for activity recognition.35

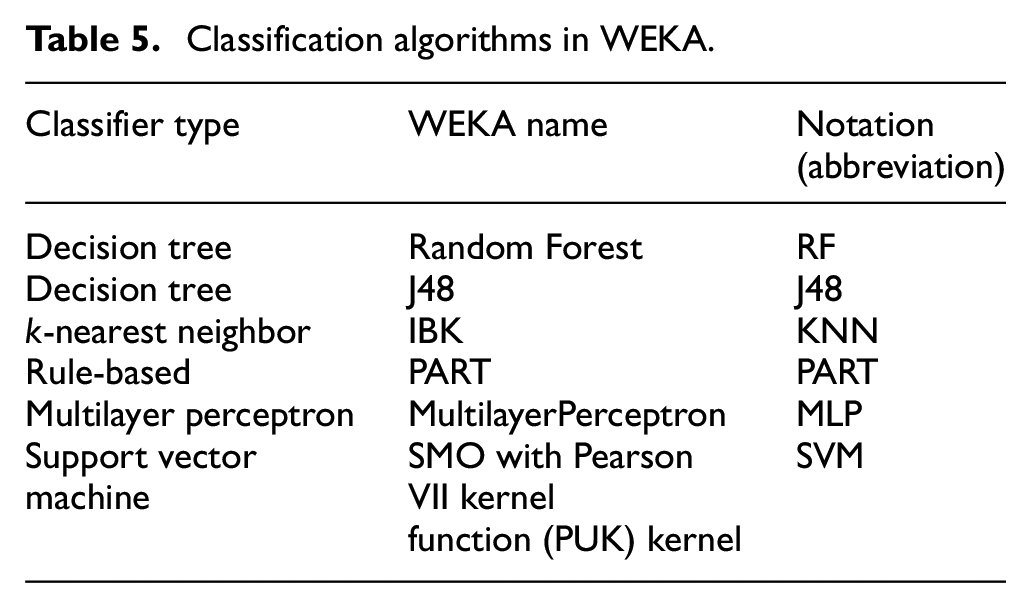

The most common classifiers used are

Classification algorithms in WEKA.

Simulation results and discussion

In this section, we investigate the effect of window size on the performance of classifiers for HPAR. We considered the window size

We used 10-fold cross-validation to evaluate the classifiers’ performance. In 10-fold cross-validation, the feature data set is divided into 10 equally sized bins. In each iteration, one bin (10% of the data) is used for testing and the remaining nine bins (90%) are used for training. The evaluation is repeated 10 times, each time with a different bin used for testing, so that the whole data set is eventually used for both training and testing. Finally, the average over the 10 iterations is reported as the performance metric.
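The 10-fold procedure above can be expressed compactly. The sketch assumes plain (unstratified) shuffled folds, a fixed random seed, and a hypothetical `fit_predict` hook for the classifier under test; WEKA’s own cross-validation additionally stratifies the folds by class.

```python
import random


def k_fold_indices(n, k=10, seed=1):
    """Shuffle indices 0..n-1 and deal them into k folds of (nearly) equal size."""
    if not 2 <= k <= n:
        raise ValueError("need 2 <= k <= n")
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def cross_validate(X, y, fit_predict, k=10, seed=1):
    """Mean accuracy over k folds; each fold serves as the test set exactly once.

    fit_predict(train_X, train_y, test_X) -> list of predicted labels
    is a hypothetical hook wrapping any classifier.
    """
    accs = []
    for test in k_fold_indices(len(X), k, seed):
        held_out = set(test)
        train = [i for i in range(len(X)) if i not in held_out]
        preds = fit_predict([X[i] for i in train], [y[i] for i in train],
                            [X[i] for i in test])
        correct = sum(p == y[i] for p, i in zip(preds, test))
        accs.append(correct / len(test))
    return sum(accs) / k
```

Because every sample is held out exactly once, the averaged accuracy uses the whole data set for testing without ever scoring a sample on a model that saw it during training.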

Effect of window size on the performance of classifiers for HPAR

Figure 4 compares the classification accuracy of various classifiers for different window sizes. It shows that a window size of 3 s used for feature extraction yields better performance. All the six classifiers have higher accuracy for

Classifier accuracy comparison for various window sizes.

Performance comparison of

Performance comparison of individual activity recognition in the proposed HPAR

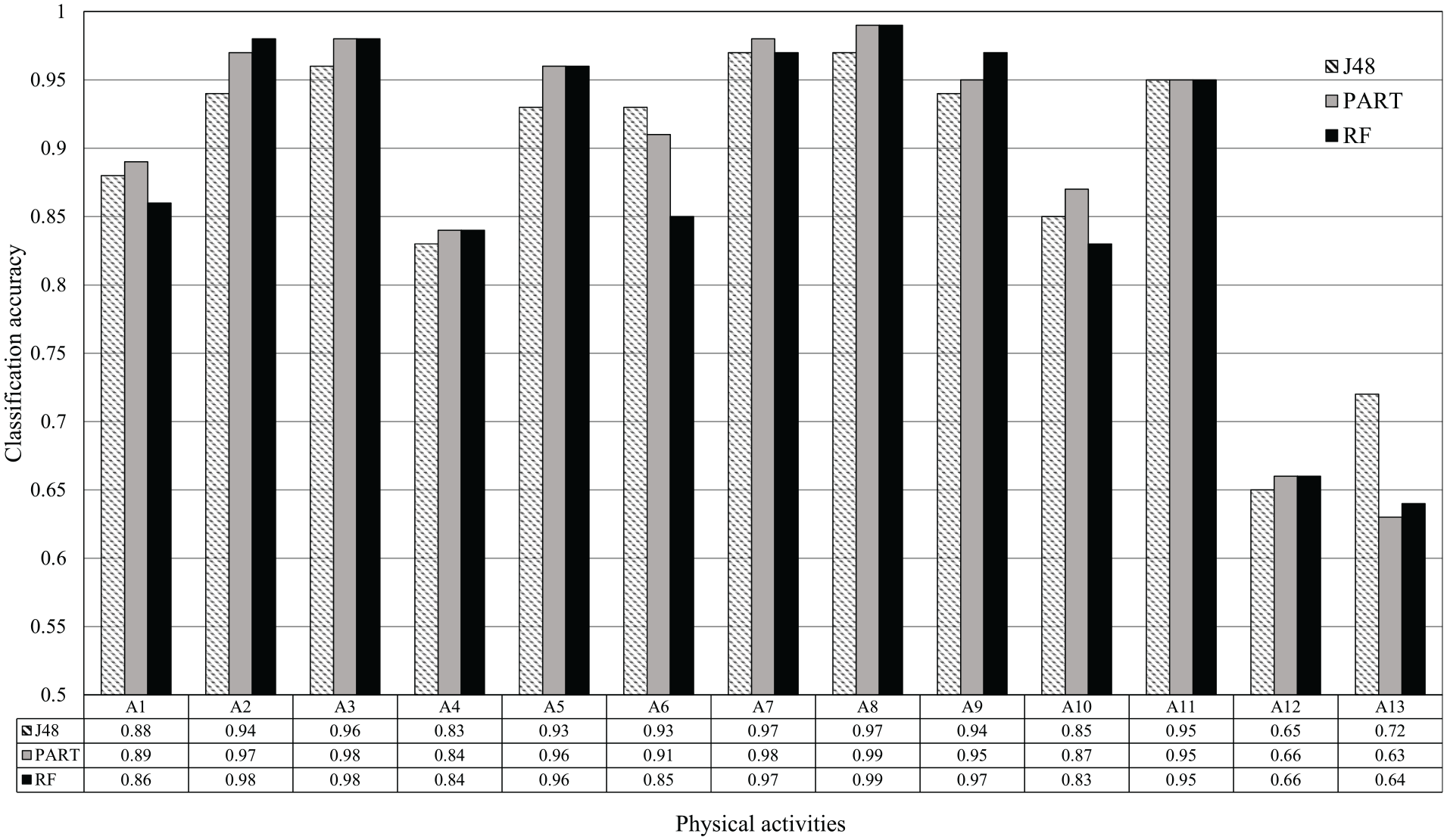

Figure 6 shows the performance comparison of individual activity recognition using

Physical activity recognition rates of

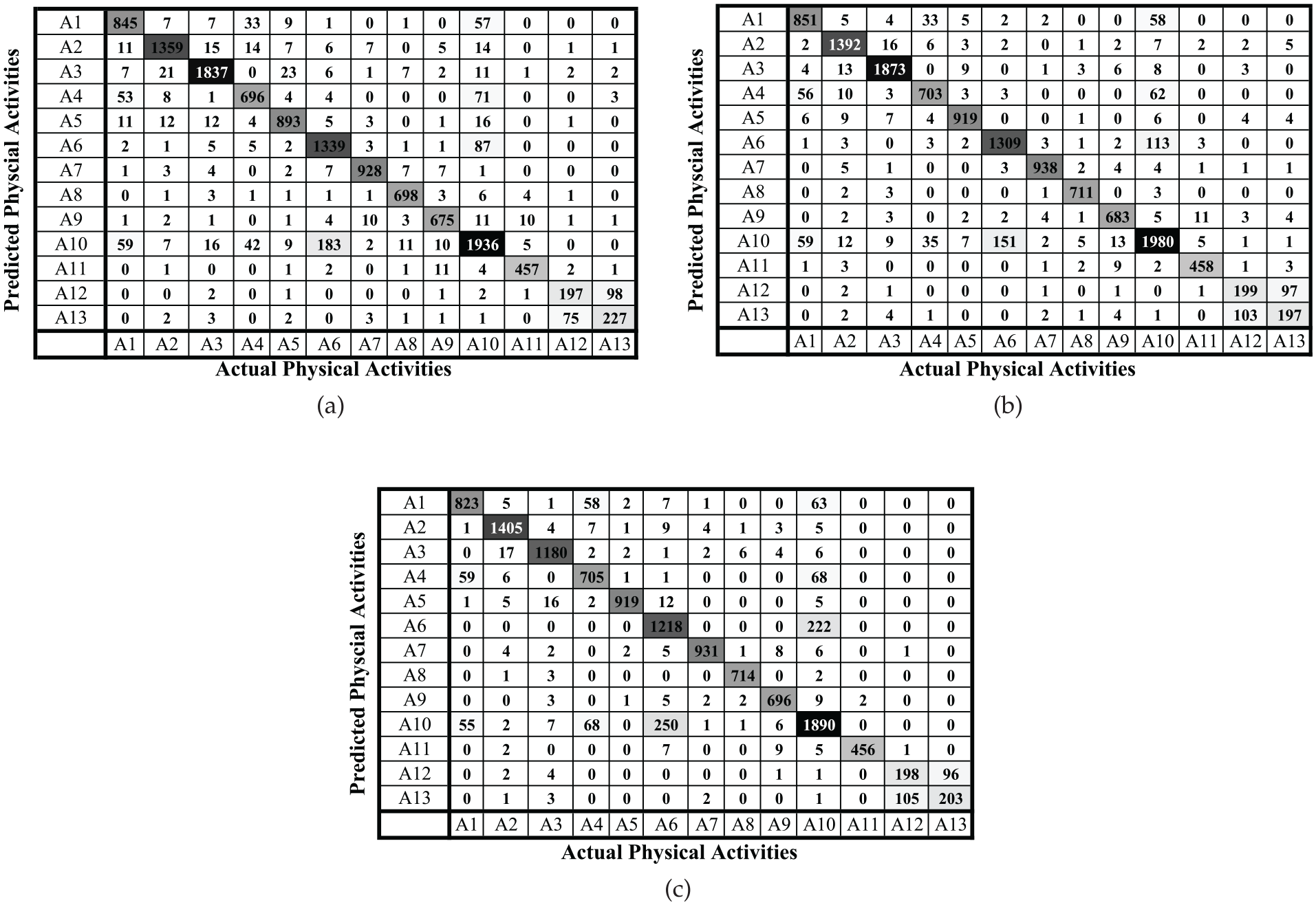

Figure 7(a)–(c) shows the confusion matrices of

Confusion matrices of

Table 6 shows the

True positive rates (TPR) and precision values achieved by

Bold values indicate activities that are recognized with higher accuracy.
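The per-activity metrics reported in the confusion matrices and in Table 6 follow standard definitions, which the sketch below computes. Rows are actual classes and columns are predicted classes, the same orientation as WEKA’s confusion matrix output; the function names are illustrative.

```python
def confusion_matrix(y_true, y_pred, labels):
    """Square count matrix: rows = actual class, columns = predicted class."""
    pos = {lbl: i for i, lbl in enumerate(labels)}
    m = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        m[pos[t]][pos[p]] += 1
    return m


def per_class_tpr_precision(m, labels):
    """Return {label: (TPR, precision)} from a confusion matrix.

    TPR (recall) = TP / all actual instances of the class (row sum);
    precision    = TP / all instances predicted as the class (column sum).
    """
    out = {}
    for i, lbl in enumerate(labels):
        tp = m[i][i]
        actual = sum(m[i])
        predicted = sum(row[i] for row in m)
        out[lbl] = (tp / actual if actual else 0.0,
                    tp / predicted if predicted else 0.0)
    return out
```

A high TPR with low precision for an activity means other activities are frequently misclassified as it, which is exactly the kind of confusion visible off the diagonal of the matrices.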

Conclusion

This article proposed a web-based framework for human physical activity recognition that integrates wearable sensors, smartphones, and a recognition server for processing. The smartphone collects data from the wearable sensors over Bluetooth and transfers them to the server over HTTP. The transferred data are then represented as feature vectors and fed to an already trained classifier to recognize the activity. The recognition result is then sent back to the smartphone via the Internet. We collected data for 13 physical activities using several sensors, such as accelerometer, gyroscope, magnetometer, body temperature, and pressure sensors. We also evaluated the window size for feature extraction and found that, among 2-, 3-, and 4-s windows, a 3-s window performs best. We evaluated six classifiers for physical activity recognition: random forest (RF), decision tree, a rule-based classifier, KNN, MLP, and SVM. On the given data set, RF, the decision tree, and the rule-based classifier perform better than SVM, KNN, and MLP, achieving an overall classification accuracy of more than 94%. In the future, we plan to use more sensors, such as electrocardiogram (ECG) and breathing sensors, for healthcare applications and to evaluate more state-of-the-art machine learning algorithms, such as deep learning.

Footnotes

Handling Editor: Bingang Xu

Author contributions

All authors contributed to the paper. F.U., A.I., and H.A. contributed to conceptualization, software, formal analysis, and writing. A.U.R., K.S., A.B., and S.A. contributed to data curation, software, and methodology. S.Y. and K.S.K. contributed to validation, funding acquisition, and review and editing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a National Research Foundation of Korea-Grant funded by the Korean Government (Ministry of Science and ICT)-NRF-2020R1A2B5B02002478.