Abstract

We propose a new type of authentication system based on behavioral characteristics for smartphone users. With the sensor and touch screen data in the smartphone, the combination of the motion state detection mode and the authentication mode can effectively distinguish between legitimate smartphone users and other users. The system deploys software on the smartphone to collect data from sensors and touch screens, and upload the data to the cloud. We apply random forest algorithm on the data to extract features and achieve motion state detection. Multilayer perceptron algorithm is used for user authentication in corresponding motion state. The system effectively implements an implicit and continuous authentication mode, which can achieve user identity authentication without users’ being aware of it. The system proposed in this article can achieve 95.96% accuracy with false rejection rate of 2.55% and false acceptance rate of 6.94%.

Introduction

Smartphone is a very attractive target for attackers to get personal and valuable information. User authentication is critical to preventing privacy leak caused by vulnerabilities in confidentiality and integrity.

Currently, most software login mechanisms use explicit authentication, such as passwords and fingerprints. Biometric authentication methods like iris scanning 1 and face recognition2,3 can also be used for explicit authentication. However, for smart phone users, it is not convenient to re-authenticate if they try to access very sensitive information after passing the explicit authentication mechanism. 4 Therefore, after the user passes the initial authentication, the system will not authenticate the user again, or even if it is authenticated again, the explicit login method will be used. This mechanism leads to a significant risk that other users can control the user’s smartphone after the initial login of the legitimate user. The explicit authentication mechanism is also vulnerable to attackers. Simple username, password, or biometrics can be easily stolen or learned. This explicit authentication method allows the attackers to access proprietary or sensitive data and services, no matter whether they are stored in the cloud or on the mobile device itself.

In recent years, scholars have also done a lot of research on mobile terminal user identity authentication. Traditional authentication methods are generally based on private information owned by the user, such as passwords. Physiological biometric based methods utilize different personal biometric features such as fingerprints or iris images. Behavior-based authentication takes advantage of the different behaviors of users. There are many different physiological biometrics for identity verification, such as face recognition, 3 fingerprint, 5 and iris recognition. 1 However, physiology-based authentication requires users to participate in the process. Therefore, they are more useful for initial login verification. For implicitly continuous authentication and re-authentication, they are just as meaningless as passwords. Behavior-based authentication uses the user’s behavioral model to authenticate the user’s identity. It assumes that people have different behaviors for specific behaviors (i.e. gesture mode, 6 gait, 7 and global positioning system (GPS) mode), 8 and to most people they are stable features.

We need a system that can detect the identity in the user’s unknown state, and the system should not reveal the sensitive information of the user. We propose a behavior-based mobile terminal identity authentication system, using deep learning methods and human behavior features to identify and authenticate users when they use smartphones. This is an authentication system that collects their data through touch screen and multiple sensors built in users’ smartphones and analyze them. The system can continuously monitor the users’ sensor data and touch screen data when the network is connected, and upload the data file regularly to achieve continuous authentication. This continuous authentication process only requires the software running in the background, and other operations can be implemented without any manual operation. Specifically, we first collect sensor data and touch screen data in the smartphone, upload the data to the cloud, and do brief processing to extract the context characteristics of the sensor data to detect the current user’s motion state. Then, the authentication model is used to detect the authentication features of time. Finally, after the detection result is obtained, it is sent to the feedback module of the mobile phone app, and the corresponding action is performed for the detected result. Through these steps, our system achieves more efficient and more subtle and fine-grained authentication, than a common identity authentication system. We systematically evaluated all the design parameters of each design in this sensor-based and touch screen-based implicit authentication system. Based on our design choices, our evaluation demonstrates the benefits of improving accuracy through motion state detection and time features. At the same time, we also confirmed the accuracy of the entire detection mode of the system through experiments. We first collected the sensor data and touch screen data in the smartphone, uploaded the data to the cloud, and processed the motion state features of the sensor data to detect the current user’s motion state (using the random forest algorithm). Next, the system judges the state of the user at that moment based on the detected motion state, and uses the authentication model (using the multilayer perceptron (MLP) algorithm) to detect the authentication features of the user’s time domain. Combined with the motion state detection, the system can finally achieve 95.96% verification accuracy with false rejection rate (FRR) of 2.55% and false acceptance rate (FAR) of 6.94%. Moreover, the system is lightweight and only requires data collection and a final feedback module in the user’s mobile terminal system. Both the arithmetic part and the classification part are implemented in the cloud.

The contributions of this article are as follows:

The method of identity authentication using behavioral features is summarized, and the commonly used behavioral feature authentication methods are comprehensively analyzed. The pressure and sliding speed of the touch screen and the time under the three axes of the sensor (accelerometer, gyroscope) are proposed. Twenty-six behavioral features are studied, including the time features.

In view of the fact that the current mobile terminal user password is easily stolen, the user behavior is used to perform enhanced authentication. Through the collection of behavioral features, the motion state and users are distinguished by authentication algorithm. The algorithm has a high accuracy of identity authentication.

The algorithm in this article can be applied to implicit continuous user identity authentication, and the detection system is realized. By deploying relevant systems on the experimenter’s mobile phone, a more effective real-time detection is achieved.

Related work

Some people have conducted studies based on user’s identity authentication on mobile devices. As mentioned in the previous section, commonly used methods are biometric and behavioral authentication. Given that biometrics are easily stolen and replicated, we focus on behavioral authentication. Behavior-based authentication can be further divided into touch screen-based authentication mode and sensor-based authentication mode. The researches of these two modes are introduced below.

Touch screen-based authentication

Currently, the touch screen-based authentication includes the following categories:

Authenticate by doing specific actions on the touch screen (i.e. up, down, left, right, swiping, clicking, etc.) and their associated combinations as the user’s behavioral mode features.

Combine keystrokes, handwriting, and pinching gestures to authenticate users. The combined authentication method is realized by collecting data when the user inputs text in the background and the touch screen behavior when using the software.

Authenticate the users primarily based on the user input by using salient features such as speed, device acceleration, and stroke time.

Use gesture features with biometric and behavioral characteristics. It is a bit more conducive to the formation of individual behavioral features. Because it is combined with biometrics, it is relatively less likely to be imitated.

Touch screen-based authentication does achieve high accuracy. Mario Frank et al. 9 analyzed the behavioral features of users by collecting touch screen data. They extracted 30 relevant features of the touch screen data and used support vector machine (SVM) and k-nearest neighbor (KNN) to train the classifier. Their experimental results can reach an error rate of less than 4%. Meng et al. 10 proposed a method of collecting touch behavioral features based on web browsing on smartphones. They have achieved an average error rate of about 2.4% by using a combined classifier of particle swarm optimization–radial basis function network (PSO-RBFN). Feng et al. 11 proposed TIPS (touch-based identity protection service) that authenticates users in the background by continuously analyzing touch screen gestures in the context of a running application. They have achieved over 90% accuracy in the real-life naturalistic conditions.

However, research by Serwadda et al. 12 shows that gesture styles can be observed and automatically replicated. Moreover, the touch screen information contains sensitive information (such as information on the keyboard), for example, an attacker can use the touch screen information to find the user’s password. 13 Our system effectively circumvents user privacy and improves the security of detection. Considering about the security and user’s privacy, we do not consider the use of keystrokes, gestures, and certain touch screen features that may reveal sensitive information. Our system encrypts the collected data and further enhances security.

Sensor-based authentication

Several types of sensors that are commonly used today include accelerometers, magnetometers, gyroscopes, GPS, and so on. However, GPS information is sensitive, so its use requires explicit user permission. C Shen et al. 14 used the data collected by the sensor, combined with the user’s password input action to analyze, and used a classification learning method to authenticate the user. The results showed an error rejection rate of 6.85% and an error acceptance rate of 5.01% can be achieved. Kayacık et al. 15 proposed a lightweight user-based behavior model for user authentication mainly based on hard sensors and soft sensors. However, they do not show their authentication performance. SenSec 16 collected data from accelerometers, gyroscopes, and magnetometers to build gesture models as the user uses the device, which can achieve 75% accuracy in identifying. Nickel et al. 7 proposed an accelerometer-based behavior recognition method for identity authentication, using the k-NN algorithm. They can achieve 3.97% FAR and 22.22% FRR. Li and Bours 17 developed an approach to authenticate the user by using WiFi and accelerometer data, which achieved EER (equal error rate) of 9.19%. Shen et al. 18 proposed a system for authentication using sensors. They used a Markov decision procedure and one-class classification method to achieve a FRR of 5.03% and a FAR of 3.98%. Acar et al. 19 introduced a method called wearable assisted continuous authentication (WACA). WACA relies on sensor-based keystroke dynamics in which authentication data is acquired by a built-in sensor of a wearable device as the user types. They have achieved over 99% accuracy. S Mare et al. 20 proposed zero effort bilateral re-authentication (ZEBRA). In ZEBRA, users wear bracelet (with built-in accelerometers, gyroscopes, and radios) on their dominant wrists. It transmits the data monitored on sensor on to the bracelet and then to the computer terminal and uses the computer terminal for data analysis. For different thresholds of availability switching security, ZEBRA correctly validates 90% of users and identifies all opponents within 50 s.

Combined authentication

Compared with the touch screen-based authentication, it can be seen that the accuracy of the sensor authentication is high, but there is still a certain gap between the accuracy of the touch screen authentication and sensor authentication. Therefore, there are some studies that combine both the touch screens and sensors for identity authentication. Z Sitova et al. 21 proposed that the grip strength of different people is related to their biological characteristics and behavioral habits. They collected the user’s touch screen and sensors (a total of 96 features) for analysis in different sports modes, achieving EER of 7.16% (walking) and 10.05% (sitting). A Buriro et al. 22 proposed a model called DIALERAUTH—a mechanism which leverages the way a smartphone user taps/enters any text-independent 10-digit number (replicating the dialing process) and the hand’s micro-movements made while doing so. They used one layer-MLP and achieved FAR of about 12%.

The combination of identity authentication and certification, due to the combination of two sources of data, the number of features extracted is correspondingly increased, and the computational complexity is correspondingly increased. At the same time, in order to avoid collecting sensitive data during free use, many studies have adopted specific gestures/inputs for the data collection. This will not complete the real-time update of the model. Our system reduces the number of features by selecting features that do not directly reveal user sensitive data. On the other hand, it also helps the model to be updated when users are free to use. Due to the safety considerations, the features available on the touch screen have been reduced a lot. Our system chooses a combination of touch screen-based authentication and sensor-based authentication. This can avoid the security risks caused by using only the touch screen data, and can improve the accuracy of detection. In addition, wearable devices are not widely used. Most of the bracelets cannot be run away from the mobile device, and data transmission is also difficult. Considering universality, we put the main body of research on the use of mobile phone.

Behavior-based authentication system construction

The behavior-based user identity authentication system proposed in this article is shown in Figure 1. It consists of the client of the smartphone terminal, the authentication part, and the user’s personal database in the cloud. The client part includes a data collection module and a feedback module. It mainly performs data collection and provides result feedback. The cloud authentication part includes a database, a motion state detection module, and an authentication module, which mainly completes classification and authentication of data collected by the smart phone terminal, and generates detection result.

System framework.

Smartphone terminal

The framework of the smartphone is shown in Figure 2. The smartphone terminal acquires the required data by monitoring the user’s sensor and touch screen. The data collection module is composed of sensor and touch screen, is responsible for monitoring the changes of the sensors and the touch screen, and collects the data of the specified sensors and touch screen after the change is discovered. The collected data are saved in data files in a specified format, and these files are transferred to cloud server.

Smartphone terminal framework overview.

We train and detect the data in the cloud server. The feedback module is responsible for receiving the detected result returned by the cloud, and generating a feedback action on the smartphone side in real-time (such as pop-up prompt window, lock screen, re-authentic authentication, etc.).

Cloud server

The cloud server first collects the simple processed raw data from the mobile terminal. Then, it extracts time features from the original data to form two feature vectors: motion state feature vector and authentication feature vector. Refer to section “User’s behavior features” for specific feature selection. Next, the two feature vectors are respectively input into the motion state detecting module and the authentication module. The motion state detection module determines which motion state the user is in (i.e. the current motion state of the user), and transmits the detected motion state to the authentication module. We use random forest algorithm to detect the motion state. Random forest is often used to predict and classify because its computational complexity is relatively low and its training is faster. 23

The authentication module consists of a classifier and multiple authentication models for different motion states of different users. 12 The classification algorithm we choose is the MLP algorithm. 24 Specifically, to know the reasons why we choose the MLP algorithm refer to section “Classifier parameter determination.” When the motion state detecting module detects different motion state modes, the authentication module may select different authentication models, transfer the stored parameters to the classifier, and perform the detection by the classifier.

When the classifier of the authentication module finally generates the authentication result, it sends the result to the feedback module of the mobile terminal. If the authentication result indicates that the user is legitimate, the feedback module will allow the user to access to key data or cloud services in the application server using the cloud application. Otherwise, the feedback module can lock the smartphone or deny the user’s access to critical data or perform further checks. When the attacker’s operation is detected, we use other auxiliary authentication factors (e.g. touch screen drawing or password) for explicit authentication.

In this article, we use a virtual cloud server (8 core, 32 GB memory, and 10 Mbps bandwidth). It is completely sufficient for our training and experimentation. If the number of users increases, the cloud server can be expanded, which is an advantage of using the cloud server.

MLP

In the specific authentication model, we use the MLP model. The MLP network consists of a sensory (S) layer, an association (A) layer, and a response (R) layer. The S, A, and R layers are composed of similar neurons. 25 The S layer is the input layer of the network structure, used for the input of the feature vector. The A layer is the hidden layer in the network and the R layer is the output layer of the network. The S and A layers form a prediction matrix of the processing object through a connection relationship, and the joint structure between the A and R layers is paired to process the decision matrix of the object, and the parameters are adjusted by training to make the network orderly and have decision stable structure of capacity. 26 This article uses a hidden layer MLP, the structure of which is shown in Figure 3.

Schematic diagram showing how MLP works.

The MLP 27 based on back propagation learning is a typical feedforward network. The processing direction of information is carried out layer by layer from input layer to hidden layer to input layer. We need to determine three parameters for the MLP: the number of hidden layer nodes, the connection weight of the hidden layer to the output layer, and the threshold of the output layer. We use the backpropagation (BP) algorithm for backpropagation to achieve the purpose of adjusting the connection weight of the hidden layer to the output layer and the threshold of the output layer. The number of nodes in the hidden layer is determined by specific experiments. The specific experimental part is described in section “Determination of the number of hidden layers.” We improve the efficiency of training by constantly adjusting these parameters. Further, MLP enables parallel computing, fast processing, and powerful learning. It is also easy to implement nonlinear mapping process and has strong fault tolerance. Local neurons will not have a huge impact on global activities after damage, and will have patterns on input signals. The functions of transformation and feature extraction are less sensitive to the system with uncertainties and incomplete input patterns. The MLP can fully integrate and train the samples in the detection system to improve the detection accuracy.

Our MLP is a three-layered structure. The activation function used is the rectified linear unit (ReLU) function. The number of hidden layer nodes of MLP is 70; the number of input layer nodes is the dimension of the feature vector, which is 26; and the number of output layer nodes is the classification result, which is 2.

Random forest algorithm

Random forest algorithm is usually used for data mining. Random forest creates a model that predicts the value of a target variable based on several input variables. First, we try to use in four scenarios:

The user uses the smartphone without moving, such as standing or sitting.

The user uses the smartphone while moving: there is no limit to the way the user moves.

When the user uses the smartphone, the smartphone is fixed (e.g. on the table).

The user uses the smartphone on a mobile vehicle such as a train.

These four scenarios are not easy to distinguish: since scenarios 1, 3, and 4 are relatively fixed, the scenarios 3 and 4 are moving at a steady speed. They are easily misclassified to the scenarios 1. Therefore, we merge contexts 1, 3, and 4 into a static context and 2 as the mobile context. We use the data of the stationary state and the data of the moving state to train the model. The context detection accuracy exceeds 99%. The context detection time is also very short that is less than 3 ms. The experimental results are shown in section “Motion state detection model.”

Detecting method

Registration phase

At the start of the system, users must do the model training during the registration phase. When the user enters the registration phase, the system begins to monitor the sensor and touch screen, and collects the specified sensor data and touch screen data. We should confirm that the user is in a relatively static state to collect data. The system saves the collected data in the protected storage area of the mobile phone (the storage area belongs to the internal storage of the mobile phone, and the data stored in the internal storage area is only accessed by the system), and the data is collected every 20 min. We collect data in a static state four times for a total of 80 min, transfer the collected data to the cloud server, and store it in the database corresponding to the user. In the case of 80 min collection, we use the same method to collect data from the user in motion. Considering that the user is unlikely to be in motion for 20 min, this collection time will be controlled by the user himself. After the final 80 min collection, the data is transferred to the user’s database in the cloud. After this data collection, we can make the following assumptions:

The user is accustomed to his own device, and his device-specific “sensor behavior” no longer changes.

The system has observed enough information to stably estimate the user’s pattern for real potential behavior.

The cloud server then begins training the corresponding model of the user. After the training is over, the system switches to the continuous authentication phase.

Continuous authentication phase

When the cloud system completes the training of the user-corresponding model, the smartphone can start the authentication mode. A comprehensive authentication process requires a network connection to proceed smoothly. When the user does not have a network connection, we store the data for post-certification. When the network is connected, we upload data to achieve continuous authentication.

First, the cloud authentication system needs to determine the state of motion of the user and use the motion state detection model for detection. The authentication classifier is then responsible for determining whether the data are from a legitimate user. In this way, the authentication classifier can also be automatically updated when the behavior mode of the legitimate user changes over time.

On the one hand, we can achieve continuous authentication by uploading data in real-time during networking, and on the other hand, because the data of our training model is the data that is freely used by the user, it can also pass authentication in an implicit and continuous manner. Threshold decision mechanism allows the models to be updated in real time.

User’s behavior features

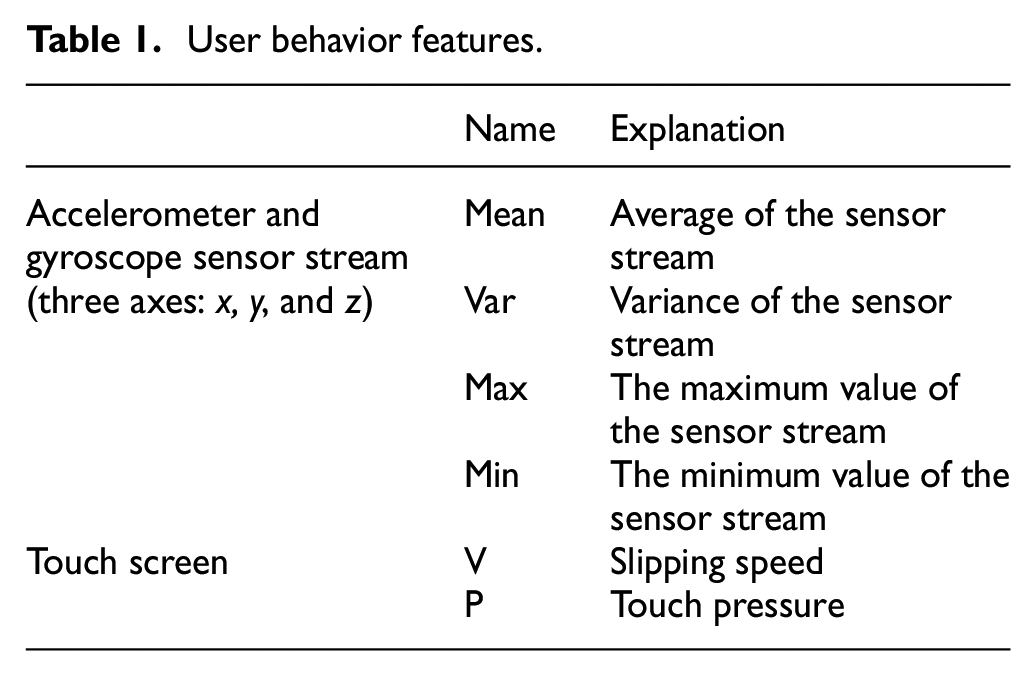

Touch screen features

The data of the touch screen of the mobile phone include many types, such as point coordinates touched by the user, touch force, touch duration, sliding speed, sliding direction, and so on. So, which touch screen data type do we need to select? For security purpose, we first rule out the user’s touch point coordinates. Because the user’s touch screen behavior is detected, the user’s input from the keyboard is also included. When the android phone triggers a keystroke, the position of each letter on the keyboard is fixed. Therefore, after collecting the coordinates of the point touched by the user, attackers can easily obtain the sensitive information of the user. 12 At the same time, consider the need to select data that are not easy to be imitated, which have strong characteristics and weak correlation. We finally choose two kinds of data: sliding speed and touch pressure. The collection method of these data is similar to the collection of sensor data, but the data of the touch screen are not continuously collected, and the collection behavior is triggered when the user touches the screen. Finally, the analysis and processing are carried out together. It should be noted here that since the data collection of the touch screen is not continuous, and the pressure data on the touch screen are not supported by all the touch screen drivers of the model, the touch screen data are only used as an auxiliary authentication factor.

Sensor features

The sensors in the mobile terminal include: hard sensors of the physical sensing device (e.g. an accelerometer, a gyroscope, a magnetometer, a gravimeter, a light sensor, etc.), and soft sensors (e.g. the screen is open/off). We choose accelerometer and gyroscope because other sensors are easily affected by environmental factors and these two sensors are related to the motion state of the users.

We split the sensor data stream into a series of time windows, and calculate the time domain statistics of the sensor data values in the time window. The size of the data stream of sensor i in the kth window is represented as Si(k).

We chose to calculate the following statistical features derived from each raw sensor stream in the time windows, including:

Mean: Average of the sensor stream.

Var: Variance of the sensor stream.

Max: The maximum value of the sensor stream.

Min: The minimum value of the sensor stream.

Ran: Range of sensor stream.

Next, we tried to remove redundant features by calculating the correlation between each pair of features. If there is a strong correlation between a pair of features, it means that they are similar for describing the user’s behavior pattern, so one of the features can be discarded. A weak correlation means that the selected features reflect different behaviors of the users, so these two features should be retained.

We calculated the user correlation coefficient between each pair of features using regressing method. Then, for each pair of features, we computed the average of the correlation coefficients for all users. The result shows that Ran has a high correlation with Var, which means Ran and Var have information redundancy. At the same time, Ran also has a higher correlation with Max. Therefore, we removed Ran from our feature set and picked the other seven to form our feature set. Both two sensors consist of three axes, so there is a total of 24 features.

Feature summary

Through the analysis of touch screen data and sensor data characteristics, a total of 26 data packet features were extracted for classification authentication of user behavior, as summarized in Table 1.

User behavior features.

Detection process

The system structure is shown in Figure 1. The system uses the username and password as the original input. The system first performs the registration training mode, collects the user’s specific behavior information, and stores it in the database corresponding to the user. After the detection module trains the classification model according to the data, the user identity can be authenticated.

When the system is connected to the network, we upload 2 min test data every hour to enter the system, and add data with good detection results (i.e. the degree of drift within the confidence) to the training database for retraining. At the same time, the feedback learning mode can also deal with the situation when the data has a small drift. In dealing with data drift, here we introduce a concept called confidence as a measure of whether the user’s behavior drift is within the acceptable limits. When the user’s behavior has produced a certain degree of drift, and the amount of data drift is within the specified confidence (in our system we set it to 0.2, and by observing most of the test results, it was found that the behavioral drift was not more than 0.2, so this metric was chosen), we first need to authenticate the user again in an explicit way. This operation is to prevent an attacker from using a small amount of data drift to cheat the model. When the user passes the explicit authentication, the system confirms that this is the behavior drift generated by the user himself, and inputs the drift data into the training model for retraining. Through this feedback learning mode, not only the real-time detection can be realized, but also the detection accuracy of the model can be improved. Hence, the real-time performance promotion of the model can be realized under the premise of ensuring security.

Experiment and evaluation

Training data collection

The smartphones we used for data collection were Xiaomi 6 series. We collected different types of data from 20 participants. See the Appendix for the users involved in data collection and testing. We collected sensor data from different sensors in the smartphone while collecting touch screen data. In order to answer the questions mentioned in the previous section about the design parameters of this system, we will discuss different types of experiments and experimental results in detail. All our experimental results are based on a 2 week free use of the user’s smartphone. In addition to the motion state detection experiment (which requires to use specified laboratory conditions), free use means that the user can use the device without restrictions, just like the way they use the phone in their daily lives.

Definition of evaluation indicators

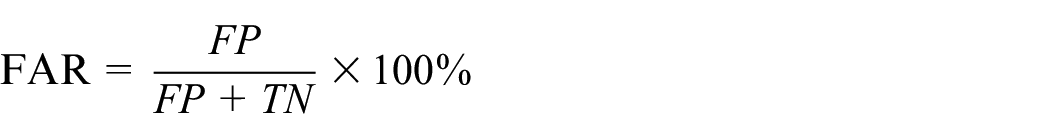

We use FRR, FAR, and accuracy to evaluate our models. The time window size is 6 s and the sampling rate is 50 Hz. 13

FRR describes the probability that a sample should be accepted or rejected. FAR describes the probability that a sample is incorrectly accepted. Accuracy describes the probability that a sample is correctly classified. The definitions are shown as follows

FN is the number of positive samples which are incorrectly rejected, FP is the number of negative samples which are incorrectly accepted, TP is the numbers of positive samples which are correctly accepted, TN is the numbers of negative samples which are correctly rejected, and N is the total number of samples.

Classifier parameter determination

Determination of feature dimensions

We know that an accelerometer is a sensor that detects the current acceleration of a cell phone. Because we live on the earth, we are affected by gravity all the time. Thus gravity will certainly have a slight impact on the data detected by the accelerometer. But considering the difference in gravity in different geographical locations, the impact may not be very large. So, should we use the data directly measured by the accelerometer, or should we use the data to remove the interference from gravity? We use the following method to remove the gravity influence. The data of the gravimeter is introduced when processing the accelerometer data. Because the accelerometer is disturbed by gravity, this interference can be removed by subtracting the gravimeter data directly from x, y, and z axes. In order to determine the effect of gravity on the accuracy of the detection, we first monitor the data of the gravimeter and train the three models to detect the one model using only accelerometers and gyroscopes, one model using only the accelerometer, and last one only considering the gravity factor with the accelerometer and gyroscope. The comparison results are shown in Table 2.

Accuracy using different sensors.

FRR: false rejection rate; FAR: false acceptance rate.

It can be seen that the one model using accelerometers and gyroscopes is more accurate than the one with accelerometers alone. In the case of using both the accelerometer and gyroscope, the accuracy of correct detection rate is reduced after removing gravity interference. It shows that after adding the gravity factors, a certain environmental noise is introduced. Thus, we chose to use the data obtained directly from the accelerometer and gyroscope for detection.

Determination of the number of hidden layers

The number of nodes in the hidden layer is another important parameter in the MLP model. We tested the effect of different hidden layer nodes on the system error rate. The result is shown in Figure 4. According this, we choose 70 layer nodes.

Effect of hidden layer nodes on FAR and FRR.

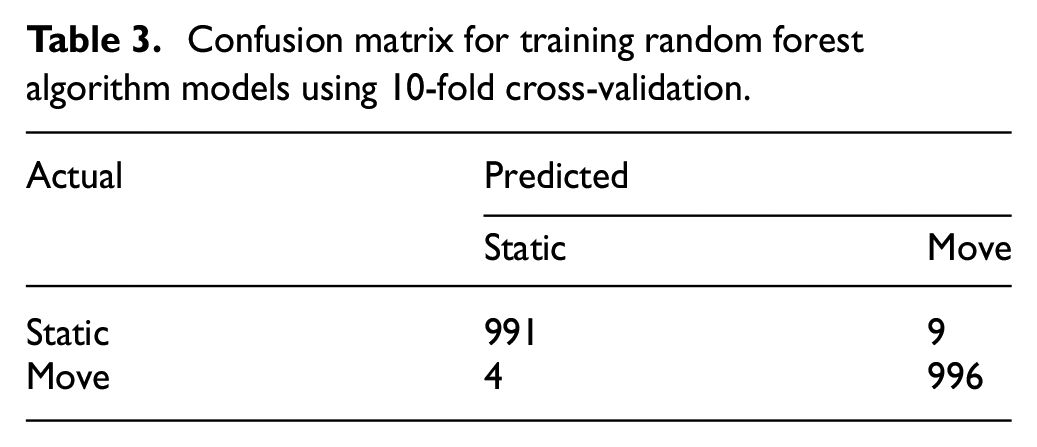

Motion state detection model

It can be known from the previous discussion that we choose the random forest algorithm when constructing the motion state detection model. First, we need to experiment with the detection model built by the random forest algorithm.

For the training and testing experiments of these motion state models, we need to re-collect the data for motion state detection. We allow users to use their smartphones in a fixed state of motion under controlled laboratory conditions. Users are required to freely use the smartphone with the data collection software installed for 20 min in each environment. They need to stay in the current environment till the end of the experiment. It should be noted that this recording process is only used to develop the motion state detection model during the experiment. In the actual case, the training process of the motion state detection is not required, and it can be used normally. Because our trained motion state detection model is common to all users, there is no need to retrain. We used motion state data from different experimental users to train the motion detection model without telling them for what the data is trained for. When we separately perform motion state detection on different users, we use a motion state detection model (i.e. classifier), which is trained with the motion state data of all experimental users. The granularity of this model is holistic (i.e. all users use the same model for motion state detection). This allows us to detect the current user’s motion state before authenticating the user. For the random forest algorithm, we also use 10-fold cross-validation to obtain the results in Table 3.

Confusion matrix for training random forest algorithm models using 10-fold cross-validation.

The data in the table is the average of the prediction results of 20 users (five users in each group, taking 100 groups of averages. Four groups are divided into 20 users, and the remaining 96 groups are randomly selected). It can be seen that the accuracy of the model can reach 99.25% rate after 10 iterations. It is proved that the detection results of the context state of the random forest are reliable.

Comparison of authentication module classification algorithms

For the same set of test data, we tested four algorithms: MLP, SVM, Naive Bayes, and linear regression. 28 The positive data to be detected were the sensor data collected by the specified user, and the comparison data were the data collected by other randomly selected users. We used a 10-fold cross-validation method when dividing the training set and the detection set. The comparison results are shown in Table 4. It can be seen that the MLP has higher detection accuracy than the other three algorithms.

Comparison of four different algorithms.

FRR: false rejection rate; FAR: false acceptance rate; MLP: multilayer perceptron; SVM: support vector machine.

Training certification model without considering motion state detection

After determining the system parameters and the algorithm, we began to train the authentication model. From the foregoing discussion, the ultimate goal of our system is the user’s identity authentication, so our user authentication model is trained separately by user granularity. In the training process of the user authentication model, we temporarily do not consider the impact of the motion state detection on the identity authentication detection results. For the 20 subjects tested, we trained users’ respective models and tested them separately for their respective sensor data and touch screen data. The positive data entered during the training was used by them. The sensor data and touch screen data were collected from the smartphones. The input contrast data were the sensor data and touch screen data of other randomly selected users (i.e. all experimental users), and the ratio of the two data volume was controlled at about 1:1 ratio to train the model. The test sample detection accuracy of the final model is the average of the accuracy of the 20 users.

For all sensor data and touch screen data collected by a user, we first choose to train a model for detection without considering the motion state. The final test results are shown in Table 5. It can be seen that the correct detection rate of our identity authentication system can reach 93.23% when the motion state detection is not considered.

Accuracy without considering the state of motion.

FRR: false rejection rate; FAR: false acceptance rate.

Combine motion state detection and classification authentication models

We have separately trained the authentication model for single user and the motion state detection model for all users. Next we need to combine the two models. The data are first input into the motion state detection model to determine the motion state of the user, and then the data are transmitted to the authentication detection model of the user’s corresponding motion state. In the previous section, we only identified the authentication detection model for the user’s granular training, and did not distinguish the user’s motion state. Therefore, here we first need to separate the data of the user in the motion state and the stationary state, and then train the user’s authentication detection model for different motion states. One thing to note here is that in order to make our model more convincing and fit better with reality, the positive data used in our model are the data collected when the user is in a specified state, while comparison data are the data collected by other users in the same state. We also used the 10-fold cross-validation method to get the training results when training the model. The final test results are shown in Table 6.

Accuracy under two motion states.

FRR: false rejection rate; FAR: false acceptance rate.

It can be seen that the training results of the model are better in the accuracy than the ones ignoring the motion state, after classification.

After training each user’s authentication detection model without motion, we need to combine the motion state detection model with the authentication detection model to conduct experiments. First, the data are input into our motion state detection model to get the current state of motion of the user. Then, the data are input into the corresponding user authentication model according to the obtained motion state, and finally the detection results are given out. Our final test results are shown in Table 7.

Accuracy after motion detection.

FRR: false rejection rate; FAR: false acceptance rate.

Although the accuracy is not high in the model under the individual motion state, but it is still better than the detection rate or correct rate when the motion state is not considered, which reaches 95.96%.

Conclusion

In this article, a new identity authentication system is proposed to improve the security of smartphones. We have successfully implemented an implicit continuous authentication system, which distinguishes between different motion states, and built the user authentication model based on deep learning. Our system can continuously monitor user’s sensor data and touch screen data, and perform periodic upload to achieve continuous authentication. This continuous authentication process only requires the software running in the background, and other operations can be implemented without any manual operations. Experiments have verified that our system has higher verification accuracy and faster detection speed. We have improved accuracy by combining touch screen features with the sensor features. At the same time, the detection efficiency and accuracy are further improved by the motion state detection and time features. The system can achieve accuracy of 95.96% (FRR 2.55% and FAR 6.94%). Moreover, the system is a lightweight system, and only requires data collection and a final feedback module in the user’s mobile terminal system. Both the arithmetic part and the classification part are implemented in the cloud. Therefore, our system overhead is negligible.

In practical applications, the overall design concept of the system can also be combined with other explicit authentication mechanisms, or be improved to achieve a more efficient and accurate user identity authentication system.

Footnotes

Appendix

In this section we provide background information on data collection and experiments that serves to further illustrate details of our experiments.

Handling Editor: Yanjiao Chen

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by National Key Research and Development Program of China (No.2017YFB0802300), the NSFC-Zhejiang Joint Fund for the Integration of Industrialization and Informatization (No. U1509219), the National Key Research and Development Program of China (No. 2018YFB0803503), and National Natural Science Foundation of China (No.61831007).