Abstract

To improve the timeliness and pertinence of message delivery in emergency rescue scenarios, and to improve the service performance of emergency communication networks, we propose a location-assistant content distribution scheme based on the delay tolerant network. First, considering that the movement of rescue teams tends to follow a predetermined course of action, we design a location-based group mobility model. To cope with intermittent network connectivity and the variety of emergency services, we propose a content-classification-based publish/subscribe architecture and a GenericSpray routing algorithm that predicts overlap opportunities in spatio-temporal positions. We also give a cache management strategy based on content significance. Because the scheme can predict the overlap of activity between rescue teams from the course of action, it significantly reduces the number of forwarded copies and the message delivery delay, and message classification ensures that important messages are delivered first. Simulation experiments show that, compared with traditional delay tolerant network routing algorithms and the classic first-in-first-out caching strategy, the location-assistant content distribution scheme significantly improves message delivery rate, transmission delay, and control overhead.

Keywords

Introduction

In recent years, frequent emergencies have caused huge losses, and how to provide emergency communication services to rescue workers quickly and effectively has become an important issue that urgently needs to be solved. 1 The impact of disasters on human life has been analyzed to develop an improved and applicable framework.2–4 Existing information transmission protocols, especially those running in wired networks, mostly assume that the network is always connected, that is, there is an end-to-end path between any two nodes at any time. However, because disasters occur suddenly and affect large areas, it is difficult to deploy a sufficient number of network nodes in a short period of time. In addition, complicated communication conditions and the limited communication range of mobile terminals make the temporary emergency communication network unreliable, so that it forms a sparse and partially disconnected ad hoc network. 5 In this kind of network, reliable delivery of data over the entire network cannot be guaranteed, because the network is often partitioned for long periods (i.e. there are many subnets that cannot communicate with each other), and the network may not even cover the whole area. Therefore, there is an urgent need for an effective network organization and data delivery mechanism that enhances delivery reliability, improves the delivery rate, and reduces delivery delay, thereby improving network service performance.

Delay tolerant network (DTN) 6 is a special communication network that can tolerate network interruption and high transmission delay and that organizes itself opportunistically without relying on pre-existing network infrastructure, which makes it especially suitable for temporary emergency communications. Hence, DTN has attracted extensive attention from the research community recently. For example, in emergency rescue, DTN technology can be used to distribute information about personnel and disaster areas to the rescue teams, and rescue teams and their members can also use it to improve their rescue efficiency. On the one hand, the efficiency of DTN information transmission relies heavily on the contact opportunities between nodes: DTN adopts an asynchronous store-and-wait forwarding pattern that sends data to the destination node hop by hop, ensuring reliable delivery at the cost of some transmission delay. On the other hand, the mobility of rescue teams is usually regular and consistent with the predetermined course of action, so we can improve the pertinence of message delivery and reduce delivery delay by predicting where and when the active locations of rescue groups overlap. In addition, message content can be classified by priority to ensure the preferential delivery of important emergency information.

Based on the above analysis, we propose a location-assistant content distribution scheme (LACDS) based on DTN. First, considering that the movement of rescue teams tends to follow a predetermined course of action, we design a location-based group mobility model (LGMM). Next, by introducing a publish/subscribe architecture based on content classification, we propose a GenericSpray routing algorithm that predicts overlap opportunities in spatio-temporal positions, and we give a cache management strategy based on content significance. LACDS enhances the reliability and timeliness of emergency message delivery by taking full advantage of the location information provided by the course of action. In other words, LACDS absorbs the idea of location-based services (LBS) and ensures preferential delivery of important messages through content classification, thus improving the efficiency of message transmission and providing reliable and robust communication services for information exchange and coordinated action among rescue teams.

The rest of this article is organized as follows: section “Related work” summarizes related work on DTN; section “LGMM” designs and introduces the LGMM; section “Content distribution mechanism” describes in detail the LACDS based on business priorities and DTN; and section “Simulation and result analysis” verifies the validity of the proposed content distribution mechanism through simulation experiments. Finally, section “Conclusion” summarizes the work and points out directions for future work.

Related work

DTN-related applications

As a new network architecture, DTN was first proposed by the Interplanetary Internet Research Group (IPNRG) to provide inter-satellite communication services that can tolerate disruptions and delays in network connectivity. 7 In recent years, with the development of wireless communication and the popularization of mobile terminals, DTN-based applications have multiplied, including the interplanetary Internet, tactical communication networks, environmental monitoring networks, mobile vehicle networks, and mobile social networks. 8 For example, some research works propose using low-altitude aircraft or satellites to interconnect multiple isolated terrestrial networks and construct an air–ground integrated DTN. 9 The pocket switched network (PSN) 10 proposed by the University of Cambridge and Intel Research Institute is a DTN opportunistic network formed by hand-held terminals. DTN is also applied to emergency environments: reference 11 designs a decentralized trust management scheme (DTMS) to filter out malicious nodes in DTNs for satellite communications, and reference 12 presents a content distribution and retrieval framework in disaster networks for public protection, which exploits the design principle of the native content centric network architecture within the native DTN architecture. Mobile devices can communicate with each other through the opportunities created by contact between nodes, and they can also forward data through access points such as WiFi to reach the Internet. A vehicle network based on DTN can improve traffic efficiency and safety because it enhances information sharing between vehicles and supports traffic warning and road traffic detection. For example, EZCab is a taxi reservation application in which a user can find and reserve a taxi by multi-hop forwarding in the vehicle network.
Moreover, some scholars have proposed reducing the load of the cellular network by using the DTN formed by hand-held terminals. 13 Some mobile terminals can distribute certain information directly to other terminals that subscribe to this content through DTN routing. Some scholars have also proposed a message ferrying mechanism, which provides temporary network connections through the active movement of relay nodes and thereby improves the service performance of DTN. 14 DTN is also used in information-centric networks (ICN), mobile ICN, named data networks (NDN), the Internet of things (IoT), and so on. These applications have been surveyed, and an alternate taxonomy for classifying existing DTN routing algorithms has been proposed.15,16

DTN content distribution mechanism

Unlike the traditional Internet, DTN adds a bundle layer between the transport layer and the application layer, providing an asynchronous message delivery method that ensures reliable data transmission in complex network environments. Nevertheless, DTN still faces many technical challenges, such as the design of an efficient content distribution mechanism. 17 Two problems remain unresolved: (1) which message content and cache management strategy should be chosen and (2) how should the relay node for message forwarding be determined hop by hop? In DTN, each node transmits data in a store-carry-forward mode, and data to be forwarded are stored on intermediate nodes for a long time, which makes content storage a key service of DTN. Unlike traditional networks, DTNs combine message storage with routing to support content-based networking, where the flow of messages in the network is driven by content. Therefore, content distribution in DTN generally adopts the content-based publish/subscribe model, 18 as shown in Figure 1. In this model, network nodes take one of three roles: subscribing nodes, publishing nodes, and intermediate nodes, and data are classified according to content priority. The publishing and subscribing nodes select data content for publication and subscription according to their interests, and the intermediate nodes forward the subscription requests and published data they receive.

Figure 1. Content-based publish/subscribe model in DTN.

In recent years, content distribution in the DTN environment has been studied systematically. Li and Wu 19 design a content distribution system for DTN in which subscribers’ subscription requests spread through the network by flooding. On receiving a request, the publishing node sends the corresponding data hop by hop until it reaches the subscribing node. A new content exchange protocol is used to maximize the utility of the information stored in nodes. 20 The protocol stipulates that, during each contact between nodes, the order in which messages are exchanged is decided by their utility. Majumder et al. 21 propose a receiver-centric content distribution strategy: when two nodes meet, each asks the other for the content it is interested in. However, information transmission between nodes relies only on the pull performed by subscribing nodes, and publishing nodes do not push data into the network. In addition, the approach proposed by Resta and Santi 22 deduces the social relations among nodes from their meeting history and calculates appropriate community boundaries. Data distribution within a community is matched directly according to the interests of the nodes, while data transmission between communities is handled by the marginal agent nodes.

DTN routing algorithm

As noted above, a DTN content distribution mechanism must also select a proper routing strategy to ensure efficient end-to-end data transmission. So far, researchers have put forward a variety of DTN routing algorithms for different application scenarios, which can be divided into types such as node-mobility-mode-based routing, 23 routing based on predicting encounter probability from historical information, 24 flooding-based routing, and single- or multi-copy-based routing. Among these, single-copy and multi-copy routing are distinguished by the number of message copies transmitted in the network, which is also a hot topic among the different kinds of routing strategies. 15 S Merugu et al. 25 propose a space–time routing framework that constructs space–time routing tables in which the next hop node is selected from the current as well as the future neighbors. A single-copy routing algorithm keeps only one copy of the message during transmission: the node deletes the message after passing it to the next hop, and no additional copies are retained. For example, policy forward routing 26 selects an optimal path for message transmission using network topology information. Because messages are never copied during transmission, no redundant copies appear in the network. In a multi-copy routing algorithm, the node retains the message after passing it to the next hop node, so that multiple copies travel through the network and the probability of message delivery increases. A typical representative of multi-copy routing is Epidemic routing, 27 in which each relay node copies messages in turn and forwards them by flooding. The message delivery rate of Epidemic routing is high, but it consumes a large amount of resources.
To address this problem, probabilistic routing (such as Prophet) 28 forwards messages with a probability that reflects the actual node mobility and network topology, which reduces the redundancy of message forwarding to a certain extent. In addition, Spyropoulos et al. 29 proposed SprayAndWait routing, which limits the number of message copies effectively and improves overall routing performance.

DTN cache management strategy

Generally speaking, DTN nodes have limited computing and storage capacity, and DTN usually relies on multi-copy routing, which maintains multiple copies of a message and greatly increases cache usage. As a result, the cache management algorithm is vital to the performance of content distribution in DTN. 30 A number of mechanisms have been proposed to address this problem. For example, Li et al. 31 proposed an adaptive optimal cache management strategy for DTN that uses a control channel to obtain global network information, such as opportunities to transmit messages and the meeting times and meeting frequency of nodes, and decides which messages should be rejected on the basis of this information. Lindgren et al. 32 use optimization theory to design cache management and message discarding strategies that optimize DTN performance in several respects, such as delivery rate, transmission delay, and network overhead.

LGMM

In the wireless network, the mobility model of nodes is the variation pattern of nodes’ absolute or relative physical location over time. By studying the mobility model of nodes, we can design the routing algorithm which conforms to the movement pattern of the nodes, so as to provide a better communication service for the users. For example, by predicting the locations of nodes, the message transmission and load balancing mechanism can be implemented better. 33

At present, the mobility model of nodes can be divided into two categories: individual mobility model and system mobility model. When describing the mobility pattern, the individual mobility model considers the absolute position change of nodes. The mobility model depicts the mobility characteristics of the nodes as individuals. Typical individual mobility models include random walk model and random waypoint model. 34 The process of forwarding the message between nodes in the wireless network is closely related to the neighbor relationship of the node, and the relative position of the node directly determines its neighbor relationship. Therefore, for message forwarding, the relative position between nodes is a factor that needs to be considered. The system mobility model is proposed to describe the variation pattern of the relative position of the nodes. The model describes the mobility characteristics of some nodes as a whole. Typical system mobility models include group mobility model and community model. 35

In emergency communication scenarios, the mobility of emergency rescue teams shows typical characteristics of group movement and follows distinctive patterns. When an emergency occurs, many rescue teams, such as search and rescue teams, medical teams, transportation teams, and security teams, are often required to go to multiple accident areas. The action of these teams generally follows this pattern: first, the members of a rescue team performing a specific task gather in a designated area according to the command’s orders; they then rush to the area where the people to be rescued are located and start the rescue operation. After the rescue operation in one accident area is completed, the team goes on to the next site (target area) to perform another rescue task, and team members may work alone or in groups. This process is repeated until the rescue mission is over. The movement of the nodes in a rescue team is therefore strongly cohesive: the nodes usually follow the predetermined course of action and timeline and perform specific rescue operations according to the location information. At the same time, the movement of these nodes inside the rescue area is random and strongly individual.

To reflect the mobility characteristics of rescue teams in the emergency communication scenario, we add the course of action to the traditional group mobility model, so that nodes complete their assembly and rescue activities according to predetermined positions and the course of action. We name this mobility model LGMM. An important feature of LGMM is the course of action table, which contains spatio-temporal location information: it specifies the geographic coordinates and radius of each rescue area and the action time. The details are as follows. Initially, each rescue group can enter the rescue area from a different location and follows a specific course of action; nodes have equal status within the group and rally to the designated area along the established route. After arriving at the accident area, the nodes of each group spread out and launch the rescue operation in the area. After finishing the rescue operation in this area, the rescue team goes to another designated area according to the course of action to carry out the next rescue mission. When the nodes of a group move between areas, they follow the shortest available roads to save time. Note that the course of action can be changed dynamically according to the needs of the emergency rescue, and the command will promptly send the updated table to the rescue teams by satellite communication.

To illustrate the LGMM, Figure 2 gives a simple example of an emergency scenario. In the diagram, three emergency rescue teams (A, B, and C) are set up, and each team contains a certain number of rescue workers. A1∼A3, B1∼B3, and C1∼C3 are the rescue areas defined in the course of action that node sets A, B, and C should reach, and the corresponding course of action is shown in Table 1.

Figure 2. An example of the location-based group mobility model in an emergency scenario.

Table 1. Course of action for the emergency scenario of Figure 2.

From Table 1, we can see that, according to the course of action, the nodes of different rescue teams have opportunities to meet at specific locations and times during the rescue operation. The DTN content distribution mechanism can take advantage of these encounters to speed up the delivery of emergency messages, as in the A2, B2, C2, B3, and C3 areas of Figure 2.
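As an illustration of how such meeting opportunities could be read off a course of action, the following minimal Python sketch treats each table row as a circular area with a stay window and reports the shared window of two rows. The `Stop` type, the coordinates, and every time value except the 10:40 to 12:30 overlap of A2 and B2 are hypothetical, since the rows of Table 1 are not reproduced here.

```python
from dataclasses import dataclass
from math import hypot
from typing import Optional, Tuple


@dataclass(frozen=True)
class Stop:
    """One row of a course of action: a circular rescue area and a stay window."""
    area: str
    x: float       # centre easting in metres (hypothetical coordinates)
    y: float       # centre northing in metres
    radius: float  # metres
    start: int     # arrival time, minutes since midnight
    end: int       # departure time, minutes since midnight


def hhmm(text: str) -> int:
    """Convert 'HH:MM' to minutes since midnight."""
    hours, minutes = text.split(":")
    return int(hours) * 60 + int(minutes)


def overlap_window(a: Stop, b: Stop) -> Optional[Tuple[int, int]]:
    """Return the shared time window if the two areas meet in space and time."""
    # Two circles intersect when the centre distance is below the sum of radii.
    if hypot(a.x - b.x, a.y - b.y) >= a.radius + b.radius:
        return None
    start, end = max(a.start, b.start), min(a.end, b.end)
    return (start, end) if start < end else None


# Hypothetical rows; only the 10:40-12:30 overlap of A2 and B2 is from the text.
a2 = Stop("A2", 0.0, 0.0, 200.0, hhmm("10:00"), hhmm("12:30"))
b2 = Stop("B2", 200.0, 0.0, 200.0, hhmm("10:40"), hhmm("13:00"))
c1 = Stop("C1", 2000.0, 0.0, 200.0, hhmm("10:00"), hhmm("12:00"))

print(overlap_window(a2, b2))  # (640, 750), i.e. 10:40 to 12:30
print(overlap_window(a2, c1))  # None: the areas are too far apart
```

Modelling areas as circles follows the description of the course of action table (coordinates plus radius); road constraints during transitions are deliberately ignored in this sketch.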

Content distribution mechanism

Content publishing/subscribing based on content classification

Overview

An efficient content delivery mechanism is the key to improving the service quality of the emergency communication network. In an emergency rescue scenario, each rescue team releases various kinds of information according to the actual disaster situation and the rescue progress, and staff from different teams subscribe to and receive the information according to their respective mission demands. Therefore, a content-based publish/subscribe model is proposed to distribute all kinds of emergency rescue information in DTN. Under this architecture, information is classified according to the content of the message. Each member of a rescue team requests only the classes of messages it is interested in, which avoids unnecessary transmission of stored data when nodes meet and greatly reduces the total amount of messages transmitted over the network.

The publish/subscribe mode mentioned above is an asynchronous communication mechanism, which separates the source node and the destination node of the data. The data replication and forwarding are driven by the node’s interest and do not require the existence of an end-to-end path. So, it provides great flexibility and adaptability for data distribution and is especially suitable for emergency communication networks that are unstable in topology and often do not have an end-to-end path.

Content classification

Emergency communication environment is complex and changeable. According to the importance of information, emergency rescue information can be roughly divided into four categories:

Information of class A: mainly refers to real-time and most important command and control information. Such information should be given high priority in network transmission.

Information of class B: mainly refers to situation information about the rescue area, such as disaster information and personnel location. Its real-time requirement and importance are second only to those of class A.

Information of class C: mainly includes meteorological and hydrological information and similar content. Its importance is lower than that of classes A and B.

Information of class D: all information not covered above. Its real-time requirement and importance are relatively low. When the DTN becomes congested, part of this information may be discarded to satisfy the transmission requirements of higher-priority information.

Information release and subscription

When a node in a DTN wants to publish data, its application layer marks the data to be released with the related attributes and then passes the data to the bundle layer, which stores them in the node’s cache. The attributes include release time, classification number (priority), sequence number, and expiration time. In addition, when the application layer data are large, they may need to be segmented into multiple bundle layer messages, with the head of each segment marked with information such as creation time, classification number, serial number, version number, and expiration date. In this case, a node subscribing to the data can recover the content at the application layer only after obtaining all the fragment messages with the same version number.
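The segmentation and recovery rule can be sketched in Python as follows. This is only an illustration: the header carries the fields listed above, while the `total` field, the function names, and the use of integer timestamps are assumptions introduced so that a receiver can tell when all fragments of a version have arrived.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Optional


class ContentClass(IntEnum):
    """Classification number; a lower value means a higher priority."""
    A = 0
    B = 1
    C = 2
    D = 3


@dataclass(frozen=True)
class Fragment:
    created: int                 # creation time
    content_class: ContentClass  # classification number
    serial: int                  # index of this fragment within the data item
    total: int                   # assumed extra field: number of fragments
    version: int
    expires: int
    payload: bytes


def split_into_fragments(data: bytes, size: int, created: int,
                         content_class: ContentClass, version: int,
                         expires: int) -> List[Fragment]:
    """Cut application data into bundle layer messages of at most `size` bytes."""
    chunks = [data[i:i + size] for i in range(0, len(data), size)] or [b""]
    return [Fragment(created, content_class, i, len(chunks), version, expires, c)
            for i, c in enumerate(chunks)]


def reassemble(received: List[Fragment], version: int) -> Optional[bytes]:
    """Return the data once every fragment of `version` is present, else None."""
    parts = {f.serial: f for f in received if f.version == version}
    if not parts:
        return None
    total = next(iter(parts.values())).total
    if len(parts) != total:
        return None
    return b"".join(parts[i].payload for i in range(total))


fragments = split_into_fragments(b"road to A2 is blocked", 8, created=600,
                                 content_class=ContentClass.B, version=1,
                                 expires=720)
print(len(fragments))                  # 3
print(reassemble(fragments[:2], 1))    # None: one fragment is still missing
print(reassemble(fragments[::-1], 1))  # b'road to A2 is blocked'
```

Fragments may arrive in any order over different contacts, so the sketch indexes them by serial number rather than relying on arrival order.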

When a node wants to obtain data it is interested in, it performs a subscription operation. The node’s application layer passes the subscription to the bundle layer, marked with the release time, classification number, sequence number, and expiration date. When a subscribing node encounters other nodes, it sends a subscription request message to the neighbor node most likely to reach the destination node. If the subscribing node has not received the requested message when the validity period expires, it sends the same subscription request again. If a node receives the message it subscribed to, it deletes the previously published subscription request to reduce cache usage and unnecessary message transmission. The node can announce the deletion in a dedicated message or piggyback it on a new subscription request.

Information processing flow

Each node in the network maintains three tables: a request table, a request count table, and a message table. When a node receives a subscription request from another node, it acts according to the information in these three tables. The request table records information about the subscription requests published or received by this node, including the source node that published the subscription request, the sending time, the request sequence number, the classification number, and the expiration date of the message. The request count table records the number of requests the node has received for each type of message in the network. The message table records information about the messages sent or received by this node. When a node receives a subscription request message from another node, it executes the corresponding operation according to the flow shown in Figure 3.

Figure 3. Processing flow of a node after receiving a subscription request.

When a request has been handled by other nodes and a response message arrives at a node, the node checks whether it published the request itself. If so, the bundle layer of this node passes the message to the application layer. If not, the node sends the message to the previous hop node indicated in the request table. If there is no such record, it temporarily caches the message and forwards it at the next encounter.
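The three tables and the response-handling rule just described can be sketched in Python as below. The class and method names are hypothetical, the sketch assumes request sequence numbers are unique across the network, and it covers only the handling of a response message, not the request flow of Figure 3.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class RequestEntry:
    source: str                  # node that published the subscription request
    previous_hop: Optional[str]  # neighbor that handed over the request, if any
    content_class: str
    sent_at: int
    expires: int


@dataclass
class Node:
    name: str
    request_table: Dict[int, RequestEntry] = field(default_factory=dict)
    request_count: Dict[str, int] = field(default_factory=dict)
    message_table: Dict[int, bytes] = field(default_factory=dict)

    def publish_request(self, seq: int, content_class: str,
                        now: int, expires: int) -> None:
        """Record a subscription request issued by this node."""
        self.request_table[seq] = RequestEntry(self.name, None,
                                               content_class, now, expires)

    def receive_request(self, seq: int, entry: RequestEntry) -> None:
        """Record a request received from another node and count its class."""
        self.request_table[seq] = entry
        count = self.request_count.get(entry.content_class, 0)
        self.request_count[entry.content_class] = count + 1

    def on_response(self, seq: int, payload: bytes) -> Tuple[str, Optional[str]]:
        """Decide what to do with a response to request `seq`."""
        entry = self.request_table.get(seq)
        if entry is not None and entry.source == self.name:
            del self.request_table[seq]  # the satisfied request is dropped
            return ("deliver", None)     # hand the payload to the application layer
        if entry is not None and entry.previous_hop is not None:
            return ("forward", entry.previous_hop)
        self.message_table[seq] = payload  # keep it until the next encounter
        return ("cache", None)


relay = Node("b3")
relay.receive_request(7, RequestEntry("a1", "a4", "B", 600, 720))
print(relay.on_response(7, b"situation report"))  # ('forward', 'a4')
print(relay.on_response(9, b"weather update"))    # ('cache', None)

subscriber = Node("a1")
subscriber.publish_request(7, "B", now=600, expires=720)
print(subscriber.on_response(7, b"situation report"))  # ('deliver', None)
```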

Message routing predicted by spatial and temporal location overlapping opportunity

To improve the performance of data transmission in emergency communication networks, the spatio-temporal activity locations contained in the course of action can be used to calculate when and where the positions of members of different rescue teams overlap. From these overlapping contact opportunities, we can determine the best intermediate forwarding node, which improves the pertinence and accuracy of message forwarding as well as the efficiency of data transmission and network performance. For example, as shown in Table 1, rescue areas A2 (group A) and B2 (group B) overlap in space and time during the period 10:40–12:30, which gives nodes in groups A and B the opportunity to meet (see Figure 2). In fact, nodes in different rescue teams can use the course of action to predict the chances of encounters between their neighbors and the target node and then select the best intermediate node to forward messages. In other words, a node preferentially forwards a cached message to the neighbor node that has the earliest chance of encountering the target node. In this way, the message arrives at the target area more quickly, which improves the pertinence and timeliness of message delivery.
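The relay selection rule in this paragraph (prefer the neighbor whose team meets the target team earliest) can be sketched as follows. All names are hypothetical, the overlap windows are made-up values in minutes since midnight except the A2/B2 window quoted above, and the rule that members of the same team can meet immediately is an assumption of this sketch.

```python
from typing import Dict, FrozenSet, List, Optional, Tuple

Window = Tuple[int, int]  # (start, end) of a team overlap, minutes since midnight
Overlaps = Dict[FrozenSet[str], List[Window]]


def next_encounter(windows: List[Window], now: int) -> Optional[int]:
    """Earliest time at or after `now` that falls inside an overlap window."""
    times = [max(start, now) for start, end in windows if end > now]
    return min(times) if times else None


def encounter_time(team: str, dest_team: str, overlaps: Overlaps,
                   now: int) -> Optional[int]:
    """Predicted time at which `team` can next meet the destination team."""
    if team == dest_team:
        return now  # assumption: members of the same team can meet at once
    return next_encounter(overlaps.get(frozenset((team, dest_team)), []), now)


def pick_relay(carrier_team: str, neighbours: Dict[str, str], dest_team: str,
               overlaps: Overlaps, now: int) -> Optional[str]:
    """Return the neighbor that meets the destination team earlier than the carrier."""
    best_name = None
    best_time = encounter_time(carrier_team, dest_team, overlaps, now)
    for name, team in sorted(neighbours.items()):
        t = encounter_time(team, dest_team, overlaps, now)
        if t is not None and (best_time is None or t < best_time):
            best_name, best_time = name, t
    return best_name


overlaps = {
    frozenset(("A", "B")): [(640, 750)],  # 10:40 to 12:30, as in the example
    frozenset(("B", "C")): [(540, 600)],  # hypothetical window
}
neighbours = {"a7": "A", "b3": "B"}  # neighbor name -> team

print(pick_relay("A", neighbours, "C", overlaps, now=500))  # b3
print(pick_relay("A", neighbours, "C", overlaps, now=700))  # None
```

Returning `None` means the carrier keeps the message, because no neighbor is predicted to reach the destination team sooner than the carrier itself.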

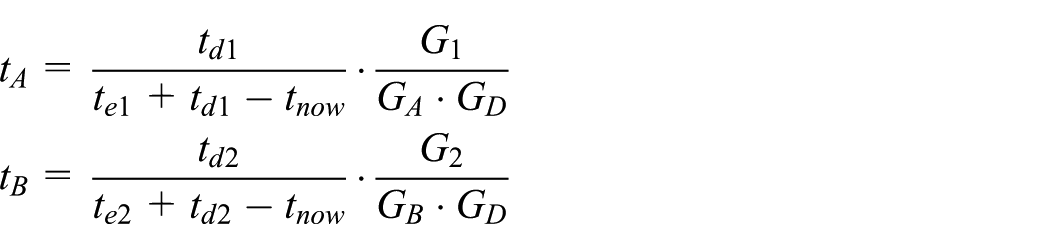

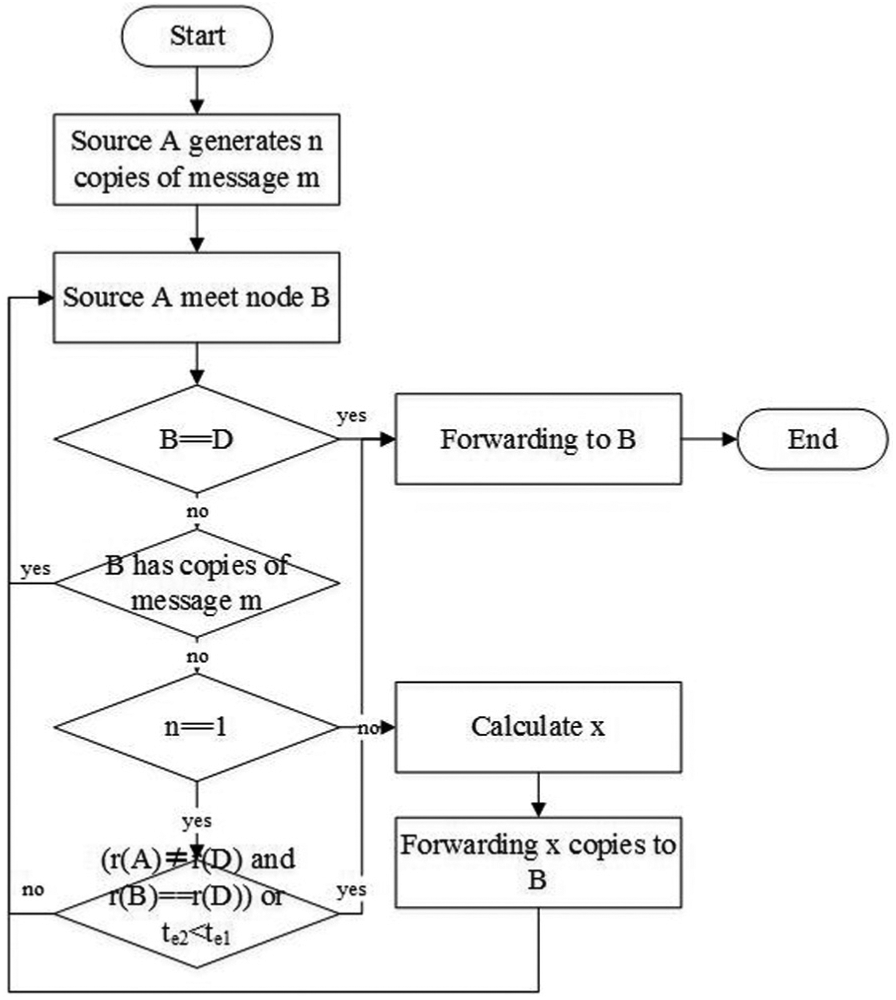

Since nodes in the DTN transmit messages through chance encounters, multiple copies of a message are usually kept in the network to raise the probability that one copy reaches the destination node as soon as possible. To limit the network resources consumed by excessive message copies, message forwarding among members of an emergency team must, following the experience of SprayAndWait routing, effectively limit the number of message copies. The basic principle is that there is a fixed upper limit on the number of copies of a message in the network. When two nodes encounter each other, the node that overlaps earlier, and for a longer period, with the rescue team of the target node has a better chance of encountering the target node and therefore holds more copies of the message. In this article, this message forwarding scheme is called GenericSpray. Its message forwarding process is as follows (as shown in Figure 4):

Step 1: suppose node A in a rescue team wants to send message m to the destination node and holds n copies of m (n > 1). When node A encounters node B, if node B has no copy of message m, node A forwards x copies of m to node B and retains the remaining (n − x) copies. x is determined by the following method.

Step 2: using the course of action, we can estimate the earliest possible meeting time te1 between node A’s rescue team and the destination node D’s rescue team. Suppose the overlapping activity time after encountering is

Figure 4. Flowchart of message forwarding.

The encounter chance weight mentioned above is an evaluation of the next meeting chances between nodes A or B and the destination node of the message, and the forwarding copy number is determined by their ratio. Then, node A sends a copy of the message to node B with the probability

Step 3: as time goes on, the number of copies of message m held by node A gradually decreases. If n = 1 when node A meets node B, node B has no copy of message m, and one of the following conditions is satisfied, node A gives its own copy of the message to node B and removes the message from its cache: node A and destination node D belong to different rescue teams while nodes B and D are in the same rescue team, or the expected meeting time of nodes B and D is earlier than that of nodes A and D.
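The copy-splitting idea of Steps 1 to 3 can be illustrated with the Python sketch below. It is not the article's formula: the exact encounter chance weight is not reproduced in this text, so `encounter_weight` is a stand-in assumption (longer overlap raises the weight, longer waiting lowers it), and keeping at least one copy at node A is likewise an assumption. Only the proportional split by weight ratio and the Step 3 hand-over conditions follow the description above.

```python
from math import floor
from typing import Optional


def encounter_weight(now: float, meet_time: Optional[float],
                     overlap_duration: float) -> float:
    """Stand-in weight (assumption): longer overlap and shorter wait score higher."""
    if meet_time is None or overlap_duration <= 0:
        return 0.0
    wait = max(meet_time - now, 0.0)
    return overlap_duration / (1.0 + wait)


def copies_to_forward(n: int, weight_a: float, weight_b: float) -> int:
    """Split n copies between A and B in proportion to their weights."""
    if n <= 1 or weight_b <= 0:
        return 0
    x = floor(n * weight_b / (weight_a + weight_b))
    return min(x, n - 1)  # assumption: A always keeps at least one copy


def hand_over_last_copy(team_a: str, team_b: str, team_d: str,
                        meet_a: Optional[float],
                        meet_b: Optional[float]) -> bool:
    """Step 3 rule for n = 1, assuming B holds no copy of the message."""
    if team_a != team_d and team_b == team_d:
        return True
    # A missing prediction is treated as "never meets" (assumption).
    return meet_b is not None and (meet_a is None or meet_b < meet_a)


w_a = encounter_weight(now=600, meet_time=700, overlap_duration=60)
w_b = encounter_weight(now=600, meet_time=640, overlap_duration=110)
print(copies_to_forward(8, w_a, w_b))                    # 6
print(hand_over_last_copy("A", "B", "B", None, None))    # True
print(hand_over_last_copy("A", "B", "C", 700.0, 640.0))  # True
print(hand_over_last_copy("A", "B", "C", 640.0, 700.0))  # False
```

With these numbers node B, which meets the destination team sooner and for longer, receives 6 of the 8 copies and node A retains 2.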

Strategy of cache management

Currently, the most common cache management method is the first-in-first-out (FIFO) principle. The core concept of this method of discarding messages in sequential order is to treat all kinds of messages equally and allocate cache resources evenly. However, in the real network, the importance of all kinds of information and real-time requirements are not the same. As mentioned above, the information in emergency rescue can be divided into four categories based on the importance level.

To ensure that important messages are not discarded prematurely because of insufficient cache, the content delivery mechanism proposed in this article adopts the following cache management strategy: when the cache is insufficient, cache resources are allocated according to demand rather than equally. That is, we manage the cache according to the importance of the message content (content importance (CI)). Specifically, the discarding and forwarding priorities of messages are first distinguished by message type. Messages of the same type are then distinguished by the number of times they have been requested: the more often a message is requested, the higher its importance. In other words, when the cache is insufficient, messages that are of low importance and rarely requested are discarded first. If two messages have been requested the same number of times, the one with the shorter remaining valid time is discarded first. In addition, a node deletes a message from its cache after it delivers the message to the destination node. Finally, each node gives first priority to caching the messages that it published or subscribed to itself. Figure 5 shows the decision tree of cache management based on the CI policy.
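A compact way to express this discard order is a sort key, as in the Python sketch below. The names are hypothetical, and the sketch assumes that messages published or subscribed by the node itself are discarded last regardless of class; the exact ordering in Figure 5 may differ.

```python
from dataclasses import dataclass
from typing import List, Optional

# Rank of each class; a larger rank means lower importance.
CLASS_RANK = {"A": 0, "B": 1, "C": 2, "D": 3}


@dataclass
class CachedMessage:
    msg_id: str
    content_class: str   # "A", "B", "C", or "D"
    request_count: int   # how often the message has been requested
    expires: int         # expiration time
    own: bool = False    # published or subscribed by this node itself


def choose_victim(cache: List[CachedMessage], now: int) -> Optional[CachedMessage]:
    """Pick the message to discard first when the cache is insufficient."""
    if not cache:
        return None
    return min(
        cache,
        key=lambda m: (
            m.own,                         # foreign messages go before own ones
            -CLASS_RANK[m.content_class],  # then the least important class
            m.request_count,               # then the least requested message
            m.expires - now,               # then the shortest remaining validity
        ),
    )


cache = [
    CachedMessage("m1", "A", 0, 900),
    CachedMessage("m2", "D", 5, 900),
    CachedMessage("m3", "D", 1, 800),
    CachedMessage("m4", "D", 1, 700),
    CachedMessage("m5", "D", 0, 650, own=True),
]
print(choose_victim(cache, now=600).msg_id)  # m4
```

Here m4 is discarded first: it is a class D message from another node, it ties with m3 for the fewest requests among such messages, and it has the shorter remaining valid time.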

Figure 5. Decision tree of cache management based on the CI strategy.

Simulation and result analysis

Simulation environment and parameter settings

In order to evaluate the performance of the location-assisted content delivery mechanism designed in this article for emergency rescue scenarios, we first implement a location-based group mobility model (LGMM) on the ONE simulation platform. 36 Then, we implement the GenericSpray algorithm, which predicts encounter opportunities, and compare its overall performance with that of other typical DTN routing algorithms.

Finally, the cache management mechanism based on the CI policy is implemented on the ONE platform and compared with the classic FIFO cache management mechanism. ONE is a discrete-event network simulation platform developed at Helsinki University of Technology, and it is now widely used in DTN research. Table 2 lists the main parameters of the simulation tests. The simulation scenario is shown in Figure 6.

The parameter settings in simulation experiments.

LGMM: location-based group mobility model.

Diagram of emergency rescue simulation scenario.

In the experimental scenario, three rescue teams A, B, and C are set up, each containing 50 member nodes. Initially, each rescue team stands by at its designated location, an area with a radius of 100 m, and the three areas do not overlap. After the emergency happens, the nodes of each group are deployed to their respective rescue areas under the command of the emergency command structure, according to the course of action, and perform rescue operations after reaching the rescue area. The radius of each rescue area is set to 200 m; only the rescue areas of groups B and C overlap partially, and their geographical centers are 200 m apart. After the rescue mission in the first target area is completed, the three rescue teams are assigned to a second rescue area, where only the rescue areas of groups A and B overlap, and the distance between their centers is again 200 m. Finally, the three rescue teams return to their respective assembly areas after completing the rescue mission in the second rescue area. The above process is then repeated.
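To give a feeling for how much two rescue areas overlap in this setting, the shared area of two circular regions can be computed with the standard lens-area formula. The sketch below assumes that both rescue areas are ideal discs of equal radius; the function names are introduced here for illustration only.

```python
import math


def overlap_area(radius: float, center_distance: float) -> float:
    """Intersection area of two discs of equal radius (lens formula)."""
    r, d = radius, center_distance
    if d >= 2 * r:
        # The discs are disjoint or only touch.
        return 0.0
    return 2 * r * r * math.acos(d / (2 * r)) - (d / 2) * math.sqrt(4 * r * r - d * d)


def overlap_fraction(radius: float, center_distance: float) -> float:
    """Share of one disc that is covered by the other."""
    return overlap_area(radius, center_distance) / (math.pi * radius * radius)


# Rescue areas of radius 200 m whose centers are 200 m apart.
print(round(overlap_fraction(200.0, 200.0), 3))
```

With a radius of 200 m and centers 200 m apart, roughly 39% of each rescue area is shared with the neighboring group, which is the region where inter-group contacts can occur.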

In the simulation experiment, it is assumed that the three rescue teams have 30 min of deployment time in the two sets of rescue operations. The assembly time spent gathering in the area with a radius of 100 m is not fixed, and the total duration of the rescue mission is 3 h; both can be adjusted. Throughout the whole rescue operation, nodes in group A send messages to nodes in group C at a fixed rate, with a message size of 500 KB to 1 MB, and each message is valid for 2 h. Messages are generated at a rate of 12 per minute, the source and destination nodes of each message are chosen at random, and the cache capacity of a node is 40 MB.

Simulation results and performance analysis

Performance comparison under different assembly time

Based on the simulation scenario described in section “Simulation environment and parameter settings,” the other parameters are kept unchanged. By adjusting the stay time of the node groups in the rescue area, we observe the performance of the routing algorithms over the whole process. This simulation compares the GenericSpray routing proposed in this article with flooding-based Epidemic routing, probabilistic Prophet routing, and SprayAndWait routing, which limits the number of replicas. The detailed simulation results are shown in Figures 7–9. From the scenario, we can conclude that the longer the assembly time of a node group, the fewer chances nodes have to encounter each other and the longer the time span of the asynchronous transmission path from the source node to the destination node.

Changes of message delivery rate under different assembly time.

Changes of message delivery delay under different assembly time.

Changes in transmission costs under different assembly time.

From the experiment, we can see that SprayAndWait and GenericSpray perform much better than Epidemic and Prophet. The latter two algorithms achieve a much higher delivery rate than the former two, their delays are close, and their transmission cost is negligible. The inefficiency of Epidemic and Prophet mainly results from their blind copy-flooding policy, which fills the network with redundant copies of messages. This wastes cache space and consumes valuable bandwidth heavily. Although Prophet limits the number of copies by estimating the chances of node encounters, the effect is very limited. The reason is that Prophet treats the movement of each node as independent and individual, and it does not take advantage of the regularity of group movement. Because GenericSpray predicts the chances of encounters between nodes, its message forwarding is better targeted and more accurate, and its overall performance is better than that of SprayAndWait.

In fact, the similar delivery delays in Figure 8 are misleading, because the delivery rates of the four algorithms are quite different. For example, when the assembly time is set to 30 min, the delay of GenericSpray appears slightly larger than that of SprayAndWait (Figure 8), but the real picture emerges once the delay distribution is computed over only those messages that both algorithms deliver successfully (Figure 10). Figure 10 shows that, for the messages delivered successfully by both algorithms, the delivery delay of GenericSpray is on average 5 min shorter than that of SprayAndWait.

Probability density distribution of delivery delay difference between two routing algorithms.
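The paired comparison behind Figure 10 can be sketched as follows: only messages delivered by both algorithms are kept, and the per-message delay difference is computed. This is an illustrative sketch; the function name and the toy delay values (in seconds) are hypothetical and are not taken from the simulation logs.

```python
from statistics import mean
from typing import Dict


def paired_delay_differences(delays_a: Dict[str, float],
                             delays_b: Dict[str, float]) -> Dict[str, float]:
    """Delay of algorithm A minus delay of algorithm B, per common message.

    Each input maps a message ID to its delivery delay; messages that only
    one algorithm delivered are ignored.
    """
    common = delays_a.keys() & delays_b.keys()
    return {msg_id: delays_a[msg_id] - delays_b[msg_id] for msg_id in common}


# Toy data: the first algorithm also delivers a slow message "m3"
# that the second one never delivers.
generic_spray = {"m1": 600, "m2": 900, "m3": 3000}
spray_and_wait = {"m1": 900, "m2": 1200}

diffs = paired_delay_differences(generic_spray, spray_and_wait)
print(mean(generic_spray.values()), mean(spray_and_wait.values()))
print(mean(diffs.values()))
```

In this toy example the raw mean delay of the first algorithm is larger (1500 s against 1050 s) only because it delivers an additional slow message, whereas on the messages delivered by both it is 300 s faster. This is the same effect that makes the averages in Figure 8 misleading.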

In this set of experiments, nodes in group B act as transit nodes: they have opportunities to contact both the source nodes (group A) and the destination nodes (group C), but only for a limited time. Since nodes in group A and nodes in group C never meet during the whole experiment, nodes in group A must forward their messages to nodes in group B as soon as possible so that group B can carry them. When nodes in group B encounter nodes in group C, they deliver the messages to them.

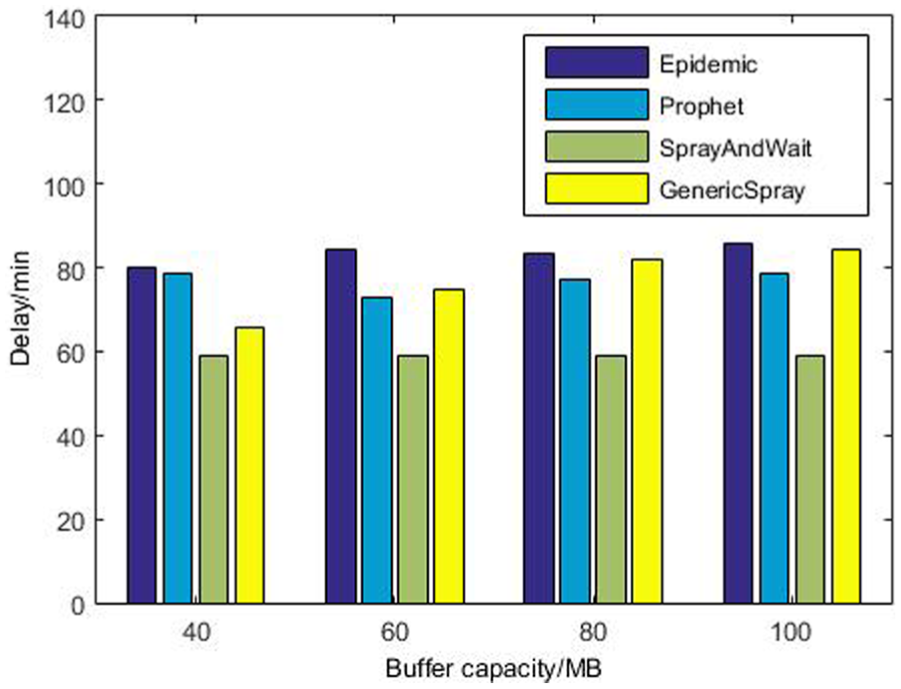

Performance comparison under different cache capacities

The simulation analysis shows that, in this experimental scenario, nodes in group B carry messages for a long time before forwarding them, which puts considerable pressure on their buffer space. Therefore, in the following experiments, the assembly time is fixed at 30 min, and we adjust the node’s cache capacity and observe how the performance of the routing algorithms changes, as shown in Figures 11–13.

The change of message delivery rate with different node cache capacities.

The change of message delivery delay with different node cache capacities.

The change of transmission overhead with different node buffer capacities.

The results show that the relative performance of the four routing algorithms under different cache capacities is similar to that in the previous experiment. However, a larger cache improves the performance of Epidemic, Prophet, and GenericSpray to varying degrees, but not that of SprayAndWait. This is because Epidemic and Prophet are based on message replication: the larger a node’s cache capacity, the more messages it can carry, which brings better transmission performance, especially for Epidemic. Based on its prediction of encounter opportunities, GenericSpray purposefully concentrates messages on group B nodes, so its transmission performance improves when the buffer capacity is increased. However, GenericSpray has a limited cache requirement because its number of copies is relatively fixed. In contrast, although SprayAndWait also limits the number of copies, it does not tend to concentrate messages on group B nodes. The distribution of its messages over nodes is determined entirely by the nodes’ encounter chances, so its demand for cache capacity is very low. The experiment results show that 40 MB of space is sufficient.

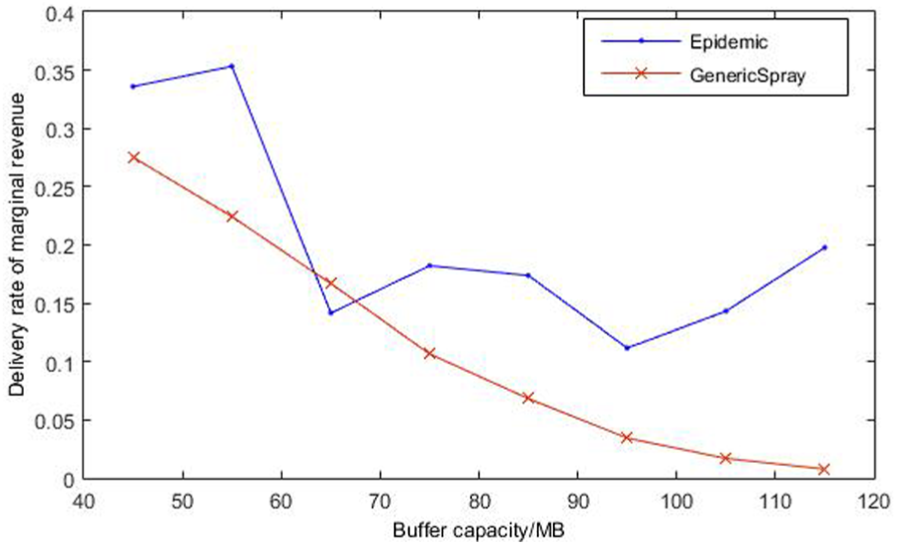

Figure 14 compares Epidemic and GenericSpray in terms of the marginal revenue of cache capacity, that is, the percentage increase in the delivery rate for each additional 10 MB of cache space. Clearly, Epidemic’s marginal revenue is greater than that of GenericSpray. GenericSpray’s marginal revenue declines more rapidly, whereas Epidemic’s even rises after a turning point. This illustrates that GenericSpray’s demand for cache is very modest.

Marginal revenue of delivery rate with respect to buffer capacity.
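The marginal revenue metric can be computed from a measured delivery-rate curve as sketched below. The sketch assumes that the "percentage increase" is measured in percentage points of delivery rate per 10 MB of additional cache; the function name and the sample values used in the usage line are hypothetical.

```python
from typing import List, Sequence


def marginal_revenue(capacities_mb: Sequence[float],
                     delivery_rates_pct: Sequence[float],
                     step_mb: float = 10.0) -> List[float]:
    """Gain in delivery rate (percentage points) per extra `step_mb` of cache.

    The two sequences must be aligned and sorted by increasing capacity.
    """
    points = list(zip(capacities_mb, delivery_rates_pct))
    gains = []
    for (cap0, rate0), (cap1, rate1) in zip(points, points[1:]):
        # Normalize the gain to the number of `step_mb` increments added.
        gains.append((rate1 - rate0) / ((cap1 - cap0) / step_mb))
    return gains


# Hypothetical curve: 30% at 10 MB, 40% at 20 MB, 50% at 40 MB.
print(marginal_revenue([10, 20, 40], [30.0, 40.0, 50.0]))
```

A sequence of gains that falls quickly toward zero, as reported for GenericSpray, indicates that additional cache brings little further benefit.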

Performance comparison under different cache strategies

Finally, in the simulation experiment all messages are divided into four levels according to the publish/subscribe framework, with importance decreasing from the first level to the last. Table 3 shows the proportion of the total number of messages accounted for by each level.

Proportion of messages at different levels.

We use the LGMM as the mobility model for the node groups and GenericSpray as the routing algorithm for message transmission. The cache size of each node is set to 20 MB, the message lifetime is set to 300 s, and the size of each message is between 500 KB and 1 MB. The entire simulation lasts 7000 s. Table 4 compares the experiment results of the cache management mechanism based on the CI policy with those of the FIFO mechanism.

Performance comparison under CI and FIFO cache strategy.

CI: content importance; FIFO: first-in-first-out.

As the experiment results in Table 4 show, the cache management mechanism based on the CI policy provides a better service quality guarantee for important messages, especially when bandwidth and storage resources are limited: under CI only one type A message is lost, while FIFO loses more than half of the messages. In addition, the four types of messages are served strictly in order of importance, and the delivery success rates of important messages are significantly higher than those of less important messages. The CI strategy is therefore valuable for keeping emergency rescue operations running smoothly in complicated and harsh network environments.

Conclusion

Emergency communication environments are complex and changeable, which makes it hard to guarantee network coverage and connectivity. Delivering emergency commands and disaster information in a timely and reliable way is vital to the organization of rescue. DTN makes use of the communication opportunities created by node contacts to transmit data hop by hop, which makes it well suited to providing robust information delivery in emergency communications.

However, most existing DTN information transmission mechanisms do not fully consider the characteristics of emergency rescue activities and their service demands, which results in performance problems.

Based on a summary and analysis of existing DTN content delivery mechanisms and routing algorithms, and taking into consideration the movement rules and service characteristics of rescue teams in the emergency communication environment, we design an LGMM and propose a location-assisted DTN content delivery mechanism. The mechanism comprises a publish/subscribe architecture based on content categorization and a generic DTN routing algorithm that predicts the opportunity of location overlap. A cache management strategy based on CI is also proposed.

LACDS effectively combines content cache management, information publish/subscribe, and message routing. It predicts the chance of encounters among rescue groups through the course of action. It not only significantly reduces the message delivery delay and the number of copy forwardings but also improves the pertinence and timeliness of messaging through message classification, which ensures the priority delivery of important messages. On the ONE simulation platform, the proposed information transmission mechanism is compared with typical DTN routing algorithms and a typical cache management strategy, which verifies the feasibility and effectiveness of the proposed scheme. In the future, social network features can be exploited to improve the accuracy and timeliness of message forwarding. Embedding network coding into content distribution can also further improve the efficiency of message transmission. Last but not least, safety and fairness should also be considered.

Footnotes

Handling Editor: Al-Sakib Khan Pathan

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.