Abstract

As neurorehabilitation research continues to grow, the field must ensure its scientific discoveries are implemented into routine clinical care. Without targeted efforts to increase the implementation of evidence into practice, patients may never see the benefits of interventions, assessments, and technologies developed in the confines of empirical studies. This article serves as a response to Lynch et al’s 2018 Point of View piece in Neurorehabilitation and Neural Repair that underscored the urgent need for implementation studies to expedite the application of neurorehabilitation evidence in practice. To address this need, we present 4 considerations for investigators designing studies at the intersection of implementation and neurorehabilitation research: (a) consideration of guiding theories, models, and frameworks, (b) consideration of implementation strategies, (c) consideration of target outcomes, and (d) consideration of hybrid effectiveness-implementation designs. To conclude, we provide a study exemplar to depict how these considerations can be integrated into the neurorehabilitation research field to narrow the evidence-to-practice gap.

Introduction

Over the past decade, there has been a substantial increase in research to establish assessments, interventions, and technologies designed to mitigate the devastating effects of stroke.1-3 Despite these empirical investigations and their related findings, extensive well-documented barriers continue to limit the implementation of stroke rehabilitation research into routine clinical practice. 4 The most frequently cited barriers to evidence implementation include clinicians’ lack of time, inadequate training, organizational constraints, and poor intervention descriptions in the literature.4-7 Without targeted efforts to overcome these barriers, the stroke rehabilitation field will be limited in its ability to provide high-quality, evidence-based care to the stroke survivor population.

Fortunately, the field of implementation research is dedicated to developing and deploying implementation strategies that are designed to combat barriers to evidence use in practice.8-10 Lynch et al’s 11 Point of View article in Neurorehabilitation and Neural Repair strongly advocated for journals, funding agencies, practitioners, investigators, and policymakers to actively support the conduct of implementation research with the goal of advancing stroke rehabilitation. Specifically, Lynch et al asserted that “investing in research to determine how best to translate research findings into clinical practice. . .should be seen as a priority to the research and clinical community.” Indeed, the intersection of stroke rehabilitation and implementation research is an understudied area, leaving significant knowledge gaps in the strategies most effective for facilitating evidence use in practice. 12 While the need for such research is apparent, specific examples of implementation research studies in stroke rehabilitation are just beginning to emerge in the literature.13-16 As such, stroke rehabilitation researchers have few exemplars for how to design studies guided by implementation research principles, theories, analyses, and outcomes.

This article serves as a response to Lynch et al’s 11 call for implementation research studies that are urgently needed in the stroke rehabilitation field. We present a review of (a) implementation theories, models, and frameworks, (b) implementation strategies, (c) target outcomes, and (d) implementation study designs—all of which should be taken into consideration when conducting implementation research. We also provide a transparent example of a study to demonstrate how these considerations can be integrated into neurorehabilitation research and practice.

Overview of Implementation Research

Implementation research – often referred to as “implementation science” – is the scientific study of methods to promote the use of research findings in real-world practice. 10 Studies of implementation may include the identification of the contextual factors that impede or promote the implementation of specific best practices, 17 may be observational studies that examine the natural process used to adopt evidence-based interventions in routine care, 18 or may include experimental studies designed to test the effect of “implementation strategies” on improving practice patterns of practitioners, clinics, and systems. 19 To advance the translation of evidence into practice, neurorehabilitation researchers are encouraged to consider the following principles from the implementation science field and implementation research logic models 20 when developing their own implementation studies and research agendas.

Consideration #1: Guiding Theories, Models, and Frameworks

Theories, models, and frameworks (hereafter collectively referred to as “theories”) in implementation research are intended to help researchers identify implementation determinants (eg, barriers), select and evaluate implementation strategies, frame study questions, and drive study objectives. 21 By applying theory, researchers can generate hypotheses, predict relationships among variables, and/or contextualize findings to maximize their generalizability to other settings. However, implementation theories have been historically underutilized, limiting how study findings can be interpreted and extrapolated. 22 We recommend that neurorehabilitation investigators interested in conducting implementation research familiarize themselves with theories that can be used to guide the design and conduct of their studies. Nilsen 23 indicated that theories have 3 main purposes in implementation research: (1) describe or guide the process of moving research into practice; (2) understand and/or explain what influences outcomes related to implementation; and (3) evaluate implementation. The Exploration, Preparation, Implementation, and Sustainment framework, 24 for instance, has been widely applied to inform the process of translating evidence into real-world care. It describes how the dynamic interactions between contextual factors (eg, organizational processes; patient characteristics; partnerships) influence the implementation of evidence-based practices. 25 In the rehabilitation field specifically, Quatman-Yates et al 26 applied this framework to (a) explore the need for a community-based fall prevention program initiated by community paramedics and the factors influencing program implementation, (b) develop and deploy strategies to support program uptake, and (c) identify opportunities to promote program sustainment over time.

As another example, the Consolidated Framework for Implementation Research (CFIR) 27 is a meta-theoretical framework composed of 39 contextual constructs that influence the extent to which evidence-based practices are implemented. It is considered to be a determinant framework and has been applied to understand the barriers and facilitators – or independent variables – that have an impact on implementation outcomes (to be described later) in healthcare systems.17,28,29 Though determinant frameworks, such as the CFIR, are helpful for identifying the multi-level factors (eg, clinician-, organization-, policy-level) that may have a hypothesized relationship with the implementation of evidence-based practices, Nilsen explains that these types of frameworks do not attempt to specify the causal mechanisms of implementation. Given this limitation of determinant frameworks, the implementation science field often borrows from behavior change theories (eg, Theory of Planned Behavior 30 ; Social Cognitive Theory 31 ), as these theories help to explain how individual- and systems-level changes occur when studying the implementation of new practices or interventions. 23

To evaluate implementation, the commonly used Reach, Effectiveness, Adoption, Implementation, and Maintenance (RE-AIM) framework 32 provides structure for assessing the uptake of evidence-based practices by practitioners, organizations, and/or systems. Reach refers to the individuals to whom an intervention has been delivered and is often measured by the total number of those who participated. Effectiveness (or efficacy) is measured by assessing whether an intervention achieved its desired outcomes and is viewed as the “traditional” form of evaluation to answer the question, “Did the intervention work?” Adoption, not to be confused with Reach, is the number of clinics, organizations, facilities, or hospitals that use the intervention or program of interest. For instance, if 10 clinics were provided the tools and resources to implement EMG-triggered neuromuscular electrical stimulation with stroke survivors, and 7 clinics reported using it as part of routine practice, this yields a 70% adoption rate. Implementation refers to the extent to which an intervention was implemented as intended, which is otherwise known as “fidelity.” 33 This definition of implementation can be measured through clinician self-report, electronic health record review, and/or observational assessments to determine (a) if all components of an intervention were delivered as expected and (b) what adaptations were made to the intervention when it was implemented. Maintenance refers to the continued use of an intervention by clinicians or systems over time. Continuing the Adoption example, if only 5 clinics reported continued use of EMG-triggered neuromuscular electrical stimulation after 1 year, this would indicate a decline in intervention Maintenance over the 12-month period.
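The Adoption and Maintenance arithmetic in the clinic example above can be sketched in a few lines of Python. This is an illustrative sketch only: the helper name `proportion` and the variable names are assumptions, and Maintenance is computed against two possible denominators because the example in the text does not specify one.

```python
def proportion(numerator: int, denominator: int) -> float:
    """Return numerator / denominator expressed as a percentage."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100.0 * numerator / denominator


# Counts taken from the worked example in the text.
clinics_offered = 10       # clinics given tools and resources
clinics_adopting = 7       # clinics reporting routine use
clinics_at_12_months = 5   # clinics still reporting use after 1 year

adoption_rate = proportion(clinics_adopting, clinics_offered)

# Assumption: two plausible denominators for Maintenance.
maintenance_of_offered = proportion(clinics_at_12_months, clinics_offered)
maintenance_of_adopters = proportion(clinics_at_12_months, clinics_adopting)

print(f"Adoption: {adoption_rate:.0f}% of clinics offered the intervention")
print(f"Maintenance: {maintenance_of_offered:.0f}% of clinics offered")
print(f"Maintenance: {maintenance_of_adopters:.1f}% of adopting clinics")
```

Whichever denominator is chosen, reporting it explicitly makes Adoption and Maintenance figures easier to compare across studies.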

The theories, models, and frameworks described above serve as just 3 examples that can be applied in neurorehabilitation research. The following online resource is also a useful tool for investigators interested in comparing and contrasting theories that may be most suitable for their work: https://dissemination-implementation.org/tool/. Additionally, Moullin et al 34 provide 10 key recommendations to consider when selecting, applying, and reporting theories in implementation studies.

Consideration #2: Selecting Implementation Strategies

Broadly defined, implementation strategies are the approaches used to promote the uptake of evidence-based interventions, assessments, and programs in real-world practice. 35 Examples of implementation strategies include developing educational materials relative to a particular intervention, appointing local champions to lead implementation efforts, embedding clinician reminders into electronic health record systems, performing chart audits, providing feedback to clinicians on their use of an intervention over time, and changing electronic systems to allow for more streamlined documentation.36,37 Unsurprisingly, just as a single rehabilitation intervention cannot lead to guaranteed improvements for all patients, no single implementation strategy can result in guaranteed improvements for all healthcare systems. Rather, implementation strategies must be developed in response to the needs and abilities of these systems, their clinicians, and their patient populations, hence the critical importance of applying implementation theory to guide implementation studies. For instance, if clinicians’ lack of knowledge and skill in using a particular intervention is perceived to be a barrier (or determinant) hindering implementation, then an appropriate implementation strategy may be to deploy a series of ongoing training sessions combined with expert consultations to enhance clinicians’ knowledge and skill acquisition. 38 All too often, however, implementation strategies are selected and deployed without thorough understanding of the contextual factors that either promote or impede implementation efforts and the hypothesized mechanisms through which implementation changes occur.39,40 In neurorehabilitation research in particular, implementation studies have yielded more promising results when the selection of implementation strategies was informed by theory as compared to those studies that did not clearly use theory to guide decision-making. 41 Though taxonomies exist to help researchers identify potential implementation strategies that can support evidence-based practice uptake,37,42 we strongly encourage researchers to first identify the determinants and mechanisms hypothesized to influence implementation prior to selecting strategies that aim to support the uptake of evidence-based interventions or programs. Once selected, implementation experts suggest that strategies should be clearly reported based on recommendations by Proctor et al 35 to maximize their replicability in other studies and real-world practice scenarios.

Consideration #3: Evaluating Implementation Outcomes

Unlike studies commonly conducted in stroke rehabilitation research, patient-level outcomes are not typically the primary focus of implementation studies. Rather, studies of implementation collect data that are representative of changes in implementation outcomes. As defined by Proctor et al, 43 implementation outcomes are “the effects of deliberate and purposive actions to implement new treatments, practices, and services.” In addition to the aforementioned RE-AIM model, other implementation outcomes include acceptability, adoption, appropriateness, costs, feasibility, fidelity, penetration (or reach), and sustainability.44,45 Options for measuring these outcomes include, for instance, (a) conducting focus groups with practitioners to determine the extent to which an intervention can be sustainably used in practice, (b) completing chart reviews to evaluate practitioners’ adoption of a particular intervention or standardized assessment, and (c) observing the behavioral patterns of practitioners to assess their level of fidelity to an intervention protocol. Proctor et al 43 provide in-depth descriptions of each of these implementation outcomes as well as recommendations for measurement.

Consideration #4: Conducting Hybrid Effectiveness-Implementation Studies

One valid concern about conducting implementation research in the stroke rehabilitation field is the strength of the available “evidence-based” interventions and assessments to be implemented. Certainly, interventions and assessments should be supported by high-quality evidence before being implemented in practice, but stroke rehabilitation research is still evolving, particularly with regard to intervention development. Accordingly, stroke rehabilitation research may be well-suited for hybrid effectiveness-implementation designs. 46 These hybrid designs are characterized by the collection of implementation outcomes (eg, adoption) as well as patient-level outcomes (eg, grip strength). As described by Landes et al, 47 Hybrid Type 1 studies are designed with a primary purpose to test the effectiveness of an intervention on patient outcomes while also allowing for the collection of data representing intervention implementation, such as the barriers or facilitators influencing intervention use. Hybrid Type 2 studies have an equal focus on intervention effectiveness and the effectiveness of one or more implementation strategies. Lastly, Hybrid Type 3 studies prioritize testing implementation strategies while also collecting data reflecting intervention effectiveness on patient-level outcomes. 46

While these 4 considerations collectively serve as a general “primer” for neurorehabilitation researchers interested in implementation science, they can be further illuminated through study exemplars. Below, we describe a multi-year pilot implementation study – currently in progress – to depict how 3 of the aforementioned considerations have been integrated into the research design and study activities.

Study Exemplar: Implementation STRategies for Outcome Measurement

Background

Upper extremity motor deficits afflict up to 80% of stroke survivors 48 and lead to major impairments in self-care and work-related activities. Occupational therapy practitioners commonly treat these significant impairments at the acute, inpatient, and outpatient levels of care. 49 Standardized upper extremity assessments of motor function are widely recommended to guide treatment selection and allow for the objective evaluation of stroke survivors’ response to occupational therapy treatment.50,51 Despite the availability of valid and reliable assessments,52,53 particularly the Fugl–Meyer Assessment-UE (FMA-UE) 54 and the Action Research Arm Test (ARAT), 50 rates of assessment adoption remain as low as 5% among occupational therapy practitioners. 55 Thus, the insufficient use of standardized assessments indicates a critical need to (a) identify the factors influencing implementation and (b) deploy strategies to support upper extremity assessment implementation in stroke rehabilitation. To address this need, Implementation STRategies for Outcome Measurement (I-STROM) was designed as an exploratory sequential mixed-methods study with the following objectives:

Objective 1. Establish the modifiable determinants (eg, barriers and facilitators) perceived to impact the adoption of 2 standardized upper extremity assessments (FMA-UE and ARAT) by occupational therapy practitioners.

Objective 2. Develop and test a bundle of implementation strategies to facilitate adoption of the FMA-UE and ARAT by occupational therapy practitioners across acute, inpatient, and outpatient stroke rehabilitation.

Consideration #1: Guiding Theories, Models, and Frameworks

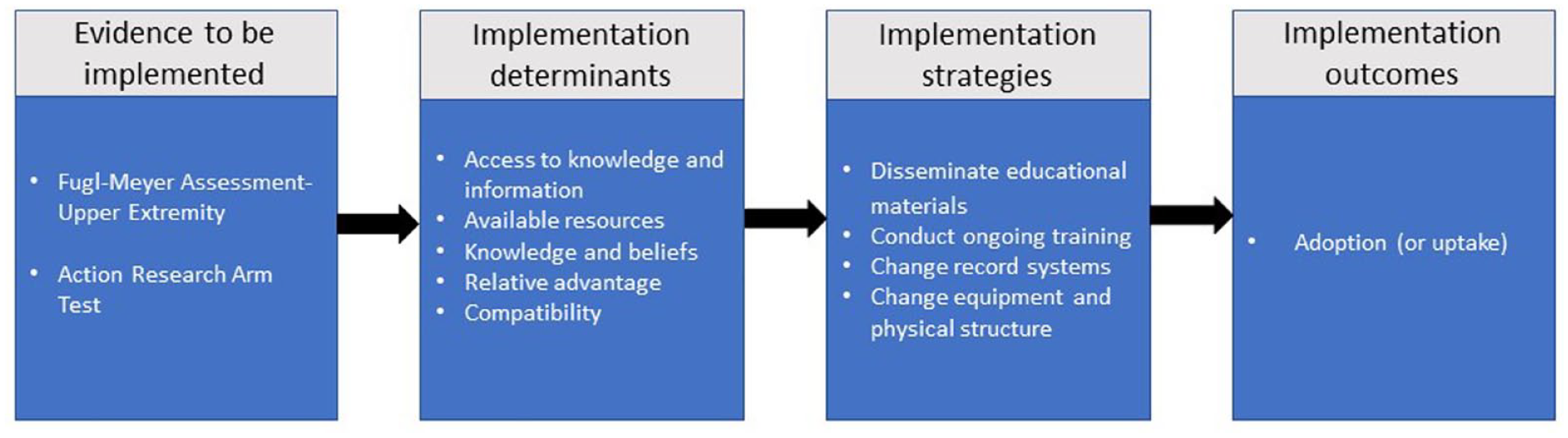

The I-STROM study is guided primarily by the CFIR. 27 Our a priori selection of the CFIR was informed by recent literature on common determinants influencing evidence-based practice implementation in occupational therapy. 4 Early I-STROM study activities included the collection of focus group data from occupational therapy practitioners in acute care, inpatient rehabilitation, and outpatient rehabilitation. Our focus group question guide was developed using resources found on the CFIR’s technical assistance website (cfirguide.org). Once collected, focus group data were analyzed by means of open coding to identify the following 5 major determinants of FMA-UE and ARAT implementation across the stroke care continuum: (a) access to knowledge and information; (b) available resources; (c) knowledge and beliefs; (d) relative advantage; (e) compatibility.

Consideration #2: Selecting Implementation Strategies

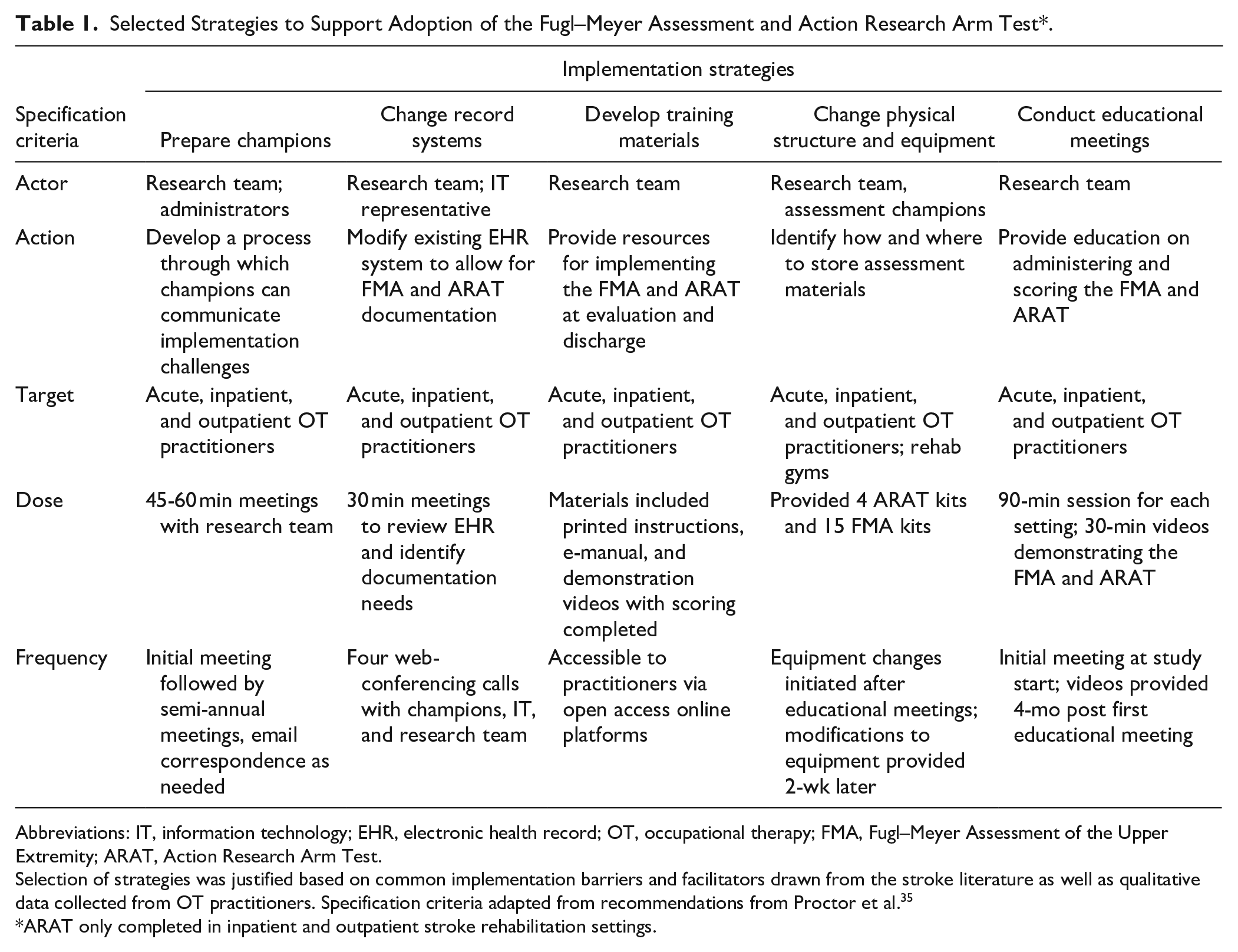

To promote standardized assessment adoption, we selected strategies in response to our 5 identified implementation determinants. These strategies were drawn from the Expert Recommendations for Implementing Change (ERIC) taxonomy. 37 The ERIC taxonomy is a catalog of 73 implementation strategies that can be deployed to enhance the uptake of evidence in practice. For instance, practitioners indicated during focus groups that a lack of available resources (eg, sufficient documentation options) hindered their ability to track changes in FMA-UE and ARAT scores over time. Thus, from the ERIC taxonomy, we selected the strategy “change record systems” and collaborated with our rehabilitation informatics department to modify fields in the electronic health record system to allow for more streamlined documentation of the FMA-UE and ARAT. Our strategies were selected based on guidance from the implementation science literature,56,57 vetted with key informants from each practice setting (acute, inpatient, outpatient), and specified based on reporting recommendations for implementation strategies. 35 Our final set of strategies is listed and described in Table 1.

Table 1. Selected Strategies to Support Adoption of the Fugl–Meyer Assessment and Action Research Arm Test*.

Abbreviations: IT, information technology; EHR, electronic health record; OT, occupational therapy; FMA, Fugl–Meyer Assessment of the Upper Extremity; ARAT, Action Research Arm Test.

Selection of strategies was justified based on common implementation barriers and facilitators drawn from the stroke literature as well as qualitative data collected from OT practitioners. Specification criteria adapted from recommendations from Proctor et al. 35

ARAT only completed in inpatient and outpatient stroke rehabilitation settings.

Consideration #3: Evaluating Implementation Outcomes

Given our prior data indicating that the adoption rates of the FMA-UE and ARAT were as low as 5%, we hypothesized that our bundle of implementation strategies would improve the adoption of these assessments across all 3 practice settings. As defined by Proctor et al, adoption is the intentional act of employing an innovation or evidence-based practice. 43 Our measurement of changes in adoption is ongoing through the collection and analysis of electronic health record data from stroke survivors who were referred to occupational therapy in acute care, inpatient rehabilitation, and/or outpatient rehabilitation. Our preliminary baseline data indicated that, before occupational therapy practitioners were exposed to our bundle of implementation strategies, the FMA-UE was used in just 3.5% of occupational therapy encounters (eg, evaluations or sessions) between July 2020 and June 2021. During this same time period, the ARAT was not used in any practice setting (Figure 1).
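As a rough illustration of how an encounter-level adoption rate of this kind could be derived from electronic health record data, consider the following Python sketch. The `Encounter` structure, its field names, and the sample records are hypothetical and do not reflect the I-STROM data model or results; the sketch only assumes that adoption is the share of occupational therapy encounters in which a given assessment was documented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Encounter:
    """One occupational therapy encounter (hypothetical structure)."""
    setting: str            # "acute", "inpatient", or "outpatient"
    assessments: frozenset  # names of assessments documented


def adoption_rate(encounters, assessment):
    """Percentage of encounters in which `assessment` was documented."""
    if not encounters:
        raise ValueError("no encounters supplied")
    used = sum(1 for e in encounters if assessment in e.assessments)
    return 100.0 * used / len(encounters)


# Hypothetical records for illustration only; not I-STROM data.
encounters = [
    Encounter("acute", frozenset()),
    Encounter("inpatient", frozenset({"FMA-UE"})),
    Encounter("outpatient", frozenset()),
    Encounter("inpatient", frozenset()),
]

fma_rate = adoption_rate(encounters, "FMA-UE")
arat_rate = adoption_rate(encounters, "ARAT")
print(f"FMA-UE: {fma_rate:.1f}% of encounters")
print(f"ARAT: {arat_rate:.1f}% of encounters")
```

Filtering the list by `setting` before calling `adoption_rate` would give setting-specific rates under the same assumption.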

Figure 1. Implementation research logic model as applied to the I-STROM study. Format adapted from Smith et al.

Conclusion

The burgeoning advancements in stroke rehabilitation research are encouraging for practitioners, investigators, patients, and caregivers. However, with these advancements comes the responsibility to ensure that promising interventions, assessments, and technologies can be effectively implemented into practice. Lynch et al’s 11 recognition of the value of implementation research is timely given the rapid growth of stroke rehabilitation research and the expected rise in stroke survivors in the coming decades. 58 The study described in this paper serves as one example of a mixed-methods implementation study design that can be applied to the stroke rehabilitation field. While the described study is heavily guided by implementation science methodology, we encourage investigators to weigh the considerations presented in this Point of View piece before embarking upon their own implementation research agenda. By doing so, investigators will advance the integration of implementation science methodologies into neurorehabilitation research, expediting the application of evidence in real-world care.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research has been supported in part by an Implementation Research Grant (#AOTFIR21JUCKETT) funded by the American Occupational Therapy Foundation.