Abstract

Plain Language Summary

We developed a measure to verify that the Alert Program® for self-regulation is being delivered as intended by its developers. Through reviewing existing research, analyzing program materials, and consulting experts, we identified the essential components that should be present when using the program. Expert review showed strong agreement on the core elements, and testing demonstrated that different evaluators could use the measure consistently. This fidelity measure will help ensure that children reliably receive the program across different settings.

Keywords

Introduction

The occupational therapy profession emphasizes developing evidence-based practices that are effective, collaborative, and accessible for clients (American Occupational Therapy Association, 2017). To achieve this objective, implementing fidelity measures is essential as they assess whether program delivery adheres to the developers’ intended design. Such measures are vital for producing dependable evidence regarding the intervention’s efficacy (Song et al., 2010). While evidence-based practice has received significant attention in autism research (Steinbrenner et al., 2020), fidelity measures for interventions addressing core occupational therapy needs remain scarce. This gap is particularly problematic for occupational therapy interventions commonly sought by caregivers of autistic children; differences in sensory reactivity and self-regulation fall into this category (Pfeiffer et al., 2017).

Self-regulation is a complex concept encompassing the ability to regulate behaviors, arousal or attention levels, and emotions (Blackwell et al., 2014). Self-regulation challenges can significantly impact quality of life and impose severe limitations, as individuals lack effective strategies to manage their emotional and arousal responses (Martini et al., 2016). The inability to self-regulate has far-reaching consequences on a child’s engagement in various occupations, including academic pursuits and social interactions (Gill et al., 2018; Mac Cobb et al., 2014a). In the long term, these difficulties may lead to social isolation, as the child may struggle with underdeveloped social-emotional behaviors and experience challenges with self-esteem (Wells et al., 2012). Thus, addressing self-regulation challenges is a critical area of focus for occupational therapists.

Children with self-regulation challenges, including autistic children, often exhibit behavioral difficulties with self-control, frustration, socialization, and academic tasks (Barnes et al., 2008). Research indicates that 80%–90% of autistic people experience some degree of sensory reactivity differences (Ben-Sasson et al., 2009; Philpott-Robinson et al., 2016; Schaaf et al., 2012). These sensory processing differences involve challenges regulating behavioral responses to sensory inputs, manifesting as sensory over-responsivity, sensory under-responsivity, and/or sensory seeking (Miller et al., 2007). Such sensory differences are closely linked to the behavioral challenges of poor self-regulation (Miller et al., 2007), thereby exacerbating day-to-day challenges in learning environments where social interactions become increasingly complex (Wells et al., 2012). Occupational engagement in learning is greatly impacted by sensory differences, which are also linked with emotional dysregulation and anxiety (Lidstone et al., 2014).

Occupational therapists employ diverse interventions to enhance self-regulation skills, acknowledging each individual’s unique needs and strengths. The Alert Program® (AP) is one approach commonly used in clinical and research contexts (Williams & Shellenberger, 1996). The AP has several distinctive features that differentiate it from other self-regulation interventions. It utilizes a car engine metaphor that many children find relatable. The program features a sequential structure with progressive stages (further described in Methods). The AP is commonly implemented in clinical settings, potentially indicating practical utility. In addition, its emphasis on sensorimotor strategies aligns with occupational therapy’s holistic approach to intervention, which addresses physical, cognitive, and emotional aspects of functioning (Nash et al., 2015). The AP was specifically developed to assist children in developing social strategies and increasing their awareness of arousal through sensory-based coping interventions. Through program participation, children learn to identify and modulate their arousal levels, optimize their daily performance, and adapt to environmental requirements (Nash et al., 2015).

Despite the AP’s widespread use in clinical practice, a significant gap exists in the literature regarding standardized fidelity measures for this intervention. The AP has been utilized in various research contexts, with studies examining its effectiveness across different populations and settings. Research has investigated the program’s impact on children with autism spectrum disorder (Barnes et al., 2008), learning disabilities (Wells et al., 2012), and emotional regulation challenges (Nash et al., 2015). These studies have generally reported positive outcomes in improving self-regulation skills, though methodological approaches and implementation methods have varied considerably across investigations. The absence of a standardized fidelity measure affects both research and clinical practice in occupational therapy in three ways. First, without a fidelity measure, researchers cannot verify whether the AP is being implemented as intended, potentially compromising the validity of outcome studies. Second, clinicians lack a structured framework to guide their implementation, which may lead to drift from the program’s core principles. Third, the absence of a standardized measure impedes comparison across studies, limiting the development of a robust evidence base for the AP.

Developing a fidelity instrument is vital for improving the validity and rigor of intervention research (Borrelli et al., 2005). A fidelity instrument for the AP would guide implementation and ensure consistent use of the program. Moncher and Prinz (1991) provided comprehensive recommendations for promoting and verifying fidelity within intervention studies, addressing critical elements such as treatment definitions, implementor training and characteristics, supervision, verification methods, and data reporting (see Supplemental Table 1). These recommendations extend beyond developing a fidelity measure by including guidelines for implementing and monitoring interventions effectively. By incorporating these established principles, researchers can ensure that interventions are implemented consistently and faithfully, allowing for better comparison and replication of results across studies.

Therefore, the primary objective of this study was to develop a fidelity instrument that can effectively assess the fidelity and adherence of occupational therapists when delivering the AP to children.

Method

Before beginning our fidelity measure development, it was important to understand the core structure of the AP. The AP consists of three progressive stages (Williams & Shellenberger, 1996). The first stage focuses on helping children learn the language and labeling associated with their engine levels. Using the metaphor of a car engine, the AP teaches children how to recognize, modify, and regulate their arousal states (Blackwell et al., 2014). This metaphorical approach helps children understand and conceptualize their internal arousal states in a relatable way. The second stage encourages children to employ various sensorimotor strategies to modulate their engine levels effectively. Finally, in the third stage, children are supported in developing personalized strategies to facilitate engagement in meaningful occupations and reflecting on their progress (Gill et al., 2018). This stage empowers children to recognize their emotions and attention state and develop the ability to adapt their arousal states to suit different situations (Mac Cobb et al., 2014a).

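The measure was developed following the three steps for establishing fidelity criteria described by Mowbray et al. (2003):

1. Identification and specification of fidelity criteria,

2. Development of methods to quantify the fidelity criteria, and

3. Assessment of the reliability and validity of the fidelity criteria.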

A final fourth step was implemented to establish inter-rater reliability. While inter-rater reliability could conceptually fit within Step 3, it was separated because Step 3 focused on establishing content validity through expert consensus, whereas Step 4 specifically tested the measure’s reliability in practical application with multiple raters.

Step 1: Identifying the Fidelity Criteria

Two concurrent activities comprised Step 1: conducting a narrative literature review and analyzing the AP intervention manual. While the AP manual contains comprehensive implementation details, the literature review was essential to understand how researchers and clinicians adapted the program across different contexts and populations. This dual approach ensured the fidelity measure would capture both the developer’s intentions and real-world implementation practices.

The narrative review was conducted following the Scale for the Assessment of Narrative Review Articles (SANRA) guidelines (Baethge et al., 2019) to ensure methodological quality. These guidelines address six key criteria: justification of the review’s importance, clear articulation of aims, comprehensive description of literature search methods, proper article referencing, scientific reasoning in interpretation, and appropriate presentation of relevant data.

For the narrative review, key search terms (self regul*, Alert program, and children) were used in major databases (CINAHL, MEDLINE, PsycINFO, and ERIC) and in hand-searching selected occupational therapy journals. Literature was searched through February 2018 with the following inclusion criteria: (a) published in English, (b) peer-reviewed, and (c) pertaining to the implementation of the AP intervention. All study designs that contained implementation details were included. Duplicate studies identified across different databases were screened and included only once in the final analysis.

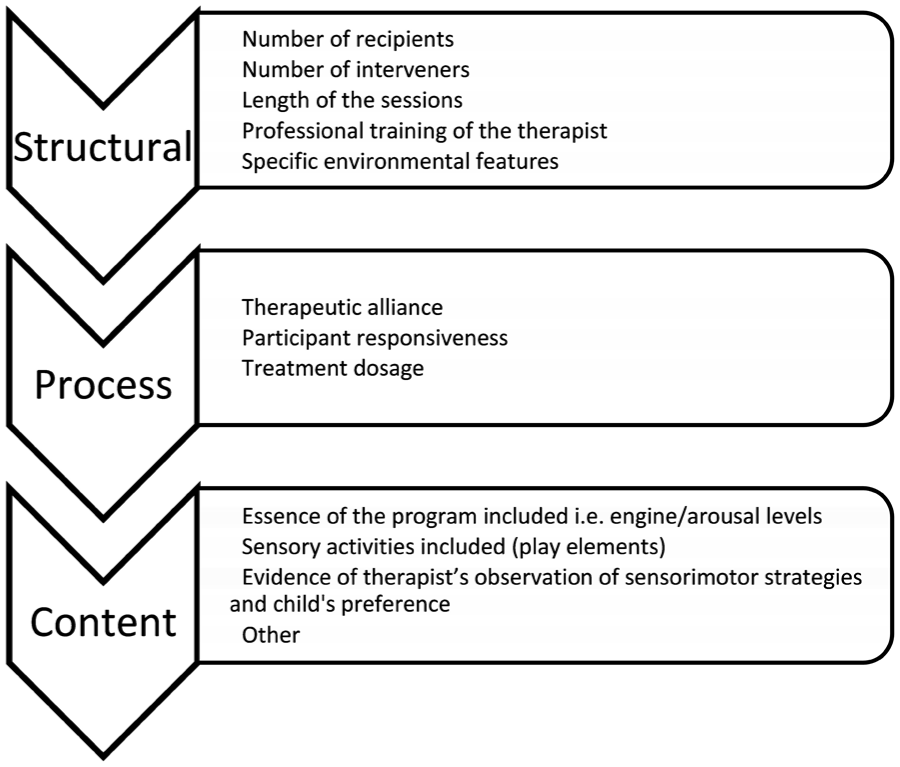

Studies were reviewed independently by two reviewers, with the lead author reviewing all studies and each co-reviewed by another author (AEL/SJL/KPR). In cases of disagreement, a fourth author was consulted. Data extraction initially focused on structure and process elements. However, early in the review, a third element, content, was added (Figure 1). This content element was part of the theoretical framework recommended by Mowbray et al. (2003) but not explicitly included in the initial extraction plan. Upon reviewing the literature and AP manual, it became apparent that content elements (specific AP activities, language, and techniques) were essential for comprehensive fidelity assessment. This addition ensured alignment with established fidelity frameworks.

Figure 1. Breakdown of Core Elements for Narrative Review of Literature.

All authors reviewed the AP intervention manual, and three authors completed the online AP training modules to verify core elements as intended by its developers.

Step 2: Development and Expert Review of the Fidelity Measure

Based on Step 1 outcomes, a preliminary fidelity measure was constructed using a systematic process. The identified elements were organized into three categories (structural, process, and content). For each element, specific criteria with operational definitions were created based on both the AP manual and literature. These were then formatted into a structured checklist with a rating system to assess implementation quality. This preliminary measure incorporated both essential program elements (e.g., use of engine terminology) and implementation variables (e.g., session length, environmental setup) identified in research studies.
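As a rough illustration of how a checklist of this kind could be represented for scoring, the Python sketch below groups items into the three categories and records a yes/no rating for each item. The class names, field names, and the proportion-based summary are assumptions made for illustration and are not taken from the published measure; only the example item wording is borrowed from the appendix.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# The three fidelity categories named in the text.
CATEGORIES = ("structural", "process", "content")


@dataclass
class ChecklistItem:
    category: str
    description: str
    present: Optional[bool] = None  # None means the item has not been rated yet


@dataclass
class SessionChecklist:
    """Hypothetical container for one rater's checklist for one session."""
    items: List[ChecklistItem] = field(default_factory=list)

    def add(self, category: str, description: str) -> None:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.items.append(ChecklistItem(category, description))

    def rate(self, description: str, present: bool) -> None:
        for item in self.items:
            if item.description == description:
                item.present = present
                return
        raise KeyError(description)

    def proportion_present(self, category: str) -> Optional[float]:
        """Share of rated items in a category marked as present."""
        rated = [i for i in self.items
                 if i.category == category and i.present is not None]
        if not rated:
            return None
        return sum(i.present for i in rated) / len(rated)


# Example items use wording from the appendix; the ratings are invented.
ENGINE = 'Discusses terminology of "engine"'
AROUSAL = "Discusses arousal levels"
LENGTH = "Length of session: 30–60 mins"

checklist = SessionChecklist()
checklist.add("structural", LENGTH)
checklist.add("content", ENGINE)
checklist.add("content", AROUSAL)

checklist.rate(LENGTH, True)
checklist.rate(ENGINE, True)
checklist.rate(AROUSAL, False)

content_score = checklist.proportion_present("content")
print(content_score)  # 0.5
```

Keeping unrated items as `None` rather than `False` is a design choice in this sketch: it separates "element absent" from "element not yet observed", which matters when a rater reviews only part of a session.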

Four experts from Australia and the United States reviewed the measure, focusing on three key areas: assessment of each item’s essentiality, evaluation of terminology alignment, and identification of missing elements. Experts were specifically asked to review:

The comprehensiveness of structural elements (session format, length, therapist qualifications)

The appropriateness of process elements (therapeutic alliance, participant engagement)

The accuracy of content elements (AP-specific components and activities)

The clarity and usability of the measure for research and clinical applications.

Step 3: Revision of the Fidelity Measure

In Step 3, the Mowbray framework (Mowbray et al., 2003) was adapted to prioritize content validity through expert consensus before formal reliability testing. This approach ensured the measure’s content accurately reflected AP core components before assessing implementation consistency.

Step 4: Inter-Rater Reliability

Step 4 specifically tested the practical reliability of the refined measure when used by different evaluators. Establishing strong inter-rater reliability is critical for any fidelity measure that will be used across different research teams or clinical settings to ensure consistent evaluation of program implementation.

Two independent raters (HB and EM) with AP training assessed 15 randomly selected intervention videos from a larger study (one session per child). Each rater independently evaluated whether core structural, process, and content elements were present in each session. Percent agreement was calculated to assess inter-rater reliability, which provides a direct measure of consistency between raters.
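Percent agreement is the number of items on which both raters gave the same rating, divided by the total number of items rated, multiplied by 100. A minimal Python sketch follows; the function name and the ratings are hypothetical and do not reproduce the study data.

```python
from typing import Sequence


def percent_agreement(rater_a: Sequence[bool], rater_b: Sequence[bool]) -> float:
    """Percentage of items on which two raters gave the same yes/no rating."""
    if len(rater_a) != len(rater_b):
        raise ValueError("both raters must rate the same number of items")
    if len(rater_a) == 0:
        raise ValueError("at least one rated item is required")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


# Invented ratings for ten checklist items (True = element present).
rater_one = [True, True, False, True, True, False, True, True, True, True]
rater_two = [True, True, False, True, False, False, True, True, True, True]

agreement = percent_agreement(rater_one, rater_two)
print(agreement)  # 90.0
```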

Results

Step 1: Identifying the Fidelity Criteria

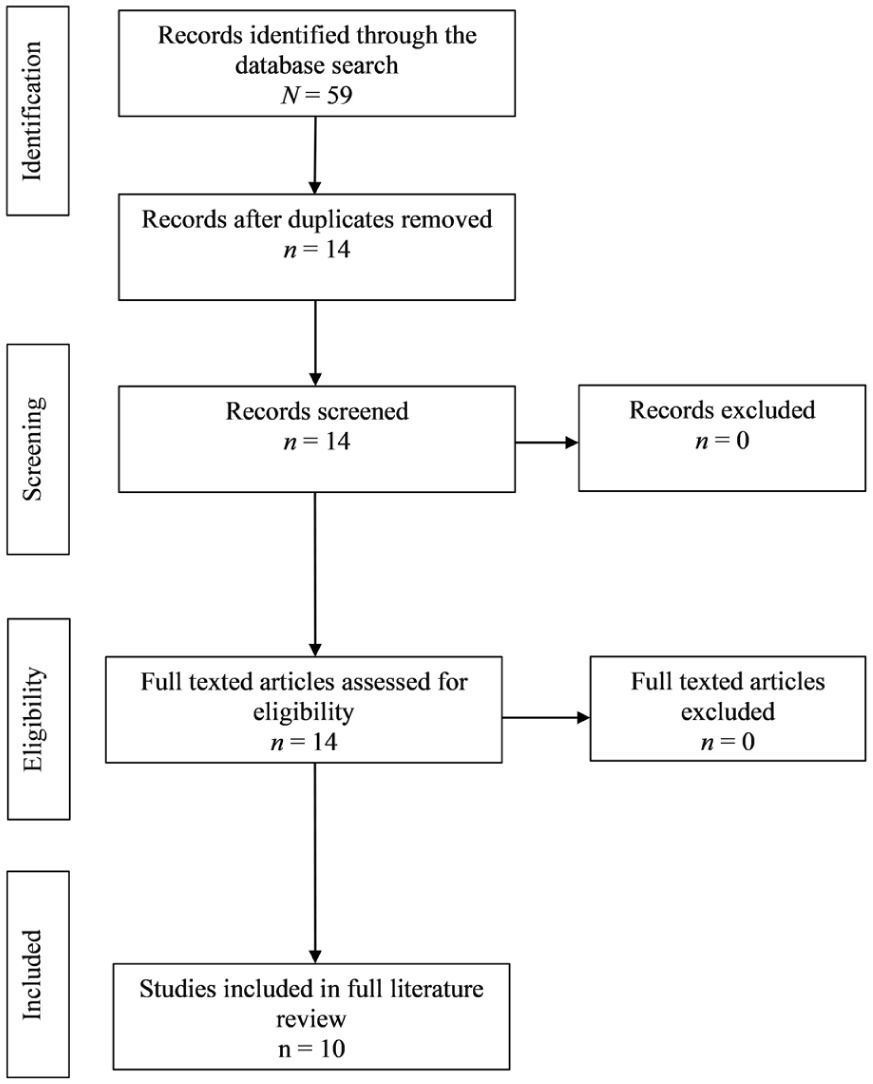

The narrative literature review identified 59 studies, with the screening process depicted in Figure 2. Following duplicate removal and full-text review against inclusion criteria by the first author, 14 studies were included: Barnes et al. (2008); Blackwell et al. (2014); Gill et al. (2018); Mac Cobb et al. (2014a, 2014b); Mah and Doherty (2021); Nash et al. (2015); Pfeiffer et al. (2011); Soh et al. (2015); Soler et al. (2019); Wagner et al. (2017, 2020a, 2020b); and Wells et al. (2012). Data extraction was guided by a standardized form developed to capture the three key elements of fidelity (structural, process, and content) and their subcomponents.

Figure 2. Reporting Items for Narrative Review (Adapted from Page et al., 2021).

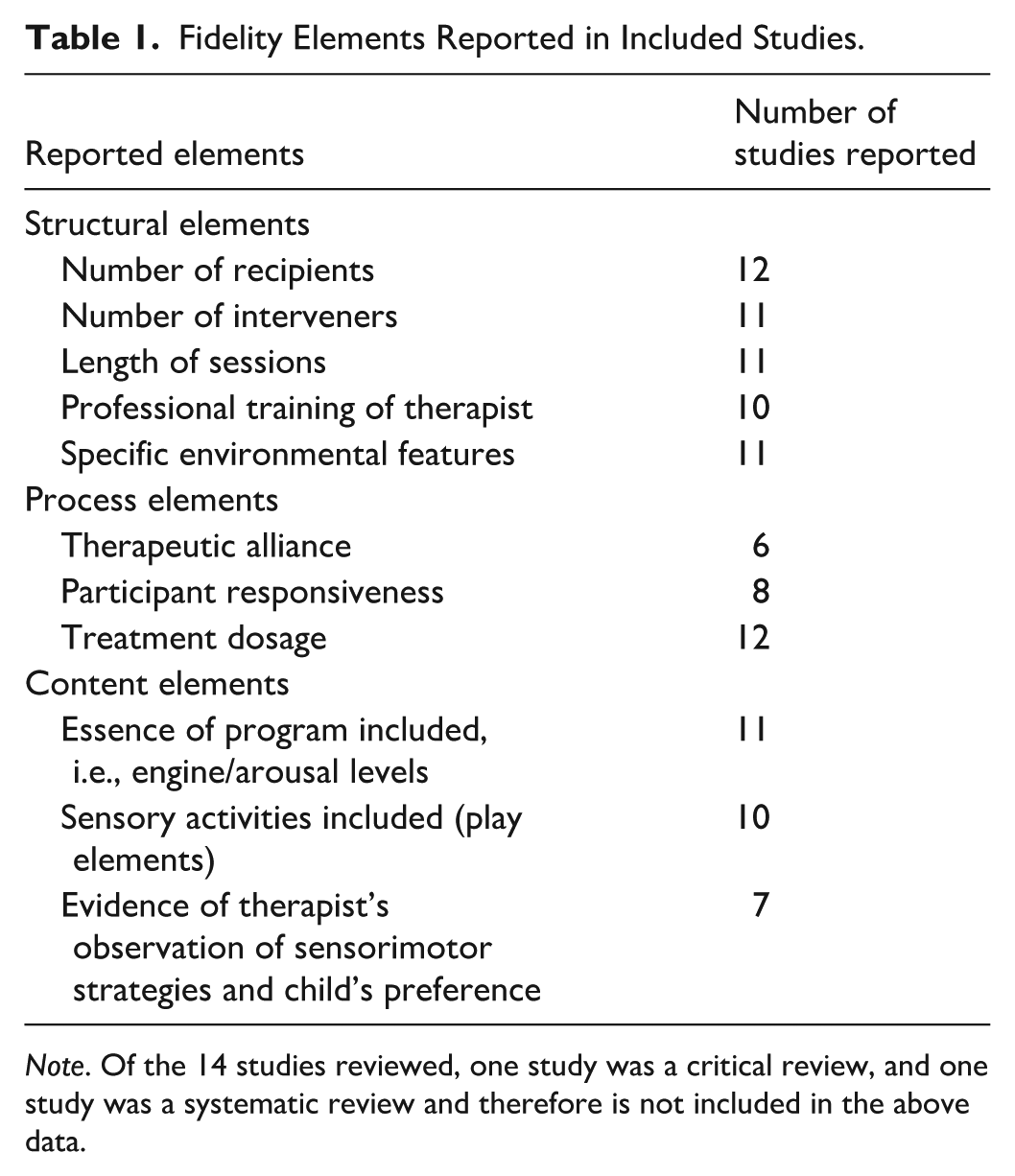

Analysis of the included studies against the elements identified in Step 1 revealed varying levels of fidelity element coverage. Table 1 presents a comprehensive breakdown of how frequently each fidelity element was reported across the reviewed studies. Of the 14 studies reviewed, one was a critical review and one was a systematic review; these two studies are therefore not included in the data presented in Table 1.

Table 1. Fidelity Elements Reported in Included Studies.

Note. Of the 14 studies reviewed, one was a critical review and one was a systematic review; these two studies are therefore not included in the above data.

A detailed analysis of these elements revealed that two studies (Blackwell et al., 2014; Wagner et al., 2017) included all structural, process, and content elements as outlined earlier. Four studies included two of the three complete fidelity elements, with Soler et al. (2019) omitting structural elements, Nash et al. (2015) and Wells et al. (2012) omitting process elements, and Wagner et al. (2020a) omitting content elements. All remaining studies included only one complete fidelity element.
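The tally above can be checked with a short Python sketch. It encodes only the six studies whose complete elements are named in the preceding paragraph; the variable names are illustrative, and the remaining studies are left out because the text does not state which single element each one reported in full.

```python
from collections import Counter

# Complete fidelity elements per study, as stated in the paragraph above.
complete_elements = {
    "Blackwell et al. (2014)": {"structural", "process", "content"},
    "Wagner et al. (2017)": {"structural", "process", "content"},
    "Soler et al. (2019)": {"process", "content"},      # omits structural
    "Nash et al. (2015)": {"structural", "content"},    # omits process
    "Wells et al. (2012)": {"structural", "content"},   # omits process
    "Wagner et al. (2020a)": {"structural", "process"}, # omits content
}

# Count how many studies reported three, two, or one complete element.
coverage_counts = Counter(len(elements) for elements in complete_elements.values())

print(coverage_counts[3])  # 2 studies with all three elements
print(coverage_counts[2])  # 4 studies with two of three elements
```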

Structural Elements

Seven studies included all structural elements (Blackwell et al., 2014; Mac Cobb et al., 2014a; Nash et al., 2015; Soh et al., 2015; Wagner et al., 2017, 2020a; Wells et al., 2012). Two studies omitted the therapists’ professional training (Mac Cobb et al., 2014a; Soler et al., 2019), one did not report on specific environmental features (Mah & Doherty, 2021), and one did not discuss the length of intervention sessions (Barnes et al., 2008). Across all remaining studies, intervention sessions ranged from 10 to 90 minutes.

Process Elements

Five studies included all process elements (Blackwell et al., 2014; Soler et al., 2019; Wagner et al., 2017, 2020a, 2020b). Six studies did not report on the therapeutic alliance (Barnes et al., 2008; Mac Cobb et al., 2014a, 2014b; Mah & Doherty, 2021; Soh et al., 2015; Wells et al., 2012) and five did not state participant responsiveness (Mac Cobb et al., 2014b; Nash et al., 2015; Pfeiffer et al., 2011; Soh et al., 2015; Wells et al., 2012). However, all reported treatment dosages, which ranged from 3 to 16 sessions.

Content Elements

Six studies included all content elements (Blackwell et al., 2014; Mah & Doherty, 2021; Nash et al., 2015; Soler et al., 2019; Wagner et al., 2017; Wells et al., 2012). Two studies had no information regarding sensory activities (Barnes et al., 2008; Mac Cobb et al., 2014b), and five studies did not report evidence of therapeutic observations of sensorimotor strategies and child preferences (Barnes et al., 2008; Mac Cobb et al., 2014a, 2014b; Soh et al., 2015; Wagner et al., 2020a).

Step 2: Development and Expert Review of the Fidelity Measure

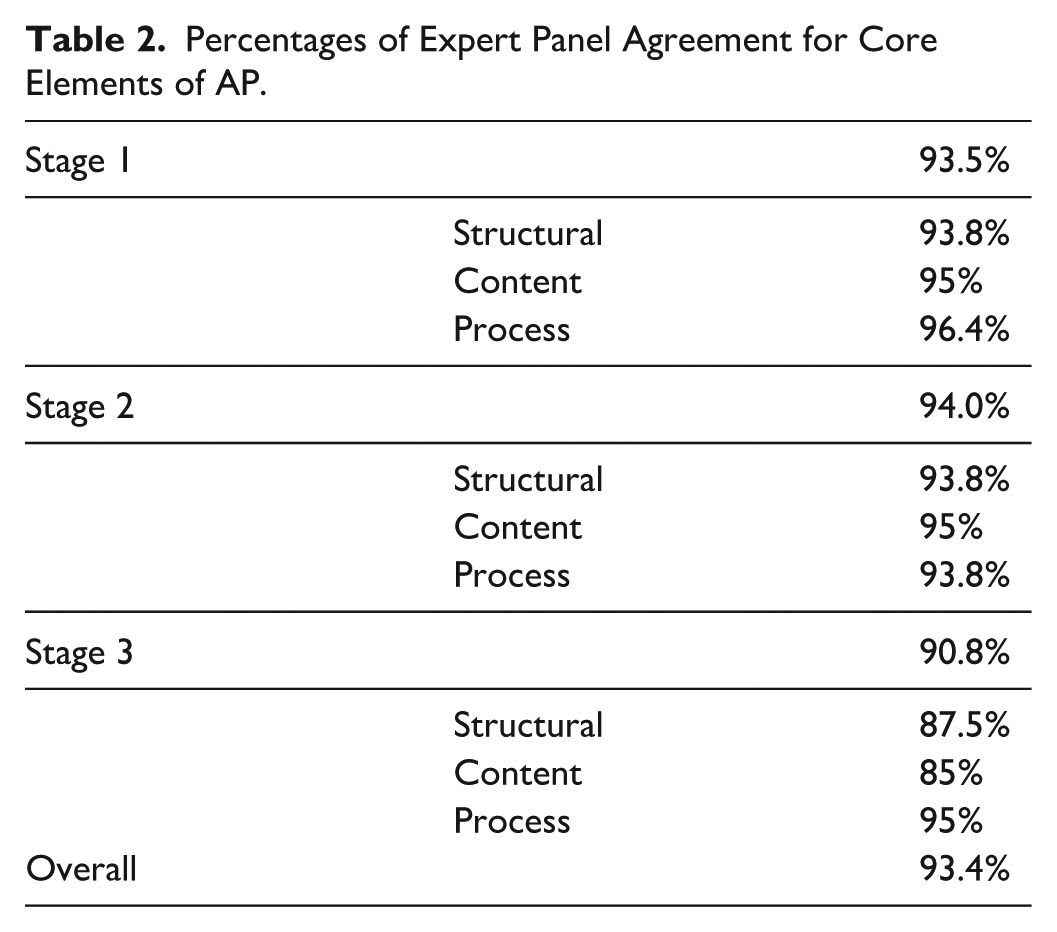

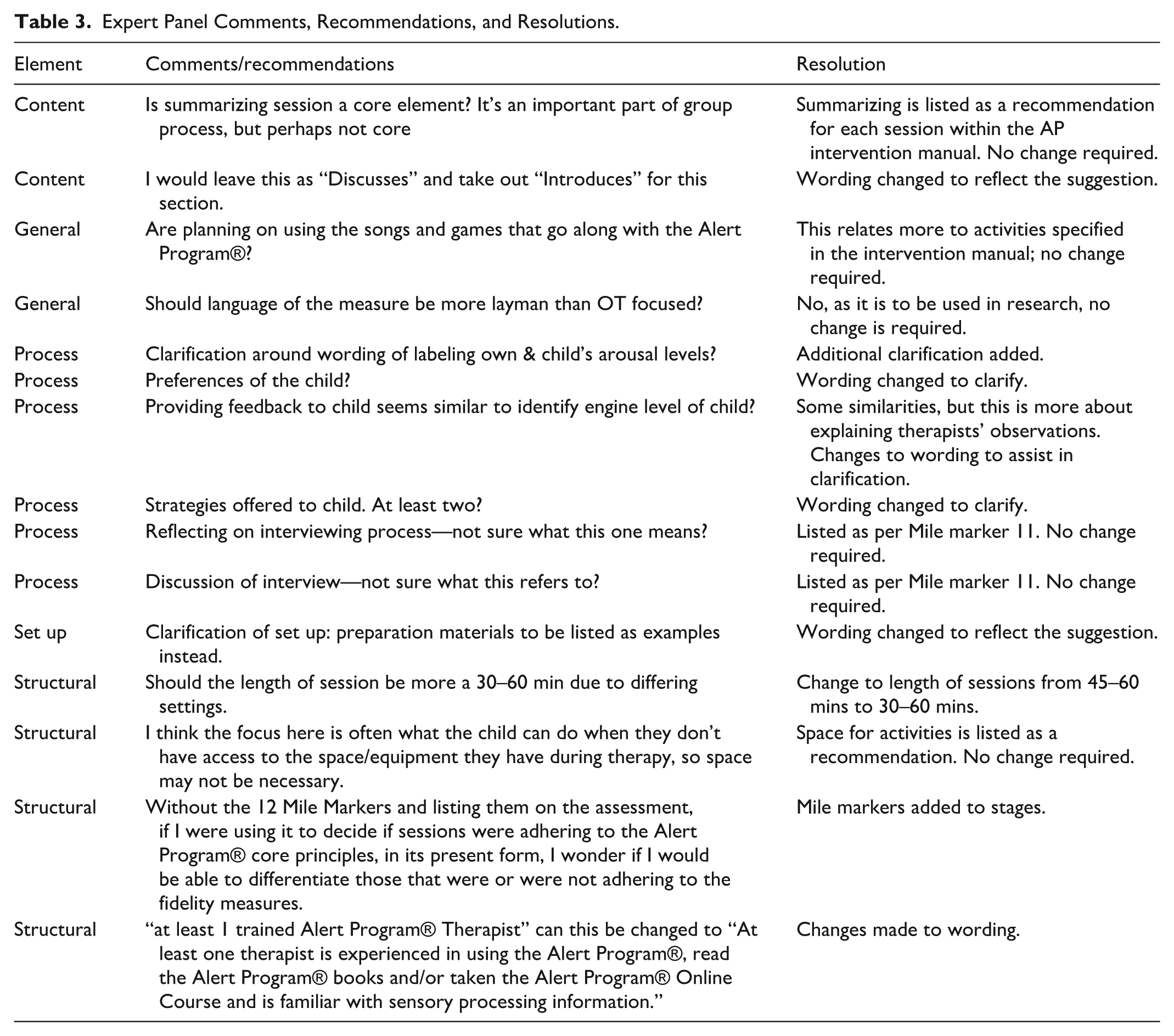

The preliminary fidelity measure was reviewed by all experts, with an agreement rate of 93.4% that the measure contained the core elements of the AP. Table 2 summarizes the agreement rate for the individualized AP stages, with Stage 3 having the least agreement (90.8%). One expert indicated that various additional elements were required within Stage 3. Overall, 15 narrative comments were received focusing on terminology alignment and implementation specifics.

Table 2. Percentages of Expert Panel Agreement for Core Elements of AP.
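One plausible way to arrive at agreement percentages of this kind is to pool every expert's yes/no judgment for every item and report the share of "yes" judgments. The Python sketch below assumes that approach; the text does not specify the exact calculation used, and the item labels and ratings are invented for illustration.

```python
from typing import Dict, List


def agreement_rate(ratings: Dict[str, List[bool]]) -> float:
    """Percentage of all expert judgments that endorsed an item as a core element.

    `ratings` maps an item label to one True/False judgment per expert.
    """
    total = sum(len(judgments) for judgments in ratings.values())
    if total == 0:
        raise ValueError("no ratings supplied")
    endorsed = sum(sum(judgments) for judgments in ratings.values())
    return round(100.0 * endorsed / total, 1)


# Invented judgments from four experts on two hypothetical Stage 3 items.
stage_three_ratings = {
    "hypothetical item A": [True, True, True, True],
    "hypothetical item B": [True, True, True, False],
}

stage_three_rate = agreement_rate(stage_three_ratings)
print(stage_three_rate)  # 87.5
```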

Step 3: Revision of the Fidelity Measure

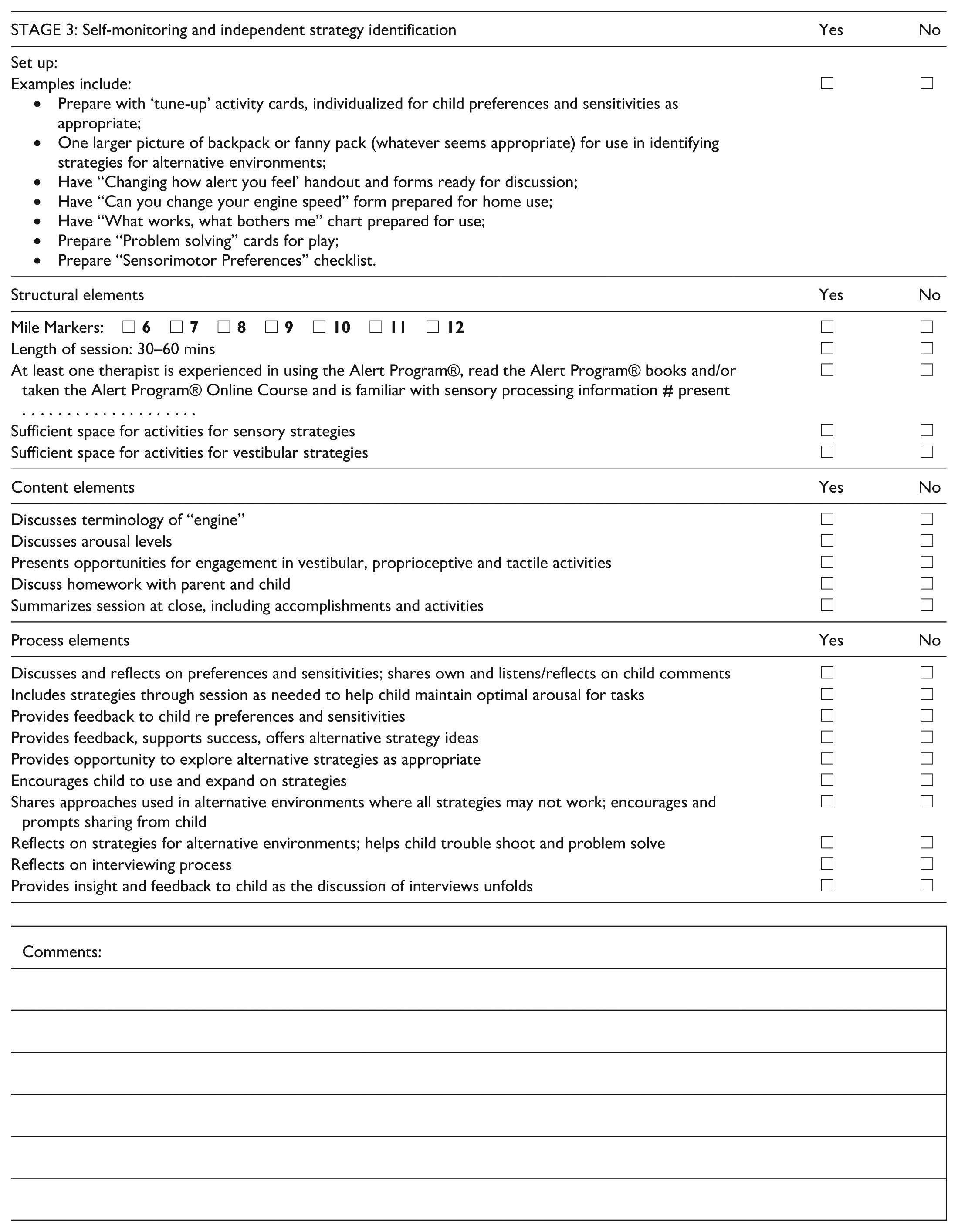

Expert panel feedback resulted in refinements to the measure (see Appendix A for the updated measure). As detailed in Table 3, these modifications included eight specific language and terminology adjustments to align with established AP standards, incorporation of previously omitted mile markers (a critical AP component), refinement of scoring criteria specifications, and adaptations to enhance applicability across diverse clinical settings. Six additional expert recommendations were documented but required no further action, as they were already addressed in the measure design.

Table 3. Expert Panel Comments, Recommendations, and Resolutions.

Step 4: Inter-Rater Reliability

The two raters (HB and EM) reached 91% agreement on the preliminary fidelity measure.

Discussion

Our preliminary fidelity measure was developed following established recommendations for core elements of fidelity (Century et al., 2010; Di Rezze et al., 2012; Mowbray et al., 2003). While existing literature around fidelity elements typically focuses on structural and process elements, we determined that content elements were essential to accurately represent the AP. The addition of content elements creates a more comprehensive approach to fidelity assessment that captures key aspects of the AP.

In revising our preliminary fidelity measure, expert advice led us to incorporate the specific language used in the AP, emphasizing the importance of identifying, labeling, and using child-appropriate language, the use of sensory measures and equipment, feedback mechanisms to child and carers, home-based labeling, and provision of strategies to assist children with self-regulation. The high level of expert agreement (93.4%) indicates substantial consensus on the measure’s core elements, supporting its content validity as recommended by Zamanzadeh and colleagues (2015), who emphasize the importance of expert panels in establishing content validity for newly developed measurement instruments.

The inter-rater reliability assessment showed excellent percent agreement (91%) between two independent clinicians, supporting the measure’s potential reliability for assessing AP fidelity. This high agreement suggests the measure offers clear, observable criteria that can be consistently identified by different raters—a critical characteristic for any fidelity measure intended for widespread use (Gisev et al., 2013; McHugh, 2012). Further testing with larger samples and diverse raters would strengthen confidence in the measure’s reliability across varied clinical and research contexts.

The developed fidelity measure addresses a gap in occupational therapy literature (see Appendix A). Prior to this study, standardized methods to assess AP implementation consistency were limited, with only preliminary work by Barnes et al. (2008) addressing aspects of implementation fidelity. This measure provides several potential benefits.

First, it offers a framework addressing essential components of the AP, supporting more consistent implementation. Second, it provides a tool for researchers to document fidelity in intervention studies, potentially enhancing research rigor. Third, it may serve as a reference for clinicians implementing the AP. Fourth, the measure’s format makes it applicable for both research documentation and clinical supervision contexts.

The fidelity measure provides a foundation for establishing consistency in AP implementation across research and practice settings. By incorporating Moncher and Prinz’s (1991) recommendations for fidelity assessment (see Supplemental Table 1), we developed a comprehensive measure that addresses not only the structural aspects of program delivery but also the essential process and content elements that define the AP. This approach aligns with current understanding of intervention fidelity as a multidimensional construct that requires attention to both the technical and theoretical aspects of program delivery.

Together, these benefits position the fidelity measure as a valuable addition to the occupational therapy toolkit for those implementing and researching the Alert Program®.

Limitations

Despite the study’s strengths, such as a comprehensive literature review and expert input, there were limitations. The study’s reliance on electronic journals and databases might have missed relevant literature from other sources. In addition, the small expert panel may have introduced unintended bias. To minimize this bias, experts were selected from two countries, Australia and the United States (Smith & Noble, 2014). A formal Delphi study would have broadened our consensus building, but this approach was not used in this study. Future research could benefit from a larger expert panel and a more structured consensus-building process. Regarding reliability, this study established percent agreement between two raters, which represents initial evidence only. Additional testing using more raters, diverse clinical scenarios, and statistical methods such as intraclass correlation coefficients would further evaluate the measure’s reliability.
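To illustrate why percent agreement is treated as initial evidence only, the sketch below computes Cohen's kappa, a common chance-corrected agreement statistic for two raters. Kappa was not reported in this study and is swapped in here purely as an example of a follow-up analysis; the function name and ratings are hypothetical.

```python
from typing import Sequence


def cohens_kappa(rater_a: Sequence[bool], rater_b: Sequence[bool]) -> float:
    """Cohen's kappa for two raters giving yes/no ratings to the same items."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must rate the same non-empty set of items")

    # Observed agreement: share of items rated identically.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Agreement expected by chance, from each rater's own rate of "yes".
    p_a_yes = sum(rater_a) / n
    p_b_yes = sum(rater_b) / n
    p_expected = p_a_yes * p_b_yes + (1 - p_a_yes) * (1 - p_b_yes)

    if p_expected == 1.0:
        raise ValueError("kappa is undefined when both raters give one identical rating to every item")
    return (p_observed - p_expected) / (1 - p_expected)


# Invented ratings: the raters agree on 9 of 10 items (90% agreement).
ratings_a = [True, True, False, True, True, False, True, True, True, True]
ratings_b = [True, True, False, True, False, False, True, True, True, True]

kappa = cohens_kappa(ratings_a, ratings_b)
print(round(kappa, 2))  # 0.74
```

In this invented example, 90% raw agreement corresponds to a kappa of about 0.74, because most items are rated "yes" by both raters and some agreement is therefore expected by chance.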

Implications for Occupational Therapy Practice

Assessing fidelity in intervention delivery is crucial for achieving consistency in how well a program is executed according to the established protocol. While fidelity does not determine intervention effectiveness, ensuring fidelity enables clinicians and researchers to more accurately measure the true impact of an intervention (Murphy & Gutman, 2012). Equipping researchers with intervention fidelity measures makes it possible to conduct more rigorous research studies that are replicable and comparable (Gill et al., 2018).

The fidelity measure was based on the core elements of the AP, ensuring that interventions remained faithful to the AP’s underlying principles (Murphy & Gutman, 2012). Higher-quality intervention research that examines and discloses fidelity can enhance practice by ensuring practitioners provide consistent support for children with self-regulation needs that are aligned with the AP’s underlying principles.

The fidelity measure may also assist those undertaking AP training to ensure they understand and can implement the AP as intended by the developers in research, education, and practice contexts (Parham et al., 2011). The AP interveners will also be able to test their adherence to the AP’s fidelity and assess whether their implementation of the program achieves the same results as found in clinical trials (Bellg et al., 2004).

Conclusion

Knowing that established interventions are true to their intent is key to best practice. The absence of intervention fidelity measures makes this challenging, particularly for widely used approaches like the Alert Program®. Our fidelity tool for the AP provides a foundation for more consistent implementation assessment, potentially supporting both research and clinical applications. This measure offers a standardized approach to evaluating how closely the program delivery aligns with its intended design. Future research might examine how adherence to program components relates to outcomes for children with self-regulation challenges, furthering our understanding of this specific intervention approach.

Supplemental Material

Supplemental material, sj-docx-1-otj-10.1177_15394492261422638 for Development of a Fidelity Instrument for Delivering the Alert Program® for Self-Regulation by Dianne Blackwell, Alison E. Lane, Kelsey Philpott-Robinson and Shelly J. Lane in OTJR: Occupational Therapy Journal of Research

Footnotes

Appendix

| STAGE 3: Self-monitoring and independent strategy identification | Yes | No |
|---|---|---|
| Set up: | | |
| Examples include: | | |
| Structural elements | Yes | No |
| Mile Markers: | | |
| Length of session: 30–60 mins | | |
| At least one therapist is experienced in using the Alert Program®, read the Alert Program® books and/or taken the Alert Program® Online Course and is familiar with sensory processing information (# present: ______) | | |
| Sufficient space for activities for sensory strategies | | |
| Sufficient space for activities for vestibular strategies | | |
| Content elements | Yes | No |
| Discusses terminology of “engine” | | |
| Discusses arousal levels | | |
| Presents opportunities for engagement in vestibular, proprioceptive and tactile activities | | |
| Discuss homework with parent and child | | |
| Summarizes session at close, including accomplishments and activities | | |
| Process elements | Yes | No |
| Discusses and reflects on preferences and sensitivities; shares own and listens/reflects on child comments | | |
| Includes strategies through session as needed to help child maintain optimal arousal for tasks | | |
| Provides feedback to child re preferences and sensitivities | | |
| Provides feedback, supports success, offers alternative strategy ideas | | |
| Provides opportunity to explore alternative strategies as appropriate | | |
| Encourages child to use and expand on strategies | | |
| Shares approaches used in alternative environments where all strategies may not work; encourages and prompts sharing from child | | |
| Reflects on strategies for alternative environments; helps child trouble shoot and problem solve | | |
| Reflects on interviewing process | | |
| Provides insight and feedback to child as the discussion of interviews unfolds | | |
| Comments: | | |

Acknowledgements

We acknowledge the support of the Hunter Medical Research Institute and are grateful for the contributions made by the University of Newcastle occupational therapy clinical educators Ellen Mason and Jackson Brent, Hannah K Burke, PhD, our expert panel members Mary-Sue Williams and Sherry Shellenberger, Kelly Tanner, PhD, and Emily Saunderson.

Author’s Note

The research described in this study has been presented as an e-poster at the Australian Occupational Therapy Annual Conference in July 2019 (Sydney, NSW).

Ethical Considerations

This study was approved by the University of Newcastle Ethics Committee, H-2018-0036, as part of a more extensive study on the impact of sensory subtypes on outcomes of The Alert Program® for autistic children.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Hunter Medical Research Institute provided support for this research.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The video data used in this study are not available due to privacy and ethical restrictions. However, the fidelity measure, inter-rater reliability sheets, and other supporting materials from this study are available from the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
